Abstract

Background

The incidence of stroke and stroke-related hemiparesis has been steadily increasing and is projected to become a serious social, financial, and physical burden on the aging population. Limited access to outpatient rehabilitation for these stroke survivors further deepens the healthcare issue and isolates stroke patients in rural areas. However, recent advances in deep learning-based motion detection enable the use of handheld smartphone cameras for body tracking, offering unparalleled levels of accessibility.

Methods

In this study, we developed an automated method for evaluating a shortened variant of the Fugl-Meyer assessment, the standard stroke rehabilitation scale describing upper extremity motor function. We pair smartphone-based body tracking with a series of machine learning models, including different neural network structures and an eXtreme Gradient Boosting model, to score 16 of 33 (49%) Fugl-Meyer item activities.

Results

In this observational study, 45 acute stroke patients completed at least 1 recorded Fugl-Meyer assessment for the training of the auto-scorers, which yielded average accuracies ranging from 78.1% to 82.7% item-wise.

Conclusion

In this study, an automated method was developed for the evaluation of a shortened variant of the Fugl-Meyer assessment, the standard stroke rehabilitation scale describing upper extremity motor function. This novel method shows potential for conducting accurate and accessible telehealth rehabilitation evaluations and assessments.

Introduction

Studies report up to 85% of stroke survivors experience upper extremity (UE) hemiparesis in at least 1 arm 1 and 78% fail to achieve the average UE function for their age, even after 3 months of treatment and rehabilitation. 2 Loss or partial loss of function in even one of the limbs can be extremely debilitating and depressing, as many basic daily tasks require bimanual function. In fact, dependence on bilaterality has been shown to increase with age. 3 To perform common tasks like buttoning a shirt, writing, reaching for objects, and opening bottles, the survivor must unlearn old habits and learn new ones. 4,5

The growing problem of poor access to healthcare exacerbates this functional decline, particularly for patients with disabilities in rural areas, and is attributable to a wide variety of factors. 6 In Texas, for example, the geographic disparity between rural and urban areas is apparent: 71% of rural counties lack outpatient rehabilitation clinics for stroke patients, whereas only 19% of urban counties share the same issue. 7 Parekh and Barton 8 describe other contributing factors and the complications of healthcare delivery to an aging and increasingly disabled population, citing 75 million people who have multiple chronic conditions. These comorbidities reduce patient compliance and stand in the way of treatment that is best realized by active participation. Current telerehabilitation programs assess motor impairment using technology that is expensive and out of reach for many, or rely on a hybrid in-person assessment, as there is limited availability of quantifiable remote motor assessment. 9-11 Uninsured and underinsured patients tend to have increased disability after stroke, are less likely to be discharged to inpatient rehabilitation, and may have minimal or no access to outpatient therapies following a stroke. 12-14 These reasons inspire us to advance technologies that can reach an increasingly isolated patient demographic.

An automated assessment of the UE post-stroke that can occur in an outpatient setting will provide clinicians with important data to guide decision-making and maximize session time for targeted intervention, whether it is in the home or via telerehabilitation. Automation of the Fugl-Meyer assessment, which is used extensively as the primary metric to quantify post-stroke recovery, can provide objective data on range-of-motion, strength, and functional abilities that would otherwise require time and labor from healthcare professionals. In this paper, we present a novel approach to using machine learning for automatic scoring of the Fugl-Meyer assessment to measure UE function in stroke patients. Our primary objective is to demonstrate the feasibility of using a single digital camera for motion detection and machine learning methods for automatic scoring. We developed and tested the predictive ability of 4 machine learning models on videos provided by consenting stroke patients and compared the results with scores provided by a trained healthcare professional. Our results show that machine learning models can achieve similar or better accuracy than human experts in predicting Fugl-Meyer assessment scores. This approach has the potential to reduce clinician burden and improve accessibility to marginalized groups.

Methods

Patient Recruitment

A total of 45 adult study participants with acute or subacute weakness or unilateral hemiplegia as a result of ischemic or hemorrhagic stroke were recruited after admission to inpatient rehabilitation facilities within the Memorial Hermann Health System. Patients were ineligible to participate in the study if they were younger than 18 years old or were excluded at the discretion of their attending physician; the latter refers to any limiting reason given by the physician who is responsible for the patient’s well-being, including the patient’s current physical condition or interference with important treatment. No physicians recommended exclusion of any subject for this study. Subjects were enrolled in the study if they could comprehend and follow basic instructions. All subjects provided in-person or electronic informed consent after an explanation of the study protocol and prior to any study activity; the protocol was approved by the UT Health Institutional Review Board (IRB) and Committee for the Protection of Human Subjects (IRB number: HSC-MS-20-0767).

Study Activities

After enrollment, researchers performed Fugl-Meyer assessments with subjects every 2 days. Fugl-Meyer exercise items were recorded only after the activity was described by the investigator, demonstrated by the investigator, and the subject showed understanding by demonstration. For the recordings, study participants repeated each movement with both arms, first on their non-paretic side, between 3 and 5 times. Fugl-Meyer assessments were ended immediately upon request of the subject for any reason. The movements were captured by a video camera at a resolution of 1080p and a frame rate of 60 Hz placed 3 to 5 m away on a tripod 1.5 m in height. Consistent camera placement, ample lighting, and an unobscured subject improved the quality of motion detection. The Fugl-Meyer was scored in-person by the investigator leading the assessment and by a licensed occupational therapist after the video was spliced into individual activity items. The occupational therapist completed standardization training for an NIH trial and had BlueCloud certification for scoring visual recordings of Fugl-Meyer assessments. All identifiable patient health information, including raw audio- and visual-recording data, was stored locally on an encrypted hard drive and later on a secure UTHealth School of Biomedical Informatics server. Subject videos were separated into smaller clips consisting of individual Fugl-Meyer activity items for ease of scoring by both the model and by the licensed occupational therapist.

Deep-Learning Motion Detection Algorithm and Feature Extraction

We modified a joint recognition pipeline 15 to extract body joint locations from videos. The pipeline uses the YOLO V3 16 object detection model to obtain bounding boxes around the patient in each image. The cropped bounding boxes are then fed to the HRDNet model 17 to extract joints and other landmarks on the body. The output is the xy-positional coordinates of body joints (nose, neck, hip center, and shoulder, elbow, wrist, hip, knee, ankle, eye, and ear for both sides of the body), which are tracked along the timeline of the patient’s video and used as input to the score classification model.

Besides major body joints, several Fugl-Meyer assessment items (exercises in the hand or wrist groups) require high-precision localization of hand joints from a patient’s video. We implemented a finger joint detection model 18 that first applies a palm detector to provide a bounding box for the hand’s skeleton and then locates the joint landmarks (the wrist plus 4 joints on each of the 5 fingers, for 21 landmarks in the hand model).

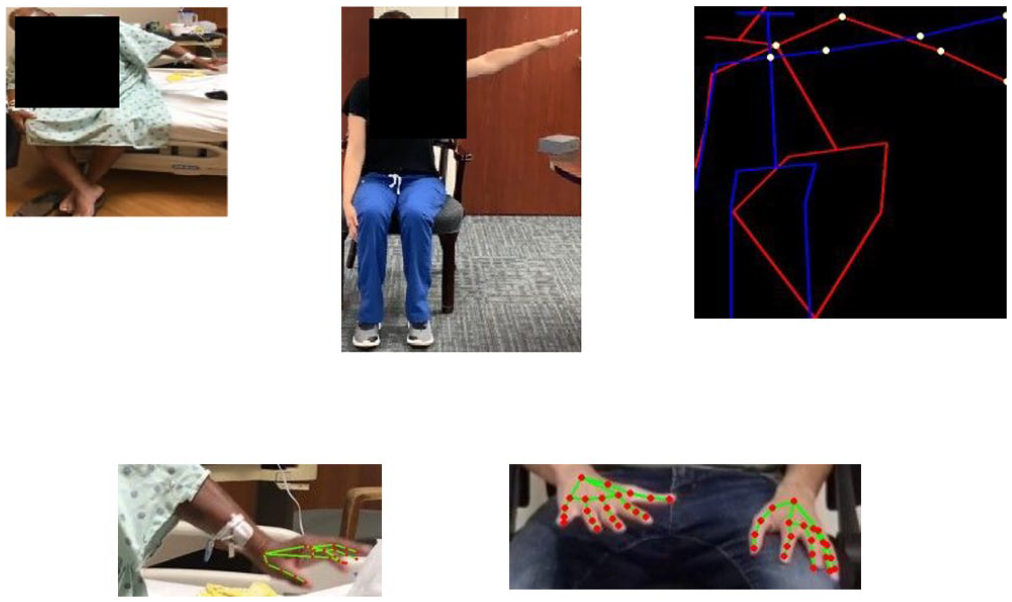

For both models, the output is an (f × 3) array for each frame, where the first dimension f is the number of features (joints) and the second dimension of 3 contains the xy-positional coordinates and a confidence level. The number of features is 21 joints for the hand model and 19 joints for the body model. Normalization of joint position coordinates controlled for differences in subject size and allowed fair comparison between samples. A demonstration of the 2 models on original videos is shown in Figure 1.
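The normalization step can be illustrated with a minimal sketch. The source does not state which reference point or scale was used, so the choices here (centering on the neck and dividing by the neck-to-hip-center distance) are assumptions, as are the joint indices `NECK` and `HIP_CENTER` and the function name `normalize_frame`.

```python
import numpy as np

# Hypothetical joint indices; the real ordering depends on the pose model's output.
NECK, HIP_CENTER = 1, 2


def normalize_frame(joints, eps=1e-8):
    """Center an (f, 3) array of [x, y, confidence] rows on the neck and
    scale by torso length (assumed: neck to hip center). Confidence is kept."""
    joints = np.asarray(joints, dtype=float)
    xy = joints[:, :2]
    origin = xy[NECK]
    torso = np.linalg.norm(xy[NECK] - xy[HIP_CENTER])
    out = joints.copy()
    out[:, :2] = (xy - origin) / (torso + eps)
    return out


# Toy frame with 19 body joints (values are synthetic, not study data).
rng = np.random.default_rng(0)
pose = rng.uniform(0.0, 1.0, size=(19, 3))
pose[NECK, :2] = [0.5, 0.2]
pose[HIP_CENTER, :2] = [0.5, 0.7]

# The same pose from a larger subject standing elsewhere in the image.
larger = pose.copy()
larger[:, :2] = pose[:, :2] * 2.5 + 100.0

a = normalize_frame(pose)
b = normalize_frame(larger)
print(np.allclose(a[:, :2], b[:, :2]))  # both subjects map to the same coordinates
```

Under these assumptions, two subjects of different size performing the same pose produce the same normalized coordinates, which is the property the normalization is meant to provide.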

Visual representation of normalized joint coordinates depicting final position of shoulder abduction performed poorly by subject (left, red) and correctly by investigator (middle, blue) with important joints identified (right, yellow). A hand detection model depicting joints (red) is superimposed on sample images of the subject (bottom left) and another investigator (bottom right).

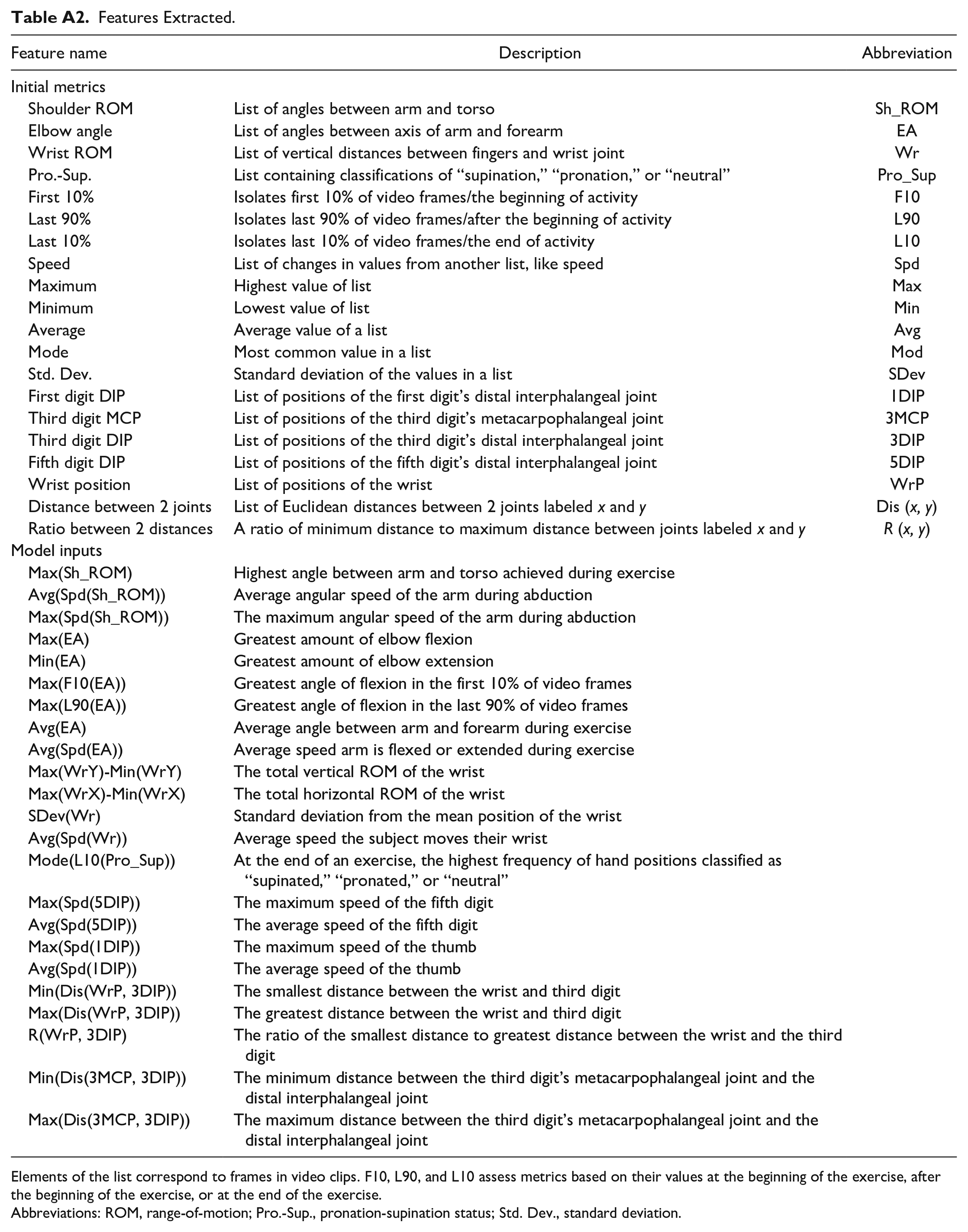

The positional coordinates of features were extracted from video clips for analysis by the auto-scoring models described in the Fugl-Meyer Auto-Scoring Models section. Due to symmetry across the sagittal plane, metrics could be calculated on both the left and right sides of the subject without adaptation. A summary of the features extracted and the final inputs for the auto-scoring models is provided in Table A2. Additional information on individual features is provided in the Appendix.

Fugl-Meyer Auto-Scoring Models

A total of 16 items of the Fugl-Meyer assessment (described in Table 2) are recorded using only a smartphone camera and scored using machine learning methods. Multiple deep learning models including a convolutional neural network (CNN), recurrent neural network (RNN), and dilated CNN were evaluated to find the highest performing model.

For each video, a 3D tensor of size

For the CNN model, our plain action recognition network was designed to extract spatial-temporal information from the frame-wise joint locations. It consisted of 3 convolutional layers with 3 × 3 filters, a stride of 1, and a padding of 1; each time the feature map size is halved, the number of channels (filters) is doubled. Two configurations were tested, with 64 and 128 filters in the first convolutional layer, respectively. Each convolutional layer is followed by batch normalization.
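The shape arithmetic implied by this description can be traced with a short sketch. The convolution output formula is standard; everything else is an assumption for illustration: the source does not say how the feature map is halved (2 × 2 pooling is assumed here), and the input size of 128 frames by 24 features is hypothetical because the actual tensor size is not given.

```python
def conv_out(size, kernel=3, stride=1, padding=1):
    """Output length along one axis: floor((n + 2p - k) / s) + 1."""
    return (size + 2 * padding - kernel) // stride + 1


def trace_shapes(height, width, first_filters=64, n_layers=3, pool=2):
    """Return (channels, height, width) after each conv + assumed pooling stage."""
    shapes = []
    channels = first_filters
    for _ in range(n_layers):
        # 3x3 kernel, stride 1, padding 1 leaves the spatial size unchanged.
        height, width = conv_out(height), conv_out(width)
        # Assumed 2x2 pooling halves the feature map.
        height, width = height // pool, width // pool
        shapes.append((channels, height, width))
        # Double the number of filters for the next layer.
        channels *= 2
    return shapes


# Hypothetical input: 128 frames x 24 joint features.
for shape in trace_shapes(128, 24, first_filters=64):
    print(shape)
# (64, 64, 12)
# (128, 32, 6)
# (256, 16, 3)
```

Passing `first_filters=128` gives the second tested configuration, with 128, 256, and 512 channels.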

To further improve the action recognition performance, we used the CNN as a backbone for encoding and then added either an RNN layer (hidden size = 64) to form a CNN–RNN model, or a dilated CNN layer in which the extracted encoded features are flattened and concatenated along the time dimension. A demonstration of the models’ structure can be found in Figure A1. To compare the prediction accuracies of the deep learning models with those of an advanced machine learning model, we chose eXtreme Gradient Boosting (XGBoost) as the machine learning benchmark model.
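For readers unfamiliar with dilation, the following is a minimal NumPy sketch of a dilated 1D convolution along the time axis. It is not the architecture used in the study: the kernel values, dilation rates, and the function name `dilated_conv1d` are all illustrative assumptions. It only shows how spacing the kernel taps apart widens the temporal window a single layer can see.

```python
import numpy as np


def dilated_conv1d(x, kernel, dilation=1):
    """Valid 1D cross-correlation with kernel taps spaced `dilation` steps apart."""
    x = np.asarray(x, dtype=float)
    kernel = np.asarray(kernel, dtype=float)
    k = len(kernel)
    span = (k - 1) * dilation + 1      # number of time steps covered by one output
    n_out = len(x) - span + 1
    if n_out <= 0:
        raise ValueError("sequence shorter than the dilated kernel span")
    out = np.zeros(n_out)
    for i in range(k):
        start = i * dilation
        out += kernel[i] * x[start:start + n_out]
    return out


x = np.arange(10, dtype=float)          # toy signal: one value per frame
kernel = np.array([1.0, 0.0, -1.0])     # difference between first and last tap

d1 = dilated_conv1d(x, kernel, dilation=1)   # compares frames 2 steps apart
d3 = dilated_conv1d(x, kernel, dilation=3)   # compares frames 6 steps apart
print(d1)  # eight values of -2.0
print(d3)  # four values of -6.0
```

With the same 3-tap kernel, a dilation of 3 covers 7 frames instead of 3, so slower movements can be captured without adding parameters.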

Evaluation and Statistics

Each Fugl-Meyer assessment item has 3 possible scores: 0, 1, and 2, where 2 implies the patient shows little or no difference between the weak side and the strong side for that item, while 0 implies that the patient cannot complete the movement or has great difficulty performing it. The ground truth data used in calculating the accuracies was the experts’ scores of the same videos that were fed to the algorithm. The actual FMA scores were not used in the calculation. Our model was trained on the experts’ scores and then applied to reserved videos for testing. We treated item-wise Fugl-Meyer assessment scoring as a 3-class classification problem, performed 10 repetitions of cross-validation with random train-test splits at the item-wise level, and calculated the average accuracy, AUROC, and their standard deviations for comparison. In these cross-validations, training and testing sets were kept separate. We then conducted a group-wise Fugl-Meyer assessment scoring evaluation by fitting a linear regression model between predicted and actual group scores to calculate the coefficient of determination and the root-mean-square error (RMSE) of the difference.
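The repeated random-split evaluation can be sketched as follows. This is only an illustration of the protocol: the nearest-centroid classifier is a stand-in for the study's models, the 20% test fraction is an assumption (the source does not state the split ratio), and the data are synthetic. AUROC is omitted because the stand-in classifier does not output class probabilities.

```python
import numpy as np


def nearest_centroid_fit(X, y):
    """Stand-in classifier: store one mean feature vector per class."""
    classes = np.unique(y)
    centroids = np.stack([X[y == c].mean(axis=0) for c in classes])
    return classes, centroids


def nearest_centroid_predict(model, X):
    classes, centroids = model
    dists = np.linalg.norm(X[:, None, :] - centroids[None, :, :], axis=2)
    return classes[dists.argmin(axis=1)]


def repeated_split_accuracy(X, y, n_repeats=10, test_frac=0.2, seed=0):
    """Mean and standard deviation of accuracy over random train-test splits."""
    rng = np.random.default_rng(seed)
    n = len(y)
    n_test = max(1, int(round(test_frac * n)))
    accs = []
    for _ in range(n_repeats):
        perm = rng.permutation(n)
        test_idx, train_idx = perm[:n_test], perm[n_test:]   # disjoint sets
        model = nearest_centroid_fit(X[train_idx], y[train_idx])
        pred = nearest_centroid_predict(model, X[test_idx])
        accs.append(float(np.mean(pred == y[test_idx])))
    return float(np.mean(accs)), float(np.std(accs)), accs


# Synthetic 3-class data standing in for per-video features and scores 0, 1, 2.
data_rng = np.random.default_rng(1)
y = np.repeat([0, 1, 2], 20)
centers = np.array([[0.0, 0.0], [10.0, 0.0], [0.0, 10.0]])
X = centers[y] + data_rng.normal(0.0, 0.5, size=(60, 2))

mean_acc, std_acc, accs = repeated_split_accuracy(X, y)
print(f"accuracy: {mean_acc:.3f} +/- {std_acc:.3f} over {len(accs)} splits")
```

When several clips come from the same patient, splitting by patient rather than by clip would give a stricter estimate of generalization; that variant is not shown here.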

Results

Patient Characteristics

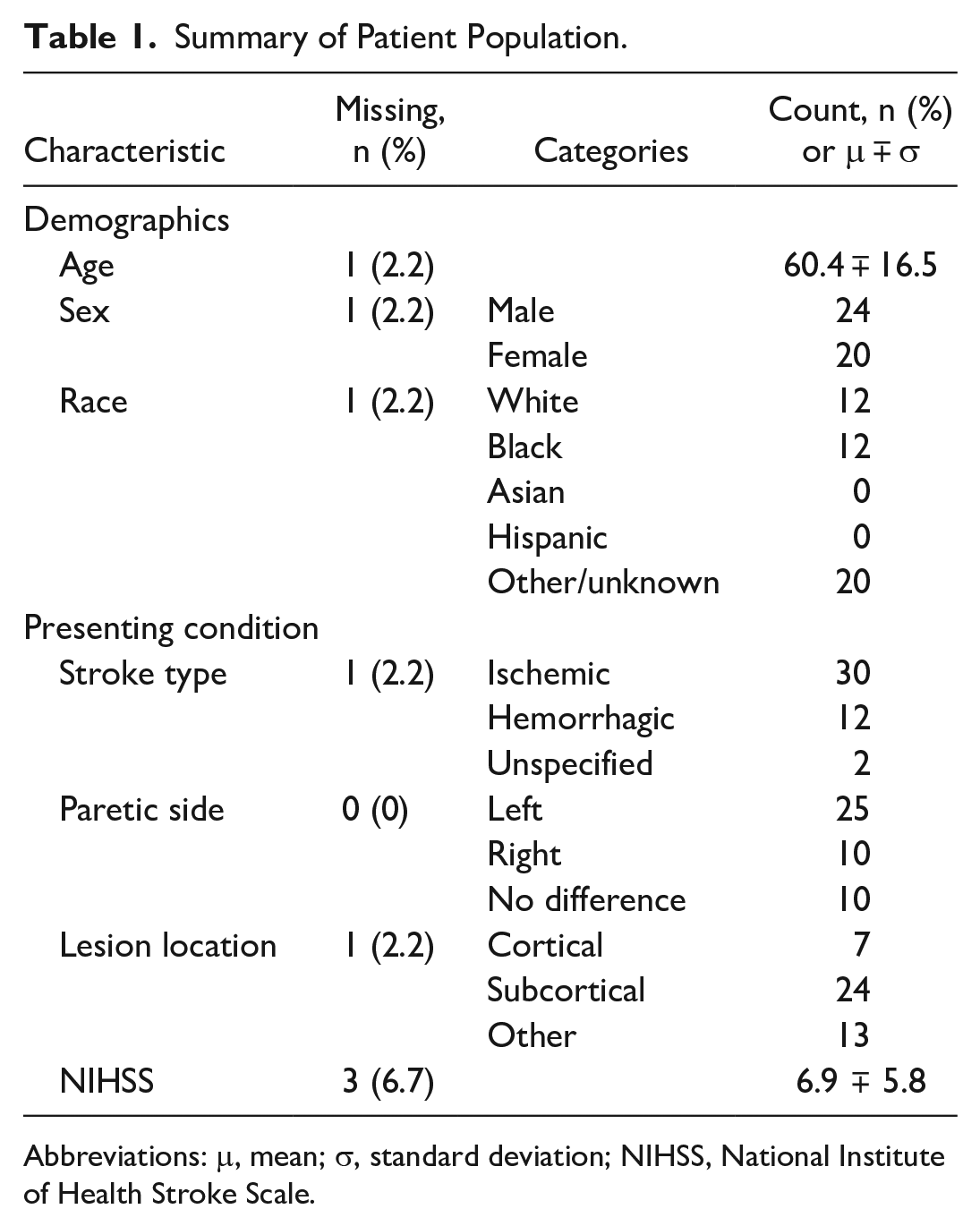

A total of 45 study participants completed at least 1 Fugl-Meyer assessment and are included in the analysis. A summary describing patient demographics and conditions is provided in Table 1. NIH Stroke Scores (NIHSS) were taken at admission and recorded by hospital staff on the patient’s electronic health record. Demographic information for 1 patient was missing due to a documentation glitch, but the subject provided informed consent and participated in all study activities.

Summary of Patient Population.

Abbreviations: μ, mean; σ, standard deviation; NIHSS, National Institutes of Health Stroke Scale.

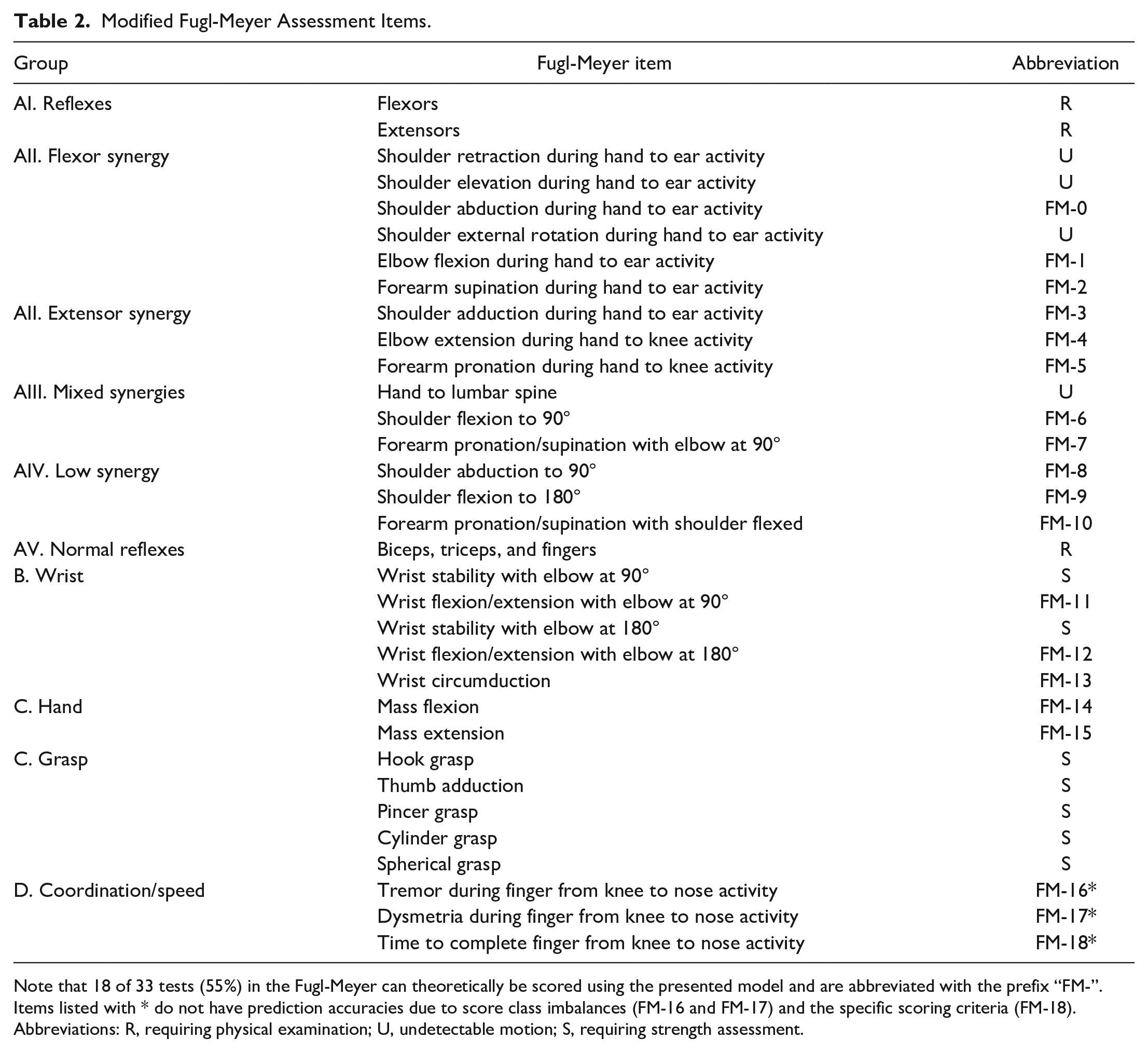

Modified Fugl-Meyer Assessment Items

Table 2 categorizes and summarizes the Fugl-Meyer assessment and identifies scorable items with an abbreviation. Items that cannot be scored fall under 1 of 3 categories:

Requiring physical examination (R)

Involving occluded joints or undetectable motion (U)

Requiring strength assessment (S)

Modified Fugl-Meyer Assessment Items.

Note that 18 of 33 tests (55%) in the Fugl-Meyer can theoretically be scored using the presented model and are abbreviated with the prefix “FM-”. Items listed with * do not have prediction accuracies due to score class imbalances (FM-16 and FM-17) and the specific scoring criteria (FM-18).

Abbreviations: R, requiring physical examination; U, undetectable motion; S, requiring strength assessment.

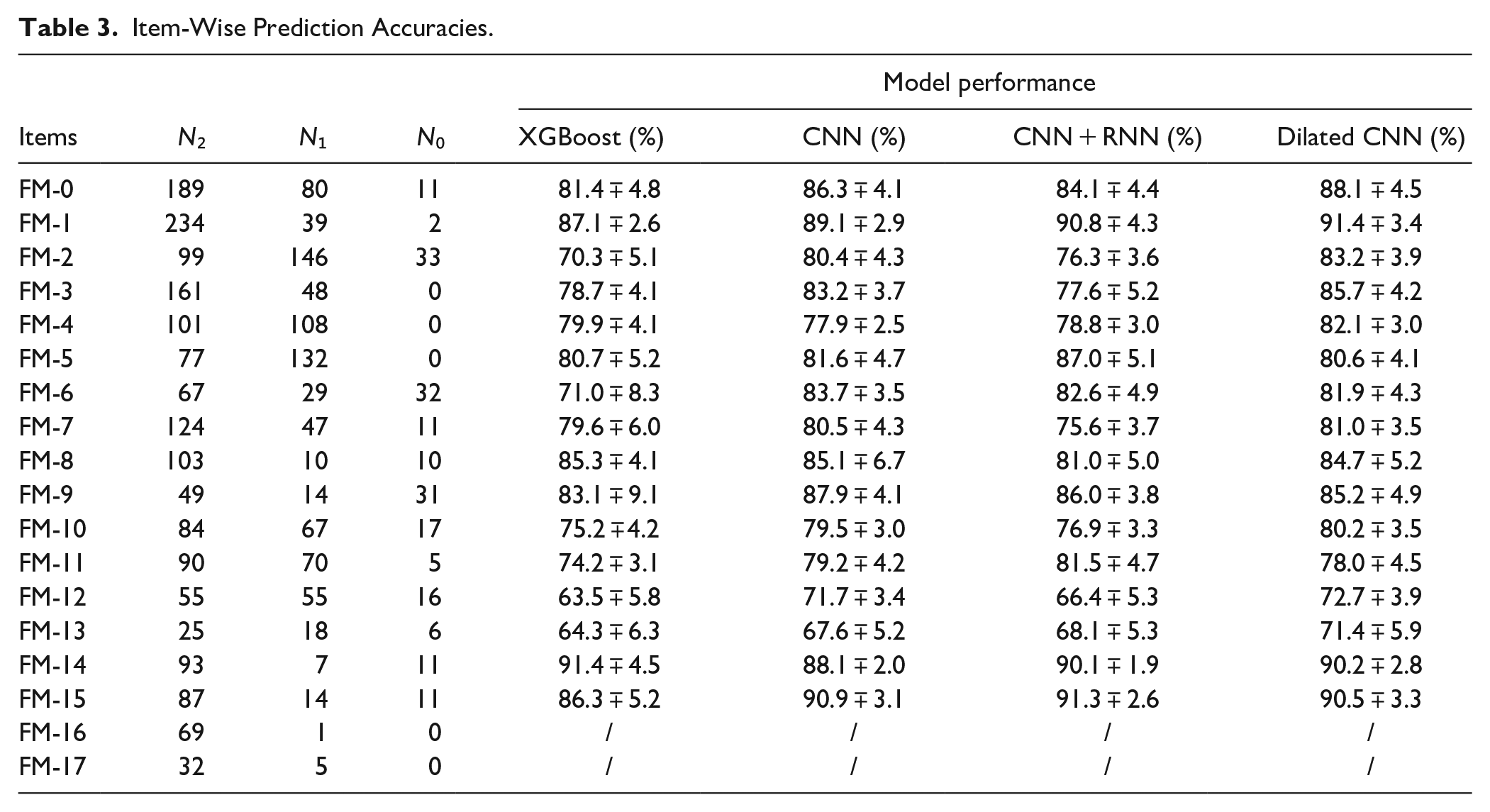

Item-Wise and Group-Wise Prediction Accuracies

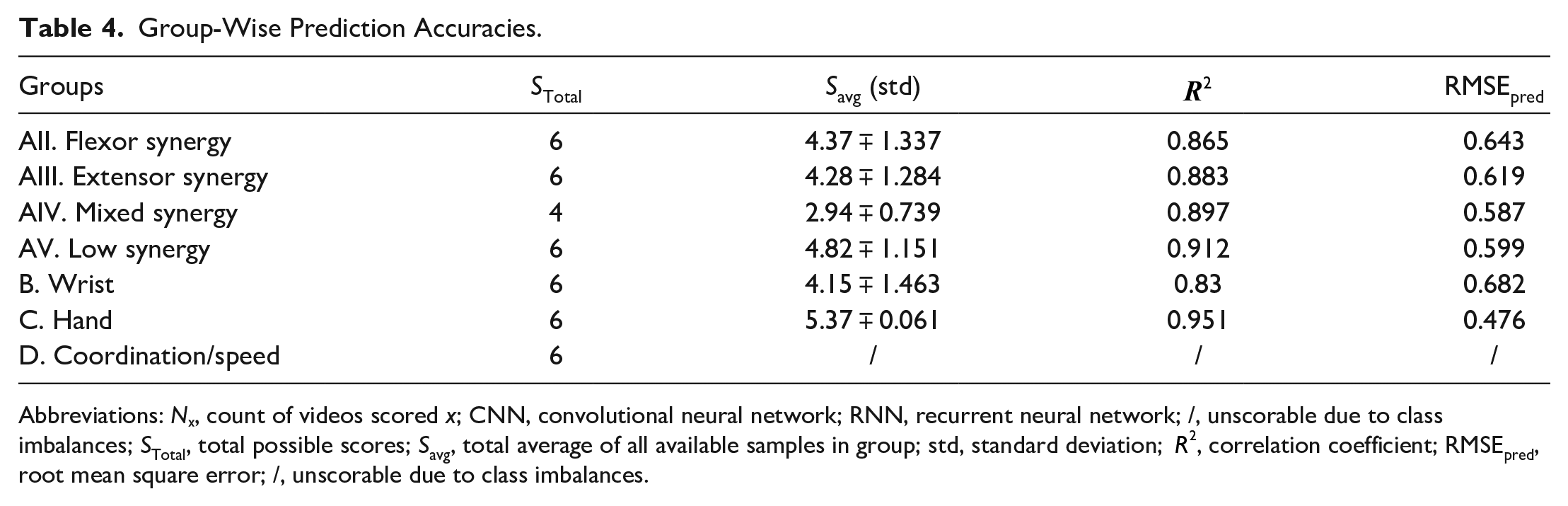

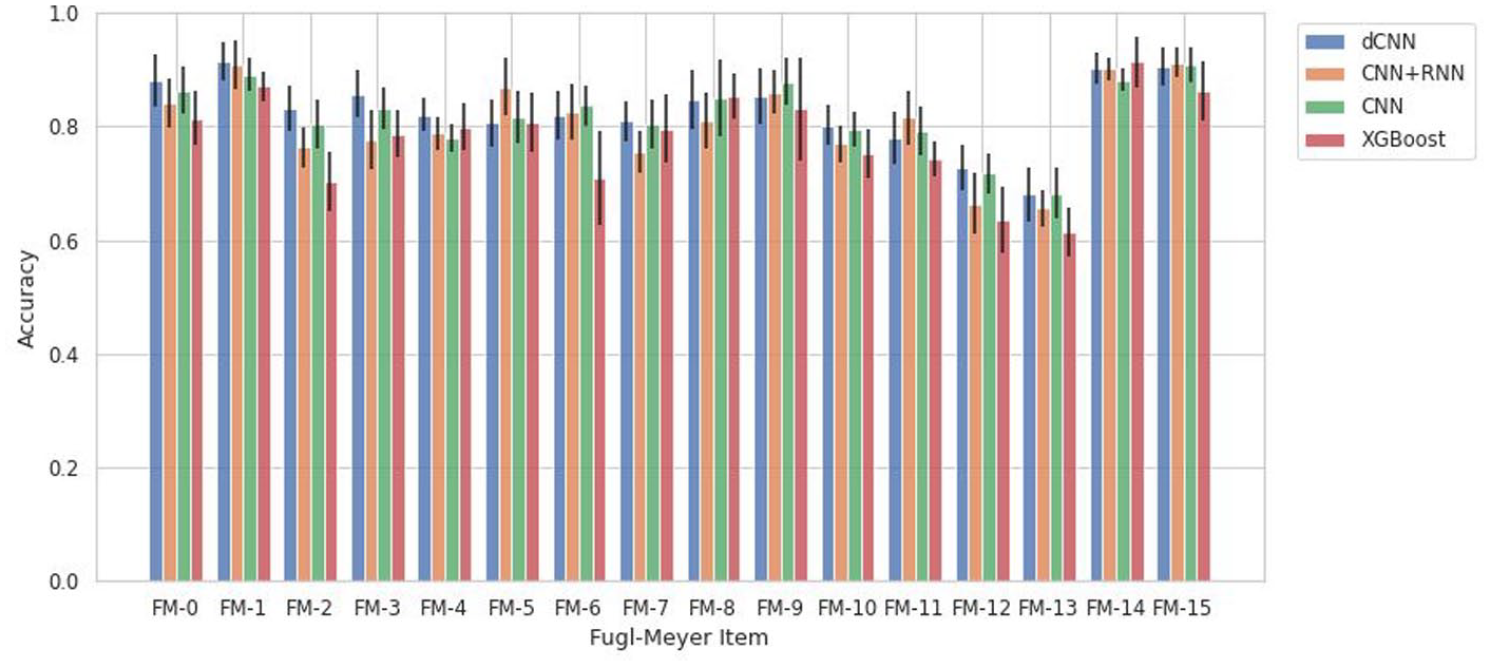

Tables 3 and 4 illustrate the various models’ ability to predict scores from the videos for each individual item, described as item-wise, and for the predefined categories of the Fugl-Meyer, described as group-wise. Table 3 also lists the number of videos for each class (0, 1, 2) for each Fugl-Meyer item. To test the accuracy and generalizability of the model at multiple structural levels, group-wise predictions were conducted for the dilated CNN model, described in Table 4. Since each group includes 2 or 3 items, we take the sum of scores for each patient, with a potential total score of 4 or 6, respectively, treat it as a regression problem, and evaluate the performance using RMSE. Figure 2 reiterates the tabular item-wise prediction accuracies in graphical form. Average accuracies are 82.7 ± 1.6%, 80.7 ± 1.7%, 76.4 ± 1.6%, and 78.3 ± 2.2% for the dilated CNN, CNN and RNN, CNN, and XGBoost models, respectively. Strong correlation between model predictions and actual scores is seen when analyzed group-wise; correlation coefficients range between 0.83 and 0.951 and average 0.89. For the XGBoost models, we tried to identify the features that contribute most to prediction on each item, and the results are shown in Figure A2. Moreover, we evaluated the inter-rater agreement in scoring Fugl-Meyer items from video clips; details of the comparison experiment can be found in the Inter-rater Agreement Analysis part of the Appendix.

Item-Wise Prediction Accuracies.

Group-Wise Prediction Accuracies.

Abbreviations: Nₓ, count of videos scored x; CNN, convolutional neural network; RNN, recurrent neural network; /, unscorable due to class imbalances; STotal, total possible scores; Savg, total average of all available samples in group; std, standard deviation;

Prediction accuracies with standard deviation bars generated from the various scoring models grouped by Fugl-Meyer item.
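The group-wise evaluation described above (summing item scores within a group, then computing the coefficient of determination and RMSE) can be sketched as below. The scores are made up for illustration, and the function name `groupwise_metrics` and the regression direction (actual totals regressed on predicted totals) are assumptions; for a simple linear fit, the coefficient of determination equals the squared Pearson correlation in either direction.

```python
import numpy as np


def groupwise_metrics(pred_items, true_items):
    """Sum item scores per patient, then return (R^2 of a linear fit, RMSE)."""
    pred_total = np.asarray(pred_items, dtype=float).sum(axis=1)
    true_total = np.asarray(true_items, dtype=float).sum(axis=1)

    # Least-squares line: true_total ~ slope * pred_total + intercept.
    slope, intercept = np.polyfit(pred_total, true_total, deg=1)
    fitted = slope * pred_total + intercept
    ss_res = np.sum((true_total - fitted) ** 2)
    ss_tot = np.sum((true_total - true_total.mean()) ** 2)
    r2 = 1.0 - ss_res / ss_tot

    # RMSE of the raw difference between predicted and actual group totals.
    rmse = np.sqrt(np.mean((pred_total - true_total) ** 2))
    return float(r2), float(rmse)


# Made-up scores for 5 patients in a 3-item group (maximum total of 6).
true_items = [[2, 2, 2], [1, 1, 0], [0, 0, 0], [2, 1, 1], [1, 2, 2]]
pred_items = [[2, 2, 2], [1, 0, 0], [0, 0, 1], [2, 1, 1], [2, 2, 2]]

r2, rmse = groupwise_metrics(pred_items, true_items)
print(f"R^2 = {r2:.3f}, RMSE = {rmse:.3f}")
```

Only patients with every item in the group scored can contribute a total, which is why incomplete assessments reduce the group-wise sample size.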

Discussion

In this study, we demonstrate the feasibility of a low-cost and highly accessible method to automatically score components of the Fugl-Meyer UE assessment. We used data provided by 45 study participants whose demographic and clinical diversity is similar to that of the greater stroke patient population.

Traditional methods automating the Fugl-Meyer assessment rely on a combination of different motion capture devices and scoring techniques: Table A1 summarizes the recording apparatus, count of scorable Fugl-Meyer items, scoring methods, and results of several studies for reference. All related studies use at minimum 1 Kinect camera to capture motion for their automation. With this recording configuration, 1 model 19 predicts Fugl-Meyer scores with accuracies ranging between 65% and 87% depending on the item, and another model’s 20 results, which are described as correlations between qualitative and quantitative scores, vary greatly depending on the activity, showing virtually no correlation for flexor synergy (.03) and strong correlation for wrist flexion (.97). Other methods 21 use 2 Kinect cameras to capture 3D body representations and a random forest model to predict 2 Fugl-Meyer item scores at 91% and 59% accuracy. Studies 22 also occasionally employ force sensors and inertial measurement units to score up to 26 and 25 items, respectively; support vector machines and backpropagation neural networks for scoring achieved prediction accuracies of 86% and 93% for each model, 22 and scoring activities using a binary rule-based classification method 22 yielded accuracies ranging between 66.7% and 100% depending on the Fugl-Meyer item.

Among the most important shortcomings of these studies is the employment of complex and costly technologies. All related studies rely heavily on depth sensing with the Microsoft Kinect camera. Issues with this camera include detection of subtle movements like supination and pronation, noise and inaccuracy when joints are occluded, reliance on infrared for motion capture, and poor hand tracking. 22 The use of external devices 22 allows scoring of additional Fugl-Meyer items, which improves clinical utility, but at the expense of reducing the accessibility of the proposed technology, which is a focus of our study.

Video information analyzed by deep-learning motion detection models is the most accessible and least costly alternative to Kinect depth sensors and marker-based motion capture technology. The smartphone is a ubiquitous tool among all generations and in all households, making it a prime candidate for reaching geographically and financially isolated populations; the methods presented in our study can be implemented practically with pre-existing technology in remote settings, although it will be important in future studies to assess our automated method on handheld devices. Most importantly, we show that these methods can compete with and even outperform traditional methods of automating the Fugl-Meyer assessment. Depending on the model, the average accuracy ranged between 78.1% and 82.7% for individual Fugl-Meyer items. Strong correlation (

For item-wise accuracies, all models struggled the most with wrist circumduction, likely attributable to the low sample size of this activity. This item’s videos were not cut into individual repetitions because the activity is performed quickly and with poorly identifiable start and finish points. The group-wise accuracies presented in Table 4 suffer from low sample size because the therapist was frequently unable to score items on the Fugl-Meyer assessment owing to the subject’s unique disability in the acute hospital setting. This often led to samples with incomplete Fugl-Meyer assessment scores, which were excluded from this table even if only 1 item was unscored. We plan to conduct future studies in outpatient settings in order to obtain more complete Fugl-Meyer recordings, which could inform us about the method’s errors and possible correlations with severity of stroke. However, this is not a focus of this study, as our goal is to study the individual components of the Fugl-Meyer, and we were able to obtain a sufficient number of samples for each component to conduct the ML analyses (as indicated in Table 3). Furthermore, we wanted to focus on the individual components of the score, which are clinically more meaningful than the total score.

Alternative methods to auto-scoring machine learning models were attempted, most notably rule-based classification. 22 An assortment of features described in Table A2 were calculated from the joint positional coordinates and employed in a logical scoring system that was both clinically interpretable and unique to each item. However, noise generated by the motion detection algorithm and volatility of angles produced when joints were collinear with the camera line-of-sight led to poor performance overall: rule-based classification averaged an accuracy of 66.7% with 3 items failing to exceed 50% and 6 items failing to exceed 60%. Auto-scoring machine learning methods tolerate noise from the motion detection algorithm and the volatility natural to 2D joint extractions from 3D movements; a sufficiently large sample of training data could compensate for the associated loss in clinical interpretability.

Limitations of this study include some loss of clinical utility described previously, attributable to several factors. The motion detection model used in this study does not appreciate the real geometry of many joints and physical position of the upper extremities. The ball-and-socket glenohumeral joint allows for internal and external rotation of the arm, which is undetectable by the current model. This paired with obfuscation of the scapulothoracic joint reduces the number of scorable items and may limit the scope of the model’s clinical utility. These critical, unidentifiable movements reduce the total item count by 4. However, it is possible that this model could infer information about these joints and mitigate this occlusion with sufficient data. Other unscorable items involve UE functions that are invisible to cameras and require an in-person examiner, including reflexes, wrist strength, and grip strength.

The distribution of video scores among subject videos presents another challenge to model performance: imbalanced classes are most evident in items FM-3, FM-4, FM-5, FM-16, and FM-17. However, FM-3, FM-4, and FM-5 still have enough samples distributed between 2 of the 3 classes for differentiation by the auto-scorer. Fugl-Meyer items assessing tremors and dysmetria, abbreviated FM-16 and FM-17, were collected and scored by the occupational therapist, but severe imbalances prevented training of the models: there were 69, 1, and 0 videos scored 2, 1, and 0 for tremors and 32, 5, and 0 videos scored 2, 1, and 0 for dysmetria, respectively. For this reason, it is likely these items can be scored by these model architectures in theory with sufficient data, but it is not proven in this study.

FM-18, or the time taken during the coordination and speed activity item, cannot be scored using the machine learning models because the criterion is strictly rule-based in design. Inputs for the neural networks and XGBoost do not include any reference to the total number of video frames, so differences in activity duration are undetectable. However, this item is easily scored by other means: if submitted videos begin at the start of the activity item and finish at the end of the activity item, the number of frames and the frame rate of the camera provide a score for FM-18.
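The frame-count approach described above amounts to dividing the number of frames by the frame rate and comparing the two sides. A minimal sketch follows. The thresholds are an assumption taken from the standard Fugl-Meyer UE form (a difference under 2 seconds scores 2, 2 to 5 seconds slower scores 1, and 6 or more seconds slower scores 0) and are not stated in this paper; differences between 5 and 6 seconds are treated as a score of 1 here. The function names are hypothetical.

```python
def clip_duration_seconds(n_frames, fps=60.0):
    """Duration of a clip trimmed to the start and end of the activity."""
    if fps <= 0:
        raise ValueError("fps must be positive")
    return n_frames / fps


def score_fm18(paretic_frames, nonparetic_frames, fps=60.0):
    """Score the time item from frame counts of the paretic and non-paretic clips.

    Thresholds are assumed from the standard Fugl-Meyer UE form, not from
    this study.
    """
    slower_by = (clip_duration_seconds(paretic_frames, fps)
                 - clip_duration_seconds(nonparetic_frames, fps))
    if slower_by >= 6.0:
        return 0
    if slower_by >= 2.0:
        return 1
    return 2


# At 60 frames per second: 600 frames = 10 s, 540 frames = 9 s.
print(score_fm18(600, 540))    # 2  (1 s slower)
print(score_fm18(900, 600))    # 1  (5 s slower)
print(score_fm18(1200, 600))   # 0  (10 s slower)
```

The result depends on how precisely each clip is trimmed, so any automated use would need a reliable way to detect the start and end of the activity.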

Conclusion

This paper presents a method for low-cost automatic assessment of UE impairment in stroke patients. We show the designed models can score 16 of 33 (49%) items in the Fugl-Meyer assessment, with accuracies ranging from 78.1% to 82.7% for each item. When grouped by Fugl-Meyer category, strong correlations between model prediction and actual scores were achieved (

In future studies, we envision several changes that could help establish this method as an effective solution to the growing issue of healthcare inaccessibility among stroke patients in rural settings. We would also like to explore the feasibility of this method in a larger population; recording in an outpatient clinical setting or the subject’s home would help acquire more data for training the models and test this technology’s ability to function in its intended environment. Automated Fugl-Meyer assessment could be used in rehabilitation trials to provide intermittent assessments during interventions, easily performed in the patient’s own home. The data obtained through automated Fugl-Meyer assessment could further be used to define “rehabilitation success” and even “rehabilitation potential,” enabling clinicians to make informed decisions for patient care. However, before widespread application of our method, we will first need to determine which additional components of the FM can be automated and then re-test its validity and reliability. We also need to determine in longitudinal studies whether this method will be able to discern minimal clinically important differences in FM. Lastly, we believe that consistent camera placement, ample lighting, and an unobscured subject are important for optimal quality of motion detection. Future studies will be helpful to determine which of these parameters are essential for optimal quality.

Footnotes

Appendix

Features Extracted.

| Feature name | Description | Abbreviation |
|---|---|---|
| Initial metrics | | |
| Shoulder ROM | List of angles between arm and torso | Sh_ROM |
| Elbow angle | List of angles between axis of arm and forearm | EA |
| Wrist ROM | List of vertical distances between fingers and wrist joint | Wr |
| Pro.-Sup. | List containing classifications of “supination,” “pronation,” or “neutral” | Pro_Sup |
| First 10% | Isolates first 10% of video frames/the beginning of activity | F10 |
| Last 90% | Isolates last 90% of video frames/after the beginning of activity | L90 |
| Last 10% | Isolates last 10% of video frames/the end of activity | L10 |
| Speed | List of changes in values from another list, like speed | Spd |
| Maximum | Highest value of list | Max |
| Minimum | Lowest value of list | Min |
| Average | Average value of a list | Avg |
| Mode | Most common value in a list | Mod |
| Std. Dev. | Standard deviation of the values in a list | SDev |
| First digit DIP | List of positions of the first digit’s distal interphalangeal joint | 1DIP |
| Third digit MCP | List of positions of the third digit’s metacarpophalangeal joint | 3MCP |
| Third digit DIP | List of positions of the third digit’s distal interphalangeal joint | 3DIP |
| Fifth digit DIP | List of positions of the fifth digit’s distal interphalangeal joint | 5DIP |
| Wrist position | List of positions of the wrist | WrP |
| Distance between 2 joints | List of Euclidean distances between 2 joints labeled x and y | Dis (x, y) |
| Ratio between 2 distances | A ratio of minimum distance to maximum distance between joints labeled x and y | R (x, y) |
| Model inputs | | |
| Max(Sh_ROM) | Highest angle between arm and torso achieved during exercise | |
| Avg(Spd(Sh_ROM)) | Average angular speed of the arm during abduction | |
| Max(Spd(Sh_ROM)) | The maximum angular speed of the arm during abduction | |
| Max(EA) | Greatest amount of elbow flexion | |
| Min(EA) | Greatest amount of elbow extension | |
| Max(F10(EA)) | Greatest angle of flexion in the first 10% of video frames | |
| Max(L90(EA)) | Greatest angle of flexion in the last 90% of video frames | |
| Avg(EA) | Average angle between arm and forearm during exercise | |
| Avg(Spd(EA)) | Average speed arm is flexed or extended during exercise | |
| Max(WrY)-Min(WrY) | The total vertical ROM of the wrist | |
| Max(WrX)-Min(WrX) | The total horizontal ROM of the wrist | |
| SDev(Wr) | Standard deviation from the mean position of the wrist | |
| Avg(Spd(Wr)) | Average speed the subject moves their wrist | |
| Mode(L10(Pro_Sup)) | At the end of an exercise, the highest frequency of hand positions classified as “supinated,” “pronated,” or “neutral” | |
| Max(Spd(5DIP)) | The maximum speed of the fifth digit | |
| Avg(Spd(5DIP)) | The average speed of the fifth digit | |
| Max(Spd(1DIP)) | The maximum speed of the thumb | |
| Avg(Spd(1DIP)) | The average speed of the thumb | |
| Min(Dis(WrP, 3DIP)) | The smallest distance between the wrist and third digit | |
| Max(Dis(WrP, 3DIP)) | The greatest distance between the wrist and third digit | |
| R(WrP, 3DIP) | The ratio of the smallest distance to greatest distance between the wrist and the third digit | |
| Min(Dis(3MCP, 3DIP)) | The minimum distance between the third digit’s metacarpophalangeal joint and the distal interphalangeal joint | |
| Max(Dis(3MCP, 3DIP)) | The maximum distance between the third digit’s metacarpophalangeal joint and the distal interphalangeal joint | |

Elements of the list correspond to frames in video clips. F10, L90, and L10 assess metrics based on their values at the beginning of the exercise, after the beginning of the exercise, or at the end of the exercise.

Abbreviations: ROM, range-of-motion; Pro.-Sup., pronation-supination status; Std. Dev., standard deviation.
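To make the table concrete, the sketch below computes a few of the listed inputs (Max(EA), Min(EA), Avg(EA), Avg(Spd(EA)), Max(F10(EA))) from per-frame joint coordinates. The helper names and the toy trajectory are hypothetical. The elbow angle is interpreted here as the angle between the arm axis (shoulder to elbow) and the forearm axis (elbow to wrist), so that a straight arm gives 0 degrees and flexion gives larger values; this reading is an assumption chosen to agree with the table's description of Max(EA) as greatest flexion. Speed is expressed per frame.

```python
import numpy as np


def elbow_angle(shoulder, elbow, wrist):
    """Per-frame angle (degrees) between arm axis and forearm axis.

    Inputs are (T, 2) arrays of xy coordinates; 0 means a fully straight arm.
    """
    arm = elbow - shoulder
    forearm = wrist - elbow
    cos = np.sum(arm * forearm, axis=1) / (
        np.linalg.norm(arm, axis=1) * np.linalg.norm(forearm, axis=1))
    return np.degrees(np.arccos(np.clip(cos, -1.0, 1.0)))


def first_fraction(values, frac=0.10):
    """F10: the first 10% of frames (at least one frame)."""
    n = max(1, int(np.ceil(frac * len(values))))
    return values[:n]


def speed(values):
    """Spd: absolute change between consecutive frames."""
    return np.abs(np.diff(values))


# Toy trajectory: the forearm swings from straight (0 deg) to 90 deg over 10 frames.
theta = np.radians(np.linspace(0.0, 90.0, 10))
shoulder = np.zeros((10, 2))
elbow = np.tile([0.0, 1.0], (10, 1))
wrist = elbow + np.stack([np.sin(theta), np.cos(theta)], axis=1)

ea = elbow_angle(shoulder, elbow, wrist)
features = {
    "Max(EA)": float(ea.max()),
    "Min(EA)": float(ea.min()),
    "Avg(EA)": float(ea.mean()),
    "Avg(Spd(EA))": float(speed(ea).mean()),
    "Max(F10(EA))": float(first_fraction(ea).max()),
}
for name, value in features.items():
    print(f"{name}: {value:.2f}")
```

Because the coordinates are 2D projections, angles computed this way become unstable when a limb segment points toward the camera, which is the volatility noted in the discussion of rule-based scoring.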

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: XJ is a CPRIT Scholar in Cancer Research (RR180012), and he was supported in part by the Christopher Sarofim Family Professorship, UT Stars award, UTHealth startup, the National Institutes of Health (NIH) under award numbers R01AG066749 and U01TR002062, and the National Science Foundation (NSF) #2124789. KT and SZ are partially supported by the Giassell family research innovation fund through the School of Biomedical Informatics. SS and XJ were supported by the Ovarian Cancer Research Alliance (OCRA) through research grant support (CRDGAI-2023–3-1002).