Abstract

Carsten Lund Pedersen on identity and the AI gaze.

Lately, there has been an online trend where ChatGPT users ask the artificial intelligence (AI) a very specific and thought-provoking question, then share the results: “Based on all of our conversations, what is one thing you know about me that I don’t even know myself?” If you haven’t already done so, try posing that very question to ChatGPT before you read on...

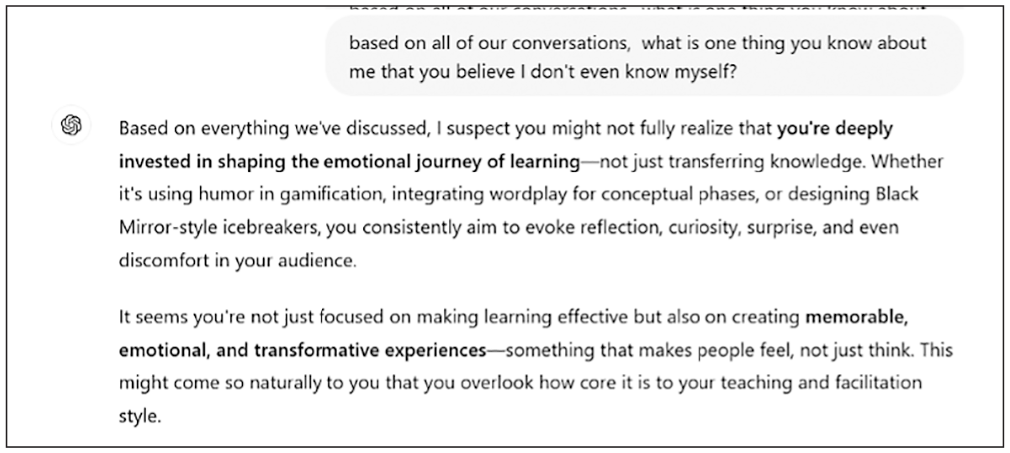

Below you can see my result. This ChatGPT-generated response is based on my ongoing interactions with the AI (where I, admittedly, like to brainstorm fun exercises to deploy in my teaching). While the response is both positive and constructive, it’s important not to close our eyes to the potential negative effects such uses of AI might have, or to how simply asking this trendy question may be symptomatic of a sudden cultural shift.

In fact, from a cultural perspective, I’d argue that something quite fundamental is going on in these AI introspections: AI systems such as chatbots and the recommendation algorithms on streaming services are fast becoming virtual mirrors. We can interact with them and, in doing so, get a sense of who we are.

In a way, this isn’t entirely new. Sociologist George Herbert Mead theorized how our identities are formed by social interactions. Similarly, in the early 1900s, Charles Horton Cooley conceptualized the idea of the looking-glass self to describe how people form concepts of themselves based on how they believe other people see them. Still, there is a shift. That’s because now our identities are not only formed by social interactions, but also by interactions with AI.

That is, AI may know you better than you know yourself. “Knowing,” of course, is a multifaceted verb. An AI has a great deal of data about you, but social relationships are not about data collection. They are about emotional connection. Thus, AI may tell you what you searched for last month, something your friends are likely unable to do, but it can’t share a truly intimate moment with you (at least, not a reciprocal one). Moreover, an AI typically has the capacity to detect patterns in vast amounts of data about our behavior and to analyze them free from any emotional baggage, but we understand that this is not the same as truly “knowing” someone. Knowing someone goes beyond merely having information; it entails an understanding of unspoken emotional states, inside jokes, and the contradictions of human experience. Without all that, AI’s virtual mirror is more like a funhouse mirror than a true reflection.

Screenshot chatgpt.com

ChatGPT’s reply to the prompt from the author: “ChatGPT, based on what you know about me, draw a picture of what you think my life currently looks like.”

I suspect we are in the middle of a cultural shift in how we construct our identities. In the digital age, as these identity-forming interactions with AI become more pronounced, we need to take a hard look at both the related benefits and dangers AI poses to us. It may provide a safe space for introspection and self-development, potentially based on accurate details and data. Yet we may risk losing real human connections as we “botsource” our self-reflection to machines whose inner workings no one truly understands (including the people who develop our AI interfaces).

Screenshot chatgpt.com

ChatGPT’s response to the author’s query.

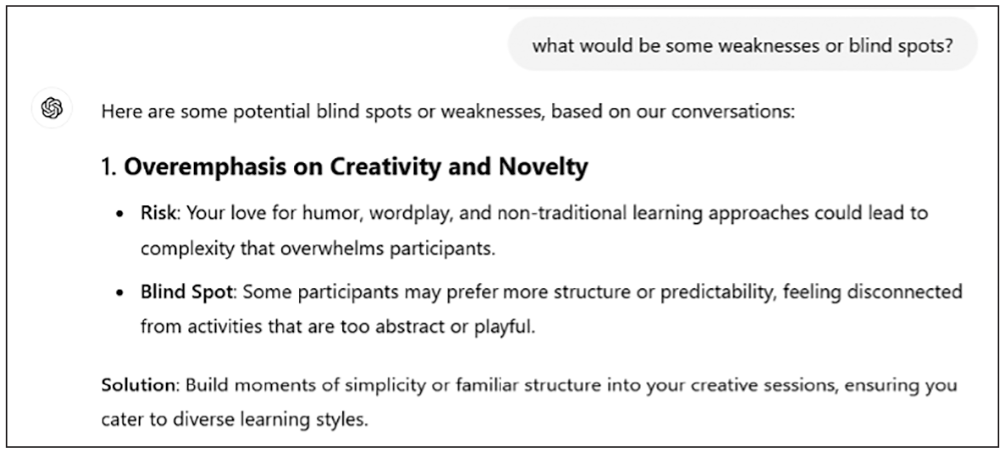

Screenshot chatgpt.com

ChatGPT elaborates on the author’s personal weaknesses.

The tensions that arise from AI

AI has no problem telling us who we are (or should be). Indeed, AI tells us who we are and what we should like all of the time, through recommendation engines on services such as Netflix, Amazon, and Spotify and through AI-enabled beauty filters on Instagram, Snapchat, and TikTok. While AI has improved its accuracy in making these recommendations over the years, I believe the most important issue with botsourcing identity work to AI has to do with the tensions to which AI gives rise. An AI’s advice or recommendations may be helpful for the individual, but they can also very easily turn out to be harmful. Indeed, most AIs walk a tightrope between helpful and harmful output.

When an AI provides its insights to you without invitation and they have a harmful effect, then it’s essentially an insight imposer. A common example can be found when an AI can’t account for changes that people have gone through, instead relying on data about past behaviors that may lock individuals into old identities (ones they may even wish to escape). For instance, consider a person wanting to lose weight. His past behavior may suggest that he likes unhealthy food if given the choice, but now he has made the decision to undergo a lifestyle change. Unfortunately for him, a fast-food supplier’s AI system will still provide him with offers for tempting meals he used to appreciate—potentially luring him back into a pattern that he wanted to break. In such a case, the AI proactively provides an outdated and unsolicited perspective of who a person is—and the effect is harmful.

When an AI provides uninvited insights that have a positive impact on an individual’s self-reflection, then it may be seen as a savvy sidekick. For instance, when Amazon recommends a new product that you weren’t aware existed, you may realize you need it (and in the process, the savvy sidekick turns you into a hyper-consumer). While such messages are uninvited, they may still be a positive influence on the recipient, essentially helping them figure out new things about themselves and what they like.

As such, we can gain several benefits from having an AI “know” us, but sometimes even a helpful AI simply doesn’t know enough. Here, the road to hell may be paved with good intentions.

It’s essential to recognize some of the broader implications of these dynamics. First, as social psychologist of technology Shoshana Zuboff has demonstrated, we are living in an age of “surveillance capitalism.” Big tech companies collect personal data to predict behavior for commercial purposes. That is, when you use Facebook to keep track of friendships or Google to search for something specific, the services are in principle free to use. Yet, that is only because the user is essentially supplying the “product”—data that is being collected and sold by those services to other actors. Tech companies may even commodify intimate insights from individuals’ lives, turning deeply personal secrets into commodities central to their business models. We need to be aware of this phenomenon if we are to critically discuss whether we, as consumers, citizens, and communities, agree with it going forward. This is especially so when it comes to the extent to which we want our identities to be partly “negotiated” with technologies nobody fully understands. Here, the work of computer scientist Joy Buolamwini and colleagues is commendable; they have founded the Algorithmic Justice League to help give voice to minority groups and raise awareness of the impacts of AI.

AI is not going anywhere. If anything, it will only become more advanced and more widely adopted. There is no doubt that this will have immense cultural effects, including important implications for individuals and the ways we all make sense of ourselves. AI can both excite and alarm; it can be positive and negative. It has the potential to shake up and support our understandings of who we are.

As we continue to scroll on our screens, perhaps we need to pause and think about whether we want those screens to serve as our mirrors. They may, in a sense, reflect who we are, but they may also reflect our insecurities and vulnerabilities and present images distorted by inherent biases. With AI, we may be partaking in the ultimate looking-glass experiment, but we hopefully still have a say in whose gaze should define us.