Abstract

Elizabeth T. Harwood on networks of provocation.

The March 2019 massacre by a self-identified fascist in New Zealand that left 50 dead and even more injured is part of a growing trend in White supremacist terrorism. Journalists were quick to latch onto the terrorist’s manifesto and livestream as evidence that this was, according to Kevin Roose of the New York Times, “an internet-native mass shooting, conceived and produced entirely within the irony-soaked discourse of modern extremism.” His manifesto, first released on the online imageboard site 8chan, heavily references various memes, online trends, and internet celebrities. He claims to have been most radicalized by Candace Owens, a young, Black woman who serves as the Communications Director for the conservative advocacy group Turning Point USA. Is this reference a genuine reflection of her extremist politics? A sarcastic attempt to throw journalists off by blaming his White supremacy on a Black woman? It may be difficult, if not nearly impossible, to cut through the dense field of acerbic mockery the terrorist left behind. Preliminary computational analysis of the text reveals similarities in discourse between the terrorist and various influencers like Candace Owens.

Online Radicalization

The terrorist responsible for the New Zealand massacre was radicalized through online right-wing subcultures. According to YouTube’s mission statement, the platform exists to give everyone a voice through freedom of expression, freedom of information, freedom of opportunity, and freedom to belong. They go so far as to build inclusion into their mission statement: “We believe everyone should have easy, open access to information and that video is a powerful force for education, building understanding, and documenting world events, big and small.” Indeed, YouTube has the potential to spread information very broadly, including hateful ideologies.

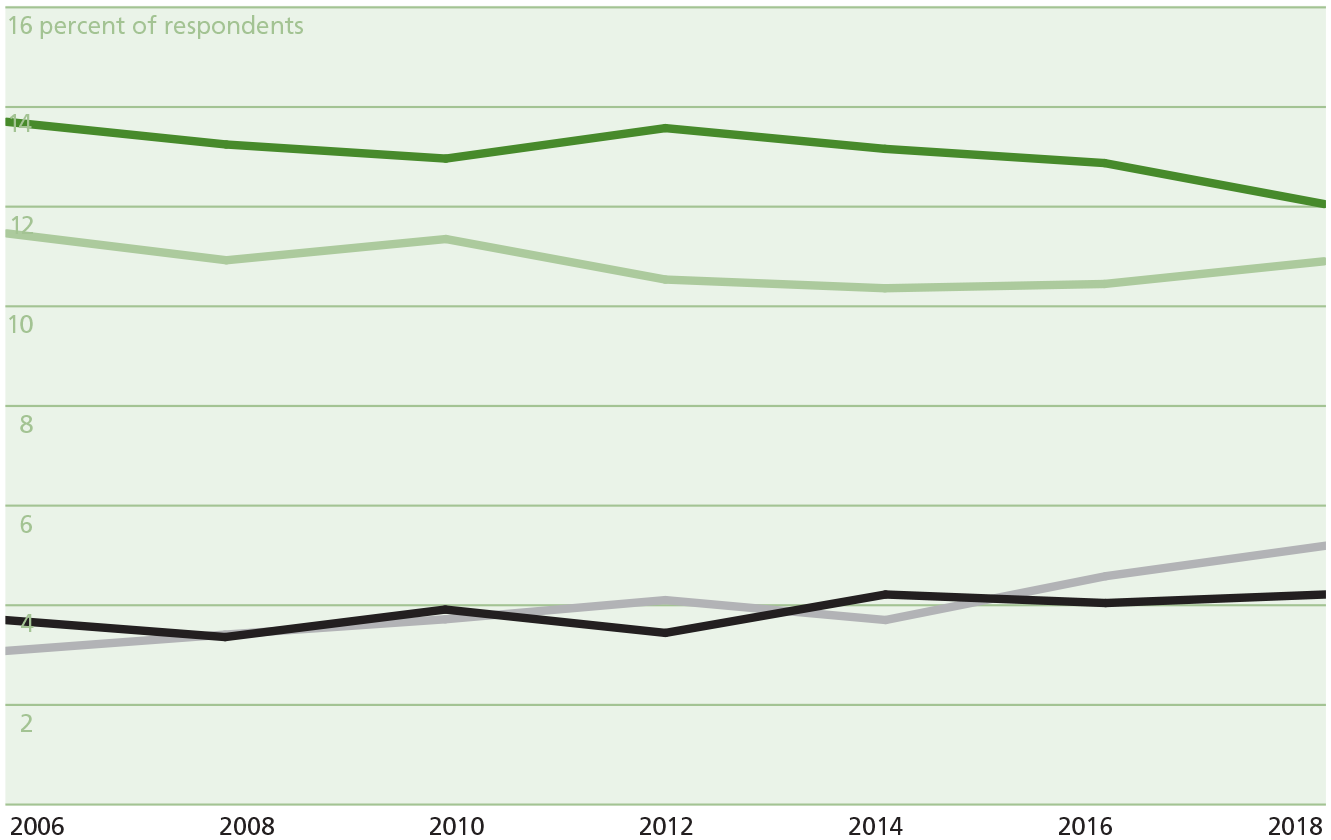

Political views of GSS respondents

Source: General Social Survey

Those who attempt to use YouTube as a news and information source are often nudged toward more radical ideas. Jack Nicas published a summary of The Wall Street Journal’s investigation into YouTube radicalization: “…YouTube’s recommendations often lead users to channels that feature conspiracy theories, partisan viewpoints and misleading videos, even when those users haven’t shown interest in such content. When users show a political bias in what they choose to view, YouTube typically recommends videos that echo those biases, often with more-extreme viewpoints.” The WSJ investigation also found that viewing clips of mainstream channels like Fox News and MSNBC can lead YouTube search results to recommend far-right or far-left videos.

The General Social Survey (GSS) reveals that respondents increasingly receive news from internet-based sources (15.1% in 2012 compared to 23% in 2018). The GSS also supports the claim that U.S. citizens have become more extreme in their political views in this same time period. Between 2006 and 2018, fewer respondents identified as being “slightly liberal” or “slightly conservative.” More came to identify their political views as “extremely liberal” or “extremely conservative.” This increase in extreme political identifications thrives in online spaces with user-generated content, such as YouTube and Twitter.

Content producers from YouTube frequently use Twitter and other online platforms to engage with fans, political pundits, and other influencers. Political scientist Richard Hanania of Columbia University found that Twitter bans conservative political accounts more frequently than liberal ones, though it is unclear whether that is the result of a greater number of conservative rulebreakers or of harsher sanctions on conservatives. Though YouTube and Twitter present different stances on censoring content and banning users, the two platforms share a large user base.

Methods

I compare the text of the New Zealand mass-shooter’s manifesto to a previously established group of influencers that communications scholar Rebecca Lewis calls the Alternative Influence Network (AIN). She defines these 65 users as “an assortment of scholars, media pundits, and internet celebrities who use YouTube to promote a range of political positions, from mainstream versions of libertarianism and conservatism, all the way to overt White nationalism.” By developing a network of influencers through guest appearances on one another’s channels, Lewis makes connections between moderate conservatives, such as Dave Rubin, and alt-right White supremacists, such as Jared Taylor.

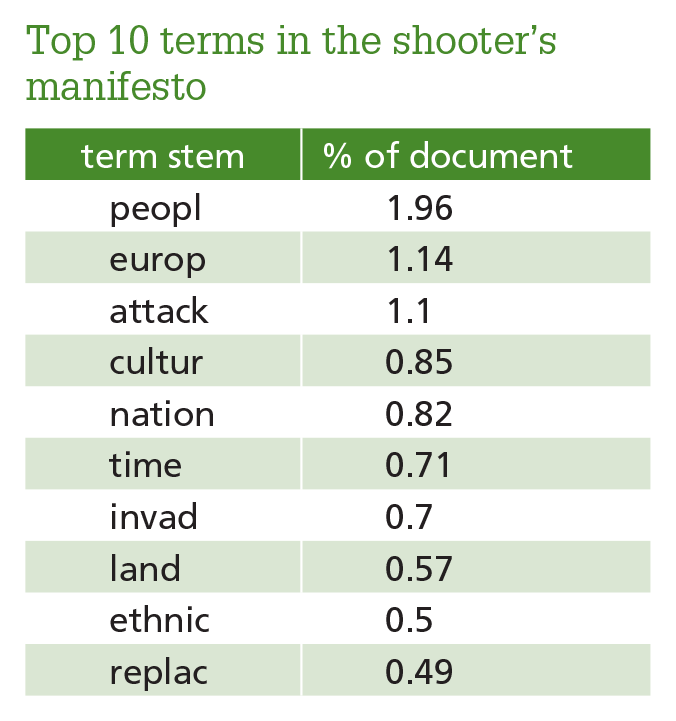

To provide a sense of the manifesto without reposting its full contents, I present a table of the top ten terms (as their word stems), after eliminating commonly used words (“the”, “could”, “there”, etc.).
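The counting step described above can be sketched roughly as follows. This is a minimal illustration, not the author's actual pipeline: the stop-word list, the suffix-stripping rule in `crude_stem`, and the sample sentence are all assumptions made for the example, and a real analysis would more likely rely on an established stemmer.

```python
import re
from collections import Counter

# Assumed, deliberately tiny stop-word list; a real analysis would use a fuller one.
STOP_WORDS = {"the", "could", "there", "a", "an", "and", "of", "to",
              "in", "is", "are", "was", "were", "be"}

# Assumed suffixes for a crude stemmer; this is NOT the Porter algorithm.
SUFFIXES = ("ing", "ed", "es", "s")


def crude_stem(word):
    """Strip the first matching suffix, keeping at least three characters."""
    for suffix in SUFFIXES:
        if word.endswith(suffix) and len(word) - len(suffix) >= 3:
            return word[: -len(suffix)]
    return word


def top_stems(text, n=10):
    """Return the n most frequent stems after removing stop words."""
    tokens = re.findall(r"[a-z]+", text.lower())
    stems = [crude_stem(t) for t in tokens if t not in STOP_WORDS]
    return Counter(stems).most_common(n)


# Neutral toy text standing in for a document.
sample = ("The gardens were planted; gardeners planted the garden "
          "and there could be planting.")
print(top_stems(sample, n=3))
```

Counting stems rather than raw words means that variants such as "planted" and "planting" are tallied together, which is why a table built this way lists word stems rather than full words.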

Unsurprisingly, words like “attack,” “replace,” and “invade” were among this terrorist’s most frequently used terms, expressing his view that non-White immigrants use high fertility rates to replace White populations. He goes so far as to condemn children in these terms as well, justifying this as a way to spare future generations from invaders. These terms and phrases represent the essence of the text and its extremist language.

As a proxy for right-wing online discourse, I utilize tweets from members of the AIN. After reducing the AIN member sample to only those who have active Twitter accounts in English with at least 5,000 tweets, my final analysis includes 39 of the influencers Lewis identified. I also include PewDiePie; while not a member of the AIN, the shooter made a brief reference to this online celebrity in his livestream of the massacre.

Top 10 terms in the shooter’s manifesto

I then compared each AIN member’s Twitter corpus to the terrorist’s manifesto using a measure of cosine similarity, a statistical method for comparing how terms are used in various documents. The closer the measure is to 0, the less similar two documents are; the closer to 1, the more similar. Documents with a cosine similarity above .4 can be said to have a moderate similarity, and those over .7 express a strong similarity. Text research is sometimes limited by its ability to account for rhetorical features such as tone, irony, or sarcasm; here, since we are comparing an “irony-soaked” document to a sarcastic, reactionary sect of the internet, the similarity between terms should be an appropriate proxy for how similar the discourses are, regardless of tone or intent.
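For readers unfamiliar with the measure, here is a minimal sketch of cosine similarity computed over raw term counts. It assumes simple whitespace tokenization and unweighted counts. The article does not say how terms were weighted, so this may differ from the analysis actually run. The function names and sample strings are hypothetical, and the `strength` labels only encode the .4 and .7 thresholds stated above.

```python
import math
from collections import Counter


def term_counts(text):
    """Lowercase the text and count whitespace-separated tokens."""
    return Counter(text.lower().split())


def cosine_similarity(counts_a, counts_b):
    """Cosine of the angle between two term-count vectors (0 to 1 for counts)."""
    shared = set(counts_a) & set(counts_b)
    dot = sum(counts_a[t] * counts_b[t] for t in shared)
    norm_a = math.sqrt(sum(v * v for v in counts_a.values()))
    norm_b = math.sqrt(sum(v * v for v in counts_b.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0  # an empty document shares nothing with any other
    return dot / (norm_a * norm_b)


def strength(score):
    """Label a score using the thresholds given in the text."""
    if score > 0.7:
        return "strong"
    if score > 0.4:
        return "moderate"
    return "weak"


# Neutral toy documents standing in for a manifesto and a tweet corpus.
doc_a = term_counts("border policy debate border")
doc_b = term_counts("policy debate tonight")
score = cosine_similarity(doc_a, doc_b)
print(round(score, 3), strength(score))
```

Because the measure depends only on which terms appear and how often, two documents score as similar whenever their vocabularies overlap heavily, whatever the tone in which the words are used.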

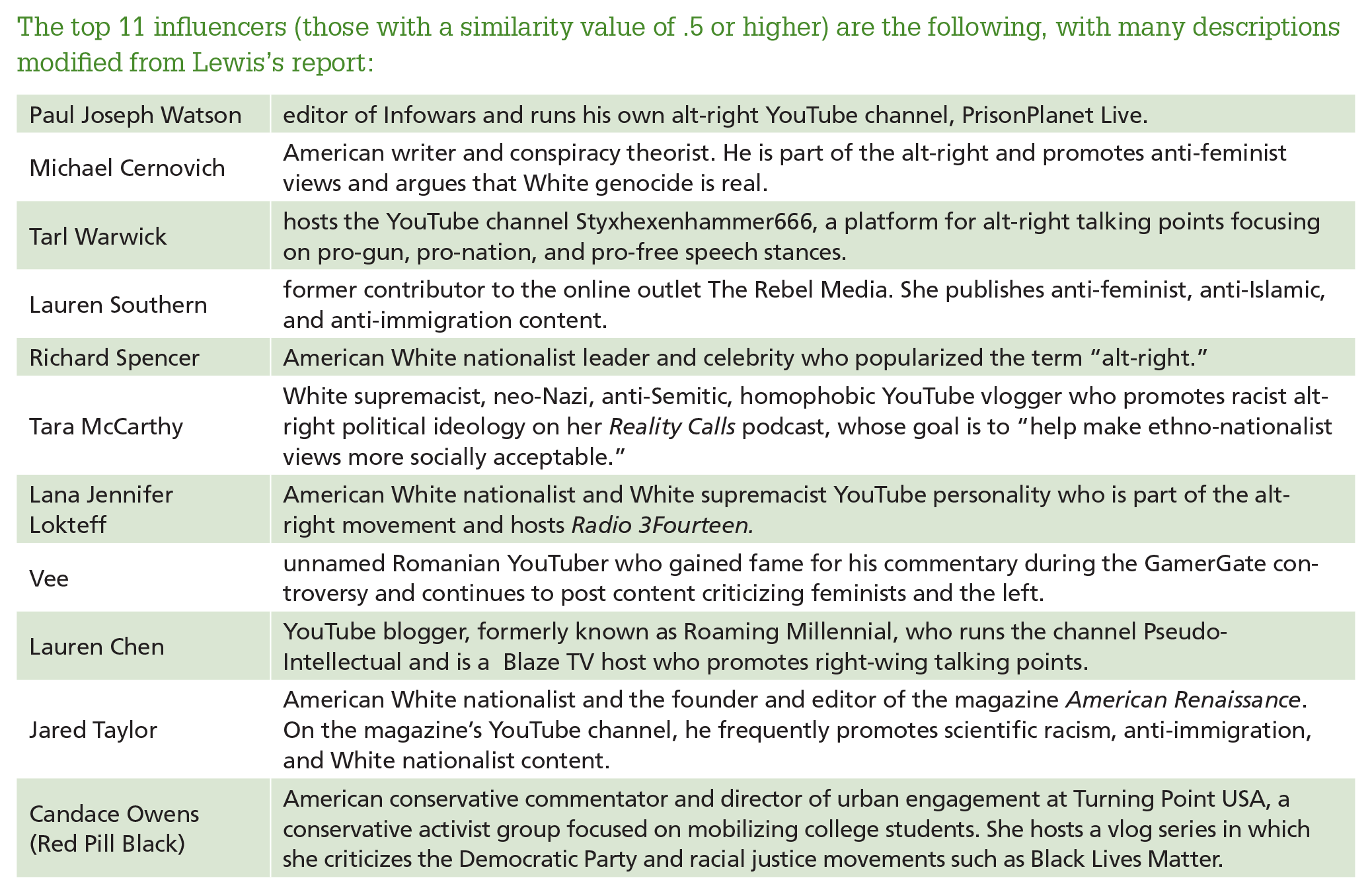

The top 11 influencers (those with a similarity value of .5 or higher) are the following, with many descriptions modified from Lewis’s report:

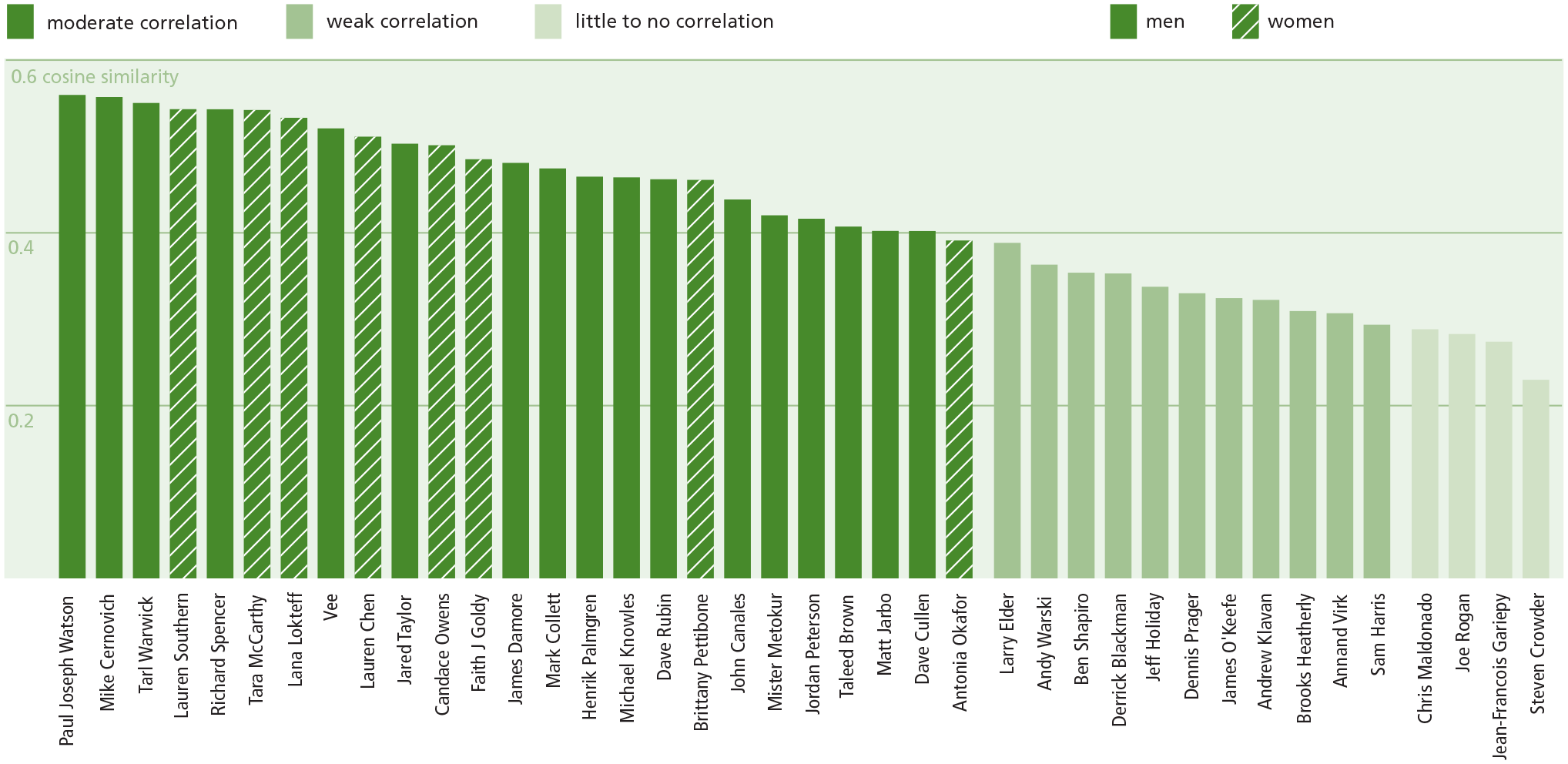

Similarity of discourse between right-wing influencers and Brenton Tarrant (with gender indication)

Source: Author cosine analysis of Christchurch shooter’s manifesto against Twitter corpuses of AIN identified influencers

The cosine similarity measure for each influencer ranged from .23 to .56, with 25 influencers’ Twitter corpuses having a moderate similarity strength to the shooter’s manifesto. The remaining 15 influencers’ corpuses have little or only weak similarity to the document. The influencers are graphed below according to their similarity.

Does Gender Play a Role?

Some who made the list, such as Paul Joseph Watson, Mike Cernovich, Richard Spencer, and Jared Taylor, are well-known as extremists and White supremacists within the broader right-wing culture. Others, such as Lauren Chen and Candace Owens, are not considered extremists but still employ radical language in their online discourse. Interestingly, women account for only about a fifth of the AIN users, but closer to half of those with the highest similarity to the terrorist’s discourse. This gender dynamic is worth exploring; perhaps right-wing women influencers struggle to gain viewers in the traditional “manosphere” of the internet and therefore rely on more polarizing language.

The cosine similarity value for men had a median of .402 and an average of .401. For women influencers, these values were .506 and .496, respectively. The following graph illustrates the differences in extremist rhetoric by gender, with reference lines for men, women, and total corpus median cosine values.

Most violent extremists are men, yet the New Zealand terrorist’s manifesto shows a greater similarity to the discourse of the right-wing women in the corpus. Only one woman from this sample was below the corpus median in her similarity to the manifesto’s text. Further, Lana Lokteff, one of the influencers with the most similar rhetoric to the terrorist in New Zealand, has a much greater similarity to the document than Henrik Palmgren, despite the couple being married and co-producing the same alt-right channel, Red Ice TV.
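The group comparison above (median and mean by gender, plus a check against the corpus-wide median) can be sketched as follows. The records are invented placeholder values, not the article's data, and the function names are hypothetical.

```python
from statistics import mean, median

# Hypothetical (name, gender, similarity) records, invented for illustration only.
records = [
    ("influencer_a", "man", 0.30),
    ("influencer_b", "man", 0.40),
    ("influencer_c", "man", 0.50),
    ("influencer_d", "woman", 0.45),
    ("influencer_e", "woman", 0.55),
]


def summarize_by_group(records):
    """Return the median and mean similarity score for each group."""
    groups = {}
    for _name, group, score in records:
        groups.setdefault(group, []).append(score)
    return {g: {"median": median(s), "mean": mean(s)} for g, s in groups.items()}


def below_corpus_median(records, group):
    """List members of a group whose score falls below the overall median."""
    corpus_median = median([score for _name, _group, score in records])
    return [name for name, g, score in records
            if g == group and score < corpus_median]


print(summarize_by_group(records))
print(below_corpus_median(records, "woman"))
```

Reporting the median alongside the mean guards against a single unusually high or low score driving the comparison, which matters in a sample of only a few dozen accounts.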

Concluding Thoughts

There is much more to explore regarding gender and conservative extremist influencers. Do audiences read content from men and women differently and, if so, how? How do women become part of male-dominated online subcultures? Is their extremist rhetoric a genuine reflection of their political ideology, or do women need to use more extreme language in order to gain fame in what primarily exists as an online boys’ club?

Popular conservative figures such as Ben Shapiro and Jordan Peterson do not have online narratives that share much in common with the terrorist. However, their online productions can play a role at the early stages of radicalization because YouTube recommends more extreme content when viewers watch their videos. Until YouTube and other platforms adjust their algorithms to stop recommending extreme content, such moderate right-wing influencers ought to be considered gateways to radicalism. The solution is not necessarily to de-platform specific users, which would grant legitimacy to a common right-wing argument that they are being targeted and censored for their political views, but rather to hold YouTube and other platforms responsible for recommendation algorithms that shift people from moderate to extremist content.