Abstract

Teachers critically need classroom management skills. To develop these skills, teachers need high-quality professional development (PD). To support teachers, schools need practical tools for assessing the effects of PD on teachers’ use of classroom management practices. The adapted alternating treatments design (AATD) may be a tool for this purpose. We conducted an initial pilot investigation testing the potential of AATDs to evaluate the effects of PD on teachers’ use of classroom management practices for eight Pre-K–12 teachers. We successfully used AATDs to evaluate PD effects in all cases across two phases of PD (online module instruction, online module + peer coaching). Results suggest the potential promise of using AATDs to evaluate the effects of PD on teachers’ use of classroom management practices. Results also showed didactic training alone was often insufficient to increase use of practices, whereas peer coaching was effective in increasing use of at least one practice for all teachers.

Teachers consistently report difficulty managing student behavior (O’Neill & Stephenson, 2012; Reinke et al., 2011). Student misbehavior is associated with teacher burnout (Aloe et al., 2014), lower teaching efficacy (Reinke et al., 2013), and emotional exhaustion (Reinke et al., 2013). Early career teachers report having limited training to address student problem behavior (Dutton Tillery et al., 2010) and need structured supports to effectively use classroom management techniques (Headden, 2014). Improving teachers’ classroom management skills may improve teacher well-being and teacher retention (Brunsting et al., 2014; Hastings & Brown, 2002). This is a worthwhile investment of time and resources because almost half of all new teachers leave the field within 5 years (Ingersoll, 2012).

Effective classroom management also improves student outcomes. Research shows evidence-based classroom management practices such as behavior-specific praise (BSP), opportunities to respond (OTRs), and precorrection (Simonsen et al., 2008; Sutherland et al., 2019) decrease disruptive behavior, increase engagement, and improve academic outcomes (e.g., Gage et al., 2018; Haydon et al., 2010; Larson et al., 2018). However, researchers have found teachers use these practices at low rates, highlighting a need for effective professional development (PD) on classroom management practices (Reinke et al., 2013; Scott et al., 2011).

Professional Development for Classroom Management

A range of PD approaches has been shown to increase teacher use of classroom management practices. Systematic reviews of PD methods for classroom management find effective PD usually includes multiple components (Allen & Forman, 1984; Wilkinson et al., 2020). The most frequently included components of effective PD are didactic instruction, coaching, and performance feedback (Wilkinson et al., 2020). Didactic instruction is a low-resource approach to PD and is often included in multicomponent PD packages, but rarely evaluated in isolation. Didactic instruction uses evidence-based instructional practices, including providing an overview of the skill, examples and nonexamples, opportunities for practice, and planning for implementation. The small body of research evaluating the effects of didactic instruction alone on teachers’ classroom management skills shows mixed effects (Brady et al., 1988; Jones & Chronis-Tuscano, 2008; Stoiber & Gettinger, 2011; Wheldall et al., 1985).

By comparison, coaching is an approach with consistent, strong evidence for improving teaching practice (Kretlow & Bartholomew, 2010), but it requires more resources. Kretlow and Bartholomew described effective coaching as including group training, follow-up observations, and specific feedback. Peer coaching is one coaching approach that requires fewer expert supervisors relative to supervisory coaching. In reciprocal peer coaching, paired teachers observe and provide feedback on their partner’s use of target practices (Golden et al., 2021). Peer coaching has produced positive effects for a range of teaching practices and types of teachers. For example, studies have shown increases in the accuracy of implementing instructional practices, including Direct Instruction (e.g., Pierce & Peterson Miller, 1994), ClassWide Peer Tutoring (e.g., Kohler et al., 1999), and high leverage practices in special education (Ackerman et al., 2023). Peer coaching has also been successfully used with preschool teachers to increase use of Pyramid Model practices (Golden et al., 2021), classroom organization (Johnson et al., 2017), responsive statements (Tschantz & Vail, 2000), and class activity procedures (Kohler et al., 1995). Moreover, peer coaching is perceived as effective, feasible, and useful by teachers (Ackerman et al., 2023; Golden et al., 2021; Johnson et al., 2017; Kohler et al., 1999; Stichter et al., 2006; Tschantz & Vail, 2000).

Although there is strong evidence for using peer coaching to improve implementation of many teaching practices, less is known about the effects of peer coaching on teachers’ use of classroom management practices. Stichter et al. (2006) descriptively compared the effects of peer coaching with traditional in-service training on teachers’ use of OTRs and explored the effects of increased OTRs on student behavior. Their results suggest teachers were able to increase OTRs, increases were associated with positive student academic outcomes, and teachers rated peer coaching positively on a social validity questionnaire. Hasbrouck (1997) descriptively studied the utility and feasibility of mediated peer coaching. Although they observed an initial increase in classroom management scores, they were unable to conclude the change was due to peer coaching. Ackerman et al. (2023) evaluated the effects of peer coaching on two teachers’ use of high leverage practices. One teacher targeted choral, individual, and peer-to-peer OTRs, whereas the other teacher targeted BSP, corrective feedback, and instructional feedback. They demonstrated functional relations for both participants when peer coaching was present. Participants strongly agreed peer coaching was effective in increasing their use of target practices. Combined, these studies suggest further research evaluating the effects of peer coaching for classroom management practices and assessing the extent to which teachers report peer coaching as socially valid is warranted.

Assessing the Effects of PD in Practice

Assessing the effects of PD for individual teachers is important because teachers show differential responses across PD types (Myers et al., 2011; Noell et al., 2002). Assessing PD effects from initial training through follow-up enables PD providers to adapt and layer supports and apply resource-intensive PD methods only when needed.

Although important, it may be difficult for a PD provider to assess the effects of PD on teacher behavior in practice. Single-case designs (SCDs) are well suited for evaluating individual response to treatment; however, these designs are resource-intensive and may be inconvenient to use in practice. For example, many studies evaluating the effects of classroom management PD use multiple baseline designs (e.g., Gage et al., 2017; Hirsch et al., 2019; Simonsen et al., 2017). This is a strong experimental design for research purposes but may be challenging for PD providers to use due to an inability to stagger the introduction of intervention or collect frequent enough data across tiers to infer functional relations. The changing criterion design is an SCD that addresses some of the limitations of the multiple baseline design. It does not require staggered introduction of intervention. However, it has limited utility because it can only be used with practices that easily conform to a criterion. Although an A-B design is a practical descriptive method for measuring change in teacher behavior, it does not sufficiently control for threats to internal validity and therefore cannot establish functional relations.

The adapted alternating treatments design (AATD; Sindelar et al., 1985) overcomes limitations of other SCDs and does not require additional observations beyond what would be required for an A-B design. This gives the AATD potential practical utility to rigorously assess PD effects on the use of classroom management practices. The AATD was developed as a variation of the alternating treatments design (ATD) for use with nonreversible behaviors in which different conditions are applied to different behaviors or behavior sets of equal difficulty, rather than applying different conditions to the same behavior as in the ATD (Sindelar et al., 1985; Wolery et al., 2018). Typically, the AATD is used to compare the efficiency of multiple interventions and experimental control is demonstrated when an experimental condition shows a consistent increase in level or trend relative to a different (experimental or control) condition. While the AATD often includes multiple intervention conditions, it only requires two conditions to demonstrate a functional relation (Wolery et al., 2018). As such, this design can be used to evaluate efficacy by comparing an experimental condition with a control condition. Furthermore, collecting data on a control behavior strengthens the rigor of the design by allowing detection of multitreatment interference, maturation, and history effects (Wolery et al., 2018).

A PD provider could use an AATD to assess the effects of PD on teachers’ use of classroom management practices by identifying at least two practices expected to occur at similar frequencies using data from a baseline observation. The coach would select one of these practices as the target practice, which would receive PD, and the other practice as the control practice, which would not receive PD. The coach would continue to measure both practices during follow-up visits. Similar levels of target and control practices during baseline followed by an increase in the target practice and no change in the control practice following PD would suggest a functional relation between PD and the target practice. If a functional relation between PD and the target practice was not identified, the coach could use data and teacher consultation to inform adaptations to PD (e.g., more intensive coaching) until identifying an effective approach. The coach could replicate effective PD procedures with other practices.

A key benefit of using an AATD to assess the effects of PD on teachers’ use of classroom management practices is that it enables data-based decision-making to drive teacher development. Adapted alternating treatments designs allow for the identification of functional relations between PD and target practices with fewer observations than other SCDs, indicating more quickly which PD approaches are effective or ineffective and when PD should change to better promote improved practice. Adapted alternating treatments designs allow PD providers to compare the effects of less intensive (e.g., didactic instruction) and more intensive (e.g., coaching) PD approaches to determine whether intensifications produced added benefit. Adapted alternating treatments designs also allow for component analyses, which show the effects of an individual intervention component when it is systematically added to or removed from an intervention package. This type of analysis may help PD providers determine whether a resource-intensive PD component is a worthwhile investment by briefly piloting it with limited scope.

Research is needed to assess the reliability and validity of using an AATD to assess the effects of classroom management PD. The AATD approach would establish reliability if the control behavior demonstrated sufficient consistency to provide the basis for prediction, verification, and replication of the control condition (Cooper et al., 2020). The AATD could be viewed as valid if there is consistent differentiation or lack of differentiation between target and control behaviors, suggesting the presence or absence of a functional relation between PD and the target behavior.

Purpose and Research Questions

The purpose of this study was twofold. The first purpose was to conduct an initial pilot investigation of using AATDs to assess the impact of PD on teachers’ use of classroom management practices. The second purpose was to evaluate the effects of two PD approaches: (a) online didactic instruction and (b) online instruction + peer coaching on teachers’ use of targeted classroom management practices using AATDs. Our research questions (RQs) were the following:

Method

Participants and Setting

After obtaining institutional review board approval, we recruited participants from an online, graduate-level education course in classroom management. Participants included general and special education teachers in any level of Pre-K–12 school settings. The eligibility criterion to participate was course enrollment. Nine of 13 eligible participants consented to participate. One participant was excluded due to a lack of participation in a significant portion of course content and study activities. Four participants were first-year teachers, three were second-year teachers, and one was a fourth-year teacher. Four were general education teachers, three were special education teachers, and one was an inclusive preschool teacher. Of the seven K–12 teachers, five taught in elementary schools and two taught in high schools. Three teachers targeted math instruction, three targeted literacy instruction, one targeted both math and literacy instruction, and the preschool teacher targeted centers. Supplemental Table S1 summarizes participant characteristics. All study activities were completed online or in the teachers’ classrooms and submitted via video recording for data collection and analysis.

Materials

Participants completed online activities via the course website and a secure cloud storage service. They used personal devices or Pre-K–12 school-owned devices to record and submit videos via cloud storage for direct observation coding by the research team. Researchers used electronic data forms in Microsoft Word to collect study data. Participants completed peer coaching using a researcher-created electronic Microsoft Word document (adapted from a paper–pencil form in Golden et al., 2021) and submitted forms to cloud storage.

Research Design

We used an AATD (Sindelar et al., 1985) embedded in an A-B-C design to assess the effects of the online module (Phase B) and online module + peer coaching (Phase C) by comparing them with a control (no intervention) condition. A target practice (i.e., BSP, unison OTRs, choice) was assigned to the intervention condition (online module, online module + peer coaching), and a different practice (i.e., precorrection, individual OTRs) was assigned to the control condition. Target and control practices were measured during the same sessions.

During baseline (Phase A of A-B-C design), no treatment was present for the target or control practice. We used this phase to select each participant’s target practices. Potential practices included BSP, unison OTRs, individual OTRs, pre-correction, choice, and active supervision. We selected practices that occurred at low frequencies and were appropriate for the teacher’s educational context. We equated target and control practices by comparing baseline frequencies across all measured practices and selecting a control practice the teacher used with similar frequencies to the target practice and that would be expected to occur at similar frequencies if used as recommended. For all participants, we selected precorrection as the control for BSP and choice, while individual OTRs were the control for unison OTRs.
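The frequency-matching part of this selection can be sketched in code. The following is a minimal illustration only, not the procedure used in the study: it assumes a hypothetical `closest_control` helper and made-up baseline counts, and it reduces "similar frequencies" to the smallest difference in baseline means. It does not capture the second criterion described above (expected frequency when a practice is used as recommended) or the contextual judgment involved.

```python
from statistics import mean

# Hypothetical baseline counts per 10-min video (illustrative values only).
baseline = {
    "BSP": [0, 1, 0],
    "precorrection": [1, 0, 0],
    "individual OTRs": [6, 8, 7],
}


def closest_control(baseline, target, candidates):
    """Return the candidate practice whose mean baseline frequency
    is closest to the target practice's mean baseline frequency.

    Assumption: 'similar frequency' is approximated by the absolute
    difference in baseline means; this is a simplification.
    """
    target_mean = mean(baseline[target])
    return min(candidates, key=lambda p: abs(mean(baseline[p]) - target_mean))


control = closest_control(baseline, "BSP", ["precorrection", "individual OTRs"])
print(control)
```

With these illustrative counts, the helper pairs BSP with precorrection because their baseline means match, whereas individual OTRs occur far more often.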

Following baseline (Phase B of A-B-C design), participants completed an online module on the target practice (BSP, unison OTRs, or choice) and were instructed to increase use of the practice. Participants did not complete a module on the control practice. This phase allowed us to infer functional relations between the online module and the target practice via the AATD.

During online module + peer coaching (Phase C of A-B-C design), participants received peer coaching on the target practice only (no change to the control practice). This phase allowed us to infer functional relations between peer coaching and the target practice using the AATD.

Most participants (n = 6) received PD for two different practices (BSP and unison OTRs). Participants received PD on a target practice for at least 4 weeks before receiving PD (online module or peer coaching) on a second target practice.

We used visual analysis to infer the presence or absence of functional relations between the target practice and PD approach within each AATD. We confirmed a functional relation when there was a higher level or increasing trend in the target practice relative to the control practice during intervention while observing stability in the control practice (Wolery et al., 2018). We also looked for immediate changes in level or trend of the target practice following phase changes, consistency within an intervention phase, and decreased or low variability in the target practice relative to the control. Three independent raters (first three authors) visually analyzed graphs to infer the presence or absence of functional relations. In cases of disagreement, the raters discussed the graph and reached consensus. Initial agreement was 89.3%.

To assess the effects of each PD approach across participants, we calculated success rates by dividing the number of evaluations for which we observed a functional relation by the total number of evaluations and multiplied this quotient by 100% (Torelli & Pickren, 2023).

Dependent Measures and Data Collection Procedures

Teacher Behaviors

We measured teacher behaviors using event recording on the 10-min teacher-submitted videos of instruction. We used event recording, rather than timed event recording, because our goal was to evaluate the utility and feasibility of the AATD for data-based decision-making. This method is more closely aligned with what PD providers could use in practice. We scored behavior-specific praise each time a participant gave a verbal acknowledgment to the student(s) of a specific behavior (Royer et al., 2019). The statement had to clearly indicate the student(s) referenced, occur immediately following the behavior, and be sincere (not sarcastic). Examples were “Thank you for raising your hand, Alex,” and “excellent work following directions, Jai.” Nonexamples were general praise (e.g., “nice job”) or behavior narration (e.g., “Ken is taking notes”) and sarcastic or punitive comments (e.g., “Simon is quiet, unlike others”).

We defined an OTR as each time a participant provided an instructional statement requiring a visible (public) academic response. We scored a unison OTR when the OTR was directed toward the entire group of students receiving instruction (e.g., entire table during small group instruction or entire class) in which students responded simultaneously (MacSuga-Gage & Simonsen, 2015). Examples were choral responses, turn-and-talks, simultaneous written responses, and response cards. Nonexamples were questions in which one student responded at a time, such as a hand raise (scored as individual OTR). We scored an individual OTR when the OTR was directed toward one to two students (unless this was the entire group) or the teacher directed only one student to respond at a time (e.g., cold call questions).

We scored choice each time a participant explicitly offered the student(s) a choice between at least two things (e.g., activities, materials, ways to complete a task; Golden et al., 2021). We included between activity, within activity, and reward choices. We excluded if/then statements, implicit choices (e.g., choosing to complete work or put head down), and choices presented as a consequence for problem behavior (e.g., you can choose to do your work or go to the principal’s office).

We scored precorrection each time a participant provided a verbal, visual, or gestural reminder of a behavioral expectation prior to the occurrence of problem behavior (Barton et al., 2013). We excluded reminders that occurred following or due to problem behavior. Examples were pointing at a poster of classroom expectations or vocally reminding students of behavioral expectation(s) in the absence of problem behavior. Nonexamples were setting academic expectations such as “Complete your math work in pencil” or saying “make sure your eyes are on the speaker” when several students were looking elsewhere.

Interobserver Agreement

Trained observers simultaneously and independently collected data for at least 25% of sessions across participants. We randomly selected sessions across participants and conditions to code for reliability. We scored agreement for each behavior using gross agreement (smaller number/larger number × 100%). Mean agreement was 99.4% (range = 80%–100%) for BSP, 93.6% (range = 81.3%–100%) for unison OTRs, 97.0% (range = 83.3%–100%) for individual OTRs, 100% for choice, and 100% for precorrection. Supplemental Table S2 summarizes interobserver agreement (IOA) by participant and condition.
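The gross agreement formula above (smaller count divided by larger count, times 100%) can be sketched as follows. This is a minimal illustration: the function names and the example counts are hypothetical, and treating two zero counts as 100% agreement is an assumption, since the text does not state how that case was handled.

```python
from statistics import mean


def gross_agreement(count_a: int, count_b: int) -> float:
    """Gross (total count) agreement between two observers, in percent."""
    if count_a < 0 or count_b < 0:
        raise ValueError("counts must be non-negative")
    if count_a == 0 and count_b == 0:
        # Assumption: both observers recording zero events is full agreement.
        return 100.0
    return 100.0 * min(count_a, count_b) / max(count_a, count_b)


def mean_agreement(session_counts) -> float:
    """Mean gross agreement across (observer A, observer B) count pairs."""
    return mean([gross_agreement(a, b) for a, b in session_counts])


# Hypothetical counts: observers scored 4 vs. 5 events, then 6 vs. 6 events.
print(gross_agreement(4, 5))             # 80.0
print(mean_agreement([(4, 5), (6, 6)]))  # 90.0
```

Gross agreement compares session totals only, so two observers can reach 100% while scoring different individual events; it is simple to compute, which fits the practical aims described for event recording.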

Social Validity

We created a short social validity questionnaire based on the Intervention Rating Profile–15 (Martens et al., 1985) to assess treatment acceptability, utility, and feasibility. Participants rated six items on a 5-point Likert-type scale (strongly disagree to strongly agree). The questionnaire also contained three open-ended questions that asked participants about the parts of the intervention they liked most, least, and found most difficult. Participants completed the questionnaire online after they completed all study activities.

Procedures

General Procedures

Prior to collecting study data, the second author met with potential participants via videoconference to describe the study and obtain informed consent. After this meeting, study activities were completed asynchronously using the course learning management system, a cloud storage system, and email.

Throughout study phases, participants submitted 10-min videos 1 to 2 times per week for a planned total of 27 videos (actual number of videos ranged from 21 to 27 due to participants’ differing teaching schedules). Participants selected a time of day when they had difficulty managing student behavior and provided teacher-directed instruction. They recorded all videos during the same type of instruction (e.g., large group math instruction; exceptions noted on graphs). Two participants (Teachers 2 and 3), who were special education teachers, recorded videos during both math and reading instruction due to scheduling constraints, but all videos occurred during small group explicit instruction.

Baseline

During baseline, teachers received instruction on classroom management practices not addressed in the study (e.g., setting classroom expectations, developing rules and routines) and submitted videos during their identified instructional times. After submitting at least three baseline videos and observing low levels or a countertherapeutic trend in one target practice, participants were given access to one target practice module.

Online Module

Following baseline and prior to collecting module phase data, participants completed an online module on the target practice. Modules included the following: (a) a short (M = 14 min) recorded lecture that defined and described how to apply the practice, (b) examples and nonexamples of how to use the practice, (c) a prompt for participants to plan to incorporate the practice into instruction, and (d) an assessment of their understanding. The first author provided feedback on both the assessment and participants’ plans for incorporating the practice into instruction. Participants were told to increase their use of the target practice after completing the module but were not given a specific goal. We reminded them weekly to try to increase use of the target practice. They did not receive additional lectures or feedback on the practice in this phase. The module phase continued for at least 2 weeks until there was a stable or countertherapeutic trend in the target behavior.

Online Module + Peer Coaching

Peer coaching included (a) a brief (20 min) online module on peer coaching, (b) an instructor-set frequency goal for the target practice, and (c) reciprocal feedback with a classmate on use of the target practice. The module included an overview of peer coaching, a rationale for its use, the steps to complete peer coaching, operational definitions for target practices, how to provide feedback, and how to use the peer coaching form. We set frequency goals using descriptive data (means and ranges) and visual analysis of the participant’s performance. Goals were also informed by research on recommended practice rates (Hollo & Hirn, 2015; Kestner et al., 2019; MacSuga-Gage & Simonsen, 2015; O’Handley et al., 2023). The average BSP goal was five (range = 2–10) and the average unison OTR goal was 27 (range = 12–38). Teacher 8’s goal for choice was two. If participants regularly met their goal, we increased it.

To complete peer coaching, participants watched their partner’s video and completed an electronic coaching form that included (a) tallying use of the target practice, (b) providing examples of use of the target practice, and (c) recommending opportunities for use of the practice (adapted from Golden et al., 2021). They completed this process within 24 hr after a video submission. Each participant was required to read through their coach’s feedback, initial and date the form, and move it to a “reviewed” folder on the cloud storage system prior to submitting their next video. In Weeks 3 to 10, participants received peer coaching on all videos. In Weeks 10 to 15, participants received peer coaching on every other video (i.e., once weekly). Importantly, six of eight participants completed a precorrection module during the peer coaching phase for BSP, which may have influenced precorrection frequencies during this phase (times noted on graphs).

Treatment Integrity

We assessed treatment integrity of module completion using data analytics in the course learning management system. These data confirmed participants watched target practice modules in their entirety and completed the quiz prior to module phase data collection.

We assessed treatment integrity of peer coaching for 25% to 50% of randomly selected sessions across participants. The treatment integrity checklist measured (a) form submission, (b) on-time submission, (c) completion of each section of the form (i.e., tally data, goal data, examples, and opportunities for use), and (d) the appropriateness of the feedback based on the teacher’s performance in the selected video (i.e., objective feedback aligned to performance). Fidelity varied by component with high fidelity for tally data, examples, opportunities for use, and appropriate feedback (average at least 96% per component). Participants had low fidelity marking whether their partner met their goal (M = 54%). Participants submitted forms on time in 80% of opportunities. The overall average treatment integrity was 74% (range = 46%–100%). Supplemental Table S3 shows treatment integrity by component.

Results

Using AATDs to Assess PD Effects

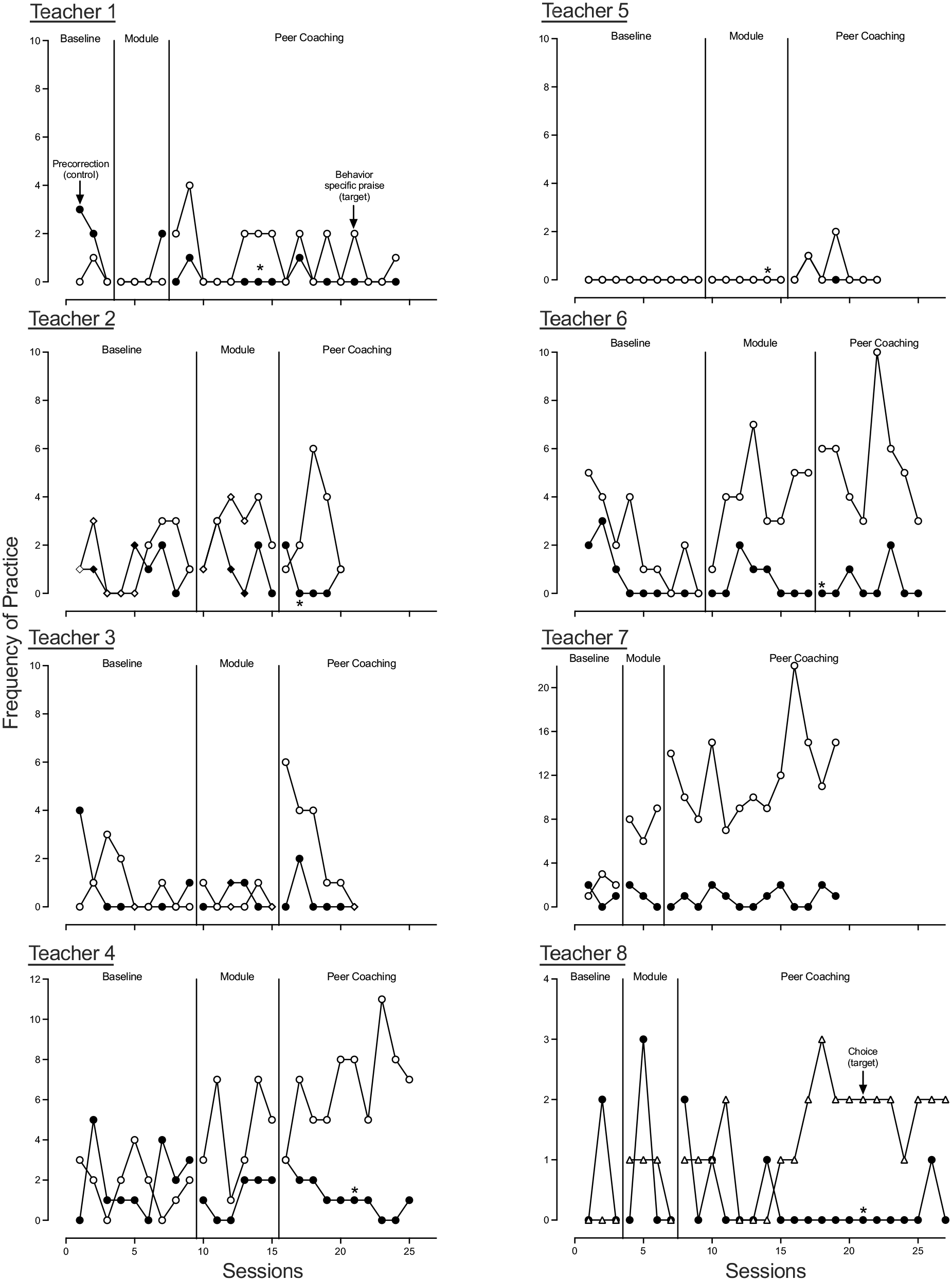

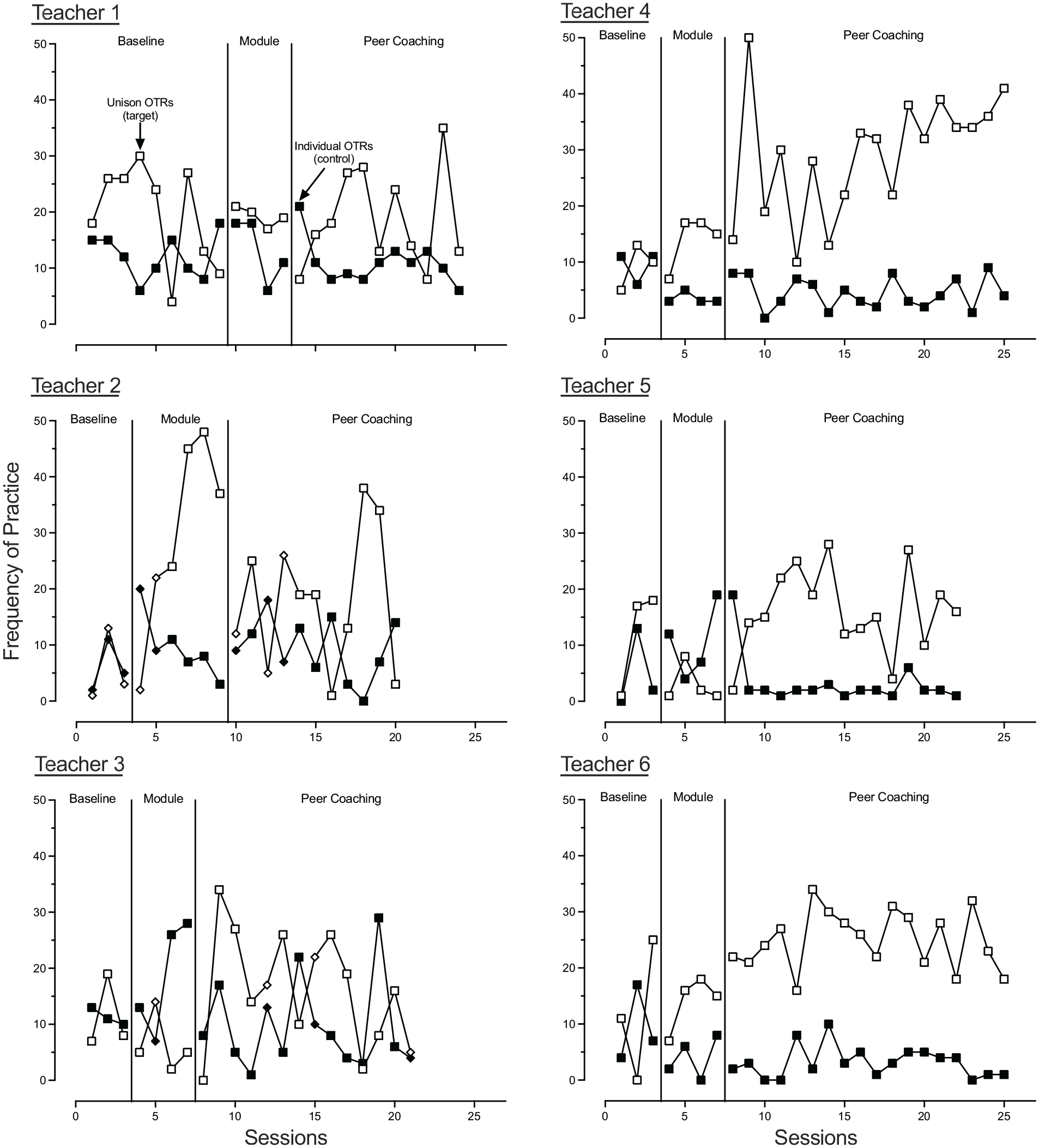

To address RQ1, we applied AATDs to one to two target practices across eight Pre-K–12 teachers. We used a nontarget practice with similar baseline frequencies as the control and compared it with the target practice to infer functional relations. We defined AATD results as interpretable if (a) they produced differentiated response patterns between target and control practices suggesting the presence of a functional relation or (b) they produced consistently undifferentiated response patterns suggesting the absence of a functional relation. We defined AATD results as uninterpretable if the control practice did not remain stable (e.g., highly variable or change in level or trend with phase change), suggesting we did not sufficiently control for threats to internal validity. We found AATDs were interpretable in all cases (14 opportunities) across two PD phases (online module, peer coaching). Figure 1 shows treatment evaluation results for BSP and choice, and Figure 2 shows results for OTRs.

Figure 1. Treatment Evaluation Results for Behavior-Specific Praise and Choice.

Figure 2. Treatment Evaluation Results for Unison Opportunities to Respond.

Online Module and Peer Coaching Effects

To address RQ2 and RQ3, we used visual analysis to look for functional relations between the PD approach (online module or online module + peer coaching) and use of the target practice in each AATD and calculated success rates for each PD approach. Supplemental Table S4 summarizes the presence and absence of functional relations by participant. We observed a 50% success rate (seven of 14 opportunities) across target practices for the online module. We observed a 57% success rate for BSP (four of seven opportunities), a 50% success rate for unison OTRs (three of six opportunities), and a 0% success rate for choice (zero of one opportunity).

In assessing the effects of online module + peer coaching, we observed a 64% success rate (nine of 14 opportunities) across target practices. We observed a 71% success rate for BSP (five of seven opportunities), a 50% success rate for unison OTRs (three of six opportunities), and a 100% success rate for choice (one of one opportunity).
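The success rates reported for both PD approaches can be recomputed from the stated counts. The sketch below uses only the counts given in the text; the function and variable names are illustrative, and rounding to the nearest whole percent is an assumption about how the reported values were rounded.

```python
def success_rate(functional_relations: int, evaluations: int) -> float:
    """Percentage of evaluations in which a functional relation was observed."""
    if evaluations <= 0:
        raise ValueError("evaluations must be positive")
    return 100.0 * functional_relations / evaluations


# (functional relations observed, total evaluations) as reported in the text.
online_module = {"BSP": (4, 7), "unison OTRs": (3, 6), "choice": (0, 1)}
module_plus_coaching = {"BSP": (5, 7), "unison OTRs": (3, 6), "choice": (1, 1)}


def summarize(counts):
    """Per-practice and overall success rates, rounded to whole percents."""
    rates = {p: round(success_rate(k, n)) for p, (k, n) in counts.items()}
    total_k = sum(k for k, _ in counts.values())
    total_n = sum(n for _, n in counts.values())
    rates["overall"] = round(success_rate(total_k, total_n))
    return rates


print(summarize(online_module))
# {'BSP': 57, 'unison OTRs': 50, 'choice': 0, 'overall': 50}
print(summarize(module_plus_coaching))
# {'BSP': 71, 'unison OTRs': 50, 'choice': 100, 'overall': 64}
```

The per-practice counts sum to the overall counts for each approach (seven of 14 and nine of 14), so the overall rates are consistent with the per-practice figures.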

Social Validity of Peer Coaching

To address RQ4, we descriptively summarized questionnaire data and reviewed responses to open-ended questions. Six of eight participants completed the questionnaire. Supplemental Table S5 summarizes participant ratings by item. Overall, participants found peer coaching to be feasible, acceptable, and useful with mean ratings of between 4.2 and 4.7 and no individual scores below 3 on a 5-point scale. Responses to open-ended questions further supported the social validity of peer coaching. The most frequently cited parts of the intervention participants enjoyed were trying out new practices (n = 3), observing their partner (n = 2), and the positive results of using the practices (n = 2). Parts of the intervention participants liked the least varied by participant with no consistent themes.

Discussion

Single-case designs are well suited to assessing an individual teacher’s response to PD for classroom management. We conducted an initial pilot study to test the potential of AATDs as a design PD providers could use to assess PD effects. We selected this design because of its strong potential practical utility: it does not require withdrawing intervention (withdrawal design), staggering introduction of intervention (multiple baseline design), or behavior to conform to a criterion (changing criterion design). In this initial pilot, we used AATDs to evaluate the effects of PD on teachers’ use of one to two classroom management practices for eight Pre-K–12 teachers. We successfully used AATDs in all cases for both phases of intervention (online module, online module + peer coaching). However, six of eight participants completed an online module on precorrection during the BSP evaluation and were told to continue focusing on BSP. These procedures may have influenced teachers’ frequencies of precorrection, tempering conclusions regarding functional relations between BSP and PD. Considering this limitation, results tentatively replicate and extend existing research on peer coaching and provide further support for its use to increase use of classroom management practices, with a cautionary note that peer coaching may be better suited to increasing some practices over others depending on the teacher.

We observed some variability in response to PD approaches across teachers and practices. Three participants (Teachers 1, 3, and 5) did not increase use of one of two target practices during either phase of PD. For their other target practice, all three responded to peer coaching but not to the module. The AATD allowed us to detect these idiosyncratic effects. These results suggest teachers may be more likely to use some practices over others. Additional factors beyond the PD approach, such as teaching context (e.g., grade level) or preference for practices, may influence implementation. Thus, while this form of peer coaching and assessment can be effective, additional research is needed to improve consistency of effects.

Overall, these data support the need for regular data collection on classroom management practices. Furthermore, the data support the need to better understand factors predicting teacher response to PD, such as preference, which may aid PD providers in individualizing PD.

Limitations

This study has two primary limitations. First, six of eight participants completed an online module on precorrection during the BSP evaluation. It is possible this module increased use of precorrection (the control practice) while we were evaluating the effects of PD on BSP. However, we told participants not to focus on their use of precorrection, and we did not observe a change in precorrection following module completion for any participant. The timepoint at which participants completed the precorrection module is noted on the graphs in Figure 1.

Second, treatment integrity data were variable and are reported only for participants in the study. Some participants had peer coaching partners who did not consent to the research study. Although treatment integrity varied, the component implemented with the lowest fidelity was marking goal completion, whereas tally data, examples, and opportunities for use were completed with high fidelity. We suspect participants did not remember their partner’s goal and thus did not check the appropriate box. Providing feedback on goal completion was likely not a critical component of the intervention. Some previous peer coaching studies have not included a goal component and have demonstrated positive effects (e.g., Golden et al., 2021; Kohler et al., 1999; Tschantz & Vail, 2000). Furthermore, although treatment integrity data were not comprehensive, the instructor checked that all teachers, including those outside the study, completed peer coaching on a weekly basis. Thus, there is no reason to believe treatment integrity would have differed meaningfully for teachers who did not participate in the study.

Implications for Classroom Instruction and Intervention

This study has four key implications for practice. First, results provide tentative initial support for using AATDs to evaluate the effects of PD on teachers’ use of classroom management practices. While additional research replicating these findings is necessary before making recommendations for practice, these preliminary results show promise for practitioners who want to assess the effects of PD on teacher behavior. Adapted alternating treatment designs may be a useful tool for PD providers who need to determine whether to adapt or intensify supports for an individual teacher. To apply this approach in practice, a PD provider could collect frequency data on use of classroom management practices using existing measures (e.g., Kestner et al., 2019) or coach-created forms during walk-through observations. The PD provider could use initial observations to select target and control practices. Then, the teacher would receive PD on a target practice, and the PD provider could collect data on practices during the following observations. The PD provider could use prespecified mastery criteria to determine when to intensify or fade PD. When PD includes peer coaching, peer coaching data could be used for the AATD. This would lessen the observation time required of the PD provider, who could instead analyze the data and check in with peer coaching partners as needed. The feasibility of this approach in practice may vary based on PD providers’ training and experience with SCD. School-based behavior analysts with graduate training in SCD or instructors in teacher preparation programs may find this approach more feasible than school-based coaches with limited background in SCD, who may need training in the AATD to apply this assessment method.
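
The decision step in this workflow (using prespecified mastery criteria to intensify or fade PD) can be illustrated with a short sketch. This is a hypothetical example only and not a procedure used in this study: the record format, the function names, the criterion (target-practice rate at or above a goal rate and above the control-practice rate for three consecutive observations), and all numbers are assumptions chosen for demonstration. In practice, such a rule would supplement, not replace, visual analysis of the graphed data.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Observation:
    """Counts from one observation session (hypothetical record format)."""
    target_count: int    # uses of the practice targeted by PD
    control_count: int   # uses of the untrained control practice
    minutes: float       # length of the observation


def meets_criterion(obs: Observation, goal_rate: float) -> bool:
    """True if the target rate reaches the goal and exceeds the control rate."""
    target_rate = obs.target_count / obs.minutes
    control_rate = obs.control_count / obs.minutes
    return target_rate >= goal_rate and target_rate > control_rate


def pd_decision(
    observations: List[Observation],
    goal_rate: float = 0.5,   # assumed goal, in uses per minute
    consecutive: int = 3,     # assumed number of sessions in a row at criterion
    max_sessions: int = 8,    # assumed limit before intensifying support
) -> str:
    """Return 'fade', 'intensify', or 'continue' given post-PD observations."""
    recent = observations[-consecutive:]
    if len(recent) == consecutive and all(
        meets_criterion(o, goal_rate) for o in recent
    ):
        return "fade"
    if len(observations) >= max_sessions:
        return "intensify"
    return "continue"


# Hypothetical post-PD sessions for one teacher (15-minute observations).
sessions = [
    Observation(target_count=2, control_count=3, minutes=15),
    Observation(target_count=9, control_count=2, minutes=15),
    Observation(target_count=10, control_count=3, minutes=15),
    Observation(target_count=12, control_count=2, minutes=15),
]
print(pd_decision(sessions))
```

Comparing the target practice against the control practice, and not only against a goal rate, mirrors the logic of the AATD: an increase is attributed to PD only when the untrained practice does not show a similar change.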

Second, results underscore previous findings that brief, didactic trainings may need to be supplemented for many teachers (Brock & Carter, 2017; Simonsen et al., 2017). In half of cases (seven of 14 opportunities), we did not observe an increase in the target practice following module completion. These results replicate prior research in which a single didactic training session was insufficient to change teacher behavior (Gage et al., 2016, 2017). Although brief, didactic trainings are a low-resource method for delivering PD, practitioners should expect that these trainings will often need to be supplemented with additional supports.

Third, results provide further support for peer coaching to improve teaching practices, strengthening peer coaching research on classroom management skills. All teachers increased use of at least one target practice, and participants reported peer coaching to be acceptable, feasible, and useful. This study builds on the growing evidence base supporting the use of peer coaching to increase a range of teaching skills and replicates studies reporting peer coaching as socially valid (Golden et al., 2021; Morgan et al., 1994; Tschantz & Vail, 2000).

Finally, in a few cases, teachers did not increase use of one of their two target practices. Consistent with previous studies, these results suggest teachers need differing supports to increase use of classroom management practices (Gage et al., 2017; Simonsen et al., 2014). The results also suggest additional factors, beyond PD approach, may influence implementation.

Supplemental Material

Supplemental material, sj-docx-1-aei-10.1177_15345084231190285 for The Potential Promise of Using Adapted Alternating Treatment Designs to Assess Teachers’ Use of Classroom Management Practices by Jessica N. Torelli, PhD, Christina R. Noel, PhD, Thomas J. Gross, PhD and Kaitlin A. Morris, MA in Assessment for Effective Intervention

Footnotes

Acknowledgements

We thank Evyn Hendrickson, Mallory Glowatz, Alyson Parker, and Haylee Hood for their assistance with data collection.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

We received funding from Western Kentucky University, College of Education and Behavioral Sciences, through a Quick Turn-Around Grant awarded to Dr. Jessica Torelli.

Supplemental Material

Supplemental material is available on the Assessment for Effective Intervention webpage with the online version of the article.

References
