Abstract

This article focuses on the psychometric properties and characteristics of the Assessing School Settings: Interactions of Students and Teachers (ASSIST), an observational assessment administered by trained external observers of teacher practices, classroom context, and student behaviors at the classroom level. Study 1 examines variability, reliability, and convergence between ASSIST scores with data from 3,298 classrooms nested within 185 elementary, middle, and high schools. We report school-level intraclass correlations (ICCs), standard deviations, means, and reliability estimates of ASSIST scales; investigate the correspondence among ASSIST-measured constructs with multilevel correlations; and explore school-level predictors of ASSIST global scale scores. Study 2 further examines reliability over time and convergent validity using repeated ASSIST and Classroom Assessment Scoring System–Secondary (CLASS-S) observations in classrooms of 335 teachers in 41 middle schools. The ASSIST global measures exhibited moderate to good reliability across three same-teacher observations (ICCs ranged from .69 to .82). Associations between all ASSIST and CLASS-S scales suggested close correspondence of the measures, especially at the teacher level and school level. Together, these findings highlight the utility of the ASSIST observational measure, both as a research and practice tool, across multiple school types and classroom contexts, and with potential to inform coaching to improve teachers’ classroom management.

Effective classroom management creates conditions that are critical for students’ academic, social, and emotional learning (Evertson & Weinstein, 2011). Classroom management practices, or strategies, are tools that teachers can use to create such conditions. Research has shown that teachers’ use of effective classroom management practices has positive effects on students’ academic, behavioral, and social-emotional outcomes (Korpershoek et al., 2016). A common theme in the classroom management literature is the importance of foundational, preventive, and responsive practices, with a preference for preventive, proactive strategies (Collier-Meek et al., 2019). Another component of classroom management is reactive strategies, which are appropriate when proactive efforts are unsuccessful (Korpershoek et al., 2016).

Observational measures of teachers’ classroom management practices have emerged as preferred methods of assessment and are useful in both research and practice. In fact, external observations of teacher practices and student outcomes are often considered the gold standard in educational evaluation research. Only a handful of such measures have been developed for wide-scale use in school-based trials, and even fewer are routinely implemented for practice purposes. One measure that has received increased attention in recent years is the Assessing School Settings: Interactions of Students and Teachers (ASSIST; Rusby et al., 2011). The current article focuses on the psychometric properties of this instrument, leveraging extant data from several studies using the ASSIST across elementary, middle, and high school settings. We have organized this information into two studies. The first largely focuses on presenting ASSIST descriptive statistics and reliability estimates that would be helpful to researchers aiming to use the ASSIST in future research or practice. This first study also examines correlations among the scales of the ASSIST to understand whether the scales correspond as expected based on known relations among the classroom management constructs they are designed to measure. The second study explores reliability across repeated measures and convergent validity evidence (i.e., the relations with other conceptually similar measures; American Educational Research Association [AERA], American Psychological Association [APA], & National Council on Measurement in Education [NCME], 2018) using the Classroom Assessment Scoring System–Secondary (CLASS-S). Together, this set of studies is intended to provide evidence of the reliability, validity, and utility of the ASSIST as an observational tool for assessing teachers’ practices and student behaviors in the classroom, from both a research and a practice perspective.

Commonly Used Observational Tools of Teachers’ Classroom Practices

There are a variety of observational tools to assess classroom management that differ in method and focus. While some measures were initially created to focus on classroom management practices as they relate to a specific target student (e.g., Setting Factors Assessment Tool [Stichter et al., 2009]; Teacher-Pupil Observation Tool [Martin et al., 2010]), others were developed to assess the teachers’ classroom practice as a whole (e.g., Classroom Assessment Scoring System [CLASS]; La Paro et al., 2004; Classroom Management Observation Tool; Simonsen et al., 2020). Among the most commonly used of these measures is the CLASS, which assesses the quality of teachers’ interactions with students in the classroom. The CLASS involves a 20-min observation period where trained observers assess the quality of teachers’ interactions with students in various dimensions on a scale of 1 to 7. The CLASS dimensions are traditionally organized into three overarching domains: Emotional Support, Classroom Organization, and Instructional Support; the Classroom Organization domain is comprised of dimensions aligned with classroom management (i.e., Behavior Management, Productivity, and Negative Climate; La Paro et al., 2004). The secondary-level version of the measure (CLASS-S) also includes a single dimension for Student Engagement, which is typically considered separately from the three overarching domains.

In contrast to global measures of classroom management such as the CLASS, assessments of teachers’ use of discrete classroom management practices are also useful, as they can isolate specific practices that are known to be effective and allow for concrete feedback to be given to teachers. Despite their utility, such measures are relatively new (e.g., Brief Classroom Interaction Observation–Revised; Reinke et al., 2015), with much more to be known about their reliability, validity, and utility in real-world classroom settings. There is also a need for measures that can be easily administered in schools without considerable burden on schools or financial drain on research budgets. The ASSIST measure was created in part to reduce these burdens. Later, we describe in greater detail the background and structure of the ASSIST and some of the ways it has been used in research studies.

ASSIST Procedures and Key Constructs

The ASSIST was originally developed by Rusby et al. (2011) as a low-inference observational assessment providing objective assessments of teachers’ classroom management practices and student behavior. A unique feature of the ASSIST is its inclusion of indicators of both teacher and student behaviors, whereas most other extant observational tools focus on just one or the other, and rarely both. The ASSIST was also designed to incorporate both “global” or general assessments of classroom conditions and teacher behaviors and more discrete assessments referred to as “tallies.” As such, it provides a snapshot of teachers’ global classroom management practices and concrete counts of specific classroom management practices and student behaviors. The ASSIST is administered in a 15-min observation period during which a trained observer tallies (i.e., counts) specific teacher and student behaviors and thereafter completes Likert-style global ratings of teacher and student behaviors. The observers first tally the behaviors of teachers and students, including strategies to engage students, specifically proactive behavior management strategies (“Proactives”), positive reinforcement of desirable student behaviors (“Approvals”), and providing opportunities for student response (“Opportunities to Respond”). Other teacher behaviors tallied include responses to student behavior, such as verbal or non-verbal cues to non-punitively correct student behavior (“Reactives”), as well as criticisms, punitive consequences, or threats in response to student behavior (“Disapprovals”). Student behaviors include instances when students do not cooperate (“Noncompliance”), as well as disruptive behaviors (“Disruptives”).

Immediately after conducting the tallies in real time, which serve as a prime for the global ratings, observers step out of the classroom and complete the global ratings, which take approximately 5 to 10 min. The teacher ratings are organized into five theoretically derived scales that reflect the foundational, preventive, and responsive nature of effective classroom management practices (as described by Collier-Meek et al., 2019): (a) Teacher Direction and Influence, which assesses evidence of classroom routines and how the teacher influences student behavior; (b) Teacher Proactive Behavior Support, which assesses teachers’ use of foundational practices, including items related to provision of clear instructions and learning objectives, and praise; (c) Teacher Monitoring, which assesses whether a teacher positions themselves to engage in active supervision of student behavior; (d) Teacher and Student Meaningful Participation, which assesses whether teachers provide students opportunities to respond, make choices, and take leadership roles, as well as prosocial classroom behavior among students; and (e) Teacher Anticipation and Responsiveness, which focuses on teacher precorrections and anticipation of student behavioral and academic difficulties. The latter three global rating scales measure preventive classroom management practices that help teachers avoid the emergence of problem behaviors, through active supervision, creating opportunities to respond, and offering precorrections. In addition, responsive classroom management practices reinforce appropriate behavior and discourage problem behavior. The Teacher Anticipation and Responsiveness scale of the ASSIST spans both preventive and responsive practices by additionally assessing teacher responsiveness to student needs. Finally, the global ratings of student classroom behavior are organized into two scales: (a) Student Cooperation and (b) Student Socially Disruptive Behavior. Student Cooperation assesses whether students comply with rules, are respectful, and demonstrate academic readiness. Student Socially Disruptive Behavior focuses on a range of disruptive behaviors in the classroom, including social conversations occurring at unauthorized times, irritability, sarcasm, arguing, verbal aggression, and bullying.

Prior Research on the ASSIST

There is a robust and growing body of research using the ASSIST across a range of school types and settings (e.g., Bradshaw et al., 2018, 2021; McDaniel et al., 2022). In terms of measurement, the ASSIST has demonstrated evidence of internal structure (i.e., via factor analysis) and relatively strong or partial measurement invariance across school levels (i.e., elementary, middle, and high school), class sizes, and classes of various racial compositions (McDaniel et al., 2022). Importantly, teachers’ classroom management practices as measured by the ASSIST are sensitive to intervention, suggesting the measure also has practical utility in real-world classroom settings (Bradshaw et al., 2018, 2021). Given that the ASSIST was designed to focus largely on classroom management and behavior (Rusby et al., 2011), it has been particularly helpful for assessing behaviorally focused preventive interventions, such as those focused on classroom management, positive behavior support, and other teacher-focused preventive interventions directed at increasing positive student behaviors and reducing student disruptions (see, for example, Bradshaw et al., 2018).

The Present Study

The ASSIST has been used in several prior studies of classroom management and universal preventive interventions, suggesting it is a promising tool for detecting intervention effects. This article contributes to the growing body of research on the ASSIST by documenting foundational psychometric work, which will be helpful for researchers interested in using this tool for both research and practice purposes. We aimed to provide specific information regarding the instrument’s psychometric properties and characteristics useful for designing studies using the ASSIST (e.g., power analysis). To achieve these goals, we present findings from two studies, leveraging extant ASSIST data collected by our team over multiple trials. The goal of Study 1 was to report scale descriptive statistics (i.e., means and standard deviations), alphas, and intraclass correlations (ICCs) within school levels (i.e., elementary, middle, and high schools), consistent with the measurement reporting standards outlined by the American Educational Research Association (AERA, 2018). In addition, we examined correspondence of ASSIST scale mean scores (i.e., multilevel correlations among ASSIST global scales and tallies) and explored school-level correlates of the ASSIST global scales. The goal of Study 2 was to explore within-teacher reliability of the ASSIST across repeated measures, as well as convergence with the CLASS-S. Together, this collection of studies is intended to document the psychometric properties of the ASSIST and inform future use of the measure.

Study 1: Scale Descriptives, Alphas, and ICCs (Clustering Within Schools)

Sample

Data for this study were drawn from baseline assessments across seven separate research projects. The analytic sample consisted of data from 3,298 classrooms nested within 185 schools. The participating schools were located in two states in the Southern region of the United States and ranged in enrollment from 188 to 2,285 students, with an average of 814 students enrolled per school. Students enrolled in participating schools were, on average, 41.61% African American (SD = 27.04%), 11.58% Latinx (SD = 11.88%), and 37.96% White (SD = 26.10%). On average, 51.06% (SD = 22.91%) of students in a school received free or reduced-price meals. Of the participating classroom teachers, 19.19% (n = 633) were in elementary school classrooms, 38.17% (n = 1,259) in middle school classrooms, and 42.63% (n = 1,406) in high school classrooms. Teachers were typically female (49.58% female; 21.13% male; 29.29% missing) and in classrooms with 21 students on average (SD = 6.40), of whom 41.71% were White (SD = 28.62%) and 50.06% male (SD = 14.63%).

Procedure

We conducted an integrative data analysis (Curran & Hussong, 2009), combining data from seven randomized controlled trials (RCTs) of school-based interventions (see Bradshaw et al., 2018, 2021; Lindstrom Johnson et al., 2023; Pas et al., 2019, 2022). Although the data were from separate projects, the training procedures and administration of observations were the same and were coordinated by a shared research team. In each trial, a trained ASSIST observer from the research team conducted live classroom observations using a handheld tablet. To avoid the influence of intervention effects, only data from baseline assessments were used in the present study. Participation by schools and teachers in each trial was voluntary. The Institutional Review Board (IRB) approved each of the RCTs.

ASSIST Training

The same training procedures were used across all studies from which the ASSIST data were drawn. All observers were trained to reliability and certified in the ASSIST by the same lead trainers using the same training procedures. Independent observers were contracted and completed an 8-hr didactic training, including extended video coding practice and feedback. After the didactic training, observers underwent in-school live practice with an expert ASSIST observer. Observers then completed a live, in-school reliability assessment. Observer trainees were deemed certified and reliable in the ASSIST after passing the in-school reliability assessment with 80% or higher inter-rater reliability with expert observers across student and teacher tallies. Midway through the baseline data collection for the trials, observers completed field-based or video-based reliability assessments to recalibrate and correct any rater drift. At midpoint recalibration, observers were allowed to continue observations only after again meeting or exceeding 80% reliability with an expert observer. In prior research on the ASSIST, ICCs indicated little variability across multiple observations of the same teacher over time. Specifically, ICCs of the ASSIST global scales (i.e., repeated observations of the same teacher across time) ranged from .72 to .81, with an average of .75, suggesting relatively low variability across the three observations, with most of the variance nested at the teacher level (see Gaias et al., 2019). For additional details on the training and reliability of the observers, see Debnam et al. (2015) and Pas et al. (2015).

Administration of the ASSIST

Data were collected using a handheld Samsung tablet and the Pendragon Forms Software Program. Per the ASSIST administration protocol (Rusby et al., 2011), upon entering the classroom, observers spent 3 min adjusting to the physical and social environment and recording descriptive information about the classroom. The observer then commenced with the 15-min observation window during which they kept a live tally of teacher and student behaviors. After conducting the tallies, the observers then stepped outside of the classroom and completed the global ratings. Due to logistical considerations, the timing of observations ranged from morning to afternoon across the seven projects. For additional details on the ASSIST administration procedures, see Bradshaw et al. (2018); Debnam et al. (2015); and Pas et al. (2015).

Measures

ASSIST Measure

The ASSIST is an observational measure of teachers’ classroom management strategies, which consists of the following behavioral tallies (i.e., frequency counts of specific teacher and student behaviors) and global ratings (Rusby et al., 2011).

Tallied Scales

Tallied counts of teacher practices and student behaviors included Proactive Behavior Management (“proactives”; for example, teacher explains or has students practice an expected behavior before behavior becomes a problem); Reactive Behavior Management (“reactives”; for example, teacher uses verbal or non-verbal cues to non-critically/non-punitively correct or supportively redirect behavior); Approvals (e.g., teacher gives tangible reinforcement, verbal praise, or approving gesture in response to student academic performance or behavior); Disapprovals (e.g., teacher gives tangible punitive consequence or threat in response to student behavior); Opportunities to Respond (OTRs; for example, teacher provides prompt requiring an immediate response from students); Noncompliance (e.g., student or students do not follow a directive for a behavior change); and Disruptives (e.g., student disrupts or interferes with activities of other student or the classroom, taking the teacher off-task).

Global Scales

The 42 Likert-style items were designed to be theoretically organized into seven scales focused on teachers’ classroom management practices and student classroom behavior. With the exception of the Student Socially Disruptive Behavior items, items were rated on a 5-point Likert scale (i.e., 0 = “Never”; 1 = “Seldom”; 2 = “Some of the time”; 3 = “A lot of the time”; 4 = “Almost continuously”). Items for each scale were averaged to create a scale mean. Missing data were rare. Specifically, on the global scales, there was approximately 1% missingness on each of the items, with the exception of four items that had a “Not applicable” response option. Missingness on these four items (i.e., due to “Not applicable” or non-response) ranged from 31.69% to 51.73%. A prior study by McDaniel et al. (2022) validated the factor structure of these scales. Whereas factor scores partial out measurement error, they are less amenable to practice-based use. Thus, the current study utilized scale means, which are more often used in practice settings. The five-item Teacher Direction and Influence scale assesses the establishment of classroom routines and how well the teacher influences student behavior (e.g., “Teacher has good control of or influence on students”). Higher scores indicate greater direction and influence. The four-item Teacher Proactive Behavior Support scale reflects how often teachers proactively utilize strategies that prevent disruptive behavior (e.g., “Teacher gives clear instructions and directives to students”). Higher scores indicate greater support. The four-item Teacher Monitoring scale assesses the extent to which the teacher uses strategies such as positioning and scanning to monitor student behavior (e.g., “Teacher monitors all students and all areas”). Higher scores indicate greater monitoring.
The nine-item Teacher and Student Meaningful Participation scale captures student and teacher behaviors, assessing teacher facilitation and scaffolding of student involvement as well as student behaviors that demonstrate their engagement (e.g., “Teacher encourages students to share their ideas and opinions”). Higher scores indicate greater participation. The six-item Teacher Anticipation and Responsiveness scale assesses how frequently the teacher anticipates and is responsive to various student needs (e.g., “Teacher anticipates when students may have problems academically”). Higher scores indicate greater teacher anticipation and responsiveness. The seven-item Student Cooperation scale assesses how often students comply with classroom expectations, are respectful, and cooperate (e.g., “Students are focused and engaged”). Higher scores indicate greater cooperation. The seven-item Student Socially Disruptive Behavior scale captures a range of socially disruptive behaviors, from social conversations occurring at unauthorized times, to verbal aggression toward the teacher, to peer bullying (e.g., “Students argue with peers”). Items were rated on a 5-point Likert scale, that is, 0 = “Rarely occurred (0 times)”; 1 = “Rarely occurred (1 time)”; 2 = “Occurred a few times (2–3 times)”; 3 = “Sometimes occurred (4–6 times)”; and 4 = “Often occurred (6+ times).” Higher scores indicate more frequent disruptive behavior.

Analytic Plan

Preliminary Analyses

Data preparation and analysis were conducted in IBM SPSS Statistics, Mplus, and R. Some of the research projects included three baseline assessments per teacher; for these projects, we used the sample_n function in the dplyr package (Wickham et al., 2020) in R to randomly sample one baseline observation per teacher for inclusion in the current analyses.
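The sampling step can be illustrated with a minimal sketch. The study used dplyr's sample_n in R; the Python version below is only an analogous illustration, and the field names (`teacher_id`, `cycle`) and the function name are hypothetical, not taken from the study's data files.

```python
import random
from collections import defaultdict


def sample_one_per_teacher(observations, seed=None):
    """Randomly keep one baseline observation per teacher.

    `observations` is a list of dicts; the "teacher_id" key is a
    hypothetical field name used only for this illustration.
    """
    rng = random.Random(seed)  # seeded generator for reproducibility
    by_teacher = defaultdict(list)
    for obs in observations:
        by_teacher[obs["teacher_id"]].append(obs)
    # One uniformly random draw from each teacher's observations.
    return [rng.choice(rows) for rows in by_teacher.values()]


# Toy data: teacher T1 has three baseline cycles, teacher T2 has one.
observations = [
    {"teacher_id": "T1", "cycle": 1},
    {"teacher_id": "T1", "cycle": 2},
    {"teacher_id": "T1", "cycle": 3},
    {"teacher_id": "T2", "cycle": 1},
]
sampled = sample_one_per_teacher(observations, seed=42)
```

Fixing the seed makes the draw reproducible, which matters when the sampled file feeds several downstream analyses.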

ASSIST Descriptive Statistics

To aid interpretation and serve as a reference for those interested in using the ASSIST in future applied research or practice, where scales are commonly scored as the arithmetic mean of their items, we calculated the following for each scale by classroom level (i.e., elementary, middle, and high school classrooms): Cronbach’s alpha, the ICC (based on a multilevel random effects model with teachers nested within schools; Koo & Li, 2016), and the arithmetic mean and standard deviation.
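As an illustration of two of these quantities, the sketch below computes a scale mean and Cronbach's alpha using the standard formula, alpha = k/(k − 1) × (1 − sum of item variances / variance of total scores). It is not the authors' code: the function names are hypothetical, and the handling of missing items (averaging over available items) is an assumption, since the article does not state a rule. The school-level ICC from the multilevel model is not reproduced here.

```python
from statistics import variance


def scale_mean(item_ratings):
    """Average the available item ratings for one classroom.

    Assumption for illustration: missing items (None) are skipped.
    """
    present = [r for r in item_ratings if r is not None]
    return sum(present) / len(present)


def cronbach_alpha(item_scores):
    """Cronbach's alpha for complete data.

    `item_scores` has one row per classroom and one column per item.
    """
    k = len(item_scores[0])                       # number of items
    columns = list(zip(*item_scores))             # per-item score lists
    item_var_sum = sum(variance(col) for col in columns)
    total_var = variance([sum(row) for row in item_scores])
    return (k / (k - 1)) * (1 - item_var_sum / total_var)


# Toy data: three classrooms rated on a three-item scale (0 to 4).
toy = [[0, 0, 0], [1, 1, 1], [2, 2, 2]]
alpha = cronbach_alpha(toy)           # items move together perfectly
mean_with_missing = scale_mean([3, None, 1])
```

In the toy data every item orders the classrooms identically, so alpha reaches its upper bound; real rating data will fall below that.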

Correspondence Among ASSIST Scales

To understand whether correspondence among ASSIST scales is consistent with the ASSIST’s hypothesized nomological net (Cronbach & Meehl, 1955), multilevel (teacher level and school level), bivariate correlations among the ASSIST global and tally scores were estimated in Mplus. Correlations were estimated separately for each pair of ASSIST global scales using latent variance partitioning of the two variables into their teacher-level and school-level components under robust maximum likelihood estimation (Heck & Thomas, 2020). As correlations for normally distributed variables are not relevant for count variables, the association between each tally and global scale was estimated by regressing each global scale (i.e., outcome) on each tally score (i.e., predictor) in a two-level model with a random intercept using robust maximum likelihood estimation. Both teacher-level tally scores (group mean centered) and school-level tally scores (i.e., average of all teacher scores within a school; grand mean centered) were included as predictors in each model.
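One way to write the tally regression described above is the following two-level random-intercept model. The notation is ours, introduced only for illustration: $Y_{ij}$ is the global scale score for teacher $i$ in school $j$, $X_{ij}$ is the tally score, $\bar{X}_{\cdot j}$ is the school mean of the tally, and $\bar{X}_{\cdot\cdot}$ is the grand mean.

```latex
\begin{align}
\text{Teacher level:}\quad
  Y_{ij} &= \beta_{0j} + \beta_{1}\,\bigl(X_{ij} - \bar{X}_{\cdot j}\bigr) + r_{ij} \\
\text{School level:}\quad
  \beta_{0j} &= \gamma_{00} + \gamma_{01}\,\bigl(\bar{X}_{\cdot j} - \bar{X}_{\cdot\cdot}\bigr) + u_{0j}
\end{align}
```

Under this sketch, $\beta_{1}$ captures the teacher-level (within-school) association and $\gamma_{01}$ the school-level association, with $r_{ij}$ and $u_{0j}$ as the teacher-level and school-level residuals.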

School-Level Correlates With ASSIST Global Scales

To explore school-level correlates of ASSIST global scales, a series of two-level models was fit in Mplus using robust maximum likelihood estimation. One model was estimated for each global scale, which was specified as the outcome with a random intercept. Models included no teacher-level predictors, whereas the following school demographics were specified as predictors at the school level: number of students enrolled, percentage of Black students, percentage of Latinx students, and percentage of students receiving free or reduced-price meals (all grand mean centered). These analyses were conducted to ensure that ASSIST scores were not serving as proxies for school demographics (i.e., support for discriminant validity of the scales; AERA, 2018).

Results

ASSIST Descriptive Statistics

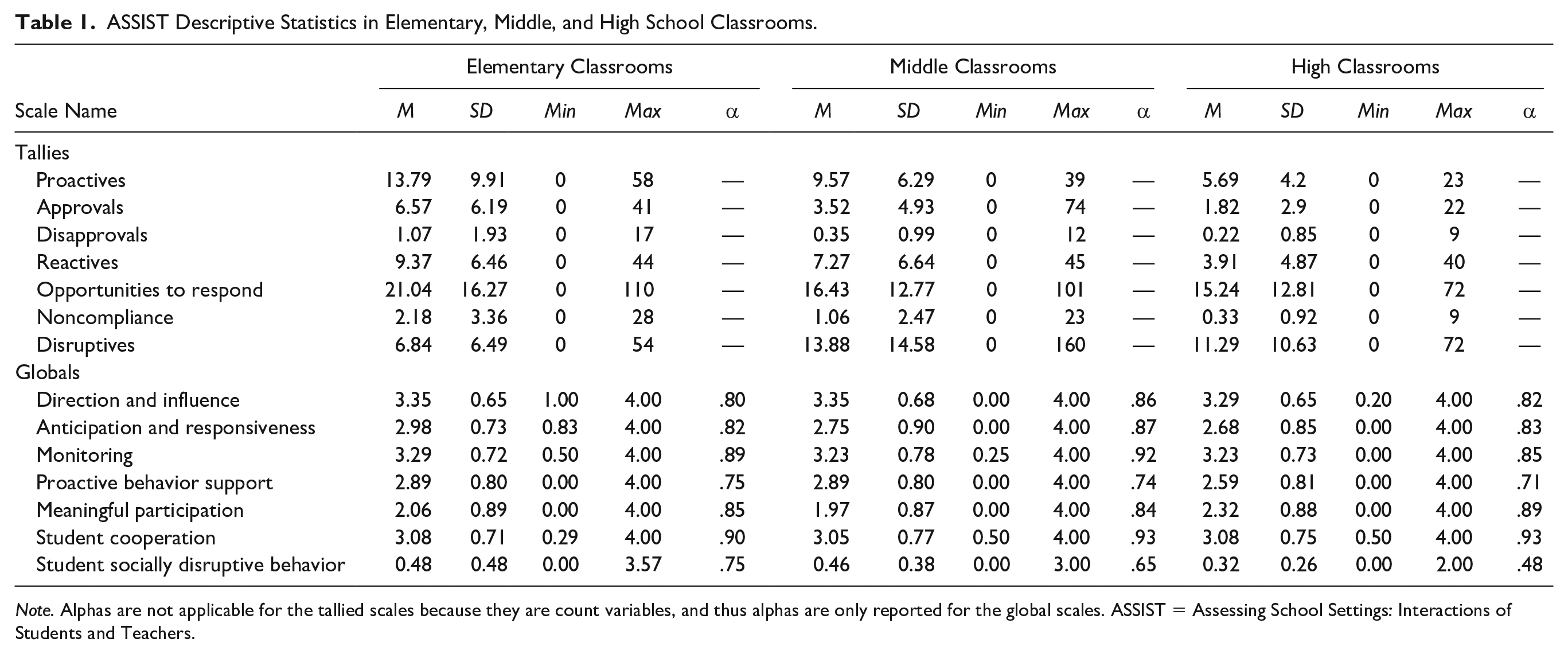

Descriptive information for the ASSIST tally and global scores is provided in Table 1 (excluding Cronbach’s α for tallies, given these are inappropriate for count data). Additional descriptive information on ASSIST global scale means is presented in online supplemental Table S1. (Note. Tables with S in front of a number are supplemental tables.)

Table 1. ASSIST Descriptive Statistics in Elementary, Middle, and High School Classrooms.

Note. Alphas are not applicable for the tallied scales because they are count variables, and thus alphas are only reported for the global scales. ASSIST = Assessing School Settings: Interactions of Students and Teachers.

Correlations Among ASSIST Scales

Bivariate multilevel correlations among the ASSIST global scales are presented in Table S1. All statistically significant teacher-level correlations were in the expected direction, as were the school-level correlations with the exception of the correlation between Teacher Monitoring and Student Socially Disruptive Behavior (r = .811, p < .001) for elementary schools. This correlation suggests that as elementary school-level Teacher Monitoring increases, there is a corresponding school-level increase in Student Socially Disruptive Behavior. The standardized bivariate associations among each tally and global scale are presented in online supplemental Table S2.

Associations Between ASSIST Global Scales and School Demographics

The standardized results of analyses exploring the association between the school-level demographic variables and the ASSIST global scales are presented in Table S3. As anticipated, there were very few significant associations between the school-level demographic characteristics and the ASSIST global scores; only two associations were significant at the p < .01 level. Specifically, elementary schools with a greater percentage of Latinx students demonstrated lower levels of school-level Student Cooperation (β = −.405, p < .01), and middle schools with a greater percentage of students eligible for free or reduced-price meals demonstrated higher levels of school-level Student Socially Disruptive Behavior (β = .574, p < .01). This suggests that the ASSIST scores contribute information beyond school demographics.

Study 2: Within-Teacher Reliability and Convergent Validity With the CLASS-S

Sample

Data for Study 2 were drawn from baseline assessments from an RCT in 335 middle school classrooms nested within 41 middle schools in four primarily urban and urban fringe school districts in the Mid-Atlantic region of the United States (see Pas et al., 2022). Classrooms were recruited across four consecutive years; the schools served a relatively high proportion of Black students (M = 63%; range: 4%–99%) and Hispanic/Latinx students (M = 11%; range: 0%–75%) compared with national averages. The proportion of students qualifying for free or reduced-price meals varied across schools (M = 58%; range: 4%–94%). Participating teachers’ self-reported demographics indicated that a vast majority were general education teachers (97.2%), with the others in special education, paraprofessional, or assistant roles. Most teachers taught sixth grade (38.8%), seventh grade (29.4%), or multiple grades (24.8%). Relatively few (7.0%) taught eighth grade, by design: the larger evaluation was multi-year, and enrolling eighth-grade teachers meant their students would not be as easily available for the follow-up year. The majority of teachers identified as female (76.8%), and most identified as either White (53.3%) or Black (35.2%). Table S4 provides more detail on Study 2 teacher characteristics.

Procedure

Participation was voluntary and the project was approved by the IRB. Largely, the same ASSIST training and administration procedures as described for Study 1 were used for this study, with two exceptions. First, whereas Study 1 utilized only one ASSIST cycle per teacher, Study 2 analyzed approximately three ASSIST cycles and approximately three Classroom Assessment Scoring System–Secondary (CLASS-S®; Allen et al., 2013) cycles per teacher, all collected at baseline. Second, in addition to completing ASSIST training as described under Study 1, observers in this study were also certified in the CLASS-S, with training provided by Teachstone. Specifically, after conducting each ASSIST tally observation and completing the ASSIST global rating scales, observers re-entered the classroom to conduct CLASS-S observations. However, due to some logistical and scheduling challenges in conducting observations within school contexts, not all teachers were observed for exactly three cycles with each measure. There were a total of 952 observation cycles (2.84 cycles of ASSIST and CLASS-S observations per teacher).

Measures

ASSIST Measure

The same global ratings and tallied scales included in Study 1 were used in Study 2 (see Study 1 Measures). On average, each ASSIST item had less than 4% missing data.

CLASS-S Measure

The CLASS-S focuses on the quality of interactions among teachers and students, considering both teacher and student behaviors to assess quality. In accordance with the measure’s framework, classroom interactions were rated based on 10 dimensions of quality on a 7-point scale (1 = low; 7 = high). Domains of interaction quality were calculated by averaging specific dimensions; these were Emotional Support (i.e., Positive Climate, Teacher Sensitivity, Regard for Student Perspectives), Classroom Organization (i.e., Behavior Management, Productivity, and Negative Climate-reversed), and Instructional Support (i.e., Instructional Learning Formats, Content Understanding, Analysis and Inquiry, Quality of Feedback, and Instructional Dialogue). Student Engagement focuses primarily on behavioral dimensions of engagement and stands alone as a single dimension.

Analytic Plan

Within-Teacher Reliability

To assess the reliability of the ASSIST measures across observation occasions, one-way random effects intraclass correlation coefficients, that is, ICC(1, k), were calculated. These differ from the school-level nesting ICCs calculated in Study 1. ICCs less than .50 were considered poor, ICCs between .50 and .75 were considered moderate, ICCs between .75 and .90 were considered good, and ICCs above .90 were considered excellent (Koo & Li, 2016).
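For readers unfamiliar with this coefficient, the sketch below computes ICC(1, k) from one-way ANOVA mean squares, ICC(1, k) = (MSB − MSW) / MSB, and applies the Koo and Li (2016) labels. It is an illustration rather than the authors' procedure: it assumes a balanced design (every teacher observed exactly k times, which was not strictly the case in Study 2), the function names are hypothetical, and the treatment of values falling exactly on .75 or .90 is our assumption.

```python
def icc_1k(ratings):
    """One-way random effects, average-measures ICC, i.e., ICC(1, k).

    `ratings` has one row per teacher and k scores per row
    (balanced design assumed for this illustration).
    """
    n = len(ratings)
    k = len(ratings[0])
    grand = sum(sum(row) for row in ratings) / (n * k)
    row_means = [sum(row) / k for row in ratings]
    # Between-teacher and within-teacher mean squares.
    ms_between = k * sum((m - grand) ** 2 for m in row_means) / (n - 1)
    ms_within = sum(
        (x - m) ** 2 for row, m in zip(ratings, row_means) for x in row
    ) / (n * (k - 1))
    return (ms_between - ms_within) / ms_between


def reliability_label(icc):
    """Koo & Li (2016) labels; boundary handling is an assumption."""
    if icc < 0.50:
        return "poor"
    if icc < 0.75:
        return "moderate"
    if icc <= 0.90:
        return "good"
    return "excellent"


# Toy data: three teachers, three observation cycles each.
toy = [[1, 2, 3], [2, 3, 4], [3, 4, 5]]
toy_icc = icc_1k(toy)
toy_label = reliability_label(toy_icc)
```

Because ICC(1, k) describes the reliability of the average of k observations, it will be higher than the single-observation ICC(1, 1) computed from the same data.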

Convergent Validity: Relations With CLASS-S

To explore how ASSIST scales and CLASS domains were related, three-level bivariate associations were examined in Mplus Version 8, with measurement occasions (Level 1) nested within teachers (Level 2) nested within schools (Level 3). For ASSIST Global scales, which are continuous measures, bivariate correlations were modeled separately for each pair of ASSIST scale and CLASS domain. As with the analysis in Study 1 focused on correspondence among ASSIST scales, this analysis used latent partitioning of teacher-level and school-level variance of the two variables. The robust maximum likelihood estimator was used to account for multivariate non-normality. Standardized covariances (i.e., correlations) were the parameter of interest.

As in Study 1, relations for the ASSIST Tally scales were examined utilizing regression. Each CLASS domain was regressed on each ASSIST Tally scale, separately in a three-level model. At the occasion level (Level 1), the ASSIST Tally scales were centered within teacher; at the between-teacher level (Level 2), ASSIST Tally scales were averaged and then centered within their school; at the between-school level (Level 3), ASSIST Tally scales were averaged and grand mean centered. This centering approach ensured that associations were examined separately at each level. Standardized regression coefficients were the parameter of interest.
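The centering scheme described above can be sketched in a few lines of Python. This is a hedged illustration only: the record layout, the function name, and the toy data are hypothetical, and two details the text does not specify are assumed here, namely that school means are averages of teacher means and that the grand mean is the average of school means.

```python
from collections import defaultdict
from statistics import mean


def three_level_center(records):
    """Split each tally into within-teacher, between-teacher, and between-school parts.

    records: list of (school_id, teacher_id, tally) tuples, one per occasion.
    """
    by_teacher = defaultdict(list)
    for school, teacher, tally in records:
        by_teacher[(school, teacher)].append(tally)
    teacher_mean = {key: mean(values) for key, values in by_teacher.items()}

    # Assumption: school means are computed from teacher means.
    by_school = defaultdict(list)
    for (school, _teacher), value in teacher_mean.items():
        by_school[school].append(value)
    school_mean = {school: mean(values) for school, values in by_school.items()}

    # Assumption: the grand mean is the mean of the school means.
    grand_mean = mean(list(school_mean.values()))

    centered = []
    for school, teacher, tally in records:
        t_mean = teacher_mean[(school, teacher)]
        s_mean = school_mean[school]
        centered.append({
            "within_teacher": tally - t_mean,    # Level 1: centered within teacher
            "between_teacher": t_mean - s_mean,  # Level 2: centered within school
            "between_school": s_mean - grand_mean,  # Level 3: grand mean centered
        })
    return centered, grand_mean


# Hypothetical occasions: two teachers in school A, one teacher in school B.
toy_records = [("A", "t1", 2), ("A", "t1", 4), ("A", "t2", 6), ("B", "t3", 10)]
parts, grand = three_level_center(toy_records)
print(parts[0], grand)
```

Because the three components plus the grand mean add back up to the original tally, each regression coefficient reflects the association at its own level only.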

Results

Table S5 provides sample descriptive statistics for the ASSIST global scales, ASSIST tally scores, and CLASS domains, including sample size, mean, standard deviation, median, minimum, and maximum.

ASSIST Within-Teacher Reliability

Table S5 also provides ICCs for the ASSIST global scales, ASSIST tally scores, and CLASS domains. Three of the ASSIST global scales exhibited moderate reliability: Proactive Behavior Management, ICC(1, k) = .72; Teacher-Student Meaningful Engagement, ICC(1, k) = .73; and Student Socially Disruptive Behavior, ICC(1, k) = .69. The other four exhibited good reliability: Direction and Influence, ICC(1, k) = .81; Anticipation and Responsiveness, ICC(1, k) = .77; Monitoring, ICC(1, k) = .82; and Student Cooperation, ICC(1, k) = .81. Among ASSIST Tally scales, one exhibited poor reliability (Disapprovals, ICC(1, k) = .24); five exhibited moderate reliability (Proactives, ICC(1, k) = .54; Approvals, ICC(1, k) = .66; Reactives, ICC(1, k) = .65; OTRs, ICC(1, k) = .65; and Noncompliance, ICC(1, k) = .74); and one exhibited good reliability (Disruptives, ICC(1, k) = .80). All three CLASS Domains, as well as the Student Engagement dimension, exhibited moderate reliability, with ICC(1, k) ranging from .69 to .74.

Convergent Validity: Relations Between ASSIST and CLASS-S

Table S6 provides correlations estimated at three levels for the CLASS Domains and ASSIST Global scales. Correlations were all in the expected direction (i.e., all positive, with the exception of correlations for Student Socially Disruptive Behavior) and were generally weakest at the within-teacher level (Level 1) and strongest at the between-teacher level (Level 2). At the within-teacher level (Level 1), correlations ranged in magnitude from |r| = .12 to .39 (average |r| = .21) and were significant at the p < .001 level for 16 (57%) out of the 28 correlation coefficients estimated. At the between-teacher level (Level 2), correlations ranged in magnitude from |r| = .62 to .99 (average |r| = .87) and were significant at the p < .001 level for all (100%) of the 28 correlation coefficients estimated. At the between-school level (Level 3), correlations ranged in magnitude from |r| = .10 to 1.00 (average |r| = .64) and were significant at the p < .001 level for 21 (75%) out of the 28 correlation coefficients estimated.

Table S7 provides standardized regression coefficients for models regressing each CLASS domain on each ASSIST Tally scale. Coefficients were all in the expected direction (i.e., positive for Proactives, Approvals, and OTRs; negative for Disapprovals, Reactives, Noncompliance, and Disruptives) and were weakest at the within-teacher level (Level 1). Coefficients were generally largest in magnitude at the school level (Level 3) but most often significant at p < .001 at the teacher level (Level 2). At the within-teacher level (Level 1), coefficients ranged in magnitude from |B| = 0.01 to 0.20 (average |B| = 0.08) and were significant at the p < .001 level for 4 (14%) out of the 28 coefficients estimated. At the between-teacher level (Level 2), coefficients ranged in magnitude from |B| = 0.19 to 0.68 (average |B| = 0.38) and were significant at the p < .001 level for 21 (75%) out of the 28 coefficients estimated. At the between-school level (Level 3), coefficients ranged in magnitude from |B| = 0.09 to 0.75 (average |B| = 0.45) and were significant at the p < .001 level for 14 (50%) out of the 28 coefficients estimated.

Discussion

Effective classroom management is critical to fostering a positive and equitable classroom context for students’ academic, social, and emotional learning. The ASSIST’s observational nature, combination of global assessments and discrete assessments of concrete practices, and measurement of both the proactive and reactive strategies known to be the cornerstones of classroom management make it particularly useful in intervention research and practice. Prior studies also suggest that it is sensitive to intervention effects (e.g., Bradshaw et al., 2018, 2021), further supporting its use by intervention researchers and practitioners. The current study aimed to advance prior work on the ASSIST by providing further evidence of its reliability and validity through a series of two studies, spanning elementary through high schools. Below, we consider the main takeaways and implications for research and practice.

ASSIST Scale Correspondence and Reliability

The scale scores of the ASSIST demonstrate adequate reliability and correspondence, indicating that the items for each subscale function well as a group and that the scales correspond as hypothesized via the nomological net. These findings, in conjunction with previous research confirming the factor structure of the ASSIST (McDaniel et al., 2022), provide further evidence that the ASSIST demonstrates adequate internal consistency reliability, internal subscale correspondence, and structural validity for use in research studies conducted across elementary, middle, and high schools. Few other observational tools span such a wide range of use across multiple grade levels, making the ASSIST particularly versatile for large-scale studies. The means and standard deviations reported here also provide descriptive information about the relative frequency of these classroom management practices, indicating, for example, that classrooms are generally observed to exhibit higher levels of teacher Monitoring than Meaningful Participation. Modest, but not exceptionally high, correlations among ASSIST scales provide evidence that the scales are related in hypothesized directions and indicate that the scales do, as expected, measure distinct constructs.

Furthermore, Study 2 demonstrates that most of the ASSIST tallies and scales exhibit moderate to good within-teacher reliability across multiple observations. One exception is the teacher tally of Disapprovals. These findings indicate that ASSIST scores are relatively stable within teachers when the measure is administered over the course of multiple days, adding to the confidence that, although scores vary somewhat across observation cycles, they reflect a teacher’s underlying tendency to use particular practices. Generally speaking, the tally scales exhibited lower reliability than the global scales, which is largely expected, as frequency-based scales are known to fluctuate more widely across time than more holistic scales (Stuhlman et al., 2014). The lower reliability for tallied Disapprovals may indicate that this behavior is more context dependent, which is reasonable given that, by definition, Disapprovals are tangible, punitive responses to student misbehavior (e.g., office discipline referrals). Consistent with this notion, Disapprovals were the least frequently observed behavior among the teacher tallies, with a relatively restricted range and a median score of zero, indicating they were more isolated actions.

ASSIST at the School Level

Assessments of the amount of variance in the measure due to school-level differences were also informative. The ICCs varied slightly by school level, ranging from .09 to .22 for elementary, .19 to .31 for middle, and .12 to .41 for high school. These ICCs suggest that if the ASSIST is used for research across multiple schools, particularly at the secondary level, multilevel modeling should be used to account for the nested nature of these data. With regard to the correlations with school-level characteristics, relatively few significant effects emerged. In fact, just two effects out of 35 tests were significant at p < .01, and the two that were significant were for student behaviors, not teacher practices. Although additional research is needed, this suggests that the ASSIST is not systematically linked to school demographic characteristics.
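To illustrate why ICCs of this size call for multilevel modeling, the sketch below applies the standard design effect approximation, 1 + (m - 1) × ICC, where m is the average number of classrooms per school. The design effect is not reported in this article; it is used here only as a familiar way to show how clustering shrinks the effective sample size. The cluster size of 18 is a hypothetical value, chosen to be close to the overall Study 1 average (3,298 classrooms in 185 schools), and the level-specific cluster sizes are not known from the text.

```python
def design_effect(icc, cluster_size):
    """Approximate variance inflation from clustering: 1 + (m - 1) * ICC."""
    return 1 + (cluster_size - 1) * icc


def effective_sample_size(n_total, icc, cluster_size):
    """Number of independent observations that n_total clustered ones are worth."""
    return n_total / design_effect(icc, cluster_size)


# Lowest and highest school-level ICCs quoted above; m = 18 is an assumption.
for icc in (0.09, 0.41):
    deff = design_effect(icc, 18)
    print(f"ICC = {icc:.2f}: design effect = {deff:.2f}")
```

Under these assumptions, even the smallest reported ICC more than doubles the sampling variance relative to a simple random sample of classrooms, which is why analyses that ignore the nesting would tend to understate standard errors.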

Convergent Validity: ASSIST and CLASS

As expected, the ASSIST global ratings and tallies were strongly correlated with all domains of the CLASS-S, with correlations strongest at the teacher level and school level, and slightly weaker for Instructional Support compared with other CLASS-S domains. These findings are aligned with the design of the ASSIST as a measure of teacher practice and behaviors at the classroom level, as opposed to capturing immediate context-specific information at a specific point in time. It is perhaps not surprising that most within-teacher correlations were not significant at the p < .001 level between the tally scales and CLASS-S domains, given the tallies may be capturing more context-specific (occasion-specific) variance than either the ASSIST global scales or the CLASS-S measures. Furthermore, the weaker relations with CLASS-S Instructional Support are consistent with the conceptualization of the ASSIST as a measure of teachers’ classroom management, rather than instructional practices. Together, the results are consistent with what would be expected for a measure designed to capture teacher-level practices for managing classrooms and engaging students.

Strengths and Potential Limitations of the ASSIST

The ASSIST is a particularly nimble tool for observing teacher practices and student behaviors. First, few existing measures include both assessments of discrete behaviors and a global assessment of the classroom environment. The ability to have a raw tally of the performed behaviors has important implications for providing specific feedback to teachers to improve practice. At the same time, a global assessment of the observational period provides a window into the classroom climate and culture. Second, the discrete teacher practices tallied capture both positive, proactive strategies (i.e., Proactives and Approvals) and reactive strategies (i.e., Reactives and Disapprovals). It is rare that observational measures attempt to capture behaviors that might be considered negative in the classroom. Moreover, the ASSIST provides an assessment of some student behaviors in the classroom. Generating ratios of teacher to student behaviors using the tallies can be an eye-opening practice for teachers to better understand the effects of their classroom management practices on students. For example, contrast the rate of OTRs to Disruptives in elementary classrooms (21.04 to 6.84) to high school classrooms (15.24 to 11.29) within a 15-min observation period (see Table 1). Such data can also be helpful in calculating the cumulative amount of disruptions or time spent on discipline, as a basic cost analysis, thereby indicating the return on investment in implementing positive behavior supports.
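The contrast in the example above can be made concrete with a short calculation. The sketch below uses only the four tally values quoted in the text and the 15-min observation window; the variable and function names are illustrative.

```python
OBSERVATION_MINUTES = 15  # tally window stated in the text

# OTR and Disruptive tallies quoted above for each school level.
tallies = {
    "elementary": {"otrs": 21.04, "disruptives": 6.84},
    "high school": {"otrs": 15.24, "disruptives": 11.29},
}


def otr_to_disruptive_ratio(counts):
    """OTRs observed per student disruptive behavior."""
    return counts["otrs"] / counts["disruptives"]


def per_minute(count, minutes=OBSERVATION_MINUTES):
    """Convert a tally over the observation window into a per-minute rate."""
    return count / minutes


for level, counts in tallies.items():
    ratio = otr_to_disruptive_ratio(counts)
    rate = per_minute(counts["disruptives"])
    print(f"{level}: {ratio:.2f} OTRs per disruptive, {rate:.2f} disruptives per minute")
```

In these figures, elementary classrooms show roughly three OTRs for every disruptive behavior (about 3.08), whereas high school classrooms show closer to one and a third (about 1.35).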

Despite these and other potential benefits, there are some notable limitations of the ASSIST. Given the short tally window of the observation, observers are not able to capture teacher and student interactions for a full class period or lesson. Although increasing the tally window might sound advantageous, practically, it could overload the cognitive capacity of observers. As a result, the 15-min tally window provides only a brief snapshot of the classroom. The ASSIST is also not designed to assess or track individual student behavior. As such, it is impossible to determine whether the tallied student behaviors were being performed by one student, a group of students, or the entire classroom. Furthermore, the quality of the tallied teacher behavior is challenging to ascertain with the ASSIST. The tally indicates that a practice occurred, but it does not provide information about the teacher’s tone and delivery. There are also some limitations of this study. For example, in the rare instance when an observer drifted below 80% reliability, it was not feasible to remove all their prior data or determine exactly where in time the drift occurred. We did not have another measure to assess convergent validity beyond the CLASS-S. In addition, some of the data sources included only one ASSIST observation per teacher, which limited our ability to examine within-teacher ICCs. Despite these and other limitations, there are several strengths, including the overall scale and scope of the expansive data collection.

Implications for Research

Careful consideration should be given to the assumptions made regarding the handling of the ASSIST tally scales. Because the tally scales are count variables, many studies by our team (e.g., Bradshaw et al., 2018) have utilized the Poisson and/or negative binomial distribution when modeling them as outcome variables. Depending on the sample, however, the distribution of certain tally scales has been wide enough in range and sufficiently limited in skew that, at times, we have determined some to be more appropriately characterized by a normal distribution (especially if/when averaging tally counts across multiple observations). We do not anticipate these tallies being used in practice in a way that explicitly tests for or delineates distributional assumptions between tally and global scales; as the purpose of this article was to guide practice, we presented the same within-teacher reliability metrics for the tally scales as for the global scales (analysis of variance [ANOVA]-based metrics, which are appropriate for continuous and normally distributed variables). In examining relations between the ASSIST globals and the CLASS domains, we did not make explicit assumptions about the distribution of the scales; however, there should be further work to better understand the best way to analyze the tallies in light of the distributional characteristics exhibited by each of the scales (Bradshaw et al., 2018).
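One simple descriptive check that can inform the choice between a Poisson and a negative binomial model is the ratio of the sample variance to the mean, which is about 1 for Poisson-distributed counts and larger when counts are overdispersed. The sketch below is a heuristic offered for illustration, not the procedure used by the authors; the tallies are hypothetical and the function name is an assumption.

```python
from statistics import mean, variance


def dispersion_index(counts):
    """Sample variance divided by the mean; about 1 under a Poisson model."""
    m = mean(counts)
    if m == 0:
        raise ValueError("dispersion index is undefined when every count is zero")
    return variance(counts) / m


# Hypothetical tallies for one scale (not study data): mostly zeros, a few spikes.
hypothetical_tallies = [0, 0, 0, 1, 0, 2, 0, 0, 9, 0]
index = dispersion_index(hypothetical_tallies)
print(f"variance-to-mean ratio = {index:.2f}")
```

A ratio well above 1, as in this toy example, points toward a negative binomial model, whereas a ratio near 1 is compatible with a Poisson model. Formal model comparison would still be needed before settling on a distribution.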

We also examined the reliability and validity of the global mean scores, as we anticipated these would be straightforward to use in practice (e.g., in comparison to factor analysis or item response theory–derived scores). However, a limitation of such an approach is that there is no partitioning of true score and error variance, as all measurement error is retained in the mean score. In addition, mean scores assume that each item on a scale contributes to the latent construct in a similar manner, which may not be the case. Some items may be more or less indicative of the construct being measured. Scores derived from factor analysis or item response theory overcome these challenges by partitioning true score and error variance, weighting items that are more or less indicative of the latent construct, and accounting for differential item functioning by groups or settings (McDaniel et al., 2022). In fact, a recent study by McDaniel et al. (2022) examined the validity of global scale factor means and demonstrated partial invariance across elementary, middle, and high school classrooms. Without appropriately accounting for this differential item functioning, we cannot directly compare across these settings (Byrne et al., 1989; Vandenberg & Lance, 2000). As such, in the current article, we present the pattern of results within elementary, middle, and high school classrooms without comparison between these settings. Future research should consider how to translate differential item functioning-adjusted factor scores, or related scoring procedures, for use in practice. However, based on the present results, the global means and tallies demonstrate promising reliability and validity.

Implications for Practice

Coaching to improve teacher practices and student outcomes has increasingly gained traction in research as an evidence-based intervention (e.g., Pas et al., 2023; Reinke et al., 2015). One core feature of evidence-based coaching models is the use of observation to generate and provide data-based performance feedback to the teacher (see Pas et al., 2023). Notably, the observations that coaches use vary across models, though many observations overlap with the content assessed by the ASSIST. With its emphasis on both teacher and student behaviors, spanning teacher practices targeting social and academic behaviors (e.g., proactive behavior expectations and opportunities to respond), and with data collected both discretely (tallies) and more generally (global ratings), the ASSIST could be integrated into multiple coaching models.

Conclusions and Future Directions

The ASSIST is a particularly helpful classroom observational tool for research and practice, given that it is nimble and includes a combination of global assessments and tallied behaviors. It also captures both proactive and reactive classroom strategies used by teachers, as well as an assessment of student behaviors. This article provides an overview of the descriptive properties, reliability, and validity of the ASSIST measure scores, along with practical considerations for those interested in using the measure in research and practice. The ASSIST was also designed to be a relatively low-burden tool for both research and practice, as most assessors reached reliability within a day or two of training. It is also relatively low cost and easy to use and can be used across elementary, middle, and high schools, making it efficient and economical for researchers and practitioners. An interactive, online training is currently under construction to meet the growing demand for the ASSIST among both researchers and practitioners and to further facilitate its scale-up.

More recent versions of the ASSIST have included scales regarding teachers’ use of equitable or culturally responsive classroom practices. One such version included a seven-item global rating scale of culturally responsive practices (CRP). In analyses, teachers self-reported higher rates of CRP than observers reported, suggesting there may be important differences between teachers’ and observers’ perceptions of teachers’ CRP use. In addition, significant associations between observations of CRP and teachers’ self-efficacy ratings (Debnam et al., 2015) suggest the initial promise of the measure. As such, our research team subsequently developed a full observational measure assessing teacher CRP and extending it to include equitable and culturally sustaining practices, building from this initial ASSIST global scale. This new measure was designed to assess five core components:

Supplemental Material

Supplemental material, sj-docx-1-aei-10.1177_15345084231187087 for Examining the Psychometrics and Characteristics of the Assessing School Settings: Interactions of Students and Teachers by Catherine P. Bradshaw, Heather L. McDaniel, Chelsea A. Kaihoi, Summer S. Braun, Elise T. Pas, Jessika H. Bottiani, Anne H. Cash and Katrina J. Debnam in Assessment for Effective Intervention

Footnotes

Acknowledgements

The authors thank Dr. Julie Rusby who provided generous support and consultation to our research team during the early stages of the development and adaptation of the ASSIST. Additional thanks go to our many ASSIST project collaborators, including Drs. Sarah Lindstrom Johnson, Patrick Tolan, and Alexis Harris.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The research reported here was supported by the Institute of Education Sciences under award numbers R324A110107 and R305A150221, the National Institute of Justice under award numbers 2014-CK-BX-005 and 2015-CK-BX-008, and the U.S. Department of Education and Spencer Foundation, awarded to Catherine Bradshaw at the University of Virginia and Johns Hopkins University. Additional support was provided by the Robert Wood Johnson Foundation, awarded to Patrick Tolan at the University of Virginia. The opinions expressed are those of the authors and do not represent views of the funders.

Supplemental Material

Supplemental material for this article is available on the Assessment for Effective Intervention website with the online version of this article.
