Abstract

The literature has identified data-based problem-solving as an essential element in promoting positive behavioral interventions and supports (PBIS) within the classroom. The Components of a Successful Classroom (CSC) tool is an instrument that has undergone initial validation to support its use for measuring critical features of Tier 1. This study provides initial support for the validation of this classroom management observation tool as part of a problem-solving consultation framework by examining the extent to which items on the CSC tool sample the constructs of interest in classroom management. Behavioral consultants used the tool to evaluate Tier 1 behavior management strategies implemented by teachers in the classroom. An exploratory factor analysis (EFA) was conducted to determine the factor structure of the classroom management observation tool. Findings of the EFA supported a unidimensional construct best measured with three factors (i.e., preventive supports, feedback provision and engagement, and expectations and consequences). Currently, this tool may be useful to practitioners by guiding general education consultation after identifying main concerns in teachers’ implementation of Tier 1 supports and by supporting implementation of PBIS practices in the classroom.

Implementation of a positive behavioral interventions and supports (PBIS) framework within the classroom environment is essential, especially the use of data-based problem-solving (Coffey & Horner, 2012; McIntosh et al., 2011). Positive classroom behavior support (PCBS) practices have been shown to benefit students both behaviorally and academically, including increased appropriate student behavior, decreased challenging behavior, and improved academic outcomes (Center on PBIS, 2021). Simonsen and colleagues (2021) highlighted foundational, positive classroom practices implemented within a multitiered system of support. Specifically, Tier 1 PCBS practices focus on the development and teaching of classroom routines, connecting with students, adoption of school expectations for the classroom, delivery of prompts and precorrections, active classroom supervision, engaging students in effective and explicit academic instruction, and providing high ratios of positive to corrective feedback. This article focuses on Tier 1 as these foundational supports relate to the classroom environment.

While Tier 1 strategies are relatively easy for teachers to employ (MacSuga-Gage & Simonsen, 2015) and demonstrate a positive impact on student behavioral and academic outcomes (OSEP, 2015), some of these strategies are often missing or implemented at lower levels within the classroom (Reinke et al., 2013). This suggests that teachers may need additional support in identifying deficits in their PCBS implementation. Simonsen et al. (2019) argued for a threefold approach to supporting student learning and classroom behavior: (a) implementation of core PCBS practices, (b) school leadership teams supporting teachers’ implementation, and (c) teachers and school leadership actively using data to monitor and modify supports.

Simonsen and colleagues (2020) highlighted the need to utilize a brief and validated measure of PCBS strategies to guide professional development. They emphasized that available measures are either simple but not psychometrically validated or psychometrically validated but too complex to be sustainably implemented. Given what we know about the critical components that support teachers’ and students’ achievement of desired outcomes, it is essential to develop and validate observation tools that can be easily implemented by school-based observers. A psychometrically sound and simple measure will enhance feasibility and approachability, thus leading to greater usage of data-driven measures by school personnel to assess utilization and implementation of PCBS strategies.

Because the Components of a Successful Classroom (CSC; Fischer et al., 2019) was created based on the aforementioned foundational Tier 1 guidelines of Simonsen and colleagues (2019, 2020, 2021), their work serves as the theoretical framework for this study. Furthermore, because the current study begins the validation of the CSC measure, Kane’s (2013) four-pillared validity framework for an interpretive argument served as a second reference framework. The four pillars are scoring, generalization, extrapolation, and decisions. The general framework consists of a two-step process for each pillar: first, the possible interpretations and uses of the data are determined and critically evaluated; second, the proposed interpretations are analyzed to assess the plausibility of the arguments.

Purpose and Research Questions

Following Kane’s framework, this study provides support for the initial validation of a classroom management observation tool as part of a problem-solving consultation framework with special interest in the extent to which items on the CSC tool adequately sample the constructs of interest in classroom management. To address the initial validation of the tool, the following research question was addressed: What is the factor structure of the CSC and what items are retained following an exploratory factor analysis (EFA)?

Method

Participants and Setting

Elementary school teachers (i.e., kindergarten to sixth grade) from a large school district in the Mountain West region of the United States participated in this study. The elementary schools are part of a public school district that participated in an extensive PBIS collaboration with an institute of higher education called the Behavior Response Support Team (BRST). The study used data from a total of 47 teachers across three elementary schools. Observations were conducted, and data collected, by graduate-level school psychology students who also served as behavioral consultants.

Measure

Behavioral consultants used the CSC (Fischer et al., 2019) to support data-based problem-solving and evaluate Tier 1 behavior management strategies implemented by teachers in the classroom during a 60-min observation of whole group instruction. The CSC consists of 15 items, each rated from 0 up to a maximum of 1, 2, or 3 points, for a possible total of 42 points. Observers evaluated classroom rules, praise, positive-to-negative statement ratio, attention signals, behavior tracking, opportunities to respond, and class-wide on-task behavior.
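As a minimal sketch of how one observation might be totaled, the Python snippet below validates 15 item ratings and sums them. The split of item maxima (twelve 3-point items and three 2-point items) is an assumption chosen only so that the total equals 42; the source does not report which items carry which maximum, and the example ratings are made up.

```python
# Hypothetical item maxima: the source reports 15 items worth up to 1, 2, or 3
# points (42 points total) but not which item has which maximum.
ITEM_MAX = [3] * 12 + [2] * 3  # assumed split; sums to 42


def csc_total(ratings):
    """Validate one observation's item ratings and return (total, percent)."""
    if len(ratings) != len(ITEM_MAX):
        raise ValueError(f"expected {len(ITEM_MAX)} ratings, got {len(ratings)}")
    for i, (rating, maximum) in enumerate(zip(ratings, ITEM_MAX), start=1):
        if not isinstance(rating, int) or not 0 <= rating <= maximum:
            raise ValueError(f"item {i}: rating {rating!r} outside 0..{maximum}")
    total = sum(ratings)
    return total, 100.0 * total / sum(ITEM_MAX)


# Made-up ratings for a single classroom observation.
example = [3, 2, 3, 1, 0, 3, 2, 2, 3, 3, 1, 2, 2, 1, 0]
total, pct = csc_total(example)
print(f"total = {total} of {sum(ITEM_MAX)} ({pct:.1f}%)")
```

Rejecting out-of-range ratings at entry keeps scoring errors from propagating into any later item-level analysis.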

Procedures and Analyses

A literature review on PBIS and effective teaching practices was conducted. Three experts in school PBIS collaborated to determine items to be included in the current iteration of the CSC tool based on literature review findings and the Tier 1 foundations highlighted by Simonsen and colleagues (2021). A behavioral consultation program then provided monitoring, coaching, and training to school-based teams through a problem-solving consultation framework. To systematically assess need, consultants utilized the CSC to observe all general education classrooms once at the beginning of the school year, providing feedback to teachers after their observation and Tier 1 consultation to specified teachers. Based on Kane’s (2013) scoring pillar, a CSC cutoff score was selected to determine which teachers would receive further support through consultation services. Teachers who received consultation were observed a second time at the end of the school year. For teachers with multiple observations, both observations were included in the final analysis. Team members from this consultation program met weekly to discuss specific cases as well as the use of the CSC. The meetings provided an opportunity for debrief and discussion of consistency of results. When certain items on the CSC were unclear or ambiguous, the team, including the experts in school PBIS, refined the items to ensure consistency across consultants using the observation tool. While Simonsen and colleagues’ (2019, 2020, 2021) Tier 1 guidelines served to highlight positive classroom practices and determine the potential constructs being measured, additional information was needed to determine general commonalities in the items observed. An EFA was then conducted to determine the factor structure of the classroom management observation tool utilizing observations of teachers’ classroom management.

Guided by Kane’s generalization pillar, and using EFA methods, the produced correlation matrices were analyzed to improve item reliability. As this study was exploratory in nature, principal axis factoring (PAF) was chosen over principal component analysis, as it is considered more suitable for assessing a construct that cannot be directly measured (i.e., CSC; Kahn, 2006). Prior to performing PAF, the data were examined for suitability for factor analysis: the Kaiser–Meyer–Olkin value exceeded the recommended value of 0.6 (Tabachnick & Fidell, 2013) and Bartlett’s test of sphericity reached statistical significance, thus supporting factor extraction. When deciding factor retention, the authors followed the recommendation that multiple techniques be used (Fabrigar et al., 1999). Retention tests included Kaiser’s criterion (eigenvalues greater than 1.0), Cattell’s scree test, and the authors’ judgment as to whether the extracted factors were theoretically plausible. All retention rules were observed prior to proceeding with each extraction.
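The two suitability checks named above can be sketched in plain Python. This is an illustration of the standard formulas rather than the authors' analysis: the 3 x 3 correlation matrix and the sample size of 50 are made up, and the helper names are hypothetical. The p-value for Bartlett's statistic is left out because it requires a chi-square distribution function (for example, `scipy.stats.chi2.sf`).

```python
import math


def invert_with_det(matrix):
    """Gauss-Jordan elimination with partial pivoting: (inverse, determinant)."""
    n = len(matrix)
    aug = [list(map(float, row)) + [float(i == j) for j in range(n)]
           for i, row in enumerate(matrix)]
    det = 1.0
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(aug[r][col]))
        if abs(aug[pivot][col]) < 1e-12:
            raise ValueError("matrix is singular")
        if pivot != col:
            aug[col], aug[pivot] = aug[pivot], aug[col]
            det = -det  # a row swap flips the sign of the determinant
        p = aug[col][col]
        det *= p
        aug[col] = [v / p for v in aug[col]]
        for r in range(n):
            if r != col:
                f = aug[r][col]
                aug[r] = [a - f * b for a, b in zip(aug[r], aug[col])]
    return [row[n:] for row in aug], det


def bartlett_sphericity(corr, n_obs):
    """Return (chi-square statistic, degrees of freedom)."""
    p = len(corr)
    _, det = invert_with_det(corr)
    chi2 = -(n_obs - 1 - (2 * p + 5) / 6) * math.log(det)
    return chi2, p * (p - 1) // 2


def kmo(corr):
    """Overall Kaiser-Meyer-Olkin measure of sampling adequacy."""
    p = len(corr)
    inv, _ = invert_with_det(corr)
    r2 = q2 = 0.0
    for i in range(p):
        for j in range(p):
            if i != j:
                # Partial correlation of items i and j given all other items.
                partial = -inv[i][j] / math.sqrt(inv[i][i] * inv[j][j])
                r2 += corr[i][j] ** 2
                q2 += partial ** 2
    return r2 / (r2 + q2)


# Illustrative correlation matrix and sample size (both made up).
R = [[1.0, 0.6, 0.5],
     [0.6, 1.0, 0.4],
     [0.5, 0.4, 1.0]]
chi2, df = bartlett_sphericity(R, n_obs=50)
print(f"Bartlett chi2 = {chi2:.2f} on {df} df; KMO = {kmo(R):.2f}")
```

A KMO near 1 indicates that partial correlations are small relative to the raw correlations, which is the condition under which shared factors are plausible.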

Results

Bartlett’s test of sphericity indicated that the correlation matrix was not random, χ²(105) = 309.35, p < .05. The Kaiser–Meyer–Olkin statistic was .71, thus exceeding the recommended minimum standard (Kline, 1994). These results suggested the correlation matrix was appropriate for factor analysis. The second step of Kane’s (2013) validity framework, analysis of proposed interpretations, was referenced to further interpret the results. Visual examination of the scree plot indicated evidence for four factors, but interpretability was problematic when the authors attempted to interpret beyond a general factor. Due to interpretability and the exploratory nature of the study, forced three-factor and two-factor solutions were examined. Given the page limits of this brief report, additional detail on fit statistics, as would ordinarily be expected, could not be provided.
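The reported degrees of freedom can be checked against the standard form of Bartlett's test, in which p is the number of items, n is the number of observations, and |R| is the determinant of the item correlation matrix. The value of n is left symbolic here because the source does not report how many observations entered the analysis; only p = 15 is substituted.

```latex
\chi^2 = -\left[(n - 1) - \frac{2p + 5}{6}\right] \ln\lvert R \rvert,
\qquad
df = \frac{p(p - 1)}{2} = \frac{15 \times 14}{2} = 105
```

The result, 105, matches the degrees of freedom reported for the test.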

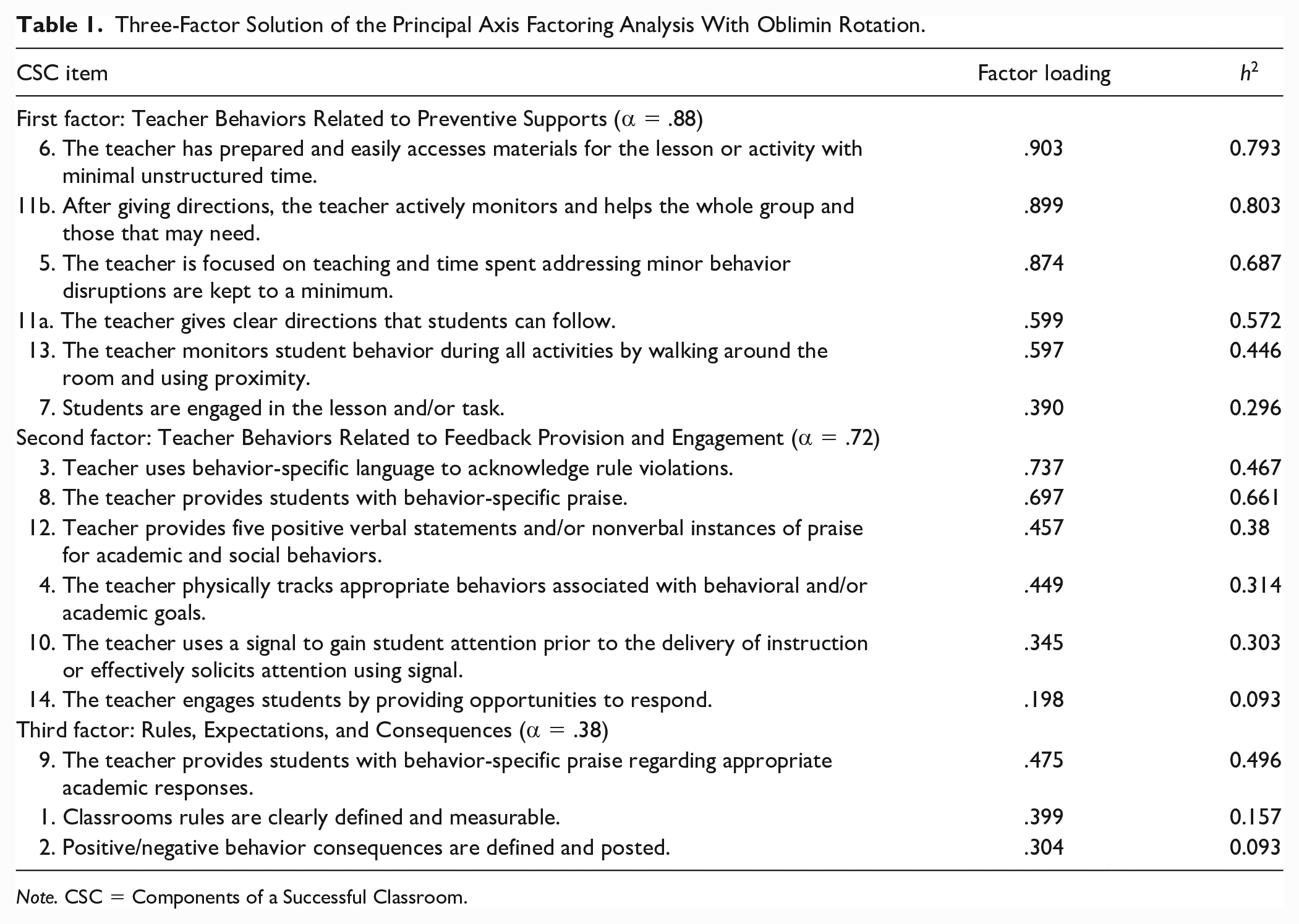

Table 1 presents the results of the PAF analysis with oblimin rotation. The three-factor solution accounted for 54.47% of the variance. Factor 1, Teacher Behaviors Related to Preventive Supports, explained 35.96% of the variance and contained six items. Factor 1 had internal consistency of .88. Factor 2, Teacher Behaviors Related to Feedback Provision and Engagement, consisted of six items with an internal consistency of .72. Factor 3, Rules, Expectations, and Consequences, had three items that loaded onto it with an internal consistency of .38.
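The source does not state which internal consistency coefficient was used. Assuming it was Cronbach's alpha, a common choice, the coefficient for a subscale could be computed as in the sketch below. The ratings are made up for illustration and the function name is hypothetical.

```python
from statistics import variance


def cronbach_alpha(rows):
    """rows: one list of item scores per observation (all the same length)."""
    k = len(rows[0])
    if k < 2 or len(rows) < 2:
        raise ValueError("need at least two items and two observations")
    # Sample variance of each item across observations.
    item_vars = [variance([row[i] for row in rows]) for i in range(k)]
    # Sample variance of the summed subscale score.
    total_var = variance([sum(row) for row in rows])
    return k / (k - 1) * (1 - sum(item_vars) / total_var)


# Made-up ratings for a three-item subscale across five observations.
ratings = [
    [3, 2, 3],
    [1, 1, 2],
    [2, 2, 2],
    [0, 1, 1],
    [3, 3, 2],
]
print(f"alpha = {cronbach_alpha(ratings):.2f}")
```

Because alpha depends in part on the number of items, a short subscale can yield a low coefficient even when its items are moderately correlated.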

Table 1. Three-Factor Solution of the Principal Axis Factoring Analysis With Oblimin Rotation.

Note. CSC = Components of a Successful Classroom.

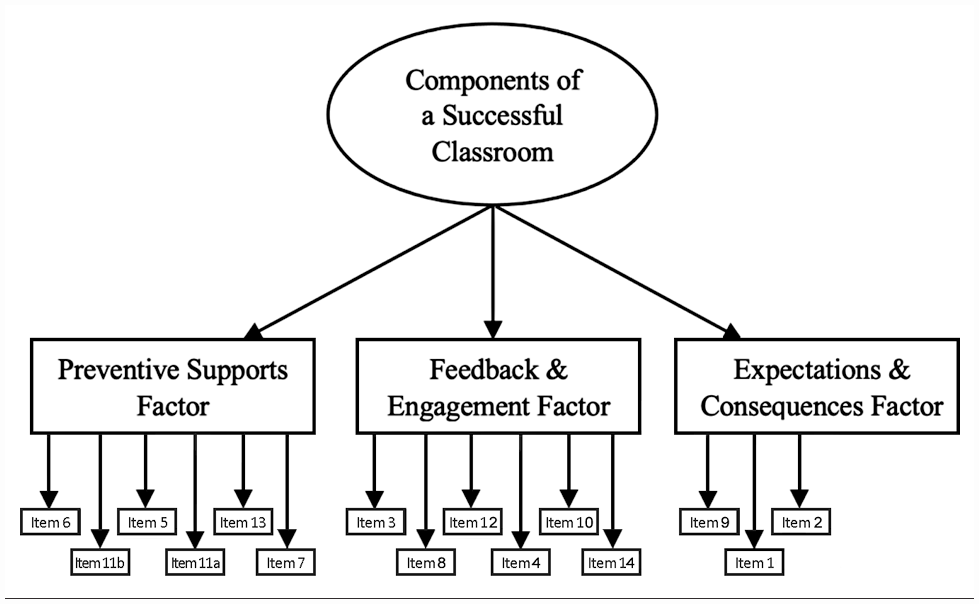

Exploratory Factor Analysis Breakdown.

Discussion

Findings of an EFA, conducted for the initial validation of a classroom management tool, supported a unidimensional factor structure (i.e., CSC). This unidimensional construct was best measured with three factors (i.e., preventive supports; feedback provision and engagement; and items related to rules, expectations, and consequences). This factor structure fits well and is thorough, comprehensible, and in accord with the theoretical frameworks used to guide tool development and item selection for the current iteration of the CSC observation tool (Simonsen et al., 2019, 2020, 2021). Specifically, the factors were labeled based on Simonsen and colleagues’ (2021) Tier 1 PCBS foundational practices as they relate to the classroom environment. It is important to note that the small sample size of this study may be insufficient for a three-factor solution, which may explain the limited variance accounted for in the results.

Implications

The findings of this study provide initial psychometric validation of the CSC in evaluating teachers’ implementation of three broad, evidence-based classroom practices: preventive supports, feedback and engagement, and expectations and consequences. Currently, this tool may be useful in guiding consultation after identifying main concerns in teachers’ implementation of Tier 1 supports. The use of this tool may provide a structure for preliminary identification of students’ academic or behavioral needs. However, further investigation into the external validity of the CSC is necessary, specifically in relation to student outcomes, which the current study did not address; this is a limitation. In addition, testing a hierarchical structure would be a reasonable next step in the validation process. The CSC may also be used by school administrators, teacher coaches, and mental health and behavior support staff (e.g., social workers, school psychologists), allowing the tool to be implemented sustainably without relying on supports from outside the school. While this tool is intended to be used within PBIS systems, future work should include a more general sample of teachers, including those without PBIS training, to further explore the generalizability of results. Future validation efforts of this classroom management tool should also explore other validity frameworks.

Footnotes

Acknowledgements

We would like to acknowledge Tevyn Tanner, Rovi Hidalgo, April Zmudka, and John Davis for their contributions to the initial iterations of the measure.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.