Abstract

Monitoring learning progress enables teachers to address students’ interindividual differences and to adapt instruction to students’ needs. We investigated whether using learning progress assessment (LPA) or using a combination of LPA and prepared material to help teachers implement assessment-based differentiated instruction resulted in improved reading skills for students. The study was conducted in second-grade classrooms in general primary education, and participants (

Keywords

Reading is an essential cultural skill that is needed not only for social participation but also for lifelong and self-determined learning (Schiefele et al., 2012). The early school years are crucial for the development of reading skills and are highly predictive of reading performance in later years (Cunningham & Stanovich, 1997). The latest results of the Progress in International Reading Literacy Study (PIRLS) indicate that the achievement gap between high- and low-performing readers is large (Mullis et al., 2017). To meet all students’ needs, teachers should adapt instruction to students’ individual skills and learning growth (Connor et al., 2013). To do this, teachers need objective information on students’ learning progress, as it allows teachers to engage in data-based decision-making. Consequently, teachers should provide students with a learning environment that enables differentiated instruction.

Nonetheless, such activities might be challenging because collecting data on students’ learning growth and developing material that meets each student’s needs may be time-consuming. Moreover, assessment-based differentiated instruction is not part of every teacher’s daily teaching routine (Brunner et al., 2005). A solution to these challenges might be to provide teachers with computer-based assessment tools and to offer instructional approaches (Connor, 2019; L. S. Fuchs & Vaughn, 2012). Thus, the aim of the current study was to investigate whether providing teachers with either information about students’ learning progress or a combination of learning progress information and prepared material to differentiate instruction would improve students’ reading skills compared with a control group. The study was conducted in second-grade classrooms of general primary education, and the effects for all students, including lower- and higher-performing students, were investigated.

Research on the implementation of innovative and research-based concepts in schools has been informed by two main perspectives (Century & Cassata, 2016). First, some studies investigate whether the core components of the concepts were implemented

In the following, we will introduce (a) assessment as a basis for instructional decisions and (b) research-based instruction methods for different stages of development in reading. Subsequently, we will (c) summarize research on the challenges of implementing assessment-based differentiated instruction in daily teaching practice and how teachers can be supported.

Assessment

An approach that encourages teachers to collect, interpret, and use data to inform instruction at regular intervals is formative assessment (Bennett, 2011). Several studies have shown that especially approaches of formative assessment that include professional development and computer-based systems have a positive impact on student reading achievement (Kingston & Nash, 2011; Klute et al., 2017). Thus, teachers should be encouraged to use computer-based approaches that enable them to collect, analyze, and document data with minimal effort for streamlining monitoring processes (L. S. Fuchs, 2004). One practical and validated approach to do this is learning progress assessment (LPA), where all students in a class have their achievement assessed at short intervals (every 3 weeks) using short, parallel, web-based tests (Förster & Souvignier, 2014, 2015). Using LPA, teachers regularly receive information on different aspects of students’ reading skills, such as reading accuracy, reading fluency, and reading comprehension. Hence, LPA can be administered quickly in the classroom, and the results can be used to both inform and evaluate instruction. Förster and Souvignier (2014, 2015) found that in general primary schools, reading progress was higher for students whose teachers used LPA compared with a control condition. Yet, the effectiveness of LPA may depend on whether teachers use the progress data to differentiate instruction according to students’ learning abilities and reading progress (Espin et al., 2017; Stecker et al., 2005).

Differentiated Reading Instruction

Gains in reading achievement depend on how well a particular instructional method fits with a student’s individual level of reading competence (Connor, 2019). For instance, Juel and Minden-Cupp (2000) found that higher-performing first graders benefited more from a demanding meaning-based approach, whereas lower-performing students’ reading progress was higher when they received code-based instruction (i.e., a phonics approach). Several studies show that individualized reading instruction is more effective than regular reading instruction (Connor et al., 2013), and reading growth is higher when students receive recommended reading instruction (Connor et al., 2011). As reading competence develops, several processes build upon one another: As decoding skills become more automated, students can read more fluently. In turn, a high reading fluency releases a reader’s cognitive resources (e.g., working memory), allowing them to focus on comprehension (Wolf & Katzir-Cohen, 2001). In early school years, reading difficulties are often associated with one or more of these processes and students should be fostered according to their needs. However, these processes do not cover the full range of components of reading competence that should be addressed. For instance, studies report that in second grade, most of the explained variance in reading comprehension is made up of oral language comprehension (Foorman et al., 2015, 2017), which can be effectively addressed within interventions (Foorman et al., 2016). Other relevant components that are closely related to reading achievement are students’ background knowledge on the written content (O’Reilly et al., 2019) as well as reading motivation (Hebbecker et al., 2019). In the current study, however, the focus lies on reading accuracy, reading fluency, and reading comprehension, which are malleable and central components of reading competence (e.g., Stecker et al., 2005). For instance, Förster et al. (2018) established and evaluated prepared material (the “reading sportsman”) that offers tasks to differentially foster these three components, which is described in more detail in the “Method” section.

Intervention research on reading instruction has offered empirical evidence that distinct, specific methods are effective for fostering reading accuracy, reading fluency, and reading comprehension. In particular, cooperative learning settings in which students work in pairs or small groups have been shown to have a positive impact on reading growth and allow teachers to deal with large interindividual differences (Slavin et al., 2009). One such approach is peer-assisted learning strategies (PALS), in which students work in pairs, execute a set of reading tasks, and take turns acting as a tutor (D. Fuchs et al., 1997). Studies have shown that PALS has a positive impact on reading fluency and on reading comprehension (U.S. Department of Education, 2012). Besides PALS, there are more research-based approaches to differentially foster reading competence. For instance, reading accuracy can be targeted via syllable-based reading (Müller et al., 2017), whereas reading fluency is positively influenced by repeated reading (Samuels, 1979; Therrien, 2004). Once students read fluently, they should receive instruction that provides strategies for improving comprehension, for example, reciprocal teaching (Hacker & Tenent, 2002; Palincsar & Brown, 1984). Thus, rather than implementing the same reading instruction for all students, teachers can pair students and allocate tasks depending on students’ abilities and learning growth.

Challenges and Support for Teachers

Using data to differentiate instruction may be challenging for teachers, as it is often not a central component in teacher education (Darling-Hammond, 2006) and uncommon in everyday school practice (Brunner et al., 2005). In addition, although there are several research-based instructional approaches to foster different aspects of students’ reading competence, implementing differentiated instruction for an entire class is not yet part of teachers’ skill set (L. S. Fuchs & Vaughn, 2012). The results of the PIRLS (Mullis et al., 2017) indicate that in Germany, many teachers teach reading as a whole-class activity and only rarely differentiate instruction. Furthermore, differentiated instruction is often focused on the remediation and support of low-performing students (Reis et al., 2011). Therefore, teachers need additional support in using LPA data to tailor instruction to students’ needs and integrate it into their daily routines (Allinder et al., 2000; Förster et al., 2018; Stamann et al., 2017).

One effective approach to support teachers in implementing formative assessment and differentiated instruction is to provide them with a combination of training (Bennett, 2011; Hondrich et al., 2016) and instructional recommendations by means of prepared material (Förster et al., 2018; Stecker et al., 2005). In trainings, teachers can be supported with theoretical background knowledge and concrete suggestions on how to use data to differentiate instruction (Filderman et al., 2020). Instructional recommendations within a computer-based progress monitoring system were found to lead to higher learning growth compared with sole or no use of progress monitoring (L. S. Fuchs et al., 1992). When using the LPA tool (Förster & Souvignier, 2014), teachers in our study were provided with a documentation sheet that summarizes strengths, weaknesses, and learning goals based on students’ learning progress data. Furthermore, it was recommended which competence (i.e., reading accuracy, fluency, or comprehension) should be trained. However, the documentation sheet did not predetermine how exactly instruction should be differentiated because the aim was to support teachers in making their own instructional decisions. Furthermore, research has shown that providing teachers using an LPA tool with prepared material to differentiate instruction positively impacts implementation outcomes concerning assessment-based instruction and students’ reading competence (Förster et al., 2018).

Implementing assessment-based differentiated instruction seems to be useful for dealing with large heterogeneities in general education, but few studies have investigated how teachers can be supported to adapt instruction to the needs of all students in a class. However, the large gap between high- and low-performing readers (Mullis et al., 2017) raises the question of how students with different levels of reading competence benefit from assessment-based differentiated instruction.

The aim of the current study was to evaluate whether students’ reading competence improved when teachers were provided with differentiated information about students’ learning progress and prepared teaching material to differentiate instruction in their classrooms. The study addressed two main research questions:

We assume that providing teachers with LPA should lead to higher gains in reading fluency and reading comprehension compared with business-as-usual instruction. The combination of LPA plus prepared material is expected to outperform both other treatments. Furthermore, we expect comparable patterns of results for both high- and low-performing readers, as the study was conducted in entire classrooms and the aim was to implement differentiated instruction based on each student’s level of reading achievement. To facilitate interpretation and discussion of the results, treatment integrity was investigated concerning teachers’ use of the LPA tool and the prepared teaching material.

Method

Sample

The original sample consisted of 696 second-grade students. Participation in the study was voluntary, and informed consent was obtained from students’ parents; 77 parents declined study participation, resulting in 619 students. In this final sample, 47.5% of the students were female, and the average age was 7 years (

The study involved 33 teachers and their classes from 13 different schools. At the beginning of the school year, 90.9% of teachers were female, and they were on average 47 years old (

Procedure

The current study used a three-group quasi-experimental design. Schools in and around a medium-sized town in Germany were informed via an information letter and phone about the possibility of participating in a study to foster second-grade students’ reading skills. Subsequently, the schools’ secretaries or principals informed teachers. When they showed interest in participating, schools were assigned to one of the three conditions depending on their equipment and willingness to use the prepared teaching material we provided. Teachers in the control group conducted regular reading instruction, which in Germany is characterized by one teacher being responsible for about 22 students with highly varying achievement levels and usually no additional systematic support from a special education teacher (Tarelli et al., 2012). Furthermore, teachers rarely use research-based instructional approaches in everyday instructional practice in Germany (Bremerich-Vos et al., 2017). In the first experimental group (LPA), teachers were provided an LPA tool and had access to the results of eight tests taken by their students. In the second experimental group (LPA-RS), teachers were provided the LPA tool and additionally received prepared material to differentiate reading instruction. A pretest at the beginning and a posttest at the end of the school year were conducted to assess all students’ reading comprehension and reading fluency. Data collection and digital entry were completed by trained research assistants. All teachers received their students’ test results, including means, standard deviations, norm values, and additional information to provide support in interpreting the values.

Learning progress assessment

In the LPA and LPA-RS conditions, teachers were provided with an online LPA tool. Over the course of the school year, students filled out eight parallel tests every 3 weeks that could be completed within 10 to 15 min. Students could log in to the system and were automatically and randomly administered one of the parallel tests. Before the first test, students were instructed by headphones on how to use the tool and completed a subsequent tutorial. The LPA tool offers a series of tests for Grades 1 through 6. By default, students complete the tests that are assigned to their respective grade. If students scored significantly below or above grade level, teachers could assign tests of a lower or higher grade to individual students. For Grade 2, the tests consisted of three parts to assess word comprehension, sentence comprehension, and text comprehension. Word comprehension was assessed by displaying either a word or pseudoword. Students were asked to indicate whether the word exists in the German language. Sentence comprehension was assessed by a task in which students decided whether a sentence (e.g., “Strawberries are blue”) is correct. Finally, text comprehension was assessed by asking students whether a sentence continues ongoing events of a short story in a meaningful way. In addition, reading speed was measured for all these tasks. Correlations of the tests with standardized achievement tests on reading comprehension (ELFE, Lenhard & Schneider, 2006; word level:

Teachers could view the test results in an online test menu. They had access to students’ progress in reading accuracy and reading speed on the word, sentence, and text levels as well as norms and median values of all students who had completed the tests. Test results could be viewed in a table and a graph for the whole class or for single students. In addition, teachers of the LPA-RS condition had access to a documentation sheet for each student that summarized strengths and weaknesses in reading, learning goals, and recommendations, pointing out which competence (i.e., reading accuracy, fluency, or comprehension) should be trained. Teachers could use the documentation sheet to inform their instructional decisions when they used the prepared material. Thus, the documentation sheet did not prescribe which instructional method should be used but aimed at supporting and encouraging teachers in implementing differentiated instruction.

Teachers who used the LPA tool received a 2-hr training session conducted by the authors at the beginning of the school year. In the training, the authors presented the test concepts, explained how students were instructed by the LPA tool when working on the tests, and provided teachers with information on technical functions of the LPA tool. Furthermore, teachers received suggestions on how to use the LPA tool for whole classes, how to access and read the results as well as organizational information.

Prepared material to differentiate instruction

Teachers in the LPA-RS group received material that enabled them to implement differentiated instruction by using cooperative and research-based methods. The prepared material was referred to as the “reading sportsman” and contained three tasks, each of which had three levels of difficulty.

The first task, named the “reading slalom,” is an effective way to foster reading accuracy (Müller et al., 2017). It uses syllable-based reading and was available on the word and sentence level. Students took turns repeatedly reading out loud a number of words or sentences while identifying and underlining syllables.

The second task, named the “reading sprint,” incorporates the method of repeated reading, a well-known and research-based approach to foster reading fluency (Samuels, 1979). In this task, students worked together in pairs and repeatedly read the same word list aloud for a predefined time, each taking turns as the loud reader (the sportsman) and the silent reader (the coach). Students were instructed to read as accurately and as fast as they could.

The third task, named the “reading tandem,” employs the method of reciprocal teaching, an approach to foster reading comprehension by the transfer and usage of reading strategies (Palincsar & Brown, 1984). For this task, teachers first instructed students to use three reading strategies: making predictions after reading the heading, summarizing the content of a story, and making predictions about the continuation of a story. As in the other tasks of the “reading sportsman,” students worked in pairs and assumed different roles. The “sportsman” was asked to read a text passage out loud and use the strategies while the coach instructed and monitored the other student’s use of strategies.

All teachers of the LPA-RS condition attended a 2-hr training on the prepared material and differentiated instruction at the beginning of the school year. Teachers were provided with information on the theoretical background of the prepared material, they received a guided tour of the material, and there was a discussion about the practical application of the tasks. It was proposed that teachers should introduce the three tasks of the “reading sportsman” to their students in small, homogeneous groups at the beginning of the school year and should use the material at least once per week. However, teachers could autonomously decide when and how often to use the prepared material. This was essential because the current study was implemented in ecologically valid environments and it was important to leave teachers free to make their own instructional decisions. Over the course of the school year, teachers were asked to continuously check the results of the LPA to adapt instruction to students’ needs. Five months after the initial meeting, we arranged a second 2-hr training to reflect on the implementation of the material.

Measures

Reading fluency

Reading fluency was assessed using a standardized achievement test that mainly measures reading speed but also assesses reading accuracy indirectly and less sensitively (SLS 2-9; Salzburger Lese-Screening; Wimmer & Mayringer, 2014). In the SLS, which is a speed test, students are asked to read short sentences and state whether each sentence is correct (e.g., “Snow is red”). The measure of reading fluency is determined by the number of correctly rated sentences within 3 min. Two pseudo-parallel versions of the test exist, in which the order of items is changed; both versions were used at both pretest and posttest. To prevent students from looking at their seatmates’ answers, the versions were alternated so that students who sat next to each other received different forms (Version A or Version B). Parallel-form reliability is stated to be high (

Reading comprehension

Reading comprehension was assessed using a standardized achievement test (ELFE II; Lenhard et al., 2017) that assesses reading comprehension at the word, sentence, and text levels. On the word level, students were presented with 75 pictures, each picture with four words below it. Within 3 min, students were asked to underline the word that best describes what is shown in the picture. Next, they were presented with 36 sentences, where each sentence had a missing word. The task was to choose and underline the appropriate word out of five words. To assess text comprehension, students read 26 short stories and were asked to choose from four sentences which option is the most appropriate and best fits the story. Two parallel versions of this test exist, but we only used one of the versions at both pretest and posttest. Retest reliability is satisfactory (

Treatment integrity

For teachers in the LPA and LPA-RS conditions, we measured the integrity with which the LPA tool was implemented; for those in the LPA-RS condition, we also measured the integrity with which they used the prepared material. These measurements offered some background information that enabled us to better interpret and discuss the results in the context of the main research questions.

Teachers of the LPA and LPA-RS conditions were asked whether and how often per month they viewed the results of the LPA using the online test menu. Teachers of the LPA-RS condition were also asked whether and how often they viewed the documentation sheet. In addition, they were asked whether they used the prepared material in the current school year and, on average, how often they used it per month and how many minutes they used it each time. Teachers were also asked to state, on average, what percentage of students worked with each of the tasks of the “reading sportsman” during the school year. In addition, teachers filled out questionnaires related to the implementation of the LPA and the prepared material; again, these questionnaires were not relevant for the current study. Information about these questionnaires is provided on the Open Science Framework (

To analyze teachers’ answers in the (relevant) questionnaire, we computed means, standard deviations, and percentages. In total, 81.6% of students in the LPA group and 94.9% of students in the LPA-RS group completed at least seven out of eight LPA tests. Thus, information about reading progress was available to teachers for most students at most measurement points. All teachers stated that they viewed the results of the LPA during the school year. Teachers in the LPA condition viewed the results

Teachers of the LPA-RS condition stated that during the school year, they used the “reading sportsman” about 3 times each month (

In all classrooms of the LPA-RS condition, treatment integrity for using the methods of the “reading sportsman” was rated by university research assistants. For this purpose, an observation sheet was developed to document whether the procedure and the research-based aspects of each task of the “reading sportsman” (e.g., underlining syllables in the task “reading slalom”) were implemented correctly. All research assistants attended a 3-hr observer training, in which assistants practiced using the observation sheet while watching video-taped sequences of the “reading sportsman.” In the LPA-RS classrooms, pairs of students working with one of the “reading sportsman” tasks (i.e., “reading slalom,” “reading sprint,” or “reading tandem”) were observed by two research assistants for 5 min each. Interrater reliability was satisfactory to high (Cohen’s kappa: .47 <
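For readers less familiar with the statistic, the following is a minimal sketch of how Cohen’s kappa can be computed for two observers who rate the same set of observations. The function name and the ratings are hypothetical and serve only as an illustration; they are not the study’s observation data.

```python
from collections import Counter


def cohens_kappa(rater_a, rater_b):
    """Cohen's kappa for two raters who rated the same items."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("Both raters must rate the same non-empty set of items.")
    n = len(rater_a)

    # Observed agreement: share of items on which both raters agree.
    p_observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n

    # Chance agreement: product of the raters' marginal proportions,
    # summed over all rating categories.
    counts_a = Counter(rater_a)
    counts_b = Counter(rater_b)
    categories = set(counts_a) | set(counts_b)
    p_chance = sum(counts_a[c] * counts_b[c] for c in categories) / n**2

    if p_chance == 1:
        raise ValueError("Kappa is undefined when chance agreement equals 1.")
    return (p_observed - p_chance) / (1 - p_chance)


# Hypothetical ratings (1 = step implemented correctly, 0 = not).
observer_1 = [1, 1, 0, 1, 0, 1, 1, 0, 1, 1]
observer_2 = [1, 1, 0, 1, 1, 1, 1, 0, 0, 1]
kappa = cohens_kappa(observer_1, observer_2)
print(round(kappa, 2))
```

In this made-up example the observers agree on 8 of 10 observations, but because both rate “correct” most of the time, a good part of that agreement is expected by chance, which is why kappa is considerably lower than the raw agreement rate.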

Data Analysis

To evaluate the effectiveness of the LPA and the combination of the LPA plus the prepared material, we computed latent change models in
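As an illustration of the general idea, a latent change model for two measurement points is often written as follows. This is a generic sketch: the dummy coding of the conditions (control group as reference) and all symbols are assumptions made for this illustration, and the exact specification used in the analyses is not reproduced here.

```latex
\begin{align}
\eta_{\text{post},i} &= \eta_{\text{pre},i} + \Delta\eta_i \\
\Delta\eta_i &= \beta_0 + \beta_1\,\text{LPA}_i + \beta_2\,\text{LPA-RS}_i + \zeta_i
\end{align}
```

Here, $\eta_{\text{pre},i}$ and $\eta_{\text{post},i}$ denote student $i$’s latent reading score at pretest and posttest, $\Delta\eta_i$ is the latent change, $\text{LPA}_i$ and $\text{LPA-RS}_i$ are indicator variables for the two experimental conditions, and $\zeta_i$ is a residual. Under this coding, a negative coefficient would indicate less growth in the respective experimental condition than in the control group.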

On average, 5.18% of data were missing at pretest (

Results

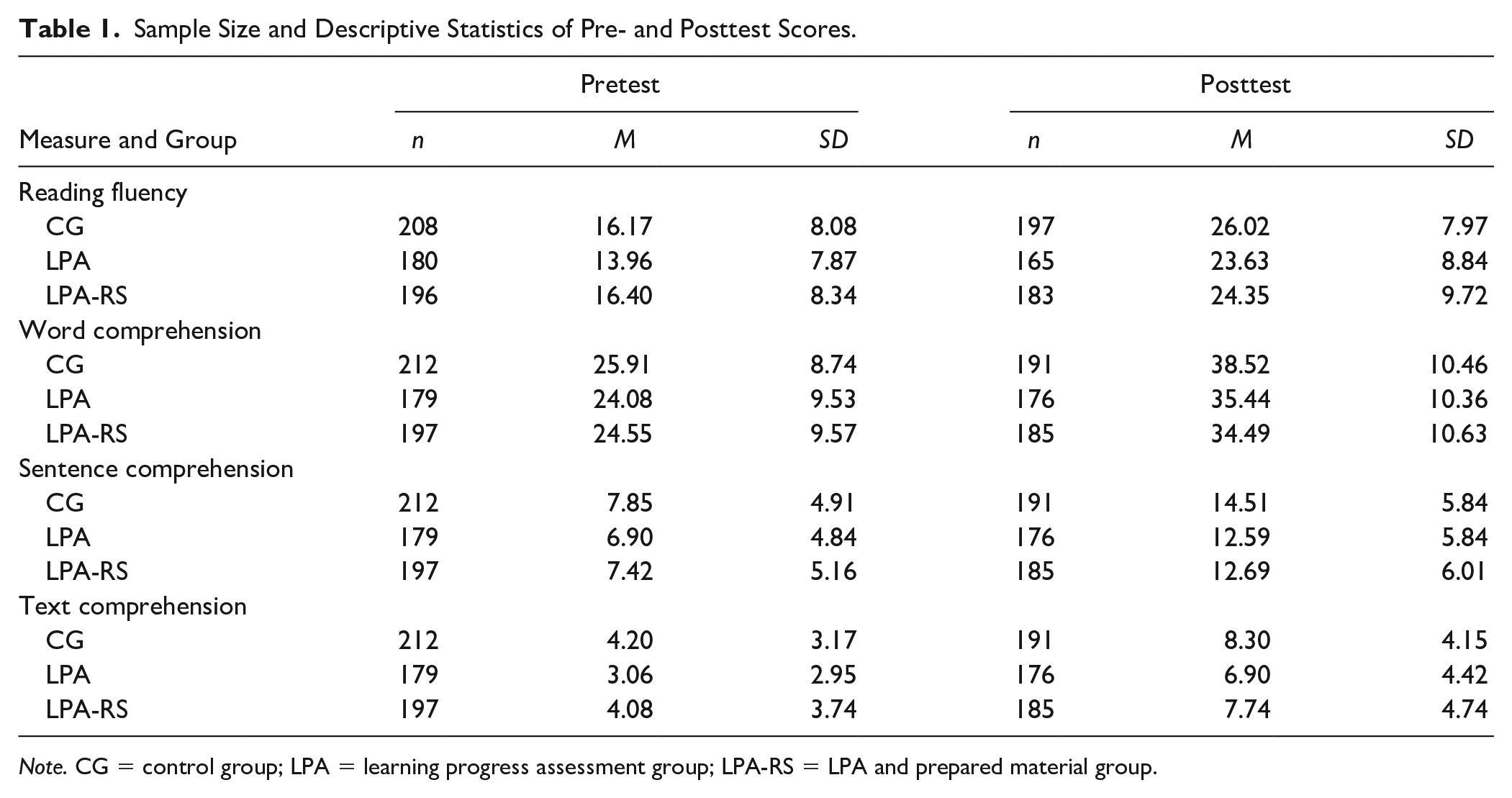

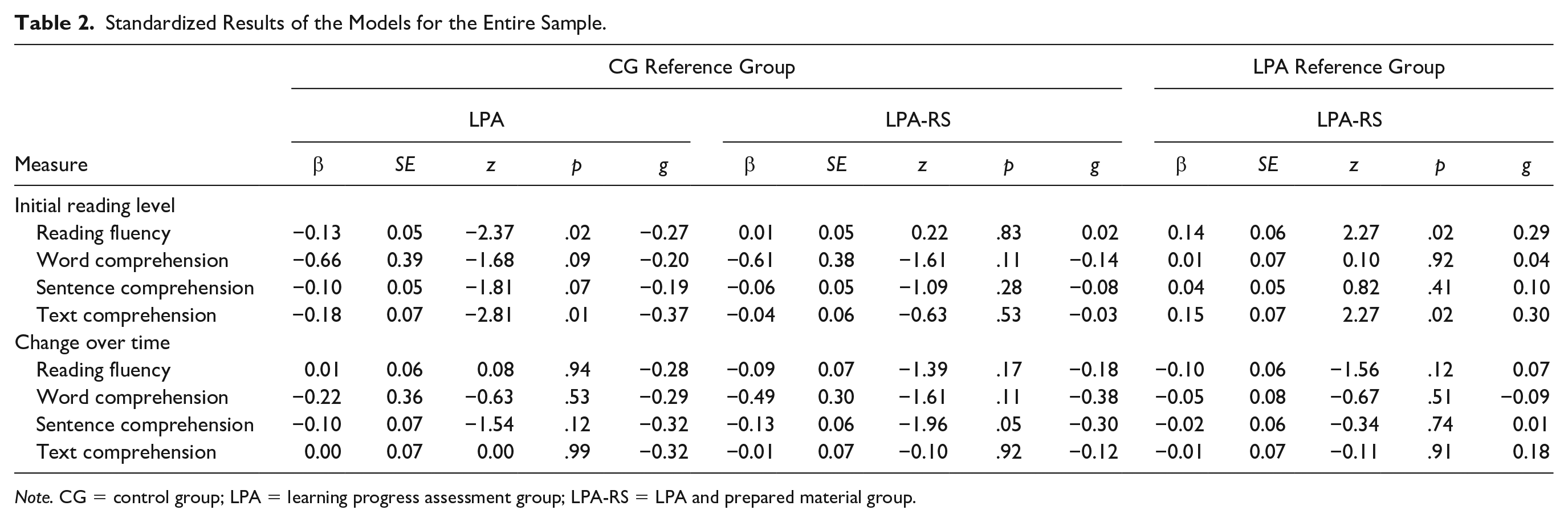

Table 1 shows the sample sizes, means, and standard deviations for pre- and posttest. The latent change models show an acceptable fit to the data (see supplemental material). Overall, the analyses revealed no significant differences between the CG and LPA conditions (see Table 2). Similarly, there were no significant differences between the LPA and LPA-RS conditions or between the CG and LPA-RS conditions. However, a substantial, if nonsignificant, effect size indicates that growth in sentence comprehension was somewhat higher for students of the CG compared with the LPA or LPA-RS conditions (β = −0.13,

Table 1. Sample Size and Descriptive Statistics of Pre- and Posttest Scores.

Table 2. Standardized Results of the Models for the Entire Sample.

The supplemental material shows the results of the change models for students below and above the median. All latent change models show an acceptable fit to the data. For lower-performing students, there were no significant differences between conditions. For higher-performing students, students in the CG showed a significantly larger growth in sentence comprehension than students in the LPA-RS group (β = −0.19,

Discussion

The current study investigated whether providing teachers with information on students’ learning progress in reading (LPA) or with a combination of both LPA and prepared material affected the reading fluency and reading comprehension of second-grade students in general primary education. Given the growing achievement gaps in reading reported in the PIRLS (Mullis et al., 2017), it is especially important to examine whether and how lower-performing and higher-performing students benefit from assessment-based differentiated instruction. Therefore, along with investigating the effects on all students who took part in the study, we also examined differential effects on students below and above the median.

Summary and Possible Explanation of the Findings

The results indicate that providing teachers with LPA did not lead to higher gains in reading fluency or reading comprehension compared with a control condition. In addition, providing teachers with a combination of LPA and prepared material did not lead to greater reading gains than in the other groups. Similarly, for students below and above the median, providing teachers with LPA did not lead to higher gains in reading fluency and reading comprehension, and neither did providing teachers with a combination of LPA and prepared material. Contrary to our expectations, higher-achieving students in the control condition showed more growth in sentence comprehension than students whose teachers had access to both LPA data and prepared material. We assume that there are three central factors that are related to the results of our study: (a) population, (b) teachers’ experience and training, and (c) amount of instruction. We next discuss the results in the context of these aspects and the study’s theoretical background, and then we present the study’s limitations and implications.

Reviews of past studies investigating the effects of assessing students’ learning progress and using instructional material revealed that these measures positively impact reading competence (Bennett, 2011; Stecker et al., 2005). However, the focus of most of these studies was on students with reading difficulties, whereas the current study was conducted for entire second-grade classes in general primary education under ecologically valid conditions. Furthermore, all tasks of the “reading sportsman” were conducted in pairs. Cooperative approaches enable teachers to implement differentiated instruction and several studies have shown that they have a positive impact on reading competence (e.g., D. Fuchs et al., 1997; Samuels, 1979; Therrien, 2004). Students may benefit from working with a partner as they have the possibility to practice reading themselves as well as listening to and monitoring another student’s reading process. However, the second-grade students in our study were not familiar with working in cooperative settings. Consequently, it was challenging and time-consuming for teachers to organize collaborative settings and to allocate different tasks to students depending on the results of the LPA. It was especially difficult for lower-performing students to engage in cooperative processes and monitor their partners’ reading process. As a result, students may not have benefited from the training with the reading sportsman. This may be one explanation why providing teachers with a combination of LPA and prepared material did not lead to positive effects on students’ reading competence compared with business-as-usual reading instruction.

As the general effectiveness of the LPA concept had been proven in earlier studies (Förster & Souvignier, 2014, 2015), one aim of our study was to examine the effects of the provided material

Observations of classroom instruction in the LPA-RS condition showed that the overall implementation of the reading sportsman was successful and that, on average, teachers used the material regularly. Nevertheless, in the LPA-RS condition, students’ progress in reading achievement was not higher compared with the other conditions. On the contrary, the results indicate that students in the CG tended to show larger growth in sentence comprehension than students in the LPA-RS condition and that higher-performing students, in particular, benefited more from regular reading instruction than from a combination of LPA and prepared material. The frequency and duration (i.e., about 63 min per month) with which teachers used the prepared material (i.e., the reading sportsman) might not have been high enough to reveal positive effects. For interventions that revealed positive effects on reading competence, a much higher intervention intensity has been identified in meta-analyses. For instance, Wanzek and Vaughn (2007) pointed out 100 or more sessions of reading intervention (i.e., 20 weeks of daily intervention) as a proxy for interventions that lead to high effect sizes. Hence, students might not have spent enough “time on task” with the research-based methods of the prepared material to show effects on all students’ reading competence.
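To make the intensity comparison concrete, the following back-of-the-envelope sketch contrasts the reported usage with the 100-session benchmark. The usage figures (about 3 times and about 63 min per month) are taken from the text; the length of the school year in months is an assumption introduced only for this illustration, and all variable names are hypothetical.

```python
# Figures reported in the text.
minutes_per_month = 63      # approximate use of the prepared material per month
sessions_per_month = 3      # "about 3 times each month"
benchmark_sessions = 100    # Wanzek and Vaughn (2007): 100 or more sessions

# Assumption for illustration only: roughly 10 months of instruction per school year.
school_year_months = 10

minutes_per_session = minutes_per_month / sessions_per_month
total_sessions = sessions_per_month * school_year_months
share_of_benchmark = total_sessions / benchmark_sessions

print(f"Approx. minutes per session: {minutes_per_session:.0f}")
print(f"Approx. sessions per school year: {total_sessions}")
print(f"Share of the 100-session benchmark: {share_of_benchmark:.0%}")
```

Under these assumptions, students would have worked with the material in roughly 30 sessions of about 21 min each over the school year, which is well below the benchmark of 100 sessions.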

Study Limitations and Implications

The current study has some limitations that should be considered when interpreting the results. First, the main aim of the study was to evaluate effects of the concepts

Alongside investigating teachers’ and students’ use of the LPA tool and the prepared material, it might be useful to gain more information on the process of assessment-based differentiated instruction. In future studies, it would be interesting to examine how to best support teachers and schools and to determine which specific competences teachers need to engage in assessment-based differentiated instruction (e.g., skills for reading and interpreting LPA graphs and for planning differentiating instruction). Furthermore, closer contact and stronger collaborations between researchers and teachers might help to improve the quality and implementation of research-based concepts in schools.

Unfortunately, the reading fluency assessment that we used assessed reading accuracy only indirectly and less sensitively (Wimmer & Mayringer, 2014). It would have been useful to differentially assess reading accuracy, too. Although reading accuracy, reading fluency, and reading comprehension are three central and malleable aspects of reading competence, current research suggests that it would be useful to study effects of LPA and prepared material on further relevant constructs in future studies (e.g., oral language comprehension, Foorman et al., 2015; reading motivation, Hebbecker et al., 2019; and students’ background knowledge, O’Reilly et al., 2019). Furthermore, the results involving the LPA condition, in particular, should be interpreted with caution, as students in this condition showed a lower level of reading fluency and text comprehension than students in the other two conditions at the beginning of the school year. In addition, fewer students in the LPA condition than in the other two conditions were missing at the reading comprehension test (word, sentence, and text comprehension) at posttest. Thus, due to the lack of baseline equivalence for two dependent variables and differences in attrition between the three conditions, the quasi-experimental design of our study is not without flaws.

Despite its limitations, the current study provides evidence that LPA as well as a combination of LPA and prepared material can generally be implemented in entire second-grade classrooms in general education given minimal teacher training. Nevertheless, it remains challenging for both researchers and teachers to successfully implement assessment-based differentiated instruction in a way that fosters students’ reading competence. Future approaches to improve implementation may address barriers to implementation frequency. The current study echoes past findings indicating that concepts and approaches similar to the ones used here can be effective (Bennett, 2011), but teachers need further support to harness the full potential of assessment-based differentiated instruction (e.g., Stamann et al., 2017).

Supplemental Material

sj-pdf-1-aei-10.1177_15345084211014926 – Supplemental material for Effects of Providing Teachers With Tools for Implementing Assessment-Based Differentiated Reading Instruction in Second Grade

Supplemental material, sj-pdf-1-aei-10.1177_15345084211014926 for Effects of Providing Teachers With Tools for Implementing Assessment-Based Differentiated Reading Instruction in Second Grade by Martin T. Peters, Karin Hebbecker and Elmar Souvignier in Assessment for Effective Intervention

Footnotes

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.

Supplemental Material

Supplemental material for this article is available on the

References
