Abstract

Competent dementia care requires caregivers with specialized knowledge and skills. The Knowledge of Dementia Competencies Self-Assessment Tool was developed to help direct care workers (DCWs) assess their knowledge of 7 dementia competencies identified by the Michigan Dementia Coalition. Item selection was guided by literature review and expert panel consultation. The tool was given to 159 DCWs and readministered to 57 DCWs in a range of long-term care settings, then revised based on qualitative feedback and statistical item analyses, resulting in 82 items demonstrating good internal consistency and test–retest reliability. Performance on items assessing competencies rated as most important was significantly related to training in these competencies. The DCWs in day care obtained higher scores than those in home care settings, and their sites reported a greater number of hours of dementia training. Validation in a more diverse group of DCWs and assessment of the tool’s relationship to other measures of knowledge and skill are needed.

Introduction

Estimates show that over 2.5 million Americans were diagnosed with Alzheimer’s disease (AD), and nearly 4 million had AD and other forms of dementia in 2002. 1 By the year 2050, it is estimated that between 11 and 16 million persons will have been diagnosed. 2 Therapeutic nihilism, or the belief that the progressive nature of dementia precludes effective care and management of symptoms, can interfere with caregivers’ willingness to implement quality dementia care. Limited knowledge of dementia and insufficient training or a lack of standardized training in dementia have been shown to decrease the quality of care provided by direct care workers (DCWs). 3,4 Caregivers in home care and institutional long-term care (LTC) settings vary in the level of formal or informal training in dementia, dementia care, and management of disruptive behavior they receive. 5 A review of the effectiveness of caregiver training programs for behavioral problems with persons with dementia found that training increased knowledge and skills, reduced the frequency of behavioral problems, improved caregiver satisfaction, and decreased turnover rates. 6 This provides a rationale for ensuring DCW knowledge and skills in the delivery of dementia care in a range of LTC settings.

A group of professionals from the Michigan Dementia Coalition (MDC) were concerned about the lack of a standard set of identified knowledge and skills that DCWs need to provide quality dementia care. For the purposes of this group, DCWs were defined as caregivers who provide the vast majority of hands-on care within our LTC networks. DCWs help care for people with physical, mental, or emotional illness, or who are injured, disabled, or infirm, and who live in hospitals, LTC facilities, mental health settings, homes, or residential care facilities. DCWs are also known as nursing assistants (NAs), certified nursing assistants (CNAs), geriatric aides, unlicensed assistive personnel, home health aides, unpaid home help caregivers, orderlies, or hospital attendants. Assigned tasks include assisting persons with dementia with meals, dressing, bathing, toileting, skin care, transferring (helping clients move from one location to another), walking, recreation, socialization, and assisting with some health care procedures such as taking and recording vital signs. A group of 7 experts in dementia care across disciplines met monthly for over a year, representing psychology, advanced practice nursing, education, policy, and administration staff from a range of LTC settings, the Mental Health and Aging Project, MDC, Greater Michigan Chapter of the Alzheimer’s Association, the Michigan Department of Community Health, Michigan Office of Services to the Aging, and the Michigan Public Health Institute. Additional input was obtained from 17 adjunct members including NAs, licensed practical nurses, registered nurses, social workers, and recreation therapists. A thorough review of the literature on dementia care and training was completed to identify a comprehensive set of dementia competencies (see resources section of Knowledge and Skills Needed for Dementia Care: A Guide for Direct Care Workers, www.dementiacoalition.org).
No training curricula or model was found to cover the full range of dementia care knowledge and skills. From this literature, the MDC expert panel and adjunct partners identified a standard of 7 areas of competency that DCWs need to provide quality care for older adults who have dementia in a range of LTC settings: knowledge of dementia disorders, person-centered care, care interactions, life enrichment support, understanding behaviors, interacting with families, and caregiver self-care. This standard was titled Knowledge and Skills Needed for Dementia Care: A Guide for Direct Care Workers (www.dementiacoalition.org).

From this effort, a need was identified for a measure of DCW competency in dementia care to recognize and promote specialized care. Competency in an area of clinical practice is understood to include knowledge, skills, and attitudes (KSAs). 7,8 Caregivers’ perceived competence has been improved by caregiver training 9 and has been related to dementia-sensitive attitudes, increased meaning for family caregivers, and improved job satisfaction of DCWs in facilities. 10,11 A tool to recognize DCWs for dementia competency could promote self-perceived competence. Developing specialization in fields of medicine, psychology, nursing, and other disciplines begins with an identified set of competencies. One way to determine professional competency is to create a self-assessment, such as the Competency Rating Tool developed to summarize strengths, areas for growth, education, and training goals for the specialization of geriatric psychology. 12 Similarly, a self-assessment could be used to empower DCWs to understand their strengths, areas for growth, education, and training goals for providing dementia competent care. An Alzheimer’s Disease Demonstration Grant to the State of Michigan was funded by the Administration on Aging, with a key aim to develop a self-assessment tool to measure DCW knowledge of the 7 dementia care competencies identified. In this article, we describe the development of the self-assessment tool and report on internal consistency and test–retest reliability.

Methods

Instrument Development Procedures

The development of the Knowledge of Dementia Competencies Self-Assessment Tool (KDC-SAT) consisted of a search of the literature, expert panel consultation, DCW feedback, and content validity ratings reflecting the relative importance of the 7 dementia competencies.

Literature search

A search of the literature published between 1995 and 2005 was conducted for measures and information on assessing knowledge and skills in each of the 7 areas of dementia competency. Databases searched included PubMed, AgeLine, and PsycINFO. Key words included measure, knowledge, disorders, dementia, philosophy of care, care interactions, life enrichment support, understanding behaviors, interacting with families, family interactions, direct care, and self-care. In addition, relevant references of identified publications were obtained.

Expert panel consultation

An expert panel met 3 times over 3 months to give feedback regarding the development of the tool. The panel included 10 professionals with expertise in the area of dementia care and training. The panel represented a range of professions including trainers, directors, managers, nurses, recreational therapists, and academic professors, and were located in diverse areas of Michigan, including Ann Arbor, Grand Rapids, Kalamazoo, Lansing, Ontonagon, and Traverse City.

The panel provided guidance on format, length, and reading level of the tool and on the content of the items to ensure representation of the 7 competency domains. They selected items from current measures in the public domain appropriate for DCW assessment that covered 1 of the 7 competency areas. They helped create new items and revise items to ensure the content assessed the key components they identified in each competency area. Formatting decisions were made to ensure that a DCW could and would use the tool.

Empirical keying 13 was conducted with a subgroup of the expert panel and guided item revisions. They ranked the answers of the multiple choice questions from most correct to least correct in order to help identify distracters that might be chosen as often as or more often than the correct answer. This subgroup also engaged in a situational judgment test, providing 2 good and 2 bad answer choices for newly developed questions in order to identify the best answers and distracter choices.

DCW feedback

Four DCW focus groups were conducted, including 8 DCWs in an assisted living facility, 4 DCWs and 2 CNAs in a continuing care retirement community, and 6 DCWs in home care. These groups provided input on the content areas the assessment tool should cover, its length, reading level, and its potential uses. The DCWs who piloted the tool were asked about their experience after completing it. This was done through a series of questions that they could answer in writing and/or through verbal discussion, covering the format of the questions, the relevance of the questions to their work as DCWs, whether there was additional content that should be covered by the tool, and the length of time the tool should take.

Content Validity Procedures

Content validity was established through 3 steps. First, the MDC identified 7 dementia competencies outlining the KSAs needed to provide dementia care, included in Knowledge and Skills Needed for Dementia Care: A Guide for Direct Care Workers (www.dementiacoalition.org). Then a separate group of subject matter experts described earlier was asked to provide ratings on the importance of each competency/KSA. Ratings by subject matter experts were also compared with DCW ratings of importance of each of the 7 dementia competency areas. Finally, test items were weighted to be consistent with the ratings obtained by the subject matter experts and DCWs themselves.

Expert panel competency percentage weightings

The expert panel was asked to rate the importance of each competency by separately assigning percentage weightings for each of the 7 dementia competency areas, to add up to 100%. These weightings were to reflect their perspective of relative importance and guide the proportion of respective questions addressing each dementia care competency area. Percentage weightings were averaged to create an overall weighting for each competency area.

DCW competency rankings

The DCWs in the focus groups were also asked to rate the importance of each competency. They were asked to individually rank the 4 most important competency areas, as they found this task easier than assigning percentage weightings. The DCW rankings were reverse coded so that the top-ranked competency area received a score of 4 and the lowest-ranked a score of 1. Scores were then summed within each competency area and divided by the total sum of all scores. These average rankings are considered ordinal data and represent only which competency is more important, not how much more important one competency is than another.
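The reverse-coding and summing steps described above can be sketched as follows. This is a minimal illustration only: the function name `ranking_shares` and the two example ballots are hypothetical and are not the study's data.

```python
from collections import defaultdict


def ranking_shares(ballots):
    """Turn top-4 rankings into each competency's share of all ranking points.

    ballots: list of lists; each inner list holds one respondent's 4 most
    important competency areas, most important first (hypothetical format).
    """
    points = defaultdict(int)
    for ballot in ballots:
        for position, competency in enumerate(ballot[:4]):
            # Reverse code: 1st choice scores 4, 4th choice scores 1.
            points[competency] += 4 - position
    total = sum(points.values())
    return {competency: score / total for competency, score in points.items()}


# Two made-up ballots, for illustration only.
example_ballots = [
    ["care interactions", "understanding behaviors",
     "person-centered care", "knowledge of dementia disorders"],
    ["care interactions", "person-centered care",
     "understanding behaviors", "caregiver self-care"],
]
shares = ranking_shares(example_ballots)
```

Because the result is a share of summed ordinal scores, it orders the competencies but should not be read as an interval-level measure of importance.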

Item Analyses Procedures

Procedures

Pilot sites were recruited through their connection with the MDC and several were willing to facilitate the piloting of the tool. Eleven sites were approached and able to participate. Sites were selected to represent a range of LTC settings and urban, suburban and rural areas in Western, Eastern, and Northern areas of Michigan. This was a convenience sample recruited through the MDC that may not be representative of all LTC settings or DCWs. Connected with the MDC, these sites may be more committed to dementia care or provide more training relevant to dementia competencies than that of other sites.

Institutional review board (IRB) approval was obtained. Written informed consent was obtained from each DCW participant before piloting the tool. Participants’ information and answers remained confidential and were not shared with their employers. Demographic characteristics of the respondents, including age, race, gender, years of education, and receipt of a CNA certification or any other specialized training, were collected by self-report. Respondents were asked to answer each of the 100 questions to the best of their ability. Participants were given an opportunity to retake the tool within 2 to 6 weeks in order to help establish test–retest reliability.

Participants

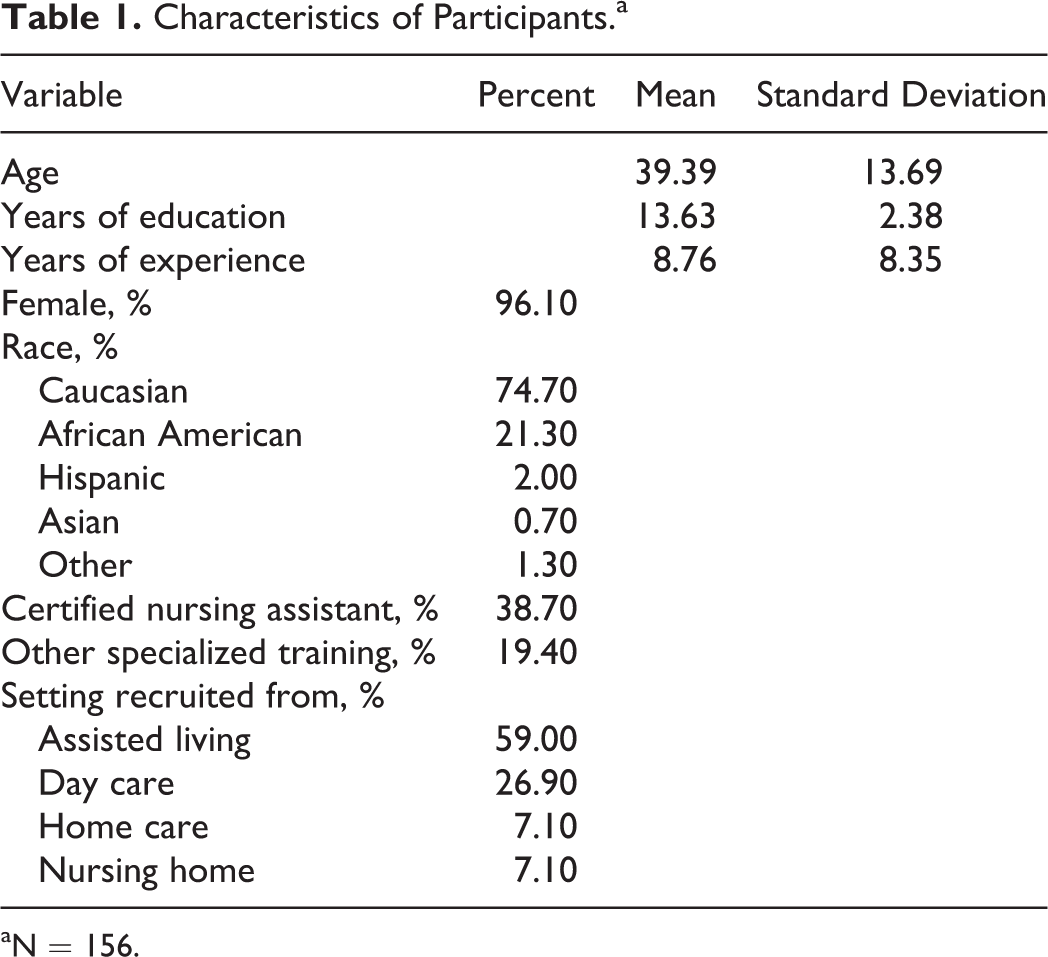

A convenience sample of 159 DCWs piloted the 100-item pilot tool. They were representative of DCWs more broadly (see Table 1), with an average age of 39 years and the majority of the participants being female (96.1%). This sample was primarily Caucasian (74.7%), with the next largest racial group being African American (21.3%). Participants had received an average of 13.6 years of education and reported an average of 8.8 years of experience; 38.7% had obtained a CNA certification, and 19.4% reported receiving other specialized training.

Table 1. Characteristics of Participants.a

a N = 156.

Setting

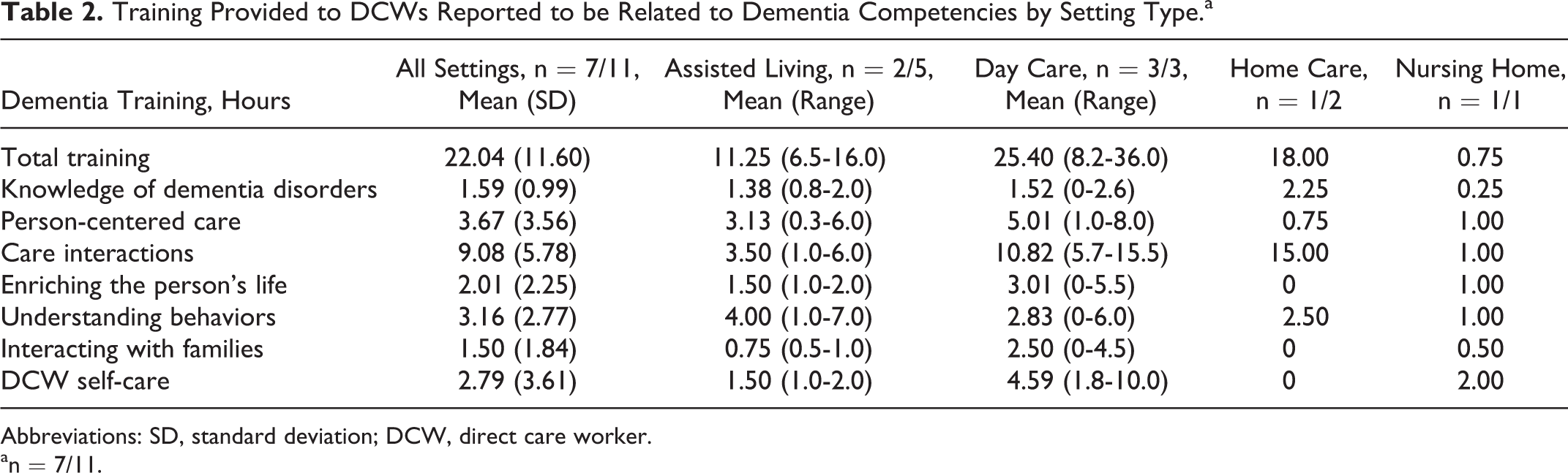

The DCWs were recruited from the 11 pilot sites, including 4 sites in the Detroit area, 1 in Gaylord, 3 sites in Grand Rapids, and 2 sites in Lansing. They worked in various settings including assisted living (59%), skilled nursing facilities (7.1%), day care (26.9%), and home care (7.1%), the latter including both home health and a small number of unpaid home help caregivers. Seven of the 11 sites reported the number of hours of training in dementia care provided to all their staff (see Table 2). The average amount of dementia training reported was 22.04 hours (standard deviation [SD] = 11.60), with the largest number of hours related to care interactions and the smallest number related to interacting with families. Adult day health care sites reported a greater number of hours of training than the other sites. This difference was not tested for significance because of the small number of pilot sites.

Table 2. Training Provided to DCWs Reported to be Related to Dementia Competencies by Setting Type.a

Abbreviations: SD, standard deviation; DCW, direct care worker.

a n = 7/11.

Item discrimination

Procedures were based on item analysis and test construction theory. 14,15 Item means, SDs, and measures of skewness and kurtosis were obtained for multiple choice items. Corrected item-total correlations and Cronbach’s α if item deleted were obtained for multiple choice items, and point biserial correlations were obtained for dichotomous true/false items. Item difficulty was calculated as the proportion of respondents who answered the item correctly, for both dichotomous true/false and multiple choice items. Retention of items was primarily based on corrected item-total correlations: items were kept if they were positively correlated with the total score at a level greater than chance, defined as 2 standard errors or more above zero (r > .16). Items that were negatively correlated or less than 2 standard errors from zero were dropped. Items were also dropped if answered correctly by 100% of respondents or answered correctly less often than chance. Item response choices were revised when the proportion of responses was greater for a distracter than for the correct answer or when a distracter option was never chosen.
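The retention rules above can be illustrated with a short sketch. It rests on several assumptions not stated in the source: items are scored 0/1, the standard error of r under the null is approximated as 1/√n (which gives 2/√159 ≈ .16 for the pilot sample), and the chance level of each item is supplied by the analyst. The function names and the tiny score matrix are hypothetical.

```python
import math


def pearson(x, y):
    """Pearson correlation; returns NaN when either variable has no variance."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sxx = sum((a - mean_x) ** 2 for a in x)
    syy = sum((b - mean_y) ** 2 for b in y)
    if sxx == 0 or syy == 0:
        return float("nan")
    return sxy / math.sqrt(sxx * syy)


def two_se_cutoff(n_respondents):
    # Assumption: standard error of r under the null is roughly 1 / sqrt(n).
    return 2 / math.sqrt(n_respondents)


def item_analysis(scores, chance):
    """scores: one row of 0/1 item scores per respondent.
    chance: per-item probability of a correct guess (e.g., 0.25 or 0.5)."""
    n = len(scores)
    totals = [sum(row) for row in scores]
    cutoff = two_se_cutoff(n)
    report = []
    for j in range(len(scores[0])):
        item = [row[j] for row in scores]
        # Corrected item-total: correlate the item with the total minus itself.
        rest = [total - score for total, score in zip(totals, item)]
        difficulty = sum(item) / n  # proportion answering correctly
        r = pearson(item, rest)
        # Keep only if r is at least 2 SE above zero, the item is not
        # answered correctly by everyone, and it is not below chance.
        keep = r >= cutoff and chance[j] <= difficulty < 1.0
        report.append({"item": j, "difficulty": difficulty,
                       "r_item_rest": r, "keep": keep})
    return report


# Made-up scores for 6 respondents and 3 items, for illustration only.
pilot_scores = [[1, 1, 1], [1, 1, 1], [1, 1, 1],
                [1, 0, 0], [1, 0, 0], [1, 0, 0]]
report = item_analysis(pilot_scores, chance=[0.25, 0.25, 0.5])
```

For a 0/1 item, the point biserial correlation is the same quantity as the Pearson correlation computed here. With only 6 respondents the cutoff is far higher than .16, so the toy data illustrate the logic rather than the study's threshold.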

Test–retest reliability

A convenience sample of 57 DCWs was asked to retake the final 82-item KDC-SAT 2 to 6 weeks after the first pilot, and Pearson’s correlation was used to assess reliability over time.

Internal consistency

The 82-item KDC-SAT was assessed for internal consistency using Cronbach’s α and the Kuder-Richardson 20 coefficient.
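As a rough sketch of how these two coefficients are computed, the functions below apply the textbook formulas to a respondent-by-item score matrix. The function names and the small example matrix are hypothetical; the source does not report which software was used or how items were scored for each coefficient.

```python
def variance(values):
    """Population variance (divides by n)."""
    n = len(values)
    mean = sum(values) / n
    return sum((v - mean) ** 2 for v in values) / n


def cronbach_alpha(scores):
    """scores: one row of item scores per respondent."""
    k = len(scores[0])
    item_vars = [variance([row[j] for row in scores]) for j in range(k)]
    total_var = variance([sum(row) for row in scores])
    return k / (k - 1) * (1 - sum(item_vars) / total_var)


def kr20(scores):
    """Kuder-Richardson 20; assumes every item is scored 0 or 1."""
    n, k = len(scores), len(scores[0])
    p = [sum(row[j] for row in scores) / n for j in range(k)]
    sum_pq = sum(pj * (1 - pj) for pj in p)
    total_var = variance([sum(row) for row in scores])
    return k / (k - 1) * (1 - sum_pq / total_var)


# Made-up 0/1 scores for 5 respondents and 4 items, for illustration only.
example_scores = [
    [1, 1, 1, 1],
    [1, 1, 1, 0],
    [1, 1, 0, 0],
    [1, 0, 0, 0],
    [0, 0, 0, 0],
]
alpha = cronbach_alpha(example_scores)
kr = kr20(example_scores)
```

On purely 0/1 data the two functions return the same value, because p(1 − p) is the population variance of a dichotomous item.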

Convergent validity

Validity was not a focus of the pilot study, but it was explored by using analysis of variance to compare the total number of items correct on the KDC-SAT across categorical variables such as gender, race, and setting. Pearson’s correlations were used to relate total number correct to continuous variables, including years of education, hours of training received from the current employer, and reported years of experience. Significance was determined at the 0.01 level. The number of training hours received through the DCW’s current employer, rated by the employer as specific to each competency area, was compared with the percentage of items correct that related to that same competency area using Pearson’s correlation.
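For readers less familiar with the analysis of variance comparison, the sketch below computes a one-way F statistic for total scores grouped by a categorical variable such as setting. The function name and the scores are hypothetical, and the sketch stops at the F ratio and its degrees of freedom; obtaining a P value would require an F distribution from a statistics package.

```python
def one_way_anova_f(groups):
    """Return (F, df_between, df_within) for a list of score lists."""
    all_values = [value for group in groups for value in group]
    grand_mean = sum(all_values) / len(all_values)
    group_means = [sum(group) / len(group) for group in groups]

    # Between-group sum of squares: group size times squared mean deviation.
    ss_between = sum(len(group) * (mean - grand_mean) ** 2
                     for group, mean in zip(groups, group_means))
    # Within-group sum of squares: deviations from each group's own mean.
    ss_within = sum((value - mean) ** 2
                    for group, mean in zip(groups, group_means)
                    for value in group)

    df_between = len(groups) - 1
    df_within = len(all_values) - len(groups)
    f_ratio = (ss_between / df_between) / (ss_within / df_within)
    return f_ratio, df_between, df_within


# Made-up total scores for three settings, for illustration only.
scores_by_setting = [
    [60, 62, 64],
    [50, 52, 54],
    [55, 57, 59],
]
f_ratio, df_between, df_within = one_way_anova_f(scores_by_setting)
```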

Results

Instrument Development

Literature search

Through the literature search, several instruments were found and reviewed, including The Knowledge of Memory Aging Questionnaire, 16 The Knowledge of Behavior Management Questionnaire, 17 The Penn State Mental Health Caregiving Questionnaire, 18 The Positive and Negative Appraisal of Care, 19 The Knowledge about Memory Loss and Care test, 20 The Alzheimer’s Disease Knowledge Test, 21 The Inventory of Geriatric Nursing Self-Efficacy, 22 The Knowledge Items on Alzheimer’s Disease, 23 The Revised Scale for Caregiver Self-Efficacy, 24 Learning About Dementia, 25 The Mary Starke Harper Aging Knowledge Exam, 26 the Dementia Care Knowledge Inventory, 27 and The Alzheimer’s Disease Knowledge Test. 28 These measures each assessed a narrow area of dementia knowledge, such as understanding the disorder and its symptoms, caregiver efficacy, and behavior management, and assessed knowledge in other disciplines such as medicine and behavioral health. No single measure assessed all dementia competency areas, nor was any designed as a self-assessment tool appropriate for DCWs.

Expert panel consultation

The panel recommended the use of person-centered wording, avoiding the use of wording specific to a particular care setting, and including items that assessed understanding of why a competency was important. They recommended items to cover gaps in key content areas for each competency. To allow for item selection and deletion after piloting the KDC-SAT, 100 items were included. Of 100 questions, 56 questions were revised from previously developed tests, and 44 questions were developed specifically for this tool.

The expert panel recommended that the format of the questions be primarily multiple choice with some true/false to maintain reliability and ease of scoring. Open-ended questions, oral questions, and video vignettes were recognized as more reflective of on-the-job skills but difficult to use in a self-assessment tool. Recommendations on the length of the assessment ranged from 50 to 75 questions, taking no longer than 1.5 hours to complete. The recommended reading level was between fourth and eighth grade. Words that were difficult to read or understand but were judged by the expert panel to be important concepts in dementia care (eg, delirium) and necessary to keep were included in a glossary developed as a reference for use when taking the tool.

Based on empirical keying, 6 items needed revisions as a distracter was chosen more often than the correct answer and 10 items were revised because a distracter was chosen with equal frequency.

DCW feedback

The DCW focus groups recommended that the tool take less time than the expert panel had recommended, between 30 and 60 minutes to complete. They recommended a reading level between 5th and 12th grade, higher than the expert panel’s recommendation. The DCWs endorsed potential uses of the tool to help identify areas of training needed for an individual or group of DCWs and reported that they would use a self-assessment tool for their own development.

The DCWs who piloted the tool also reported that the questions were relevant to their jobs and covered what a DCW needed to know in order to care for someone with dementia. Most indicated that the tool should take between 45 and 60 minutes (84/141 responses) or between 30 and 44 minutes (36/141 responses).

Many participants reported the questions to be clear and easy to understand. Others thought that the questions were too long, had to be read several times, and could have more than 1 correct answer. They indicated that questions were reflective of nursing home and assisted living settings rather than day care or home care settings. They also requested additional items to cover understanding behaviors, resident-to-resident altercations, interacting with and educating family members, and stages and/or types of dementia.

Content Validity

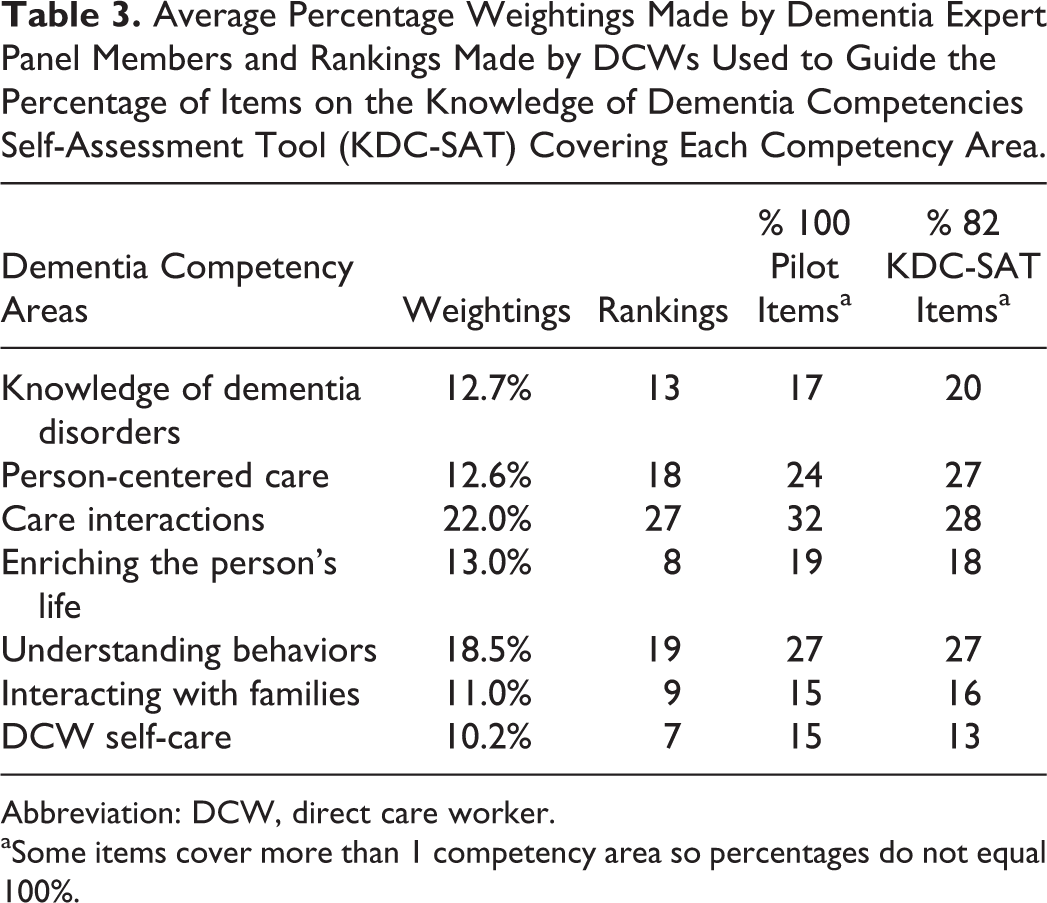

Expert panel percentage weightings

The average percentage weightings obtained from the expert panel identified care interactions as the competency area most important to cover (22.0%) followed by understanding behaviors (18.5%; see Table 3). The other competency areas were weighted more evenly, with DCW self-care chosen as the least important (10.2%) to cover in the assessment tool.

Table 3. Average Percentage Weightings Made by Dementia Expert Panel Members and Rankings Made by DCWs Used to Guide the Percentage of Items on the Knowledge of Dementia Competencies Self-Assessment Tool (KDC-SAT) Covering Each Competency Area.

Abbreviation: DCW, direct care worker.

a Some items cover more than 1 competency area so percentages do not equal 100%.

DCW competency rankings

The DCWs also ranked care interactions as the most important area of dementia care (27) to be covered followed by understanding behaviors (19) and person-centered care (18; see Table 3). They also ranked DCW self-care as the least important area (7) to be covered, with enriching the person’s life ranked as the next lowest in importance.

Creating the pilot tool

The 100 items on the KDC-SAT were selected to represent the relative importance placed on each competency area, in proportion to the expert panel percentage weightings and DCW ratings (see Table 3). Items often covered information in more than 1 dementia competency area, and so a higher percentage of items covered each competency area than was indicated by ratings. Two readability analyzers, the Flesch–Kincaid grade level analyzer and the Gunning fog index, indicated that the tool was at a grade 7.5 reading level, which dropped to a 6th grade reading level when the glossary items were removed.
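For reference, the two readability indices named above are conventionally defined as shown below. These are the standard published forms, given here as background; the source does not state which software or settings produced its estimates. A "complex word" in the Gunning fog index is one with three or more syllables.

```latex
\begin{align}
\text{Flesch--Kincaid grade level} &= 0.39\,\frac{\text{total words}}{\text{total sentences}}
  + 11.8\,\frac{\text{total syllables}}{\text{total words}} - 15.59 \\
\text{Gunning fog index} &= 0.4\left(\frac{\text{total words}}{\text{total sentences}}
  + 100\,\frac{\text{complex words}}{\text{total words}}\right)
\end{align}
```

Both indices rise with longer sentences and longer words, which is consistent with the estimated grade level falling once multisyllabic glossary terms were excluded.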

Item Analyses

Item discrimination

Corrected item-total correlations demonstrated that of 100 items, 16 items were negatively correlated or less than 2 standard errors from 0 and were dropped. Two items were dropped because 100% of respondents answered them correctly. This left 82 items in the final KDC-SAT. Of the remaining items, 29 items required revision of response options as a distracter was chosen more often than the correct answer or a distracter option was never chosen.

Test–retest reliability

A total of 57 DCWs took the tool again 2 to 8 weeks later. Good test–retest reliability was demonstrated with a Pearson’s correlation r = .865, P < .001.

Internal consistency

The KDC-SAT demonstrated good internal consistency, with a Cronbach’s α of .906 and a Kuder-Richardson 20 coefficient of .807.

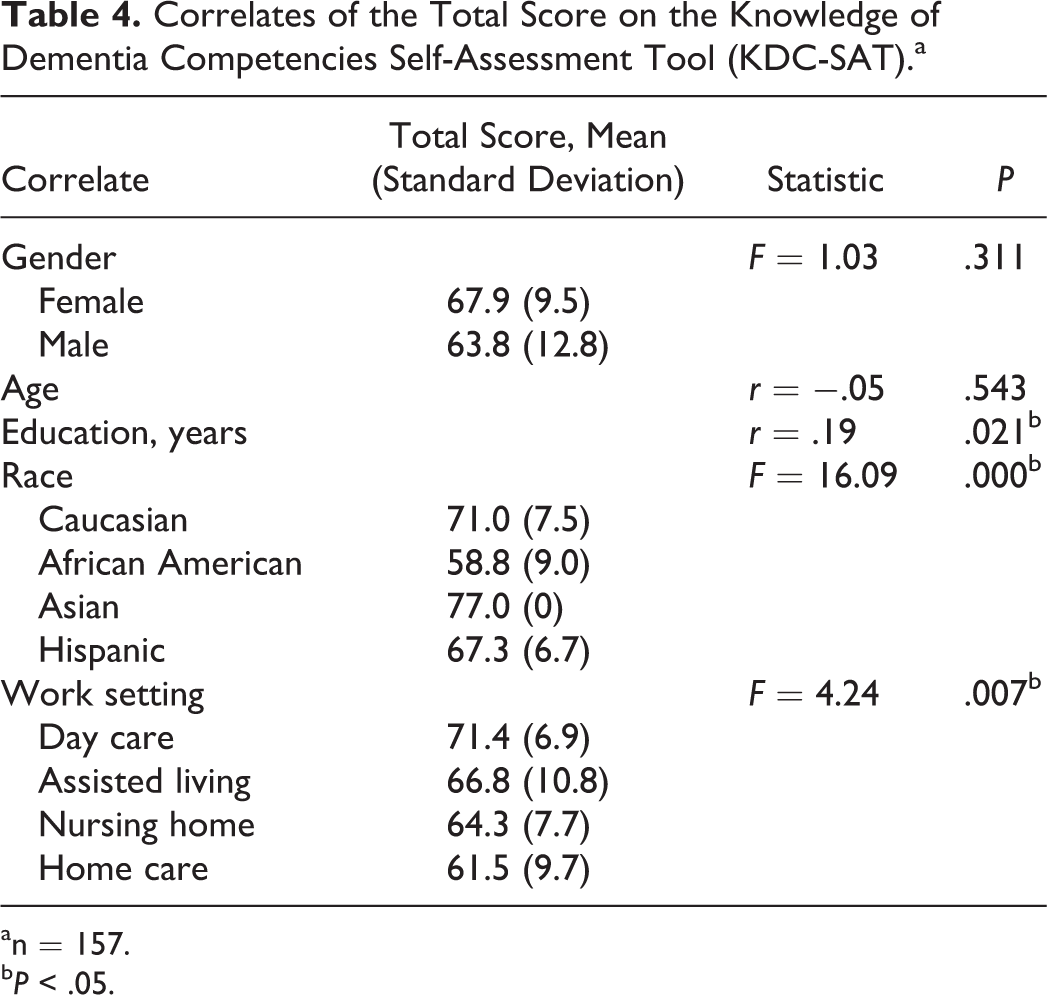

Convergent validity

Comparisons of total correct on the KDC-SAT with descriptive variables were made (see Table 4). Total correct on the KDC-SAT was significantly related to LTC setting (F = 4.24, P < .01), with higher scores from DCWs in day care settings in contrast to lower scores in home care settings. No differences were found on the basis of DCW age or gender, although very few (3.8%) were male workers. There were significant differences in total correct by race (F = 16.09, P < .001), demonstrating higher scores in Caucasian DCWs and lower scores in African American DCWs, with too few Asian and Hispanic DCWs to report differences. There was a significant positive relationship between years of education and total correct (r = .192, P < .05), indicating that those with more education performed better on the tool.

Table 4. Correlates of the Total Score on the Knowledge of Dementia Competencies Self-Assessment Tool (KDC-SAT).a

a n = 157.

b P < .05.

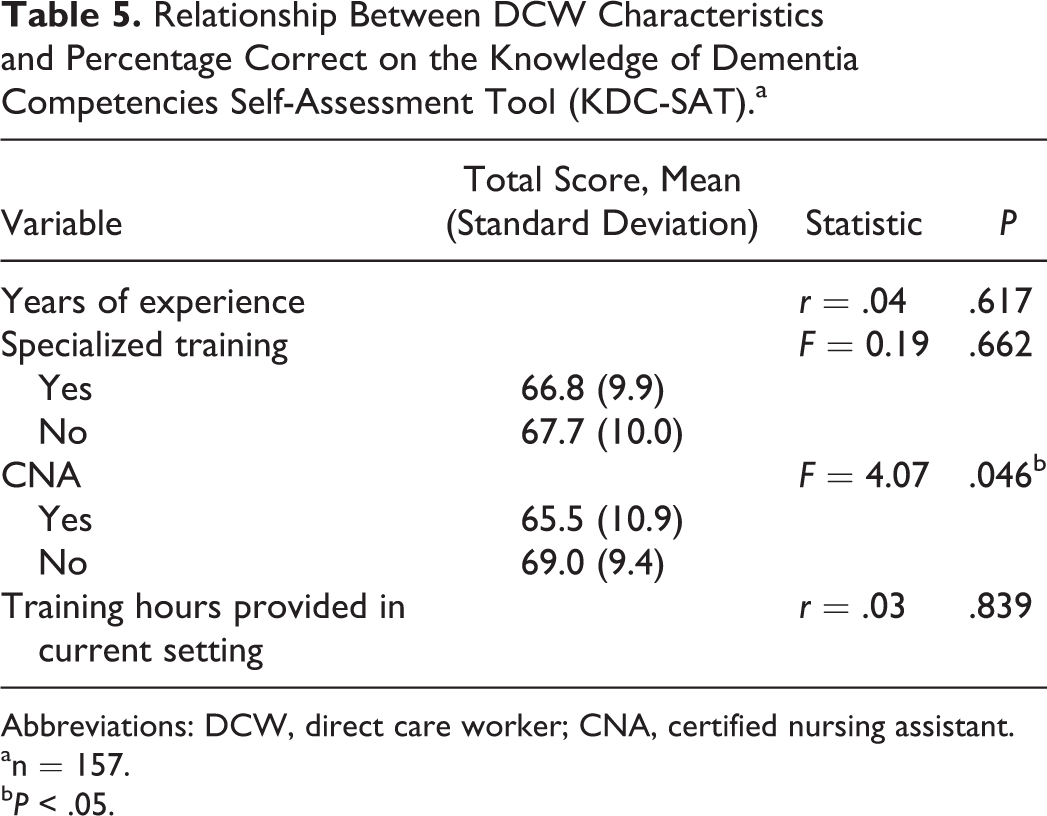

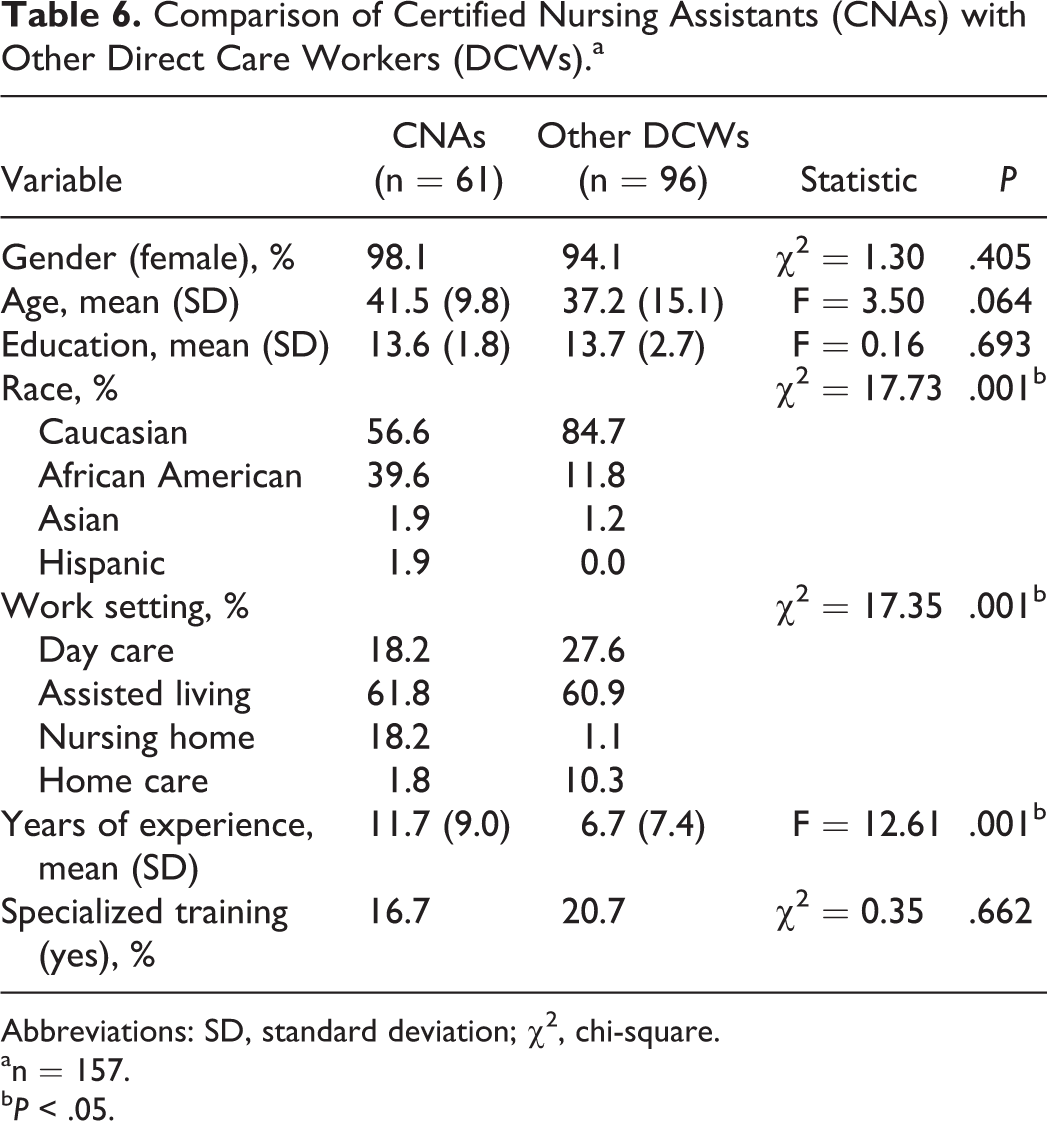

There was no relationship demonstrated between total score and total number of hours of dementia training DCWs received through their current employer, self-reported specialized training, or number of years of experience (see Table 5). Surprisingly, those who self-reported as CNAs obtained, on average, significantly lower scores than other DCWs (F = 4.07, P < .05). The DCWs who were and were not CNAs were similar in gender, age, years of education, and report of specialized training, but CNAs reported more years of experience (F = 12.61, P < .01), were more likely to be African American (chi-square [χ2] = 17.73, P < .01), and were more likely to work in the nursing home setting (χ2 = 17.35, P < .01; see Table 6).

Table 5. Relationship Between DCW Characteristics and Percentage Correct on the Knowledge of Dementia Competencies Self-Assessment Tool (KDC-SAT).a

Abbreviations: DCW, direct care worker; CNA, certified nursing assistant.

a n = 157.

b P < .05.

Table 6. Comparison of Certified Nursing Assistants (CNAs) with Other Direct Care Workers (DCWs).a

Abbreviations: SD, standard deviation; χ2, chi-square.

a n = 157.

b P < .05.

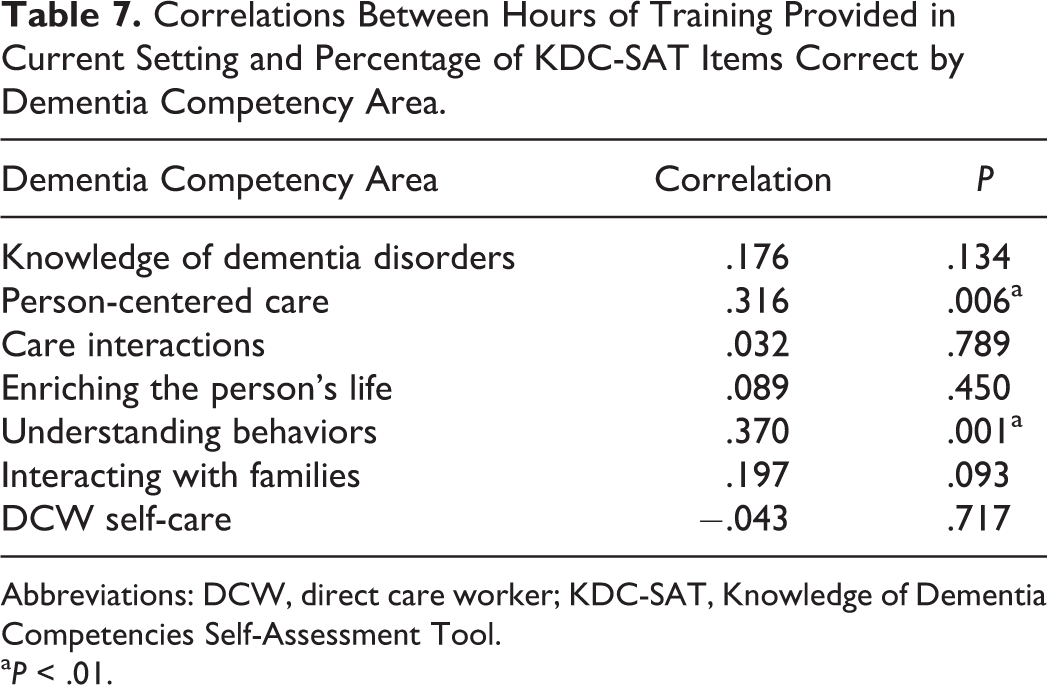

Few relationships were found between number of training hours and percentage of items correct specific to each competency area (see Table 7). However, there was a significant relationship between number of training hours and percentage correct specific to 2 competencies, person-centered care (r = .316, P < .01) and understanding behaviors (r = .370, P < .01).

Table 7. Correlations Between Hours of Training Provided in Current Setting and Percentage of KDC-SAT Items Correct by Dementia Competency Area.

Abbreviations: DCW, direct care worker; KDC-SAT, Knowledge of Dementia Competencies Self-Assessment Tool.

a P < .01.

Final Tool

The final tool included 82 items. Each item is scored as either correct (1 point) or incorrect (0 points), giving a potential score range of 0 to 82 points. Based on DCW feedback, 29 items were revised to ensure that the correct answer was clearer, and 16 items were revised to decrease the amount of reading required and improve generalization to a variety of care settings. Additional items were not added to supplement the content areas as suggested but may be created in future work.

A number of questions cover dementia care skills that relate to more than 1 of the 7 competency areas (see Table 3). There are 16 questions related to knowledge of dementia disorders, 22 related to person-centered care, 23 covering care interactions, 15 items related to enriching the person’s life, 22 items covering understanding behaviors, 13 questions covering family interactions, and 11 related to caregiver self-care. The percentage of questions that assessed each competency area did not substantially change when comparing the 100-item pilot tool with the final 82-item KDC-SAT. A higher percentage of questions covered knowledge of disorders after revisions, which was more reflective of expert panel weightings and DCW rankings.

Discussion

The population of individuals with dementia is increasing, and with it the need for competent caregivers. Providing dementia care requires special knowledge and skills. The DCWs are concerned about the quality of dementia care and benefit from feeling competent in dementia care, and dementia training and increased knowledge have been shown to be related to quality of care. 3,4,6,10 A self-assessment of the 7 DCW dementia competencies was created through guidance from dementia experts and DCWs as a way to empower DCWs to increase their own competency in dementia care. Tool development ensured that the 7 areas of dementia competency were comprehensively assessed. The KDC-SAT was shown to be internally consistent and reliable over time and is a promising tool to help DCWs in any setting identify strengths, areas for growth, and education and learning needs to ensure dementia care competencies. Its validity should be explored in future work in order to establish how this measure compares with available measures that assess knowledge of dementia disorders, understanding behaviors, and caregiver self-efficacy.

The setting from which a DCW was recruited was related to performance in this sample, with DCWs employed in day care settings obtaining higher scores and DCWs in home care settings obtaining lower scores. Day care settings reported providing a greater total number of hours of training in dementia competencies than nursing home, assisted living, and home care sites, which may be related to their DCWs obtaining higher scores on the KDC-SAT. Other factors not assessed could contribute to differences in each setting, such as ease of access to training, differences in on-the-job experience, or the DCWs that these settings recruit. It is also possible that the larger sample of day care workers and smaller sample of home care workers affected the representation of DCWs in these settings and produced differences that would not be generalizable. If the questions were more relevant to the day care setting, these DCWs would likely do better. However, qualitative feedback from DCWs indicated that the items were seen as more reflective of nursing home and assisted living settings. Items were revised to ensure generalizability to all LTC settings, and future work should continue to ensure that items reflect each setting sufficiently or create different versions of the tool depending on where the DCW is practicing.

Other DCW characteristics were related to performance on the KDC-SAT. Caucasian DCWs obtained higher scores on the tool than African American DCWs. This may not be a generalizable finding as the former had higher representation (71.7%) than the latter (21.3%). The CNAs obtained lower scores than other DCWs; despite statistical significance, the less than 4-point difference in mean scores between CNAs and other DCWs, with relatively equal SDs, suggests that it may not be clinically significant. The CNAs typically receive some dementia training, whereas there is no guarantee that other DCWs have training in dementia care. However, because sites were recruited through the MDC, the participating sites may be more likely to provide dementia training that surpasses the CNA minimum training requirement. The type of training and its relationship with scores on the KDC-SAT will be an important area of future study. Finally, those with more education performed better on the tool despite its 6th grade reading level. This may reflect test-taking ability or be caused by a third variable, such as enjoyment of learning, that would make individuals more likely both to complete more years in school and to perform better on a tool measuring knowledge of dementia care.

The relationship between performance on the KDC-SAT and DCW characteristics may be unique to this convenience sample, as the majority of DCWs who took the KDC-SAT were female, Caucasian, not CNAs, and from day care and assisted living settings, with less representation from nursing home and home care settings. These relationships should be investigated further before conclusions are drawn. It will be important to increase the sample of DCWs from home care and nursing home settings in order to further assess differences between facilities in performance on the KDC-SAT and its potential relationship to number of hours of training in dementia competencies. Increasing the diversity of DCW gender and race and including more CNAs in future samples will help clarify how these characteristics are related to performance on the KDC-SAT.

The lack of relationship between the total number of hours of dementia training received through their current employer, self-reported specialized training, or number of years of experience and performance on the KDC-SAT may lead to questioning the tool’s validity and what it is assessing. However, the quality of dementia training provided was not assessed and the types of experience and training to report were not systematically defined or measured. Training received not only through their current employer but from other avenues or employers would likely affect performance on the tool and was not collected. Also, total number of hours in dementia training may cover a limited number of competency areas and interfere with any relationship found between training and knowledge of all competency areas as broadly assessed by the tool. The validity of the tool in relationship with a more rigorous assessment of the type of training received and on-the-job performance will be important to explore in future work.

No relationship was found between training hours and scores in most of the competency areas, but the amount of training provided by their current employer related to person-centered care and understanding behaviors was significantly related to their performance on the items linked to each of these areas respectively. This is initial evidence for the importance of directing training to specific dementia competency areas. In creating the KDC-SAT, person-centered care and understanding behaviors were rated as 2 of the most important competencies and a large number of items were included to assess them. In addition, the reported training hours were higher in these 2 competency areas than all other competency areas other than care interactions. The larger number of questions assessing these competency areas would increase reliability of assessment, and a larger range of training hours in a competency area would provide more variability to detect a relationship. It will be important to ensure that the KDC-SAT items reliably assess each competency area and that a range of training experience in each competency area is obtained to further investigate this relationship.

Future samples used for validation should systematically sample DCWs to ensure equal representation of employment settings, racial diversity, specialization such as CNA, and a broader range of rigorously documented dementia training experiences. Future work is needed to compare the KDC-SAT to other measures of knowledge, test this tool’s ability to document change in knowledge before and after training, and explore its relationship to related constructs such as perceived competence and on-the-job skill and ability.

The KDC-SAT was designed to assess all areas of DCW dementia competency identified as important for quality care. It is a reliable self-assessment tool of dementia competency for DCWs to promote self-perceived competence and empower DCWs to understand their strengths, areas for growth, and education and training goals. With validation, the KDC-SAT has the potential to recognize, support, and increase the number of dementia competent caregivers and improve the quality of dementia care.

Footnotes

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This research was supported by a subcontract from Michigan Public Health Institute under the Alzheimer’s Disease Demonstration Grant to the State of Michigan from the Administration on Aging.