Abstract

Breast Cancer (BC) is a major health issue for women over the age of 45. Identifying BC at an early stage is important for reducing the mortality rate. Noninvasive image-based methods are used for early detection and for planning appropriate treatment. Computer-Aided Diagnosis (CAD) schemes can support radiologists in making correct decisions. Computational intelligence paradigms such as Machine Learning (ML) and Deep Learning (DL) have recently been used in CAD systems to accelerate diagnosis. ML techniques are feature driven and require a high level of domain expertise, whereas DL approaches make decisions directly from the image. Recent advances in DL approaches for the early diagnosis of BC are the motivation for this review. This article sheds light on the various types of CAD approaches used in BC detection and diagnosis. A detailed survey of DL, Transfer Learning, and DL-based CAD approaches for the diagnosis of BC is presented. A comparative study of the techniques, datasets, and performance metrics used in state-of-the-art BC diagnosis literature is also summarized. Overall, this work reviews recent advancements in DL techniques for enhancing BC diagnosis.

Keywords

Introduction

Breast cancer (BC) is a major life-threatening disease and the second leading cause of death in women. More women die from BC every year than from any other type of cancer. 1 According to the Global Cancer Observatory 2020 statistics, an estimated 2.3 million new BC cases occurred globally, accounting for 11.7% of all cancer cases. 2 According to epidemiological studies, the rise in BC is anticipated to reach 2 million globally by the year 2030. 3 The survival rate of patients with BC is low in developing and underdeveloped countries. To increase the survival rate, suitable intervention through early-stage detection and treatment is essential.

Pathological examination of breast tissue is the gold standard for BC diagnosis; however, it is invasive and time-consuming. Imaging techniques can detect anomalies in the breast and aid diagnosis before invasive procedures become necessary. Screening based on imaging data such as X-ray mammograms, ultrasound sonograms, and magnetic resonance images (MRI) is used for quick and early diagnosis. Mammography and ultrasound sonography are the most commonly used imaging methods for BC diagnosis.

Mammography is a popular imaging method but has reduced sensitivity in younger women with dense breasts. Ultrasonography detects and classifies breast nodules and is widely employed in routine physical examinations. Because ultrasound (US) involves no ionizing radiation and is noninvasive, it is often preferred over mammography. An ultrasound examination begins by scanning the breast tissue from various angles, using different probe modes and pressures, to capture the images that best describe the tissue properties. 4

Imaging-based diagnosis of BC at an early stage reduces the mortality rate and increases longevity. 5 Manual diagnosis requires a high level of clinical expertise, so computational intelligence techniques have been used to automate BC diagnosis. Computer-aided diagnosis (CAD) has helped to reduce the time and subject expertise needed to diagnose BC. Automatic analysis of breast images can help surgeons make more reliable and accurate diagnoses. Various CAD approaches have recently been used for image-based detection, segmentation, and classification, 4 and CAD-based approaches have further improved diagnostic performance in BC. 6

Emerging technologies such as artificial intelligence (AI) and radiomics have shown significant improvement in diagnosing BC. 7 Machine learning (ML) and deep learning (DL) techniques are commonly used to automate BC detection based on the images obtained from scanners. ML algorithms use features extracted from the images to detect or classify BC, which demands knowledge of suitable feature descriptors for effective classification. In contrast, DL techniques classify cancer directly from the raw images. Convolutional Neural Network (CNN) and Transfer Learning (TL) algorithms in DL have shown improved performance over ML. Deep CNN architectures classify breast lesions effectively, with increased accuracy, sensitivity, and specificity. 8

The main contributions of this review are as follows:

(1) a brief overview of CAD systems used for BC diagnosis; (2) an outline of deep CNN and TL approaches in disease diagnosis; (3) a review of DL-based CAD approaches for BC detection; and (4) a comparative summary of the performance, databases, and methods used in DL-based breast CAD.

CAD Systems in BC Diagnosis

Automated diagnosis and detection systems have been developed using AI, computer vision, and image processing to help medical experts interpret medical images correctly by improving image quality and clearly highlighting conspicuous regions. They also help doctors evaluate and analyze abnormalities quickly and make decisions. The primary goal of CAD systems is to detect subtle signs that an expert might miss and to provide a second opinion that reduces the subjectivity of professionals' analyses.

Computer-aided detection (CADe) systems are limited to identifying abnormal regions or structures. In contrast, computer-aided diagnosis (CADx) systems assess the abnormal regions identified by CADe to provide a diagnosis. Together, CADe and CADx models are essential for identifying anomalies at an early stage. Over the past 4 decades, many CAD algorithms have been developed for early BC detection using various imaging modalities such as X-ray, MRI, and US. Histology images are also used in CAD systems.

X-ray-based CAD systems are used to identify and highlight microcalcification clusters, small lumps in dense tissue, the margins of suspicious masses, architectural distortions, and highly dense tissue in mammograms. CAD systems can further classify a mass as benign or malignant based on its size, shape, and texture, helping the radiologist draw conclusions. Single-view systems use either the mediolateral oblique (MLO) or the craniocaudal (CC) view. Double-view systems use both views together so that information missed in one view can be recovered from the other.

Mammography-based CAD is mostly preferred for screening, as it can detect breast anomalies before symptoms appear. MRI produces fine details of the inner breast tissue using a strong magnetic field and radio waves and is particularly helpful for identifying high-risk BC cases. Breast US, produced with sound waves, may be an echogram or an elastogram: echography captures the echo-reflection properties of tumor regions, whereas elastography captures their elasticity. Either can be used to develop a CAD system that provides a second opinion, and a few CAD systems combine the echographic and elastographic properties of US images to draw better conclusions. Breast US is preferred for high-risk patients who cannot undergo MRI and for pregnant women, who should not be exposed to X-rays. US is also commonly used to screen women with dense breast tissue, in which X-ray mammography may fail to detect lesions.

Usually, MRI or US is used as a supplement to X-ray mammography, specifically for patients with very dense breast tissue that cannot be compressed easily; this does not mean, however, that mammography can be replaced entirely. Some researchers have combined information from more than one modality, fusing the unique characteristics of each, to improve diagnostic conclusions. Feature fusion of the two mammographic views (MLO and CC) or of the two US modes (echography and elastography) has further improved diagnostic accuracy in multiview/multimodality CAD systems.9,10

In general, a CAD system follows a sequence of steps. The workflows of single-view CAD and of multiview/multimodality CAD using two feature fusion frameworks are presented in Figures 1–3, respectively.

Single view or single modality CAD. Abbreviation: CAD, computer-aided diagnosis.

Multiview or multimodality CAD using feature fusion—framework 1. Abbreviation: CAD, computer-aided diagnosis.

Multiview or multimodality CAD using feature fusion—framework 2. Abbreviation: CAD, computer-aided diagnosis.

Deep CNN and Transfer Learning

DL is a subset of ML and consists of Artificial Neural Networks (ANN) with many hidden layers. DL algorithms use multiple layers to learn better feature representations from raw data.11,12 DL-based networks such as Deep Belief Networks (DBN), Recurrent Neural Networks (RNN), Autoencoders, and CNNs are frequently employed for detection, classification, and recognition tasks.

CNNs are widely used in image-based tasks, and in some of these tasks their performance has surpassed that of human experts. 13 The initial layers of a CNN learn low-level features, while the higher layers learn features that better represent the input image. A CNN is a type of ANN with an input layer, a number of hidden layers, and an output layer, whose learnable parameters allow it to extract meaningful features from an image. The layers of a CNN are outlined below.

Input Layer

The input layer represents the image with dimensions H × W × D, where H is the height, W the width, and D the depth (number of channels) of the image.

Convolutional Layer

The convolution layer is the basic building block of a CNN. In this layer, the image is convolved with learnable filters to extract features, producing feature maps that are passed to the next layer. The convolution layer is followed by a nonlinear activation; the Rectified Linear Unit (ReLU), which sets negative values to zero, is commonly used and generates a rectified feature map.
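As an illustration of the convolution and ReLU steps, the following minimal NumPy sketch convolves a single-channel toy image with one 3 × 3 filter (stride 1, no padding) and rectifies the result. The vertical-edge filter is hand-chosen purely for demonstration; in a CNN the filter weights are learned, and real layers apply many filters across many channels at once.

```python
import numpy as np


def conv2d_valid(image: np.ndarray, kernel: np.ndarray) -> np.ndarray:
    """Stride-1, no-padding ("valid") 2D convolution of a single-channel image.

    Like most DL libraries, this computes cross-correlation (the kernel is not
    flipped), which is what CNN convolution layers actually apply.
    """
    kh, kw = kernel.shape
    out_h = image.shape[0] - kh + 1
    out_w = image.shape[1] - kw + 1
    out = np.zeros((out_h, out_w))
    for i in range(out_h):
        for j in range(out_w):
            # Weighted sum of the image patch currently under the filter
            out[i, j] = np.sum(image[i:i + kh, j:j + kw] * kernel)
    return out


def relu(x: np.ndarray) -> np.ndarray:
    """Rectified Linear Unit: negative responses are set to zero."""
    return np.maximum(x, 0.0)


# Toy 5x5 image: dark on the left, bright on the right (a vertical edge)
image = np.array([[0, 0, 1, 1, 1]] * 5, dtype=float)
# Hand-chosen vertical-edge filter (illustrative; CNN filters are learned)
kernel = np.array([[-1, 0, 1]] * 3, dtype=float)

feature_map = relu(conv2d_valid(image, kernel))  # 3x3 rectified feature map
print(feature_map)  # each row: [3, 3, 0]
```

With the filter negated (a right-to-left edge detector), every response on this image is zero or negative, so the rectified feature map becomes all zeros.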

Pooling Layer

The pooling layer improves computational speed by reducing the spatial size of the feature map. Max pooling and average pooling are the common types: max pooling retains the maximum value within each pooling window of the feature map, whereas average pooling retains the average value of each window.
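The two pooling types can be sketched in NumPy for the common case of non-overlapping 2 × 2 windows (stride equal to the window size). This is an illustrative implementation, not the code of any reviewed system; it simply drops any trailing rows or columns that do not fill a complete window.

```python
import numpy as np


def pool2d(feature_map: np.ndarray, size: int = 2, mode: str = "max") -> np.ndarray:
    """Non-overlapping pooling with a size x size window and stride == size."""
    h, w = feature_map.shape
    out_h, out_w = h // size, w // size
    # View the (cropped) map as out_h x out_w blocks of size x size values
    blocks = feature_map[:out_h * size, :out_w * size].reshape(out_h, size, out_w, size)
    if mode == "max":
        return blocks.max(axis=(1, 3))   # keep the largest value of each window
    if mode == "avg":
        return blocks.mean(axis=(1, 3))  # keep the mean value of each window
    raise ValueError(f"unknown pooling mode: {mode}")


fmap = np.array([[1, 3, 2, 0],
                 [5, 2, 1, 1],
                 [0, 0, 4, 6],
                 [1, 3, 2, 8]], dtype=float)
print(pool2d(fmap, 2, "max"))  # values: [[5, 2], [3, 8]]
print(pool2d(fmap, 2, "avg"))  # values: [[2.75, 1.0], [1.0, 5.0]]
```

Both variants shrink the 4 × 4 map to 2 × 2, which is where the computational saving comes from.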

Fully Connected Layer

In a fully connected (FC) layer, every neuron is connected to all activations in the previous layer. After training, the feature vector produced by the FC layers is used for classification.

Output Layer

In the output layer, the softmax activation is applied to the outputs of the last layer of the CNN to perform classification. The softmax function maps the CNN's non-normalized outputs to probabilities that sum to 1, and the input is assigned to the class with the highest probability.
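The softmax mapping is softmax(z)_i = exp(z_i) / Σ_j exp(z_j). The sketch below implements it for a 2-class (benign vs malignant) output; the logits are hypothetical values, and subtracting the maximum logit is a standard numerical-stability trick that leaves the probabilities unchanged.

```python
import numpy as np


def softmax(logits: np.ndarray) -> np.ndarray:
    """Map non-normalized scores (logits) to probabilities that sum to 1."""
    # Subtracting the max does not change the result but prevents overflow in exp()
    shifted = logits - np.max(logits)
    exp = np.exp(shifted)
    return exp / exp.sum()


# Hypothetical logits from the last FC layer for the classes [benign, malignant]
logits = np.array([1.2, 3.4])
probs = softmax(logits)
predicted_class = int(np.argmax(probs))  # class with the highest probability
print(probs, predicted_class)
```

Without the max subtraction, logits such as 1000 would overflow exp() to infinity; with it, two equal large logits still yield probabilities of 0.5 each.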

The architecture of CNN is detailed in Figure 4.

CNN architecture. Abbreviation: CNN, Convolutional Neural Network.

Despite the improved performance in image-based tasks, DL suffers from the following limitations:

(1) DL algorithms demand a massive amount of labeled training data; (2) training requires high-end computational resources such as a Graphics Processing Unit (GPU); and (3) trained models may generalize poorly to real-world data.

TL, a newer paradigm in DL, is used to overcome these limitations.14,15 In TL, the knowledge learned from one task is transferred to a related task. A TL- and CNN-based framework has been proposed to improve BC diagnosis using mammogram images. 16

The steps involved in TL for a biomedical task are as follows: (1) an available model pretrained on a large dataset is selected (AlexNet, GoogLeNet, ResNet, Inception-ResNet, EfficientNet, DenseNet, DarkNet, VGG, MobileNet, NASNet, ShuffleNet, SqueezeNet); (2) the last layers of the pretrained model are replaced to suit the data of the new task, with the FC layer modified to match the classes of the new task; (3) the model is retrained on the new biomedical dataset; and (4) the trained network is assessed with test data and performance metrics are evaluated.
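The TL steps can be illustrated with a deliberately tiny NumPy sketch. Everything in it is a hypothetical stand-in: a fixed random linear + ReLU layer plays the role of the frozen pretrained backbone, toy Gaussian data play the role of the new biomedical dataset, and only a new 2-class FC + softmax head is trained. Real TL would load genuinely pretrained weights through a DL framework and would often fine-tune some deeper layers as well.

```python
import numpy as np

rng = np.random.default_rng(0)

# Hypothetical stand-in for a pretrained backbone: fixed random weights that are
# never updated (in real TL these would be layers pretrained on a large dataset).
W_BACKBONE = rng.normal(scale=0.25, size=(16, 32))


def backbone(x: np.ndarray) -> np.ndarray:
    """Frozen feature extractor: one fixed linear layer followed by ReLU."""
    return np.maximum(x @ W_BACKBONE, 0.0)


def train_new_head(feats, labels, n_classes, lr=0.02, epochs=1000):
    """Train only a new FC + softmax head (sized for the new task's classes) by
    full-batch gradient descent on the mean cross-entropy loss."""
    n, d = feats.shape
    W, b = np.zeros((d, n_classes)), np.zeros(n_classes)
    onehot = np.eye(n_classes)[labels]
    losses = []
    for _ in range(epochs):
        logits = feats @ W + b
        logits -= logits.max(axis=1, keepdims=True)  # numerical stability
        probs = np.exp(logits)
        probs /= probs.sum(axis=1, keepdims=True)
        losses.append(-np.mean(np.log(probs[np.arange(n), labels])))
        grad = (probs - onehot) / n  # d(mean loss) / d(logits)
        W -= lr * feats.T @ grad
        b -= lr * grad.sum(axis=0)
    return W, b, losses


# Toy "new task": 2 classes (e.g. benign vs malignant) with 16-dim inputs
X = np.vstack([rng.normal(0.0, 1.0, (100, 16)), rng.normal(2.0, 1.0, (100, 16))])
y = np.array([0] * 100 + [1] * 100)

backbone_before = W_BACKBONE.copy()
feats = backbone(X)  # features from the frozen layers
W_head, b_head, losses = train_new_head(feats, y, n_classes=2)
accuracy = np.mean(np.argmax(feats @ W_head + b_head, axis=1) == y)
print(f"loss {losses[0]:.3f} -> {losses[-1]:.3f}, training accuracy {accuracy:.2f}")
```

Only W_head and b_head change during training, which is what makes this cheap on small datasets; step (4) would additionally evaluate the model on held-out test data.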

Pretrained networks are also used for deep feature extraction. Earlier layers provide low-level information, whereas deeper layers provide high-level information. Activations on the global pooling layer yield depth information, whereas activations on convolution layers yield basic information. Information extracted from the local specification of an object such as edges, corners, color variations, and angles corresponds to low-level CNN features. Information extracted from the global specification of an object such as the structure of the object, and depth information corresponds to high-level features. Deep features extracted from pretrained networks are classified using ML classifiers.

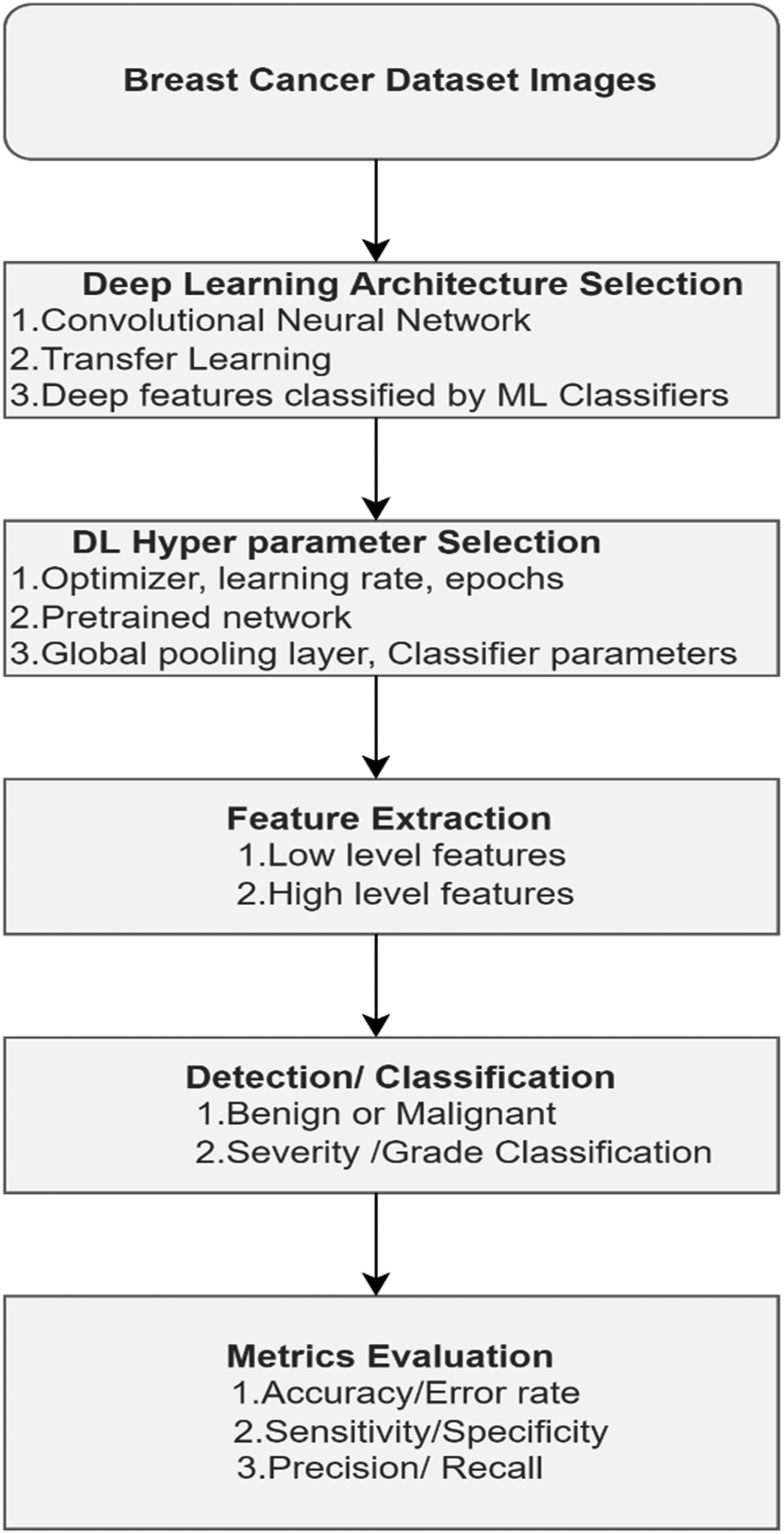

The steps involved in building a DL-based CAD system for BC diagnosis are outlined in Figure 5.

Steps involved in DL-CAD. Abbreviations: DL-CAD, Deep Learning- Computer-Aided Diagnosis; ML, Machine Learning.

The choice of optimal hyperparameters is also an important step to build high-performance DL systems. Metrics derived from the confusion matrix are evaluated to analyze the system's performance.
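The confusion-matrix metrics reported throughout this review (accuracy, sensitivity, specificity, precision, F1-score, and MCC) can be computed as in the following sketch. The counts are hypothetical, malignant is treated as the positive class, and the code assumes that every denominator other than MCC's is non-zero.

```python
import math


def confusion_metrics(tp: int, fp: int, tn: int, fn: int) -> dict:
    """Common metrics from a binary confusion matrix (positive class = malignant)."""
    accuracy = (tp + tn) / (tp + fp + tn + fn)
    sensitivity = tp / (tp + fn)   # recall / true positive rate
    specificity = tn / (tn + fp)   # true negative rate
    precision = tp / (tp + fp)     # positive predictive value
    f1 = 2 * precision * sensitivity / (precision + sensitivity)
    mcc_den = math.sqrt((tp + fp) * (tp + fn) * (tn + fp) * (tn + fn))
    mcc = (tp * tn - fp * fn) / mcc_den if mcc_den else 0.0
    return {"accuracy": accuracy, "sensitivity": sensitivity,
            "specificity": specificity, "precision": precision,
            "f1": f1, "mcc": mcc}


# Hypothetical counts for 200 test images (100 malignant, 100 benign)
m = confusion_metrics(tp=90, fp=15, tn=85, fn=10)
print(m)
# accuracy 0.875, sensitivity 0.90, specificity 0.85,
# precision ~0.857, F1 ~0.878, MCC ~0.751
```

MCC uses all four cells of the matrix, which is why it is often preferred over accuracy when the benign and malignant classes are imbalanced.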

Deep Learning-Based CAD Approaches for Breast Cancer Diagnosis

In recent years, DL has played a prominent role in disease diagnostics involving the analysis and understanding of signals and images. Many DL architectures have been proposed that loosely mimic the complex hierarchical structure of the human brain, in which information is passed from lower to higher levels. An overview of the use of DL in BC CAD is presented in this section.

A CNN with 5 convolution layers, 7 ReLU layers, 3 pooling layers, and 3 FC layers was designed and trained on natural images from the Large Scale Visual Recognition Challenge (LSVRC) dataset, converted to grayscale, with a learning rate of 0.01. The learning rate was divided by 10 whenever the validation error stopped improving at the current learning rate, and training was repeated for 100 cycles. In a second training stage, the pretrained network was trained with an initial learning rate of 0.00001 for 100 cycles and fine-tuned using 600 mass images from the DDSM dataset. High-level and middle-level features were fused and classified by a Support Vector Machine (SVM) with k-fold cross-validation, achieving a classification accuracy of 96.7%. 17
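The learning-rate rule described above can be sketched as a small schedule function. We read it as dividing the learning rate by 10 whenever the validation error fails to improve on the best value seen so far; this interpretation, the absence of a patience window, and the example error values are assumptions. DL frameworks offer comparable built-in schedulers, for example PyTorch's ReduceLROnPlateau.

```python
def step_decay_on_plateau(val_errors, initial_lr=0.01, factor=10.0):
    """Learning rate used at each epoch, given each epoch's validation error.

    After each epoch, the rate is divided by `factor` if the validation error
    did not improve on the best error seen so far.
    """
    lr, best = initial_lr, float("inf")
    schedule = []
    for err in val_errors:
        schedule.append(lr)   # rate used during this epoch
        if err < best:
            best = err        # improvement: keep the current rate
        else:
            lr /= factor      # no improvement: decay for the next epoch
    return schedule


# Hypothetical validation errors over 5 epochs
print(step_decay_on_plateau([0.30, 0.25, 0.26, 0.20, 0.20]))
# 0.01 for the first three epochs, then 0.001 (the next decay would apply from epoch 6)
```

For the second training stage, the same function could be called with initial_lr=0.00001.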

An integrated methodology requiring minimal user intervention was proposed to detect, segment, and classify breast masses in mammograms. In the mass detection stage, a set of mass candidates was generated by combining the results of a coarse-to-fine multi-scale deep belief network (m-DBN) model and a Gaussian Mixture Model (GMM), and the candidates were pruned by a series of R-CNNs. Segmentation was performed using deep structured learning, followed by a hypothesis refinement step using the Chan-Vese active contour level set method based on Bayesian optimization. For classification, a CNN was trained in 2 steps: a DL classifier was first pretrained using regression on image-based features and then fine-tuned with the annotations of breast masses from the INbreast dataset. Two random forest (RF) classifiers were trained, one on hand-crafted features and one on 781 deep features from the fine-tuned CNN model, with 5-fold cross-validation for parameter estimation. The proposed method obtained Dice coefficients of 0.85 ± 0.01 and 0.85 ± 0.02 for segmentation on the training and test sets, respectively. For detection, the deep features gave better results than the hand-crafted ones. For classification, the authors reported that their system is more robust to false negatives and false positives. 18
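Several of the reviewed studies estimate parameters or report performance with k-fold cross-validation (5-fold in the study above). A minimal NumPy sketch of the index splitting follows; the shuffling seed and the number of samples are arbitrary assumptions.

```python
import numpy as np


def k_fold_indices(n_samples: int, k: int = 5, seed: int = 0):
    """Yield (train_idx, test_idx) pairs for k-fold cross-validation.

    Samples are shuffled once, split into k near-equal folds, and each fold
    serves exactly once as the held-out set.
    """
    rng = np.random.default_rng(seed)
    folds = np.array_split(rng.permutation(n_samples), k)
    for i in range(k):
        train_idx = np.concatenate([folds[j] for j in range(k) if j != i])
        yield train_idx, folds[i]


# Example: 12 hypothetical cases, 5 folds (fold sizes 3, 3, 2, 2, 2)
splits = list(k_fold_indices(12, k=5))
for train_idx, test_idx in splits:
    print(len(train_idx), len(test_idx))
```

With medical images, splitting by patient rather than by image keeps images of the same patient out of both the training and test folds; scikit-learn's KFold and GroupKFold provide tested implementations of these splits.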

A multiscale CNN was designed and trained with a curriculum learning strategy in 2 stages. In stage 1, a classifier is trained to estimate the likelihood that a mass is present in an image patch. In stage 2, image-level training is performed with a scanning-window method, and the features extracted from all patches are aggregated and then classified. 19
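The scanning-window idea in stage 2 can be illustrated with a small NumPy sketch that slides a window over the image, scores each patch with a patch-level classifier, and aggregates the patch outputs into one image-level score. Two simplifications are assumed: the sketch aggregates patch scores (by max or mean), whereas the cited study aggregates patch features, and the patch classifier here (mean intensity) is a hypothetical placeholder for a trained CNN.

```python
import numpy as np


def scan_patches(image: np.ndarray, patch: int = 32, stride: int = 16):
    """Yield (row, col, patch) for every window of a scanning-window pass."""
    h, w = image.shape
    for r in range(0, h - patch + 1, stride):
        for c in range(0, w - patch + 1, stride):
            yield r, c, image[r:r + patch, c:c + patch]


def image_level_score(image, patch_classifier, patch=32, stride=16, agg="max"):
    """Score every patch with a patch-level classifier, then aggregate."""
    scores = np.array([patch_classifier(p) for _, _, p in scan_patches(image, patch, stride)])
    if agg == "max":
        return float(scores.max())
    if agg == "mean":
        return float(scores.mean())
    raise ValueError(f"unknown aggregation: {agg}")


def mean_intensity(p: np.ndarray) -> float:
    """Hypothetical placeholder for a trained patch classifier."""
    return float(p.mean())


img = np.zeros((64, 64))
img[40:56, 40:56] = 1.0  # hypothetical bright 16x16 "mass"
print(image_level_score(img, mean_intensity, agg="max"))   # 0.25
print(image_level_score(img, mean_intensity, agg="mean"))  # 0.0625
```

Max aggregation reflects the idea that a single suspicious patch is enough to flag an image, while mean aggregation dilutes a small lesion across the whole scan.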

Low- to mid-level features were extracted from the 5 max-pooling layers of a pretrained VGG19 CNN model; the features from each layer were normalized individually by their Euclidean norm, concatenated, and normalized again. In addition, hand-crafted radiomic features describing the physical properties of the segmented lesion were computed using conventional CADx methods. Each feature set was classified separately by a nonlinear SVM with a Gaussian radial basis function kernel, whose hyperparameters were optimized by an internal grid search with 5-fold cross-validation. Performance was evaluated using the area under the receiver operating characteristic curve (AUC) on 690 MRI cases, 245 digital mammography cases, and 1125 US cases, yielding AUCs of 0.89, 0.86, and 0.90, respectively. Statistical significance was assessed with the DeLong test, with Bonferroni-Holm corrections for multiple comparisons. The authors concluded that fusing deep features with image-based features improved diagnostic performance. 20
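The layer-wise normalization and concatenation described above can be sketched as follows. The activations are random stand-ins that only mimic the channel counts of VGG19's five pooling layers, and reducing each map to a per-channel vector by spatial averaging is an assumption about how the maps become vectors; the resulting descriptor would then be passed to the SVM.

```python
import numpy as np


def l2_normalize(v: np.ndarray, eps: float = 1e-12) -> np.ndarray:
    """Scale a vector to unit Euclidean norm (eps guards against division by zero)."""
    return v / max(np.linalg.norm(v), eps)


def multilayer_descriptor(layer_maps):
    """layer_maps: list of (channels, height, width) activations, one per pooling layer.

    Each map is reduced to a per-channel vector by spatial averaging (an assumed
    reduction), L2-normalized on its own, then all vectors are concatenated and
    the result is L2-normalized again.
    """
    per_layer = [l2_normalize(m.mean(axis=(1, 2))) for m in layer_maps]
    return l2_normalize(np.concatenate(per_layer))


# Hypothetical activations with VGG19's pooling-layer channel counts
# (64, 128, 256, 512, 512); spatial sizes are shrunk to keep the demo small.
rng = np.random.default_rng(0)
shapes = [(64, 8, 8), (128, 4, 4), (256, 2, 2), (512, 1, 1), (512, 1, 1)]
layer_maps = [rng.random(s) for s in shapes]  # non-negative, like post-ReLU values
descriptor = multilayer_descriptor(layer_maps)
print(descriptor.shape)  # (1472,)
```

Normalizing each layer before concatenation keeps layers with many channels or large activations from dominating the fused descriptor: here each of the five blocks ends up with the same norm, 1/√5.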

Multiparametric MRI in conjunction with DL has been used to improve BC diagnosis. Two sequences were collected from each patient: a Dynamic Contrast-Enhanced (DCE)-MRI sequence and a T2-weighted (T2w) MRI sequence. The study dataset consists of 927 images obtained from 616 women. Deep features were extracted from the DCE-MRI and T2w-MRI images using a pretrained VGG19 network and classified with an SVM classifier. The sequences were fused at the image, feature, and classifier levels, and the resulting classifiers were evaluated using AUC and compared using the DeLong test. The single-sequence classifiers achieved AUCs of 0.85 (DCE) and 0.78 (T2w). The image-, feature-, and classifier-level fusion schemes yielded AUCs of 0.85, 0.87, and 0.86, respectively. The authors concluded that feature fusion performed better than DCE alone (P < .001). 21
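The three fusion levels can be contrasted in a short NumPy sketch. The inputs are random placeholders, stacking co-registered images as channels is only one possible form of image-level fusion, and the equal weighting at the classifier level is an assumption. The same feature-level concatenation applies to the MLO/CC and echography/elastography fusion discussed earlier.

```python
import numpy as np


def image_level_fusion(dce: np.ndarray, t2w: np.ndarray) -> np.ndarray:
    """Stack co-registered 2D images as channels of a single network input."""
    return np.stack([dce, t2w], axis=0)


def feature_level_fusion(f_dce: np.ndarray, f_t2w: np.ndarray) -> np.ndarray:
    """Concatenate per-sequence feature vectors before a single classifier."""
    return np.concatenate([f_dce, f_t2w])


def classifier_level_fusion(p_dce: float, p_t2w: float, w_dce: float = 0.5) -> float:
    """Weighted average of the malignancy probabilities of two per-sequence classifiers."""
    return w_dce * p_dce + (1.0 - w_dce) * p_t2w


rng = np.random.default_rng(0)
dce_img, t2w_img = rng.random((64, 64)), rng.random((64, 64))  # hypothetical slices
f_dce, f_t2w = rng.random(1472), rng.random(1472)              # hypothetical deep features
print(image_level_fusion(dce_img, t2w_img).shape)  # (2, 64, 64)
print(feature_level_fusion(f_dce, f_t2w).shape)    # (2944,)
print(classifier_level_fusion(0.8, 0.4))           # about 0.6
```

The levels differ in where the sequences meet: before the network, between feature extraction and classification, or after two separate classifiers have made their predictions.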

An integrated CAD system combining You-Only-Look-Once (YOLO) for detection, a Full-resolution CNN (FrCNN) for segmentation, and a deep CNN for classification was proposed. 22 It was evaluated on the INbreast database with the following results. Detection: accuracy 98.96%, Matthews Correlation Coefficient (MCC) 97.62%, and F1-score 99.24%. Segmentation: accuracy 92.97%, MCC 85.93%, Dice (F1-score) 92.69%, and Jaccard similarity coefficient 86.37%. Classification: accuracy 95.64%, AUC 94.78%, MCC 89.91%, and F1-score 96.84%.
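The Dice and Jaccard coefficients used to evaluate segmentation in the studies above can be computed from binary masks as below. The toy masks are hypothetical, and returning 1.0 when both masks are empty is an assumed convention. For binary masks, Dice equals the pixel-wise F1-score, and the two measures are related by J = D / (2 - D).

```python
import numpy as np


def dice_and_jaccard(pred: np.ndarray, truth: np.ndarray) -> tuple:
    """Dice = 2|A ∩ B| / (|A| + |B|) and Jaccard = |A ∩ B| / |A ∪ B| for binary masks."""
    pred, truth = pred.astype(bool), truth.astype(bool)
    inter = np.logical_and(pred, truth).sum()
    union = np.logical_or(pred, truth).sum()
    total = pred.sum() + truth.sum()
    dice = 2.0 * inter / total if total else 1.0   # both masks empty -> perfect match
    jaccard = inter / union if union else 1.0
    return float(dice), float(jaccard)


truth = np.zeros((8, 8), dtype=int)
truth[2:6, 2:6] = 1   # hypothetical 4x4 ground-truth lesion (16 pixels)
pred = np.zeros((8, 8), dtype=int)
pred[2:6, 3:7] = 1    # prediction shifted one column to the right
dice, jaccard = dice_and_jaccard(pred, truth)
print(dice, jaccard)  # 0.75 0.6
```

Because Jaccard penalizes the non-overlapping pixels more heavily, it is always less than or equal to Dice for the same pair of masks, as the 86.37% vs 92.69% figures above also show.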

Features were extracted with an 8-layer DL network and classified by a multilayer perceptron with one hidden layer and one logistic regression layer, using 4-fold cross-validation; an overall AUC of 0.790 ± 0.019 was obtained.23

Another study surveyed the performance of various CNNs in analyzing mammogram images by reviewing 83 studies, focusing on the best practices used to improve diagnostic performance. It also discussed CNN architectures and described the 10 mammographic repositories used in the literature, and the authors addressed 16 questions related to mammographic research for BC diagnosis.24

The performance of CNNs trained by fine-tuning and from scratch has been compared. 25 Shallow fine-tuning performed worse than training from scratch, whereas deeper fine-tuning performed similarly to or better than training from scratch. The authors also concluded that (1) fine-tuning should be preferred irrespective of the size of the training set, (2) fine-tuned CNNs perform at least as well as networks trained from scratch, and (3) fine-tuning all deep layers yields the most prominent performance improvement.

A deep network with 4 pairs of CNN layers and 1 FC layer was proposed and trained on 100 regions of interest (ROIs) of size 52 × 52 pixels extracted from each image. The final prediction for each case was the average score of its 100 ROIs. Performance was analyzed using AUC, which was 0.6982 in the ROI-based analysis and 0.7173 in the case-based analysis. 26
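The case-based analysis above averages ROI scores per case before computing AUC. The sketch below shows both steps, computing AUC through its rank interpretation: the probability that a randomly chosen malignant case scores higher than a randomly chosen benign one, with ties counted as one half. The ROI scores and labels are hypothetical, and the all-pairs comparison is only suitable for small samples.

```python
import numpy as np


def auc_rank(scores, labels) -> float:
    """AUC as P(score of a random positive > score of a random negative),
    counting ties as 1/2 (the Mann-Whitney U formulation)."""
    scores = np.asarray(scores, dtype=float)
    labels = np.asarray(labels, dtype=bool)
    pos, neg = scores[labels], scores[~labels]
    greater = (pos[:, None] > neg[None, :]).sum()
    ties = (pos[:, None] == neg[None, :]).sum()
    return float((greater + 0.5 * ties) / (len(pos) * len(neg)))


def case_score(roi_scores) -> float:
    """Case-level prediction: the average of the case's ROI scores."""
    return float(np.mean(roi_scores))


# Hypothetical ROI scores (the cited study used 100 ROIs per case)
roi_scores = {"case_a": [0.9, 0.7], "case_b": [0.2, 0.4],
              "case_c": [0.6, 0.4], "case_d": [0.7, 0.5]}
labels = {"case_a": 1, "case_b": 0, "case_c": 1, "case_d": 0}  # 1 = malignant
cases = sorted(roi_scores)
case_auc = auc_rank([case_score(roi_scores[c]) for c in cases],
                    [labels[c] for c in cases])
print(case_auc)  # 0.75
```

Three of the four malignant/benign case pairs are ranked correctly here (only case_c, at 0.5, falls below case_d, at 0.6), which gives the AUC of 0.75.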

An automated CNN-based framework called BC-DROID was proposed. 27 It was first pretrained on mammographic ROIs defined by doctors and then trained on whole mammograms. The YOLO model was adapted to identify ROIs and label them as benign or cancerous. After preprocessing, 25,000 ROIs were generated through cropping and data augmentation. The model was modified so that the last FC layer had only 2 neurons and was pretrained with these ROIs; the learned weights were then used to initialize the main training process, which uses whole mammogram images. This framework achieved accuracies of up to 90% and 93.5% for detection and classification, respectively.

Breast masses were classified by a shallow CNN and by AlexNet and GoogLeNet, whose last FC layers were modified for 2 output classes. The system was evaluated on 1820 images containing masses from the DDSM dataset, randomly split into 80%, 10%, and 10% for training, validation, and testing, respectively. Each input image was considered in 2 contexts: a small region with a fixed padding of 50 pixels around the breast mass, and a large region twice the size of the mass bounding box with proportional padding. GoogLeNet with the large context was the best model, with 0.934 recall at 0.924 precision, outperforming radiologist recall, which ranged between 0.745 and 0.923. 28
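The two input contexts can be sketched as crop boxes computed from a mass bounding box. The assumptions here are that the large context is the bounding box scaled by 2 about its centre, that both boxes are clipped to the image borders, and that resizing the crops to the network input size happens afterwards (not shown); the cited study's exact conventions may differ.

```python
import numpy as np


def context_boxes(bbox, image_shape, fixed_pad=50, scale=2.0):
    """Return (small, large) crop boxes around a mass bounding box.

    bbox and the returned boxes are (row0, col0, row1, col1) with exclusive ends.
    small: the bbox with a fixed padding of `fixed_pad` pixels on every side.
    large: the bbox scaled by `scale` about its centre (proportional padding).
    Both boxes are clipped to the image.
    """
    r0, c0, r1, c1 = bbox
    h, w = image_shape

    def clip(a0, b0, a1, b1):
        return max(a0, 0), max(b0, 0), min(a1, h), min(b1, w)

    small = clip(r0 - fixed_pad, c0 - fixed_pad, r1 + fixed_pad, c1 + fixed_pad)
    cr, cc = (r0 + r1) / 2, (c0 + c1) / 2
    half_h, half_w = (r1 - r0) * scale / 2, (c1 - c0) * scale / 2
    large = clip(round(cr - half_h), round(cc - half_w),
                 round(cr + half_h), round(cc + half_w))
    return small, large


def crop(image: np.ndarray, box) -> np.ndarray:
    r0, c0, r1, c1 = box
    return image[r0:r1, c0:c1]


mammogram = np.zeros((1000, 800))  # hypothetical image
small, large = context_boxes((100, 200, 180, 260), mammogram.shape)
print(small, large)                # (50, 150, 230, 310) (60, 170, 220, 290)
print(crop(mammogram, large).shape)  # (160, 120)
```

The fixed padding adds the same absolute margin to every mass, whereas the proportional context grows with the mass, so large masses keep relatively more surrounding tissue.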

The use of TL for classifying breast masses on a small mammogram dataset was explored. 29 Pretrained GoogLeNet and AlexNet networks were considered, and performance was analyzed on BCDR-F03 (Film Mammography dataset number 3) in terms of AUC. GoogLeNet and AlexNet achieved AUCs of 0.88 and 0.83, respectively, outperforming a shallow Neural Network (NN) and conventional classifiers. TL significantly improved the performance of breast CAD with limited data, and CNNs combined with TL surpassed the performance of ML-based systems.

Training a classifier on small image datasets from X-ray, US, or MRI is highly challenging. To address this limitation, cross-modality TL was explored. 30 A network pretrained on images from various breast imaging modalities was proposed to improve the robustness of the classifiers and the classification performance of the fine-tuned networks. The technique was applied to identify masses in breast MRI using a deep network pretrained on another breast imaging modality, improving accuracy to 0.93 from a baseline of 0.90.

A robust CAD system based on the ROI-based CNN YOLO was proposed. 31 It consists of 4 stages: image preprocessing, feature extraction, mass detection, and mass classification. In preprocessing, a multiple-threshold peripheral equalization technique was applied. In the feature extraction stage, deep features were obtained from different convolution layers of the CNN. A confidence-score-based model was used for mass detection, and a Fully Connected Neural Network (FCNN) was used for mass classification. During training, YOLO learns the characteristics of both the breast image and the ROI. A deep architecture with 24 convolution layers with 3 × 3 kernels, 2 × 2 max-pooling layers, and 2 fully connected layers was used. The CAD system was tested on 600 images (300 benign and 300 malignant) from the DDSM database. Breast masses were detected with an accuracy of 96.33%. In the classification task, benign and malignant cases were classified with accuracies of 93.20% and 78.00%, respectively, with an overall classification accuracy of 85.52%. The system thus performed well on both detection and classification tasks.

A mobile-based classification system combined with a cloud computing platform has been proposed for BC diagnosis. 4 Its components are image enhancement, classification, and false-negative identification. A pretrained Inception v3 network is used to diagnose BC from US images, stacked autoencoders with residual connection blocks are used for image reconstruction, and Generative Adversarial Networks (GANs) are used to detect breast anomalies and reduce the false-negative rate. The proposed system achieved an accuracy of 87.00%, and its scores were strongly correlated with those of human experts.

Deep features extracted from CNNs and pretrained networks have been explored for BC detection and classification. 32 Deep features obtained from the pretrained AlexNet network were reduced by Relief-based feature selection to retain discriminant features. Four ML classifiers, namely SVM, k-Nearest Neighbor (KNN), random forest (RF), and Naive Bayes (NB), were used for cancer detection and classification. An accuracy above 99% was obtained after feature selection using a least-squares SVM (LS-SVM) classifier.
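For reference, the basic two-class Relief algorithm (Kira and Rendell) is sketched below. The cited study's exact Relief variant and settings are not given here, so the min-max scaling, the Manhattan distance, and the toy data are assumptions; in practice the input would be the deep feature matrix, and the k highest-weighted features would be kept.

```python
import numpy as np


def relief(X: np.ndarray, y: np.ndarray, n_iter=None, seed: int = 0) -> np.ndarray:
    """Basic Relief feature weights for a two-class problem (higher = more relevant).

    Features are min-max scaled to [0, 1] so per-feature differences are comparable
    (assumes no constant features). For each sampled instance, the nearest hit
    (same class) and nearest miss (other class) are found with the Manhattan
    distance; features that differ more on the miss than on the hit gain weight.
    """
    rng = np.random.default_rng(seed)
    X = (X - X.min(axis=0)) / (X.max(axis=0) - X.min(axis=0))
    n, d = X.shape
    m = n if n_iter is None else n_iter
    w = np.zeros(d)
    for i in rng.choice(n, size=m, replace=False):
        dist = np.abs(X - X[i]).sum(axis=1)
        dist[i] = np.inf  # never pick the instance itself
        same = y == y[i]
        hit = np.argmin(np.where(same, dist, np.inf))
        miss = np.argmin(np.where(~same, dist, np.inf))
        w += (np.abs(X[i] - X[miss]) - np.abs(X[i] - X[hit])) / m
    return w


# Hypothetical data: feature 0 separates the classes, feature 1 is pure noise
rng = np.random.default_rng(1)
y = np.array([0] * 50 + [1] * 50)
X = np.column_stack([
    np.concatenate([rng.normal(0.0, 0.3, 50), rng.normal(3.0, 0.3, 50)]),
    rng.normal(0.0, 1.0, 100),
])
weights = relief(X, y)
top_k = np.argsort(weights)[::-1][:1]  # indices of the k = 1 highest-weighted features
print(weights, top_k)
```

The discriminative feature receives a clearly higher weight than the noise feature, which is the property feature selection relies on.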

The use of CAD systems with various imaging modalities such as Digital Mammography (DM), MRI, and digital breast tomosynthesis (DBT) has been explored, 33 along with the application of DL algorithms to enhance BC detection. The authors claim that AI-based CAD systems outperform traditional CAD in terms of performance metrics such as a lower recall rate and higher accuracy, specificity, and sensitivity. An AI framework using a CNN has been employed for BC screening with DM. 34 AI-enabled screening outperformed radiologist screening, with an accuracy of 72.7%, and combining AI and radiologist decisions further improved detection accuracy to 83.6% with reduced false-positive rates.

Radiomics and Deep Learning-Based CAD Approaches for Breast Cancer Diagnosis

Radiomics is the process of extracting quantitative features from medical images for improved CAD. 35 These features reflect the underlying physiological characteristics captured in the radiographic image, which makes them useful in cancer diagnosis and prognosis. Radiomics can aid in efficiently classifying the type of cancer by using phenotype information. DL-based radiomics for the early detection of BC has also been reviewed. 36 Conventional radiomics uses features extracted from image characteristics such as texture, wavelet, statistical, geometric, and shape features, followed by feature selection and classification with ML classifiers. In DL radiomics, the feature representation is learned directly from the images using generative and discriminative DL models.

In addition to BC diagnosis, radiomics is used to predict Axillary Lymph Node (ALN) status, molecular subtypes, response to chemotherapy, and survival outcomes. The use of radiomics with MRI and mammography for BC diagnosis has resulted in improved accuracy, sensitivity, and specificity. Accurate prediction of ALN status is an important step in BC prognosis and treatment, and radiomics-based approaches have yielded better results in ALN prediction. Radiomic features from breast MRI have proved effective for classifying tumors into 4 molecular subtypes based on hormone receptor status. Radiomic characteristics after chemotherapy correlate directly with therapy response and have improved the prediction of pathological complete response (pCR). An association between survival outcome and the entropy/uniformity characteristics of breast images has also been reported in radiomics-based studies. 37

The workflow used in radiomics-based BC diagnosis is outlined in Figure 6. Image acquisition is followed by preprocessing and feature extraction. The radiomic features used include shape features, first-order histogram-based features, second-order texture features, and higher-order transform-based features. 38 Fusing deep features with radiomic features can improve the efficiency of BC detection and classification.
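As an illustration of first-order histogram-based radiomic features, including the entropy and uniformity mentioned earlier, a minimal NumPy sketch follows. Binning choices and moment conventions vary between radiomics toolkits (for example, fixed bin count versus fixed bin width, and excess versus non-excess kurtosis), so the 32-bin histogram and non-excess kurtosis used here are assumptions.

```python
import numpy as np


def first_order_features(roi: np.ndarray, n_bins: int = 32) -> dict:
    """A few first-order (histogram-based) radiomic features of an ROI.

    Skewness and kurtosis are the standardized third and fourth moments
    (kurtosis is not excess-corrected). Entropy (in bits) and uniformity are
    computed from an n_bins-bin intensity histogram.
    """
    x = roi.astype(float).ravel()
    mean, std = x.mean(), x.std()
    skewness = np.mean((x - mean) ** 3) / std ** 3 if std > 0 else 0.0
    kurtosis = np.mean((x - mean) ** 4) / std ** 4 if std > 0 else 0.0
    counts, _ = np.histogram(x, bins=n_bins)
    p = counts[counts > 0] / counts.sum()   # probabilities of the non-empty bins
    entropy = float(-np.sum(p * np.log2(p)))
    uniformity = float(np.sum(p ** 2))
    return {"mean": mean, "std": std, "skewness": skewness,
            "kurtosis": kurtosis, "entropy": entropy, "uniformity": uniformity}


# Two hypothetical ROIs: a homogeneous patch and a two-level checkerboard
flat = np.full((8, 8), 100.0)
checker = np.indices((8, 8)).sum(axis=0) % 2 * 1.0
print(first_order_features(flat))     # entropy 0, uniformity 1
print(first_order_features(checker))  # entropy 1 bit, uniformity 0.5
```

Entropy and uniformity move in opposite directions: a homogeneous region concentrates all pixels in one bin (maximum uniformity, zero entropy), whereas heterogeneous tissue spreads them across bins.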

Radiomics workflow in breast cancer diagnosis.

Comparative Study From the Review

A comparative study is presented in Tables 1 and 2 based on the papers reviewed in this work. Table 1 compares the performance metrics derived from different literature studies. Table 2 compares the dataset and techniques used in the literature for enhancing the diagnosis of BC.

Comparison of Metrics Used in the Literature.

Abbreviations: AUROC, area under the receiver operating characteristic curve; BCDR, Breast Cancer Digital Repository; CNN, Convolutional Neural Network; DCE-MRI, dynamic contrast-enhanced magnetic resonance images; DDSM, Digital Database for Screening Mammography; FFDM, Full-field digital mammography; MCC, Matthews Correlation Coefficient; MIAS, Mammographic Image Analysis Society; US, ultrasound.

Comparison of Database and Techniques Used in Literature.

Abbreviations: AI, artificial intelligence; BCDR, Breast Cancer Digital Repository; CAD, computer-aided diagnosis; CNN, Convolutional Neural Network; DCE-MRI, dynamic contrast-enhanced magnetic resonance images; DDSM, Digital Database for Screening Mammography; DNN, Deep Neural Network; FFDM, Full-field digital mammography; FrCNN, Full Resolution CNN; GAN, Generative Adversarial Networks; GMM, Gaussian Mixture Model; KNN, K-Nearest Neighbor; LASSO, Least Absolute Shrinkage and Selection Operator; MCC, Matthews Correlation Coefficient; m-DBN, multi-scale deep belief nets; MIAS, Mammographic Image Analysis Society; ML, machine learning; SVM, Support Vector Machine; US, ultrasound; YOLO, You-Only-Look-Once.

Clinical Potential, Challenges, and Impact of AI-Assisted Breast Cancer Diagnosis

Studies in the literature suggest that AI has huge clinical potential in BC diagnosis. AI is widely used in BC screening, diagnosis, molecular subtype classification, histopathology classification, and prediction of chemotherapy response and lymph node metastasis. 39

AI in BC diagnosis can provide a second opinion that helps radiologists make correct decisions where a false diagnosis must be avoided. Integrating AI can help differentiate between suspicious and more suspicious breast lesions. Identifying the right features for effective classification is a challenge in ML-based diagnosis; DL avoids explicit feature selection but requires a massive amount of labeled data for accurate diagnosis. 40

Low-quality breast images and subtle or complex variations in the disease mean that both radiologist expertise and CAD knowledge are required for precise and quick diagnosis. Advancements in AI have improved the ability of CAD to accelerate diagnosis and treatment. AI-assisted diagnosis offers the following advantages: (1) it is minimally invasive and reduces the number of biopsies; (2) molecular features can be easily differentiated to classify subtypes; (3) the heterogeneity of breast lesions can be easily identified; and (4) tumor progression or response to treatment can be analyzed. AI-based diagnosis can serve as an assistive tool that supports radiologists in decision making, but it cannot replace independent imaging and clinical diagnosis. The limitations of AI-based diagnosis are (1) a lack of generalization in AI methodologies, which hinders reproducible results; (2) the need for algorithms with low false-positive rates and high specificity that can handle image data from different modalities and patient-specific variations; and (3) the need to validate AI-based diagnosis in real-world settings through clinical trials with large sample sizes before it is adopted in clinical practice. 41

DL systems based on breast US images have improved diagnostic accuracy, shortened interpretation time, and reduced the number of call-backs and biopsies, and AI CAD performs better than less experienced radiologists. However, the potential impact of AI can be measured only when more multicentric and prospective studies are performed to analyze the feasibility of incorporating AI into radiologists' clinical diagnosis. 42

AI-based approaches in BC diagnosis have reduced misidentification and diagnostic errors by radiologists. AI provides objective analysis by considering texture, internal structural details, and discriminative, unique features for effective classification and diagnosis. Further technological advances can improve the current state of AI in BC diagnosis, yielding better accuracy and precision and more appropriate treatment and management options for breast ailments. AI-based diagnosis is limited by a shortage of datasets, the need for high-quality datasets, and the need for appropriate segmentation techniques to delineate the desired ROI. An AI system trained on a single task cannot be used for multiple tasks, which limits its usage in real-time scenarios. 43

Inference From the Review

The results of ML networks in various applications depend mainly on the dataset used for training. DL architectures use deep convolutional networks that can extract a highly discriminative feature set at multiple levels of abstraction; hence, they provide better results than simple ML architectures. However, training a deep network from scratch is not easy.

The following requirements make the training process tedious, complex, and slow:

A huge labeled training dataset is needed. In biomedical disease detection, large datasets are difficult to collect because (1) annotation by experts is expensive and (2) both diseased and normal data are scarce.

Extensive memory and computational resources are required to speed up training and reduce training time.

The number of layers and the hyperparameters must be fine-tuned to overcome overfitting and convergence problems in CNN training.

Therefore, training a DL model from scratch demands considerable attention, expertise, experience, and patience.

The literature proposes the following solutions to overcome these limitations:

TL with a pretrained CNN and proper fine-tuning is more robust than training from scratch on a small dataset. Depending on the amount of data available, layer-wise fine-tuning may also be used to obtain the best results.
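
Layer-wise fine-tuning can be sketched as a rule that decides which backbone layers stay frozen and which are updated, given the size of the labeled dataset. The function name `trainable_layers`, the layer names, and the 1,000/10,000 thresholds below are hypothetical placeholders chosen for illustration; in practice the split is tuned per task.

```python
def trainable_layers(layer_names, n_train, small=1_000, large=10_000):
    """Hypothetical rule: pick which pretrained layers to fine-tune from the labeled-set size.

    layer_names is ordered from input (generic edges, textures) to output
    (more task-specific features). Thresholds are illustrative, not prescriptive.
    """
    n = len(layer_names)
    if n_train < small:
        k = 0            # feature extraction: keep the whole backbone frozen
    elif n_train < large:
        k = n // 2       # fine-tune only the top half of the backbone
    else:
        k = n            # enough data to fine-tune every layer
    # The newly added classifier head is always trained.
    return layer_names[n - k:] + ["new_head"]

backbone = ["conv1", "conv2", "conv3", "conv4"]      # hypothetical layer names, input -> output
print(trainable_layers(backbone, n_train=300))       # ['new_head']
print(trainable_layers(backbone, n_train=5_000))     # ['conv3', 'conv4', 'new_head']
```

In a framework such as PyTorch, the layers not returned here would typically have `requires_grad` set to `False` on their parameters, so only the returned layers receive gradient updates.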

When CNN models pretrained on natural images are applied to medical images, good results are hard to obtain, because natural images differ markedly from medical images in texture and contrast. This could be alleviated by fine-tuning the CNN through a cross-modal approach, in which the CNN is pretrained on another modality of the same imaging domain. For example, a network pretrained on X-ray mammograms could be fine-tuned to classify breast tumors in MRI.

Because breast X-ray is the most commonly used screening method, large datasets are available. Breast MRI, by contrast, is time-consuming and costly, so it is preferred only for high-risk patients; in addition, interpreting and annotating the ground truth of an MRI is very tedious. Hence, large, curated breast MRI datasets are rarely available for research, and cross-modal TL between breast X-ray and breast MRI can yield better results. It is particularly useful for improving classification performance with the small training datasets that are available. Researchers could propose and develop new cross-modal fine-tuning DL approaches for various breast imaging modalities to improve BC diagnosis.
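
The two-stage cross-modal idea (pretrain on a plentiful modality, then fine-tune on a scarce one) can be illustrated with a toy NumPy network. This is a sketch under strong simplifying assumptions: random vectors stand in for mammogram and MRI images, both modalities are assumed to share the same input size, the model has one hidden layer rather than a CNN, and all names and hyperparameters are hypothetical.

```python
import numpy as np

def forward(params, x):
    """Toy network: one tanh hidden layer ("backbone") + logistic output ("head")."""
    h = np.tanh(x @ params["w1"])
    p = 1.0 / (1.0 + np.exp(-(h @ params["w2"])))
    return h, p

def bce(params, x, y, eps=1e-12):
    """Binary cross-entropy of the network's predictions."""
    _, p = forward(params, x)
    return -np.mean(y * np.log(p + eps) + (1 - y) * np.log(1 - p + eps))

def train(params, x, y, lr, epochs, trainable=("w1", "w2")):
    """Full-batch gradient descent; only parameter groups in `trainable` are updated."""
    n = len(y)
    for _ in range(epochs):
        h, p = forward(params, x)
        err = (p - y) / n                                  # dLoss/dlogit per sample
        grads = {
            "w2": h.T @ err,
            "w1": x.T @ (np.outer(err, params["w2"]) * (1.0 - h ** 2)),
        }
        for k in trainable:
            params[k] = params[k] - lr * grads[k]
    return params

rng = np.random.default_rng(0)
d = 20
# Large "source" set, standing in for breast X-ray mammograms.
x_src = rng.normal(size=(2000, d))
y_src = (x_src[:, :3].sum(axis=1) > 0).astype(float)
# Small "target" set, standing in for breast MRI: a related but shifted task.
x_tgt = rng.normal(size=(60, d))
y_tgt = (x_tgt[:, :3].sum(axis=1) + 0.5 * x_tgt[:, 3] > 0).astype(float)

params = {"w1": rng.normal(scale=0.1, size=(d, 8)), "w2": np.zeros(8)}
params = train(params, x_src, y_src, lr=0.5, epochs=300)   # stage 1: pretrain on source
w1_pretrained = params["w1"].copy()

loss_before = bce(params, x_tgt, y_tgt)
# Stage 2: fine-tune on the small target set, keeping the pretrained hidden layer frozen.
params = train(params, x_tgt, y_tgt, lr=0.1, epochs=100, trainable=("w2",))
loss_after = bce(params, x_tgt, y_tgt)
```

Stage 2 reuses the hidden layer learned on the source modality and retrains only the output weights on the 60 target samples; with more target data, the hidden layer could also be unfrozen, typically with a smaller learning rate.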

Imaging techniques such as X-ray, echography, elastography, and MRI rely on different principles and capture breast images in different forms and at different levels of detail, and features extracted from these images reflect those differences. If the unique features from images of 2 or more modalities are combined through an appropriate feature fusion technique, the resulting feature set contains the fine details available from all of those modalities. Using this fused feature set to diagnose breast abnormalities should therefore offer better performance than features from single-modality images. Based on this concept, fusing deep features derived from different breast imaging modalities is a promising direction for future research.44,45
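
Feature-level (early) fusion, in its simplest form, standardizes each modality's feature vectors and concatenates them per patient. The sketch below assumes deep features have already been extracted for each modality and that rows are aligned by patient; the random arrays, their sizes, and the function names are illustrative stand-ins, and concatenation is only one of several possible fusion strategies.

```python
import numpy as np

def zscore(feats, eps=1e-8):
    """Standardize each feature column so no modality dominates by scale."""
    return (feats - feats.mean(axis=0)) / (feats.std(axis=0) + eps)

def fuse_features(*modality_feats):
    """Early (feature-level) fusion: standardize each modality, then concatenate.

    Each array is (n_patients, d_m); row i of every array must describe the same patient.
    """
    n = modality_feats[0].shape[0]
    if any(f.shape[0] != n for f in modality_feats):
        raise ValueError("all modalities need one row per patient, in the same order")
    return np.hstack([zscore(f) for f in modality_feats])

rng = np.random.default_rng(0)
mammo = rng.normal(loc=50.0, scale=10.0, size=(5, 128))   # stand-in for mammogram CNN features
us = rng.normal(size=(5, 64))                             # stand-in for ultrasound CNN features
fused = fuse_features(mammo, us)
print(fused.shape)   # (5, 192)
```

Standardizing per modality before concatenation keeps a modality with large raw feature values from dominating the fused vector; the fused array can then be passed to any classifier.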

Conclusion

This study analyzes DL approaches for improving the detection performance of BC. The review focuses on the use of DL techniques such as CNNs, TL, cross-modal learning, and CNN fine-tuning for CAD of BC. A CNN can be used both as a feature extractor and as a classifier, and it offers enhanced performance with proper hyperparameter tuning. TL proves to be an effective solution for biomedical problems such as BC, where only limited data are available. Radiomics in conjunction with DL has also improved CAD performance.

Designing a TL framework using only breast images, such as mammograms, US sonograms, elastography images, histopathological images, and MRI, can help the network learn BC-specific features. A network trained on images collected from different modalities could provide deep features irrespective of modality. Feature transformation approaches could also be applied to the deep features to fine-tune performance. Optimizing the hyperparameters involved in building the CNN and using appropriate feature selection strategies can further refine the system's performance. Feature fusion across multiple imaging modalities, as well as single-view and multiview image fusion, can also boost the diagnostic accuracy of CAD systems.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.