Abstract

Public perceptions of science and scientific institutions have become more negative in recent years, especially among individuals who identify as ideologically conservative in the United States. While there is much work investigating the origins and implications of this decline, we focus instead on understanding the ways in which symbols of scientific expertise, like the university, convey information in a politicized environment. Universities are seen either as trusted scientific experts or as biased propagandists, depending on individuals’ ideological identification. Are individuals more likely to believe research coming out of universities that they perceive to reflect their own ideological biases? This project looks at the effect of the academic source cue – the university label – on individual assessments of the research that these universities produce. Drawing on results from two survey experiments focused on climate change and racial wealth disparity research, we find that while liberals are more likely to believe research that confirms their previously held beliefs, they are also more likely to believe incongruent information when it comes from a university that they believe shares their bias. Conservatives, on the other hand, remain skeptical of academic research regardless of the message or its source. The findings point toward both “blind trust” and “blind skepticism” in academic institutions.

Public perceptions about science, scientific experts, and universities have become more negative in recent years (e.g., Gauchat, 2012; Pew Research Center, 2019, 2021). In this so-called “post-truth” era, the “public’s trust in facts and evidence more generally is eroding” (Farrell et al., 2019, p. 192). This phenomenon has been attributed to, among other things, growing anti-intellectualism, or the “expression of negative affect toward intellect, intellectuals, and the intellectual establishment” (Barker et al., 2022, pp. 40-41; see also Motta, 2017). In the United States, anti-intellectualism aligns, to some extent, with politically conservative ideology (Gauchat, 2012; Hornsey et al., 2018; Barker et al., 2022; but see Enders et al., 2022), casting universities and scientific expertise in a political light. While liberals and Democrats are quick to accept scientific evidence for everything from masking for COVID-19 prevention to anthropogenic causes of climate change, conservatives and Republicans remain skeptical, sometimes even after the scientific community has reached agreement about a particular phenomenon.

Scholarship on anti-intellectualism and scientific skepticism primarily emphasizes their origins and explanations (e.g., Hornsey et al., 2018; Lunz Trujillo, 2022) as well as their effects on mass behavior, such as increased rejection of scientific consensus, epistemic hubris, and information searches (Motta, 2017; Barker et al., 2022; Merkley & Loewen, 2021; Hornsey et al., 2018, etc.). But by focusing on the origins and implications of anti-intellectualism and scientific skepticism more broadly, we overlook the ways in which symbols of scientific exploration and expertise, like the university, convey information in a politicized environment. In this paper, we conceptualize academic institutions as source cues potentially conveying ideological information beyond the mapping of research findings onto ideological positions. If this is the case, individuals’ pre-existing judgements of a university’s ideological position could shape their assessments of research produced by that university. In other words, while theories of motivated reasoning suggest that individuals will be more inclined to see research findings that align with their pre-existing beliefs as more credible, we argue that they will also see that research as more credible if they perceive the university conducting the research as aligned with their views.

Our focus on understanding the university as a symbol and source of intellectualism also leads to potential mitigation strategies. Can scientific information from a source that is perceived to share your ideological bias change your mind? This paper looks at the effect of the academic source cue – the university label – on individual assessments of the research that these universities produce. We use two survey experiments to show that individuals’ understanding and interpretation of academic research is affected by both motivated reasoning and source cues.

What is more, liberals are particularly affected by the university source cue. Across both experiments, liberals are more likely to believe research if it comes from a university that is perceived as more liberal, even if the research goes against scientific consensus. The same is not true for conservatives, who find university research to be similarly — or less — persuasive or credible regardless of its provenance. Our findings suggest that while conservative distrust of the academy writ large motivates their assessment of academic research, liberals’ easy acceptance of the same research also explains the gap in assessments of both source and research credibility. Both conservative skepticism and liberal acceptance of academic research have implications not only for us as a community of scholars, but also for evidence-based public policy and scientific inquiry more generally.

The Politicization of Academic Research

In today’s polarized political climate, liberals and conservatives use different sets of “facts” and evidence to support their political positions (e.g., Stein et al., 2021). Liberals and conservatives also vary in the extent to which they identify as intellectuals (intellectualism) and in their feelings about intellectuals and the intellectual establishment (anti-intellectualism) (Barker et al., 2022). This politicization of science is particularly concerning because “the credibility of scientific knowledge is tied to cultural perceptions about its political neutrality and objectivity” (Gauchat, 2012, p. 168). If scientists and the universities that produce this science are no longer viewed as objective or unbiased, their credibility is weakened (Eagly & Chaiken, 1993), ultimately leading to greater distrust of scientific research.

This decline in public trust in science is not uniform across the population but is concentrated among self-identified conservatives (Gauchat, 2012; Mooney, 2006). Conservatives are more distrustful of the scientific community (McCright et al., 2014; Nisbet et al., 2015) and more likely to reject evidence for climate change (Kahan et al., 2012; Cook & Lewandowsky, 2016; Motta, 2017; Hornsey et al., 2018). Anti-intellectualism, or negative affect toward intellectuals or the intellectual elite, is also more prevalent among those on the right (Barker et al., 2022). Individuals with hierarchical and individualistic values – values corresponding to conservatism – are more likely to dismiss scientific evidence when that evidence would lead to restrictions on commerce and industry than are individuals with more communitarian and egalitarian values (Kahan, 2010). And conservatives are more likely than liberals to evaluate science rejectors as credible (Stein et al., 2021).

While, overall, scholarship suggests that distrust in science and scientific institutions is concentrated among conservatives, liberals are not immune. Ditto et al. (2019), for example, find that liberals and conservatives are equally biased, on average, in their assessments of scientific research. Liberals also express lower trust in science when the scientific conclusions are at odds with their worldview (McCright et al., 2014). Furthermore, more recent research suggests that the relationship between skepticism of some scientific evidence and conservative ideology may be constrained to the United States (Hornsey et al., 2018). And the relationship between conspiratorial thinking and conservative ideology may depend on the conspiracy theory itself as well as on when and where surveys are conducted (Enders et al., 2022).

While academic research has documented declining trust in science, skepticism of academic institutions is also visible in the public sphere. Historically, as Barker et al. (2022) remind us, “elite universities have long invited scorn from mainstream America for their relative receptiveness towards Marxist thought and other culturally liberal ideas” (p. 42). A quick canvass of headlines from conservative media sources points to “Radical Liberal Bias in U.S. Colleges” (Williams, 2018), laments that some colleges have “No G.O.P. Professors” (Fox News, 2018), and raises alarms about “Liberal Indoctrination on Campus” (Polumbo, 2018). According to a 2018 Pew Research Center report (Brown, 2018), 73% of Republicans say that higher education is going in the wrong direction, compared to 52% of Democrats. Interestingly, Republicans attribute this trend to professors “bringing their political and social views into the classroom” to a much greater extent than do Democrats: 79% versus 17%, respectively.

Both scientific and cultural explorations demonstrate that scientific institutions are increasingly viewed through a partisan and ideological lens. While it has been repeatedly demonstrated that most citizens do not organize their political thoughts ideologically (Converse, 1964), they do use knowledge of ideologically affiliated groups or entities to construct an understanding of liberal or conservative identity (Kinder & Kalmoe, 2017; Mason, 2018a, 2018b). In short, “ideology is everywhere” (Jost, 2006, p. 652). If universities in general are perceived as a liberal “group,” then information from these sources, including academic research, will be interpreted through that lens. As liberal symbols, their authority will be accepted by most liberals and challenged by conservatives. It follows that individuals who identify as conservative should discredit academic research to a greater extent on average, relative to liberals. However, these relationships may be reversed if a university serves as a conservative source cue, leading liberals to be more skeptical of the institution and its research while conservatives find it more credible.

The Academic Source Cue

When making decisions, individuals use heuristics to reduce decision-making costs (Lau & Redlawsk, 2001). Source cues are regularly employed as a heuristic in political evaluations and constitute specific attributes of communicators such as their credibility or likeability (Chaiken, 1980; Chong, 2013). Heuristic processing of source cues “provide(s) a critical mechanism by which the citizen can form reliable judgements while simultaneously conserving valuable cognitive resources” (Mondak, 1993a, pp. 168–9). Common source cues in political science include interest groups (Callaghan & Schnell, 2009; Weber et al., 2012), political parties (Goren et al., 2009), elected officials and political candidates (Mondak 1993a, 1993b; Weber et al., 2012), ideologies (Hartman & Weber, 2009), the U.S. military (Kam, 2020), first ladies (Aronow et al., 2018; Kam, 2020), and news organizations, think tanks, scientific experts, and non-partisan entities (Callaghan & Schnell, 2009; Jerit, 2009; Kam, 2020). As an example, knowledge of the insurance industry’s position on complicated insurance reform propositions led less informed voters in California in 1988 to make voting decisions that looked similar to those of the most-informed voters (Lupia, 1994).

In general, prior research has focused on how individuals interpret information that comes from sources that are explicitly political (but see Jerit, 2009; Kam, 2020). Interest groups, candidates, parties, and elected officials all have a stake in a particular political outcome; therefore, it should come as no surprise that individuals interpret cues from these organizations and individuals through a political lens. While academic institutions, and scientific research in general, are not inherently partisan, it is reasonable to expect that they also convey meaningful political and even ideological signals, especially in today’s polarized political climate. If individuals hold different ideological appraisals of specific universities, then those evaluations can impact the assessment of the research associated with them. Specifically, colleges and universities may act as source cues, providing meaningful information that individuals utilize in making assessments of academic research.

Academic institutions attempt to convey both expertise and trustworthiness. Universities employ leading experts in many fields and utilize the scientific method and peer review process. Universities are also seen as trustworthy sources; individuals not only trust information from these sources, but they also trust them to educate and care for the next generation. Credible sources are typically rated as more persuasive and convincing (Eagly & Chaiken, 1993; Hovland & Weiss, 1951; Weber et al., 2012). Importantly, the perceived credibility of universities, over and above that of other political actors, largely stems from their objectivity and non-partisan status (Jerit, 2009).

While researchers assume that individuals accept the findings and conclusions of academic research on the basis of its scientific merits, the university cue could also evoke differing levels of identification among individuals. For example, an alumnus of a university would more strongly identify with that university than a non-alumnus (Hartman & Weber, 2009). What’s more, the name of a university may convey an ideological identification, triggering directional motivations and necessitating that individuals adopt a position on university research in order to maintain their ideological identification as politically liberal or conservative (Kunda, 1990; Taber & Lodge, 2006). Information from an ideologically congruent source should be deemed more persuasive and convincing than cues from an ideologically incongruent, or threatening, source (Callaghan & Schnell, 2009; Feygina et al., 2010; Kahan, 2010). Like credibility, ideological identification with the source could also work to increase the perceived expertise and trustworthiness of the source. The more individuals see themselves as ideologically aligned with an academic source, the more likely they may be to accept the credibility of the research the institution produces.

Our expectations regarding individual and source cue ideology are three-fold. First, research findings will shape individuals’ assessments of overall credibility. When individuals read results of academic research that confirm (disconfirm) their previously established beliefs, they’ll be more likely to trust (distrust) the source as well as (not) believe the results of the research. Finding support for this hypothesis would be in line with previous work in this area (e.g., Kahan et al., 2012; Kuru et al., 2017; Lewandowsky & Oberauer, 2016; Stein et al., 2021).

Hypothesis 1: Individuals perceive academic research to be more credible when the research findings are congruent with their ideological beliefs.

Of course, it could also be the case that universities and their association with the scientific method act as a cue for accuracy goals, encouraging people to set their ideological beliefs aside. In that case, they may be no more likely to agree with the research findings but would still see them as credible. This would lead us to reject Hypothesis 1.

Second, we expect that the university may convey information about the credibility of the source by communicating either a politically liberal or a conservative signal. Regardless of whether the outcome of the research supports a particular ideological viewpoint, participants will assess it based on the perceived ideological leanings of the university that sponsored it. And we expect that these results will vary by respondent ideology. Individuals who identify as politically liberal (politically conservative) will be more likely to believe disconfirming research results when they come from a traditionally liberal (conservative) university.

Hypothesis 2: Individuals perceive academic research to be more credible when the university sponsoring the research is perceived to align with their ideological beliefs.

Again, it is important to acknowledge that there are reasons the null hypothesis might hold here as well. For example, conservatives might know that the scientific consensus does not support their position but hold conservative beliefs anyway. If this is the case, they would not be persuaded by the ideological signal of the university, nor would they find the university itself any more or less credible. It could also depend on the issue area and/or the current political environment, limitations we return to in the article’s conclusion.

Finally, research demonstrates that credibility is a combination of an individual’s assessment of a target’s expertise and trustworthiness (e.g., Chong, 2013). It could be the case that the two components of credibility – expertise and trustworthiness – are assessed differently across the ideological spectrum. Conservatives and liberals may be equally likely to view academic institutions as experts but have differing levels of trust in those institutions. While previous research has demonstrated ideological variation in trust in universities (Parker, 2019), little has been done to assess that trust relative to evaluations of expertise. In other words, if we disaggregate source credibility into its component parts, we should see a difference in liberals’ and conservatives’ assessments of the university’s trustworthiness, but not in their perceptions of the university’s qualifications to conduct the research.

Conservatives are more likely than liberals to distrust universities.

Conservatives and liberals are equally likely to see universities as qualified to conduct research.

Experiment #1

Participants and Procedure

Participants in Experiment #1 were recruited through Amazon’s Mechanical Turk (MTurk) in October 2018. MTurk workers self-selected into the survey experiment and were paid $1.25 for their participation; 835 respondents completed the full questionnaire. Like most samples collected on Mechanical Turk, participants were more likely to be white, college-educated, and liberal than the national population (Berinsky et al., 2012). The demographic make-up of participants, along with comparison data from the 2010 Census, is included in Appendix B.

Participants responded to demographic questions and were then randomized into one of six treatments. Three of the treatments took the scientific-consensus position on climate change, attributing climate change to human activity. Of those three treatments, one attributed the research to a liberal university (the University of California at Berkeley), one attributed it to a conservative university (Texas A&M University), and the third contained no university label.

The other three treatments took the “climate denier” position on climate change, questioning the extent to which climate change resulted from human activity. These three treatments also varied in their attribution to the University of California at Berkeley, Texas A&M University, or no university. Approximately 120 participants received each condition (see Appendix C for all treatments and Appendix D for the survey instrument). After reading the climate change information, participants responded to several questions about the research and the research sponsor.

In our hypotheses, we suggest that source cue and partisan motivation effects occur because of attitudinal congruence between the individual and the information they are receiving. In our analyses, we consider conservative “attitudinal congruence” to be a match between self-identified conservatives and exposure to the “climate denier” research, while liberal congruence is a match between self-identified liberals and exposure to the “scientific consensus” treatment (Hornsey et al., 2018).
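The congruence coding described above can be sketched as a small function. This is a minimal illustration only: the cutpoints used to collapse the 7-point ideology scale (1–3 liberal, 4 moderate, 5–7 conservative) and the labels `consensus` and `denier` are assumptions made for the example, not taken from the study, whose actual ideological breakdown is reported in Appendix B.

```python
# Hypothetical sketch of the attitudinal-congruence coding.
# ASSUMPTION: the cutpoints for collapsing the 7-point ANES ideology scale
# (1-3 liberal, 4 moderate, 5-7 conservative) are illustrative only.

VALID_CONTENT = {"consensus", "denier"}  # hypothetical labels for the two research positions


def collapse_ideology(score: int) -> str:
    """Map a 1-7 ideology score (1 = extremely liberal) to three categories."""
    if not 1 <= score <= 7:
        raise ValueError(f"ideology score out of range: {score}")
    if score <= 3:
        return "liberal"
    if score == 4:
        return "moderate"
    return "conservative"


def is_congruent(score: int, content: str) -> bool:
    """Return True when the research position matches the participant's ideology.

    Liberals are congruent with "consensus" (human-caused climate change);
    conservatives are congruent with "denier" (natural causes). Moderates are
    never coded as congruent in this sketch.
    """
    if content not in VALID_CONTENT:
        raise ValueError(f"unknown research content: {content}")
    ideology = collapse_ideology(score)
    return (ideology == "liberal" and content == "consensus") or (
        ideology == "conservative" and content == "denier"
    )


if __name__ == "__main__":
    print(is_congruent(2, "consensus"))  # True: a liberal reading consensus research
    print(is_congruent(6, "consensus"))  # False: a conservative reading consensus research
```

Coding moderates as never congruent mirrors the text, which defines congruence only for self-identified liberals and conservatives.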

Of course, one can be a conservative and believe in human contributions to global warming while disagreeing with liberals about the way to solve climate issues. To capture this distinction, we asked participants if they believed global warming was happening. Only 7% of the sample responded in the negative. Of those who stated they did not believe climate change was happening, the majority (79.6%) identified as conservative. While conservatives make up the majority of climate change deniers, 80% of conservatives in our sample have policy beliefs that align with the scientific consensus that climate change is exacerbated by human decisions. This sets up a hard test for ideological motivated reasoning, as what is perceived as the ideologically “correct” response differs from some conservatives’ stated policy attitudes.

To ensure participants read the paragraph of information on climate change and paid attention to the source of the research, we did two things. First, we held participants on the treatment page for 15 seconds. Second, we included a manipulation check at the end of the study that asked participants to select the type of organization that sponsored the research in the article they read (a college or university, a non-partisan organization, no organization was specified, or other). For the manipulation to be effective, a greater proportion of those in the treatments should have selected “college or university,” and a greater proportion of those in the controls should have selected “no organization specified.” Two tests of difference in proportions demonstrate that this is the case. Sixty-nine percent of treatment respondents reported that the sponsoring organization from their article was a college or university, while only 15% of those in the control did the same (one-tailed p < .01). In contrast, 45% of those in the controls did not recall any organization sponsoring the research, as opposed to 20% of those in the treatment (one-tailed p = .0001).
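The manipulation check compares two proportions with a one-tailed test. A minimal sketch of a pooled two-proportion z-test under the normal approximation follows. The exact test the authors ran is not specified here, and because only percentages are reported, the counts in the usage line are hypothetical and will not reproduce the reported p-values.

```python
import math


def two_proportion_ztest(x1, n1, x2, n2):
    """One-tailed pooled z-test of H1: p1 > p2.

    x1/n1 and x2/n2 are successes/trials in each group.
    Returns (z, one-tailed p-value) using the normal approximation.
    """
    p1, p2 = x1 / n1, x2 / n2
    pooled = (x1 + x2) / (n1 + n2)  # common proportion under H0: p1 == p2
    se = math.sqrt(pooled * (1 - pooled) * (1 / n1 + 1 / n2))
    if se == 0:
        raise ValueError("standard error is zero; proportions are degenerate")
    z = (p1 - p2) / se
    # Upper-tail probability of a standard normal: P(Z >= z) = erfc(z / sqrt(2)) / 2
    p_one_tailed = 0.5 * math.erfc(z / math.sqrt(2))
    return z, p_one_tailed


if __name__ == "__main__":
    # HYPOTHETICAL counts chosen to roughly match 69% vs. 15%;
    # group sizes for the manipulation check are not reported in this section.
    z, p = two_proportion_ztest(384, 556, 42, 279)
    print(f"z = {z:.2f}, one-tailed p = {p:.2g}")
```

The one-tailed form matches the directional expectation that treatment respondents should name a university more often than control respondents.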

We looked at the effects of the climate change vignettes on three types of evaluation: subjective persuasion, research credibility, and source credibility. Rather than measure objective persuasion through a pre- and post-treatment assessment of policy attitudes, which are unlikely to change based on a single brief intervention, we adapted a measure of subjective persuasion used by Weber et al. (2012) in their study of campaign ads’ persuasive appeal. Weber et al. distinguish between the measurement of “objective” and “subjective” persuasion because “there may be differences between one’s overall evaluation of the advertisement (subjective persuasion) and the evaluations of the ad-advocated candidate (objective persuasion)” (2012, p. 571). We believe a similar distinction is important here: participants may evaluate the university research differently than they do the outcome of that research (attribution of climate change). This measure assesses the extent to which participants found the research persuasive and convincing, then combines the two assessments into an additive scale where zero indicates that the research was not at all persuasive and one indicates that it was very persuasive (alpha = .91; M = .50, sd = .35).
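To illustrate how a two-item additive scale can be placed on a zero-to-one range and checked for internal consistency, the sketch below rescales each item, averages the items for each respondent, and computes Cronbach's alpha. The 1–7 response range and the toy responses are assumptions for the example; the items' original response scale is not given in this section.

```python
from statistics import pvariance


def rescale(value, lo, hi):
    """Map a response on a lo..hi scale onto 0..1."""
    return (value - lo) / (hi - lo)


def additive_index(responses, lo, hi):
    """Average each respondent's rescaled items, giving one 0..1 score per row."""
    return [sum(rescale(v, lo, hi) for v in row) / len(row) for row in responses]


def cronbach_alpha(responses):
    """Cronbach's alpha: k/(k-1) * (1 - sum of item variances / variance of totals)."""
    k = len(responses[0])
    if k < 2:
        raise ValueError("alpha needs at least two items")
    items = list(zip(*responses))  # one tuple per item (column)
    item_var = sum(pvariance(col) for col in items)
    total_var = pvariance([sum(row) for row in responses])
    if total_var == 0:
        raise ValueError("total scores have zero variance")
    return k / (k - 1) * (1 - item_var / total_var)


if __name__ == "__main__":
    # HYPOTHETICAL two-item responses ("persuasive", "convincing") on a 1-7 scale.
    toy = [[2, 3], [5, 5], [6, 7], [1, 2], [4, 4]]
    print(additive_index(toy, lo=1, hi=7))
    print(round(cronbach_alpha(toy), 2))
```

Alpha is unaffected by rescaling every item with the same linear transform, so it can be computed on raw responses as shown.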

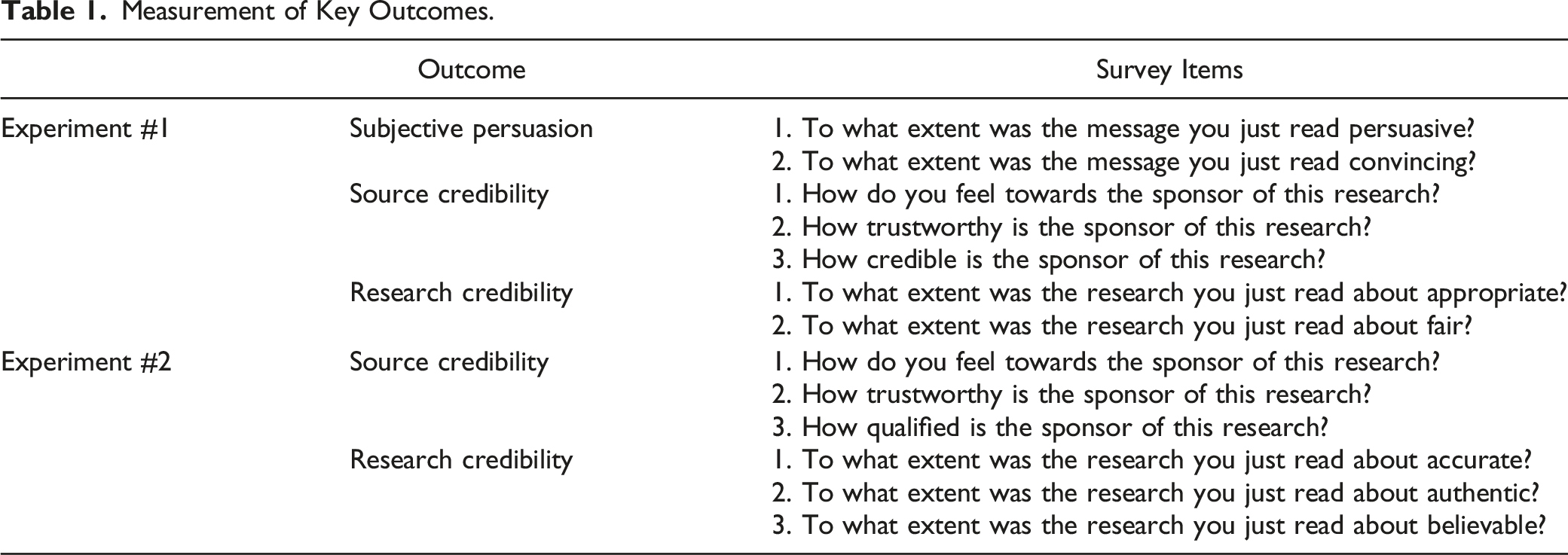

Measurement of Key Outcomes.

To assess participant ideology, we included the standard American National Election Study (ANES) measure coded on a single dimension from 1 (extremely liberal) to 7 (extremely conservative), then collapsed into three categories for ease of analysis (see Appendix B for the ideological breakdown). In each model we also controlled for party identification (using the standard 7-point scale where 1 indicates a strong Democrat and 7 represents a strong Republican), participants’ belief in global warming, and education levels. Each control was measured pre-treatment.

Findings

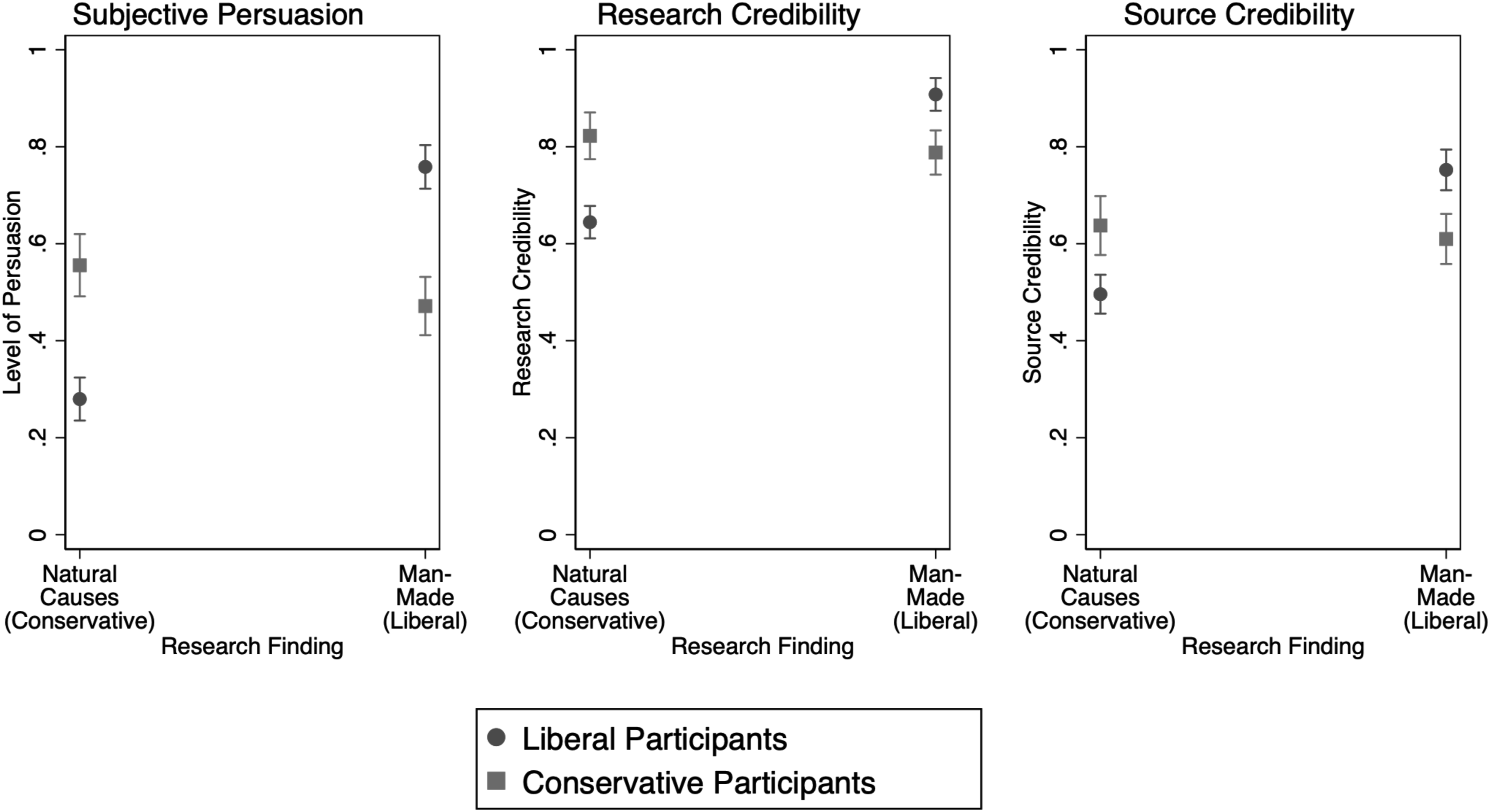

The first hypothesis focuses on expectations of motivated reasoning: liberals would be more likely to see research finding that global warming results from human decisions as more credible than research suggesting global warming is a natural process, while conservatives would have the opposite reaction, regardless of the source of the research. To investigate this hypothesis, we ran a series of OLS regressions of the three indices (subjective persuasion, research credibility, and source credibility) on participant ideology and treatment. To better isolate the effect of the research content, we pooled treatments into two groups, regardless of the university to which the research was attributed: exposure to research about natural causes of global warming, and exposure to research in which global warming is affected by human behavior.

Figure 1 displays the linear predictions from each model (full model specification is in Table F.1). The clearest support for our hypothesis would manifest as a “position swap” in each panel: conservatives would find the natural-causes research more compelling, while liberals would be more persuaded by the human-causes research. Liberal participants behave as we would predict, finding the conclusion that climate change is man-made more persuasive and legitimate than the argument that climate change is a natural phenomenon. Conservatives, however, are statistically and substantively indifferent between the two findings; there is no significant difference in their assessment of the conclusions of the research.

Figure 1. Motivated reasoning effects, Experiment #1. Note. MTurk sample. Each panel represents the linear predictions from an OLS model examining the effects of treatment (holding research content constant) and participant ideology, controlling for partisanship, education, and belief in global warming. The underlying model can be found in Appendix F, Table F.1. While ideological moderates are included in the model, they are not displayed in the figure for ease of interpretation.

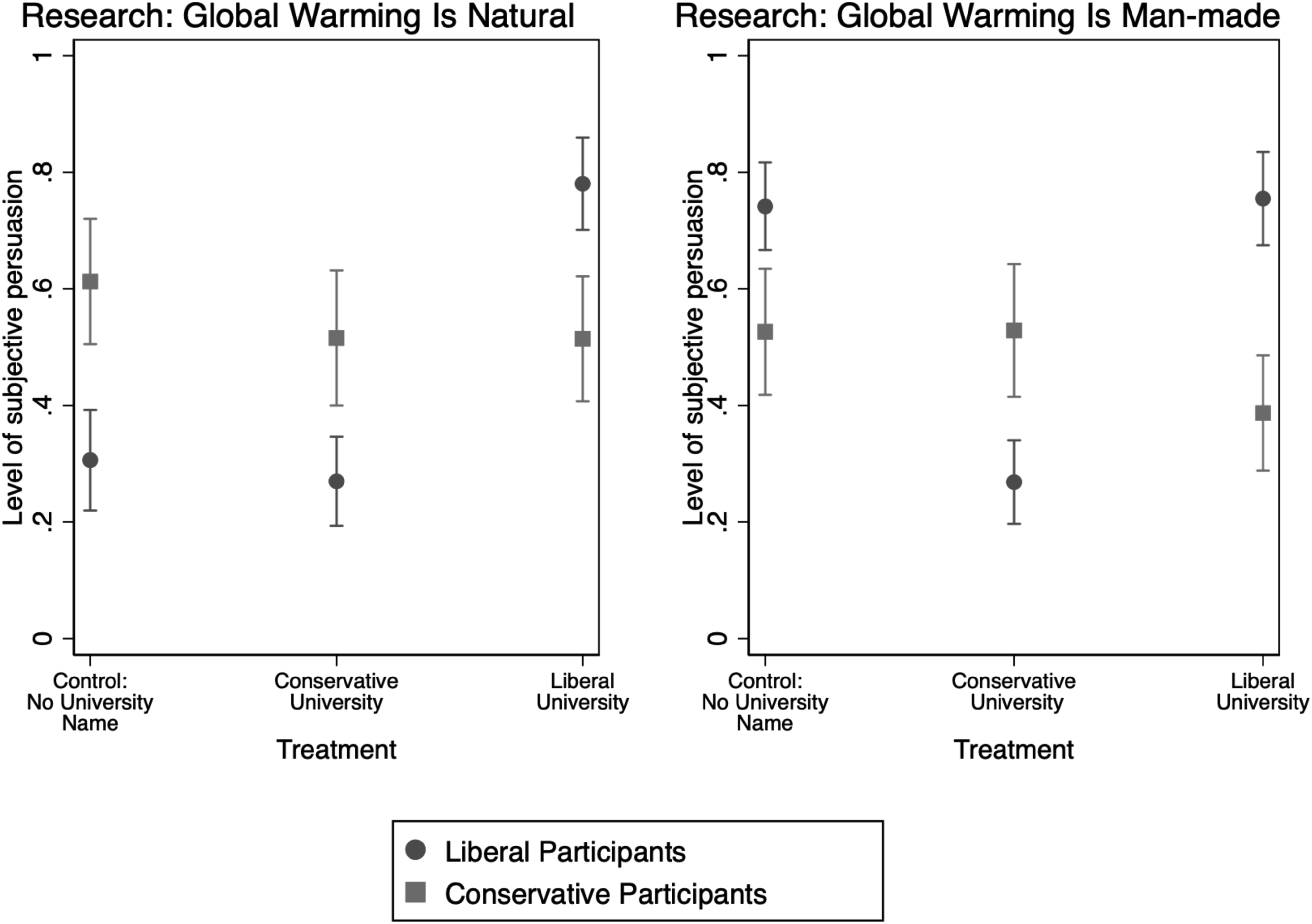

Beyond motivated reasoning, we wanted to know whether the academic source of the message could get individuals to change their minds. If conservatives are skeptical about academic research, can a perceived “conservative” university change their minds? Hypothesis 2, the source cues hypothesis, argued that liberals and conservatives would find research from a university they perceived as similar in ideology more compelling than research from a university they saw as holding political values different from their own. Figure 2 depicts linear predictions from two OLS regressions (Table F.4) of subjective persuasion on participant ideology and treatment in Experiment #1.


If the source of the research had no effect on how persuasive participants found the information, we would see little change in liberals’ and conservatives’ positions across both panels in Figure 2. Instead, we see large swings in subjective persuasion, particularly among liberals.

Figure 2. Persuasive effects of the source of research, Experiment #1. Note. MTurk sample. Plots represent the linear predictions from two OLS models examining the effects of treatment (holding research content constant) and participant ideology, controlling for education, partisanship, and belief in global warming. The control is the condition that discusses the research on climate change but does not attribute it to a university (no university label). The underlying model can be found in Appendix F, Table F.4. While ideological moderates are included in the model, they are not displayed in the figure for ease of interpretation.

Without a university cue, liberals rate the persuasiveness of the research supporting global warming as a natural process at .31 on the zero to one scale; they are not particularly persuaded. Telling them that a conservative university (Texas A&M) conducted the research does not appear to change their level of persuasion: the linear prediction moves to .27, a statistically insignificant change. However, when they see the same information attributed to the University of California at Berkeley, they find it much more persuasive (.78 on the zero to one scale; statistically significant difference at p < .01). The reverse is true for research arguing that climate change is exacerbated by humans. The liberal university cue does not shift perceptions of the persuasiveness of the research relative to the control condition with no university cue; the linear predictions are .75 and .74, respectively. Liberals find the result much less compelling when it comes from Texas A&M (.27), a difference that is statistically significant (p < .01).

While the university source cue appears to have a significant effect on liberals’ perceptions of the research, it does not appear to have the same pull on conservatives. Among conservatives, the university cue produces no statistically significant difference in persuasion when the research focuses on the natural causes of global warming; the linear predictions hover between .50 and .61. The same is true for research framing global warming as man-made. While conservatives’ assessment of the research’s persuasiveness drops when it is associated with a liberal university, that change (−.15) is not statistically significant. These patterns hold across the measures of source and research credibility: the liberal university source cue signals greater research credibility among liberals for research on the natural process of global warming, and the conservative university cue depresses the credibility of research arguing climate change is man-made (Table F.4 and Figure F.1). Thus, we have mixed support for Hypotheses #1 and #2; while liberals follow the expected patterns, we do not see the same effects for conservatives, a pattern we discuss further below.

Experiment #2

While Experiment #1 offers some support for both Hypotheses #1 and #2, it nonetheless suffers from theoretical and methodological concerns. By using real universities, we increase the ecological validity of our experiment, but we sacrifice some equivalency across treatments: Berkeley and A&M differ on more dimensions than ideology alone. Using these universities also does not help us assess what happens when individuals cannot ascribe an ideological perspective to a university. What’s more, there may be something specific about climate change as a political issue that affects our results. To resolve these concerns, and to test Hypothesis #3, we ran a second experiment using a fictional university and a different research topic: explanations for racial wealth disparities. Relatedly, Experiment #2 allows us to more effectively examine the mechanism by which source cues affect credibility.

Participants and Procedure

Our second experiment was conducted using a sample of 1,233 Americans recruited through Prolific in September 2021. To maximize the number of liberal and conservative participants, we excluded moderates from the sample based on Prolific’s initial screening for ideology. Many of the same caveats of online samples discussed above also apply here; in particular, we note a substantial imbalance in the proportion of liberals (86%) and conservatives (13%) who participated in the experiment relative to their presence in the U.S. population. We include the demographic features of this sample in Appendix B and reflect on the impact of the relatively small number of conservative participants and other sample disparities (such as gender) in Appendix E and in the Discussion section below. This experiment was pre-registered with the Open Science Framework (OSF).

The procedure for Experiment #2 closely adhered to that of Experiment #1, although we changed details of the experimental treatments and the outcome variables. We made two changes to the experimental treatments in this study. First, based on responses to a pilot test conducted in May 2021 (see Appendix A), we chose to make racial inequality and the wealth gap the primary focus of the research described in the treatment. The liberal treatment indicated that the research findings supported the argument that the “difference in blacks’ and whites’ financial success is the result of institutional discrimination and policymaking,” while the conservative treatment’s research findings emphasized the role of “individual choices and behaviors” in explaining the racial wealth gap. Second, we also used a fictional university—Central Illinois University—that was described as being “known for its liberal/conservative faculty and student body.” In the control condition, this statement did not appear at all; participants were just told the university name (see Appendix C for all treatments).

We used slightly different measures of source and research credibility as our primary outcome variables in Experiment #2 and dropped the measure of subjective persuasion. 12 Table 1 details the differences between the outcome measures used in the two experiments. While we kept the first two items in the source credibility index used in Experiment #1 (“How do you feel towards the sponsor of this research?” and “How trustworthy is the sponsor of the research?”), we changed the third from “How credible is the sponsor of this research?” to “How qualified is the sponsor of this research?” The additive index built from these three questions continues to hold together well (alpha = .73), and, on average across all treatments, the university sponsor is seen as moderately credible (M = .59, sd = .22). We also asked about the extent to which the research itself is accurate, authentic, and believable. These items are highly interrelated (alpha = .96) and indicate that participants saw the research as somewhat credible (M = .62, sd = .35).
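The index construction and reliability check described above can be sketched in Python. This is a minimal illustration under stated assumptions, not the authors’ code: it assumes each item is rescaled to zero to one using its own (hypothetical) minimum and maximum before averaging, which for items that share a response range is equivalent to rescaling the raw sum, and it computes Cronbach’s alpha from the item and total-score variances. The function names and toy responses are illustrative only.

```python
from statistics import pvariance


def cronbach_alpha(items):
    """Cronbach's alpha for k item columns, each a list with one score per respondent.

    alpha = k / (k - 1) * (1 - sum of item variances / variance of total scores).
    Population variances are used throughout; an n/(n-1) correction would cancel in the ratio.
    """
    k = len(items)
    n = len(items[0])
    totals = [sum(col[i] for col in items) for i in range(n)]  # each respondent's summed score
    item_var = sum(pvariance(col) for col in items)
    return k / (k - 1) * (1 - item_var / pvariance(totals))


def rescaled_index(items, ranges):
    """Average of the items after rescaling each to 0-1 with its (lo, hi) range (hypothetical ranges)."""
    n = len(items[0])
    scaled = [[(x - lo) / (hi - lo) for x in col] for col, (lo, hi) in zip(items, ranges)]
    return [sum(col[i] for col in scaled) / len(scaled) for i in range(n)]


# Toy data: three items answered by five hypothetical respondents on a 1-4 scale.
feel = [1, 2, 3, 4, 3]
trust = [1, 2, 3, 3, 4]
qualified = [2, 2, 3, 4, 3]
items = [feel, trust, qualified]
print(round(cronbach_alpha(items), 2))
print(rescaled_index(items, [(1, 4)] * 3))
```

Averaging rescaled items rather than summing raw scores keeps the index on the same zero-to-one metric used for the reported means even if, as assumed here, the items had different response ranges.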

Findings

Across all participants, results from a one-way ANOVA demonstrate that research supporting the “liberal” argument that the “difference in blacks’ and whites’ financial success is the result of institutional discrimination and policymaking” was seen as more credible than research concluding that the difference could be traced to “individual choices and behaviors” (F (64.3, 83.1) = 930.3, p < .01). The same is true when participants were asked to assess the university’s credibility across the different research findings (F (11.0, 45.1) = 292.35, p < .01).
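A one-way ANOVA compares the variance between treatment-group means with the variance within groups. The sketch below computes the F statistic and its degrees of freedom in plain Python; it assumes each group is a list of credibility scores on the zero-to-one scale, and the toy numbers are invented for illustration. With two groups, as in a comparison of the two research findings, the degrees of freedom are 1 and N − 2, and F equals the square of the pooled two-sample t statistic. A p-value would come from the upper tail of the F distribution, for example via SciPy’s `scipy.stats.f.sf(F, df_between, df_within)`.

```python
from statistics import fmean


def one_way_anova(groups):
    """Return (F, df_between, df_within) for a one-way ANOVA over a list of numeric groups."""
    means = [fmean(g) for g in groups]
    grand = fmean([x for g in groups for x in g])
    n = sum(len(g) for g in groups)
    k = len(groups)
    # Between-group sum of squares: size-weighted squared distance of each group mean from the grand mean.
    ss_between = sum(len(g) * (m - grand) ** 2 for g, m in zip(groups, means))
    # Within-group sum of squares: squared deviations of observations from their own group mean.
    ss_within = sum((x - m) ** 2 for g, m in zip(groups, means) for x in g)
    df_between, df_within = k - 1, n - k
    f_stat = (ss_between / df_between) / (ss_within / df_within)
    return f_stat, df_between, df_within


# Toy credibility scores (0-1) for two hypothetical treatment groups.
liberal_finding = [0.9, 0.8, 1.0, 0.7]
individual_finding = [0.4, 0.3, 0.5, 0.6]
print(one_way_anova([liberal_finding, individual_finding]))
```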

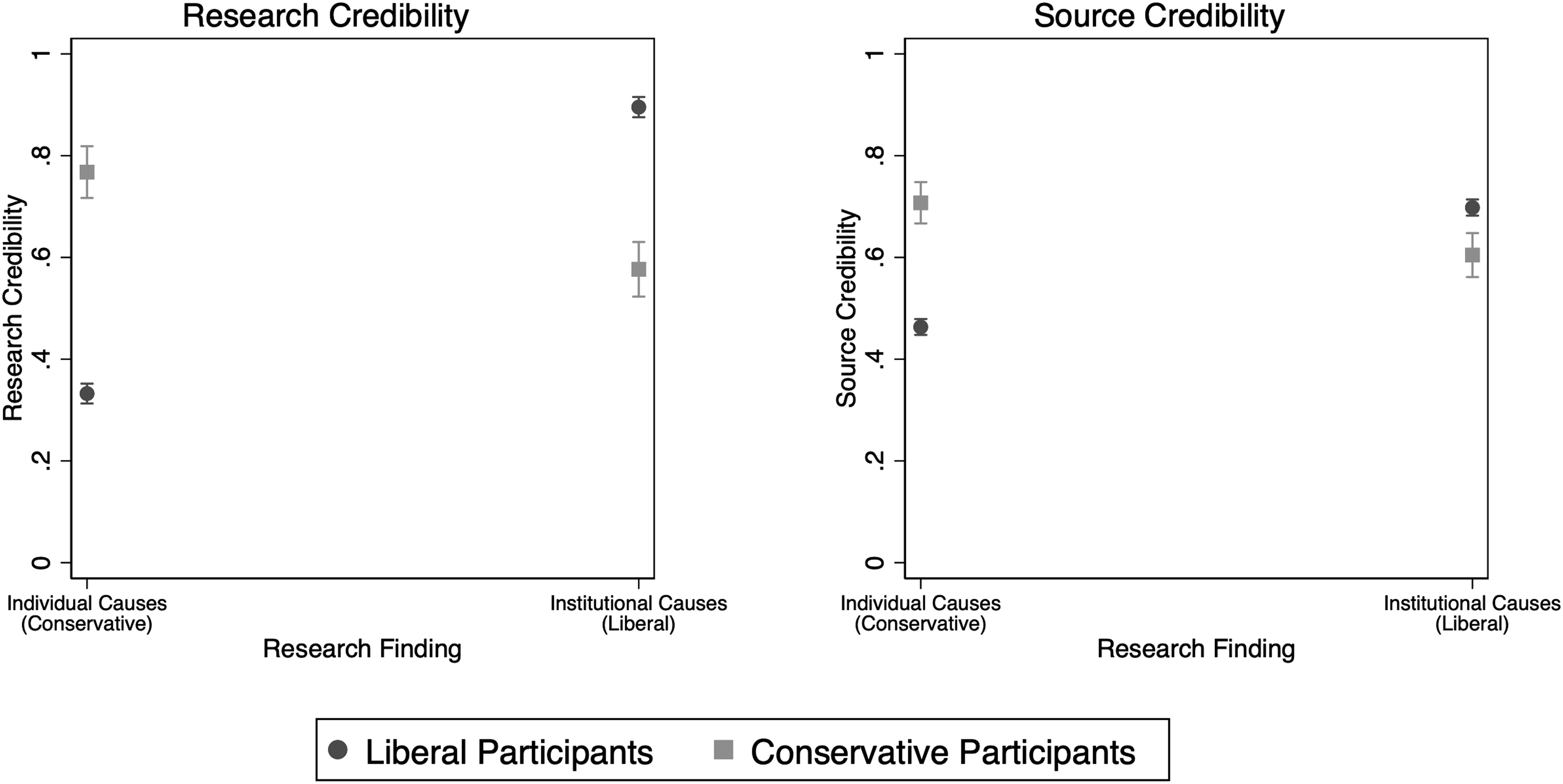

However, when we break assessments of credibility down by ideology, we see differential effects for liberals and conservatives. While both liberals and conservatives find research confirming the impact of institutionalized racism to be credible, there is nonetheless a statistically significant difference in their assessments, as measured in an OLS regression of the treatment, ideological identification, and the interaction between the two on message credibility. The left-hand panel of Figure 3 displays these results graphically. Unlike Experiment #1, we see the expected pattern for both liberals and conservatives. Liberals’ average evaluation of research credibility lands at a high .89 on a zero to one scale, while conservatives rate the same research as moderately credible (.57). The reverse is true for the research finding attributing differences to individual choices; the average conservative assessment of credibility falls at .76 while liberals rate it at .33. These patterns hold when we measure source credibility as well, although the effects are much smaller (see the right-hand panel of Figure 3). Thus, Experiment #2 offers strong support for Hypothesis #1, that the ideological direction of the research findings shapes participants’ perceptions of the research. Motivated reasoning effects, Experiment #2. Note. Prolific sample. Each panel represents the linear predictions from an OLS model examining the effects of treatment (holding university cue constant) and participant ideology. The dependent variables—source credibility and research credibility—were measured on a zero to 1 scale, with 1 indicating greater credibility. The underlying model can be found in Appendix F, Tables F.2 and F.3.
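The interaction model described above can be sketched in plain Python. This is a minimal illustration, not the model in Appendix F: it assumes a simplified specification with only an intercept, a treatment dummy (hypothetically coded 1 for the “individual choices” finding), a conservative dummy, and their product, estimated by solving the normal equations; the Appendix F models may include terms omitted here, and the toy data are invented. Because this simplified model has one parameter per treatment-by-ideology cell, its linear predictions equal the four cell means.

```python
def ols(X, y):
    """OLS coefficients from the normal equations (X'X) b = X'y, solved by Gauss-Jordan elimination."""
    p = len(X[0])
    # Augmented matrix [X'X | X'y].
    A = [
        [sum(row[i] * row[j] for row in X) for j in range(p)]
        + [sum(row[i] * yi for row, yi in zip(X, y))]
        for i in range(p)
    ]
    for col in range(p):
        # Partial pivoting: swap in the row with the largest entry in this column.
        pivot = max(range(col, p), key=lambda r: abs(A[r][col]))
        A[col], A[pivot] = A[pivot], A[col]
        for r in range(p):
            if r != col:
                factor = A[r][col] / A[col][col]
                A[r] = [a - factor * b for a, b in zip(A[r], A[col])]
    return [A[i][p] / A[i][i] for i in range(p)]


def design_row(treatment, conservative):
    """Intercept, treatment dummy, conservative dummy, and their interaction."""
    return [1.0, treatment, conservative, treatment * conservative]


def predict(coefs, treatment, conservative):
    """Linear prediction for one treatment-by-ideology cell."""
    return sum(c * x for c, x in zip(coefs, design_row(treatment, conservative)))


# Toy records: (treatment, conservative, research credibility on a 0-1 scale).
records = [
    (0, 0, 0.8), (0, 0, 1.0), (0, 1, 0.5), (0, 1, 0.7),
    (1, 0, 0.2), (1, 0, 0.4), (1, 1, 0.7), (1, 1, 0.9),
]
X = [design_row(t, c) for t, c, _ in records]
y = [score for _, _, score in records]
coefs = ols(X, y)
for t in (0, 1):
    for c in (0, 1):
        print(t, c, round(predict(coefs, t, c), 2))
```

In practice a statistics package would be used instead, for example the statsmodels formula interface, `smf.ols("credibility ~ treatment * conservative", data=df).fit()`, which also reports the standard errors needed to test differences between predicted values.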

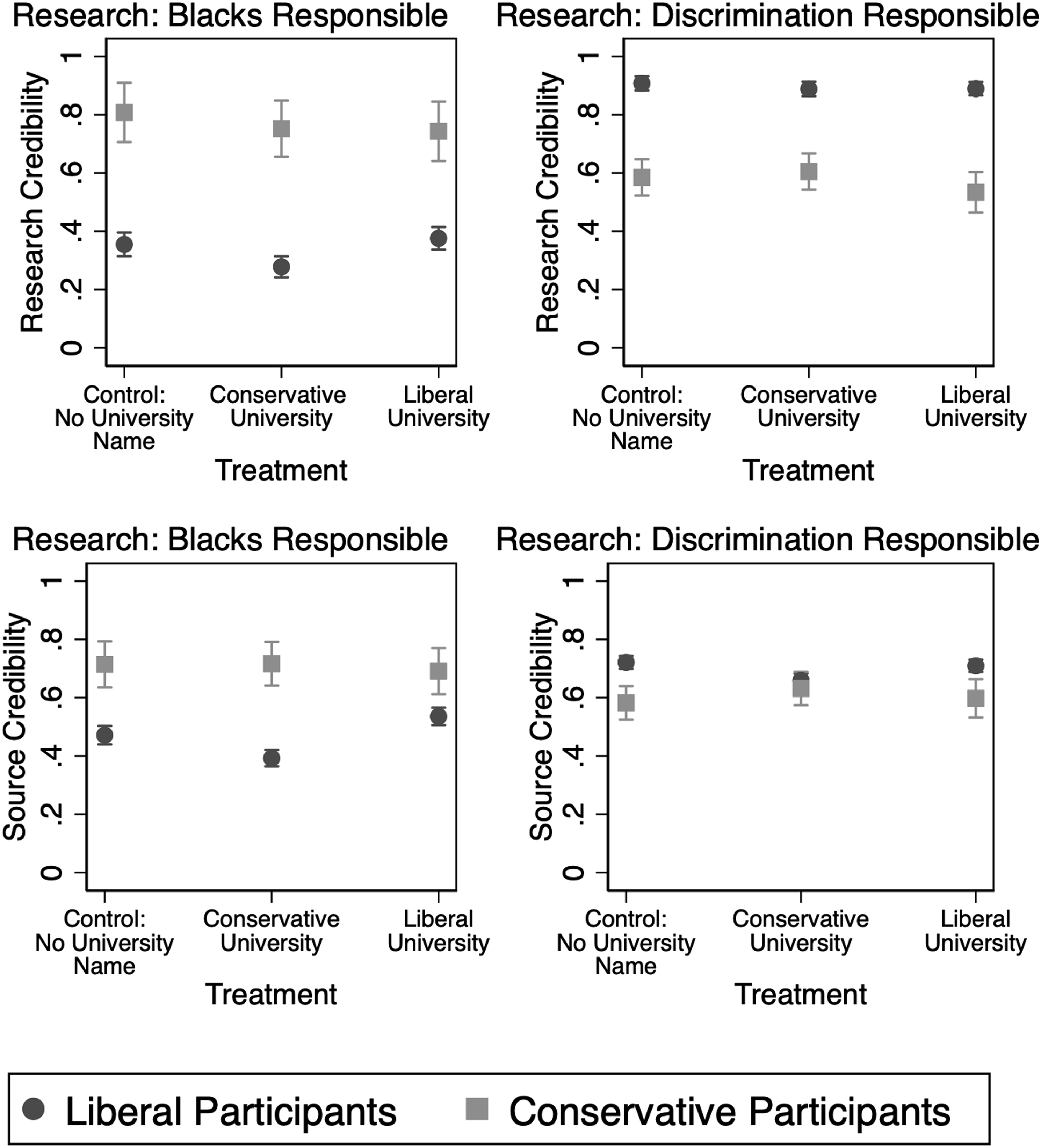

We also find support for Hypothesis #2, that the ideological cue associated with a university drives perceptions of credibility. Like Experiment #1, this finding seems particularly true for liberals. Across the four panels in Figure 4, we once again see clear motivated reasoning—liberals are less likely to rate both the research and source as credible when they are told research finds that Blacks are mostly responsible for their own position in society, while conservatives find research arguing discrimination is why Blacks cannot get ahead less credible. Credibility effects of the source of research, Experiment #2. Note. Prolific sample. Each panel represents the linear predictions from an OLS model examining the effects of treatment (holding the research finding constant) and participant ideology. The dependent variables—source credibility and research credibility—were measured on a zero to 1 scale, with 1 indicating greater credibility. The underlying model can be found in Appendix F, Table F.5.

However, the ideological position of the university also shifts these perceptions. When liberals are told that research holding Blacks responsible for their own condition is being produced by a university that hosts primarily conservative students and faculty (top left panel of Figure 4), their predicted assessment of the research’s credibility is around .27 on a zero to one scale. This is statistically significantly lower than both the control (.36, p = .006) and the liberal university (.38, p = .001). Relative to the control, liberals’ assessment of the university’s credibility as a source (bottom left panel of Figure 4) decreases when the university is conservative (from .47 to .39) and increases when it is liberal (to .54). Once again, these differences are statistically significant at p < .001. Conservatives, on the other hand, give roughly the same credibility ratings regardless of the university cue, whether they are evaluating the research or the university.

When the research finds that discrimination is behind Blacks’ inability to get ahead, the university cue becomes less important for liberals’ assessment of the credibility of the research— the only statistically significant difference in this model (top right panel of Figure 4) is between liberals and conservatives. However, the university cue does impact both liberals’ and conservatives’ assessments of source credibility (bottom right panel, Figure 4). Both groups find the source relatively credible in the control condition, with predicted credibility of .72 (liberals) and .58 (conservatives). When participants are told the research comes from a conservative university, liberals are less likely to find the source credible (.66, p = .001), while conservatives find it more credible (.63, p = .013). Learning the research came from a liberal university, on the other hand, does not change either group’s evaluation of source credibility relative to the control. These results are mostly consistent with the patterns we found in Experiment #1: liberals respond to the academic source cue more strongly than do conservatives, particularly when the research contradicts their ideological beliefs.

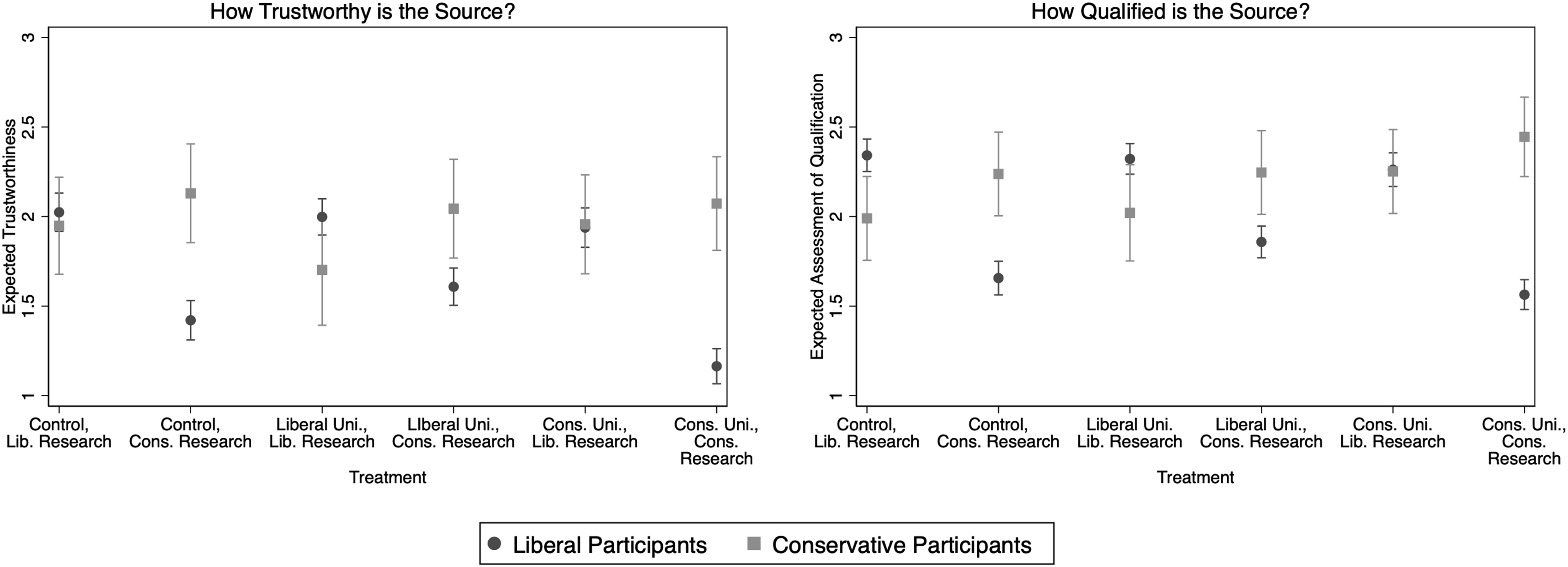

Our final hypotheses argued that differences in assessment of university credibility stems from the fact that conservatives are more likely to distrust universities than liberals, but that they are equally likely to see universities as qualified to conduct research. To examine this hypothesis, 13 we broke the source credibility scale back into its component parts—the extent to which participants believed the sponsor of the research (the university) was trustworthy (a 1–4 scale from extremely untrustworthy to extremely trustworthy, M = 1.72, sd = .77) and the extent to which they perceived the university sponsor to be qualified (1–4 scale from not at all qualified to extremely qualified, M = 2.02, sd = .67). We then split the sample by ideology and ran a two-sample t-test to determine if there were differences in liberals and conservatives perceptions of university qualifications and trustworthiness. There is a statistically significant difference in trust, with the average liberal falling at 1.68 (sd = .79) while the average for conservatives is 1.99 (sd = .63, p = .001). Conservatives in our sample have higher assessments of the trustworthiness of universities than liberals – in contrast to Hypothesis 3a. The difference between liberals’ (M = 2.00, sd = .68) and conservatives’ (M = 2.21, sd = .55) belief that the university is qualified is also statistically significant (p = .001) – again, contrary to our expectations in Hypotheses 3b. Liberals’ assessments of both the trustworthiness and the credibility of universities are lower than conservatives’ assessments.

If we examine this relationship using OLS regression, holding other variables including treatment assignment and political and demographic variables constant (Appendix F, Table F.6), we see one possible explanation for why the evidence is counter to our hypothesized relationships. As Figure 5 shows, we once again see evidence of motivated reasoning and source cue effects among liberals. Liberals are more likely to see the source of research as trustworthy and qualified when the research findings support their worldview, in line with McCright et al. (2014) previous findings. We also see a university source cue effect on top of motivated reasoning driven by the research finding; when liberals are told counter-attitudinal research (research that demonstrates that individual behaviors are responsible for racial disparities) comes from a conservative source, they find that source even less trustworthy or qualified than they do a liberal university that puts out the same research (p = .001) or a control university with no ideological cue (p = .016). Conservative assessments of the university’s qualifications and trustworthiness remain statistically indistinguishable across all treatments, with one exception: exposure to pro-attitudinal research (individual behaviors are responsible for racial disparities) from a conservative university increases their assessment of the university’s qualifications relative to the control condition (conservative research, but no university ideological cue). Ideological differences in trust and credibility across treatments, Experiment #2. Note. Prolific sample. Each panel represents the linear predictions from an OLS model examining the effects of treatment holding other variables constant (see Table F.6 for underlying model and controls). A value of 1 on the dependent variable represents an assessment of “not very trustworthy” or “not very qualified” while 3 indicates “extremely” trustworthy or qualified; each scale runs from zero to one.
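The split-sample comparison of liberals’ and conservatives’ trust and qualification ratings relies on two-sample t-tests. The paper does not say whether variances were pooled, so the sketch below assumes Welch’s unequal-variance version and, to stay within the Python standard library, approximates the two-sided p-value with the standard normal distribution, which is reasonable only when both groups are large. The function names and toy ratings are hypothetical. With SciPy available, `scipy.stats.ttest_ind(a, b, equal_var=False)` returns the Welch statistic with a t-distribution p-value.

```python
from math import sqrt
from statistics import NormalDist, fmean, variance


def welch_t(a, b):
    """Welch's t statistic and Welch-Satterthwaite degrees of freedom for two independent samples."""
    va = variance(a) / len(a)  # squared standard error of the mean of a (sample variance / n)
    vb = variance(b) / len(b)
    t = (fmean(a) - fmean(b)) / sqrt(va + vb)
    df = (va + vb) ** 2 / (va ** 2 / (len(a) - 1) + vb ** 2 / (len(b) - 1))
    return t, df


def approx_two_sided_p(t):
    """Two-sided p-value from the standard normal: a large-sample stand-in for the t distribution."""
    return 2 * (1 - NormalDist().cdf(abs(t)))


# Toy 1-4 trust ratings for two hypothetical groups.
liberals = [1, 2, 1, 2, 3, 1, 2, 1]
conservatives = [2, 2, 3, 2, 1, 3, 2, 2]
t, df = welch_t(liberals, conservatives)
print(round(t, 2), round(df, 1), round(approx_two_sided_p(t), 3))
```

Welch’s version is the safer default when, as with the reported standard deviations here, the two groups differ in spread and size; the pooled (Student) test assumes equal variances.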

Discussion and Implications

To repair public perceptions of science in the United States, we must first understand what information symbols of scientific expertise (in our case, the university) convey in a polarized political climate. We have argued that the university acts as a source cue, communicating, among other things, an ideological perspective. Our results suggest that when individuals perceive a university as liberal or conservative, they adjust their perceptions of the credibility of the research that university produces. This pattern exists primarily among liberals, however: scientific information from a university perceived as ideologically conservative does not reduce conservatives’ skepticism of the research.

Our findings confirm familiar and intuitive patterns of motivated reasoning among both liberals and conservatives. When learning about research on politicized topics like climate change or racial inequality, both liberal and conservative participants are more persuaded by research findings that confirm their previously held beliefs. Liberals report being more persuaded by research demonstrating that humans have contributed to climate change, and they find research arguing that racial wealth disparities are systemic more credible. They are less persuaded by research demonstrating that humans have not contributed to climate change or that the disparities result from individual causes. Conservatives, on the other hand, find research demonstrating that racial wealth disparities result from individual choices and behaviors more credible than research pointing to systemic causes. The patterns for conservatives in Experiment #1 (climate change) are not statistically significant.

For liberals, motivated reasoning guides not only their response to the research findings but also their reaction when they are told a university has a “liberal” or “conservative” perspective, whether because of cultural norms around those universities or because of specific ideological cues associated with them. In a perfect world, universities would not convey an ideological cue. And, for conservative participants in our study, the ideological cue signaled by the university has no effect. Yet results from both experiments show that the academic source of the research can influence liberals’ assessment of the research itself. Even when exposed to counter-attitudinal climate change and racial wealth disparity research, liberals who were told it came from the University of California at Berkeley (a “liberal” university) or a hypothetical university with “liberal students and faculty” were more persuaded than they were when the same research came from an unidentified or conservative source. Conversely, they were less persuaded by ideologically congruent information simply because it came from a university perceived as “conservative.” These shifts in source credibility are driven by the effect of the source cue and the ideological direction of the information on both the perceived trustworthiness and the qualifications of the university. On the one hand, liberal trust in academic institutions provides grounds for optimism. On the other hand, liberals’ “blind trust” in universities could lead them to believe research that goes against scientific consensus if that research is attributed to a liberal university, and to disbelieve research supporting the scientific consensus if it comes from a university perceived to be conservative.

This project has many strengths, in particular its focus on multiple issues and its results across multiple ways of cuing university ideology. If we had used only real universities, our results from Experiment #1 could be ascribed to factors unique to the specific universities we selected for the treatments. Not all colleges and universities are perceived as equivalently ideological. Other attributes, such as geographic location, religious affiliation, historical traditions, and major donors, can lead some institutions to be perceived as more conservative (or liberal) than others. Still other universities lack a perceived ideological leaning altogether. Experiment #2 helps us resolve some of these concerns. By using a fictional university whose ideology participants could not know in advance, and randomizing how that university was described, we can be more confident that we are estimating the effect of the ideological cue itself. Our findings suggest that liberals will be less likely to find research credible if it contradicts their pre-existing beliefs and if it comes from a conservative university.

However, it is also important to note that the similarities and differences between the two issues examined, climate change and the racial wealth gap, may affect our results. Both are polarizing issues, but climate change is a global, nebulous issue for which it is difficult to allocate personal blame. The racial wealth gap, on the other hand, can be individualized, and responses to it may be shaped by respondents’ knowledge of the issue, racial biases, understanding of racism, and own racial identification. At the same time, both have been politicized such that conservatives tend to be less supportive. Future research should consider how these relationships play out on issues where liberals would be less supportive and on issues that have been less politicized.

The biggest limitation of our studies is their sampling procedure. Online samples are increasingly liberal, and our second experiment, in particular, tilts heavily toward liberal participants over conservative ones. These liberal participants are also young (43% are 23 or younger) and more highly educated, which could affect the salience of the university cue (see the description in Appendix B and more information in Appendix E). In short, our sample might be more susceptible to university source cues than the average American simply because its members are more aware of different universities and of the perceived ideological make-up of those universities. But they could also be more knowledgeable about the research process and scientific approaches to tackling social problems, which would counteract their ideological thinking about universities and make our sample a harder test of the hypotheses.

Furthermore, while we did our best to balance statistical power with research costs, it is still possible that some of our models containing interactions were underpowered, particularly in Experiment #1. Therefore, we cannot be sure whether our results for conservatives reflect the sample demographics, a lack of statistical power, or a true lack of responsiveness to the source cue. Additional research should be conducted on larger samples that are balanced on key demographic variables like gender and education and that contain a greater percentage of conservatives, to see whether our results replicate in those settings.

Arguably, academics see their mission as one of knowledge creation and development, whether through research or teaching. If conservatives are dismissive of or even avoid this type of education, we are faced with another form of social sorting, increasing affective polarization, prejudice, and participation motivated by ideological and partisan identity rather than democratic outcomes (Mason, 2018a, 2018b; Peer et al., 2017). If liberals are quick to accept research solely because of its ideological congruence with their beliefs, or because of the congruence between the source’s ideological position and their own, we face concerns about the development of critical thinking and about selective exposure. Waning trust in universities among Americans of all ideological stripes has important implications for universities not only as enrollment-driven institutions but also as mission-driven ones. The politicization of higher education challenges our ability to provide evidence-based solutions to difficult societal problems.

Supplemental Material

Supplemental Material - Blind Trust, Blind Skepticism: Liberals’ & Conservatives’ Response to Academic Research

Supplemental Material for Blind Trust, Blind Skepticism: Liberals’ & Conservatives’ Response to Academic Research by Emily Sydnor and Lauren Alyssa Ratliff Santoro in American Politics Research.

Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the American Political Science Association under the Centennial Center Grant.

Notes

References
