Abstract

We synthesized the vast, contradictory scholarly literature on gender bias in academic science from 2000 to 2020. In the most prestigious journals and media outlets, which influence many people’s opinions about sexism, bias is frequently portrayed as an omnipresent factor limiting women’s progress in the tenure-track academy. Claims and counterclaims regarding the presence or absence of sexism span a range of evaluation contexts. Our approach relied on a combination of meta-analysis and analytic dissection. We evaluated the empirical evidence for gender bias in six key contexts in the tenure-track academy: (a) tenure-track hiring, (b) grant funding, (c) teaching ratings, (d) journal acceptances, (e) salaries, and (f) recommendation letters. We also explored the gender gap in a seventh area, journal productivity, because it can moderate bias in other contexts. We focused on these specific domains, in which sexism has most often been alleged to be pervasive, because they represent important types of evaluation, and the extensive research corpus within these domains provides sufficient quantitative data for comprehensive analysis. Contrary to the omnipresent claims of sexism in these domains appearing in top journals and the media, our findings show that tenure-track women are at parity with tenure-track men in three domains (grant funding, journal acceptances, and recommendation letters) and are advantaged over men in a fourth domain (hiring). For teaching ratings and salaries, we found evidence of bias against women; although gender gaps in salary were much smaller than often claimed, they were nevertheless concerning. Even in the four domains in which we failed to find evidence of sexism disadvantaging women, we nevertheless acknowledge that broad societal structural factors may still impede women’s advancement in academic science. 
Given the substantial resources directed toward reducing gender bias in academic science, it is imperative to develop a clear understanding of when and where such efforts are justified and of how resources can best be directed to mitigate sexism when and where it exists.

The literature on women in science, both scholarly and popular, portrays academic sexism today as an omnipresent, pervasive force in the daily lives of tenure-track women in science, technology, engineering, and mathematics (STEM) fields. Throughout this article, we highlight quotes from prestigious journals and other outlets alleging such bias and the contexts in which it is said to occur. In response to these claims, we evaluated the scholarly evidence over a 20-year time frame—2000 to 2020—to reveal the areas of the academy in which gender bias has been addressed, as well as the areas in which it persists. First, however, we briefly describe the authors’ background positions in the preface to familiarize readers with the adversarial nature of our collaboration.

Preface

The two female authors of this article share personal histories rife with egregious examples of gender bias in academic science and beyond. Born in 1950 and 1960, respectively, they endured substantial sexism and were victims of cruelty during the earliest decades of their careers. Despite these experiences, today they share the belief—rooted in empirical data—that although the situation in academia was often deeply unfair to women in the past, it has dramatically improved over recent decades. The key question today is, in which domains of academic life has explicit sexism been addressed? And in which domains is it important to acknowledge continuing bias that demands attention and rectification lest we maintain academic systems that deter the full participation of women?

Just as there are negative consequences of not acknowledging bias, there are also costs of believing that sexism in academic science is pervasive when it is not. Key among them are that women will be discouraged from choosing academic careers in science and that resources will be wasted combatting bias that does not exist. For this and many additional reasons, the two female authors of this article request that readers approach the topic with an open mind.

This article represents more than 4.5 years of effort by its three authors. By the time readers finish it, some may assume that the authors were in agreement about the nature and prevalence of gender bias from the start. However, this is definitely not the case. Rather, we are collegial adversaries who, during the 4.5 years that we worked on this article, continually challenged each other, modified or deleted text that we disagreed with, and often pushed the article in different directions. Although the three of us have exchanged hundreds of emails and participated in many Zoom sessions, Kahn has never met Ceci and Williams in person.

Kahn has a long history of revealing gender inequities in her field of economics, and her work runs counter to Ceci and Williams’s claims of gender fairness. Kahn was an early member of the American Economic Association’s Committee on the Status of Women in the Economics Profession (CSWEP). Articles of hers in the American Economic Review (Kahn, 1993) and in the Journal of Economic Perspectives (Kahn, 1995) were the first publications on the status of women in the economics profession. She was the first to identify gender inequities as a concern in economics, something she has revisited every decade since then in her publications. In 2019, she co-organized a conference on women in economics, and her most recent analysis in 2021 found gender inequities persisting in tenure and promotion in economics (Ginther & Kahn, 2021). In short, gender bias in academia has been a long-standing passion of Kahn’s. Her findings diverge from those of Ceci and Williams, who have published a number of studies that have not found gender bias in the academy, such as their analyses of grants and tenure-track hiring in Proceedings of the National Academy of Sciences (PNAS; Ceci & Williams, 2011; Williams & Ceci, 2015).

Although our divergent views are real, they may not be evident to readers who see only what survived our disagreements and rewrites; the final product does not reveal the continual back and forth among the three of us. Fortunately, our viewpoint diversity did not prevent us from completing this project on amicable terms. Throughout the years spent working on it, we tempered each other’s statements and abandoned irreconcilable points, so that what survived is a consensus document that does not reveal the many instances in which one of us modified or cut text that another wrote because they felt it was inconsistent with the full corpus of empirical evidence.

In this article, we analyze six specific contexts (key points of evaluation for academics) in which frequent claims have arisen of bias against women scientists who are as accomplished as men but who nevertheless find themselves downgraded. If support is found for such bias, then it adds urgency to mitigation efforts. If support is not found, it suggests that resources might be redeployed in other ways to achieve greater benefits for women in STEM fields. There are, after all, additional aspects of academic lives beyond these six evaluation points. In particular, we do not address claims of broad societal systemic factors in this article, inasmuch as doing so would require its own monograph-length treatment.

For instance, we consider the evidence for bias in tenure-track hiring, but there is also a broader context that considers whether women reach the point of even applying for such jobs. Even if search committees treated men and women with identical curricula vitae (CVs) equivalently when they applied for tenure-track positions, women still might be impeded from applying for tenure-track positions in the first place for a host of systemic reasons, such as difficult work schedules and inflexible timing imposed by the tenure-track system during the decade when most people are building families, dominant male values in publishing style, penalizing of women for violating cultural norms when they are agentic and engage in forceful negotiating or even when they provide negative feedback in grading of students (Buser et al., 2022), and unequal childcare expectations. Reasonable people differ in their views about such broad societal construals and whether they should be called bias, and such differences exist among the authors of the present article. In contrast to these broad societal construals, everyone agrees that if job-search committees favor male applicants over comparable female applicants, or if grant reviewers favor proposals that have male names as principal investigators (PIs), or if journal reviewers favor manuscripts that contain male names, or if the same lecture receives higher ratings when it is presented by a man, these behaviors constitute prima facie evidence of gender bias.

Introduction

A staggering number of articles has been published about women in science, reflecting research across a wide range of disciplines—psychology, sociology, economics, philosophy, biology, chemistry, physics, education, anthropology, engineering, medicine, and mathematics (more than 15,000 articles in the past decade alone). 1 It would be impossible to cover this literature in anything less than a lengthy monograph. However, our aim is not to cover it, but rather to uncover it—by dissecting findings across a very wide scientific landscape to achieve a synthesis.

Previous work has shown that what happens early in development can influence later gender representation in scientific careers. Such factors as early socialization differences, stereotypes, and teachers’ and parents’ attitudes affect students’ later choices of high school advanced placement courses, which in turn are often gateways to college STEM majors and later occupations. We will not reprise here the evidence for the importance of these early social factors, because many other researchers, including ourselves, have already done so (e.g., Ambady et al., 2001; Bian et al., 2017, 2018; Carlana, 2019; Carlana & Corno, 2021; Ceci et al., 2014; Cvencek et al., 2011; Dunham et al., 2015; Lavy & Sand, 2015; Terrier, 2020).

Instead of focusing on these early systemic factors, we will focus on women who have overcome early socialization barriers to earn a STEM PhD and who are interested in tenure-track academic careers in science. We will not synthesize the research literature on non-tenure-track positions in academia (e.g., postdocs, tech/lab workers, teaching-only faculty—including adjuncts), government jobs, or jobs in industry. Nor will we discuss studies of aspects of undergraduate and graduate education. Our focus is on women eligible to compete for tenure-track positions, grants, journal acceptances, salaries, et cetera.

In short, the analysis we present in these pages is limited to claims regarding biases that women have faced in the tenure-track academy since 2000. We will not delve into pre-2000 biases and barriers that may have impeded women from vying for and succeeding in tenure-track positions. Additionally, we will not address intersectionalities of gender with race and social class because very limited data exist. Where research does exist, we will describe it, including some of our own research on race and on National Institutes of Health (NIH) grants (Ginther et al., 2016; Ginther & Kahn, 2013).

Even when limiting ourselves to a consideration of STEM tenure-track jobs, there are nevertheless many contexts in which women may face barriers to success in entering and succeeding in these jobs. In this article, we comprehensively examine evidence in six key evaluation contexts: (a) Are similarly accomplished women and men treated differently by academic hiring committees? (b) Are grant reviewers biased against female PIs? (c) Are journal reviewers biased against female authors? (d) Are recommendation-letter writers biased against female applicants for tenure-track positions? (e) Are faculty salaries biased against women? And, (f) are student teaching evaluations biased against female instructors? Claims of gender bias are omnipresent in all six of these domains (see quotes below). Our goal is to synthesize the best empirical evidence related to each of these claims to determine whether it supports the bias claims. We synthesize findings across these six evaluation contexts in an effort to reconcile contradictory results. (We also review the literature in a seventh context, gender differences in publication rates, because publishing productivity can moderate evaluation in most of these six contexts.)

These six contexts do not exhaust all possible sources of bias, even within tenure-track academia. For example, they do not cover factors such as speed of promotion, “chilly climate,” speaking invitations, professional society awards, election to prestigious societies, citation imbalances, tenure rates, and persistence in the academy (e.g., Card et al., 2022; Cassad et al., 2021; Farr et al., 2017; Holliday et al., 2014; Holmes et al., 2011; Kaminski & Geisler, 2012; Mehto, 2021; National Academy of Sciences, 2007; Teich et al., 2022; Webber & Canché, 2018). Nor do these six contexts address broad systemic factors that result from underlying conditions or societal expectations that may indirectly impede women’s progress, independently of whether they are treated equitably once they enter these six contexts, such as when an institution requires long working days of young mothers or when tenure schedules lack flexibility that young families need.

However, we chose these six specific contexts because they represent the most active areas of empirical research, because they are especially important early in a tenure-track career, and because they are specific enough so that gender differences in outcomes can be measured. Unlike the situation with broader factors, virtually everyone agrees when they see evidence of these six forms of bias. If the identical CV is rated higher when a man’s name as opposed to a woman’s name is on it, everyone recognizes this as bias; there is no convincing counterargument. But this is not true for broad societal factors that some people regard as defensible institutional practices, such as high expectations of productivity coinciding with childbearing years for women. In such cases, the causal links demonstrating outright bias are often less compelling than is the case when a study shows that the identical grant proposal is rated higher when a man’s name (vs. a woman’s) is listed as PI.

Because we are interested in the current situation, we consider studies that measured the existence of bias in each of these six contexts starting in 2000. Women’s roles in all aspects of society changed dramatically over the last half of the 20th century. Our research on women in academia is partially born of earlier evidence for considerable former bias along many dimensions. Although we are interested in understanding what the current situation is, our focus is not intended to minimize or deny the existence of gender bias in the past. Thus, when we discuss each of the six contexts, where there are highly visible studies published before 2000, we note them and point out major changes over time.

Claims of gender bias in the six domains

Examples of bias claims

Claims of specific forms of gender bias in the six domains are ubiquitous, found in publications of prestigious scientific outlets such as the National Academy of Sciences and in top journals such as Nature, The Lancet, PLOS Biology, PNAS, and Science (e.g., Casselman, 2021; Johnson et al., 2016; Lawrence, 2006; National Academy of Sciences, 2007; Shen, 2013; Witteman et al., 2019; Witze, 2020), as well as in popular media (e.g., Harvard Business Review, Wired, The New York Times, Slate, Huffington Post). As a backdrop to the comprehensive empirical analysis that follows, we begin with a few narrative examples illustrating claims commonly made about gender bias in these six specific contexts. These quotes demonstrate that these claims appear in premier scientific journals and in policy statements by major scientific societies. Most of these quotes combine one or more of the six contexts that we address with references to other contexts that we do not address, illustrating the tendency to attribute bias to wide ranges of behavior. Consider the following quotations:

Researchers in recent years have found that women are less likely than men to be hired and promoted, and face greater barriers to getting their work published. (Casselman, 2021, The New York Times, para. 9)

Women in academia contribute more labour for less credit on publications, receive less compelling letters of recommendation, receive systematically lower teaching evaluations despite no differences in teaching effectiveness[, and] receive less start-up funding as biomedical scientists. . . . [Publications] led by women take longer to publish and are cited less often [and] are accepted more frequently when reviewers are unaware of authors’ identities. . . . When fictitious or real people are presented as women in randomised experiments, they receive lower ratings of competence from scientists [and] worse teaching evaluations from students. (Witteman et al., 2019, The Lancet, p. 531)

In sum, there is considerable evidence that women face persistent barriers in academia and science. (Witteman et al., 2018, bioRxiv, p. 3) 2

Implicit bias is pervasive. Men are preferred to women even if they have the same accomplishments. Psychologists have shown this by testing scientists’ responses to fictitious CVs that are identical other than coming from “John” or “Jennifer.” (Witze, 2020, Nature, p. 25)

A vast literature . . . shows time after time, women in science are deemed to be inferior to men and are evaluated as less capable when performing similar or even identical work. This systemic devaluation of women results in an array of real consequences: shorter, less praise-worthy letters of recommendation; fewer research grants, awards, and invitations to speak at conferences; and lower citation rates for their research. Such wide-ranging devaluation of women’s work makes it harder for them to progress in the field. . . . These are just a few of the hundreds of peer-reviewed studies that clearly show, on average, the bar is set higher for women in science than for their male counterparts. (Coil, 2017, Wired, paras. 3 and 5)

Considerable research has shown . . . evaluation criteria contain arbitrary and subjective components that disadvantage women. (National Academy of Sciences, 2007, p. 3)

Women have fewer publications and collaborators and less funding, and they are penalized in hiring decisions when compared with equally qualified men. (Fortunato et al., 2018, Science, p. 3, citations omitted)

Why discuss absence of bias?

Some readers might ask why it is important to discuss situations in which there is no evidence of gender bias, as well as those in which there is evidence. We believe that there are three major reasons. First, identifying areas in which bias no longer exists allows the research community to focus its efforts on career aspects, junctures, and systems that continue to disadvantage women (e.g., lengthy periods of postdoctoral study). Second, as in the story of the boy who cried wolf, if people claim unfair bias every time there is outcome asymmetry—and if the well-intentioned efforts of many academic leaders and administrators, teachers, policymakers, and funders over the past 20 years to equalize treatment of scientists irrespective of gender appear not to have improved the situation—many people may give up in discouragement (which would be particularly regrettable if their efforts actually worked). Third, if women erroneously believe that every aspect of academia is biased against them, some STEM PhDs may be reluctant to enter any area of academia, even those that are biased in favor of women, and may instead seek jobs in industry or government. Some women may simply not consider a research career in STEM because they fear working in a sexist environment, even when data might show that this environment is not biased against them.

Again, we emphasize that, in what follows, we focus on the evaluation of gender bias in academic tenure-track jobs, which are often seen as the pinnacle of employment, well-paid and secure. Perhaps more importantly, faculty in these positions educate the next generation of scientists. Although there are claims of bias outside the tenure-track academy (e.g., among lecturers or in industry, such as by Ding et al., 2021), any assessment of such claims is outside of our objective.

As we review the empirical evidence related to each of the six tenure-track evaluation contexts (as well as the seventh domain of productivity), we will highlight analyses addressing causal factors. These include randomized experiments, naturally occurring events that provide opportunities to test impacts under investigation, and multivariate analyses controlling for confounding factors. Our goal is to determine whether these analyses lead to the same conclusion. In all, we discuss hundreds of studies—some of which are meta-analyses of hundreds of other studies—exploring how fairly women are evaluated. Thus, we cover a very wide swath of research.

We strove to include studies that were sound methodologically, even if they contradicted each other or the personal views of some team members. 3 When appropriate, we conducted and report on our own meta-analyses in domains in need of them. When we did not review all studies in a given domain, we provide a principled reason. (See Kahn et al., 2022a, 2022b, for specification of the meta-analyses’ search terms, inclusion criteria, Preferred Reporting Items for Systematic Reviews and Meta-Analyses [PRISMA] diagrams, funnel plots, forest charts, and related technical information that guided our searches and informed our meta-analyses.) Finally, we based our analyses and conclusions on the actual data published in these articles, which sometimes diverged from interpretations that the authors or others made about these data.

Context matters

Bias occurs within a context. Not all scientific fields are equal in their representation of women (Ceci et al., 2014; Cheryan et al., 2017), and therefore, they may not be equivalent in their evaluation of women. Similarly, as we show below, granting agencies in different countries have very different gender gaps in success rates. When the data show that differences exist across scientific fields, professorial ranks, and nations, we are careful to point out these differences.

Historical background on women’s participation in science

Historically in the United States, men were more likely to earn baccalaureates than women. In 1900, only 19% of college degrees went to women. This number rose steadily throughout the century, except during the aftermath of World War II (influenced by the 1944 GI Bill, 41% of bachelor of arts [BA] recipients were female in 1939, but only 27% were female in 1950 because of the influx of World War II veterans). 4 By 1982, women received slightly more than 50% of BAs, and this rose to 57% by the turn of the century, after which the percentage of BAs awarded to women stabilized. Women’s educational ascendancy does not stop with the baccalaureate: They also earn more master’s degrees (59%) and more PhDs (53%) than men.

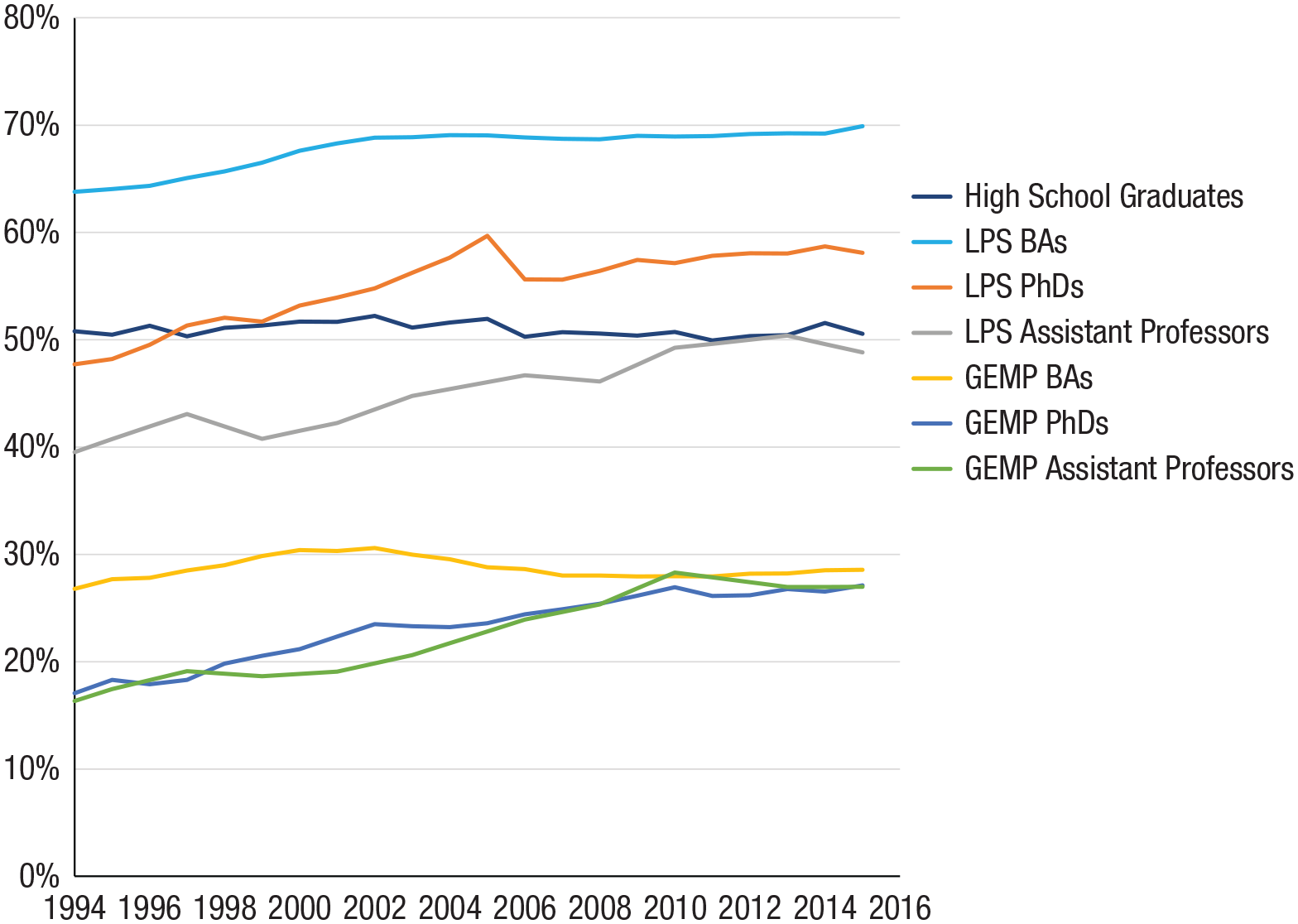

Notwithstanding the progress made by women, in math-intensive fields, they have a much lower level of representation than men. Figure 1 empirically demonstrates this, showing women’s representation among baccalaureate, PhD, and tenure-track appointments. In this graph, we divided two national data sets into the most mathematically intensive fields—geosciences, engineering, economics, mathematics/computer science, and physical science (GEMP)—and less math-intensive fields—life sciences, psychology, and social sciences (LPS). Because of these differences in women’s representation, it is common to distinguish between GEMP and LPS fields, and we do so here when possible. The dearth of women in GEMP fields has been attributed to many factors, such as mathematical ability, mathematical education, and people-vs.-things orientation (Su & Rounds, 2015; Su et al., 2009). Litigating these alleged reasons is not our focus here. For interested readers, Ceci et al. (2014) provide a discussion of these factors.

Fig. 1. Percentage of female high school graduates, bachelor of arts (BAs), PhDs, and tenure-track assistant professors by major field from 1994 to 2016. GEMP = geoscience, engineering, economics, mathematics, computer science, and physical science; LPS = life sciences, psychology/behavioral sciences, and social sciences. Source for baccalaureate degrees: WebCaspar (https://ncsesdata.nsf.gov/webcaspar/); source for assistant professor data: National Science Foundation’s Survey of Doctorate Recipients (https://www.nsf.gov/statistics/srvydoctoratework/).

Below, we consider claims of gender bias in six evaluative contexts, as well as the potentially important and cross-cutting influence of productivity—for example, the role journal publications might play in hiring, receipt of grants, recommendation letters, and salary decisions.

A Search for Gender Bias in Six Evaluation Contexts

Evaluation Context 1: tenure-track hiring

High-profile claims are often made that STEM hiring is biased against women. This includes the quotes by Witze (2020) in Nature and Casselman (2021) in The New York Times cited earlier. Many similar claims of gender bias in tenure-track hiring have been made. Consider the following quotations:

Research has pointed to bias in peer review and hiring. . . . A female applicant had to . . . publish at least three more papers in a prestigious science journal or an additional 20 papers in lesser-known specialty journals to be judged as productive as a male applicant. (Hill et al., 2010, p. 24)

Even after earning STEM degrees, women are less likely to be hired into STEM jobs compared with equally qualified men. (Cech & Blair-Loy, 2019, p. 4182)

A few studies examined the presence of racial or gender bias in the evaluation of résumés and CVs (e.g., Moss-Racusin et al., 2012) or in recruitment processes (Milkman et al., 2015; Posselt, 2016), suggesting that the lack of faculty diversity can be attributed to bias in institutional gatekeeping processes, such as hiring (O’Meara et al., 2020). However, none of these were tenure-track-hiring studies, and later we show that there are important reasons for not assuming that such studies inform gender bias in the tenure-track academy.

Because of the heterogeneity of dependent variables, forms of evidence, nations, epochs, ranks, type of institutions, and disciplines, meta-analysis in the tenure-track-hiring domain is inappropriate. Fortunately, the findings and effect sizes from the myriad analyses strongly point in the same direction, as we will show. In synthesizing these findings, we will examine three types of evidence that have been invoked in support of the gender-bias argument: (a) cross-sectional, (b) cohort, and (c) experimental. Below, we provide systematic coverage of the empirical literature addressing each of these three types of evidence.

Cross-sectional comparisons

Many claims of bias in tenure-track hiring are based on contemporaneous cross-sectional percentages of PhDs, tenure-track untenured professors, tenured associates, and full professors. An example illustrates this:

Between 1969 and 2009, the percentage of doctorates awarded to women in the life sciences increased from 15% to 52%. Despite the vast gains at the doctoral level, women still lag behind in faculty appointments. Currently, only 36% of assistant professors and 18% of full professors in biology-related fields are women. The attrition of women from academic careers . . . undermines the meritocratic ideals of science and represents a significant underuse of the skills that are present in the pool of doctoral trainees. (Sheltzer & Smith, 2014, p. 10107)

However, given the growth in the percentage of female PhDs in life sciences over these decades, we should not expect the percentage of female full professors in 2009 (most of whom received their PhDs from 1980 to 1995) to be 52%. Expectations of the percentage of female full professors must be based on the relevant cohorts rather than on contemporaneous PhD numbers. In another example, the European Commission, Directorate-General for Research and Innovation (2019) concluded, “Women face greater difficulties than men in advancing to the highest academic positions in all the countries examined” (p. 125). Once again, this conclusion was linked to the number of newly minted PhDs rather than to the relevant cohort. In a third example, Golbeck (2016) used cross-sectional data to argue that women were underrepresented as tenure-track professors in 2014, pointing out that they comprised 46% of new PhDs but only 33% of tenure-track assistant professors, a gap of 13 percentage points (ppt). However, using the appropriate 2014 cohort, we found that women comprised 40% of PhDs but only 37% of tenure-track assistant professors, a 3 ppt gap. This gender gap was even smaller or reversed when we adjusted for cohort, because 2008 is the earliest year in which candidates could receive PhDs and still be on the tenure track in 2014, and women comprised only 31% of PhDs that year. This erases Golbeck’s estimate of a 13 ppt gender gap.
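The arithmetic behind the Golbeck example can be restated as a short sketch. The percentages are the ones quoted in the paragraph above; the function name `ppt_gap` and the variable names are hypothetical labels introduced only for this illustration, and the sketch is not the authors' own analysis code.

```python
def ppt_gap(pct_women_phds: float, pct_women_asst_profs: float) -> float:
    """Gap in percentage points: women's share of PhDs minus their share
    of tenure-track assistant professors (positive = underrepresented)."""
    return pct_women_phds - pct_women_asst_profs


# Percentages quoted in the text for 2014.
contemporaneous_gap = ppt_gap(46, 33)   # new PhDs vs. assistant professors
revised_gap = ppt_gap(40, 37)           # the 40% vs. 37% comparison
earliest_cohort_gap = ppt_gap(31, 37)   # 2008 PhD cohort vs. 2014 professors

print(contemporaneous_gap, revised_gap, earliest_cohort_gap)  # 13 3 -6
```

A negative value means that women's share of assistant professors exceeds their share of the comparison PhD cohort, which is the sense in which the gap is "reversed" for the 2008 cohort.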

Thus, these gender differences need not indicate a bias in the hiring of women with newly minted PhDs, for two reasons. The first reason is that in fields where women constitute steadily increasing proportions of PhDs each year, cross-sectional contemporaneous comparisons will always overestimate gender differences in the probability of proceeding to tenure-track jobs at a given point. This is not a new insight (see, e.g., Abramo et al., 2021; Hargens & Long, 2002; Stewart et al., 2009), yet many scholars still fail to take this issue into account. The next section presents evidence on whether in the United States, PhD women graduates progressed into academia at a similar pace as men. The second reason that gender differences in the likelihood of PhDs entering tenure-track jobs need not imply bias is that women PhDs are less likely to apply for tenure-track positions. We address evidence for this in the following section. We then describe studies that do address whether there is gender bias in the likelihood that PhDs who apply for tenure-track jobs are hired.
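The first reason can be illustrated with a toy calculation. This is a minimal sketch under stated assumptions, not a reconstruction of any study: the linear trend, the feeder years, and every name in the code are hypothetical, and hiring is assumed to be gender neutral with equal-sized PhD cohorts.

```python
from statistics import mean


def female_phd_share(year: int) -> float:
    """Hypothetical trend: women's share of new PhDs rises 1 ppt per year
    from 30% in 2000."""
    return 30.0 + (year - 2000)


SURVEY_YEAR = 2014
# Assumed PhD years of the assistant professors observed in the survey year.
FEEDER_YEARS = [2008, 2009, 2010, 2011]

# Under gender-neutral hiring and equal cohort sizes, women's share of
# assistant professors equals their average share in the feeder cohorts.
feeder_share = mean([female_phd_share(y) for y in FEEDER_YEARS])
asst_prof_share = feeder_share

# Comparing against the survey-year PhD share shows a gap even though
# hiring is neutral by construction; the cohort-matched comparison shows none.
cross_sectional_gap = female_phd_share(SURVEY_YEAR) - asst_prof_share
cohort_matched_gap = feeder_share - asst_prof_share

print(cross_sectional_gap, cohort_matched_gap)  # 4.5 0.0
```

In this toy setup, the apparent 4.5 ppt shortfall is produced entirely by the rising trend in women's share of PhDs, not by any difference in how men and women are hired.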

Evidence on transitioning from PhD to tenure-track positions based on actual or synthetic U.S. cohort comparisons

To compare women’s and men’s transition from PhD to tenure track, one can use actual cohorts (longitudinal data); “synthetic cohorts,” matching professors to the actual PhD cohorts feeding them; or counterfactual hypothetical candidates from the relevant cohort. For the United States, we created synthetic cohorts using the National Science Foundation’s (NSF’s) Survey of Doctorate Recipients (SDR), a biennial survey of 120,000 doctoral degree holders conducted by the National Center for Science and Engineering Statistics (NCSES) within NSF. The SDR is the best source of national longitudinal data on PhD recipients in science and health fields. In these analyses, we juxtaposed the annual supply of new PhDs in GEMP and LPS fields, respectively, with the assistant professors in the years during which they would be expected to be at that rank if they entered tenure-track academia.

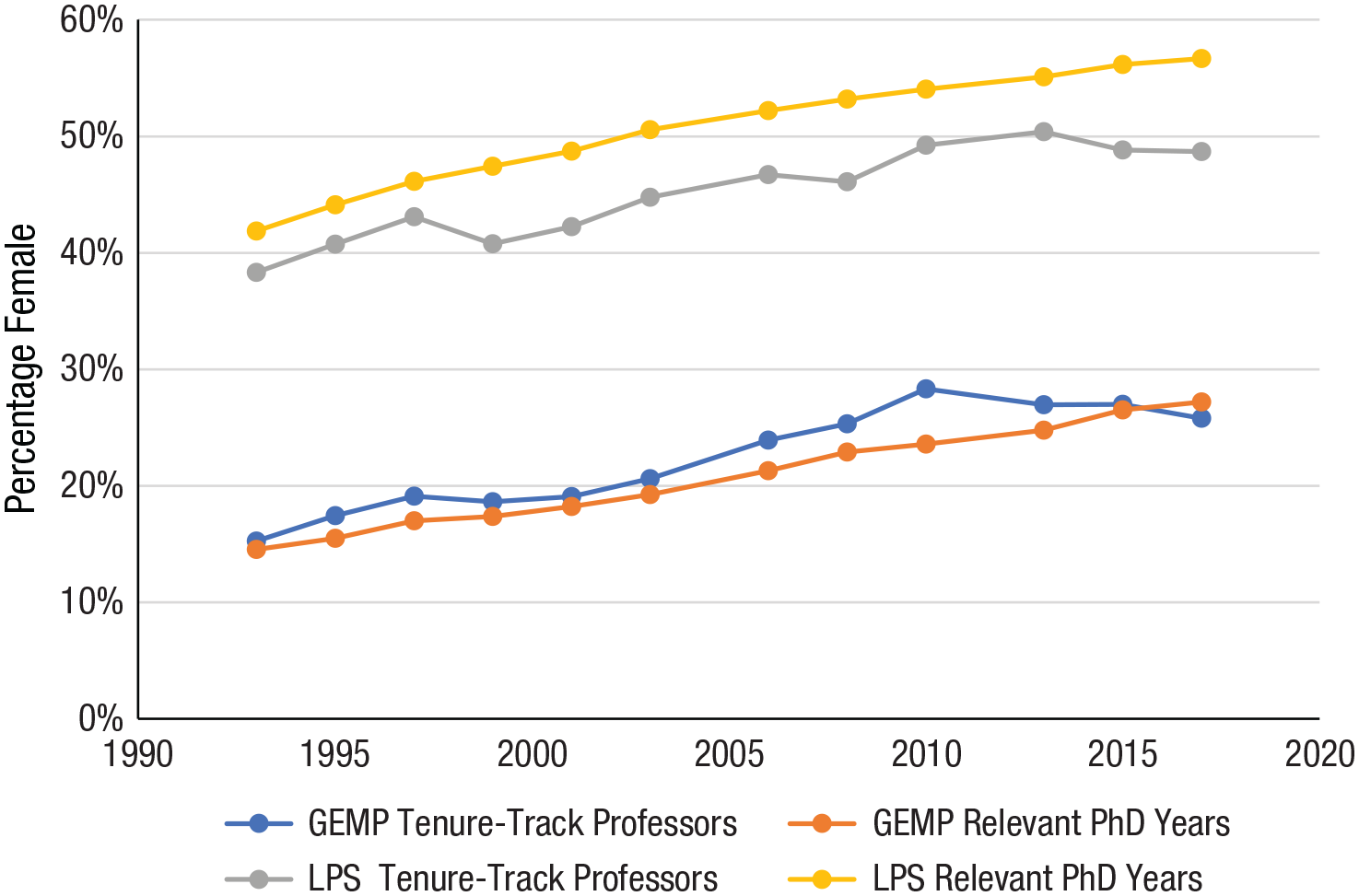

Separately for GEMP and LPS fields, and for each year, we first calculated the smallest range of PhD years that covered at least 50% of tenure-track assistant professors that year. (For instance, 50% of 1993 assistant professors received PhDs between 1985 and 1990.) With this information, we calculated the percentage of women who received PhDs each year and the percentage of female assistant professors we would expect to see 5 to 8 years later if men and women were equally likely to progress from PhD to assistant professor (see Fig. 2).

Fig. 2. Percentage of female tenure-track assistant professors from 1994 to 2017 and women in the relevant PhD cohorts by major field. Calculations were made from data obtained from the National Science Foundation’s Survey of Doctorate Recipients (https://www.nsf.gov/statistics/srvydoctoratework/). Relevant PhD years are the period when the middle 50% (25th–75th percentile) of the assistant professors in the corresponding year graduated. GEMP = geoscience, engineering, economics, mathematics, computer science, and physical science; LPS = life sciences, psychology/behavioral sciences, and social sciences.
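The synthetic-cohort comparison described above can be sketched in a few lines. This is only an illustrative sketch, not the authors' code: the function names, the tie-breaking rule (earliest window wins), the pooling of PhDs across the window, and all counts are hypothetical assumptions, and the sketch follows the "smallest range covering at least 50%" wording rather than the percentile wording of the figure caption.

```python
from typing import Dict, Optional, Tuple

def narrowest_window(phd_year_counts: Dict[int, int],
                     coverage: float = 0.5) -> Optional[Tuple[int, int]]:
    """Shortest run of consecutive PhD years holding >= coverage of the professors.

    phd_year_counts maps PhD year -> number of assistant professors (in one
    survey year) who earned their PhD in that year. Ties go to the earliest window.
    """
    first, last = min(phd_year_counts), max(phd_year_counts)
    total = sum(phd_year_counts.values())
    best = None
    for start in range(first, last + 1):
        running = 0
        for end in range(start, last + 1):
            running += phd_year_counts.get(end, 0)
            if running >= coverage * total:
                if best is None or (end - start) < (best[1] - best[0]):
                    best = (start, end)
                break  # longer windows from this start cannot be shorter
    return best

def expected_pct_female(window: Tuple[int, int],
                        phds_by_year: Dict[int, Tuple[int, int]]) -> float:
    """Pooled percentage of women among all PhDs awarded in the window.

    phds_by_year maps PhD year -> (women PhDs, all PhDs). Pooling across
    years is an assumption of this sketch.
    """
    start, end = window
    women = sum(phds_by_year[y][0] for y in range(start, end + 1))
    everyone = sum(phds_by_year[y][1] for y in range(start, end + 1))
    return 100.0 * women / everyone

# Hypothetical counts, for illustration only (not SDR data)
professors_by_phd_year = {1984: 5, 1985: 10, 1986: 20, 1987: 25,
                          1988: 20, 1989: 10, 1990: 10}
phds = {1985: (30, 100), 1986: (35, 100), 1987: (40, 100)}

window = narrowest_window(professors_by_phd_year)
expected = expected_pct_female(window, phds)
print(window, expected)
```

Under equal progression rates for women and men, the observed percentage of female assistant professors would be compared against `expected` for the matching feeder years.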

Figure 2 shows that for fields in which women are most underrepresented—the math-intensive GEMP fields—the percentage of female assistant professors is either approximately the same as the percentage of female PhDs in the relevant feeder years for that cohort or is greater (as in 2010). The prima facie evidence, therefore, suggests that female applicants for GEMP tenure-track positions have been slightly more likely to be hired than men since 1993. This female advantage, while small, runs counter to the common claim that women are far less likely to be hired as a result of bias.

However, the pattern is different in the LPS fields, despite women’s overall representation being much greater than in GEMP fields: The percentage of female tenure-track assistant professors in LPS is always lower than the percentage of female PhDs in the relevant feeder years. The gap was smallest in the mid-1990s (less than 4 ppt) and grew to 8 ppt in 2017. The percentage of female tenure-track assistant professors in LPS fields has hardly changed since 2010, hovering between 49% and 50%, despite increases each year in the feeder pool of female PhDs.

Using actual or synthetic cohort analyses, other researchers have also found less pronounced gender gaps or even overrepresentations of women in GEMP fields. Comparing the percentage of PhDs conferred to women between 1996 and 2005 with faculty in 2007, Kessel and Nelson (2011) reported that female PhDs had similar or higher probabilities than men of entering assistant professorships in 100 top “highly quantitative” departments but not in other STEM fields. Ceci et al. (2014) compared the percentage of female PhDs with the percentage of female assistant professors 5 to 6 years later in GEMP fields and found similar results. And in philosophy—the humanities field most like GEMP in gender composition and quantitative emphasis—among 2008 to 2019 PhDs, women had a 10% to 17% greater likelihood than men of entering permanent academic placements (Allen-Hermanson, 2017; Kallens et al., 2022).

Thus, these cohort analyses offer little support for the claim of widespread gender discrimination in tenure-track hiring in GEMP, even before 2000. Economics is the exception; it is the only GEMP field in which analyses of synthetic cohorts indicate otherwise. CSWEP creates synthetic cohorts annually (e.g., Chevalier, 2019), showing that the percentage of women among tenure-track assistant professors (within 7 years after obtaining their PhDs) was similar to the percentage of women among PhDs only through 2004; for the next eight PhD cohorts, however, the percentage of female assistant professors stagnated, despite growth of newly minted female PhDs.

Two studies used the longitudinal capability of NSF’s SDR. Wolfinger et al. (2008) found that among PhDs from 1981 to 1995, women were 7% less likely than men to transition to the tenure track. However, using a longer range of the SDR and differentiating among fields, Ginther and Kahn (2009) found that among people who earned PhDs between 1973 and 2001, women and men in physical sciences and engineering were equally likely to transition to the tenure track, whereas in life sciences, women were 7.7 ppt less likely. For social sciences, Ginther and Kahn (2014) found that women were 3.7% less likely. Although these analyses all controlled for PhD department ranking, they did not control for publication productivity or for the aspiration for tenure-track positions, which differs between women and men, as we will show later. (Interestingly, both Wolfinger et al., 2008, and Ginther and Kahn, 2009, found that the largest female demographic of job hunters—unmarried single women—were 15% more likely than men to transition to the tenure track.)

Finally, an older audit of the hiring of assistant professors across all nine University of California campuses between 1990 and 1999 compared the percentage of women among those hired as assistant professors with the percentage of women among recent PhDs awarded in the University of California system. Women were overrepresented among new hires in four of the six GEMP fields: In physics, the percentage female among hired assistant professors was higher than among recent PhDs (women were hired 14% of the time vs. 10% female PhDs); in engineering, women were hired 13% of the time versus 8% female PhDs; in geoscience, women were hired 28% of the time (vs. 19% women PhDs); and in computer science, women were hired 14% of the time (vs. 10% female PhDs). However, in two GEMP fields, there was a pronounced male advantage: In mathematics, women were hired as assistant professors far less often than the numbers of recent female PhDs (women hired 7% of the time vs. 20% female PhDs), and in chemistry, women were hired 7% of the time (vs. 26% PhDs; California State Auditor, Bureau of State Audits, 2001, Table 2).
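The audit figures quoted above can be put on a common scale by dividing women's share of hires by women's share of recent PhDs in each field. The following is a minimal sketch using only the percentages cited in the preceding paragraph; the name `representation_ratio` is an illustrative label, not a term from the audit.

```python
# (percent women among hired assistant professors, percent women among recent PhDs),
# as quoted in the text from the California State Auditor (2001) report
uc_audit = {
    "physics": (14, 10),
    "engineering": (13, 8),
    "geoscience": (28, 19),
    "computer science": (14, 10),
    "mathematics": (7, 20),
    "chemistry": (7, 26),
}

def representation_ratio(pct_hired: float, pct_phd: float) -> float:
    """Values above 1 mean women's share of hires exceeds their share of PhDs."""
    return pct_hired / pct_phd

ratios = {field: representation_ratio(*pair) for field, pair in uc_audit.items()}
overrepresented = sorted(field for field, r in ratios.items() if r > 1)

for field, r in sorted(ratios.items(), key=lambda kv: kv[1], reverse=True):
    print(f"{field:17s} {r:.2f}")
```

A ratio of this kind compares shares, not hiring rates, so it does not account for how many women in each field actually applied.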

Female PhDs are less likely to apply for tenure-track positions

However, even if men and women in the same cohort had proceeded at different rates to the tenure track, this would not prove bias in hiring because not every PhD holder aspires to or applies for tenure-track jobs. Surveys in both the United States and Europe consistently show that women are significantly less likely than men to pursue a tenure-track job as they advance through training (e.g., Bataille et al., 2017; Blickenstaff, 2005; Salinas & Bagni, 2017; Schubert & Engelage, 2011; Trevino et al., 2017).

Studies suggest that the lower rate of applying for tenure-track positions is the result of systemic social/structural and biological factors, such as women’s disproportionate family, childbearing, and child-rearing responsibilities combined with the rigid time frame of the tenure process. For instance, in the United States, women are twice as likely as men to abandon their careers after childbirth (Cech & Blair-Loy, 2019; Skibba, 2019). Goulden et al. (2009) found leakage from research careers among postdocs to be especially apparent among women with children or planning to have children (28% leakage for women vs. 16% for men), whereas women without children or plans to have children were as likely as men to pursue the tenure track. Goulden et al. (2009) found that the gender gap in applying for faculty positions was completely accounted for by family formation decisions, and Martinez et al. (2007) found in a survey of 1,300 NIH postdocs that 21% of women compared with 7% of men said that plans to have children or additional children were extremely important in planning research careers. Finally, Ecklund and Lincoln’s (2011) survey of 3,455 biologists, astronomers, and physicists in top-20 departments found that 4 times as many female graduate students and 50% more female postdocs worried that a science career would keep them from having a family. We will revisit this important point of leakage of women below.

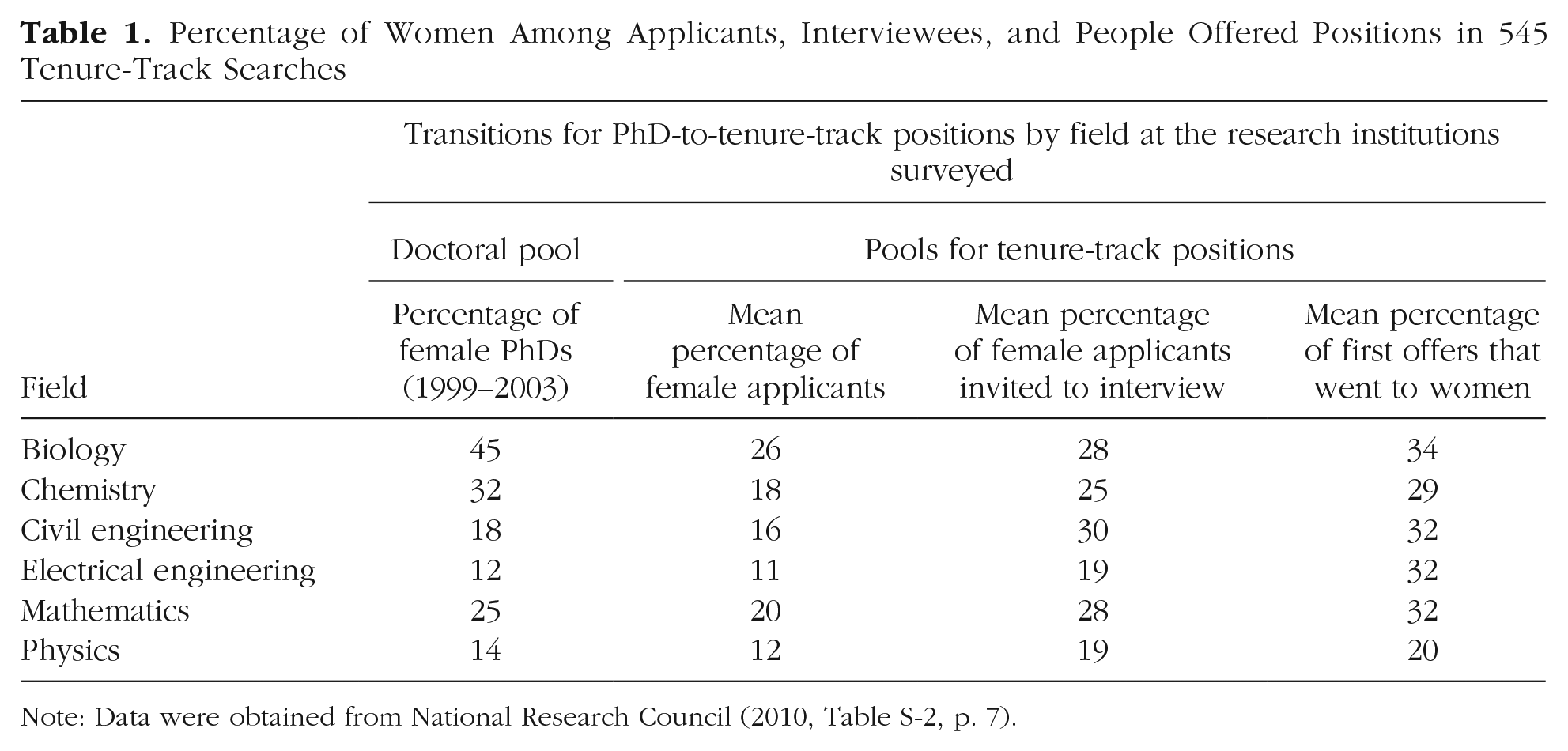

We saw that in LPS fields, women PhDs were less likely to proceed to tenure-track jobs than men. Is the lower likelihood of applying particularly marked for women in these fields? It would make sense: Postdocs are usually required in biology and are increasingly common in psychology for anyone hoping to obtain a tenure-track job, which makes these jobs even less attractive to PhD women thinking of starting a family. We can see in the National Research Council (NRC; 2010) data shown in Table 1 that in biology, the percentage of women among tenure-track applicants is only 58% of the percentage of women among PhDs, and that this ratio is much smaller than the corresponding ratio for engineers, mathematicians, or physicists. Relatedly, Martinez et al. (2007) also found extremely different career plans for men and women in biomedical fields. And recent research by Cheng (2020) also found that in biological sciences, mothers are much less likely to enter or remain in tenure-track jobs.

Table 1. Percentage of Women Among Applicants, Interviewees, and People Offered Positions in 545 Tenure-Track Searches

Note: Data were obtained from National Research Council (2010, Table S-2, p. 7).

Such leakage is likely the result of systemic challenges posed by inflexible tenure-track jobs, coupled with women’s and men’s differing experiences with and expectations of childbearing and parenting. However, it says nothing about bias in the tenure-track hiring process, which may be gender fair for those women who do apply. Thus, it is important to control for actual numbers of women applying for tenure-track jobs rather than assume that equal percentages of men and women who earn PhDs vie for tenure-track jobs. The latter is simply not true.

To recap, despite claims of gender bias in tenure-track hiring, our national cohort analyses show no increased likelihood that men proceed to tenure-track jobs relative to women in the very fields in which women are most underrepresented (GEMP), although there is a difference in LPS fields. A major factor, if not the only factor, responsible for women being less likely to make this transition is that they do not apply as often for these jobs. Neither of these facts, however, directly answers the question, “If an equally qualified man and woman apply for a tenure-track job, will the man be more likely to get it?” Below, we describe three kinds of studies that directly address this question: (a) cohort studies limited to graduates who entered academia, (b) large-scale institutional analyses, and (c) experimental evidence. These three categories of evidence point in the same direction, which suggests gender-fair or female-preferred hiring.

Cohort analyses limited to people likely to apply

In the United States, two studies limited their analyses of hiring to graduates who competed for academic jobs at some point, including only women and men who entered academic jobs, and measured whether women were hired into more prestigious departments. In computer science, Way et al. (2016) found that more highly ranked departments hired women and men at comparable rates, holding constant publications, department prestige, geography, and postdoc experience. An earlier study by Clauset et al. (2015) used the same methodology on more fields but had no information on applicants’ publications or postdocs: This study found that women tended to be hired by lower-ranked departments than men were. Combining these two findings suggests that publications and postdocs accounted for the entirety of the tenure-track hiring gender gap in prestigious departments.

Two other studies relate to German academia, whose academic system differs from that of the United States: There are no entry-level tenure-track jobs, only (national) competitions for tenured professorships (the only academic rank with permanent appointments). Schröder et al. (2021) analyzed the entire population of German political science departments in 2018 or 2019, excluding individuals with PhDs received before 1980. (These included current predocs, postdocs, temporary faculty, and professors.) Lutter and Schröder (2016) did the same for individuals in sociology departments in universities in 2013. They limited the analysis to individuals who had published at least once and who were observed in a university at that point in time. These two criteria probably eliminated a large proportion of PhDs not interested in academic research jobs.

Schröder et al. (2021) found that female political scientists had a 20% greater likelihood of obtaining a tenured position than comparably accomplished men in the same cohort after controlling for personal characteristics and accomplishments (publications, grants, children, etc.). Lutter and Schröder (2016) found that women needed 23% to 44% fewer publications than men to obtain a tenured job in German sociology departments. As Schröder et al. (2021) concluded,

Ours is the first study to use a virtually complete sample of all German academic political scientists to show that women tend to be favored over men in the hiring process for tenured professorships, before and after controlling for various factors, most importantly productivity (but see [Lutter & Schröder, 2016] for the discipline of sociology in Germany). This means that women get hired with fewer measurable publications than men do, indicating that there is no bias against women when judging their competency, different from what other studies found. (para. 52)

Evidence of bias based on actual university hiring data

Audits of actual university hiring, which often occur for affirmative action/inclusion purposes, can establish whether women who apply are less likely to be hired than men. Generally, this evidence consists of institutional data that are not posted online or accessible outside the university or available through online search engines. Below, we describe seven such reports that were published or temporarily available online before being taken down. 5

This subsection is not based on a comprehensive search but rather on institutional findings that have been made available, and therefore they may entail biases associated with a university’s willingness to make its administrative data available: Institutions that discover evidence of employment discrimination in either direction may be less likely to make such reports discoverable. On the other hand, the NRC (2010) study of approximately 500 departments in 89 of the most prestigious research-intensive (R1) universities in the United States had an 85% response rate, avoiding the above limitations. Also, some of these reports are dated, based on hiring during the 1970s to 2000. Thus, we offer the evidence in this subsection with caution, and readers may in fact wish to de-emphasize this subsection even though it is consistent with the findings from the cohort analyses described above and the experimental analyses that follow.

One of the largest sources of hiring audit data is an NRC (2010) study of 545 tenure-track hires and 97 tenured faculty hires from 1995 to 2003 at 89 R1 U.S. universities in five GEMP fields plus one LPS field (biology). As was true of the studies discussed above, this NRC report found, “The percentage of applications from women are consistently lower than the percentage of PhDs awarded to women” (p. 48). As shown in Table 1 (which reports data from Table S-2 of NRC, 2010), they found differences between disciplines and specifically that, “in the fields with the largest representation of women with PhDs—biology and chemistry—the percentage of PhDs awarded to women exceeds the percentage of applications from women by a large amount” (pp. 47–48).

This table also shows that in all disciplines, women who applied for tenure-track jobs were invited to interview and were offered positions at rates higher than men were, leading the NRC team to conclude that

women fared well in the hiring process at Research I institutions, which contradicts some commonly held perceptions of research universities. If women applied for positions, they had a better chance of being interviewed and receiving offers than male job candidates had. (NRC, 2010, pp. 4–5)

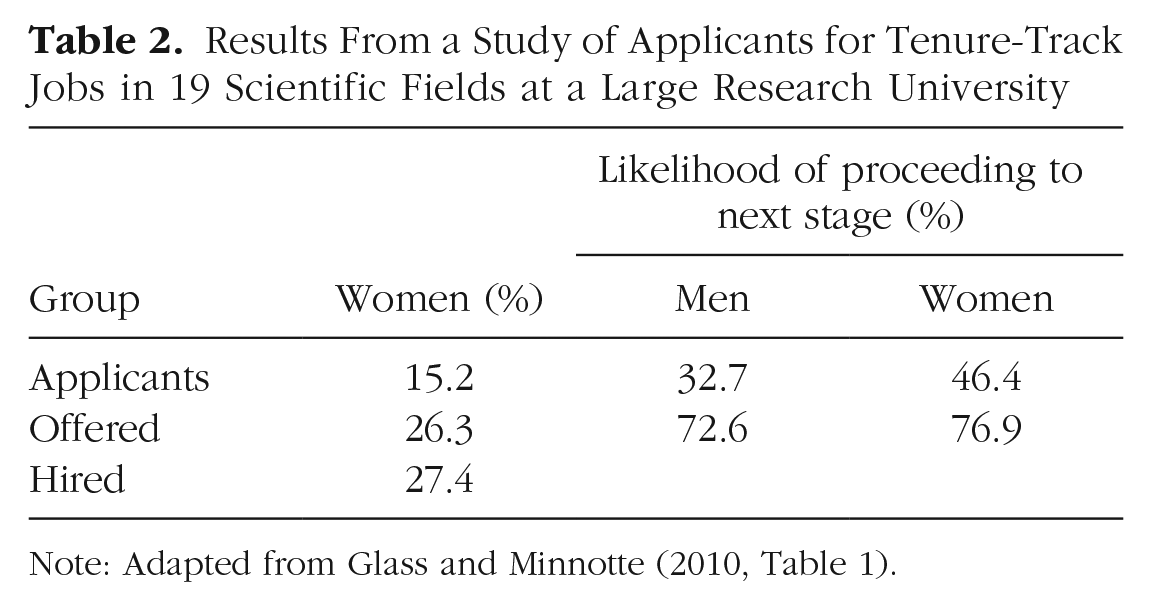

Similar results from actual university hiring data were reported for a later period (2000–2005) by Glass and Minnotte (2010), who studied 3,077 applicants for 63 tenure-track jobs in 19 scientific fields at a large research university. We calculated the percentages shown in Table 2 from their data. Again, women’s likelihood of being hired was greater than men’s.

Table 2. Results From a Study of Applicants for Tenure-Track Jobs in 19 Scientific Fields at a Large Research University

Note: Adapted from Glass and Minnotte (2010, Table 1).

There are two published Canadian reports of hiring that, although older, accord with the findings above. At the University of Western Ontario, across departments in 1992 to 1999, women constituted 23.2% of applicants, 30.4% of interviewees, and 36.2% of hires for tenure-track jobs (The University of Western Ontario, Office of the Provost and Vice-President [Academic], 2001). At Simon Fraser University and University of British Columbia in 2001, of 4,525 applicants, women were more likely than men to be one of the 105 hired, comprising 38.9% of applicants but 41.0% of those hired (Kimura, 2002).

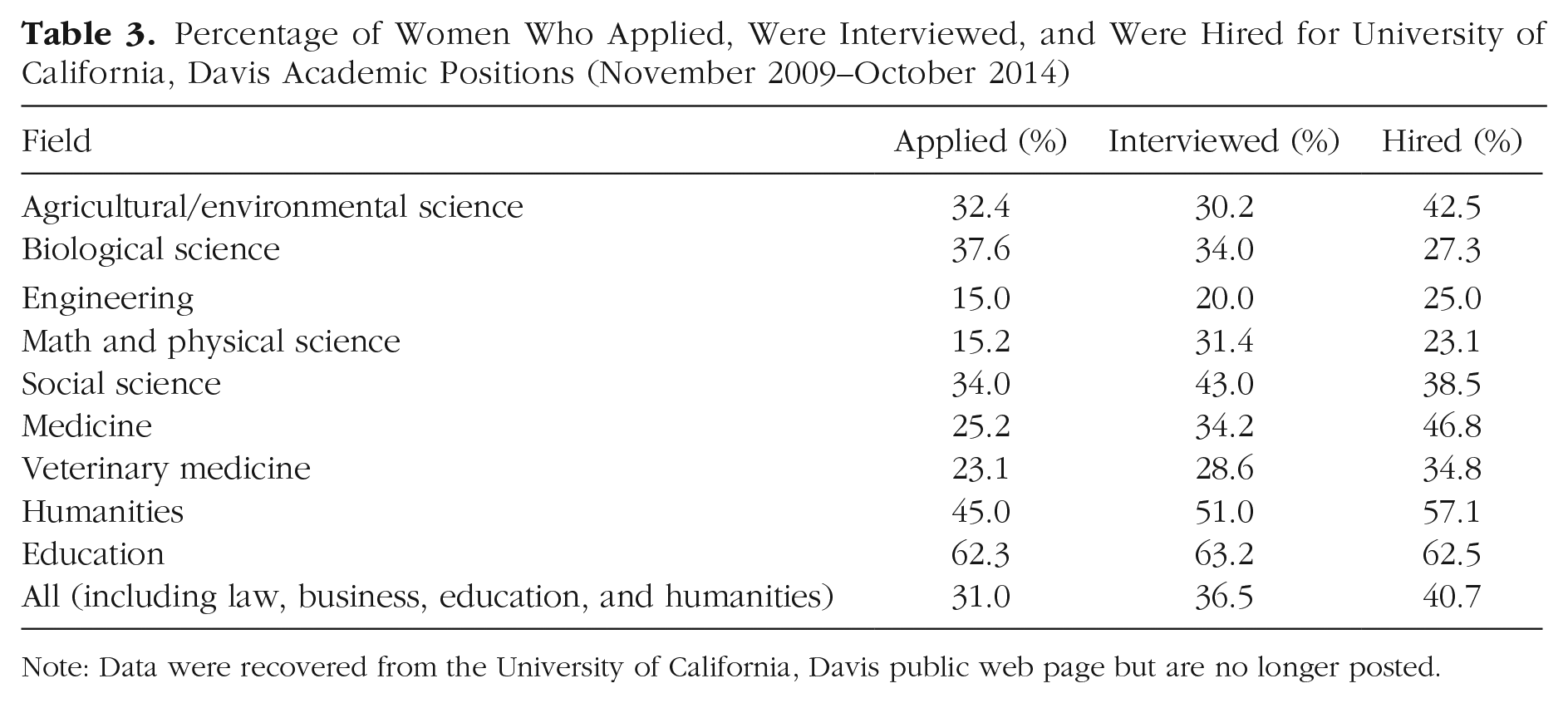

Another example of university administrative hiring data is an online report of 5 years of hiring at the University of California, Davis (November 2009–October 2014). Table 3 shows increasing percentages of women as we move through the hiring process. By our calculation, 31.0% of the University of California, Davis applicants were women, 36.5% of those interviewed were women, and 40.7% of those hired were women. As was true in the NRC (2010) findings, there was considerable heterogeneity, but in all fields except biology, the percentage of women hired surpassed the percentage of female applicants. The biology results contrast with the NRC findings, however.

Table 3. Percentage of Women Who Applied, Were Interviewed, and Were Hired for University of California, Davis Academic Positions (November 2009–October 2014)

Note: Data were recovered from the University of California, Davis public web page but are no longer posted.
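The applicant and hire shares quoted for the Canadian reports and for the University of California, Davis can be converted into a relative hire rate. If women make up a share a of applicants and a share h of hires, then women's hire rate divided by men's equals (h / a) / ((1 − h) / (1 − a)), because the total numbers of applicants and hires cancel. The sketch below applies this identity to the percentages cited above; it assumes every applicant is classified as either a woman or a man and that both shares refer to the same applicant pool, and the function name is illustrative.

```python
def relative_hire_rate(share_applicants: float, share_hires: float) -> float:
    """Women's hire rate divided by men's hire rate.

    share_applicants: women's share among applicants (0-1)
    share_hires:      women's share among those hired (0-1)
    """
    a, h = share_applicants, share_hires
    return (h / a) / ((1 - h) / (1 - a))

# Shares quoted in the text (women among applicants, women among hires)
reports = {
    "Western Ontario, 1992-1999": (0.232, 0.362),
    "Simon Fraser and British Columbia, 2001": (0.389, 0.410),
    "UC Davis, 2009-2014": (0.310, 0.407),
}

rates = {name: relative_hire_rate(a, h) for name, (a, h) in reports.items()}
for name, value in rates.items():
    print(f"{name}: women hired at {value:.2f} times the men's rate")
```

A value of 1 corresponds to equal hire rates; these ratios are descriptive and do not adjust for field or applicant qualifications.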

The same overall picture appears in administrative data from other countries. An analysis by Moratti (2020) of hiring for the decade from 2007 to 2017 at Norway’s largest university revealed no gender bias in hiring: Seventy-seven searches generated 1,009 applicants for new associate professorships, with women slightly more likely than men to be hired, leading Moratti to conclude that

women applicants between 2007 and 2017 had a slightly higher likelihood of victory compared to their men competitors. . . . Much of the international literature . . . on application patterns and selection outcomes for permanent academic positions reports that women are either reluctant to apply or systematically dispreferred (or both); that is not what we found. (pp. 924 & 927)

These analyses of institutional hiring data are consistent with a national audit of computer-science hiring showing that women were more successful in obtaining offers of faculty positions. The Computing Research Association commissioned a national audit of U.S. and Canadian computer-science hiring (Stankovic & Aspray, 2003). The audit found that new women recipients of PhDs applied for far fewer academic jobs than men: Women with PhDs applied for six positions, whereas men applied for 25 positions. However, female PhDs were offered about twice as many interviews per application as men were (0.77 vs. 0.37). Further, women received 0.55 job offers per application, whereas men received only 0.19: “Obviously women were much more selective in where they applied, and also much more successful in the application process” (Stankovic & Aspray, 2003, p. 31).
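As a rough arithmetic check on the per-application figures just quoted, the female-to-male ratios can be computed directly. The offers-per-interview figure below is a back-of-the-envelope quantity that assumes the two reported averages can simply be divided; it is not a statistic reported in the audit, and the variable and function names are illustrative.

```python
# Per-application averages quoted in the text from Stankovic and Aspray (2003)
interviews_per_application = {"women": 0.77, "men": 0.37}
offers_per_application = {"women": 0.55, "men": 0.19}

def female_to_male_ratio(rates: dict) -> float:
    """How many times larger the women's figure is than the men's."""
    return rates["women"] / rates["men"]

def offers_per_interview(group: str) -> float:
    """Assumption of this sketch: ratio of the two reported averages."""
    return offers_per_application[group] / interviews_per_application[group]

interview_ratio = female_to_male_ratio(interviews_per_application)
offer_ratio = female_to_male_ratio(offers_per_application)

print(f"interviews per application, women vs. men: {interview_ratio:.2f}x")
print(f"offers per application, women vs. men:     {offer_ratio:.2f}x")
for group in ("women", "men"):
    print(f"{group}: about {offers_per_interview(group):.2f} offers per interview")
```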

In summary, all of the seven administrative reports reveal substantial evidence that women applicants were at least as successful as and usually more successful than male applicants were, particularly in GEMP fields. (This is also the case in an eighth study that we chose to omit because its authors have not yet made their data publicly available.) Several of these reports also found that women PhDs were less likely to apply to these jobs than men. This conclusion is corroborated by Salinas and Bagni’s (2017) review of European studies of the underrepresentation of women scientists:

Analyses of the female candidates applying for independent positions suggest that [underrepresentation of women] is not due to discrimination against women, but rather to the fact that fewer women apply for jobs as independent investigators. . . . Once a female applicant enters the recruitment process, she has an equal chance to be offered the position compared to her male counterparts. (p. 722)

To recap, both the cohort analyses that are limited to individuals who likely applied for academic jobs and the seven university administrative hiring analyses just reviewed point to gender-fair or even pro-female hiring. Moreover, even without limiting analyses to applicants or likely applicants, women PhDs in GEMP fields are just as likely as men to proceed to tenure-track jobs. On the other hand, the cohort analysis for women PhDs in LPS fields found that women were less likely than men to proceed to tenure-track jobs. However, there is some evidence that women in biology are particularly less likely to seek tenure-track jobs.

Unfortunately, the audits of administrative hiring data presented above are limited to what is publicly available, and much of it is at least 15 years old. It is possible—even likely—that similar data exist at other universities but are not publicly posted. Despite numerous search strategies, we were unable to unearth these data. We hope that studies similar to these are pursued to add to the results to date.

Below, we discuss the final source of hiring information—experimental studies. Overall results accord with the same gender-fair (or pro-female) interpretation seen in the administrative and cohort findings just reviewed.

Experiments in hypothetical hiring

The above large-scale analyses of nationally representative cohort data, as well as institutional data, document gender-neutral or pro-female hiring but not its cause. To explore whether women are favored in tenure-track hiring because they are stronger candidates (e.g., women’s greater attrition during graduate school could theoretically result in those who survive being stronger and having more publications) or for other reasons (e.g., desire for diversity), some researchers have conducted experiments or quasi-experiments in which identically qualified men and women vie for jobs. These studies do not mirror hiring by search committees because (a) when faculty in experiments evaluate hypothetical applicants, they may engage in virtue signaling because nothing is at stake, and (b) actual hiring is influenced by discussions among committee members or entire departmental faculties, and such conversations are missing from hiring experiments. On the other hand, experiments provide a means of testing many hypotheses, such as whether variations in a given factor (e.g., one extra publication or the presence of children) affect women’s and men’s hirability similarly.

We conducted an exhaustive search on Web of Science and Google Scholar of studies of CV-matched tenure-track hiring experiments. Only four such studies met our inclusionary criteria of being tenure-track experiments in the 2000 to 2020 period (and a fifth was from 1999). We supplemented these searches with a bottom-up search of every individual citation of each of these five studies (> 1,300 citing studies). No other experimental tenure-track study emerged during the past 20 years, save one that was published a few months after the closing of the inclusion period (Henningsen et al., 2021).

We begin with a 1999 experimental study of tenure-track hiring, even though it fell 1 year outside our inclusion period. We decided to include it because it had more Google Scholar citations (913 through 2021) than the other four studies combined, and it is the sole study to show results in the opposite direction from our conclusion. Steinpreis et al. (1999) sent 238 academic psychologists one of two hypothetical CVs of a fictional scientist applying for a new tenure-track post, with either a female or a male name. Male candidates were more likely to be recommended for a tenure-track job by both male and female psychologists. (Steinpreis et al. also studied promotion to tenure for two identically qualified advanced candidates but found no gender difference.)

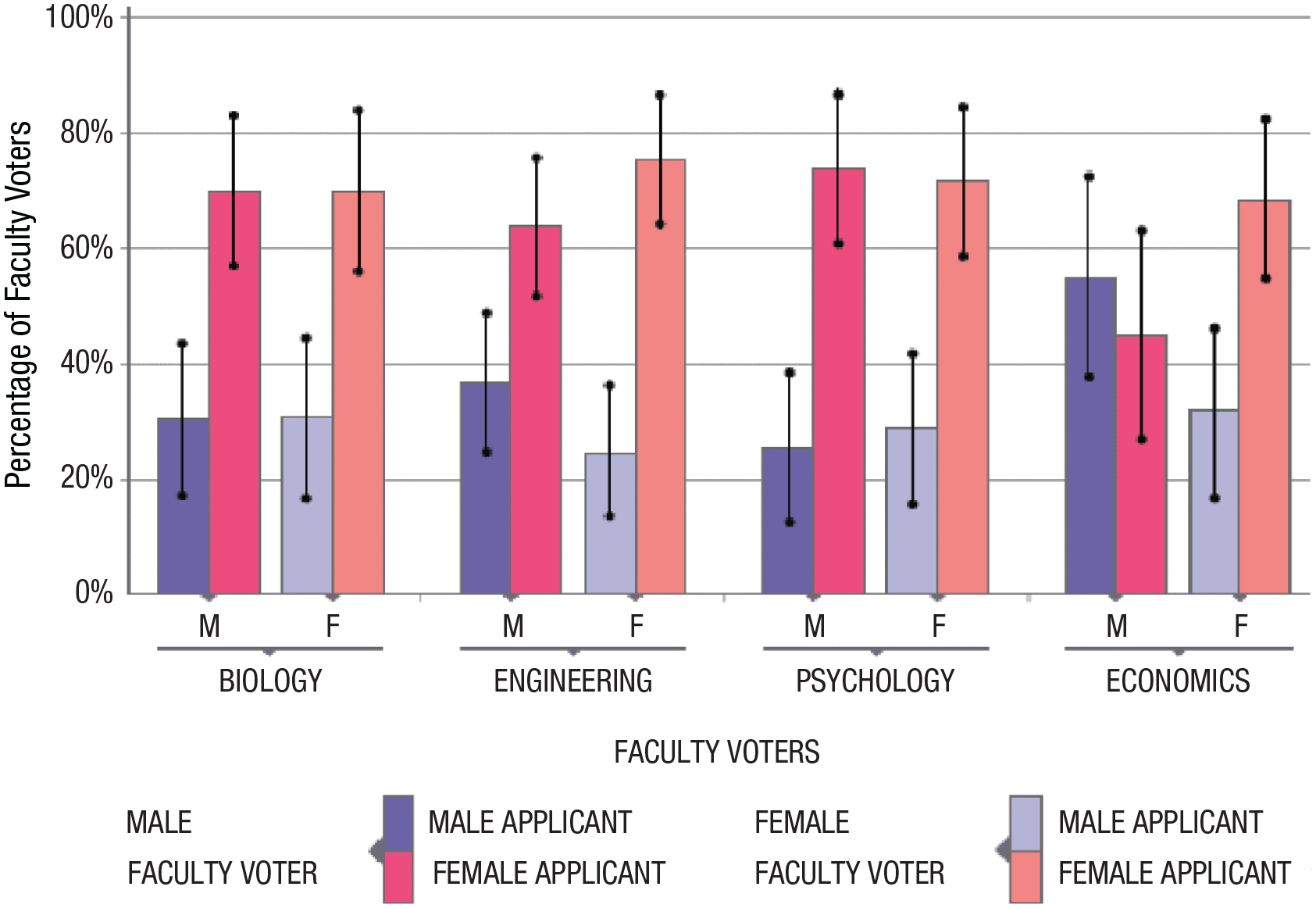

Williams and Ceci (2015) studied a stratified national sample of 872 faculty from two GEMP fields (engineering and economics) and two LPS fields (biology and psychology) to determine preferences for identically qualified men and women possessing outstanding credentials. Figure 3 shows that in the authors’ main experiment (N = 363), faculty expressed a significant preference for hiring women. This pro-female preference was similar across fields, types of institution, and gender and rank of faculty. The only group in which the preference did not appear was male economists, who showed no gender preference. The difference between the findings of Steinpreis et al. (1999) and Williams and Ceci (2015) may be due to differences in the strength of the hypothetical candidates (see Ceci & Williams, 2015), although both studies described them as being very strong, or perhaps to changing faculty attitudes toward gender diversity over the intervening 16 years; Steinpreis et al.’s data were collected in the mid-1990s.

Fig. 3. Percentage of faculty members who ranked male (M) and female (F) applicants as the top candidate, separately for male and female faculty voters in each of four disciplines. Error bars indicate ±1 SE. Adapted from Williams and Ceci (2015, Experiment 1).

In a natural experiment, French economists used national exam data for 11 fields, focusing on PhD holders who form the core of French academic hiring (Breda & Hillion, 2016). They compared blinded and nonblinded exam scores for the same men and women and discovered that in male-dominated fields (math, physics, philosophy), women received higher scores when their gender was known than when it was not, indicating a positive bias, and that this difference strongly increased with a field’s male dominance. Specifically, women’s rank in male-dominated fields increased by up to 40% of a standard deviation. In contrast, male candidates in fields dominated by women (literature, foreign languages) were given a boost over expectations based on blind ratings, but this difference was small and rarely significant. 6

Carey et al. (2020) conducted an experiment with 869 faculty at two U.S. state universities. They presented participants with two profiles of hypothetical candidates to be hired as faculty members. Each profile included several attributes relevant to the hiring decision, one of which was randomly selected per faculty respondent and the order of which was randomized across participants. This resulted in a conjoint analysis that allowed for the calculation of marginal component effects to reveal the combination of attributes that faculty rely on to make their faculty-hiring decisions. The authors found that all else being equal, faculty were between 5% and 10% more likely to favor a female candidate or a gender nonbinary candidate, respectively, over an identically accomplished male.
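The logic of a marginal component effect in a conjoint design can be illustrated with a toy calculation. When attribute levels are randomized independently, the effect of one level relative to a baseline can be estimated as a simple difference in the proportion of profiles chosen. This is a minimal sketch under that assumption, not the estimation procedure used by Carey et al. (2020), which is not described here; published conjoint analyses typically use regression with respondent-clustered standard errors. The attribute names and all ratings are hypothetical.

```python
from statistics import mean

def marginal_component_effect(profiles, attribute, level, baseline):
    """Difference in the share of profiles chosen between two levels of one attribute.

    Interpretable as a causal effect only if attribute levels were randomized.
    """
    chosen_at_level = [p["chosen"] for p in profiles if p[attribute] == level]
    chosen_at_base = [p["chosen"] for p in profiles if p[attribute] == baseline]
    return mean(chosen_at_level) - mean(chosen_at_base)

# Hypothetical, hand-made responses for illustration (NOT data from any study).
# chosen = 1 if the respondent preferred this profile, 0 otherwise.
profiles = [
    {"gender": "female", "pubs": "high", "chosen": 1},
    {"gender": "female", "pubs": "low", "chosen": 1},
    {"gender": "female", "pubs": "high", "chosen": 1},
    {"gender": "female", "pubs": "low", "chosen": 0},
    {"gender": "male", "pubs": "high", "chosen": 1},
    {"gender": "male", "pubs": "low", "chosen": 0},
    {"gender": "male", "pubs": "high", "chosen": 1},
    {"gender": "male", "pubs": "low", "chosen": 0},
]

gender_effect = marginal_component_effect(profiles, "gender", "female", "male")
pubs_effect = marginal_component_effect(profiles, "pubs", "high", "low")
print(f"female vs. male: {gender_effect:+.2f}")
print(f"high vs. low publications: {pubs_effect:+.2f}")
```

Because each attribute is varied independently of the others, the same set of responses yields a separate estimate for every attribute, which is what lets a conjoint design compare the weight of gender against that of other candidate characteristics.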

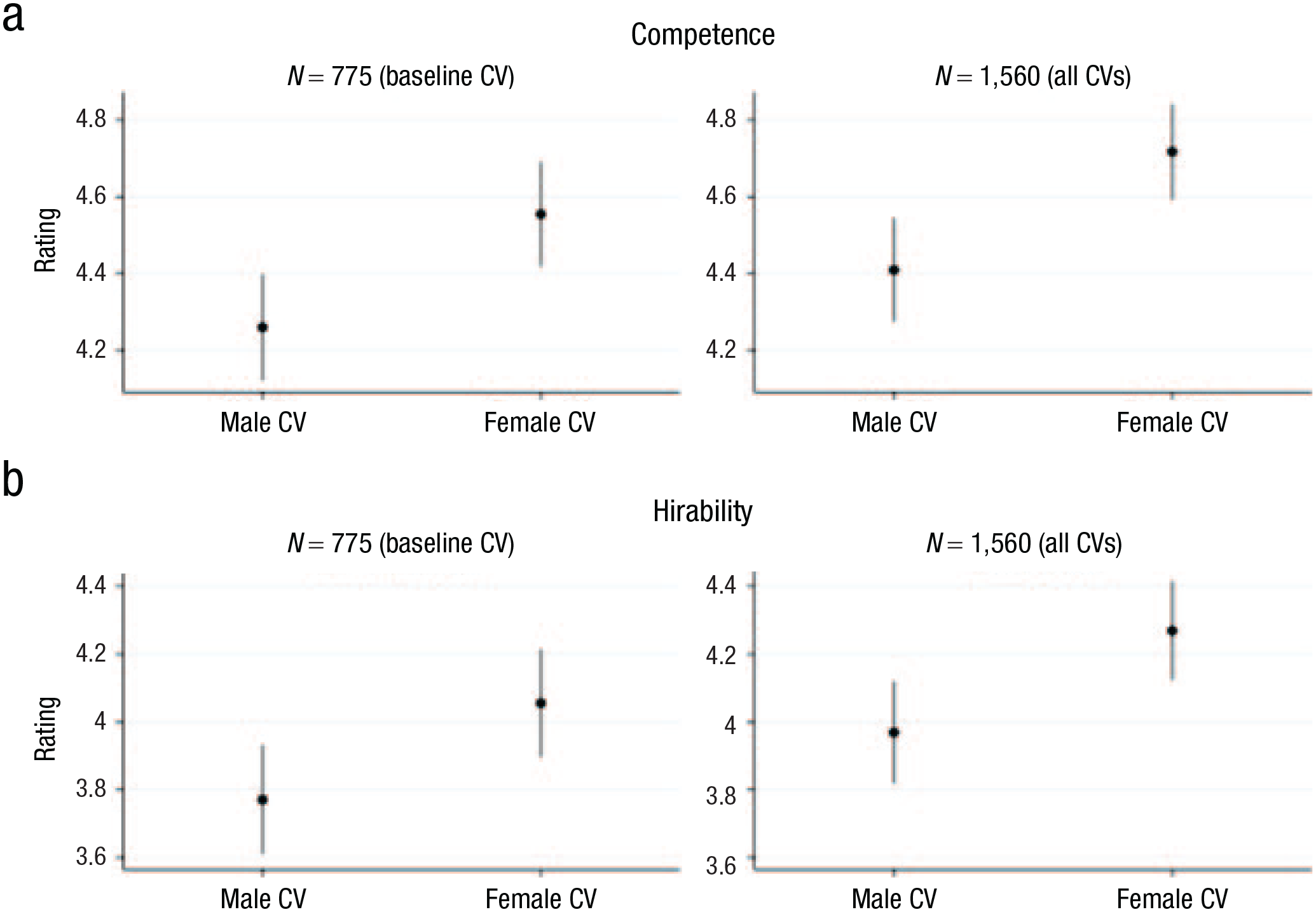

Finally, a large-scale experiment by M. Carlsson et al. (2021) using faculty at 17 large institutions in Iceland, Sweden, and Norway also revealed a pro-female hiring bias. Their experimental design most closely resembles that of Williams and Ceci (2015), who found a significant female advantage, although Carlsson et al. used a between-subjects manipulation rather than the within-subjects manipulation that Williams and Ceci used in most of their experimental conditions. On the basis of actual CVs, Carlsson et al. prepared experimental CVs that varied the hypothetical applicant’s gender, marital status, family status (children vs. no children), and research productivity (four vs. six international publications). Out of a target population of 2,000 faculty in STEM fields plus law, 775 faculty agreed to participate (39%). The authors found a significant pro-female advantage, with faculty rating female applicants’ competence and hirability significantly higher than identically accomplished male applicants’. This can be seen in the nonoverlapping competence and hirability ratings in Figure 4.

Fig. 4. Mean ratings of curricula vitae (CVs) for (a) competence and (b) hirability of male and female candidates, separately for analyses including only baseline CVs (left column) and all CVs (right column). In all graphs, the gender difference is statistically significant. Error bars show confidence intervals. Graph reproduced from M. Carlsson et al. (2021, Fig. 2).

These findings contradicted several of M. Carlsson et al.’s (2021) preregistered hypotheses, leading them to conclude as follows:

We expected our survey experiment to reveal a male advantage. . . . We also expected female candidates to have a lower return to children and strong[er] CVs than males. . . . Contrary to our main hypothesis, however, [our analyses showed that] female candidates are perceived as both more competent and hireable compared to equally qualified male candidates. Furthermore, we find no evidence of a child penalty for [either] male [or] female applicants and no gender difference in the pay-off from a strong CV. (p. 407)

Experimental studies of hiring for non-tenure-track jobs

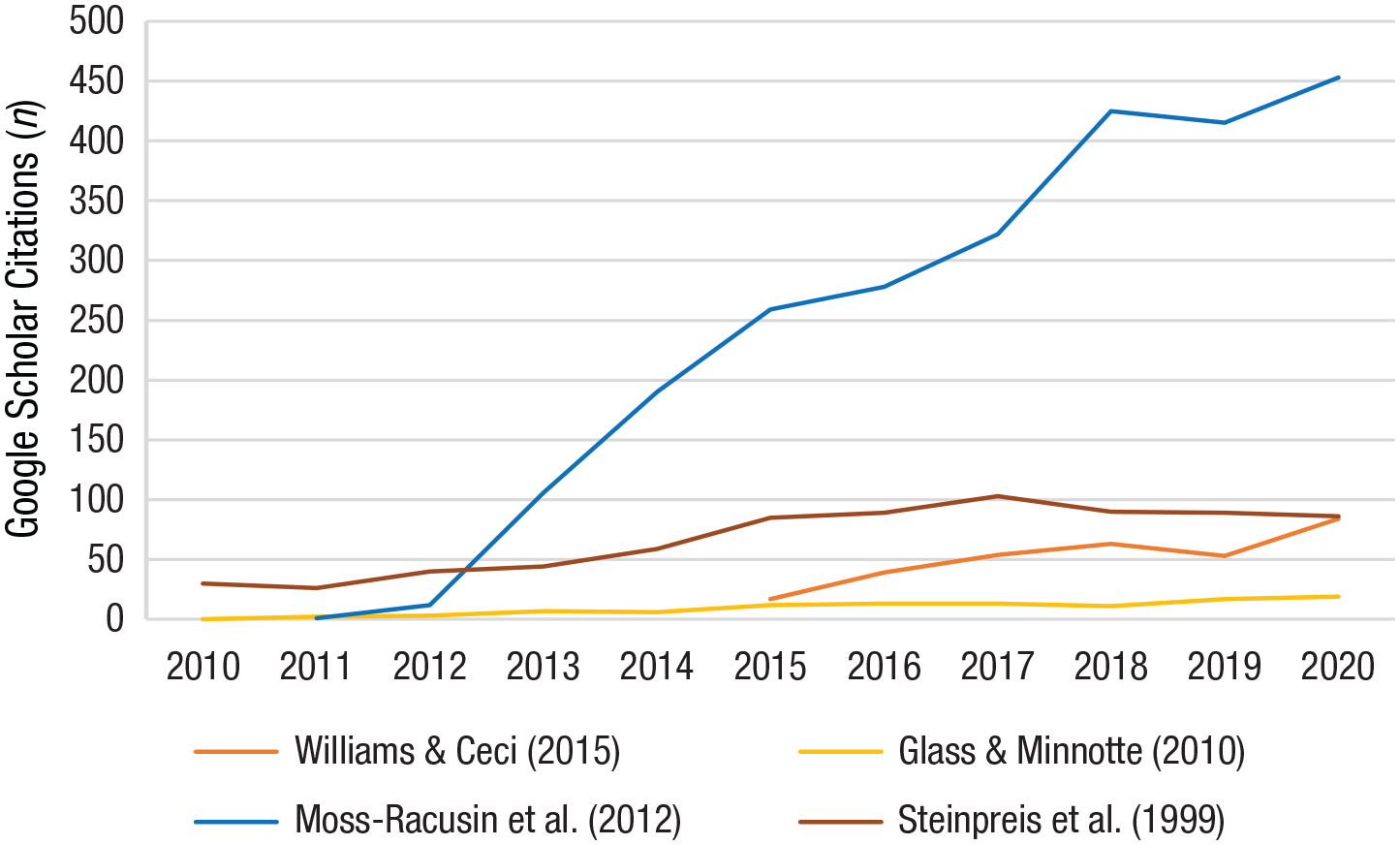

Although the above synthesis focuses on tenure-track hiring, there is a large research corpus that fell outside our inclusionary criteria because it concerned experimental and nonexperimental studies of non-tenure-track hiring. Hundreds of experiments, quasi-experiments, field audits, and observational studies of non-tenure-track hiring have been conducted, focusing on hiring of lab managers, civil servants, software engineers, postdocs, and student employees. In contrast to tenure-track hiring studies, these studies have often reported significant pro-male hiring advantages and double standards (e.g., see Koch et al.’s, 2015, meta-analysis; see also Foschi, 1996; Foschi et al., 1994; Moss-Racusin et al., 2012; Reuben et al., 2014). Some of these studies are highly influential and have been cited to support beliefs about bias in tenure-track hiring, even though none of them involved tenure-track hiring. This is conceptually problematic for reasons we offer below.

Koch et al.’s (2015) meta-analysis of 136 experimental studies of hypothetical hiring situations (not tenure track) explains why tenure-track hiring is unlikely to be gender biased. Koch and her colleagues found that bias was drastically reduced or completely absent when three conditions held: The evaluators were experienced professionals, they were motivated to make careful decisions about whom to hire, and the available information about applicants clearly indicated their high competence. In such cases, gender bias was nonexistent (.02 SD). Tenure-track hiring checks all three of Koch et al.’s boxes: Faculty doing tenure-track hiring are experienced professionals, applicants for tenure-track posts have high observable competence (CVs, talks, interviews), 7 and faculty are motivated to make careful decisions because unlike in typical experiments, their decisions result in lifetime colleagues.

Summary

The vast majority of findings—from (a) synthetic cohort analysis, (b) institutional hiring records, and (c) experiments—indicate that women are less likely than men to apply for tenure-track jobs, but when they do apply, they receive offers at an equal or higher rate than men do. Even though these three sources of evidence cannot be meta-analyzed, their findings, and those in powerful new experiments, 8 point in the same direction and are not consistent with claims of widespread bias against hiring women for tenure-track jobs. These conclusions extended to studies discussed here from the 1990s. However, there are no experimental studies of academic hiring between 1960 and 1990. Evidence from outside academia, including Schaerer et al.’s (2022) meta-analysis, suggests decreasing gender bias in hiring from 1976 to 2009; similarly, Birkelund et al.’s (2022) harmonized, cross-national callback analysis shows no discrimination against women in six countries differing along institutional, cultural, and economic dimensions. 9

None of this means that women do not face very real barriers in completing their doctoral and postdoctoral training and segueing to tenure-track careers. For example, women are more likely than men to give up their initial aspirations to become tenure-track professors while in graduate school, a finding primarily true of women with children or contemplating children. Undoubtedly, broad systemic factors are partly responsible, along with biological factors, for these women not applying for tenure-track positions. But the data do show that the reason women do not occupy a larger fraction of tenure-track positions is not a discriminatory tenure-track hiring process, as many researchers have alleged.

A counter to this conclusion is that in LPS fields (the very fields in which women are very well represented), female PhDs are less likely than male PhDs to apply for tenure-track positions, and this appears to be the primary reason why there are not even more female tenure-track assistant professorships in LPS fields than in the feeder PhD cohorts—something easy to overlook in view of the gender parity or even female superiority among assistant professors in LPS fields.

As shown in the large national analyses of actual tenure-track hiring by various universities and the NRC (2010) panel, female applicants in GEMP fields are usually either equally or more likely than men to be offered tenure-track jobs. Nearly all analyses in the past two decades accord with this conclusion, including the largest and best-controlled ones. Identical-CV quasi-experiments, which two decades ago revealed significant bias against women (Steinpreis et al., 1999), today do not. In the 22 years since Steinpreis et al.’s (1999) study, their finding has been supplanted by neutral and pro-female hiring results from larger studies (Breda & Hillion, 2016; Carey et al., 2020; M. Carlsson et al., 2021; Henningsen et al., 2021; Williams & Ceci, 2015). Identical-CV experiments for non-tenure-track jobs, particularly when candidates do not have unambiguously high qualifications (Eaton et al., 2020; Moss-Racusin et al., 2012) and when evaluators are neither experienced professionals nor have much at stake, are not predictive of the outcomes of hiring of excellent male and female tenure-track applicants, despite these non-tenure-track-hiring studies being cited more often than the latter (evidence presented in the Discussion section).

Evaluation Context 2: grant funding

In this section, we address the frequent claim of bias in grant reviews: “Understanding and targeting potential sources of bias in grant selection processes could be particularly important in improving the career advancement of women” (Alvarez et al., 2019, p. E9).

Grants are crucial to most of science. This makes it especially important to ask whether funding agencies are more likely to fund grant applications from men than from women. We synthesize that literature here.

The obvious way to address this question is to compare men’s and women’s success rates: the probability that a grant application is funded. However, that is not the only way to pose the question. Whether a grant application is funded depends on the agency’s assessment of the success of the research, and many researchers believe that PIs who have been successful in past research and publications are also likely to be successful in their currently proposed research. In fact, many grant agencies instruct reviewers to consider the publication record of PIs. And on average, men have more of a track record both in terms of publications and in terms of past grants. There are two reasons for this. First, in most fields and years, male PIs are older and therefore have a larger corpus of publications and are more likely to have been previously funded: Male full professors are, on average, 1.78 to 3.5 years older than women (e.g., van den Besselaar & Sandström, 2017; Brower & James, 2020), and in an analysis of 3,033 tenured and tenure-earning faculty members from 17 R1 universities in the United States, Samaniego and colleagues (2023) found that men were on average 3.8 to 5.50 years older. The second reason is that female scientists publish less than men in the same cohort and field, often because of more career interruptions resulting from family leaves (see Context 7). There have been several reviews of the grants literature (e.g., Ceci, 2018; Ceci et al., 2014; Ceci & Williams, 2011). There is one meta-analysis of many older studies (Bornmann et al., 2007) and a second meta-analysis of the same data 2 years later (Marsh et al., 2009). Here, we briefly review the pre-2006 studies and meta-analyses. We then describe more recent studies in more detail, including a meta-analysis that we ourselves have done, and provide dissectional analysis.

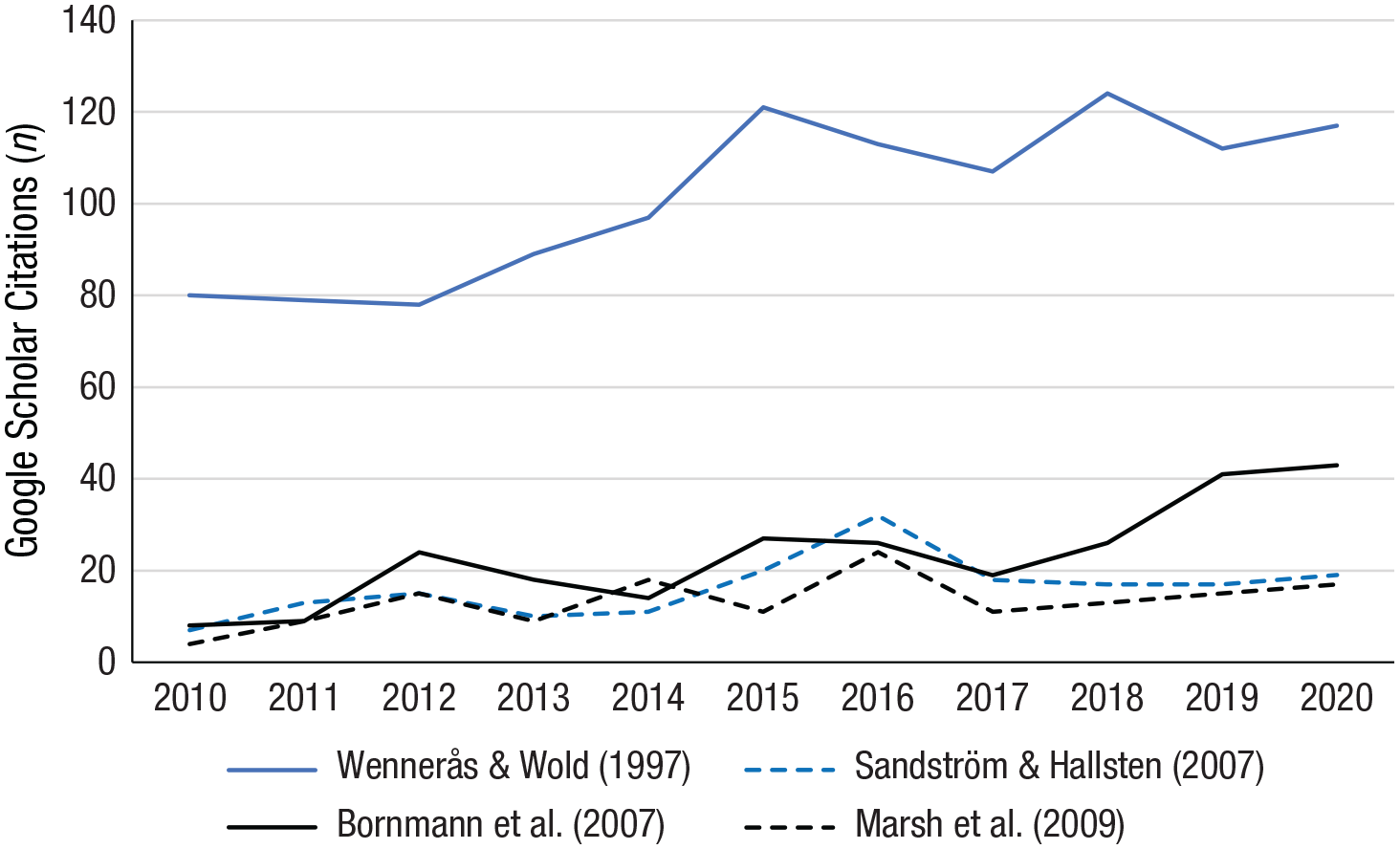

Grants before 2006

Many early studies have examined funding agencies outside the United States. In a highly cited article (1,981 Google Scholar cites as of May 24, 2022), Wennerås and Wold (1997) found Sweden’s Medical Research Council (MRC) postdoctoral fellowships to be biased against women, even after controlling for research productivity. This study has been repeatedly invoked as prima facie evidence of gender bias in grants even after controlling for productivity, for example by Kaatz et al. (2014): “Lending support to this is the classic study by Wenneras [sic] and Wold in which female applicants for a postdoctoral research fellowship needed more than twice as many publications to receive the same competence scores as comparable male applicants” (p. 372). Notwithstanding this claim, Wennerås and Wold’s methodology was problematic (see, e.g., Ceci & Williams, 2011, supplemental text S4; Hansson, 2009), and their data were lost (according to Wennerås and Wold), precluding reanalysis. Sandström and Hällsten (2008) also analyzed Swedish MRC postdoctoral fellowships, now for 2004, using better statistical methodology than Wennerås and Wold’s. In contrast to the latter’s claim of antifemale bias, Sandström and Hällsten found a 10% advantage for women. They (and Wold subsequently, in Wold & Chrapkowska, 2004) note that institutional changes at the MRC over this period may have improved women’s success rates.

There were many other studies of grants during this early period: some found bias against women, a few found bias against men, and many found small and insignificant gender differences (for reviews, see Ceci & Williams, 2011; Grant et al., 1997). In their meta-analysis of these 21 older studies, Bornmann et al. (2007) found that the odds of being funded (expressed as an odds ratio of women relative to men) were on average 7% lower for women, with variation ranging from 22% higher for women to 23% higher for men. However, they (along with colleagues) conducted a second meta-analysis of the same data 2 years later (Marsh et al., 2009), employing an improved methodology, and found “no evidence for any gender effects in favor of men, and even some evidence of an effect in favor of women. . . . This lack of gender difference for grant proposals is very robust” (p. 1311). For postdoctoral fellowships, they did find “a small, but highly statistically significant difference in favor of men,” but concluded that “the size of this effect is sufficiently small that we still interpret [gender differences] as supporting a gender similarity hypothesis” (p. 1311).
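Because an odds ratio is not the same thing as a ratio of success rates, it can help to see what a 7% reduction in odds implies for the success rate itself. The following is a minimal sketch, not a reconstruction of Bornmann et al.'s analysis: the 25% baseline success rate for men is a hypothetical value chosen only for illustration, and the function names are our own.

```python
def odds(p: float) -> float:
    """Convert a probability of being funded into odds."""
    return p / (1.0 - p)


def prob_from_odds(o: float) -> float:
    """Convert odds back into a probability."""
    return o / (1.0 + o)


def women_rate_given_or(men_rate: float, odds_ratio: float) -> float:
    """Women's success rate implied by an odds ratio (women relative to men)."""
    return prob_from_odds(odds_ratio * odds(men_rate))


# Hypothetical baseline (an assumption, not a figure from the studies):
# men are funded 25% of the time and women's odds are 7% lower (OR = 0.93).
men_rate = 0.25
women_rate = women_rate_given_or(men_rate, 0.93)
gap_ppt = 100 * (men_rate - women_rate)
print(f"women's rate: {women_rate:.3f}; gap: {gap_ppt:.1f} percentage points")
```

Under this assumed baseline, a 7% difference in odds corresponds to a gap of a little over one percentage point in success rates; the gap would differ at other baseline rates.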

We will not separately discuss all 21 of the early studies in the Bornmann et al. (2007) metastudy, except to note that only two of them controlled for productivity (one of which was Wennerås & Wold, 1997, discussed above), but we will summarize the few pre-2006 studies of U.S. granting agencies not included there. A large study of funding cycles from 1997 to 2004 (Hosek et al., 2005) for several U.S. agencies was not included in the Bornmann et al. (2007) and Marsh et al. (2009) meta-analyses. Hosek et al. (2005) found that women had lower success rates and received less money per award than men at the NIH (for 2001–2003 grant cycles), although an earlier study of the NIH found no such gender difference. At the NSF, Hosek et al. found wide differences year to year, ranging from advantages for women to advantages for men, with no time trend and no overall gender difference. A final study of earlier grants found that women and men at Harvard Medical School from 2001 to 2003 had similar grant success: “controlling for academic rank, grant success rates were not significantly different between women and men” (Waisbren et al., 2008, p. 207). That study also found similar ratios of money awarded to money requested.

As noted, only one pre-2006 study besides Wennerås and Wold (1997) had controls for research productivity: Bornmann and Daniel’s (2005) study of German Boehringer Ingelheim Fonds for postgraduate fellowships from 1985 to 2000 found that when productivity was controlled, there was no gender bias.

In sum, pre-2006 evidence suggests that although some agencies evaluated men and women differently, on average they did not. Moreover, even if the flawed Wennerås and Wold (1997) research correctly identified bias, a later study of the same agency (Sandström & Hällsten, 2008) reversed their finding, showing that no bias existed by 2004, and even provided some evidence that women’s grant applications at the same agency were favored.

Later grant studies and a new meta-analysis. 10

Because the only large meta-analysis of grant studies used data from before 2006 (90% before 2001) and analyses of grant awards have proliferated greatly since then, we conducted a series of meta-analyses of gender differences in grant awards starting with the award year of 2000 and ending in 2020 (see Kahn et al., 2022, which contains PRISMA diagrams, funnel graphs, and forest plots). We briefly summarize our approach and results here.

To have a truly comparable measure of grant success across all studies in our meta-analysis, we focused on one outcome measured in the same way: the gender difference in the percentage of applications that were funded. Of course, equal average success rates would not necessarily be evidence of gender fairness, for two reasons. First, one gender may have better research proposals or better past productivity. However, on the basis of past productivity, we would expect men to have higher success rates, so equal success rates are likely to suggest no bias against women or even pro-female bias. Second, men and women may work in different fields, countries, and so on. Therefore, in addition to our basic measurement of effect sizes, we controlled for several moderators: country, years, fields, and, when possible, past productivity. We report effect sizes below as Cohen’s ds, that is, the gender difference measured in standard deviations of the success rate (Cohen, 1988, Formula 2.2.6); in this study, these equaled Hedges’s gs to three significant digits.
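To make the effect-size measure concrete, the sketch below computes a standardized difference in success rates from application and award counts. It rests on two assumptions of ours rather than on the meta-analysis itself: the standard deviation is taken to be that of a binary funded/unfunded indicator at the pooled success rate, and a positive value is taken to denote a male advantage. The counts are hypothetical, and the small-sample correction shown for Hedges's g is the common approximation 1 − 3/(4df − 1).

```python
import math


def success_rate_d(apps_m: int, funded_m: int, apps_w: int, funded_w: int) -> float:
    """Standardized gender difference in success rates (positive = male advantage).

    Assumption: the SD is that of a 0/1 funded indicator evaluated at the
    pooled success rate, sqrt(p * (1 - p)).
    """
    p_m = funded_m / apps_m
    p_w = funded_w / apps_w
    p_pooled = (funded_m + funded_w) / (apps_m + apps_w)
    sd = math.sqrt(p_pooled * (1.0 - p_pooled))
    return (p_m - p_w) / sd


def hedges_g(d: float, n_m: int, n_w: int) -> float:
    """Apply the usual small-sample correction to d (approximate formula)."""
    df = n_m + n_w - 2
    return d * (1.0 - 3.0 / (4.0 * df - 1.0))


# Hypothetical counts, for illustration only:
# 1,000 applications per gender, 250 funded for men and 240 for women.
d = success_rate_d(1000, 250, 1000, 240)
g = hedges_g(d, 1000, 1000)
print(f"d = {d:.4f}, g = {g:.4f}")
```

With samples of this size the correction factor is very close to 1, which is consistent with d and g agreeing to three significant digits when the number of applications is large.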

The criteria for inclusion were that the study must have (a) data on both the number of grants submitted and grants funded by gender and (b) been published between 2000 and November 2020 in English and been based on data from 2000 to 2020. We first searched the Web of Science 11 and then supplemented this by searching the references of articles found for others we had missed. Studies often cited online funding agency websites as their data sources, and when possible, we used these websites to add further years of data. When published studies were based on the very same agencies and grants in the same years, we were careful not to double count them.

It is important to stress that the goal of our metastudy was not to investigate what published studies claimed and argued but instead to aggregate the data on applications and grant awards to measure actual gender differences in success rates of grant applications and how they differed across various moderators.

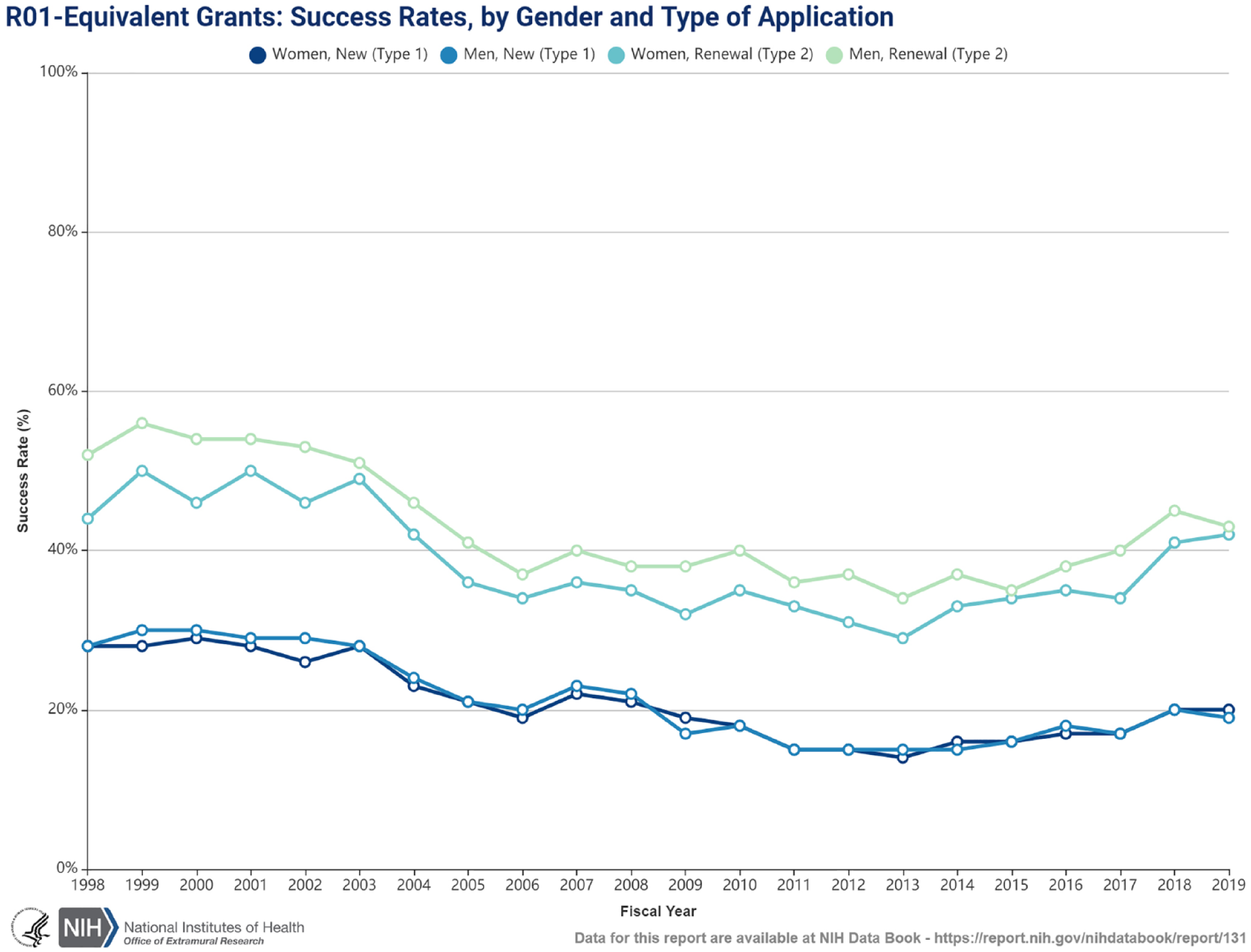

All in all, our meta-analysis included 39 studies with data on 2,051,485 grant applications and 481,485 grants awarded by 27 different granting agencies. When we simply added all grants awarded and all applications by gender, we found that the gender difference in the likelihood of acceptance was 1.1 percentage points (ppt) out of an average success rate of 23.5%. However, this assumes a one-time competition for grants. When we used meta-analysis that allowed for random effects by study and agency and calculated the gender effect size measured as Cohen’s d, we found a male advantage of 0.027 of a standard deviation, exactly equal to the 1.1-ppt difference in success rates without meta-analysis. 12 There was a great deal of between-study heterogeneity in effect sizes (I² = 91.3). In particular, effect sizes depended strongly on the location of the granting agency: United States, Canada, Europe, and elsewhere. We also measured effect sizes separately by location. Within the United States (where 84% of all grant applications we analyzed originated), the point estimate of Cohen’s d (+ 0.005) suggests that women actually had a tiny advantage, on average, although this was not significant (p = .5). In contrast, in Europe, the male advantage (d = 0.041) was equivalent to a 1.7-ppt difference in acceptance rates, whereas in Canada, the male advantage (d = 0.102) was equivalent to a large, 4.2-ppt difference in acceptance rates.
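For readers unfamiliar with random-effects pooling and the heterogeneity statistic, the following sketch shows one standard approach, the DerSimonian-Laird estimator, together with the usual I² calculation. It is a simplification: the analysis described above allowed for random effects by both study and agency, whereas this sketch has a single level, and the effect sizes and variances are hypothetical values, not data from the meta-analysis.

```python
def random_effects_pool(effects, variances):
    """Single-level DerSimonian-Laird random-effects pooling.

    Returns (pooled effect, between-study variance tau^2, I^2 in percent).
    """
    w = [1.0 / v for v in variances]                 # fixed-effect weights
    sw = sum(w)
    fixed = sum(wi * yi for wi, yi in zip(w, effects)) / sw
    # Cochran's Q: weighted squared deviations from the fixed-effect mean
    q = sum(wi * (yi - fixed) ** 2 for wi, yi in zip(w, effects))
    df = len(effects) - 1
    c = sw - sum(wi ** 2 for wi in w) / sw
    tau2 = max(0.0, (q - df) / c)                    # between-study variance
    w_re = [1.0 / (v + tau2) for v in variances]     # random-effects weights
    pooled = sum(wi * yi for wi, yi in zip(w_re, effects)) / sum(w_re)
    i2 = 100.0 * max(0.0, (q - df) / q) if q > 0 else 0.0
    return pooled, tau2, i2


# Hypothetical inputs (assumptions for illustration): three studies with
# equal sampling variances and clearly different effect sizes.
pooled, tau2, i2 = random_effects_pool([0.00, 0.05, 0.10], [1e-4, 1e-4, 1e-4])
print(f"pooled d = {pooled:.3f}, tau^2 = {tau2:.4f}, I^2 = {i2:.1f}%")
```

A high I² in such a calculation indicates that most of the observed variation in effect sizes reflects real differences between studies rather than sampling error, which is why estimates broken down by moderators such as location are informative.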

We also used multivariate methods to calculate results controlling for location, broad field, average year, and career stage of the grant program simultaneously. 13 With these controls, we found a time trend in which, on average, the male advantage (Cohen’s d) shrinks and the female advantage rises by 0.2 each year, equivalent to a decrease in male advantage of 2.4 ppt over the two decades (controlling for such variables as location). This means that even in locations with large average male advantages, these are considerably smaller by the end of the 20-year period.

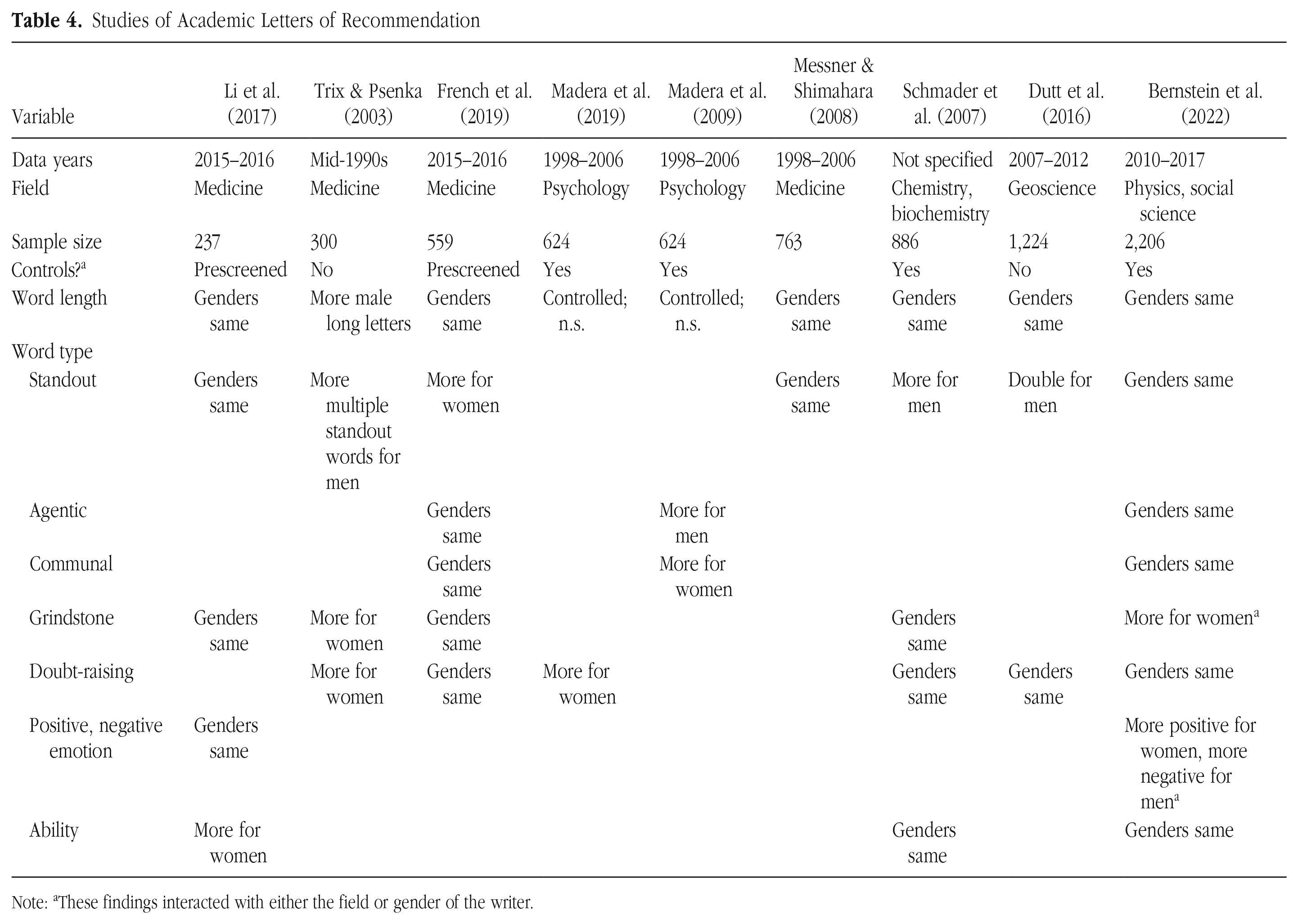

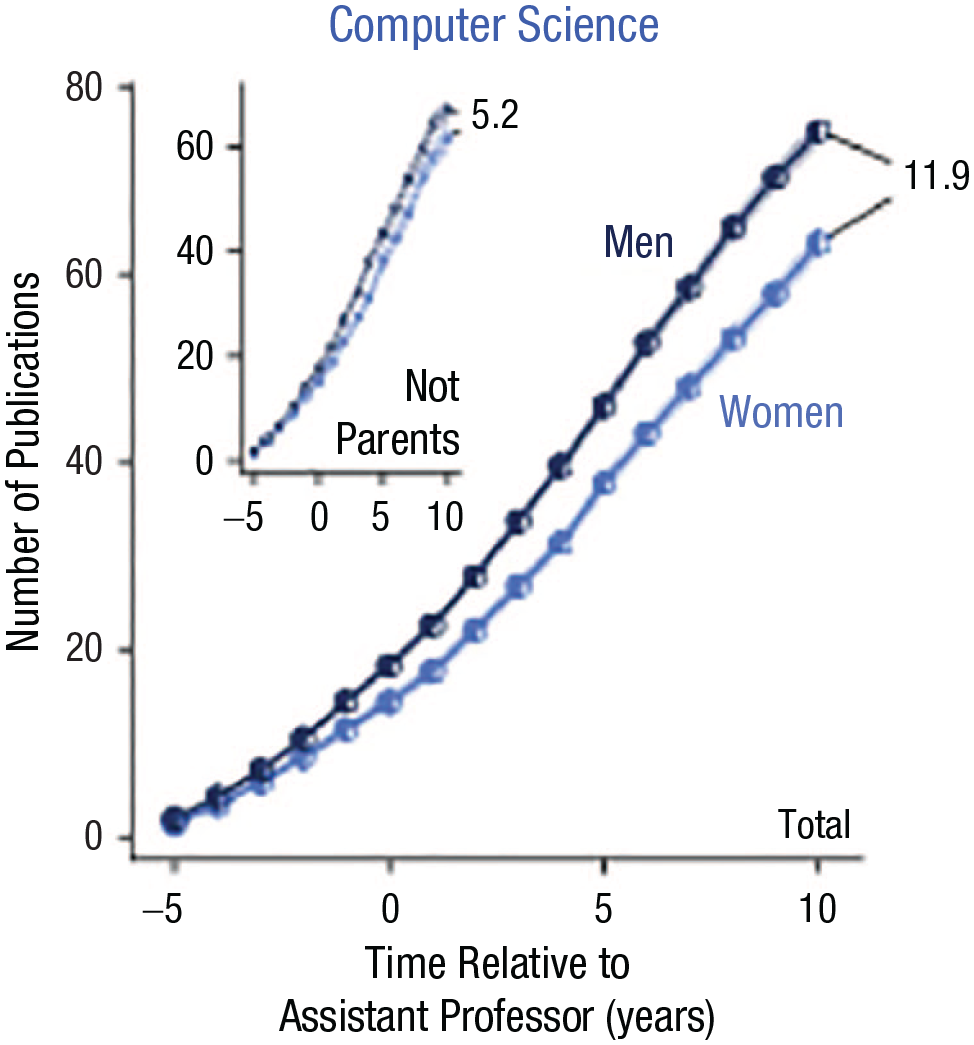

We also found that in social sciences, controlling for other factors, the male-advantage effect size (Cohen’s d) was lower (giving more advantage to women) than in other fields. It was particularly lower, by d = 0.08, than in biomedical or physical science, equivalent to a 3.4-ppt difference in acceptance rates. Grant programs for more experienced researchers had a greater female advantage (d = 0.025) than the average grant program (equivalent to a 1.1-ppt difference in acceptance rates).
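The correspondence between effect sizes and percentage-point differences reported in this section can be approximated with a short calculation. The sketch below assumes that a d value is converted by multiplying it by the standard deviation of a binary funded/unfunded outcome at the overall 23.5% success rate; this is our assumption about the conversion, and the exact procedure used in the meta-analysis may differ slightly.

```python
import math

POOLED_SUCCESS_RATE = 0.235  # average success rate reported in the text


def d_to_ppt(d: float, success_rate: float = POOLED_SUCCESS_RATE) -> float:
    """Approximate percentage-point gap implied by a standardized difference d.

    Assumption: SD of a 0/1 funded indicator is sqrt(p * (1 - p)).
    """
    sd = math.sqrt(success_rate * (1.0 - success_rate))
    return 100.0 * d * sd


# Pairs of (d, reported ppt difference) taken from the text of this section.
reported = [(0.027, 1.1), (0.041, 1.7), (0.102, 4.2), (0.08, 3.4), (0.025, 1.1)]
for d_value, ppt in reported:
    print(f"d = {d_value:.3f}: approx. {d_to_ppt(d_value):.2f} ppt (reported {ppt} ppt)")
```

Under this assumption the computed values fall within roughly 0.15 ppt of the reported figures, which suggests that a d of 0.1 corresponds to a gap of about four percentage points at success rates near 23.5%.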