Abstract

This article explores how metaphors about what the internet is inform policymaker and industry discourses, when they propose solutions on internet safety. More specifically, it analyzes documents by key players in this debate during a period when the UK government proposed direct regulation of online harms. The study finds that policy documents construct the internet primarily as a “place” that is separate from offline experience; and to a smaller extent as a “tool” that can be abused if it falls in the wrong hands. The article argues that these constructions obscure any links between online and offline risk, and that they legitimize solutions which may not take into account the social roots of online harms. It also suggests that the discourses of policymakers and SNS companies differ in the degree of agency they attribute to users, indicating a discrepancy in their approaches as direct regulation is introduced in the UK.

Scholars analyzing discourse around online risk and safety have often focused on the representation of (particularly young) internet users in public debate. A question that has received less attention in the literature is how online safety discourses conceptualize what the internet is. This article extends previous knowledge, by exploring how key institutional participants in this debate use metaphors of the internet in their narratives about online safety. It argues that metaphoric language that constructs the internet as a separate place from daily experience legitimizes separate measures to control online risk and closes off possible connections with managing offline risk.

The study analyzes policy discourse in a period when the UK government was preparing to introduce regulation against online harms. This was the first time the UK, or any major Western state, established direct regulation in this area. As will be discussed subsequently, the norm in Europe until the mid-2010s was self and co-regulation of the internet. The article analyzes the government’s proposal as well as documents by key players around the same period, to compare their discourse at this moment of veritable shift in policy.

The article uses discourse analysis to investigate how metaphor was used in selected documents published by the UK government, Ofcom (the UK’s telecommunications regulator), and two Social Network Services (SNS) companies, which operate both in the UK and internationally. It suggests that these institutions do not construct online risk as a problem embedded in social experience, but as a phenomenon that happens somewhere else, in a separate online space. I argue that this representation distances the problem from the real world and obscures its connection to behavior in other realms of life. This is significant because our daily experience and interaction with the social world are increasingly mediated digitally (Carrington 2017; Fernback 2007).

Moreover, the article finds that whereas for the policy makers studied the solution lies in direct regulation of this online space, the SNS industry discourse views internet users as responsible for their own safety. This long-established liberal perspective has its roots in decades of self-regulation in this industry, but it clashes with the current move toward state intervention.

Regulation of the Internet

Direct regulation, namely regulation of an industry by a state-appointed body that enforces its own legal rules (Marsden 2011), had not been a preferred option for regulating the internet in Europe until the mid-2010s. This was because state regulation was perceived as too rigid to respond to a complex and changing converged media sector, states were not perceived as having the insider knowledge to regulate the internet, whereas the global companies in this sector are not based within the jurisdiction of a single state (McLaughlin 2013).

Instead the preferred way of regulating the internet in its early years was self-regulation, where service providers and platforms collectively create their own codes of conduct and commit to upholding them—the industry itself creates and enforces the rules (Marsden 2011; McLaughlin 2013). Self-regulation measures to address online risk include internet companies using software that detects and filters inappropriate content on their platforms, tools for users to report misconduct, human or automated content removal, help pages and educational material for users (deHaan et al. 2013). In SNSs’ view, effective safety mechanisms teach users how to control their information sharing whilst maintaining freedom of speech (Jorgensen 2017); and have them moderate each other by signaling to the company which posted content is inappropriate, thus shifting the responsibility for identifying such content from the company to the user (Milosevic 2016, 5173). Indeed established SNSs’ published guidelines often represent responsibility for user safety as a task shared between the SNS and the user community (Milosevic 2016). This rationale, argues Staksrud (2016), outsources responsibility for citizens’ welfare from institutions to citizens themselves, denies them their citizen right to protection from harm, and makes it reliant on how they interact with the technology.

Self-regulation was the norm until the late 2000s, after which point Europe turned to co-regulation, whereby statutory regulators delegate responsibility for regulation to the industry but agree the rules with the industry and maintain an oversight of their implementation (Marsden 2011). This direction of travel from self-regulation toward gradually more involvement of the state seems to have continued, at least in the UK, in light of both the increasing role the internet plays in daily life and a decline of faith in self-regulation to alleviate online safety concerns.

In April 2019, a formal turning point for UK internet regulation policy, the Department for Digital, Culture, Media and Sport (DCMS) and the Home Office published the Online Harms White Paper, with its proposals to set out “the world’s first framework designed to hold internet companies accountable for the safety of their users” (Skelton 2020). Eventually this led to the appointment of Ofcom in late 2020 as a national regulator for online harms. Its role would be to shape rules on behalf of the state, monitor and oversee their enforcement by the industry, and apply sanctions.

Although this shift to direct regulation remains in progress at the time of writing, this article focuses on the period surrounding the publication of the White Paper. It aims to establish how metaphoric discourse both in the Paper and in publications by public bodies and industry players around that time may legitimize or oppose particular solutions. It looks at a key moment in the evolution of regulation and explores how discursive constructions of what the internet is have implications for how it is regulated.

Discourses of Online Safety

Much academic literature analyzing online safety discourses focuses on the safety of young people, who are seen as particularly vulnerable. This research has found that public discourse emphasizes the risks rather than the opportunities young people encounter online (Livingstone et al. 2018). Such risks include exposure to harmful or inappropriate content and contact with individuals who may harm them, but less attention is given to young people’s own potentially problematic conduct online (Livingstone et al. 2014). Bulger et al. (2017) suggest that internet safety discourse internationally has tended to view young people as innocent, in need of protection from risks and harms on digital platforms, but they argue that this fails to acknowledge evidence of young people’s agency in these environments.

The same rationale that characterizes broader public discourse is also reflected in media coverage of internet safety. Literature specifically analyzing mediated discourse agrees that the mass media focus on the negatives and harms of the internet (Haddon and Stald 2009; Ponte et al. 2009), view internet users as “innocent” (Haddon and Stald 2009), “passive” (Ponte et al. 2009), potentially “untrustworthy,” and in need of “monitoring” in an online environment (Fisk 2016; Hartikainen 2016), and only sometimes as potential “aggressors” (Ponte et al. 2009). The kinds of risks the media report tend to involve content and contact risks (Haddon and Stald 2009), as these were defined above. Experts and institutional voices are heard more often than users in media reports (Hartikainen 2016; Ponte et al. 2009).

However perceiving young people as active agents might be important when educating them on risk. Encountering risk can be essential in learning to protect oneself, scholars argue, and media literacy attempts should pay attention to how people deal with risk and develop the ability to cope (Fisk 2016; Staksrud and Livingstone 2009).

Similarly to the public and media discourses discussed above, policymakers broadly tend to see internet risks as isolated from young people’s social contexts. Livingstone et al. (2018) however stress that young people’s experience alters as the digital ecology changes and new generations have these technologies integrated into their lives at increasingly earlier stages. They call for research to focus “no longer [on users’] relationship with the internet as a medium but, more profoundly, [on] their relationship with the world as mediated by the internet in particular and changing ways” (Livingstone et al. 2018, 1117).

This article speaks to this context, by examining whether and to what extent the policy and industry discourses it analyzes discuss online risk as part of users’ relationship with the world. The article makes an original contribution by analyzing metaphoric discourse to address this question. As seen in the literature reviewed above, previous research on internet safety discourses has described common themes within these discourses, but it has not looked at how their use of metaphor constructs this problem and its solutions. However, how we conceptualize solutions for online risk stems from how we understand what the internet is in the first place. The present article takes a step back to analyse policy and industry solutions within the context of what we know about different metaphoric ways of understanding the internet.

Metaphors and the Internet

Lakoff and Johnson’s (1980) cognitive linguistic theory of conceptual metaphor views metaphor not just as a figure of speech, but as a way of understanding concepts in day-to-day experience. This involves constructing one conceptual domain, namely the target domain, in terms of another, the source domain, from which vocabulary is borrowed and transferred. Their premise that people not only speak but also think of target domains in terms of source domains has been criticized on the grounds that such mappings are partial and not all properties of a domain are transferred to the target; that transferring vocabulary between domains does not necessarily reflect how these domains are mentally represented; or that more detail is needed to explain the mental processes involved in metaphorical mapping (see Thibodeau et al. 2019, for a detailed discussion). However several studies have collectively shown that, although linguistic patterns cannot necessarily predict how people will behave, “metaphors can influence how people think about a wide range of sociopolitical issues” and do reflect patterns of thought (Thibodeau et al. 2019, 9).

Metaphor frames issues by selectively highlighting some of their aspects and concealing others and these frames can influence thinking within political and social institutions (Ryall 2008). Metaphors about technology have the potential to set the direction and the agenda for internet research and policy (Wilken 2013). For this reason, “it is crucial to re-examine our use of particular linguistic constructions in policy making” (Markham 2003, 14).

Markham (2003) identifies three metaphors for understanding the internet—as a tool, as a place and as a way of being—which she suggests represent an evolution in how we experience it. Seeing the internet as a tool involves perceiving it as an extension of our physical selves, that allows us to do things like accessing information located far away. Seeing the internet as a place, involves understanding it as a separate environment where we interact “online,” beyond the physical world. A view of the internet as a way of being, by contrast, suggests that there is no distinction between living on and off line, as separate “places,” but the internet is just one “way one learns about, makes sense of, and ultimately knows the social world” (Markham 2003, 10). This metaphor constructs the internet as one of the ways available to interact with others and do things, which is not separate from, nor less authentic than our offline experience (Markham 1998, 168). Consequently, risks that we may face offline, such as scam, theft, terrorism or bullying, also exist online because they are part of the same social world.

Of these three metaphors, the one that constructs the internet as physical place has long been “fundamental to how virtual technologies are framed and understood” (Wilken 2007, 49). In the 1980s and 1990s, spatial metaphors became common in describing this new technology, for instance the information superhighway and cyberspace metaphors (Blavin and Cohen 2002; Nunes 1995). Each spatial metaphor has ideological implications for what can and should be done with the internet: the superhighway metaphor legitimates external regulation and emphasizes the ephemerality of online content; whereas the cyberspace metaphor precludes regulation and emphasizes the boundless nature of the internet (Blavin and Cohen 2002). Similarly, the metaphoric construction of the internet as a platform, which is common in the discourse of social media companies, emphasizes its empowering, democratizing role and, at the same time, limits these companies’ liability for what is published on their “platforms,” thus averting external regulation (Gillespie 2010). Indeed, as the above authors demonstrate, “in so far as metaphor is intertwined with rhetoric, metaphor is never innocent” (Wilken 2013, 642) because it is part of an attempt to persuade. Internet services that position themselves as platforms are not necessarily successful in avoiding regulation when political pressures prevail; indeed social media platforms are currently being targeted by European regulation. This though does not change the fact that the associations the platform metaphor carries are part of a rhetoric that attempts to deflect responsibility from these companies.

Although the superhighway and cyberspace metaphors became redundant over time, the internet as a separate physical space remains a dominant metaphor (Matlock et al. 2014). However, constructing the internet as separate from reality conceals that it does not create problems that aren’t also encountered in the real world (Blavin and Cohen 2002). Indeed as the internet gradually becomes central to how people do things in their daily lives, Blavin and Cohen (2002) predict a move toward a metaphor of the internet as “real space” (or as a way of being in Markham’s terms). Nunes (1995, 317) goes further to suggest that the internet will one day replace real place and become “more real than real,” as our experience of life is increasingly mediated through it. Mobile smart technology providing constant online access is already contributing to this (Wilken 2013).

Similarly, Carrington (2017) highlights that “online” and “offline” are themselves metaphors which are getting increasingly old, because the internet is no longer something we “connect” to some of the time via a modem, but it is there, “ready-to-hand,” constantly. “All our lives are technologically mediated in some way” (Carrington 2017, 17) and how we experience the world is partly constructed through technology.

Method

This article applies insights from the above research on internet metaphors to an analysis of current debates on online safety. It addresses two questions:

RQ1 Which metaphors inform the way policy makers and SNS companies discuss online safety in their respective documents? What does this reveal about how they understand potential solutions to online risk?

RQ2 Who do policy makers and SNS companies represent as responsible for addressing online risk?

It uses qualitative discourse analysis of documents produced around the period of the publication of the UK government’s Online Harms White Paper. This was the first time the UK government proposed direct regulation of the internet and the article analyzes the proposal itself, as well as material about online safety published by other public and private players in the period before/after this proposal. The purpose is to compare the discourse of the government at this official turning point to that of other players in the field in the same period. More specifically, this article analyzes:

The UK government’s Online Harms White Paper, presented to the UK Parliament in April 2019 by the Secretary of State for Digital, Culture, Media & Sport and the Home Secretary, setting out plans for establishing a new regulatory framework for online safety. These plans were subsequently put out for public consultation.

The UK’s Office for Communications (Ofcom) Addressing Harmful Content Online paper, published in September 2018 and presenting the regulator’s perspective on online safety regulation. Ofcom was at the time the regulator for UK broadcasting, and also the main candidate to be allocated the role of regulating online services.

The UK Council for Internet Safety (UKCIS) Digital Resilience Framework, published in September 2019. This aimed to help internet companies in supporting resilience to risk. The UKCIS is part of the government’s Department for Digital, Culture, Media and Sport, but brings together technology companies, government and the third sector to cooperate on online safety. Its members include digital media companies such as Facebook and Google, as well as Ofcom and other key players.

All press releases and blog posts posted on the corporate websites of TikTok and Instagram, which had online safety as their subject and were published between May 2019 and February 2020.

All videos included under TikTok’s “You’re in control” online safety tag. These were published in November 2019 to educate users on TikTok’s safety features.

These actors represent UK government, regulators, industry bodies and two major SNSs which operate both in the UK and globally. TikTok and Instagram were specifically selected due to their growing number of users in the period studied (Statista 2020a, 2020b) and their popularity among younger users (Ofcom 2020), since the safety of young people is central to these debates.

All the content of the above documents was subjected to discourse analysis. As a qualitative method, discourse analysis works with small corpora and delivers an in-depth explanation of how language is used to reproduce a particular “system of values and beliefs” (Scannel 1998, 256). It is not about measuring or statistically representing elements of texts but about interpreting them: the researcher’s task is to build a convincing explanation of the text based on theoretical and empirical arguments (Chouliaraki and Fairclough, 1999). Its validity relies on the systematic application of the analytical tools chosen, on citing excerpts to back up the inferences made, on reporting any instances that contradict these inferences, and on providing a convincing account with evidence from the analyzed texts (Lincoln and Guba, 1985).

My analysis focused on two aspects of the language used: metaphor analysis (Charteris-Black 2011) and an analysis of transitivity (Richardson 2007). Metaphor analysis is based on Lakoff and Johnson’s (1980) cognitive linguistic theory of metaphor, reviewed in the previous section. I followed Charteris-Black’s (2011) empirical approach: this involves reading each text carefully and systematically identifying every instance where words or phrases are used with a non-literal meaning; then identifying the source domains these were taken from and mapping the correspondences between source and target domains (Charteris-Black 2011). The target domain I focused on was the internet, so I mapped all metaphoric expressions about it to their corresponding source domains. I classified together all excerpts where the same source domain was used (instances of the same metaphor) and I present the metaphors I identified in the first two findings sections below. I use excerpts from among those classified under each metaphor to illustrate how the metaphor typically appears in the texts. Conceptual metaphors are in small caps, as is conventional in cognitive linguistic theory (Lakoff and Johnson, 1980).

An analysis of transitivity involves systematically identifying all the verbs used in a text, and their syntactic subjects and objects. This is done to discuss who is presented as doing what to whom, who is in subject position controlling action and is thus represented as powerful, and who is affected by the actions of others (Fowler 1991; Richardson 2007). In each of the analyzed texts, I identified the syntactic positions occupied by the main actors in the safety debate (internet users, regulators, and the government) in every sentence they were mentioned, I grouped together excerpts where the same actors held the same syntactic positions, and I thus identified patterns in the different texts. I discuss these patterns in the third findings section below, and I present typical examples illustrating them.

Findings

The Internet as a Place

The first finding of the analysis is that the internet is not represented as “a way of being” (Markham 2003) by most of the actors involved in addressing online risk. Although the internet is increasingly an integral part of everyday life, the main metaphor used to describe it in the discourse of policymakers, regulators, and the industry is that of a place. Going to this “online place” implies that it is located separately from offline experience (to “go” somewhere presupposes leaving another place behind) and, in this way, the discourse analyzed conceals the possibility that the risks that exist online may be rooted in the “offline world.”

The metaphor of the internet as a separate place (

Similarly, the White Paper also warns that “if we surrender our online spaces to those who spread hate, abuse, fear and vitriolic content, then we will all lose” (HM Government 2019, 3). In this instance of the place metaphor, it is combined with a war metaphor, whereby the internet is represented as a land that can be “surrendered” to an enemy. The enemy is those who cause online risk (“those who spread hate, abuse, fear, and vitriolic content”) and any attempts to deal with online risk are thus constructed here as a war against these anonymous individuals. The war metaphor serves to evoke a threat “that must be curbed by human intervention,” which is a common rhetorical technique in political discourse around technology (Puschman and Burgess 2014, 1697).

Further examples of the place metaphor can be found in Ofcom’s Addressing Harmful Online Content report, where “a debate is underway about whether regulation is needed to address a range of problems that originate online” (2018, 1). “Online” again is a linguistic indicator of place in this excerpt, and this time this place is a breeding space for problems. Later in the same report, the internet is constructed as not just a separate place, but a completely different world: “existing frameworks could not be transferred wholesale to the online world” (Ofcom 2018, 4).

In line with the two policymakers’ documents above, the industry documents also metaphorically construct the internet as a distinct place. However, whereas in the policy documents this place is presented as rather negative, problematic and risky, as was seen in the earlier examples, in the industry material the dominant version of the place metaphor is that of a more positive “environment”: “people socialise, explore, create and work in digital environments” (UKCIS 2019, 1) “our Community Guidelines support a safe and open environment for everyone” (Instagram 2020a) “our platform empowers users to express themselves, inspire others, and have fun in a safe and welcoming environment” (TikTok 2019b).

Understandably, in the above examples the industry itself sees the internet as a place for self-expression and entertainment, rather than a breeding ground for negative experiences. In line with this more favorable deployment of the place metaphor, the industry documents also use the spatially-based metaphor

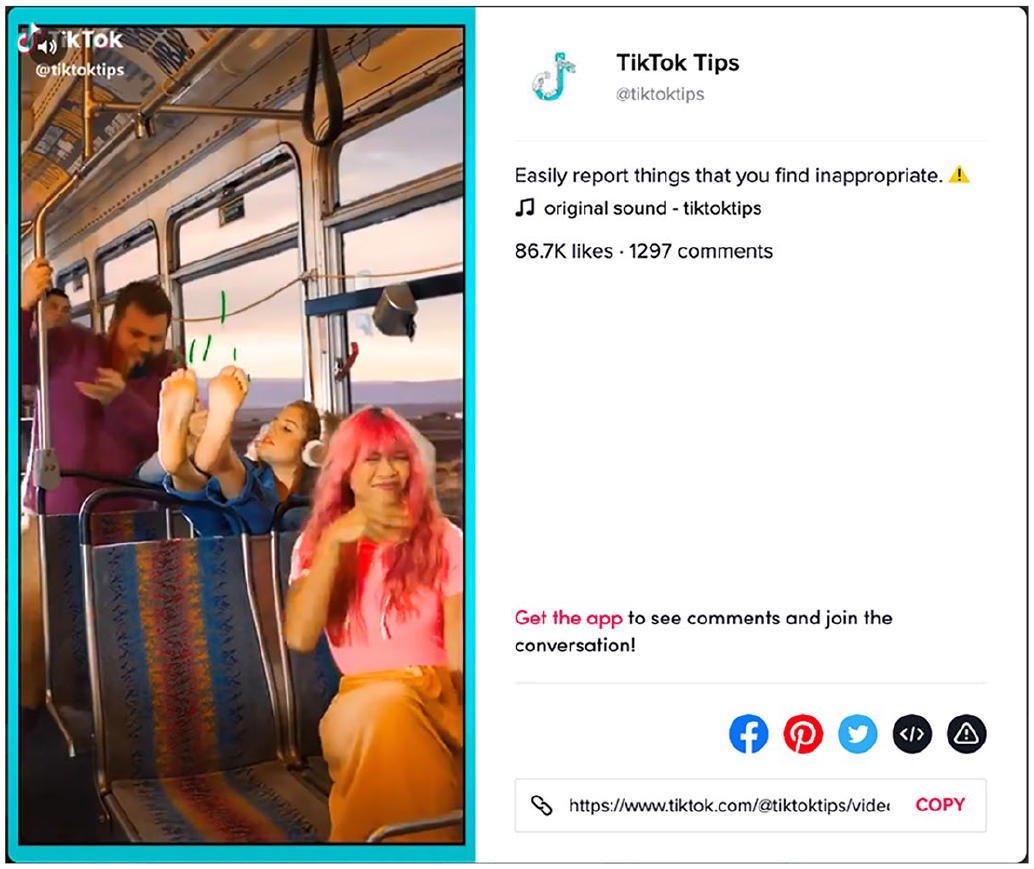

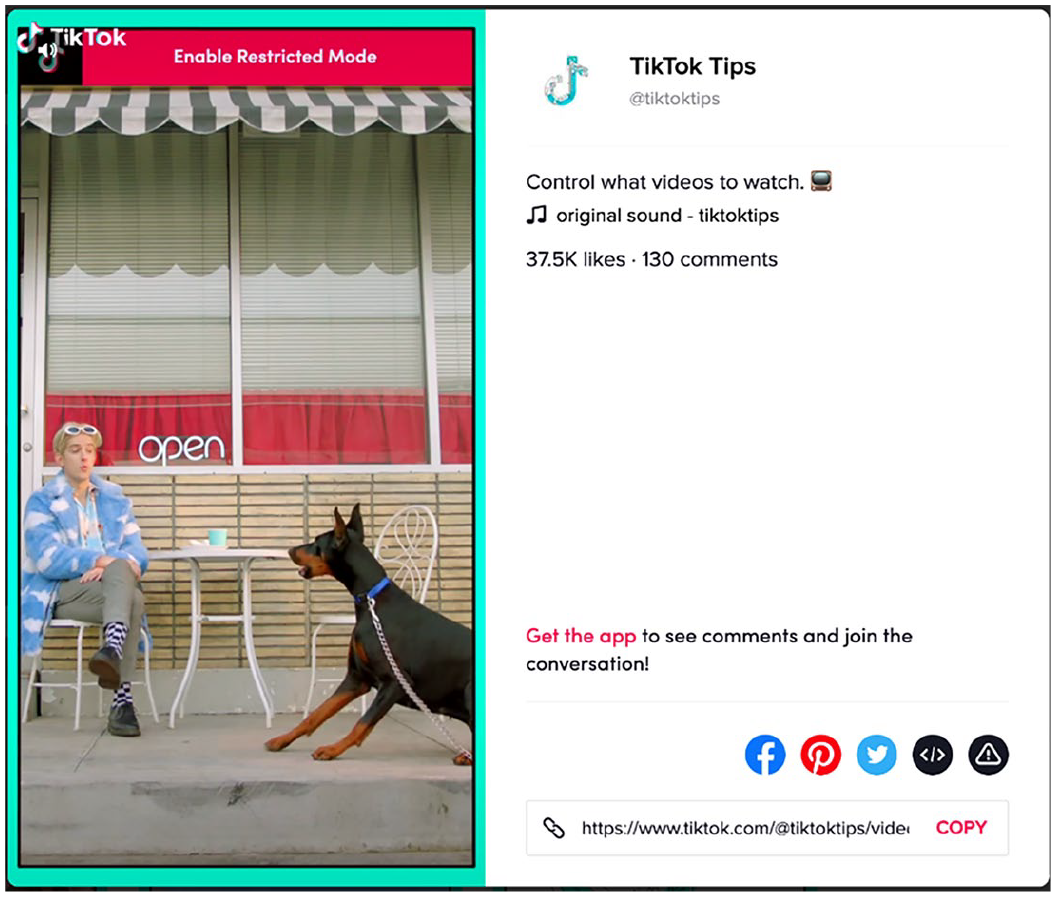

The only material analyzed where the construction of the internet as a separate place is challenged are the “You’re in control” videos that TikTok uses to promote online safety among its young users. Similarly to the examples analyzed so far, these videos also feature

By contrast to the previous examples, the spatial metaphor here connects online and everyday experience. These instances are the closest any of the analyzed material came to

The solutions given in the videos are initiated either by the recipient of the annoying behavior or their peers: a bus driver throws a pair of shoes for the offender to cover their bare feet, another character throws negative words down a toilet. Internet users are represented as in control of their own and each other’s experience. This is consistent with SNS’s longstanding approach to online safety, as discussed in an earlier section, and is an example of a tendency to outsource institutional responsibility for reducing risk to citizens, positioning them as making decisions previously made by institutions, and making it their fault if they fail to protect themselves (Staksrud 2016). They may be granted agency, but they are also treated as consumers of a product, not as citizens who have a right to be protected from harm (Milosevic 2016; Staksrud 2016).

The Internet as a Tool

A second finding of the analysis is that the UK government’s White Paper, setting national policy on internet safety, constructs the internet as a tool that may fall in the wrong hands and be used to harm. This is significant because framing the internet in such a way portrays risk as intentionally caused by wrongdoers who take advantage of the “tool” to harm innocents, and not as something that emerges from social behaviour. As such, the government adopts an understanding of online harm as an external threat to (British) users and not as something they may cause themselves.

“Online services can be used to spread terrorist propaganda and child abuse content, they can be a tool for abuse and bullying, and they can be used to undermine civil discourse” HM Government (2019, 12).

This metaphor transfers to the internet qualities normally associated with a tool: it helps materialize the intentions of its user and directly affects those on whom it is used, in the way that the user intends it to. The metaphor thus carries over connotations of control and domination (Warnick 2004) into the target domain of the internet. It also carries over the expectation that, like a tool, the internet was created with some specific purpose to fulfill (ibid) and other uses of it are wrong.

The metaphor additionally allows the document to construct an anonymous enemy who uses this manipulatable “tool” to threaten national safety. In other instances of this metaphor, the Online Harms White Paper states that the internet “can be used to undermine our democratic values” (HM Government 2019, 5); “threaten our national security” (ibid, 11); or “threaten our way of life in the UK” (ibid, 30). The identity of this threatening enemy is concealed through agentless passive voice and nominalizations, but the discourse creates an entity for the British government to fight against and thus legitimizes punitive legislation.

This understanding of the internet as a potentially dangerous tool in the wrong hands precludes an understanding of online risk as part of societal behavior. The tool metaphor “separate[s] the technology from its users as well as from the contexts of its use” (Koteyko et al. 2015, 480). Users of the internet are absent in the example above and have no agency over the “spread of terrorist propaganda,” “abuse and bullying,” or over what happens to “civil discourse.”

Taking Action Against Online Risk

The previous sections established that in the analyzed documents online risk is constructed as something happening in a separate place from ordinary experience, and as a misuse of the internet by an enemy that needs to be fought against. This section will explore who is represented as responsible to undertake this “fight.”

Policy makers and the industry have divergent views on this matter, as these emerge from the analysis of their respective documents. Whereas for policy makers the solution lies in government initiative and legislation, in the industry documents internet users have more agency, supported by the SNS companies themselves.

In the Online Harms White Paper, the British government is constructed as the main agent taking action against online risk. Although there are some instances in this discourse where regulators and internet companies are also presented as having some responsibility, this is presented as a result of the government mobilizing them. For instance: “This White Paper sets out government action to tackle online content or activity that harms individual users, particularly children, or threatens our way of life in the UK” (HM Government 2019, 30). “The government will establish a new statutory duty of care on relevant companies to take reasonable steps to keep their users safe and tackle illegal and harmful activity on their services” (HM Government 2019, 42).

The main actor controlling action verbs in the two excerpts above is the government (and its White Paper via metonymy). This actor is responsible “to tackle” online risk, and to “establish” a duty for internet companies. In the second example, companies appear in subject position of action verbs too (“take steps,” “keep,” “tackle”) but these are all in secondary clauses, resulting from the action of the government (“establish a statutory duty”).

The role of internet users in the Online Harms White Paper is overall limited. Users appear rarely in the document as subjects of verbs—they are usually affected by the actions described in sentences. For example: “All users, children and adults, should be empowered to understand and manage risks so that they can stay safe online” (HM Government 2019, 85). “This White Paper sets out a programme of action to tackle content or activity that harms individual users” (HM Government 2019, 6).

In the first example above, users are the subject of an agentless passive (“should be empowered”) and the active voice verbs “understand” and “manage.” Although they are positioned as “managing” their online experience, this is not possible unless another nameless agent enables them (“should be empowered to”). Besides “empower” triggers the presupposition that users are currently powerless. In the second example, as in many others in the document, users are the object of a verb (“harms”) controlled by other actors, in this case by the nominalizations “content” and “activity.” On the few occasions in this document where internet users are subjects of verbs, these are thought processes (such as “understand” of “feel”) but never action verbs. The document does not assign internet users agency over their safety.

In Ofcom’s Addressing Harmful Online Content document, legislation, and regulation are the agents responsible for dealing with online risk. Once again, internet users are powerless. Heavy use of nominalizations excludes human agents from the narrative, but the implied agents responsible are governments and regulators. For example: “New European legislation will increase the level of regulation of online video content” (Ofcom 2018, 19). “We also cover how regulatory and voluntary initiatives have developed various protections in certain parts of the online world” (Ofcom 2018, 14).

In both the above excerpts, nominalizations replace human agents (“legislation,” “regulatory and voluntary initiatives”) as subjects of action verbs (“will increase,” “have developed”). The implied human agents are legislative authorities. Moreover, and similarly to the government, Ofcom’s document constructs the public as passive in regards to its own safety: “But there is an intensifying, global debate over how to address the various problems that people experience online” (2018, 3). “However, users should be able to trust or, at least, critically assess the factual content they view online. People should be able to know who has created the content they see” (2018, 30).

In the examples above, users are the subject of mental state/action verbs (“experience,” “trust,” “assess,” “know”) but don’t yield any power over their online experience. Here users are constructed as in control of what they think, but not in a position to do anything about their safety.

Responsibility for causing online risk is not attributed to anyone in Ofcom’s Addressing Harmful Content document. Nominalizations (“bullying,” “harassment,” “conduct”) replace human agency wherever online risk is mentioned, thus avoiding to identify who does these actions. For example, “personal conduct that is illegal or harmful - such as bullying, grooming and harassment” (Ofcom 2018, 12) does not reveal who is the agent of the “conduct,” “bullying,” or “grooming” referred to. This contrasts with the discourse of the White Paper examined earlier, which attributes online harms to an enemy of the nation. For Ofcom, online harms are therefore represented as phenomena (nouns) rather than as actions (verbs).

Clearly neither Ofcom nor the UK Government regulate individuals’ behavior online. These actors’ remit is to regulate the SNS industry, which then enforces measures on users. This may to an extent justify why users don’t feature as powerful actors in regulators’ documents though, that said, the SNS industry does not feature as very powerful either. These documents place policymakers or regulation as key agents in securing safety, thus helping to reinforce the need for external regulation.

By contrast to the government and Ofcom documents, which represent users as broadly powerless over their own safety, the SNSs’ press releases and safety-themed blogs position them in control of their experience: “Your teen’s account can be set to private, meaning their content will only be seen by approved followers. They can also block and report abusive accounts” (Instagram 2020b). “It’s also important for our community to look after their wellbeing, which means having a healthy relationship with online apps and services” (TikTok 2020)

In these examples, internet users are in subject position of physical action verbs (“block,” “report,” “look after”) affecting themselves and their peers. They are thus positioned as powerful actors who can take action to protect themselves against risk. Both platforms’ discourse also features several instances where the SNS is in control of action that assists users in their effort to protect themselves. For example: “In addition to features that help you stand up to bullying, we’ve created new ways to help stop bullying before it happens” (Instagram 2020c).

In this excerpt the SNS controls action (“help”) which has users as its object and allows them to carry out the action that they control in the secondary clause (“stand up”). Although the user is still responsible for standing up to bullying, the SNS contributes to this task and controls three action verbs (“help,” “created,” “stop”). Online safety is thus a collaboration between companies and users, where the former enable the latter. Established SNSs often represent responsibility for user safety as shared between the SNS and the user community: previous research revealed that SNSs view user communities moderating themselves as “an advanced or evolved self-regulatory mechanism” (Milosevic 2016, 5174). This tendency to shift responsibility was formed over years of industry self-regulation, as discussed earlier, but clashes with the discourse of direct regulation found in the policymakers’ documents, which place responsibility on institutions and not on users.

Finally, responsibility for causing online risk is attributed differently in the discourse of the two SNS companies: Instagram sometimes attributes responsibility to users for causing harm, whereas TikTok avoids this. Like in Ofcom’s document, TikTok’s press releases and safety blogs do not present users themselves as causing online harms: “Harmful or dangerous content, violence, discrimination or hate speech, abuse or sexual activity, harassment or cyberbullying, and misleading content has no place in the TikTok community” (TikTok 2019a)

Wherever TikTok refers to harmful behavior, this is in nominalizations (“content,” “violence,” “discrimination,” “speech,” “abuse,” “activity,” “harassment,” “cyberbullying” in the example above). Using these nouns, instead of the equivalent verbs, represents these behaviors as phenomena rather than as someone’s deliberate action. By contrast, Instagram occasionally attributes harmful behavior to its own users: “Young people face a disproportionate amount of online bullying but are reluctant to report or block peers who bully them, so last year we created a new feature called Restrict” (Instagram 2020c).

“Peers who bully them” directly attributes responsibility not to some enemy, like the government’s White Paper did earlier, but to ordinary users. This avoids representing bullying as an abstract phenomenon and is consistent with viewing it as part of social behavior, perhaps not too dissimilar to real-life bullying.

Conclusion

Metaphors open up ways of thinking about things by making connections of analogy between these things and originally unrelated domains of our experience (Lakoff and Johnson 1980). At the same time though, when a metaphor becomes the standardized way of speaking about an issue, it constructs a system of meaning that pushes back other ways of understanding this issue. Spatial metaphors of the internet originate in a time when it was a relatively new technology (Nunes 1995), that people accessed some of the time to seek information or to have interactions “somewhere else.” However, thinking about going online as going to another place discourages an understanding of what happens there as behavior that is also encountered offline.

This article has found that an understanding of the internet as a place separate from the “real” world remained dominant in official discourse at a time when the UK was taking a major step toward direct regulation of the industry. Moreover it found that UK legislators specifically also viewed the internet as a tool that could fall into the wrong hands and be used for harm. Such constructions legitimize rules to control this online “place” and institutions to “punish” those who intentionally cause harm.

The metaphor of the internet as a separate place was common across both the policymakers’ and industry documents. One of the SNSs used the internet “as a way of being” (Markham 2003) metaphor, but only in videos addressing its users. The fact that, as opposed to policymakers, SNSs have a responsibility to set rules directly for individuals may have influenced this but, even so, this metaphor was relatively rare.

Place and tool metaphors encourage a view of online risk as having different qualities from risk in everyday life. They discourage policymakers, regulators, and the industry from assessing what learnings can be transferred from dealing with harms in other realms of life. These discourses therefore restrict policy interventions by obscuring the possibility that doing harm online might be one way people do harm within the social world and addressing it might involve exploring causes and solutions in society.

The solutions encouraged by the place and tool metaphors are punitive and top-down. Dealing with risk people encounter in a place foreign to their ordinary experience, or stopping evil wrongdoers using a potentially dangerous tool against the powerless both require strict rules to be set and implemented by policing institutions. By contrast, intervening to prevent people harming others as part of everyday interactions would involve engaging with users, consulting them and understanding how these interactions work.

In general the documents analyzed either present the perpetrators of online harms as enemies of the state (the White Paper), or don’t even refer to them as human actors. Online harms are often written about as phenomena without agents. This makes it difficult to see them as behaviors that need to be comprehended in order to resolve.

In addition, the findings suggest that the discourse of UK policymakers veers away from the industry’s perspective that puts responsibility for online safety on the end user. This latter approach is well-established among SNSs and it is how the industry has traditionally managed self-regulation of online safety (Jorgensen 2017; Milosevic 2016). However the policymakers’ documents examined here put responsibility on the state and regulators for addressing online harms and protecting users’ rights. This discrepancy between companies’ neoliberal and legislators’ interventionist discourse might suggest a disconnect between them, as the UK proceeds with direct regulation.

In an era when the internet is constantly available and we live and manage interpersonal and professional relationships through it (Blavin and Cohen 2002; Carrington 2017), the distinction between online and offline is perhaps more blurred than the analyzed documents may suggest. Our social world is experienced through multiple means, some of them physical and some digitally mediated. Perhaps this interconnectedness could also extend to thinking about preventing or addressing risk.

Footnotes

Declaration of Conflicting Interests

The author declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author received no financial support for the research, authorship, and/or publication of this article.