Abstract

Background:

Community-based social marketing (CBSM) offers a pragmatic five-step approach to developing a program that fosters sustainable behaviour. However, how the CBSM theoretical framework has been implemented into practice remains largely under-evaluated. To help address this gap, Lynes et al. developed 21 benchmarks to assess CBSM programs. This research builds upon these benchmarks by using both the benchmarks and additional assessment criteria to assess five Canadian programs that have used CBSM principles.

Focus:

This paper is related to research and evaluation of community-based social marketing.

Research Question:

How has the CBSM theoretical framework been implemented in practice at the community level?

Importance to the Social Marketing Field:

By exploring how five Canadian programs have implemented CBSM, this paper enables practitioners to align their programs with CBSM principles more closely. It also contributes to the literature on CBSM effectiveness.

Methods:

Five qualitative case studies were assessed, each featuring a Canadian community program seeking to influence residential water efficiency behaviour. To systematically assess each program’s adherence to the CBSM theoretical framework, the researchers developed a CBSM benchmark assessment tool that adds assessment criteria to Lynes et al.’s 21 benchmarks. The assessment tool allowed for replicable benchmark assessments across multiple programs. Triangulation of data from both primary (survey and interview) and secondary (peer-reviewed literature, gray literature, and online reporting) data sources informed the assessment of each case study.

Results:

On average, over the five case studies, just over half of the 21 benchmark criteria were fully integrated into the programs, whereas just under a third were partially integrated, and approximately one fifth were not integrated at all.

Recommendations for Research or Practice:

While the benchmarks were fairly well integrated overall, this paper outlines several recommendations that programs may consider to improve alignment with the CBSM theoretical framework and benchmarks. Recommendations for future research to explore CBSM effectiveness are also made.

Limitations:

The findings lack generalizability due to the small sample size, the study was unable to assess programmatic success, and the benchmark assessment tool has inherent limitations.

Community-based Social Marketing (CBSM) is widely used by practitioners as a framework for developing campaigns that foster pro-environmental behaviour. Over the past 2 decades, more than 70,000 practitioners worldwide have received formal training by CBSM’s founder, Doug McKenzie-Mohr (McKenzie-Mohr, 2019b). Presumably many others have familiarized themselves with CBSM through informal channels or through McKenzie-Mohr’s open access book Fostering Sustainable Behaviour (McKenzie-Mohr, 1999, 2011). There is strong theoretical evidence from social psychology and adjacent disciplines to support the foundation on which CBSM is based. However, despite CBSM’s popular use as a framework for practitioners, there is very little documented evidence of who has used the framework, how it has been applied in practice, and what factors have contributed to successful program outcomes. To date, the information available related to the application of CBSM has centered largely around anecdotal stories from practitioners and single descriptive case studies (see, e.g., Tools of Change, n.d.). Stemming from this paucity of data, Lynes et al. (2014) developed a set of benchmark criteria to use as a tool to explore how practitioners have mobilized the CBSM framework in their programs and practices. The objective of this paper is, in essence, to “test drive” the benchmark tool established by Lynes et al. (2014) by applying the criteria to five Canadian water efficiency programs that used the CBSM framework.

This research is part of a larger study that is creating an inventory of campaigns that have used CBSM in their program development and identifying, through program evaluation, factors that contribute to or impede successful program outcomes. This research contributes to the ongoing discourse on the link between theory and practice in behaviour change initiatives more generally. The practical implications of this project are equally important. As more is learned about how programs have implemented CBSM, practitioners and academics can better understand how behaviour change theories operate in real-world contexts. Further, increased measurement may also empower investigators to develop an improved understanding of how effective the CBSM model is at fostering sustainable behaviour, and which elements are most critical to the success of creating meaningful behaviour change.

Background

The CBSM framework offers a pragmatic five-step approach to encouraging pro-environmental behaviour by developing community programs that shift away from information-based campaigns toward campaigns that apply knowledge from the social sciences to elicit behaviour change (McKenzie-Mohr, 2000; McKenzie-Mohr & Schultz, 2014). More specifically, CBSM merges “knowledge from psychology with expertise from social marketing” (McKenzie-Mohr, 2000, p. 546). In this way, CBSM is differentiated from the social marketing discipline, which primarily applies knowledge and techniques from commercial marketing to elicit behaviour change (Andreasen, 1995).

CBSM has a rigid focus on selecting specific behaviours to change, which is the first step that informs the steps that follow. The five steps of CBSM are: 1) selecting behaviours, 2) barrier and benefit research, 3) strategy development, 4) piloting, and 5) broad-scale implementation and evaluation (McKenzie-Mohr, 2011; McKenzie-Mohr & Schultz, 2014). This five-step behaviour change approach has been used by numerous practitioners worldwide (McKenzie-Mohr, 2020), yet little knowledge exists in terms of the extent to which these programs have adhered to the five-step methodology, and to what extent the programs were successful.

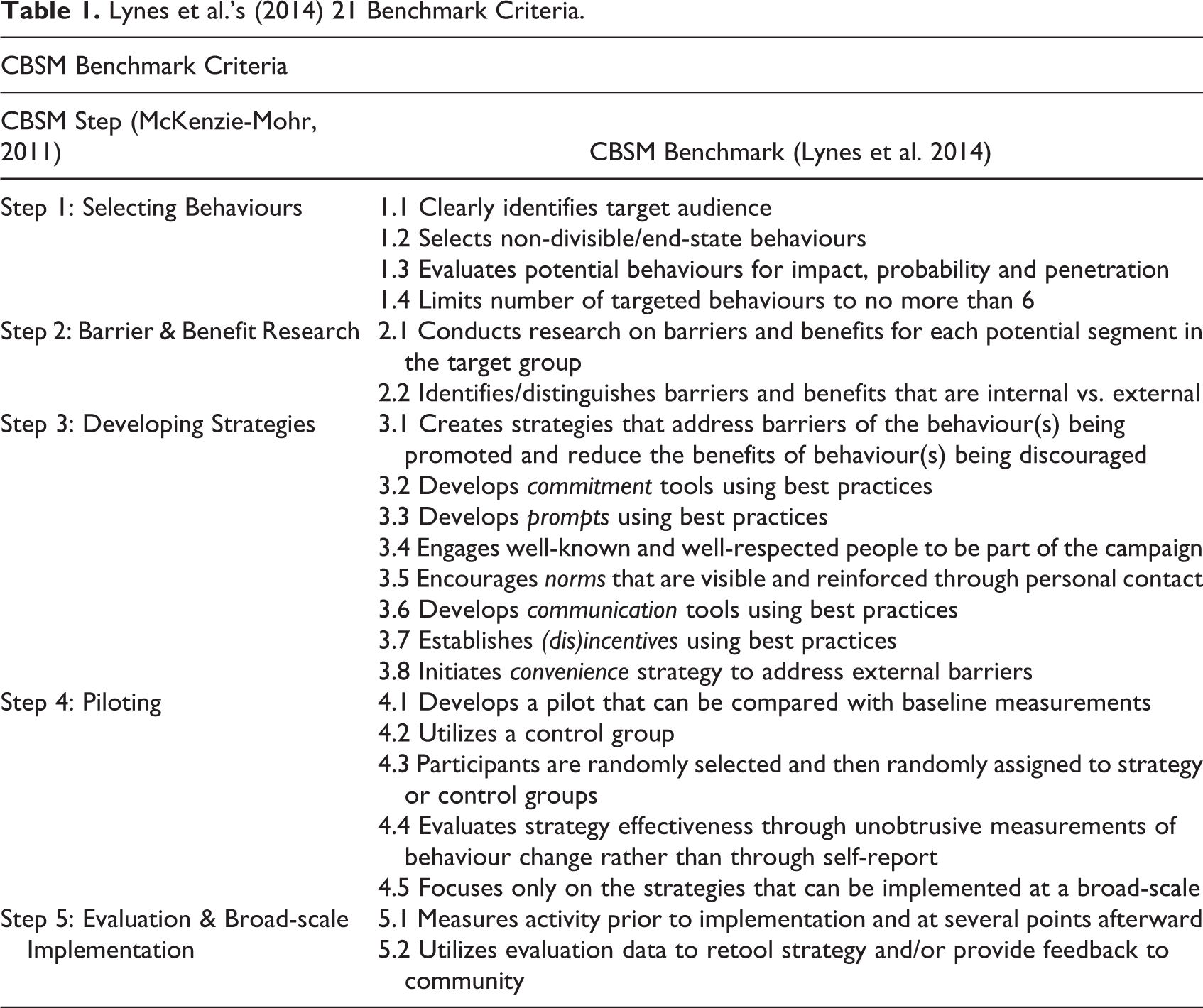

In the discipline of social marketing, from which CBSM is partly derived, there have been attempts to create benchmarks for measuring the success of social marketing programs (Andreasen, 2002; French, 2012; French & Blair-Stevens, 2007), and numerous social marketing programs have applied those benchmarks (Fujihira et al., 2015; Gracia-Marco et al., 2011; Luecking et al., 2017). In contrast, a set of benchmarks to measure adherence to program methodology and program success in CBSM did not exist until recently, when Lynes et al. (2014) developed a set of 21 benchmark criteria (Table 1) to assess the performance of CBSM programs using a qualitative scale of “integrated,” “partially integrated,” and “not integrated.” However, though the five-step CBSM model is widely used, Lynes et al.’s (2014) set of benchmarks has not been widely adopted by practitioners or academics for assessing community programs. The reasons for this are not clear but may be due to a relative lack of monitoring and evaluation in CBSM programs generally. It is also possible that there is a dissonance between the CBSM academic community and CBSM practitioners.

Table 1. Lynes et al.’s (2014) 21 Benchmark Criteria.

Regardless of the reasons for this lack of adoption, the result is that we know little about how CBSM programs are being implemented in practice, how closely they are adhering to the CBSM methodology, and ultimately how successful they are (Lynes et al., 2014). The current research attempts to fill this knowledge gap by applying Lynes et al.’s (2014) CBSM benchmarks to five Canadian community-based programs that sought to influence pro-environmental behaviour. By doing so, we hope to improve the accuracy and completeness of the evidence base for CBSM (Wettstein & Suggs, 2016) as well as to propose a methodology for exploring how the CBSM model has been employed.

Residential Water Efficiency Behaviour

Increasingly, organizations and municipalities are seeking to maximize residential water efficiency by influencing how people demand and consume water. Residential water use is tied to many everyday behaviours and is deeply ingrained in behavioural, social, and cultural norms (Elizondo & Lofthouse, 2010; Jorgensen et al., 2014). Through adjustments to common behaviours and routines, it is possible to “dramatically reduce domestic water use” to protect precious freshwater supply and reduce the need for energy-intensive desalination of saltwater (McKenzie-Mohr et al., 2011, p. 77). For example, peak days for water occur when residential water usage is highest, typically on the hottest days of the year, and place an increased burden on a municipality’s water infrastructure. In an effort to reduce peak day demand, Durham Region in Ontario, Canada utilized behaviour change tools to influence lawn watering and other outdoor water behaviours. Durham dispatched college students to go door-to-door to communicate with residents about reducing outdoor water use and encouraged residents to display a public commitment sticker that recognized their household as saving water. Residents were also given a prompt to place over their outdoor faucet that offered “watering reminders” about when to water their lawn. Across the roughly 500 homes included in the selected community, the program resulted in a 32% reduction in outdoor water use (McKenzie-Mohr et al., 2011). Due to the key role that behaviour plays in increasing residential water efficiency, this research will focus specifically on community programs that utilized CBSM principles while working toward increasing water efficiency.

Method

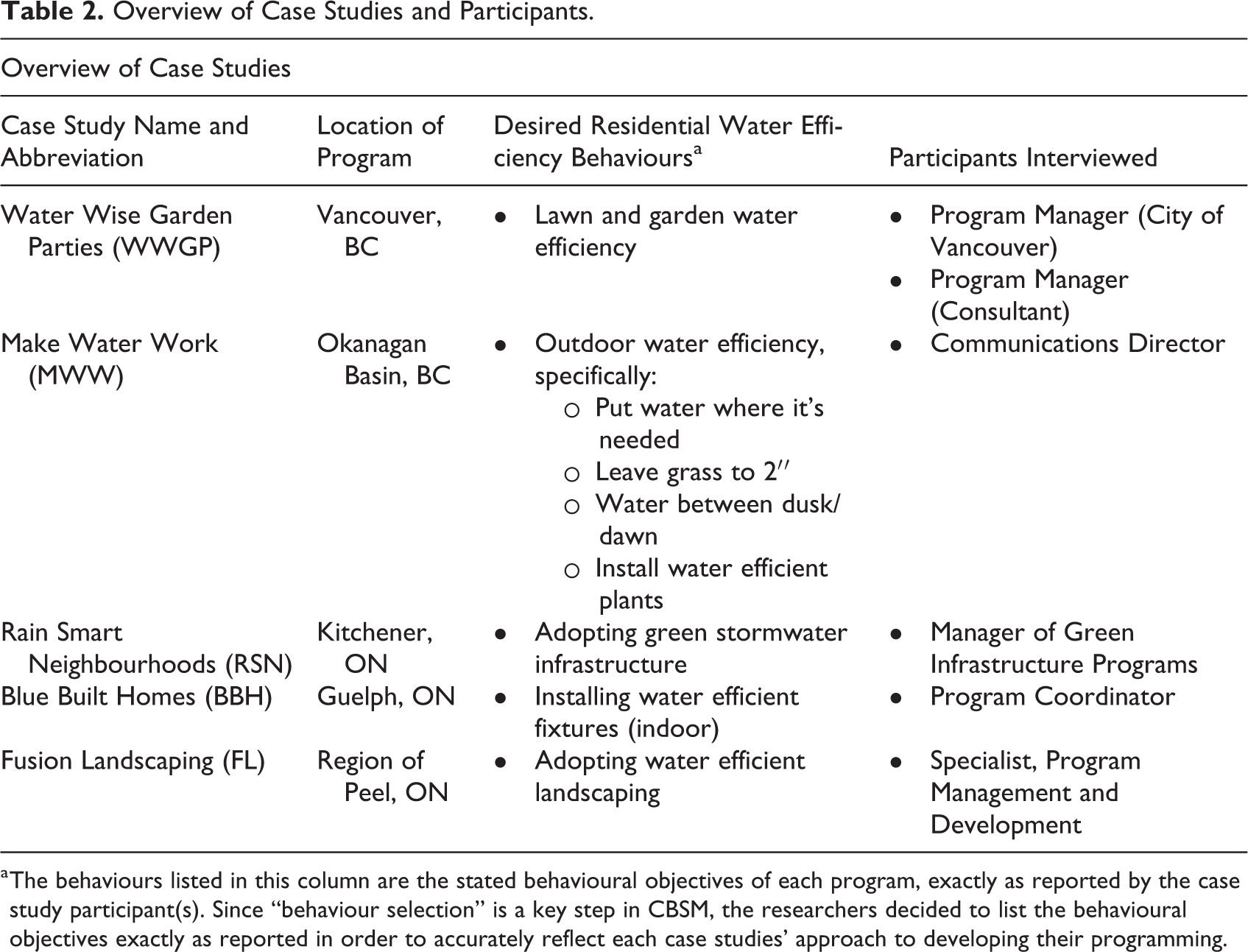

To explore how the CBSM theoretical framework has been implemented, the researchers assessed five qualitative case studies of community programs that utilized CBSM principles. While engaging in purposeful scanning of potential case study candidates, the differentiation between “CBSM programs” and “programs that use CBSM” became an important distinction in how programs described themselves. The former implies that the program was developed explicitly as a CBSM program from the outset, while the latter suggests more flexibility in how CBSM was integrated or evolved into the program. With this research’s objective to explore how the CBSM framework has been implemented at the community level, the researchers decided to opt for criteria that were flexible enough to capture a realistic snapshot of community organizations’ attempts at integrating CBSM. Thus, five Canadian programs that related to residential water efficiency and that self-identified as utilizing CBSM principles were selected as case studies (for a description of each case study, refer to Online Appendix A found in the supplemental materials of this paper). Each case study organization committed at least one participant to the study who was directly involved in the program’s development and implementation. One program committed two participants to the study, bringing the total number of participants to 6 (n = 6). Table 2 below displays pertinent information about each of the case studies featured in this research.

Table 2. Overview of Case Studies and Participants.

a The behaviours listed in this column are the stated behavioural objectives of each program, exactly as reported by the case study participant(s). Since “behaviour selection” is a key step in CBSM, the researchers decided to list the behavioural objectives exactly as reported in order to accurately reflect each case study’s approach to developing its programming.

Data Collection and Analysis

Before engaging in primary data collection, each case study provided resources, documentation, and gray literature specific to their program and its development, including research that was conducted, evaluations, reports, and presentations (a full list of data sources used in case study analyses can be found in Online Appendix B). The secondary data resources were coded using NVivo 12 software based on the following: which case study the information belonged to, which of McKenzie-Mohr’s five CBSM steps the information aligned with, and which of Lynes et al.’s 21 benchmarks it related to. This first coding exercise was an essential step toward developing a baseline knowledge of each of the programs, how the programs were developed and implemented, and which CBSM components appeared to be present.

Each participant from each case study then participated in an online survey, followed by an in-depth interview. The purpose of the survey was to gauge the participant’s perception as to whether CBSM principles were integrated into the development of their program, and if so, to what degree. The information gathered through the online survey was then uploaded into NVivo 12 and coded alongside the secondary data for each program from the first coding exercise. Informed by the coded secondary and survey data for each program, the researchers then conducted in-depth interviews with each participant. The purpose of the in-depth interviews was to probe for further elaboration on the survey responses and secondary data to develop a deep understanding of how CBSM was integrated into the program’s development. This interview was semi-structured to allow for flexibility and spontaneity within the interview while ensuring that key considerations for addressing the research question were captured. Each interview was transcribed using online speech-to-text software, and once again, the data was uploaded into NVivo 12 and coded alongside the secondary and survey data for each program. This step-by-step process of coding and data collection allowed for iterative assessment and an emergent understanding of each program. It also enabled the researcher to identify and address any contradictions from the data through cross-referencing or discussing the contradiction in the participant interview and follow-up.

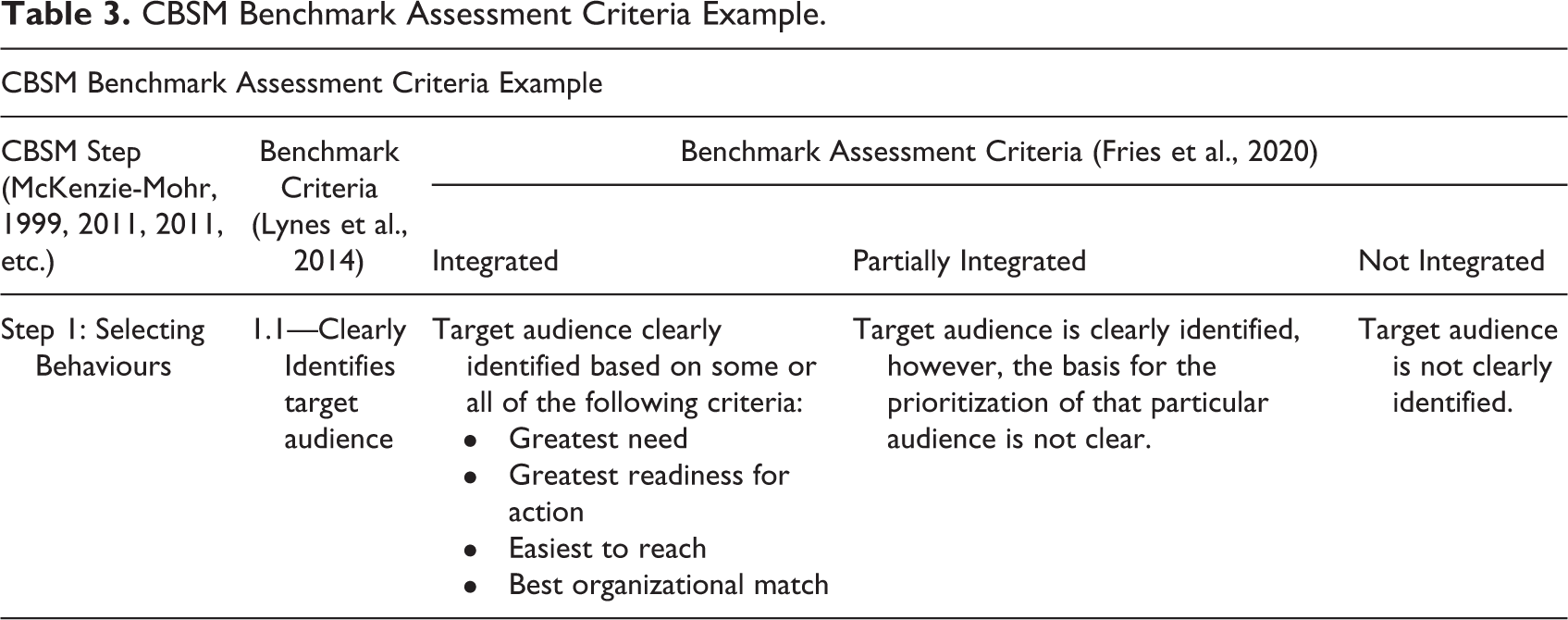

Once all primary and secondary data had been collected and coded, data triangulation was used to inform the assessment of each case study’s adherence to the CBSM model. In order to make assessments of each of the five case studies, this research utilized and built upon a set of 21 benchmarks that was developed by Lynes, Whitney, and Murray with the objective of “[providing] increased detail regarding the design and implementation of the CBSM model” (Lynes et al., 2014, p. 115). Lynes et al.’s (2014) 21 benchmarks were assessed using three qualitative criteria: integrated, partially integrated, or not integrated. Since this research is applying the benchmarks to multiple case studies, the potential for subjectivity in making assessments using only these three qualitative criteria was high. It was important to develop a method for assessment that was replicable and reliable by reducing the impact of subjective assessments. Thus, the researchers built upon Lynes et al.’s CBSM benchmarks by developing an assessment tool that established standards of action required to meet each level of integration (fully, partially, or not integrated) of each of the 21 benchmarks. Table 3 depicts the first of the 21 benchmarks and its associated criteria that were developed. The assessment criteria were grounded in theoretical knowledge from the CBSM framework to ensure consistent alignment with the intended model. Lynes et al.’s benchmarks were developed based on a synthesis of work published between 1999 and 2012 by CBSM’s founder, Doug McKenzie-Mohr (Lynes et al., 2014; McKenzie-Mohr, 2000, 2011; McKenzie-Mohr et al., 2011; McKenzie-Mohr & Smith, 1999). Thus, the current research utilized the same body of work to establish the assessment criteria. The full benchmark assessment tool can also be viewed in Online Appendix C of this paper’s supplemental material.

Table 3. CBSM Benchmark Assessment Criteria Example.

For each case study, the researcher gave an assessment of either “integrated,” “partially integrated,” or “not integrated” for each benchmark based on the information triangulated within each code. Furthermore, peer debriefing with a colleague using anonymized data also occurred to help bolster validity (Creswell, 2014). Each case study’s benchmark assessment is included with the programs’ description, which can be found in Online Appendix A within the supplemental material.

Findings

Overall trends were observed across case studies related to benchmark performance, case study performance, and successes and challenges.

Benchmark Performance

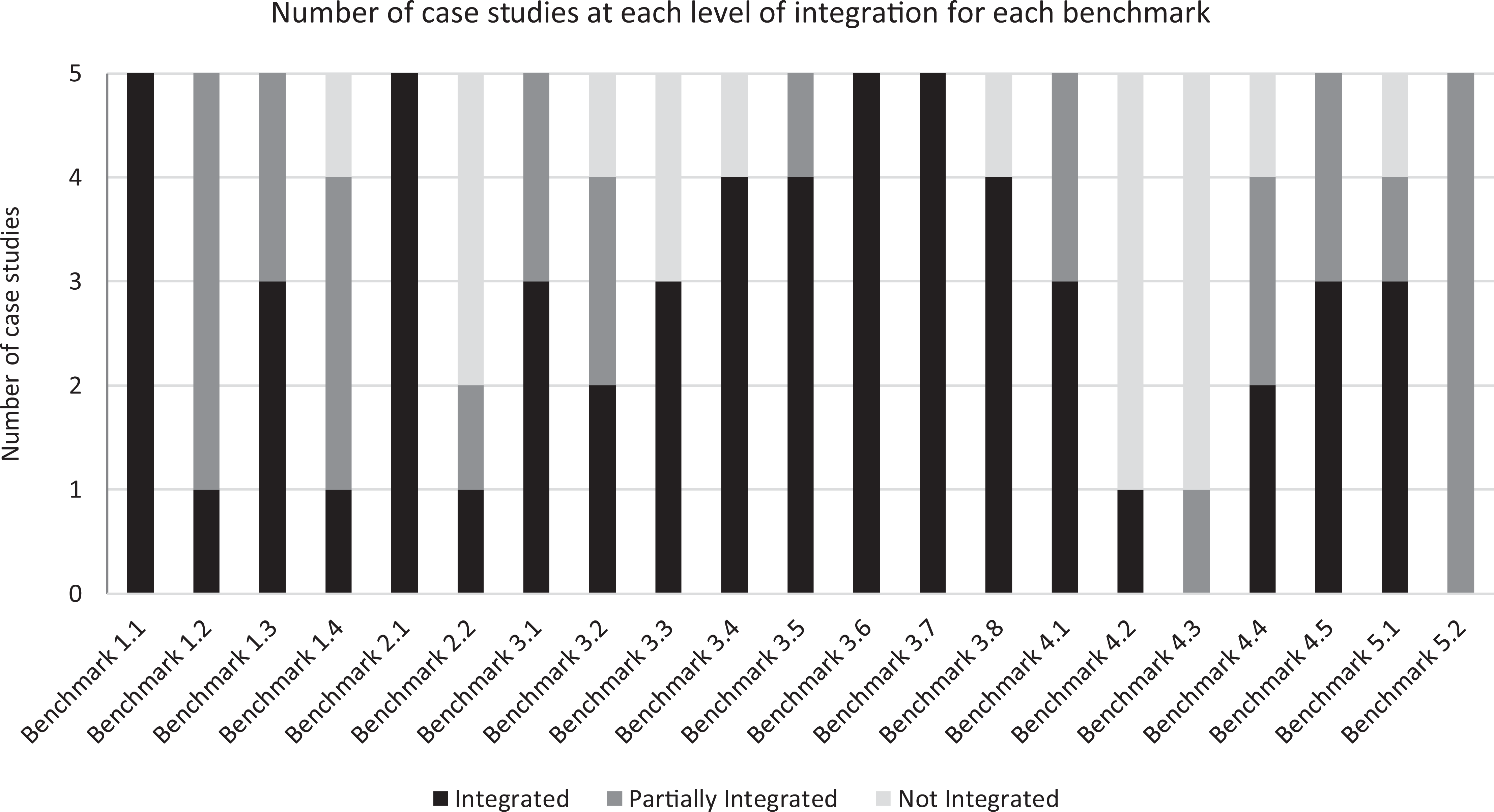

Figure 1 depicts each of the 21 CBSM benchmarks along the chart’s x-axis, and shows how many programs integrated, partially integrated, and did not integrate each benchmark. Out of all 21 benchmarks, four benchmarks were fully integrated by all five case studies: benchmark 1.1 (“clearly identifies a target audience”); benchmark 2.1 (“conducts barrier & benefit research for each segment of the target audience”); benchmark 3.6 (“develops communication tools using best practices”); and benchmark 3.7 (“establishes incentives/disincentives”), making them the four best performing benchmarks. Eleven of the 21 benchmarks were either fully or partially integrated by all five case studies, while the remaining 10 benchmarks were not integrated by at least one of the five case studies. The eleven fully or partially integrated benchmarks are interspersed throughout all five of the CBSM steps, suggesting that programs are sampling from every step of CBSM instead of focusing their efforts only on specific steps or elements of CBSM.

Figure 1. Level of integration of each benchmark across all case studies.

The two poorest performing benchmarks were benchmark 4.2 (“utilizes a control group”) and 4.3 (“participants randomly selected and randomly assigned to strategy and control conditions”). Notably, both of the lowest-performing benchmarks fall within the fourth step of piloting. In particular, benchmark 4.3 of randomly assigning and selecting participants to pilot groups was the least integrated. Only one case study partially integrated this benchmark while the remaining four did not.
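The grouping of benchmarks used above can be sketched in code. The following Python snippet is only an illustration under stated assumptions: the `Level` enum, the `classify` function, and the example data are hypothetical and are not part of the authors’ assessment tool or results.

```python
from enum import Enum


class Level(Enum):
    """The three qualitative assessment levels used for each benchmark."""
    INTEGRATED = "integrated"
    PARTIALLY_INTEGRATED = "partially integrated"
    NOT_INTEGRATED = "not integrated"


def classify(levels: list[Level]) -> str:
    """Group one benchmark by how the assessed programs performed on it."""
    if all(level is Level.INTEGRATED for level in levels):
        return "fully integrated by all programs"
    if all(level is not Level.NOT_INTEGRATED for level in levels):
        return "fully or partially integrated by all programs"
    return "not integrated by at least one program"


# Hypothetical levels for three illustrative benchmarks across five programs.
FULL = Level.INTEGRATED
PART = Level.PARTIALLY_INTEGRATED
NONE = Level.NOT_INTEGRATED
examples = {
    "benchmark A": [FULL, FULL, FULL, FULL, FULL],
    "benchmark B": [FULL, PART, FULL, PART, FULL],
    "benchmark C": [NONE, NONE, PART, NONE, NONE],
}

groups = {name: classify(levels) for name, levels in examples.items()}
for name, group in groups.items():
    print(f"{name}: {group}")
```

Note that, in the counts reported above, the benchmarks fully integrated by all five case studies are also counted among the eleven that were fully or partially integrated by all five, so the first group is a subset of the second.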

Case Study Performance

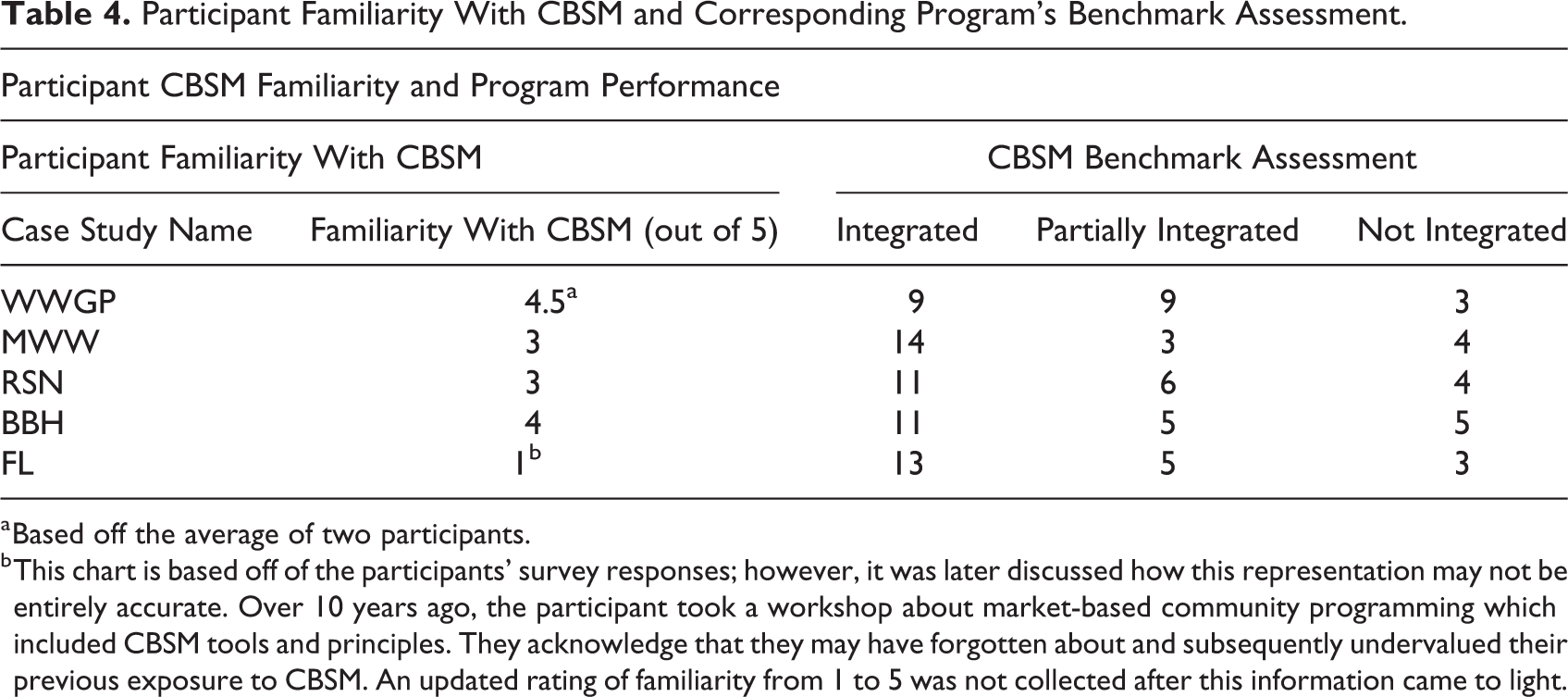

In the survey that participants completed before the in-depth interview, participants were asked to rate their familiarity with the CBSM framework from 1 (“not familiar at all”) to 5 (“extremely familiar”). Due to many factors in this research, no conclusions can be drawn about a relationship between a participant’s familiarity with CBSM and the program’s performance. Nonetheless, it is worth discussing the participants’ familiarity with CBSM in relation to each program’s performance in the assessment. Table 4 displays the results of these survey responses and plots them alongside the number of benchmarks that a program integrated, partially integrated, or did not integrate. Four of the five case studies report a CBSM familiarity of 3 (“fairly familiar”) or higher. Participants from the WWGP program, the MWW campaign, and the BBH program explicitly noted attending McKenzie-Mohr’s CBSM workshop in the past. Though statistical inferences cannot be drawn, there does not appear to be a clear relationship between the level of benchmark integration and the participants’ familiarity with CBSM. While the WWGP had two participants with a high familiarity with CBSM, the WWGP fully integrated the fewest benchmarks out of all five case studies. The participant from FL indicated the lowest familiarity with CBSM on their survey; however, later discussions revealed that this representation from the survey might not be entirely accurate as they had forgotten about CBSM training that they had received earlier in their career.

Table 4. Participant Familiarity With CBSM and Corresponding Program’s Benchmark Assessment.

a Based on the average of two participants.

b This rating is based on the participant’s survey response; however, later discussion indicated that this representation may not be entirely accurate. Over 10 years ago, the participant took a workshop about market-based community programming which included CBSM tools and principles. They acknowledge that they may have forgotten about and subsequently undervalued their previous exposure to CBSM. An updated rating of familiarity from 1 to 5 was not collected after this information came to light.

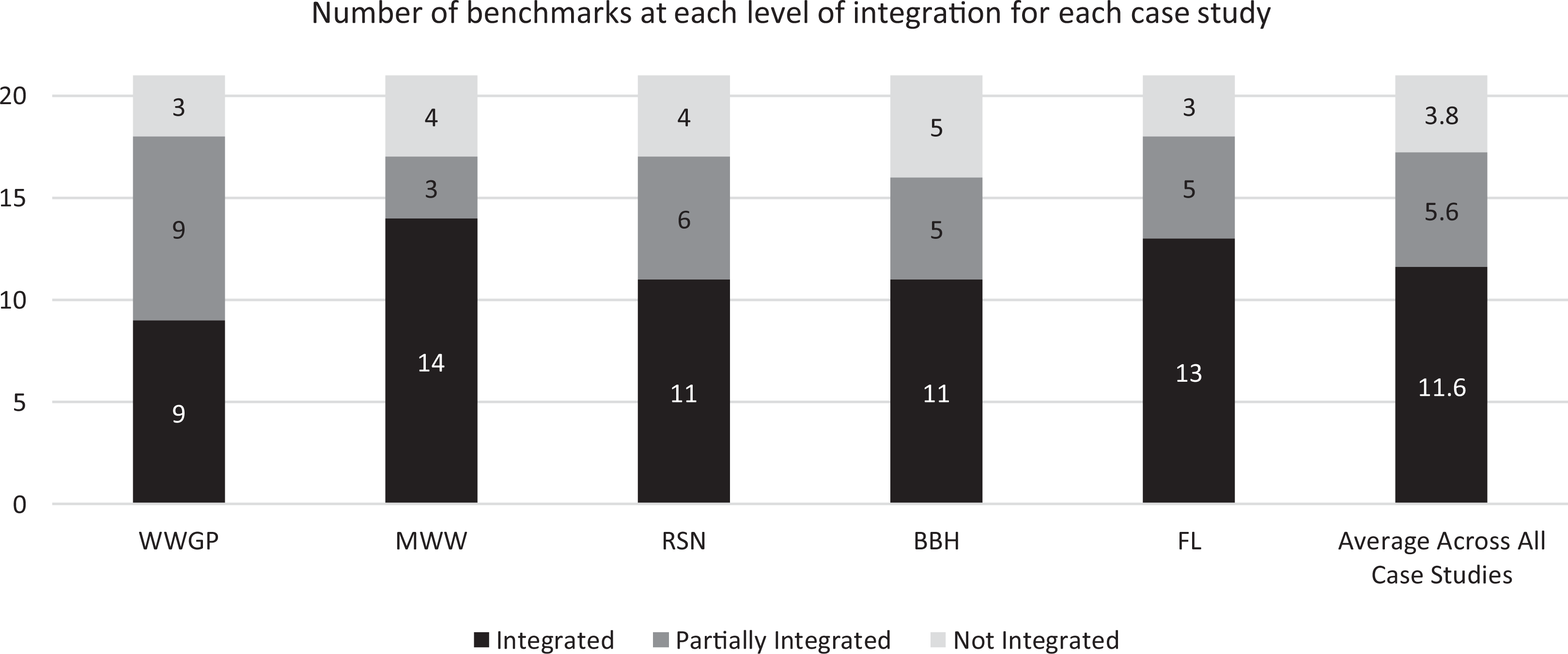

Figure 2 lists the five case study programs and tallies the number of benchmarks that were assessed as integrated, partially integrated, and not integrated for each program. The number of integrated benchmarks ranged across the five case studies from 9 to 14, with a mean of 11.6 and a median and mode of 11. The MWW campaign had the highest number of integrated benchmarks (14), and the WWGP had the fewest (9).

Figure 2. Number of benchmarks at each level of integration for each case study.

Partially integrated benchmarks ranged from 3 to 9, with a mean of 5.6, a median of 5, and a mode of 5. Interestingly, this pattern is the inverse of that for the integrated benchmarks: the WWGP had the most partially integrated benchmarks at 9, and MWW had the fewest at 3. Finally, the benchmarks that were not integrated had a much smaller range, from a minimum of 3 to a maximum of 5 within a single case study. Across the five case studies, the benchmarks that were not integrated had a mean of 3.8, a median of 4, and modes of 3 and 4.

In sum, across the five case study programs, integrated benchmarks had a mean of approximately 12 (11.6), partially integrated benchmarks had a mean of approximately 6 (5.6), and benchmarks that were not integrated had a mean of approximately 4 (3.8). These results show that, on average, over the five case studies, just over half of the 21 criteria were fully integrated into the programs, whereas just under a third were partially integrated, and approximately one fifth were not integrated at all. Therefore, the programs assessed in this research were more likely to fully or partially integrate a CBSM benchmark than they were not to integrate it at all.
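As a rough check on these proportions, the reported means can be converted to shares of the 21 benchmarks. The sketch below uses only the three means stated in the text; the variable names are illustrative and the snippet is not part of the authors’ analysis.

```python
TOTAL_BENCHMARKS = 21

# Mean number of benchmarks per program at each level, as reported in the text.
mean_counts = {
    "integrated": 11.6,
    "partially integrated": 5.6,
    "not integrated": 3.8,
}

# The three means should together account for all 21 benchmarks.
total_of_means = round(sum(mean_counts.values()), 1)

# Share of the 21 benchmarks at each level, as a percentage to one decimal.
shares = {
    level: round(100 * mean / TOTAL_BENCHMARKS, 1)
    for level, mean in mean_counts.items()
}

print(f"sum of means: {total_of_means}")
for level, share in shares.items():
    print(f"{level}: {share}%")
```

Run as written, the means sum to 21.0 and the shares come to roughly 55.2%, 26.7%, and 18.1% of the 21 benchmarks for integrated, partially integrated, and not integrated, respectively.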

Successes and Challenges

This research focused on exploring how communities had implemented CBSM into practice. Due to the complexities of measuring and evaluating behaviour change, which will be explored in greater detail in the Discussion, outcome evaluations remained outside the scope of this study.

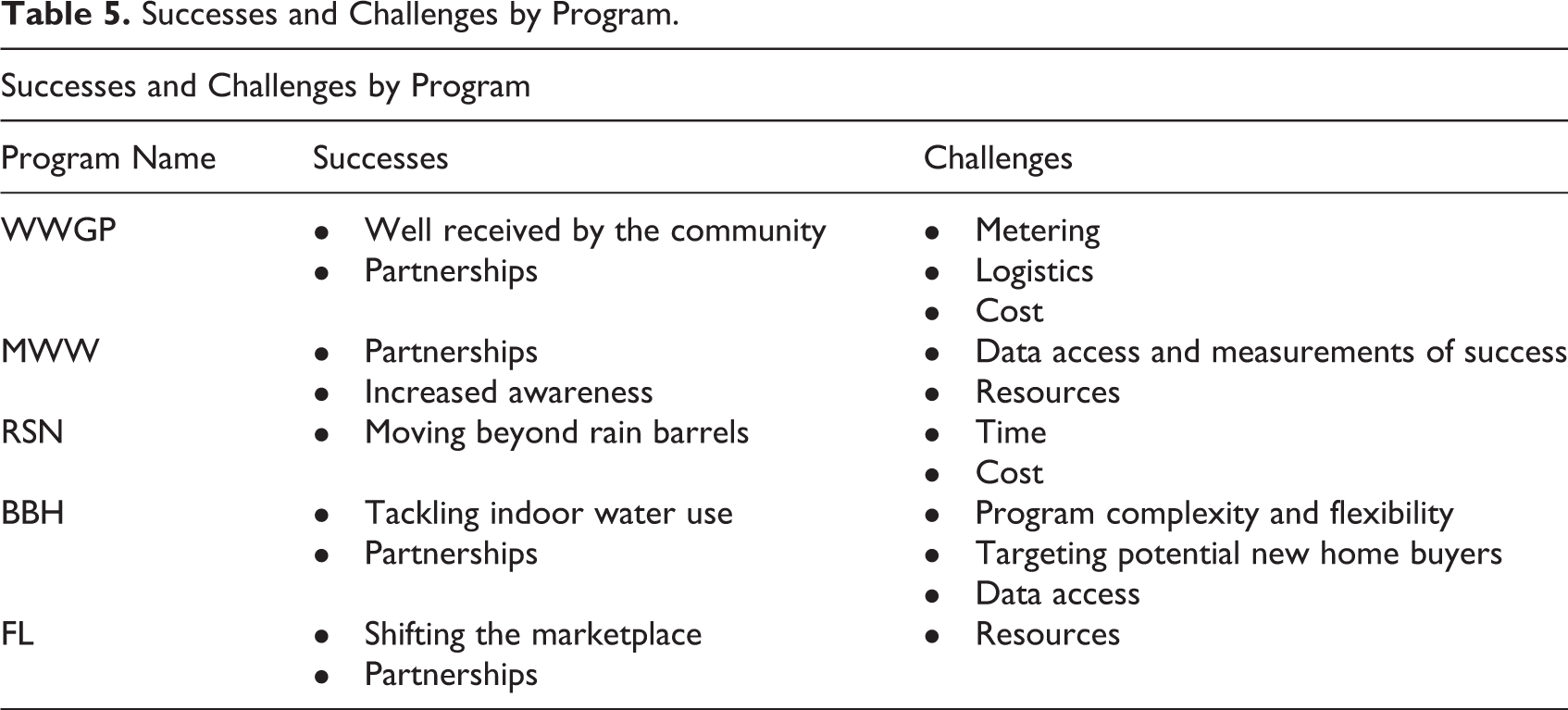

Successes and challenges noted by each of the programs are displayed in Table 5. These successes and challenges were discussed regarding program development, program implementation, program strategy, and the CBSM model. Since these discussions were limited to the survey, interview, and secondary literature, this is not an exhaustive list of these programs’ successes and challenges. Future community programs that endeavour to implement similar strategies to the ones highlighted in this research may benefit from seeing what other programs have accomplished, and where there are lessons to be learned.

Table 5. Successes and Challenges by Program.

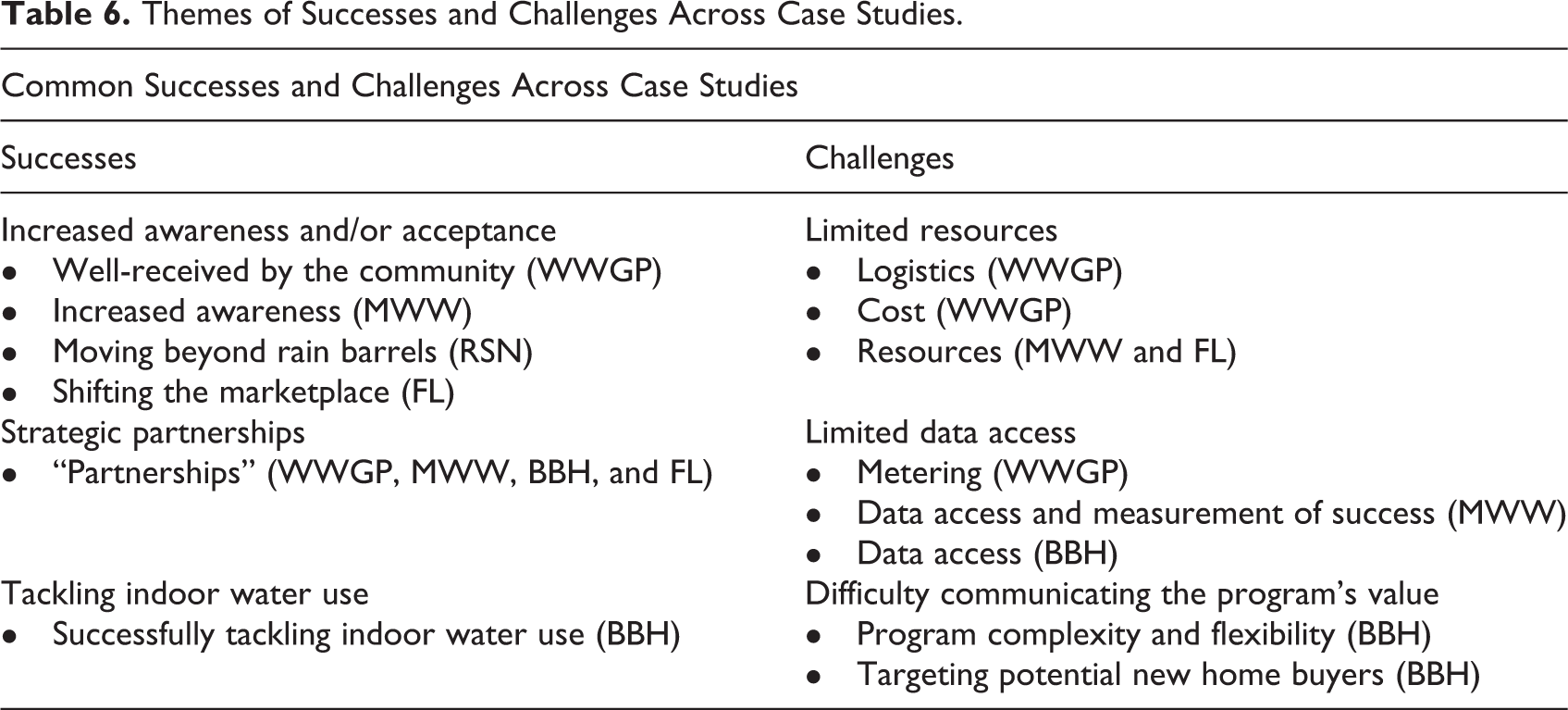

It is interesting that while each program experienced a unique set of successes and challenges, there was also considerable overlap in terms of higher-level themes in these areas. Programs differed greatly in terms of the type of organization, the employed strategies, and the various external factors that influence program implementation, such as the environmental, infrastructural, societal, and geopolitical landscape that the programs were operating within. Nonetheless, common successes and challenges were discussed. Table 6 displays the common themes identified for both successes and challenges, as well as which programs identified a success or challenge that fit within that theme. “Increased awareness and/or acceptance” as well as “strategic partnerships” were two apparent themes that participants pointed to when discussing the success of their programs.

Table 6. Themes of Successes and Challenges Across Case Studies.

Conversely, “limited resources” was the most common challenge encountered, with resources encompassing financial, time, and human resources. Close behind the challenge of resources were difficulties accessing data, which have implications for a program’s ability to measure success concretely. The third group of successes and challenges are outliers because they exclusively include successes and challenges noted by the BBH program. Since the BBH program was the only case study that targeted indoor residential water efficiency behaviour, the nature of the successes and challenges it faced would presumably differ from those of programs that target outdoor water efficiency behaviours.

Discussion

The following section will explore the benchmark performances of each of the five steps of the CBSM model, discuss the successes and challenges encountered by programs, and make recommendations for programs to more closely align their strategies with the intended model. It will conclude with a discussion of the limitations of this study.

Step 1: Selecting Behaviours

The four benchmarks within Step 1 pertain to selecting behaviours to promote in the program. The majority of programs only partially or did not integrate benchmark 1.4 (“limits the number of targeted behaviours to no more than six non-divisible behaviours”) and benchmark 1.2 (“selects non-divisible and end-state behaviours”), which suggests opportunities for improved adherence to the CBSM model in these areas. The primary reason why programs did not achieve full integration of both of these benchmarks was that they selected behaviours that were divisible into more than six behaviours, meaning they were also not end-state. Divisible behaviours refer to actions that can be broken down further; for example, “lawn and garden water efficiency behaviours” can be broken down into behaviours such as installing a cistern to collect rainwater for gardening, watering the lawn at appropriate times of the day, or planting drought-tolerant plants. In the CBSM model, the rigid approach to selecting a limited number of non-divisible behaviours is important because barriers and benefits are behaviour-specific; each behaviour has a unique set of considerations that require different strategies to address them (McKenzie-Mohr, 2011). At the 2019 World Social Marketing Conference held in Edinburgh, Scotland, Doug McKenzie-Mohr presented about the importance of “selecting and unpacking behaviours,” which suggests that McKenzie-Mohr also recognizes behaviour selection as an area for improvement in programs (McKenzie-Mohr, 2019a). However, the suggestion to limit the number of non-divisible, end-state behaviours in each campaign should not be interpreted as CBSM “narrowly target[ing] one behaviour change at a time” (McKenzie-Mohr et al., 2011, p. 87). This is a “common but mistaken criticism of CBSM” (McKenzie-Mohr et al., 2011, p. 87) that reflects a misunderstanding about what is most important to target. 
It is not that a program should only target one behaviour at a time, but rather that the level of specificity required to understand the barriers and benefits of each behaviour warrants an intentional approach to strategizing for each behaviour. Programs that are struggling to influence desired behaviours may consider narrowing their focus to a limited number of end-state, non-divisible behaviours in order to strategically address the unique barriers and benefits of each behaviour (McKenzie-Mohr et al., 2011).

The performance of benchmark 1.3 (“evaluates behaviour for impact, probability, and penetration”) also suggests an opportunity for improved program methodology. Challenges that programs encountered at this benchmark were often related to measurement and resource constraints, such as a lack of metered data collecting information about impact, probability, or penetration. Lack of data collection and resources were common challenges identified by programs and will be discussed in greater detail in a subsequent section of this Discussion. In cases where concrete measurements (such as water consumption data) are not available, programs should consider incorporating self-report, observational, or even secondary data collection measures for investigating impact, probability, and penetration of the target behaviours.

Step 2: Barrier and Benefit Research

The second step of CBSM contains only two of the 21 CBSM benchmarks; however, these benchmarks are essential for capturing a program’s ability to understand their audience well enough to target and reduce the barriers of the desired behaviour, as well as to increase the benefits. Benchmark 2.1 (“conducts barrier and benefit research for each potential segment of the target audience”) was one of the four most commonly integrated benchmarks, with all five case studies fully integrating this benchmark. It is interesting then that benchmark 2.2 (“distinguishes between barriers and benefits that are internal versus external to the target audience”) was poorly integrated, with only one program fully integrating and three not integrating it at all. This result suggests that while programs are collecting information about the barriers and benefits that their target audience experiences generally, they could better align with the CBSM framework by further categorizing those barriers and benefits into internal (e.g., lack of audience understanding of water efficiency best practices) versus external (e.g., lack of appropriate water infrastructure at the community-level) factors for the target audience. Both internal and external factors can inhibit or encourage the adoption of behaviour; therefore, understanding what barriers and benefits are internal and external to the audience is important for developing effective strategies (McKenzie-Mohr & Schultz, 2014). For example, a curbside organics collection program in Halifax, Canada, identified that the “yuck factor” of composting (an internal barrier) and the space required to own and operate a compost cart (an external factor) were barriers to collecting kitchen organics. To overcome these, the program allowed the use of bags to contain the organics and limit the “yuck factor,” as well as gave an option for residents to share carts to reduce the amount of space required (McKenzie-Mohr et al., 2011). 
This is a simple example of how an understanding of both internal and external factors enabled the development of an effective strategy.

Step 3: Developing Strategies

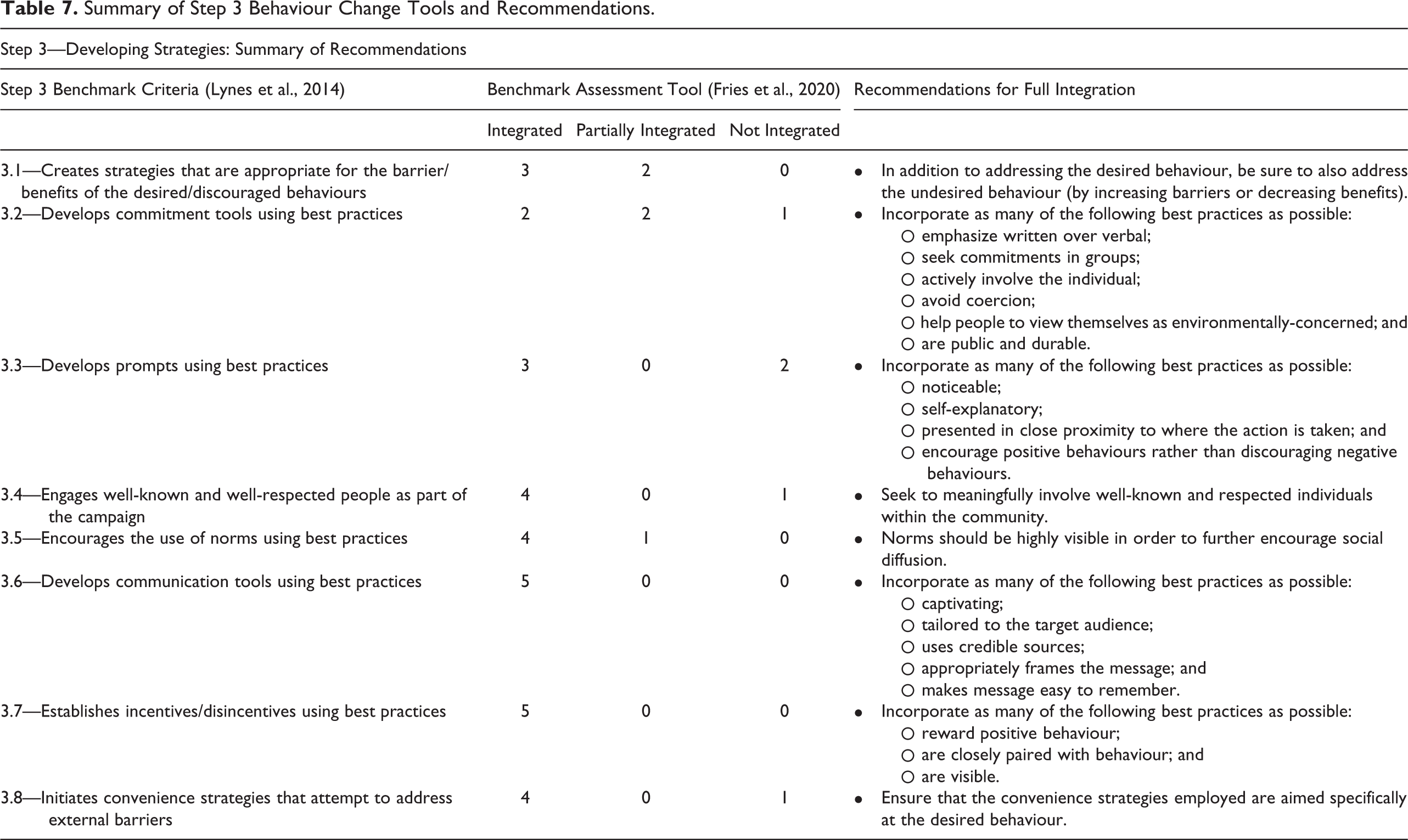

The third step of CBSM is to develop strategies for using behaviour change tools grounded in psychology and social marketing. The CBSM textbook outlines best practices for seven tools that can be implemented to increase the benefits and decrease the barriers of the desired behaviour, while simultaneously decreasing the benefits and increasing the barriers of the undesired behaviour (McKenzie-Mohr, 2011). Table 7 summarizes the performance of each of the eight benchmarks within Step 3 and the associated recommendations for achieving full integration.

It is worthwhile to elaborate on a few benchmarks within Step 3. Benchmark 3.1 (“creates strategies that are appropriate for the barriers/benefits of the desired/discouraged behaviours”) was relatively well done, but it is noteworthy that two programs only partially integrated the benchmark. These programs received assessments of partially integrated because, while they may have addressed the barriers and benefits of the desired behaviour successfully, they did not simultaneously decrease the benefits and increase the barriers to the undesired behaviours. Influencing the undesired behaviour is considerably harder to do; programs that fully integrated benchmark 3.1 utilized tools such as levying local taxes, fees, or bylaw enforcement, which require the authority of being (or partnering closely with) a municipal government. A notable exception to this is the FL case study, which fully integrated this benchmark and addressed both desired and undesired behaviours without the use of additional fees or taxes. FL’s utilization of a market-based strategy to increase market demand for water-efficient landscaping had the inverse effect of driving down consumer demand for (in effect, decreasing the benefits of) inefficient landscapes. In focusing heavily on water-efficient landscaping aesthetics, FL positioned traditional landscapes as boring, dull, and expensive to maintain (Patterson et al., 2017). Strategic use of marketing may be an attractive option to consider for organizations that do not have the authority or desire to utilize disincentives like taxes, fees, and bylaw enforcement.

Additionally, benchmarks 3.2 (“develops commitment tools using best practices”) and 3.3 (“develops prompts using best practices”) warrant additional discussion. The results for these two benchmarks are mixed, which suggests there may be some difficulty in integrating these two tools compared to the others. In the benchmark assessment tool, each of these benchmarks includes prescriptive best practices for aligning with CBSM’s framework. Benchmark 3.2 (“commitment”) lists six best practices, while benchmark 3.3 (“prompts”) lists four (see Table 7’s recommendations to view the prescribed best practices). The fact that these best practices are outlined explicitly suggests that it is not enough to simply include some form of commitment or prompt; rather, commitments and prompts should be designed deliberately using these best practices. The MWW campaign was able to fully integrate both benchmarks 3.2 and 3.3 by developing a public online pledge for residents to sign and offering promotional materials that also served as prompts for the desired behaviours. Programs struggling to incorporate commitments and prompts effectively should strive to incorporate each of the best practices outlined within benchmarks 3.2 and 3.3 in order to align with the CBSM framework successfully.

Summary of Step 3 Behaviour Change Tools and Recommendations.

The final consideration for Step 3 is that, in some cases, certain behaviour change tools may simply not be appropriate to utilize within a program. There is currently no evidence to suggest that failing to integrate some of the tools is detrimental to a program. In Choosing Effective Behaviour Change Tools (2014), McKenzie-Mohr introduces the behaviour change tools and outlines when and how to use them, but there is no implication that integrating every tool is required for success. It could be suggested that successful integration of the CBSM framework is not about how many of the behaviour change tools were integrated, but rather how well the tools employed addressed the desired behaviours; however, further research is required to investigate this suggestion. For programs looking to align their strategies with the CBSM framework, arguably the most critical element when making strategic decisions is to simultaneously target the desired behaviours (increase benefits + decrease barriers) and the undesired behaviours (decrease benefits + increase barriers). This strategic targeting of the behaviours is reflected in benchmark 3.1 and requires strong audience insights developed in Step 2’s barrier and benefit research.

Step 4: Piloting

The results in Step 4 are mixed but also provide insights into how the programs conducted pilots. Of the five benchmarks within Step 4, two were fully or partially integrated by all five case studies: benchmarks 4.1 (“develops a pilot that can be compared with baseline measurements”) and 4.5 (“focuses only on strategies that can be implemented on a broad scale”). This indicates that all case studies conducted pilot projects that could be adapted for wide-scale implementation. In contrast, the two worst-performing benchmarks from the entire set of 21 were benchmarks 4.2 (“utilizes a control group”) and 4.3 (“participants are randomly selected and assigned to groups”). In particular, benchmark 4.3 was the least integrated benchmark across all programs, with only one case study partially integrating it. Notably, these two benchmarks are related; without a control group, random assignment to a control or strategy group is not possible. Incorporating control groups allows program developers to determine the effectiveness of their strategy by comparing the results of a test group that received the intervention with a comparison group that did not receive any intervention (McKenzie-Mohr et al., 2011). Case studies did not use control groups or random selection and assignment for a variety of reasons. Most commonly, programs utilized residential neighbourhoods as pilot areas, making segmenting and randomly assigning participants to groups difficult. Programs may consider utilizing demographic or household characteristics to segment and target pilot audiences when it is appropriate to do so.
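
To make the relationship between benchmarks 4.2 and 4.3 concrete, the following minimal Python sketch shows one way a pilot could randomly assign households to a control or strategy group within segments defined by a household characteristic. It is an illustration only: the household identifiers, the `lot_size` attribute, and the `assign_groups` function are hypothetical and are not drawn from any of the case studies.

```python
import random
from collections import defaultdict


def assign_groups(households, stratum_key, seed=0):
    """Randomly split households into control/strategy groups within each stratum.

    `households` is a list of dicts with an "id" and the stratum attribute.
    A fixed seed keeps the assignment reproducible for later auditing.
    """
    rng = random.Random(seed)

    # Group household ids by the chosen characteristic (hypothetical segments).
    strata = defaultdict(list)
    for household in households:
        strata[household[stratum_key]].append(household["id"])

    assignment = {}
    for _, ids in sorted(strata.items()):
        rng.shuffle(ids)          # random order within the stratum
        half = len(ids) // 2      # first half control, remainder strategy
        for hid in ids[:half]:
            assignment[hid] = "control"
        for hid in ids[half:]:
            assignment[hid] = "strategy"
    return assignment


# Hypothetical pilot area of 12 households, segmented by lot size.
households = [
    {"id": f"H{i:02d}", "lot_size": "large" if i % 2 else "small"}
    for i in range(1, 13)
]
groups = assign_groups(households, "lot_size", seed=42)
```

Assigning within segments keeps the two groups comparable on the chosen characteristic, which may be one practical route for programs whose pilot areas are whole neighbourhoods.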

The final benchmark to consider within Step 4 is benchmark 4.4 (“evaluates the strategy through the use of unobtrusive measurements”), which returned mixed results at best. While some programs successfully employed unobtrusive measurements of the desired behaviour, other programs relied on self-report data or did not collect measurement data at all. Unobtrusive measurements are important for measuring program effectiveness because they are more likely to depict behaviour change accurately (McKenzie-Mohr, 2011). However, unobtrusive measurements are also considerably more challenging to employ; the behaviours that programs are targeting are often private and difficult to measure without installing metering technology. Similar to earlier discussions of benchmark 1.3 (“evaluates behaviour for impact, probability, and penetration”), benchmark 4.4 is another example of how the lack of access to data posed a challenge for programs. Thus, many programs were limited to self-report measurements. However, the FL case study overcame this challenge by utilizing a “photographic catalogue” for outdoor landscape changes, which did not require the installation of metering technology but did allow for unobtrusively measuring the impact of the pilot.

Step 5: Evaluation and Broad-Scale Implementation

The fifth and final step of CBSM is “evaluation and broad-scale implementation,” which includes two benchmarks. Benchmark 5.1 (“measures activity prior to implementation and at several points afterwards”) showed mixed degrees of integration across the case studies. For full integration of this benchmark, it is suggested that programs measure the target audience’s activity after the pilot has been completed and before the program is widely implemented, as well as at several times after implementation (McKenzie-Mohr et al., 2011). Some programs did this successfully, while others only measured implementation post-hoc or not at all. The lack of robust monitoring activities may help explain why there is a dearth of knowledge about how CBSM has been implemented and why there has been relatively little uptake of Lynes et al.’s (2014) CBSM benchmarks; however, future research is required to explore this hypothesis further.

The final benchmark, benchmark 5.2 (“utilizes evaluation data to retool strategy and/or provide feedback to the community”), was partially integrated by all five case studies. In every case, programs used what they had learned to “retool” their strategy; however, no programs indicated that they provided feedback to the community. Providing feedback about the impact that the behaviour change has had on the environment is important for reinforcing the changes that have been made and establishing the individuals’ self-perception as someone who is environmentally minded (McKenzie-Mohr, 2011). Thus, providing the community with feedback is essential to maintaining the strategy on a broader scale and over the longer term. While revising the strategy over time is certainly important, one could suggest that developing a feedback loop with the target audience would only serve to bolster efforts to improve programs.

Successes and Challenges

From a CBSM and behavioural standpoint, the most important metric of success would be the CBSM interventions’ impact on residents’ overall water consumption or use. However, challenges discussed by case study participants illuminate the complexities of accessing, implementing, and obtaining the necessary data on a large scale. The two most commonly discussed challenges faced by programs were limited access to data and limited access to resources. Limited access to data, especially within a water-efficiency context, meant that programs lacked important measurements like household water consumption, peak-day usage, stormwater run-off reduction, or whichever metric captures the specific water-use behaviour being targeted. When asked if the lack of metering was a significant barrier, one participant responded: “Absolutely…how can we measure the success of this program?” (P04, 2019)

Similarly, the other most commonly discussed challenge that programs faced was related to accessing resources. Resources include financial resources, as well as the resources that employees provide to programs, including time and effort, and the physical and intangible resources required to implement the program. In the case of both of these challenges, managerial buy-in and momentum from high-level decision-makers are often required. In order to achieve this buy-in, increased information and training about behaviour change frameworks like CBSM, as well as advocating for the importance of collecting household data, is likely required.

Although behaviour change outcomes were outside the scope of this research, programs did discuss areas in which they felt they had achieved success. The two most commonly discussed successes were increasing awareness and acceptance of the desired behaviour and the strategic use of partnerships with other local organizations. Programs that reported increased awareness or acceptance based their evaluations on factors such as how well the program was received by the community, the amount of media attention and community awareness that existed around the campaign, or whether they were able to accomplish objectives of their own. While increasing awareness and acceptance is a successful outcome, research has indicated that awareness of environmental issues does not translate into behaviour change (Kollmuss & Agyeman, 2002; Komendantova & Neumueller, 2020; McKenzie-Mohr, 2011). Whenever possible, programs should aim to align their indicators of success with measurements of changes to the desired behaviour.

Strategic use of partnerships was important to the success of programs for several reasons, but most importantly, it allowed programs to address resource challenges and ultimately expand their capacity. For example, one participant noted: “I think the only thing I would add is how important it’s been to have those partners. I couldn’t do it alone from our office. Cost-wise, it would be very difficult. Being able to leverage a $25,000 campaign into $50,000, we are able to do a lot more…Plus just the amplification of the message by having those partners is huge.” (P05, 2019)

Limitations

It is necessary to acknowledge the limitations of the study’s design and implementation, namely:

Lack of generalizability: Due to the small sample of programs investigated, as well as the nature of this qualitative inquiry, the results of this study cannot be generalized to other programs that utilize CBSM.

Unable to make a determination of programmatic success: No determination of the relative behaviour change “success” of each case study was possible because a common metric of success was not used across programs, and in some cases, measurements were not collected. This research’s main contribution to the literature is a method for exploring how the CBSM model has been implemented into practice across multiple case studies, which provides a stepping stone toward evaluating the success of the CBSM methodology and its influence on behaviour. However, future research is needed to determine the actual impact that increased alignment with the CBSM framework has on a program’s success at influencing desired behaviours.

Limitations of the benchmark assessment tool: In pursuing this objective and in being one of the first to operationalize this set of benchmarks, many lessons were learned along the way about the benchmarks themselves, the assessment process, and how these benchmarks translate from theory to practice. Firstly, assessing the benchmarks using the qualitative criteria of “integrated,” “partially integrated,” and “not integrated,” even with the benchmark assessment tool, leaves ample room for interpretation (Lynes et al., 2014; Wettstein & Suggs, 2016). To combat this, future endeavours may consider bolstering the benchmark criteria by including tools such as the Social Marketing Indicator (SMI), which is a visual representation of the extent to which an intervention corresponds to social marketing methods based on two procedural dimensions: process integrity and process quality (Wettstein & Suggs, 2016).

There were also a few limitations to the benchmarks themselves. Each benchmark is weighted equally, which does not indicate priority actions within the benchmarks. There is also no prescribed minimum number of benchmarks that a program must integrate to have implemented CBSM successfully (Lynes et al., 2014; Wettstein & Suggs, 2016). Finally, the set of benchmarks that were developed by Lynes et al. (2014) did not entirely align with McKenzie-Mohr’s (2011) CBSM principles.
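
The consequence of equal weighting can be illustrated with a minimal Python sketch that tallies three-level benchmark ratings for a single program and, optionally, applies weights. Everything in it is an assumption made for illustration: the benchmark labels (`B1` to `B4`), the ratings, the scoring of a partial rating as 0.5, and the alternative weights are placeholders, not values from this study or from Lynes et al. (2014).

```python
from collections import Counter

LEVELS = ("integrated", "partially integrated", "not integrated")

# Assumed scoring: full = 1, partial = 0.5, none = 0 (not prescribed by the benchmarks).
POINTS = {"integrated": 1.0, "partially integrated": 0.5, "not integrated": 0.0}


def summarize(ratings):
    """Return the share of benchmarks rated at each integration level."""
    counts = Counter(ratings.values())
    total = len(ratings)
    return {level: counts[level] / total for level in LEVELS}


def weighted_score(ratings, weights=None):
    """Return a score in [0, 1]; every benchmark counts equally unless weights are given."""
    if weights is None:
        weights = {benchmark: 1.0 for benchmark in ratings}
    total_weight = sum(weights[benchmark] for benchmark in ratings)
    earned = sum(weights[b] * POINTS[rating] for b, rating in ratings.items())
    return earned / total_weight


# Placeholder ratings for one hypothetical program.
example = {
    "B1": "integrated",
    "B2": "integrated",
    "B3": "partially integrated",
    "B4": "not integrated",
}

shares = summarize(example)
equal = weighted_score(example)
# Hypothetical weights that treat B4 as three times as important as the others.
prioritized = weighted_score(example, {"B1": 1, "B2": 1, "B3": 1, "B4": 3})
```

Under equal weighting, the missing benchmark lowers the overall score no more than any other would; once it is given a higher weight, the same ratings produce a noticeably lower score. Which benchmarks, if any, deserve greater weight remains an open question.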

Conclusion

In pursuing this research, it became clear that there is an inherent tension between CBSM theory and practice. The case studies featured did not develop and implement their programs based exclusively on CBSM methodology, which resulted in some tensions between trying to adhere to the theory while also accounting for what was done in practice and how. Particularly challenging in comparing theory and practice was the principle that “each step builds on those that precede it” (McKenzie-Mohr et al., 2011, p. 44). In theory, this consecutive approach is pragmatic and straightforward; in practice, however, it became clear that programs learned, evaluated, and modified their strategies as they evolved, and often did not follow the linear sequence of the five CBSM steps. It was sometimes difficult to accurately capture this information within the benchmark assessment tool, both from the perspective of capturing the program’s activities and honouring the chronology of the CBSM methodology. These tensions between the programs and the CBSM model raise the question: how much can (or should) the CBSM theoretical model be altered to suit the needs of the programs before it loses its theoretical value in changing behaviour? There were many reasons why these programs did not rigidly adhere to the CBSM principles. The CBSM framework and benchmarks do not identify anything “wrong” about altering or sampling from a theory; however, this research does call into question how effective that framework then becomes once implemented in the community. Another question that emerges is, when tensions between theory and practice arise, who wins: theory or practice? And, in either case, how is that accomplished? Challenges exist in both scenarios, such as maintaining the integrity of theory and program effectiveness (i.e., a theoretical challenge) or obtaining adequate knowledge, resources, and buy-in from the outset of a program (i.e., a practitioner challenge). Without knowing how effective these programs have been in changing behaviour and which benchmarks are most important to achieving program success, it is difficult to address these questions.

The five case studies presented in this research allowed for a holistic exploration of how each featured program integrated the CBSM model, and the CBSM benchmark assessment tool enabled the researcher to streamline assessment across multiple case studies, determine the degree to which each case study integrated each benchmark, and identify areas for better alignment with the CBSM model. In pursuing this study, a number of recommendations and considerations for future research were identified and can be summarized as follows:

Scrutinize and validate benchmarks: While Lynes et al.’s (2014) CBSM benchmarks and this paper’s proposed CBSM benchmark assessment tool offer a systematic approach to evaluating a program’s CBSM integration, the benchmarks and tool remain unscrutinized. There are likely several critical considerations that may suggest areas for future refinement of the CBSM benchmark criteria and the assessment tool.

Increase the sample size: While the case studies in this research are useful for an in-depth exploration of each program featured, they are not generalizable, and thus no inferences can be made about pro-environmental programming or CBSM programming more generally. Further, this research focused exclusively on water-efficiency programs; however, CBSM can be employed in a wide range of environmental disciplines. There is a need for increased breadth of knowledge about how the model applies in various contexts, environments, and disciplines.

Quantify effectiveness: Since the CBSM model can be applied to a variety of diverse contexts and programs, discussions of relative “success” are currently limited to a case-by-case basis. Quantitative analysis that yields statistical information about the effectiveness of CBSM programs in fostering sustainable behaviour is necessary to make truly reliable and robust claims about the success of CBSM. However, without a common metric to measure each CBSM program’s success against others, the true effectiveness of the CBSM model in eliciting behaviour change is difficult to determine beyond anecdotal evidence. This challenge is compounded by the fact that measuring changes in behaviour reliably is notoriously challenging and also varies from case to case, making quantifying effectiveness an elusive, yet necessary, future objective.

Supplemental Material

Supplemental Material, CBSM_in_Theory_and_Practice___SMQ_supp._material_-_appendices for Community-Based Social Marketing in Theory and Practice: Five Case Studies of Water Efficiency Programs in Canada by Sarah Fries, Julie Cook and Jennifer Kristin Lynes in Social Marketing Quarterly

Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.
