Abstract

Dear editor,

We were interested to read the paper by Álvaro-Afonso FJ et al.1 published in the April 2018 issue of Diabetes & Vascular Disease Research. The authors' aim was to determine the interobserver reliability of the ankle-brachial index, toe-brachial index and distal pulse palpation depending on the training of the professional involved.1 The ankle-brachial index, toe-brachial index and distal pulses of 21 patients (42 feet) were assessed on the same day by three clinicians with different levels of experience. Interobserver agreement was interpreted using the kappa statistic. According to their results, kappa values for the palpation of the posterior tibial and dorsalis pedis arteries were K = 0.45 (moderate) and K = 0.33 (low), respectively. Agreement between clinicians on the ankle-brachial index was K = 0.43 (moderate) in patients with medial arterial calcification and K = 0.4 (low) in patients with a normal ankle-brachial index. The measurement of the toe-brachial index also showed moderate agreement between clinicians, both in patients with a normal toe-brachial index (K = 0.4) and in patients with medial arterial calcification (K = 0.60).1

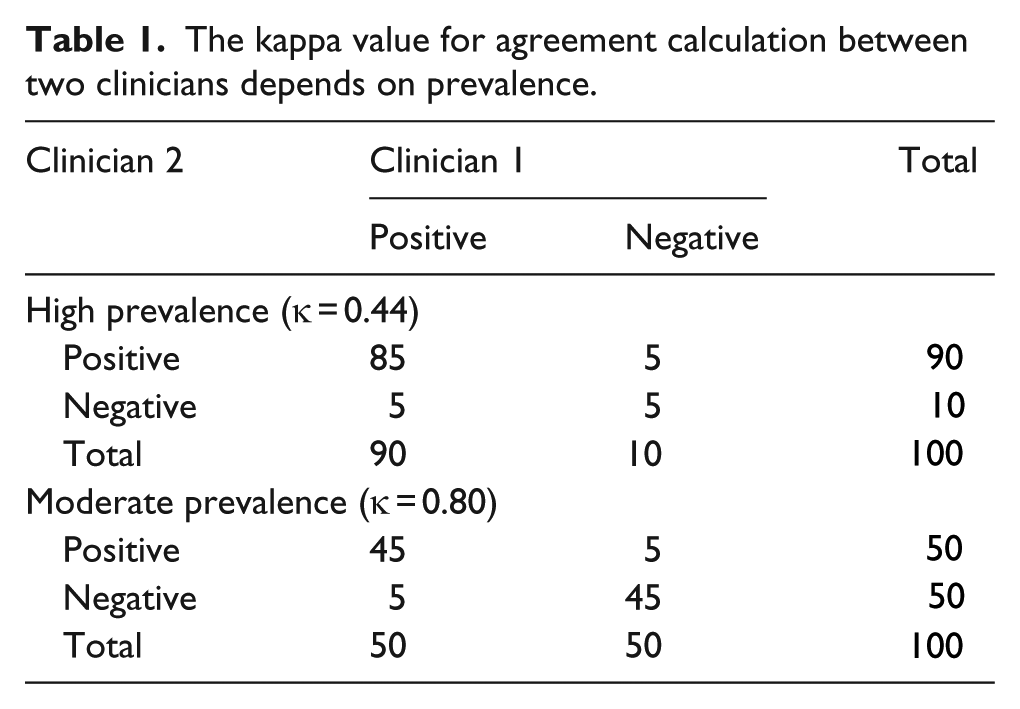

It is crucial to note that no value of kappa can be universally accepted as a sign of good agreement. As a measure of agreement for a qualitative variable, kappa has two weaknesses. First, it depends on the prevalence in each category; in other words, different kappa values can arise from the same percentages of concordant and discordant cells. Table 1 shows that in both situations (a) and (b), the concordant (agreement) and discordant (disagreement) cells account for 90% and 10% of observations, respectively, yet the kappa values differ (0.44, moderate, and 0.80, very good). Second, the kappa value also depends on the number of categories.2–6 In such situations, especially with more than two observers, we suggest applying weighted or Fleiss kappa, since these estimates provide unbiased results.2–6

Table 1. The kappa value for agreement calculation between two clinicians depends on prevalence.
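The prevalence dependence described above can be checked numerically. The following is a minimal sketch in Python that computes Cohen's kappa from a 2 × 2 agreement table. The cell counts are hypothetical, chosen only so that both tables have 90% observed agreement in a sample of 100; they are not taken from the original study and need not match the cells of Table 1. The function name `cohen_kappa` is likewise an illustrative assumption.

```python
def cohen_kappa(table):
    """Cohen's kappa for a square agreement table.

    Rows are the ratings of clinician A, columns those of clinician B.
    """
    k = len(table)
    n = sum(sum(row) for row in table)

    # Observed agreement: proportion of observations on the diagonal.
    observed = sum(table[i][i] for i in range(k)) / n

    # Agreement expected by chance, from the marginal totals.
    row_totals = [sum(row) for row in table]
    col_totals = [sum(table[i][j] for i in range(k)) for j in range(k)]
    expected = sum(row_totals[i] * col_totals[i] for i in range(k)) / n ** 2

    return (observed - expected) / (1 - expected)


# Hypothetical counts (assumption): both tables have 90 concordant
# and 10 discordant observations out of 100.
skewed = [[85, 5], [5, 5]]      # one category is far more prevalent
balanced = [[45, 5], [5, 45]]   # both categories are equally prevalent

kappa_skewed = cohen_kappa(skewed)
kappa_balanced = cohen_kappa(balanced)

print(round(kappa_skewed, 2), round(kappa_balanced, 2))
```

With these assumed counts the observed agreement is 0.90 in both tables, but the chance-expected agreement is 0.82 in the skewed table and 0.50 in the balanced one, so kappa is about 0.44 in the first case and 0.80 in the second.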

The authors concluded that the palpation of distal pulses and the determination of the ankle-brachial index and toe-brachial index in patients with diabetes are not highly reproducible or reliable between clinicians with different levels of experience under routine conditions. In this letter, we have discussed two important limitations of the kappa value as a measure of reliability.2–6 Any conclusion drawn from a reliability analysis needs to take into account the methodological and statistical issues mentioned above.

Footnotes

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship and/or publication of this article.