Abstract

Education reform efforts stemming from the Programme for International Student Assessment (PISA) have strengthened in recent years, particularly in response to the growth of global reference societies – high-achieving educational jurisdictions such as Finland, Hong Kong-China, and more recently Estonia and Singapore. Despite political rhetoric, evidence-based policy development associated with this international benchmark measure is rarely, if ever, a neutral enterprise guided by the best available evidence. Indeed, political discourse and policy framing surrounding PISA often result in the selective use of results to justify contested policy reforms. Brief cases from Japan, Sweden, and Canada illustrate how national policies have been adopted that are not grounded in, and may even run counter to, research findings. The discussion examines the politicization of PISA and its symbolic role in adding legitimacy to education reform agendas. Collectively, the analysis offers an alternative perspective to the popular notion that PISA guides evidence-based decision-making.

Keywords

Introduction

Since the initial administration of the Programme for International Student Assessment (PISA) in 2000, this international benchmark measure has grown steadily in global importance. Administered every 3 years by the Organisation for Economic Cooperation and Development (OECD), PISA assesses reading, mathematics, and science literacy skills cross-nationally in a representative sample of 15-year-old students. In addition to these areas, the OECD has added tests of Financial Literacy in 2012, Collaborative Problem-Solving in 2015, Global Competencies in 2018, Creative Thinking in 2022, and the forthcoming Digital World assessment in 2025. Collectively, the range of assessment areas, along with broad international participation levels, which included 90 countries/economies and approximately 3,000,000 students globally in the most recent PISA administration in 2022, makes this triennial survey particularly appealing to policymakers around the world. Indeed, cross-national research studies and public policy discourses suggest PISA is the most influential international benchmark measure when compared against other large-scale tests such as the Trends in International Mathematics and Science Study (TIMSS), Progress in International Reading Literacy Study (PIRLS), and/or International Computer and Information Literacy Study (ICILS), administered by the International Association for the Evaluation of Educational Achievement (IEA) (Carnoy et al., 2016; Volante, 2016).

The widespread and evolving use of PISA as a critical policy lever is evidenced by a wide range of national and cross-national studies that underscore its influence on strategic planning and system-reform discourses (Baird et al., 2011; Breakspear, 2012; Niemann et al., 2016; Pons, 2012; Teodoro, 2022; Volante, 2018). Undoubtedly, there is no continent or corner of the world that has not been captivated by the explanatory power of PISA, or by the seemingly straightforward policy prescriptions that stem from the highly anticipated results (Sellar and Lingard, 2013; Sjøberg and Jenkins, 2022). Supported by its open access PISA in Focus series of policy briefs and a plethora of country profiles and policy reports, the OECD encourages national governments to learn from successful educational jurisdictions (often referred to as reference societies) in the hopes of emulating their relative success (Harris et al., 2016; Santos and Centeno, 2023; Volante and Klinger, 2023). Unfortunately, the latter occurs despite academic concerns with the often-touted technical excellence of this measure (Goldstein, 2018; Hopfenbeck et al., 2017; Rutkowski and Rutkowski, 2013; Zieger et al., 2022). Overall, the use of PISA to make causal determinations of policy effects, along with unintended negative consequences such as an emphasis on system homogenization, narrowing of the curriculum, and the predictable focus on short-term education ‘fixes’ (Meyer and Zahedi, 2014; Sellar et al., 2017; Zhao, 2020), remain pressing, and widely documented, challenges associated with the utilization of this international large-scale assessment survey.

The present discussion is chiefly concerned with examining the dynamics between PISA, politics, and evidence-based policy discourses. Policy-making based on the utilization of large-scale assessment data is not merely a technical planning exercise; rather, it requires framing and persuasion strategies (Breakspear, 2012; Weible et al., 2012). Drawing on brief cases from Japan, Sweden, and Canada, we illustrate how national education policies have been adopted that are not grounded in, and even run counter to, the best available evidence. In some cases, enacted policies have been strengthened in spite of underlying equity issues that are also referenced in OECD reports and policy briefs. Issues such as curriculum reform, school choice, test-based accountability, and national assessment design are discussed in relation to specific policy reforms and juxtaposed against the available evidence. In doing so, we illustrate how PISA results are often politicized in the domain of public policy and how policy framing and the associated construction of policy narratives reflect purposeful political strategies and ideologies. Collectively, the salience and interplay of cultural and political issues skew the use of test results, primarily to align with an overarching standards-based neoliberal orientation.

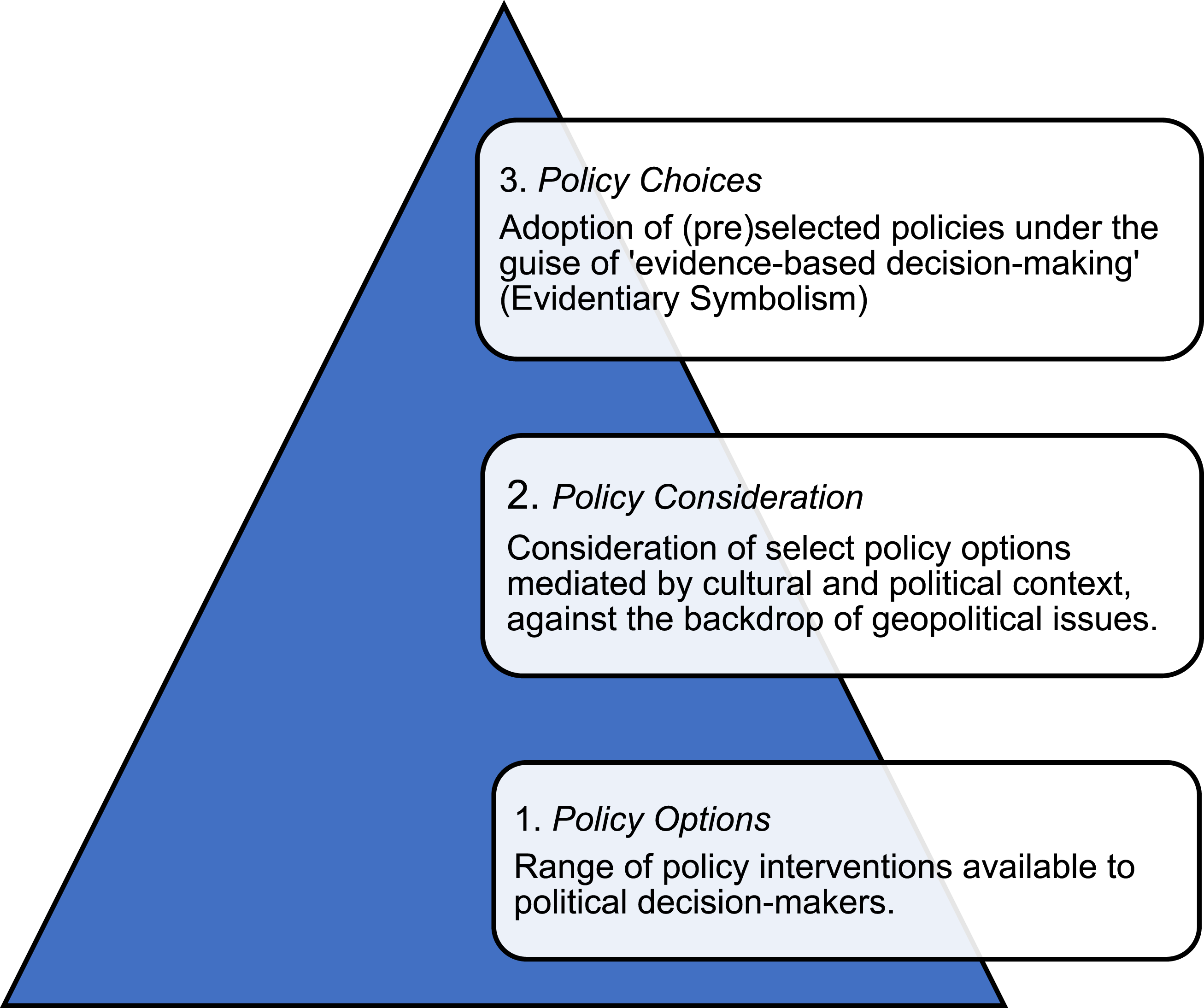

Prior to our critique of the conventional policy options-consideration-choices model, we situate international comparison testing within broader historical and geopolitical developments that have elevated standards-based reform and the accompanying use of large-scale testing measures for accountability purposes (Checchi and Mattei, 2021). In the next section we offer a brief critique of the role of large-scale assessment in shaping global education policy agendas, prior to examining the impact of PISA in specific national contexts. Although PISA burst onto the education scene at the turn of this century, it is part of a larger zeitgeist that has elevated the role and salience of international large-scale student testing data for accountability and system-reform purposes.

Large-scale assessment and global education policy

Large-scale assessments – particularly those tied to system accountability and policy objectives – have been in existence for well over a century. The Prussian education system, for example, included specific training and certification for teachers and student testing used primarily to determine suitability for job training (Volante, 2012). More recently, Margaret Thatcher’s British government popularized the coupling of standards, national student testing, and policy monitoring during the late 1980s, which helped facilitate its rapid uptake in other parts of the industrialized world (Mattei, 2012; Volante and Earl, 2013). Nevertheless, the rise of international large-scale assessments and the field of comparative education fundamentally changed national educational policy processes by benchmarking student performance against an international, not national, student achievement metric (Addey et al., 2019). Indeed, governments around the world now focus their gaze outward to gauge the quality and equity of their education system – focussing heavily on their positioning in international league tables and making cross-national comparisons to evaluate the relative ‘success’ of their education system. In many respects, the design and administration of measurement tools that matter now rest outside national boundaries, and in doing so, are undoubtedly less sensitive to specific cultural contexts.

Educational quality and equity are broadly conceptualized as high achievement standards that are shared by all students regardless of gender, socioeconomic status (SES), or migrant background. Unsurprisingly, more equitable education systems possess smaller achievement gaps between diverse segments of their student population as well as smaller performance variations across schools (Schnepf et al., 2019). Both the IEA and OECD encourage policymakers to juxtapose achievement gaps and performance variations against education system policies and structures. Yet, the academic community has raised numerous concerns with the growing influence of international organizations and the policy implications stemming from international test results (see Hopfenbeck, 2019; Lundahl and Serder, 2018; Meyer and Benavot, 2013; Sahlberg, 2016). In the case of PISA, these concerns were aptly summarized in an open letter addressed to the OECD Director for the Directorate of Education and Skills, Andreas Schleicher, that was published in The Guardian (British national daily) newspaper (Andrews, 2014). It is worth noting that this letter was signed by more than a hundred academics around the world (Meyer and Zahedi, 2014).

Chief among the concerns raised in The Guardian open letter were that the OECD and PISA: (1) shift attention to short-term fixes designed to help a country quickly improve its international rankings, despite research which consistently suggests changes in education take decades to come to fruition; (2) divert attention from the less measurable or immeasurable educational objectives like physical, moral, civic, and artistic development, thereby dangerously narrowing our collective view regarding the purpose of education; (3) are naturally biased in favour of the economic role of public schools over preparing students for participation in democratic self-government, moral action, and a life of personal development, growth, and well-being; and (4) through a continuous cycle of global testing, harm children and adversely impact schools, as this inevitably involves more testing, scripted lessons, and less professional autonomy for teachers. In this way, PISA has further increased stress levels in schools, which endangers the well-being of students and teachers (Andrews, 2014).

Despite the expansion of tested domains and greater attention to well-being and other non-cognitive student characteristics in recent years by the OECD, many of the concerns noted above remain. Overall, scholars around the world continue to assert that international large-scale assessment results, particularly those stemming from PISA, exert a hegemonic grip on global education policy agendas (see Ornelas, 2021; Seitzer et al., 2021). Even more disconcerting is that the global appeal of PISA has made these results particularly susceptible to politicization in ways that do not support best policy or practice (see Carvalho, 2020; Volante and Klinger, 2021; Zhao, 2020).

PISA, politics, and policy

For more than two decades PISA has captured the attention and imagination of popular media outlets, resulting in an unabated flurry of headline stories celebrating, bemoaning, and comparing the state of national education systems. Terms like ‘PISA-Shock’, initially used to describe poor German performance in PISA 2000, are now widely used by policymakers and popular media outlets around the world to describe poor national results, and relatedly, the need for education reform. Indeed, a quick Google search using the term ‘PISA Shock’ returned more than five million results (as of January 1, 2024). As previously suggested, PISA has been widely promoted and embraced by national policymakers as a key metric for judging educational quality and the effectiveness of their education system.

The degree to which national governments have utilized PISA results, however, varies across countries. For instance, in Italy and France, evidence-based policy making in education reforms has only been narrowly based on the PISA data (Breakspear, 2012; Damiani, 2016). In Europe, the trend towards test-based accountability is clearly visible in the introduction and subsequent development of the first General Certificate of Secondary Education (GCSE) examinations in England in 1988, and the INVALSI (Istituto Nazionale per la Valutazione del Sistema Educativo di Istruzione e di Formazione or National Institute for the Evaluation of the Education and Training System) tests in Italy in 1999. INVALSI is the Italian National Evaluation Agency responsible for the evaluation of the school system, and more generally, for the design and administration of assessment instruments in the Italian schooling system. PISA reports are rarely discussed in the national public media in Italy, despite the country’s performance below the OECD average and the deterioration of its results in PISA 2018. Instead, the national test results produced by INVALSI receive considerable public and media attention. This is not to say that education reforms in Italy are grounded in research evidence. For instance, the 2018 PISA results and earlier academic research (Checchi, 2004) have shown a steady increase in territorial differences in students’ performance between North and South Italy. Despite this trend, education reforms have generally not tried to address this problem systematically.

It is also worth noting that PISA attempts to occupy two parallel positions within global and national policy arenas: as an objective measure of student achievement, and by extension, educational quality; but also, as a promoter of ‘best-practices and policies’ gleaned from the available evidence. Thus, PISA is not merely descriptive in representing existing conditions, it is also performative in attempting to create new ones (Gorur, 2016). Higher correlations between national achievement results and select policies are ultimately the basis upon which ‘better policies for better lives’ (PISA website tagline) are identified and diffused via the OECD’s extensive online library and impressive knowledge mobilization mechanisms. Promoted policies are largely, although not exclusively, offered as an important way to improve a nation’s human capital. Unsurprisingly, given the salience of PISA scores, national test results are also reflected in related international projects such as the World Bank’s Human Capital Index (World Bank Group, 2020). Nevertheless, the human capital construct and the validity of statistical claims asserting that improvements in international test results will ultimately translate into higher Gross Domestic Product (GDP) growth rates remain highly contested (Forestier and Adamson, 2017; Kamens, 2015; Komatsu and Rappleye, 2017, 2021). Similarly, limitations of cross-sectional data, potential errors in survey estimates, fitness of international comparison testing for guiding national policymaking, as well as the explanatory power of single measures to capture the complexity of schooling outcomes, are equally problematic features associated with policy monitoring and evaluation tied to PISA data (Schnepf et al., in press).

Unfortunately, policymakers and media outlets have paid relatively scant attention to PISA’s limitations or questioned the underlying assumption that it operates as an objective and neutral policy lever. Indeed, PISA is often used as a starting point to contemplate policy reforms across Europe (Grek, 2009; Pons, 2012), North America (Brochu, 2014; Martens and Niemann, 2013), Asia (Liu, 2018; Takayama, 2010), Australasia (Davis et al., 2020; Waldow et al., 2014), and more recently, select parts of South America such as Brazil (Nato, 2022; Pugliese and Santos, 2022). Clearly, PISA occupies a privileged position in transnational and global policy discourses and is often referenced as a reliable and valid measure to help policymakers frame their policy choices in relation to evidence-based decision-making.

Modified evidence-based decision-making model

It is important to remember that the OECD is not an elected body with jurisdictional authority, nor are the policy suggestions disseminated by this international organization binding for member states. Nevertheless, the tools utilized for transmitting select policies and expert advice by the OECD are often referred to as a ‘soft power’ that results in transnational regulation and governance (Ornelas, 2021; Waldow and Steiner-Khamsi, 2019; Ydesen, 2019). As one example, the strategic framework for European cooperation in education and training contains seven key indicators – one of which stipulates that the share of 15-year-olds with insufficient reading, mathematics and science abilities, as measured by PISA, should be less than 15% (European Commission, 2022). The latter suggests PISA results are ascribed higher status and relevance than national and regional assessment indicators, which are undoubtedly more reflective of local teaching, learning, and cultural contexts.

Similarly, although it is tempting to assume stagnant or slumping PISA scores are used to support a cadre of new reform initiatives – or positive results to support current trajectories of reform – there are many instances where the trajectory of results, negative and/or positive, is largely irrelevant to the nature of the policy choices enacted. France and Italy are examples of education reform trajectories that seem indifferent to the policy discourse of PISA shocks. In such instances, PISA is merely a reference point to add legitimacy to a course of action that was sometimes predetermined prior to the release of results. Overall, the scope and trajectory of education reforms associated with PISA results can be heavily influenced by the political predilections of a governing party. Similar to the bending of light through a prism, PISA results can also be bent in response to political prisms.

The policy options-consideration-choices steps that undergird dominant evidence-based decision-making narratives are undoubtedly mediated by national and geopolitical issues, sometimes in ways that discount the available evidence base. They are also mediated by domestic bureaucratic traditions and culture. The concept and practice of public accountability, and the associated dimensions of monitoring and assessment, vary considerably across countries (Mattei, 2009). In Italy, for instance, the central administration (including the Department of Education) draws its legitimacy from the traditionalist Weberian model of public administration, concerned with internal control mechanisms and not output-legitimacy and service-oriented practices. Of course, educational jurisdictions around the world vary significantly in their uses of PISA – but it is clear that industrialized nations with a higher reactivity to international comparison testing and a more firmly entrenched history of standards-based reform tend to be more susceptible to the politicization and selective uses of this global benchmark (Volante, 2018).

The proposed modified evidence-based decision-making model, noted in Figure 1 above, offers a counter to the simplistic notion that national policymakers reference and utilise the best available evidence to inform their policy choices. Collectively, the three steps undergird PISA’s function as a political tool in the realm of public policy. The ensuing national cases offer select examples from three countries, and continents, to illustrate the strategic use of PISA results over time. Taken together, the analysis highlights the evidentiary policy symbolism that PISA can assume, and the support or cover it provides to adopt politically contentious education reforms.

Figure 1. Modified evidence-based decision-making model.

PISA and policy development: International cases

Japan is one of the more intriguing countries in which to examine the strategic use of PISA as a policy lever. Actively referencing Finnish success on the initial administration of PISA in 2000, Japanese policymakers promoted a series of sweeping reforms to address ‘slumping’ PISA 2003 results (Takayama, 2010; Watanabe, 2005). The vast majority of the reforms undertaken were ascribed to socio-political factors at the time and signalled an important pivot to a more centralized model for making school-related decisions (Ho, 2006). Takayama (2008) asserts that the PISA 2003 results reverberated with Japanese cultural, political, and contextual features and were used by the nation’s Ministry of Education, Culture, Sports, Science and Technology (MEXT) to legitimize controversial policy measures. More specifically, the existing yutori (low pressure) curriculum policy, with reduced school hours and 30% less educational content in curriculum guidelines, was abandoned (Knipprath, 2010). National testing designed to tap into PISA-like skills and domains was also introduced. Takayama (2008) astutely points out that both these reforms were under consideration by MEXT prior to the release of PISA 2003 results. Perhaps more importantly, these reforms also run counter to some of the most salient characteristics of the Finnish education system, which has one of the shortest school days in the world and no high-stakes large-scale testing measures. Unfortunately, these conflicting messages were largely absent in the popular media – with the three national newspapers Yomiuri, Asahi, and Nikkei providing extensive coverage of the PISA results that supported a neoliberal structural reform narrative (Takayama, 2008).
Taken together, the Japanese case illustrates the strategic use of both PISA results and the Finnish reference society to help promote seemingly contradictory and contentious reforms that would likely have taken place even in the absence of PISA score changes during the initial administrations.

In comparison to its Nordic neighbour Finland, Sweden has received relatively little attention in the global discourse surrounding PISA and education governance. Nevertheless, this Scandinavian country possesses a unique story – one that shows a downward trajectory of educational achievement, but far more germane to the current discussion, an intensification of, and steadfast commitment to, failed policy approaches that have made it one of the most privatized education systems in the Western world. Evaluations of the effects of national reforms have suggested that Swedish schools have become more segregated over time (Böhlmark et al., 2015). More robust counterfactual studies also suggest the structural transformations and the rapid growth of voucher-financed, private (‘independent’) schools in Sweden during the 2000s are the most plausible reason for increases in between-school variance and achievement gaps (Osth et al., 2013). Interestingly, one analysis of 200 protocols from Swedish parliamentary debates between 2000 and 2016, along with 380 newspaper articles from the largest media outlets that made explicit reference to ‘PISA’ and/or to ‘educational research’, concluded that PISA data and educational research were used to legitimize selective (political party) solutions (Lundahl and Serder, 2018). The same analysis concluded that PISA seemed to offer politicians sufficient and ‘neutral’ authoritative knowledge and was utilized as the first way to obtain legitimate support for education reforms. Even more troubling is that over five separate waves of PISA, Sweden has gone from a mediatized achievement crisis to a mental health crisis in its schools (Björn and Lindgren, 2023).
Overall, the increased and selective use of PISA data in the Swedish context has created a vicious cycle where benchmarking fuels competition, which in turn increases educational inequality (Muench et al., 2022), and more recently, a marked decline in students’ sense of belonging (Björn and Lindgren, 2023).

The final case comes from Canada, one of the few nations where education is devolved to its ten provinces and three territories. Recognizing this somewhat unique governance structure, the OECD disaggregates Canadian results and reports provincial scaled scores, in addition to the Canadian mean. Canadian policymakers use PISA to monitor instructional quality, learn from other provinces, and establish policy goals (Engel and Frizzell, 2015). Unsurprisingly, Alberta figures prominently in national discourses related to policy learning and borrowing given that this province traditionally sits at the top of Canadian and international league tables. Nevertheless, recent drops in Alberta’s achievement sparked renewed calls for greater standardized testing, back-to-basics curriculum and instruction, and ‘plenty of choice’ for parents (Zwaagstra, 2023). It is important to recognize that ‘choice’ in the Alberta context refers to the availability of private (‘charter’) schools, which are not permitted in other Canadian provinces. Additionally, while this province has consistently remained a top performer in reading and science domains, lower math scores recently drew the ire of policymakers. The status of PISA is evident in the title of one CBC News (Canada’s national public broadcaster) article, ‘Former Alberta Education staffer warned that curriculum approach could tank international rankings’ (Edwardson, 2021). The same news article concluded that ‘it’s clear that the (curriculum revision) decisions … were driven by politics and not education’ and that proposed changes are ‘built on the idea of winners and losers, and that you’re weeding kids out as you go along’ (Edwardson, 2021). Given that Alberta has historically possessed lower high school completion rates when compared to the majority of its provincial counterparts, it is noteworthy that this issue has received a muted response in comparison to school choice and curriculum reform issues.
Overall, Alberta policymakers, similar to their Swedish and Japanese counterparts, have used PISA to add greater legitimacy to select policy reforms. As one executive from the Alberta Teachers Association aptly summarized: ‘PISA has historically been used as a way to declare a crisis in education … to justify knee-jerk reactions by education bureaucrats, which include increasing standardized testing and the narrowing of the curriculum’ (McRae, 2016: 5).

Taking stock of the ‘evidence’ for evidence-based policy

The previous cases show tenuous, contradictory, and nonexistent relationships between PISA-initiated policy reforms and the available evidence. For example, research reviews, including those using PISA data sets, suggest increases in instruction time, similar to those that followed the abandonment of the yutori policy in Japan, are subject to ceiling effects, mediated by the composition of classrooms, and offer mixed, or no, impact on achievement (see Dagli, 2019; Long, 2014; Meyer and Van Klavaren, 2013). It is also worth noting that the OECD policy brief ‘Is spending more hours in class better for learning?’ concluded ‘what really matters is how effectively that (instructional) time is used’ and that ‘simply increasing the number of hours students spend in class will not automatically help to improve students’ performance’ (Organization for Economic Cooperation and Development, 2015a: 3–4). Thus, it is difficult to argue that the Japanese reforms related to the yutori curriculum were supported by clear empirical findings or the OECD’s policy guidance.

Similarly, the Swedish case illustrated the use of declining PISA results to accelerate school choice provisions and privatization, which are generally recognized to exacerbate (not decrease) equity concerns (Zancajo and Bonal, 2022). It is also worth noting that the strength of the association between choice and reduced educational opportunities has escalated over time within the Swedish context (Fjellan et al., 2019). Similar to the Japanese case, OECD Policy Briefs also provide little evidence to support the proposed reforms. For example, the policy brief ‘When is competition between schools beneficial?’ notes that ‘the relationship between school choice and student performance is weak’ and ‘competition among schools is related to greater socio-economic segregation among students’ (Organization for Economic Cooperation and Development, 2014: 1–3).

Finally, the Canadian case illustrated how the trajectory of PISA results had little bearing on reform discourses. High and relatively lower achievement results in select test domains were both used to support the intensification of test-based accountability policies that align with a standards-based neoliberal approach. The latter occurred despite clear evidence suggesting such approaches have failed to improve overall achievement and positively address equity and well-being concerns – particularly in the most heavily tested Western educational jurisdictions (see Koretz, 2017–2018; Santori, 2020). Indeed, studies within the Canadian context have underscored the potential contribution to inequities and the segregation of students based on their race and social class where large-scale testing is used for system-level accountability (Rezai-Rashti and Lingard, 2021). Additionally, an OECD working paper on the topic of test-based accountability found no conclusive evidence that these policies affect performance or equity within high-income countries (Torres, 2021). The same report repeatedly noted the potential for unintended negative consequences such as narrowing of the curriculum, academic segregation in schools, and other negative washback effects associated with high-stakes testing.

Overall, the ‘evidence’ used to justify the reform proposals in the previous cases was largely unsupported by the available research or guidance within the OECD’s policy briefs. Rather, PISA served an important symbolic and strategic function to support preferred educational reforms, which largely align with a standards-based neoliberal reform orientation. In some respects, PISA enjoys an elevated ‘brand’ status that allows politicians to seemingly avoid critique or provide a coherent evidence-based justification to support their policy positions. Undoubtedly, PISA’s national and international salience allows it to function as an important global and transnational governance tool. Collectively, the previous cases converge with similar cross-national analyses that highlight the evidentiary policy symbolism and legitimacy function that PISA can provide for politically and ideologically contested policy agendas (Volante and Klinger, 2021).

Future research

Given the close confluence of neoliberal approaches with education reform and the strategic use of PISA results, it is important to understand how changing cultural and political factors mediate this relationship moving forward. More specifically, to what degree have other industrialized countries, not discussed in the present paper, succumbed to, or resisted, an overarching neoliberal standards-based orientation to education policy formation? Conversely, what are the catalytic factors that provoke shifts from opinion-based to evidence-informed to evidence-based decision-making approaches? Most importantly in relation to the present discussion, how do policymakers successfully mediate the unintended and inappropriate uses of PISA results within their national contexts to promote a broader range of valued learning outcomes? Collectively, research examining these questions in a broad range of national and cultural contexts can contribute to the development of strategies that help mitigate the politicization of assessment data.

Admittedly, the efficacy of politicization mitigation strategies is largely influenced by domestic issues and geopolitical developments. As one editorial in The Guardian (British national daily) newspaper asserted, neoliberalism has swallowed the world … and the authority of a reformer or legislator does not derive from the market, but from humanistic values (Metcalf, 2017). Neoliberal views have become such an accepted part of educational discourses that they are often presented as though there is no alternative (Baird and Elliott, 2018; Uljens, 2007). Overall, future studies across national contexts, including those in lower- and middle-income countries that now participate in the recently launched PISA for Development (PISA-D) initiative, can provide valuable insights on the conditions that lead to more authentic, and less skewed, evidence-based decision-making approaches. In doing so, they also offer the international community easily referenced cases that highlight effective educational change.

Lastly, it is worth noting that despite the introduction of additional tests by the OECD, the traditional content domains of reading, mathematics, and science literacy remain the most important in terms of public policy discourse. This hierarchy of subjects has been present since the emergence of compulsory education systems during the Industrial Revolution. Nevertheless, it will be interesting to examine the degree to which the results of the Creative Thinking assessment in 2022, Digital World assessment in 2025, or perhaps the rapid emergence of Artificial Intelligence (AI) applications, may disrupt global governance discourses. Whether these assessments or technological tools lead to the emergence of new global reference societies, national and transnational reform agendas, or ideally provoke a re-think of the relative importance and assessment of other interdisciplinary academic skills, student mental health outcomes, and/or school completion rates, remains to be seen. Given that national assessment systems such as those in Germany, Ireland, and Canada were redesigned to essentially mirror PISA (Volante, 2016), it will be interesting to observe the degree of influence these newer assessments may (or may not) exert on educational policy developments around the world.

Conclusion

In its review of 450 education reforms that were adopted across countries between 2008 and 2014, the OECD concluded that the link between the reforms needed for coming close to the ‘best’ performing nations and the actual reforms implemented was (very) weak (Organisation for Economic Cooperation and Development, 2015b). This statement is a tacit acknowledgement that even when one considers the best evidence from the perspective of the OECD, governments have largely failed to engage in evidence-based decision-making. Thus, one must naturally wonder what purpose PISA serves in the domain of public policy and how the utilization of results is mediated by political strategies and discourses. The preceding discussion suggested that the descriptive and performative functions of PISA, previously noted, are heavily influenced by national, cultural, and geopolitical issues. Domestic politics and institutions determine the utilization of research results independently of their technical content. In some respects, neoliberalism, not evidence-based decision-making, provides the best heuristic to understand the uptake of education policies in relation to this global benchmark measure.

The education ministers who laid the groundwork for PISA in the 1990s were principally concerned with developing a robust measure of student competencies that would help promote human capital. Although they would undoubtedly be content with the salience of this benchmark measure, they likely would not have foreseen its evolution into a political and symbolic tool to add legitimacy to select policy reforms which would otherwise be unpopular and difficult to implement by national standards. And unlike the Tower of PISA that leaned for 800 years before it was stabilized, the figurative counterweights needed to buffer the skewed and selective uses of the PISA survey cannot wait. This is particularly the case given the significant disruptions to in-person learning caused by COVID-19, and the aftereffects on students. Indeed, a wide range of national and cross-national research studies have reported troubling learning losses associated with the pandemic (Bailey et al., 2021; Blazko et al., 2021; Donnelly and Patrinos, 2021; Dorn et al., 2020; Engzell et al., 2021; Kaffenberger, 2021; Maldonato and De Witte, 2022; Milner et al., 2021; Schnepf et al., in press; Volante et al., 2022), further underscoring the critical importance of utilizing the most effective policies to promote student learning outcomes, particularly for the most vulnerable student groups.

Clearly, conventional evidence-based decision-making models are presently ill-suited to explain the uptake of PISA in national policy discourses or their related impact on educational policy reform agendas. The previous discussion suggests PISA results are particularly susceptible to politicization and serve an important evidentiary and symbolic role in justifying contested policy reforms. Undoubtedly, strategies to mitigate the continued robustness of our modified evidence-based decision-making model should be enacted. The latter would facilitate more objective considerations of ‘evidence’ in evidence-based policy discourses to the betterment of students and education systems.

Footnotes

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This research is supported by the Social Sciences and Humanities Research Council of Canada (SSHRC) (435-2023-0664).

She is a past recipient of the T.H. Marshall Fellowship at the Department of Social Policy, London School of Economics and Political Science (2005–2008).