Abstract

This paper provides a strategic classification of artificial intelligence (AI) techniques based on a systematic literature review and four levels of potential: the levels of input, output, collaboration and creativity. The classification demonstrates the potential and challenges of the AI techniques when used in early stages of architectural design. We aspire to help architects, researchers and developers to choose which AI techniques might be worth pursuing for specific tasks, optimising the use of today’s computational power in architectural design workflows. The results of the classification strongly indicate that Evolutionary Computing, Transformer Models and Graph Machine Learning hold the greatest potential for impact in early architectural design, and thus merit the research community’s attention to achieve that potential. Moreover, the classification assists with building multi-technique applications and helps to identify the most suitable AI technique for different circumstances such as the architect’s programming skills, the availability of training data or the nature of the design problem.

Introduction

This paper provides a strategic classification of artificial intelligence (AI) techniques that helps architects, researchers and developers assess which AI techniques maximise the available computational power for different design tasks in early architectural design stages. Artificial intelligence is an umbrella term that covers a wide range of techniques, and depending on the nature of the task and the availability of the (training) data, some AI techniques are better suited than others. Rather than classifying AI techniques on computational or technical features, this paper reframes AI techniques in relation to their potential applicability in early stages of architectural design.

Background

State-of-the-art research 1–3 indicates that AI techniques can be implemented in various tasks for early architectural design: from performance-based goals to form finding, spatial programming and multi-objective optimization. While the state of the art shows which AI techniques have been used in research, it fails to provide a strategic overview of which AI techniques are useful for architectural design practice. A classification that shows the potential and challenges of the AI techniques in relation to early stages of architectural design could help future architects, researchers and developers to choose which AI techniques might be worth pursuing for specific tasks at hand. This leads to the main research question of this paper:

RQ: Which AI techniques hold the greatest potential for architecture in early design stages, and what are the challenges associated with each of those AI techniques?

Research methodology

Definitions

Before we can classify AI techniques in relation to early stages of architectural design, we define the scope of artificial intelligence techniques as a whole and identify each AI technique relevant to early architectural design.

Scope of artificial intelligence techniques

The term ‘artificial intelligence’ was coined by John McCarthy and Marvin Minsky in 1955 4 in the pursuit of machines that could attain a level of intelligence comparable to human cognition. The field of artificial intelligence – or ‘machine intelligence’ as it was originally introduced by Alan Turing in his groundbreaking paper Computing Machinery and Intelligence 5 – has evolved steadily and resulted in various definitions of ‘intelligent’ machines. This paper adheres to Margaret Boden’s view on AI techniques to narrow the scope of the perceived intelligence. Boden is an authority at the intersection of cognitive sciences and artificial intelligence. She describes intelligence as a wide spectrum of information-processing capacities, which results in AI using different techniques to solve different tasks. Boden states that these AI techniques aren’t usually considered to be ‘intelligent’ (e.g. computer vision), but do involve humans’ psychological skills such as prediction, perception, association, etc. 6 Therefore, this paper only considers computational techniques that have a psychological evaluation aspect as artificially intelligent techniques. Thus, we do not consider computational techniques such as rule-based generative production systems (e.g. cellular automata, shape grammars) to be AI techniques in this paper.

Artificial intelligence techniques relevant to early architectural design

We investigated past research to identify AI techniques relevant to architectural design, starting with three state-of-the-art reviews. The 1995-2021 review from Pena et al. covers articles on the use of AI in conceptual architectural design and shows that a majority of the 75 studied articles made use of Evolutionary Computing techniques. 1 The 2007-2022 review from Topuz et al. covers the use of Machine Learning for architectural design – a subset of AI that categorizes AI techniques that ‘learn’ from training data – and shows that a majority of the 60 studied articles made use of Neural Networks and their subcategories, such as Artificial Neural Networks (ANN), Convolutional Neural Networks (CNN), Deep Neural Networks (DNN) or Generative Adversarial Networks (GAN). 2 These findings are consistent with the 2012-2022 review from Bölek et al. which showed a dominance of both Evolutionary Computing and Neural Networks in past research. 3

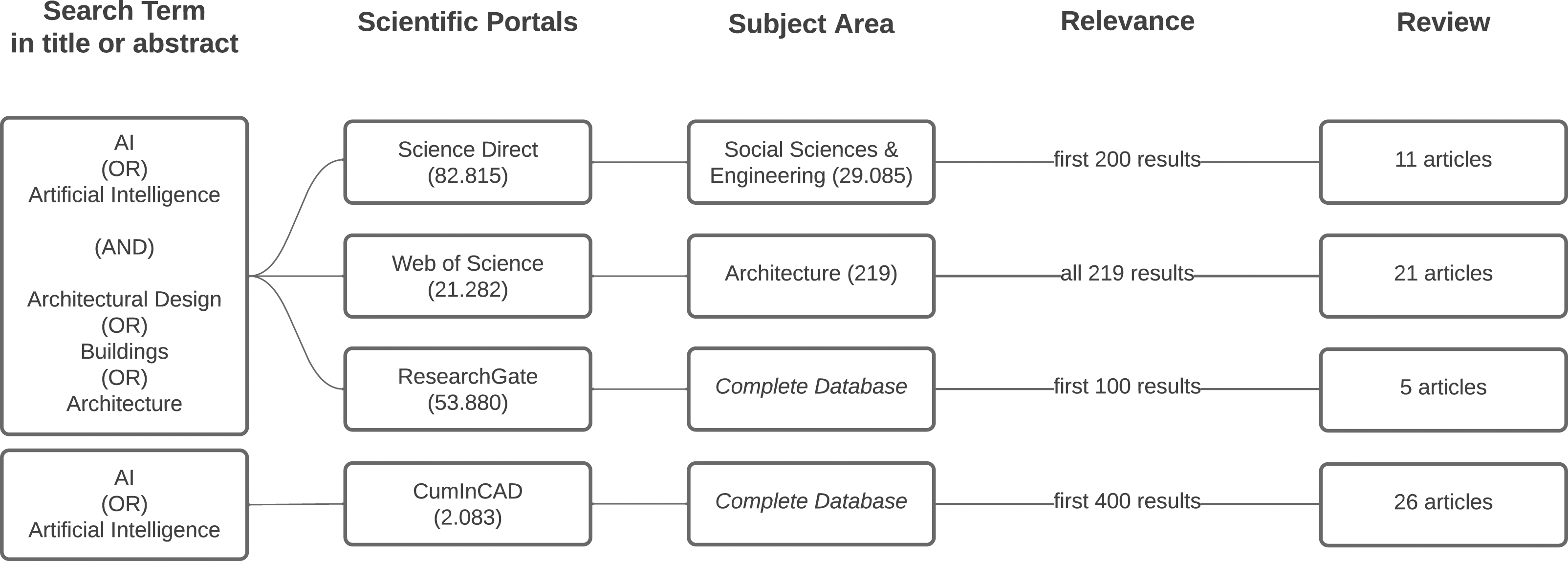

Because the three examined state-of-the-art reviews do not clearly indicate which specific AI techniques have been used, we conducted an additional systematic literature search through the databases ScienceDirect, Web of Science, ResearchGate and CumInCAD. This heterogeneous group of databases was purposefully selected to conduct a broad and varied search of different AI techniques. We combined the keywords ‘AI’ or ‘artificial intelligence’ with ‘architectural design’ and variants such as ‘architecture’ and ‘building’. The search focused on the early design stages of architectural design and therefore excluded results on urban design, fabrication or construction. As ‘architecture’ and ‘design’ often appear in the context of computer science (e.g. ‘computer architecture’ or ‘system design’), the search was further restricted in terms of subject areas. On ScienceDirect this meant only the subject areas ‘engineering’ and ‘social sciences’ were included, which reduced the results from 82,815 to 29,085 articles. Furthermore, out of the first 200 results, only 11 articles covered the use of AI in architectural design within this scope, and most of them appeared in the top 50 results. Therefore, those results were considered as a basis for this paper. On Web of Science, the results were restricted to the category ‘architecture’, yielding a total of 219 results. Out of those results, 21 articles covered the use of AI in architectural design within this scope. On ResearchGate the search term yielded 53,880 results. Out of the first 100 results, five articles covered the use of AI in architectural design and appeared mostly in the first 50 results. Therefore, those results were considered for this paper. On CumInCAD, the search for ‘artificial intelligence’ or ‘AI’ in either the title or keywords led to 2,083 results. Out of the first 400 results, 26 articles covered the use of AI in architectural design within this scope and appeared mostly in the first 200 results. Therefore, only those results were considered as a basis for this paper.

Figure 1. Systematic literature search. Created by first author (2024).

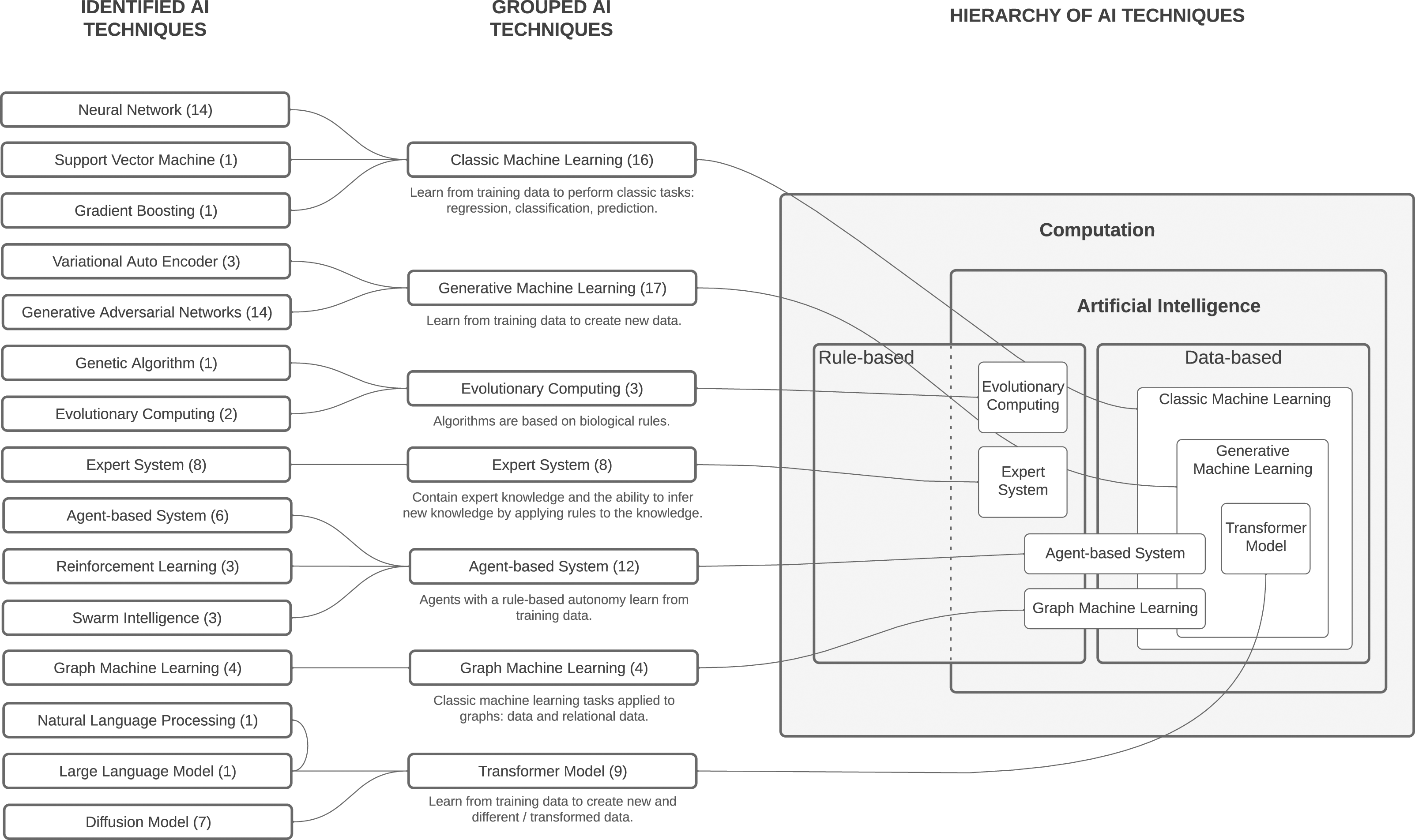

The systematic search yields 63 academic publications about the use of AI for a specific task during early architectural design. 7–69 Since 67% of the articles were published during or after 2020, the results can be seen as representative of recent research. The sum of the techniques in the articles that adhere to the above defined scope of artificial intelligence leads to the following distribution of AI techniques: Agent-based System (6), Reinforcement Learning (3), Swarm Intelligence (3), Graph Machine Learning (4), Generative Adversarial Networks (14), Neural Network (14), Genetic Algorithm (1), Evolutionary Computing (2), Expert System (8), Cellular Automata (2), Support Vector Machine (1), Gradient Boosting (1), Auto Encoder (3), Natural Language Processing (1), Large Language Model (1), Diffusion Model (7). 69

In order to keep the classification clear and useful, we group some of the identified techniques together in super categories. This leads to seven AI techniques that will be classified in this paper. Reinforcement learning and swarm intelligence are both subcategories of agent-based systems (ABS), which consist of agents that have a certain rule-based autonomy and are often able to learn from training data. Graph machine learning (GML) is a subfield of machine learning that works with graphs – mathematical data constructs that hold information about entities and the relationships between those entities. Classic machine learning (CML) contains techniques that use training data to learn in order to perform ‘classic’ tasks such as regression, classification and prediction, which includes neural networks, support vector machines and gradient boosting. Generative machine learning (GenML) contains techniques that learn from training data to create new data, such as generative adversarial networks or variational autoencoders. Diffusion models and large language models are subcategories of Transformer models (TM), which are extensively trained on large datasets and able to generate new data based on other kinds of data, for example generating images based on textual input. Genetic algorithms are a subcategory of evolutionary computing (EC), a field where algorithms are based on biological rules. Expert systems (ES) contain an embedded database of expert knowledge and are able to infer new knowledge by applying rules to the existing knowledge.
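The grouping described above can be sketched in a few lines of Python. This is a minimal illustration, not the procedure used to produce the figures: the counts are those listed in the distribution above, the mapping follows the supercategories named in this section, and assigning "Auto Encoder" to GenML is our assumption based on the mention of variational autoencoders. Techniques for which the text names no supercategory are reported separately rather than guessed, so the resulting totals may differ from those shown in the figures.

```python
from collections import defaultdict

# Counts as listed in the distribution from the systematic literature search.
reported_counts = {
    "Agent-based System": 6, "Reinforcement Learning": 3, "Swarm Intelligence": 3,
    "Graph Machine Learning": 4, "Generative Adversarial Networks": 14,
    "Neural Network": 14, "Genetic Algorithm": 1, "Evolutionary Computing": 2,
    "Expert System": 8, "Cellular Automata": 2, "Support Vector Machine": 1,
    "Gradient Boosting": 1, "Auto Encoder": 3, "Natural Language Processing": 1,
    "Large Language Model": 1, "Diffusion Model": 7,
}

# Supercategories as described in the text.
supercategory_of = {
    "Agent-based System": "ABS", "Reinforcement Learning": "ABS",
    "Swarm Intelligence": "ABS",
    "Graph Machine Learning": "GML",
    "Neural Network": "CML", "Support Vector Machine": "CML",
    "Gradient Boosting": "CML",
    "Generative Adversarial Networks": "GenML",
    "Auto Encoder": "GenML",  # assumption: grouped with variational autoencoders
    "Diffusion Model": "TM", "Large Language Model": "TM",
    "Genetic Algorithm": "EC", "Evolutionary Computing": "EC",
    "Expert System": "ES",
}


def group_counts(counts, mapping):
    """Sum counts per supercategory; keep unmapped techniques separate."""
    grouped = defaultdict(int)
    ungrouped = {}
    for technique, n in counts.items():
        category = mapping.get(technique)
        if category is None:
            ungrouped[technique] = n  # no supercategory named in the text
        else:
            grouped[category] += n
    return dict(grouped), ungrouped


grouped, ungrouped = group_counts(reported_counts, supercategory_of)
for category, n in sorted(grouped.items(), key=lambda kv: -kv[1]):
    print(f"{category}: {n}")
print("not assigned in the text:", ungrouped)
```

Keeping the unmapped techniques visible makes it explicit where the grouping relies on a judgement that the text does not spell out.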

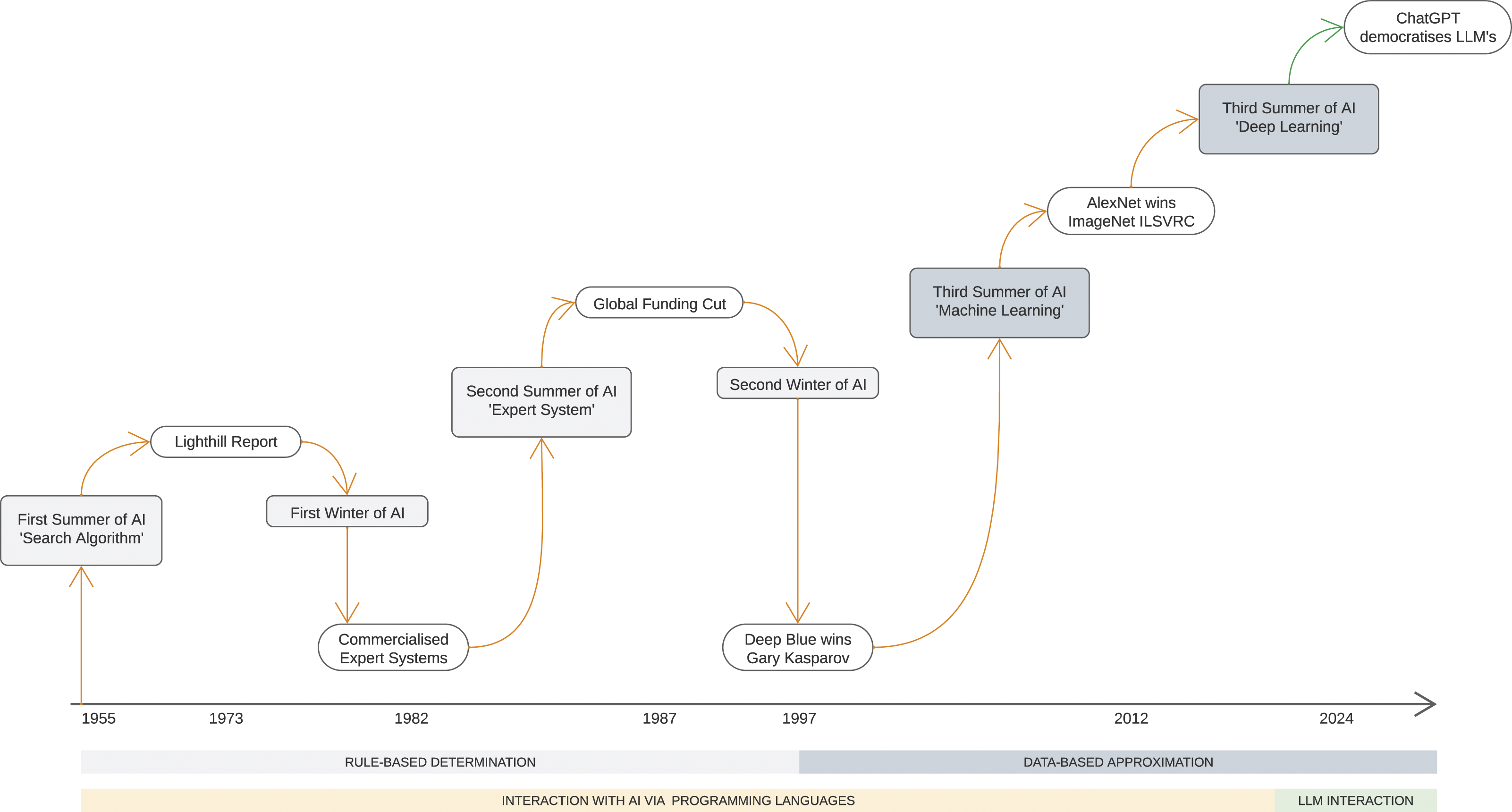

Figure 2 shows the origin of the seven AI techniques and their relationships, while indicating whether the techniques are rule- or data-based. Rule-based AI techniques achieve their inherent intelligence by applying rules to the input data in order to produce novel output. Data-based AI techniques achieve their inherent intelligence by studying both the input and output data (referred to as training data) to resolve the rules of the problem.

Figure 2. AI techniques in this paper. Created by first author (2024).

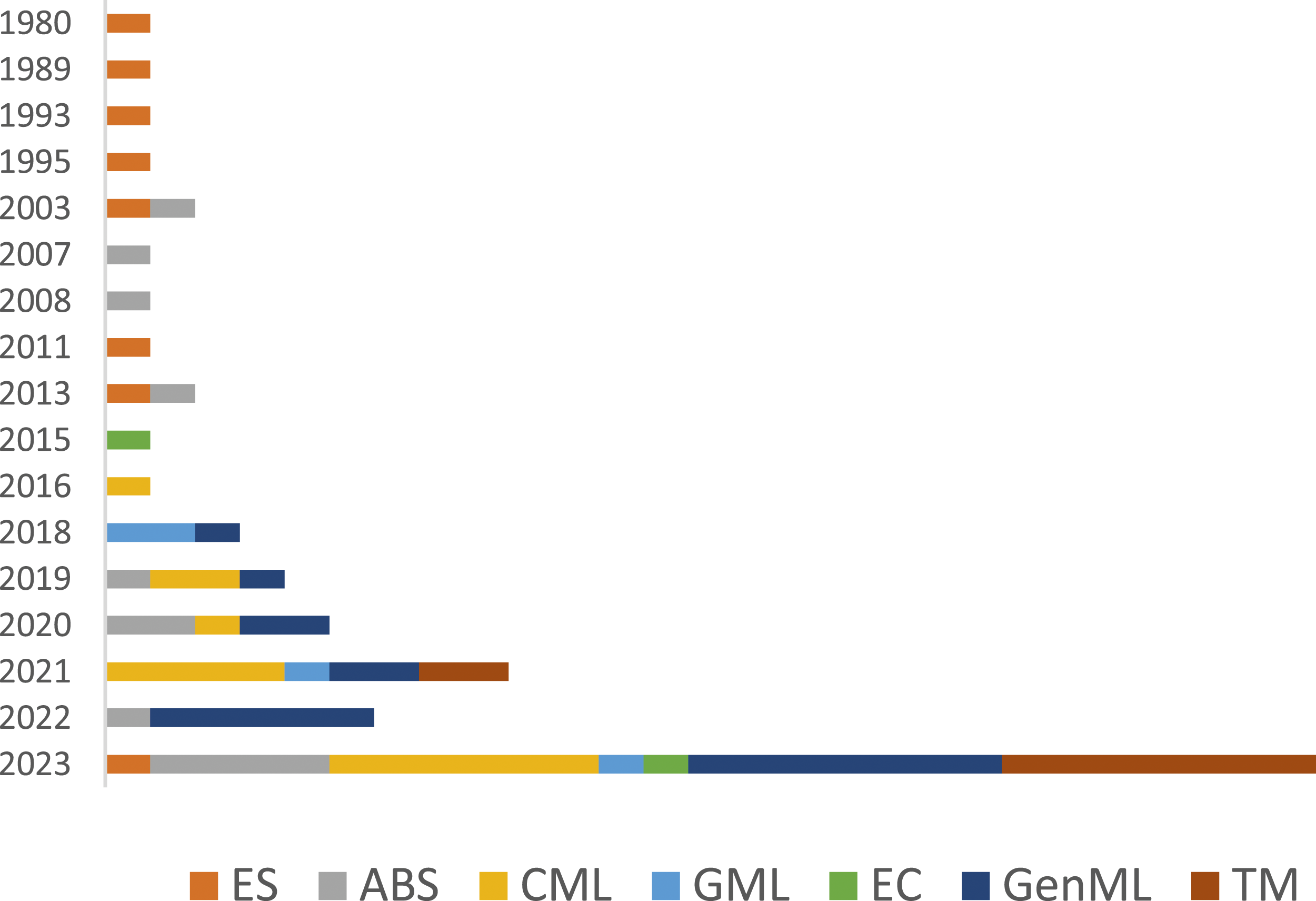

While we recognise that our systematic literature search will not have detected all articles relating to the use of artificial intelligence in early architectural design, we are confident that we have identified the relevant categories of AI techniques for early architectural design. Furthermore, the AI techniques from the systematic literature search are grouped in the established categories and sorted by year of publication to indicate research trends. Figure 3 shows the dominance of Classic and Generative Machine Learning over the past years, and the rise of Transformer Models – bearing in mind that Diffusion Models 70 were only discovered in 2021 and the first publicly available Large Language Model 71 was launched in 2022.

Figure 3. Distribution of AI techniques from the systematic literature search, by year of publication. Created by first author (2024).

Methods

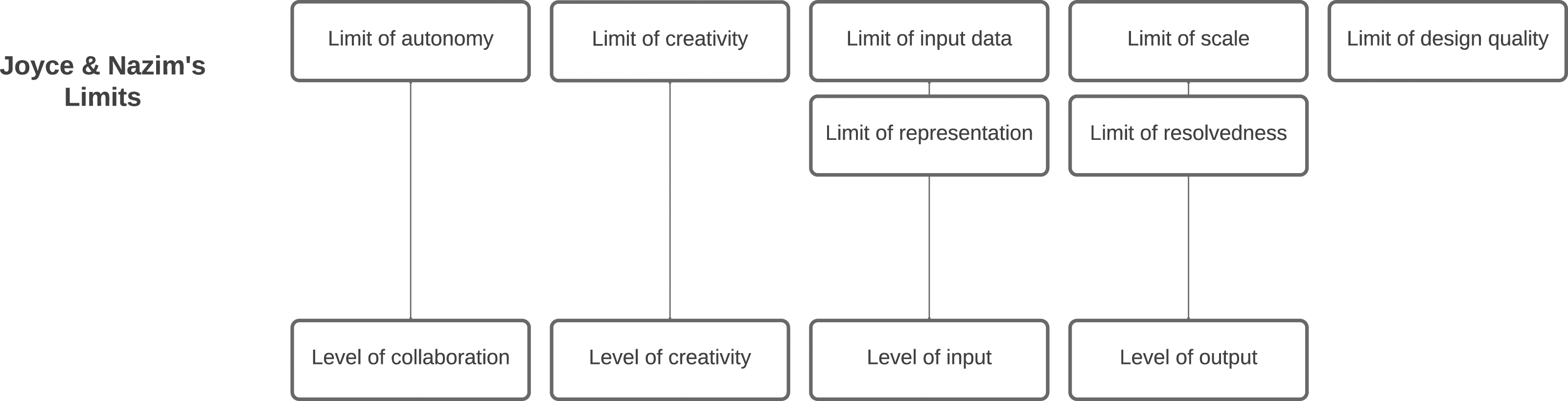

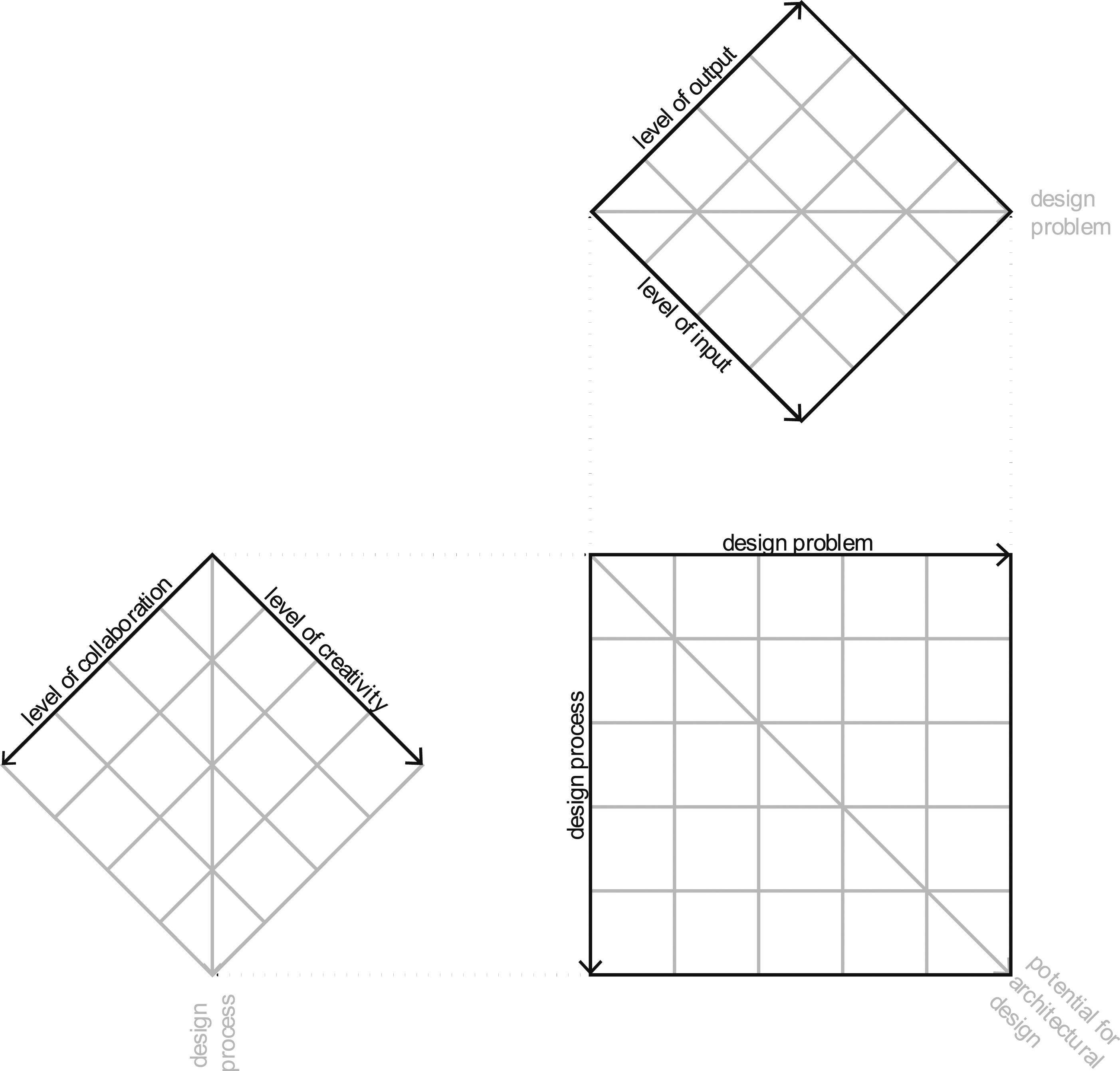

The AI techniques are mapped to their potential applicability in early stages of architectural design by evaluating four levels of potential: the level of input, the level of output, the level of creativity and the level of collaboration. These criteria are adapted from Joyce & Nazim, who established the limits of machine learning techniques relevant to design tasks. 72 Joyce & Nazim’s original limit of design quality has been omitted, as the discussion of good or bad architecture is part of a larger debate that is out of the scope of this paper.

Figure 4. Levels of potential and the limits of machine learning techniques for architectural design. Created by first author (2024).

The level of input refers to all that is necessary to use a certain AI technique, such as training data, input data, the design problem definition, the architect’s expertise, etc. The level of input includes Joyce & Nazim’s original limits of representation and input-data. The level of output refers to the desired output from the AI technique, such as textual or geometric data. The level of output includes the original limits of scale and resolved-ness as defined by Joyce & Nazim. The level of creativity refers to the creative freedom that can be achieved by the AI technique, where creativity is regarded as “the ability to come up with ideas or artifacts that are new, surprising, and valuable” as defined by Margaret Boden. 73 The level of collaboration is based on Joyce & Nazim’s original limit of autonomy and refers to the human-machine interaction, collaboration with partners and the integration of the AI techniques into the architect’s workflows.

The level of input merits two additional notes. Firstly, training and input data can take on any format, from textual city regulations to drawings in PNG or OBJ files. However, there is a lack of large, high-quality training datasets for architectural design, 64 which strongly affects data-based techniques. The datasets are often restricted to the architectural design data that are most commonly available to the public – i.e. images of rasterized floorplans. Therefore, those AI techniques that can make use of architectural design data that are available (i.e. using floorplans or the architect’s own expertise as input data) or that can generate their own synthetic datasets, are favoured for the level of input. Secondly, architectural design problems can either be well- or ill-defined, which also makes a difference for the level of input. As a whole, design problems are wicked 74 and therefore ill-defined by nature: they consist of a complex mixture of client demands, city regulations, energy requirements, social aspects, cultural layers, personal preferences etc. However, certain design tasks could be isolated and considered as well-defined problems for the level of input.

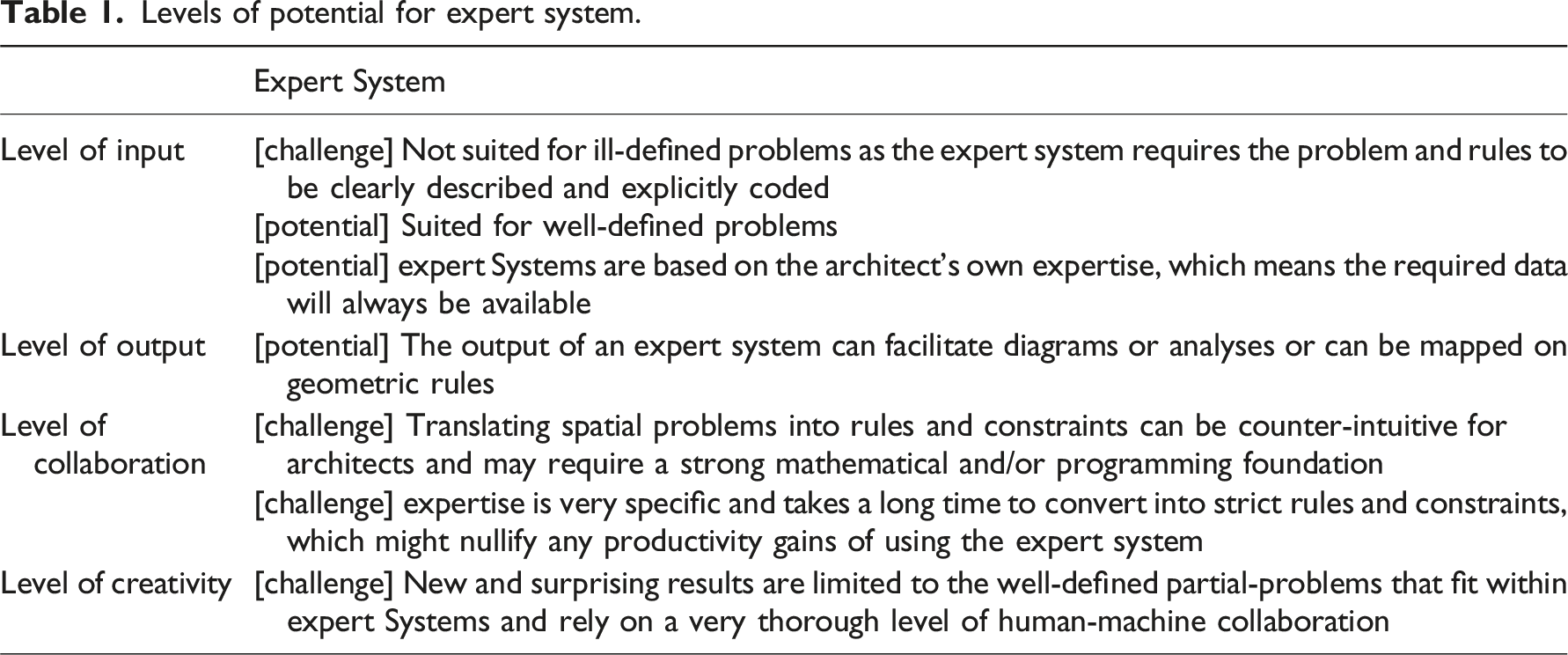

Each AI technique is evaluated for each level of potential, which results in a set of potentials and/or challenges. Those are then visually mapped onto a classification structure as proposed in Figure 5. The classification structure is composed of hierarchical diagrams that visually map each level of potential. Values are attributed to the smaller, outer diagrams and projected onto the larger, inner diagram. The levels of output and input are mapped together to create a classification for the design problem. The levels of creativity and collaboration are mapped together to create a classification for the design process. The results of both diagrams are projected onto the larger diagram, which unites them to map the potential of the AI techniques in early architectural design. The classification structure can be interpreted in various ways. For example, the largest diagram (i.e. the final classification) not only shows the potential of the AI techniques on the resulting diagonal, but also shows whether the AI techniques are more suited towards solving the design problem or being integrated into the design process. Similar interpretations apply to each of the smaller diagrams.

Figure 5. Classification structure to classify the potential of AI techniques for architectural design. Created by first author (2023).
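The hierarchical projection can be made concrete with a small numerical sketch. The mapping in this paper is visual, so everything numeric here is an assumption made purely for illustration: the scores on a 0 to 1 scale are hypothetical, and combining two outer values by their arithmetic mean (the position of a point along the diagonal of a two-axis diagram) is one possible reading of the projection, not a formula defined in this paper.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Levels:
    """Hypothetical scores in [0, 1] for the four levels of potential."""
    level_input: float
    level_output: float
    creativity: float
    collaboration: float


def project(a, b):
    """Assumed projection of the point (a, b) onto the diagonal: the mean."""
    return (a + b) / 2


def classify(levels):
    """Combine outer diagrams into the two inner ones, then into the final one."""
    design_problem = project(levels.level_output, levels.level_input)
    design_process = project(levels.creativity, levels.collaboration)
    potential = project(design_problem, design_process)
    # The side of the diagonal indicates what the technique is better suited for.
    if design_problem > design_process:
        leaning = "design problem"
    elif design_process > design_problem:
        leaning = "design process"
    else:
        leaning = "balanced"
    return {
        "design_problem": design_problem,
        "design_process": design_process,
        "potential": potential,
        "leaning": leaning,
    }


# Hypothetical technique: weak on output, strong on collaboration.
example = classify(Levels(level_input=0.75, level_output=0.25,
                          creativity=0.5, collaboration=1.0))
print(example)
```

The sketch only shows how two readings coexist in the final diagram: a single potential value along the diagonal, and a leaning towards either the design problem or the design process.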

Classification

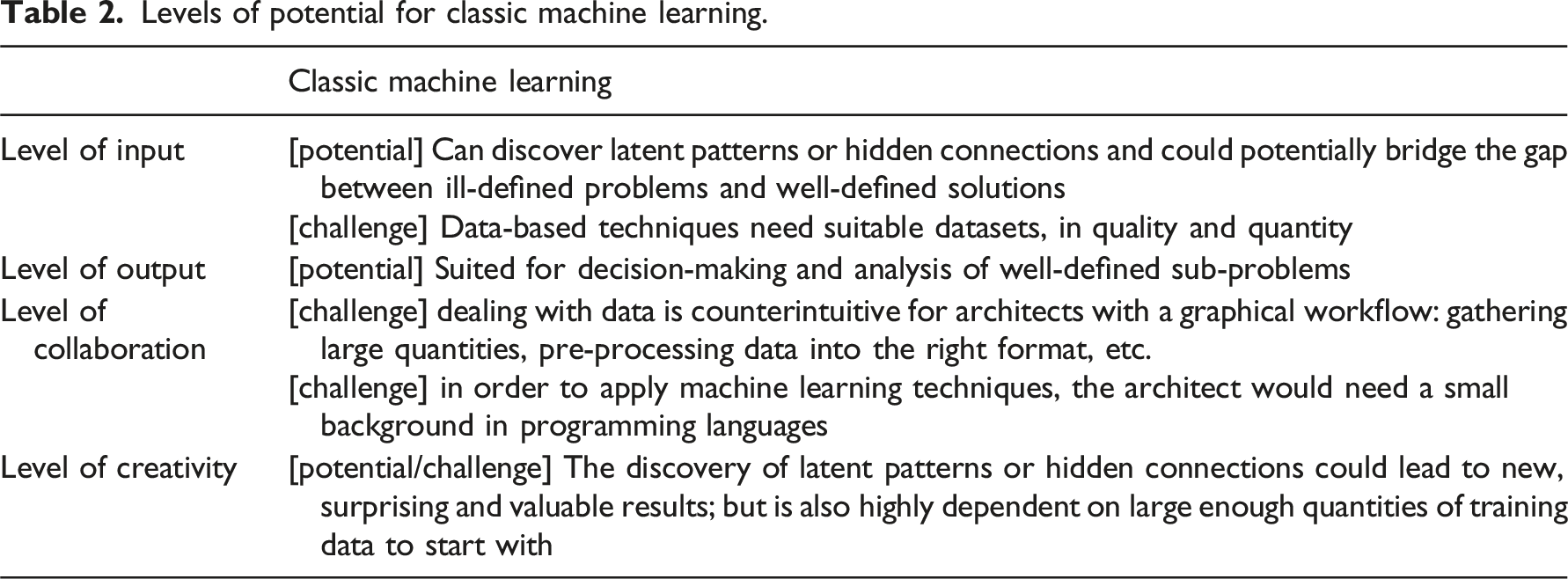

Levels of potential for expert system.

Levels of potential for classic machine learning.

Levels of potential for graph machine learning.

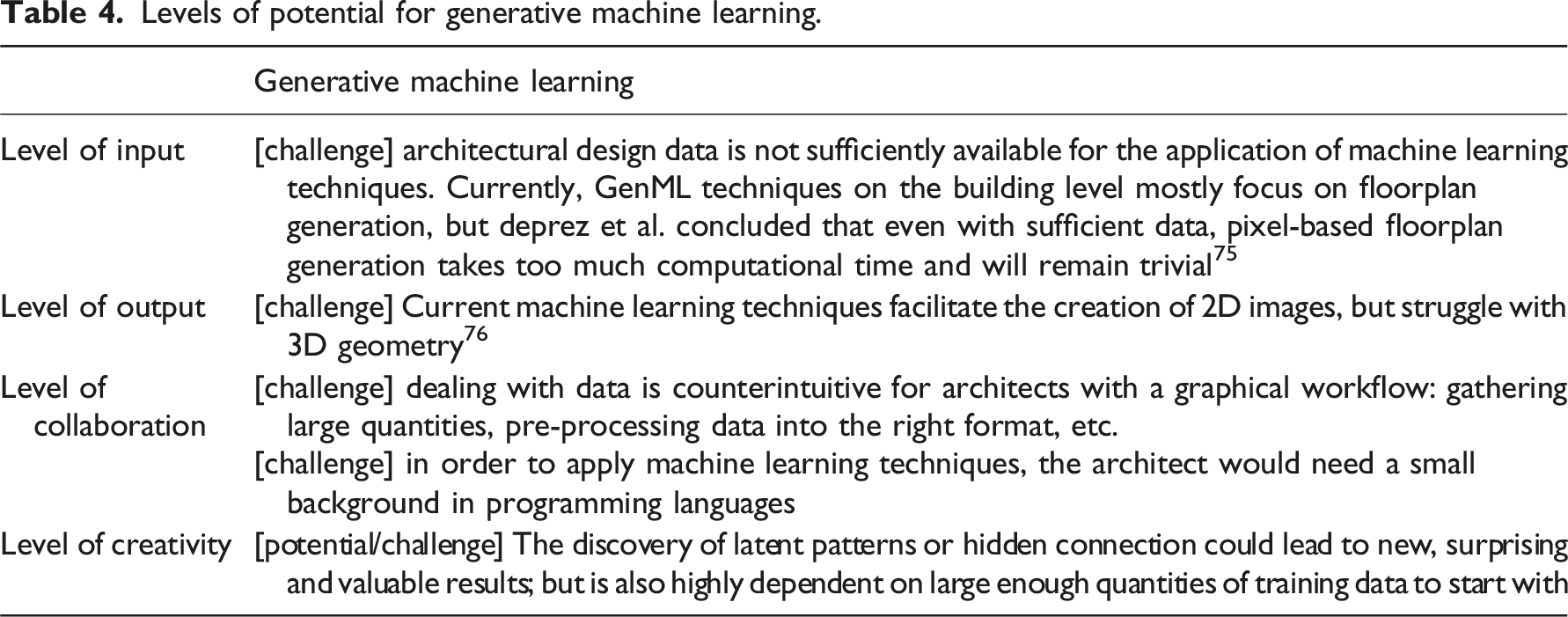

Levels of potential for generative machine learning.

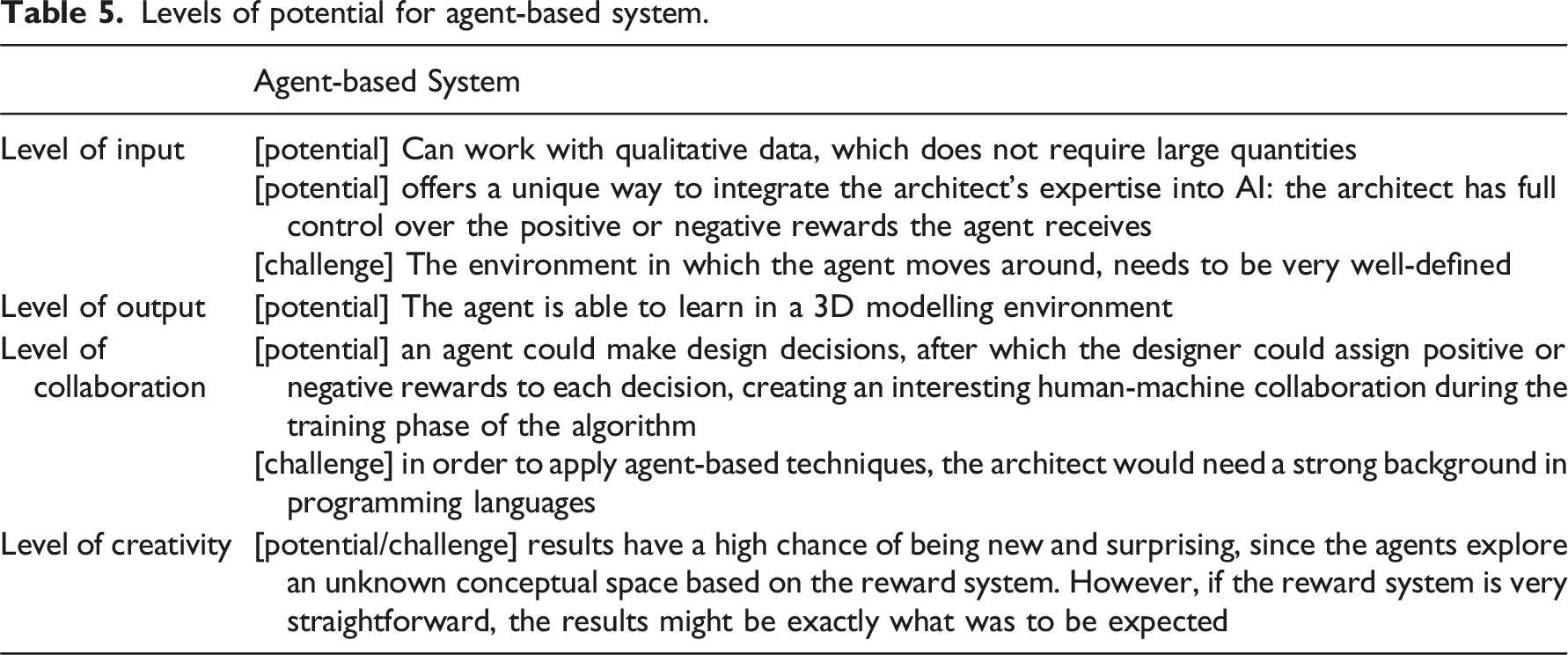

Levels of potential for agent-based system.

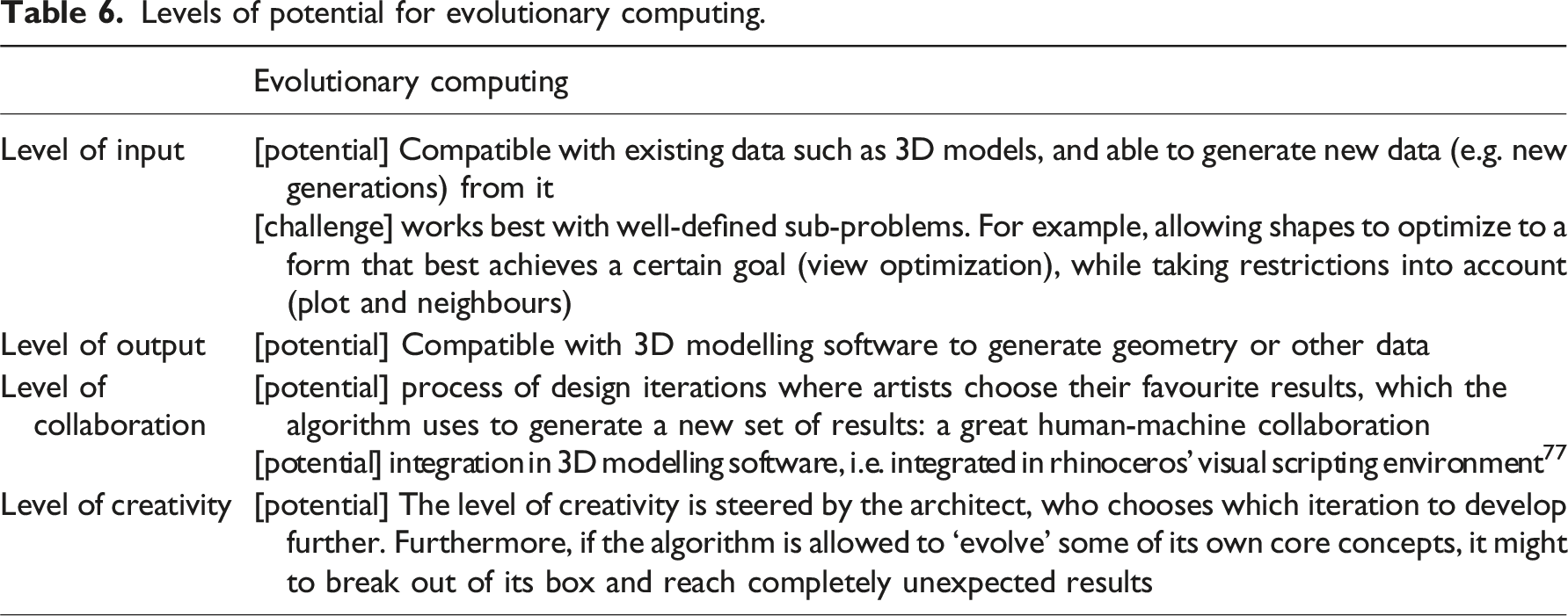

Levels of potential for evolutionary computing.

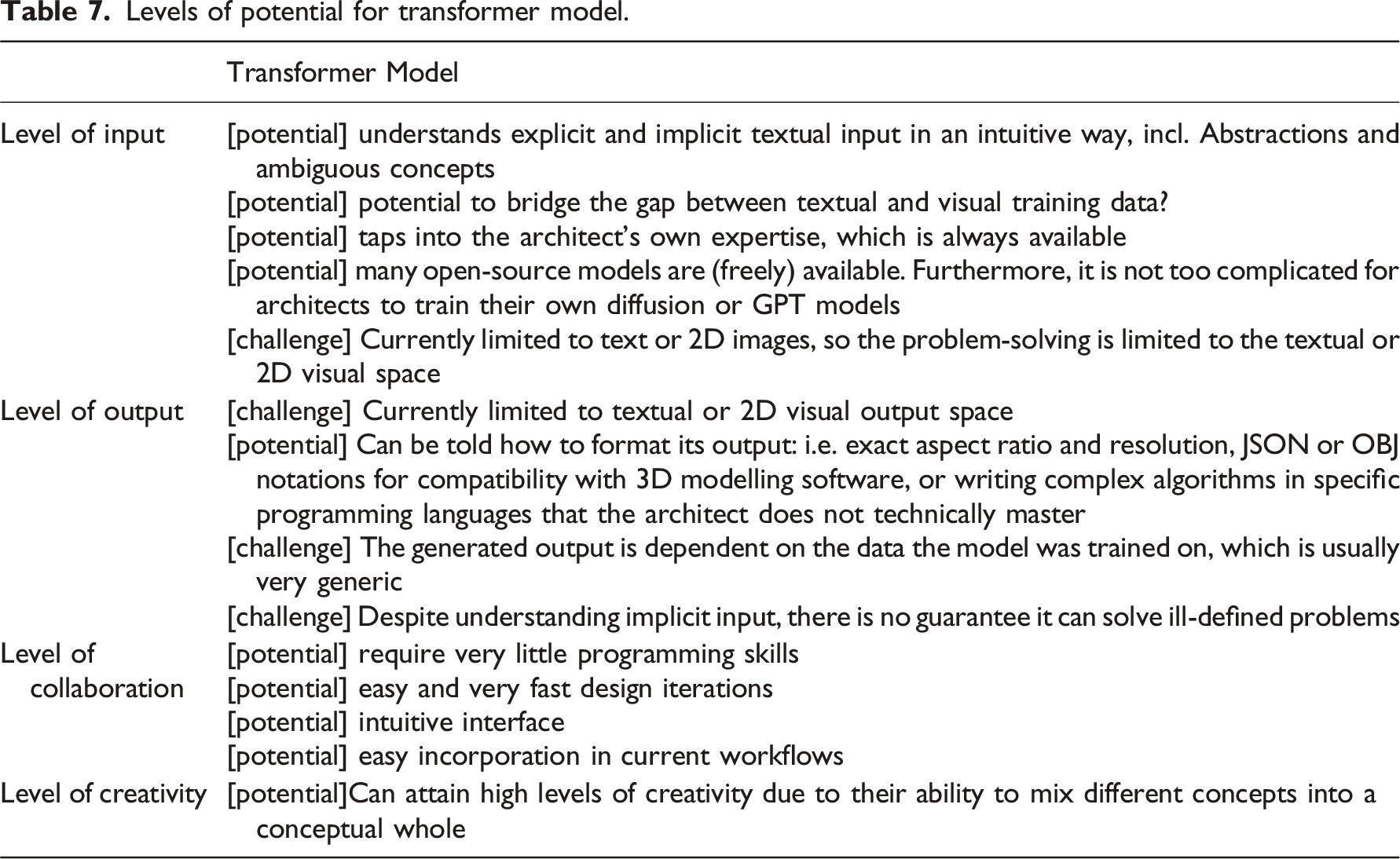

Levels of potential for transformer model.

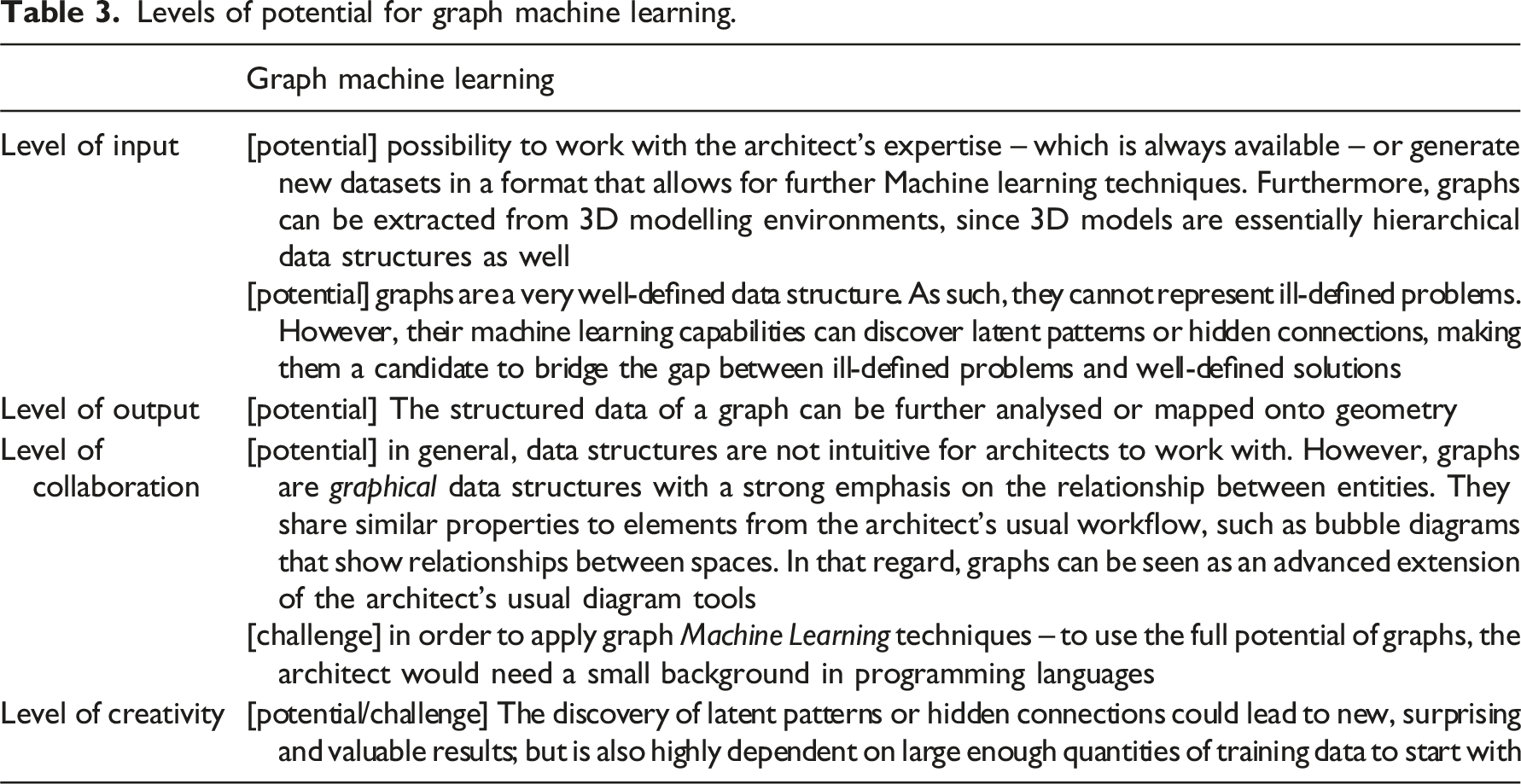

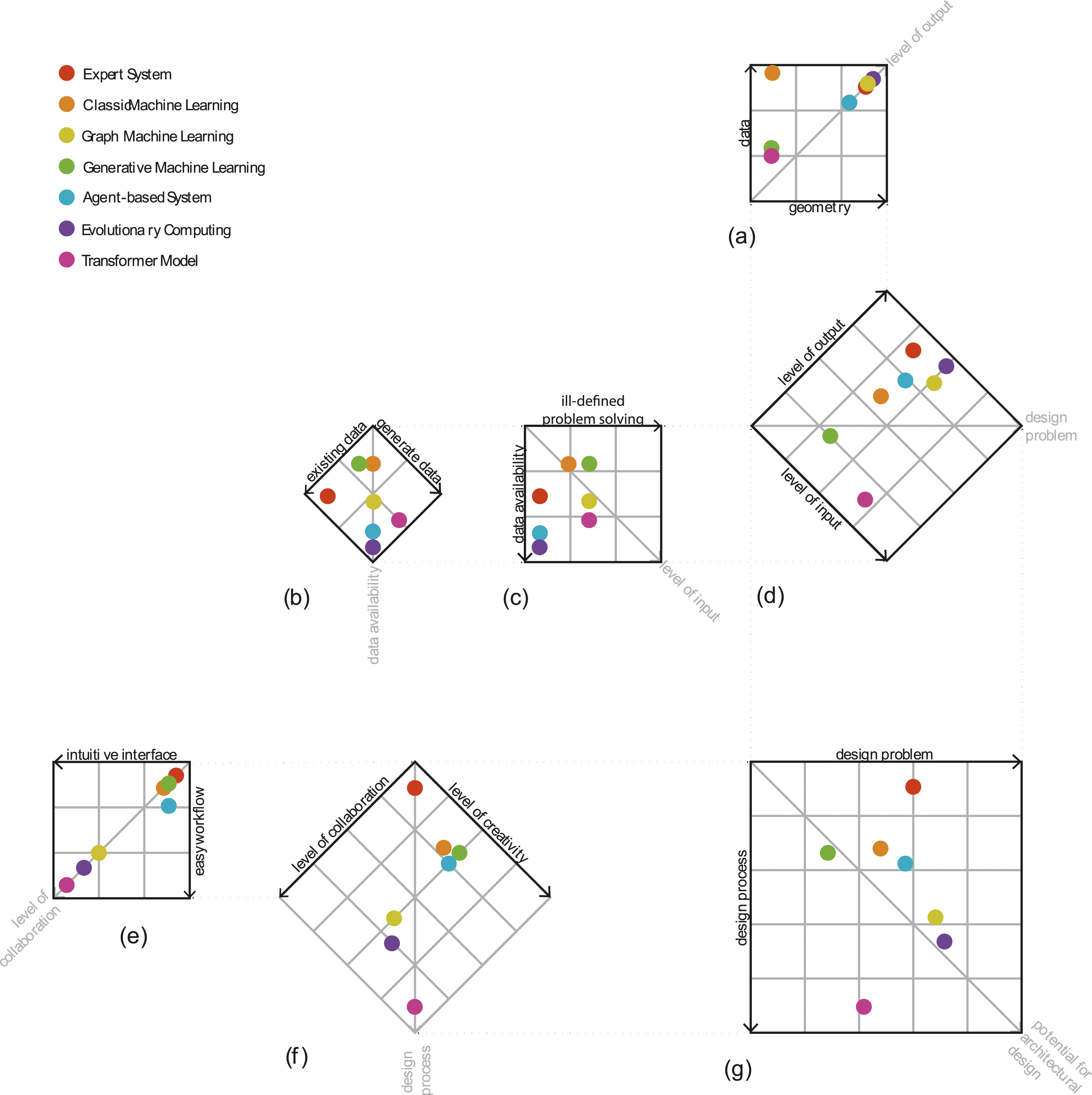

The classification structure is extended (Figure 6) to incorporate the various lessons learned from the evaluations in the tables. The availability of architectural design data, the ill-defined nature of design problems, the compatibility of the output with 3D geometry and the need for architects to possess basic or advanced programming skills – which impacts both the necessary interface and the current design workflow – lead to the addition of four new diagrams in the classification structure. The level of output (Figure 6(a)) is composed of data (i.e. analyses and diagrams vs plain text) and geometry (i.e. 3D models vs 2D images). The level of input (Figure 6(c)) is composed of the capability to solve ill-defined design problems and the availability of data (Figure 6(b)), which in turn is composed of the compatibility with existing architectural design data or the capability to generate new data. The level of collaboration (Figure 6(e)) is composed of how intuitive the interface is for architects and how easy it is to integrate the AI techniques into the architectural design workflow (i.e. too time-intensive vs productive design iterations).

Figure 6. Classification of AI techniques for architectural design. Created by first author (2023).

The results of the evaluations are mapped onto the final classification structure as output data and geometry (Figure 6(a)), existing data availability and data generation (Figure 6(b)), ability to solve ill-defined problems (Figure 6(c)), intuitive interface and workflow integration (Figure 6(e)) and creativity (Figure 6(f)). This leads to the potential of AI techniques for early architectural design in terms of the design problem (Figure 6(d)), the design process (Figure 6(f)) and early architectural design as a whole (Figure 6(g)).

Discussion

The classification results (Figure 6(g)) rank the AI techniques as follows, from highest to lowest potential: Evolutionary Computing, Transformer Model, Graph Machine Learning, Agent-based System, Classic Machine Learning, Expert System, Generative Machine Learning. Based on this classification, there are strong indications that Evolutionary Computing, Transformer Models and Graph Machine Learning hold the greatest potential for future research on the application of AI in early stages of architectural design.

Evolutionary computing

The systematic literature search has shown that Evolutionary Computing techniques for early architectural design have been studied extensively since the conception of architectural computing, yet lost traction around the 2010s. This is consistent with the rise of the current ‘summer’ of AI, also known as the era of machine (deep) learning, 78 when focus shifted from rule-based determination towards data-based techniques that rely on approximation.

The classification indicates that Evolutionary Computing techniques hold the greatest potential for early architectural design. The deterministic nature of these techniques – meaning that solutions mostly evolve within an a priori set system – can therefore be seen as both a strength and a question for future research: can Evolutionary Computing techniques evolve and change their own system over time? We recommend that research on Evolutionary Computing is continued and viewed through a different lens. Moreover, in combination with Transformer Models – more specifically, Large Language Models that are trained to write algorithms in various programming languages – the architect can further explore Evolutionary Computing techniques without needing to fully master technical programming languages.

Figure 7. History of AI. Created by first author (2024), redrawn and adapted from Toosi et al. 78
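To illustrate what an a priori set system means in practice, the following minimal genetic algorithm sketch evolves room areas for a small spatial programme. It is not taken from any of the reviewed articles: the target total area, the minimum areas per room, the fitness function and all parameter values are hypothetical. The point is that the genome (three room areas) and the fitness function are fixed before the search starts, so the algorithm can only vary values inside that system and cannot, for instance, add a fourth room.

```python
import random

TARGET_TOTAL = 100.0           # hypothetical total floor area in m2
MIN_AREAS = [20.0, 12.0, 6.0]  # hypothetical minimum area per room in m2


def fitness(genome):
    """Higher is better: penalise a wrong total area and undersized rooms."""
    total_penalty = abs(sum(genome) - TARGET_TOTAL)
    size_penalty = sum(max(0.0, m - g) for g, m in zip(genome, MIN_AREAS))
    return -(total_penalty + size_penalty)


def mutate(genome, rng, step=2.0):
    """Nudge every room area by a small random amount, never below zero."""
    return [max(0.0, g + rng.uniform(-step, step)) for g in genome]


def crossover(a, b, rng):
    """Uniform crossover: each room area is taken from one of two parents."""
    return [x if rng.random() < 0.5 else y for x, y in zip(a, b)]


def evolve(generations=50, pop_size=20, seed=0):
    rng = random.Random(seed)  # seeded so that runs are repeatable
    population = [[rng.uniform(0.0, 60.0) for _ in MIN_AREAS]
                  for _ in range(pop_size)]
    history = []
    for _ in range(generations):
        population.sort(key=fitness, reverse=True)
        history.append(fitness(population[0]))
        parents = population[: pop_size // 2]  # truncation selection
        children = [
            mutate(crossover(rng.choice(parents), rng.choice(parents), rng), rng)
            for _ in range(pop_size - len(parents))
        ]
        population = parents + children        # parents survive unchanged
    population.sort(key=fitness, reverse=True)
    history.append(fitness(population[0]))
    return population[0], history


best, history = evolve()
print([round(area, 1) for area in best], round(history[-1], 2))
```

Because the best half of each generation survives unchanged, the best fitness never decreases. Changing the system itself, such as the number of rooms or the fitness terms, still requires a person to edit the code, which is where the question raised above begins.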

Transformer models

Transformer Models score very high on the criteria of the design process (Figure 6(f)): the intuitive interface, ease of incorporation into current workflows and ability to enhance the creative process make them very convenient for architects to experiment with. A logical consequence is the fast increase of research into Transformer Models – as shown by the systematic literature search. However, more research needs to be conducted into exactly how and when Transformer Models can be integrated into a productive workflow. Do they actually accelerate the architect’s process, or do they form an additional step that prolongs the process? Is there more to them than rapid ideation and visualisation?

Transformer Models score rather low on the criteria of the design problem (Figure 6(d)). This is mostly due to the fact that Transformer Models are currently only able to generate textual and 2D visual output. However, this is a rapidly developing field and the control over the desired output increases every day. The 2D visual output is already compatible with depth maps and line drawings thanks to plugins such as ControlNet, 79 which could serve as a stepping stone towards 3D model output in the future. More research and development on 2D spatial composition or 3D model output could be conducted to investigate Transformer Models’ true potential for architectural design. If Transformer models could attain a similar efficiency on 3D model data, that would be a massive leap forward for the field of architectural computing and the practice of architectural design.

Graph machine learning

Graph Machine Learning vastly outperforms Classic and Generative Machine Learning techniques in the classification. This is related to the way graphs store data: both information on the entities and information on the relationships between those entities are saved and easily accessible to apply rules and machine learning analyses such as classification or prediction. Those relational data translate well into the field of architectural design, where spatial relationships are of great importance. Like other machine learning techniques, Graph Machine Learning depends heavily on the availability of sufficient training data. Since graphs are a mathematical data construct, the extraction of graphs from IFC Models – a standard for BIM models that stores shapes, spatial elements, materials etc. in a hierarchical data structure – can be automated, 80 or graphs can be constructed based on floorplans fairly easily with the aid of computer vision techniques. 81 Further research could be conducted into the ideal graph structure for widespread research goals: which information that is relevant in early design stages should or should not be contained in graphs? The most basic graph configuration (i.e. names of spaces and their spatial relationships) corresponds with the architect’s use of ‘bubble diagrams’. This basic graph structure could be extended with additional information such as: are the borders between spaces load bearing, what is the energy production/consumption of each space, how is vertical and horizontal circulation embedded in the graphs, how should graphs handle latent transient spaces, how can complex data about the environment be integrated, etc.
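The basic graph configuration and its possible extensions can be sketched with plain Python data structures. This is an illustrative sketch only: the class `SpaceGraph`, the room names and the attribute names `energy_kwh_per_year` and `load_bearing` are hypothetical and do not correspond to any particular graph library, dataset or IFC schema.

```python
from dataclasses import dataclass, field


@dataclass
class SpaceGraph:
    """Undirected graph of spaces; all names and attributes are illustrative."""
    nodes: dict = field(default_factory=dict)  # space name -> attribute dict
    edges: dict = field(default_factory=dict)  # frozenset({a, b}) -> attribute dict

    def add_space(self, name, **attrs):
        self.nodes[name] = attrs

    def connect(self, a, b, **attrs):
        if a == b:
            raise ValueError("a space cannot be connected to itself")
        if a not in self.nodes or b not in self.nodes:
            raise KeyError("add both spaces before connecting them")
        self.edges[frozenset((a, b))] = attrs

    def neighbours(self, name):
        """Names of all spaces that share an edge with `name`, sorted."""
        return sorted(
            other
            for pair in self.edges if name in pair
            for other in pair if other != name
        )


# Basic configuration: names of spaces and their spatial relationships,
# i.e. the information carried by a bubble diagram.
bubble = SpaceGraph()
for room in ("hall", "living room", "kitchen", "bedroom"):
    bubble.add_space(room)
bubble.connect("hall", "living room")
bubble.connect("hall", "bedroom")
bubble.connect("living room", "kitchen")

# Possible extensions: attributes on spaces and on the borders between them.
bubble.nodes["kitchen"]["energy_kwh_per_year"] = 1200.0       # hypothetical value
bubble.edges[frozenset(("hall", "bedroom"))]["load_bearing"] = True

print(bubble.neighbours("hall"))
```

Storing attributes on both nodes and edges is what allows the same structure to grow from a bubble diagram into a richer description without changing its shape.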

Graph Machine Learning scores fairly high on the criteria for the design process, due to the intuitive overlap with ‘bubble diagrams’ and the integrated 3D modelling that software such as topologic grants, 82 and scores even better on the criteria for the design problem because it can output spatial information. Current research already shows the potential of focusing on graph machine learning, for example in classifying building/ground relationships and enhancing energy analysis workflows in architectural practice. 83,84 We recommend that the research community uses the accumulated expertise from classic and generative machine learning techniques in architectural design to shift the focus to graph machine learning applications.

Multi-technique applications

The proposed classification not only shows which AI techniques hold the greatest potential for architectural design individually (Figure 6(g)), but also sheds light on which AI techniques perform better in certain circumstances. For example, when there is no existing training data, architects can opt for Transformer Models, Evolutionary Computing or Agent-based Systems that can generate data (Figure 6(b)). This opens the door for a targeted multi-technique approach: applications usually make use of several chained AI techniques and the proposed classification can help identify which AI techniques are more suitable for each part of the chain. This may lead to, for example, Transformer Agent-based Models where the Transformer Model generates a wide variety of custom agents that are fed into an Agent-based Model.
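The selection logic for this example can be sketched as a simple lookup. The ranking and the set of techniques that can generate data are taken from the classification results discussed in this paper; the function name `shortlist` and the reduction of circumstances to a single yes/no flag are simplifications made for illustration, and other circumstances (programming skills, nature of the design problem) are deliberately left out.

```python
# Overall ranking from highest to lowest potential, as reported in the Discussion.
RANKING = [
    "Evolutionary Computing",
    "Transformer Model",
    "Graph Machine Learning",
    "Agent-based System",
    "Classic Machine Learning",
    "Expert System",
    "Generative Machine Learning",
]

# Techniques named in the text as options when no training data exist.
CAN_GENERATE_DATA = {
    "Transformer Model",
    "Evolutionary Computing",
    "Agent-based System",
}


def shortlist(has_training_data):
    """Return candidate techniques in ranking order for one circumstance."""
    if has_training_data:
        return list(RANKING)
    return [t for t in RANKING if t in CAN_GENERATE_DATA]


print(shortlist(has_training_data=False))
```

For a chained application, the same lookup could be applied to each link of the chain separately, since each link may face different circumstances.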

Architectural design data

The accessibility, quantity and quality of architectural design data strongly impact the level of input. A wide range of architectural design data – such as material, geometric, social, cultural or site information – remains untapped in current architectural design datasets, which are often restricted to spatial layout information (i.e. rasterised floorplans, bubble diagrams). Even then, the existing databases of architectural design data are comparatively small in relation to databases used for AI techniques in other fields, which often contain millions of datapoints. 85 In architectural design, one of the largest known databases, RPLAN, consists of 80,000 color-coded 255 x 255 pixel images of floorplans. 86 Other datasets contain significantly fewer datapoints, such as the CubiCasa5k dataset of 5,000 floorplans with manually added annotations and the matching CubiGraph5k dataset of 4,000 graphs. 81,87 As more architects integrate AI techniques in their workflows, disclosing architectural design data might become an evident part of the design process. This would in turn impact the results of the classification (through Figure 6(b)) in the coming years and potentially lead to a profound shift towards accessible, large and high-quality architectural design datasets. As disclosing the data is a crucial factor, we recommend that future research looks into ways of facilitating data sharing among both researchers and architects.

Conclusion

The proposed classification provides a strategic overview of suitable AI techniques for early architectural design stages and thus offers a targeted direction for architects, researchers and developers to determine areas of focus in the coming years. The results strongly indicate that Evolutionary Computing, Transformer Models and Graph Machine Learning hold the greatest potential for impact in early architectural design, and thus merit the research community’s attention to achieve that potential. Computer science and AI models develop rapidly, so it is not out of the question that an evolution of these or some other models might come to dominate the landscape in the years to come. As such, we urge researchers to monitor new developments. The results of the proposed classification suggest that research and development resources could be optimised by shifting towards the application of Evolutionary Computing, Transformer Models and Graph Machine Learning in early architectural design. Moreover, the classification assists with building multi-technique applications and helps to identify the most suitable AI technique for different circumstances such as the architect’s programming skills, the availability of training data or the nature of the design problem.

Footnotes

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.