Abstract

Assessing the learning outcomes from professional learning is crucial for understanding its impact, especially for training focusing on capability development. This paper explores the benefits of using scenario-based assessments to assess the immediate capability learning outcomes in professional learning settings. Existing research underscores the complexities of assessing capability in tertiary education environments. Unlike knowledge and skills, capabilities are demonstrated in context, and their application varies with each situation, which poses a challenge for their assessment. Additional challenges are imposed on the assessment of capability in professional learning contexts, where professionals take time outside of their work practice to develop additional knowledge and skills. As such, they are time-constrained, and in-depth assessment activities suitable for university environments are therefore not practical in professional learning contexts. This paper explores the benefits of a pre- and post-learning scenario-based assessment in professional learning contexts. It demonstrates that these assessments offer a dual advantage of formative and summative learning assessment, while also contextualising the learning assessment within professional practice, aligning with the principles of effective professional learning.

Introduction

Professional learning is crucial for ensuring that professionals maintain their knowledge and capabilities as expectations, culture, and technology evolve (Holdsworth et al., 2022). As such, ongoing learning has become essential to a professional’s working life (Billett & Harteis, 2014; Collin et al., 2012; Webster-Wright, 2009). Assessing the learning outcomes from professional learning is a key step in understanding the impact of the learning experience on the professional. This is especially relevant for professional training targeting the development or enhancement of capabilities rather than the transfer of knowledge, which dominates traditional professional development approaches.

Capabilities refer to a combination of knowledge, skills, and personal attributes that enable professionals to effectively handle diverse, complex, and uncertain situations. Capabilities enable professionals to respond to new and evolving situations beyond familiar, specialised settings (Holdsworth & Thomas, 2020; Sandri, 2010; Stephenson, 1998). While capabilities are an increasingly desired outcome of tertiary education programs to ensure graduates are ‘work ready’ (Down, 2006), developing, re-iterating, or enhancing capabilities is equally important for working professionals. Professionals generally hold knowledge and skills developed through formal education, as well as professional experience; however, it is recognised that ongoing professional learning is a key part of ensuring professionals maintain their capabilities as professional expectations, culture, technology, and economies evolve. As such, ongoing professional learning has become an essential part of a professional’s working life (Billett & Harteis, 2014; Collin et al., 2012; Holdsworth et al., 2022; Webster-Wright, 2009). This paper is concerned with assessing the effectiveness of professional learning. Professional learning is learning that facilitates further development of professional capabilities and supports their application to and in professional practice.

Continuing professional learning, while naturally occurring as part of day-to-day practices in the workplace, can also be formalised through professional development programs (Rooney et al., 2012). Webster-Wright (2009) argues that professional development programs predominantly focus on imparting content, viewing learning as the process of filling a professional’s mind with knowledge. Because this learning is superficial, the content fades over time and normalised practice does not change. Aside from the lack of deep learning outcomes, this traditional approach to professional development is not effective in supporting further development of capabilities, as training is often disconnected from real-life work contexts, and the return on investment regarding learning outcomes achieved is poor (Corrigan et al., 2015). Webster-Wright (2009) uses the term professional learning to encapsulate learning that integrates with professional practices, differentiating it from professional development. Assessing the learning outcomes from professional learning is a key step in understanding the impact of the learning experience on the professional and the construction of the learning experience itself.

In higher education, learning outcomes are assessed to provide evidence that students are achieving the required learning levels as part of quality assurance, continuous educational improvement, meeting employer needs, and informing student choice. Effective assessment of learning outcomes including development of capabilities is considered the ‘holy grail’ of educational measurement because of the complexities of assessing capability in tertiary education environments (DEEWR, 2011; Sandri et al., 2018). Unlike knowledge and skills, which can be demonstrated in a written test or by performing a task, capabilities are demonstrated in context, and their application varies with each situation. For instance, effective problem-solving capabilities differ depending on the situation and the problem at hand.

In higher education, most courses run over 12 weeks and have well-established systems of learning and assessment. These systems include requirements for learners to demonstrate the attainment of learning objectives and associated learning outcomes. However, assessing capability in professional learning contexts imposes additional and different challenges. Most professional learning is bespoke, designed around an organisation’s learning needs and schedules, and professionals take time outside of their work practice to develop additional knowledge and skills. Education processes and outcomes in a professional context are ‘often quite distinct from those that tertiary education institutions provide for younger adults, most of whom are able to study full-time and focus their efforts on their education’ (S. R. Billett et al., 2024, p. viii). Learning in a professional setting is time-constrained with limited opportunity for detailed assessment tasks with measurable objectives to determine learning outcomes (Nagar, 2009). Despite this, any evaluation program that aims to assess the effectiveness of professional learning must be able to assess critical aspects including the development of the professional knowledge base, competence and capability in professional action, and reflection (Kreber, 1999; Nicholls, 2001).

In light of these challenges, this paper explores the benefits of using scenario-based assessments to assess the immediate capability learning outcomes in professional learning settings. This paper proposes scenario-based assessments as a form of professional learning program evaluation to improve the delivery, experiences, and outcomes of professional learning activities. The scenario assessment explored in this paper was designed to evaluate the learning outcomes of professional learning modules designed for energy pipeline engineers to support them in developing or reinforcing capabilities for making decisions that consider public safety. This paper delves into the unique benefits of pre- and post-learning scenario-based assessment used in this professional learning context. It asserts that these assessments offer a dual advantage of formative and summative learning assessment, while also contextualising the learning assessment within professional practice, aligning with the principles of effective professional learning.

The paper begins with the challenges of assessing capabilities and the limitations of existing assessment approaches for assessing capabilities in professional learning contexts. It then explores the benefits of scenario and vignette assessment approaches and presents the context and design of the professional learning modules for engineering decisions in public safety. It then outlines the assessment design and the methods with which these learning modules were evaluated. The findings and implications explore and demonstrate the benefits of incorporating the scenario-based assessment approach in professional learning for both formative and summative assessment outcomes in the context of professional capability learning outcomes.

Existing capability assessment approaches and limitations in professional learning contexts

Formative and summative assessments are the two major assessment methods used in education. Standard formative and summative assessment involves progressively assessing a sequence of learning activities at specific points to inform teaching and help students determine their progress through the curriculum (Fergusson et al., 2022). Formative assessment includes a task that feeds back into and builds on learning during the learning process (Biggs & Tang, 2011), while summative assessment is undertaken at the conclusion of a learning program to assess final learning outcomes (Brownlie et al., 2024). Summative approaches are most appropriate for evaluating final learning outcomes from professional learning.

Measuring capabilities as a learning outcome is challenging and complex due to their contextual/situational nature (van der Velden, 2013). While competency can be assessed by observing the application of knowledge and skills in specific, well-defined, and frequent workplace situations (Australian Skills Quality Authority, 2019), capabilities are applied in response to new and changing situations where high levels of judgement are needed that draw on a range of skills, knowledge, personal qualities, and values (Stephenson, 1998). As such, it is essential to consider real-life contexts and interactions when assessing one’s capabilities. Assessing capabilities in a generic manner is hindered by the fact that they are situation-specific and require real-life contexts for proper expression (Hager, 2006, p. 34; Lozano et al., 2012, p. 143).

Authentic assessment has been used as a way to capture learning, including the development of capabilities, in real-life contexts. Authentic assessment uses both formative and summative assessment; however, it focuses on demonstrating learning through practical and real-world situations and demonstration of associated capabilities. It also plays a role in the learning process itself rather than solely assessing learning outcomes, and can be an important form of assessment (and learning) in work-based learning (Fergusson et al., 2022). Building on the work of Villarroel et al. (2018), Fergusson et al. (2022) explain that authentic assessment can be characterised by the following key principles:
1. Situations and problems contextualised to everyday life
2. Relevance and worth of assessment beyond the classroom
3. Authentic performance and practical value
4. Development of competencies for work performance
5. Exposure to similar tasks to the real world of work
6. Reflective practice skills and higher order thinking
7. An ability to solve problems
8. An ability to make decisions
9. Feedback
10. A formative sense
11. Criteria known a priori
(Fergusson et al., 2022, pp. 1204–1205)

These principles provide a valuable framework to guide the selection of learning outcome assessment methods to assist in selecting those methods that capture real-life complexity and workplace contexts and the authentic performance of capabilities in decision-making. In addition, according to a review of existing literature on primary and secondary school assessment approaches, Brownlie et al. (2024) argue that summative assessments, in particular, should be valid, reliable, fair, and flexible, in addition to being authentic (as described above). Brownlie et al. (2024) note significant differences in the context of assessment in higher education, and as such, the appropriateness of these indicators will likely vary depending on the learning context in which they are applied. However, the four indicators can be met in assessments by
• ‘assessing what has been taught, which can be easily identified against the curriculum and by the student (valid);
• allowing for a consistent judgement to be made, limiting subjectivity (reliable);
• not advantaging or disadvantaging a student or a group of students (fair);
• being seen as relevant to the student (authentic); and
• allowing for student choice (flexible)’ (Brownlie et al., 2024, p. 42).

Based on existing research and theory, there is a range of methods that have been used in an attempt to capture learning in professional or work-based contexts. These existing methods of learning outcome assessment hold value in their respective contexts; however, when applied to professional learning, common assessment approaches are limited in terms of practicality and validity.

A common and efficient way to assess learning outcomes for educational research purposes is self-assessment in the form of surveys. In a literature review of research on graduate learning in engineering, it was found that most of the empirical research on learning outcomes was based on quantitative surveys (Martin et al., 2005). Self-assessment of learning outcomes is limited by the learners’ subjective interpretation of their own capability (Bath et al., 2004). Surveys generally require the respondents to interpret and reflect on their own learning with limited guidance or examples to clarify (Martin et al., 2005). Spiel et al. (2013) found that students’ self-reporting of their skills and abilities was much higher than external assessments provided by academic supervisors and employers. One key advantage of self-reported quantitative measures is the efficiency in terms of data collection and analysis. Self-reporting can easily be adapted and applied in large-scale studies and emailed to thousands of students, and the resulting quantitative data can be analysed faster than qualitative data. However, research shows that the accuracy of self-reported data is marginal.

An additional method for evaluating capability is through observing work practices. This approach is often used in Vocational Educational Training (VET) to confirm an individual’s ability to meet the necessary standards, typically in completing physical tasks. This assessment method requires an assessor to gather evidence by observing the individual, conducting formal tests, or obtaining references from employers to assess the student’s competence (WA Department of Training and Workforce Development, 2013). This approach allows for the measurement of competency in situational contexts, but it is not practical in a professional learning setting. Nor does observation of work practice necessarily reveal capability unless the situation calls on it (Lozano et al., 2012), or offer insight into the thought processes associated with capabilities in practice.

Assessing capability in traditional higher education courses is often undertaken using reflective essays or setting students a task that allows for external evaluation of the learning acquired. However, summative assessments such as essays, tests, or presentations (Queensland Department of Education Training and Employment, 2014) are impractical for professionals to undertake due to the time and effort required for completion. Additionally, performance in such tasks would not necessarily reflect a professional’s real-life application of capability.

While it is important to note that ‘no research tool can truly reflect people’s real-life experiences’ (Hughes, 1998, p. 383), based on this review of potential assessment methods, it is clear that common approaches to assessing capability, including self-assessment surveys, workplace observation, and formal summative assessments, are limited in their application in a professional learning context. This is due to the limited validity of self-reporting, the impracticality and limited validity of external work practice observation, and the time and resources required to complete a formal undergraduate-style assessment task.

Benefits of vignette/scenario assessment design

Real-life contexts and interaction play a key role and are indeed necessary to assess capability. In line with the theories of situational learning, it is a learner’s interaction with their environments and social contexts where learning can be demonstrated and assessed. According to Down (2006, p. 195), in situational learning, ‘learning (formal or informal) does not derive from a series of learning experiences and assessment tasks, but though the learner actively interacting with the contexts (social, physical, intellectual and emotional) and situations (work, domestic and social) in order to better understand and to work within them’. Scenario or vignette assessment approaches offer a way to provide such real-life contexts and interactions in the assessment design.

Vignettes have been used widely in mental health research to understand a respondent’s beliefs, attitudes, and responses (Leighton, 2010). Vignettes present hypothetical situations or stories about which participants answer questions, rather than placing the research participant in the hypothetical situation themselves (Bloor & Wood, 2006; Finch, 1987; Hughes, 1998). The question responses are then used to assess if the capability has been developed. Vignettes differ slightly from scenarios, which are largely used in learning and teaching activities and assessment, in that scenarios require the learner to play an active role in a purposefully designed situation to assess capability development (Clark & Mayer, 2013).

In either case, vignette or scenario, the value of this method of assessment is the ability to present a real-life/professional situation through a short narrative, so that participants’ responses can be assessed against the learning outcomes. In describing their response, they can demonstrate their thought processes. Capabilities demonstrated through these responses and thought processes can be assessed by an external assessor. The scenario response is time-efficient and can be completed within the delivery of the professional learning module. Based on the noted benefits of scenario/vignette approaches to assess capabilities and the limitations of more common assessment methods noted earlier, the learning designers developed and used a scenario/vignette to evaluate the learning outcomes of the professional learning modules for energy pipeline engineers to support them in developing or reinforcing capabilities for making decisions that consider public safety. The following section describes the context and design of the professional learning modules in which the scenarios were used.

Professional learning modules: Considering public safety in engineering decisions

The scenarios explored in this paper were designed for a series of professional learning workshops for Australian pipeline engineers to develop capabilities for considering public safety in decision-making. The professional learning workshops were developed in recognition that typically professional learning in engineering focuses on technical skills and knowledge despite the need for the development of soft skills or engineering capabilities. This is not surprising given that engineering sits within the ‘hard applied’ disciplines as defined by Becher (1994). Disciplinary contexts have a significant impact on teaching methods and assessment approaches (Swarat et al., 2017). Jessop and Maleckar (2016) explain that ‘hard pure’ disciplines like chemistry and mathematics tend to prefer examinations, quizzes, and laboratory reports to assess quantifiable, impersonal knowledge forms containing universally accepted truths. On the other hand, ‘hard applied’ disciplines such as engineering focus on applying such knowledge and often use similar assessments along with case studies and simulations. In contrast, ‘soft pure’ disciplines like humanities, arts, and social sciences, as well as ‘soft applied’ disciplines like law and social work, emphasise interpretation and values and use essays, presentations, reports, simulations, and case studies to assess learning.

Despite the traditional emphasis on hard technical skills and associated assessments in engineering, the importance of soft skills or capabilities that guide decision-making based on values, moral judgements, and interpretation has been increasingly recognised as especially crucial in engineering. These skills are essential for making decisions that take into account the impacts of practices beyond the technical aspects, particularly in relation to public safety and sustainability. Engineers’ safety imagination and moral reasoning are vital to the prevention of disaster events (Maslen, 2019; Maslen et al., 2021), with previous research pointing to how engaging with disaster cases cultivates these ways of knowing (Hayes & Maslen, 2015, 2020). As such, development and assessment of such learning outcomes is an important area of professional learning in engineering.

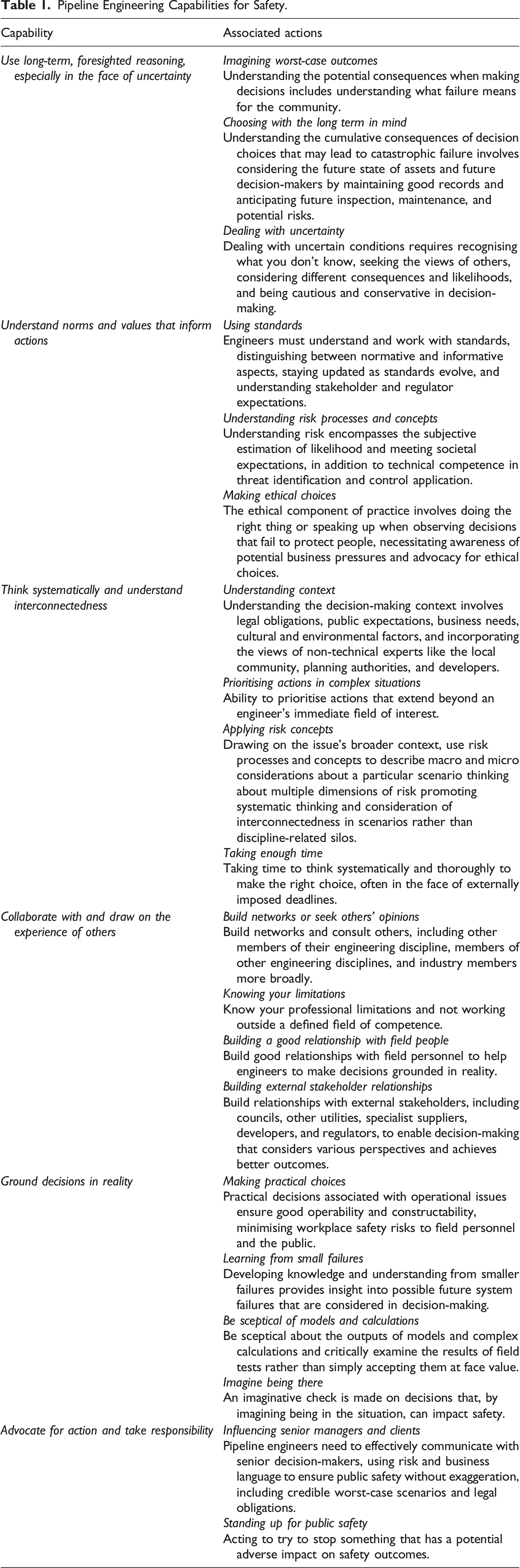

Pipeline Engineering Capabilities for Safety.

Two distinct professional learning workshops, each featuring two different sets of case studies, were developed and delivered using an experiential, case-based learning approach. Each workshop comprised two 1.5-hour face-to-face sessions facilitated by two professional trainers. The first set, intended for transmission pipeline engineers, included the San Bruno pipeline failure (Hayes & Hopkins, 2014) and the Challenger space shuttle accident (Rogers, 1986). The second set, aimed at network engineers, included the Massachusetts pipeline failure (NTSB, 2019) and the amusement ride accident at Dreamworld (Coroners Court of Queensland, 2020). During the first half of the workshops, participants discussed an accident case within their professional context. In the second half, they engaged in a role-play exercise based on a case outside the pipeline sector, facilitating learning about engineering decision-making in a different context and encouraging the application of these insights to their profession.

San Bruno/Challenger workshop

Part 1 of the workshop introduced the discussion of the 2010 San Bruno natural gas pipeline failure with a presentation, followed by a facilitated discussion of lessons to be learned. Participants explored the San Bruno case study, reflected on the decisions made in the lead-up to the incident, and discussed challenges and opportunities for different decisions (with a focus on decisions regarding integrity management). Questions designed to prompt discussion about the links between the case study, capabilities, and the participant’s professional practice were used to facilitate discussion in the second part of the workshop. The San Bruno case is useful for reflecting on the importance of the following capabilities in particular:
• Use long-term, foresighted reasoning, especially in the face of uncertainty
• Understand norms and values that inform actions
• Think systematically and understand interconnectedness
• Ground decisions in reality.

The 1986 space shuttle Challenger disaster case was explored and discussed using a scripted role-play in Part 2 of this workshop. Participants played key characters and reflected on lessons for their own professional practice. Slides explaining the incident, video dramatisation, and graphics from the accident inquiry were used as learning materials to support the role-play. Questions designed to prompt discussion about the links between the case study, capabilities, and the participant’s professional practice were used to facilitate discussion in the second part of the workshop. The Challenger case is useful for reflecting on the importance of the following capabilities in particular:
• Collaborate with and draw on the experience of others
• Ground decisions in reality
• Advocate for action and take responsibility.

Massachusetts/Dreamworld workshop

Part 1 introduced the discussion of the 2018 Massachusetts gas pipeline failure with a presentation, followed by a facilitated discussion of lessons to be learned. Participants explored the Massachusetts case study, reflected on the decisions made in the lead-up to the incident, and discussed challenges and opportunities for different decisions. Questions designed to prompt discussion about the links between the case study, capabilities, and the participant’s professional practice were used to facilitate discussion in the second part of the workshop. The Massachusetts case is useful for reflecting on the importance of the following capabilities in particular:
• Think systematically and understand interconnectedness
• Collaborate with and draw on the experience of others
• Ground decisions in reality.

The 2016 Dreamworld Thunder River Rapids ride accident case was explored and discussed using a scripted role-play in Part 2 of this workshop. Participants played key characters and reflected on lessons for their own professional practice. Slides explaining the incident, video, images, and graphics from the Coroner’s report into the incident were used as learning materials to support the role-play. Questions designed to prompt discussion about the links between the case study, capabilities, and the participant’s professional practice were used to facilitate discussion in the second part of the workshop. The Dreamworld case is useful for reflecting on the importance of the following capabilities in particular:
• Use long-term, foresighted reasoning, especially in the face of uncertainty
• Understand norms and values that inform actions
• Advocate for action and take responsibility.

Methods

The scenario assessment sat within a broader evaluation methodology, designed to evaluate and assess the professional learning modules (Holdsworth et al., 2022), based on a widely used framework for evaluating professional learning/development programs, called the Kirkpatrick Model (Reio et al., 2017). Kirkpatrick’s model for evaluation was introduced in a series of articles (Kirkpatrick, 1959, 1960) and suggests four levels at which assessment and evaluation may occur:
1. Participants’ reactions
2. Participants’ learning
3. Changes in participants’ behaviour (job performance)
4. Desired results (organisational impact)

The Kirkpatrick Model was used as a holistic assessment of the workshop outcomes, including experiences, learning, changes to workplace practice, and organisational outcomes. This recognises that a multi-method approach is important for assessing learning outcomes (Lockyer et al., 2017).

The scenario assessment was selected and designed to measure the immediate learning outcomes of the workshops at Level 2 of the Kirkpatrick Model. The purpose of the analysis presented in this paper is to show how scenarios can demonstrate capability learning outcomes and to explore the benefits of using this assessment method to assess the immediate learning outcomes in professional learning settings for evaluation purposes.

Scenario design

In the professional learning context, the learning designers selected a scenario-based assessment method for the reasons detailed in the previous sections, including being time- and resource-efficient, appropriate for the professional nature of the learning modules, allowing for external evaluation, and placing professional learners in real-life scenarios that could demonstrate the presence or absence of the intended capability learning outcomes. The assessment methods were informed by a previous study conducted by some of the learning designers (Holdsworth et al., 2019) that explored and tested a similar approach for assessing graduate capability in professional contexts (Sandri et al., 2018a, 2018b).

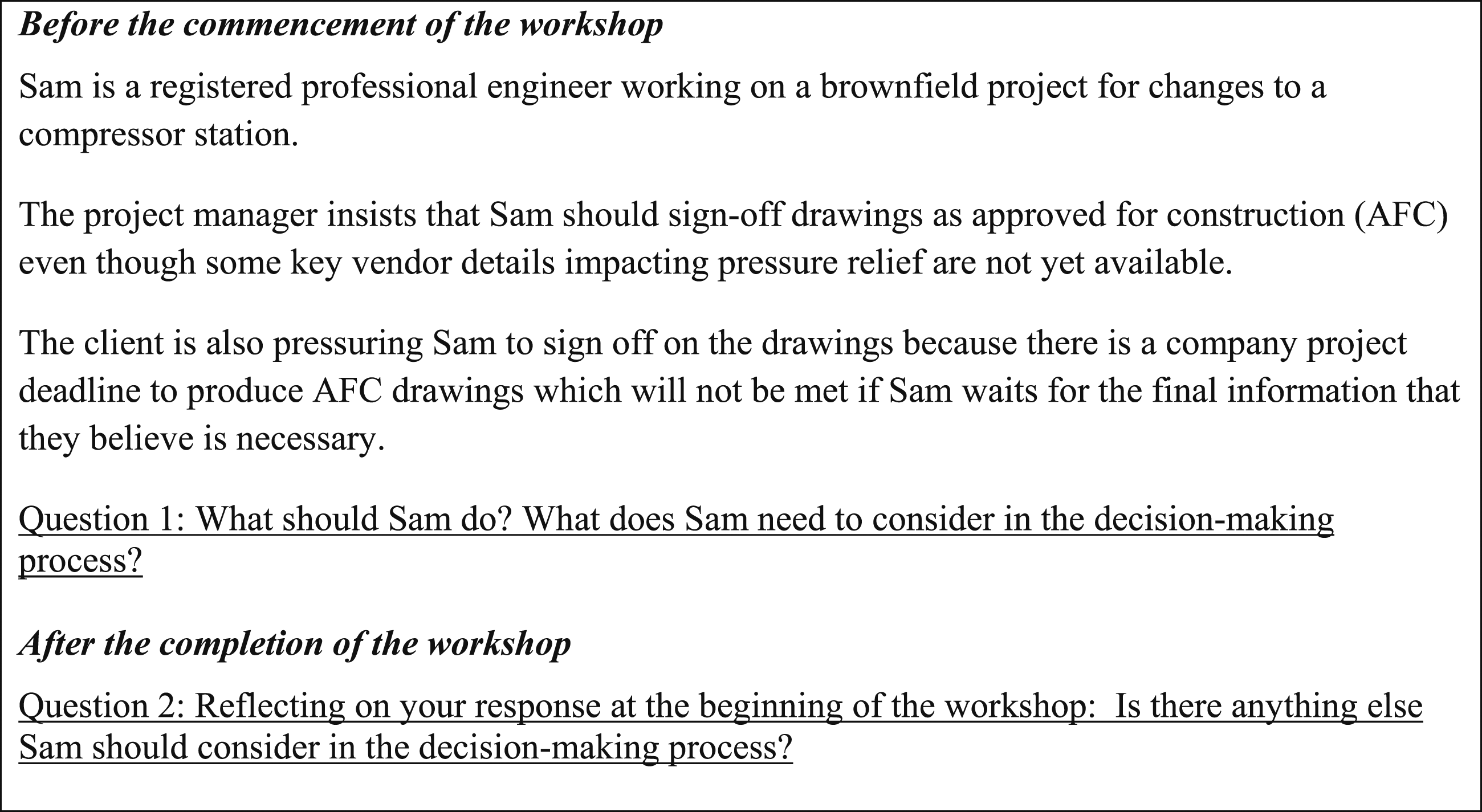

At the beginning of the workshop, participants were given a short written scenario to respond to, in which an engineer faces a decision-making dilemma. The situation that the protagonist in the story faces is simple, realistic, and something that the training participants could easily identify with. Despite its seeming simplicity, the protagonist is faced with a dilemma between waiting until important information is available to complete the work or following the instructions of those with organisational (but not professional) authority. As we will see in what follows, many of the non-technical capabilities that the workshop aims to foster in participants can be drawn on in describing the way the protagonist should proceed.

Participants were asked to reflect on how they thought the engineer (named Sam) should respond. Following the workshop, participants had the opportunity to comment on whether there was anything else Sam should consider in the decision-making process in light of their experience during the workshop. Figure 1 shows the scenario and question prompts.

Scenario.

Analysis of responses

A thematic analysis of the scenario responses was undertaken using NVivo 12. The analysis was undertaken on the scenario responses to measure the immediate learning outcomes (the demonstration of capabilities) of the workshops at Level 2 of the Kirkpatrick Model. The analysis was then used as part of the suite of assessment methods used to evaluate the effectiveness of the workshops.

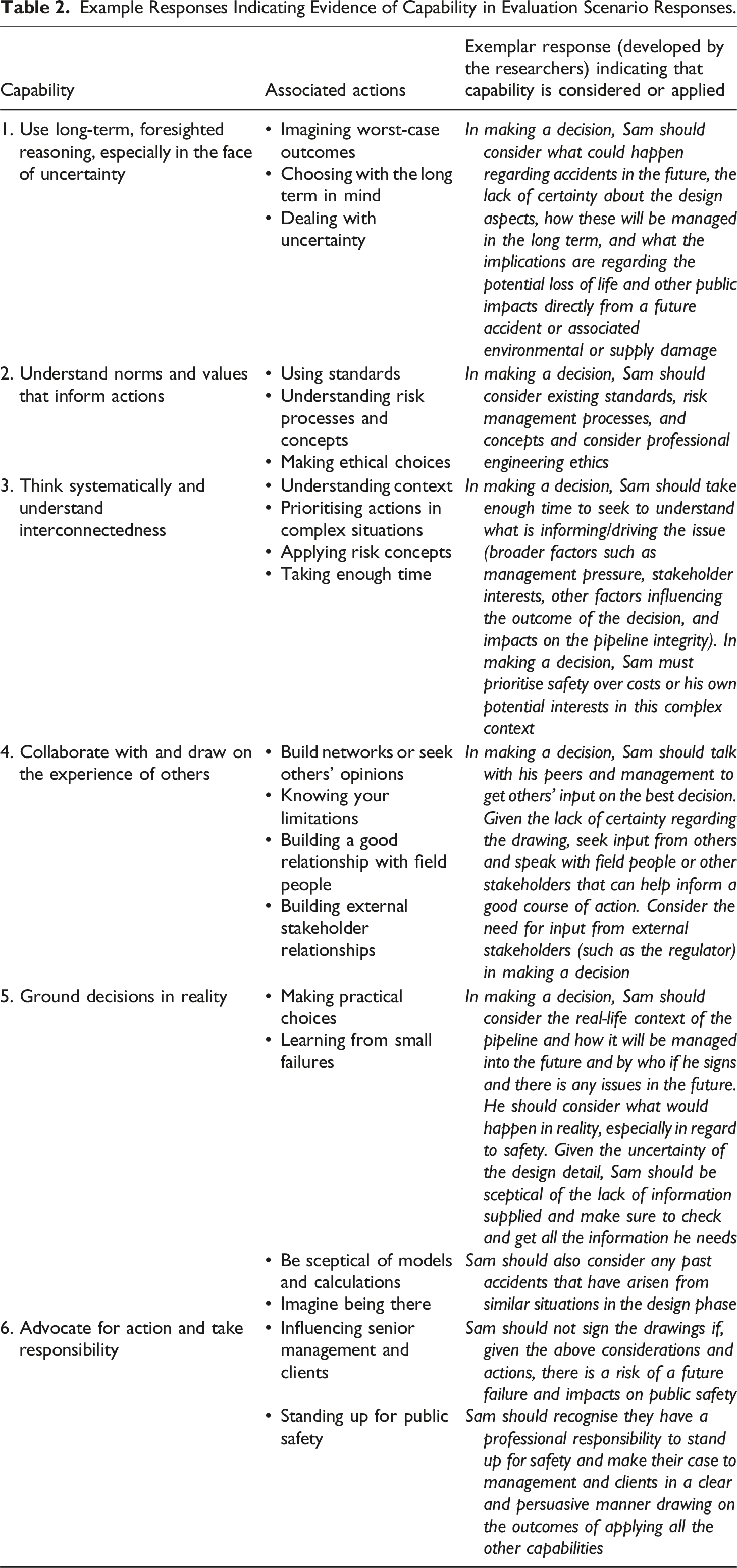

Example Responses Indicating Evidence of Capability in Evaluation Scenario Responses.

From the thematic analysis, a frequency count was also undertaken to provide high-level quantitative data on the evidence of the capabilities in pre- and post-scenario responses. Note that in the post-workshop response, participants were asked if there is anything else Sam should consider in the decision-making process, and therefore not all post-workshop responses repeated what had already been stated in the pre-workshop response. Therefore, in our analysis, if the capability is present in the pre-workshop response, the capability is assumed to be present in the post-workshop response as well based on the wording of the post-workshop question.
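The counting rule described above can be sketched in a few lines of Python. This is a minimal illustration only, not the authors' NVivo workflow: the participant IDs, the capability numbers, and the helper names `carry_forward` and `frequency_count` are all hypothetical, and the sketch assumes each participant has a matched pre- and post-workshop response.

```python
from collections import Counter

# Hypothetical coded data: participant ID -> set of capability numbers
# evidenced in each response (illustrative values, not the study's data).
pre_codes = {
    "P01": {2, 5},
    "P02": {5},
    "P03": set(),
}
post_codes = {
    "P01": {1},      # adds capability 1 without repeating 2 or 5
    "P02": set(),    # nothing further to add
    "P03": {3, 5},
}


def carry_forward(pre, post):
    """Treat any capability evidenced pre-workshop as also present post-workshop."""
    participants = pre.keys() | post.keys()
    return {pid: pre.get(pid, set()) | post.get(pid, set()) for pid in participants}


def frequency_count(codes):
    """Count how many participants evidenced each capability."""
    return Counter(cap for caps in codes.values() for cap in caps)


post_effective = carry_forward(pre_codes, post_codes)
pre_freq = frequency_count(pre_codes)
post_freq = frequency_count(post_effective)

for cap in sorted(pre_freq.keys() | post_freq.keys()):
    print(f"Capability {cap}: pre={pre_freq[cap]}, post={post_freq[cap]}")
```

Because of the carry-forward assumption, a post-workshop count computed this way can never fall below the pre-workshop count for the same capability; the counts show added evidence, not loss.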

From the thematic analysis of the pre- and post-workshop responses from each participant, the researchers were able to determine the extent and type of learning that occurred during the workshops in the context of the capability learning objectives.

Response rate

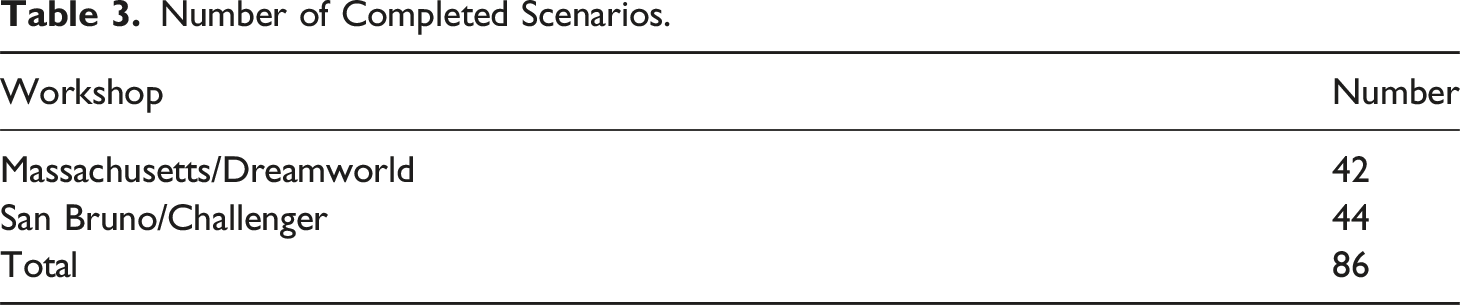

Number of Completed Scenarios.

The following describes how the scenario responses can be used to demonstrate the extent of development or reinforcement of the capabilities.

Findings

This section explores the type of evidence of learning attained using the scenarios and analysing them for evidence of capability.

Establishing baseline evidence of existing professional capability

Analysis of the pre-workshop scenario responses was able to provide a baseline of participants’ existing decision-making capability and associated approaches. In our case, the analysis of pre-workshop responses showed that none of the 86 responses suggested that Sam should sign off on the drawings based on the information available in the scenario. For the researchers, this indicated that most participants in the pre-workshop responses had some level of professional responsibility for the integrity of the pipeline and understanding of their responsibilities for public safety. This was to be expected given the participants are working professionals with varying levels of expertise in their field. While existing capability was demonstrated, assessment of the post-workshop responses revealed evidence of increased use of the capabilities. The post-workshop scenario assessment demonstrated how decision-making had changed from the workshop experience, a point that will be explored later.

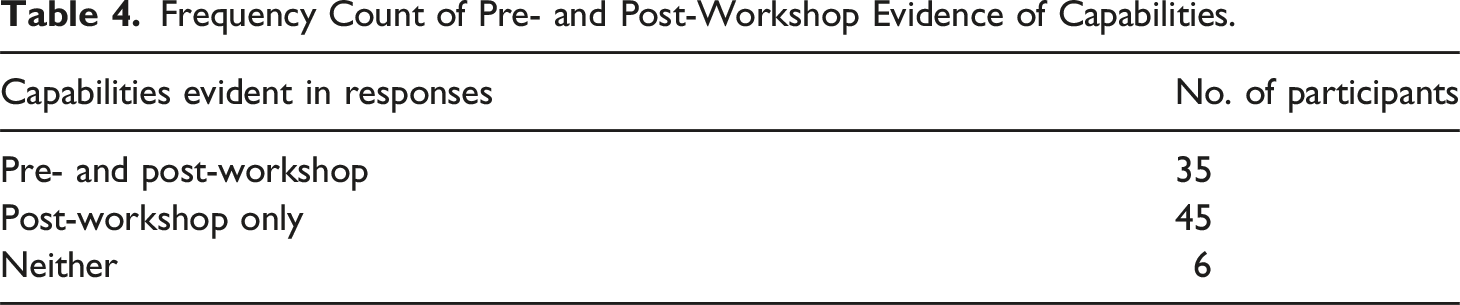

Determining pre- and post-workshop capabilities using frequency counts

Frequency Count of Pre- and Post-Workshop Evidence of Capabilities.

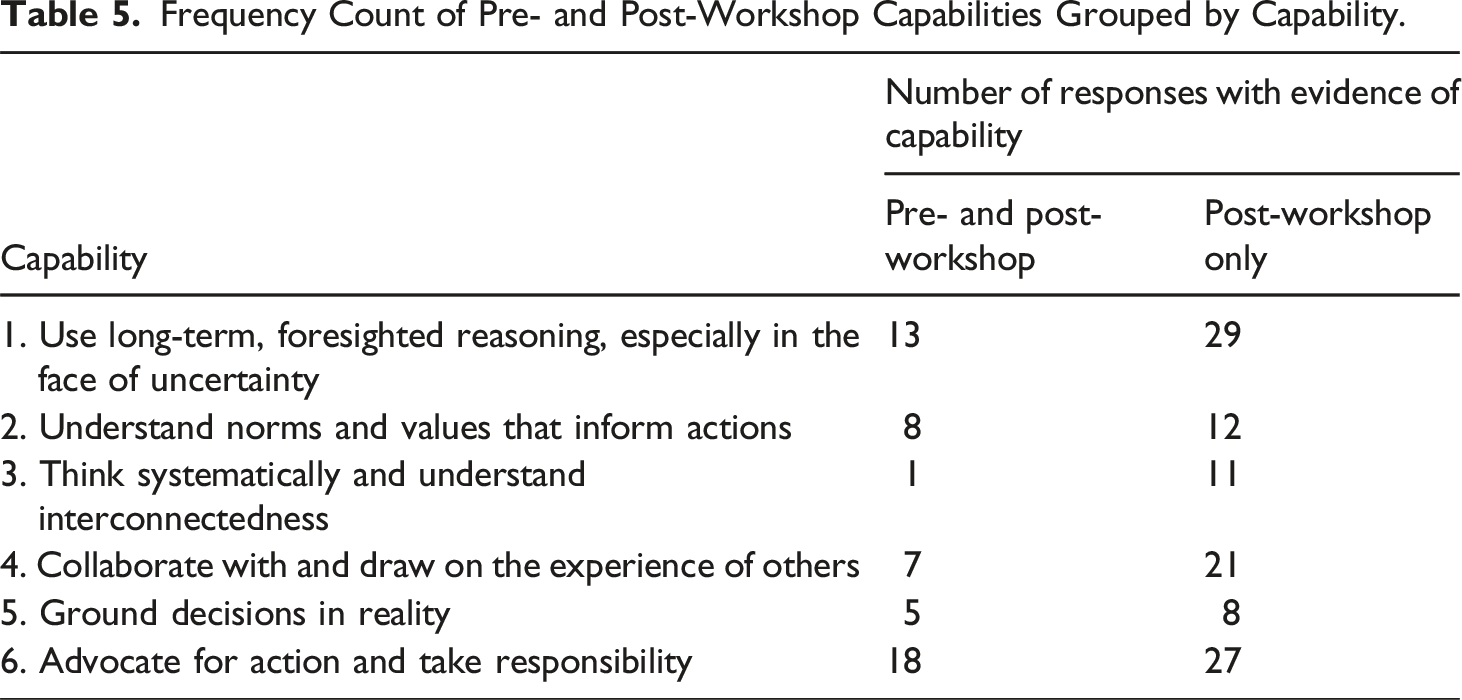

Frequency Count of Pre- and Post-Workshop Capabilities Grouped by Capability.

Based on the data shown in Table 5, the capabilities, particularly 1, 3, 4, and 6, were present in post-workshop responses to a much greater extent than in the pre-workshop responses, indicating that participation in the workshop did influence and increase the application of these capabilities in response to the scenario.

Exploring the extent of professional learning through thematic analysis

The rationale, approach, and context for the decisions changed in many of the post-workshop responses. As such, the thematic analysis of the scenarios was able to demonstrate different degrees of professional learning during the workshop regarding the capabilities, including:

• No evidence of learning

• Changes in how responses are articulated and expanded considerations in decision-making

• Additional capabilities demonstrated

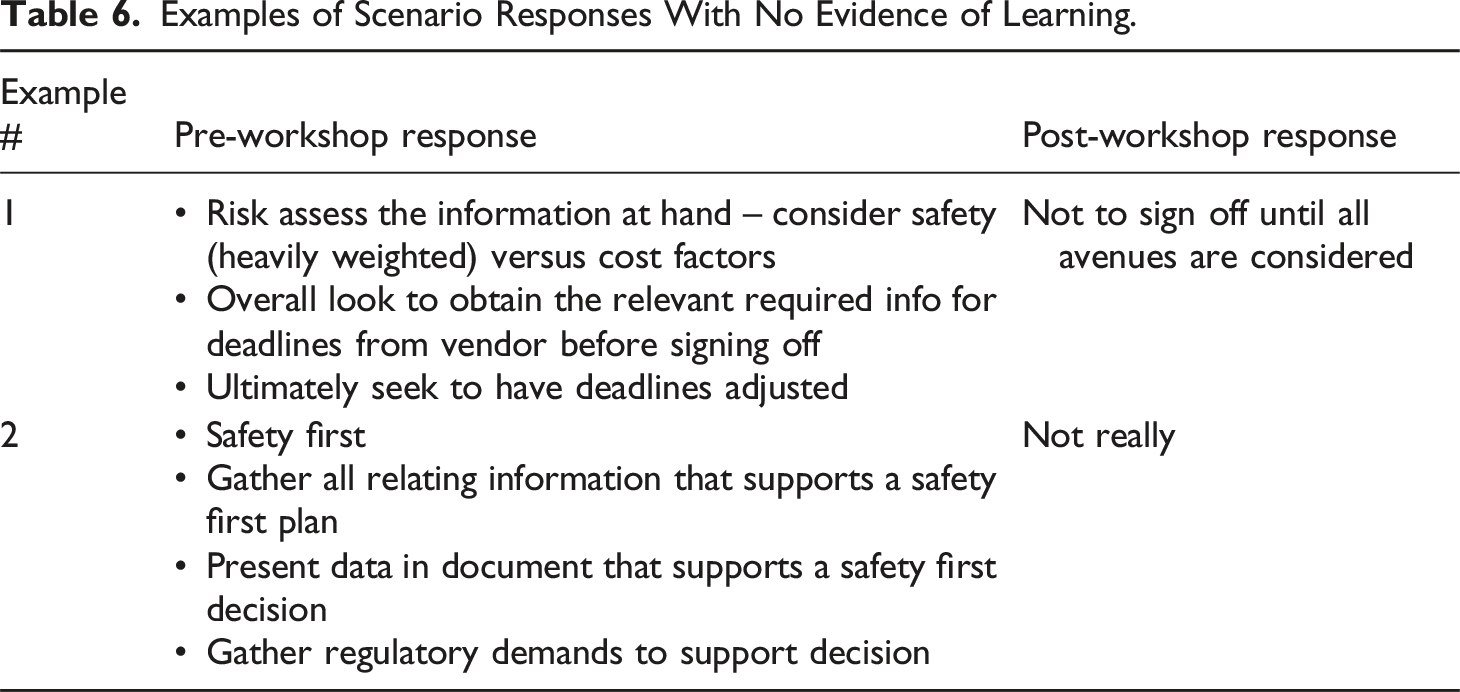

No evidence of learning

Examples of Scenario Responses With No Evidence of Learning.

As was typical of such responses, both these participants see safety as the primary consideration in exercising their professional judgement, but they articulate their responses in technical and process terms.

Change in how the response is articulated or expanded considerations in decisions

As described earlier, the scenarios were able to show that there was existing baseline capability in considering risk as evidenced by most of the pre-workshop responses. The scenarios, however, evidenced a change in capability sub-factors considered when making the decision post-workshop. The scenario responses were also able to demonstrate where participants had developed or expanded on their terminology for responding to the situation.

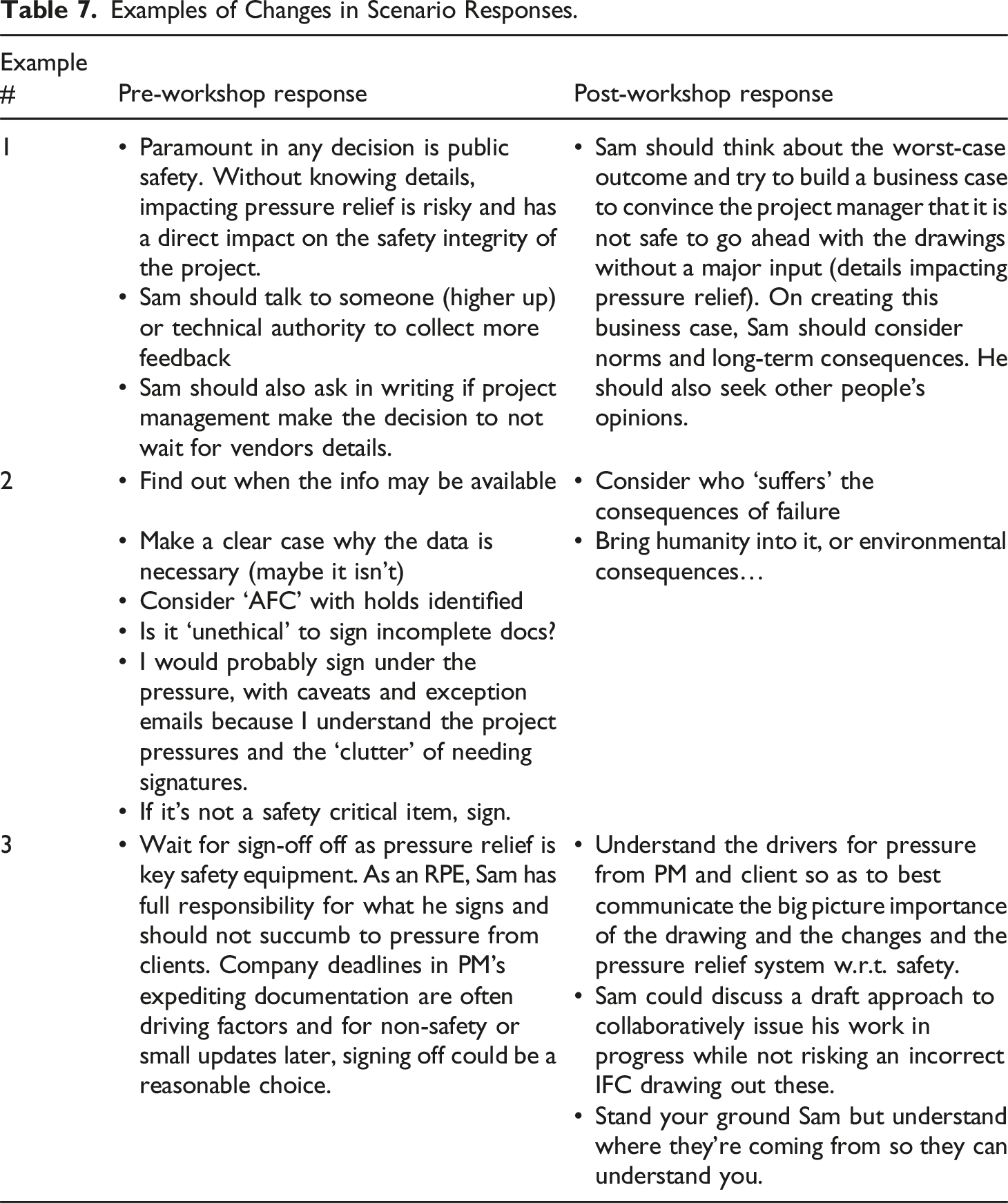

Examples of Changes in Scenario Responses.

Example 2 in Table 7 indicates that there was a shift from considering if it is ‘unethical’ to sign the documents to articulating these ethical concerns using factors associated with the capabilities, such as ‘who suffers’ and the human and environmental consequences of actions undertaken. This is an example of imagining worst-case outcomes as part of the capability of using long-term, foresighted reasoning, especially in the face of uncertainty. This participant seems to have changed their overall position from probably signing under duress to standing up for safety, a significant shift in professional judgement.

In Example 3 in Table 7, the reference to Registered Professional Engineer (RPE) indicates that this participant is considering legal compliance and liability in deciding what Sam should do. The post-workshop response shifts to using capabilities regarding systemic thinking (understanding pressures), collaborating with others to find the best solution, and reaffirming their original position regarding standing up for public safety.

It is also noteworthy that in Table 7, Example 2, the participant uses the term ‘I’ while reflecting on what they would do in Sam’s situation. This suggests that the scenario effectively placed the participant in a real-life situation that they personally identify with.

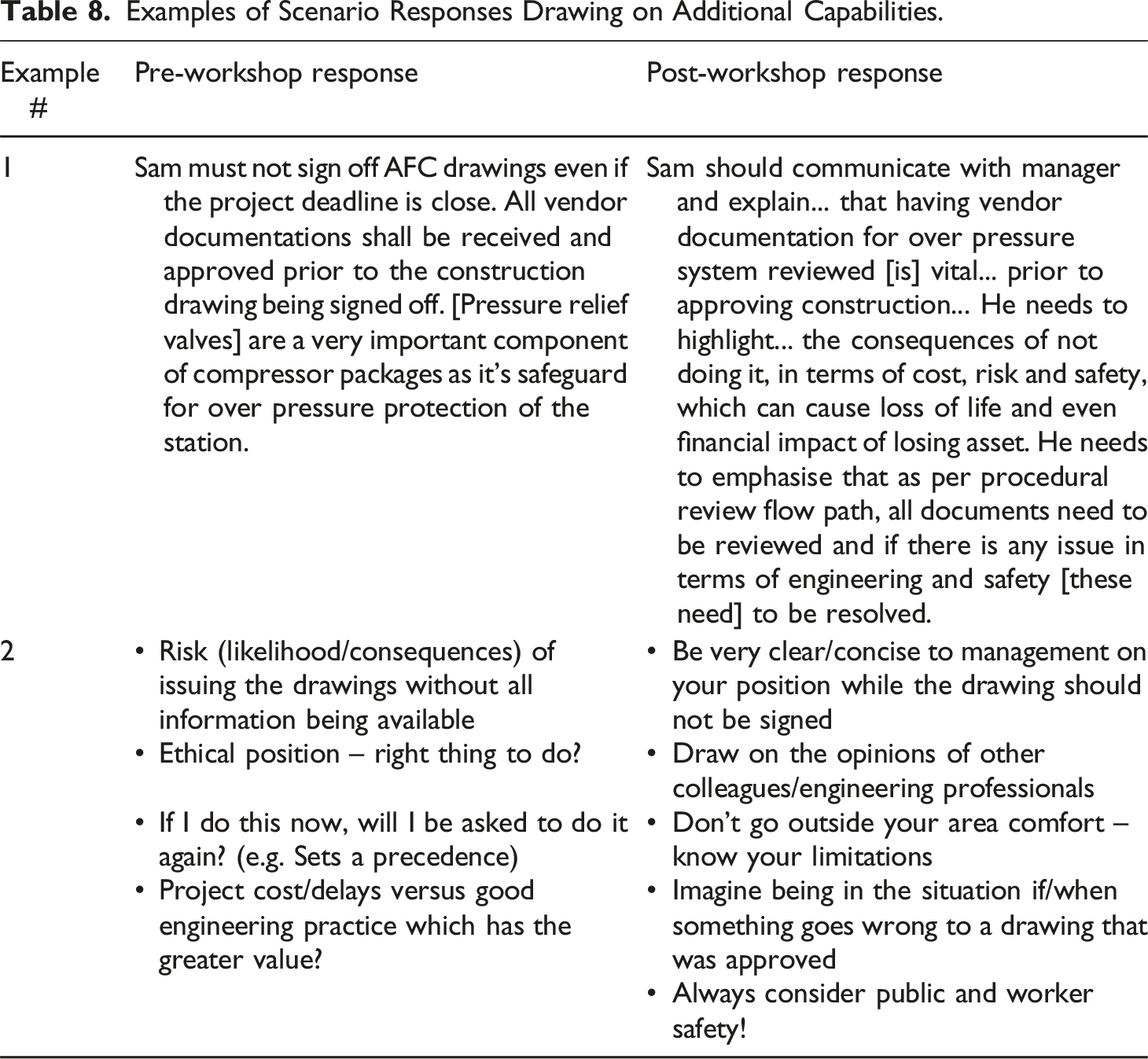

Additional capabilities demonstrated

Examples of Scenario Responses Drawing on Additional Capabilities.

Discussion

In professional learning contexts, several key challenges are associated with assessing learning outcomes. These challenges include time constraints, the need to fit in with professional work practices, and the need for external assessment of learning outcomes rather than reliance on learners’ self-assessment of their learning. Capability assessments hold the additional challenge of capturing behaviour in context, so it is important to situate assessments in a way that captures the likely use of capabilities in real-work practices. This study has used scenario-based assessment as a way to address these challenges.

The developed scenario depicted a realistic work-related situation that required the application of acquired knowledge or capabilities. The use of a scenario-based assessment to demonstrate soft skills or capabilities is a move away from assessment methods traditionally favoured in the ‘hard applied’ engineering discipline as described by Becher (1994) and Jessop and Maleckar (2016). Furthermore, the findings highlight how the scenarios provide an authentic assessment method as described by Villarroel et al. (2018) and Fergusson et al. (2022) within the assessment constraints of the professional learning environment. The use of scenarios based on professional practice can demonstrate relevance and connection to the real-world professional environment. The scenario was also specific to the learners’ roles and responsibilities, ensuring that they could easily relate to the scenario. Furthermore, the scenario did not have a clear, straightforward solution but instead prompted critical decision-making with the aim of eliciting any capabilities drawn on through the response. By using the same scenario both before and after the workshop, learners can demonstrate if and how their response to the scenario has evolved as a result of the workshop. The findings highlight that the scenario approach provides time-efficient insight into the extent and type of learning that has occurred.

While the context for assessing learning outcomes differs considerably from primary, secondary, and even higher education, indicators of quality assessment developed by Brownlie et al. (2024) have also been met by this assessment method. The assessment, as described previously, meets the principles of authentic assessment. The scenario-based assessment also provides a valid method that aligns with the intended learning outcomes (in this case, decision-making capabilities for public safety). The reliability of the assessment of learning outcomes is dependent on an exemplar model as demonstrated in this study, which can then be used to assess the degree to which the capabilities have been demonstrated in the pre- and post-workshop scenarios. As with any assessment requiring judgement on the part of the assessor, it is important that those assessing outcomes have sufficient expertise to make the judgement of learning outcomes (Lockyer et al., 2017). The exemplar assists in this process and can be used by multiple assessors as a guide for the demonstration of capability. Regarding indicators of fairness and flexibility, however, the professional learning context in which this assessment is applied means that participants do not achieve certification or qualification based on completion of the workshop and the assessment outcomes; therefore, they are not disadvantaged if the assessment method is not ‘fair’ and ‘flexible’ in this context. That said, the scenario-based assessment allows for the assessment of progression or development starting from the assumption that each participant already brings a level of expertise. In this sense, this is a fair and inclusive assessment that allows for flexibility in terms of how the responses are articulated and the level at which capabilities are demonstrated from the pre- to post-workshop scenario response.

Comments provided by some participants following the workshops recognised the value of the scenarios for helping them reflect on the shift in how they approached the scenario pre- and post-workshop. This experience highlights how scenarios can serve a dual purpose for both formative and summative assessment, with the formative aspect allowing for professionals to reflect on their own learning as a result of the activities and the summative aspect allowing designers to evaluate the outcomes of the learning experience. This dual purpose is valuable in a time-constrained standalone workshop by embedding reflective and experiential learning without focusing excessively on formal assessment.

It is important to note that the scenario assessment method has limitations inherent in any hypothetical assessment method. This includes the assumption that participants’ responses reflect their intended or actual behaviour/capability. As such, the assessment of learning outcomes should be accompanied by an evaluation model that includes a systematic, formative framework instead of a summative outcome and objective-oriented evaluation applied after the completion of the training program.

The summative evaluation should focus on the entire training process and its achievement of clearly identified objectives that reflect the training program’s desired outcomes. Assessment techniques and indicators should be suitable for the descriptive and subjective nature of the assessment models. Factors that influence the success of the training program but are outside of the learning must be considered, and where possible, causal relationships between the evaluation levels should be identified. There needs to be consideration of the complex relationship between behaviour and impact, as acquired knowledge and skills do not necessarily equate to behavioural changes or job performance.

As informed by the Kirkpatrick evaluation model, this scenario evaluation method has been developed and applied to the workshops in this study in conjunction with a suite of assessment methods. This recognises that all methods of assessment have limitations, and a multi-method approach is important for assessing learning outcomes (Lockyer et al., 2017) and, in the context of this study, evaluating the learning outcomes against Kirkpatrick’s levels of learning. Follow-up interviews with participants were also undertaken after the workshop to determine if, and to what extent, capabilities were applied in professional practice, and thereby the success and impact of the workshop.

Based on the data we collected from the scenarios, for our specific context with Australian pipeline engineers, the workshop served as a reinforcement of existing capabilities in maintaining a strong safety record. The scenarios captured a strong baseline inclination for making decisions regarding public safety, which was refined, further articulated, or expanded on in the workshops. Based on the findings, however, the scenarios have the ability to capture more dramatic changes in thinking and capability if, for example, professional learning activities involve novel or disruptive learning outcomes.

Conclusion

In professional learning contexts, assessing learning outcomes to evaluate the effectiveness of professional learning poses several key challenges, such as time constraints and the need to align with work practices. This study uses scenario-based assessment to address these challenges, providing insight into immediate learning outcomes. The scenario approach provides time-efficient insight into the extent and type of learning outcomes that have been achieved. This approach allows for formative and summative assessments in a standalone workshop, demonstrating relevance to the real-world professional environment. Looking ahead, future iterations could explore the use of first-person scenario approaches as described in the literature rather than vignettes. Additionally, designing the second scenario response to prompt participants to respond again rather than only build on their previous response may provide for clearer identification of changes in learning. Overall, the assessment of learning outcomes in professional learning contexts is a complex yet vital aspect of ensuring the relevance, applicability, and effectiveness of the learning experiences provided.

Footnotes

Acknowledgements

This work is funded by the Future Fuels CRC, supported through the Australian Government’s Cooperative Research Centres Program. The cash and in-kind support from the industry participants is gratefully acknowledged.

Author contributions

S.H.: Conceptualisation; methodology; investigation; formal analysis; writing – review and editing. O.S.: Conceptualisation; methodology; investigation; formal analysis; writing – original draft. J.H.: Funding acquisition; project administration; conceptualisation; methodology; investigation; writing – review and editing. S.M.: Conceptualisation; methodology; investigation; writing – review and editing.

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work is funded by the Future Fuels CRC, supported through the Australian Government’s Cooperative Research Centres Program.

Ethical statement

Data Availability Statement

The dataset generated during the study is not publicly available as it contains proprietary information that cannot be released without permission from the funding body. Information on how to obtain it and reproduce the analysis is available from the corresponding author on request.