Abstract

Research was conducted in partnership with the Revenge Porn Helpline (RPH) to examine the location and removal of non-consensual intimate image (NCII) abuse. By examining reports to the helpline, data were collected to uncover where intimate images were being non-consensually distributed, how they were proportionally distributed across platforms, and avenues for image removal. The data confirm that social media plays a key role in NCII distribution and provide further insight into where images are being distributed outside of social media platforms. Data on image removal indicate that knowledge of how to navigate different types of platforms is important for image removal success, making contributions from organisations such as the RPH vital, and highlighting the need to make reporting processes more accessible. The findings also indicate significant gaps within the Online Safety Act which will need to be addressed if the Act is to effectively protect victim-survivors. In particular, there is a need to move beyond focusing on services with the largest user numbers and to broaden the scope to include smaller high-risk and problematic platforms.

Introduction

Recent years have seen a growing scholarly interest in image-based sexual abuse (IBSA), which includes a wide range of abusive practices surrounding the non-consensual taking, making, and sharing of intimate images (see Law Commission, 2021; McGlynn et al., 2017). Academics, third-sector organisations, government agencies, and journalists have all been pivotal to knowledge generation. We now recognise the wide range of image-based harms, including the non-consensual taking of images (through voyeurism, hacking, hidden cameras, upskirting and downblousing), the non-consensual making of images (image alteration or creation, e.g. Photoshop and deepfake images) and the non-consensual sharing of, and threats to share, intimate images (both physically and electronically) (Law Commission, 2021; McGlynn et al., 2017). We also recognise the severe and often ongoing impact of IBSA, with research uncovering a multitude of consequences on every aspect of victims' lives (Citron and Franks, 2014; Cyber Civil Rights, 2014; Henry and Powell, 2016; Huber, 2023a; McGlynn et al., 2019; Short et al., 2017).

Increased understanding of the nature and impact of IBSA has resulted in some positive legislative changes in England and Wales, including the criminalisation of non-consensual intimate image (NCII) distribution in 2015 under section 33 of the Criminal Justice and Courts Act. In October 2023, the Online Safety Bill received Royal Assent, introducing the Online Safety Act (OSA). The aim of the Act is to increase the regulation of services hosting user-generated content and impose duties on search engines to minimise the amount of harmful content shared online, including NCII distribution (Gov.uk, 2023). While these legislative changes are vital, they are not without their limitations, particularly with regard to scope and ability to adequately capture the range of NCII practices and contexts in which distribution occurs (see Huber, 2023b; Law Commission, 2022; McGlynn, 2016). It is therefore important that we continue to further our understanding beyond identifying forms of abuse and its impact, to examine patterns which can be used to further inform legislation and increase protection for victim-survivors.

One area where knowledge is limited concerns patterns of NCII distribution and the image removal process for victim-survivors. While we do have some information on the types of platforms being used to share images, research has tended to provide in-depth analysis of specific platforms or identify broader categories of platforms where image sharing occurs, such as social media, pornography websites, and message boards (see Hall and Hearn, 2018; Henry and Flynn, 2019; Langlois and Slane, 2017; Uhl et al., 2018). Research is yet to quantitatively explore the exact location of images, including the identification of larger volumes of URLs, how images are proportionally distributed across these, and what this means for image removal. This information is important for a number of reasons: (1) it can increase our understanding of where NCII distribution is taking place and the nature of the platforms, (2) it provides an insight into the difficulties of the image removal process, and (3) it allows for consideration of whether such platforms are likely to fall within the remit of the OSA and the extent to which the OSA will improve the image removal process.

A knowledge exchange partnership was therefore formed with the Revenge Porn Helpline (RPH) (a support service for victim-survivors of intimate image abuse) to provide one of the few empirical studies examining the location and removal of NCII distribution. Using data collected from reports to the helpline, this is the first study to examine image distribution and removal utilising such data, and it is the first to quantitatively examine the distribution of images across public URLs. The partnership also allows for the research process and findings to be informed by the second author's years of experience as an RPH practitioner.

This article begins with an outline of literature surrounding the impact of NCII distribution, the location of image distribution, and current responses within England and Wales. This is followed by an overview of the research methodology. The findings are then presented in two parts: data pertaining to image distribution followed by image removal. The article ends with a discussion on the importance of effective reporting tools, communication, and collaboration to ensure the swift removal of NCII, as well as the need for further provision within the OSA in order for regulation to effectively respond to NCII distribution.

The impact of NCII distribution

Research identifies that NCII distribution has wide-ranging emotional, physical, and financial consequences for victim-survivors. This includes depression, anxiety, suicidal thoughts, paranoia, shame, humiliation, reputational damage, occupational issues, forced changes or loss in occupation or homes, fear, restriction in the use of space, and an increased vulnerability to further harassment and abuse (Citron and Franks, 2014; Cyber Civil Rights, 2014; End Violence Against Women Coalition, 2013; Henry and Powell, 2016; Huber, 2023a; Short et al., 2017; Stroud, 2014). Qualitative research has been vital in uncovering the everyday lived realities of victim-survivor experiences, consistently showing that victimisation has daily, and potentially long-term impacts. Through interviews with 18 victim-survivors to examine mental health, Bates (2017) identified that nearly all victim-survivors were left with trust issues, experienced severe mental health issues (including posttraumatic stress disorder), and experienced a loss of self-esteem, confidence, and control over their body.

Furthermore, McGlynn et al.’s (2021: 550) (see also Henry et al., 2021; McGlynn et al., 2019) interviews with victim-survivors across the UK, Australia, and New Zealand confirmed that the consequences are consistent across countries. Using their concept of ‘social rupture’ they identify the impact on victim-survivors as ‘all-encompassing and pervasive, radically altering their everyday life experiences, relationships and activities, and causing harms which permeated their personal, professional and digital social worlds’. In doing so, McGlynn et al. (2021) uncover how far-reaching victim-survivors’ lack of trust is, with women becoming distrustful and wary of men, even in public spaces, as a result of feeling susceptible to future victimisation. They use the concept of ‘constrained liberty’ to encapsulate the omnipresent threat of further abuse resulting in self-protective measures to keep safe, subsequently narrowing victim-survivors’ liberty and creating intense feelings of isolation. McGlynn et al. (2021) also identify the impact of ‘existential threat’ and ‘constancy’ where victim-survivors remain in constant fear that victimisation will continue, or that images will re-surface, and constantly check websites to see whether their images have been (re)posted online.

In essence, McGlynn et al. (2021) uncovered the importance of understanding that victimisation is not something that is short-lived, but instead has a constant and likely long-lasting impact on intersecting parts of victim-survivors’ everyday lives. The need to examine victimisation as a whole, looking beyond the event of image dissemination, was also confirmed by Huber (2020), who found that image sharing is often part of a wider process which can include the range of behaviours that fall under IBSA as well as subsequent forms of victimisation such as ‘doxing’. All of these were found to have a detrimental impact on the daily emotional, physical, and social well-being of victim-survivors.

Research has also found that the digital distribution of IBSA images has exacerbated consequences compared to images which are shared offline. For example, academics have highlighted how the unlimited audience in cyberspace increases the harm caused to victim-survivors, as too does the ability for us to instantaneously communicate and share information, resulting in images being shared to audiences of an unimaginable scale and victim-survivors being more accessible than ever before (Huber, 2023a; Powell et al., 2018). This accessibility is particularly problematic in instances of doxing, often resulting in victim-survivors being inundated with abusive messages and unsolicited ‘dick pics’ (Citron and Franks, 2014; End Violence Against Women, 2013; Henry and Powell, 2016; Huber, 2020; Stroud, 2014). Citron (2019) also identifies employers’ increasing reliance on internet search results when making recruitment decisions because it is less risky to hire people whose internet reputation or profile is not questionable or damaged. Thus, a Google search result which displays sexualised images may have a fundamental impact on how employers see victim-survivors and disadvantage them compared to non-victim-survivors. This is confirmed by Cyber Civil Rights (2014) who found that 82 percent of victim-survivors suffered from occupational problems as well as Bloom (2014) and Franklin (2014) who argue that reputational damage to victim-survivors causes employers to fear reputational damage (see also Short et al., 2017).

With the impact of NCII distribution being so severe, a better understanding of image distribution and removal is vital in trying to reduce harm. The longer the images remain online, the greater the likelihood of further circulation, and increased susceptibility to further forms of abuse. Furthermore, a report by Refuge (2022) investigating women's experiences of reporting technology-facilitated abuse to online platforms found that negative reporting experiences had significant consequences for victim-survivor well-being with many feeling disappointed, frustrated, and vulnerable as a result of having to wait months for platforms to respond and remove images. Therefore, it is important to maximise the potential for prevention and ensure swift removal when prevention fails. To do this, we must understand where images are likely to be distributed so that target areas for legislation, regulation, and moderation can be identified. We must also understand removal processes in order to improve them and ensure that such processes do not exacerbate harm.

The location of NCII distribution

Although it is generally known that IBSA images are likely to be hosted on many different platforms, and in vast numbers, it is impossible to definitively quantify exactly how many platforms host the material. There are various reasons for this. For example, it is often difficult to distinguish between images consensually and non-consensually shared, especially on pornography platforms (Henry and Flynn, 2019; Huber, 2023c). We also cannot definitively identify how many pornography sites are in operation or the number of other platforms which host this content (Henry and Flynn, 2019), especially given the global and dynamic nature of the internet. However, the growing amount of literature examining victim-survivor experiences and the limited literature examining websites hosting this material provide a valuable, although not exhaustive, insight into where images are being shared. Henry and Flynn’s (2019) ethnographic study of 77 platforms outlines four types of sites where IBSA material often appears. Firstly, IBSA content was found on pornography websites. Although non-consensual content is difficult to identify on these sites, they argue that reports to organisations globally about the material being uploaded onto these platforms confirm that these websites are being used to facilitate distribution. Secondly, content was found on community forums which allow members to form communities and hold discussions about a range of topics through sub-forums, with some specialising in IBSA. Thirdly, material was found on imageboards which allow users to anonymously post images on a range of topics. Users often shared IBSA material via ‘threads’ and members used file-sharing to distribute large volumes of content. The final category identified by Henry and Flynn (2019) is websites that are specifically designed to host IBSA content, on which users were actively encouraged to post images and personal information about victim-survivors.

Reports from victim-survivors have confirmed that social media platforms are used to distribute IBSA material. This includes Facebook, X, Instagram, and Snapchat (End Violence Against Women, 2013; Huber, 2020; Matthews, 2020). Victim-survivors have also stated that fake social media accounts have been made in their names to make it seem as though images have been consensually shared. Similar accounts have been created on dating apps with abusers interacting with individuals who think they are building relationships with those in the images (Huber, 2020). Images are also shared via instant messaging apps (including group chats), for example, via email, text, Facebook Messenger, WhatsApp, and Telegram (Huber, 2020; Kraus, 2020; Revenge Porn Helpline n.d.). While this literature provides an indication of where images generally tend to be shared there is only one study which attempts to uncover how images are proportionally distributed. Short et al.’s (2017) survey with 66 respondents found that IBSA images were most commonly distributed on social media platforms (37%), followed by mobile phones (27%) and websites such as YouTube (25%).

Legal responses in England and Wales

In England and Wales, the non-consensual sharing of private sexual material was criminalised in 2015 under the Criminal Justice and Courts Act. While welcomed, the legislation has been heavily criticised for having narrow legal definitions and a lack of applicability to a variety of cases, resulting in the Law Commission undertaking consultation with stakeholders and producing recommendations for legislative change (see Huber, 2023b; Law Commission, 2022; McGlynn, 2016). Until recently, there was also a lack of regulation which targeted platforms at risk of hosting IBSA content, namely platforms hosting user-generated content. Before the introduction of the OSA, social media and other platforms hosting user-generated content were left to self-regulate harmful content through community standards and terms of use (Woodhouse, 2022). The government merely provided a ‘code of practice’ for social media platforms under section 103 of the Digital Economy Act 2017 (Gov.uk, 2019). Even regulators such as the Competition and Markets Authority, the Advertising Standards Authority, the Information Commissioner's Office, the Financial Conduct Authority, and Ofcom who have some authority in overseeing online activity only broadly worked to regulate forms of communication from companies to the wider public, and not user-generated content such as that on social media (Woodhouse, 2022).

While social media and search engine companies have made attempts to respond to IBSA and online harm (see BBC News, 2015a; BBC Newsbeat, 2015; Google Support, 2022; Laville, 2016; Solon, 2019), they continue to face criticism for being slow in responding to victim-survivor requests and responding inadequately. For example, Google has been seen to resist requests from victim-survivors to remove images from its search results (BBC Newsbeat, 2015; Goldberg, 2019). Refuge’s (2022) interviews and surveys with victim-survivors of technology-facilitated abuse also found that over half of victim-survivors did not receive a response from providers (including Meta-owned companies, X, YouTube, Snapchat, and dating sites), and half of those who did were informed that the abuse did not breach community standards (Refuge suggests much of it did). On some occasions, it still took weeks to get a response to NCII distribution, even when reported by Refuge as a trusted flagger. This left most victim-survivors stating that none of the social media platforms were safe, and 95 percent were unhappy with provider responses. Victim-survivors were also dissatisfied with the reporting process; 47 percent found reporting abuse difficult and 29 percent found it very difficult. More broadly, research has uncovered high levels of misogynistic content on social media platforms (Bartlett et al., 2014) and systematic failures to protect women from harassment (Paul, 2022). The failure of online platforms, particularly social media, to address forms of online harm resulted in calls for statutory regulation (Woods and Perrin, 2019).

In April 2019, the Online Safety Bill was proposed (Woodhouse, 2022), and having now been passed, the OSA introduces new rules for companies providing online user-to-user services to minimise and respond to harmful content on their platforms as well as requiring search engines to minimise harmful content in their search results. Services in the scope of the Act will need to put measures in place to prevent illegal content and will need to remove the content if it does appear on their platform, minimising the length of time such content is available (Online Safety Act, 2023). Some services will also need to implement ‘empowerment tools’ to give users more control over who they can interact with and the content they can see, as well as providing all adult users with the option to verify their identity (Online Safety Act, 2023). Ofcom is appointed as the regulator and will be given powers to make companies comply by imposing fines of up to 18 million pounds or 10 percent of the person's qualifying worldwide revenue (whichever is greater) (Online Safety Act, 2023). Criminal sanctions can also be brought against senior managers who fail to comply with Ofcom's information notices. Senior managers are individuals whom the company identifies as being reasonably expected to ensure compliance with the notice, due to their involvement in managing and organising company activities (Online Safety Act, 2023).

Recognising the inadequacy of existing legislation around NCII distribution, the Act also introduces new legislation, namely the act of sharing or threatening to share intimate photographs or films. This offence supersedes section 33 of the Criminal Justice and Courts Act, providing substantially improved legislation by adopting many of the recommendations put forward by the previously mentioned Law Commission’s (2022) review (see Huber, 2023b; Law Commission, 2022; McGlynn, 2016). The OSA creates a base offence that criminalises the sharing of intimate images without consent, as well as more serious separate offences including (i) when images are shared with the intent to cause alarm, humiliation, and distress, (ii) when images are shared with the purpose of sexual gratification, and (iii) when there is a threat to share images, regardless of whether images are shared (Online Safety Act, 2023). These changes are crucial as they remove the previous requirement of demonstrating intent to cause distress for prosecution which was seen as a fundamental barrier to justice for victim-survivors. The offence also includes images made or altered by computer graphics which means that deepfake, and other altered images, will fall within the law's remit, something for which section 33 also faced heavy criticism (see Huber, 2023b; Law Commission, 2022; McGlynn, 2016). Additionally, the Act identifies NCII distribution as a priority offence. Priority offences are used to identify particularly serious and prevalent online harms and in doing so, platforms will have greater obligations in terms of prevention and swift removal of such content (House of Commons, 2022). However, with the Act only having recently been passed, we are yet to see what impact it will have on combating NCII distribution and other harmful practices.

Who are the Revenge Porn Helpline?

The Revenge Porn Helpline (RPH) are a partially UK government-funded service supporting victim-survivors of intimate image abuse over the age of 18. The service was established in 2015 in line with the criminalisation of NCII distribution. They support victim-survivors with instances of image sharing, threats to share images, images recorded without consent (voyeurism), webcam blackmail (sextortion), and upskirting and downblousing. They do this by providing a range of practical support including social media advice, advice on reporting to the police, and signposting to legal services. They have also played a key role in the development of technological solutions to stave off NCII distribution including the hashing of images. This is when images are assigned a unique digital fingerprint so that attempts to upload hashed images on participating platforms will be blocked. This was initially trialled by Facebook (Solon, 2019) but has been further developed by the RPH in collaboration with Meta to create NCII.org which allows individuals to create hashes for their images which are then shared with Facebook, Instagram, TikTok, Bumble, Threads, Aylo, Reddit, OnlyFans, Pornhub, and Snap Inc. (NCII.org, 2022). The helpline's main roles are to assist victim-survivors with image removal and act as a trusted flagger for many platforms (Revenge Porn Helpline, n.d.). Reports from organisations deemed to be ‘trusted flaggers’ tend to be quickly actioned because reports from them are automatically confirmed as genuine and needing urgent action. With the RPH playing such a vital role in image removal, their data can bridge the current knowledge gap around the nuances and realities of image distribution and removal. Therefore, the study presented in this paper is the result of a partnership between academia and the RPH aiming to undertake knowledge exchange to further understand NCII distribution and removal.
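The hash-and-block idea described above can be illustrated with a minimal sketch. The source does not state which hashing scheme is used, so SHA-256 is an assumption here, and the `fingerprint` function and `UploadFilter` class are hypothetical names introduced only for illustration. A cryptographic hash matches exact copies only; a deployed system would probably need a scheme that tolerates resizing or re-encoding.

```python
import hashlib


def fingerprint(image_bytes: bytes) -> str:
    """Return a hex digest acting as the image's digital fingerprint.

    SHA-256 is an assumption; the source does not name the hash function.
    """
    return hashlib.sha256(image_bytes).hexdigest()


class UploadFilter:
    """Hypothetical platform-side filter holding only hashes, never images."""

    def __init__(self) -> None:
        self._blocked_hashes: set[str] = set()

    def register_hash(self, image_hash: str) -> None:
        # The platform receives the hash, not the image itself.
        self._blocked_hashes.add(image_hash)

    def allows(self, upload_bytes: bytes) -> bool:
        # An upload is rejected when its fingerprint matches a registered hash.
        return fingerprint(upload_bytes) not in self._blocked_hashes


# Placeholder bytes stand in for image files.
private_image = b"placeholder-bytes-of-a-private-image"
unrelated_image = b"placeholder-bytes-of-another-image"

platform = UploadFilter()
platform.register_hash(fingerprint(private_image))

print(platform.allows(private_image))    # False: exact copy is blocked
print(platform.allows(unrelated_image))  # True: no matching hash
```

The design point the sketch captures is that only the fingerprint leaves the individual's hands, so participating platforms can block matching uploads without ever receiving the image.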

Methodology

The data presented in this paper are part of a larger study which quantitatively explored NCII distribution and removal as well as qualitatively examining the nature of platforms and practices of users. The quantitative results are presented here alongside initial observations of platforms for the purposes of categorising their nature. The study is also informed by the experiences of the second author (hereafter, helpline practitioner) who was able to draw on her years of experience as an RPH practitioner.

To gain information on image distribution and removal, data were collected using the database of reports at the RPH. To do this, it was first necessary to identify which websites were being used for NCII distribution. To obtain a representative sample, a random sample of 200 cases was generated from over 2600 cases of NCII distribution between 2015 (when the Helpline opened) and quarter 1 of 2022, using a random number generator. The sample was necessary for two reasons. Firstly, we wanted to qualitatively explore the cases at a later date, meaning that the helpline practitioner had to manually pull data for each of the cases from across different databases to identify relevant URLs (internet links). Secondly, the practitioner undertook this work as a voluntary research assistant. Both of these circumstances meant that it was important to keep the dataset manageable.
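The sampling step described above can be sketched as follows. This is a minimal illustration rather than the procedure actually used: the source reports only "over 2600" cases and "a random number generator", so the population size of 2600, the sequential case identifiers, the seed, and the function name `draw_case_sample` are all assumptions.

```python
import random


def draw_case_sample(case_ids, sample_size, seed):
    """Draw a reproducible simple random sample without replacement."""
    rng = random.Random(seed)  # fixed seed so the draw can be repeated
    return sorted(rng.sample(list(case_ids), sample_size))


# Hypothetical sampling frame: 2600 sequential identifiers are assumed
# because the source gives the population only as "over 2600" cases.
population = range(1, 2601)
sample = draw_case_sample(population, sample_size=200, seed=2022)

print(len(sample))       # 200 cases drawn
print(len(set(sample)))  # 200: no case is selected twice
```

Sampling without replacement keeps every case in the frame equally likely to be chosen while guaranteeing 200 distinct cases for manual review.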

From these 200 cases, 121 victim-survivors did not disclose the location of their images, meaning that we were able to identify image location for 79 of the cases. In 44 of these 79 cases, researchers were not able to gain further information beyond how they had been categorised in the RPH database (e.g. Facebook or OnlyFans) as the private nature of the location (i.e. personal user accounts) made them inaccessible for further analysis. This left 35 cases (17.5% of the random sample) with accessible public URLs suitable for further analysis. The Helpline define public URLs as any content that is ascribed to an internet link that is public to any internet user without the need for ‘following’, or paying for access to, said URLs.
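The attrition from the random sample to the analysable cases can be checked with simple arithmetic. The figures are those reported above; the variable names are illustrative.

```python
# Figures as reported in the text; variable names are illustrative.
sampled_cases = 200
location_not_disclosed = 121
private_location_only = 44

location_known = sampled_cases - location_not_disclosed
public_url_cases = location_known - private_location_only
share_of_sample = 100 * public_url_cases / sampled_cases

print(location_known)             # 79
print(public_url_cases)           # 35
print(f"{share_of_sample:.1f}%")  # 17.5%
```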

The date range for the 35 cases was from September 2018 to February 2022 and within those cases 16,937 images were shared across 325 websites. To ensure that the victim-survivors were not identifiable, the helpline practitioner stripped all URLs of any personal data so that when the URLs were put in the dataset the URL led to the homepage of the relevant platform, rather than the page where the victim-survivor's images were located.
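The anonymisation step, in which each URL is reduced to the homepage of its platform, might look like the sketch below. The source does not say how the stripping was carried out, so this is only one possible approach; the function name `strip_to_homepage` and the example URL are hypothetical.

```python
from urllib.parse import urlsplit, urlunsplit


def strip_to_homepage(url: str) -> str:
    """Keep only the scheme and host, discarding path, query and fragment."""
    parts = urlsplit(url)
    # Drop any "user:password@" prefix, which could itself be personal data.
    host = parts.netloc.rpartition("@")[2]
    return urlunsplit((parts.scheme, host, "/", "", ""))


# Hypothetical URL; example.com is a reserved documentation domain.
reported_url = "https://forum.example.com/threads/12345?user=name#post-9"
print(strip_to_homepage(reported_url))  # https://forum.example.com/
```

Removing the path, query string, and fragment means the stored link identifies the platform but no longer points to the page on which a victim-survivor's images appeared.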

As part of their proactive searches to remove content, the RPH also collate information on methods of contact for websites where illegal content is reported. This allowed for the creation of a dataset which provided an overview of the image removal methods across the 35 cases and whether these methods were successful. Once the anonymised dataset was complete, each of the 325 URLs was accessed and observed to uncover more information about the nature of the platforms, such as whether the URL was a pornography website or a forum, and whether NCII distribution was a main feature of the platform. Essentially, the researchers were looking to establish whether the site was specifically an NCII distribution website, such as the original ‘revenge porn’ site created by Hunter Moore in 2010 (Lee, 2012), whether the website had a broader focus but contained subsections or threads which users were primarily using for NCII distribution, such as AnonIB (Huber, 2023c), or whether NCII distribution was ancillary to the platform's main purpose, for example, pornography platforms such as Pornhub and xHamster.

Image distribution

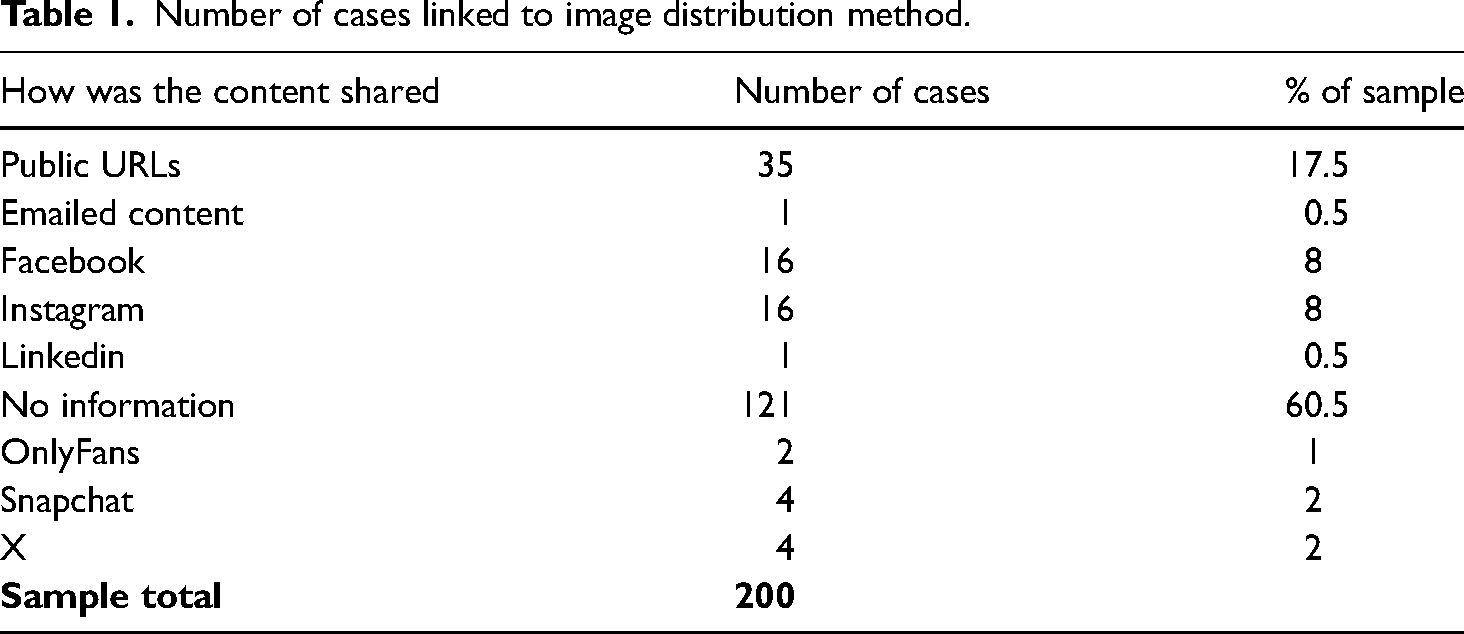

From the random sample of 200 cases, it was possible to identify the distribution method and the number of cases each distribution method was associated with (Table 1). Of the 79 cases where image location was disclosed, the most common distribution method was social media, used in 41 cases (52%), with Facebook (n = 16) and Instagram (n = 16) being used in the highest number of cases, meaning that Meta-owned companies facilitated the majority of NCII distribution on social media platforms (78%). Other social media outlets, namely X and Snapchat, only equated to four cases apiece, while two cases were shared on OnlyFans. Distribution via public URLs was linked to 35 cases (44%), making it the second most common form of image distribution.
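The proportions quoted above follow directly from the reported case counts, as the short check below shows. The counts are taken from the text; the variable names are illustrative.

```python
# Case counts as reported in the text; variable names are illustrative.
cases_with_known_location = 79
social_media_cases = 41
facebook_cases = 16
instagram_cases = 16
public_url_cases = 35

social_media_share = 100 * social_media_cases / cases_with_known_location
meta_share = 100 * (facebook_cases + instagram_cases) / social_media_cases
public_url_share = 100 * public_url_cases / cases_with_known_location

print(round(social_media_share))  # 52
print(round(meta_share))          # 78
print(round(public_url_share))    # 44
```

Note that the Meta figure uses the 41 social media cases as its denominator, whereas the other two shares use the 79 cases with a disclosed location.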

Table 1. Number of cases linked to image distribution method.

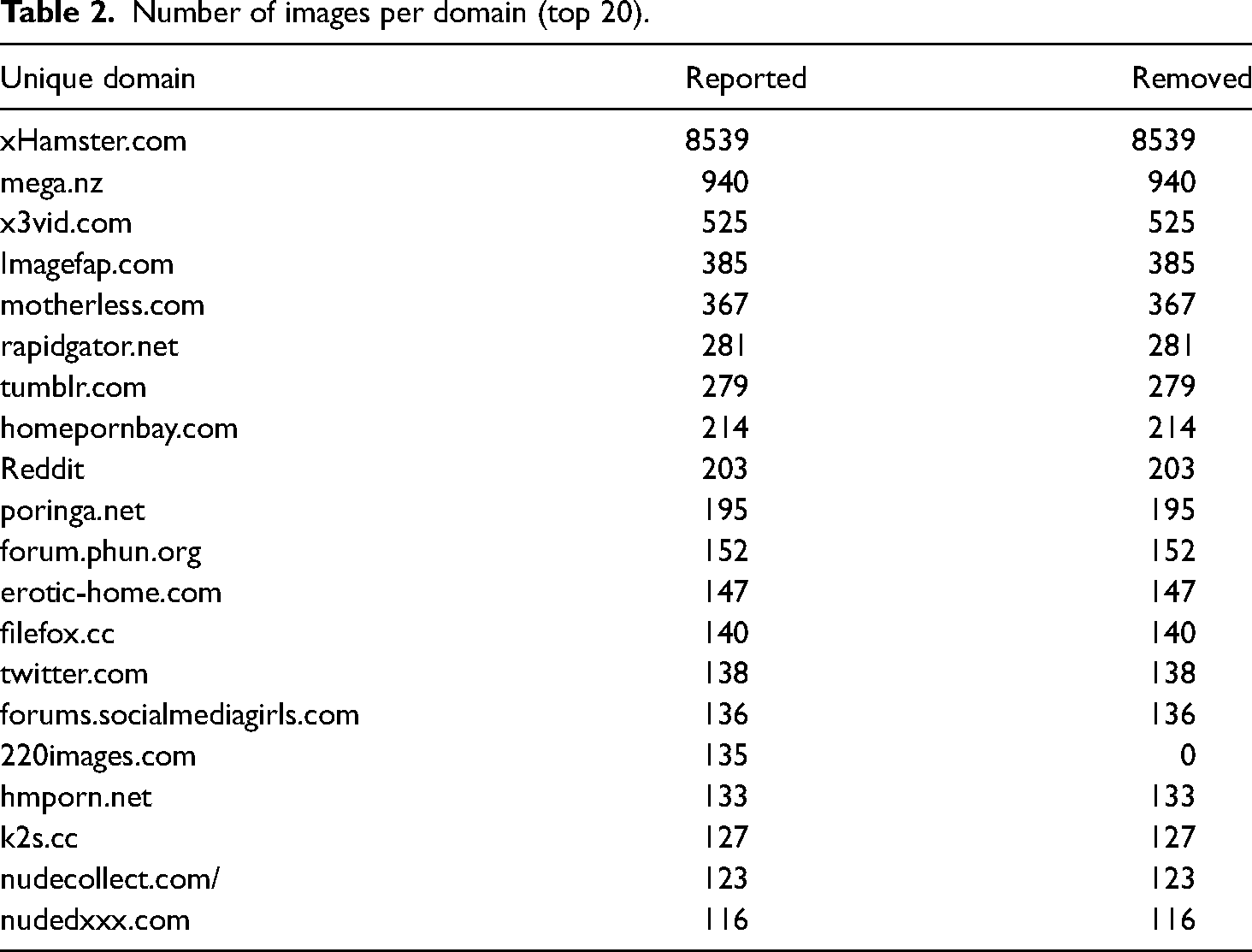

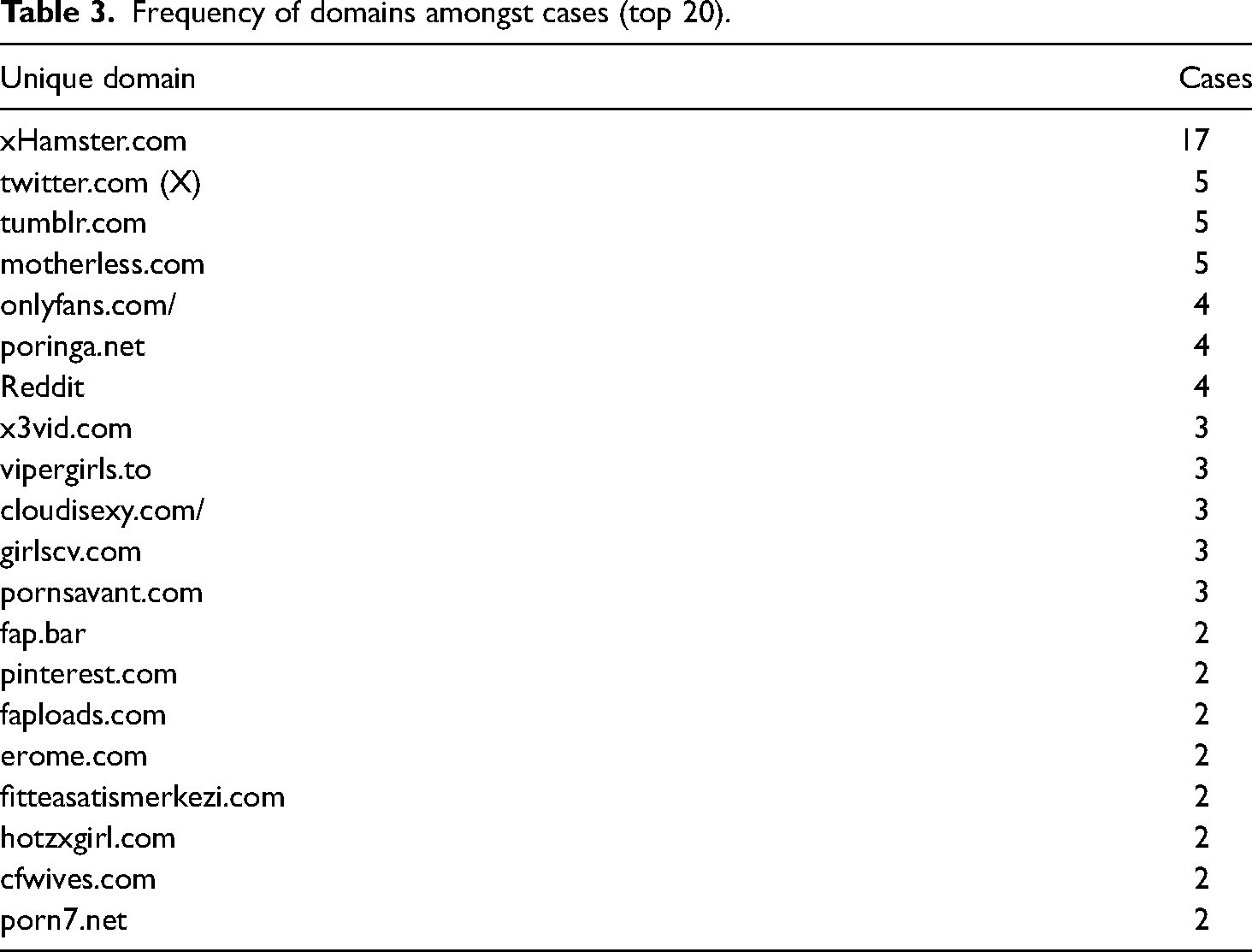

It was not possible to further explore instances where images were shared on social media and private platforms as the private nature of these spaces made them practically inaccessible, and any attempts to draw further data from these spaces without victim-survivors’ consent would have been unethical as the data would not be classed as publicly available (Elm, 2009; Eynon et al., 2009; Williams et al., 2018). However, it was possible to provide a further breakdown of the 35 cases where 325 URLs were used to distribute images. Table 2 presents the 20 websites with the most images distributed. Table 3 presents the frequency of websites used to distribute images across the cases.

Table 2. Number of images per domain (top 20).

Table 3. Frequency of domains amongst cases (top 20).

When accessing the URLs in May 2022, 50 of the sites were bouncing back with errors, which can often be temporary and can occur for a multitude of reasons. The website domain could have been removed due to a low payment, it could be transferring hosts, or the website could have been taken down. In one domain's case, it was ‘removed by Homeland Security’. A further analysis was conducted at the end of July 2022 to examine the websites and categorise them in accordance with their nature. A total of 73 (22.3%) websites were not accessible (an increase of 23 since May 2022).

From the URLs sampled, 42.5 percent (n = 139) were pornography websites of varying sophistication and around 18 percent (n = 52) were forums for sexual content and chat threads that allow users to share sexual content anonymously. These sites can house a lot of collections (large quantities of content linked by file sharing) and often contain personal details of the victim-survivors (doxing). Escort websites made up 5.23 percent (n = 17) of the sample and file-sharing sites made up 0.9 percent (n = 3). ‘Desi’ websites, platforms sharing only Asian pornographic content, constituted 2.7 percent of the sample (n = 9). Eight percent (n = 26) of the websites seemed to be openly hosting IBSA content. This included sites dedicated to the sharing of this content and forums in which there is no, or little attempt, to regulate such content allowing the platforms to be openly utilised in this way.

Across the 35 cases, xHamster (a pornographic website) was the most used domain to share images, making up around 50 percent of the total images shared (Table 2). xHamster was also a common place for image distribution across the cases, being used across 50 percent of cases (Table 3). The website with the next highest number of images shared is the file-sharing site mega.nz, where large quantities of images can be shared and downloaded by users. Sites such as xHamster allow multiple users to upload individual videos and images, producing different URLs for each piece of content, whereas file-sharing sites such as mega.nz allow individual users to upload multiple images and videos available to download from a single URL. Therefore, file-sharing platforms are often used to share large collections of images, as evidenced by the fact that the 940 images shared on mega.nz only make up one case in the dataset. This means that all 940 images belonged to one victim-survivor and were shared online as a collection within a single file.

Image removal

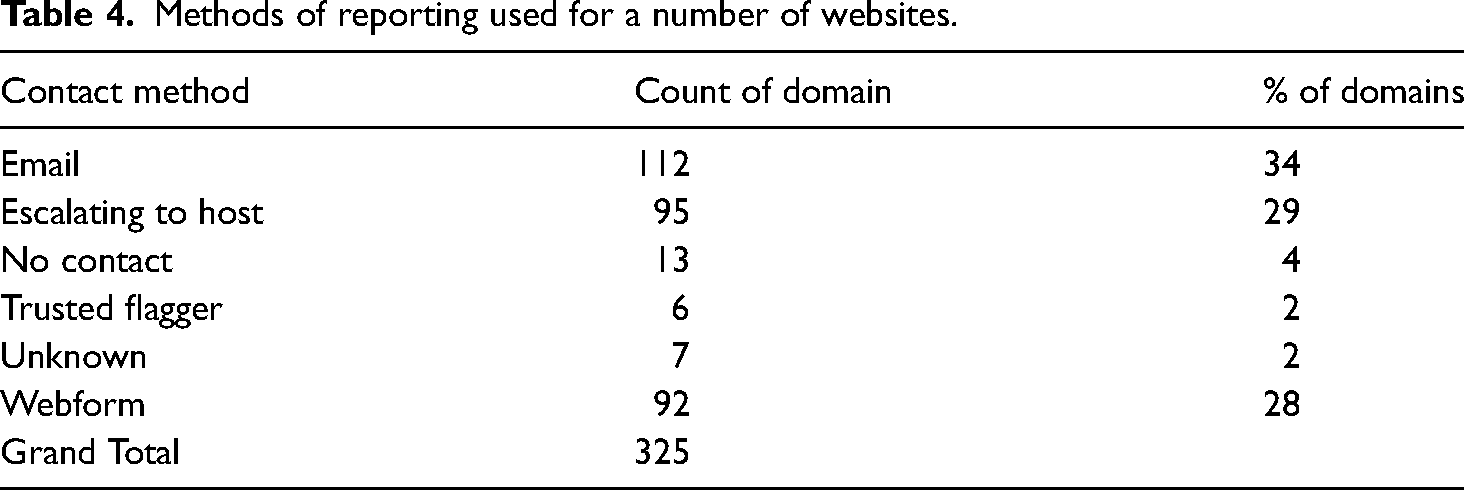

Using information from the 35 cases which included publicly available URLs we were able to identify the method of reporting taken by the helpline and the success rate of image removal. Table 4 presents the contact method used by the helpline to request image removal across the 325 websites.

Table 4. Methods of reporting used for a number of websites.

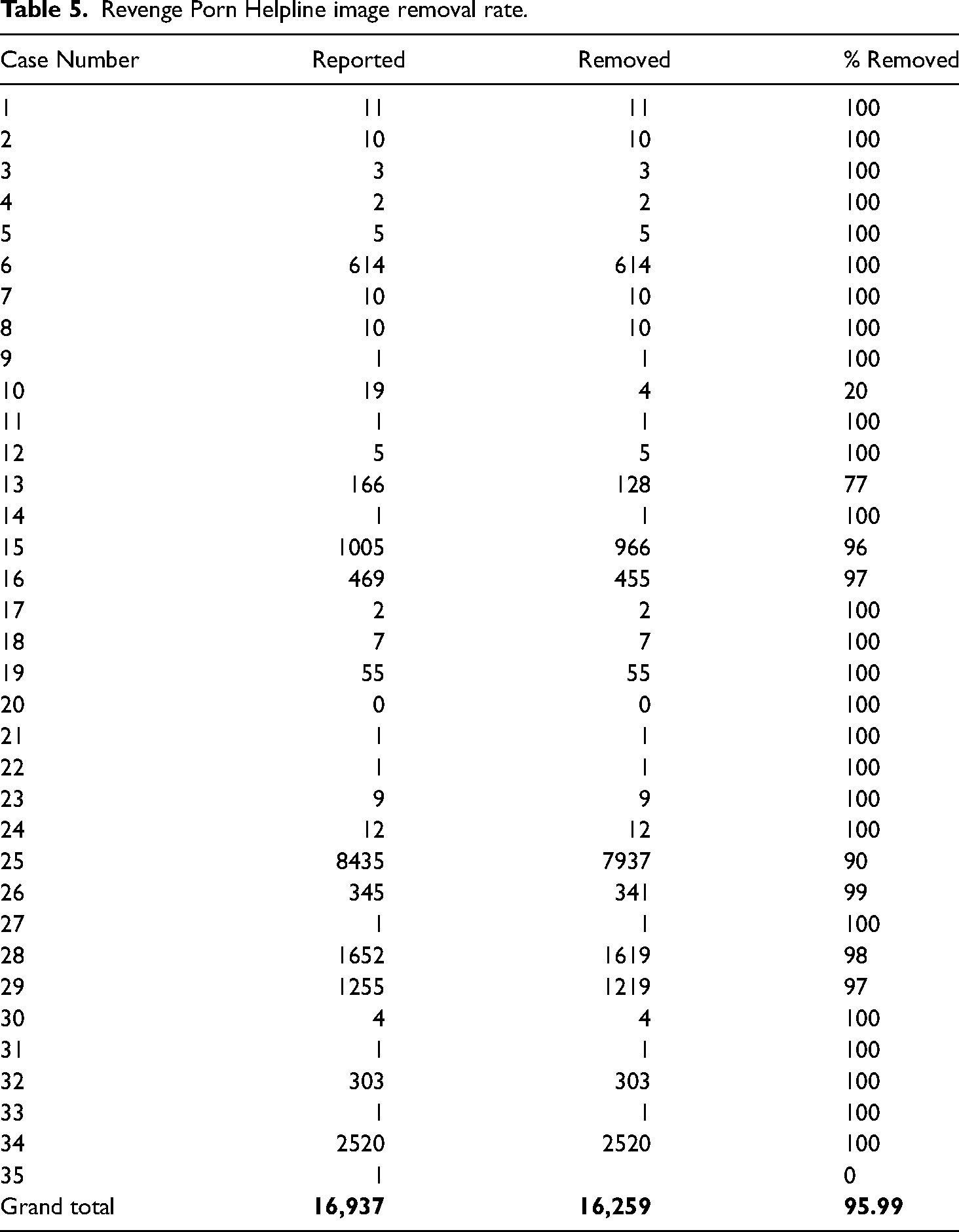

The most common method of reporting was using email addresses belonging to website owners (34% of reports). Although email addresses are often publicly available somewhere on the website, they are often difficult to find due to the layout; websites are often set up to present the user with never-ending pages, making this information inaccessible. Websites might also create continuous loops which deter users from reporting content. For example, when attempting to gain access to a contact page, the page may take the user back to the main homepage or may not load at all. Difficulty often occurs with web forms too (28% of reports); they can be incredibly difficult to use, and it is unclear what information needs to be included to get a positive response. If websites do not have any contact information or reporting routes, or do not cooperate with reports from the helpline, then the helpline will report the images to the hosting provider (29% of reports). A hosting provider is an internet service that provides services to organisations and individuals to serve content. Despite these difficulties, efforts made by the RPH resulted in an impressive 96 percent removal rate (see Table 5).

Table 5. Revenge Porn Helpline image removal rate.

Discussion

The data confirm the results of previous studies that social media platforms are commonly used for NCII distribution and may be the most common method of distribution (End Violence Against Women, 2013; Matthews, 2020; Short et al., 2017). The data also provide a further understanding of the nature of public URLs being used for NCII distribution. While URLs were the second most common method, they were still used across 44 percent of cases. It is therefore important that non-social media platforms are understood to be significant facilitators of NCII distribution. However, further examination of the public URLs indicates that cautious optimism can be found in the fact that NCII distribution was the primary purpose or key feature on only 8 percent of websites. This means that most images are being distributed to platforms which do not condone NCII distribution, suggesting that removal can and should be a quick and easy process.

The importance of swift image removal is demonstrated by the fact that although we were only able to further examine URLs for 35 of the cases, these 35 cases alone generated 16,937 images shared across 325 platforms. That is an average of 484 images per victim-survivor. This is the result of images being shared numerous times on platforms, and across different platforms, as images can spread quickly online. This can happen through people manually resharing content and through the design of the platforms themselves. For example, crawling platforms are designed to ‘crawl’ or pull content from other parts of the internet. This technique is often used by smaller pornography platforms to pull content from larger, more established platforms. This means content posted on one site will also appear on other sites which are crawling its content. Given that this research and others (Henry and Flynn, 2019) identify pornography sites as a key concern, it is likely that such software is contributing to NCII distribution.
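
The average quoted above follows directly from the reported totals. As a minimal sketch, the Python below reproduces it and also derives a mean per platform, a figure which is not stated in the study and is included purely for illustration; the function and variable names are hypothetical.

```python
# Totals reported in the text; no new data are introduced here.
TOTAL_IMAGES = 16_937
CASES = 35
PLATFORMS = 325


def mean_per_unit(total: int, units: int) -> float:
    """Return the arithmetic mean of `total` spread across `units`."""
    if units <= 0:
        raise ValueError("units must be positive")
    return total / units


images_per_case = mean_per_unit(TOTAL_IMAGES, CASES)
# Illustrative only: the study does not report a per-platform mean.
images_per_platform = mean_per_unit(TOTAL_IMAGES, PLATFORMS)

print(f"{images_per_case:.1f} images per case")          # 483.9, i.e. about 484
print(f"{images_per_platform:.1f} images per platform")  # 52.1
```

A mean of this kind can mask considerable skew: as noted in the results, a single case accounted for all 940 images found on mega.nz, so individual victim-survivors may be affected by far fewer or far more images than the average suggests.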

The need for swift removal means that accessible reporting tools are vital. The data gathered on image removal by the helpline indicate that understanding the websites and how to navigate their reporting processes is important for successful removal. For example, for pornography websites, such as xHamster, an email with the URL where images can be found is enough, especially if coming from a trusted flagger. However, on platforms such as mega.nz (where file dumping occurs) removal requires a more complex response as images may be buried in files containing hundreds of other unrelated images.

While such reporting might seem relatively easy in most instances, Refuge (2022) identified a fundamental failure on the part of online platforms to provide user-friendly reporting mechanisms, with many victim-survivors finding reporting difficult even on mainstream social media platforms. Hence, reporting and removal become even more difficult on fringe sites with little regulation. Some reporting mechanisms are so difficult or unresponsive that the RPH were required to escalate the report to the hosting provider. This kind of knowledge is not something the everyday user is likely to hold, and victim-survivors who are distressed at having found their images online are unlikely to be able to navigate this process easily. Therefore, in addition to user-friendly reporting tools, collaborations with trusted flagger organisations are vital given that such navigational knowledge is likely to impact the success and speed of image removal.

In addition to accessible reporting tools and collaboration, regulation is becoming important as platform owners increasingly need to take greater responsibility in moderating their platforms and responding to victim-survivors. Given that NCII distribution has been criminalised in England and Wales since 2015, and in many other countries across the globe including Australia and many US states (Henry et al., 2019), the process and speed of image removal should be much quicker than it currently is. It is clear from the literature, and from helpline practitioners, that service providers are not fully supporting legislative moves in addressing forms of online harm (BBC Newsbeat, 2015; Goldberg, 2019; Paul, 2022; Woods and Perrin, 2019). As a result, the OSA places further statutory regulation on service providers in a bid to reduce harmful practices online (see Woods and Perrin, 2019).

The Act imposes a duty of care on companies which provide user-generated services and search services within the UK. Obligations for providers falling within the remit of the OSA include conducting illegal content risk assessments, taking steps to mitigate and manage harm, and minimising the dissemination and overall presence of illegal content on their platforms. They must also have reporting and complaint procedures which are transparent and easy to access and use (Online Safety Act, 2023). The Act creates different categories of regulated services (Category 1, 2A and 2B), which determine the duties that online services will need to undertake (Online Safety Act, 2023). Category 1 services will include the largest user-generated services and will be subject to higher levels of regulation compared to Category 2 providers (Online Safety Act, 2023). Given that the size of the provider will be determined by the number of users, it is anticipated that the largest social media companies will fall into Category 1 (Demos, 2021), with the potential for larger pornography sites to fall within its remit. For example, Facebook is estimated to have around 3 billion monthly active users (Statista, 2023) and comparatively, Pornhub is estimated to have 2.5 billion monthly active users (AccessNow, 2023), with the UK being the second highest source of traffic (Pornhub Insights, 2023).

The OSA is undoubtedly a step in the right direction to ensure that platforms are held accountable for the harm that their services can facilitate; however, the data presented here suggest that the scope of the Act may need to be broadened if it is to be more effective in addressing NCII distribution. For example, the number of users being a key factor in determining whether websites sit within the specialised categories (Online Safety Act, 2023) means that the highest level of regulation is mostly targeted at the biggest social media platforms, search engines, and pornography websites (Gov.uk, 2023). While this will be effective for a good proportion of victim-survivors (given the use of sites such as xHamster to distribute material), these kinds of sites are more likely to be compliant and responsive to takedown requests given that many of these companies are already using the RPH as a trusted flagger and are making efforts to adopt technology aimed at prevention (NCII.org, 2022). Thus, in terms of prevention and image removal, experiences from the RPH suggest that it is the smaller, less sophisticated platforms, such as individually owned message boards and websites, that are likely to side-step prevention and removal efforts (Southwest Grid for Learning, n.d.). This is confirmed by the dataset as very few of the URLs identified within this study are likely to fall within the remit of the Act, even though they are responsible for hosting NCII in 44 percent of cases. These same platforms were also the ones most likely to ignore image removal requests. Demos (2021) has also raised concerns that a focus on larger platforms would likely cause users to migrate to smaller fringe platforms which will continue to be more hospitable for abusive content. Therefore, to address harmful content effectively there is a need to target larger and smaller service providers simultaneously.

Other concerns over the need to ensure the correct levels of regulation have also been raised by Mishcon de Reya (a law firm well known for working with and supporting victim-survivors of IBSA) and SPITE (a pro bono service offering legal advice to victim-survivors) (LawWorks, 2022). While Ofcom has yet to determine which websites will fall within Category 1, Mishcon de Reya and SPITE have recommended a rebuttable presumption that websites which host pornographic content should be included within Category 1 (Mishcon de Reya, 2022). Data from this study support such recommendations given that pornography platforms were linked to over 50 percent of public URL distributions, indicating that pornography platforms play a leading role in facilitation.

Research has also identified that differentiating between genuine pornography and NCII content on pornography websites is particularly difficult given the sexualised nature of both types of material (Henry and Flynn, 2019; Huber, 2022b). This means that regulation of these platforms is more important than ever, not just because they are a key mechanism for distribution, but because ensuring NCII is identified is likely to be a very complex process. Given the difficulties, potential costs and resources this may require, it would be easy for companies to commit to a minimal level of regulation that would prevent repercussions from Ofcom. Consideration should therefore be given to whether some platforms, including pornography platforms, should be treated as high-risk due to their nature and become subject to regulation in accordance with such risk, as opposed to being categorised as high-risk due to user numbers.

Although the Act includes services hosted within and outside of the UK, services only fall within scope if they have a significant number of UK users, or the UK is a target market for the service (Online Safety Act, 2023). This presents a problem in instances where images may be uploaded to platforms with fewer UK users than is deemed ‘significant’. Given that the internet is a borderless space (Grabosky, 2016), the locations in which users are predominantly based are not likely to be the fundamental concern for victim-survivors.

Data showed that 3 percent of the websites were ‘desi’ sites. ‘Desi’ is a term used to identify individuals of South Asian descent, with roots in countries including India, Pakistan, Bangladesh, Sri Lanka, the Maldives, Nepal, and Bhutan. In the pornography context, the term ‘desi porn’ has come to be used colloquially to identify content which explicitly focuses on performers who can be identified as Indian or Pakistani (Chatterjee, 2017). Nine websites within our data were seen to take this approach. Experience from helpline practitioners tells us that it is often more difficult to have content removed from these types of sites because they are not based in the UK. This may also mean that most platform users are not based within the UK. This is somewhat confirmed by the fact that these sites tended to be written in South Asian languages. Yet, research tells us that victim-survivors who belong to these communities are at risk of exacerbated impact, including physical violence, honour violence, and ostracisation from communities (Law Commission, 2022). In these contexts, perpetrators often know these will be the consequences for victims and this forms part of the motivation for NCII distribution (Huber, 2020; Law Commission, 2022). Therefore, even if the victim-survivor is based in the UK, these platforms are likely to fall outside of the OSA remit. This means that platforms where some of the most vulnerable victim-survivors are targeted remain unregulated.

Admittedly, addressing harms on these smaller platforms would not be a simple task. The data showed that the landscape of NCII distribution is constantly changing, with websites becoming inaccessible. Furthermore, when sites were later revisited during data analysis, some of those that had become inaccessible were live again. This presents a problem which is technologically unique; if something exists one moment, is removed the next, and then re-surfaces again, it will be extremely difficult to monitor and enforce regulations. This also makes it very difficult for victim-survivors to keep track of whether their images have been removed from platforms and whether they are likely to re-surface when the platform does. Responding to this shifting landscape is going to require more thought and innovation, although there is potential for the hashing of images to play a role (see NCII.org; Solon, 2019) if the technology were to be utilised across all platforms. Nonetheless, the shifting landscape makes it incredibly difficult to research, monitor, and track the nature of online abuse, constantly limiting and outdating our knowledge of the landscape.
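
To illustrate the principle behind image hashing, the following is a minimal Python sketch under stated assumptions rather than a description of how NCII.org or any platform actually operates. It swaps in SHA-256, a cryptographic hash that only matches byte-identical files, whereas matching systems used in practice generally rely on perceptual hashes designed to tolerate resizing and re-encoding. The `HashBlocklist` class and its method names are hypothetical.

```python
import hashlib


def fingerprint(image_bytes: bytes) -> str:
    """Return a SHA-256 hex digest of the file contents.

    Assumption: an exact-match hash stands in for the perceptual
    hashes that real image-matching systems would use.
    """
    return hashlib.sha256(image_bytes).hexdigest()


class HashBlocklist:
    """Hypothetical shared list of fingerprints of reported images."""

    def __init__(self) -> None:
        self._hashes: set[str] = set()

    def register(self, image_bytes: bytes) -> str:
        """Store only the fingerprint; the image itself is not kept."""
        digest = fingerprint(image_bytes)
        self._hashes.add(digest)
        return digest

    def is_blocked(self, image_bytes: bytes) -> bool:
        """Check an upload against the stored fingerprints."""
        return fingerprint(image_bytes) in self._hashes


# Placeholder bytes standing in for an image file.
reported = b"placeholder bytes standing in for an image file"
blocklist = HashBlocklist()
blocklist.register(reported)

print(blocklist.is_blocked(reported))            # True: identical re-upload
print(blocklist.is_blocked(reported + b"\x00"))  # False: any change defeats exact matching
```

Because the fingerprint is stored independently of any website, a match can still be made if a platform goes offline and later re-surfaces, or if the same file is uploaded elsewhere. The second check shows the limitation of exact matching, and why tolerance to altered copies and adoption across platforms both matter.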

Therefore, while the OSA should be commended for its recognition and attempts to combat online harms, more needs to be done to ensure that the platforms being used to host NCII are doing everything possible to combat the issue. At present, the focus on services with the highest number of users means that many platforms where NCII distribution occurs may either not be regulated enough or may not fall within the scope of the Act altogether. This is fundamentally problematic given that platforms falling into Category 1 are likely to belong to large, and legitimate, companies who are more likely to adhere to OSA measures anyway. Given that, thus far, UK victim-survivors have been fundamentally let down by both policing and legislative responses (see Huber, 2023b), IBSA victim-survivors will need much more legislative and policy change than the OSA offers in order to ensure effective preventative and responsive measures online.

Limitations

While the study contributes to the literature and regulatory debate in the field, there are limitations. Firstly, the data held at the helpline are only representative of the victim-survivors who report to this particular service. Although we have seen increasing recognition of NCII distribution over the past few years, such victimisation remains under-reported (Yar and Drew, 2019). Moreover, many victim-survivors do not know where their images are shared, or the extent of such sharing, and so the data may not capture every relevant platform across the random sample. It is also important to note that the random sample did not contain cases which fell outside of the legal definition specified within section 33 of the Criminal Justice and Courts Act 2015. This is due to the RPH only being able to work within the parameters of the law at the time of data collection. In relation to the public URLs that could be further examined, while this is the highest number of individual URLs examined within this context thus far, they span only 35 cases, making the sample size small in this respect. These factors mean that the results cannot be generalised. However, it is important to note that practitioner experience indicates that the platforms that occurred most frequently in this dataset are the same platforms which practitioners see reported to the helpline prolifically and are generally considered to be top-ranking sites for NCII distribution.

Conclusion

Using data collected in collaboration with the RPH, and informed by practitioner knowledge, this article provides insight into the nature of non-consensual image distribution, identifying where images are being shared and avenues for image removal. In doing so, it contributes to debates surrounding the need for appropriate regulation aimed at both prevention and response strategies to victimisation. The data confirm that social media plays a key role in NCII distribution and provide further insight into non-social media distribution, namely pornography platforms, image/message boards and file-sharing sites. Data on image removal indicate that knowledge of how to navigate different types of platforms is important for image removal success, making organisations such as the RPH vital in supporting victim-survivors. Findings also highlight the fundamental need to reduce the complexity of reporting processes in order to increase the speed and ease of image removal.

Concerns have also been raised about how to respond to platforms which are still not likely to be obligated to address NCII distribution under the OSA. While data confirm that large social media and pornography companies seem to be facilitating a large proportion of cases, there remain significant gaps in current approaches to regulation which will likely leave many victim-survivors no better equipped. If images are shared on smaller platforms or platforms with a limited UK user base, the OSA will not apply, and service providers will be able to side-step regulatory measures. This is particularly problematic given that data suggest that such sites are likely to be contributing to a large proportion of NCII distribution, and it is anticipated that this will likely increase due to displacement from larger platforms. Therefore, more consideration needs to be given to the high-risk nature of particular platforms and how this should impact upon regulatory efforts. Overall, the research highlights the need to ensure increased accessibility when reporting online abuse, increased collaboration and support for third-sector services, and regulation which appropriately targets and encompasses the breadth of NCII distribution.

Footnotes

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.

Correction (October 2024):

Article updated to correct the in-text reference citation from ‘Author, 2020’ to ‘Huber, 2020’ since its original publication.