Abstract

This essay examines how emerging relations between digital technology, people, and organizations create trajectories for learning but also may reduce organizational intelligence. We study these trajectories from the perspective of three organizational learning models by looking at each one’s premises related to experience, continuity, and time. The community of practice learning model emphasizes shared experience through social practices. The behavioral learning model builds on the organization’s current knowledge base and involves trade-offs via adaptations that can be slow or fast, suggesting continuity in organizational learning. The information-seeking and helping model produces knowledge that is vetted by the community or organization in terms of its currency. Juxtaposing these premises with recent literature on the evolving relations between digital technology, people, and organizations, we assert that learning becomes less constituted by shared experience, by continuity in knowledge accumulation, and by its currency in terms of questions and answers that share a temporal frame. In these evolving trajectories, learning is less intertwined with relations that are associated with organizations, such as people, routines, and processes. We seek to provoke further research by asking: Are new theories of organizational learning needed to understand the new relations? And what effect do the trajectories for learning have on organizational intelligence? Is intelligent technology stripping organizations of their intelligence?

Introduction

Digital technology, with its expansive, intelligent capabilities, has penetrated every facet of organizations and society (Bailey et al., 2022; Faraj and Leonardi, 2022; Hinds and Von Krogh, 2024). The implications for individual and team learning have been widely studied. Some researchers anticipate that intelligent machines can simply “run away” with their learning; that is, they can “outperform some human experts in law, finance and medicine” (Lyytinen et al., 2021: 439). Others conclude that humans ought to remain “in the loop” to provide organizational experience (Anthony et al., 2023; Baird and Maruping, 2021; Grønsund and Aanestad, 2020; Raisch and Fomina, 2025; Sturm et al., 2021; Weber et al., 2024). The consensus appears to be that “humans and machines together” beat “humans alone.” Still, the question about the extent to which such evolving relations represent organizational intelligence is unresolved. At least it remains open from the vantage point of organizational learning, that is, how organizations create, transfer, and retain knowledge through their interpretative processes and replicative routines (Argote et al., 2003). Such learning processes then undergird and affect organizational intelligence: As suggested by Huber (1991, 2016), intelligence is about the existence, breadth, and depth of organizational learning. When intelligent technology automates organizational functions (e.g. human resource planning and allocation) and the technology then renders these functions unobservable by the human members of the organization (Hinds and Von Krogh, 2024), what happens to organizational learning and organizational intelligence?

We focus on organizational learning in our analysis of organizational intelligence because the two are “mutually facilitative” (Huber, 1991, 2016). Greater intelligence facilitates learning, and more learning facilitates intelligence, including contextual understanding. Specifically, organizational intelligence assumes that organizational learning exists and that it has breadth and depth (Huber, 1990, 1991). Learning happens when it is shared and authorized, even if it is vicarious, unintentional, or accidental (Huber, 1991, 2016). The breadth of learning captures the diversity of interpretations of a particular learning event or process of learning across the organization, and the depth of learning addresses the understanding that organizational units have of each other’s interpretations of that learning (Huber, 1991; Huber and Lewis, 2011). Without such organizational learning, organizations lose their capacity for adaptive action (Huber, 1991; Levitt and March, 1988), and hence may not survive (March, 1995).

We suggest that the premises of organizational learning are under pressure. Organizational learning commonly is understood to be based on shared experience in an organization and to be dependent on continuous learning from experience over time (Argote and Miron-Spektor, 2011; Argote et al., 2021; Huber, 1990, 1991, 2016; Levitt and March, 1988). The shared experience produces beliefs about knowledge in the world (Vygotsky, 1978), and it also reveals the meta learning of “how we know what we know” (Knorr Cetina, 1999: 1). In this way—that is, by learning continuously from experience—organizational learning seeks to “mindfully engag(e) in opportunities while simultaneously keeping things on track” (Hernes and Irgens, 2012: 254). March (1991) similarly emphasized continuity as he sought to balance exploring new knowledge and exploiting existing knowledge. Weick (1996: 738) stated “constancy, continuity, and focus are necessary for adaptation” in organizational learning. Beyond experience and continuity, learning involves interaction and relationships with others who share and can understand each other’s thought worlds and the context. This context includes shared currency in terms of the temporal relevance of the questions and answers (Bluedorn and Waller, 2006; Constant et al., 1996) that leads to joint accomplishment (Grodal et al., 2015).

If organizational learning is indeed premised on the factors of experience, continuity, and currency, how are these premises affected by the expanding capabilities of intelligent technology? What are the implications for organizational intelligence and why? We begin by introducing three theories of organizational learning. We derive the salient premise from each of the theories and use them as the analytic lens to understand whether the trajectories emerging from the relations between technology, people, and organizations imply organizational intelligence or lack thereof. We do not revise the definitions of organizational learning and organizational intelligence; rather, we call for research on questions that may potentially revise the premises and the definitions. We conclude with a proposed research agenda.

Organizational learning theories and organizational intelligence

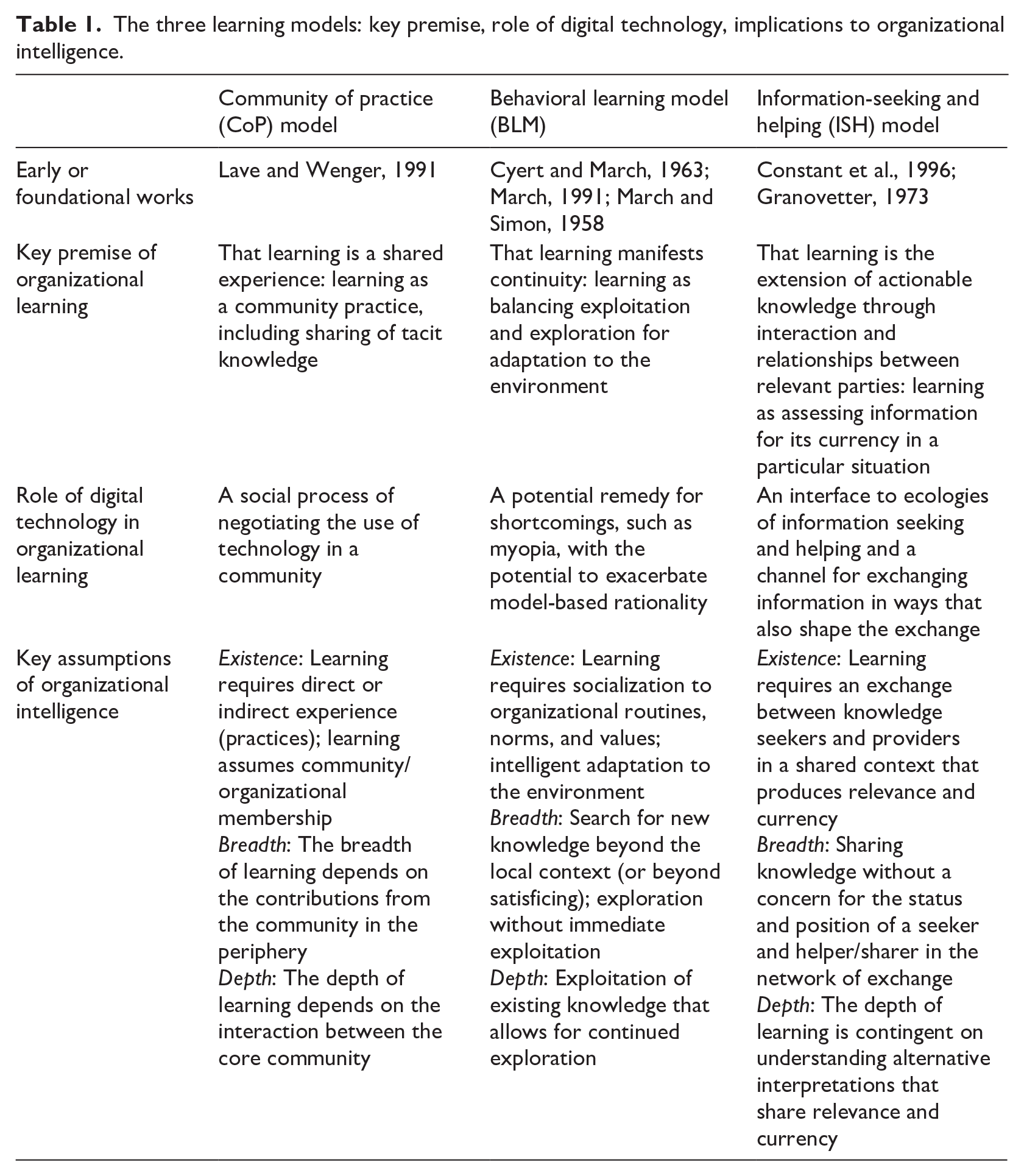

We consider three organizational learning theories: the community of practice (CoP) model (Lave and Wenger, 1991), the behavioral learning model (BLM) (March, 1991), and the information-seeking and helping (ISH) model (Constant et al., 1996). We focus on these learning theories because they have influenced the past few decades of research that establishes what is theoretically known about organizational learning. We chose these learning theories not only because they are dominant in their influence but also because they offered potential for analyzing the impact of intelligent technology and its trajectories of learning. We discuss the main premises of each learning model. The three theories share the main premises but nuance them in different ways. These premises are important because they support organizational intelligence. We review each learning theory in turn.

CoP model: learning premised on shared experience in practice

The CoP learning model builds on Lave and Wenger’s (1991) view of learning as socially embedded. To illustrate, Orr’s (1996) study of Xerox photocopier technicians was hailed as a “model for understanding the postindustrial economy” (Bechky, 2006: 1759). “Talking about Machines” (Orr, 1996) represented technicians’ work as embodied, narrated, and situated and as involving tacit knowledge and enmeshed with the practice—doing or engaging in activities (Nicolini and Monteiro, 2017)—of maintaining the copiers, complicated by unhelpful user behavior.

The CoP model explicitly shifts “the analytic focus from the individual . . . to learning as participation in the social world” (Lave and Wenger, 1991). Learning takes place in communities through direct experience; as members interact or attentively observe the experiences of others (Myers, 2018), they learn the “tricks of the trade” (Nicolini et al., 2022: 696). CoPs are defined as “groups of people informally bound together by shared expertise and passion for a joint enterprise, who interact regularly to learn about or improve their practice” (Nicolini et al., 2022: 680). CoPs comprise informal nodes for exchanging and interpreting information, retaining knowledge in “living” ways, stewarding competences, and becoming homes for their members’ identities (Wenger, 1998). In the CoP model, learning is culturally and historically bound (Brown et al., 1995); the model describes what learning is rather than what it ought to be (Farnsworth et al., 2016). Learning in CoPs assumes social presence, being with other members of the community. The core of the community has more experience than the periphery, and the learning is transferred as the core and periphery interact (Lave and Wenger, 1991; Thompson, 2005). Learning takes place in the present but is affected by the ongoing sensemaking of the past and the expectations for the future (Hernes and Irgens, 2012).

The use of technology, such as intranets, expert systems, and knowledge management systems, is negotiated as part of the community’s social process. Digital technology facilitates and augments shared experiences in online communities (Wasko and Faraj, 2005) and in the networks of practice (Brown and Duguid, 2001) by allowing individuals to engage and interact outside a physically constrained space (Barrett et al., 2004). Virtual spaces provide fluidity “where boundaries, norms, participants, artifacts, interactions, and foci continually change” (Faraj et al., 2011: 1226). Online members who are only peripherally related to the topics being discussed, as well as those who are core members, make valued contributions (Safadi et al., 2021). Member behavior and social interaction also can be temporally flexible, with individual experiences varying in time or with time lags (Faraj et al., 2016). Yet, even if experiences codify knowledge in digital artifacts that are perpetual in time, the experiences in social practices are still what render mutual learning. This mutual learning shares history and thought worlds for meaning making (Faraj et al., 2016; Nicolini and Monteiro, 2017).

Organizational intelligence

The existence of learning in this model depends on a shared practice among organizational members, like the technicians with Xerox machines in the study by Orr (1996). The breadth of learning is related to the notion of legitimate peripheral participation (Lave and Wenger, 1991)—whether and how newcomers become part of the practice. The depth of learning values the experience-based knowledge in the community beyond formal training: “[The technicians] recognize the superficiality of the[ir formal] training because they are conscious of the full complexity of the technology and what it takes to keep it running” (Brown and Duguid, 1991: 43). Organizational intelligence thus is rooted in the community members’ sharing their experience.

BLM: learning premised on continuity and speed

The BLM has roots in the work of March and colleagues (Cyert and March, 1963; March and Simon, 1958) who were pioneers in modeling organizational behavior as a new field of study. The BLM involves observing and evaluating the changing environmental or organizational conditions and taking action to respond to the complexity in the world (Levitt and March, 1988; March, 1991). The seminal BLM work by March (1991) draws attention to the complexities of adaptation, which present uncertain returns to organizational learning. The model’s ontological underpinning is the “problem of balancing exploration and exploitation, [which is] exhibited in distinctions made between refinement of an existing technology and invention of a new one (Winter, 1971; Levinthal and March, 1981)” (March, 1991: 72). This balancing is modeled in a social context of organizational learning where newcomers learn the existing knowledge base of the organization. The focus is on how the organizational “code”—the norms, values and routines but also the existing knowledge—learns from the newcomers but also adapts to environmental change (Miller et al., 2006; Wilden et al., 2018).

Organizational knowledge is based on past learning, minus forgetting and knowledge depreciation (Argote, 2013). Updates to organizational knowledge follow the evolutionary logic of variation, selection, and retention, and general guidelines influence what is selected and retained for future use (Levinthal and Marino, 2015). In addition to the effects of adaptation, the BLM attends to how learning curves (Argote, 2013), myopia (Levinthal and March, 1993), and garbage can models (Cohen et al., 1972), among others, link to the existing knowledge base and how they influence social behavior and constraints in the organization, including aspiration levels (Greve, 1998), attention (Ocasio, 1997), and search (Katila and Ahuja, 2002).
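The idea of knowledge as past learning minus forgetting and depreciation can be written compactly. The expression below is a minimal sketch in the spirit of the learning-curve literature; the symbols are illustrative assumptions and do not come from this essay: $K_t$ is the stock of organizational knowledge at the end of period $t$, $q_t$ is the new experience gained during period $t$, and $\lambda$ is the share of the prior stock that is retained.

```latex
K_t = \lambda K_{t-1} + q_t, \qquad 0 \le \lambda \le 1
```

Under these assumptions, $\lambda = 1$ describes an organization that forgets nothing, whereas $\lambda < 1$ means that older experience counts for progressively less in what the organization currently knows.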

The pace of learning new knowledge is important. March (1991) emphasized the speed at which socialization to organizational norms and values takes place, suggesting that slowness allows the organization to learn (more) from its newcomers. In contrast, fast learning focuses on rapid absorption and diffusion of knowledge that reduces variation. A rapid reduction in variation can come from quick resolutions and from a narrower base of sources (March, 1991). Fast and narrow learning affects what is attended to, examined, and evaluated, as well as the level of intensity achieved (Li et al., 2013; Ocasio et al., 2020). The likelihood of shared goals, beliefs, or knowledge declines. Exploratory action may fail both to pursue long-term targets and to accumulate deep expertise for addressing important problems (Ferraro et al., 2015; Wang, 2021).
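The role of pace can be explored with a small simulation. What follows is a simplified sketch loosely patterned on the mutual-learning setup described by March (1991), not a replication of it: the function names, default parameter values, and the net-vote rule for updating the code are assumptions made for illustration.

```python
import random


def knowledge(beliefs, reality):
    """Share of dimensions on which beliefs match reality (0 means no belief)."""
    return sum(b == r for b, r in zip(beliefs, reality)) / len(reality)


def simulate(p1, p2, m=30, n=50, periods=80, seed=0):
    """Return the organizational code's final knowledge share, in [0, 1].

    p1: socialization rate (how quickly individuals adopt the code's beliefs).
    p2: rate at which the code learns from better-informed individuals.
    All defaults are hypothetical values chosen for illustration.
    """
    rng = random.Random(seed)
    reality = [rng.choice((-1, 1)) for _ in range(m)]
    people = [[rng.choice((-1, 0, 1)) for _ in range(m)] for _ in range(n)]
    code = [0] * m  # the code starts with no beliefs

    for _ in range(periods):
        code_score = knowledge(code, reality)
        # Individuals who currently know more than the code does.
        superior = [p for p in people if knowledge(p, reality) > code_score]

        # The code learns from the net vote of the superior group
        # (a simplification; individuals with belief 0 abstain).
        new_code = list(code)
        for d in range(m):
            votes = sum(p[d] for p in superior)
            if votes == 0:
                continue
            majority = 1 if votes > 0 else -1
            if majority != code[d]:
                # A larger net majority makes adoption more likely.
                if rng.random() < 1 - (1 - p2) ** abs(votes):
                    new_code[d] = majority

        # Individuals are socialized toward the code as it stood this period.
        for person in people:
            for d in range(m):
                if code[d] != 0 and person[d] != code[d] and rng.random() < p1:
                    person[d] = code[d]
        code = new_code

    return knowledge(code, reality)
```

Calling `simulate(p1=0.1, p2=0.5)` and `simulate(p1=0.9, p2=0.5)` over several seeds gives a rough way to compare slow and fast socialization. Outcomes of this sketch vary with the seed and with the assumed parameters, so it illustrates the mechanism rather than providing evidence for it.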

Argote and Miron-Spektor (2011) point to technology as forming (a part of) the context for learning and as facilitating knowledge creation, transfer, and retention. Digital technology, such as online knowledge repositories and enterprise social media, can facilitate socialization to the organizational values and norms (Jarvenpaa and Tuunainen, 2013), but the engagement with social media also can shape and even alter the norms and values and can distract members from the pursuit of organizational goals (Leidner et al., 2018). Digital technology can favor narrow learning (Kane and Alavi, 2007), highly specialized learning curves (Schilling and Steensma, 2001), and autonomous problem-solving (Benner and Tushman, 2015) without direct managerial supervision (Massa and O’Mahony, 2021; O’Mahony and Ferraro, 2007). In his seminal article, March (1991) warned of the possibility that digital technology deprives organizations of their ability to benefit from sharing their past experiences, and hence, they “suffer the costs of experimentation without gaining many of its benefits” (March, 1991: 76).

Organizational intelligence

The existence of learning in the BLM depends on slowing down the pace of learning enough to benefit from exploration. The breadth of learning depends on the amount of exploration the organization’s members can accomplish, and the depth of learning is related to the exploitation of existing, accumulated knowledge. Well aware of the shortcomings of organizational learning and hence intelligence, March (1995) was concerned about information technology (IT) that potentially could make organizations disposable, that is, about its capacity to adapt to any environmental change so fast that no exploration would remain, thus endangering organizations’ survival over the longer term. Further, March (2006) warned about overly rational behavior that would suppress imagination and “foolishness.” “Technologies of model-based rationality” have largely become “instruments of intelligence” that have improved performance but generally fail in complex situations (March, 2006: 208). Technology is seen as productive in relatively simple decision-making environments but potentially harmful in more complex situations, in part because of difficulties in parsing causalities, identifying criticalities, and understanding uncertainties in complex environments. The harm also may come from a lack of generative variation that goes beyond local improvements.

To Huber (2016), the kind of organizational learning required by rapid environmental change was a conundrum. An inflow of newcomers to an organization would likely lead to a change of culture in the organization as newcomers might not be socialized to existing values and norms, but whether this change would increase or decrease the organization’s intelligence was not clear. The answer would depend on the newcomers’ capacity to pursue evolving organizational goals while adapting to the environment (Huber, 2016).

ISH model: learning premised on its currency

The ISH learning model is based on interdisciplinary research across communication, social psychology, organizational studies, and information systems fields (Constant et al., 1996). Building on the strong–weak tie relationships (Granovetter, 1973), the ISH model includes the premise that the exchange of information in networks of knowledge accelerates and improves organizational learning (Borgatti and Cross, 2003; Constant et al., 1996; Cross and Sproull, 2004). What is central in ISH are interpersonal relationships and “immediate progress on a current assignment or project” (Cross and Sproull, 2004: 446). ISH positions organizational learning in relation to the organization members who can provide helpful or relevant information (Constant et al., 1996; Kellogg et al., 2021), who are identifiable and available to support learning (Bailey and Barley, 2011; Leonardi, 2007), and who have status and legitimacy within the field (Grodal, 2018). From this perspective, the “individual is foregrounded to others in terms of assistance” (Bailey and Barley, 2011: 264). Such assistance may be sought from superiors or peers (Cross and Sproull, 2004) or, with the help of computer networks, from “the kindness of strangers” (Constant et al., 1996), as long as the legitimacy of the knowledge claims, the positional standing of the seeker, and the status of the provider are not in question (Bailey and Barley, 2011; Kellogg et al., 2021).

To make progress on the task at hand (Cross and Sproull, 2004; Kim et al., 2018), the advice giver and seeker in the ISH model must share a relevant context (Grodal et al., 2015) including an epistemic understanding (Fayard et al., 2016). That is, the learning model of advice giving and seeking assumes that one is able to formulate a question, a request, or a comment in a way that another actor can understand and respond to. If the advice seeker has formulated the question based on short-term efficiency, but the advice giver answers based on a long horizon of what is valuable (Bluedorn and Denhardt, 1988), the two have no shared temporal context for joint accomplishment (Fayard et al., 2016; Grodal et al., 2015). Providing advice can be costly in areas where currency requires constantly updating the knowledge (Bailey and Barley, 2011). Haas et al. (2015) emphasize that advice giving and receiving are not just about the helper-seeker relationships but also about the match between helper and problem: Which problems are helpers willing to devote their time and knowledge to?

The ISH model has evolved with the advances in information and communication technology in organizations (Constant et al., 1996). The learning model assumes supportive technology (e.g. intranets, search engines, expert systems) that facilitates but also shapes the relations and interaction between helpers and learners (Choi et al., 2010). Collaboration technology (e.g. wikis) allows the joint publication of contents and their shaping—that is, the continuous revision of information (Majchrzak et al., 2013).

From the organizational knowledge perspective, digital technology provides “the meta-memory (i.e. the location and subject of the knowledge)” for transactive memory systems (TMSs) (Nevo et al., 2012: 71). TMSs represent the individual and collective understanding of who knows what, and of how to retrieve the knowledge (Miller et al., 2014; Ren and Argote, 2011). Social media-based informal advice networks improve the visibility of experts and can ease expert identification and access issues (Leonardi, 2014; Leonardi and Treem, 2020). Yet TMSs still require members to share experiences and to develop a joint understanding of the context (Brandon and Hollingshead, 2004; Ren and Argote, 2011). Hierarchical position and status can still matter in the asking and helping processes in enterprise online forums (Pu et al., 2022; Riemer et al., 2015). Although an intense interplay emerges between digital technology, helpers, and seekers, the authority and validity of knowledge depend on its point of origination.

Organizational intelligence

Bailey and Barley (2011) illustrate different ISH environments with varying consequences for organizational intelligence. In one exchange environment, senior structural engineers orchestrated the learning that the juniors would experience, sometimes curtailing a newcomer’s own appetite to learn. This context contrasts with the environment of hardware engineers who shared information among all, independent of rank. Each person had a responsibility for their own learning and was expected to know to whom they could turn for help. Learning in these two contexts was conditioned on a senior’s pedagogical approach in the first case and on a junior’s individual initiative in the second case. The study highlights the importance of the diversity in how people teach others and learn—by themselves, by seeking info, and by asking or offering help.

The existence of learning depends in part on the particular organizational environment. In Bailey and Barley’s (2011) first case, one junior employee’s desire to learn was not heeded, whereas in the second case, learning was conditional on each individual’s interest and motivation. In the first case, the breadth of learning is defined by the superior’s judgment of what the apprentices need to learn, and the depth of learning depends on the superiors’ capability to convey the particular expertise. In the more egalitarian second situation, the breadth of learning depends on the junior’s awareness of the particular knowledge to be learned and shared, whereas depth may be a combination of factors, depending on the engineer’s capability to learn and share. The organizational structures, processes, values, and norms thus influence organizational intelligence.

Generally speaking, in the organizational and information systems literatures, digital technology increasingly is constructed in terms of “evolving relations” (Faraj and Leonardi, 2022: 776), not as a specific digital application or a generalized digital context. A relational view implies that technology is not exogenous to organizational learning but is “constitutively entangled with all aspects of organizing and strategizing” (Faraj and Leonardi, 2022: 776). Hinds and Von Krogh (2024: 3) explain: “The relational view was built on a long history of organizational research that treats technology and technology use as intertwined with the social context (e.g. Barley, 1990; Orlikowski, 2000).” Table 1 summarizes the learning models in terms of their key premise that we argue is at stake in terms of the evolving relations. In rather broad terms, we relate the learning models to the role of digital technology. We do not contest that technology, people, and organizations are evolving; instead, we emphasize that they seem to be doing so with potentially unprecedented implications for organizational learning and intelligence.

Table 1. The three learning models: key premise, role of digital technology, implications for organizational intelligence.

Intelligent technology-enabled trajectories for learning: implications for organizational intelligence

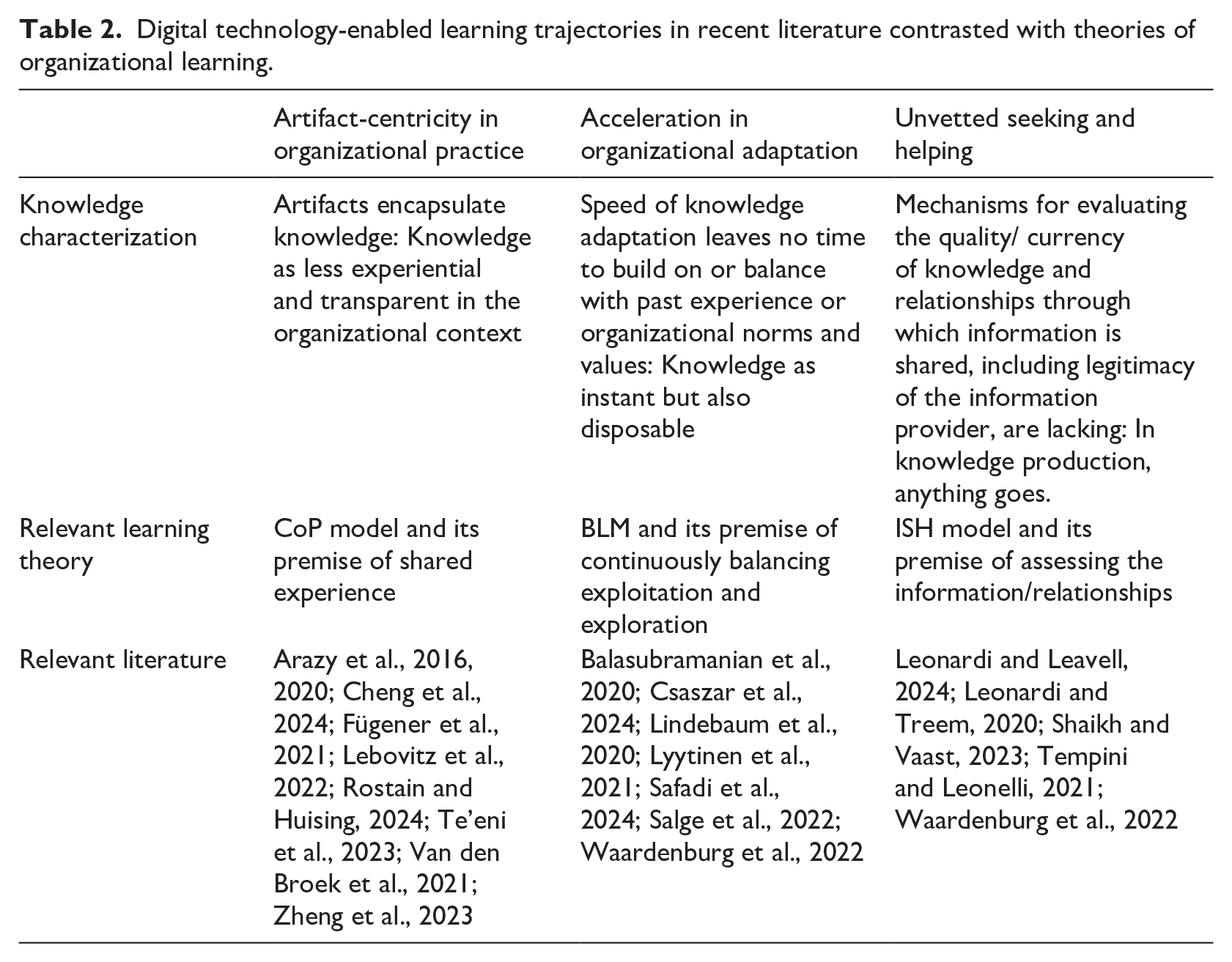

We identify three intelligent technology-enabled trajectories based on recent literature and discuss them from the perspective of the learning theories. Table 2 identifies the works that influenced our conceptualization of these trajectories. It should be noted that the authors do not necessarily advance these trajectories explicitly nor tie them to practices, organizational adaptation, or the temporal context. The trajectories are stylized in order to highlight how they may challenge the premises of the three learning models. We label these three trajectories as follows:

Artifact-centricity in organizational practice. Learning becomes encapsulated rather than shared in experiential practice.

Acceleration in organizational adaptation. Adaptation occurs so fast that learning in organizations provides no time to draw on existing organizational knowledge or to keep exploring further.

Unvetted information in seeking and helping. This trajectory results in learning that lacks a mechanism to assess relevance and currency of questions and answers in terms of organizational goals, values, and norms.

We next discuss each intelligent technology-enabled trajectory in its relation to the organizational learning premise of concern and consider the implications for organizational intelligence. We do not claim that the learning that these trajectories manifest is new, in the sense of novel or new to the world; rather, the trajectories have been emerging and accelerating as digital technology has been evolving.

Table 2. Digital technology-enabled learning trajectories in recent literature contrasted with theories of organizational learning.

Artifact-centricity in organizational practice: challenges to CoP’s learning premised on shared experience

Digital artifacts transfer and store individually or collectively curated information. However, the typical opacity of these digital artifacts leads to challenges under the CoP learning model. In their ethnography of a machine shop, Rostain and Huising (2024) examine the efforts of shop floor machine operators to learn programming skills to penetrate this opacity. The machine operators were determined to scrutinize technology’s outputs (e.g. digital models and figures). However, the logic and workings are hidden in outputs (i.e. digital artifacts). The complex, algorithmically generated simulations, forecasts, and decisions are impenetrable (Anthony, 2021). For example, radiologists could not understand how the algorithm arrived at its conclusion in a study by Lebovitz et al. (2022). Although such encapsulation is potentially expedient, it also makes creating any shared experiences of problem-solving difficult and hence puts pressure on the premise of the CoP learning model in terms of arriving at joint practices.

Another challenge arises because artifact-centricity is supported in open knowledge production models in which participants co-create with minimal or no communication (Arazy et al., 2016, 2020; Zheng et al., 2023). Information embedded in digital artifacts increasingly is accessible to anyone at any point in time, and no temporal connection or community membership is required (Crowston et al., 2019; Howison and Crowston, 2014). Artifacts confer a sort of digital immortality that is independent of any people or organizations (Jarvenpaa and Keating, 2024). Monteiro and Parmiggiani (2019) suggest that digital artifacts may have a reality of their own that they retain. Aaltonen et al. (2021) imply that the connection to what the digital artifacts originally or materially were intended to represent is partially lost when the digital objects are taken out of their original context.

Implications for organizational intelligence

We assert that digital artifacts challenge the tenets of shared experience and community-based learning that are foundational to the CoP model’s perspective of organizational learning. Digital artifacts support the perpetual accumulation of knowledge but are detached from the social processes of a community. No interactional experience or observation of the practice may be required (Arazy et al., 2016, 2020; Zheng et al., 2023) as digital artifacts capture the knowledge (Cheng et al., 2024). As explicit knowledge becomes coded, tacit knowledge may weaken and even disappear as the community no longer shares experiences (Massa and O’Mahony, 2021). Clicking a button becomes the sole practice or initiative in launching and engaging a full-scale protest action (Massa and O’Mahony, 2021). If the community is not learning in terms of breadth and depth, the artifacts will not benefit from its accumulated knowledge but may be shaped by sources outside the experience of the community, resulting in a loss of organizational distinctiveness.

The lack of community learning does not mean that there would be no learning between the human and the machine. To illustrate the potential, Van den Broek et al. (2021) discuss the development of a new algorithmic system to support hiring job candidates. A human–machine hybrid practice evolved as an outcome of a laborious and long-term deep engagement between the developers, the users, and the algorithmic system. Interestingly, there was no preassigned model for delegating to the human vs delegating to the machine; rather, the human–machine hybrid evolved its division of labor over time as a result of mutual learning and reflection. In contrast, in the Te’eni et al. (2023) study, reciprocal human–machine learning happens as machines and humans are delegated to different tasks in text message classification. The expectation is for co-evolution as both humans and machines learn over time, but no explicit expectations of learning at the organizational level are articulated.

Acceleration in organizational adaptation: challenges to BLM’s learning premised on continuity and speed

Digital technology is accelerating organizational decisions and challenging the learning on which such decisions are based. For example, bots—automated accounts in online social networks—facilitate speed (Salge et al., 2022). When bots become responsible for curation and transfer, they also may reconfigure and embellish the curated content before disseminating it (Safadi et al., 2024). Such reformulation can break any connection to the past. The “rapid scaling” (Salge et al., 2022) makes following the changes difficult for organizational members, thus challenging the continuity between past, present, and future.

Exacerbating the impact, current algorithmic training programs are based on datasets that can cover only a narrow and short past; these narrow and short pasts can lead to unreliable predictions in turbulent environments (Balasubramanian et al., 2020). Such datasets may automate organizational action rather than augment it (Benbya et al., 2021; Raisch and Krakowski, 2021). Such automation is valued. In contexts of strategic decisions, Csaszar et al. (2024) illustrate empirically how large language models (LLMs) can generate venture plans and evaluate them at a much higher speed and with equal quality to entrepreneur-generated plans. Acceleration may thus enhance performance at least in the short term.

Implications for organizational intelligence

From the perspective of the BLM, accelerated speed may generate instant but narrow learning. The fast learning shuts down exploration in the form of developing new competence and can make organizations potentially “disposable,” thus limiting an organization’s adaptability in terms of future goals. Disposable organizations manifest short-run efficiency but have limited life spans (March, 1995). Hence, the challenge to organizational intelligence is to maintain ambidexterity: the ability to exploit and explore knowledge (Park et al., 2020).

The existence and breadth of learning can increase in the short term, but such learning allows no time for cogitating (Weick, 1995) and hence for rendering intelligence in depth. Humans may not be able to keep up with the pace at which algorithms learn from each other, and algorithms also may reinforce a programmatic or formal decision-making rationale that differs from humans’ way of reflecting upon ambiguities related to a decision (Lindebaum et al., 2020) or taking time to explore novel opportunities (March, 1991). The integration of these different reasoning capacities can engender benefits if the developers of the algorithmic system, its users, and the system itself are part of the laborious mutual learning process (Van den Broek et al., 2021). Acceleration may become an enemy of such a process. In addition, forgetting and even selective “amnesia,” which are key aspects of organizational learning (Argote, 2013), can be challenging with complex algorithmic models (Greengard, 2022). Algorithms do not “forget” unless designed to do so. Without this forgetting capacity, organizations may not be able to adapt as environments change (Argote, 2013).

In sum, acceleration may sever the link between people and organizations. Intelligent technology’s capability for fast speeds and broad scale leaves people unable to follow or to participate on their own terms. Synthetic knowing also can override the sensory experiences of people that are crucial to navigating, exploring, and exploiting operating contexts (Monteiro and Parmiggiani, 2019). As human users’ attention is blocked or diverted or, alternatively, as human users are misled or misguided about where they should focus their attention, machines may “cultivate humans” (Lyytinen et al., 2021: 438). Lyytinen et al. (2021: 438) express confidence, however, that metahuman systems (humans + machines) will eventually “come to address social processes with multiple viewpoints,” overcoming current challenges such as biases or “systems run amok.” Any organizational code consisting of norms and values, as suggested by the BLM, might then become part of a potentially monolithic metahuman system consisting of “large ecosystems in manufacturing, agriculture, transportation, finance, medicine, and other fields” (Lyytinen et al., 2021: 439).

Information that remains unvetted: challenge to ISH’s learning premised on its currency

Widely accessible technology has expanded the boundary for information seeking and helping (Grodal, 2018; Kane et al., 2014; Kim et al., 2018; Raisch and Fomina, 2025). Information may travel across different interfaces, thus expanding the number of accessible sources for inquiry. The information may originate from people outside an organizational boundary who lack any shared interest or context (Jarvenpaa and Selander, 2023). Information may come from a record that offers no historical context, which degrades and diminishes the currency assumed by the ISH learning model. These trajectories are exacerbated when algorithms automate the interfaces between modules (Shaikh and Vaast, 2023), complicating the assessment of information.

Assessment of knowledge becomes a challenge when the original sources are unknown or unfamiliar (Leonardi and Treem, 2020; Tempini and Leonelli, 2021). In their study of predictive policing, Waardenburg et al. (2022: 75) found intelligence officers facing an “impassable” boundary between the algorithmic crime prediction system and their ability to interpret it for the Dutch police officers. The intelligence officers returned to their own local sources and solutions. The police officers accepted the outputs of the intelligence officers without knowing that the algorithmic system no longer generated them.

Furthermore, digital outputs may not be questioned even when they lose the intended mirroring of the physical reality. Leonardi and Leavell (2024) suggest that digital twins, interactive representations of a real-world phenomenon, may be taken for granted by their human users despite missing critical reality. The severing of the link between digital and physical representations may become evident when a territory reproduced in a computer simulation results in the loss of knowledge about the corresponding real-world territory (Leonardi and Leavell, 2024). Beane (2019) documents “shadow learning” in robotic surgery as the trainees were not able to interact with senior surgeons to develop their skills. In banking, Anthony (2021) found that algorithmic techniques potentially harmed junior members’ careers because their use replaced the development of professional expertise, including the assessment of the outcomes of the algorithm-based decision-making.

Implications for organizational intelligence

To the extent that a shared script or thought world (Von Krogh et al., 2003; Preece and Shneiderman, 2009), anchored to a shared point in time, is lacking, information seekers and helpers face difficulty in evaluating the acquired information (Gherardi and Nicolini, 2001). Information may remain unvetted, and there may be no connection to the organization’s goals with shared time horizons, challenging relevance. Argote (2024: 417) underlines the importance of cohesive groups in interpreting knowledge, such as communities of practice or networks that share an epistemic cohesiveness. The ability to assess knowledge may require co-location or perceived similarity (Argote, 2024: 422) and a shared conception of time (Bluedorn and Denhardt, 1988). Establishing whether the learning is emerging or whether it already exists and how to access it can become difficult without sharing a temporal and epistemic context (Brandon and Hollingshead, 2004). Groups and organizations validate learning through the interaction of their members: “Group discussions can provide an opportunity for that information . . . to be validated as accurate and relevant to the group” (Brandon and Hollingshead, 2004: 640). The significance of this accuracy and relevance pertains to the responsibilities that group members accept and take on.

As with the shifts in other organizational learning premises (i.e. in shared experience in practice and in continuity and speed), the currency of knowledge may become a personal choice rather than an organizational one. Information sources and channels, and the information itself, are no longer assessed by any organizational authority, as premised in the ISH learning model, but by individual preference. The information may then become disconnected from organizational goals, values, and temporalities.

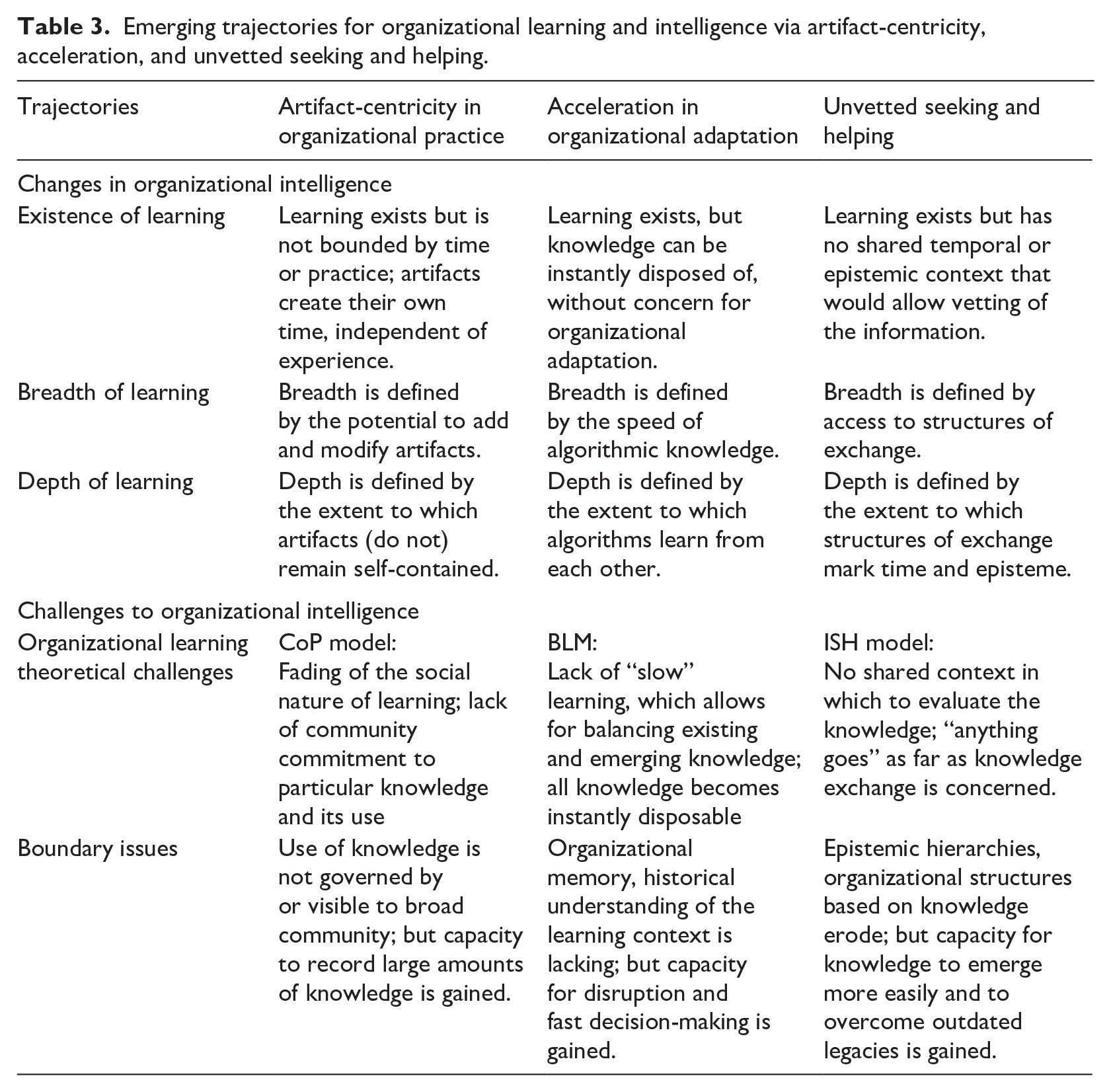

Table 3 provides a summary of the trajectories and their related challenges to organizational learning and intelligence. The relationships between technology, people, and organizations evolve but these relations do not necessarily increase organizational intelligence. In fact, we argue that the three technology-enabled trajectories of artifact-centricity, acceleration, and unvetted seeking and helping challenge the existing learning models and their basic premises.

Table 3. Emerging trajectories for organizational learning and intelligence via artifact-centricity, acceleration, and unvetted seeking and helping.

Despite the challenges, these trajectories also open up opportunities: knowledge can be traded and accessed as artifacts, organizational action can be accelerated and perhaps automated, and the need for context-sensitive human interaction can be eliminated. The outcome likely will be a radically different notion of intelligence located not in human experience but in artifacts and intelligent machines’ learning. There are challenges, as discussed in this essay, and also benefits in terms of the capacity to record and access large amounts of data, to accelerate decision-making, and to generate and develop new knowledge, independent of the constraints that can be imposed by legacy social structures and systems.

Note that, in terms of the boundaries of our assertions, our argument is about organizational learning and intelligence. The trajectories for learning may improve performance, even though the survival of an organization as a meaningful, distinct unit may become less certain. The trajectories may make organizational boundaries increasingly porous, leading to difficulties in distinguishing organizations based on their competences and history. Organizations may then become increasingly commoditized and easily substitutable as part of larger cooperative networks. Intelligent technology may gain agency relative to people and organizations even if individuals also gain further capabilities for scaling up action. The logic of decision-making may shift from experiential learning to predictive and generative learning by machines.

Provocations for future research: implications for organizational intelligence?

As technology becomes ever more intelligent, relations between technology, people, and organizations continue to change (Bailey et al., 2022; Faraj and Leonardi, 2022). We assert that rather than increasing the entanglement between the three, there may be weakening links as intelligent technology encapsulates knowledge, functions, or processes. Many of the writings on intelligent technology point to the lack of visibility and transparency of the knowledge and its origins or applications (Anthony, 2021; Beane, 2019; Waardenburg et al., 2022). In anticipation, March (1995) emphasized the need for “awareness of the existence of relevant knowledge, access to the knowledge, and the capability to use it” but also warned that “[n]one of these is assured” from the organizational perspective.

As a result, understanding how to adapt technology to people and organizations, and vice versa, may become difficult (Benbya et al., 2021). Technology, people, and organizations may follow evolutionary paths that diverge from each other. Such trajectories may weaken or constrain human agency; the trajectories also might expand the agency as people engage in more autonomous local action (Waardenburg et al., 2022).

However, in our organizational-level analysis, organizational learning, as understood in prior theory, is challenged by intelligent technology. The implications for organizational intelligence are potentially harmful. The picture may be different at the individual or metahuman system level (Lyytinen et al., 2021). To salvage their capacity to learn, organizations urgently need to learn to use intelligent technology intelligently, without sacrificing shared community experiences, learning from the past to see into the future (Kaplan and Orlikowski, 2013), and vetting the information that is on offer. Or, absent these premises, to what extent is there a need for new theories of organizational learning? Will organizational intelligence be supported by something other than learning in the future?

To move forward, we propose directions for future research focusing on intelligent technology redefining the existence, breadth and depth of organizational learning and thereby potentially giving organizational intelligence new meaning.

To what extent might intelligent technology redefine organizational learning?

Digital artifacts can expand opportunities for individual learning (Te’eni et al., 2023). However, the artifacts produced will not necessarily be integrated into the organizational knowledge base; hence, the knowledge base would not be able to help organizations adapt to their environment. Accelerated learning, and the related “fast” knowledge it produces, also can mean that knowledge is executed immediately, as soon as it is accessed; hence, an organizational knowledge base may no longer be a relevant concept. Similarly, information seeking and helping may be replaced by automated functions potentially using new forms of assessment for relevance and currency.

Managing risks may also be deemed less significant (Bengtsson et al., 2020) as human error plays less of a role (Park et al., 2023). Instead, organizational intelligence may become redefined as the speed of adaptation, potentially resulting in the erosion of organizational life spans. Artifacts might eventually replace organizations as symbols of institutions that have longevity. Future research might examine the extent to which instantaneous adaptation qualifies for or replaces the accumulation of knowledge in organizations.

How does intelligent technology add to the breadth of learning?

People and organizations are notoriously narrow, or myopic, in their organizational learning (Levinthal and March, 1993). Access to ubiquitous information may add to the breadth of knowledge. That is, information may be sourced more widely, even if the value to the organization of the information’s claims is difficult to establish (Kim et al., 2018; Bremner and Eisenhardt, 2021). What happens when or if these multiple sources, with their competing claims, replace epistemic communities? How might the value of knowledge, and hence organizational intelligence, be established without shared goals, values, or temporalities? Future research might explore ways of learning that are not rooted in the joint community experience. Organizational intelligence may then become redefined, potentially as the extent of sources to be consulted without the capacity to prioritize, valorize, or memorize. Organizational intelligence may also disappear as a meaningful concept if any required information is instantly available and automatically applied.

How does intelligent technology add to the depth of learning?

According to Huber (1991), the depth of organizational intelligence is important for communities and organizations because it gives them distinctiveness and temporal continuity. Translating learning, through organizational socialization, into the structures (routines) and episteme of the organization (DeSanctis and Scott Poole, 1994) is necessary for knowledge to be proper and useful. On the one hand, without such translation, “the structure of meaning” that is “normally suppressed as conscious concern” may be lost, creating a vacuum for “learning [that] occurs within it” (Levitt and March, 1988: 324). On the other hand, such structures of meaning might be holding us back and failing to open new frontiers (see also Pakarinen and Huising, 2023).

Future research might consider bases of learning for organizations that are other than shared meanings and practices. To illustrate, a shared interpretation may cease being a concern between a seeker and helper. How much and what does intelligent technology need to know about the information seeker to understand the individual and organizational goals and interests and then to produce preprocessed solutions? The technology helper may also wish to influence the seeker toward a particular solution through emotional engagement. What might the moral rather than cognitive considerations be? How might the trajectories of learning override existing theories’ focus on organizational cognition and move toward affect and morality in the relationship between people and technology (Sage et al., 2019)? Maybe this is the sea change challenging organizational learning theories: more affective engagement and potentially a greater need to consider moral implications.

Is the received cognitive wisdom of organizational learning and intelligence still a concern in the era of intelligent technology?

The premises of existing organizational learning theories may be outdated. We may be moving toward a reality where intelligence is neither the experiential accumulation of knowledge that is distinctive of a community, providing continuity, nor is it the interactive reflection of the currency of that knowledge. Rather, intelligence may become synthetic and expansive across multiple platforms and networks, and individually channeled. Intelligence may exist within the individual’s or organization’s capacity to access and use particular intelligent technology. Breadth may be about the user’s capacity to prompt the technology while depth may be about the multiple tools and technologies available. Organizational intelligence may become a background against which individuals exercise their agency within the opportunities and constraints that are predefined and/or dynamically adapted. Fooling the system may even become a game showing technology-independent intelligence.

The definition of organizational intelligence remains open. The learning theories matter as they continue to influence organization studies with their premises. Critically examining the existing theories of organizational learning may help us to understand something important about the evolving nature of organizational intelligence but also to examine the possibility that organizational intelligence may be reduced by intelligent technology. To begin this analysis, we have focused on three trajectories for organizational learning, but there are many others.

Conclusion

The current essay is the beginning of a conversation about the diverging co-evolution of organizational learning and digital technology in terms of organizational intelligence. In many ways, technology is a boon, in particular to individual learning.

The issue is organizational. Based on our analysis of organizational learning premises and intelligent technology, our first concern is that emerging trajectories of learning may deprive organizations of their intelligence. This being the case, there is a need to apply intelligent technology more intelligently and respect the premises on which organizational learning depends for its intelligence. Our second concern is that prior theorizing on organizational learning may pose limits to the future understandings of technology, people, and organizations. Organizations and individuals may be learning in new ways, but such learning may not result in what we have come to understand as organizational intelligence. In that case, there is a need for new theorizing on organizational learning and intelligence.

We invite scholars to explore the implications of the evolving technology frontiers on organizational intelligence. Such examination will suggest further trajectories and boundaries to the promise of intelligent technology and organizational learning and help define organizational intelligence amid the fast-evolving relations between technology, people, and organizations.

Footnotes

Acknowledgements

We would like to thank the special issue editor team for clear developmental feedback and the two anonymous reviewers for their helpful comments.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.