Abstract

Generative artificial intelligence (GenAI) is transforming teaching and learning, yet little is known about predictors and outcomes of GenAI use among psychology students. Based on theories of new technology uptake, we examined perceived usefulness, ease of use, attitudes toward technology, perceived risk and anxiety, sensitivity to quality and rewards, and workload and time pressure as potential predictors of self-reported GenAI use among psychology students (N = 164) at the University of Technology Sydney. We also examined potential associations with self-reported procrastination, memory difficulties, and grades on GenAI secure (in-person, supervised) versus unsecure (unsupervised) assessments. More positive attitudes toward technology, greater perceived ease of use, greater workload, and less time pressure predicted greater use of GenAI. Additionally, greater use of GenAI was associated with greater procrastination and memory difficulties, but did not correlate with assessment grades. These findings suggest that, when time permits, GenAI may be used by psychology students as a tool to alleviate academic workload demands, with unclear implications for authentic learning. Exploratory analyses revealed that grades on GenAI secure and GenAI unsecure assessments were consistently positively correlated. This suggests that, in the first half of 2024, students were using GenAI in a way that did not artificially inflate grades on GenAI unsecure assessments.

Generative artificial intelligence (GenAI) tools such as generative pretrained transformers (GPTs) are reshaping teaching and learning in higher education. These tools use machine learning techniques to produce novel text, images, and audio that resemble human-generated content. It has become clear that psychology students will require competence in working effectively and ethically with GenAI (Richmond & Nicholls, 2024). As the American Psychological Association (2024) notes, researchers in psychology will benefit from efficient analysis of large datasets, the ability to simulate human behavior, and innovative tools for experimental design. Clinicians will benefit from reduced administrative burden, the ability to train with simulated interactions, improved diagnostic precision, and provision of more personalized treatments. Since GenAI chatbots are software programs that simulate human conversations, they may also be used to deliver therapy, making psychological services cheaper and more accessible (American Psychological Association, 2024). To ensure psychology students are well prepared for this workforce of the future, a critical first step is to understand predictors and outcomes of their use of this technology.

Released to the public in November 2022, the user-friendly ChatGPT (OpenAI, 2025) received widespread attention and rapid adoption. This technology has the potential to provide a personalized adaptive learning experience. However, GenAI tools are limited by the quantity, quality, and context of data they are trained on, making them prone to “hallucinations” that are likely to impair student learning (Alkaissi & McFarlane, 2023). GenAI also puts academic integrity at risk if used by students in a way that bypasses learning or misrepresents abilities (Sullivan et al., 2023). These benefits and risks of GenAI are likely to influence students’ willingness to use this new technology.

GenAI and Technology Acceptance Theories

Several well-validated theories help to explain the acceptance and use of novel technology. The Theory of Reasoned Action posits that attitudes and norms produce behavioral intentions that influence actual behavior (Ajzen & Fishbein, 1980). Based on this idea, the Technology Acceptance Model (TAM; Davis, 1989; Davis et al., 1989) was devised as a framework to understand the complex factors influencing acceptance and use of new technology. It proposes that the extent to which someone believes the system will enhance their performance (i.e., perceived usefulness) and be easy to learn (i.e., perceived ease of use) will influence their attitudes, which in turn affect intention to use, and actual use of, the new technology. The TAM is the most widely used framework in research aiming to understand adoption of AI by teachers and students (for a review of 22 studies see Al-Momani & Ramayah, 2024). A unified theory has also been developed based on a combination of the TAM and other frameworks such as the uses and gratification theory, which suggests that users are motivated to interact with technology based on their unique needs and preferences (Katz et al., 1973; Xie et al., 2024). The Unified Theory of Acceptance and Use of Technology (UTAUT; Venkatesh et al., 2003) identifies three predictors of intention to use technology: performance expectancy (i.e., perceived usefulness), effort expectancy (i.e., perceived ease of use), and social influence (i.e., the degree to which the technology is recommended by people with valued opinions). According to the UTAUT, intention and facilitating conditions (i.e., perception of organizational and technical support for the new system) predict usage behavior.

Existing theories explaining the uptake of new technology have been adapted to specific contexts. For example, the Extended UTAUT (UTAUT2; Venkatesh et al., 2012) included three additional variables influencing new technology uptake in a consumer context: price value (costs and benefits), hedonic motivation (enjoyment), and habit (automatic behavior associated with repeated use). This theory was further adapted by Foroughi et al. (2023) to examine the use of AI among university students by replacing price value with “learning value” (i.e., AI as a tool to enhance knowledge, save time, and achieve learning goals). They found that students enrolled in coursework in Malaysian universities (N = 406) reported performance and effort expectancy (i.e., usefulness and ease of use), hedonic motivation, and learning value, but not social influence or habit, to significantly influence intention to use ChatGPT for learning purposes. A further study extended the TAM to develop and validate the TAM Edited to Assess ChatGPT Adoption (TAME-ChatGPT) questionnaire (Sallam et al., 2023). They found that lesser perceived risks (e.g., concerns about reliability of information or privacy), and anxiety (e.g., being afraid of becoming too dependent on ChatGPT), as well as greater perceived usefulness (e.g., belief that information is reliable or saves time), perceived ease of use (e.g., belief that the tool is easy to learn and does not require specific expertise), and positive attitudes (e.g., enthusiasm to use the technology and perceiving it to be fun) predicted greater adoption of ChatGPT among university healthcare students.

In potentially the first study to examine adoption of GenAI in coursework specifically among psychology students, Gado et al. (2022) developed and tested an AI acceptance model. They surveyed 216 students (75.9% women) studying psychology at German universities (61.2% graduate/master's, 36.9% undergraduate/bachelor's students, and 1.9% studying psychology as a minor). The students reported on their attitudes toward AI and specifically, the likeability (e.g., friendliness) and perceived intelligence (e.g., competence) of AI, as well as their perceptions of safety (e.g., feeling anxious about using AI). They also reported on their intention to use AI in the coming months, whether others might influence them to use GenAI (i.e., perceived social norm), their perceptions of GenAI usefulness and ease of use, and their perceived knowledge of AI in comparison to other students. They found that greater perceived usefulness, perceived social norm, perceived knowledge, and attitudes toward AI, but not perceived ease of use, predicted greater intention to use AI. However, it was also shown that greater perceived usefulness and ease of use predicted more positive attitudes, which in turn predicted intention to use AI. The current study extended this work by examining self-reports of actual (rather than intended) use of GenAI among university students studying psychology, and by investigating additional potential predictors associated with academic integrity.

GenAI and Academic Integrity

To maintain accreditation with the Australian Psychology Accreditation Council, higher education providers must demonstrate how their programs ensure students acquire the knowledge, skills and attributes required for competent and safe practice. Similarly, Australian universities must demonstrate to the Tertiary Education Quality and Standards Agency (TEQSA, 2024) how students attain course intended learning outcomes. In this context, academic integrity is not only an ethical concern but a mechanism for assuring competence. Inappropriate use of GenAI may allow students to bypass opportunities to develop core competences (Eke, 2023). However, Cavazos et al. (2024) found that first- and second-year students (N = 569) from a U.S. university reported “information gathering” (e.g., finding sources for assignments) as their main use of ChatGPT. This was considered an ethical academic practice relative to “response gathering” (e.g., answering exam questions). Students were also able to accurately identify behaviors associated with use of AI that constitute cheating (e.g., responding to a discussion topic or assignment prompt) as opposed to ethical behaviors (e.g., validating or verifying information). Similarly, the students were motivated primarily by the “value and convenience” offered by ChatGPT (e.g., provides useful feedback or friends reported a positive experience) as opposed to “hedonism” (e.g., beating the system or being rushed for time). This distinction is critical, as feedback-seeking behaviors can support specific learning processes central to competence development, including error correction, skill refinement, and self-regulated learning. Despite students’ apparent orientation toward ethical use, it remains essential to design assessment and teaching practices that ensure these GenAI-supported activities translate into demonstrable competence rather than superficial task completion.

Students have attributed breaches of academic integrity, such as plagiarism, to high workload demands and tight deadlines (Mulenga & Shilongo, 2024). It has also been shown that university students report greater use of ChatGPT when they have higher academic workload and time pressures (Abbas et al., 2024). The authors explained that greater workload demands and time pressure create stress, which may lead students to seek short cuts in meeting their deadline. These short cuts may include the use of tools such as GenAI that may in turn compromise learning (Devlin & Gray, 2007; Guo, 2011). Conversely, students reported lesser use of ChatGPT when they were more sensitive to academic rewards (grades), suggesting concerns about poorer grades if the technology was used (Abbas et al., 2024). It was reasoned that the students may have perceived their own work as higher quality than the AI output, even though there was no evidence for an association between sensitivity to quality (i.e., increased concerns about standard of own academic output) and ChatGPT use. In the current study, we argue that sensitivity to rewards and quality should have a similar influence on use of GenAI. As such, we expected that greater sensitivity to both academic rewards and coursework quality would be associated with lesser use of GenAI. We also expected that greater workload demands and time pressure would predict greater use of GenAI.

In addition to students’ perceptions of workload and time pressures, their grades on different types of assessments may shed light on whether GenAI is being used in a way that bypasses learning. One approach to transforming assessment in the context of GenAI has been the “two-lane approach” (see Curtis, 2025). In the first lane, assessments prohibit the use of GenAI tools and require a high degree of security, such as in supervised in-person exams (i.e., secured assessments). In the second lane, assessments may be completed with unlimited use of GenAI tools and are considered “unsecured” assessments. In the current study we refer to secure assessments as those completed under supervision, where GenAI tools are not available, and unsecure assessments as those completed outside of a secure environment (i.e., unsupervised). The unsecure assessments may be completed with the assistance of GenAI tools even when use of GenAI is prohibited. Typically, students’ grades on different types of assessments are correlated since students who perform well on one assessment often perform well in other assessments. However, evidence of the extent to which GenAI use is already influencing grades for unsecure assessment tasks is currently lacking. An empirical question is whether students who use GenAI perform well on unsecure assessments but not secure assessments. This is an exploratory question in the current study, without a preregistered hypothesis.

In addition to potential effects on grades, greater use of GenAI has been associated with greater procrastination and memory difficulties (Abbas et al., 2024). Although these variables may also be conceptualized as predictors of GenAI use, Abbas et al. argue that GenAI is likely to lead to greater procrastination if students put off completing an assessment task until the last minute with knowledge that GenAI may reduce the time and effort typically required. They also argue that overreliance on GenAI may weaken mental exertion and critical thinking which in turn will result in memory difficulties. The current study therefore examined procrastination and memory difficulties as outcomes associated with greater use of GenAI.

The Current Study

The aim of the current study was to examine predictors and outcomes of GenAI use among psychology undergraduate students. A secondary goal was to explore associations between use of GenAI and assessment grades. The preregistered hypotheses were that greater perceived risk and anxiety would be associated with lesser use of GenAI (H1), while more positive attitudes toward technology (H2), greater perceived usefulness and ease of use (H3), and greater workload and time pressure (H4) would predict greater use of GenAI. Sensitivity to rewards (i.e., grades) and quality of coursework were expected to predict lesser use of GenAI (H5). We also expected that use of GenAI would be associated with greater procrastination and memory difficulties (H6).

As noted in the preregistration, we explored correlations between grades on different types of assessments and use of GenAI, as well as correlations between grades for two types of unsecure assessment (i.e., an unsupervised online quiz and an unsupervised written assignment) and one secure assessment (i.e., a supervised in-person quiz) in each of two first-year undergraduate subjects (i.e., Introduction to Psychology A and Positive Psychology). These two subjects were delivered in the first half of 2024 at the University of Technology Sydney.

Methods

Participants

Students enrolled in a psychology degree at the University of Technology Sydney (N = 164; M age = 21.7 years, SD = 8.28; 73.8% women, 23.2% men, 3.0% other) participated in this study in exchange for course credit. The sample included 142 first-year undergraduate students and 22 graduate diploma students, noting that only grades from undergraduate assessments were analyzed in the exploratory analyses. According to an a priori G*Power analysis, a multiple regression with nine predictors requires 141 participants to achieve 90% power to detect a medium-sized effect (f² = 0.15). English was a first language for 92.1% of the participants. Among the 92.1% of participants (i.e., 151 out of 164) who had used GenAI, frequency of use was reported as daily or almost daily (5.3%), several times a week (42.4%), a few times a month (40.4%), or once or twice a year (11.9%). A small proportion (12.6%) reported paying to use GenAI, and the university provided all students with free access to Copilot (Microsoft, 2025). The study was approved by the University of Technology Sydney Human Research Ethics Committee (ETH24-9156) and was preregistered at AsPredicted (https://aspredicted.org/h47c-dk94.pdf).

Measures and Procedure

After providing informed consent, participants reported demographic information and information about their use of GenAI, including frequency of use and paid use. They then completed questions about use of GenAI, predictors of GenAI use, and outcomes of GenAI use.

Perceived Risks, Technology Attitudes, Anxiety, Usefulness, and Ease of Use

The TAME-ChatGPT survey (Sallam et al., 2023) measures student attitudes toward ChatGPT. We adapted the survey by replacing “ChatGPT” with “GenAI.” Participants were advised that “GenAI in this survey refers to GenAI such as ChatGPT, Claude, Copilot, Perplexity, or similar tools.” All participants responded to questions about perceived risks (five items; e.g., “I am concerned about the reliability of the information provided by GenAI,” and “I am concerned that using GenAI would get me accused of plagiarism”), positive attitudes toward technology (five items; e.g., “I am enthusiastic about using technology such as GenAI for learning and research,” and “I trust the opinions of friends or colleagues about using GenAI”), and anxiety (three items; e.g., “I am afraid of relying too much on GenAI and not developing my critical thinking skills”). Only participants who reported having used GenAI answered questions about perceived usefulness (six items; e.g., “GenAI is more useful than other sources of information that I have used previously”), and perceived ease of use (13 items; e.g., “GenAI does not require extensive technical knowledge”). Each item was assessed using a 5-point scale from 1 (disagree) to 5 (agree). Each subscale demonstrated good reliability in the current sample (Cronbach's alphas .84 to .88). Note that Sallam et al. (2023) also included an additional three-item perceived risk subscale, which was completed only by students who had previously used GenAI and which was not analyzed in the current study. Instead, we analyzed data from the five-item perceived risks subscale (as described above) because all participants (rather than only those who had used GenAI) completed this scale.
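For readers less familiar with the reliability index reported for each subscale, the following is a minimal sketch of how Cronbach's alpha can be computed from item-level responses. It assumes the standard formula based on item variances and the variance of the summed score; the function name `cronbach_alpha` and the example responses are hypothetical and are not taken from the study data.

```python
from statistics import variance


def cronbach_alpha(item_scores):
    """Cronbach's alpha for a list of items, each a list of respondent scores.

    alpha = k / (k - 1) * (1 - sum(item variances) / variance(total score))
    """
    k = len(item_scores)
    if k < 2:
        raise ValueError("Cronbach's alpha needs at least two items")
    # Total score per respondent (sum across items).
    totals = [sum(scores) for scores in zip(*item_scores)]
    sum_item_var = sum(variance(item) for item in item_scores)
    return (k / (k - 1)) * (1 - sum_item_var / variance(totals))


# Hypothetical responses: three items (rows) answered by five respondents.
example_items = [
    [1, 2, 3, 4, 5],
    [2, 2, 3, 5, 5],
    [1, 3, 3, 4, 4],
]
alpha = cronbach_alpha(example_items)
print(round(alpha, 2))  # prints 0.95
```

Values closer to 1 indicate that items within a subscale vary together, which is the sense in which the subscales above are described as showing good reliability.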

Workload, Time Pressure, and Sensitivity to Rewards and Quality

Predictors of GenAI use were measured using the Abbas et al. (2024) questionnaire which included a four-item subscale measuring workload (e.g., “My academic workload is too heavy”), a four-item subscale measuring time pressure (e.g., “I find it difficult to submit my assignments and projects within the deadlines”), and two 2-item subscales measuring sensitivity to academic rewards (e.g., “I am worried about my GPA”) and sensitivity to quality (e.g., “I am sensitive about the quality of my course assignments”). Participants responded to each item on a scale from 1 (strongly disagree) to 5 (strongly agree). Each subscale demonstrated good to excellent reliability in the current sample (Cronbach's alphas .80 to .93).

Procrastination and Memory Loss

Outcomes of GenAI use were measured using the Abbas et al. (2024) questionnaire which includes a four-item subscale measuring procrastination (e.g., “I often start things at the last minute and find it difficult to complete them on time”), and a three-item subscale measuring memory difficulties (e.g., “Nowadays, I can’t retain too much in my mind”). Participants responded to each item on a scale from 1 (strongly disagree) to 5 (strongly agree). Each subscale demonstrated good reliability in the current sample (Cronbach's alphas = .86 for procrastination and .87 for memory).

Use of GenAI

Use of GenAI was measured using the Abbas et al. (2024) eight-item scale, which was adapted by replacing “ChatGPT” with “GenAI.” Example items include “I use GenAI to prepare for my tests or quizzes” and “I rely on GenAI for my studies.” Participants responded on a scale from 1 (never) to 6 (always). The scale demonstrated excellent reliability in the current sample (Cronbach's alpha = .94).

Assessment Grades

We analyzed assessment grades as a proportion of the full grade (i.e., 100%) for each of three assessments in two undergraduate psychology first-year subjects completed in the first half of 2024. Both subjects were completed by the same undergraduate psychology students and included no graduate students or students studying other courses. The assessments analyzed in Introduction to Psychology A included an online unsupervised quiz, an in-person supervised quiz, and a 1,500-word critical review essay. The assessments analyzed in Positive Psychology included an online unsupervised quiz, an in-person supervised quiz, and a 2,000-word reflection. In both subjects, the online quizzes contributed 15% of the final grade, while the in-person quizzes contributed 30%. The written assessments contributed 40% of the final grade in Introduction to Psychology A, and 45% in Positive Psychology.

Students were advised in the subject outlines for both subjects that “Using AI as a tool to assist in your studies may be beneficial; however, it is not a shortcut for your own learning. Copying and pasting AI-generated content as your own is a violation of the UTS academic integrity policy, and it also undermines your learning and professional development.” In the Positive Psychology unsecure online quiz, students were further advised “You are not allowed to use artificial intelligence (e.g., ChatGPT) while answering questions. If you are found to be colluding or using artificial intelligence, you are putting yourself at risk of academic misconduct.”

Results

Preregistered Hypothesis Testing

In line with the preregistered plan, five data points more than three SDs from the mean were adjusted to M ± 3 SD. We then examined correlations between potential predictors and use of GenAI, as well as correlations between use of GenAI, procrastination, and memory difficulties. Potential predictors were then included in a multiple regression model with use of GenAI as the outcome variable.
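The outlier adjustment described above can be sketched as follows. This is an illustration only: it assumes that the mean and sample SD are computed from the unadjusted scores and that any value beyond M ± 3 SD is replaced with the nearest boundary. The function name `clip_to_three_sd` and the example scores are hypothetical.

```python
from statistics import mean, stdev


def clip_to_three_sd(values, n_sd=3.0):
    """Replace values beyond mean +/- n_sd * SD with the nearest boundary.

    Assumption: the mean and sample SD are taken from the original,
    unadjusted values.
    """
    m = mean(values)
    sd = stdev(values)
    lower, upper = m - n_sd * sd, m + n_sd * sd
    return [min(max(v, lower), upper) for v in values]


# Hypothetical scores: nineteen typical values and one extreme value.
scores = [10] * 19 + [100]
adjusted = clip_to_three_sd(scores)
print(adjusted[-1] < 100)  # the extreme value is pulled in to M + 3 SD
```

Adjusting rather than deleting extreme values retains every participant in the analysis while limiting the leverage of any single observation.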

Intercorrelations

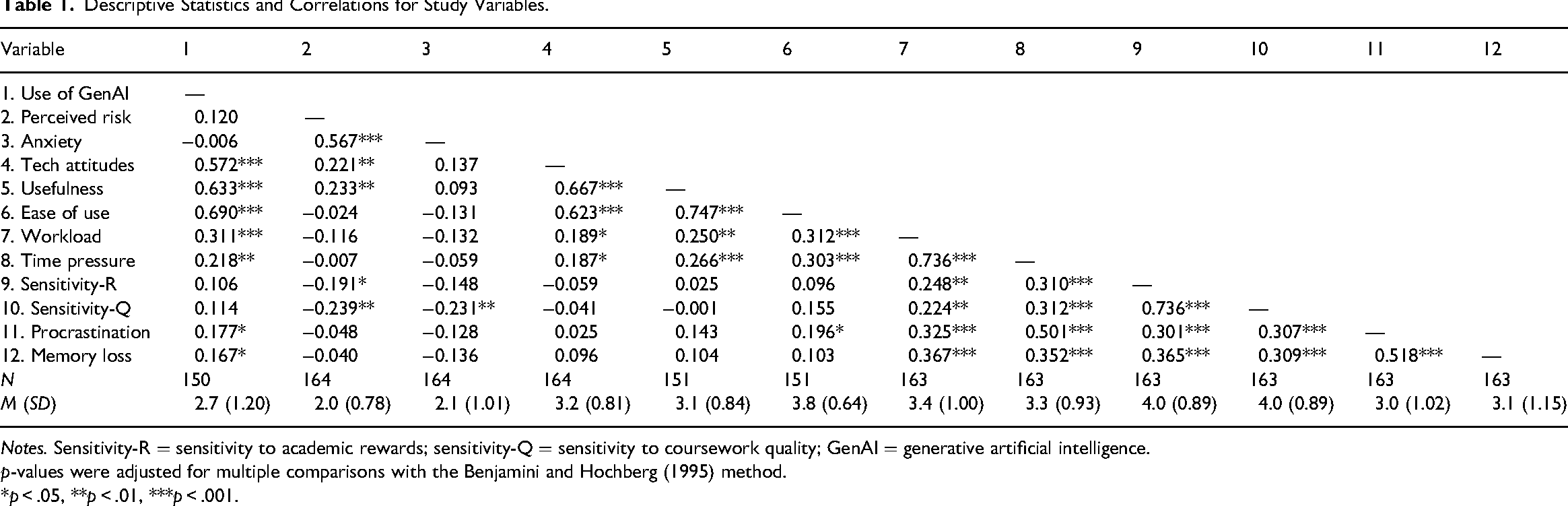

Spearman correlations (corrected for multiple comparisons) were conducted due to violation of the assumption of normality. As shown in Table 1, more positive attitudes toward technology, greater perceived usefulness and ease of use, and greater workload and time pressure were associated with greater use of GenAI (see Supplemental Figure S1). In addition, greater use of GenAI was associated with both greater procrastination and greater memory difficulties.

Table 1. Descriptive Statistics and Correlations for Study Variables.

Notes. Sensitivity-R = sensitivity to academic rewards; sensitivity-Q = sensitivity to coursework quality; GenAI = generative artificial intelligence.

p-values were adjusted for multiple comparisons with the Benjamini and Hochberg (1995) method.

*p < .05, **p < .01, ***p < .001.
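The two steps behind the correlation analysis, rank-based (Spearman) correlation and the Benjamini and Hochberg (1995) adjustment, can be sketched as follows. This is a minimal illustration rather than the analysis code used in the study: the function names and the example values are hypothetical, and computation of the p-values themselves is omitted.

```python
from math import sqrt


def average_ranks(values):
    """Rank values from 1 to n, giving tied values the mean of their ranks."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    start = 0
    while start < len(order):
        end = start
        while end + 1 < len(order) and values[order[end + 1]] == values[order[start]]:
            end += 1
        shared_rank = (start + end) / 2 + 1
        for position in range(start, end + 1):
            ranks[order[position]] = shared_rank
        start = end + 1
    return ranks


def spearman_rho(x, y):
    """Spearman correlation: the Pearson correlation of the ranked scores."""
    rx, ry = average_ranks(x), average_ranks(y)
    mean_x, mean_y = sum(rx) / len(rx), sum(ry) / len(ry)
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(rx, ry))
    var_x = sum((a - mean_x) ** 2 for a in rx)
    var_y = sum((b - mean_y) ** 2 for b in ry)
    return cov / sqrt(var_x * var_y)


def benjamini_hochberg(p_values):
    """Benjamini-Hochberg adjusted p-values, returned in the input order."""
    m = len(p_values)
    order = sorted(range(m), key=lambda i: p_values[i])
    adjusted = [0.0] * m
    running_min = 1.0
    # Work from the largest p-value down so adjusted values stay monotone.
    for rank in range(m, 0, -1):
        index = order[rank - 1]
        running_min = min(running_min, p_values[index] * m / rank)
        adjusted[index] = running_min
    return adjusted


# Hypothetical data: a monotone but nonlinear relationship gives rho = 1.
rho = spearman_rho([1, 2, 3, 4, 5], [1, 4, 9, 16, 25])

# Hypothetical raw p-values from four correlations.
adjusted_p = benjamini_hochberg([0.01, 0.04, 0.03, 0.005])
print(rho, [round(p, 3) for p in adjusted_p])
```

Because Spearman's coefficient depends only on rank order, it does not require normally distributed scores, which is the reason given above for its use.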

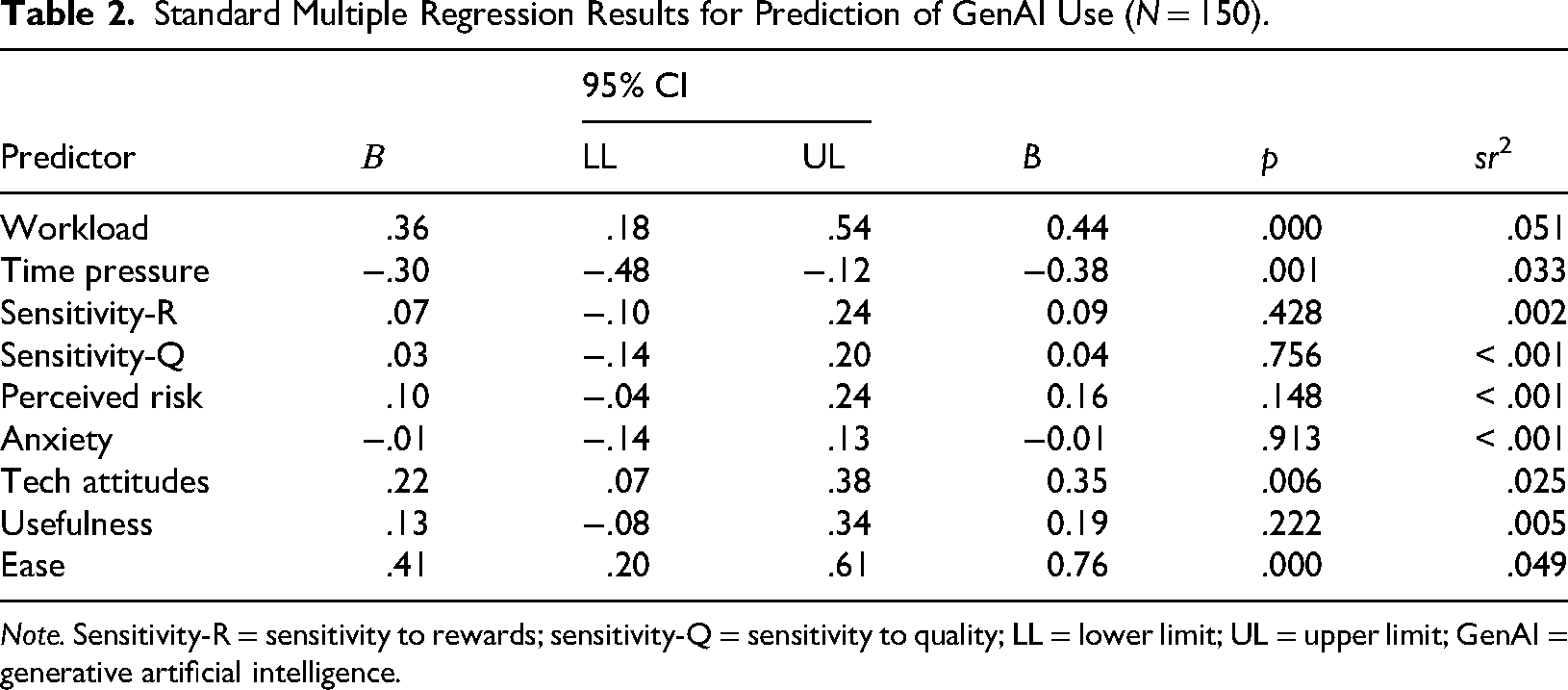

Predictors of GenAI use

Potential predictors (i.e., perceived risk, anxiety, technology attitudes, usefulness, ease of use, workload, time pressure, sensitivity to rewards, and sensitivity to quality) were included in the model. The overall model was significant, F(9, 140) = 19.4, p < .001, adjusted R² = .53. The significance of each individual predictor within the regression model is shown in Table 2. Greater use of GenAI was predicted by more positive attitudes toward technology, greater perceived ease of use, greater workload, and less time pressure. Use of GenAI was not predicted by sensitivity to rewards, sensitivity to quality, perceived risk, anxiety, or perceived usefulness.
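As a consistency check on the reported model statistics, the unadjusted and adjusted R² can be recovered from the F statistic and its degrees of freedom using standard identities. The sketch below is illustrative only: the function names are hypothetical, and because the reported F value is rounded, the results are approximate.

```python
def r_squared_from_f(f_stat, df_model, df_resid):
    """Recover R^2 from the overall F test: F = (R^2 / k) / ((1 - R^2) / df_resid)."""
    return (f_stat * df_model) / (f_stat * df_model + df_resid)


def adjusted_r_squared(r2, n, k):
    """Adjusted R^2 for n observations and k predictors."""
    return 1 - (1 - r2) * (n - 1) / (n - k - 1)


# Reported values: F(9, 140) = 19.4 with N = 150 and nine predictors.
r2 = r_squared_from_f(19.4, df_model=9, df_resid=140)
adj_r2 = adjusted_r_squared(r2, n=150, k=9)
print(round(r2, 3), round(adj_r2, 2))  # prints 0.555 0.53
```

The recovered adjusted value agrees with the reported adjusted R² of .53, and the residual degrees of freedom (150 − 9 − 1 = 140) match the N reported for the regression.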

Table 2. Standard Multiple Regression Results for Prediction of GenAI Use (N = 150).

Note. Sensitivity-R = sensitivity to rewards; sensitivity-Q = sensitivity to quality; LL = lower limit; UL = upper limit; GenAI = generative artificial intelligence.

Exploratory analyses including procrastination and memory loss as predictors (with and without graduate students) did not change the findings. In each case, use of GenAI was predicted by more positive attitudes toward technology, greater perceived ease of use, greater workload, and less time pressure (see Supplemental Materials).

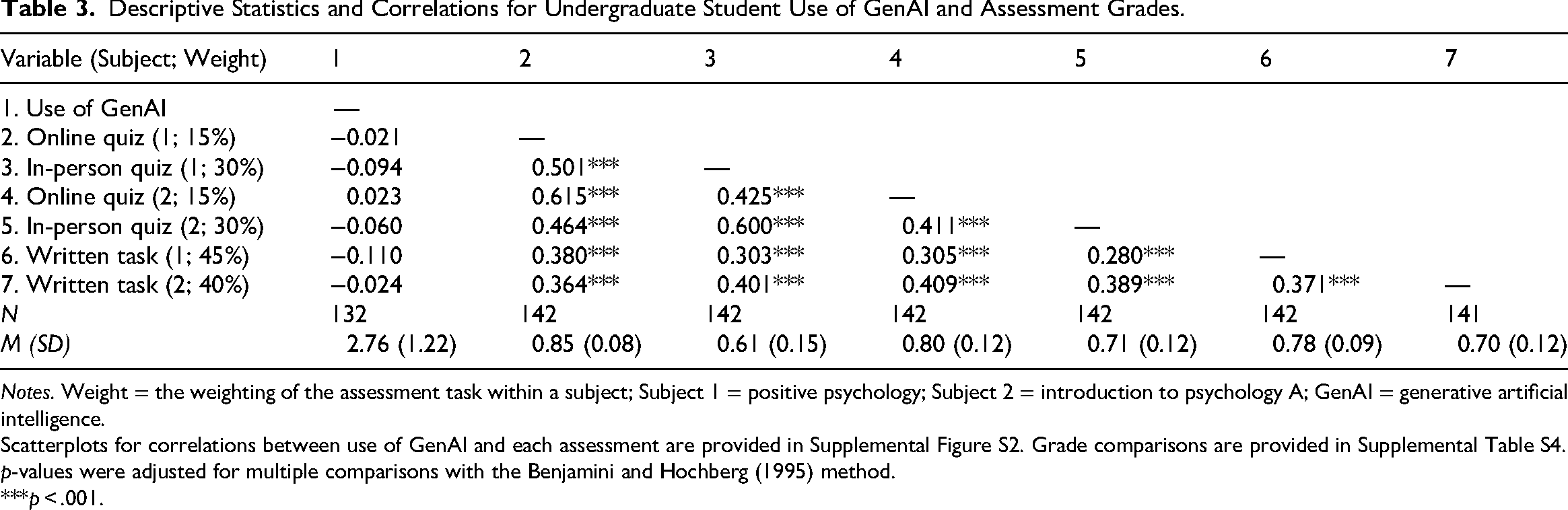

Exploratory Analysis of Assessment Grades

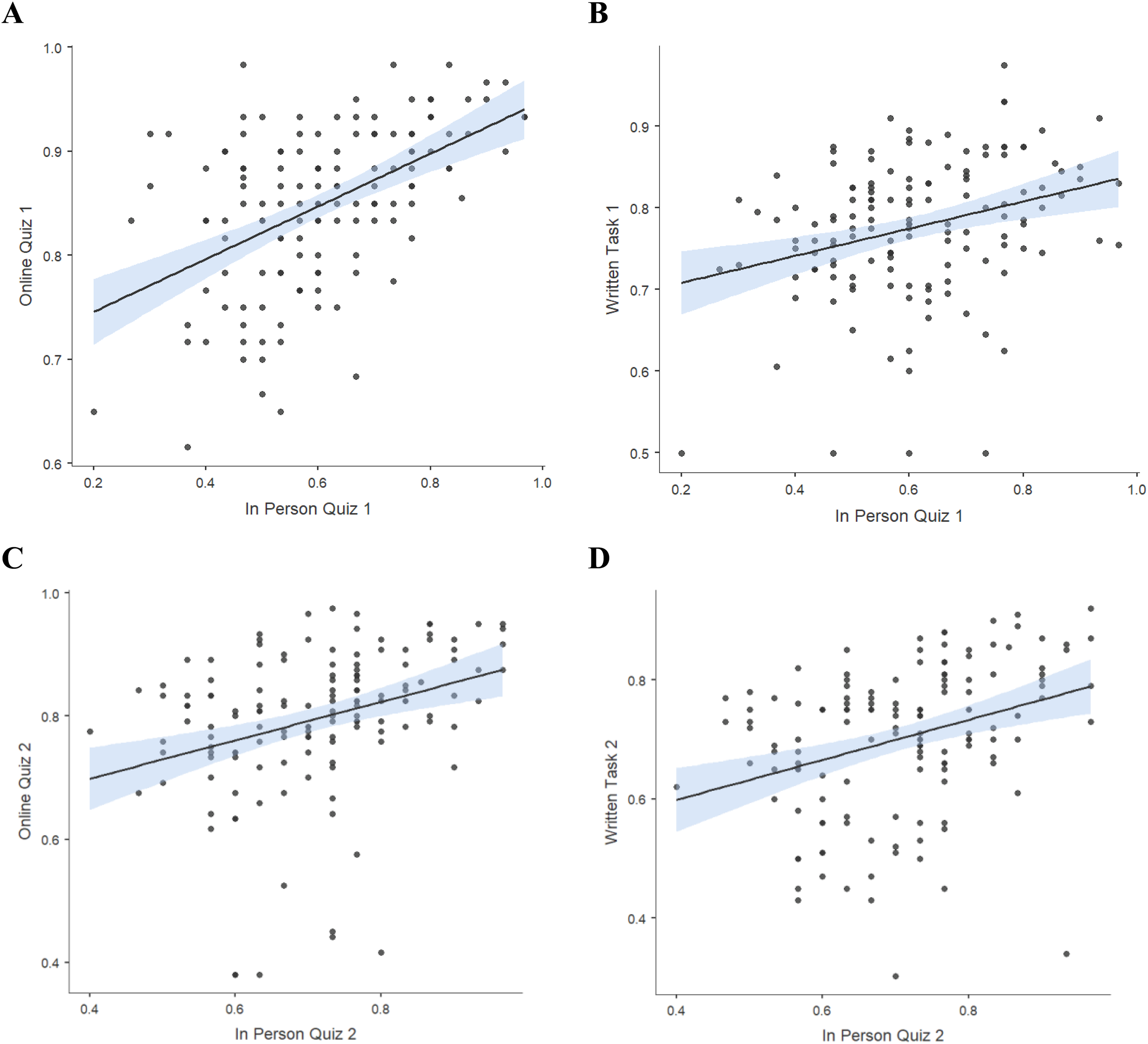

Prior to this analysis, nine data points more than three SDs from the mean were adjusted to M ± 3 SD. Spearman correlations (corrected for multiple comparisons) were conducted due to violation of the assumption of normality. As shown in Table 3, use of GenAI for coursework did not correlate with any assessment grades (also see Supplemental Figure S1). In addition, higher grades on the two secure in-person quizzes were associated with higher grades on the two unsecure online quizzes and the two unsecure written assessments. See Figure 1 for scatterplots of associations between grades for the secure assessments and each unsecure assessment.

Figure 1. Associations between grades for in-person quiz 1 and (A) online quiz 1 (N = 142), and (B) written task 1 (N = 142), as well as between grades for in-person quiz 2 (N = 141), and (C) online quiz 2 (N = 142), and (D) written task 2 (N = 141). Note. Online and in-person quiz 1 refer to the Positive Psychology subject, and online and in-person quiz 2 refer to the Introduction to Psychology A subject. Values on the x- and y-axes represent grades as a proportion of 100%.

Table 3. Descriptive Statistics and Correlations for Undergraduate Student Use of GenAI and Assessment Grades.

Notes. Weight = the weighting of the assessment task within a subject; Subject 1 = positive psychology; Subject 2 = introduction to psychology A; GenAI = generative artificial intelligence.

Scatterplots for correlations between use of GenAI and each assessment are provided in Supplemental Figure S2. Grade comparisons are provided in Supplemental Table S4.

p-values were adjusted for multiple comparisons with the Benjamini and Hochberg (1995) method.

***p < .001.

Discussion

The overall aim of the current study was to gain an understanding of the predictors and outcomes of GenAI use among psychology university students. There was no support for the hypotheses that greater perceived risk and anxiety, or sensitivity to coursework rewards and quality, would be associated with lesser use of GenAI (H1, H5). However, as predicted, and in line with previous findings (Al-Momani & Ramayah, 2024), more positive attitudes toward the technology predicted greater use of GenAI (H2). There was also partial support for the hypothesis that perceived ease of use and usefulness would predict greater use of GenAI, with only ease of use remaining a significant predictor after controlling for other variables (H3). In line with H4, greater workload predicted greater use of GenAI, while greater time pressure was unexpectedly associated with lesser use of GenAI after controlling for other predictors. There was also support for our preregistered hypothesis that greater use of GenAI would be associated with the outcomes of greater procrastination and memory difficulties (H6). While these variables may also be considered predictors, exploratory analyses demonstrated that they were not associated with GenAI use when controlling for other predictors. In relation to grades as outcomes of GenAI use, exploratory analyses demonstrated that self-reported use of GenAI for coursework purposes was not associated with coursework grades. In addition, grades on unsecure assessments were consistently positively correlated with grades on secure assessments.

Predictors of Psychology Student GenAI Use

In line with theoretical conceptualizations of new technology uptake, including the TAM (Davis, 1989; Davis et al., 1989) and UTAUT (Venkatesh et al., 2003), perceived effort expectancy (i.e., ease of use) and performance expectancy (i.e., usefulness) were positively correlated with use of GenAI. This is consistent with Gado et al.'s (2022) finding among psychology students reporting intention to use AI. However, in the current study, only ease of use remained a significant predictor in the multiple regression model. Thus, psychology students were more likely to use GenAI to the extent that they considered it easy to learn, as opposed to finding it to be more useful than other sources of information. Interestingly, the students in Gado et al.'s study indicated having little knowledge regarding AI. In contrast, 92.1% of students in the current study had used GenAI, and the “use of GenAI” ratings related specifically to coursework-related purposes. It appears that having a greater understanding of the capability of the technology was associated with ease of use being a driving factor in uptake. In contrast, perceived usefulness did not appear to drive uptake over and above the other predictors, at least at the time of the current study. As the tool becomes more sophisticated, and as students become more familiar with GenAI, perceived usefulness may become a stronger predictor of the use of GenAI.

The current data suggest that more positive attitudes toward GenAI are associated with greater use of this technology in university coursework. Given that the measure of attitudes encompassed both hedonic and social influences, this finding is consistent with the UTAUT (Venkatesh et al., 2003) and UTAUT2 (Venkatesh et al., 2012). It is logical that use of GenAI was greater among students who were enthusiastic about using this new technology, received positive reviews from friends or colleagues, and/or believed that it was important for academic success. Indeed, Kinskofer and Tulis (2025) found that higher challenge appraisals (i.e., perceiving GenAI use as an opportunity for growth) predicted greater intention to use GenAI. They also found a weak association between threat appraisals and lesser intention to use GenAI. However, in the current study, anxiety related to GenAI use and development of critical thinking skills, along with sensitivity to quality and rewards did not predict use of GenAI. Previous researchers suggested that a lack of association between sensitivity to quality and use of ChatGPT indicated that students perceived their own work to be of higher quality (Abbas et al., 2024). This explanation is likely to also apply to the current findings. Indeed, it is consistent with our finding that perceived usefulness did not predict GenAI use after controlling for other variables. It is more difficult to explain the lack of association between use of GenAI and sensitivity to rewards (i.e., concern about grades) given that Abbas et al. found that greater sensitivity to rewards was associated with lesser use of ChatGPT. However, it may be that, at the time of this study, students had not yet formed an opinion regarding the degree to which GenAI may or may not benefit their grades.

Excessive workload was a significant predictor of GenAI use in the current study, consistent with Abbas et al.'s (2024) investigation of ChatGPT use among university students. One interpretation, in line with the theory of moral disengagement (Bandura, 1999), is that students may use “moral justifications” for their unethical behavior to reduce feelings of guilt. One mechanism of moral disengagement is displacement of responsibility, whereby a student may blame authority figures or systemic issues for their unethical behavior. For example, students may have used GenAI unethically to reduce stress and then attributed that misuse to external factors such as excessive workload (also see Mulenga & Shilongo, 2024). While the bivariate correlation between time pressure and use of GenAI was positive (Table 1), this association was negative when controlling for other predictors (Table 2). It is possible that our regression revealed a suppression effect whereby the correlation between time pressure and use of GenAI is confounded by workload. It may be that controlling for workload revealed the underlying negative association between use of GenAI and time pressure, previously obscured by overlapping variance. This suggests that, among students with similar workloads, those who feel more time pressured use GenAI less. Alternatively, in early 2024, when GenAI had only recently become widely accessible, students required time to navigate this new technology. Thus, greater workload demands may have only involved greater use of GenAI when there was less time pressure and more time available to become familiar with the new technology. Further research is required to better understand the associations between workload, time pressure, and use of GenAI among psychology students.
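The sign reversal described above can be illustrated with the standard formula for standardized coefficients in a two-predictor regression. The correlations used below are hypothetical values chosen for illustration and are not taken from Table 1; the function name `standardized_betas` is likewise an assumption. The full model in the current study included nine predictors, so this sketch shows only the general mechanism.

```python
def standardized_betas(r_y1, r_y2, r_12):
    """Standardized coefficients for a regression of y on two predictors.

    r_y1, r_y2: correlations of each predictor with the outcome.
    r_12: correlation between the two predictors.
    """
    denom = 1 - r_12 ** 2
    if denom <= 0:
        raise ValueError("predictors must not be perfectly correlated")
    beta_1 = (r_y1 - r_y2 * r_12) / denom
    beta_2 = (r_y2 - r_y1 * r_12) / denom
    return beta_1, beta_2


# Hypothetical correlations (not from Table 1):
# time pressure with GenAI use = .20, workload with GenAI use = .45,
# time pressure with workload = .60.
beta_time, beta_work = standardized_betas(r_y1=0.20, r_y2=0.45, r_12=0.60)
print(round(beta_time, 3), round(beta_work, 3))
```

Under these assumed values, time pressure has a positive bivariate correlation with GenAI use but a negative coefficient once workload is held constant, because its positive correlation is smaller than would be expected from its overlap with workload alone.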

GenAI and Academic Integrity

An important strength of our study is that grades on secure and unsecure assessment tasks were not subject to the limitations of self-report. To examine GenAI use and academic integrity in a more objective manner, we explored correlations between grades on different types of assessments and use of GenAI, and correlations between grades for assessments that are differentially impacted by GenAI (i.e., GenAI secure vs. unsecure assessments). In two first-year undergraduate psychology subjects completed in the first half of 2024, we found that use of GenAI did not correlate with grades on secure or unsecure assessments. Additionally, across both subjects, higher grades on secure in-person quizzes were associated with higher grades on unsecure online quizzes and written assessment tasks. This suggests that, on average, students with lower grades on secure assessments also received lower grades on unsecure assessments. The potential use of GenAI to complete unsecure assessments did not appear to artificially inflate the grades of poorer performing students. This was evident not only in the lack of a direct association between reported use of GenAI and coursework grades, but also in the strong and consistent positive associations between secure and unsecure assessment grades. Given the scrutiny recently applied to assessment security (Luo, 2024; TEQSA, 2024), this finding is surprising. It suggests that students using GenAI in unsecure assessments are not doing so in a way that artificially inflates their grades.

It should be noted that grades were generally higher in the unsecure relative to secure assessments (see Supplemental Table S4), suggesting that the former may have been less difficult, thus reducing motivation to use GenAI. However, it could also be argued that students felt more time pressure and anxiety in the in-person quiz, which lowered their grades (Lynam & Cachia, 2018). Students also rate written assessments as being more difficult than quizzes (Kaipa, 2021; Zeidner, 1987), again suggesting that task difficulty may not be a confounding factor in the current study. Taken together, the current data provide evidence that short-cuts associated with use of GenAI may not have compromised student learning in the first half of 2024, when students were potentially still familiarizing themselves with this new technology. However, it must also be acknowledged that GenAI is rapidly improving while students are also becoming more familiar with the technology. The influence of GenAI use on assessment grades is therefore likely to change with time.

In contrast to the behavioral (grades) data suggesting that GenAI use did not impair learning, perception of excessive workload was a significant predictor of greater GenAI use. This suggests that GenAI may have been used as a short-cut, impairing authentic learning (i.e., deep as opposed to surface level learning; Devlin & Gray, 2007; Guo, 2011). Indeed, in line with our hypotheses, greater use of GenAI was correlated with greater procrastination and memory difficulties, potentially indicating impaired deep learning due to GenAI use. These data align with a recent study reporting that long-term retention of information is poorer for students who used ChatGPT during learning relative to a control group who worked through the learning exercises without any digital tools (Akgun & Toker, 2025). In contrast, a meta-analysis demonstrated that GenAI is associated with improvement on a range of cognitive (e.g., problem solving) and noncognitive (e.g., confidence) academic skills among college students (Sun & Zhou, 2024). Moderators of this association were also identified; for example, academic achievement was greater in the context of independent relative to collaborative learning activities. Further studies are needed that rigorously investigate the circumstances under which GenAI use may impair versus enhance authentic learning that supports acquisition of core competences.

Another potential explanation for the association between excessive workload and use of GenAI is that technology was used to support learning in an ethical manner. In the current study, concerns about reliability of information, privacy risks, and risks associated with plagiarism or violation of university policies (i.e., “perceived risks”) were not associated with self-reported use of GenAI. This could be interpreted as broadly consistent with previous research showing that students are motivated to engage ethically with GenAI (Cavazos et al., 2024). That is, students may not perceive any risk of violating academic policies when using GenAI if they believe their use of the technology is ethical. However, a related possibility is that students are not fully aware of all ethical issues associated with use of GenAI.

Limitations and Future Directions

A limitation of the current research is reliance on students to accurately and honestly self-report use of GenAI in their coursework. Although responses were anonymous, students may not have revealed the true extent to which they had been using GenAI. In addition, the current study assessed use of GenAI for coursework in general rather than whether AI use on the specific assignments influenced grades. It might also be argued that the current data were influenced by participant selection bias. However, most students enrolled in the two subjects (i.e., 142 out of 185 students) completed the survey. A further potential limitation is the high proportion of female participants, limiting the generalizability of the results to other more gender-balanced or male-dominated disciplines. Future research should replicate this study in a larger sample with greater gender balance since gender has been shown to influence potential predictors of GenAI use (see Kinskofer & Tulis, 2025). Measures of cultural diversity should also be included in future studies given the potential impact on use of GenAI (Yusuf et al., 2024). In addition, the inclusion of qualitative data in future research would provide greater insight into motivations underpinning use of GenAI in students’ coursework. Examination of additional predictors of GenAI use would further strengthen future research. For example, Fel et al. (2025) found that perceptions of legal, moral, engineering, and algorithmic (system development) responsibility for the actions of AI significantly influenced AI acceptance among students. In addition, Kinskofer and Tulis (2025) recommend inclusion of motivational constructs grounded in appraisal theory. Given the rapid progression of GenAI, longitudinal research is required to examine the influence of advancements in AI on student learning over time. Longitudinal data would also allow for testing causality of associations, including elucidation of the direction of effects.

Conclusion and Implications

As future psychologists or behavioral scientists, psychology students must develop competence in working effectively and ethically with GenAI (Richmond & Nicholls, 2024). This will be essential if graduates are to take advantage of the benefits while minimizing the potential risks of GenAI in their future careers (American Psychological Association, 2024). As noted, the first step is to identify predictors and outcomes of psychology students’ use of GenAI. The current data suggest that use of GenAI among psychology students can be encouraged by building positive attitudes toward the technology, particularly by ensuring that it is perceived as easy to use. In line with UTAUT, support, including provision of time, for students to learn to use this new technology will be critical. It will also be essential to ensure that GenAI is used to ease student workload in a way that reduces the risk of bypassing deep learning. In particular, the role of time pressure in moderating the association between workload and use of GenAI requires further investigation. With regard to ethical use of GenAI in the first half of 2024, the current assessment grade data provide some assurance that psychology students were using GenAI ethically, and not in a way that artificially inflated grades on unsecure assessments.

Supplemental Material

Supplemental material, sj-docx-1-plj-10.1177_14757257261430600 for Predictors of Psychology Student GenAI Use and Grades on GenAI Secure Versus Unsecure Assessments by Phoebe E. Bailey, Anoushka Parylo, Kell Tremayne, Catherine O’Gorman and Poppy Watson in Psychology Learning & Teaching

Footnotes

Ethical Approval and Informed Consent Statements

The University of Technology Sydney Human Research Ethics Committee approved this study (Approval No. ETH24-9156). In line with the Ethics Code of the American Psychological Association, participants gave informed consent prior to completing the survey.

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Supplemental Material

Supplemental material for this article is available online.