Abstract

Statistics anxiety (SA), negative attitudes and low self-efficacy persist as barriers to engagement and performance in research methods and statistics. In digital learning environments, multiple-choice quizzes (MCQs) with automated feedback are commonly used, yet the optimal timing of feedback remains underexplored. This study examined how feedback timing (each question, blocks or score-only) affected SA, self-efficacy and feedback perceptions. A total of 332 undergraduate students completed the Abbreviated Statistics Anxiety Rating Scale (STARS) and the General Self-Efficacy Scale (GSE) before and after a 25-item online statistics MCQ, followed by perception questions. A between-subjects multivariate analysis of covariance (MANCOVA) revealed significant effects of feedback timing on post-quiz SA, feedback understanding and perceived benefit. No differences between conditions were found for pre- to post-test change in SA or self-efficacy. A within-subjects MANCOVA indicated significant pre- to post-test reductions in test and class anxiety and help-seeking anxiety, but increased interpretation anxiety. Block feedback led to the highest performance, offering practical insights for reducing anxiety and improving digital tools.

Keywords

Introduction

Recent research highlights that emotional, cognitive, and technological factors jointly shape how students experience and regulate statistics anxiety (SA) in higher education (Akram & Abdelrady, 2025; Putwain et al., 2025). Within this context, understanding how feedback and assessment design influence learning emotions is important, especially with the rise of digital and gamified feedback systems (Firman et al., 2024; Maraza-Quispe et al., 2024).

SA poses a significant barrier in statistics education, characterised by avoidance behaviours, reduced engagement and lower performance (Macher et al., 2012; Paechter et al., 2017). Anxious students often delay assessments, avoid class and seek help less frequently, reinforcing underperformance and diminishing confidence (Cui et al., 2019). Given that SA is associated with self-efficacy, the belief in one's ability to succeed (Bandura, 1977), addressing both constructs through instructional strategies is essential. Self-efficacy supports proactive learning behaviours, such as persistence and effort (Bandura, 2013, 2015; Schunk & Pajares, 2009), enabling students to better manage statistics-related challenges. Effective interventions should therefore target SA reduction and self-efficacy enhancement.

The Expectancy-Value Theory of Anxiety (Pekrun, 1984, 1988, 1992; Pekrun et al., 2023) offers further insights into why students experience learning-related anxiety, proposing that anxiety arises from the combination of low expectancy of success and high task value. Within this framework, the perceived importance of success in statistics, combined with doubts about ability, can trigger anxiety responses that undermine engagement and self-efficacy, aligning with contemporary control-value approaches to achievement emotions (Pekrun et al., 2023).

Multiple-choice quizzes (MCQs) are frequently integrated into statistics modules to provide scalable, individualised feedback, being particularly beneficial when classroom time is limited (Förster et al., 2018; Ji, 2023; Lin et al., 2021). While feedback promotes learning, delivery at scale remains challenging in large cohorts with limited instructor contact (Hattie & Timperley, 2007; Nicol & Macfarlane-Dick, 2006; Wisniewski et al., 2020). Students with SA may avoid tutor feedback due to fear of asking, even when at risk of failure (O'Sullivan et al., 2014), making online MCQs with automated feedback a potentially inclusive strategy. Such quizzes promote self-regulated learning, allowing students to monitor understanding and performance (Nicol & Macfarlane-Dick, 2006; Nilson, 2013), particularly valuable in asynchronous or digital environments. Recent studies in technology-enhanced learning support that active learning quizzes and gamified self-assessment tools can improve engagement, self-efficacy and performance when paired with immediate feedback (Cook & Babon, 2017; Vaughan & Finn, 2025).

Feedback and Its Timings

Quizzes with feedback can support self-regulated learning by helping students reflect on pre-assessment knowledge and identify areas for development (Nilson, 2013), potentially alleviating SA. Feedback provides essential information on performance, enhancing achievement (Wisniewski et al., 2020), motivation (Narciss & Huth, 2004) and self-efficacy (Asghar, 2010). Feedback timing, valence and individualisation significantly shape student perceptions (Goldin et al., 2017). Timing is recognised as a key factor affecting the perception and effectiveness of feedback. Two main types of feedback timing are identified: instant feedback, which provides immediate correctness cues, and delayed feedback, which introduces a temporal gap that can range from seconds to months before correctness is provided (e.g., Nutbrown et al., 2016). However, optimal timing remains debated, with mixed empirical results (e.g., Goldin et al., 2017).

Delayed feedback is associated with the Delay Retention Effect (Kulhavy & Anderson, 1972), suggesting enhanced learning via deeper processing (Smith & Kimball, 2010). Butler (2018) found that spacing feedback improved retention on MCQs. However, this effect has been observed in laboratory settings (Kulik & Kulik, 1988), and classroom evidence remains mixed (Chang, 2017). Delayed feedback may also reduce engagement, as students are less likely to revisit tasks or reflect on feedback (Nutbrown et al., 2016).

Instant Feedback

Immediate feedback provides clarification and satisfaction (Fogarty, 2008), helping students resolve misconceptions and close the feedback loop (Wisniewski et al., 2020). Instant feedback prevents the reinforcement of errors and enables real-time correction (Dihoff et al., 2004). In statistics modules, timely feedback prevents compounding misunderstanding (Moreno, 2004). MCQs with instant feedback support self-regulated learning by improving long-term retention and reducing test anxiety (Roediger & Butler, 2011).

Students report a preference for instant feedback, particularly for complex, step-based tasks like those in statistics (Corbett & Anderson, 2001). Providing feedback after each quiz question promotes engagement with correct and incorrect responses (Butler, 2018; Roediger & Butler, 2011), which aligns with statistical tasks that often require a single correct answer (Garfield & Ben-Zvi, 2007). When delivered online, instant feedback enables flexible asynchronous learning (Beetham & Sharpe, 2013). Interactive systems such as ClassPoint and Quizizz have been found to improve student self-efficacy and motivation through immediate formative feedback (Akram & Abdelrady, 2025; Maraza-Quispe et al., 2024). In contrast, delayed end-of-quiz feedback limits opportunities for timely correction and disrupts regulation (Dihoff et al., 2004). While instant and delayed feedback hold pedagogical value, their differential effects remain underexplored in psychology and statistics education.

While both instant and delayed feedback demonstrate cognitive and motivational benefits, their relative effects are best understood through recent cognitive-affective frameworks linking feedback to emotion regulation (Pekrun et al., 2023). For instance, according to the Control-Value Theory (Pekrun, 2006) and Social Cognitive Theory (Bandura, 1977), students’ perceptions of control and competence shape their emotional reactions to feedback. Supporting students’ self-efficacy and motivation may also depend on the nature of the feedback provided. Positive reinforcement, such as praise or the display of high scores, has been found to increase persistence and effort (Ahmed et al., 2022; Bonghawan & Macalisang, 2024; Grünke et al., 2017). To ensure consistency, feedback in this study used a positive valence, offering constructive explanations even when answers were incorrect.

While such framing can enhance motivation, its impact may vary by timing. Self-efficacy not only shapes how feedback is interpreted but also moderates its influence (Bandura, 1977). Students with higher self-efficacy are more likely to view feedback as helpful, whereas those with lower self-efficacy may perceive feedback as reinforcing anxiety (Macher et al., 2012; Pajares, 2003). Timely, positively framed feedback may therefore reduce SA by providing reassurance and supporting real-time correction (Hattie & Timperley, 2007; Wisniewski et al., 2020). Determining optimal timing is essential, as the balance between immediate reinforcement and delayed reflection could play a critical role in supporting self-efficacy and managing SA in statistics learning. The present study, therefore, extends research on formative technology-enhanced feedback (e.g., Vaughan & Finn, 2025) by examining how feedback timing interacts with both cognitive and emotional outcomes.

Study Aims, Research Questions and Hypotheses

In statistics education, where SA, self-efficacy and attitudes are key concerns, the timing of reinforcement is likely to be particularly influential (Ahmed et al., 2022; Bonghawan & Macalisang, 2024). This study investigated three conditions: feedback after each question (i.e., each), after every block of five questions (i.e., block), and only a final score provided at quiz completion (i.e., score-only), to evaluate how feedback immediacy and frequency affect student performance, SA, self-efficacy, alongside perceptions of comprehension and benefit.

The each condition allows for immediate correction and minimises uncertainty (Dihoff et al., 2004; Hattie & Timperley, 2007). The score-only condition aligns with traditional delayed feedback paradigms (Kulik & Kulik, 1988; Smith & Kimball, 2010) and is conceptualised as delayed in terms of self-regulated learning processes (Kulhavy & Stock, 1989). Although feedback follows immediately after quiz completion, students receive no corrective input during the task, limiting opportunities for real-time correction. This postponement introduces a temporal gap between errors and feedback, which can impair reflection and heighten anxiety (Kulhavy & Stock, 1989). The block condition, offering feedback at fixed intervals, aims to support reflection without interrupting focus after each question, thus balancing correction opportunities with interval-based review.

RQ1: Does feedback timing affect statistical performance? It is hypothesised that feedback timing will significantly affect performance, with higher scores expected in the each and block conditions than in the score-only condition. The each condition is predicted to have the highest performance, as it enables real-time correction and prevents repeated errors (Dihoff et al., 2004; Hattie & Timperley, 2007).

RQ2: Does feedback timing affect SA and self-efficacy? Students in the each and block conditions are expected to report lower SA and higher self-efficacy due to regular, positively framed feedback. Frequent opportunities to confirm understanding may build confidence and reduce uncertainty-related anxiety (Dihoff et al., 2004). In contrast, infrequent feedback can heighten anxiety and undermine motivation (Fogarty, 2008).

RQ3: Does feedback timing affect perceptions of feedback understanding and benefit? Students in the each and block conditions are expected to perceive feedback as more beneficial and easier to understand due to its frequency and immediacy, which supports linking feedback to recent answers and reduces cognitive load (Goldin et al., 2017). In contrast, the score-only condition constitutes delayed feedback, as it follows a temporal gap (approximately 1 min) after quiz completion (Kulhavy & Stock, 1989), making it harder to recall responses and apply corrections (Butler, 2018), therefore perceived as less beneficial. Additional explorative questions are addressed (detailed below in Perception and Exploratory Judgement Questions).

Method

Participants

An opportunity sample of 332 undergraduate students from Birmingham City University participated: foundation year (4%), first year (79%), second year (11%), third year (5%) and other (1%). Students were enrolled in psychology BSc (48.7% including combined courses) or others (nursing BSc, engineering BE and information technology BSc), which also require statistics modules. Participants were randomly assigned via Qualtrics; disciplinary differences were not examined as this was beyond the study's scope.

Ages ranged between 18 and 69 years (

Design

A between-participants design was implemented across three feedback timing conditions: feedback after each question (

Analyses were conducted using a one-way MANCOVA to examine the effect of feedback timing on performance, SA, self-efficacy and feedback perceptions, controlling for pre-test levels. MANCOVA was chosen over regression analyses, as the study aimed to compare group differences rather than predict outcomes, while controlling for covariates.

Materials

Experimental Task, Feedback Conditions and Performance Measure

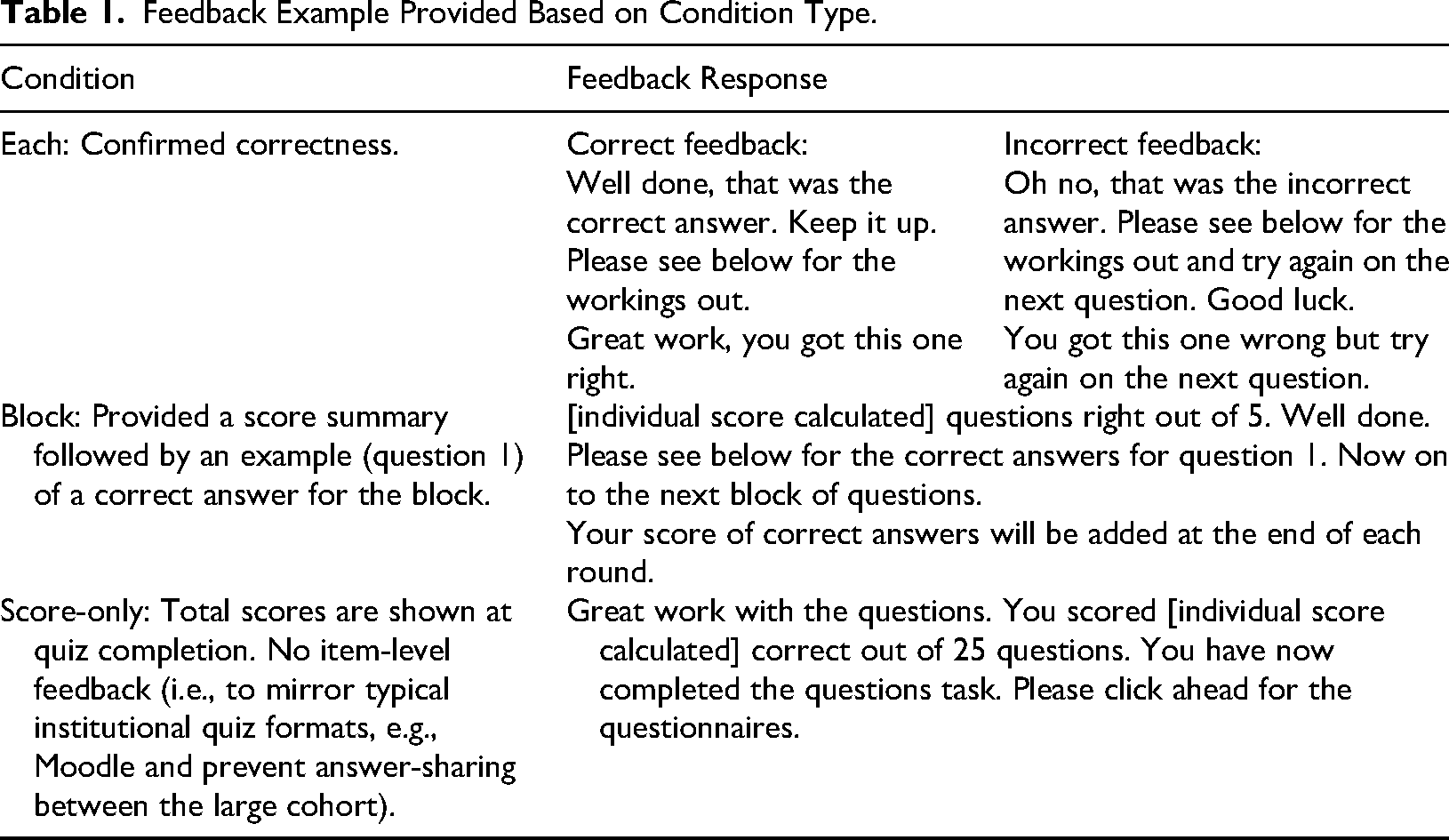

The 25-item MCQ was adapted from GCSE-level questions sourced from BBC Bitesize (a free UK BBC online resource that supports learning, revision, and homework for students from ages 3 to 16+) to include a fourth multiple-choice option. The quiz comprised five topic-based blocks (means, medians, selecting averages, probability and graphs) with five questions each, introduced with a brief context explanation (e.g., how to calculate the mean). The sequence of questions was identical across all feedback conditions. Items reflected core Level 4 statistics curriculum content, providing an appropriate level of difficulty; formal item difficulty indices were not calculated. Feedback responses utilised positive valence for encouragement (see Table 1) (Lechermeier & Fassnacht, 2018). Quiz performance was scored as the total number of correct responses (range 0–25).
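The quiz structure and scoring rule described above can be illustrated with a minimal sketch. It assumes only what the text states (25 items, five blocks of five, three feedback conditions, a total-correct score); the function names `feedback_points` and `quiz_score`, and the condition labels used as strings, are hypothetical and are not taken from the study's Qualtrics implementation.

```python
from typing import List

N_ITEMS = 25      # quiz length stated in the text
BLOCK_SIZE = 5    # five topic-based blocks of five questions each


def feedback_points(condition: str) -> List[int]:
    """Return the 1-based question numbers after which output is shown.

    "each"       -> feedback after every question
    "block"      -> feedback after every fifth question
    "score-only" -> only a final score, after the last question
    """
    if condition == "each":
        return list(range(1, N_ITEMS + 1))
    if condition == "block":
        return list(range(BLOCK_SIZE, N_ITEMS + 1, BLOCK_SIZE))
    if condition == "score-only":
        return [N_ITEMS]
    raise ValueError(f"unknown condition: {condition!r}")


def quiz_score(responses: List[str], answer_key: List[str]) -> int:
    """Total number of correct responses (range 0-25 for this quiz)."""
    if len(responses) != len(answer_key):
        raise ValueError("responses and answer key must have the same length")
    return sum(r == k for r, k in zip(responses, answer_key))
```

Under these assumptions, the block condition yields five feedback points (after questions 5, 10, 15, 20 and 25), compared with 25 in the each condition and one in the score-only condition.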

Feedback Example Provided Based on Condition Type.

Abbreviated Statistics Anxiety Rating Scale

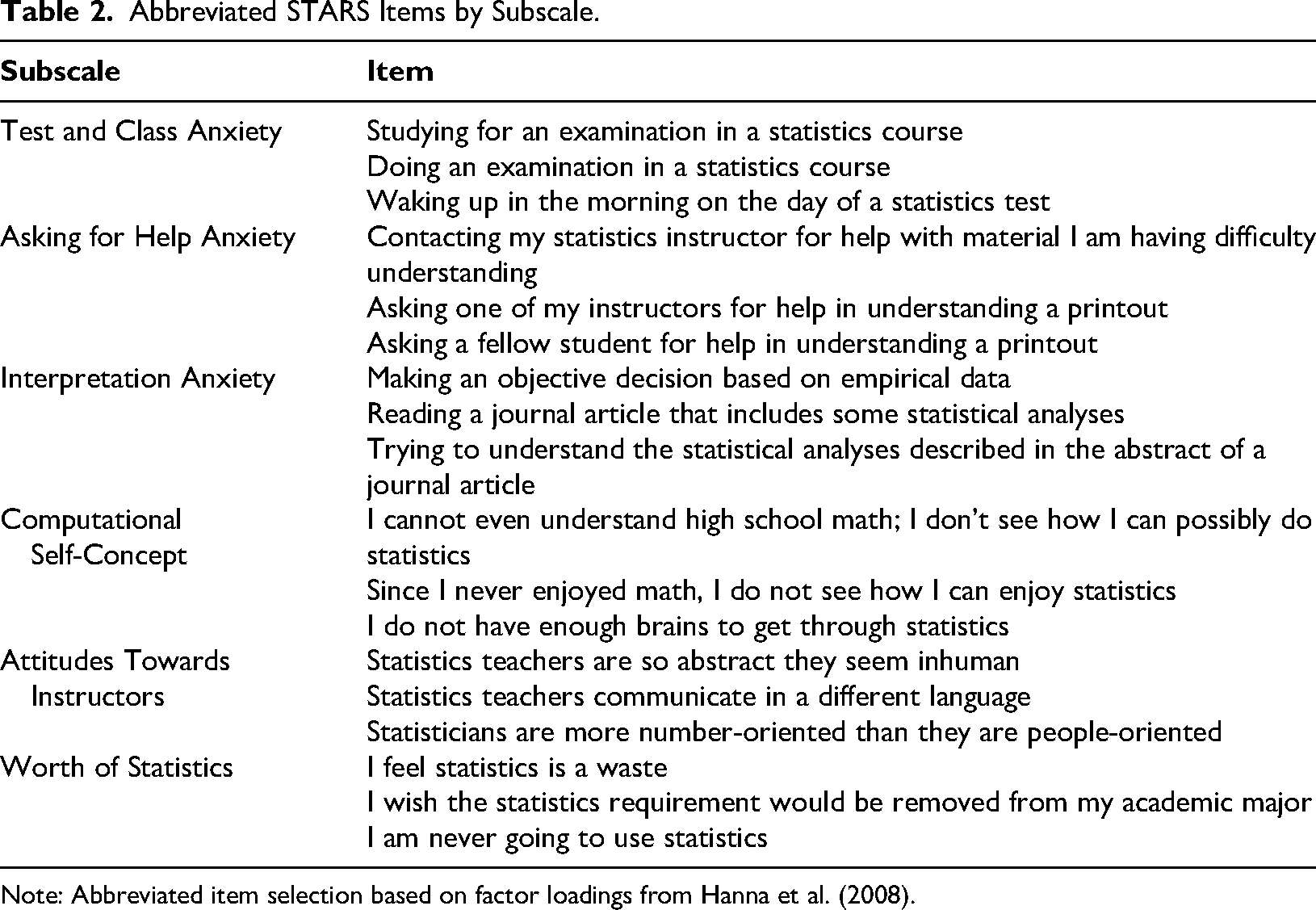

The 18-item Abbreviated Statistics Anxiety Rating Scale (STARS: Zimmerman & Johnson, 2017) was used in this study. It was adapted from Hanna et al.'s (2008) 51-item UK STARS, itself based on the scale first developed by Cruise et al. (1985). The abbreviated STARS was selected to reduce participant burden and improve retention. The scale retained three items for each of the original six subscales. Three subscales measured anxiety (test and class anxiety, asking for help and interpretation anxiety), on a 5-point Likert scale:

Abbreviated STARS Items by Subscale.

Note: Abbreviated item selection based on factor loadings from Hanna et al. (2008).

The General Self-Efficacy Scale

The 10-item General Self-Efficacy Scale (GSE; Schwarzer & Jerusalem, 1995) was used to measure students’ general confidence in managing academic challenges. Each item (e.g., “I can usually handle whatever comes my way”) was rated on a 4-point Likert scale, (1) indicating

Perception and Exploratory Judgement Questions

Two perception questions assessed feedback understanding (“How much did you understand the feedback that was provided?”) and perceived benefit (“How beneficial did you find the feedback?”) using a scale of (0)

Procedure

Participants were recruited via their first-year psychology statistics module and accessed the study online. After providing informed consent, students were randomly allocated to a feedback condition. The study included guided instructions, the MCQ task and both pre- and post-questionnaires. Ethical approval was granted by the Birmingham City University Psychology College Research Ethics Committee.

Results

Analytic Assumptions

Initial analyses confirmed that assumptions of normality and homogeneity of variance were satisfied. No multivariate outliers were identified, and although Box's M was significant, Pillai's Trace was reported as a robust statistic against violations (Field, 2013). Bootstrapping (1,000 bias-corrected samples) increased robustness (Wright et al., 2011).
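To illustrate what a bias-corrected bootstrap interval involves, the following is a minimal sketch for a single mean, using only the Python standard library. It is not the authors' analysis (which was applied to the MANCOVA estimates): the data in `scores` are invented for illustration, the function name `bc_bootstrap_ci` is hypothetical, and the 95% level is an assumption. The sketch applies the bias correction only, without an acceleration term.

```python
import random
from statistics import NormalDist, mean
from typing import List, Tuple


def bc_bootstrap_ci(data: List[float], n_boot: int = 1000,
                    alpha: float = 0.05, seed: int = 0) -> Tuple[float, float]:
    """Bias-corrected (BC) bootstrap confidence interval for the mean."""
    rng = random.Random(seed)
    n = len(data)
    theta_hat = mean(data)

    # Resample with replacement and recompute the mean each time.
    boots = sorted(mean(rng.choices(data, k=n)) for _ in range(n_boot))

    # Bias correction: z0 reflects how far the bootstrap distribution
    # is shifted relative to the observed estimate.
    prop_below = sum(b < theta_hat for b in boots) / n_boot
    prop_below = min(max(prop_below, 1 / n_boot), 1 - 1 / n_boot)  # avoid 0 or 1
    nd = NormalDist()
    z0 = nd.inv_cdf(prop_below)

    # Shift the nominal percentiles by the bias correction.
    lo_p = nd.cdf(2 * z0 + nd.inv_cdf(alpha / 2))
    hi_p = nd.cdf(2 * z0 + nd.inv_cdf(1 - alpha / 2))

    def pick(p: float) -> float:
        idx = min(max(int(p * n_boot), 0), n_boot - 1)
        return boots[idx]

    return pick(lo_p), pick(hi_p)


# Hypothetical anxiety scores, for illustration only (not study data).
scores = [2.1, 3.4, 2.8, 3.9, 1.7, 3.2, 2.5, 4.0, 3.1, 2.9, 3.6, 2.2]
lower, upper = bc_bootstrap_ci(scores, n_boot=1000)
```

When the bootstrap distribution is centred on the observed estimate, the correction term is close to zero and the interval reduces to the ordinary percentile interval.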

MANCOVA

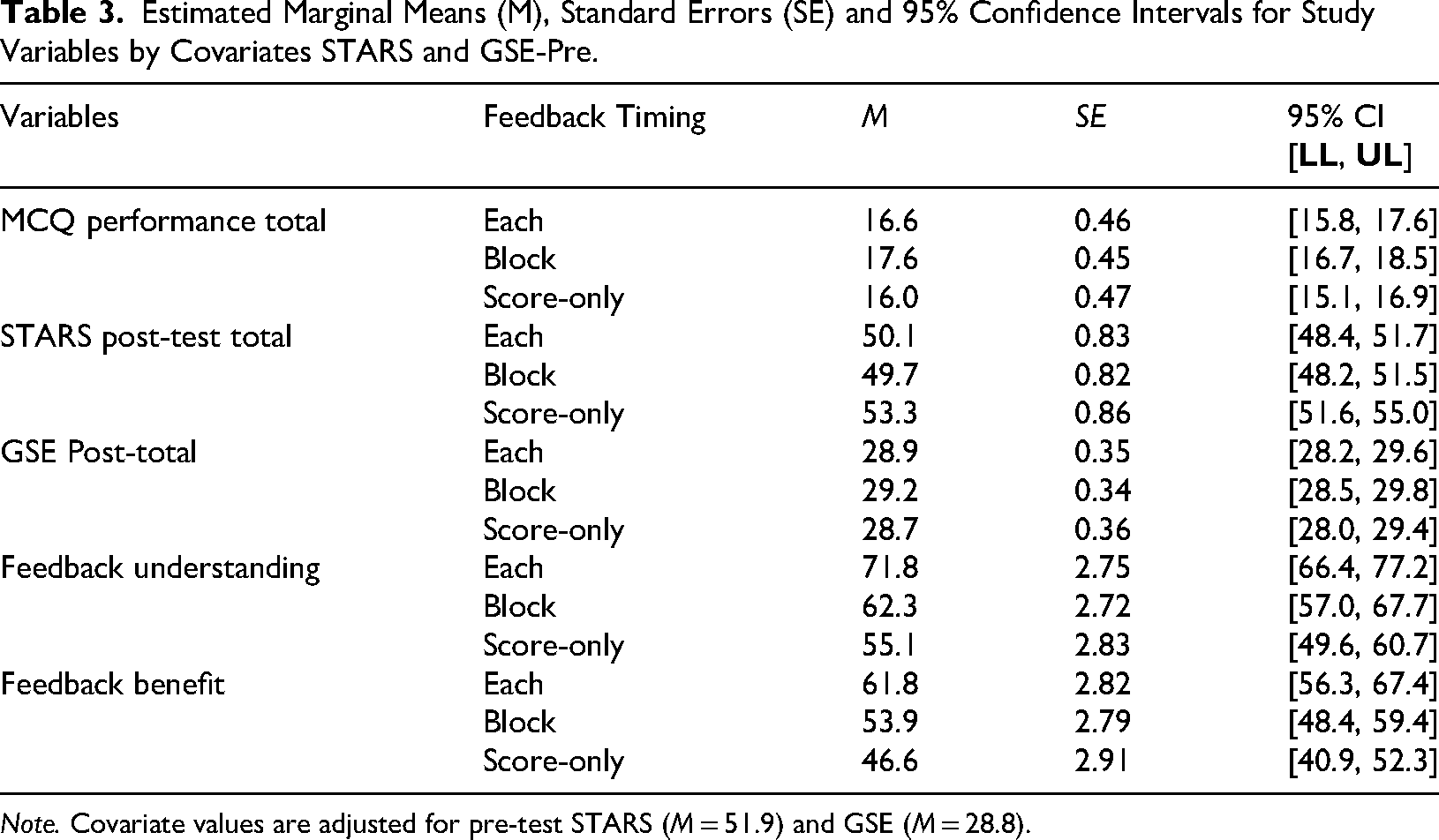

To address the three research aims, a between-subjects MANCOVA examined the effect of feedback timing condition on MCQ performance, post-test STARS and GSE scores, feedback understanding and benefit. Pre-test STARS and GSE scores were included as covariates (see Table 3).
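For readers less familiar with the multivariate statistic reported under Analytic Assumptions, Pillai's Trace can be written in its standard textbook form. The notation is introduced here for illustration and is not taken from the original analysis: $\mathbf{H}$ denotes the hypothesis sums-of-squares-and-cross-products matrix for feedback timing after adjusting for the covariates, $\mathbf{E}$ the corresponding error matrix, and $\lambda_i$ the eigenvalues of $\mathbf{E}^{-1}\mathbf{H}$.

$$
V = \operatorname{tr}\!\left[\mathbf{H}\,(\mathbf{H}+\mathbf{E})^{-1}\right] = \sum_{i=1}^{s} \frac{\lambda_i}{1+\lambda_i}, \qquad s = \min(p,\; k-1)
$$

Here $p$ is the number of dependent variables and $k$ the number of groups. With three feedback conditions, $k - 1 = 2$, so at most two eigenvalues are non-zero and $V$ lies between 0 and 2.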

Estimated Marginal Means (M), Standard Errors (SE) and 95% Confidence Intervals for Study Variables by Covariates STARS and GSE-Pre.

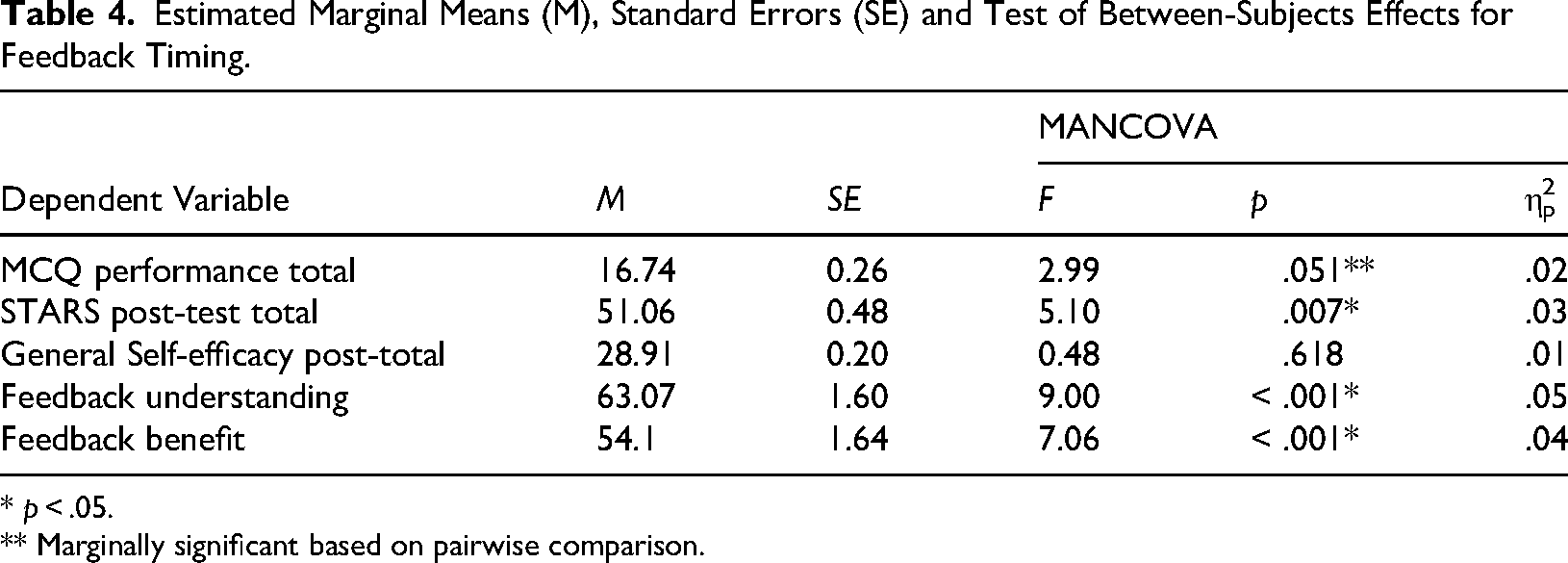

There was a statistically significant main effect of feedback timing on STARS post-test, GSE post-test, feedback understanding, and benefit controlled by pre-test STARS total and GSE

Estimated Marginal Means (M), Standard Errors (SE) and Test of Between-Subjects Effects for Feedback Timing.

*

** Marginally significant based on pairwise comparison.

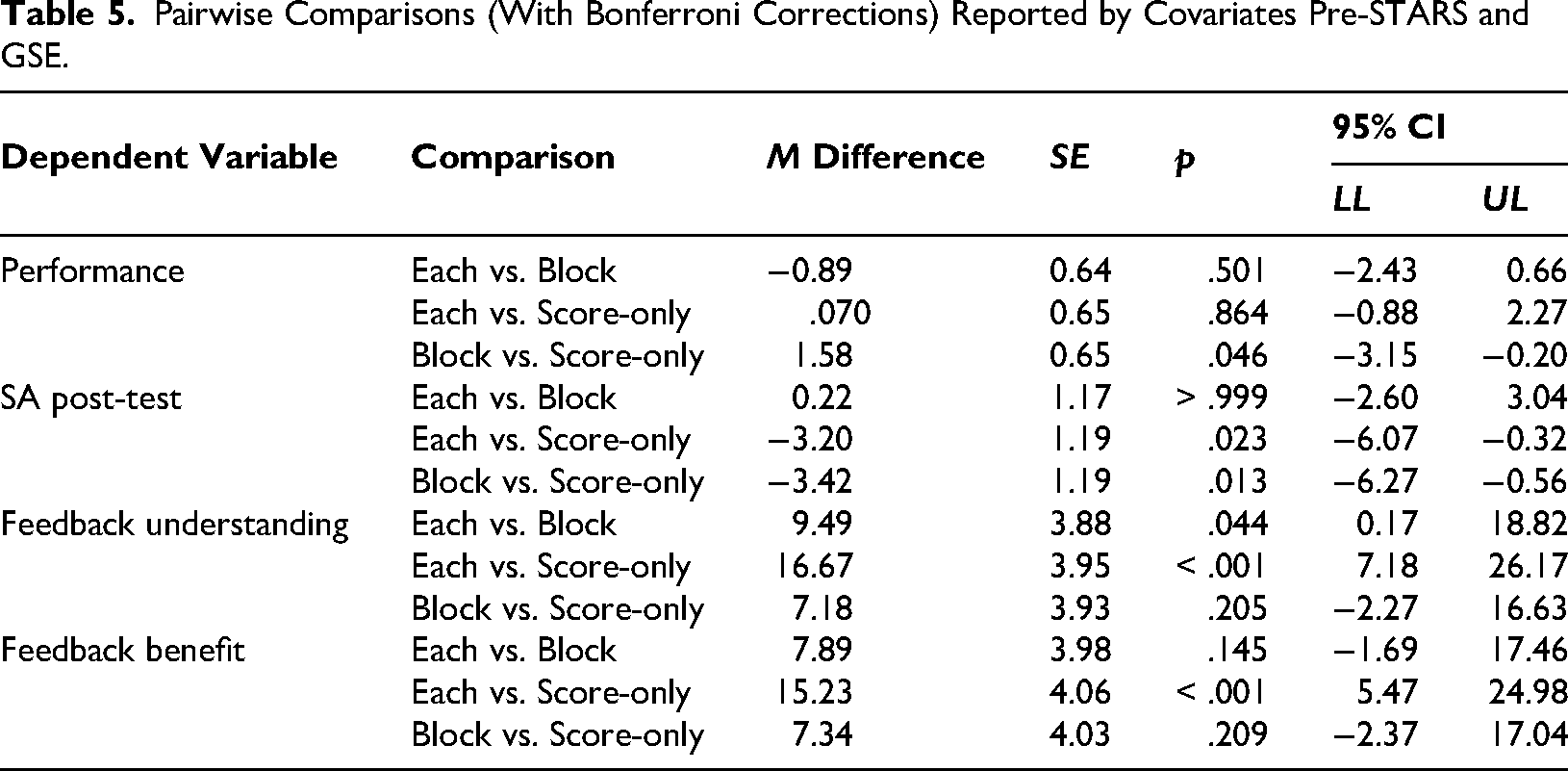

Pairwise comparisons (with Bonferroni corrections) indicate that students in the block feedback condition performed significantly better than those in the score-only condition. No performance differences were found between the each and block conditions. Post-test STARS score was significantly higher (i.e., more anxious) in the score-only condition than in both the block and each conditions. Feedback understanding and perceived benefit were highest in the each-question condition (see Table 5).
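The logic of a Bonferroni correction for three conditions can be sketched as follows. The raw p-values are invented purely for illustration and do not correspond to any result in this study; the function name `bonferroni` is hypothetical. Statistical packages commonly report adjusted p-values in this form (each raw p-value multiplied by the number of comparisons and capped at 1).

```python
from itertools import combinations
from typing import Dict, Tuple

CONDITIONS = ["each", "block", "score-only"]

Pair = Tuple[str, str]


def bonferroni(p_values: Dict[Pair, float]) -> Dict[Pair, float]:
    """Multiply each raw p-value by the number of comparisons, capped at 1."""
    m = len(p_values)
    return {pair: min(1.0, p * m) for pair, p in p_values.items()}


# Three conditions give three pairwise comparisons.
pairs = list(combinations(CONDITIONS, 2))

# Hypothetical raw p-values, for illustration only (not study results).
raw = dict(zip(pairs, [0.40, 0.004, 0.03]))
adjusted = bonferroni(raw)

for pair, p_adj in adjusted.items():
    print(pair, round(p_adj, 3))
```

In this invented example, a raw p-value of .03 would no longer fall below a conventional .05 threshold after correction, which shows why a comparison can appear only marginal once multiple testing is taken into account.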

Pairwise Comparisons (With Bonferroni Corrections) Reported by Covariates Pre-STARS and GSE.

Covariate Effects

MANCOVA also indicated significant covariate effects. The STARS pre-test covariate was statistically related to post-test STARS and perception variables,

STARS

GSE

Follow-up Discriminant Analysis for MANCOVA

To explore which dependent variables contributed most to group separation, a discriminant analysis was conducted. Two discriminant functions were identified. Function one explained 74.9% of the variance (canonical

Exploratory Findings on Feedback Judgment Variables

Univariate ANOVAs for Judgement Questions

Separate univariate ANOVAs were conducted on exploratory judgement variables not included in the MANCOVA. Feedback read differed significantly by feedback timing,

Exploratory Findings on STARS Sub-Scales Pre- to Post-Test

MANOVA

To further explore SA outcomes, a repeated-measures multivariate analysis of variance (MANOVA) was conducted using STARS subscales as within-subjects variables.

Between-Subjects Feedback Timing and STARS Pre-Post Test

The between-subjects MANOVA revealed no significant effect of feedback timing on change in SA from pre- to post-test,

Within-Subjects STARS Pre-Post Test

However, the within-subjects MANOVA revealed a significant effect of pre- to post-test SA not based on condition

Discussion

This study examined how feedback timing (after each question, in blocks, or at the end, score-only) influenced performance, SA, self-efficacy and feedback perceptions, controlling for pre-test levels. Block feedback led to significantly higher performance, while score-only feedback produced the lowest. However, performance differences were only marginally significant. Students in the score-only condition also reported the highest SA, exceeding those in the each and block conditions. These results suggest that delayed feedback may heighten uncertainty, elevating anxiety without effectively supporting learning. While self-efficacy did not differ by condition, perceived understanding and benefit were rated higher in the each and block conditions, reinforcing the value of timely feedback. Each-question feedback was rated most beneficial.

The findings build on existing literature (e.g., Dihoff et al., 2004) by showing that structured, interval-based feedback (block condition) can optimise reinforcement without fragmenting learning. Unlike fully immediate feedback, which may disrupt processing, block feedback offers timely correction and supports consolidation (Butler, 2018). A structured, interval-based approach may effectively sustain performance in statistics education.

Although prior research suggests that delayed feedback may enhance long-term retention by promoting retrieval-based learning (Smith & Kimball, 2010), this was not supported here, as the primary difference observed was in SA rather than performance. Higher SA in the score-only condition supports the view that delayed feedback increases uncertainty (Moreno, 2004). In subjects like statistics, where concepts often build on one another, students may benefit more from feedback that allows for immediate correction rather than retrieval-based reinforcement. Feedback timing effects may vary by task complexity and discipline, with tools like Quizizz or ClassPoint shaping feedback engagement (Akram & Abdelrady, 2025; Maraza-Quispe et al., 2024). For instance, Smith and Kimball's (2010) study focused on American general knowledge questions over a longer timeframe, whereas the present study examined statistical learning within a single session. Smith and Kimball's (2010) use of trivia-based questions differs from the applied statistical content in this study, where conceptual understanding and numerical reasoning may place greater demands on cognitive processing. Additionally, Butler (2018) examined long-term retention effects across various disciplines, while the present study focused on immediate performance, which may explain why delayed feedback did not show the same benefits here. Future research should explore whether retrieval-based advantages emerge over extended learning periods in statistics education, particularly concerning conceptual understanding rather than procedural recall. In explaining higher performance for immediate block feedback compared to delayed score-only feedback, it is important to consider the task complexity and the structured (i.e., step-by-step) nature of statistics learning, where ongoing clarification may be more beneficial than delayed reflection.
Fakhri et al. (2024) showed that highly engaged students can increase their motivation to maintain their performance even with delayed feedback.

However, students experiencing SA are more likely to procrastinate and disengage; as such, delayed feedback is less effective due to students’ lower motivation to maintain their performance (Macher et al., 2012; McEwan et al., 2023; Paechter et al., 2017). The findings suggest that for subjects like statistics in psychology, feedback given at structured intervals may provide an optimal balance between positive reinforcement and reflection. Prospective students are often unaware of the statistical demands of a psychology degree (Barry, 2012; Onwuegbuzie, 1997; Zeidner, 1991), increasing the risk of avoidance when feedback is not timely.

Higher SA in the score-only condition supports the view that delayed feedback can lead to cumulative misunderstandings, increasing cognitive load and anxiety (Moreno, 2004). The delayed feedback likely heightened uncertainty by not providing reassurance on students’ accuracy. The current findings support this interpretation, as feedback understanding was significantly lower for the score-only condition, suggesting that feedback after specific questions is the most effective for enhancing students’ ability to process and benefit from it. However, this contrasts with the Delay Retention Effect (e.g., Kulhavy & Anderson, 1972). Although Shabani and Safari (2016) found that delayed feedback can reduce anxiety in language learning contexts, statistics may require more immediate, scaffolded correction due to its step-based nature. The current findings support this distinction, suggesting that instant feedback may better enable self-regulation in statistics. These differences underline the importance of domain-specific research on feedback timing and SA.

Emotions such as anxiety are known to influence feedback uptake and perceived value (Värlander, 2008). Students in the each and block conditions reported significantly greater feedback understanding, consistent with research showing that clear, timely feedback enhances perceived control and engagement (Beetham & Sharpe, 2013; Weaver, 2007). This aligns with recent feedback literacy frameworks that emphasise emotional regulation and student engagement (Carless & Boud, 2018; Carless & Winstone, 2020). Higher perceived benefit in the each condition further supports prior research that found frequent feedback helps students identify areas for improvement and manage their learning in manageable steps (Corbett & Anderson, 2001; Nutbrown et al., 2016). The significantly greater amount of feedback read in the block condition compared to the score-only condition suggests that interval-based feedback may encourage students to engage more with feedback while maintaining focus on the overall task. The exploratory judgement items, such as the extent to which students read the feedback, provided supportive contextual insight but were not a primary focus of this study. These findings nonetheless suggest that behavioural engagement with feedback may underpin perceived usefulness and comprehension. Detailed, explicit feedback may sustain motivation and self-regulated learning (Zumbrunn et al., 2016).

Although no condition-level differences in SA were observed pre- and post-test, within-subjects analyses revealed reductions in test and class anxiety, and asking for help anxiety. This aligns with cognitive-affective models of feedback, proposing that feedback timing influences learning through both emotional and cognitive factors (Pekrun et al., 2023). Completing a low-stakes statistics quiz (focused on basic knowledge) may help reduce initial fears, extending findings that test anxiety is highest in high-pressure settings (Onwuegbuzie, 1995). It also supports studies demonstrating that structured feedback contributes to self-regulation and reduces test anxiety (Roediger & Butler, 2011).

Interpretation anxiety increased post-test, which may indicate that engaging with unfamiliar content heightened awareness of knowledge gaps. This aligns with findings linking interpretation anxiety to perfectionism, which can lead to negative emotional responses when students feel that learning is externally imposed or beyond their control (Onwuegbuzie & Daley, 1999). This may explain the increase in interpretation anxiety, as highly anxious students may doubt their ability to accurately interpret statistical content, particularly when they compare their competence in statistics with other academic subjects (Onwuegbuzie & Jiao, 1997). However, research has shown that repeated exposure to statistical tasks with scaffolded feedback can enhance conceptual understanding and reduce anxiety over time (Onwuegbuzie & Wilson, 2003).

Specifically, quiz feedback could help students understand their weaknesses concerning specific topics (e.g., on a per-block basis), serving as a foundation for students to articulate the support they need. Students often avoid asking questions because they struggle to identify what they do not understand (Bledsoe & Baskin, 2014), leading to procrastination and unproductive study behaviours (Onwuegbuzie, 2004). Ongoing practice and feedback may therefore play a critical role in helping students manage interpretation anxiety and develop long-term statistical reasoning skills.

Limitations and Future Research

Although prior research identified self-efficacy as a predictor of success in statistics (Macher et al., 2012), this study did not find feedback timing differences, which may be due to study timing. Macher et al. (2012) collected data after a 17-week semester, while this study occurred at the beginning of a module. Self-efficacy may develop progressively through repeated mastery-aligned feedback (Schunk & Usher, 2011). Similarly, Asghar (2010) found that feedback enhanced self-efficacy across the first academic year. The brief, self-led quiz in this study may not have provided sufficient exposure to meaningfully influence self-efficacy. Future research should adopt longitudinal designs with weekly MCQs to better examine how feedback timing shapes the development of SA and self-efficacy.

Another limitation concerns the lack of corrective feedback. Participants in the each-question condition received response accuracy but not the correct answer if incorrect. This constraint, implemented to prevent misconduct in an assessment-linked task, may have limited the perceived usefulness of the task, particularly for those in the score-only condition (i.e., feedback was delayed and non-specific). Absence of corrective feedback reduces opportunities to address misconceptions (Butler, 2018; Shute, 2008). Future studies should use non-assessed contexts where complete feedback, including correct answers, can be delivered.

Participants receiving instant feedback after each question may have experienced increased task fatigue or interruptions to their response flow, as the feedback required additional reading and reflection. Conversely, those in the block and score-only conditions may have found the statistics quiz less cognitively demanding with less feedback to read. Although the study measured whether feedback was read, neither individual preferences for feedback timing nor cognitive load were directly assessed. Future studies could include such measures to better understand how personal preference interacts with perceived usefulness, fatigue and learning outcomes.

The abbreviated STARS scale demonstrated strong internal consistency (Zimmerman & Johnson, 2017) but reduced construct coverage compared to the full version (Cruise et al., 1985; Hanna et al., 2008). Nesbit and Bourne (2018) reported inconsistent factor structure and variable subscale reliability in a psychology undergraduate sample, particularly for instructor attitudes. This suggests caution in interpreting subscale data and supports supplementing STARS with other validated measures to better isolate anxiety-related constructs from attitudinal dimensions.

While this study focused on feedback timing, rather than demographic comparison (i.e., disciplinary and year of study differences), gender differences in SA have been widely documented, with female students often reporting higher SA (Chew & Dillon, 2014; Onwuegbuzie, 2000). However, given the limited sample size and word constraints, meaningful subgroup analysis was beyond the current scope. Future research should consider how gender, year of study, discipline and related contextual factors interact with feedback timing, as these may shape both affective and self-efficacy development in statistics learning.

Practical Teaching Recommendations and Conclusions

This study highlights practical implications for reducing SA and supporting learning in statistics. Each-question and block feedback significantly reduced SA (test and class anxiety and asking for help anxiety) compared to score-only feedback, suggesting that frequent, structured feedback supports anxiety management by enabling real-time application and reinforcing effort through positive reinforcement. Each-question feedback was perceived most beneficial but time-intensive; block feedback promoted higher performance. Therefore, block feedback may offer a practical compromise, promoting engagement (evidenced by higher feedback read rates) while preserving student autonomy. Given its effectiveness and efficiency, block feedback is recommended for formative statistics quizzes, balancing performance benefits with manageable engagement for students and instructors. Score-only feedback, though easier to implement, produced the lowest performance and highest anxiety, making it the least desirable. This format may suit low-stakes modules but provides limited support for early-stage conceptual learning in statistics.

Self-efficacy was not affected by feedback timing in this brief intervention, suggesting that regular, repeated feedback may be needed to support confidence growth. Weekly quizzes could help build students’ perceived competence and persistence over a semester.

In summary, timely, structured feedback supports performance and reduces anxiety in introductory statistics. Block or each-question feedback should be embedded in digital tools, particularly for students with limited prior exposure to statistics. Future research should explore how feedback timing affects advanced modules and examine student development across an academic year. Finally, instant feedback should supplement, not replace, face-to-face support, enhancing the overall learning experience through digital quizzes.

Footnotes

Informed Consent

Informed consent was obtained from all individual participants included in the study, which included consent regarding publishing data.

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.

Declaration of Conflicting Interests

The authors declare no potential conflicts of interest with respect to the research, authorship and/or publication of this article.