Psychologists have a duty to develop and maintain the knowledge and skills needed to practice effectively and safely. Psychology training programs (psychology programs) are responsible for determining whether psychology students are capable of practising professionally in the real world (Paparo et al., 2021). Central to this decision are the attainment and demonstration of clinical competencies, which ensure students have the skills and attributes required to practice psychology competently and safely (Australian Psychology Accreditation Council, 2019).

While there are many methods available to psychology programs to evaluate skills and knowledge, they are not without limitations. Traditional forms of assessment such as essays and examinations only assess whether knowledge is acquired and generally lack ecological validity and fidelity to practice (Lichtenberg et al., 2007). Similarly, supervisor ratings have been found to be subject to leniency and bias (Gonsalvez & Freestone, 2007), and heterogeneity within clinical placement experience makes reliable determination of competency difficult (Paparo et al., 2021). Furthermore, while knowledge about psychotherapeutic models and techniques is essential, it does not imply that they can be applied with clients (Beccaria, 2013; Pachana et al., 2011). For example, a student who performs well on an essay assessing knowledge of clinical interviewing may still face difficulty interviewing a real client. As such, assessing real-world clinical skills in a consistent, fair, and impartial manner is a complex challenge (Goodie et al., 2021).

Objective Structured Clinical Examinations

In recent times, there has been an increased uptake of Objective Structured Clinical Examinations (OSCEs) as a form of competency-based assessment (Sheen et al., 2015; Yap et al., 2021). During OSCEs, students role-play clinical vignettes designed to replicate real-world interactions with simulated patients or standardized patients (SPs; the two terms are used interchangeably) (Khan et al., 2013b). Examiners observe the students’ performances and evaluate competencies using objective measures such as checklists, rating scales, or both (Khan et al., 2013a). As such, OSCEs are better suited to assessing the demonstration of competencies or skills that cannot be adequately captured by traditional assessment tools (e.g., conducting a mental state examination or administering an assessment) (Khan et al., 2013a). The OSCE is widely regarded as a fair and relevant examination that has been well received by students and examiners, due to factors such as the controlled exposure and proximity to “actual clinical practice” and the requirement to translate knowledge into practice (Patrício et al., 2013). OSCEs are also utilized as an assessment tool alongside the increasing use of simulated learning activities in competency-based psychology programs (Rice et al., 2022). OSCEs have been used within medical and physical health schools for over four decades (Brannick et al., 2011) and continue to be relevant as a formative or summative assessment across 94% of medical schools (Barzansky & Etzel, 2018).

Implementation Guidelines

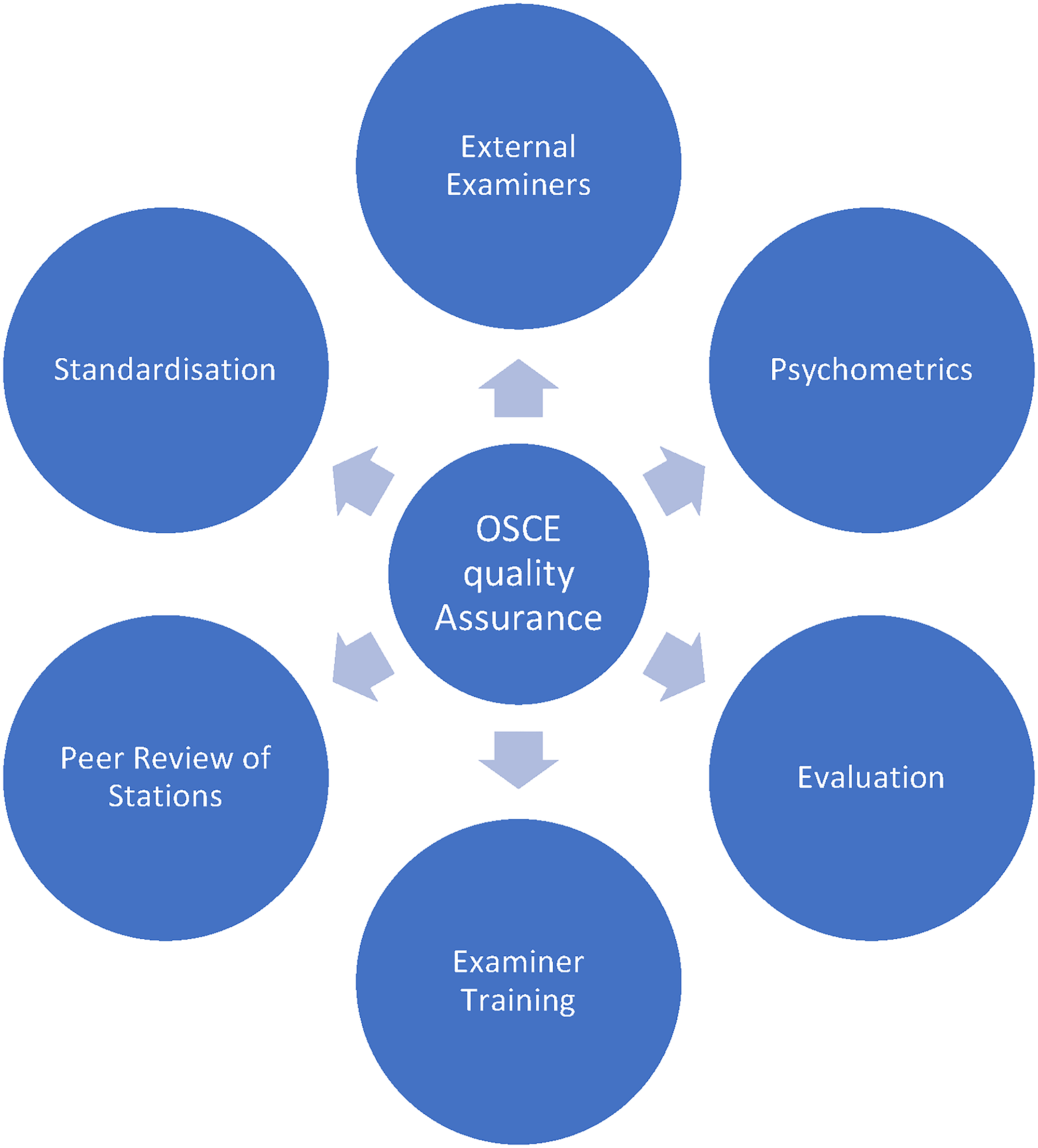

Reliability and validity profiles of OSCEs are highly influenced by the way they are facilitated (Harden, 2016; Khan et al., 2013b). For instance, studies have shown that increasing the number of examiners (Brannick et al., 2011), stations (Khan et al., 2013a), and metrics (Ilgen et al., 2015) increases the overall reliability of OSCEs. A synthesis of implementation methods that improve psychometric qualities of OSCEs is provided by Khan et al. (2013b) as a set of Quality Assurance Guidelines (QAGs; Figure 1). These guidelines are referenced across studies evaluating the development and implementation of OSCEs in medicine (Daniels & Pugh, 2018), pharmacy (Cheema & Ali, 2021), dentistry (Salawu et al., 2022), social work (Bogo et al., 2012), and psychology (Goodie et al., 2021; Sundström & Hakelind, 2022; Yap et al., 2012). However, it should be noted that due to the early adoption and continued widespread use of OSCEs within medical training, QAGs have been developed from medical program OSCEs. As OSCEs expand across health professions, including psychology, it is important to ensure that while the content of the examination may differ, the essential quality indicators are present. Khan et al.'s (2013b) QAGs provide a blueprint for training programs to assess OSCE quality, by identifying each of the core components (as shown in Figure 1). As such, training programs can utilize QAGs during the development, implementation, and evaluation of OSCEs to maximize the strengths and psychometric profile of the assessment (Khan et al., 2013b).

Figure 1. Quality assurance guidelines (Khan et al., 2013b).

In contrast to the depth of studies investigating medical OSCEs, there is a dearth of literature pertaining to the evaluation of OSCEs within psychology programs (Sheen et al., 2015). The adoption of OSCEs as a competency-based assessment within psychology programs was largely based on assessments within adjacent fields such as psychiatry, social work, and mental health nursing (Goodie et al., 2021; Plakiotis, 2017). Evidence from psychometric assessment of OSCEs in psychology has started to emerge from two broad sources: (1) face validity and (2) predictive and construct validity.

Face Validity

According to Sheen et al. (2021), OSCEs have the potential to enhance students’ educational experiences by providing learning opportunities that are authentic and proximal to their clinical work environments. This is supported by studies investigating student and staff perspectives of the OSCE as “preferable to other forms of assessment” in addition to being “valid,” “realistic,” “fair,” and “authentic” (Melluish et al., 2007; Roberts et al., 2020; Sheen et al., 2015; Yap et al., 2012).

Predictive and Construct Validity

While this evidence suggests that OSCEs are favorably appraised, the ability of OSCEs to offer insights into the clinical capabilities of students is still under debate. OSCE performance and scores have been shown to significantly correlate with supervisor ratings of Communication and Interpersonal Skills in placement performance (Glatz et al., 2022). However, the same students who failed Communications tasks within an OSCE were still found to meet minimum expectations or higher by supervisors (Meghani & Ferm, 2021). Similarly, OSCE scores converge with other measures of competence for some clinical skills (Diagnosis and Clinical Assessment) but not others (Ethical standard & Communication skills) (Meghani & Ferm, 2021). Lastly, Sundström and Hakelind (2022) conducted an OSCE where they assessed the generalizability of performance scores across stations and found large variability in scores across and within stations, concluding that some tasks were either too difficult or marked less consistently. This large variability in scores across different OSCE stations could indeed mirror the real-world variability in clinical scenarios that students will face in practice. Thus, the measurement of reliability in this context should not be solely predicated on consistency across stations. Instead, it could be more beneficial to evaluate reliability in terms of a student's ability to consistently meet the demands of diverse clinical scenarios. This perspective not only guides the design of OSCEs but also assists in interpreting their outcomes. Sundström and Hakelind found the reliability of their OSCE to be on par with OSCEs in other mental health areas, such as psychiatry (α = 0.51; Hodges et al., 1998) and social work (α = 0.55; Bogo et al., 2011), but far below the acceptable reliability values within medicine (α = 0.66–0.80; Brannick et al., 2011; Khan et al., 2013a).
Combined, the initial evidence suggests that while OSCEs may enhance educational experiences, they should be interpreted with caution.
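To make the α values quoted above concrete, the following is a minimal sketch of how an internal-consistency coefficient could be computed across OSCE stations. It assumes the reported α is Cronbach's alpha with stations treated as items, which the reviewed studies may or may not have done; the function name `cronbach_alpha` and the example scores are hypothetical and are not taken from any included study.

```python
from statistics import variance


def cronbach_alpha(scores):
    """Cronbach's alpha for a students-by-stations score matrix.

    scores: list of rows, one row per student, one score per station.
    Assumption: stations are treated as the "items" of the scale.
    """
    n_stations = len(scores[0])
    if len(scores) < 2 or n_stations < 2:
        raise ValueError("need at least two students and two stations")
    # Variance of each station's scores across students.
    station_vars = [variance([row[j] for row in scores]) for j in range(n_stations)]
    # Variance of each student's total score.
    total_var = variance([sum(row) for row in scores])
    if total_var == 0:
        raise ValueError("total scores have zero variance")
    return (n_stations / (n_stations - 1)) * (1 - sum(station_vars) / total_var)


# Hypothetical data: three students, two stations.
example_scores = [[1, 2], [2, 1], [3, 3]]
alpha = cronbach_alpha(example_scores)
```

In this sketch, stations whose scores rise and fall together across students push α toward 1, whereas stations that rank students differently pull it down, which is one reading of the large between-station variability described above.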

More importantly, it is necessary to determine whether these findings suggest that OSCEs are more effective than existing assessment methods in distinguishing students who have demonstrated the required competency, or if some psychological competencies are less reliably assessed than others. Systematic reviews on the reliability of OSCEs administered within medical schools confirmed that skills which require subjective and idiosyncratic judgments on performance such as communication skills and cultural competence are scored less reliably on rating scales (Brannick et al., 2011; Cömert et al., 2016; Halman et al., 2020; Piumatti et al., 2021). This presents further challenges for the assessment of psychological competencies which require intuitive and situational judgments (Ilgen et al., 2015). Balancing reliability and validity in OSCEs is crucial. Over-emphasis on reliability, through overly objective rubrics, may oversimplify complex skills and risk compromising predictive validity. Ideally, assessments should be both reliable (i.e., ensuring consistent results) and valid (i.e., accurately measuring the intended skills). Achieving this balance necessitates thoughtful design, clear conceptualization of the assessment's goals, and commitment to ongoing review for iterative improvements. However, there are no studies or reviews investigating the psychometric qualities of OSCEs within psychology programs.

Objective

In the absence of direct comparison data, there remains a need to evaluate OSCE quality. Evidence suggests there is a relationship between the psychometric properties of an OSCE (which includes reliability, validity, and educational impact), and the methodology employed in its implementation (Harden, 2016; Khan et al., 2013b). This may present a way forward, through examination of quality by adherence to QAGs. Higher degrees of adherence are likely to represent psychometrically sound OSCEs, while lower degrees may indicate poor psychometric quality. Accordingly, the current systematic review assessed OSCEs within psychology programs against QAGs to indicate the overall psychometric quality.

Methods

Protocol and Registration

A systematic review was conducted according to the Preferred Reporting Items for Systematic Reviews and Meta-Analyses (PRISMA) recommendations and the protocol was registered with PROSPERO.

Eligibility Criteria

Studies were included if they met the following criteria: (1) were peer-reviewed articles published in English; (2) sampled psychology students; (3) used an OSCE-style assessment or similar; and (4) provided OSCE examination data or feedback. Studies that provided only a general overview of the OSCE or contained a mixed-discipline cohort of students were excluded from the systematic review. It was necessary to screen out studies that did not provide examination data so that implementation could be assessed. Similarly, as the clinical competencies of psychologists differ from those of other mental health professions such as counselors, psychiatrists, social workers, and mental health nurses, it was important to only include studies with a sample of psychology students within a psychological training setting.

Information Sources

The key indexing databases of Scopus and Web of Science were used to identify relevant studies, with additional searches repeated in psychology specific databases PsycInfo, PsycArticles, and ProQuest Psychology. Relevant references of studies were manually searched. The final search was conducted in September 2022.

Search Strategy

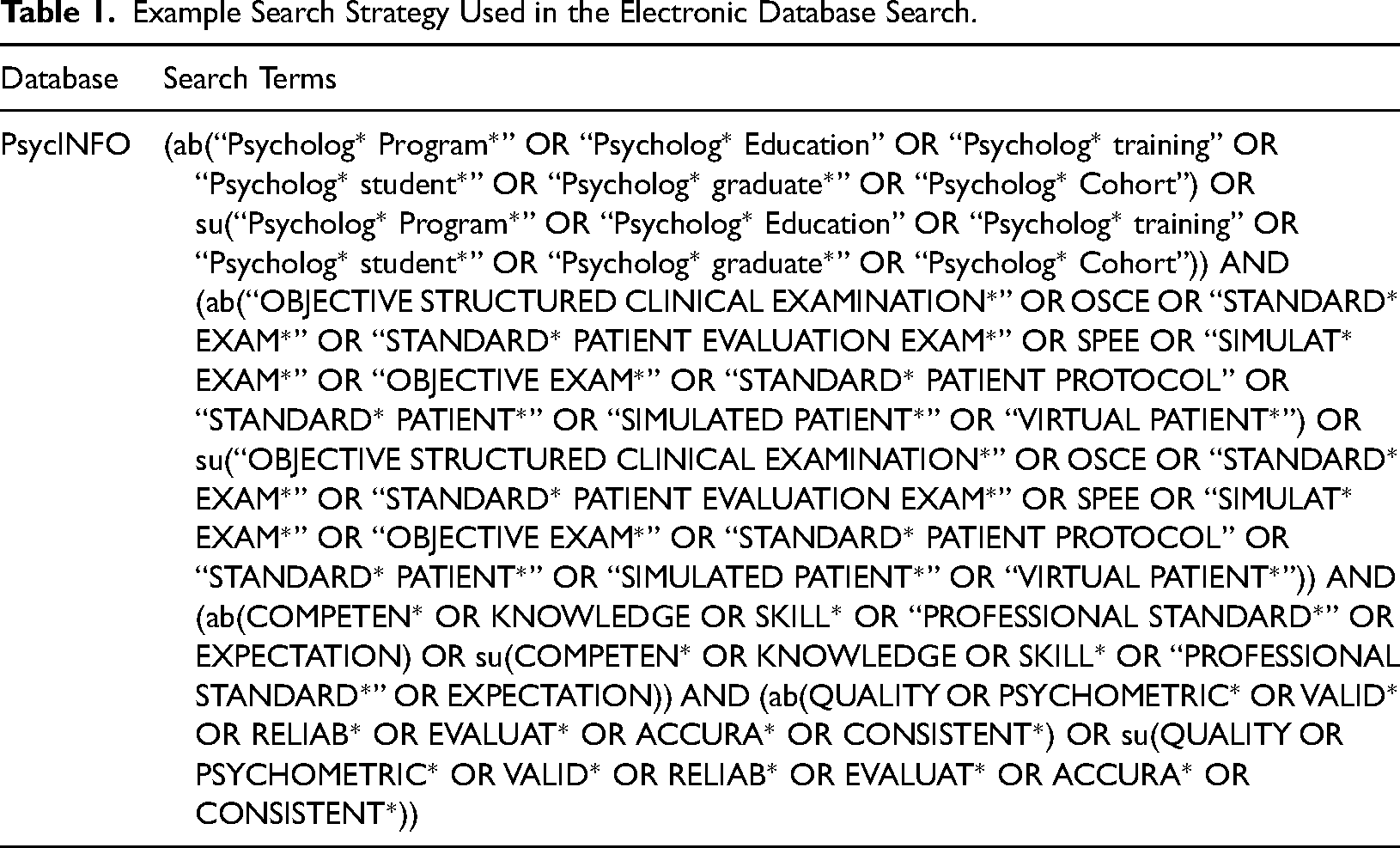

Initial searches were designed in consultation with a research librarian with expertise in literature searching, using the key terms “psychology program”, “objective structured clinical examination”, “clinical competency”, and “quality”. The APA Thesaurus of Psychological Index Terms was used to identify additional relevant terms. The initial strategy was revised upon evidence that psychotherapy and simulation-based assessments met inclusion criteria. Each term and its synonyms were searched independently within abstracts, headings, and study texts and then later combined. Reference lists were also manually searched from retrieved articles (see Table 1 for sample search strategy).

Table 1. Example Search Strategy Used in the Electronic Database Search.

Selection Process and Study Risk of Bias Assessment

All search results were imported into reference management software (EndNote) and then into a web database (Covidence). After duplicates were removed, two reviewers independently screened titles and abstracts, and then full texts, against the inclusion and exclusion criteria. Disagreement between reviewers was resolved through discussion, and consensus was reached across all studies.

Appraisal of Methodological Quality

Methodological quality and risk of bias of included studies were assessed using the Mixed Methods Appraisal Tool (MMAT), version 2018 (Hong et al., 2018). The MMAT is a critical appraisal tool designed for systematic mixed study reviews and was chosen based on usage within other systematic reviews of OSCEs in other professions (Boland et al., 2020; Nataša Mlinar et al., 2017; Vincent et al., 2022) and appropriateness given the diverse study designs included within the review. For quantitative studies, the MMAT evaluates sampling, construct measurement, nonresponse bias, and statistical analyses, and for qualitative studies it evaluates data collection, interpretation of findings, and overall coherence. Additionally, if studies utilized a mixed methods approach the overall study was evaluated for rationale, integration, and inconsistencies. An overall score was calculated based on the number of criteria satisfied by the included studies (maximum of 5) and represented by a rating indicative of risk of bias; “High” (0 to 1), “Medium” (2 to 3), and “Low” (4 to 5). For mixed methods studies, the appraisal was conducted on the basis that overall quality of a combination cannot exceed the quality of its weakest component (Hong et al., 2019). Thus, the overall quality score is the lowest score of the study components. The initial quality assessment was independently conducted by AV and RLD.
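The scoring rule described above can be sketched in a few lines. This is an illustrative reading of the stated cut-offs and the weakest component rule, not the MMAT authors' own tooling; the function names `risk_of_bias` and `mixed_methods_score` are hypothetical.

```python
def risk_of_bias(criteria_met):
    """Map the number of MMAT criteria satisfied (0 to 5) to a risk rating."""
    if not 0 <= criteria_met <= 5:
        raise ValueError("criteria_met must be between 0 and 5")
    if criteria_met <= 1:
        return "High"
    if criteria_met <= 3:
        return "Medium"
    return "Low"


def mixed_methods_score(qualitative, quantitative, mixed):
    """Weakest component rule: the overall score is the lowest component score."""
    return min(qualitative, quantitative, mixed)


# Hypothetical mixed methods study: strong qualitative strand, weak quantitative strand.
overall = mixed_methods_score(qualitative=5, quantitative=1, mixed=4)
rating = risk_of_bias(overall)
```

Under this rule a single weak strand determines the overall rating, so the hypothetical study above is rated by its quantitative component alone.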

Data Extraction and Synthesis

Data were extracted by one author (AV) using a custom-designed template. Items included study characteristics (country, year, study type, sample, aim, and key findings), commonly reported OSCE characteristics, and QAGs (Khan et al., 2013b).

Data Synthesis

Quantitative synthesis of studies was not possible as studies were predominantly quasi-experimental, uncontrolled studies using convenience samples, and were heterogeneous in the implementation and reporting of OSCEs (Cheung & Vijayakumar, 2016). As such, findings are summarized in narrative form rather than through direct comparison (such as a meta-analysis), in accordance with the Synthesis Without Meta-analysis (SWiM) guidelines (Campbell et al., 2020). Information is presented within text and tables to summarize and explain characteristics of the included studies.

Assessment of OSCE Implementation

Implementation of OSCEs was assessed against the eight QAGs (Khan et al., 2013b). Adherence to the guidelines was scored according to the number of criteria met and represented with a descriptor. In the absence of existing cut-off descriptors, scores were categorized according to the number of criteria satisfied: Poor (0 to 2), Fair (3 to 5), and Good (6 to 8).
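As an illustration of this categorization, the sketch below counts the guidelines a study satisfies and applies the cut-offs stated above. The guideline labels follow those used in this review's results (Q1 to Q8); the function name `adherence` and the example input are hypothetical.

```python
# Guideline labels as used in this review (Khan et al., 2013b).
QAGS = {
    "Q1": "Validity",
    "Q2": "Peer Review of Stations",
    "Q3": "Objective Measures",
    "Q4": "External Examiners",
    "Q5": "Examiner Training",
    "Q6": "Standardised Patient Training",
    "Q7": "Post-Hoc Psychometrics",
    "Q8": "Evaluation",
}


def adherence(guidelines_met):
    """Return (score, descriptor) for the set of guideline codes a study met."""
    met = set(guidelines_met)
    unknown = met - QAGS.keys()
    if unknown:
        raise ValueError(f"unknown guideline codes: {sorted(unknown)}")
    score = len(met)
    if score <= 2:
        descriptor = "Poor"
    elif score <= 5:
        descriptor = "Fair"
    else:
        descriptor = "Good"
    return score, descriptor


# Hypothetical study meeting four of the eight guidelines.
example = adherence({"Q3", "Q5", "Q6", "Q8"})
```

Each guideline contributes equally in this scheme, so the descriptor reflects how many guidelines were met rather than which ones.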

Results

Study Selection

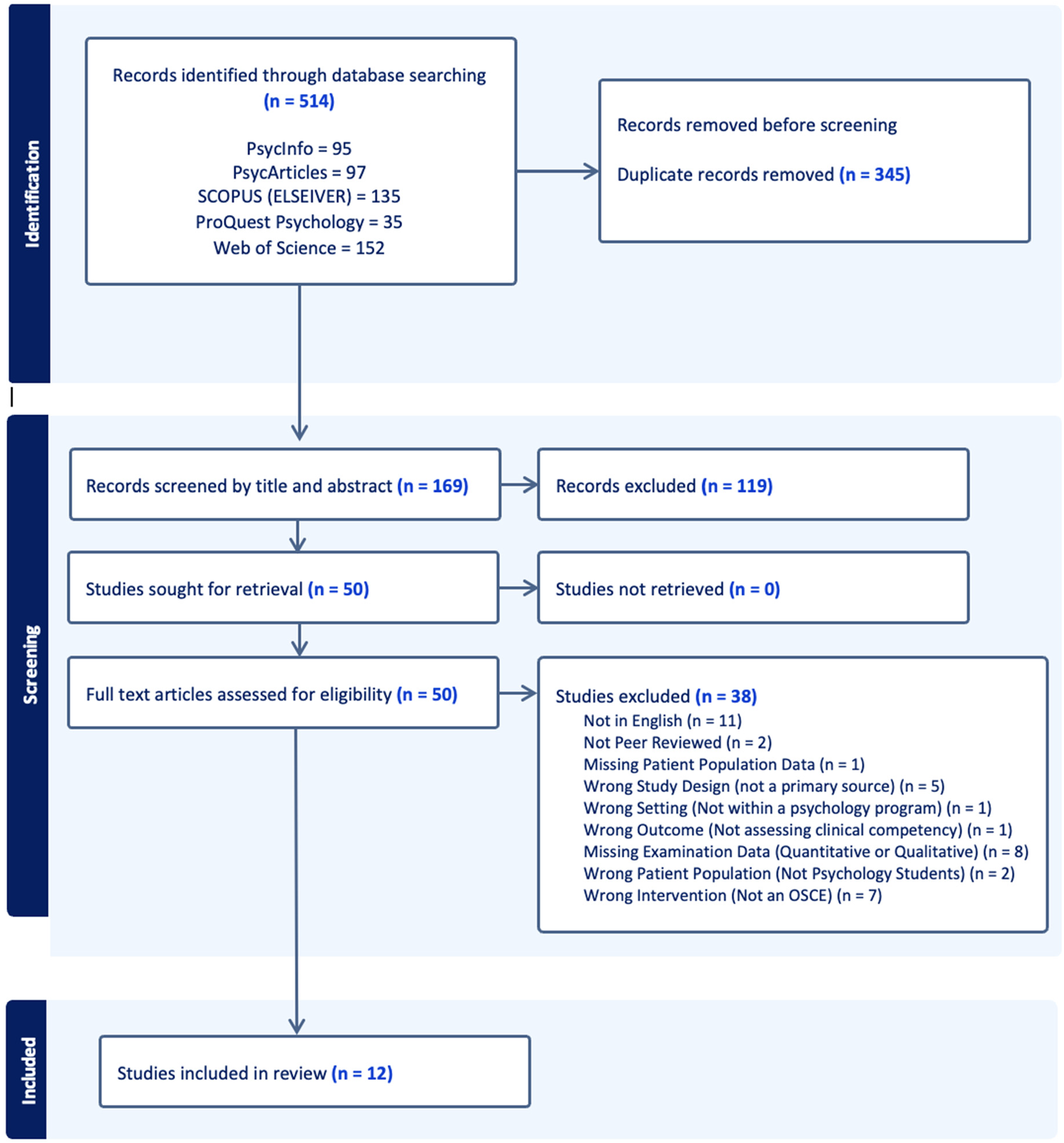

The electronic database search yielded 514 studies. Of these, 345 duplicates were removed and 119 were excluded following title and abstract screening. The full texts of the remaining 50 studies were assessed for eligibility. Of these 50 studies, 38 were excluded by applying the inclusion and exclusion criteria. This resulted in 12 studies for the systematic review. Manual searches of reference lists yielded no additional studies. Figure 2 presents the study selection process as a PRISMA flowchart.

Figure 2. PRISMA flow chart depicting study selection process.
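The counts reported for the selection process can be tallied as a quick consistency check: 514 records less 345 duplicates and 119 title and abstract exclusions leaves 50 full texts, and 50 less 38 full-text exclusions leaves the 12 included studies. A minimal sketch of that arithmetic follows; the function name `prisma_flow` is a hypothetical helper.

```python
def prisma_flow(identified, duplicates, excluded_on_abstract, excluded_on_full_text):
    """Tally the records remaining at each stage of study selection."""
    screened = identified - duplicates
    full_text = screened - excluded_on_abstract
    included = full_text - excluded_on_full_text
    if min(screened, full_text, included) < 0:
        raise ValueError("exclusions exceed the records available at some stage")
    return {"screened": screened, "full_text": full_text, "included": included}


# Counts as reported in the Study Selection section.
flow = prisma_flow(
    identified=514,
    duplicates=345,
    excluded_on_abstract=119,
    excluded_on_full_text=38,
)
```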

Study Characteristics

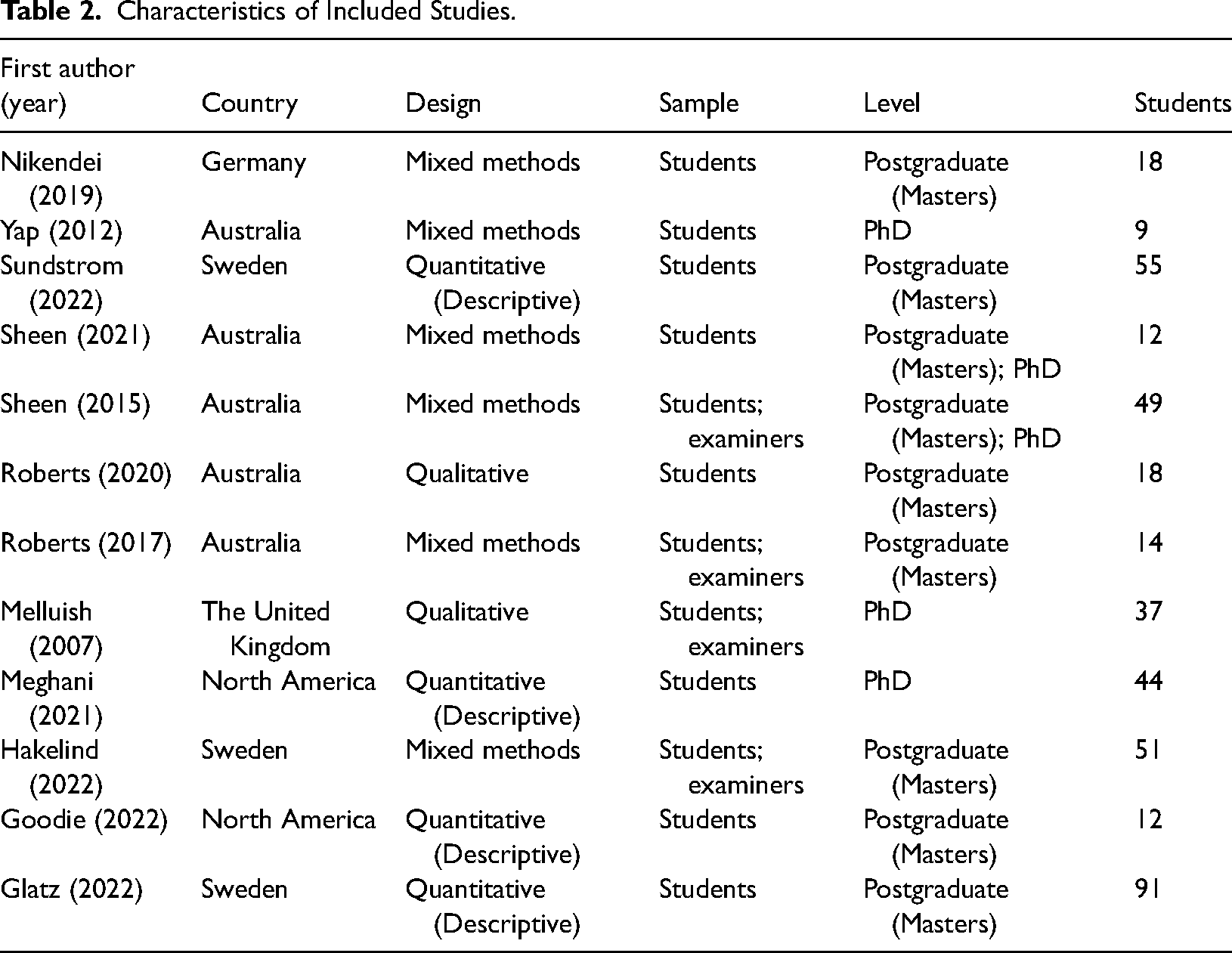

Studies were conducted across a number of countries. In descending order these included Australia (n = 5), Sweden (n = 3), North America (n = 2), the United Kingdom (n = 1), and Germany (n = 1). The study samples ranged from 9 to 91 students and varied in the inclusion of students (n = 8) or students and examiners (n = 4). OSCEs were delivered within postgraduate (masters) degrees (n = 7), PhD degrees (n = 3), or both (n = 2). The included studies were mixed in their respective designs. Mixed methods designs were most dominant (n = 6), followed by descriptive quantitative studies (n = 4) and lastly, qualitative (n = 2). Summary of study characteristics is provided in Table 2.

Table 2. Characteristics of Included Studies.

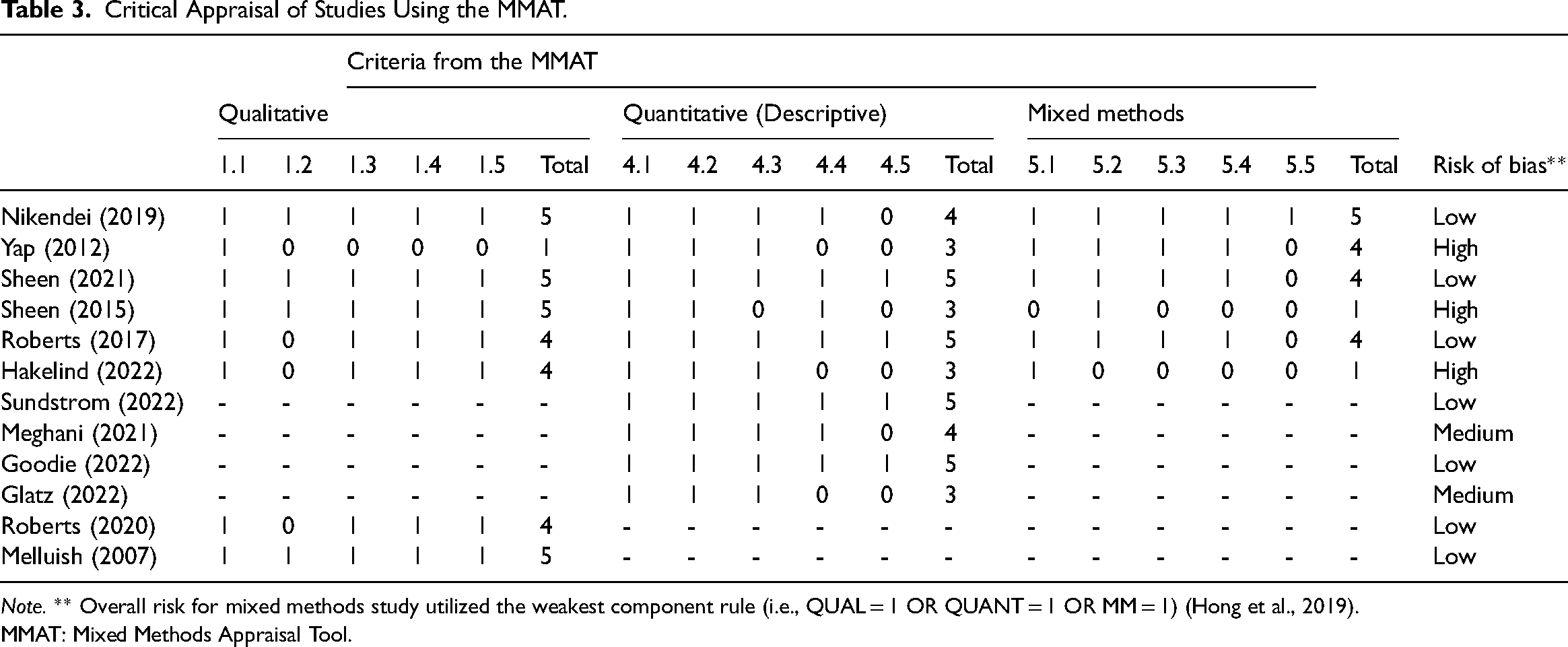

Critical Appraisal of Study Methodology

Studies were critically appraised utilizing the MMAT (Hong et al., 2018). With the exception of one (Yap et al., 2012), studies that utilized qualitative designs and elements were more likely to satisfy MMAT criteria. Only four of a potential 10 studies met the criterion for appropriate statistical analysis (criterion 4.5) (Goodie et al., 2021; Roberts et al., 2017; Sheen et al., 2021; Sundström & Hakelind, 2022). Incongruence was observed between quantitative and qualitative criteria within the six studies that utilized mixed methods designs (criterion 5.5). The weakest component rule affected all six studies, resulting in lower overall scores (Hakelind & Sundström, 2022; Nikendei et al., 2019; Roberts et al., 2017; Sheen et al., 2015, 2021; Yap et al., 2012). Of these six studies, three performed better on qualitative criteria (Hakelind & Sundström, 2022; Sheen et al., 2015, 2021) and two on quantitative criteria (Roberts et al., 2017; Yap et al., 2012). Overall, risk of bias was rated as “Low” within seven studies (Goodie et al., 2021; Melluish et al., 2007; Nikendei et al., 2019; Roberts et al., 2017, 2020; Sheen et al., 2021; Sundström & Hakelind, 2022), “Medium” within two (Glatz et al., 2022; Meghani & Ferm, 2021), and “High” within three (Hakelind & Sundström, 2022; Sheen et al., 2015; Yap et al., 2012). See Table 3 for critical appraisal of studies according to the MMAT (Hong et al., 2018).

Table 3. Critical Appraisal of Studies Using the MMAT.

Note. ** Overall risk for mixed methods study utilized the weakest component rule (i.e., QUAL = 1 OR QUANT = 1 OR MM = 1) (Hong et al., 2019).

MMAT: Mixed Methods Appraisal Tool.

OSCE Characteristics

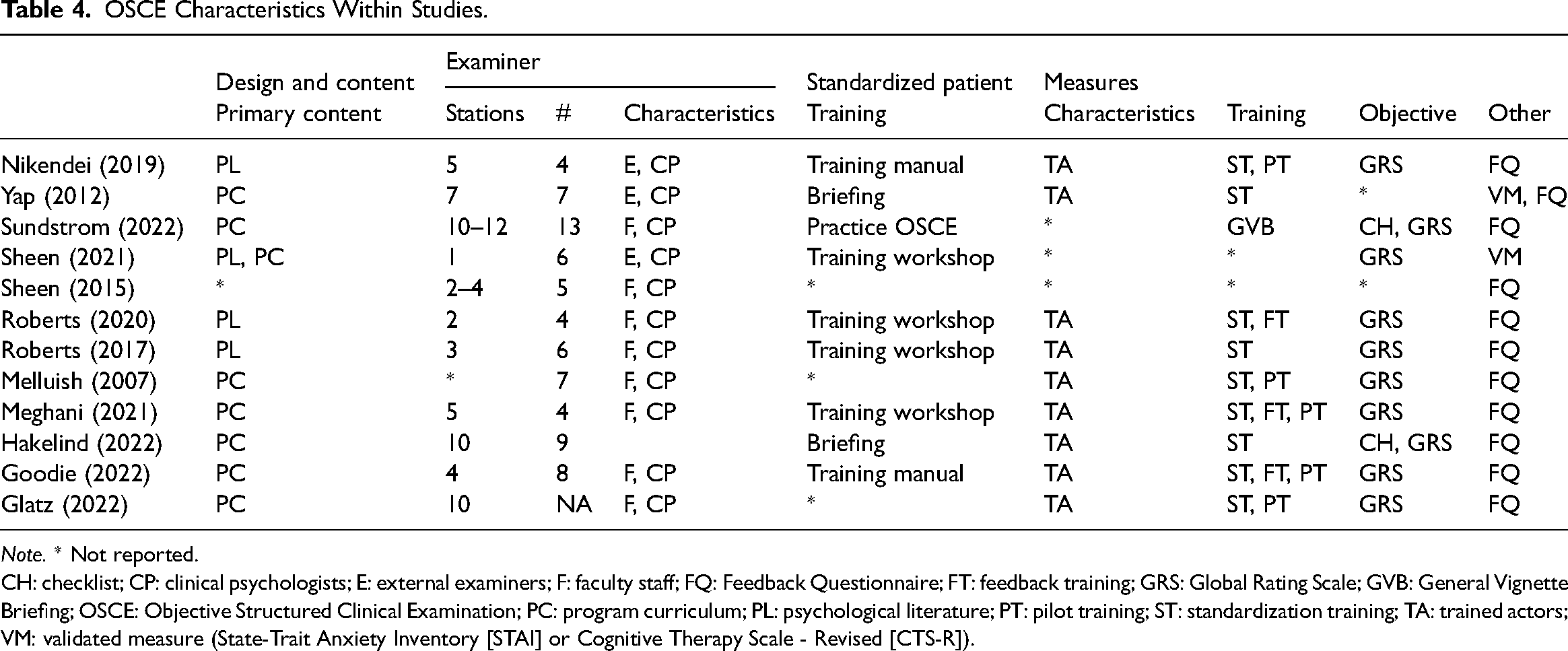

Design and Content

OSCE content development varied across studies. All studies developed stations according to program curriculums, psychological literature, or both, and the number of OSCE stations ranged from 1 to 12. See Table 4 for OSCE characteristics.

Table 4. OSCE Characteristics Within Studies.

Note. * Not reported.

CH: checklist; CP: clinical psychologists; E: external examiners; F: faculty staff; FQ: Feedback Questionnaire; FT: feedback training; GRS: Global Rating Scale; GVB: General Vignette Briefing; OSCE: Objective Structured Clinical Examination; PC: program curriculum; PL: psychological literature; PT: pilot training; ST: standardization training; TA: trained actors; VM: validated measure (State-Trait Anxiety Inventory [STAI] or Cognitive Therapy Scale - Revised [CTS-R]).

Examiner Characteristics

Examiners largely shared the same characteristics across studies. Numbers ranged from four to 13 per examination. Three studies used external examiners (Nikendei et al., 2019; Sheen et al., 2021; Yap et al., 2012), while others used faculty staff, experienced clinical psychologists, or both. Three studies did not report training protocols (Glatz et al., 2022; Melluish et al., 2007; Sheen et al., 2015). Among those that did, training workshops were the most common type of training provided, followed by training manuals, briefings, and practice OSCEs.

SP Characteristics

Apart from studies that did not report on characteristics (Sheen et al., 2015, 2021; Sundström & Hakelind, 2022), all studies hired trained actors as SPs. Actors were recruited from SP-specialized university databases and trained to varying degrees. The most common approach was standardization training, while close to half of the studies incorporated pilot testing of clinical vignettes and a few provided feedback training, whereby the SP provided direct feedback to the student following the OSCE. Two studies used all training approaches (Goodie et al., 2021; Meghani & Ferm, 2021).

Type of Objective Measures

Apart from two studies which did not report objective measures (Sheen et al., 2015; Yap et al., 2012), all studies used global rating scales as their objective measure of clinical competency. Global rating scales are individualized objective measures tailored to the specific requirements of the OSCE. Global rating scales can assess a broad range of outcome domains, including professionalism, clinical skills, knowledge, and reasoning. Two studies also used checklists (Hakelind & Sundström, 2022; Sundström & Hakelind, 2022), Sheen et al. (2021) used a validated measure to assess clinical intervention skills and Yap et al. (2012) assessed levels of anxiety. Ten studies paired objective measures with feedback measures. Additionally, all studies bar one (Sheen et al., 2021) used a student feedback questionnaire. The questionnaire typically explored the students’ perception of the design, validity, and utility of the OSCE assessment. For instance, in Roberts et al. (2017) students were asked to reflect on statements such as “the feedback I received as part of the OSCE facilitated my learning.”

Clinical Competencies

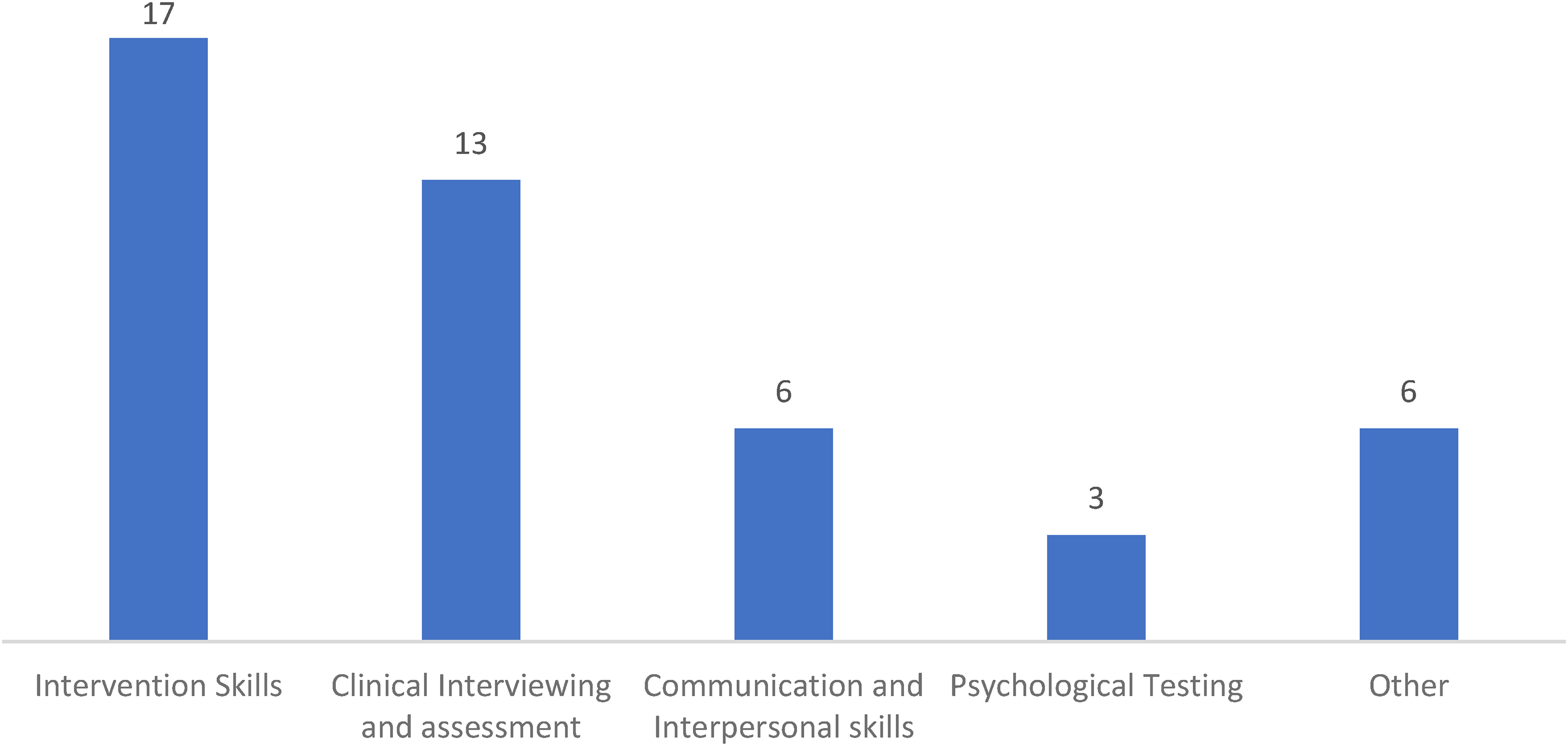

The clinical competencies assessed within programs varied; however, some competencies were assessed more frequently than others. The most common competencies assessed were, in descending order, intervention skills (motivational interviewing, psychodynamic therapy, cognitive behavioral therapy [CBT], psychoeducation, and functional analysis), clinical interviewing and assessment (mental state examination [MSE], clinical interviewing, diagnostic assessment, differential diagnosis, and risk assessment), communication and interpersonal skills, and psychological testing. Figure 3 represents the frequency of competencies assessed across all studies.

Figure 3. Frequency chart of competencies assessed within studies. Note. Subcompetencies within each heading are grouped as follows: Intervention Skills (motivational interviewing, psychodynamic therapy, cognitive behavioral therapy, psychoeducation, and functional analysis); Clinical Interviewing and Assessment (mental state examination, clinical interviewing, diagnostic assessment, differential diagnosis, and risk assessment); Communication and Interpersonal Skills; Psychological Testing; Other (family assessment, knowledge, reasoning, ethical and legal standards, cultural diversity, and note writing).

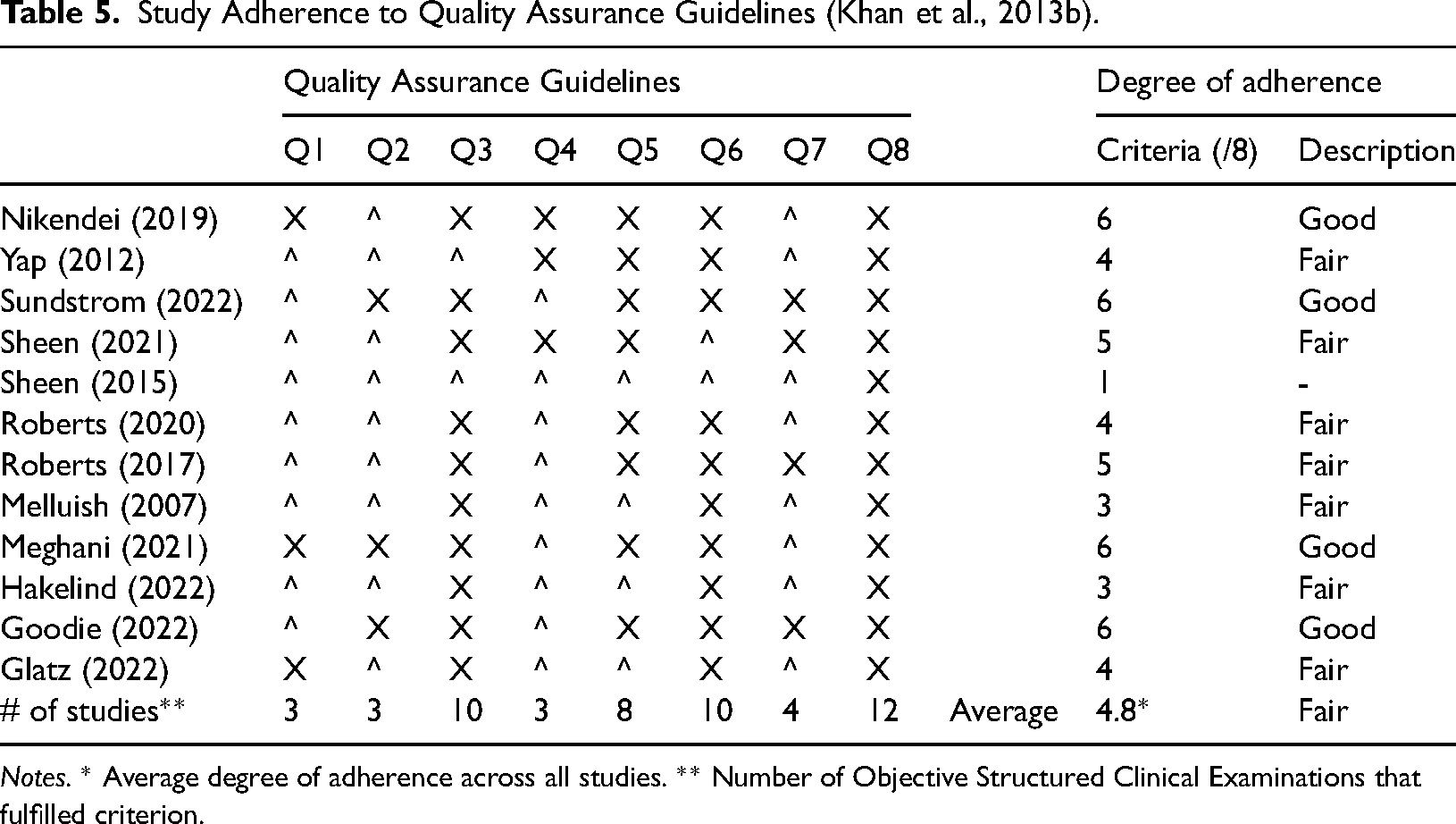

Adherence to QAGs

Assessment of studies revealed varied adherence to Khan et al.'s (2013b) QAGs. No examination satisfied all guidelines. Instead, studies fulfilled between one and six guidelines, with an overall average close to five. In accordance with the scoring criteria, degree of adherence to guidelines was rated as “Good” for four studies (Goodie et al., 2021; Meghani & Ferm, 2021; Nikendei et al., 2019; Sundström & Hakelind, 2022) and “Fair” for seven studies (Glatz et al., 2022; Hakelind & Sundström, 2022; Melluish et al., 2007; Roberts et al., 2017, 2020; Sheen et al., 2021; Yap et al., 2012). Overall adherence across studies was “Fair.” The least reported criteria were Validity (Q1), Peer Review of Stations (Q2), External Examiners (Q4), and Post-Hoc Psychometrics (Q7). On the other hand, all studies met criteria for Evaluation (Q8), followed by Objective Measures (Q3) and Standardised Patient Training (Q6), and more than half satisfied Examiner Training (Q5). See Table 5 for study adherence to QAGs.

Table 5. Study Adherence to Quality Assurance Guidelines (Khan et al., 2013b).

Notes. * Average degree of adherence across all studies. ** Number of Objective Structured Clinical Examinations that fulfilled criterion.

Discussion

The research on OSCEs highlights that specific tool construction and application can influence the correlation, validity, and reliability of this method of evaluation. Accordingly, the current systematic review aimed to infer the quality of OSCE assessments within the professional psychology training field by assessing implementation of OSCEs against QAGs (Khan et al., 2013b). To this end, this review found that overall adherence was “Fair” and by association, the same could be said for the likely psychometric quality of the exams. Analysis of QAGs met across the studies revealed varied patterns of adherence for particular components. These are discussed in descending order of adherence.

Evaluation

In contrast to post hoc analysis, evaluation considers the qualitative experience of key stakeholders within the examination process, to improve the quality and organization of future examinations (Oxlad et al., 2022). According to this definition, this review found that all studies gathered feedback from key participants, with some exceptions. Feedback was not obtained from SPs, and how feedback was used as part of the quality improvement process was not discussed. This is a lost opportunity for programs, as the benefits often associated with simulation depend on the realistic recreation of assessment scenarios within the examination (Yap et al., 2012).

Balancing the objective, standardized, and authentic aspects of OSCEs is critical. Therefore, it is recommended that, following completion of OSCEs, studies continue to invite examiners, students, and SPs to provide feedback on their experience. Information on factors such as flow of the examination, clarity of instructions, appropriateness of tasks, and real-world applicability of stations should be gathered at this time (Daniels & Pugh, 2018; Halman et al., 2020). SP feedback also has utility in forecasting experiences of students as practising psychologists, through questions such as “Based on your experience within this exam, how comfortable would you feel seeing this psychologist in real life?” and “Based on your experience within this exam, how likely would you refer a friend or family member to see this psychologist?”

Objective Measures

Most studies utilized custom-designed global rating scales (GRS). GRS are better at assessing the quality of multiple skills performed concurrently (Ilgen et al., 2015); however, their reliability is questionable for skills that require more subjective and pragmatic judgment, such as communication and interpersonal skills (Brannick et al., 2011; Cömert et al., 2016; Piumatti et al., 2021). Assessment of psychological competencies is more likely to require idiosyncratic judgments in comparison to some other health professions (Goodie et al., 2021). One strategy is to utilize multiple metrics (Ilgen et al., 2015). Checklists and rating scales are commonly used to mark different types of assessments, including OSCEs (Wood & Pugh, 2020). Subjectivity can be mitigated by adding task-specific checklists to global rating scales, which together improve overall validity (Khan et al., 2013a). Another strategy is to provide a more definitive rubric that has been adapted to the competency or task being assessed and contains behavioral anchors, allowing for greater discrimination by assessors (Donohoe et al., 2020).

Examiner Training

The majority of examiners underwent some form of training in advance of the OSCE; however, reporting of training content varied. Nevertheless, training examiners has been shown to reduce variation and improve consistency of scoring (Schüttpelz-Brauns et al., 2019). This is because the reliability of scores depends on examiner knowledge of OSCEs (Khan et al., 2013b). Accordingly, standardization of training protocols according to key learning outcomes may assist with maintaining quality, identifying knowledge gaps, and minimizing assessor bias. Several authors have drafted key learning outcomes for examiners within OSCEs (Khan et al., 2013b; Newble, 1988; Schüttpelz-Brauns et al., 2019).

Standardized Patients

All studies hired actors as SPs and provided different types of training. The variation in intensity and type is consistent with the understanding that SPs will require varying degrees of input to portray clinical conditions in a realistic, repeatable, and reliable manner (Cleland et al., 2009). This is critical because psychological conditions and vignettes differ significantly from the roles designed for medical examinations, from which SPs are usually hired (Goodie et al., 2021). Consequently, it would greatly benefit training programs to develop a database of SPs with expertise in roleplaying psychological conditions across different age ranges and severities. Furthermore, developing key competencies for SPs may allow for staff and students to be trained without compromising on authenticity (Goodie et al., 2021). Lastly, all SP performances need to be quality tested in advance of OSCEs to ensure portrayals are reliable and realistic (Khan et al., 2013b).

Validity

There was evidence that programs were invested in designing and implementing OSCEs as authentically as possible. This included narrative descriptions of rigorous protocols followed throughout the implementation process to ensure alignment with program curriculums and broader psychological competencies. This is necessary because psychology OSCEs have relied on descriptions from medical and other related professional programs, which do not cover the range of competencies required in clinical psychology, such as psychometric assessments and model-specific clinical intervention skills (Goodie et al., 2021; Harden, 2016). Furthermore, despite current findings around face validity (Roberts et al., 2017; Sheen et al., 2015; Yap et al., 2012), a limitation of “face-content” validity is that it is primarily an indicator of fairness and relevance and is not convincing unless supported by further evidence (Downing & Haladyna, 2004). Therefore, in the absence of quantifiable data, there is still uncertainty as to whether these OSCEs are valid for the field.

When assessing or evaluating OSCEs or pilot examinations, correlation with other measures of competence is a good indicator of validity (Khan et al., 2013a). This remains true in light of some competencies being assessed differently depending on whether they are delivered within an exam or demonstrated within an OSCE (Halman et al., 2020). Therefore, one recommendation is to compare OSCE results with other measures of competency (Vincent et al., 2022). Second, like the studies in this review, psychology programs need to blueprint OSCE stations and tasks against the program curriculum or standards relevant to the psychology profession (Sundström & Hakelind, 2022). Third, given that some competencies are assessed less reliably than others, it is necessary to determine which psychological competencies should be assessed by OSCEs. Lastly, investigating the association between OSCE scores and future placement performance or licensing exams has been recommended by several authors (Meghani & Ferm, 2021; Sheen et al., 2015; Sundström & Hakelind, 2022).

Peer Review of Stations

Peer Review of Stations was poorly adhered to across the included studies. Testing the test is vital because OSCEs are complex, multifaceted, resource-intensive, and costly projects in relation to other assessments (Daniels & Pugh, 2018). The large number of moving parts is likely to contribute to error variance unless problems are identified and corrected in advance (Pell et al., 2010). As such, peer review should screen (using either a checklist or standardized review form) for the most likely sources of bias, such as proactive simulated patients whose questions act as prompts to the students, inconsistent application of marking rubrics by assessors, and inconsistencies in design, equipment, and instructions across stations (Pell et al., 2010). As with all large-scale events, the flow and smooth operation on the day is directly correlated with the number of rehearsals and “dry runs” performed in advance of the OSCE (Khan et al., 2013b).

External Examiners

Most studies utilized faculty staff and did not hire external examiners. This presents a threat to the “objective” in OSCEs, as studies investigating supervisor feedback have found ratings to be positively skewed, unreliable, and influenced by student characteristics and contextual factors (Gonsalvez & Freestone, 2007). Accordingly, staff who are assessing familiar or known students may be more likely to feel pressure to pass them, even if they do not fully meet the competencies (Meghani & Ferm, 2021). In contrast, external examiners are more likely to be able to apply assessment rubrics in a rigorous, objective, and fair manner (Khan et al., 2013b). On the other hand, the convenience and cost-saving benefits of using faculty staff can be maintained in several ways. First, examiners can be required to demonstrate the relevant learning outcomes ahead of OSCEs (such as through pilot OSCEs and trial scoring) (Khan et al., 2013b). Second, utilizing multiple raters at each station is likely to improve reliability (Brannick et al., 2011). Third, video or audio recording student performances allows blinding procedures to be implemented to negate the influence of examiner relationships with students (Roberts et al., 2020). Another potentially cost-effective option is to train student raters, as recent studies have found their inter-rater reliabilities to be comparable to those of expert raters (Donohoe et al., 2020).
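Where two raters score the same performances, their agreement can be checked before results are finalized. The following is a minimal sketch that assumes Cohen's kappa as the agreement statistic for pass/fail decisions; the studies cited above do not necessarily use this statistic, and the function name and ratings are hypothetical.

```python
from collections import Counter


def cohens_kappa(rater_a, rater_b):
    """Chance-corrected agreement between two raters on categorical decisions."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("need two non-empty, equal-length lists of ratings")
    n = len(rater_a)
    # Proportion of performances on which the two raters agree.
    observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    # Agreement expected by chance, from each rater's category frequencies.
    counts_a = Counter(rater_a)
    counts_b = Counter(rater_b)
    expected = sum(counts_a[c] * counts_b[c] for c in counts_a) / n ** 2
    if expected == 1:
        raise ValueError("kappa is undefined when both raters use one identical category")
    return (observed - expected) / (1 - expected)


# Hypothetical pass/fail decisions by a faculty examiner and an external examiner.
faculty = ["P", "P", "F", "P", "F", "P", "P", "F"]
external = ["P", "P", "F", "F", "F", "P", "P", "P"]
kappa = cohens_kappa(faculty, external)
print(f"Cohen's kappa = {kappa:.2f}")
```

For continuous checklist or rating-scale totals, an intraclass correlation would usually be a more appropriate choice than kappa.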

Post Hoc Psychometrics

Post hoc analysis of OSCEs was poorly represented across all included studies. Where data were provided, they were either insufficient or too inconsistently reported to support the reliability or validity of the exam. This finding is consistent with those of a 2012 review of 104 medical OSCEs which reported that close to two-thirds of all studies failed to report on validity and reliability data (Patricio, 2012). Nevertheless, the heterogeneity in reporting can be understood as resulting from the lack of standardized procedures for the measurement and collection of data (Brannick et al., 2011), and the lack of a reporting structure and standardized quality metrics (Patricio, 2012). Accordingly, standardized guidelines on reporting examination data would allow for meaningful analysis at the institution and broader psychological field level. Pell et al. (2010) provide OSCE examination metrics that can be used to measure the overall quality of assessments. They identified six key metrics, including internal consistency, the coefficient of determination, and between-group variation. While based on medical OSCEs, these metrics provide a checklist that guides the reporting of results and the factors to consider when measuring and analyzing the effectiveness of OSCEs. Until psychology-specific metrics emerge, future programs are strongly recommended to design, analyze, and report according to these metrics. These metrics may also be useful in facilitating the development of psychology-specific criteria.
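To illustrate one of these metrics, internal consistency across stations is commonly summarized with Cronbach's alpha. The sketch below assumes that statistic and a simple layout in which each row holds one student's score at every station; the function name and the scores are hypothetical and are not drawn from any included study.

```python
from statistics import variance


def cronbach_alpha(station_scores):
    """Cronbach's alpha for rows of per-student scores, one column per station."""
    if len(station_scores) < 2:
        raise ValueError("need scores for at least two students")
    k = len(station_scores[0])
    if k < 2 or any(len(row) != k for row in station_scores):
        raise ValueError("every student needs a score for the same two or more stations")
    # Sample variance of each station (column) and of the students' total scores.
    station_vars = [variance(column) for column in zip(*station_scores)]
    total_var = variance([sum(row) for row in station_scores])
    if total_var == 0:
        raise ValueError("alpha is undefined when total scores have no variance")
    return (k / (k - 1)) * (1 - sum(station_vars) / total_var)


# Hypothetical scores for five students across four OSCE stations.
scores = [
    [7, 8, 6, 7],
    [5, 6, 5, 4],
    [9, 9, 8, 9],
    [6, 7, 6, 6],
    [4, 5, 3, 5],
]
alpha = cronbach_alpha(scores)
print(f"Cronbach's alpha = {alpha:.2f}")
```

Reporting a statistic of this kind alongside the other metrics, using a consistent layout, would make results easier to compare across programs.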

Methodological Quality and Review Limitations

The review findings should be considered in light of possible risk of bias in the included studies, as most indicated some risk of bias. Methodological quality and risk of bias of included studies were assessed using the MMAT, version 2018 (Hong et al., 2018). Overall, the majority of studies were categorized as “Low” to “Medium” risk of bias. This is promising, as poor methodological quality is a common criticism found within the literature across programs (Bobos et al., 2021; Brannick et al., 2011; Ilgen et al., 2015). Additionally, quantitative studies and quantitative components of mixed methods studies invited more risk. There may be several explanations for this finding. First, appropriateness and feasibility were significant considerations around which many study designs were created, and the novel and exploratory nature of the aims may have resulted in confounds and biases not being controlled. Second, post hoc psychometrics was a weakness across all studies but affected quantitative studies more. Third, mixed method designs primarily focused on qualitative objectives such as perceptions and experiences of OSCEs and accordingly scored higher on qualitative components.

There were also several limitations of this review. In particular, the small number of included studies limits generalizability of findings, and the inclusion of varied study designs makes comparisons subjective. However, this was to be expected given the dearth of literature examining OSCEs within psychology programs (Sheen et al., 2015). There was also a need for review of the study selection criteria in response to insights derived from the results. Expanding inclusion criteria may have facilitated greater coverage; however, without an overriding set of principles guiding OSCE development, heterogeneity in the reporting of data would nevertheless complicate meaningful comparison.

While a significant strength of the current review was its broad examination of studies against OSCE-specific QAGs, the way these guidelines were interpreted and appraised may be open to bias. The current review noted whether the guidelines were reported (like a checklist) instead of quantifying the extent of adherence to the guidelines. While this may have prevented extraction of more meaningful data, it does limit the degree of subjectivity. That said, the finding that OSCEs have “Acceptable” psychometric quality is consistent with findings from other reviews (Bobos et al., 2021; Brannick et al., 2011; Cömert et al., 2016; Ilgen et al., 2015; Yap et al., 2021).

Implications, Recommendations, and Future Research

The current review has several implications. First, there is a need to substantiate perceptions of validity, authenticity, and reliability within OSCEs with quantitative data. Second, greater focus needs to be placed on conducting statistical analysis of OSCE results and using the outcomes to enhance the quality of the examinations. Third, psychology programs should aim to standardize OSCE implementation and reporting of results.

While this review recommends utilizing the QAGs of Khan et al. (2013b), there are psychology-specific recommendations available that carry similar themes. These were not chosen for the study as they were not derived from analysis of examination data and also apply to other assessment types. For example, Yap et al. (2021) provide seven “tips” for implementing psychological OSCEs and Paparo et al. (2021) provide a set of guidelines for simulated learning assessments. It is important that psychology programs reach a consensus on the optimum guidelines and work toward standardizing the implementation and reporting of future clinical examinations, with consistent quality assessment and adherence to standardized indicators.

One of the major criticisms of OSCEs is the financial cost in comparison to other forms of assessment (Yap et al., 2012). OSCEs are expensive in terms of resources, time, and personnel required (Kaslow et al., 2009). However, they also enhance students’ learning experiences and provide an opportunity to safely translate knowledge into practice (Sheen et al., 2021). Although OSCEs are an expensive form of assessment, if the decision is made to use them, then an “all-in” mindset is required to ensure maximum return on investment. This will ensure adequate allocation of resources toward each guideline and increase the likelihood that benefits will be realized (Khan et al., 2013b). Furthermore, there are cost-effective strategies available to assist with overcoming barriers to implementation. For instance, psychology programs could share a library of vignettes, OSCE stations, and objective measures. Similarly, objectivity can be enhanced by creating an external examiner and SP database where faculty from one program can assess OSCEs from another program and vice versa. Examiner and SP training can be run across institutions and should aim to satisfy learning outcomes proven to improve OSCE implementation (Khan et al., 2013a).

Nevertheless, the current findings suggest that there is still significant scope and need for studies to pilot or evaluate OSCEs using quantitative designs and report data in accordance with guidelines (Pell et al., 2010). Other avenues of investigation include determining which psychological competencies are best assessed within the OSCE format. For instance, this could involve conducting studies to evaluate the effectiveness of OSCEs in assessing competencies such as crisis intervention skills or therapeutic alliance building, compared to traditional methods like written examinations or essays. A further objective should be to validate the psychometric properties of GRS and checklists used within OSCEs, and to demonstrate validity through convergence with other measures of clinical competency. This might involve comparing OSCE performance with performance on assessments such as case-based discussions of clinical decision making. Moreover, longitudinal research could be conducted to assess whether OSCE performance during training predicts future performance during clinical placements.

Conclusion

The current systematic review broadly examined OSCEs within psychology programs against a set of QAGs, finding overall adherence to be “Fair.” This finding raises concerns around current decisions being made on student competency within psychology programs using OSCEs. At the same time, there is promise, given that OSCEs are relatively new within the field and there is substantial scope for refinement (Sheen et al., 2015). It is hoped that this review will motivate future psychology programs to standardize development, implementation, and evaluation of OSCEs.

Footnotes

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.