Abstract

To aid in teaching dialogue skills a virtual simulator called

Introduction

Communication plays a pivotal role in many professions (e.g., Silverman et al., 2013), yet academic education, especially at the bachelor level, is predominantly aimed at developing theoretical knowledge. Even in programs in disciplines such as medicine and psychology that revolve around treating patients, advising others, or collaborating with colleagues,

Learning to communicate starts in childhood and continues well after academic education. Traditionally, communication was to a large extent learned as part of a hidden curriculum that did not find its way into formal education (e.g., Hafferty, 1998; Windolf, 1981). Still, basic communication training and advanced conversation methods may be a welcome addition to perhaps every curriculum, as communication is relevant in many fields (Lala et al., 2017). In healthcare psychology, at least in the Netherlands, verbal communication (or dialogue) skills are a prerequisite for admission to the post-master’s programs needed to acquire national registration as an individual healthcare professional, and therefore need to be taught at the bachelor and/or master level.

For many professional conversations, best-practice models exist, for example for delivering bad news (Van der Molen et al., 1995), persuading others (Cialdini, 1984; Perloff, 2008), negotiating (Fisher et al., 1981), and motivating people (Miller & Rollnick, 2013). But how should these be taught to students? The predominant mode of instruction at academic institutions remains lecturing (Handelsman et al., 2004; Lee et al., 2020; Konopka et al., 2015). This form of “passive learning” is associated with lower performance in various fields, including science, technology, engineering, and mathematics (Freeman et al., 2014). Dialogue skills are eminently tacit knowledge, which requires automated processes, including intuition and emotions. People's explicated cognitions rarely fully explain what they actually do (e.g., Demetriou & Wilson, 2009). Learning these skills effectively therefore requires active (learner-centered) learning (e.g., Berkhof et al., 2011; van der Vleuten et al., 2019). Organizing an environment for active learning is challenging, however, for reasons related to what is learned, how learning takes place, and how a practical and affordable learning environment can be provided.

Dialogue skills stand out among forms of tacit knowledge because of the dynamic circumstances in which they are applied. Any conversation involves at least two parties with their own goals and motives, who set objectives and, either explicitly or implicitly, adapt their strategies based on different interpretations (Hargie, 2011). In addition, students differ in initial skill levels and talents, which necessitates adaptive instruction. Meanwhile, how instructions land with students remains largely hidden from view, as students may even shield their mental processes to save face. Verbal communication is very personal.

This is where active learning through digital simulations may provide solutions. As people learn through experience and feedback, learning requires an environment that mimics the actual environment of skill application, at least to the extent that it provides a relevant and challenging experience. In the current academic training environment, prevalent methods include professional training actors and roleplay exercises between students (e.g., Berkhof et al., 2011; Deveugele et al., 2005). However, each of these methods has its drawbacks. Professional training actors are expensive, which makes training all students with them unrealistic. Professional actors are thus mostly reserved for assessment situations. Roleplay with fellow students is cost-efficient and does enable individual training. This form of practice is suboptimal, however: knowing each other personally may hinder students’ ability to become immersed in the roleplay, and because fellow students lack experience with the real-life situation, they mostly fail to identify with what is at stake. As a result, counterplay may not include the complex emotions essential to the experience. So how can we make verbal communication training scalable? Technology might provide an answer. Just as digital flight simulators enable pilots to practice challenging circumstances endlessly in a safe learning environment, simulations can do the same for many other complex skills (Aldrich, 2004). Simulation-based learning appears to be an effective means to facilitate the learning of a variety of complex skills in a variety of academic settings (Chernikova et al., 2020).

In medical education, where virtual patients are more prevalent, studies conclude that interactions with digital patients can be effective in the development of skills such as giving bad news and acquiring information (see Bosman et al., 2019 and Lee et al., 2020 for extensive reviews). Responsive simulations enable the student to apply present knowledge, and by providing direct feedback they inform students about what they already know and where they fall short. The case and the context of the simulation clarify the skills’ importance, as they show the consequences of failure and success. Simulations thrive on motivation: the motivation to perform, and when one falls short, the motivation to learn (Aldrich, 2009).

To enable experiential (verbal) communication training with simulations for large groups of students, between 2013 and 2016 Utrecht University in the Netherlands developed a virtual simulator aimed at training dialogues. This simulator—

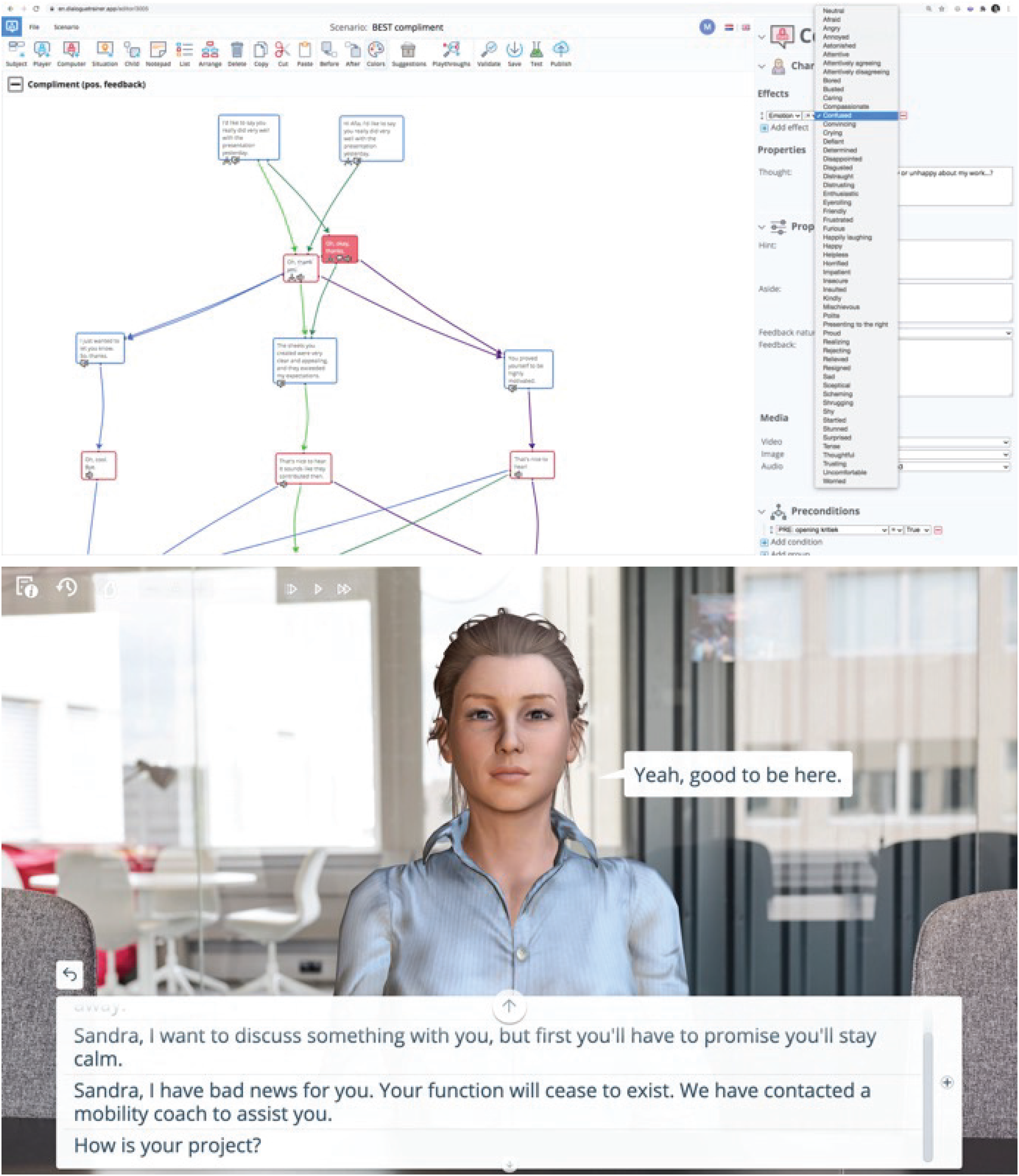

Impression of the editor (top) and user interface (bottom) of the current version of the DialogueTrainer platform (the successor to the

How does learning through such a platform compare to traditional communication teaching instruments such as written text and lectures? These well-tested and commonly used teaching methods clearly have value (e.g., French & Kennedy, 2017), and are designed to help students gain knowledge about conversation dynamics and behavioral patterns. This knowledge is assumed to help students set reasonable objectives in situations and evaluate the effect of a route of action. As compared to the passive “classical” learning instruments, the online

The quality of a simulation is thought to depend partly on its realism (e.g., Aldrich, 2009). The interaction within the

To assess the quality of the platform as a teaching instrument, and advance knowledge about the instructional design of dialogue simulations, we set out to explore the possible effects of the simulations (and platform) on learning as part of a real-life dialogue skills training course in a Psychology bachelor program in the Netherlands. We set up two related one-day experiments during subsequent iterations of the course, comparing the effect of using the

Materials and Methods

Research Setting

The psychology curriculum in the Netherlands is divided into a three-year bachelor’s program and a one-year master’s program (with additional post-master education for healthcare psychologists). In the second or final year of the bachelor program, students participate in a 10-week “professional dialogue skills training course.” The course is obligatory for most students, and one can choose between several different modules within this course depending on the specialization within the bachelor's program. In spring 2015 and 2016, one module of this course focused on bad news conversations and involved the use of the

Participants

All participants (

Study Design

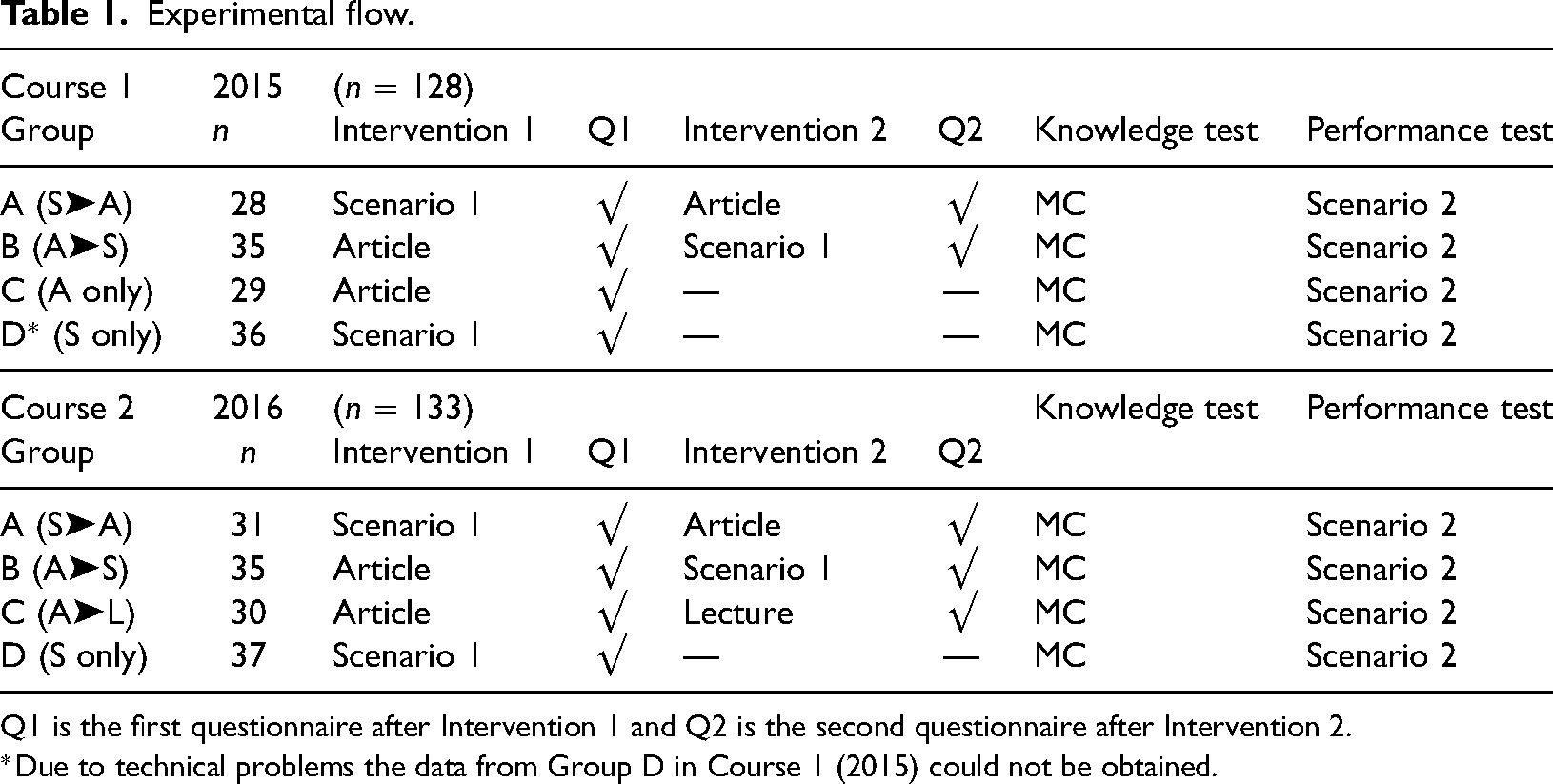

In both 2015 and 2016, the experiment was set up as a quasi-randomized controlled trial. Students were assigned to one of four groups (see Table 1). This assignment was as follows: each student was part of one of four workgroups in the course. Students from the first workgroup were assigned to Group A, the second to Group B, and so on. Since allocation to a specific workgroup in the course during enrolment occurred on a random basis, the allocation to our experimental groups can be deemed random. Each group was presented with one or two instructive interventions about

Experimental flow.

Q1 is the first questionnaire after Intervention 1 and Q2 is the second questionnaire after Intervention 2.

*Due to technical problems the data from Group D in Course 1 (2015) could not be obtained.

After each of the interventions, each student filled out an online “motivation” questionnaire. In addition, a set of “emotion cards” (e.g., Hulsbergen et al., 2019) was presented to gauge the emotions students experienced during the interventions. However, as the use of emotion cards had not yet been validated at the time, these data were not explored further.

Each intervention, including filling out the questionnaires, took about 1.5 h. Around 4 h after the start of the experiment, all participants took a short multiple-choice knowledge test about delivering bad news, after which each participant played a different bad news scenario.

Interventions

The article students read was a chapter from van der Molen et al. (1995, pp. 143–154), which is commonly used in the Dutch psychology curriculum. Both scenarios were based on the same chapter, in terms of phases in the conversation, alternative routes of action which relate to learning goals, and the effects of those routes of action. The scenarios presented students with a practical situation, namely having to refer a patient to an alternative therapist, which the patient is expected to find undesirable. The scenarios were based on theory from the article, combined with best-practice expertise from professionals drawn from interviews. This resulted in a believable course of the interaction, including answer options in line with what players would say and believable responses from the virtual character. Scenarios were tested individually before implementation, to improve the matching of answer options to the students’ learning goals.

Scenarios were “played” using the

The lecture was based on the book chapter but added a perspective on the function of emotions during the five phases of processing bad news or mourning as described by Kübler-Ross (1969). These phases correspond to the phases that van der Molen et al. (1995) present as the best-practice approach.

Instruments and Outcome Measures

Questionnaire

The (5-point Likert-scale) questionnaire was aimed at six aspects of motivation:

Immersion and Usability were translated and adapted from the

For each participant, the average score on each of the scales on the motivation questionnaire was calculated. Cronbach's alpha was calculated for each scale and appeared reasonable (all

Performance Test

Because

Knowledge Test

The knowledge test consisted of 10 multiple-choice questions based on the book chapter. For several questions, more than one answer was correct, such as “Which emotions can you expect to encounter during a bad news talk?”, for which all options were correct. For these questions, one point was given for each correct option and one point was deducted for each wrong option. For the other (regular) multiple-choice questions, the correct answer yielded one point. This resulted in a maximum possible score of 17 points; scores were converted into percentages. Cronbach's alpha for the knowledge test overall was 0.71.
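The scoring rule described above can be illustrated with a minimal Python sketch. The `Question` class, the function names, and the two-question example test are hypothetical and are not the actual test items. The sketch also assumes that "wrong option" means a selected option that is not correct, and that scores are not floored at zero; the text does not specify either point.

```python
from dataclasses import dataclass
from typing import FrozenSet, Iterable, Sequence


@dataclass(frozen=True)
class Question:
    """Hypothetical representation of one multiple-choice question."""
    correct: FrozenSet[str]   # labels of the correct options
    multi: bool               # True if more than one option may be selected


def score_question(question: Question, chosen: Iterable[str]) -> int:
    """Score one question under the rule described in the text."""
    selected = set(chosen)
    if question.multi:
        # +1 per correct option selected, -1 per wrong option selected.
        # Assumption: the result is not clamped at zero.
        return len(selected & question.correct) - len(selected - question.correct)
    # Regular question: one point only for the single correct answer.
    return 1 if selected == set(question.correct) else 0


def max_points(questions: Sequence[Question]) -> int:
    """Maximum attainable score: all correct options, no wrong ones."""
    return sum(len(q.correct) if q.multi else 1 for q in questions)


def percentage_score(questions: Sequence[Question],
                     answers: Sequence[Iterable[str]]) -> float:
    """Convert the raw point total into a percentage of the maximum."""
    total = sum(score_question(q, a) for q, a in zip(questions, answers))
    return 100.0 * total / max_points(questions)


# Hypothetical two-question test (not the study's actual items).
example_test = [
    Question(correct=frozenset({"a", "b", "c", "d"}), multi=True),
    Question(correct=frozenset({"b"}), multi=False),
]
example_answers = [{"a", "b"}, {"b"}]
example_percentage = percentage_score(example_test, example_answers)
```

With this rule, a test whose maximum is 17 points would be scored the same way: sum the per-question points and divide by 17.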

Statistical Analyses

Since the present study is exploratory in nature, we conducted separate analyses on each motivation questionnaire scale, on game scores, and on knowledge test scores. All analyses were performed in JASP 0.16 (JASP Team, 2022). Where assumptions of normality and/or homoscedasticity were violated, bootstrap procedures were applied using JASP's R module, based on Berkovits et al. (2000) and Spychala et al. (2020; code obtained from https://nadinespy.github.io).
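To illustrate the general idea behind bootstrapping when distributional assumptions are in doubt, the following Python sketch computes a simple percentile bootstrap confidence interval for a difference between two group means. This is a generic textbook procedure and not the Berkovits et al. (2000) method or the R code used in the study; the function name, parameters, and scores are hypothetical.

```python
import random
from statistics import mean
from typing import List, Tuple


def bootstrap_mean_diff_ci(group_a: List[float],
                           group_b: List[float],
                           n_boot: int = 2000,
                           alpha: float = 0.05,
                           seed: int = 0) -> Tuple[float, float]:
    """Percentile bootstrap CI for mean(group_a) - mean(group_b)."""
    rng = random.Random(seed)  # fixed seed so the sketch is reproducible
    diffs = []
    for _ in range(n_boot):
        # Resample each group with replacement, keeping its original size.
        resampled_a = [rng.choice(group_a) for _ in group_a]
        resampled_b = [rng.choice(group_b) for _ in group_b]
        diffs.append(mean(resampled_a) - mean(resampled_b))
    diffs.sort()
    # Take the alpha/2 and 1 - alpha/2 quantiles of the resampled differences.
    lower_idx = int(n_boot * alpha / 2)
    upper_idx = min(n_boot - 1, int(n_boot * (1 - alpha / 2)))
    return diffs[lower_idx], diffs[upper_idx]


# Hypothetical scores, made up for illustration only.
scores_a = [10.0, 11.0, 12.0, 13.0, 14.0]
scores_b = [0.0, 1.0, 2.0, 3.0, 4.0]
ci_low, ci_high = bootstrap_mean_diff_ci(scores_a, scores_b)
```

If the resulting interval excludes zero, the resampled data are consistent with a difference between the groups without relying on normality of the scores.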

Questionnaire

For the motivation questionnaire, we focus on the interaction between group and questionnaire instance (after Intervention 1 or after Intervention 2). This leaves Groups A (first scenario, then article) and B (first article, then scenario) in both experiments (2015 and 2016) and Group C (first article, then lecture) in 2016 only. First, we conduct a three-way mixed analysis of variance (ANOVA) on the effect of the intervention order, with group (Groups A and B only) and year as between-subjects factors and questionnaire instance (1 or 2) as a within-subjects factor. If year does not interact with group, we subsequently collapse the data across the two experiments to gain more power in comparing these groups. To investigate the effect of a lecture as a second intervention, we then focus on 2016 only in a 2 (Group B vs. Group C) × 2 (questionnaire instance) ANOVA with group as a between-subjects factor and questionnaire instance as a within-subjects factor.
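For the special case of a 2 (group) × 2 (questionnaire instance) mixed design, the group × instance interaction described above is equivalent to an independent-samples t-test on the per-participant difference scores (Q2 minus Q1), with F(1, N − 2) = t². The Python sketch below shows this equivalence only; it does not cover the three-way analysis with year and was not part of the study's JASP workflow. The function name and the scores are hypothetical.

```python
from math import sqrt
from statistics import mean, variance
from typing import List, Tuple

# Each participant is a (Q1, Q2) pair of scale scores.
Scores = List[Tuple[float, float]]


def interaction_test(group_1: Scores, group_2: Scores) -> Tuple[float, int, float]:
    """Return (t, df, F) for the group x instance interaction in a 2x2 mixed design."""
    # Difference score per participant: change from Q1 to Q2.
    diffs_1 = [q2 - q1 for q1, q2 in group_1]
    diffs_2 = [q2 - q1 for q1, q2 in group_2]
    n1, n2 = len(diffs_1), len(diffs_2)
    df = n1 + n2 - 2
    # Pooled variance of the difference scores (classic Student t-test).
    pooled_var = ((n1 - 1) * variance(diffs_1) + (n2 - 1) * variance(diffs_2)) / df
    std_error = sqrt(pooled_var * (1 / n1 + 1 / n2))
    t = (mean(diffs_1) - mean(diffs_2)) / std_error
    # The interaction F statistic has (1, df) degrees of freedom and equals t squared.
    return t, df, t * t


# Hypothetical 5-point Likert scale averages, made up for illustration only.
scenario_first = [(3, 2), (4, 3), (4, 4), (5, 3)]   # e.g., scenario then article
article_first = [(2, 3), (3, 4), (3, 5), (4, 4)]    # e.g., article then scenario
t_value, dof, f_value = interaction_test(scenario_first, article_first)
```

Obtaining a p-value would additionally require the t or F distribution, which is omitted here to keep the sketch free of external libraries.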

Performance Test

The performance test was analyzed similarly to the questionnaires, with a three-way mixed ANOVA on the effect of the intervention order, with group (Groups A and B only) and year as between-subjects factors and scenario instance (1 or 2) as a within-subjects factor. If year does not interact with group, we subsequently collapse the data across the two experiments to gain more power in comparing these groups. To investigate the effect of having a second intervention, we then focus on 2016 only in a 3 (Groups A, B, and D) × 2 (scenario instance) ANOVA with group as a between-subjects factor and scenario instance as a within-subjects factor.

Knowledge Test

The knowledge test was analyzed separately for each experiment. We used a one-way ANOVA for each year to compare the test scores between groups.
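As a reminder of what the one-way ANOVA computes, the following Python sketch derives the F statistic from between-group and within-group sums of squares. It is a generic illustration rather than the JASP analysis itself; the function name and the percentage scores are hypothetical.

```python
from statistics import mean
from typing import List, Sequence, Tuple


def one_way_anova_f(groups: Sequence[List[float]]) -> Tuple[float, int, int]:
    """Return (F, df_between, df_within) for a one-way between-subjects ANOVA."""
    all_scores = [score for group in groups for score in group]
    grand_mean = mean(all_scores)
    # Between-group variability: group means around the grand mean.
    ss_between = sum(len(g) * (mean(g) - grand_mean) ** 2 for g in groups)
    # Within-group variability: individual scores around their group mean.
    ss_within = sum((score - mean(g)) ** 2 for g in groups for score in g)
    df_between = len(groups) - 1
    df_within = len(all_scores) - len(groups)
    f_stat = (ss_between / df_between) / (ss_within / df_within)
    return f_stat, df_between, df_within


# Hypothetical percentage scores for three groups, made up for illustration only.
example_groups = [
    [50.0, 60.0, 70.0],
    [60.0, 70.0, 80.0],
    [70.0, 80.0, 90.0],
]
f_stat, df_b, df_w = one_way_anova_f(example_groups)
```

The F statistic is then compared against the F distribution with (df_between, df_within) degrees of freedom to obtain a p-value, a step that statistical software such as JASP performs automatically.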

Results

We will describe our three outcome measures separately, first focusing on the responses to the motivational questionnaires. How do the different interventions influence the students’ immersion, experiences, motivation to learn, and self-efficacy? Then, we will focus on a practical performance measure. Do the different interventions influence performance in playing a new

Motivation Questionnaires

Each of the scales analyzed adhered to the assumptions for a regular ANOVA. We first conducted a 2 × 2 × 2 mixed ANOVA with questionnaire instance (1 or 2; see Table 1) as a within-subject factor, and Group (A or B) and year (2015 or 2016) as between-subject factors, for each of the motivation questionnaire scales. For none of the six motivation scales, the year of the experiment interacted with the group or questionnaire instance and group (all

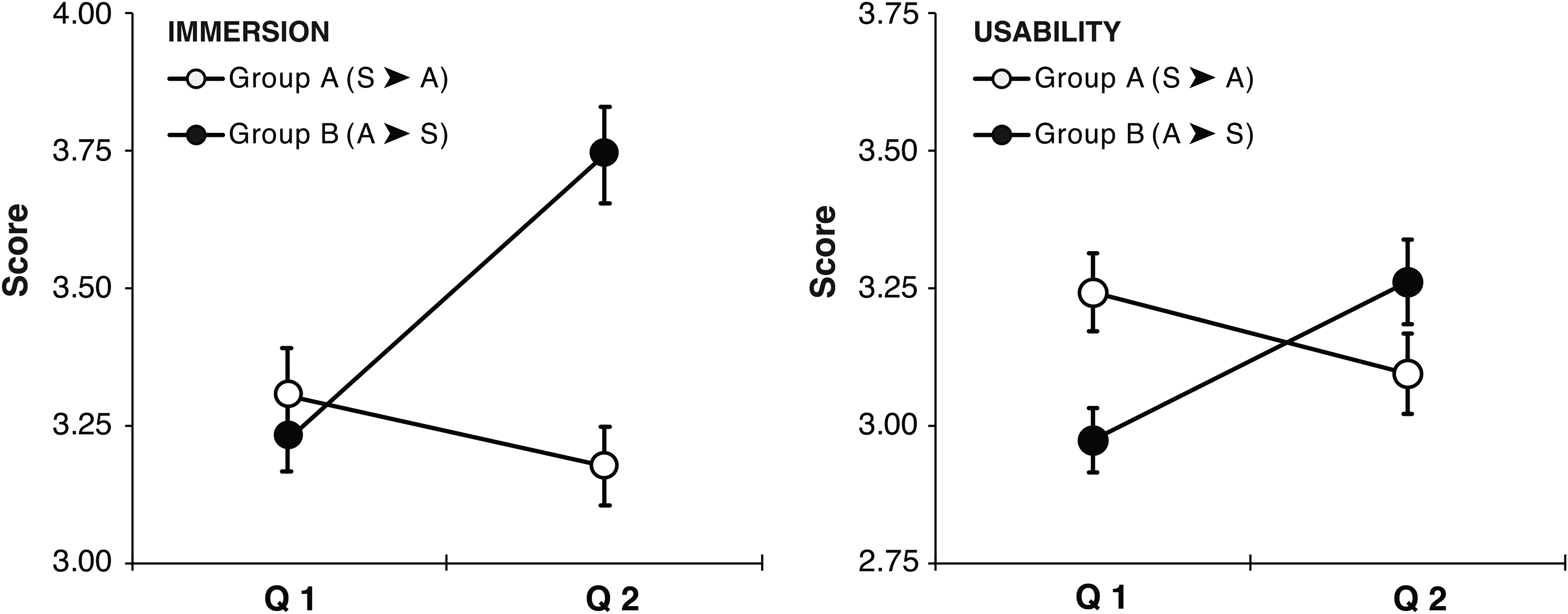

Usability Scales

For both the

Scores on the immersion (left) and usability scale (right) after the two interventions. Q1 and Q2 denote the two instances in which the questionnaire was filled out. Open circles show the scores for Group A that played a scenario as the first intervention and read the article as the second intervention. Closed circles show the scores for Group B that started by reading the article and played the scenario as the second intervention. Error bars denote ±1 SEM.

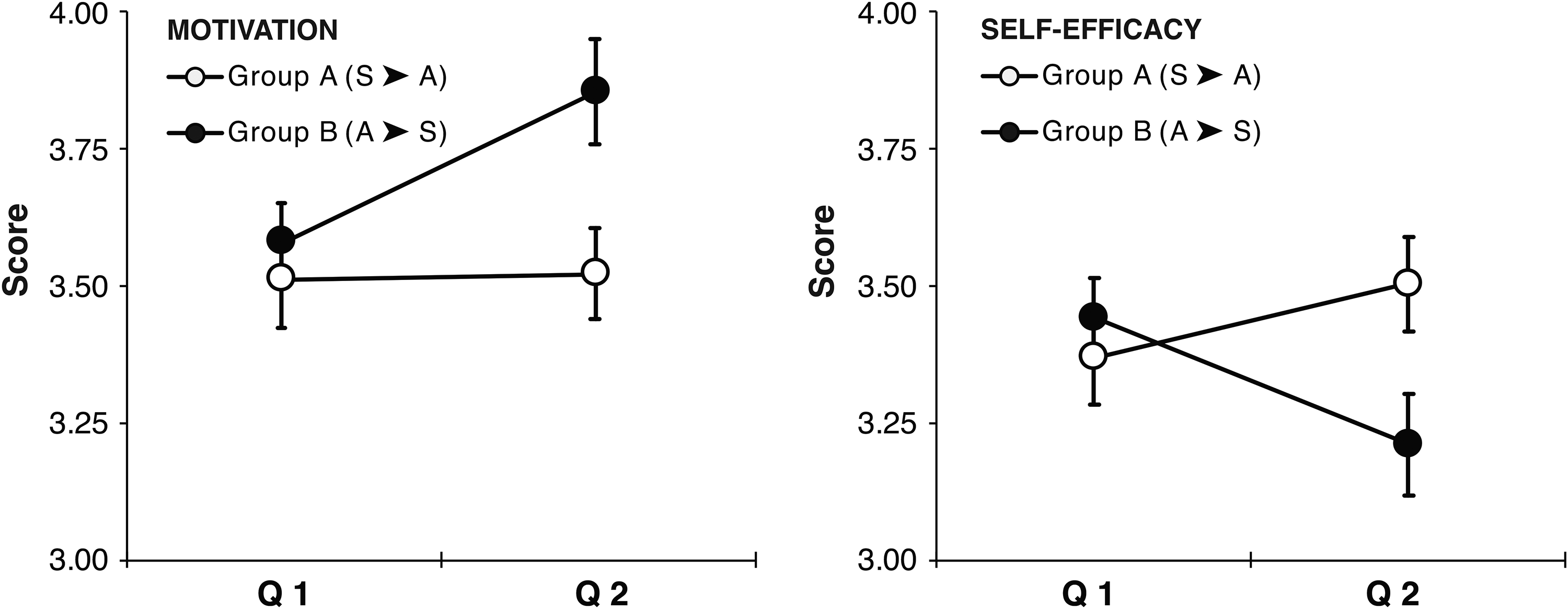

Motivation Scales

For both the

Scores on the motivation (left) and self-efficacy scale (right) after the two interventions. Q1 and Q2 denote the two instances in which the questionnaire was filled out. Open circles show the scores for Group A that played a scenario as the first intervention and read the article as the second intervention. Closed circles show the scores for Group B that started by reading the article and played the scenario as the second intervention. Error bars denote ±1 SEM.

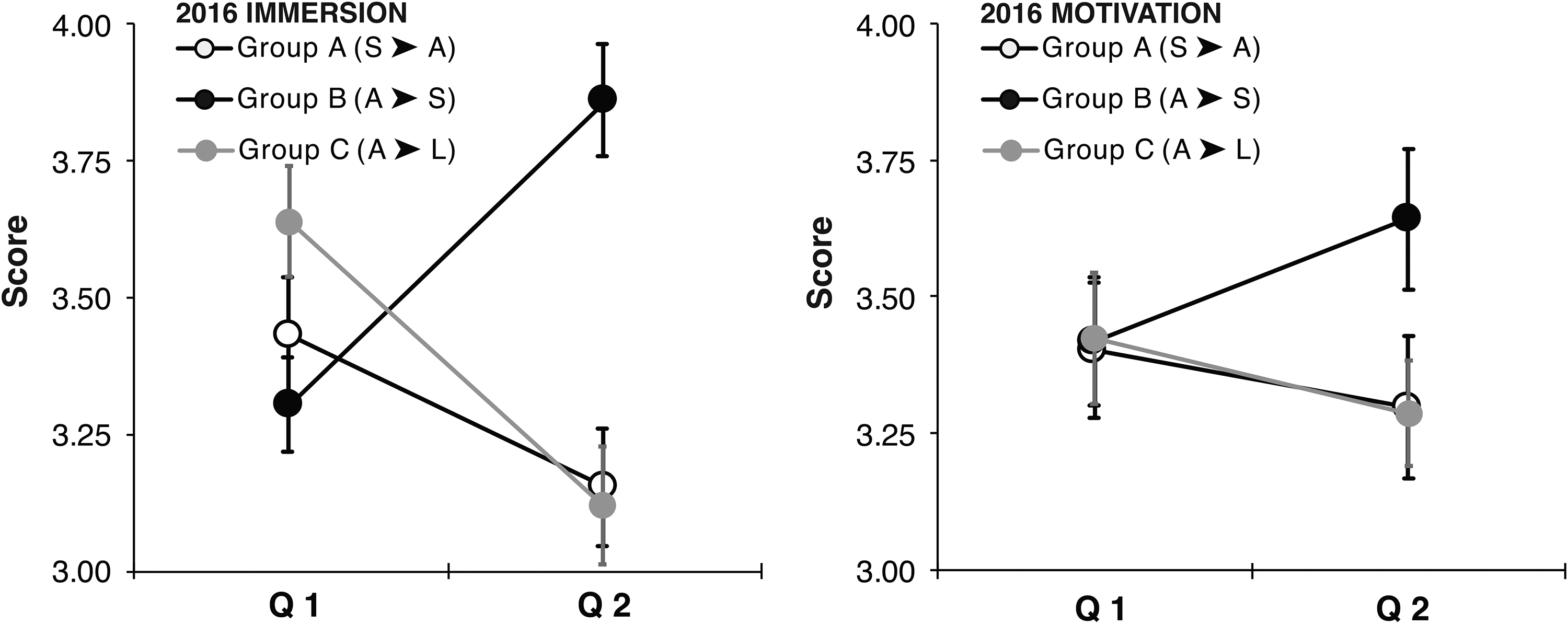

Comparison to a Lecture

To compare the differential effects of playing a scenario and listening to a lecture we subsequently compared Group B (A➤S) with Group C (A➤L) for the second experiment (2016) only (Figure 4 and Supplemental Tables S5 and S6). Since we have about half the number of participants, it is not surprising that we only find significant interactions on a few scales:

Scores on the immersion (left) and motivation (right) scale after the two interventions. Q1 and Q2 denote the two instances in which the questionnaire was filled out. Black circles show the scores for Group B that started by reading the article and played the scenario as the second intervention. Grey circles show the scores for Group C that started by reading the article and attended a lecture on the subject as the second intervention. For reference, open circles show the scores for Group A that played a scenario as the first intervention and read the article as the second intervention. Error bars denote ±1 SEM.

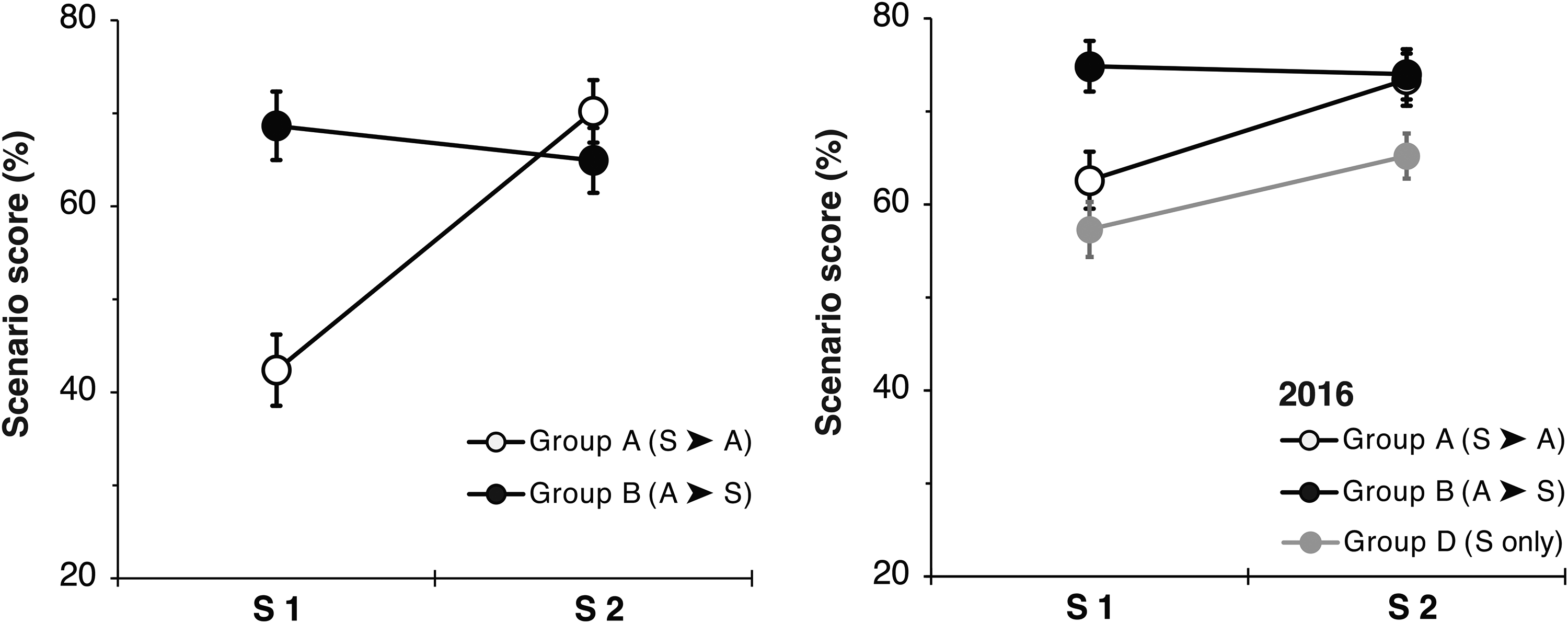

Performance Test

We analyzed whether scores on the second scenario (at the end of the session) were differentially affected by the different interventions. Since playing such a scenario may take a little practice, we were mainly interested in the possible improvement in scenario scores. Since the 2 × 2 × 2 three-way mixed ANOVA (with questionnaire instance as within-subject factor, and group and year as between-subject factors) did not yield a significant interaction between year and group (

Performance scores on the scenarios averaged across years for Groups A and B (left), and for 2016 only to also include the performance of Group D (right). Error bars denote ±1 SEM.

For students from Group D (2016 only), who only played two scenarios without reading the article, the score on the second scenario also improved significantly (see Figure 5, right panel and Supplemental Table S7).

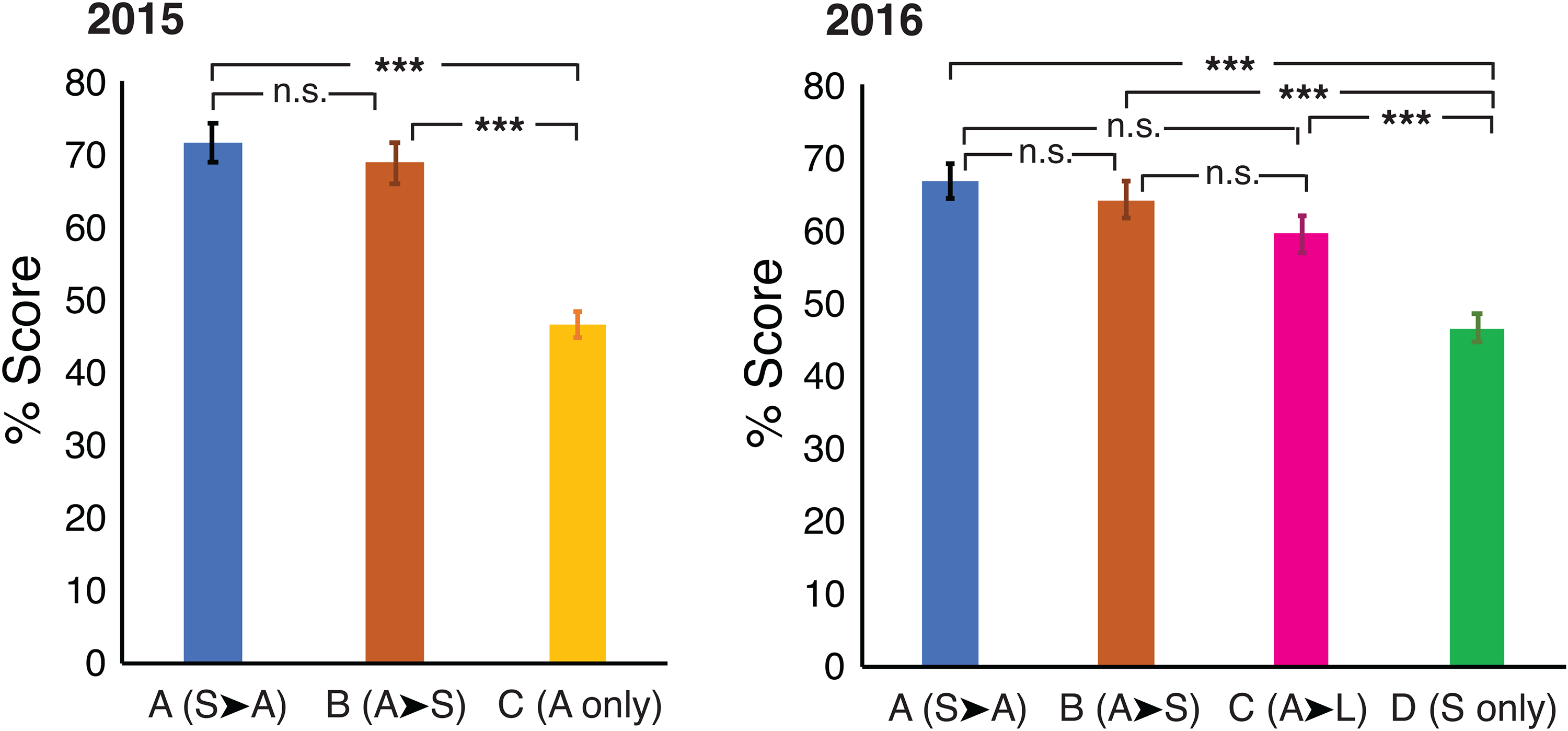

Knowledge Test

For both experiments, the scores on the knowledge test adhered to the assumptions for a regular ANOVA. To compare the scores on the knowledge test, we performed a one-way ANOVA for each experiment (2015 and 2016). For the three groups in 2015, there was a significant effect (

Discussion

We investigated whether the use of an online simulation platform can aid in teaching dialogue skills in (in our case) undergraduate higher education. Rather than using the more common qualitative methods (such as focus groups), we organized one day of an existing dialogue skills course as an experimental setting with a quasi-randomized controlled trial design in two subsequent iterations of this course. In this design, we used three different types of outcome measures: engagement and motivation as experienced by the students (operationalized by the questionnaires), a practical performance measure (operationalized as the score on a second scenario), and a theoretical performance measure (operationalized as the score on a multiple-choice knowledge test). On all these outcome measures, we observed an effect of using the online simulation platform, yet its precise role is not clear-cut.

Discussion of the Results

Playing a scenario using the platform resulted in a higher reported

Similarly, the scores on a second scenario at the end of the experiment were higher than those on the first scenario,

Finally, the scores on the theoretical multiple-choice knowledge test (a traditional testing instrument) suggest that time on task (e.g., Guillaume & Khachikian, 2009) probably accounted for the increase in scores when a second intervention, either playing the scenario or attending a lecture on the subject, was added to reading the article (Figure 6). Interestingly, only reading the article and only playing the scenario did not appear to differentially affect performance on the knowledge test. The simulations were not designed to teach students theoretical insights but to let them practice skills. Yet, the score increase for playing the scenario (after reading the article) was at least comparable to the increase when a lecture was attended. Moreover, although these conditions were not directly compared, as they were part of different experiments in different years, the scores on the knowledge test for students who only read the article appear comparable to those for students who only played the scenario, which at least in part concurs with research showing that “active” learning improves study performance compared to “passive” learning (e.g., Freeman et al., 2014). This may also be one of the strengths of this platform, as it can provide written feedback during as well as after playing the scenario (Jeuring et al., 2015).

Scores on the knowledge test for the 2015 experiment (left) and the 2016 experiment (right). Groups are indicated on the

Limitations

Still, simulations on the

Our study also has limitations, the most important being that we cannot directly compare the effect of reading an article and playing the scenario, since technical problems prevented us from including the scenario-only condition in 2015. A considerable number of students who started with a scenario in 2015 (Group A) also scored very low on the first scenario (compare the left [aggregate] and right [2016 only] panels in Figure 5). In retrospect, we can only speculate on why this occurred specifically in this group. It was the first instance that

In addition, we chose to incorporate the experiment in a real-life classroom setting. Students who took part in the experiments were enrolled in a course on dialogue skills and were therefore possibly already interested in the topic. This setting also meant that we could not include a very large number of participants in each experiment and experimental group, which somewhat diminished our statistical power. The effects on the performance measure (second scenario) and knowledge test are interesting but give no information on whether any of the interventions aids retention more than another beyond a single day, which admittedly is not a very realistic or useful time frame for retention in real-life education. However, anecdotal observations from later years in this and other programs do appear to indicate at least some retention: students still recall that they should break the news right away and not avoid an unavoidable confrontation. This concurs with studies described by Freeman et al. (2014), and by Lee et al. (2020) for the use of simulations in communication training specifically, which show positive long-term effects of active compared to passive learning.

Further Research

Conclusion

Notwithstanding the above-mentioned shortcomings, we have demonstrated that simulations such as those provided by

Supplemental Material

Supplemental material, sj-docx-1-plj-10.1177_14757257221138936 for Exploring the use of Online Simulations in Teaching Dialogue Skills by Michiel H. Hulsbergen, Jutta de Jong and Maarten J. van der Smagt in Psychology Learning & Teaching

Footnotes

Acknowledgments

We would like to thank Dr. Richta IJntema for graciously including the presented experiments in her course on dialogue skills and for many fruitful discussions, and Sofia Barocca for her comments on an earlier draft of this manuscript.

Declaration of Conflicting Interests

The authors declared the following potential conflicts of interest with respect to the research, authorship, and/or publication of this article: The first author is currently the CEO of DialogueTrainer B.V.

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.

Supplemental Material

Supplemental material for this article is available online.

Notes

Author Biographies

References
