Abstract

The benefits of practice testing for long-term learning are well established in many contexts. However, little is known about learner characteristics that might moderate its effectiveness. The effects of practice tests might depend on individual prerequisites for learning, especially in real-world educational settings. We explored whether the effects of practice testing in a regular university lecture would depend on cognitive (e.g., prior knowledge), motivational (e.g., learning motivation), or emotional (test anxiety) dispositions. We implemented an experimental intervention design in psychology courses for teacher students (N = 208). One week before the lecture, focal learner characteristics were assessed. Immediately after the lecture, participants completed an online review session with short-answer questions (practice testing with corrective feedback) or summarizing statements (restudy), alternating within each participant. One week later, retention of learning contents was assessed with a criterial test containing short-answer and multiple-choice questions. A testing effect emerged (ηp² = .07), with better retention for the tested compared with the restudied contents. Some learner characteristics affected learning outcomes, but no interactions with testing vs. restudy occurred. These results suggest that the testing effect in the university classroom is a robust phenomenon that benefits learning irrespective of primary individual learning prerequisites.

Keywords

The Testing Effect in the Lecture Hall: Does it Depend on Learner Prerequisites?

Learning in a university context is demanding. Students are required to understand and encode large amounts of information in a short period of time, retain the knowledge until the exam and hopefully beyond, and retrieve and apply the knowledge when it is needed. Learners receive little direct support and guidance in their learning activities, especially in larger courses. One promising way to foster long-term retention even in large-scale university courses is practice testing, that is, practicing the retrieval of learned information (Roediger & Butler, 2011). In typical studies on retrieval practice, a group of students who receive practice tests is compared with a group of students who are given the opportunity to restudy the material. According to a survey by Karpicke et al. (2009), restudying matches students’ typical study behavior when they study on their own (but see Kuhbandner & Emmerdinger, 2019, who report that students use rereading purposefully for difficult passages and often combine different strategies, including testing in later stages of learning). For example, in a classic study, Roediger and Karpicke (2006) asked students to read an expository text that was divided into subsections. After each section, participants either reread the text (restudy group) or were asked to recall as much information as possible and write it down (testing group). In a test immediately after learning, the restudy group performed slightly better than the testing group (81% vs. 75% retention). In tests two days and one week later, however, students who had recalled the learned information as part of their study activities remembered more than students who had reread the text sections (two days: 68% vs. 54%; one week: 56% vs. 42%).

The testing effect has been demonstrated in a wide variety of studies using different materials, learning periods, and laboratory and field contexts. Meta-analytic results suggest that the testing effect is a robust phenomenon with medium to large effect sizes (g = 0.51, Adesope et al., 2017; g = 0.50, Rowland, 2014). The effect also holds in real-world educational settings (g = 0.33, Yang et al., 2021) and in psychology classes (d = 0.73, Schwieren et al., 2017); all of these effect sizes refer to comparisons of testing vs. restudying. Likewise, the review by Agarwal et al. (2021) found that 57% of 49 studies in real-world educational settings had medium or large effect sizes. These reviews also revealed important moderators of the testing effect. For example, retrievability, that is, how successfully learners can retrieve the information during practice, is an important factor that can affect test performance. For retrieval attempts without feedback, high retrievability is a precondition for direct positive effects of testing, because only successful retrieval attempts elicit retrieval practice (Greving et al., 2022; Greving & Richter, 2018). For retrieval attempts with feedback, retrievability seems to be less important (Kornell & Vaughn, 2016; Kornell et al., 2015). Feedback, specifically corrective feedback, might increase the testing effect in two ways. It consolidates correct responses, and it alerts students to knowledge gaps and misconceptions, which might promote indirect positive effects of testing. Finally, testing seems to be superior to restudying especially when learning outcomes are assessed after longer retention intervals of one week or more rather than shortly after learning (Rowland, 2014).

Compared with other potential moderators, learner characteristics have received relatively little attention. Yet they represent one theoretically important class of potential moderators of the testing effect, alongside materials, the retrieval task, and contextual variables (Kubik et al., 2021). These characteristics range from cognitive variables (e.g., prior knowledge) to noncognitive variables (e.g., motivational and emotional dispositions). A number of them might theoretically affect the effectiveness of practice testing, especially in self-regulated learning, where learners’ degrees of freedom are high. The present study examined the role of a range of learner characteristics for the testing effect in large university psychology courses for teacher students, a population that typically exhibits large variability in learner characteristics.

In the following section, we will briefly review potential mechanisms underlying the effects of practice tests on learning. This review provides the basis for a discussion of how various cognitive, motivational, and emotional learner dispositions might interact with practice testing during learning.

Cognitive Mechanisms Underlying the Testing Effect

Practice tests might benefit learning in two ways. First, practice tests have been shown to directly improve later retrieval of knowledge from long-term memory (direct testing effect). According to the elaborative retrieval hypothesis (Carpenter, 2009), retrieving the response to a question in the practice test leads to the activation of related information, which strengthens the integration of the tested information in long-term memory. A similar account is the mediator effectiveness hypothesis (Pyc & Rawson, 2010), which proposes that practice testing creates better mediators, that is, information linked to the target information, which can be activated by a cue. A third major theoretical account of the testing effect is transfer-appropriate processing (Morris et al., 1977), meaning that testing creates conditions during learning that are more similar to the later criterial test, compared to restudying. This similarity of learning and the test, in turn, is assumed to benefit performance in the criterial test. In sum, direct testing effects are assumed to occur because practice testing activates elaborative processes during learning, creating memory structures that foster the retrieval of information in a later test of the learning outcomes.

Second, practice testing might also benefit learning via an indirect route (indirect or mediated testing effect), especially when feedback is provided. In this scenario, practice tests inform test takers about their current performance level, preventing the illusion of knowing (Glenberg et al., 1982), which may occur during restudy. Accordingly, practice testing may improve metacognitive processes, such as judgements of learning, and also indirectly improve the regulation of subsequent self-directed learning activities (e.g., Barenberg & Dutke, 2019; Soderstrom & Bjork, 2014).

Learner Characteristics Potentially Moderating the Testing Effect

Even though positive effects of practice tests seem to be robust, their magnitude may still depend on moderating or boundary conditions (McDaniel & Butler, 2010; see also the meta-analysis by Rowland, 2014). Research exists on the moderating role of instructional characteristics in educational settings. These include the provision of feedback (e.g., Butler & Roediger, 2008), the type of questions used during practice testing (multiple-choice vs. short-answer questions, e.g., Greving & Richter, 2018; McDaniel & Little, 2019), and the learning materials (e.g., their complexity, Van Gog & Sweller, 2015). In contrast, research on the moderating role of learner characteristics is still scarce. Such moderating effects are especially likely when instructional measures are implemented in a self-regulated learning setting, which requires learners to monitor and regulate their learning activities (Alexander & Greene, 2017). Based on theoretical considerations, we selected a number of cognitive, motivational, and emotional dispositions for closer investigation in the present study. The possible role of these learner characteristics for the magnitude of the testing effect and the relevant empirical research are discussed next.

Cognitive Dispositions. Practice testing requires learners to invest mental effort to be effective, especially to elicit direct testing effects. This assumption is inherent, for example, in the elaborative retrieval hypothesis and the mediator effectiveness hypothesis, and it follows from the general characterized. Therefore, learners equipped with better cognitive abilities might benefit more from practice testing. However, Jonsson et al. (2021) found in two experiments that testing effects for learning Swedish-Swahili word pairs were independent of cognitive ability. Cognitive ability was assessed with a wide range of basic measures, from fluid intelligence to working memory. Several other studies have reported similar results (Bertilsson et al., 2017; Pan et al., 2015; Wiklund-Hörnqvist et al., 2014). Other studies even suggest that learners with abilities in the lower range might profit more from practice testing than their high-ability counterparts (Agarwal et al., 2017; Brewer & Unsworth, 2012). Apparently, the cognitive processes conducive to learning that are triggered by practice tests place no demands on general cognitive abilities.

Another cognitive learner characteristic that might affect the magnitude of the testing effect is domain-specific prior knowledge. Prior knowledge is relevant for the comprehension of learning materials, and it affects the retrievability of knowledge during practice testing. The complex learning materials typically used at university must be comprehended before the to-be-learned information can be encoded and integrated into existing knowledge structures, which in turn affects retrievability. Retrievability is important for direct testing effects to occur in the lab (Rowland, 2014) and in university classrooms (Greving & Richter, 2018; Greving et al., 2020). The reason is that only knowledge that is successfully retrieved can be consolidated by practice testing, especially if no feedback is given. Despite the theoretical importance of prior knowledge, research systematically examining its moderating role is scarce. For example, Carpenter et al. (2016) showed that high-achieving students benefited more from retrieval practice than middle- or low-achieving students did. They explained this finding by noting that high achievers have more prior knowledge and can therefore process new knowledge more deeply, fostering elaborative retrieval (for similar results, see Francis et al., 2020; Marsh et al., 2009). In contrast, some studies that employed a no-treatment control group (instead of the typical restudy control group) found that learners with low prior knowledge benefited more from retrieval practice than learners with high prior knowledge (e.g., Cogliano et al., 2019). However, it seems questionable whether a no-treatment control group is an adequate point of comparison for assessing the effectiveness of retrieval practice and the role of prior knowledge.
Finally, the only study in which prior knowledge was manipulated experimentally found no moderating effect of prior knowledge on the testing effect (Buchin & Mulligan, in press; for null results using a pre-experimental measure of prior knowledge, see also Xiaofeng et al., 2016).

Motivational Dispositions. Practice testing is a desirable difficulty (Bjork & Bjork, 2011) that makes learning more effective (especially for long-term retention) but also subjectively more difficult. Because learners experience retrieval practice as more difficult, they misinterpret it as ineffective for learning and therefore use it less often in their self-regulated learning activities (misinterpreted-effort hypothesis; Kirk-Johnson et al., 2019). Moreover, in contrast to restudying, practice tests often lead learners to make errors and to become aware of knowledge gaps and misconceptions, which can be aversive. Therefore, motivational dispositions and attitudes towards errors might be crucial for whether learners can constructively use the negative feedback that inevitably occurs during practice testing. First, achievement goals are typically divided into mastery goals and performance goals (e.g., Dweck, 1986; Harackiewicz et al., 1998). Mastery goals involve engaging in deep learning to understand the learning materials. Learners with strong mastery goals should be motivated to actively seek and use the feedback provided by practice tests. In contrast, performance goals might involve the desire to demonstrate ability to others and to receive extrinsic rewards for learning activities. Strong performance goals might be associated with a tendency to avoid the potentially negative feedback provided by practice testing. In line with this reasoning, Weissgerber et al. (2016) found evidence that achievement goals are differentially related to the self-regulated use of learning strategies such as practice testing. Similarly, Yan et al. (2014) found a positive relationship between the belief that one's intelligence can be increased through effort and the use of beneficial learning strategies such as self-testing.

Second, learners’ error orientation (Rybowiak et al., 1999) might also affect how effectively they exploit the potential benefits of practice testing. Originally introduced in work and organizational psychology, error orientation refers to an individual's attitudes towards errors occurring at work. It comprises different facets such as learning from errors, error strain, or thinking about errors. Errors can hardly be avoided during practice testing, and mediated effects of practice testing in particular depend on the ability to use errors constructively, that is, to recognize and seize the opportunity to learn from them. Learners’ error orientation might therefore affect the benefits they can gain from practice tests.

Third, learners’ need for cognition (NFC) might play a role in how effectively they use practice testing. NFC denotes “an individual's tendency to engage in and enjoy effortful cognitive processing” (Cacioppo et al., 1984, p. 306). Schindler et al. (2019) demonstrated that NFC moderates the generation effect. A generation effect occurs when self-generated information is remembered better than information that is read. This effect is related to the testing effect and may also be categorized as a desirable difficulty in learning. Schindler et al. (2019) found that students with lower NFC benefited more from generation than students with higher NFC. The authors argued that individuals with higher NFC are already inclined to engage in generative learning activities without specific instructions. Individuals with lower NFC, in contrast, need explicit instructions before they include generation in their learning process. Thus, it seems plausible that individuals with higher NFC already use effective learning strategies and therefore benefit less from guided practice than individuals with lower NFC. So far, only one study has examined the moderating role of NFC in the testing effect (Bertilsson et al., 2021), and it found no evidence for such a role. However, Bertilsson et al. used relatively simple materials (vocabulary learning), and they suggested that a moderator effect of NFC might occur for more complex materials.

Emotional Dispositions. Affective dispositions are closely linked to motivational dispositions and might also affect whether learners use practice testing effectively. Practice tests create a test situation that includes the possibility of not knowing an answer and receiving negative feedback. This feedback might be more aversive for some learners than for others, depending on their emotional dispositions. For example, Ramsden (2003) showed that students scoring high on fear of failure use challenging learning strategies less frequently, possibly to avoid the negative emotions that follow mistakes. Similar reasoning applies to the related construct of test anxiety. For example, Weissgerber and Reinhard (2018) found that students scoring high on test anxiety reported using self-testing strategies less frequently. Test-anxious students might also use practice tests less effectively when they are confronted with them. The worries and physiological tension that characterize test anxiety may prevent them from engaging in the cognitive processes conducive to learning. Previous studies have shown that performance pressure, which likely triggers test anxiety, reduces the beneficial effects of practice testing (Hinze & Rapp, 2014). Tse and Pu (2012) used the Swahili vocabulary learning paradigm introduced by Roediger and Karpicke (2006) to examine how trait test anxiety and working memory jointly influence the testing effect. They found the testing effect to be reduced in learners high in test anxiety and low in working memory capacity. This pattern may be explained by the fact that test anxiety, especially its worry component, increases working memory load.

Rationale of the Present Study

The available research raises the question of how individual differences in cognitive, motivational, and emotional dispositions affect the magnitude of the testing effect when practice testing is implemented in a typical self-regulated learning setting in higher education. This study examined this question by incorporating practice testing into optional review activities offered to complement compulsory psychology lectures for university teacher students. Restudy was used as the control condition, in line with the main body of research on retrieval practice and given its prevalence in the self-regulated learning activities of university students. To maximize experimental control and statistical power, practice testing vs. restudy was implemented within subjects and within each lecture topic by using alternating questions and summarizing statements. Consistent with previous theory and research on retrieval practice, we hypothesized that a positive testing effect would occur and that this effect would be robust across different lecture topics.

Additionally, we examined whether individual differences in three kinds of dispositions would moderate the testing effect under the conditions described above. These were cognitive dispositions (prior knowledge, mean retrievability during practice tests), motivational dispositions (learning goals, NFC), and emotional dispositions (test anxiety). Research on the role of learner characteristics during practice testing in real-world educational settings is sparse and overall inconclusive. We therefore treated these as exploratory research questions.

Method

Participants

Participants were 208 students enrolled in the teacher-training program at the University of [deleted for anonymous review]. Of these, 76 were studying to become elementary school teachers ([deleted for anonymous review]), 6 non-academic-track middle-school teachers ([deleted for anonymous review]), 12 secondary school teachers (Realschule), 29 high school teachers ([deleted for anonymous review]), 63 special education teachers, and 5 speech therapists; 17 did not specify their field of study.

Participants were recruited from three different courses (Behavioral and Learning Disorders in Childhood and Youth, Development in Childhood and Youth, Psychology of Learning), all of which are part of the mandatory psychology curriculum for prospective teachers. Participants could choose one of five lecture topics from the three courses: Reading and Writing Problems, Attention Deficit Hyperactivity Disorder, Development of Thinking, Development of Intelligence, and Avoidance Learning.

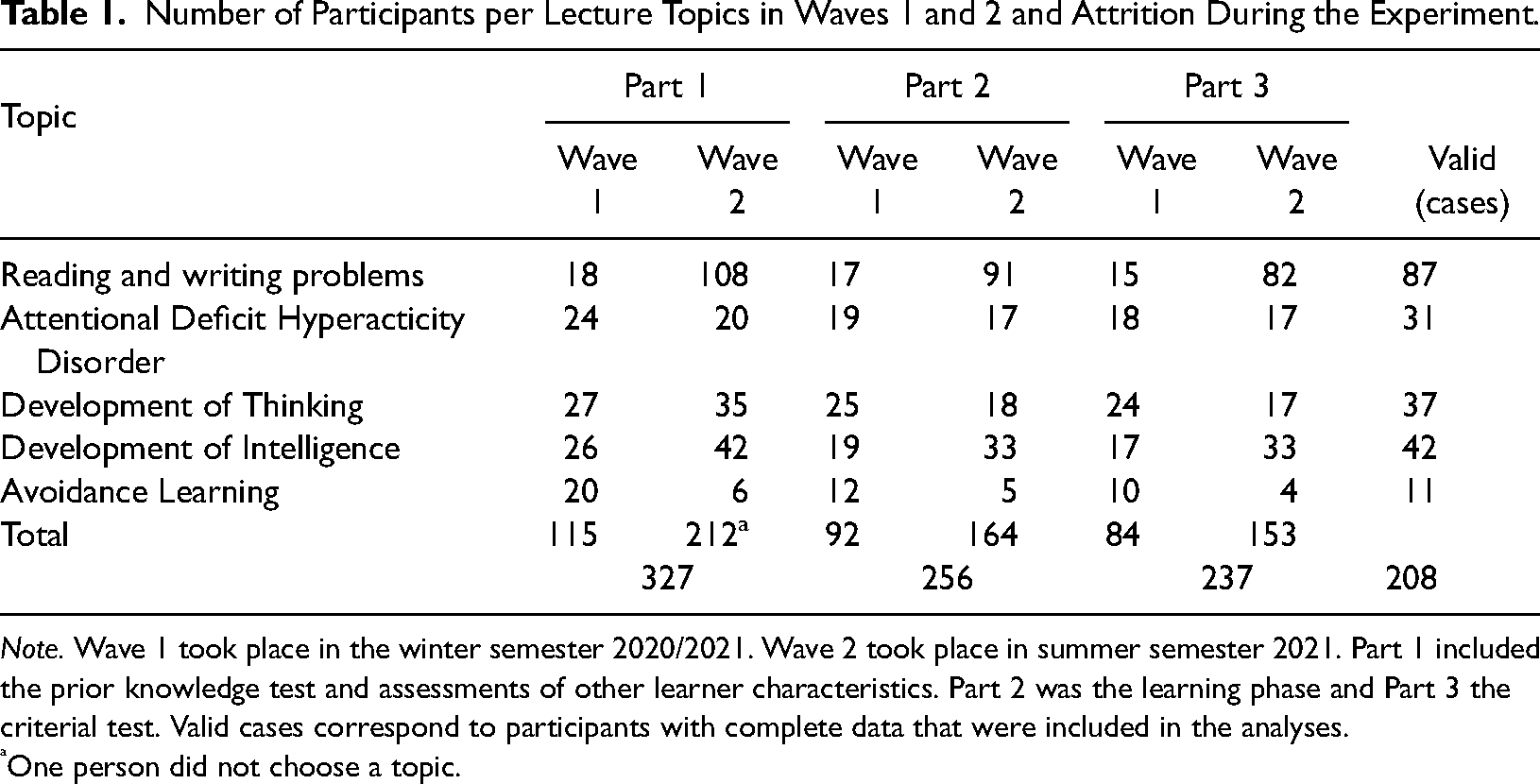

Of the 327 students who initially signed up for the study, 208 completed all parts. The remaining 119 students were excluded from the analysis because of incomplete data. Data were collected in two waves in consecutive semesters (November/December 2020, n = 78; April to June 2021, n = 130). Table 1 shows the distribution of participants across topics, waves, and parts of the study.

Number of Participants per Lecture Topic in Waves 1 and 2 and Attrition During the Experiment.

Note. Wave 1 took place in the winter semester 2020/2021; Wave 2 took place in the summer semester 2021. Part 1 included the prior knowledge test and assessments of other learner characteristics. Part 2 was the learning phase, and Part 3 was the criterial test. Valid cases are participants with complete data who were included in the analyses.

One person did not choose a topic.

Most participants were female (89.9%) and received course credit for their participation (94.7%). Participants not receiving course credit took part voluntarily without any compensation. Participants’ age ranged from 18 to 41 years (M = 20.99, SD = 3.45). Only five participants reported a language other than [deleted for anonymous review] as their first language.

Experimental Study Materials

We analyzed the contents of the lecture session for each topic and extracted 25 information units per topic. For each of the five lecture sessions, 15 of the 25 information units were selected. Each selected unit was presented either as a statement (restudy) or as a short-answer question (practice testing). The statements were simply presented, and participants decided how long they wished to restudy them. The short-answer questions were posed without a time limit and required participants to answer with one sentence or with keywords. After providing their answer, participants were shown the correct answer as feedback. A sample short-answer question and the corresponding statement read as follows:

Short-answer question: In which order do the stages of writing acquisition occur?

Statement: Stages of writing acquisition in order:

- logographic stage
- alphabetic stage
- orthographic stage

Measures

Criterial Test

The dependent variable was performance in the criterial tests, which contained 20 questions per topic. Ten questions referred to the 15 information units presented in the experimental study materials. The other ten questions served as filler items and were drawn from the remaining 10 of the 25 information units of each focal lecture session. The criterial tests for three of the five topics (Attention Deficit Hyperactivity Disorder, Development of Intelligence, and Avoidance Learning) consisted of multiple-choice questions with four response options, each of which could be correct or incorrect. Partial credit (.25) was given for each response option that was correctly ticked or correctly left unticked. The criterial tests for the other two topics (Reading and Writing Problems, Development of Thinking) consisted of short-answer questions. These items were also scored according to a partial-credit scheme (i.e., 0–1 points in intervals of .25). To estimate the inter-rater reliability of the short-answer questions, two raters independently coded the responses to 320 questions (20 questions from 16 participants). Cohen's κ was .859 (SE = .025), indicating almost perfect agreement according to Landis and Koch (1977). Given this high inter-rater reliability, only one coder scored the remaining answers to the short-answer questions.
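The multiple-choice scoring rule and the agreement statistic described above can be sketched in Python as follows. This is a minimal illustration, not the authors' analysis code. The function names are hypothetical, and answers are assumed to be stored as four booleans per item. Cohen's κ is computed in its unweighted form, with each partial-credit level (0, .25, .50, .75, 1) treated as a separate category. This is also an assumption, because the text does not state whether a weighted κ was used.

```python
from collections import Counter


def score_mc_item(correct_key, ticked):
    """Partial-credit score for one four-option multiple-choice item.

    correct_key: four booleans, True where the option is correct.
    ticked: four booleans, True where the participant ticked the option.
    Every option judged correctly (ticked and correct, or left unticked and
    incorrect) earns .25, so item scores range from 0 to 1.
    """
    if len(correct_key) != 4 or len(ticked) != 4:
        raise ValueError("expected exactly four response options")
    return sum(0.25 for key, response in zip(correct_key, ticked) if key == response)


def cohens_kappa(rater_a, rater_b):
    """Unweighted Cohen's kappa (assumption: each distinct score is one category)."""
    if not rater_a or len(rater_a) != len(rater_b):
        raise ValueError("ratings must be non-empty and of equal length")
    n = len(rater_a)
    observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    freq_a, freq_b = Counter(rater_a), Counter(rater_b)
    # Chance agreement from the two raters' marginal category frequencies
    expected = sum(freq_a[c] * freq_b[c] for c in freq_a.keys() | freq_b.keys()) / n ** 2
    if expected == 1:
        raise ValueError("kappa is undefined when chance agreement equals 1")
    return (observed - expected) / (1 - expected)


# Hypothetical item: options 1 and 3 are correct; the participant ticked options 1, 2, and 3
print(score_mc_item((True, False, True, False), (True, True, True, False)))  # prints 0.75
```

In the example, three of the four options are judged correctly (1 and 3 ticked and correct, 4 left unticked and incorrect), so the item earns .75 rather than 0.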

Learner Characteristics

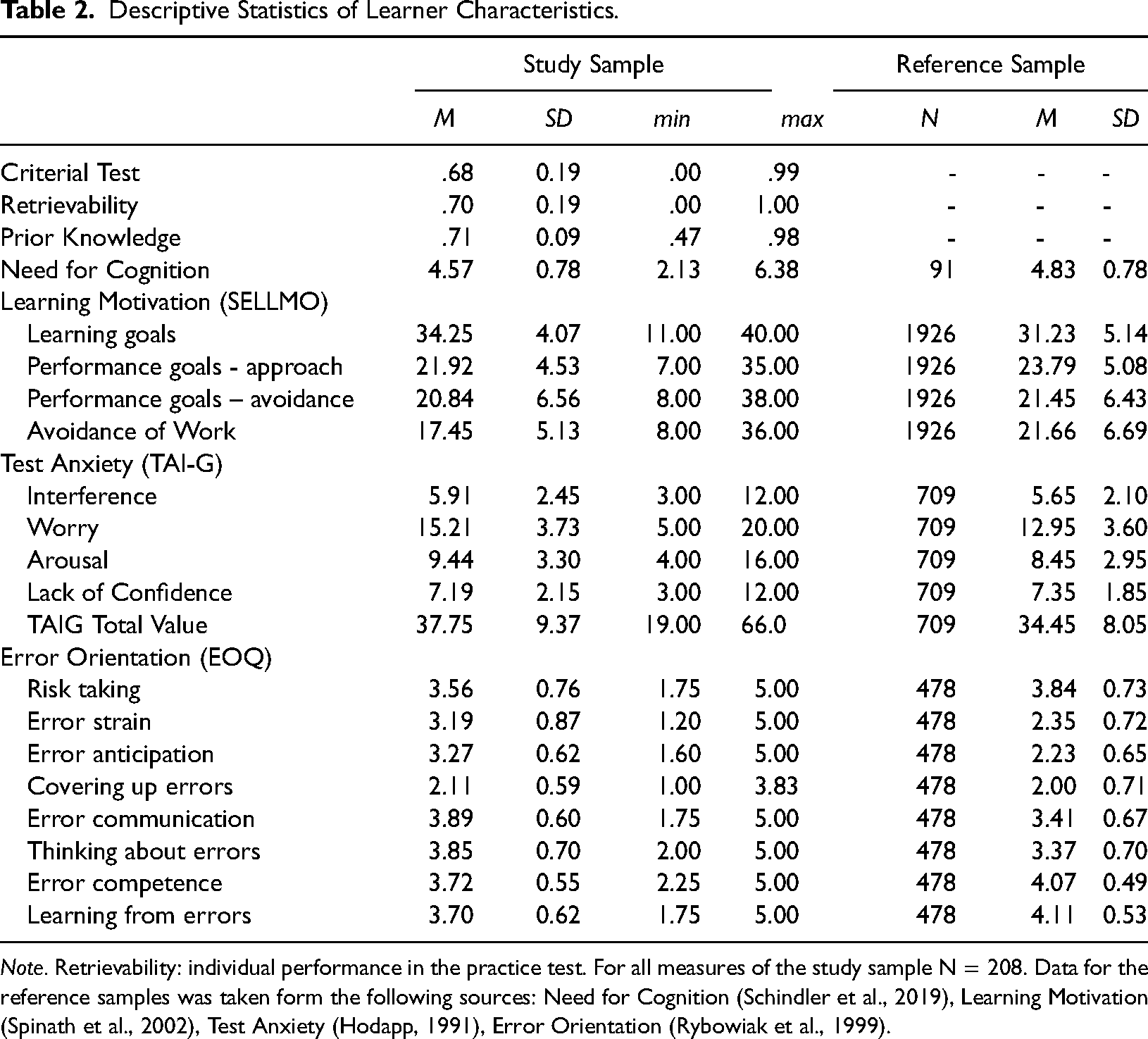

Table 2 shows all descriptive measures of the assessed learner characteristics.

Descriptive Statistics of Learner Characteristics.

Note. Retrievability: individual performance in the practice test. For all measures of the study sample, N = 208. Data for the reference samples were taken from the following sources: Need for Cognition (Schindler et al., 2019), Learning Motivation (Spinath et al., 2002), Test Anxiety (Hodapp, 1991), Error Orientation (Rybowiak et al., 1999).

Retrievability. Retrievability was defined as the individual performance on the short-answer questions in the retrieval practice condition. Participants’ answers were scored with partial credit, depending on how much of the answer was correct: 0 for incorrect answers; .25, .50, or .75 for partially correct or incomplete answers; and 1 for fully correct and complete answers. For example, participants received 1 point if their answer to the example question was “logographic stage, alphabetic stage, orthographic stage.” If they wrote, for example, “alphabetic stage, orthographic stage,” they received .50 points because the answer was correct but incomplete. Incorrect or grossly imprecise answers, such as “learning letters, learning sounds, learning spelling,” received 0 points. Two raters independently coded
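Under the scoring rule above, a participant's retrievability is the mean partial-credit score over the seven or eight practice questions he or she answered. The sketch below assumes that the rater's codes are already available as numbers. The function name and the example scores are hypothetical and are not taken from the study data.

```python
from statistics import mean

# Partial-credit levels permitted by the scoring rule (0 to 1 in steps of .25)
ALLOWED_SCORES = {0.0, 0.25, 0.5, 0.75, 1.0}


def retrievability(practice_scores):
    """Mean partial-credit score across one participant's practice questions."""
    if not practice_scores:
        raise ValueError("at least one practice-test score is required")
    for score in practice_scores:
        if score not in ALLOWED_SCORES:
            raise ValueError(f"score {score} is not a valid partial-credit level")
    return mean(practice_scores)


# Hypothetical codes for seven practice questions; the first two mirror the
# example answers above (fully correct = 1, correct but incomplete = .50)
scores = [1, 0.5, 0, 0.75, 1, 0.25, 1]
print(round(retrievability(scores), 3))  # prints 0.643
```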

Prior Knowledge. For each of the five topics, we constructed a prior knowledge test. Based on 15 information units, we constructed multiple-choice questions with four response options, each of which could be correct or incorrect. The prior knowledge questions tapped knowledge that was relevant for understanding the lecture topics. Their content did not overlap with the statements and short-answer questions used in the restudy/practice testing phase. The following is an example of a prior knowledge question for the topic Reading and Writing Problems:

What does the term “precursor skills” refer to?

- Skills that require a certain time before they can be acquired or performed well.
- Skills that need to be acquired before another skill.
- An example of precursor skills to literacy acquisition might be nonsense rhymes.
- An example of a precursor skill might be writing since it takes some time to master the skill fluently.

Need for Cognition. NFC was assessed with the short form of the NFC Scale (Bless et al., 1994). The scale contains 16 statements assessing the inclination to engage in and enjoy effortful thinking. The items are rated on a 7-point Likert scale (sample item: “I really enjoy a task that involves coming up with new solutions to problems.”). The internal consistency of the questionnaire was satisfactory (Cronbach's α = .840, N = 208).
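The internal consistencies reported for this and the following scales are Cronbach's α, which uses the textbook definition α = k/(k − 1) × (1 − sum of the item variances / variance of the sum score), where k is the number of items. The sketch below computes α from a participants × items matrix. It illustrates that definition only. It is not the software the authors used, and it assumes complete responses without missing values. The example ratings are hypothetical.

```python
from statistics import variance


def cronbach_alpha(responses):
    """Cronbach's alpha for item ratings; each row holds one participant's k ratings."""
    if len(responses) < 2:
        raise ValueError("need at least two participants")
    k = len(responses[0])
    if k < 2 or any(len(row) != k for row in responses):
        raise ValueError("every participant needs the same number (>= 2) of items")
    item_columns = list(zip(*responses))  # transpose to items x participants
    sum_item_var = sum(variance(col) for col in item_columns)
    total_var = variance([sum(row) for row in responses])  # variance of sum scores
    return k / (k - 1) * (1 - sum_item_var / total_var)


# Hypothetical ratings of three participants on a two-item scale
print(round(cronbach_alpha([[1, 1], [2, 3], [3, 2]]), 3))  # prints 0.667
```

Sample variances are used for both the items and the sum score. Because α depends only on their ratio, population variances would give the same result.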

Learning and Performance Motivation. Participants’ learning and performance motivation was assessed with the SELLMO-ST inventory (Spinath et al., 2002). The inventory contains 31 self-descriptive statements about learning motivation rated on a 5-point scale. The items are sorted into four subscales: Learning Goals, Approach Performance Goals, Avoidance Performance Goals, and Tendency to Avoid Work. The internal consistencies (Cronbach's α) for the four subscales (N = 208) were satisfactory: .811 for Learning Goals (8 items), .772 for Approach Performance Goals (7 items), .877 for Avoidance Performance Goals (8 items), and .838 for Tendency to Avoid Work (8 items).

Test Anxiety. Test anxiety was measured with the short version of the TAI-G (Spinath et al., 2002; Wacker et al., 2008). The scale contains 15 items referring to feelings and thoughts concerning test situations, rated on a 5-point scale. The internal consistency of the scale was excellent (Cronbach's α = .913, N = 208; sample item: “I think about what happens if I do poorly”).

Error Orientation. Facets of error orientation were assessed with the Error Orientation Questionnaire ([deleted for anonymous review] version, Rybowiak et al., 1999). The questionnaire contains 37 items expressing different attitudes towards errors, rated on a 5-point scale. The internal consistencies (Cronbach's α, N = 208) of the eight subscales were as follows:

- Error Competence: .624 (4 items; sample item: “When I have made a mistake, I immediately know how to correct it”)
- Learning From Errors: .683 (4 items; “My mistakes help me to improve my work”)
- Error Risk Taking: .790 (4 items; “I would prefer to err than to do nothing at all”)
- Error Strain: .845 (5 items; “I feel embarrassed when I make an error”)
- Error Anticipation: .674 (5 items; “Whenever I start a piece of work, I am aware that mistakes occur”)
- Covering up Errors: .744 (6 items; “I’d rather keep my mistakes to myself”)
- Error Communication: .618 (4 items; “When I have done something wrong, I ask others how to do it better”)
- Thinking About Errors: .824 (5 items; “When a mistake occurs, I analyze it thoroughly”)

Procedure

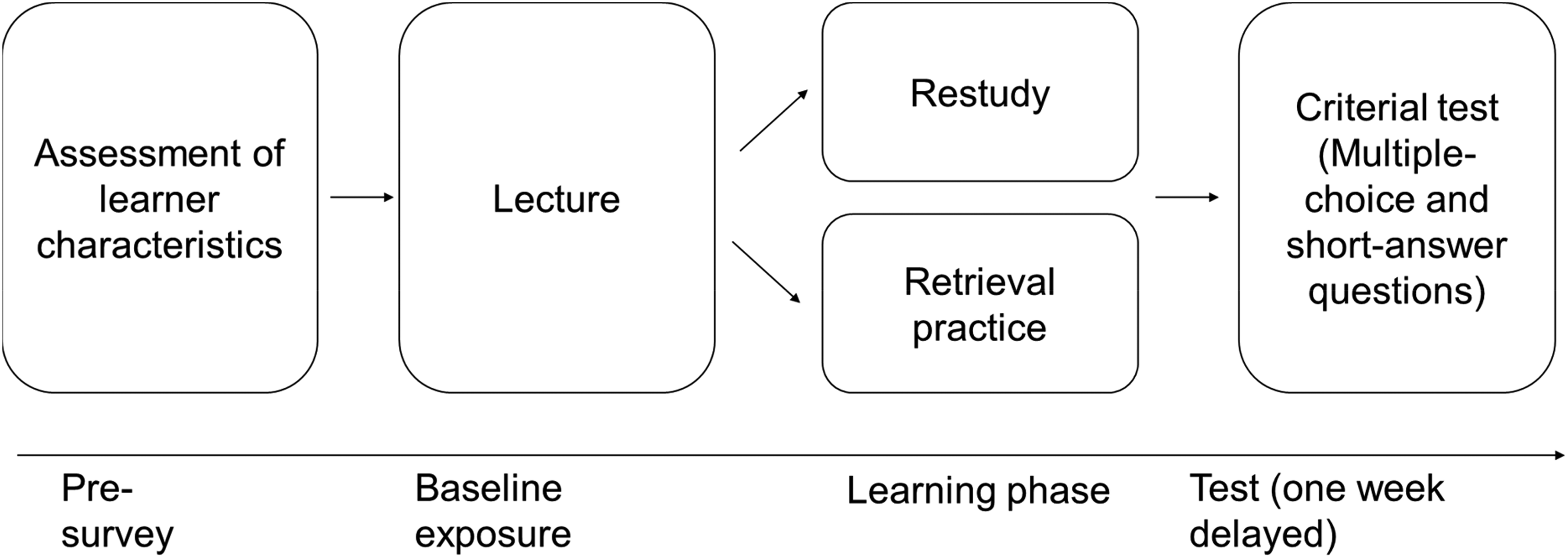

The study was conducted online via the online survey system Unipark (www.unipark.com). Figure 1 provides an overview of the general procedure. Participants selected one specific lecture from the course that they attended and registered via an online university platform, after which they received a link to the first part of the experiment. The first part of the experiment included the prior knowledge test on the chosen topic, the assessment of other learner characteristics, and a questionnaire about basic demographic information.

Design and general procedure of the experiment. Note. Participants signed up for participation for one lecture topic. They then received a pre-survey (online) for assessing learner characteristics (potential moderators of the testing effect), followed 2 to 14 days later by the lecture as the baseline exposure to the learning materials and a learning phase with retrieval practice and restudy (varied within-subjects). One week later the criterial test was provided with multiple-choice or short-answer questions, depending on topic.

Between 2 and 14 days after completing the first part, participants attended the lecture on the selected topic. They received an email with a link to the second part of the experiment and were asked to complete this part immediately after the lecture. About one third of the participants (34.0%) completed it within 1 h after the lecture, 21.5% within 1 to 4 h, 11.3% within 4 to 12 h, 10.9% on the same day, and 22.3% more than 24 h later. The second part included the experimental intervention (retrieval practice vs. restudy). Participants answered seven or eight short-answer questions and read seven or eight statements about the lecture content (15 items altogether). Afterwards, participants reported the extent to which they had been distracted during this part and whether they had used additional study materials. Most participants reported a low level of distraction (M = 2.37, SD = 1.40; scale from 1 = not at all to 7 = strongly distracted). In addition, 88.5% reported not using additional study materials to complete the task (N = 208).

The third part of the experiment was scheduled one week after completion of the second part. Again, participants received a link to the third part via email. About two thirds of participants (66.8%) completed the third part exactly one week after the second part, 13.9% after 8 days, 5.3% after 9 days, 5.3% after 10 or more days, and 8.7% earlier than 7 days (N = 208). In the third part, participants answered the criterial test, which comprised 20 multiple-choice or 20 short-answer questions about the contents of the chosen lecture session. Participants reported a low level of distraction during the criterial test (M = 2.09, SD = 1.32; scale from 1 = not at all to 7 = strongly distracted). In addition, 94.7% reported that they had not used any study materials to complete the task (N = 208). Finally, participants gave feedback on the perceived comprehensibility and usefulness of the study.

Design

We employed a one-factorial design with the factor learning condition (practice testing vs. restudy) varied within subjects. The dependent variable was performance in the criterial test. Learning condition was varied within each review session, so that each participant reviewed half of the contents as statements and the other half as short-answer questions with feedback. The assignment of contents to the two learning conditions was counterbalanced across participants using two different lists, and each participant was randomly assigned to one of the two lists.
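The counterbalancing logic can be illustrated with a short sketch. It assumes 15 review items split into halves of seven and eight; the item labels, the split point, and the helper names `make_lists` and `assign_participants` are hypothetical and are not taken from the study materials.

```python
import random


def make_lists(item_ids):
    """Hypothetical helper: build two counterbalanced review lists.

    List "A" presents the first half of the items as short-answer questions
    (testing) and the second half as statements (restudy); list "B" swaps the
    two halves, so across lists every item appears once in each condition.
    """
    half = len(item_ids) // 2
    first, second = item_ids[:half], item_ids[half:]
    list_a = {**{i: "testing" for i in first}, **{i: "restudy" for i in second}}
    list_b = {**{i: "restudy" for i in first}, **{i: "testing" for i in second}}
    return {"A": list_a, "B": list_b}


def assign_participants(participant_ids, seed=None):
    """Hypothetical helper: randomly assign each participant to list A or B."""
    rng = random.Random(seed)
    return {p: rng.choice(["A", "B"]) for p in participant_ids}


# Illustrative item labels (not the study's items): 15 review items in total
lists = make_lists([f"item{k:02d}" for k in range(1, 16)])
assignment = assign_participants(range(1, 209), seed=1)  # N = 208
```

Independent random draws, as used here, do not guarantee equal list sizes; shuffling an alternating sequence of "A" and "B" would balance them if that were desired.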

Sensitivity

A sensitivity analysis performed with G*Power 3 (Faul et al., 2007) revealed that, given the sample size of N = 208, the design was sensitive enough to detect a main effect of learning condition with an effect size of f = .102 (d = 0.204) or higher, assuming a correlation of .59 between the levels of the factor learning condition (the actual correlation in the present study), an α-level of .05, and a power (1 − β) of .90. For an interaction of any of the learner characteristics with learning condition, the design was sensitive enough to detect an effect of f = .051 (d = 0.102) or higher, again assuming an α-level of .05 and a power (1 − β) of .90.
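The sensitivity value for the main effect can be approximated outside G*Power. The sketch below assumes G*Power's parameterization for a repeated-measures within factor with a single group: noncentrality λ = f²·N·m/(1 − ρ), sphericity ε = 1, df1 = m − 1, and df2 = (N − 1)(m − 1), where m = 2 levels and ρ = .59. It solves for the smallest f that reaches a power of .90; under these assumptions the result should land close to the reported f = .102. The function names are illustrative.

```python
from scipy import optimize, stats


def power_within(f, n, m=2, rho=0.59, alpha=0.05, eps=1.0):
    """Power for the within-subject main effect of a repeated-measures ANOVA.

    Assumes G*Power's 'repeated measures, within factors' parameterization
    with a single group: lambda = f^2 * n * m * eps / (1 - rho),
    df1 = (m - 1) * eps, df2 = (n - 1) * (m - 1) * eps.
    """
    df1 = (m - 1) * eps
    df2 = (n - 1) * (m - 1) * eps
    lam = f ** 2 * n * m * eps / (1 - rho)
    f_crit = stats.f.ppf(1 - alpha, df1, df2)    # critical F under H0
    return stats.ncf.sf(f_crit, df1, df2, lam)  # P(F > f_crit) under H1


def sensitivity_f(n, target_power=0.90, **kwargs):
    """Smallest f reaching the target power (power rises monotonically with f)."""
    return optimize.brentq(
        lambda f: power_within(f, n, **kwargs) - target_power, 1e-4, 2.0
    )


f_min = sensitivity_f(208)  # N = 208, r = .59, alpha = .05, power = .90
print(f"f = {f_min:.3f}, d = 2f = {2 * f_min:.3f}")
```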

Availability of Data and Materials

The data files and analysis syntax underlying the analyses reported in this study are available via Open Science Framework (https://osf.io/9hw2z/?view_only=e56cf9fcb7844469a1f05e7b3cbcc2d8). Materials are available from the authors upon request.

Results

We first estimated a linear model with learning condition (varied within subjects), lecture topic (varied between subjects), and their interaction. In a second step, to explore the role of learner characteristics, we estimated a series of linear models that additionally included each learner characteristic as a continuous predictor together with its interaction with learning condition (Judd et al., 2001). We performed separate analyses for each learner characteristic to maximize the chance of detecting a moderator effect. In a final step, we estimated […]. An α-level of .05 (two-tailed) was used for all statistical tests. We report partial η² as the effect size measure.
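For a within-subjects factor with only two levels, the moderator models can be sketched as two ordinary regressions in the spirit of Judd et al. (2001): the mean of the two condition scores carries the between-subjects effects (here, the moderator main effect), and the difference score (testing minus restudy) carries the condition effect and its interaction with the moderator. The sketch assumes that lecture topic enters as an effect-coded covariate alongside the centered moderator, which would yield the error df of 202 reported for these models; the function name and the simulated data are illustrative, not the study data.

```python
import numpy as np
from scipy import stats


def within_moderation(testing, restudy, moderator, topic):
    """Hypothetical helper: moderator tests for a two-level within factor.

    testing, restudy: proportion correct per participant in each condition
    moderator: one continuous learner characteristic per participant
    topic: lecture-topic label per participant (between subjects)
    """
    testing = np.asarray(testing, dtype=float)
    restudy = np.asarray(restudy, dtype=float)
    topic = np.asarray(topic)
    diff = testing - restudy          # condition effect and its interactions
    mean = (testing + restudy) / 2.0  # between-subjects effects

    # Effect-code topics (last level coded -1) so the intercept is the unweighted mean
    levels = np.unique(topic)
    codes = np.zeros((topic.size, levels.size - 1))
    for j, level in enumerate(levels[:-1]):
        codes[topic == level, j] = 1.0
    codes[topic == levels[-1], :] = -1.0

    mod_c = np.asarray(moderator, dtype=float)
    mod_c = mod_c - mod_c.mean()      # center the moderator
    X = np.column_stack([np.ones(topic.size), codes, mod_c])
    df_error = topic.size - X.shape[1]

    def last_coef_test(y):
        # OLS fit; F(1, df_error) for the moderator coefficient (last column)
        beta, *_ = np.linalg.lstsq(X, y, rcond=None)
        resid = y - X @ beta
        mse = resid @ resid / df_error
        se = np.sqrt(mse * np.linalg.inv(X.T @ X)[-1, -1])
        F = (beta[-1] / se) ** 2
        return F, stats.f.sf(F, 1, df_error)

    F_main, p_main = last_coef_test(mean)
    F_int, p_int = last_coef_test(diff)
    return {"df_error": df_error, "F_moderator": F_main, "p_moderator": p_main,
            "F_interaction": F_int, "p_interaction": p_int}


# Illustrative use with simulated data (not the study data)
rng = np.random.default_rng(0)
n = 208
topic = np.arange(n) % 5                 # five lecture topics
moderator = rng.normal(size=n)           # e.g., a standardized scale score
restudy = np.clip(0.70 + 0.05 * moderator + rng.normal(0, 0.1, n), 0, 1)
testing = np.clip(restudy + 0.07 + rng.normal(0, 0.1, n), 0, 1)
result = within_moderation(testing, restudy, moderator, topic)
print(result["df_error"])  # 202 = 208 - intercept - 4 topic codes - moderator
```

Running one such model per learner characteristic mirrors the separate analyses described above.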

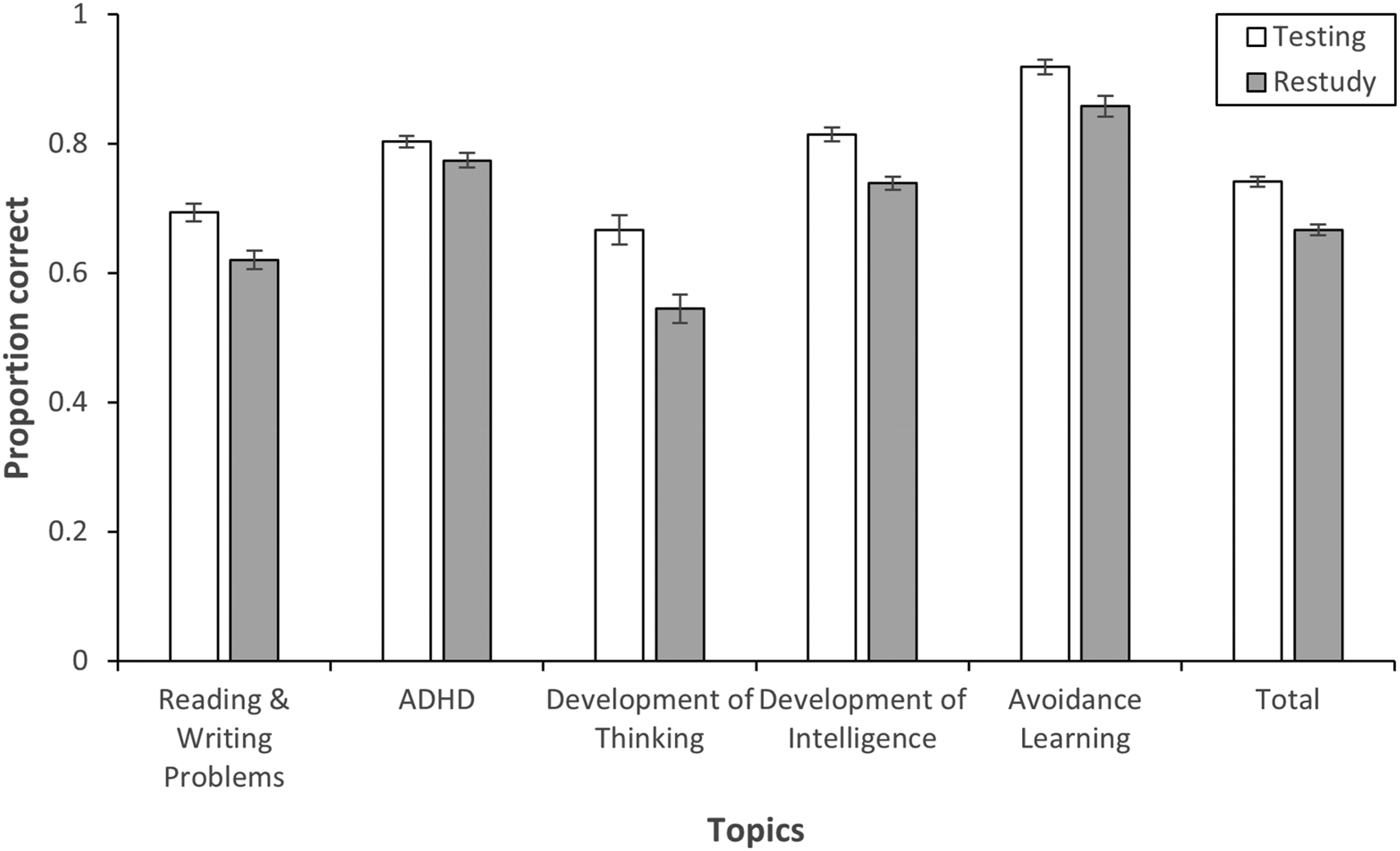

Main Effect of Testing

The model with learning condition (without learner characteristics) revealed better learning outcomes in the criterial test for testing (M = .779, SE = .018) than for restudying (M = .707, SE = .019), F(1, 203) = 15.91, p < .001, ηp² = .073. Hypothesis 1, which predicted a positive testing effect, was therefore supported. The interaction of learning condition and lecture topic was not significant, F(4, 203) = 0.84, p = .499, ηp² = .016, suggesting that the testing effect did not depend on the specific materials and contents. Figure 2 displays the average testing effect across topics and within each topic.

Figure 2. The testing effect across and within lecture topics. Note. Error bars represent the standard error of the mean. The y-axis shows the proportion of correct responses in the criterial test.
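Partial η² follows directly from each F ratio and its degrees of freedom, ηp² = F·df1/(F·df1 + df2), so the reported effect sizes can be checked against the test statistics. A minimal sketch, using F values and degrees of freedom as reported in the text:

```python
def partial_eta_squared(F, df_effect, df_error):
    """Partial eta squared from an F ratio: F*df1 / (F*df1 + df2)."""
    return F * df_effect / (F * df_effect + df_error)


# F ratios and degrees of freedom as reported in the text
reported = {
    "testing effect": (15.91, 1, 203),
    "testing x topic": (0.84, 4, 203),
    "prior knowledge": (16.78, 1, 202),
    "retrievability": (66.59, 1, 202),
}
for label, (F, df1, df2) in reported.items():
    print(f"{label}: {partial_eta_squared(F, df1, df2):.3f}")
# testing effect: 0.073
# testing x topic: 0.016
# prior knowledge: 0.077
# retrievability: 0.248
```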

Main Effects of Learner Characteristics

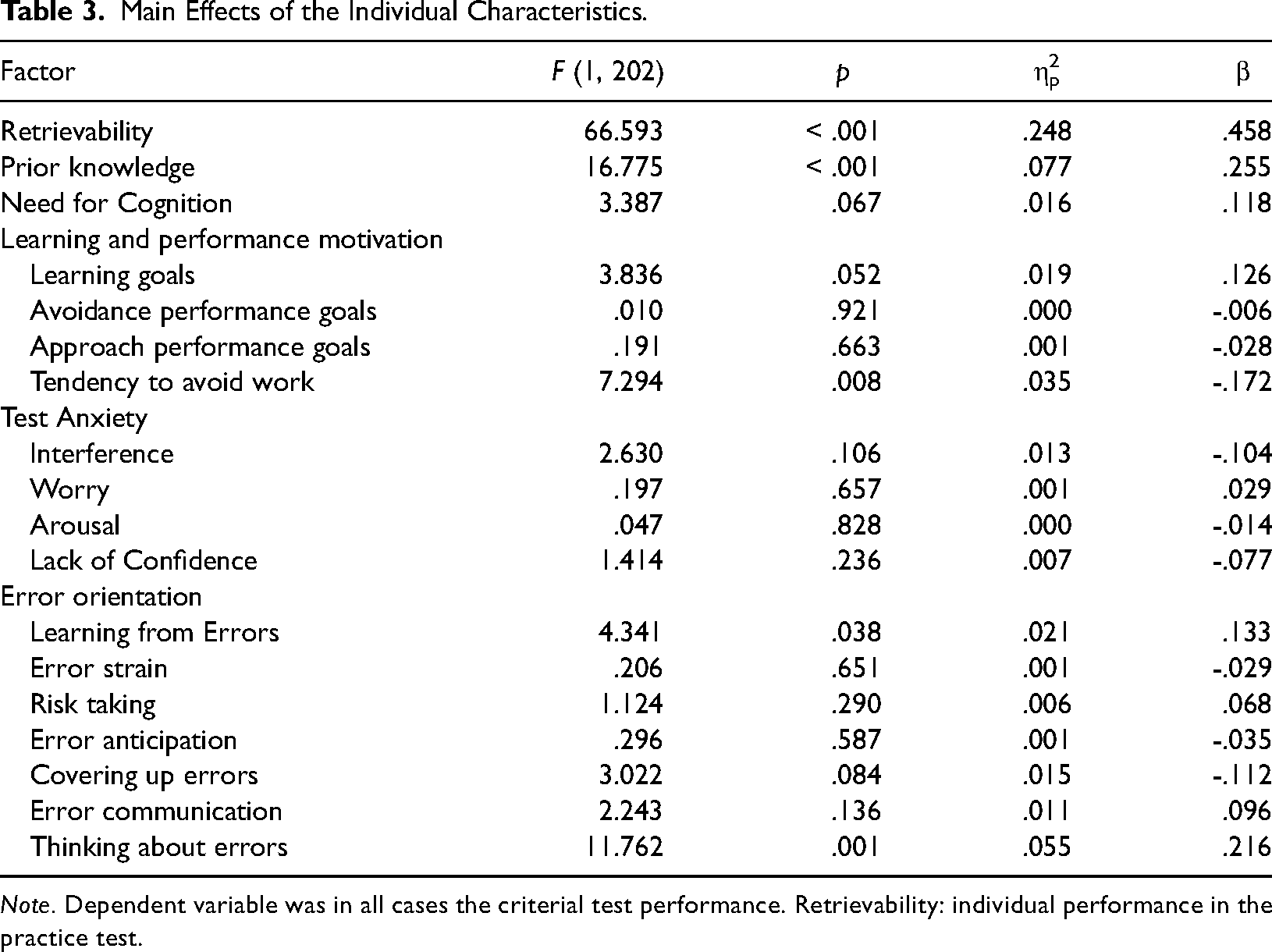

The results for the main effects of learner characteristics are shown in Table 3. Significant positive main effects were found for the Learning from Errors scale, F(1, 202) = 4.34, p = .038, ηp² = .021, and the Thinking About Errors scale, F(1, 202) = 11.76, p = .001, ηp² = .055, of the Error Orientation Questionnaire: students who view mistakes as learning opportunities, and students who reflect on how errors occur and how they can be prevented in the future, achieved better learning outcomes than students scoring lower on these scales. Moreover, the Tendency to Avoid Work scale of the SELLMO-ST had a significant negative effect, F(1, 202) = 7.29, p = .008, ηp² = .035, meaning that students scoring high on this scale had lower learning outcomes. Finally, prior knowledge, F(1, 202) = 16.78, p < .001, ηp² = .077, and retrievability, F(1, 202) = 66.59, p < .001, ηp² = .248, had positive main effects on learning outcomes.

Table 3. Main Effects of the Individual Characteristics.

Note. Dependent variable was in all cases the criterial test performance. Retrievability: individual performance in the practice test.

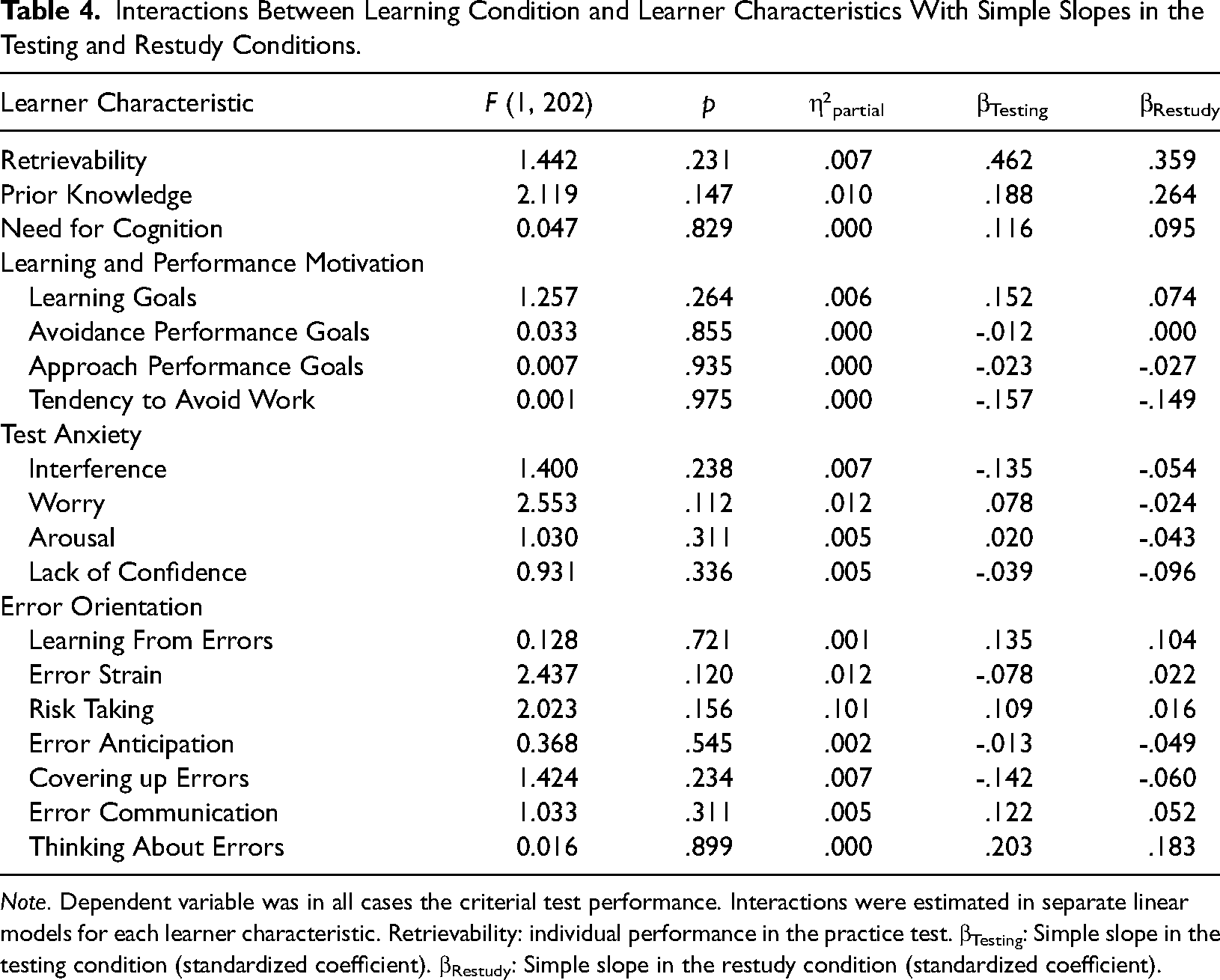

Learner Characteristics as Moderators of the Testing Effect

None of the interactions between learning condition and any of the learner characteristics was significant (Table 4). Thus, we found no evidence that the testing effect depends on learner characteristics.

Table 4. Interactions Between Learning Condition and Learner Characteristics With Simple Slopes in the Testing and Restudy Conditions.

Note. Dependent variable was in all cases the criterial test performance. Interactions were estimated in separate linear models for each learner characteristic. Retrievability: individual performance in the practice test. βTesting: Simple slope in the testing condition (standardized coefficient). βRestudy: Simple slope in the restudy condition (standardized coefficient).

Perceived Comprehensibility and Usefulness of the Study

Participants also provided feedback on the comprehensibility of the tasks and the usefulness of the study, that is, how useful they judged the study to be for their personal learning. Most participants (72.6%) perceived the study as very comprehensible, 24.1% as acceptable, and 3.4% saw a need for improvement (N = 237). Most participants also found the study useful (49.4% useful, 15.6% very useful), and 30.0% rated it as moderately useful. Only 3.8% of participants found the study not very useful and 1.3% not useful at all.

Discussion

The present study examined the testing effect and potential moderating effects of cognitive, motivational, and emotional dispositions of learners in a higher education setting. We implemented a minimal intervention in three regular university courses, with multiple topics and a within-participant and within-topic variation of practice testing vs. restudying. The main finding was that a testing effect emerged across all topics, meaning that tested knowledge was retained better over one week than knowledge that was merely restudied. No evidence was found for a moderating effect of any learner characteristic on the strength of the testing effect.

Our study contributes to the literature on real-life educational applications of retrieval practice by providing a rigorous experimental test of the testing effect with actual study materials in the university classroom. Even though a growing number of studies have examined the benefits of retrieval practice at the university or college level with actual course materials (Yang et al., 2021, included 225 effects in their meta-analysis), many of these studies are based on non-experimental designs, such as quasi-experimental designs with a nonrandom allocation of groups of students to learning conditions. Moreover, many studies in real-world educational settings (about 60% in the meta-analysis by Yang et al., 2021) have compared practice testing to no activity at all rather than to restudying the learning materials, which is not a very informative comparison regarding the usefulness of practice testing in higher education. Our study used a within-subjects design to compare practice testing with short-answer questions and corrective feedback to restudying the same contents. The design was unique in that practice testing vs. restudying was also varied within topics. Therefore, any confound of learning condition and topic can be ruled out. The fact that the testing effect emerged in all five lecture topics included in the experiment supports the generalizability of the positive testing effect found in our study. With a partial η² of .07 (which corresponds to f = .27 or d = 0.56; Lenhard & Lenhard, 2016), the testing effect was medium to large and of the same order of magnitude as testing effects reported in laboratory studies. It is also comparable to, or even exceeds, the effect sizes reported for classroom studies (psychology classes: d = 0.73, Schwieren et al., 2017; classroom studies in general: g = 0.33, Yang et al., 2021).
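The conversion from partial η² to f and d can be sketched as follows, assuming the standard formulas f = √(η²/(1 − η²)) and d = 2f for a comparison of two means. The function names are illustrative.

```python
import math


def cohens_f(eta_sq):
    """Cohen's f from (partial) eta squared: sqrt(eta^2 / (1 - eta^2))."""
    return math.sqrt(eta_sq / (1.0 - eta_sq))


def cohens_d_from_f(f):
    """d = 2f, the usual conversion for a comparison of two means."""
    return 2.0 * f


for eta in (0.07, 0.073):
    f = cohens_f(eta)
    print(f"eta_p^2 = {eta}: f = {f:.2f}, d = {cohens_d_from_f(f):.2f}")
# eta_p^2 = 0.07: f = 0.27, d = 0.55
# eta_p^2 = 0.073: f = 0.28, d = 0.56
```

With the rounded value .07 the conversion gives f ≈ .27, whereas d ≈ 0.56 corresponds to the unrounded ηp² = .073 reported in the Results.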
The size of the effect is remarkable considering the minimalistic nature of the intervention and the variety of influences that can add error variance in a field experiment such as the current study. One likely explanation for the testing effect, in line with major theoretical accounts of the direct testing effect (e.g., Bjork, 1975; Carpenter, 2009; Pyc & Rawson, 2009), is that practice testing increased cognitive effort and prompted participants to process the learned material more deeply. This explanation is consistent with the observation that participants spent more time on practice test items (M = 79.45 s, SD = 51.79 s per item) than on restudy items (M = 13.91 s, SD = 19.43 s). Feedback reading time was relatively short (M = 12.10 s, SD = 11.98 s), which indicates that participants did not put much emphasis on feedback (in line with the findings of Greving et al., 2022, on the use of feedback during practice testing in the psychology classroom).

In sum, our results provide strong support for the idea that practice testing with short-answer questions and corrective feedback can foster learning when it is implemented as an additional study opportunity in a university course. Given the minimalistic nature of the intervention, which was completed online by participants within a few minutes and in a single session, practice testing has also proven to be a didactic tool that can be implemented in a highly efficient way.

A novel research question addressed by the present experiment was whether a broad array of cognitive, motivational, and emotional dispositions would moderate the testing effect. Although each learner characteristic included in our study was selected based on theoretical considerations, no evidence for a moderating role of any learner characteristic was found. Admittedly, the logic of significance testing does not permit concluding from a nonsignificant result that the effect in question does not exist in the population. However, the study was sufficiently powered to detect even a small moderator effect, we mostly used established instruments with high reliability to assess the learner characteristics, and we conducted a number of significance tests without correcting the α-level for multiple testing, which, if anything, increased the chance of finding a moderator effect. Moreover, several of the learner characteristics, such as retrievability and prior knowledge, exhibited readily interpretable main effects on learning. Given these facts, it seems quite likely that the testing effect we examined was indeed not moderated by any of the learner characteristics included in the experiment. This conclusion is in line with findings by Jonsson et al. (2021), who concluded that the testing effect is “a learning method for all,” which they found to be independent of cognitive ability. Our results extend this conclusion to a wider range of cognitive, motivational, and emotional characteristics that have not been investigated in previous research on the testing effect. Theoretically, our findings suggest that the mechanisms described in major theories of learning, especially the increased cognitive effort induced by retrieval practice, do not depend on specific learner prerequisites.
Apparently, when implemented in a typical psychology course, practice tests are per se strong enough to induce cognitive processes conducive to learning, such as elaborative retrieval, at least if short-answer questions are used and corrective feedback is given.

Despite its informative and clear results, our study also has limitations that need to be discussed. One limitation is that the sample might have been too homogeneous to elicit moderator effects of learner characteristics. All participants were students pursuing similar fields of study, which might have reduced the variance in those characteristics. Effects could emerge in a more heterogeneous sample with greater variability in learner characteristics. As the comparison with other samples shows (Table 2), some (although not all) of the learner characteristics, most notably the facets of test anxiety, had considerably lower standard deviations in the present sample than in the comparison samples. A similar argument can be made about the items selected for the practice tests and the criterial tests. The lecture topics were not very complex, the questions for all topics were deliberately kept easy (to ensure high retrievability), and most students likely possessed the prior knowledge necessary for understanding and actively encoding the learning contents. All these characteristics maximize the chances that most learners can profit from the testing effect, but at the same time they tend to keep the potential influence of learner characteristics small. Future research could examine this issue directly by testing the moderating role of learner characteristics with materials of varying difficulty.

Another potential limitation is that lecture topics were not manipulated experimentally; instead, participants could choose the topic. One consequence of this procedure is that the groups assigned to each lecture varied in size, with some groups being quite small. Also, students potentially chose the topic they were most interested in and therefore might have been more motivated to learn and to improve their learning outcomes (Triarisanti & Purnawarman, 2019). On the positive side, however, letting students choose the topic they wanted to study more closely approximates a typical self-regulated learning setting, enhancing the ecological validity of the study.

For practical reasons, we were able to include only five items per learning condition (testing vs. restudying) in the criterial test. Given this relatively low number of items, we used aggregated measures of learning outcomes in an ANOVA approach. The alternative would have been a cross-classified design with random effects of participants and items in a generalized linear mixed model (GLMM; Dixon, 2008). Such a design has the advantage of testing whether an effect generalizes not only to a population of participants but also to populations of items and materials, but it requires larger numbers of items to achieve sufficient statistical power (Westfall et al., 2014). Based on the ANOVA approach used in the present study, we cannot determine whether our findings generalize to a larger population of items and contents. However, we varied lecture topics between subjects, and the findings were highly consistent across all five lecture topics. This consistency makes us optimistic that the conclusions that can be drawn from our results are not confined to the specific materials used in our study.

As a final limitation, practice testing was limited to short-answer questions with corrective feedback, provided online to participants shortly after the actual lectures, and learning outcomes were assessed one week after learning. Therefore, it is difficult to predict the extent to which our results, especially the absence of a moderating role of learner characteristics, generalize to other forms of practice testing (such as practice tests with multiple-choice questions or free recall) and to other practice schedules or retention intervals.

To conclude, this research contributes to the available body of research by providing a strict experimental test of the effect of practice testing in a higher education context. Our results suggest that practice testing can be implemented effectively and efficiently as an online complement to regular university courses. Individual differences in cognitive, motivational, or emotional dispositions do not seem to affect the magnitude of the testing effect, suggesting that it is a “learning method for all” (Jonsson et al., 2021) that potentially benefits all students.

Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This research was funded by the Federal Ministry of Education and Research (BMBF) in the project CoTeach. This work was supported by the Bundesministerium für Bildung und Forschung (grant number 01JA2020). We thank Ann-Sophie Schriewer for her help with data scoring. We furthermore thank Johanna Abendroth, Lorena Fleischmann, Wolfgang Lenhard, Jan Lenhart, Peter Marx, Sabine Molitor, Julia Schindler, Sandra Schmiedeler, Jennifer Seeger, Janna Teigeler, Andreas Wertgen, Catharina Tibken, Marina Klimovich, Katharina Maschewski, and Tini Katz for letting us conduct our study in their courses.