Abstract

This study investigated the effects of retrieval practice on the cognitive and metacognitive learning outcome in a psychology lecture at university. In a within-subjects design, N = 180 students completed an intermediate knowledge test in the 9th session and a final test in the 13th session of the semester. Both tests assessed students’ correctness of answering and confidence in their answers. In the final test, items that were intermediately tested were answered as correctly as items that were not intermediately tested. The failure to find a testing effect at the level of cognitive performance could not be attributed to interference with item difficulty, as intermediately tested and not tested items were balanced according to their a priori difficulty. However, testing improved performance at the metacognitive level. Confidence ratings were more accurate and less biased in items that were intermediately tested compared to items not intermediately tested. The results are discussed in the context of metacognitive monitoring as a condition of self-regulated learning in an authentic psychology learning context.

Investigating the effects of retrieval practice is an early and long-lasting issue in memory research (e.g., Abbott, 1909; Moreira et al., 2019). The central finding, often referred to as the testing effect, demonstrates that the accessibility of previously retrieved learning material is enhanced in a later, final test (e.g., Roediger III & Karpicke, 2006). This effect has frequently been replicated and recent reviews and meta-analyses corroborated its reliability (Dunlosky et al., 2013; Moreira et al., 2019; Roediger III & Butler, 2011; Rowland, 2014; Schwieren et al., 2017; Yang et al., 2021). Research has provided thoughtful explanations of why repeatedly retrieving information from long-term memory enhances the probability of later recall (for an overview, see Karpicke et al., 2014). Given this solid empirical and theoretical basis, retrieval practice is a promising candidate for being transferred to educational practice (Dunlosky et al., 2013; Dunn et al., 2013; Graesser et al., 2008; Pashler et al., 2007; Roediger III & Pyc, 2012). In this regard, a recent meta-analysis (Moreira et al., 2019) demonstrated benefits of retrieval practice in applied contexts, such as learning at school and university. Moreover, a previous meta-analysis (Schwieren et al., 2017) demonstrated that the testing effect could also be found in learning and teaching psychology by discovering effect sizes similar to those found for non-psychological learning contexts.

Encouraged by this generally positive evaluation of the available evidence, researchers have continued exploring retrieval practice in educational settings to support the transfer from research into practice. The operationalization of educationally relevant settings in these studies, however, still varies substantially. Schwieren et al. (2017), for example, included studies in their meta-analysis “when retrieval practice was applied in teaching psychology students or in the context of teaching psychological learning content to non-psychology students” (pp. 181–182). The included studies showed that ecologically valid and authentic testing effect studies can involve at least three different facets of authenticity: (a) the setting (i.e., similar to or actual classroom situation), (b) the learning content (i.e., similar to or actually required learning content), and (c) the test situation (i.e., similar to or actual achievement situation). The more the studies investigated actual learning conditions in terms of setting, content, and achievement situation, the higher their ecological validity and authenticity is assumed to be, because of the personal meaning of learning context and content for participants, and the contribution to their academic achievement. However, implementing actual learning conditions (and actual achievement situations, in particular) requires considering several methodological issues.

The first issue refers to the study design. The typical design of testing effect studies includes three phases: A learning phase in which the learning material is presented to the participants, an intervening phase in which the content is actively retrieved (tested), re-studied, or not presented again, and a final test. In between-participants designs, some participants take an intermediate test and others do not. In this design, a testing effect is indicated by higher performance in the intermediately tested group compared to the participants who took only the final test. In within-participants designs, all participants are intermediately tested but only on parts of the learning content that is tested in the final test. In this case, the testing effect is indicated by higher performance in the intermediately tested items compared to the items tested only in the final test. If actual achievement situations are implemented, conducting a between-participants design is questionable because an intervention, such as retrieval practice, gives rise to the expectation that one group of participants might benefit more than another group for academic achievement. Thus, employing a between-participants design is not recommendable for ethical reasons.

Employing within-participants designs can solve the problem of non-equal treatment in exams but creates a second issue. The items of the intermediate test and the new items of the final test (not previously tested) should have the same a-priori difficulty. Otherwise, an illusory testing effect might occur (when the items of the intermediate test were a-priori easier than the new items of the final test) or a testing effect might remain undetected (when the items of the intermediate test were a-priori more difficult than the new items of the final test). Neglecting the interference of item difficulty with final test performance would restrict the interpretability of the investigated testing effect.

This issue becomes even more relevant when the actual classroom setting implies not only learning before but also after the intermediate test, as in retrieval practice studies investigating courses spanning a whole semester (e.g., Barenberg & Dutke, 2012; Shapiro & Gordon, 2012). Consequently, items of the intermediate test necessarily refer to learning contents presented before the intermediate test, whereas new items of the final test could refer to learning contents presented before or after the intermediate test. Thus, the item difficulty in the final test is not only defined by the a-priori difficulty of test items but also by the time lag between the initial presentation of learning contents and the final test. Because this time lag effect cannot be ruled out when studying the effects of retrieval practice in actual classroom and achievement situations with a within-participants design, controlling the a-priori difficulty of items becomes all the more important. Ideally, the a-priori difficulty of intermediate test items and new final test items is parallelized, as is the a-priori difficulty of final test items referring to learning contents presented before and after the intermediate test.

However, controlling for a-priori item difficulty is often not considered in this kind of study. Among the studies reviewed in Moreira et al. (2019) and Schwieren et al. (2017), for example, 19 studies used a within-participants design but only five studies explicitly addressed the item difficulty. Three of these studies found testing effects (Howard, 2010; Shapiro & Gordon, 2012; Vojdanoska et al., 2010) and two did not (Bing, 1984; Goosens et al., 2016). Thus, the evidence of retrieval practice effects from within-participants studies in applied educational settings seems to be less clear when potential item difficulty effects are controlled for. Finding retrieval practice effects in actual learning and achievement situations therefore seems to be particularly challenging when potentially interfering variables (such as item difficulty) are controlled for, which points to the next issue.

The third issue refers to the measures of the testing effect. Recently, Barenberg and Dutke (2019) investigated the effect of retrieval practice not only at the level of cognitive performance (accessibility of learned contents) but also at the level of metacognitive performance (accuracy of confidence judgments). They showed that retrieval practice enhanced learning not only at the cognitive level, as shown by the proportion of correct answers, but also at the metacognitive level, as shown by more accurate and less biased confidence ratings in the testing condition compared to the control condition. Moreover, the effect sizes at the metacognitive level were even higher than the effect sizes at the cognitive level. Thus, including metacognitive measures in retrieval practice studies can increase the chance of detecting beneficial effects for two reasons. First, applying metacognitive measures broadens the range of measures by adding a new conceptual quality of learning outcome. Second, metacognitive measures may be more sensitive than cognitive measures in detecting retrieval practice effects. Therefore, including metacognitive measures in addition to purely cognitive measures seems to be expedient when studying the testing effect in actual learning and achievement situations. Unfortunately, only one of the testing effect studies reviewed in Moreira et al. (2019) and Schwieren et al. (2017) considered metacognitive measures as a dependent variable. This study (Barenberg & Dutke, 2012), however, was severely underpowered, so that no firm conclusions could be drawn from it.

Framework of the Present Study

In the present study, we aimed at investigating the effects of retrieval practice in an authentic educational context, addressing actual learning contents in actual learning and achievement situations. To this end, we examined the effects of an intermediate test in an introductory psychology university course on the final test performance at the end of the semester (as a part of the final examination in a larger study module). The present study addressed the aforementioned methodological issues and was designed to alleviate (or overcome, at best) the identified shortcomings of previous testing effect studies in the educational context.

First, we refrained from applying a between-participants design to exclude any disadvantage for participants with respect to the final examination at the end of the semester. Instead, we varied retrieval practice within participants, that is, the final test consisted of the intermediately tested items and new (not tested) items for all participants.

Second, the items were selected from an item pool that has been used for knowledge tests in this course for several years. Therefore, information about their a-priori difficulty was available. Based on these data, the items used in the intermediate and the final test were parallelized with regard to their a-priori difficulty as well as final test items related to learning contents presented before and after the intermediate test. Thus, potential interference of the item difficulty with the effect of testing on the final test performance should be diminished.

Third, the effects of testing were assessed with regard to the learning outcome at (a) the cognitive level by measuring the number of correct responses and (b) the metacognitive level by measuring the participants’ confidence in the correctness of their responses. The combination of data on correctness and confidence allows assessing the accuracy of metacognitive monitoring employing indices of absolute accuracy, bias, (justified) confidence in correctly answered items and (unjustified) confidence in incorrectly answered items (Dutke & Barenberg, 2015). Thus, a broader range of dependent variables is considered compared to previous testing effect studies to examine cognitive and metacognitive effects of retrieval practice (Barenberg & Dutke, 2019).

We expected that participants would answer intermediately tested items more often correctly and with more accurate confidence ratings than not previously tested items in the final test. In contrast to previous studies, the broader range of measures should increase the chance of detecting retrieval practice effects, and given beneficial effects are found, the interpretability should be clearer because of controlling for the a-priori difficulty of the test items.

Method

Design and Participants

We assessed the impact of retrieval practice on knowledge acquisition in an introductory psychology university course with a within-participants field experiment. Retrieval practice was varied within participants. The participants completed an intermediate knowledge test in the 9th session and a final test in the last (13th) session of the semester. In the intermediate test (t1), they answered 16 items referring to learning contents of the first eight weeks. In the final test (t2), the same items were presented (testing condition items) plus 24 new items referring to the contents of the complete course (control condition items).

The university course consisted of 13 weekly sessions held during the winter semester 2019–20 and referred to educational psychology issues such as learning, motivation, and development in the school context. The testing effect, its potential causes, and a sample study were mentioned in the 7th session. The lecturer, however, did not explicitly relate these issues to the tests to be taken in the context of the course. Participants were N = 214 (n = 157 female) students (to become teachers), of whom N = 180 (n = 134 female) participated in both tests and agreed that their data would be analyzed for scientific purposes.

As the sample size was determined by the students’ course choice, the power was calculated post-hoc. The effect size was estimated based on a meta-analysis (Schwieren et al., 2017): They reported a mean weighted effect size of d = .56 and depending on moderators (type of design and type of control condition) mean weighted effect sizes of up to .73, respectively. Given the sample size of N = 180 and assuming a medium-sized testing effect, G*Power (Faul et al., 2009) computed a power of .946 (presuming α = .05).

Materials and Variables

Knowledge tests

As this university course is given every semester, an item pool for the knowledge tests exists which encompasses 423 confidence-weighted true-false (CWTF) items (Dutke & Barenberg, 2015). The item pool included information about item difficulty based on rates of correct solutions in previous tests. CWTF items were constructed in the form of one-sentence statements, which aimed at probing propositions presented in the course or propositions that could be derived by near transfer from the course contents. Forty items of the item pool were selected, 16 items referring to course topics presented before t1 (testing condition items) and 24 additional items referring to topics of the complete course (control condition items). Sixteen of the control condition items referred to topics presented before t1 and eight items referred to topics presented after t1. To minimize effects of confirmation or negation tendencies in answering, one half of the items represented true statements and the other half false statements. Moreover, items were parallelized according to their a-priori difficulty in two ways: (a) the mean difficulty of the testing condition items (M = .76, SD = .18) did not differ from the mean difficulty of the control condition items (M = .77, SD = .16), t(38) = .35, p = .73, and (b) within the control condition items, the mean difficulty of items referring to course topics presented before t1 (M = .78, SD = .15) did not differ from the mean difficulty of items referring to course topics presented after t1 (M = .77, SD = .19), t(22) = .17, p = .86.

Variables

For each statement in the tests, the students judged whether it was true or false and concurrently indicated their confidence in that decision. The true-false decision and the confidence judgment were given on a single scale resulting in four possible response options: (1) I am sure the statement is correct, (2) I think the statement is correct, but I am unsure, (3) I think the statement is incorrect, but I am unsure, or (4) I am sure the statement is incorrect. From these data, different measures were derived describing the students’ cognitive and metacognitive performance (e.g., Barenberg & Dutke, 2019; Dutke & Barenberg, 2015). As a simple measure of correctness, the relative number of correct answers was computed—irrespective of the reported confidence level. As a simple measure of confidence, the relative number of confident judgments was computed—irrespective of the correctness of the answers.
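The scoring of a single CWTF response described above can be sketched as follows. This is a minimal illustration, not the authors' scoring procedure: the function name `score_cwtf` and the numeric coding of the four options (1 to 4, in the order listed above) are assumptions made for the example.

```python
from typing import Tuple


def score_cwtf(option: int, statement_is_true: bool) -> Tuple[bool, bool]:
    """Split one combined CWTF response into (correct, confident).

    Hypothetical coding, following the order of the options in the text:
    1 = sure the statement is correct, 2 = think correct but unsure,
    3 = think incorrect but unsure,   4 = sure the statement is incorrect.
    """
    if option not in (1, 2, 3, 4):
        raise ValueError("option must be 1, 2, 3, or 4")
    judged_true = option in (1, 2)   # options 1 and 2 judge the statement as true
    confident = option in (1, 4)     # the two 'sure' options express confidence
    correct = judged_true == statement_is_true
    return correct, confident
```

For instance, choosing option 4 for a statement that is actually true yields an incorrect but confident answer, that is, `(False, True)`.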

The quality of metacognitive monitoring was assessed by combining data on correctness and confidence (see the Appendix for formulas). The following indices reflect different facets of metacognitive monitoring accuracy typically assessed in metacognition research (see Barenberg & Dutke, 2013; Schraw, 2009; Schraw et al., 2013). The absolute accuracy (AC) of the confidence judgments was computed by adding the proportion of correct and confident answers to the proportion of incorrect and unconfident answers. Thus, the AC score reflects the precision of the confidence judgments across all items, that is the proportion of answers indicating a match of correctness and confidence. The bias of the confidence judgments (BS) was computed by subtracting the relative number of correct answers from the relative number of confident answers. Positive values indicate overconfidence, negative values indicate underconfidence. As the BS score does not differentiate whether correct and incorrect answers are biased in the same way, two conditional probabilities were computed. The confident-correct probability (CCP) indicates (justified) confidence given the answer is correct, and the confident-incorrect probability (CIP) indicates (unjustified) confidence given the answer is incorrect. A high CCP score (indicating a high number of correct answers given with confidence) and a low CIP score (indicating a low number of incorrect answers given with confidence) would reflect that the learner adequately discriminated between learning content already mastered and learning content requiring further learning.
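The verbal definitions of the four indices can be illustrated with a short sketch. It follows the descriptions in the paragraph above rather than the Appendix formulas, which are not reproduced here; the function name `monitoring_indices`, the input format (one `(correct, confident)` pair per item), and the choice to return `None` for an undefined conditional probability are assumptions of this example.

```python
from typing import Dict, List, Optional, Tuple


def monitoring_indices(
    answers: List[Tuple[bool, bool]]
) -> Dict[str, Optional[float]]:
    """Compute AC, BS, CCP, and CIP from (correct, confident) pairs."""
    n = len(answers)
    if n == 0:
        raise ValueError("at least one answer is required")
    n_correct = sum(1 for correct, _ in answers if correct)
    n_confident = sum(1 for _, confident in answers if confident)
    n_correct_confident = sum(1 for c, s in answers if c and s)
    n_incorrect_unconfident = sum(1 for c, s in answers if not c and not s)
    n_incorrect = n - n_correct
    n_incorrect_confident = n_confident - n_correct_confident
    return {
        # AC: proportion of answers where correctness and confidence match
        "AC": (n_correct_confident + n_incorrect_unconfident) / n,
        # BS: proportion confident minus proportion correct
        # (positive = overconfidence, negative = underconfidence)
        "BS": n_confident / n - n_correct / n,
        # CCP: confidence given a correct answer (undefined without correct answers)
        "CCP": n_correct_confident / n_correct if n_correct else None,
        # CIP: confidence given an incorrect answer (undefined without incorrect answers)
        "CIP": n_incorrect_confident / n_incorrect if n_incorrect else None,
    }


# Hypothetical five-item example, not data from the study
example = [(True, True), (True, True), (True, False), (False, True), (False, False)]
indices = monitoring_indices(example)
```

In this made-up example, three of five answers show a match of correctness and confidence (AC = .60), confident and correct answers are equally frequent (BS = 0), two of three correct answers were given with confidence (CCP ≈ .67), and one of two incorrect answers was given with confidence (CIP = .50).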

Procedure

In the first session of the course, the participants were informed that they needed to pass two knowledge tests, the first in the 9th and the second in the last week of the semester. Both tests were obligatory study requirements and had to be passed with a minimum of 50% correct answers. Students were also informed that the tests were paper-and-pencil-based, closed-book tests. Sample items were presented in the context of different course topics to familiarize the students with the format and the level of aspiration. In this context, students learned that answering the items required not only the recall of presented information but also transferring learning content to new situations.

All students attending the course in the 9th week of the semester answered the testing condition items (presented in random order) within the first 20 min of the session. Two days later, summative feedback was given, that is, the participants were informed about the number of correct answers they achieved in t1, but no item-specific information was given about correct and incorrect answers.

All students, attending the course in the 13th session, were presented with the testing condition items plus the control condition items (presented in random order). They were instructed to answer all 40 items within 40 min. of the session. Participants were asked to give written consent that their data could be anonymized and used for scientific purpose. Data from students who did not consent were excluded from the experimental data set. Students who missed one of the tests had the opportunity to take the missing test in an extra session during the semester (when t1 was missed) or after the end of the semester (when t2 was missed). However, the data of these students were also excluded from the experimental data set.

Data Analysis

Variables were computed as described in the Variables section and the Appendix. Hypotheses were tested by comparing the t2 means of the dependent variables for testing condition items and control condition items with paired-samples t tests. Results with a p value below .05 (two-tailed) were considered significant. In analyzing the indices of metacognitive monitoring, the significance level was Bonferroni-corrected for multiple dependent tests (resulting in pcorrected = .017). Additionally, the effect size in terms of Cohen's d is reported.
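The analysis steps above can be sketched with the Python standard library. Two points are assumptions rather than statements from the text: the effect size is computed here as the mean difference divided by the standard deviation of the differences (one common variant of Cohen's d for paired data; the variant actually used is not specified), and the Bonferroni divisor of three is inferred only from the fact that .05 / 3 rounds to the reported threshold of .017. The data are invented for illustration.

```python
import math
import statistics
from typing import List, Tuple


def paired_t_and_d(x: List[float], y: List[float]) -> Tuple[float, int, float]:
    """Return (t, df, d) for paired samples x and y.

    d is the mean difference divided by the SD of the differences,
    which is an assumed variant of Cohen's d for paired designs.
    """
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("x and y must have equal length of at least 2")
    diffs = [a - b for a, b in zip(x, y)]
    n = len(diffs)
    mean_diff = statistics.mean(diffs)
    sd_diff = statistics.stdev(diffs)          # sample SD (n - 1 in the denominator)
    t = mean_diff / (sd_diff / math.sqrt(n))   # paired-samples t statistic
    d = mean_diff / sd_diff
    return t, n - 1, d


def bonferroni_alpha(alpha: float, n_tests: int) -> float:
    """Bonferroni-corrected significance threshold."""
    return alpha / n_tests


# Hypothetical per-participant proportions, not data from the study
tested = [0.8, 0.7, 0.9, 0.6]
control = [0.7, 0.7, 0.8, 0.4]
t_value, df, d_value = paired_t_and_d(tested, control)
threshold = bonferroni_alpha(0.05, 3)  # assumed number of tests
```

The p value would then be obtained from the t distribution with `df` degrees of freedom (for example, with a statistics package) and compared against `threshold`.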

Results

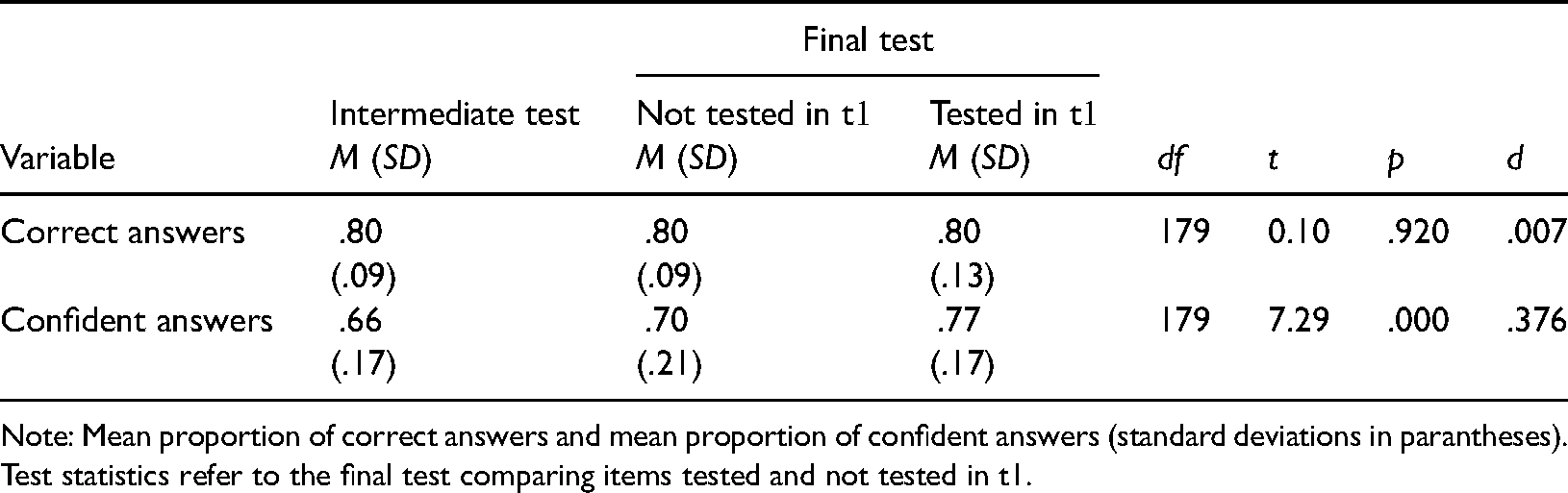

Correct Answers

Comparing the proportion of correct answers (in t2) between testing and control condition items failed to show a difference (Table 1). An additional Bayes Factor analysis revealed that, given the data we found, H0 is more than 16 times more probable than H1 (BF01 = 16.82). Thus, the data failed to show an effect of retrieval practice at the cognitive level.

Correctness of answers and confidence in the correctness.

Note: Mean proportion of correct answers and mean proportion of confident answers (standard deviations in parentheses). Test statistics refer to the final test comparing items tested and not tested in t1.

To scrutinize this unexpected result, we conducted two further explorative analyses. First, we compared the a-priori mean difficulties of testing and control condition items (see method section) with the actual performance levels in t2 (Table 1). The proportions of correct answers in t2 were higher than the a-priori mean difficulties for testing condition items, t(179) = 6.17, p <.001, as well as for control condition items, t(179) = 3.22, p = .002. Thus, the present sample performed a little better than predicted by the a-priori difficulties. Second, we computed the correlation of intermediate test performance and the difference of final test performance levels between testing and control condition revealing a correlation of r = .02 (p = .82). Thus, in the present sample the occurrence of a testing effect was not affected by the level of test performance in the intermediate test.

Confident Answers

Comparing the proportion of confident answers (in t2) between testing and control condition items showed a significant difference (Table 1). Testing condition items were answered more often with confidence in t2 than control condition items. According to Cohen (1988), the effect can be categorized as small to medium. The proportion of confidence, however, does not provide any information about the adequacy of the confidence ratings. To this end, we analyzed the relation between the correctness of the answers and the confidence in the correctness of the answers in the following section.

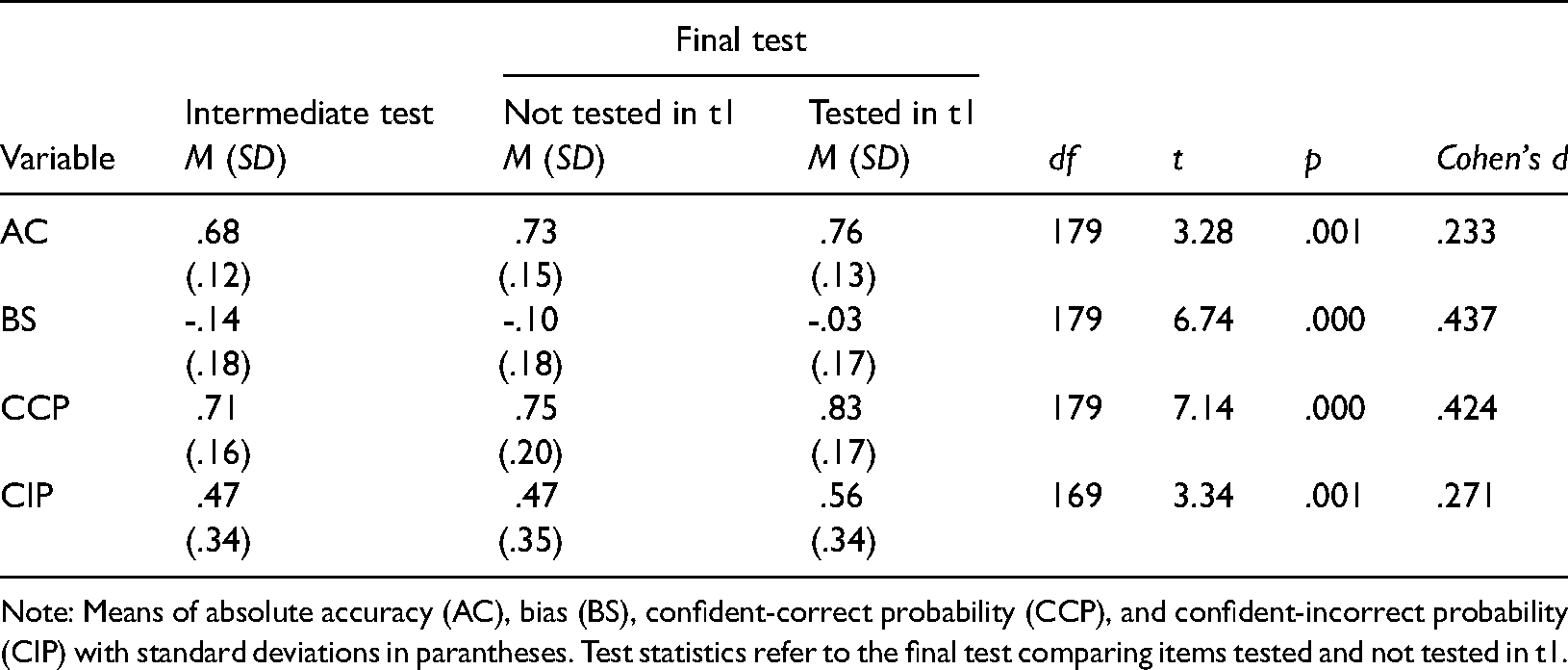

Metacognitive Monitoring Accuracy

As a first general indicator of metacognitive accuracy across all items, we computed the absolute accuracy (AC) in t2 for testing and control condition items. Absolute accuracy was significantly higher for testing condition items compared to control condition items (Table 2), indicating a small effect of retrieval practice.

Indicators of metacognitive monitoring accuracy.

Note: Means of absolute accuracy (AC), bias (BS), confident-correct probability (CCP), and confident-incorrect probability (CIP) with standard deviations in parentheses. Test statistics refer to the final test comparing items tested and not tested in t1.

As a second general indicator of metacognitive accuracy across all items, we computed the bias (BS) of the confidence ratings to assess potential over- or underconfidence. The negative BS scores in Table 2 indicate underconfidence in both testing and control condition items. The extent of underconfidence, however, was more pronounced in control condition items compared to test condition items. The difference was significant, indicating a small- to medium-sized effect of retrieval practice.

However, the bias indicator does not elucidate the extent that the lower underconfidence is due to items answered correctly or due to items answered incorrectly. Therefore, we split up confidence in correctly and incorrectly answered items. The confident-correct probability (CCP) designates the proportion of confident answers, given the answer was correct. CCP was significantly higher for testing condition items than for control condition items, constituting a small- to medium-sized effect (Table 2). Thus, retrieval practice resulted in higher confidence in correct answers, which indicates higher monitoring accuracy for these items. The confident-incorrect probability (CIP) designates the proportion of confident answers although the answers were incorrect. CIP was also significantly higher for testing condition items than for control condition items. The higher confidence in incorrect answers was not expected and indicates lower monitoring accuracy for these items. However, the beneficial effect of testing on the confidence in correct answers (CCP) was larger (d = .424) than the detrimental effect on confidence in incorrect answers (CIP), d = .272.

In sum, although the participants did not benefit from intermediate testing in terms of answering correctly in t2, they answered previously tested items more often with confidence compared to not previously tested items. Regarding the measures of metacognitive monitoring accuracy, retrieval practice resulted in more accurate and less biased confidence ratings across all items. Considering correct and incorrect answers separately, retrieval practice did not only result in higher confidence in correct answers (higher monitoring accuracy) but also in incorrect answers (lower monitoring accuracy).

Discussion

The aim of the present study was twofold. First, we wanted to investigate the effects of retrieval practice in an authentic educational context with high ecological validity by addressing actual learning conditions in terms of setting, content, and achievement situation. To this end, we examined the effect of an intermediate test in a university course in educational psychology spanning a complete semester and including an actual examination. Second, we addressed three methodological issues identified in previous studies of applied testing effect research. We (a) varied retrieval practice within participants (and not between participants) to prevent any disadvantage for participants in the achievement test, (b) controlled for potential interference effects of item difficulty, and (c) included dependent measures at the cognitive and metacognitive level to provide a more complete picture of retrieval practice effects. Based on the evidence of the testing effect under laboratory conditions (e.g., Rowland, 2014), in diverse applied settings (e.g., Moreira et al., 2019), and with regard to teaching psychology (Schwieren et al., 2017), we expected higher performance in testing condition items compared to control condition items in the final test at the cognitive and the metacognitive level.

Regarding the cognitive level, our results demonstrated that generating a testing effect under field conditions is not trivial. In fact, testing condition and control condition items were equally often answered correctly in the final test. We discuss some potential methodological and theoretical reasons for this null effect. First, the a-priori difficulties of testing condition and control condition items were balanced based on data from independent samples. Otherwise, accidentally higher difficulties in testing condition items and lower difficulties in control condition items could have masked a beneficial effect of testing. Second, control condition items referred to course topics presented before and after t1, whereas testing condition items only referred to course topics presented before t1. Thus, the smaller time lag of parts of control condition items could result in lower item difficulties of these items. To reduce this potential influence, we also balanced the a-priori difficulties of items referring to course topics presented before and after t1. Thus, it was less likely that testing condition items (referring to topics presented before t1) were accidentally more difficult than control condition items (referring to topics presented after t1, in parts). Such an imbalance could have interfered with the testing effect but was reduced by the parallelization of item difficulties.

Third, the magnitude of the testing effect depends on successful recall in the initial test (Halamish & Bjork, 2011; Kornell et al., 2011), in particular when no (item-specific) feedback is given. A failure to find a testing effect could be due to a generally low rate of correct answers in the initial test. This reason, however, is unlikely because the mean percentage of correct answers in the intermediate test (80%) was sufficiently high. Thus, more or less successful recall in t1 seemed to be less relevant for performance in the final test. In fact, we found that the correlation of intermediate test performance and the occurrence of a testing effect (difference of final test performance levels between control and testing condition) was approximately zero. This might be due to the actual learning context, that is, the information of the intermediate test (irrespective of the correctness of answering) could be used to direct subsequent learning activities. In summary, the failure to find a testing effect on the number of correctly answered items can hardly be attributed to design issues related to item difficulty. This is in line with previous studies controlling for item difficulty, which also failed to find a testing effect in applied contexts using a within-participants design (e.g., Bing, 1984; Goosens et al., 2016).

Instead, the lack of a testing effect may be related to the present sample and the learning context. The students were instructed that the topics of the complete lecture series could be part of the final test. During the whole semester, they had diverse opportunities to acquire, elaborate, and recall the contents presented so far. If the participants intensified their learning behavior while approaching the final test (Taraban et al., 1999), this may imply multiple opportunities for retrieval practice and other effective learning activities beyond the intermediate test. Moreover, the students in the present sample performed better in the final test than predicted by the a-priori difficulties. This result may indicate that the present students applied more effective learning activities than previous samples. Thus, the effects of the intermediate test might have been masked by effects of learning activities that were unrelated to the testing design. It is one important limitation of the present study that we were unable to control for these additional learning activities. In future testing effect studies in authentic learning situations of this type, the option of using learning diaries should be considered.

Although no testing effect emerged at the level of cognitive performance, retrieval practice did affect metacognitive performance, indicating the relevance of including metacognitive measures. Testing condition items (tested in t1) were answered with more confidence in the correctness of the answers than control condition items (not tested in t1). Moreover, in testing condition items, the absolute accuracy of the confidence ratings was higher and the bias was lower than in control condition items. In particular, the confidence in correctly answered testing condition items was higher than in correctly answered control condition items. In summary, different indicators based on confidence ratings demonstrated that additional retrieval practice beneficially affected metacognitive monitoring accuracy.

Interestingly, retrieval practice increased not only the confidence in correct answers but also the confidence in incorrect answers. This might be interpreted as a general effect on confidence that is independent of the participants’ impression of answering correctly or incorrectly: higher confidence might simply be triggered by familiarity due to the earlier appearance of an item in the intermediate test. However, we do not interpret this result as a mere familiarity effect. First, the size of the testing effect on the confident correct probability (CCP) was larger than the testing effect on the confident incorrect probability (CIP). Second, the coverage of the testing effect on the CCP was larger than the coverage of the testing effect on the CIP because many more items were answered correctly than incorrectly in the final test. Thus, the positive effect of retrieval practice (higher confidence in correct answers) clearly outweighed its negative effect (higher confidence in incorrect answers). In conclusion, including metacognitive measures was essential to discover the beneficial effects of retrieval practice in the present study; the potential of retrieval practice would have been misinterpreted without these measures.

What implications can be drawn from the present study for instructors in educational settings such as learning and teaching psychology? First, retrieval practice proved to be an effective tool to support learning processes in the present study when it was applied in an authentic educational setting and when interfering variables (such as item difficulties) were controlled for. Although no testing effect emerged at the level of cognitive performance, retrieval practice improved the learners’ metacognitive monitoring accuracy, which is highly relevant for effective self-regulated learning processes (Thiede et al., 2003; Winne & Hadwin, 1998). Previous studies revealed that many students tend to regulate their learning activities in suboptimal ways (e.g., Dunlosky et al., 2013; Wissman et al., 2012) and that students differ substantially in their self-regulated study behavior (e.g., Barenberg et al., 2018). Thus, interventions to optimize learners’ self-regulation are often needed, and retrieval practice may be one option.

Second, the present study demonstrates that assessing metacognitive performance can be implemented rather easily in test situations by employing confidence-weighted true-false items (Barenberg & Dutke, 2013; Dutke & Barenberg, 2015). Confidence judgments can provide helpful insights into relevant aspects of learning for both learners and instructors. On the one hand, learners are stimulated to reflect on their understanding of learning topics and the quality of their knowledge. This reflection can help them to identify learning topics that need more attention and to adjust their learning activities. Moreover, when confidence judgments are used repeatedly, reflecting on the appropriateness of the judgments can help the learners to improve the accuracy of their metacognitive monitoring. On the other hand, the instructors also gain valuable insights into the learners’ state of comprehension and quality of knowledge. This information can help them to adjust their teaching program. For example, they can design (individualized) instructions to clarify particular learning topics, and they can support the learners’ development of monitoring and self-regulation processes by giving feedback on the appropriateness of their judgments. In conclusion, the results of the present study support the application of retrieval practice and the implementation of metacognitive performance assessment in educational settings.

Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship and/or publication of this article.

Appendix

2 × 2 (Correctness × Confidence) Data Array Resulting from the Response Scale (adapted from Barenberg and Dutke, 2013).

The indices of metacognitive monitoring accuracy were computed according to the following formulas:

Absolute accuracy of the confidence judgments (AC):

Bias of the confidence judgments (BS):

Confident-correct probability (CCP):

Confident-incorrect probability (CIP):

The attributes of the answer to each item (correct or incorrect, confident or unconfident) were coded as 1 (given) or 0 (not given).
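
As a rough illustration of how indices of this kind can be derived from the 1/0 coding described above, the following Python sketch may be helpful. The definitions it uses are assumptions made for illustration only and are not necessarily the formulas applied in this study: AC is taken as 1 minus the mean squared deviation between confidence and correctness, BS as the mean signed deviation (positive values indicating overconfidence), CCP as the proportion of correct answers given with confidence, and CIP as the proportion of incorrect answers given with confidence. The names `Response` and `monitoring_indices` are hypothetical.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Response:
    correct: int    # 1 = answer was correct, 0 = answer was incorrect
    confident: int  # 1 = answered with confidence, 0 = answered without confidence


def monitoring_indices(responses: list[Response]) -> dict[str, Optional[float]]:
    """Compute illustrative monitoring indices from 1/0-coded responses.

    All four definitions below are ASSUMED for illustration; they are
    not taken from the study's appendix.
    """
    n = len(responses)
    if n == 0:
        raise ValueError("at least one response is required")

    n_correct = sum(r.correct for r in responses)
    n_incorrect = n - n_correct

    # Assumed AC: 1 minus the mean squared confidence-correctness deviation,
    # so that higher values indicate more accurate confidence judgments.
    ac = 1 - sum((r.confident - r.correct) ** 2 for r in responses) / n

    # Assumed BS: mean signed deviation; > 0 suggests overconfidence,
    # < 0 suggests underconfidence.
    bs = sum(r.confident - r.correct for r in responses) / n

    # Assumed CCP: share of correct answers that were given with confidence.
    ccp = (
        sum(r.confident for r in responses if r.correct == 1) / n_correct
        if n_correct > 0 else None
    )

    # Assumed CIP: share of incorrect answers that were given with confidence.
    cip = (
        sum(r.confident for r in responses if r.correct == 0) / n_incorrect
        if n_incorrect > 0 else None
    )

    return {"AC": ac, "BS": bs, "CCP": ccp, "CIP": cip}


# Hypothetical data: three correct answers (two of them confident)
# and one incorrect answer that was given with confidence.
example = [Response(1, 1), Response(1, 1), Response(1, 0), Response(0, 1)]
print(monitoring_indices(example))
```

Under these assumed definitions, CCP and CIP are conditional on the correctness of the answer, so an index is returned as `None` when a participant has no correct (or no incorrect) answers in the respective condition.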