Abstract

Studying at university places high demands on the control and regulation of one's own learning behavior. In order to support students’ learning, we developed and used a webtool containing elements such as regular performance testing as well as feedback on test performance and self-evaluations. In this report, we first introduce core elements of the applied webtool. Then, we present findings on students’ use of this tool. Results show substantial variability between students in the willingness to use the tool and in the frequency of usage. Students with better final school exam grades and students who intended to engage regularly in course postprocessing were more willing to use such a tool. The findings are discussed against the background of regulation requirements in open learning environments, and implications for better implementation are derived.

In comparison to school, learning in higher education is characterized by a comparatively high number of degrees of freedom (Goppert et al., 2021). University students need to decide for themselves which course to take and how often to attend, or whether they want to study additional material such as textbooks in order to better comprehend the discussed course content. In other words: students need to regulate their own learning. With regard to the current study, two aspects of self-regulated learning are crucial: First, learners need to be aware of what they know and are capable of but also of what they do not know and are not capable of (Stone, 2000; Zimmerman & Moylan, 2009). Second, self-regulated learning may be seen as a process with cyclic reiterating phases, often labeled as a forethought phase followed by a performance phase and a self-reflection phase (Zimmerman & Moylan, 2009). Through feedback loops, learners continuously adapt their motivation, behavior and cognition. Therefore, implementing learning conditions that promote accurate confidence in one's knowledge seems a promising approach to support students’ metacognition and the regulation of their learning. Self-testing and feedback are two components that support this process (Händel et al., 2020; Stone, 2000). In addition, testing one's own knowledge tends to be effective for student learning (Bangert-Drowns et al., 1991; Roediger & Karpicke, 2006; Schwieren et al., 2017). However, it remains an open question whether students in real-life settings are willing to use tools that support their self-regulated learning.

Individual Differences in SRL and the Use of Supporting Tools

Within computer-based learning environments, two forms of support devices are differentiated (Clarebout & Elen, 2006): embedded support devices, which are used in an obligatory manner, and non-embedded support devices, which may be added to the learning environment and which are used in a voluntary manner. The latter are often labeled as “tools” (Clarebout & Elen, 2006). Implicitly, such open learning environments assume that learners are aware of their learning needs and possess sufficient metacognitive skills to successfully plan, monitor, and regulate their learning (Clarebout et al., 2013). However, in authentic settings, not all students are willing to participate in an intervention that aims to promote their learning or to use available learning webtools in the way suggested (Jansen et al., 2020; Lust et al., 2013). Therefore, it is important to explore variables that relate to students’ participation in such measures. Clarebout et al. (2010), for example, did not find a relation between self-regulation skills and the frequency of tool usage. Basol and Balgalmis (2016) report a positive relation between the frequency of use of voluntary e-tests and students’ perception of e-tests as an active and self-regulation learning tool. However, the applied measure of perceived e-test self-regulation tends not to be informative about students’ general propensity to regulate their learning process.

To sum up, although tools and interventions that aim to promote students’ learning in higher education settings have been developed, students often do not use such tools as intended. The reasons for this are not well understood. The present report therefore has the following two objectives:

(1) Introducing a webtool that is intended to foster students’ self-regulated learning and illustrating first formative evaluation findings. (2) Presenting analyses on whether students’ characteristics and self-regulated learning relate to the usage of such a tool within an authentic context.

All analyses were exploratory as we had no strong hypotheses before conducting the analyses.

PsyCoach – A Webtool to Support Students’ Self-Regulation and Learning

The tool was created taking into account various theoretical principles, inter alia based on models of self-regulated learning and metacognition, feedback and testing effects (see Pfost et al., 2020, for a detailed description of the theoretical foundations). We will briefly illustrate core elements and major features to provide insight into the tool.

First, before using the tool, students need to register and choose an anonymous nickname. Furthermore, students have to provide consent on data privacy regulations. Data privacy regulations were in line with the general regulations of the University of Bamberg.

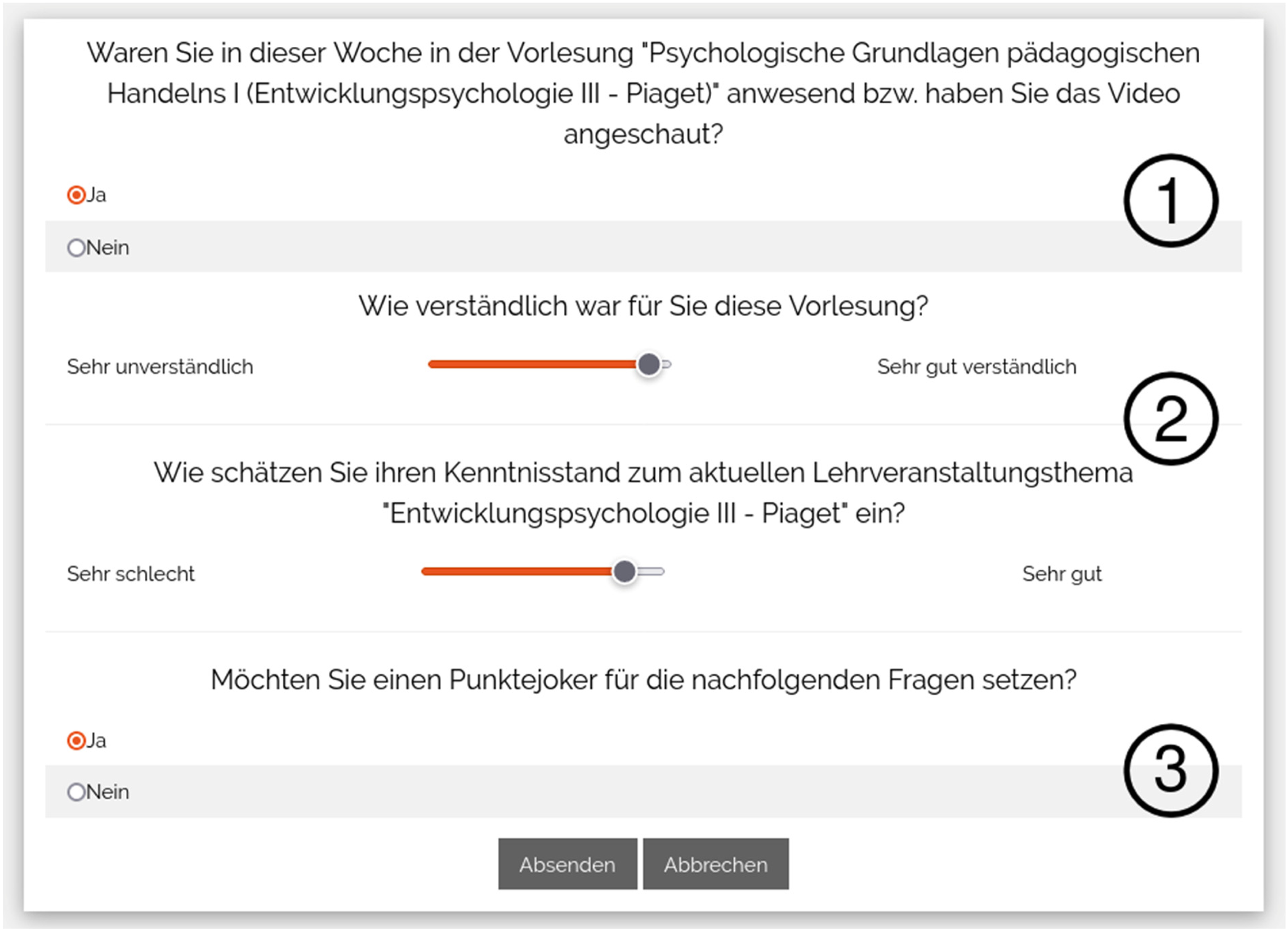

Second, before students have the possibility to answer the weekly test questions, they are asked to provide information on (1) whether they attended the course in the current week; (2) whether they comprehended the provided course content well; and (3) the impression of their current state of knowledge on the course topic (Figure 1).

Figure 1. Weekly self-evaluation questions. Before responding to the weekly test items, students are asked to provide information on (1) whether they attended the (online) lecture; (2) whether the lecture was comprehensible to them and to evaluate their own knowledge of the topic currently discussed; and (3) whether they want to double their points in the subsequent test items (bonus card; an integrated gamification element).

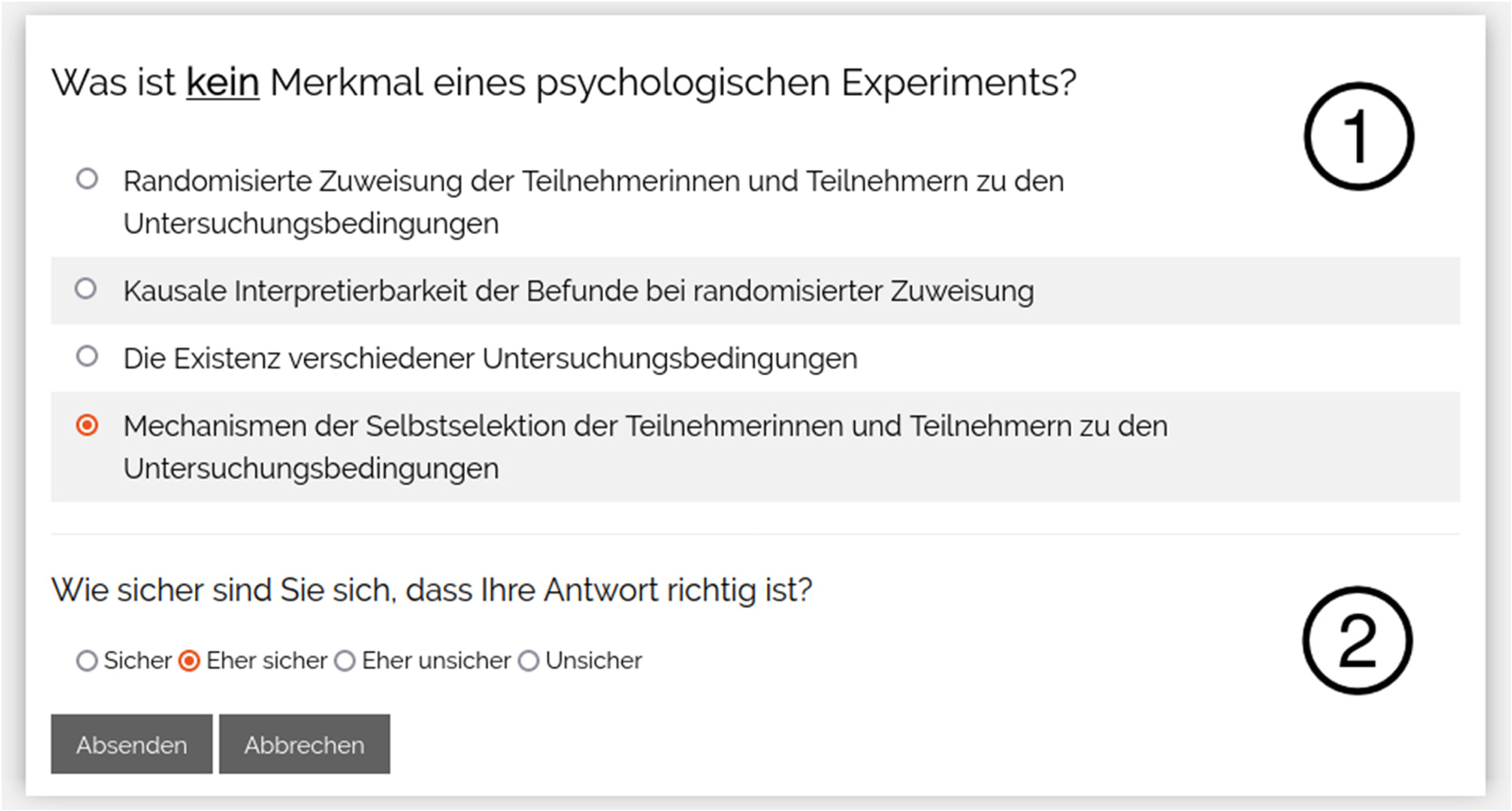

Third, students have the possibility to answer test items on the course content of the current week (Figure 2). Test items can be provided in closed response format or open response format. Furthermore, lecturers have the option to provide feedback on the test items (right/wrong in closed response format; elaborated feedback in open response format). In the terms considered for evaluation purposes in this paper, we provided between two and five test items in closed response format and at most one test item in open response format per week. Students received feedback for items in closed response format immediately after test taking. For items in open response format, students typically received feedback between one day and one week after test taking.

Figure 2. Test item. Example test item in closed response format (1). Furthermore, students may indicate their confidence of having answered correctly (2).

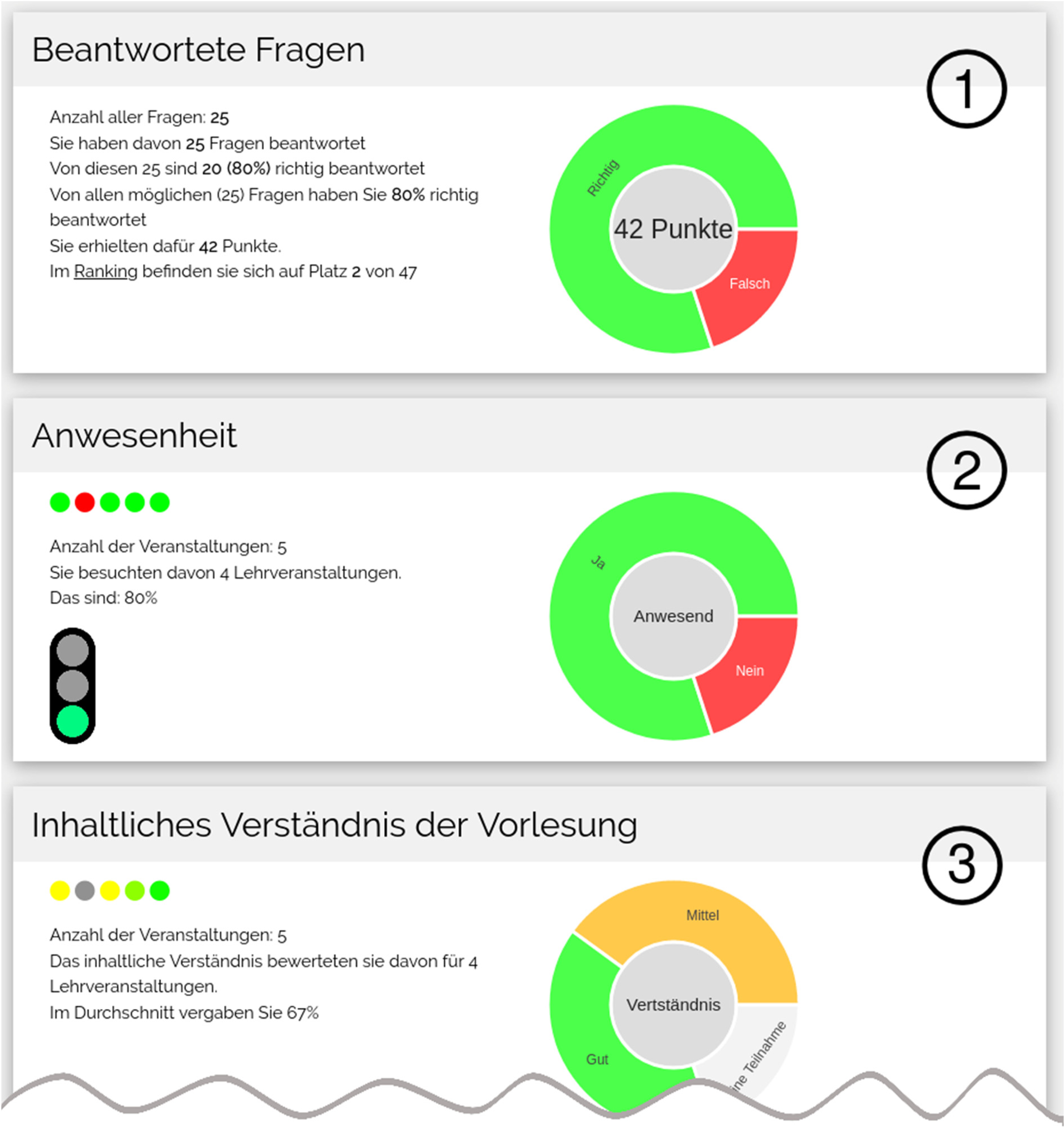

Fourth, students have a summarizing feedback card (Figure 3). On this card, students can access individual statistics on their test performance (e.g., percentage of solved items). In addition, summary information for the current semester on course attendance, comprehension of course content, etc. is provided. The summarizing feedback card is cumulative as it recapitulates data across all weeks within one term. Finally, gamification elements such as an anonymous course ranking (not depicted) that allows for a social comparison with other participating students or a bonus card that doubles the maximum score for a correct response were included.

Figure 3. Feedback card. Students have access to a variety of feedback on performance and behavior. For example, concerning performance feedback, students see the number of test points reached, the number of items answered, etc. (1). Furthermore, students receive feedback on course participation (2), self-evaluated comprehension of the lectures (3), etc.

Empirical Analyses

Design and Participants

The PsyCoach webtool was used as an additional learning device for students in the field of educational science who were attending an introductory course in psychology at the University of Bamberg, Germany. Within the current report, we present findings and data on the use of the webtool in the winter term 2019/2020 (face-to-face teaching), the winter term 2020/2021 (distance teaching) and the summer term 2021 (distance teaching). In the summer term 2020, the webtool was not used. At the beginning of the lecture in each term, the webtool was introduced. Students decided for themselves whether they wanted to use the tool as a learning aid or not. In total, 128 students (n = 51 in winter term 2019/2020; n = 54 in winter term 2020/2021; and n = 23 in summer term 2021) used the webtool and answered at least one test item.

In addition, students within the same course were asked to participate in a survey study (see Supplementary Material for further information). Participation was voluntary. In total, questionnaire data from 145 students (76.6% female, 23.4% male, 0.0% diverse) was available. It should be noted that participation in the survey study was not a prerequisite for using the webtool and vice versa. However, both were designed in such a way that their data could be linked to each other. Consequently, for the final analyses, the following data were available (total dataset: N = 223): 95 students (42.6%) who did not use the webtool but for whom questionnaire data is available; 78 students (35.0%) who used the webtool, but for whom questionnaire data is not available; and 50 students (22.4%) who used the webtool and for whom questionnaire data is available.
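The sample composition described above can be cross-checked with a few lines of arithmetic. The following is a minimal sketch in Python that uses only the counts reported in the text; the variable and function names are illustrative and not part of the original analysis.

```python
# Counts reported in the text (Design and Participants).
survey_only = 95    # questionnaire data available, webtool not used
webtool_only = 78   # webtool used, no questionnaire data
both = 50           # webtool used and questionnaire data available

total = survey_only + webtool_only + both
webtool_users = webtool_only + both
survey_respondents = survey_only + both


def share(count, base):
    """Return count as a percentage of base, rounded to one decimal."""
    return round(100 * count / base, 1)


shares_of_total = {
    "survey_only": share(survey_only, total),
    "webtool_only": share(webtool_only, total),
    "both": share(both, total),
}
# Share of survey respondents who also used the webtool.
users_among_respondents = share(both, survey_respondents)

print(total, webtool_users, survey_respondents)
print(shares_of_total, users_among_respondents)
```

The three groups add up to the reported total dataset, and the two marginal sums reproduce the 128 webtool users and 145 survey respondents mentioned in the text.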

Instruments

Self-regulated learning/Metacognition: Students’ self-regulated learning was assessed with four scales: time management, metacognitive planning, monitoring and control, as well as regulation. The applied items were adapted from the LIST scale (Boerner et al., 2005; Wild & Schiefele, 1994) (see Supplementary Material).

Follow-up of the lecture: Students were asked whether (0 = no, 1 = yes) they intended to postprocess the course content accompanying the lecture, e.g., by looking up incomprehensible content or writing short summaries.

Further variables: Students were asked to indicate their gender (male, female, diverse). Furthermore, the average grade of students’ final school exams (Abitur) was asked (from 1 = grades between 1.0 and 1.5; up to 6 = grades between 3.6 and 4.0; higher grades reflect lower average school performance).

Webtool usage: First, the number of test items each individual student attempted within one term was recorded. As a different number of test items was provided in different terms (winter term 2019/2020: 41; winter term 2020/2021: 57; summer term 2021: 61), for each student the number of attempted test items was divided by the number of provided test items in each term (test item response rate). This indicator has a theoretical range between 0 and 100%. Second, the number of correct responses to test items was recorded. Again, as students could reach a different maximum test score in different terms, for each student the reached test score was divided by the maximum test score (percentage of test score reached).
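The two usage indicators can be illustrated with a short sketch. The item counts per term are taken from the text; the function names, the example student, and the `max_score` parameter are assumptions made for illustration, since the maximum test scores per term are not reported here.

```python
# Number of test items provided per term, as reported in the text.
ITEMS_PROVIDED = {
    "winter 2019/2020": 41,
    "winter 2020/2021": 57,
    "summer 2021": 61,
}


def response_rate(items_attempted, term):
    """Test item response rate in percent for one student in one term."""
    provided = ITEMS_PROVIDED[term]
    if not 0 <= items_attempted <= provided:
        raise ValueError("attempted items must lie between 0 and the number provided")
    return 100 * items_attempted / provided


def score_percentage(score, max_score):
    """Percentage of the maximum test score reached (max_score is an input)."""
    if max_score <= 0:
        raise ValueError("max_score must be positive")
    return 100 * score / max_score


# Hypothetical student who attempted 20 items, evaluated for each term.
rates = {term: round(response_rate(20, term), 1) for term in ITEMS_PROVIDED}
print(rates)
```

Dividing by the term-specific number of items is what makes students from different terms comparable: the same 20 attempted items correspond to a noticeably higher response rate in the term with 41 items than in the term with 61 items.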

Procedure

We analyzed students’ webtool usage based on two decisions: On the one hand, students decided to use the webtool (coded with 1) or not (coded with 0). On the other hand, students who decided to use the webtool showed different amounts of webtool usage (test item response rate, percentage of test score reached). Depending on the type of variables, phi coefficients, point-biserial correlations or Pearson correlations were estimated. In order to control for different modes of teaching, linear regression models that include an additional dummy variable (face-to-face teaching/winter term 2019/2020 = 0; distance teaching/winter term 2020/2021 and summer term 2021 = 1) were estimated. Significance of the reported correlation and regression coefficients was tested by applying a bootstrapping procedure (BCa method).
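The relation between the three coefficients named above can be sketched in code: the point-biserial correlation and the phi coefficient are the Pearson correlation applied to one or two 0/1-coded variables. The sketch below also shows a simple percentile bootstrap interval. Note that this is a deliberately simpler stand-in and not the BCa method used in the analyses; all function names and the example data are hypothetical.

```python
import math
import random


def pearson(x, y):
    """Pearson correlation; with 0/1 data it equals the point-biserial
    (one binary variable) or the phi coefficient (two binary variables)."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sxx = sum((a - mean_x) ** 2 for a in x)
    syy = sum((b - mean_y) ** 2 for b in y)
    if sxx == 0 or syy == 0:
        raise ValueError("correlation is undefined for a constant variable")
    return sxy / math.sqrt(sxx * syy)


def percentile_bootstrap_ci(x, y, n_resamples=2000, alpha=0.05, seed=1):
    """Percentile bootstrap interval for the correlation (simplification, not BCa)."""
    pearson(x, y)  # fail early if the full sample has a constant variable
    rng = random.Random(seed)
    n = len(x)
    estimates = []
    while len(estimates) < n_resamples:
        idx = [rng.randrange(n) for _ in range(n)]
        try:
            estimates.append(pearson([x[i] for i in idx], [y[i] for i in idx]))
        except ValueError:
            continue  # resample contained a constant variable; draw again
    estimates.sort()
    lower_idx = int(n_resamples * alpha / 2)
    upper_idx = n_resamples - 1 - lower_idx
    return estimates[lower_idx], estimates[upper_idx]


# Hypothetical 0/1 data: webtool use and intended course postprocessing.
used_tool = [1, 1, 1, 1, 0, 0, 0, 0]
intends_postprocessing = [1, 1, 1, 0, 1, 0, 0, 0]
phi = pearson(used_tool, intends_postprocessing)
ci = percentile_bootstrap_ci(used_tool, intends_postprocessing)
print(phi, ci)
```

A coefficient would be judged significant in this simplified scheme if the interval excludes zero. The BCa method additionally corrects the interval for bias and skewness of the bootstrap distribution, which matters with small samples such as those reported here.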

Results

Webtool Usage – Descriptive Data

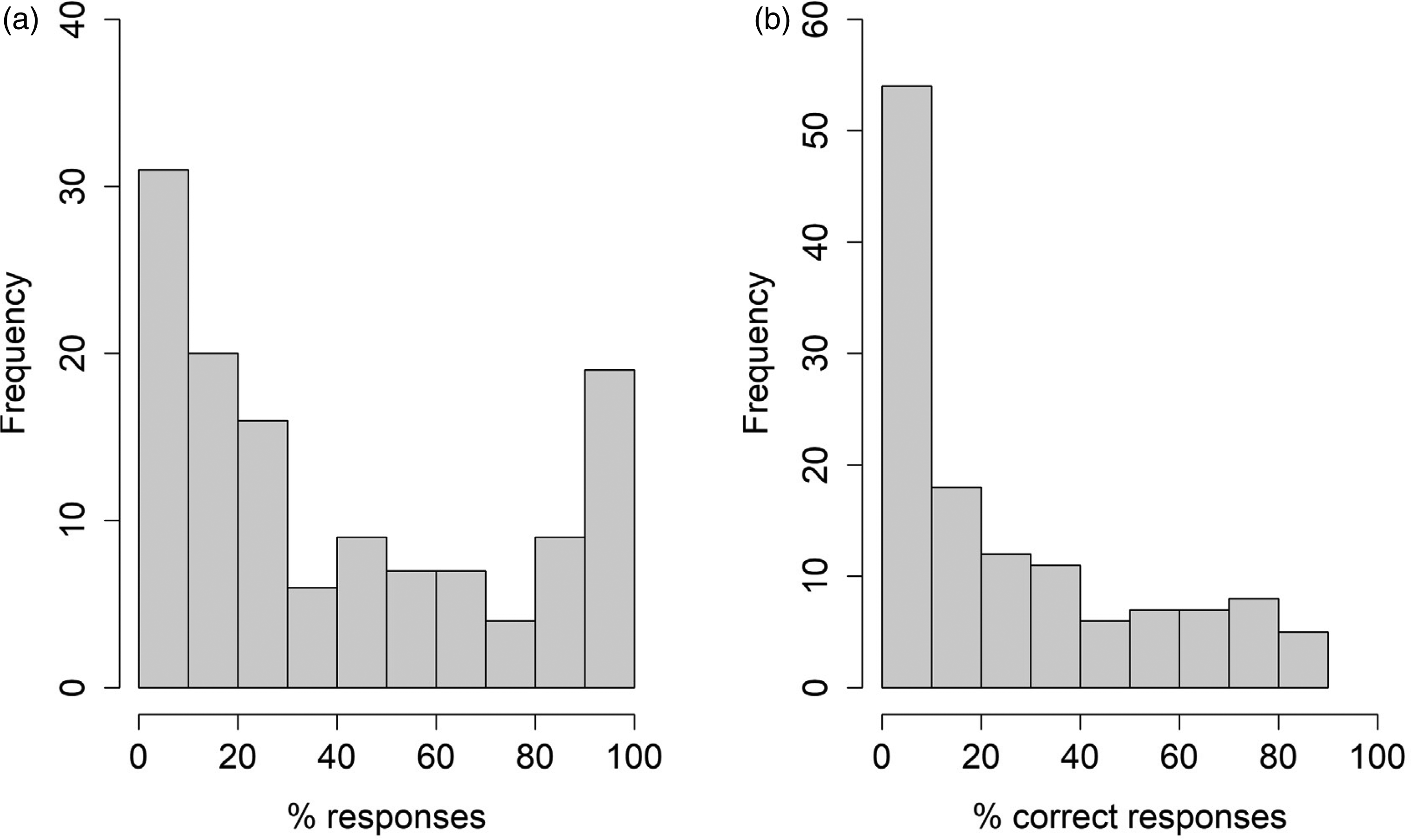

On average, each student responded to 21.4 test items. Divided by the number of test items in each term, this equals an average response rate of 40.9% of all provided test items. A closer inspection of the distribution reveals a positive skewness (v = 0.57), with 25 percent of all students responding to just 10.5% or less of all provided test items (see Figure 4(a)). Furthermore, on average, students received a test score of 19.5 points. Divided by the maximum test score a student could have received in each term, this equals a rate of 26.1% of the maximum possible test score (see Figure 4(b)).
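To make the two distribution statistics concrete, the following sketch computes a skewness value and a first quartile for a small set of response rates. The text reports the skewness without naming the estimator, so the moment-based formula used here is an assumption, and the data are hypothetical rather than the study data.

```python
import statistics


def moment_skewness(values):
    """Moment-based skewness g1 = m3 / m2**1.5 (positive = long right tail)."""
    n = len(values)
    mean = sum(values) / n
    m2 = sum((v - mean) ** 2 for v in values) / n
    m3 = sum((v - mean) ** 3 for v in values) / n
    if m2 == 0:
        raise ValueError("skewness is undefined for constant data")
    return m3 / m2 ** 1.5


# Hypothetical response rates (%) for ten students, not the study data.
rates = [5, 8, 10, 12, 20, 35, 40, 55, 80, 100]
first_quartile = statistics.quantiles(rates, n=4)[0]
print(round(moment_skewness(rates), 2), first_quartile)
```

A positive value indicates that most students cluster at low response rates while a smaller group responds to most or all items, which is the pattern described for Figure 4(a).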

Figure 4. Descriptive statistics on webtool usage (n = 128 students). (a) Test item response rate (%). (b) Test score reached (%).

Questionnaire Data and Webtool Usage

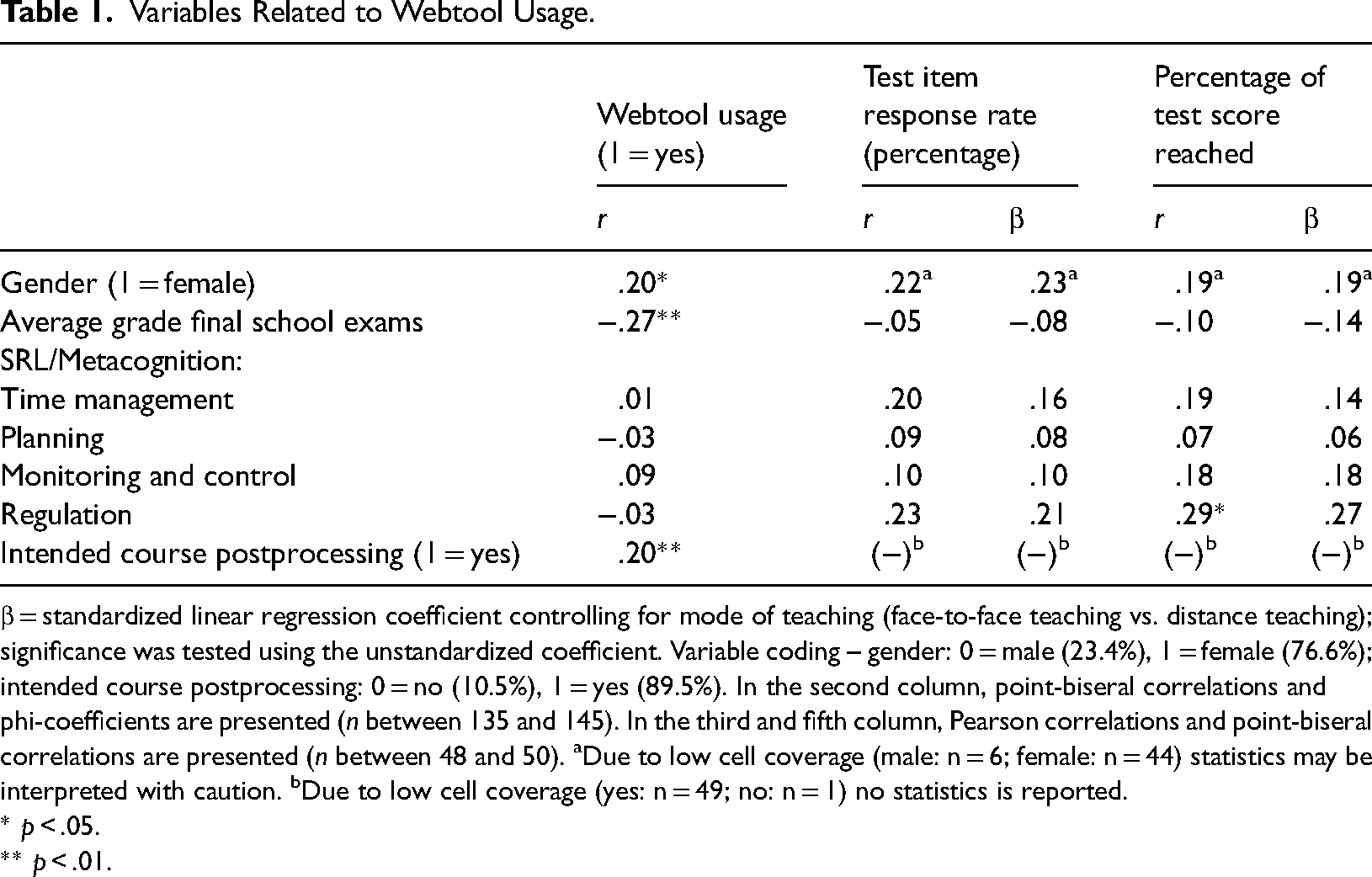

In total, of the 145 students for whom questionnaire data is available, 50 students (34.5%) decided to use the webtool and 95 students (65.5%) did not. Point-biserial correlations and phi coefficients concerning the decision to use or not to use the webtool are depicted in the second column of Table 1. Female students and students with better school grades (= lower final school exam grades) decided more often to use the webtool. Furthermore, students who at the beginning of each term intended to regularly postprocess the course content used the webtool more often.

Table 1. Variables Related to Webtool Usage.

β = standardized linear regression coefficient controlling for mode of teaching (face-to-face teaching vs. distance teaching); significance was tested using the unstandardized coefficient. Variable coding – gender: 0 = male (23.4%), 1 = female (76.6%); intended course postprocessing: 0 = no (10.5%), 1 = yes (89.5%). In the second column, point-biserial correlations and phi coefficients are presented (n between 135 and 145). In the third and fifth column, Pearson correlations and point-biserial correlations are presented (n between 48 and 50). (a) Due to low cell coverage (male: n = 6; female: n = 44), statistics should be interpreted with caution. (b) Due to low cell coverage (yes: n = 49; no: n = 1), no statistic is reported.

* p < .05.

** p < .01.

In the third column of Table 1, the relative number of test items responded to (test item response rate) by students who decided to use the webtool is related to students’ gender, school exam grades and self-regulated learning. Despite some descriptive correlations of .2 and above (e.g., for students’ regulation), no significant relations were found. In the fifth column, correlations with the relative test score reached are depicted. The findings show a positive correlation of the reached test score with students’ metacognitive regulation. However, when controlling for the mode of teaching, the relation to metacognitive regulation slightly decreased and no longer reached significance. Further analyses with separate correlations for winter term 2019/2020 in comparison to winter term 2020/2021 and summer term 2021 can be found in the Supplementary Material.
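How a correlation can shrink once a control variable enters the model can be sketched with the closed-form expression for a standardized regression coefficient with two predictors, β = (r_yx − r_yc · r_xc) / (1 − r_xc²), where c is the control (here, the teaching-mode dummy). The code below is an illustrative sketch with hypothetical data and function names; it is not the authors' analysis script.

```python
import math


def pearson(x, y):
    """Pearson correlation of two equally long numeric sequences."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sxx = sum((a - mean_x) ** 2 for a in x)
    syy = sum((b - mean_y) ** 2 for b in y)
    if sxx == 0 or syy == 0:
        raise ValueError("correlation is undefined for a constant variable")
    return sxy / math.sqrt(sxx * syy)


def standardized_beta(y, x, control):
    """Standardized coefficient of x in a regression of y on x and control."""
    r_yx = pearson(y, x)
    r_yc = pearson(y, control)
    r_xc = pearson(x, control)
    if math.isclose(abs(r_xc), 1.0):
        raise ValueError("x and control are collinear")
    return (r_yx - r_yc * r_xc) / (1 - r_xc ** 2)


# Hypothetical data: regulation score, test score reached (%), teaching mode.
regulation = [2.0, 2.5, 3.0, 3.5, 3.0, 3.5, 4.0, 4.5]
score_pct = [10, 20, 15, 30, 35, 40, 45, 60]
distance = [0, 0, 0, 0, 1, 1, 1, 1]  # 0 = face-to-face, 1 = distance teaching

r = pearson(score_pct, regulation)
beta = standardized_beta(score_pct, regulation, distance)
print(round(r, 2), round(beta, 2))
```

When the predictor and the dummy are uncorrelated, β equals the zero-order correlation; the more the predictor differs between teaching modes, the more the two values can diverge.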

Formative Evaluation

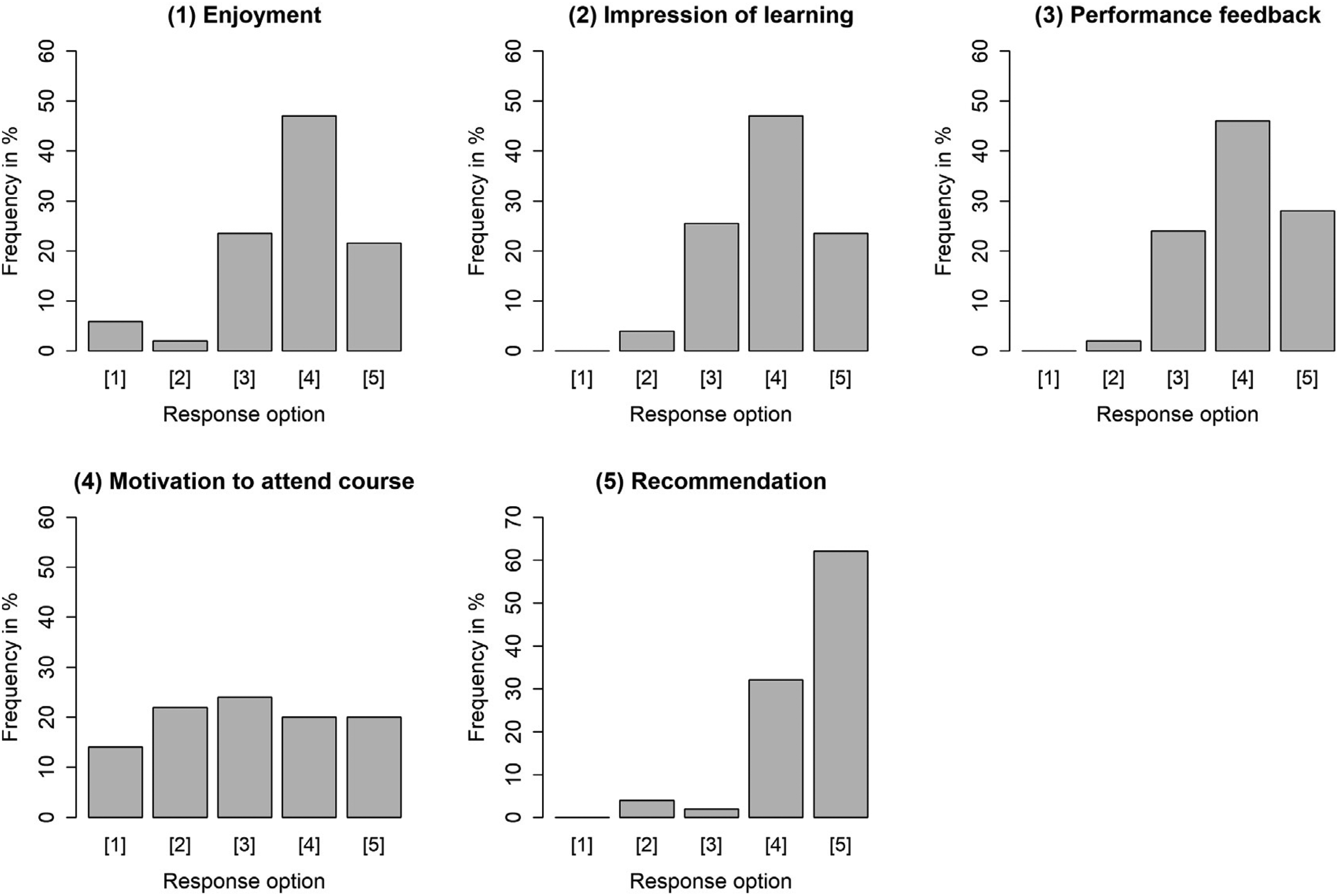

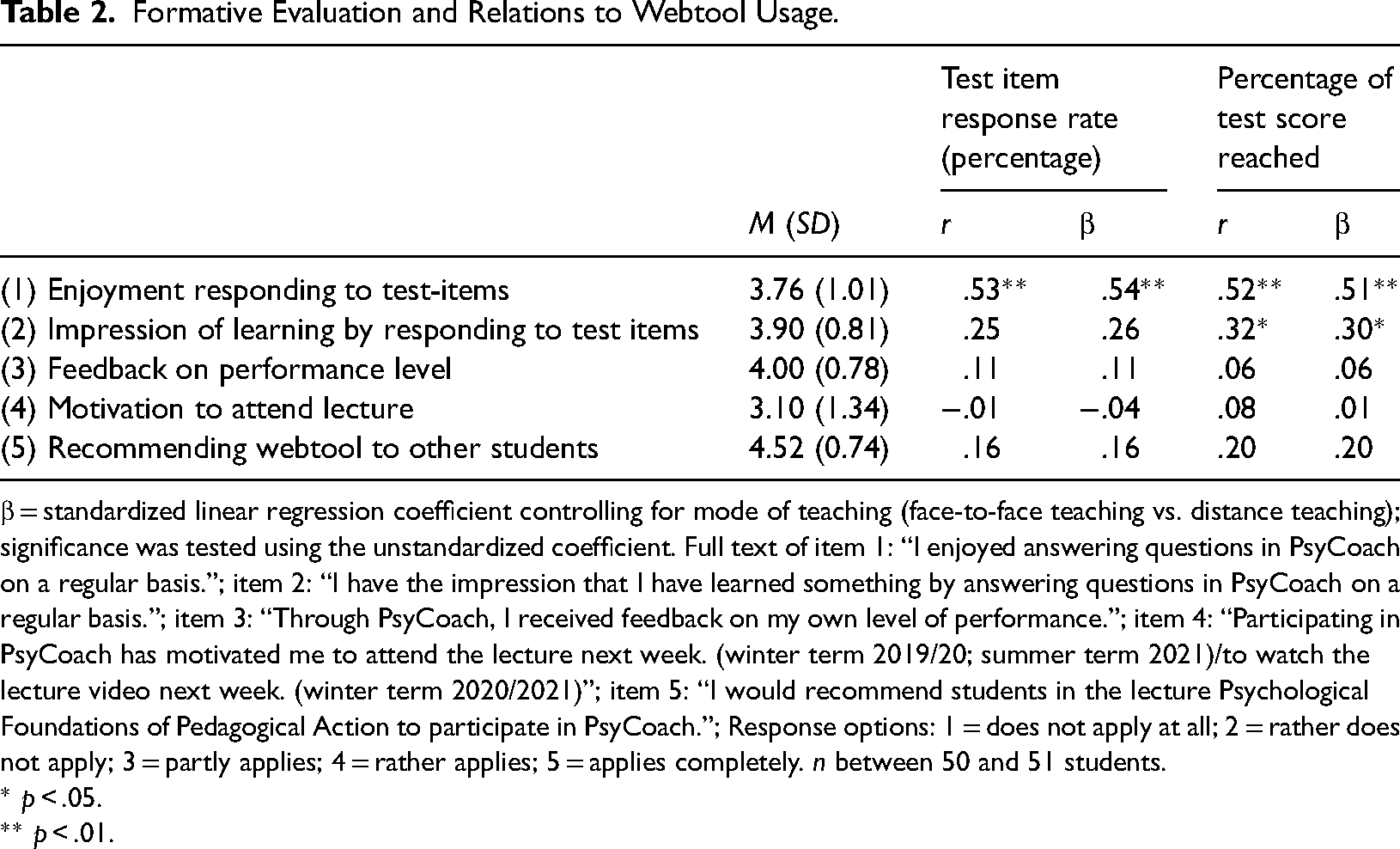

In the second half of each term, students using the webtool were asked to provide evaluative feedback on the webtool (see Table 2, including item statements). In general, the students evaluated the webtool quite positively (Figure 5). Most students agreed or at least partly agreed with statements expressing enjoyment in regularly responding to test items (M = 3.76), the impression of having learned something by responding to test items (M = 3.90), and receiving feedback on performance level (M = 4.00). Furthermore, a large majority of the students (94.0%) rather or completely agreed (response option 4 or 5) with the statement recommending the webtool to other students attending the same lecture (M = 4.52). However, the statement expressing that the webtool motivated students to attend the lecture next week or to watch the lecture video next week yielded more mixed results (M = 3.10): 36% of the students did not or rather did not agree with the statement and 24% partially agreed, whereas 40% of the students rather or completely agreed with this statement.

Figure 5. Results of formative evaluation. Response options were: 1 = does not apply at all; 2 = rather does not apply; 3 = partly applies; 4 = rather applies; 5 = applies completely. n between 50 and 51 students.

Table 2. Formative Evaluation and Relations to Webtool Usage.

β = standardized linear regression coefficient controlling for mode of teaching (face-to-face teaching vs. distance teaching); significance was tested using the unstandardized coefficient. Full text of item 1: “I enjoyed answering questions in PsyCoach on a regular basis.”; item 2: “I have the impression that I have learned something by answering questions in PsyCoach on a regular basis.”; item 3: “Through PsyCoach, I received feedback on my own level of performance.”; item 4: “Participating in PsyCoach has motivated me to attend the lecture next week. (winter term 2019/20; summer term 2021)/to watch the lecture video next week. (winter term 2020/2021)”; item 5: “I would recommend students in the lecture Psychological Foundations of Pedagogical Action to participate in PsyCoach.”; Response options: 1 = does not apply at all; 2 = rather does not apply; 3 = partly applies; 4 = rather applies; 5 = applies completely. n between 50 and 51 students.

* p < .05. ** p < .01.

Finally, evaluation statements were related to students’ webtool usage: Results show that students who indicated higher levels of enjoyment in regularly responding to test items used the webtool more often and reached a higher test score. Furthermore, higher test scores were reached by students indicating a higher impression of having learned something by regularly responding to test items.

Discussion

We developed a webtool that students may use to better monitor their learning over the course of one semester. Within this study, only about one third of the students who attended the course and for whom questionnaire data is available decided to use the webtool. This number might be affected by bias due to non-response in the survey distributed at the beginning of each term. Still, it illustrates the well-known difficulties in reaching students within open learning environments (Aleven et al., 2003; Clarebout et al., 2008, 2010; Jansen et al., 2020). Therefore, we further explored variables that relate to students’ usage of such a webtool. First, female students tended to use the webtool more often than male students. Weis et al. (2013), for example, have shown that girls tend to better regulate their behavior, which might explain this finding. However, Basol and Balgalmis (2016) did not report gender differences for voluntary test taking behavior. Therefore, a replication of this finding is warranted. Second, webtool usage was related to better school grades. As school grades have been shown to be related to variables such as general cognitive abilities or personality traits such as conscientiousness (Furnham & Monsen, 2009; Hofer et al., 2012), these third variables may explain the observed relation. Furthermore, final school exam grades may be treated as good indicators for university students’ self-regulation and control, which are of primary importance for study success (Galla et al., 2019). Third, in terms of the relation between self-regulation and webtool usage, we observe ambiguous results: students who indicated no intention to postprocess the course content were significantly underrepresented in webtool usage. However, neither self-reported time management nor the applied metacognition scales (planning, monitoring and control, regulation) were related to webtool usage. On the one hand, this finding might be attributable to insufficient statistical power due to the small sample size, a problem that also applies to the other reported results. On the other hand, self-regulated learning and metacognition were assessed in relation to the course in general and not in relation to webtool usage. Therefore, the measures might be too unspecific in the context of this study. Finally, it might be possible that students do not see, at least in advance, that this webtool might support their learning. Therefore, webtool usage might not be affected by students’ strategic reasoning on their learning.

Among students who used the tool, three variables were related to usage characteristics: First, higher self-reported metacognitive regulation aligned with more correct responses on test items. However, this relation was not found after controlling for the mode of teaching, restricting the interpretability of this correlation. Second, correct responses were correlated with the subjective impression of having learned something by regular tool use. Third, enjoyment of webtool usage was strongly related to frequency of webtool usage and number of correct responses. Due to the correlational nature of our results, caution is required in interpretation. Still, our results might provide a first hint at the importance of intrinsic motivation, as compared with extrinsic motivation, for learning within open learning environments. Interestingly, and contrary to the enjoyment facet, perceiving the tool as important due to its feedback function was not related to webtool usage. In addition to the possible explanation of low statistical power, this finding might also be interpreted to mean that webtool usage is not the object of personal reasoning on optimal learning. Finally, high rates of agreement with this item also restrict the possibility of finding strong relations to other variables such as webtool usage.

Formative evaluation findings showed that students who used the webtool provided quite positive feedback: in general, students enjoyed using the tool regularly and had the impression of receiving feedback on their performance level. However, there was no clear tendency in terms of whether students felt additionally motivated by the tool to attend the next lecture.

Limitations

In this report, we presented data on webtool usage within an authentic setting, and therefore data of high ecological validity. However, this approach comes with specific limitations. First, the response rate on the survey questionnaire was low, especially during distance teaching due to COVID-19. Reduced statistical power was the consequence. Furthermore, such small sample sizes carry the risk of being affected by random effects, and non-response bias cannot be ruled out. Second, a code system was used to relate survey data to webtool data. However, this procedure might not be error free due to poor handwriting or participants generating different codes. Third, the study design does not allow a causal interpretation of the findings. Experimental data on effects is a desideratum for future research. Finally, we do not have specific data on the reasons why non-users of the webtool decided not to use the tool. For example, non-users might hold quite negative beliefs about the usefulness of such a tool or believe in general that no further support is needed beyond the standard materials such as course slides.

Conclusion and Practical Implications

In order to support students’ regulation of their learning, a webtool that allows access to feedback on learning behavior and performance was developed. However, when implementing the tool, we observed that many students did not use the webtool. Therefore, it remains a challenge to reach as many students as possible. Obligating students to use learning tools may result in higher frequencies of tool use, but has also been shown to be related to a lower quality of tool use (Clarebout et al., 2010). Based on the idea that enjoyment and intrinsic motivation may be important for tool use, identifying variables that promote intrinsic motivation, such as social relatedness within a group of learners, may provide further starting points for future developments.

Supplemental Material

Supplemental material, sj-docx-1-plj-10.1177_14757257221122267 for Self-Regulated Learning, Learner Characteristics and Relations to Webtool Usage in Higher Education by Maximilian Pfost, Peter Kuntner, Simone A. Goppert and Vanessa Hübner in Psychology Learning & Teaching

Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.

Supplemental Material

Supplemental material for this article is available online.
