Abstract

In a clinical psychology training context, there is a need to examine students’ theoretical knowledge as well as their professional competence. One promising method to assess students’ professional competence is the Objective and Structured Clinical Examination (OSCE). In this report, we describe and discuss the implementation of the OSCE on a clinical psychology programme at a university in Sweden, including lessons learned regarding the structure and content of this examination. We also report preliminary results, in which we explored students’ perceived competence and worries, and their supervisors’ reports regarding their clinical practicum, in relation to a new curriculum that includes more simulation-based elements (including the OSCE) than the old curriculum. Results showed that students on the new curriculum reported lower levels of perceived competence before the clinical practicum, but their perceived competence increased significantly more over time than that of students on the old curriculum. These results are discussed in relation to the potential role of the OSCE in clinical psychology students’ development of professional competence. Due to methodological limitations, these results should be interpreted with caution and viewed as exploratory. All in all, this report can be viewed as a guideline for implementation of the OSCE on similar programmes in psychology.

Introduction

In university programmes that result in a professional degree (e.g., clinical psychology programmes), there is a need to enable and examine students’ theoretical knowledge as well as their professional competence. Competence is defined as “a measurable pattern of knowledge, skill, abilities, behaviours, and other characteristics that an individual needs to perform work roles or occupational functions successfully” (Rodriguez et al., 2002, p. 310). The professional competence of clinical psychologists includes a combination of knowledge, skills, and abilities necessary to perform their occupational tasks. Higher education aims to prepare students for these tasks, and an important challenge for educators is the teaching and examination of professional competence and practical skills. Probably the most challenging part of examining professional competence is to ensure high ecological validity, so that the examinations reflect a natural clinical setting and hinge on relevant competence (von Treuer & Reynolds, 2017). Hence, it is important to ensure that the examinations tap into competencies that are of relevance for professional contexts outside of the university. Besides examining whether the students have reached the learning objectives for a specific course, examinations of professional competence can prepare students for clinical practicums (i.e., clinical internships during and after education) and highlight potential gaps in knowledge that students need to focus on in their future education and professional development.

Examination of students’ professional competence is often done using simulation in some form. Simulation, in this case, refers to techniques that enable experiences of relevant real-world situations in a lab or another context (Gaba, 2004). Many different methods fall under the term simulation, but they all include an interactive aspect. Examples of techniques include computer-based simulation, virtual reality, and role-plays. Simulation has the purpose of enhancing students’ educational experiences and offering opportunities for learning and examination that are authentic to students’ future profession.

One well-known, valid, and reliable method for examining clinical and practical competence is the OSCE (e.g., Kaslow et al., 2009; Newble, 2004; Sheen et al., 2015). The OSCE consists of multiple stations that students rotate among on a given schedule. The time spent on each station differs somewhat but is normally between five and 20 min (Yap et al., 2021). Every station examines specific professional competencies, and together, they examine students on a wide range of competencies. Every station is set up as a clinically relevant situation, in which the student interacts with a simulated client (played by an actor). The OSCE is designed to be standardized across students by using the same stations, grading instructions, and scripts for actors. While students rotate among the stations, they are observed and assessed by an examiner at each station. This person observes the students’ interactions with the client, and the students’ competencies are assessed using a standardized scoring sheet with predetermined marking criteria (Harden et al., 2016). Hence, the OSCE allows the student to be assessed on several competencies by multiple examiners, which is argued to be one of its strengths (Yap et al., 2021). The OSCE is widely used as a method to assess professional competence in medical programmes but has also been used in a few studies in psychology education programmes, especially in Australia (e.g., Sheen et al., 2015, 2021; Yap et al., 2012, 2021; Zahid et al., 2011). One recent study described in detail the use of this examination on four different programmes within psychology (Yap et al., 2021), but still very little is published about this examination within the area of psychology, especially in relation to students’ clinical practicum.

Two existing studies provide results of the potential effects of the use of OSCE within the area of psychology. Zahid et al. (2011) used two groups of Australian students on a medical programme on a course in psychiatry. They compared students who were on an old curriculum that did not include the OSCE as a practical examination with students on a new curriculum in which OSCE had been implemented. Students who had done the OSCE differed from the other students. Specifically, they did better on tests that examined professional competence and professional development but did worse on tests that examined theoretical knowledge. One limitation with this study is that the authors did not use the same outcome measure in the comparison, and it will be important to replicate their results. A second recent study, also conducted in Australia, tested whether simulation-based education could impact students’ perceived competencies and showed that such elements increase students’ confidence in applying their learning to real-world settings (Sheen et al., 2021). These findings suggest that clinical examinations might promote students’ professional competence, but it is still unknown whether these elements might help students perform better on their clinical practicum.

Worth noting is that examinations and evaluations of professional skills, such as the OSCE, can be challenging. First, they often demand more resources than examinations of theoretical skills, as students’ performances need to be evaluated individually by several examiners throughout the examination. In addition, costs for actors (both preparation time and time spent on the actual examination) need to be added. The examination can also be challenging for students. Indeed, research has shown that students are often anxious and insecure about their performances on the OSCE (Bagri et al., 2009; Brannick, 2013; Sheen et al., 2015). However, although such examinations might provoke anxiety, students also report positive aspects of the OSCE and that it enables learning and application of theoretical knowledge (Bagri et al., 2009; Sharma et al., 2013; Sheen et al., 2015; Yap et al., 2012). It is possible, then, that although anxiety-provoking and resource-demanding, the OSCE and other simulation-based elements might help to promote students’ professional competence and might make them feel more prepared for their clinical practicum. Below we describe the clinical psychology programme at Örebro University in Sweden. As part of the new curriculum, we use the OSCE as an assessment of students’ professional competence, and in this report, we present details on our implementation of this simulation-based element.

A majority of the overarching learning objectives for the clinical psychology programme are formulated in terms of practical skills and clinical application of theoretical knowledge. The original curriculum at Örebro University was approaching 15 years of age, and societal as well as scientific developments affecting the Swedish psychology profession demanded revisions. Moreover, with some exceptions, most of the examinations in the old curriculum focussed on assessing students’ theoretical expertise. Hence, to update the programme to better fit the needs of future clinical psychologists and to close the gap between the overall learning objectives and the content of the programme, a new curriculum was implemented in the Fall of 2017.

The new curriculum is built on three large blocks (see Appendix A for details of topics covered in the programme). The first block includes courses that deal with psychology in general, on an individual, group, and societal level. Courses in the second block relate to psychology as a science (e.g., methods and statistics, theory of science). The third block deals with the clinical profession, and includes courses in communication, self-understanding, as well as professional ethics and law. All five years of the programme include courses from these three blocks. In the first two years of the programme, students learn about normative psychological processes, with the addition of courses in psychopathology in the second year. Courses in the third year focus on professional competencies as a clinical psychologist and include a 15-week clinical practicum. The last two years of the programme concentrate on elaboration and progression of earlier aspects and implementation of the knowledge. Worth noting is that clinical competencies are well assessed towards the end of the programme at the University's own clinic, where students in the last two years of the programme meet with real clients.

As part of the new curriculum, several aspects of simulation were implemented (e.g., the OSCE, role-play, video-recordings, and demonstrations of clinical skills) to promote students’ professional competence. Some of these elements were already present in the old curriculum, but the focus on and the extent to which they are used are emphasized in the new curriculum. Overall, the main differences between the old and the new curriculums concern the integration of practical and professional skills training as well as examination of these skills throughout the programme. While the students in the old curriculum, indeed, developed professional skills, these skills were seldom systematically examined and evaluated.

The Present Report

In this report, we present details and lessons learned from our implementation of the OSCE in our clinical psychology programme at Örebro University in Sweden. We also share data that we have collected to explore the difference between students on the new curriculum (from now on referred to as “new program students”) and students on the old curriculum (from now on referred to as “old program students”) on their perceived competence and worries, as well as their supervisors’ assessment during their clinical practicum. The results from these data should be viewed as preliminary, with some clear limitations (e.g., the two curriculums differ in ways we could not control for, so any difference in outcome might result from differences other than the presence of the OSCE). Therefore, this report should primarily be viewed as a guideline for implementation of the OSCE on similar programmes in psychology.

Method

Participants and Procedures

All students were enrolled in the clinical psychology programme at Örebro University in Sweden (N = 91). The implementation of the OSCE included only students on the new curriculum. However, we also collected data from the last cohort of students on the old curriculum. The preliminary results presented in the Results section were based on 30 students from the old curriculum and 61 students from the new curriculum. The “new program students” were drawn from two cohorts, including students who started the programme in Spring 2018 (n = 25) and in Fall 2018 (n = 36). The “old program students” started their education in Spring 2017. The training of students on the different curriculums overlapped in time, but the students were never enrolled in courses together or mixed throughout their programmes. Hence, although they belonged to the same education programme, they were in separate course groups and did not have any formal collaborations during their training.

Before collecting data for the preliminary results, the national authority for ethical issues in research approved the project (number: 2020-01121), and research ethics guidelines were followed when collecting and handling data. Active consent was a requirement for participation, and students were informed about the purpose of the project, that participation was voluntary, and that they could withdraw whenever they wished. Students were recruited at regular lectures and were given the opportunity to fill out the questionnaire on these occasions. Most students chose to respond to the questionnaires (91% for the old and 95% for the new curriculum, respectively). Most of the students were women (65%), with a somewhat more even gender distribution among “new program students” (61% women) than among “old program students” (79% women).

In addition to student reports, the supervisors at the sites also responded to questions about the students’ performances during the clinical practicum. Most students had one supervisor at their clinical practicum who reported on their performance, and at most sites, there was only one student. However, at a few sites, there was more than one student, and some students had more than one supervisor. All supervisors reported on each student they supervised. The supervisors were aware of which curriculum their students were in, but they were not informed about our goal to explore differences between the curriculums. Hence, they were not blind to the condition, as they knew whether the student had participated in the OSCE or not, but they were not told to compare students on different curriculums. The questions they answered were formulated in a neutral way to capture students’ performance and were not specific to the different curriculums.

Measures

Both “old program students” and “new program students” answered questions about their perceived competence and worries before and after the clinical practicum, which they complete during Semester 6 (i.e., the semester after they perform the OSCE). The first time point (T1) took place about two weeks before the clinical practicum, and the students answered the following questions: “In the current situation, how well do you feel that you master the following skills?” and “If you were asked by your supervisor to perform the following skills in the first week of your practice, how worried would you be about performing these?” The second time point (T2) took place two weeks after the clinical practicum, and the students answered the following questions: “If you think back on the clinical practicum as a whole, how well did you master the following skills?” and “If you think back on the practicum as a whole, how worried were you when you performed the following skills?” Following these questions, students were given a list of the 38 criteria used to assess their performance on the clinical practicum (see Appendix C for all criteria). The students answered on a scale from 1 (Not at all well / Not at all worried) to 7 (Very well / Very worried). The questions created two overall variables that measured perceived competence and worries. Cronbach's alphas at T1 were .95 and .96, and at T2 they were .96 and .96, for perceived competence and worries, respectively.
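To illustrate how an internal-consistency coefficient of this kind is obtained, the following is a minimal sketch of the standard Cronbach's alpha formula applied to item-level ratings. It is not the authors' analysis script: the function names and the small data set (four students, three items) are hypothetical, whereas the actual scales comprised 38 items rated from 1 to 7.

```python
from statistics import pvariance


def cronbach_alpha(responses):
    """Cronbach's alpha for a list of rows (one row of item ratings per student)."""
    k = len(responses[0])  # number of items in the scale
    # Variance of each item across students
    item_vars = [pvariance([row[i] for row in responses]) for i in range(k)]
    # Variance of the students' summed scores
    total_var = pvariance([sum(row) for row in responses])
    return (k / (k - 1)) * (1 - sum(item_vars) / total_var)


def scale_score(row):
    """Overall scale score as the mean of a student's item ratings (an assumption)."""
    return sum(row) / len(row)


# Hypothetical ratings on a 1-7 scale: 4 students x 3 items
demo = [
    [5, 6, 5],
    [3, 3, 4],
    [6, 7, 6],
    [4, 4, 5],
]
print(f"alpha = {cronbach_alpha(demo):.2f}")
print([round(scale_score(row), 2) for row in demo])
```

The same variance estimator is used for the items and for the summed scores, so the choice between population and sample variance does not change the coefficient.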

These criteria have been used for a long period of time in our clinical psychology programme to assess students’ knowledge and skills in the clinical practicum and were considered appropriate criteria for evaluating students’ perceived competence in relevant areas. We examined correlations between our scales of perceived competence and worries and two established measures of perceived self-efficacy, the Counsellor activity self-efficacy scales (CASES, Lent et al., 2003) and the General self-efficacy scale (GSE, Löve et al., 2012). Our measure of perceived competence related to the clinical practicum correlated positively and significantly with both CASES (r = .62, p < .001) and GSE (r = .42, p < .001). Our scale of worries regarding the clinical practicum also correlated significantly with these scales, but negatively (rs = −.36 and −.41, ps < .001). Hence, these results indicate that our measure of perceived competence taps into a similar concept as self-efficacy, which can be defined as a person's perceived ability to perform a certain task (Bandura, 1977).

Statistical Analyses

We used independent-samples t-tests to compare means on students’ perceived competence and worries, and supervisors’ assessments at the start and the end of the clinical practicum. Additionally, we used a General Linear Model (GLM), which enables comparisons of change over time between two or more groups. In our case, we compared “new program students” with “old program students” on their perceived competence and worries related to their clinical practicum. The changes in perceived competence and worries were measured using students’ reports before and after the practicum (see above). Finally, we examined the correlation between performance on the OSCE and students’ clinical competence (i.e., students’ perceived competence and worries, and supervisors’ assessments). This was done among the “new program students” only, as the “old program students” had not performed the OSCE.
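As an illustration of what a comparison of change over time between two groups involves, the sketch below uses the fact that, with two groups and two time points, the group-by-time interaction corresponds to an independent-samples t-test on change scores (after minus before). This is a simplified stand-in under stated assumptions, not the GLM the report was based on; the function names and all ratings are hypothetical.

```python
from math import sqrt
from statistics import mean, variance


def change_scores(before, after):
    """Per-student change: rating after the practicum minus rating before."""
    return [a - b for b, a in zip(before, after)]


def pooled_t(x, y):
    """Student's t statistic and degrees of freedom for two independent samples."""
    nx, ny = len(x), len(y)
    df = nx + ny - 2
    # Pooled variance, assuming equal variances in the two groups
    pooled_var = ((nx - 1) * variance(x) + (ny - 1) * variance(y)) / df
    t = (mean(x) - mean(y)) / sqrt(pooled_var * (1 / nx + 1 / ny))
    return t, df


# Hypothetical perceived-competence ratings (1-7 scale), four students per group
new_change = change_scores([4.8, 5.0, 5.3, 5.1], [5.6, 5.9, 5.8, 6.0])
old_change = change_scores([5.5, 5.7, 5.6, 5.4], [5.7, 5.8, 5.9, 5.5])

t, df = pooled_t(new_change, old_change)
print(f"t({df}) = {t:.2f}")  # positive t: larger increase in the first group
```

A positive statistic here means the first group changed more than the second; it says nothing about why, which is the point the limitations of the design make.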

Results

Below, we present the implementation of the OSCE in our clinical psychology programme at Örebro University in Sweden. Thereafter, we present preliminary results from questionnaires administered to students and supervisors. Importantly, the results of the collected data should be interpreted with caution. The statistical analyses do not inform about causality, as the design lacks necessary control of confounding variables. Hence, the results from these data presented in this report are exploratory.

Implementation of the OSCE

The main goal of implementing the OSCE was to provide an opportunity to practise skills with formative feedback and to examine professional competence, as a complement to examinations of theoretical knowledge. The OSCE makes it possible to ensure that students have achieved the goals in terms of skills and abilities and offers students an opportunity for important formative feedback on areas that they might need to develop further. This was considered important to increase the chance for the students to successfully complete their clinical practicum as well as other tasks on the programme that deal with professional skills.

The structure of the OSCE was adopted from another university in Sweden where it had been used at the psychology unit. When pilot testing the OSCE for the first time in Fall 2019, students were evaluated on 12 stations in total. Each station was 10 min long, with a two-minute break in between. The examination was located at the university and followed a common structure for the OSCE as seen in earlier studies (see Yap et al., 2021 for details about implementation of the OSCE in clinical psychology programmes). However, due to the COVID-19 pandemic, major changes had to be made to the structure of the OSCE. Changes included limiting the number of stations to five in total and conducting the examination digitally. Unrelated to the pandemic, it was also decided to increase the duration of the student-actor interaction on each station from 10 to 13 min. This decision was based on experiences from the pilot test, where it became clear that 10 min was not enough for students to perform all the tasks needed in relation to each skill. Thirteen minutes was chosen because it was the longest station duration that still allowed all students to be examined in one day. The time between stations was increased from two to four minutes to allow more time to deal with potential technical difficulties that might arise because of the digital format.

The final structure of the OSCE, then, included five stations covering specific professional skills that were developed by teachers/examiners. Each station had one examiner and one actor, and students rotated among these five stations during the examination. Each examiner created a Zoom-room for the examination that became the base for both the examiner and the actor during the examination. The main instructor of the course had an overarching role in the organization of the OSCE but was not involved in examining students. The examiners were all teachers at the unit as well as clinical psychologists. Trained actors played the role of a client. To prepare for the role, the actor received a semi-specified script and met with the examiner before the examination. The actor was instructed to play the part as similarly as possible with every student while at the same time adapting to specific responses from the students. Hence, the simulation (i.e., the interaction with the actor) followed a semi-structured script, with minor adaptations.

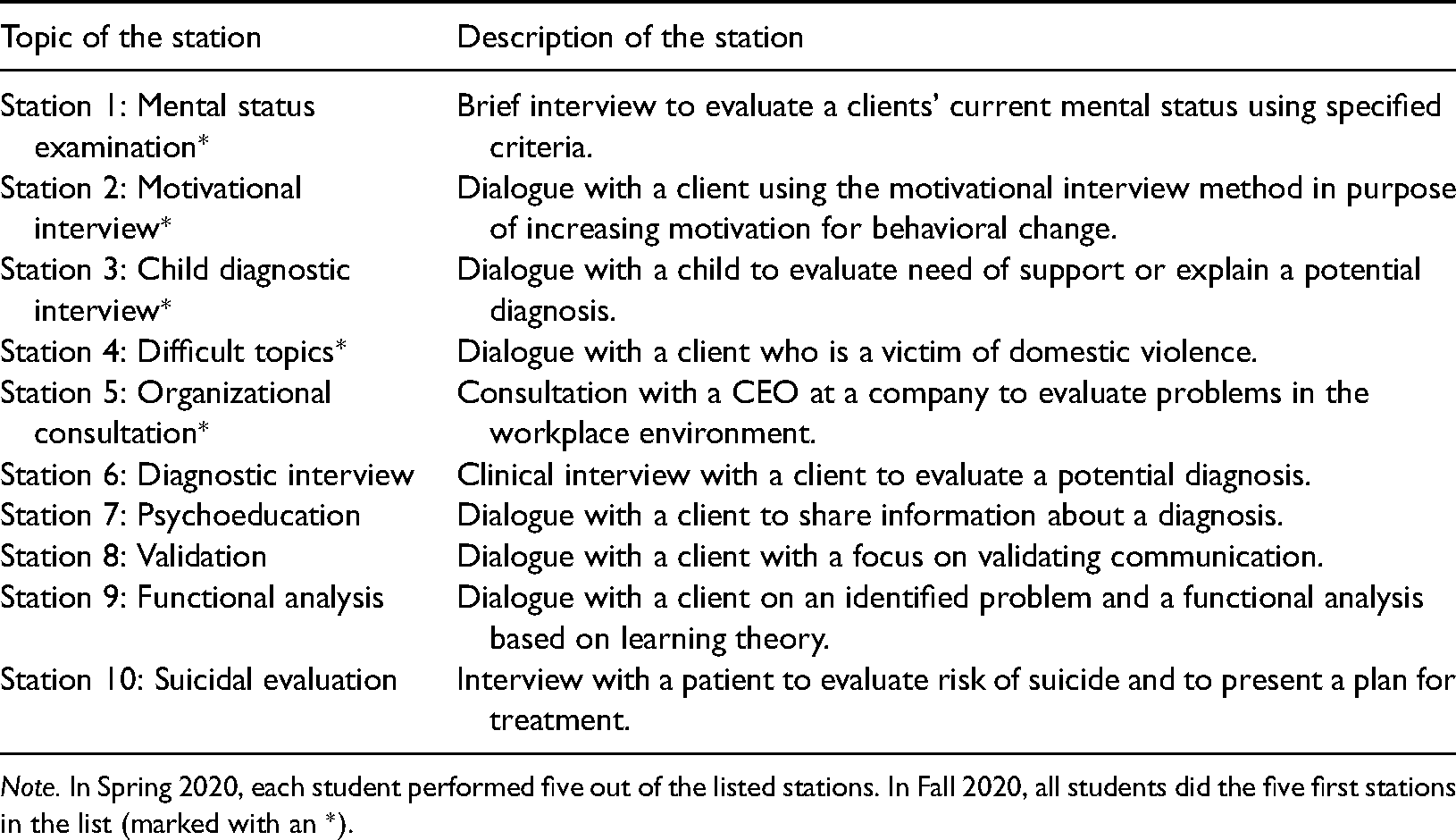

Before entering a station, the student read a case, describing the client they were to meet in the station and the task that they were instructed to perform. After reading this information, the student entered the Zoom-room and interacted with the client. During the interaction, the examiner turned off his/her camera and evaluated the students’ performance using a scoring sheet that matched the learning objectives for the station. After 13 min of interaction and continuous evaluation of skills, the examiner turned on the camera which ended the interaction. Then, the student left the room and went on to the next Zoom-room on their schedule. This was repeated until all students were evaluated on all five stations. All in all, the examination took approximately two hours to complete for each student. See Appendix B for a detailed example of a station and Table 1 for a description of the stations that were used in Spring and Fall 2020.

Table 1. Description of the stations in the OSCE.

Note. In Spring 2020, each student performed five of the listed stations. In Fall 2020, all students did the first five stations in the list (marked with an *).

With regards to scoring on the OSCE, the format used was the same as has been used by others working with OSCE (Harden et al., 2016). Students could get a maximum of 10 points on each station, which formed a general score on the OSCE examination. In addition, a global rating was used that consisted of four separate categories: Excellent, Clear pass, Borderline pass and Clear fail. A clear fail in a station meant that the student had done something that would have jeopardized patient safety if performed in a real-life setting. To pass the OSCE, students needed 60% or more on the general OSCE score. However, two or more “clear fails” automatically meant that the student failed the OSCE (regardless of their general OSCE score).
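The pass/fail rule described above can be sketched as a small function. This is an illustrative reading of the rule, not the programme's actual grading tool: the function and constant names are hypothetical, and it assumes that the general OSCE score is the sum of station points expressed as a percentage of the maximum (10 points per station).

```python
MAX_POINTS_PER_STATION = 10
PASS_PERCENT = 60            # general score needed to pass
CLEAR_FAIL_LIMIT = 2         # this many "Clear fail" ratings means an automatic fail

GLOBAL_RATINGS = ("Excellent", "Clear pass", "Borderline pass", "Clear fail")


def osce_passed(station_points, global_ratings):
    """Return True if the student passes the OSCE under the assumed rule."""
    if any(r not in GLOBAL_RATINGS for r in global_ratings):
        raise ValueError("unknown global rating")
    # Two or more clear fails override the general score
    if global_ratings.count("Clear fail") >= CLEAR_FAIL_LIMIT:
        return False
    total = sum(station_points)
    maximum = MAX_POINTS_PER_STATION * len(station_points)
    # Compare as integers-times-percent to avoid floating-point edge cases at 60%
    return 100 * total >= PASS_PERCENT * maximum


# Hypothetical student: 34 of 50 points, one clear fail -> passes on this reading
print(osce_passed([8, 7, 9, 4, 6],
                  ["Clear pass", "Clear pass", "Excellent",
                   "Clear fail", "Borderline pass"]))
```

Checking the clear-fail condition first mirrors the text: patient-safety concerns at two or more stations lead to a fail regardless of the points total.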

It is important to note that the OSCE is not a free-standing examination in our programme; it is the final examination of a course called “Professional skills”. This course is part of the fifth semester of the new curriculum. At the beginning of the course, students get a list of skills that could be assessed during the OSCE, and they are instructed to use this list as a guide in their preparations. Hence, the students do not know which stations will be examined until they arrive at the OSCE. Throughout the course, students receive instructions and feedback from their teachers on how to develop competencies in relation to the skills on the list. Examples of skills on the list are psychoeducation, functional analysis, validating communication, mental status examination, evaluation of suicide risk, and assessing the occurrence of domestic violence. The stations on the OSCE are developed from this list with the goal of covering a variety of competencies.

Descriptive Statistics

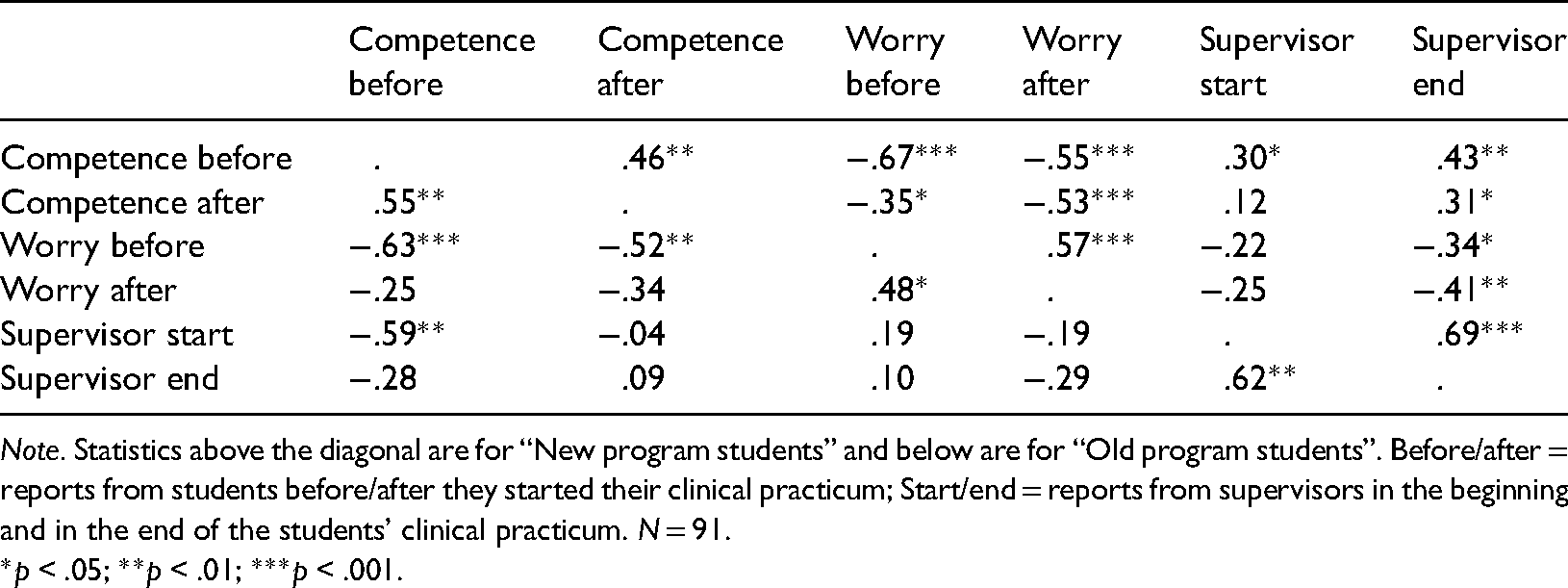

Table 2 shows correlations among the variables for the two student groups separately. As can be seen, all measures showed significant and strong correlations over time. There were positive and significant correlations between “new program students’” reports of their competence and their supervisors’ assessment of their competence over time. Hence, students who perceived themselves as having low competence had supervisors who also reported them having lower levels of competence. For “old program students”, these correlations were, in general, negative. For example, students’ reports on their competence before the practicum were negatively and significantly correlated with their supervisors’ assessment of their competence at the beginning of the practicum (r = −.59). Hence, these results indicate that the more competent these students felt, the lower the level of competence their supervisors reported.

Table 2. Correlations among the variables.

Note. Statistics above the diagonal are for “New program students” and below are for “Old program students”. Before/after = reports from students before/after they started their clinical practicum; Start/end = reports from supervisors in the beginning and in the end of the students’ clinical practicum. N = 91.

*p < .05; **p < .01; ***p < .001.

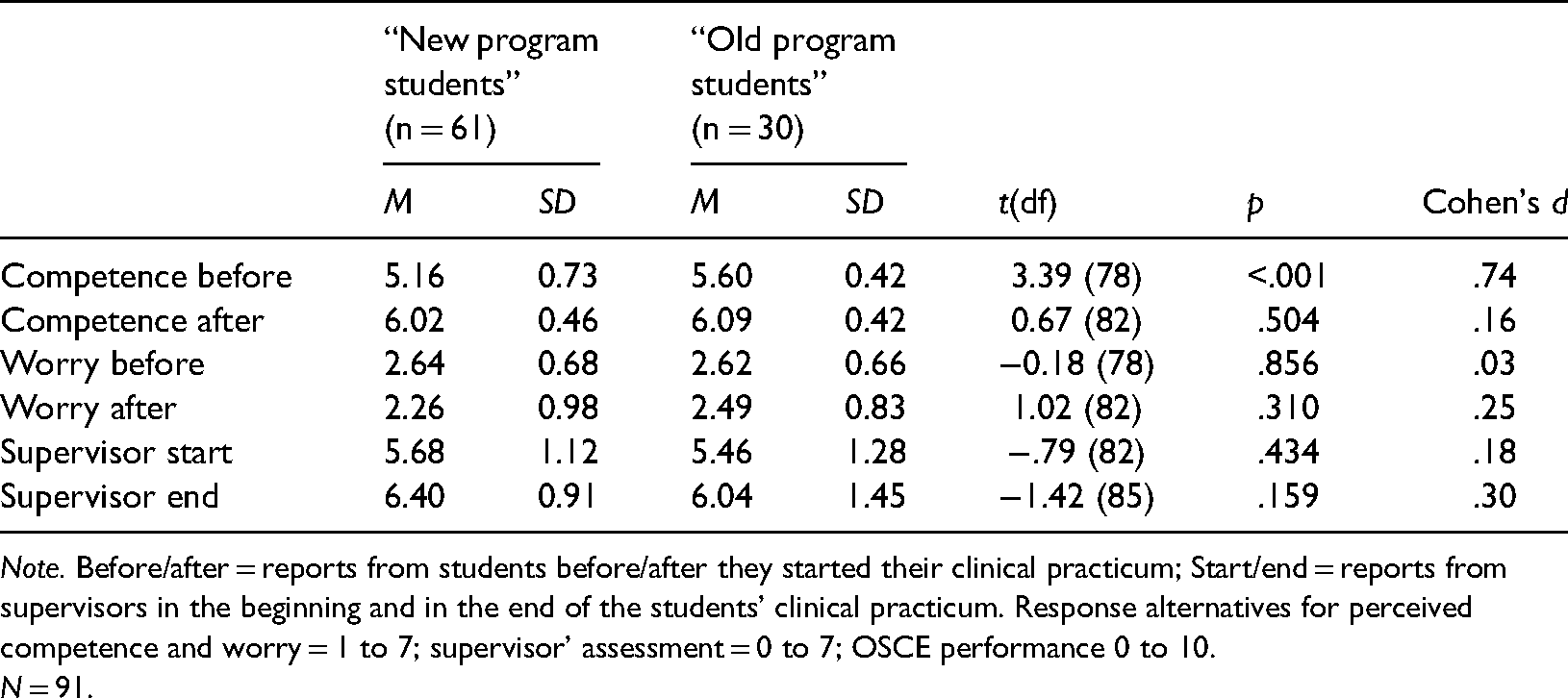

Students’ Competence and Worries Regarding Their Clinical Practicum

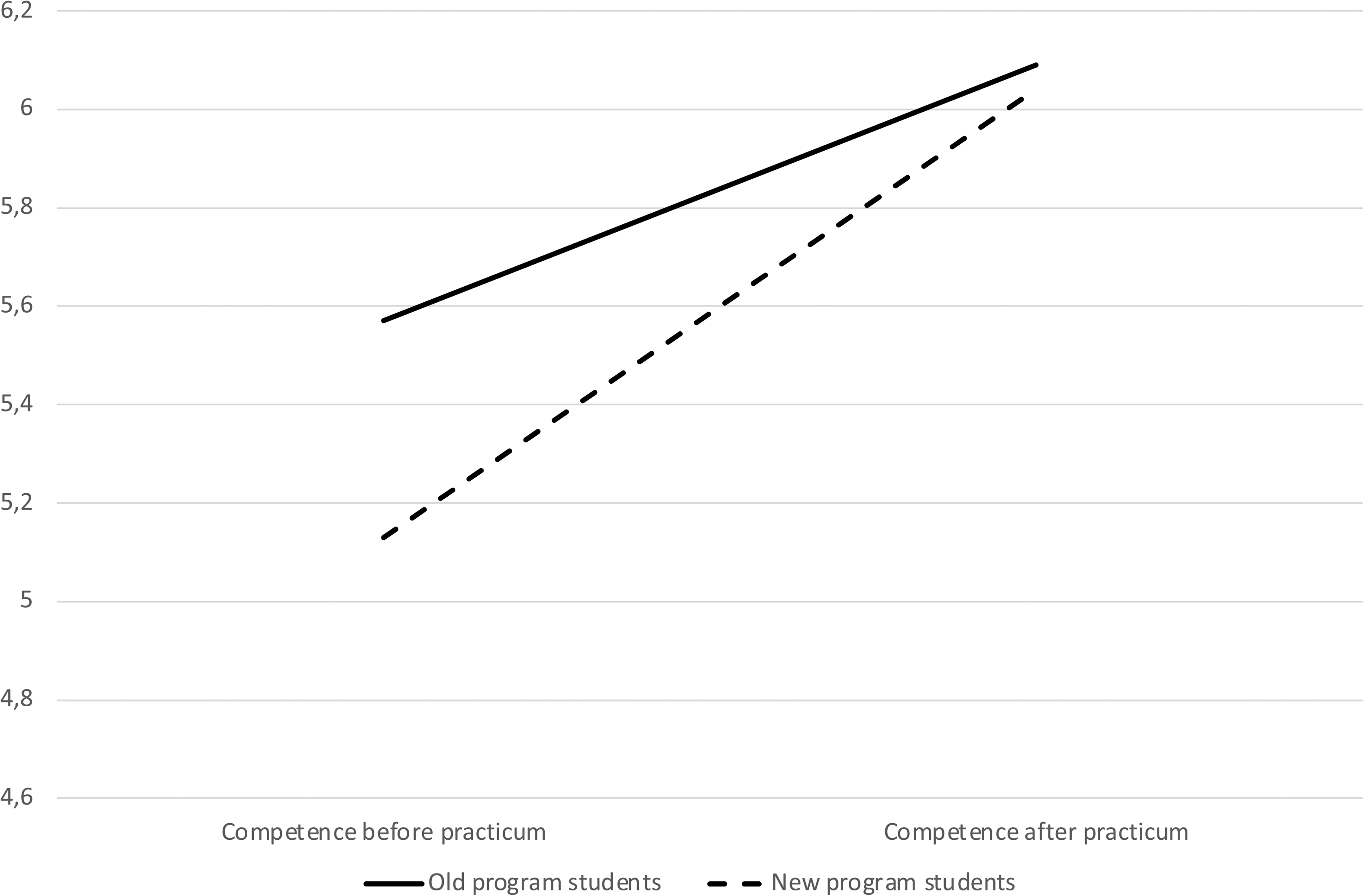

“Old program students” and “new program students” differed significantly before the clinical practicum on their perceived competence (see Table 3 for results). The “old program students” reported significantly higher levels of perceived competence (M = 5.60; SD = 0.42) than the “new program students” (M = 5.16; SD = 0.73), t(78) = 3.39; p < .001. This difference was still significant even after using Bonferroni correction of the p-value (0.05/6 = 0.008), to correct for the multiple t-tests. There were no significant differences between the groups on perceived worry before the practicum, t(82) = −0.41; p = .685. After the clinical practicum, the groups did not differ in perceived competence, t(82) = 0.67; p = .504, or worry, t(82) = 1.02; p = .310.
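The Bonferroni threshold mentioned above is simply the nominal alpha divided by the number of tests. The sketch below reproduces that arithmetic and applies the threshold to the four student-report p-values given in this paragraph. The function names are hypothetical; the first p-value is entered as .001 because the text reports only p < .001, and the count of six tests is taken from the text.

```python
def bonferroni_alpha(alpha, n_tests):
    """Per-test significance threshold under Bonferroni correction."""
    return alpha / n_tests


def significant(p_values, threshold):
    """Map each label to True if its p-value is below the corrected threshold."""
    return {label: p < threshold for label, p in p_values.items()}


threshold = bonferroni_alpha(0.05, 6)  # six t-tests, as stated in the text
print(round(threshold, 3))             # 0.008

# p-values as reported in the paragraph above (first is an upper bound, p < .001)
reported = {
    "competence before": 0.001,
    "worry before": 0.685,
    "competence after": 0.504,
    "worry after": 0.310,
}
print(significant(reported, threshold))
```

Only the pre-practicum difference in perceived competence falls below the corrected threshold, which matches the conclusion stated in the text.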

Table 3. Results from the independent-samples t-tests.

Note. Before/after = reports from students before/after they started their clinical practicum; Start/end = reports from supervisors in the beginning and in the end of the students’ clinical practicum. Response alternatives for perceived competence and worry = 1 to 7; supervisors’ assessment = 0 to 7; OSCE performance = 0 to 10.

N = 91.

Change in students’ perceived competence regarding their clinical practicum.

Association Between Performance on the OSCE and the Clinical Practicum

These correlations included only students on the new curriculum, that is, students who had performed the OSCE. Students’ scores on the OSCE were not significantly correlated with supervisors’ assessment of their competence at the start of the clinical practicum (r = .20, p = .202), but the OSCE scores correlated significantly with supervisors’ assessment at the end of the practicum (r = .47, p < .001). Hence, students who got higher scores on the OSCE had supervisors who reported a higher level of competence at the end of their practicum than did students who received lower scores on the OSCE. Students’ scores on the OSCE did not correlate significantly with students’ reports of their perceived competence (rs = .17 and −.09; ps = .236 and .513, for pre-practicum and post-practicum reports, respectively) or worries regarding the clinical practicum (rs = −.13 and .04; ps = .381 and .795, for pre-practicum and post-practicum reports, respectively). Hence, higher OSCE scores were associated with better supervisor assessments of the students’ competence at the end of the practicum, but not with the students’ own perceived competence.

Discussion

In this report, we describe lessons learned and preliminary results from the implementation of the OSCE on a new curriculum at the clinical psychology programme at Örebro University in Sweden. This examination was part of a new curriculum that included more simulation-based elements. In sum, we conclude that the original OSCE format is generally applicable in a Swedish context with clinical psychology students, with minor changes (e.g., increasing the time for assessment on each station). We also learned that the OSCE can be adapted and conducted in a digital format. Further, preliminary results from our data indicate that students who had done the OSCE felt less competent at the start of the clinical practicum but increased more in their perceived competence over the course of the practicum, in comparison to students who had not done the OSCE. Additionally, students with better performance on the OSCE were assessed by their supervisors as more competent at the end of the clinical practicum. Although interesting, due to methodological limitations, these results should be interpreted with caution and should mainly be viewed as exploratory. In sum, the OSCE is a potentially useful method in a clinical psychology context, but more research with strong designs is needed to examine its effects.

The finding that students in a programme with more simulation-based elements report lower levels of perceived competence than students who have had fewer simulation-based elements is somewhat counterintuitive. To understand this unexpected result, it might be necessary to discuss the purpose of examinations of professional competence in a broader sense. For programmes that result in a professional degree (e.g., clinical psychologist), examinations of professional competence are necessary to evaluate students’ abilities to work clinically (von Treuer & Reynolds, 2017). It is, however, possible that these examinations also highlight abilities that students need to develop more. Theoretically, examinations of professional competence might follow the process described in the Dunning-Kruger effect (Kruger & Dunning, 1999), which suggests that people have difficulties in assessing their own abilities and competence. With low levels of knowledge, a person is often unaware of his or her own incompetence, but with more knowledge comes a realization of one's incompetence and a feeling that one will never manage the task at hand. With further increases in knowledge, people will also increase in perceived competence and become aware of what they know and what they do not know.

Examination is a way to evaluate students’ competence, but it might result in a sense of incompetence as the examination highlights abilities that students have not yet mastered. Hence, it is possible that examinations of professional competence, such as the OSCE, can be positive in the long run, but that the benefits might not be apparent close in time to the examination. This idea might explain the difference in level of change in perceived competence between the two student groups in this report. So, one thing that needs to be discussed is whether the OSCE and other simulation-based examinations and elements help students identify areas in which they need to develop. If so, examinations of professional competence might not just be a way of evaluating students’ knowledge and competence, but also part of a larger learning concept encompassing deliberate practice of psychotherapy skills (e.g., Rousmaniere, 2017). These are speculations based on preliminary data with some clear limitations and should be tested in future studies using a stronger research design.

In this report, we also explored the association between students’ performance on the OSCE and their level of competence at the practicum. Interestingly, the level of achievement on the OSCE did not correlate significantly with any of the student reports of their perceived competence or worries in relation to the practicum. Hence, students might not, themselves, feel more competent as a result of performing well on the OSCE. This might be a result of variations among students in level of self-criticism regarding a discrepancy between actual and desired outcomes and in their level of perfectionism (e.g., Grzegorek et al., 2004; Vanea & Ghizdareanu, 2012), which might explain why highly performing students do not feel more competent than students with lower performance. Achievement on the OSCE did, however, correlate significantly with supervisors’ assessment at the end of the clinical practicum. This speaks to the validity of the OSCE and suggests that it is a good indicator of the professional competence on which students are evaluated at their practicum.

Limitations

This report has some limitations that need to be mentioned. The most obvious is the research design of the data collection. The changes to the new curriculum had the goal of benefitting students’ learning, and the differences between the new programme students and the old programme students can, potentially, be a result of differences in the level of simulation-based elements on the two curriculums. However, it should be noted that these are, indeed, two different curriculums. Although simulation-based elements are one thing that differentiates them, they differ in other respects as well. In our analyses, we were unable to control for other potential differences between the curriculums, and the results should be viewed as exploratory. Future studies should make use of a stronger design to test the effect of simulation-based elements on students’ performance on the clinical practicum.

A second limitation is the potential impact of Covid-19. Most students in our sample have been influenced by Covid-19 either during their performance of the OSCE or in their clinical practicum. “Old program students” did their clinical practicum in Fall 2019 and, thus, were not influenced by the pandemic. The “new program students”, however, were all doing their clinical practicum during the pandemic (Fall 2020 and Spring 2021). Hence, their experiences on the clinical practicum might have been different from those of students who did the practicum before the pandemic. It is, for example, possible that the “new program students” were more worried and anxious about doing their clinical practicum than the “old program students”, partly because of the ongoing pandemic, and that might be one explanation for the difference in level of perceived competence before the start of the practicum.

As a third limitation, we asked students to rate their perceived competence and worries in relation to performing 38 assignments on their clinical practicum. These assignments constitute a list of criteria that is used for evaluating students’ performance on their practicum. Although these items are closely tied to the context, the scale is not validated. This scale, however, correlated in expected ways with other established scales of students’ perceived competence, which made us more confident about the validity of this scale. Additionally, supervisors reported on students’ competence. Although they were not asked to report on the competence level in relation to what curriculum the student was enrolled in, they were not blind to the condition. Hence, it is possible that supervisors’ assessments were influenced by the fact that they knew whether the student had performed the OSCE or not.

A fourth limitation regards the inclusion of other potentially relevant demographic factors. We did not have information on demographic variables (e.g., students’ age and earlier clinical experiences) that might have influenced the relation between OSCE and students’ clinical practicum. Future studies should examine demographic factors to explore whether the benefits of OSCE differ between student groups.

Implications for Implementation of the OSCE

After implementing the OSCE in our clinical psychology programme, we have learned several lessons and made modifications to the format. These are described and discussed below. Worth noting is that these modifications have been implemented because they fitted our context, and they might not be useful in other programmes. The description of these modifications, however, might work as a starting point in developing this type of examination.

First, because of the pandemic, we had to go from an in-person format to a digital one, and we quickly learned that there were both advantages and challenges with the digital format. The most prominent advantage is the possibility for examiners to turn their camera off during the student-actor interactions. This has been reported as positive by both students and examiners because the examiner is hidden and does not disturb the interaction. A challenge that had to be solved when the examination turned digital was how to ensure that everyone (both examiners and students) had clocks that showed the exact same time. The examination follows a minute-by-minute schedule, and it is important that all students enter the right station at the right time. If a student is late for or misses a station, it is not possible to extend the time on that station or redo it. This was solved with a webpage displaying a clock that everyone was asked to follow (https://www.osce-timer.se/). Another change that had to be made in order to fit the digital format was how to provide the students with information about the stations. In an in-person examination, this information would be posted on the door and the student would read it before entering the room, but that was not possible in a digital format. To solve this, we put together all information about all stations in one document, and the students received the document about 15 min before their first station.

The second modification we have made is related to the number of minutes on and between each station. When pilot-testing the stations, examiners provided feedback that students were stressed because of lack of time. Some of them described the stations more as a stress-test than a test of the specific skills that the stations aimed to examine. In addition, it was discussed that it is rare for psychologists to have to prepare and perform their tasks under that kind of time pressure, and it was not considered fair to ask that of our students. Because of this, we extended the number of minutes on each station to 13 min (originally 10 min) and between each station to three minutes (originally two minutes), which was considered enough to reduce the stress for students. One benefit of this change is that it gave the examiners more time to write feedback to the students, something that is very important for students’ future development of the skills.
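To make the timing concrete, the following is a minimal sketch, not the scheduling tool used in our examination, of how a minute-by-minute schedule for one student could be derived from the station and transition times given above. The function names, the start time, and the assumption that a student moves through the stations in one uninterrupted circuit are illustrative assumptions; the number of stations is passed in as a parameter.

```python
from datetime import datetime, timedelta


def circuit_minutes(n_stations, station_min=13, gap_min=3):
    """Total minutes for one circuit: all stations plus the gaps between them."""
    return n_stations * station_min + (n_stations - 1) * gap_min


def station_schedule(start, n_stations, station_min=13, gap_min=3):
    """Return a list of (station_number, start_time, end_time) tuples.

    Assumes (hypothetically) that the student visits the stations
    back to back, with a fixed transition time between stations.
    """
    schedule = []
    current = start
    for station in range(1, n_stations + 1):
        end = current + timedelta(minutes=station_min)
        schedule.append((station, current, end))
        # The next station starts after the transition time.
        current = end + timedelta(minutes=gap_min)
    return schedule


if __name__ == "__main__":
    # Hypothetical start time; 13 min per station and 3 min between stations.
    for number, begins, ends in station_schedule(datetime(2021, 1, 1, 9, 0), 5):
        print(f"Station {number}: {begins:%H:%M}-{ends:%H:%M}")
    print("Circuit length (min):", circuit_minutes(5))
```

A table of this kind, distributed in advance, complements a shared clock: each student can see exactly when each station opens and closes.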

The third, and perhaps most significant, modification that we have made is reducing the number of stations. When we changed to a digital format, we went from 12 to five stations. It was not possible to increase the number of minutes on each station and perform the examination digitally without extending the examination from one to two days, and conducting the examination over two days was not an option with the available resources. We have compared students’ scores on the OSCE depending on the number of stations (12 and five). To do so, we computed a standardized score by dividing the general OSCE score by the number of stations (12 or five). The results show that students’ standardized scores did not differ significantly between the group who did the OSCE with 12 stations and the group who did it with five stations. More research, however, is needed to evaluate the potential impact of the number of stations. Moreover, when making decisions about the format and number of stations, there is a need to also consider the availability of resources in terms of funding and staff. The OSCE is indeed a costly examination (see also Yap et al., 2021), and determining the minimum number of stations needed might be important to increase the possibility for other institutions to implement it.
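The standardization described above amounts to a mean score per station. The sketch below illustrates the arithmetic only; the function name and the total scores are hypothetical and invented for illustration, not data from this report, and the statistical test used for the group comparison is not reproduced here.

```python
from statistics import mean


def standardized_score(total_score, n_stations):
    """General OSCE score divided by the number of stations taken."""
    if n_stations <= 0:
        raise ValueError("n_stations must be a positive integer")
    return total_score / n_stations


# Hypothetical total scores, invented purely to illustrate the calculation.
totals_12_stations = [84.0, 96.0, 90.0]
totals_5_stations = [35.0, 40.0, 37.5]

per_station_12 = [standardized_score(t, 12) for t in totals_12_stations]
per_station_5 = [standardized_score(t, 5) for t in totals_5_stations]

if __name__ == "__main__":
    print("Mean per-station score, 12 stations:", mean(per_station_12))
    print("Mean per-station score, 5 stations:", mean(per_station_5))
```

Dividing by the number of stations puts the two formats on a common scale, so that groups examined with different numbers of stations can be compared directly.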

In addition to the structure of the OSCE itself, a relevant question is when the OSCE should take place within the programme. In our programme, it is located in the middle (the first half of the third year), which works well with our larger programme structure. Before the OSCE, students have gained theoretical knowledge as well as basic professional skills from a role-play format. After the OSCE, students start meeting real clients under supervision (first during their clinical practicum in the third year, and then at the unit's own clinic during the last two years of the programme). The step between role-plays and meeting with real clients is large, and the OSCE provides a suitable step between these two. The OSCE is a way for students to apply the skills they have learnt so far and practice them in a controlled setting before starting to work with real clients. The suitable placing of an OSCE, hence, needs to be determined based on the education curriculum (i.e., in the transition between role-plays and real clients) rather than in a fixed place during the education.

Conclusions and Suggestions for Future Research

This report is one of few focussing on psychology students, and the first to explore simulation-based elements in relation to students’ professional competence. Because this report has some limitations in the design, the results should be seen as exploratory. From our experiences and the preliminary data, there are indications that simulation-based elements can benefit students’ learning, both in their preparation for the clinical practicum (and future clinical work) and in highlighting areas for improvement. It will be important in future studies to examine if and in what way OSCE (and other simulation-based elements) can identify and ameliorate areas of weakness and, in that way, help students prepare for their clinical practicum. Students’ own experiences and reactions to OSCE would be especially helpful in our understanding of the potential effects.

The results of this report also highlight other areas of future research. For example, the agreement between students’ and supervisors’ perceptions of students’ competence was greater among students on the new curriculum than among students on the old curriculum. Why is this and how could one understand this result? It is possible that simulation-based elements make students more “correct” and objective in their estimation of their own competence, as indicated by the positive correlation with supervisors’ assessment. Students on the curriculum with fewer simulation-based elements might not get as much chance to reflect on their own competence and, thus, are less aware of what they know and what they do not know. These are, however, speculations, and the link between simulation-based elements and students’ reflections and perceived level of competence needs to be explored more in depth. All in all, this report gives hands-on suggestions and details regarding the implementation of OSCE as a tool to assess students’ professional competence. More research on the potential effects of the OSCE on students’ learning and development is surely needed.

Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.