Abstract

The study focused on supporting the distinct processes of assessment and providing feedback within a peer feedback setting in teacher education and investigated the effects on student teachers’ self-efficacy and feedback quality in a quasi-experiment. Student teachers (

In order to promote learning in higher education and to ensure instructional quality, we need to implement promising didactic concepts that work especially well for larger groups of learners. In addition, learners in higher education are increasingly asked to regulate their learning processes themselves (DeCorte, 1996). In this context, peer feedback is a promising approach to support learning in higher education (Falchikov & Goldfinch, 2000; Schneider & Preckel, 2017), and it can be used formatively (Black & Wiliam, 1998). Peer-based formative feedback requires peers: (a) to

Formative Assessment and Formative Feedback

Formative assessment is currently discussed as a promising approach to foster learning (Black & Wiliam, 1998; Bürgermeister & Saalbach, 2019), and recently especially in higher education (Bose & Rengel, 2009; Gikandi et al., 2011; Yorke, 2003). It focuses on the individual, ongoing learning process, aims to assess what the learner already knows, and provides information about learning challenges or misconceptions (Black & Wiliam, 2009; Heritage, 2007; Sadler, 1989). The diagnostic information gained in this way can subsequently be used by someone else, for example a teacher, to give feedback to learners (Black, 1993; Black & Wiliam, 2009; Sadler, 1989). Formative assessment and formative feedback are thus closely entangled (Wiliam & Thompson, 2008): formative feedback is necessarily based on a prior assessment of the relevant competences. A large body of empirical research shows positive effects of formative feedback on learners’ motivation (Deci et al., 1999), achievement (Hattie, 2009; Kluger & DeNisi, 1996), and self-efficacy (Asghar, 2010). Self-efficacy, in turn, is considered highly relevant for the development of motivation and, subsequently, for cognitive learning outcomes (Schunk & Ertmer, 2000) and for learners’ self-regulation (Zimmerman, 2000). Research shows a close link between learners’ self-efficacy, self-regulation, and proficiency and suggests supporting these mechanisms systematically in higher education (Jackson, 2002; Sun & Wang, 2020).

Even though feedback is considered one of the most powerful ways to foster learning (Hattie, 2009), it is not beneficial in every case: its effect depends, among other factors, on the way the feedback is given (Hattie et al., 2017; Shute, 2008; Wisniewski et al., 2020).

Effective Formative Feedback

Formative feedback should provide learners with information that enables them to pursue successful learning, that is, to close the gap between their current stage of learning and the learning goal (Heritage, 2007; Sadler, 1989). It is based on assessment activities, which provide the feedback giver with appropriate (formative) information for preparing the feedback (Hattie & Timperley, 2007). According to Hattie and Timperley (2007), beneficial feedback should address three questions, each corresponding to a specific aspect of feedback:

Where am I going? (feed up)

How am I going? (feed back)

Where to next? (feed forward)

Thus, the feedback should focus on the underlying learning goal, on how the learner is currently doing (strengths, weaknesses), and finally on suggestions for the subsequent learning process, in order to help the learner close the gap between the goal and the current stage of learning. In addition, feedback should address the task, the processes underlying the task, or processes of self-regulation/self-monitoring (Hattie & Timperley, 2007). Feedback at the process level is especially beneficial for helping learners detect errors in individual steps of the task-solving process, or even misconceptions, and may lead them to choose different strategies in future learning. In contrast, feedback should not be directed at the person (positive or negative evaluations of the learner, such as “great job” or “good girl”), as such feedback contains too little task-related information to improve the learner’s understanding of the task. The meta-analysis by Wisniewski et al. (2020) shows that feedback is more effective the more information it contains, and that learners benefit especially when it contains information at the task, process, and self-regulation levels as well as on how to continue learning.

Peer Feedback – Potentials and Challenges

In higher education especially, it is useful to complement teacher feedback with feedback from other sources, such as peers (Evans, 2013; Nicol et al., 2014). This can be helpful and even necessary, as teachers are rarely able to give feedback systematically and continuously to individual learners, especially in large courses (Brinko, 1993).

Peer feedback can promote learning in the feedback receiver and has, for example, an impact on academic writing (Huisman et al., 2019). In addition, it supports metacognitive processes, as learners are encouraged to regulate their own learning (Ballantyne et al., 2002); this holds true especially for the feedback receivers. As the feedback givers are explicitly asked to judge the quality of their peers’ work, they deal explicitly with the underlying learning goals, evaluation criteria, and different ways of solving a task or problem, which might lead to a deeper understanding of the learning content and expectations (Andrade, 2010; Sadler, 2010).

Feedback from a peer is perceived as helpful when it contains specific information on how to correct mistakes and continue learning (Strijbos et al., 2010) and when it is fully understood (Kollar & Fischer, 2010). Providing such high-quality feedback is therefore a challenging task, and research shows that students experience this process as rather uncomfortable and difficult (Hanrahan & Isaacs, 2001; Kaufman & Schunn, 2011).

Student teachers therefore need to be prepared and supported when they are asked to systematically evaluate a peer’s work and to provide appropriate, clear feedback (Hanrahan & Isaacs, 2001; Walker, 2015). Studying unsupported feedback, Nückles et al. (2005) found that peers tend to align their feedback with each other over time: they harmonize their feedback and develop a shared style of writing it. As a result, they sometimes reduce their feedback to certain aspects and neglect other important ones.

Supporting Peer Feedback

In general, giving formative feedback requires two distinct competencies: assessing learning (by implementing a method to collect, analyze, and interpret data on students’ learning) and subsequently providing the feedback itself (by using this information and communicating the assessment results in verbal or written feedback) (Herppich et al., 2018). While a large body of literature focuses on (student) teachers’ assessment competencies (Herppich et al., 2018; Karst & Bonefeld, 2020; Südkamp et al., 2012; van Zundert et al., 2010), research on teachers’ competencies to subsequently use this information to provide feedback (captured as feedback quality) is rare, and there is notably little research on how to support these two competencies in student teachers (Rotsaert et al., 2018).

Rubrics to Support Peer Assessment

One approach to help learners in

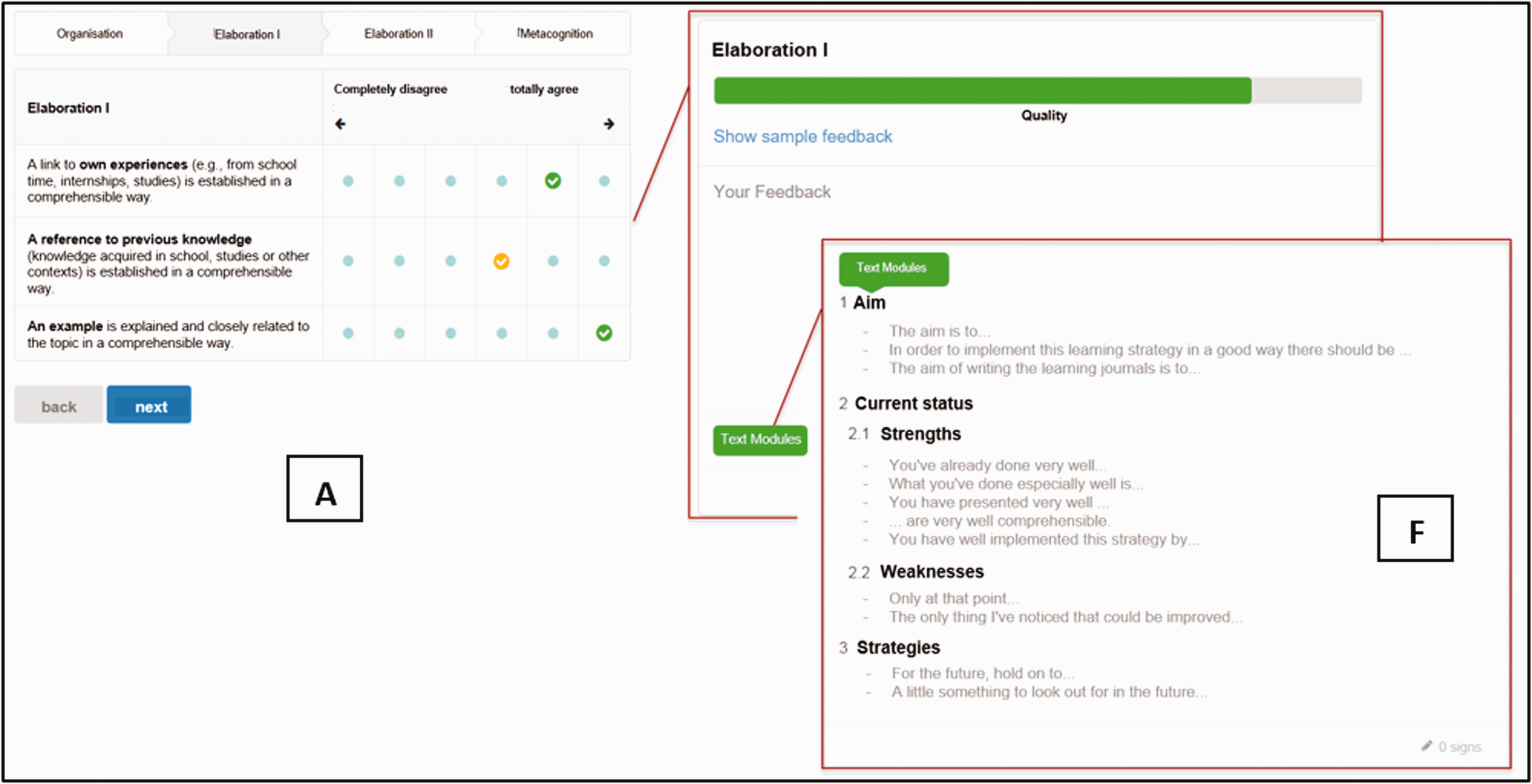

Digital Tool: Showing Assessment Support by Rubrics (A) and Support of Writing the Feedback by Sentence Starters (F).

Procedural Facilitation to Support Providing Peer Feedback

Procedural facilitation refers to instructional support that reduces potentially infinite sets of choices (e.g., in formulating sentences) to limited sets and provides aids to memory. It also structures procedures and thus provides learners with a “plan of action” (Baker et al., 2002) – especially when composing text. It can be implemented by providing sets of questions, sets of prompts, or sets of single text elements. Scardamalia et al. (1984) used a set of sentence starters to facilitate the process of writing. The set was structured according to different processes important to planning a writing task. Thus, their procedural facilitation provided a structure and at the same time concise, concrete aids to formulate ideas. Procedural facilitation has been shown to enhance content and organization of writing products (De La Paz & Graham, 2002; Yeh et al., 2011; Zellermayer et al., 1991).
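To make the idea concrete, the following minimal Python sketch models procedural facilitation for writing feedback: the open-ended writing task is reduced to choosing from a small, fixed menu of sentence starters grouped by the three feedback aspects of Hattie and Timperley (2007), and a helper flags aspects not yet addressed, acting as a memory aid. The starters, the `SENTENCE_STARTERS` menu, and the functions `compose_feedback` and `missing_aspects` are hypothetical illustrations, not the starters or software used in any of the studies discussed here.

```python
# Hypothetical sketch of procedural facilitation for writing peer feedback.
# The starters below are illustrative only; they are NOT taken from any study.

SENTENCE_STARTERS: dict[str, list[str]] = {
    # Where am I going? (feed up)
    "feed_up": [
        "The goal of this learning strategy is to ...",
    ],
    # How am I going? (feed back)
    "feed_back": [
        "You applied this strategy well when you ...",
        "This strategy could be applied more deeply by ...",
    ],
    # Where to next? (feed forward)
    "feed_forward": [
        "Next time, you could try to ...",
    ],
}


def compose_feedback(choices: list[tuple[str, int, str]]) -> str:
    """Build feedback text from (aspect, starter index, completion) triples.

    Only starters from the menu can be used: an unknown aspect raises
    KeyError and an out-of-range index raises IndexError.
    """
    sentences = []
    for aspect, index, completion in choices:
        starter = SENTENCE_STARTERS[aspect][index]
        # Replace the "..." placeholder with the feedback giver's own words.
        sentences.append(starter.replace("...", completion.strip()))
    return " ".join(sentences)


def missing_aspects(choices: list[tuple[str, int, str]]) -> list[str]:
    """Return the feedback aspects (in menu order) that no choice addresses."""
    used = {aspect for aspect, _, _ in choices}
    return [aspect for aspect in SENTENCE_STARTERS if aspect not in used]


if __name__ == "__main__":
    draft = [
        ("feed_up", 0, "link new content to prior knowledge."),
        ("feed_forward", 0, "add an example from your internship."),
    ]
    print(compose_feedback(draft))
    print("Not yet addressed:", missing_aspects(draft))
```

Restricting the choice of starters while leaving the completion free mirrors the trade-off described above: the structure is fixed, but the content remains the feedback giver's own. In the usage example, `missing_aspects` reminds the writer that no strengths or weaknesses ("feed_back") have been addressed yet.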

The few previous studies on procedural facilitation in the context of peer feedback suggest that using prompts to facilitate providing peer feedback substantially improves feedback quality. Gan and Hattie (2014), for example, compared prompted with non-prompted peer feedback on laboratory reports written by New Zealand Year 12 students. The prompts asked students to give written feedback on what their peers did or did not do well and to suggest improvements. Prompted feedback providers gave more feedback on what their peers did or did not do well and made more suggestions for improvement than their non-prompted counterparts (measured as the frequency of statements). In addition, feedback quality was rated higher in the prompted condition than in the non-prompted condition, as students gave more feedback at the task, process, and self-regulation levels.

Gielen and de Wever (2015) used a feedback template in a wiki environment and asked students in educational sciences to provide peer feedback on a written text (a scientific abstract). The degree of structure provided by the template varied. For all students, the template provided a list of evaluation criteria that had to be addressed in the feedback (e.g., intention of research, methodology). In the first condition, students received only this list; in the second condition, they additionally received guiding questions; and in the third condition, they received a template structured according to the principles of feed up, feed back, and feed forward. Gielen and de Wever (2015) found that students in the highly structured setting achieved significantly higher feedback quality scores. The feedback quality score combined ratings for three categories: use of criteria, nature of feedback, and writing style.

Simonsmeier et al. (2020) found positive effects of a structured web-based peer feedback intervention on academic self-concept in a field experiment in higher education. Students who received structured peer feedback support and used predetermined dimensions to give feedback on a peer’s scientific paper rated their self-concept higher than students who did not receive this structure. Discussing these findings, the authors point out the importance of analyzing the effect of peer feedback support on other self-beliefs about academic competences, such as self-efficacy. This is indeed a meaningful extension, as learners appraise their self-efficacy using information from different sources, such as perceived effort, time persisted, and the amount of help received (Schunk, 1995).

As self-efficacy can be promoted by applying a strategy that leads to a greater sense of control and can enhance achievement (Schunk, 1998), we investigate the effect of structured peer feedback support on self-efficacy. As training that gives learners opportunities to practice their skills on their own may facilitate self-efficacy (Schunk, 1995), we examine the effects of our peer feedback support repeatedly over the course of a semester.

Learning Strategies in Teaching Psychology to Student Teachers

In the present study, student teachers were asked to give their peers feedback on their learning-strategy use. Essential learning strategies include cognitive and metacognitive strategies (Bjork et al., 2013; Brod, 2020; Fiorella & Mayer, 2016; Nückles et al., 2020; Weinstein & Mayer, 1986). Cognitive learning strategies include organization and elaboration. Organization strategies aim to identify interrelations and hierarchies within new learning content (e.g., identifying main ideas). Elaboration strategies serve to integrate new learning content with prior knowledge or experiences (e.g., by thinking of an example of a newly learned concept). Important metacognitive learning strategies are planning and monitoring one’s use of cognitive strategies and one’s own comprehension. Comprehension monitoring identifies gaps in one’s understanding so that these gaps can subsequently be filled. Appropriate use of learning strategies has been shown to substantially support content learning (e.g., Glogger et al., 2012; Nückles et al., 2009; cf. Mayer, 2002). Moreover, learners with high self-efficacy use high-level learning strategies, such as elaboration strategies (Wang & Wu, 2008).

In teacher education, fostering learning strategies serves two goals. First, student teachers enhance their own use of learning strategies. Second, student teachers become able to assess learning strategies formatively and to foster other learners’ learning-strategy use (including that of their own future students at school), an important skill for future teachers (Askell-Williams et al., 2012; Brophy, 2000; Kiewra & Gubbels, 1997; Lohse-Bossenz et al., 2013). However, teachers exhibit deficits in assessing and fostering learning strategies (Hamman et al., 2000; cf. Dignath et al., 2008; Dignath-van Ewijk & van der Werf, 2012; Durkin, 1978; Moely et al., 1992). Teacher education programs should therefore provide learning opportunities so that future teachers are able to give beneficial feedback to learners on learning strategies and feel confident in doing so.

Learning journals are one effective way to put the introduced learning strategies into practice and to make their use accessible to formative assessment (Glogger et al., 2009; Glogger et al., 2012; Nückles et al., 2020), as teachers gain insight into students’ learning processes and can provide rich feedback. In learning journals, learners are asked to reflect on the learning content (e.g., the literature of a seminar), guided by cognitive and metacognitive prompts (e.g., “What practical example linked to this theory/approach do you have?”). For example, an elaboration strategy can be applied and thus become visible as follows: “In my internship at school, I observed how children do differ a lot in their ability to regulate their upcoming emotions themselves … [more details follow].”

Aim of the Study and Research Questions

So far, supporting peer feedback processes in teacher education has rarely been studied (Gielen & de Wever, 2015; Sluijsmans et al., 2002), and, to our knowledge, no research has examined supporting assessment and providing feedback separately. Using a quasi-experimental design, we studied how to support the assessment of competencies and the giving of feedback separately (Herppich et al., 2018) within a peer feedback setting in teacher education. Prospective teachers were asked to assess learning strategies in their peers’ learning journals and to give feedback three times in a semester, supported by a digital tool. We focused on effects on students’ self-efficacy regarding assessing learning strategies and giving feedback accordingly, as well as on feedback quality.

Our research questions and hypotheses are as follows:

We assume that supporting the underlying mechanisms and individual steps of assessing and giving feedback appropriately leads to a higher perceived ability to adequately assess and provide peer feedback on the use of learning strategies. Accordingly, we expect an effect of assessment support on self-efficacy (main effect) and an effect of feedback support on self-efficacy (main effect). We expect both main effects because our self-efficacy measure refers to assessing and to providing the feedback in equal parts. We further expect that supporting both underlying processes (assessment and feedback; interaction effect) is more beneficial for self-efficacy than supporting only one process (assessment or feedback) or none at all, especially when this support is provided several times. That is, we expect an effect of assessment and feedback support over time (interaction effect with time point).

2.

We assume that supporting the underlying mechanisms of assessing and/or giving feedback appropriately leads to a higher quality of the peer feedback written in the middle of the semester (main effects). Moreover, we expect the highest feedback quality when both mechanisms, assessing and providing feedback, are supported, compared to supporting only one process (assessment or feedback) or none at all (interaction effect).

Method

Design and Sample

In this quasi-experimental study with a 2 × 2 factorial design, student teachers (

Learning Journals and Learning Strategies

The students were asked to read theoretical and empirical literature in preparation for the seminar sessions and to write and submit seven learning journals throughout the semester. Journal writing was used to help students gain deeper insight into fundamental content of developmental psychology and, in particular, to reflect on these topics and on their own learning process (cf. Nückles et al., 2020). We prompted cognitive and metacognitive learning strategies (Nückles et al., 2009). To prompt students to apply

We provided

Digital Tool and Peer Feedback

In addition, the student teachers were asked to provide

Students in all groups were asked to provide their feedback in a

Support of writing feedback (F) was realized by providing sentence starters, based on the idea of procedural facilitation (Englert et al., 2007; Scardamalia et al., 1984). In line with theory (Hattie & Timperley, 2007), the sentence starters were structured according to the three main aspects that beneficial feedback should address (see right side of Figure 1): (a) the

Students in the control group received the same interface as the other groups for formulating their feedback, but without the menu of sentence starters and without the rubrics (see online Appendix). Like all other students, they received guidelines with basic information on what effective feedback looks like (according to Hattie & Timperley, 2007) and which elements it should contain (addressing the goal, strengths, and weaknesses as well as hints on how to continue learning).

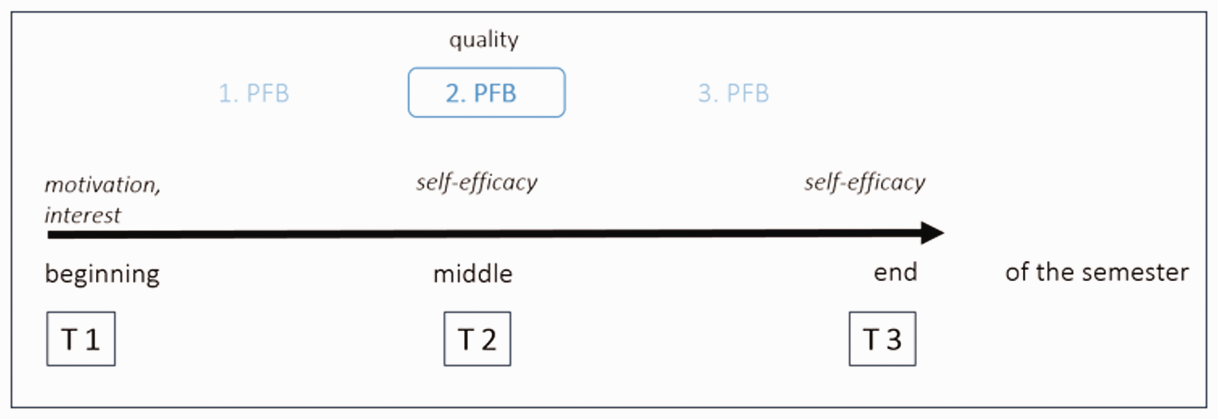

Instruments: Surveys and Coding Scheme to Measure Feedback Quality

Students completed a survey at three measurement points throughout the semester, at the beginning (t1), in the middle (t2), and at the end of the semester (t3), answering questions on different motivational and volitional characteristics (see Figure 2).

Measurement Points and Measures in the Study.

At t1, we measured students’ motivation and interest in the seminar as well as different socio-demographic attributes. After students had received and given the first (t2) and the third peer feedback (t3), we measured their self-efficacy with a questionnaire (4 items; α = .86), constructed following Bandura (2006) and based on and adapted from Glogger-Frey et al. (2015, 2017). As our intervention supports both assessing the implementation of learning strategies and providing the feedback, we used a combined self-efficacy scale referring to both the process of assessing and the process of providing the feedback.
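The internal consistency reported for the self-efficacy scale (α = .86 for four items) is presumably Cronbach's alpha, α = k/(k - 1) · (1 - Σ s²_item / s²_total), where k is the number of items, s²_item the variance of each item, and s²_total the variance of the respondents' total scores. The following Python sketch computes it from raw item scores; the function name `cronbach_alpha` and the toy data in the checks are illustrative assumptions, not the study's data or its SPSS analysis.

```python
from statistics import variance


def cronbach_alpha(items: list[list[float]]) -> float:
    """Cronbach's alpha for k items answered by the same n respondents.

    items[j][i] is respondent i's score on item j (e.g., on a 1-5 scale).
    """
    k = len(items)
    if k < 2:
        raise ValueError("alpha needs at least two items")
    n = len(items[0])
    if n < 2 or any(len(item) != n for item in items):
        raise ValueError("every item needs the same n >= 2 responses")
    # Sum of the item variances (sample variances, n - 1 in the denominator).
    item_var_sum = sum(variance(item) for item in items)
    # Variance of each respondent's total (sum) score across all items.
    totals = [sum(item[i] for item in items) for i in range(n)]
    return k / (k - 1) * (1 - item_var_sum / variance(totals))
```

Four identical items yield α = 1, whereas two items that only partly agree (for example, scores [1, 2, 3, 4] and [2, 1, 4, 3]) yield α = .75.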

The self-efficacy items were answered on a 5-point scale ranging from “do not agree at all” (1) to “totally agree” (5). In addition, we used a theory-based coding scheme based on Hattie and Timperley (2007) to capture the quality of the second written peer feedback. We focused on the second peer feedback because the student teachers were already used to the procedure of providing peer feedback at this point and because it allows us (in future research) to link feedback quality to subsequent performance in the learning journals. The coding system captured three main aspects:

What goal was to be achieved? (Referring to the underlying goal of applying the individual learning strategies.)

What is the current stage of learning? (Referring to the strengths when applying the learning strategies.)

What is the current stage of learning? (Referring to the weaknesses when applying the learning strategies.)

What are the next steps to take? (Defining the next goals and steps and giving hints on how to apply learning strategies successfully.)

These subcategories are each rated on a scale from 0 (

A subsample of 80 peer feedbacks from t2 (middle of the semester) was coded (20 for each of the four experimental groups), randomly selected from all feedbacks written by student teachers with a complete dataset. Interrater reliability was very good (intraclass correlation coefficient [ICC] = .99; 13% of the peer feedbacks were double-coded by two independent raters who were blind to the student teachers’ experimental condition). As the coding was done after the course had ended, the student teachers were not informed about the scores they had earned.
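The article does not state which ICC form was computed. As an assumption, the following Python sketch implements one common form, the two-way random-effects, absolute-agreement, single-rater ICC(2,1) in the Shrout and Fleiss convention, for a table of double-coded feedbacks (rows) by raters (columns). The function `icc_2_1` and the example ratings are hypothetical.

```python
def icc_2_1(ratings: list[list[float]]) -> float:
    """ICC(2,1): two-way random effects, absolute agreement, single rater.

    ratings[i][j] is the score rater j gave to target i (e.g., one feedback).
    """
    n, k = len(ratings), len(ratings[0])
    if n < 2 or k < 2 or any(len(row) != k for row in ratings):
        raise ValueError("need an n x k table with n >= 2 targets and k >= 2 raters")
    grand = sum(sum(row) for row in ratings) / (n * k)
    row_means = [sum(row) / k for row in ratings]
    col_means = [sum(row[j] for row in ratings) / n for j in range(k)]
    # Two-way ANOVA sums of squares: targets (rows), raters (columns), residual.
    ss_rows = k * sum((m - grand) ** 2 for m in row_means)
    ss_cols = n * sum((m - grand) ** 2 for m in col_means)
    ss_total = sum((x - grand) ** 2 for row in ratings for x in row)
    ss_error = ss_total - ss_rows - ss_cols
    ms_rows = ss_rows / (n - 1)
    ms_cols = ss_cols / (k - 1)
    ms_error = ss_error / ((n - 1) * (k - 1))
    # Systematic differences between raters (ms_cols) lower absolute agreement.
    return (ms_rows - ms_error) / (
        ms_rows + (k - 1) * ms_error + k * (ms_cols - ms_error) / n
    )
```

Identical scores from both raters give an ICC of 1. If one rater instead scores every feedback exactly one point higher (for example, [[1, 2], [3, 4], [5, 6]]), this absolute-agreement form drops to 8/9, whereas a pure consistency index would still be 1.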

Statistical Analysis

We conducted a 2 × 2 × 2 mixed ANOVA in SPSS V25 to analyze whether differences in student teachers’ self-efficacy could be attributed to the support they received and to the time point. That is, we contrasted the groups with different support measures (between-subjects factors: assessment support yes/no, feedback support yes/no) across two time points (within-subjects factor: t2, middle of the semester, and t3, end of the semester), and we report partial η² as the effect size. As feedback quality was measured only once, we conducted 2 × 2 ANOVAs for this outcome.
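For the 2 × 2 analysis of feedback quality, partial η² for an effect is SS_effect / (SS_effect + SS_error). The following Python sketch computes F and partial η² for both main effects and the interaction by hand. It assumes a balanced between-subjects design with equal n per cell (the coded subsample had 20 feedbacks per group), in which the usual types of sums of squares coincide; it is an illustration, not the SPSS procedure the authors ran, and it omits p-values. The function name `two_by_two_anova` and the toy data are hypothetical.

```python
from statistics import mean

Cell = tuple[int, int]  # (assessment support 0/1, feedback support 0/1)


def two_by_two_anova(cells: dict[Cell, list[float]]) -> dict[str, dict[str, float]]:
    """F and partial eta squared for a balanced 2 x 2 between-subjects design.

    cells[(a, f)] holds the scores of the group with assessment support a
    and feedback support f (0 = no, 1 = yes). Each effect has df = 1.
    """
    keys = [(a, f) for a in (0, 1) for f in (0, 1)]
    if set(cells) != set(keys):
        raise ValueError("need exactly the four cells (0,0), (0,1), (1,0), (1,1)")
    n = len(cells[(0, 0)])
    if n < 2 or any(len(cells[key]) != n for key in keys):
        raise ValueError("design must be balanced with n >= 2 per cell")

    cell_mean = {key: mean(cells[key]) for key in keys}
    grand = mean(list(cell_mean.values()))  # equals the overall mean when balanced
    a_mean = {a: (cell_mean[(a, 0)] + cell_mean[(a, 1)]) / 2 for a in (0, 1)}
    f_mean = {f: (cell_mean[(0, f)] + cell_mean[(1, f)]) / 2 for f in (0, 1)}

    ss_effect = {
        "assessment": 2 * n * sum((a_mean[a] - grand) ** 2 for a in (0, 1)),
        "feedback": 2 * n * sum((f_mean[f] - grand) ** 2 for f in (0, 1)),
        "interaction": n * sum(
            (cell_mean[(a, f)] - a_mean[a] - f_mean[f] + grand) ** 2 for a, f in keys
        ),
    }
    # Within-cell (error) sum of squares and its mean square (df = 4(n - 1)).
    ss_error = sum((x - cell_mean[key]) ** 2 for key in keys for x in cells[key])
    ms_error = ss_error / (4 * (n - 1))

    return {
        name: {"F": ss / ms_error, "partial_eta_sq": ss / (ss + ss_error)}
        for name, ss in ss_effect.items()
    }
```

With the invented cells {(0, 0): [1, 2, 3], (0, 1): [3, 4, 5], (1, 0): [2, 3, 4], (1, 1): [4, 5, 6]}, the feedback factor yields F = 12 and partial η² = .60, the assessment factor F = 3 and partial η² = 3/11, and the interaction is zero; these numbers say nothing about the study's results.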

At t1, students in the different groups did not differ significantly in their learning prerequisites interest in the seminar


(assessment support:

Results

Student Teachers’ Self-Efficacy (Research Question 1)

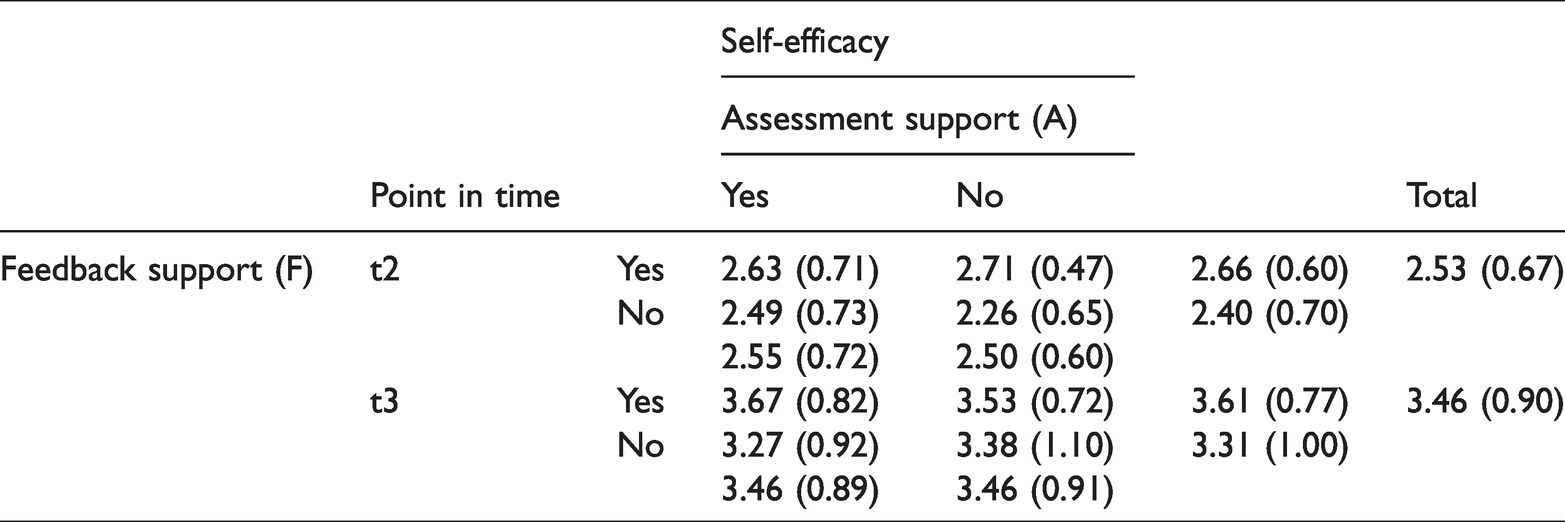

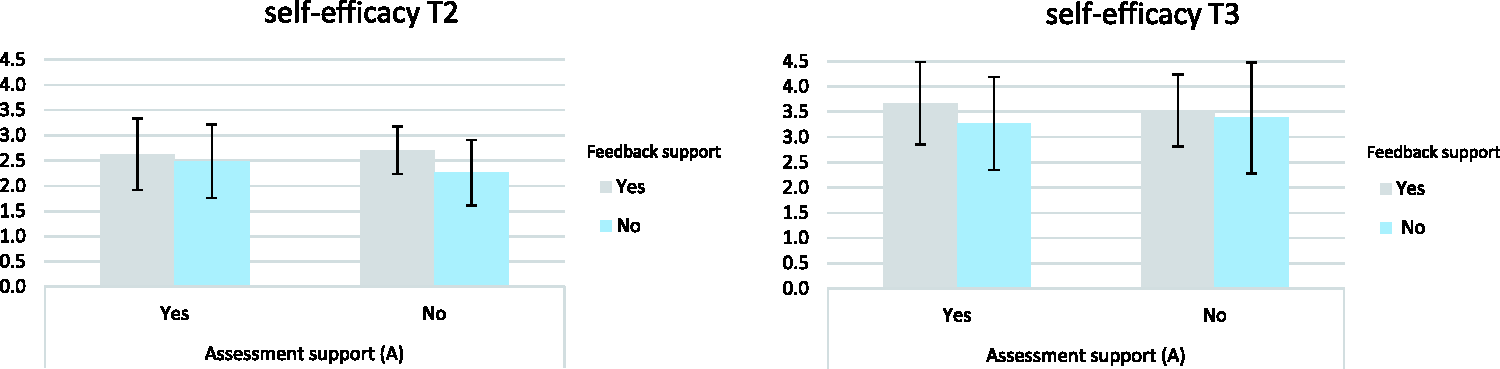

Descriptive statistics show that, in general, student teachers reported higher self-efficacy regarding assessing learning strategies and giving feedback at the end of the semester (t3) than in the middle of the semester (t2) (see Table 1, column “Total”), regardless of the support they received. At t2 as well as at t3, the group that was supported in the

Descriptive Statistics for Self-efficacy; Separately for Experimental Groups and Time Points.

The conducted 2 × 2 × 2 mixed ANOVA showed main effects for the

We did not find an interaction effect between assessment and feedback support on self-efficacy, per se,

Three-way Interaction Between Assessment Support, Feedback Support, and Time on Self-Efficacy (5-Point Scale from 1 to 5).

Quality of Peer Feedback (Research Question 2)

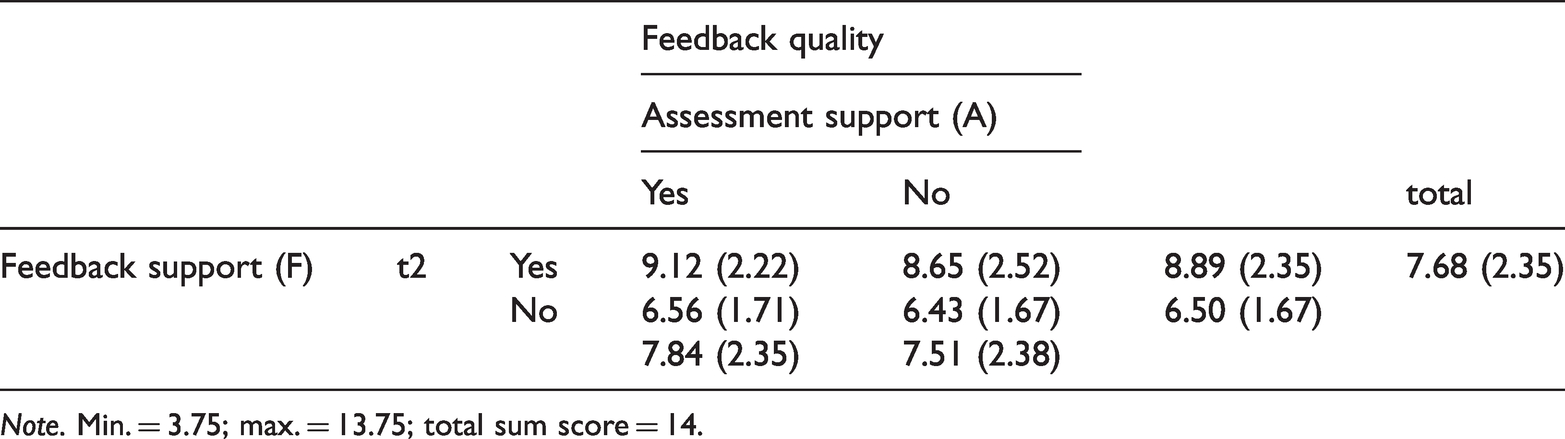

Feedback quality was measured by coding different subcategories that referred to the content of the feedback, namely the underlying goal of the learning journal, the strengths and weaknesses of the written document, strategies for future learning, and the adequacy of the link between the weaknesses and the advised strategies (maximum total score = 14).

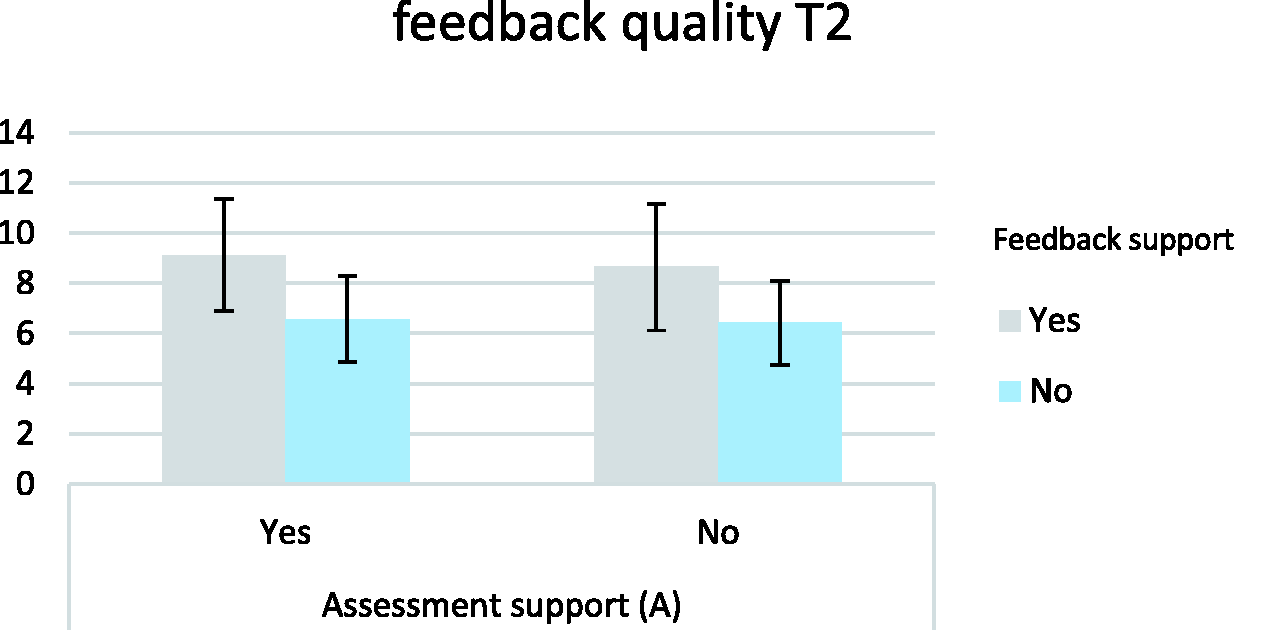

Considering the overall quality of the written peer feedback (see Table 2), supporting the feedback process with the help of procedural facilitation resulted in higher scores, compared to the groups without support in writing the feedback itself (significant main effect of feedback support; control and assessment group:

Feedback Quality at t2; Separately for Experimental Groups.

Effects of Assessment and Feedback Support on Feedback Quality at t2 (Scale from 0 to 14).

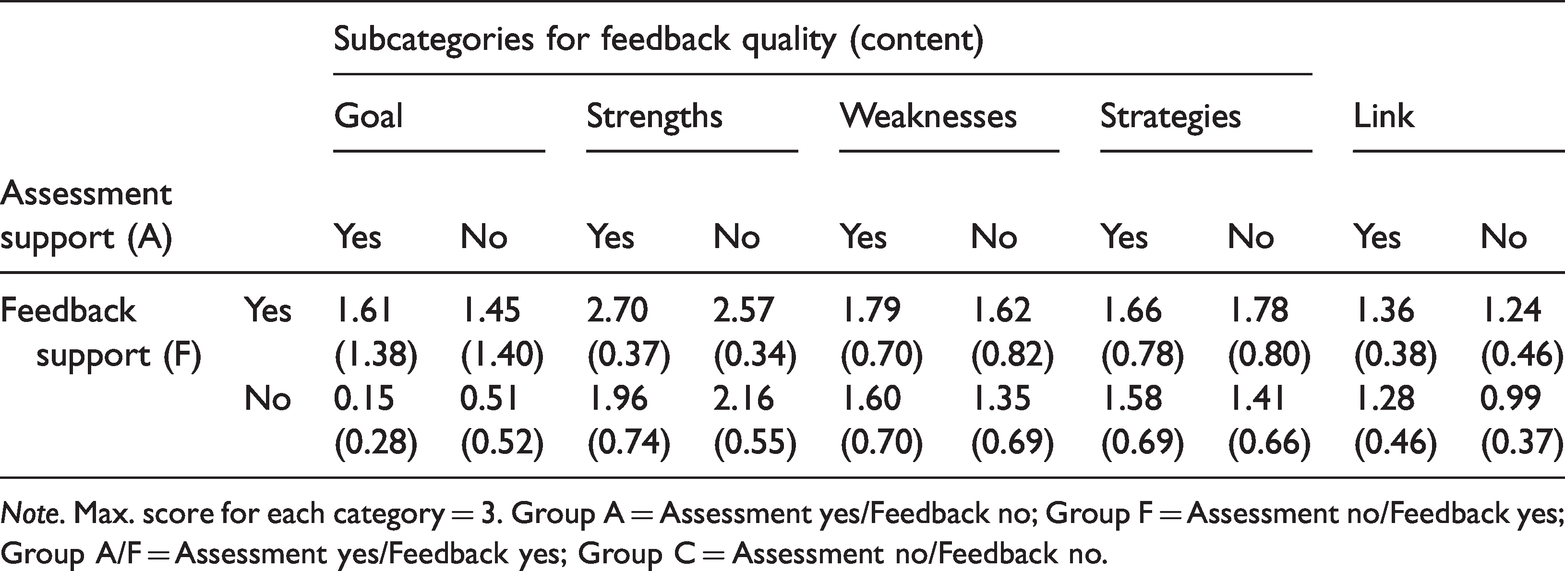

In a next step, we conducted exploratory analyses of the individual subcategories of feedback quality to examine whether our experimental variation affected these subcategories differently. Table 3 shows descriptive statistics for the different subcategories of feedback content, namely whether the students focused on the underlying goal, on strengths and weaknesses of the peer’s learning journal, and on strategies for further learning, and whether they linked weaknesses to the advised strategies. Results are presented separately for the four experimental groups.

Feedback Quality at t2; Separately for Feedback Quality Subcategories and Experimental Groups.

For all subcategories, student teachers who had been supported in writing the feedback scored higher than students who had not received this support. Differences between these two (pooled) groups are significant for the subcategories of formulating the goal of implementing learning strategies when writing a learning journal (feedback support:

Discussion

The study focused on supporting the conceptually distinct processes of assessment and providing feedback within a peer feedback setting in teacher education and investigated the effects on student teachers’ self-efficacy and feedback quality.

Results show that student teachers rated their self-efficacy regarding assessing and giving feedback on learning strategies in learning journals higher after repeatedly giving and receiving peer feedback. They seem to benefit from continuously working with the digital tool throughout the semester and from generating and receiving feedback on learning strategies, regardless of the support they received. This is in line with theoretical work assuming that self-efficacy can be fostered by training and practice (Schunk, 1995).

In addition, our empirical findings reveal that student teachers benefit especially from a systematic scaffold for phrasing the peer feedback purposefully. Over time, from the middle to the end of the semester, we find the combination of supporting both the assessment process and the writing of the feedback to be most advantageous for learners’ self-efficacy. After applying the detailed criteria of the rubrics a few times, students are better able to assess their peers’ competencies adequately and to use the assessment information when writing the feedback. As rubrics comprise a lot of information, this result possibly indicates that students first need to get used to working with rubrics as an assessment instrument, which may require practice and time before it results in better learning outcomes, such as self-efficacy (Andrade, 2005; Luft, 1999; Schunk, 1995).

We also find a positive effect of feedback support on overall feedback quality: student teachers write more beneficial feedback when provided with a scaffold in the form of procedural facilitation, which gives them a structure and memory aids for what effective feedback needs to contain. They focus more on the underlying learning goal and on the peers’ strengths than student teachers who did not receive the scaffolds. Unsupported students rarely addressed the learning goal and the peers’ strengths in their feedback, which is consistent with previous findings (Glogger et al., 2013). This finding suggests that future teachers’ feedback skills need to be specifically trained to enable them to provide feedback that contains all the information that helps learners continue studying effectively, including information about strengths and learning goals. Our study provides evidence that procedural facilitation can foster this skill.

Although the sentence starters were well aligned with the subcomponents of our feedback quality measure, the rubrics and the list of relevant criteria used in the assessment support might also be valuable for focusing on the different dimensions of feedback content (goal, strengths, and weaknesses). Still, using rubrics does not automatically lead to better learning, as students’ appropriate use of them needs to be ensured (Andrade, 2005, 2010). More direct instruction and explicit rules (Prins et al., 2005) on how to use the scoring rubrics for providing feedback, as well as anchors or worked examples illustrating the different levels of the individual criteria, might have been necessary here (see Jonsson & Svingby, 2007) to promote valid judgments and hence feedback quality (Liu & Li, 2014).

Exploratory results show that assessment support facilitates student teachers’ ability to appropriately link specific weaknesses to strategies for future learning, which is an important aspect of high-quality feedback. This suggests that students supported by the rubrics gathered more diagnostic information about their peers’ work than their counterparts without rubric support. Having more diagnostic information enables more tailored instructional decisions (cf. Oudman et al., 2018); in our case, the peer feedback was more tailored to the peers’ strategy use, that is, this aspect of feedback quality was enhanced by assessment support.

Limitations and Implications for Future Research

The study focuses on learning strategies in the domain of educational and developmental psychology, and empirical findings regarding the implementation of such a digital tool might be domain-specific. However, the learning strategies prompted in this study can also be applied by students in other domains, as research on learning journals shows (e.g., biology: Glogger et al., 2012; Schmidt et al., 2012; mathematics: Glogger et al., 2012; Roelle et al., 2012; social psychology: Roelle et al., 2017). Regarding the use of rubrics, empirical evidence shows that the topic or domain does not affect their formative effects (Panadero & Jonsson, 2013). Of course, the content and wording of the individual criteria would still have to be adapted to the targeted learning content (in our study: learning strategies) when rubrics are used in other domains.

An interesting further step for future research is to investigate the effects of supporting peer feedback on learning, more specifically on performance in writing learning journals (Nückles et al., 2005), as the current findings show that feedback support with the digital tool leads students to feel more competent in assessing learning strategies and giving feedback and results in higher feedback quality. Initial exploratory analyses suggest that the assessment support might make the feedback more “accurate”, in that the strategies suggested for further learning were apparently more in line with the assessment result of identified weaknesses. Relatedly, future research should address how accurate this assessment was in a more general sense. “Accurate” here means that student teachers’ ratings of learning strategies match the actual use of learning strategies in the learning journals. Such judgment accuracy is often used as a measure of teachers’ assessment competence in the literature (Herppich et al., 2018; Karst & Bonefeld, 2020; Südkamp et al., 2012). The feedback quality measure we used did not capture accuracy. Future research could investigate what effects a “classical” accuracy measure of feedback quality would reveal. For example, the correlation between student teachers’ and researchers’ ratings of learning strategies, or the match of their means, could be used to measure feedback quality.
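The accuracy measures proposed above could be computed, for example, as follows. This Python sketch compares a student teacher's ratings of learning strategies with researchers' ratings of the same journals and returns the Pearson correlation (does the rater order the journals in the same way?) and the difference of means (does the rater systematically over- or underrate?). The function name `judgment_accuracy`, the rating format, and the example values are hypothetical; the article does not specify how such a measure would be operationalized.

```python
from math import sqrt
from statistics import mean


def judgment_accuracy(student: list[float], expert: list[float]) -> dict[str, float]:
    """Compare paired ratings of the same learning journals.

    Returns the Pearson correlation (agreement in relative order) and the
    mean difference student - expert (systematic over- or underrating).
    """
    if len(student) != len(expert) or len(student) < 2:
        raise ValueError("need two equally long lists with at least two ratings")
    m_s, m_e = mean(student), mean(expert)
    cov = sum((s - m_s) * (e - m_e) for s, e in zip(student, expert))
    ss_s = sum((s - m_s) ** 2 for s in student)
    ss_e = sum((e - m_e) ** 2 for e in expert)
    if ss_s == 0 or ss_e == 0:
        raise ValueError("correlation is undefined when one rater gives constant ratings")
    return {
        "correlation": cov / sqrt(ss_s * ss_e),
        "mean_difference": m_s - m_e,
    }
```

The two components can diverge: a student teacher who rates every journal exactly one point lower than the researchers reaches a perfect correlation but a mean difference of -1. Whether to aggregate such indices per student teacher, per learning strategy, or per journal is a design decision future studies would need to justify.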

Practical Implications

In general, our empirical findings strengthen the theoretical assumption that assessment and instructional competencies, such as providing feedback, are distinct components of (student) teachers’ professional competencies that need to be fostered individually and systematically (Herppich et al., 2018). In particular, our results suggest that procedural facilitation by sentence starters that help in formulating feedback is effective for both the quality of peer feedback and student teachers’ self-efficacy, even in the short term. If more time is available, combining procedural facilitation with assessment support by rubrics seems appropriate.

However, when teachers plan to implement such a tool in instruction, they need to schedule time for students to get used to it and to provide opportunities for practice that help students feel confident in working with the tool continuously and independently (Andrade, 2005; Luft, 1999). Research shows that training student teachers in peer assessment leads to higher performance as well as higher satisfaction with the course (Sluijsmans et al., 2002). Thus, more effort needs to be put into developing instructional means that support future teachers in giving beneficial feedback. Our digital tool could be one building block of such instructional means.

Supplemental Material

sj-pdf-1-plj-10.1177_14757257211016604 - Supplemental material for Supporting Peer Feedback on Learning Strategies: Effects on Self-Efficacy and Feedback Quality

Supplemental material, sj-pdf-1-plj-10.1177_14757257211016604 for Supporting Peer Feedback on Learning Strategies: Effects on Self-Efficacy and Feedback Quality by Anika Bürgermeister, Inga Glogger-Frey and Henrik Saalbach in Psychology Learning & Teaching

Footnotes

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.

Supplemental Material

Supplemental material for this article is available online.

Notes

Author Biographies

References
