Abstract

Cumulative assessment refers to interspersed testing in which each assessment covers all previous content and the mean assessment grade weighs in for the final exam grade. The effect of cumulative assessment on motivation and performance might differ between summative (i.e. assessment grades weigh in for the final exam grade) and formative (i.e. assessment grades do not weigh in) variants. The present study explored this hypothesis in two field experiments in a higher education course (Exp 1: n = 102; Exp 2: n = 88). Each experiment used a single-factor, between-subjects design with type of cumulative assessment (i.e. summative vs formative) as independent variable and motivation (Exp 1: self-study time, topic interest, perceived competence; Exp 2: preparation time and self-efficacy) and performance (Exp 2: cumulative assessment performance; Exp 1 and Exp 2: final exam grade and delayed test performance) as dependent variables. The results of both experiments reinforced each other. In the summative condition, the final exam grade was higher than in the formative condition. However, when the summative assessments were discarded from the final grade, this difference disappeared. Also, in both experiments, the conditions did not differ on motivation measures. Theoretical and practical implications of our findings are discussed.

Introduction

An effective approach to enhance final-test performance in higher education is to intersperse summative assessments throughout a course (e.g. Bangert-Drowns et al., 1991; Hopkins et al., 2016; Schwieren et al., 2017; Tuckman, 1998). A recently proposed variant (Kerdijk et al., 2013; Kerdijk et al., 2015) is compensatory cumulative assessment, henceforth cumulative assessment. In this approach, students take a number of summative assessments throughout a course in a spaced manner, i.e. with a one-week or multi-week interval. Furthermore, each assessment covers all previous course content and the combined score on the assessments weighs in for the final course grade.

The design of cumulative assessment taps into a number of mechanisms that enhance learning and final-test performance. For one, because the scores on the cumulative assessments are combined into a single score, students can compensate for poor performance(s). This, in turn, helps them to maintain their study efforts at a high level throughout the course. Also, the cumulative nature of the assessments and the relatively long interval between assessments stimulate spaced repetition, which is commonly defined as spreading repeated study activities over time instead of cramming the same repeated study activities in immediate succession (e.g. Cepeda et al., 2006; Delaney et al., 2010; Dunlosky et al., 2013; Hintzman, 1974; Maddox, 2016; Toppino & Gerbier, 2014). Furthermore, cumulative assessment requires students to engage in (repeated) retrieval, i.e. they have to retrieve previously learned course content from memory to answer the questions on each cumulative assessment. Hence, cumulative assessment encourages spaced repetition and retrieval practice, both of which are known to be highly effective for enhancing performance (e.g. Adesope et al., 2017; Carpenter, 2012; Delaney et al., 2010; Fiorella & Mayer, 2015, 2016; Kang, 2016; Roediger & Karpicke, 2006; Rowland, 2014).

However, the (frequent) use of summative assessment during learning has been criticized as it may (1) lead students to engage in activities that maximize their chances of passing the test rather than in activities focusing on achieving meaningful learning goals, (2) promote a teaching style directed at knowledge transmission rather than knowledge construction and creativity, (3) lower the self-esteem of poorly performing students and (4) result in tests becoming the rationale for classroom activities (e.g. Harlen & Deakin-Crick, 2002; McLachlan, 2006). To prevent these negative consequences of summative assessment, researchers have proposed to use assessment in a formative manner. Where summative assessment is used to categorize students or to inform certification, and hence emphasizes performance, formative assessment is meant to provide feedback that helps students to monitor, improve and accelerate their learning (e.g. Harlen & James, 1997; Sadler, 1989; 1998; Sluijsmans & Seegers, 2018).

The Present Study

Considering the drawbacks associated with summative assessment during learning, the question emerges whether a formative type of cumulative assessment may produce different outcomes on motivation and final-test performance than a summative type. Here, we define both types narrowly: in summative cumulative assessment, the assessment grades weigh in for the final grade, whereas they do not weigh in in the case of formative cumulative assessment. Comparing these types would add to the existing literature because there are theoretical reasons to assume that they lead to different outcomes. For example, reasoning from expectancy-value theory (e.g. Eccles, 1983; Eccles & Wigfield, 2002), one would expect that students are motivated most by assessments that impact their final grade. After all, these grades determine their study success, which is likely to have an important value, among other reasons because study success often has financial consequences (at least for the participants in our study). Thus, based on expectancy-value theory, one would predict that students attach less value to a no-stakes test (formative cumulative assessment) than to a small-stakes test (summative cumulative assessment). Therefore, students might spend less time on their study when formative instead of summative cumulative assessments are used, leading to a lower final-test performance. By contrast, summative cumulative assessments might merely serve as an external trigger for learning, which might undermine aspects of students’ motivation (e.g. Deci & Ryan, 2000), such as topic interest. Also, for students who struggle with the course content, summative assessments might lower their perceived competence and self-efficacy. Thus, from this perspective, summative assessment might have a negative effect on motivation and perhaps also on final-test performance.

Apart from being theoretically relevant, comparing summative and formative cumulative assessment is practically relevant because the administration of summative assessment puts more pressure on the teaching staff and examination bureaucracy than formative assessment, due to exam regulations that apply to certification tests. Hence, if formative cumulative assessment produces at least the same motivational and performance outcomes as summative cumulative assessment, the former variant will be easier to implement in educational practice.

In the present study, we explored the question of whether the type of cumulative assessment (i.e. formative vs summative) influences motivation and performance in a first-year undergraduate course at a Dutch University of Applied Sciences. All students from the cohorts 2016–2017 and 2017–2018 took part in a field experiment. For a random half of the students in each cohort, the performance on cumulative assessments counted towards the final course grade (i.e. the summative cumulative assessment condition), whereas for the other half it did not (i.e. the formative cumulative assessment condition). All participants were tested immediately after the course, i.e. on the final course exam, and after a 10-week delay. We decided to include a delayed test since Kerdijk and colleagues (2015) suggested that summative cumulative assessment may only lead to better performance in the long term.

The first goal of the field experiments was to examine whether using summative cumulative assessment versus formative cumulative assessment leads to a difference on the final course exam and/or on the delayed test. The second goal was to compare the summative cumulative assessment condition and the formative cumulative assessment condition on aspects of motivation, namely topic interest, perceived competence (Renninger, 2000; Schiefele & Krapp, 1996), self-efficacy (Bandura, 1997; Schunk, 1987) and self-reported study time.

Experiment 1

Method

Participants and Design

Participants were all students from the cohort 2016–2017 enrolled in the Materials Science 1 course, which is part of the Mechanical Engineering programme at a Dutch University of Applied Sciences. One hundred and twenty-seven students started the course, but 25 of them did not take part in the field experiment as they dropped out early in the course. This left a sample of 102 students in Experiment 1. All participants provided written informed consent for their participation. In the sample, 62 students reported their age (M = 18.39, Mdn = 18.00, SD = 1.43, Min = 16, Max = 22), and 63 reported their gender (one female, 62 male) during the intake. Experiment 1 used a single-factor, between-subjects design, of which we describe the independent variable and the dependent variables in turn.

The sample consisted of four classes supervised by two teachers, each of whom taught two classes. Students were randomly assigned to classes, and classes were randomly assigned to teachers. Furthermore, within teacher, one class was randomly assigned to the summative cumulative assessment condition (henceforth SCA condition) and the other to the formative cumulative assessment condition (henceforth FCA condition). Participants in the SCA condition took six cumulative assessments during the course. The scores on the best five cumulative assessments were averaged, and this average made up 30% of the final course grade (the other 70% was made up of the final course exam score). In the FCA condition, students were given the opportunity to take the six cumulative assessments but they were not obliged to do so. The final course grade in the FCA condition was based entirely on the final course exam score.
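The grading rule described above can be illustrated with a short sketch. The function names and the example scores are hypothetical and serve only as illustration; the best-five-of-six rule and the 30%/70% weights are the only elements taken from the design.

```python
def mean_best_five(scores):
    """Average the best five of six cumulative assessment scores (0-10 scale)."""
    if len(scores) != 6:
        raise ValueError("expected six cumulative assessment scores")
    best_five = sorted(scores, reverse=True)[:5]
    return sum(best_five) / 5


def final_course_grade(exam_score, assessment_scores=None):
    """Final course grade on a 10-point scale.

    SCA condition: 70% final exam score + 30% mean of the best five assessments.
    FCA condition: pass assessment_scores=None; the grade equals the exam score.
    """
    if assessment_scores is None:
        return exam_score
    return 0.7 * exam_score + 0.3 * mean_best_five(assessment_scores)


# Hypothetical student: the single poor assessment (3) is dropped,
# which is how the rule lets students compensate for a weak performance.
sca_grade = final_course_grade(6.0, [8, 7, 3, 9, 8, 8])
fca_grade = final_course_grade(6.0)
print(round(sca_grade, 2), round(fca_grade, 2))
```

With the same exam score, the hypothetical SCA student ends up with a higher final course grade than the FCA student whenever the mean of the best five assessments exceeds the exam score.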

We compared the two conditions on the following dependent variables: self-reported independent study time for Materials Science 1, self-reported independent study time for other courses offered during the same quarter, topic interest, self-efficacy, score on the final course exam (without the addition of the cumulative assessment score in the SCA condition), and final course grade (with the addition of the cumulative assessment score in the SCA condition). We also compared both conditions on a delayed test after 10 weeks.

Ethics

The research plan of the present experiment was submitted to the educational committee of the Engineering and Informatics programme. This committee evaluates planned interventions into the educational programme against commonly held ethical standards such as those of the American Psychological Association (http://www.apa.org/ethics/code). The educational committee approved the field experiments in the present study.

Course Information

Materials Science 1 is a course about the basics of materials science. It has a course load of two European Credit Transfer System (ECTS) credits, which corresponds to 56 hours. The course is offered in the first quarter of the first year in parallel to other courses. These other courses have a total load of 13 ECTS credits. In Materials Science 1, one 2-hour interactive lecture is planned in each of weeks 1 to 7 for each of the four classes in the course. Students have to prepare for each of these lectures by reading chapters from a textbook and by doing homework exercises. Both theory and the homework exercises are discussed during the interactive lectures. In week 8, students take a final course exam.

For a Dutch description of the course content, please consult https://osf.io/9su6m/. English translations of the course content and the used materials are available on request from the first author.

Materials

In Experiment 1, we used four types of materials.

Intake Questionnaire

At the start of the course, students received an e-mail asking them to fill out an intake questionnaire. They were asked to report age, gender, prior education, topic interest and self-efficacy. The six topic-interest statements and the two statements on self-efficacy were adapted from Van Harsel and colleagues (2019). Students had to respond to these statements on a five-point Likert scale ranging from 1 (‘completely disagree’) to 5 (‘completely agree’).

Cumulative Assessments

Students in the SCA condition took six cumulative assessments. The course coordinator (the first author on the present paper) developed these assessments with one of his colleagues who also taught Materials Science 1. The content and assessed knowledge and skills of each of the cumulative assessments were aligned with the final course exam. The only difference between the cumulative assessments and the final exam was the response format. The final course exam contained open questions, whereas each cumulative assessment contained multiple-choice, true-false or various types of short-answer questions. Each cumulative assessment had 10 questions presented to each student in random order. Students received one point for a correct answer and zero points for an incorrect answer, so the total score ranged from zero through 10. All cumulative assessments were computer-based. Lastly, none of the cumulative assessments questions were re-used on the final course exam.

Self-study Time, Topic Interest and Perceived Competence Halfway Through the Course

Halfway through the course (in week 4), students received an e-mail with a questionnaire asking them to report the mean number of hours they spent per week on Materials Science 1 and on other courses offered in the same period. In addition, students received the same topic interest and self-efficacy statements as during the intake.

Final Course Exam and Delayed Test

The final course exam consisted of three open questions with eight sub-questions for which students could receive a maximum of 100 points. The final exam score was expressed on a 10-point scale. The delayed test contained six questions isomorphic to the questions on the cumulative assessments, and the score was expressed on a 10-point scale.

Procedure

At the beginning of the first course lecture, the teacher informed each class that a research project would be conducted during the course. Students were also informed about the cumulative assessments and that they were assigned to the FCA or the SCA condition. Also, students were informed that the condition assignment would reverse in the follow-up course Materials Science 2. Finally, the teacher encouraged students to fill out the intake questionnaire, which was mailed to them after the first lecture. A reminder for the intake questionnaire was mailed to students two and three days after the initial mail.

In the SCA condition, lessons 2, 3, 4, 5, 6, and 7 started with a cumulative assessment, with each assessment covering the material that had been dealt with until that point. Students were seated behind a computer in separate workstations. The questions were presented in random order and students were given 30 minutes to complete them. Afterwards, each student was informed about his/her performance. Subsequently, the teacher gave the solution steps and the rationale for taking these steps (cf. worked examples) for each of the questions, allowing students to ask for clarification and to take notes. The entire assessment procedure took one hour. In the FCA condition, students were given the opportunity to take the same assessments and – when participating – received the same feedback as in the SCA condition. Yet, their performance did not weigh in to the final course grade.

In week 8 of the course, all students took the final course exam. This was a pen-and-paper test that had to be completed in 100 minutes. The delayed test was administered in the first lesson of Materials Science 2 on a voluntary basis. This test was administered in the same fashion as the cumulative assessments during the course.

Results

For all statistical analyses, we used p < .05 as a threshold for statistical significance.

Intake Questionnaire

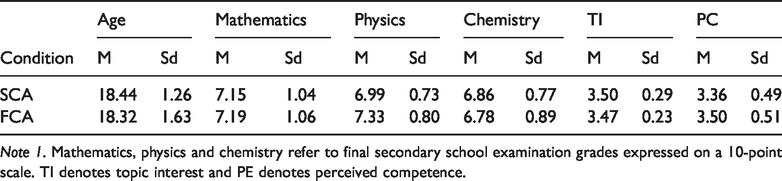

In the sample, 52 students were assigned to the SCA condition and 50 to the FCA. Sixty-two students filled out the intake questionnaire: 34 students from the SCA condition and 28 from the FCA condition. In the SCA condition, five students came from MBO (mid-level, tertiary, professional education), 21 from HAVO (higher general secondary education), seven from VWO (pre-university education) and one had a different background. In the FCA condition, three students came from MBO, 21 from HAVO, three from VWO and one had a different background. In the sample, the topic-interest items had a Cronbach’s alpha of .71, and the correlation between the two self-efficacy statements was .24. Furthermore, the conditions did not differ significantly in the percentage of students that took mathematics (SCA = 100%, FCA = 96%), physics (SCA = 97%, FCA = 93%) or chemistry (SCA = 91%, FCA = 89%) during secondary education. In addition, the differences between conditions on the background variables in Table 1 were small and non-significant. Hence, the two conditions appeared to be highly comparable at the beginning of the field experiment.

Table 1. Descriptive Statistics for Background Variables of Participants in Experiment 1 as a Function of Condition.

Note 1. Mathematics, physics and chemistry refer to final secondary school examination grades expressed on a 10-point scale. TI denotes topic interest and PE denotes perceived competence.

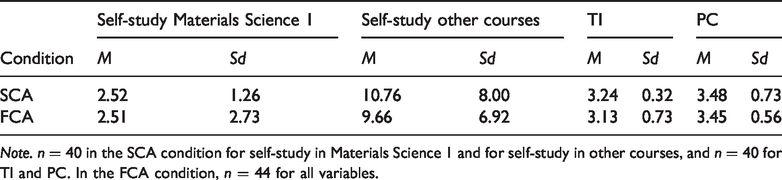

Self-study Time, Topic Interest and Perceived Competence Halfway Through the Course

Halfway through the course, the topic-interest items had a Cronbach’s alpha of .76, and the correlation between the self-efficacy statements was .51. Furthermore, the differences between the two conditions in self-reported self-study time per week and on the motivation variables in Table 2 were small and non-significant.

Table 2. Descriptive Statistics for Self-Reported Self-Study Time Per Week for Materials Science 1 and for Other Courses in the Same Quarter, Topic Interest (TI) and Perceived Competence (PC) as a Function of Condition in Experiment 1.

Note. n = 40 in the SCA condition for self-study in Materials Science 1 and for self-study in other courses, and n = 40 for TI and PC. In the FCA condition, n = 44 for all variables.

Final Course Exam and Delayed Test

For Experiment 1, the teachers did not record the number of points students obtained per question for the final course exam and the delayed test. Instead, total scores were calculated across questions based on a scoring sheet. Hence, we could not calculate reliability measures for these outcome measures in Experiment 1. We solved this issue in Experiment 2.

In the SCA condition, the mean grade for the best five cumulative assessments was 7.75 (SD = 0.71). Considering that 10 is the maximum score, students clearly did very well on the cumulative assessments. The final exam score, without the cumulative assessments weighing in, did not differ significantly between the two conditions (SCA: M = 5.79, SD = 1.30; FCA: M = 5.52, SD = 1.30), t(100) = 1.009, p = .316 (two-tailed), Cohen’s d = 0.21. The final course grade, in which the cumulative assessments weighed in for the students in the SCA condition, was significantly higher in the SCA condition (M = 6.37, SD = 1.01) than in the FCA condition (M = 5.52, SD = 1.30), t(100) = 3.681, p < .05 (two-tailed), Cohen’s d = 0.74. The delayed test was taken by 62 students. In this subset, students who had been in the SCA condition during Materials Science 1 scored significantly higher (n = 31, M = 6.18, SD = 1.50) than students from the FCA condition (n = 31, M = 4.96, SD = 2.03), t(61) = 2.710, p < .05 (two-tailed), Cohen’s d = 0.69.
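For readers who wish to check effect sizes of this kind, the following sketch recomputes Cohen's d and an equal-variance t statistic from the summary statistics reported above. It assumes that d is based on the pooled standard deviation, which the text does not state explicitly; the function names are illustrative. Because the inputs are rounded means and standard deviations, the recomputed values may deviate slightly from the reported ones.

```python
import math


def pooled_sd(sd1, n1, sd2, n2):
    """Pooled standard deviation of two independent groups."""
    return math.sqrt(((n1 - 1) * sd1**2 + (n2 - 1) * sd2**2) / (n1 + n2 - 2))


def cohens_d(m1, sd1, n1, m2, sd2, n2):
    """Standardized mean difference based on the pooled SD (an assumption)."""
    return (m1 - m2) / pooled_sd(sd1, n1, sd2, n2)


def student_t(m1, sd1, n1, m2, sd2, n2):
    """Return (t, df) for an equal-variance independent-samples t-test."""
    se = pooled_sd(sd1, n1, sd2, n2) * math.sqrt(1 / n1 + 1 / n2)
    return (m1 - m2) / se, n1 + n2 - 2


# Summary statistics from Experiment 1 (SCA: n = 52, FCA: n = 50).
d_exam = cohens_d(5.79, 1.30, 52, 5.52, 1.30, 50)    # final exam score
d_grade = cohens_d(6.37, 1.01, 52, 5.52, 1.30, 50)   # final course grade
t_grade, df_grade = student_t(6.37, 1.01, 52, 5.52, 1.30, 50)
print(round(d_exam, 2), round(d_grade, 2), round(t_grade, 2), df_grade)
```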

Discussion

The results of Experiment 1 showed that summative cumulative assessments enhanced the final course grade compared to formative cumulative assessments when the assessment grades were weighed in. This has important practical implications, a point to which we will return in the General Discussion. Without the cumulative assessment grades, performance on the final course exam was comparable in the SCA and FCA conditions. However, on the delayed test, the SCA condition outperformed the FCA condition. In the General Discussion, we will provide possible explanations of this finding. Furthermore, the SCA and FCA conditions scored similarly on indicators of motivation, that is, topic interest, self-efficacy, and self-reported study time.

Experiment 1 was a single study. To investigate the robustness of our findings, we conducted a conceptual replication in the same course with a new cohort. We pre-registered this second field experiment (https://osf.io/qrhuj) (e.g. Nosek et al., 2018; Simons et al., 2011).

Experiment 2

Method

Participants and Design

Participants were all students from the cohort 2017–2018 who were enrolled in the Materials Science 1 course. One hundred nine students started the course, but 21 of them did not start with the field experiment as they dropped out early in the course. This left an initial sample of 88 students in Experiment 2. All participants provided written informed consent for their participation. In the sample, 76 students reported their age (M = 18.82, Mdn = 18.00, SD = 1.99, Min = 16, Max = 26), and 77 reported their gender (two female, 75 male) during the intake. Students were randomly assigned to the SCA condition (n = 45) and the FCA condition (n = 43).

Experiment 2 used a single-factor, between-subjects design with type of cumulative assessment (i.e. summative vs formative) as independent variable and motivation (i.e. preparation time and self-efficacy) and performance (i.e. cumulative assessment performance; final end-of-course exam grade and delayed test performance) as dependent variables.

Materials and Procedure

The course, the materials and the procedure of Experiment 2 were identical to those in Experiment 1 with the following exceptions: (1) with respect to the motivation variables, the intake questionnaire only contained topic-interest questions, (2) for practical reasons three cumulative assessments (after lectures 2, 4, and 6) were administered, (3) cumulative assessments were obligatory in the SCA and the FCA condition, (4) after each cumulative assessment, participants reported the time they spent preparing for the assessment, (5) a self-efficacy questionnaire of four items was administered after the third cumulative assessment, and (6) the delayed test consisted of three open-ended questions and six multiple-choice/short-answer questions.

Results

For all statistical analyses, we used p < .05 (or a p-value corrected for multiple comparisons) as a threshold for statistical significance. Furthermore, before conducting the analyses, we applied the exclusion criteria from the pre-registration. As a result, 18 participants (10 from the SCA condition and eight from the FCA condition) were removed from the analyses.

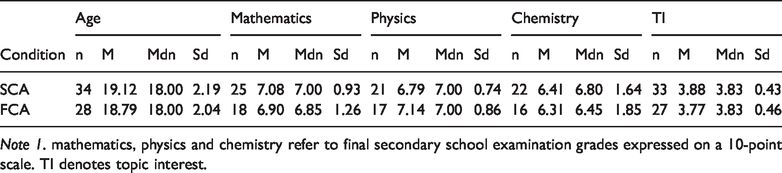

Intake Questionnaire

Of the 70 participants who were left after applying the exclusion criteria, 63 filled out the intake questionnaire. In the SCA condition, four students came from MBO (mid-level, tertiary, professional education), 23 from HAVO (higher general secondary education), six from VWO (pre-university education) and two had a different background. In the FCA condition, seven students came from MBO, 18 from HAVO, one from VWO and two had a different background. In the sample, the topic-interest items had a Cronbach’s alpha of .50. Furthermore, the conditions did not differ significantly in the percentage of students that took mathematics (SCA = 94%, FCA = 96%), physics (SCA = 89%, FCA = 83%) or chemistry (SCA = 86%, FCA = 75%) during secondary education. In addition, the differences between conditions on the background variables in Table 3 were small and non-significant. Hence, the two conditions appeared to be highly comparable at the beginning of the field experiment.

Table 3. Descriptive Statistics for Background Variables of Participants in Experiment 2 as a Function of Condition.

Note 1. Mathematics, physics and chemistry refer to final secondary school examination grades expressed on a 10-point scale. TI denotes topic interest.

Self-reported Cumulative Assessment Preparation Time

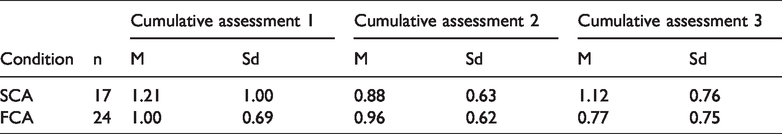

Before each of the three cumulative assessments in the course, students self-reported the time they spent preparing for the test. Relevant descriptive statistics are presented in Table 4. A 2 Condition (SCA vs FCA) x 3 Test (CA1 vs CA2 vs CA3) mixed ANOVA with repeated measures on the second factor did not show a significant main effect of Condition, F(1, 39) = 0.802, MSE = 0.754, p > .05,

Table 4. Descriptive Statistics for Cumulative Assessment Preparation Time in Hours as a Function of Condition in Experiment 2.

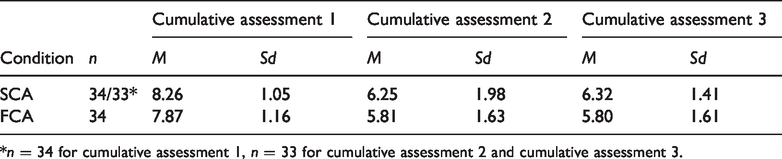

Cumulative Assessment Performance

We calculated Cronbach’s alpha (collapsing across conditions) for each cumulative assessment in the course. However, all Cronbach’s alphas (i.e. cumulative assessment 1 = .23, cumulative assessment 2 = .51, cumulative assessment 3 = .29) were below the threshold we set in our pre-registration as a necessary condition for statistical hypothesis testing. Therefore, we did not carry out the pre-registered statistical analyses. Instead, we only report relevant descriptive statistics in Table 5.

Table 5. Descriptive Statistics for the Cumulative Assessment Performance on a 10-Point Scale as a Function of Condition and Cumulative Assessment in Experiment 2.

*n = 34 for cumulative assessment 1, n = 33 for cumulative assessment 2 and cumulative assessment 3.

We exploratorily examined Cronbach’s alpha for all cumulative assessments combined, collapsed across conditions. This yielded a Cronbach’s alpha of .59. The mean performance was somewhat, albeit not statistically significantly, higher in the SCA condition (M = 7.04, SD = 1.09) than in the FCA condition (M = 6.53, SD = 1.00), Welch’s t(60.67) = 1.927, p = .059 (two-tailed), Cohen’s d = 0.49. The scaled JZS Bayes factor (Rouder et al., 2009) was 1.213, denoting weak evidence for the alternative hypothesis.
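As an illustration of the reliability check used in this section, the sketch below computes Cronbach's alpha with the standard formula, alpha = k/(k − 1) × (1 − sum of item variances / variance of total scores). The response matrix is hypothetical and is not data from the present study; the function name is illustrative as well.

```python
from statistics import variance


def cronbach_alpha(responses):
    """Cronbach's alpha for a list of rows (one row per respondent, one column per item)."""
    k = len(responses[0])
    if k < 2:
        raise ValueError("alpha requires at least two items")
    item_columns = list(zip(*responses))
    item_variance_sum = sum(variance(column) for column in item_columns)
    total_variance = variance([sum(row) for row in responses])
    return k / (k - 1) * (1 - item_variance_sum / total_variance)


# Hypothetical scores of five respondents on three items (illustration only).
hypothetical_scores = [
    [4, 5, 4],
    [3, 3, 2],
    [5, 4, 5],
    [2, 2, 3],
    [4, 4, 4],
]
alpha = cronbach_alpha(hypothetical_scores)
print(round(alpha, 3))
```

Sample variances are used for both the items and the total scores; using population variances throughout would give the same alpha, because the correction factor cancels in the ratio.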

Self-efficacy

After the third cumulative assessment, 51 students filled out the four-item self-efficacy questionnaire. Cronbach’s alpha in this sample was .78. A Welch’s t-test showed that the SCA condition (M = 3.59, SD = 0.73) and the FCA condition (M = 3.47, SD = 0.64) did not differ significantly in self-efficacy, t(39.52) = 0.649, p = .520 (two-tailed), Cohen’s d = 0.21. The scaled JZS Bayes factor was 3.233, denoting substantial evidence for the null hypothesis.

Final End-of-course Exam and Delayed Test

Of the 70 participants who were left after applying the exclusion criteria, 67 took the final end-of-course exam. The final course exam had a Cronbach’s alpha of .61, thereby meeting the norm for conducting the pre-registered analysis. When the mean cumulative assessment grade was not taken into account, the SCA condition (n = 34, M = 6.07, SD = 1.59) performed similarly to the FCA condition (n = 33, M = 5.80, SD = 1.44), Welch’s t(64.684) = 0.728, p = .469 (two-tailed), Cohen’s d = 0.18. The scaled JZS Bayes factor was 3.184, denoting substantial evidence for the null hypothesis.
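The Welch statistic and its fractional degrees of freedom can be approximated from the summary statistics reported above. The following is a minimal sketch with an illustrative function name; it uses the Welch–Satterthwaite approximation for the degrees of freedom, and small deviations from the reported values are to be expected because the inputs are rounded.

```python
import math


def welch_t(m1, sd1, n1, m2, sd2, n2):
    """Return (t, df) for Welch's unequal-variance t-test from summary statistics."""
    v1 = sd1**2 / n1  # squared standard error of the first group mean
    v2 = sd2**2 / n2  # squared standard error of the second group mean
    t = (m1 - m2) / math.sqrt(v1 + v2)
    # Welch-Satterthwaite approximation of the degrees of freedom
    df = (v1 + v2) ** 2 / (v1**2 / (n1 - 1) + v2**2 / (n2 - 1))
    return t, df


# Final exam score in Experiment 2, without the cumulative assessment grade.
t_exam, df_exam = welch_t(6.07, 1.59, 34, 5.80, 1.44, 33)
print(round(t_exam, 3), round(df_exam, 2))
```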

Although we did not pre-register this analysis, we exploratorily compared both conditions on the final exam grade when the mean cumulative assessment grade was taken into account. This resulted in a significant advantage of the SCA condition (n = 34, M = 6.50, SD = 1.31) over the FCA condition (n = 33, M = 5.80, SD = 1.44), Welch’s t(63.998) = 2.079, p = .042 (two-tailed), Cohen’s d = 0.52. The scaled Bayes factor was 1.534, denoting weak evidence for the alternative hypothesis.

The delayed test consisted of three open-ended questions and six questions with a multiple-choice/short-answer format. Cronbach’s alpha for the former set of questions was .35 and for the latter .20. As the alphas are low, the results of the statistical hypothesis tests should be interpreted with great caution. For the open questions (maximum score = 30), there was no significant difference between the SCA condition (n = 27, M = 22.63, SD = 4.73) and the FCA condition (n = 24, M = 24.17, SD = 5.76), t(49) = −1.045, p = .301 (two-tailed), Cohen’s d = −0.29. The same applied to the multiple-choice questions (maximum score = 60): SCA condition (n = 27, M = 36.30, SD = 10.43), FCA condition (n = 24, M = 39.38, SD = 11.36), t(49) = −1.009, p = .318 (two-tailed), Cohen’s d = −0.28.

Discussion

The results of field Experiment 2 largely agreed with those of Experiment 1. When the mean grade of the cumulative assessments weighed in for the final course exam grade (i.e. the SCA condition), students obtained higher grades than their peers in the FCA condition. However, this advantage was not found when the cumulative assessment grade was not included in the final grade. Furthermore, we could not corroborate the delayed test advantage for the summative condition that we found in Experiment 1. On indicators of motivation, such as assessment preparation time and self-efficacy, the SCA and the FCA conditions scored comparably.

General Discussion

In two field experiments, we compared summative and formative cumulative assessments on final-test performance and motivation. The results of both experiments largely reinforced each other. In the SCA condition, the final course exam grade was consistently higher than in the FCA condition due to the relatively high mean scores on the summative assessments. However, when the summative assessment grades were discarded from the final grade, the difference between the conditions was small and non-significant. Furthermore, in both experiments, we did not find evidence for motivational differences, expressed in self-study time, perceived competence, topic interest, self-efficacy and cumulative assessment preparation time. In addition, the SCA condition outperformed the FCA condition on the delayed test in Experiment 1, but not in Experiment 2.

Theoretical Implications

Prior research on cumulative assessments (e.g. Kerdijk et al., 2013) showed that interspersing summative cumulative assessments throughout a course increased self-study time, which measures an aspect of motivation, compared to a control group in which the assessments were not administered. Our study adds to this existing research by exploring whether the type of cumulative assessment influences aspects of motivation and performance. The findings of the present study have a number of theoretical implications. Based on, for example, expectancy-value theory (e.g. Eccles, 1983; Eccles & Wigfield, 2002), one might predict that students attach less value to a no-stakes test (FCA condition) than to a small-stakes test (SCA condition). Therefore, students might be less motivated and perform less well in the FCA condition. Conversely, one might predict that summative tests are merely an external trigger for learning, undermining students’ motivation (e.g. Deci & Ryan, 2000) and perhaps also performance. Contrary to these predictions, we failed to find any differences between the SCA condition and the FCA condition on motivational aspects of learning. This is consistent with findings from the retrieval practice/testing literature (e.g. Kang & Pashler, 2014). Overall, the effects of our experimental manipulation on motivation and learning were small, particularly in Experiment 2, in which all students took the cumulative assessments (hence erasing retrieval practice differences). This might be due to the elaborate feedback our students received on their performance. Hence, future research might examine when formative and summative cumulative assessments yield different learning and/or motivation outcomes.

In Experiment 1, we found a benefit of summative cumulative assessment on the delayed test. However, this finding is hard to interpret because performance on the cumulative assessments was not measured in the formative condition. Teachers did observe that cumulative assessment attendance – and hence total test taking – was lower in the FCA condition, so the delayed test benefit might reflect a retrieval practice effect (e.g. Bahrick, 1979; Rawson et al., 2013; Rawson et al., 2018). Alternatively, the delayed test benefit might be due to the type of cumulative assessment, i.e. formative versus summative. In Experiment 2, attendance at the cumulative assessment sessions was mandatory and performance was measured. Subsequently, the summative delayed test benefit disappeared, which suggests the observed benefit in Experiment 1 was due to retrieval practice differences.

Implications for Educational Practice

The students’ mean final course exam grades left room for improvement. This might have been due to the test format of the cumulative assessments. In our experiments, we used test items that required short answers, multiple-choice answers or true-false answers. The retrieval practice literature, however, suggests that the effect of retrieval practice on learning is larger for open formats that require more retrieval effort (e.g. Carpenter & DeLosh, 2006). Hence, using these open formats in cumulative assessments might bolster their effectiveness. Another factor that might aid improvement is the feedback type. We provided performance feedback to the students as well as worked-out solutions. Yet, cumulative assessments might be employed more powerfully if their input is also used to provide students with feedback and feedforward on cognitive, metacognitive and motivational aspects of learning (e.g. Hattie & Timperley, 2007; Zimmerman, 2001; 2011).

Both experiments demonstrated that the mean grade on the final course exam was higher in the SCA condition than in the FCA condition when the cumulative assessment grades were weighed in. Hence, summative cumulative assessment has a considerable and positive impact on students’ grades. However, the use of summative cumulative assessments requires thorough consideration. For example, the cumulative assessments should be aligned with the final exam in content, level of difficulty, and types of processes assessed. Also, final exams, which contain items from all course themes, are probably harder than some of the cumulative assessments, i.e. tests that tap into a limited number of themes, or even a single theme. In addition, summative assessments typically come with a heavier bureaucratic load, as they are part of the formal examination system. Lastly, the positive effect of summative assessment in our experiments was driven by high cumulative assessment scores. Yet, if the cumulative assessments become harder, the positive effect may disappear or even be reversed. Teachers should, therefore, weigh the above issues when deciding whether the benefit of higher grades justifies the use of summative cumulative assessments.

Acknowledgements

The authors thank Alain Dirven for his help in preparing and conducting the field experiments in the present study.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.