Abstract

The main goal of this study was to test whether feedback from a lecturer and tutor on an initial reflective journal entry fosters reflection quality in a subsequent journal entry and reflection skills in student teachers. To address these questions, we, a team of educators and psychologists, conducted a field experiment during the practical semester. Student teachers (

Introduction

Reflection skills enable continuous learning and thereby support professional development (e.g., Combe & Kolbe, 2004; Dewey, 1933; Finlay, 2008; Gibbs, 1988; Korthagen, 2001; Larrivee, 2000). For student teachers, reflection skills can be particularly beneficial during the practical semester, in which they practice classroom teaching. A common way to engage student teachers in reflection during a classroom practice-teaching experience (e.g., the practical semester) is to have them write a reflective journal (e.g., Hartung-Beck & Schlag, 2020; Lee, 2008; Lindroth, 2014). However, the following example shows that writing a reflective journal alone might not be sufficient:

“However, that meant that I flew very quickly through my lesson plan and had 5–7 minutes left at the end of the lesson, so I had to improvise, which didn’t feel right to me. I felt uncomfortable and overwhelmed.”

Feedback on this entry: “You can improve your analysis in the future if you emphasize such a situation’s consequences for your students. In your analysis, you emphasize the importance and consequences for you and the lesson. In your next reflection, you could make sure to emphasize the impact of this situation on your students as well.”

The Role of Reflection Skills in Student Teachers

Student teachers should benefit substantially from reflection skills that enable them to learn from their own teaching experiences. Focusing on improving reflection skills should therefore be considered a beneficial investment in teacher training (Moore, 2003). Teacher training during the practical semester is an ideal opportunity to foster student teachers’ reflection skills (Schüssler & Weyland, 2017). The practical semester in North Rhine-Westphalia (Germany) is part of student teacher education that must be completed during the master's degree program. During this time, the student teachers teach at a school and gain practical experience. They are supervised by their universities, the centers for teacher education in the respective district, and by the practical training schools (Heinrich & Klewin, 2018). Student teachers spend at least five months in school during their practical semester (Meurel, 2018).

A very promising tool to enhance reflection during the practical semester is writing reflective journals. A reflective journal is a reflective writing task for learners entailing a follow-up reflection after a (professional) experience (Lindroth, 2014). The writing task in a journal supports reflection processes and gives learners a high degree of responsibility for their own learning process (e.g., Berthold et al., 2007; Nückles et al., 2020). It is therefore not surprising that keeping a reflective journal is one of the main tools for promoting reflective thinking in student-teacher programs (Hatton & Smith, 1995). In such programs, keeping reflective journals supports students’ ability to reflect on their own teaching. This ability can lead to action that ultimately enables them to teach more successfully (Lindroth, 2014). However, Pieper et al. (2020) found that although journal writing can engage student teachers in beneficial reflection processes, student teachers did not always exhibit “optimal” reflection processes, as some of their entries included hardly any productive reflection. In view of these deficiencies, the provision of feedback can be considered a beneficial supplement to reflective journal writing (see Roelle et al., 2011).

High-Information Feedback in Reflective Journals

From a cognitive perspective, feedback is a type of information that helps to improve one’s performance of a task (see Wisniewski et al., 2020). Recently, Wisniewski et al. (2020) concluded on the basis of their meta-analysis of educational feedback research that feedback is more effective the more information it contains. It helps learners “not only to understand what mistakes they made, but also why they made these mistakes and what they can do to avoid them the next time.” (Wisniewski et al., 2020, p. 12). Hence, such

High-information feedback is very useful in conjunction with self-reflection (Anseel et al., 2011). According to Hattie et al. (2017), for feedback to be effective, recipients must be able to trust that they are free (and encouraged) to admit errors, misunderstandings, and struggles, because these are natural and necessary steps in learning. Feedback provided to student teachers should also direct attention to errors in their teaching, which provides a basis for modifications that improve teaching. In this way, feedback motivates the learner and reinforces effective behavior while minimizing or even preventing ineffective behavior (London, 2003). Hattie et al. (2017) concluded that feedback is most effective when it addresses three major questions: “Where am I going? How am I getting there? Where to next?” (p. 300).

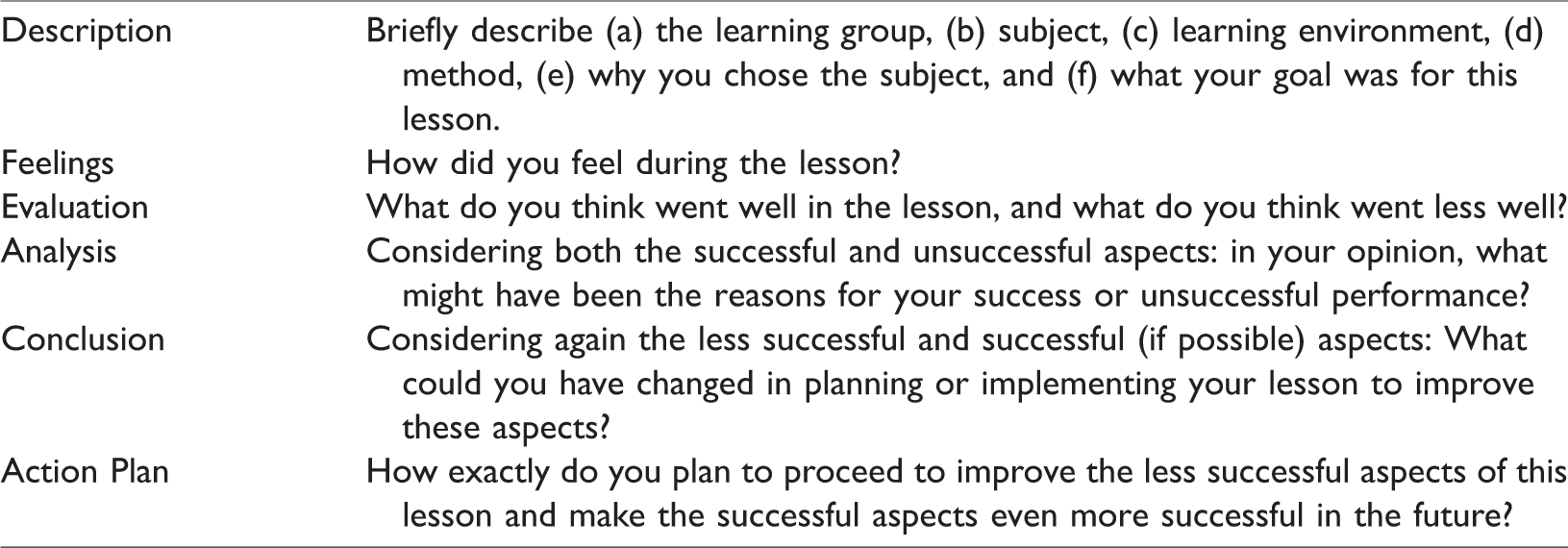

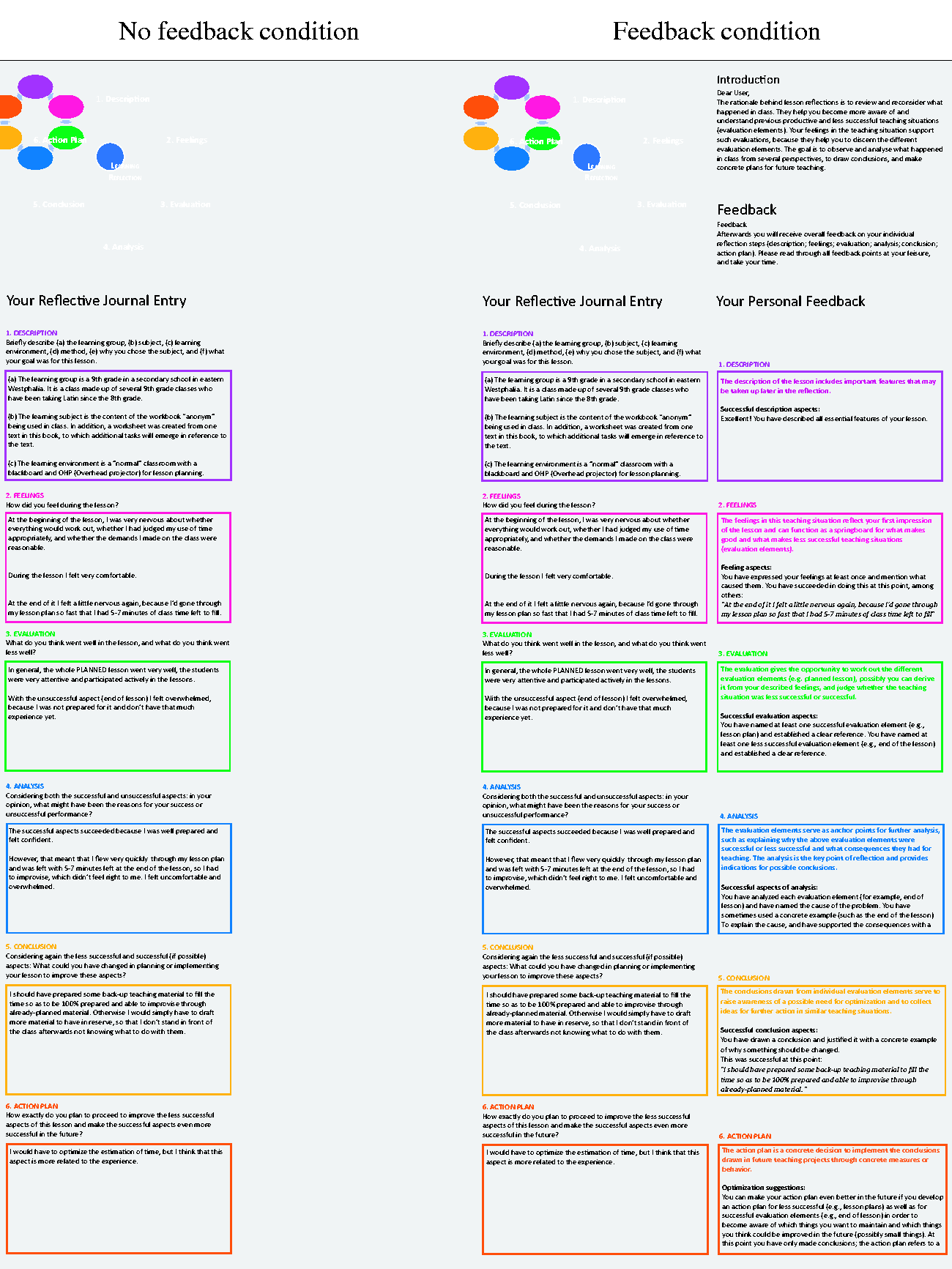

In the case of high-information feedback on a reflective journal written during the practical semester, the feedback could provide student teachers with information and guidance on which goals they should aim for and which they have already achieved. A viable scheme for providing such feedback to student teachers could be oriented towards Gibbs’ reflective cycle (Gibbs, 1988). Gibbs’ reflective cycle entails the following six stages: (a) description: learners describe the situation without evaluating it, (b) feelings: learners reflect on their feelings in the learning situation, (c) evaluation: the situation is assessed (what went well and what did not), (d) analysis: learners analyze what exactly happened in the situation and what meaning can be derived from it, (e) conclusion: learners examine in detail what they can conclude from this experience for themselves, and (f) action plans: in the sixth and final stage, learners develop concrete action plans to implement in future situations. Table 1 shows an example of a student teacher’s reflective journal entry during the practical semester and the high-information feedback provided on it. However, to date there has been no direct empirical test of the helpfulness of high-information feedback in reflective journals during the practical semester.

On the Left Is One Example of a Student Teacher’s Journal Entry and on the Right, the Uploaded High-Information Feedback (Translated From German).

Research Hypotheses

Against this background, the central aim of this study was to analyze whether high-information feedback on the quality of written reflections in a reflective journal entry fosters student teachers’ reflection skills. For this purpose, we, a team of educators and psychologists, conducted a field experiment in which we provided high-information feedback on student teachers’ reflective journal entries during their practical semester. We were interested in the benefits of the feedback for the quality of the reflective journal entries, as well as in its influence on conceptual knowledge about reflection and on student teachers’ skills in analyzing other students’ reflections. We investigated the following hypotheses:

Hypothesis 1: High-information feedback fosters reflection quality.

Hypothesis 2: High-information feedback fosters conceptual knowledge about reflection.

Hypothesis 3: High-information feedback fosters student teachers’ skills in analyzing other students’ reflections.

Method

Sample and Design

Fifty-four German student teachers (40 female;

Materials

All materials were presented as written text, partly accompanied by explanatory graphics, on an online-platform developed specifically for this study. The participants could access the platform from any location and read the information individually. They could not ask clarifying questions or discuss the materials in groups on the online-platform.

Reflective journal writing instructions

The general information on how to write a reflective journal was identical for all participants. Specifically, all learners received six reflection prompts designed to engage them in crucial reflection processes (see Table 2). These prompted processes were closely aligned with Gibbs’ reflective cycle (1988). For instance, the learners were prompted to describe their lesson (Description: “Briefly describe (a) the learning group, (b) subject, (c) learning environment, (d) method, (e) why you chose the subject, and (f) what your goal was for this lesson”), to evaluate their lessons (Evaluation: “What do you think went well in the lesson and what do you think went less well?”), and to analyze their lessons (Analysis: “Considering both the successful and unsuccessful aspects: In your opinion, what might have been the reasons for your success or for an unsuccessful performance?”). The learners were asked to type their responses to the six reflection prompts in six text boxes positioned on the left side of the screen. We also presented a graphic of Gibbs’ reflective cycle with the six stages of reflection on the left side (see Figure 1).

All Prompts Used in the Reflective Journal Entries for Both Groups (Translated From German).

Design of field experiment (online-platform).

Feedback

The experimental manipulation took place once the learners had written their first journal entry. More specifically, before they could start writing their second journal entry (the earliest point at which participants could write the second entry was 10 days after their first entry; all information on the experiment’s time course is summarized in the Procedure section), the learners in both conditions were asked to re-read their first journal entry. In contrast to the no-feedback group, the feedback group, after re-reading their first entry, received written high-information feedback on it, structured according to the six stages of Gibbs’ reflective cycle (the students were not told in advance that they would receive feedback). The feedback was based on Hattie and Timperley’s (2007) feedback model and informed learners whether they had met the main criteria for effective reflection at each stage of Gibbs’ reflective cycle. Specifically, in the first step, each stage’s goal was explained again (e.g., Description: “The description of the lesson includes important features of the lesson that may be taken up later in the reflection”). This part of the feedback was identical for all learners in the feedback group. In the second step, the feedback highlighted examples from the first journal entry of at least one successful reflection aspect. Examples of feedback messages include:

Evaluation: “You have named at least one successful evaluation element (e.g., lesson plan) and established a clear reference. You have named at least one less successful evaluation element (e.g., end of the lesson) and established a clear reference.”

Conclusion: “You could improve your conclusion in the future if you always mention the (hoped-for) consequences for the students. For example, you could describe the consequences more elaborately when you write: ‘Otherwise I would simply have to draft more material to have in reserve, so that I don’t stand in front of the class afterwards not knowing what to do with them.’”

Description: “Your future descriptions will be even better if you learn to describe the learning environment in greater detail. A learning environment comprises essential factors that influence learning (e.g., learning materials, learning tasks, design of the learning situation,…).”

The feedback was prepared by the first author (university lecturer in teacher education and professional school teacher) and a tutor who had been trained as a feedback giver by the first author. To standardize the feedback as much as possible, both feedback givers used the same feedback scheme and the same text modules for all learners. The first author approved all written feedback messages before they were provided to the participants (i.e., before they were uploaded to the online-platform). The feedback was displayed such that the first journal entry remained on the left side of the screen, while the feedback for each stage of Gibbs’ reflective cycle was presented on the right side (in separate text boxes; see Figure 1). Participants in the feedback condition were required to read the feedback for at least eight minutes.

After having read the feedback, learners in the feedback group had to write their second journal entry within 17 days. The second journal entry required the exact same task with the same reflection prompts as the first journal entry. The students in the no-feedback group also had 17 days to write their second entry once they had re-read their first entry, but did not receive any feedback.

Instruments and Measures

Pretest

The pretest assessed prior conceptual knowledge about reflection and learners’ skills in analyzing other students’ reflections. Conceptual knowledge was assessed via four open questions requiring written explanations of the concept, functions, and difficulties of reflection, and of the aspects necessary for successful reflection. For example, the participants had to explain the steps to be taken when reflecting (i.e., “Explain the steps to be taken while reflecting”). Participants received one point for each correct statement. The points on the four items were summed to obtain a total score, and the mean value was then determined for conceptual knowledge about reflection. These items can be found in Appendix A.

To assess the learners’ skills in analyzing other students’ reflections, participants received a fictitious reflective journal entry from an unknown student, together with the task of identifying all its good and less good aspects and giving the unknown person feedback on the journal entry. Participants were given one point for every correct analytic statement. The points were summed to obtain the total analytic skills score. This item’s task instruction can be found in Appendix B.

Two raters independently scored the written answers of 11 randomly selected participants (approximately 20% of the sample) for each of the four items and each analytic criterion. A student research assistant and the first author, both blind to the conditions, rated the answers. Interrater reliability (intraclass correlation coefficient for a two-way mixed model with absolute agreement) was very good in both cases: ICC > .85 for conceptual knowledge about reflection and ICC > .85 for learners’ skills in analyzing other students’ reflections. Therefore, the remaining responses were rated by the student research assistant only.
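The interrater check above relies on an intraclass correlation for a two-way model with absolute agreement. The following Python sketch illustrates how the single-measure form of this coefficient (often written ICC(A,1)) can be computed from a ratings table with one row per rated answer and one column per rater; for this form the computational formula is the same under the mixed and the random two-way model. It is an illustration, not the authors’ analysis code: the function name and input layout are assumptions, and the text does not state whether single-measure or average-measure values were reported.

```python
from statistics import mean


def icc_absolute_agreement(ratings):
    """Single-measure intraclass correlation, two-way model, absolute agreement.

    ratings: list of rows, one row per rated answer (target) and one column
    per rater, e.g. [[score_rater1, score_rater2], ...].
    """
    n = len(ratings)     # number of rated answers
    k = len(ratings[0])  # number of raters
    grand = mean(x for row in ratings for x in row)
    row_means = [mean(row) for row in ratings]
    col_means = [mean(row[j] for row in ratings) for j in range(k)]

    # Sums of squares of a two-way ANOVA without replication
    ss_rows = k * sum((m - grand) ** 2 for m in row_means)  # between answers
    ss_cols = n * sum((m - grand) ** 2 for m in col_means)  # between raters
    ss_total = sum((x - grand) ** 2 for row in ratings for x in row)
    ss_error = ss_total - ss_rows - ss_cols                 # residual

    ms_rows = ss_rows / (n - 1)
    ms_cols = ss_cols / (k - 1)
    ms_error = ss_error / ((n - 1) * (k - 1))

    # Systematic rater differences (ms_cols) count against absolute agreement
    return (ms_rows - ms_error) / (
        ms_rows + (k - 1) * ms_error + k * (ms_cols - ms_error) / n
    )
```

Because absolute agreement penalizes a rater who scores consistently higher than the other, this form is stricter than a consistency ICC; two raters who give identical scores to every answer obtain a value of 1.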

Reflection quality

The learners’ reflective journal entries were analyzed in terms of the quality of their reflective processes. Specifically, the learners’ reflection quality was determined for each stage of Gibbs’ reflective cycle via a scoring protocol. For example, the criteria for the quality of the description were the extent to which the following aspects had been covered: the learning group, topic, learning environment, method, why the topic was chosen, and what the lesson’s goal was. Another example concerns the quality criteria for the analysis, in which several criteria were checked for each evaluation element (e.g., start of teaching). One criterion was that the learners should explain, in a straightforward, understandable way and based on a concrete example from their classes (e.g., “The picture used at the start of the lesson failed to motivate the students”), why the situation or action was successful or less successful. In addition, using an example, they should explain what the consequences of this situation or action were for the classroom and what meaning it had for the students. One point was given for each reflection criterion fulfilled at each Gibbs stage. The points in each stage were then summed and divided by the maximum number of points achievable at that stage. Because each stage could thus contribute at most one point, the maximum total score for a journal entry was six points.
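The stage-wise normalization described above can be sketched in a few lines of Python. This is a hypothetical illustration, not the authors’ scoring tool: the stage labels and function name are assumptions, and only the six description criteria are taken from the text; the other per-stage maxima in the usage example are placeholders.

```python
# Hypothetical labels for the six stages of Gibbs' reflective cycle
GIBBS_STAGES = ("description", "feelings", "evaluation",
                "analysis", "conclusion", "action_plans")


def reflection_quality(points, max_points):
    """Return the total reflection-quality score of one journal entry.

    points[stage]     -- number of criteria fulfilled at that stage
    max_points[stage] -- number of criteria achievable at that stage
    Each stage is normalized to the range 0..1, so the total lies in 0..6.
    """
    total = 0.0
    for stage in GIBBS_STAGES:
        achieved, maximum = points[stage], max_points[stage]
        if maximum <= 0 or not 0 <= achieved <= maximum:
            raise ValueError(f"invalid score for stage {stage!r}")
        total += achieved / maximum  # normalized stage score
    return total


# Only the six description criteria come from the text; the other maxima are placeholders
max_points = {"description": 6, "feelings": 2, "evaluation": 4,
              "analysis": 8, "conclusion": 3, "action_plans": 3}
points = {"description": 3, "feelings": 2, "evaluation": 4,
          "analysis": 2, "conclusion": 0, "action_plans": 3}
print(reflection_quality(points, max_points))  # 3.75
```

Normalizing each stage before summing gives all six stages equal weight, regardless of how many criteria a stage has.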

The first author and a research assistant coded the written journal entries of 11 participants (approximately 20%) for each stage. Interrater reliability was very good (ICC > .85). Therefore, the quality of the remaining journal entries was rated by the research assistant only.

Posttest

The posttest was identical to the pretest. Furthermore, the same scoring protocol and same raters were used (all ICCs > .85).

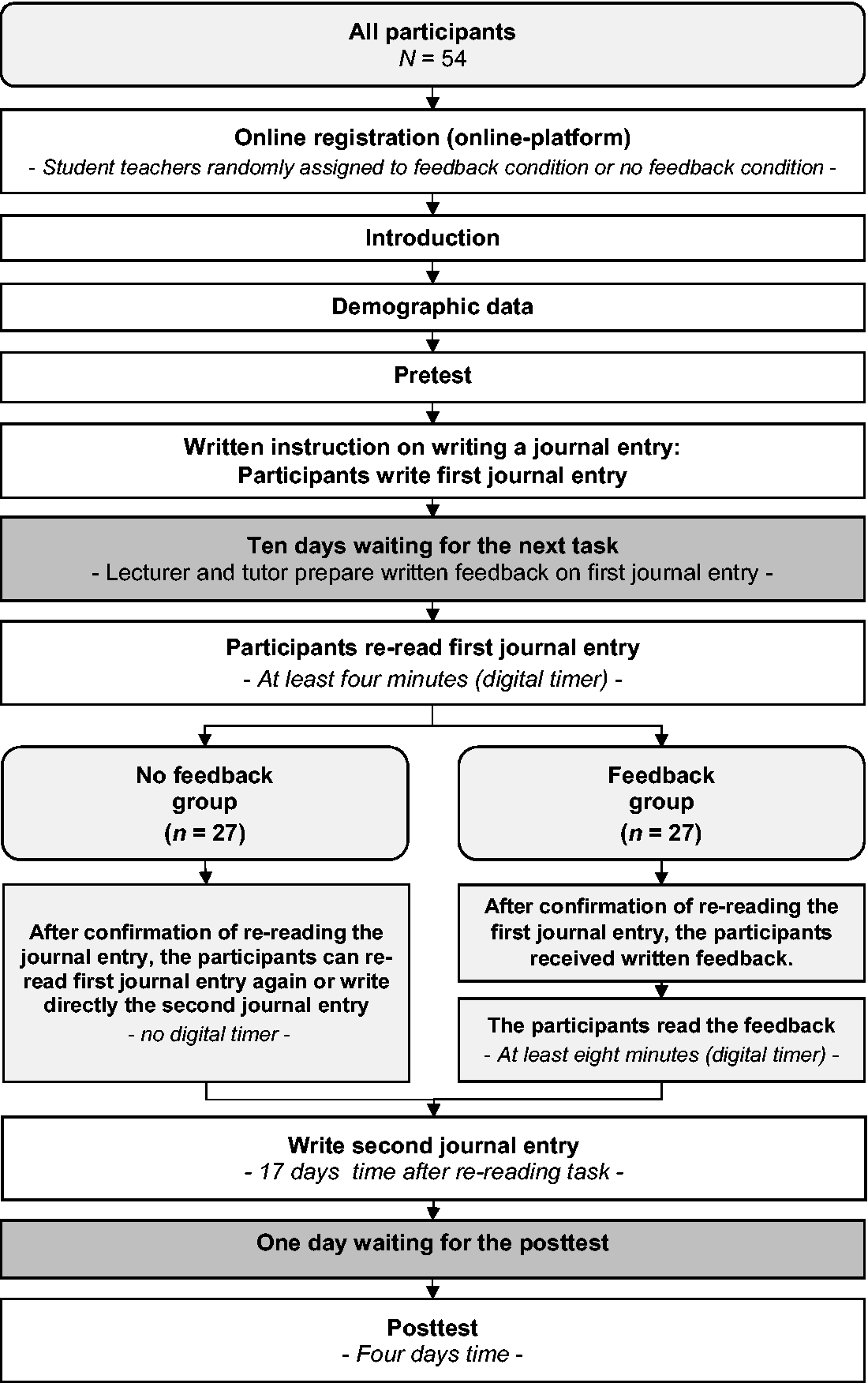

Procedure

All data were collected in written form via the online-platform, on which the participants worked individually (only technical queries were allowed via e-mail). The participants registered on the online-platform during the practical semester. After registration, the participants had seven weeks in total to complete the pretest, write two reflective journal entries, read the feedback (feedback group), and take the posttest. Figure 2 gives an overview of all tasks and times. For each subtask that was still incomplete, participants were sent three additional reminder e-mails. All participants completed all tasks.

Setup of the field experiment.

After registration, the student teachers were informed about the procedure, timeline, tasks, and goal of this study, namely to foster their reflection skills. Afterwards, demographic data were collected. Next, the learners took the pretest and then wrote their first journal entry. In both groups, the student teachers had to wait 10 days after writing their first journal entry before receiving the next task. During those 10 days, the feedback givers prepared the feedback (feedback group). In both groups, learners re-read their first journal entry for at least four minutes before writing their second journal entry. After re-reading, only the feedback group received feedback on their first journal entry, which they had to read for at least eight minutes. Then, all students were given 17 days to write their second journal entry. Afterwards, they had to wait one day and then take the posttest within four days.

Results

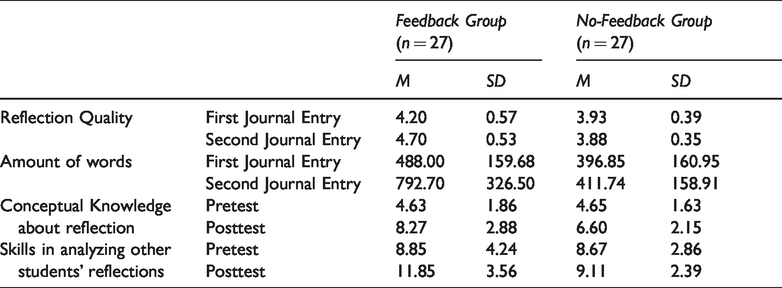

Table 3 presents the means and standard deviations for the two experimental groups on learning processes and learning outcomes. We applied an alpha-level of .05 for all analyses. For the statistical analyses, we used independent

Means and Standard Deviations for Pretest, First Reflective Journal Entry, Second Journal Entry, Posttest in Both Groups.

Preliminary Analyses

First, we analyzed whether the random assignment had resulted in comparable groups. We identified no statistically significant effects in prior conceptual knowledge about reflection,

We also analyzed whether the groups differed in the number of days between the pretest and posttest, and detected no significant differences between the feedback group (

In addition, we analyzed the number of days between the class lessons and journal entries. There were no significant differences between the feedback group (

Learning Processes

Reflection quality

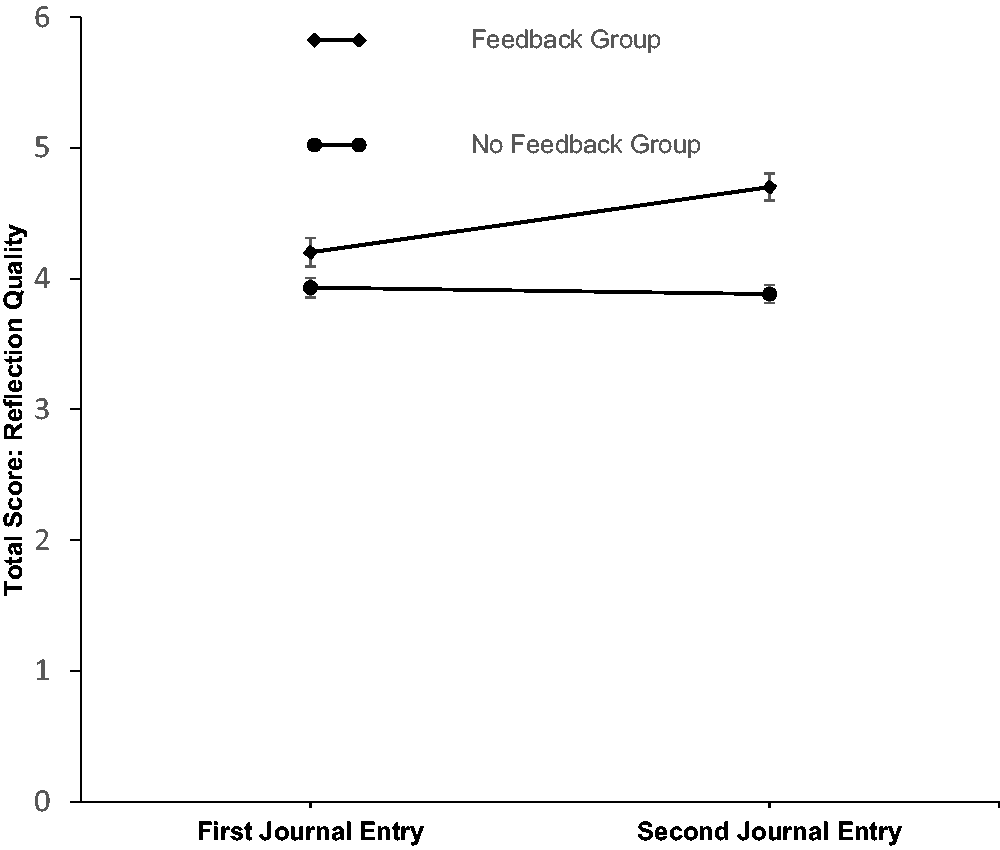

In our first hypothesis, we assumed that feedback would enhance the reflection quality evident in the reflective journal entries. A mixed repeated measures ANOVA (within factor: time: 1st vs. 2nd journal entry; between factor: feedback vs. no-feedback group) revealed a significant main effect of time,

Interaction between reflection quality according to conditions prior to feedback and measurement time. Error bars represent standard error of the mean.
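In a design with one two-level within factor (time) and one two-level between factor (group), the time × group interaction of a mixed ANOVA is equivalent to an independent-samples t-test with pooled variance on the change scores (second minus first measurement), with F = t² and df = (1, n₁ + n₂ − 2). The Python sketch below uses this equivalence to obtain the interaction F and partial eta squared. It illustrates the test described in the text and is not the authors’ analysis code; the function name, input format, and toy data are assumptions. Main effects are not covered by this shortcut, and a p-value would additionally require the F distribution (e.g., `scipy.stats.f.sf(f, 1, df_error)`).

```python
from math import sqrt
from statistics import mean, variance


def interaction_2x2_mixed(group_a, group_b):
    """Time x group interaction of a 2 (between) x 2 (within) mixed ANOVA.

    group_a, group_b: lists of (first, second) scores, one pair per participant.
    Returns (F, df_error, partial_eta_squared); the effect df is always 1.
    """
    # Change scores: second measurement minus first measurement
    gains_a = [second - first for first, second in group_a]
    gains_b = [second - first for first, second in group_b]
    n_a, n_b = len(gains_a), len(gains_b)
    df_error = n_a + n_b - 2

    # Pooled variance of the change scores (sample variances use n - 1)
    pooled = ((n_a - 1) * variance(gains_a)
              + (n_b - 1) * variance(gains_b)) / df_error
    t = (mean(gains_a) - mean(gains_b)) / sqrt(pooled * (1 / n_a + 1 / n_b))

    f = t ** 2  # the interaction F equals the squared pooled t on change scores
    partial_eta_squared = f / (f + df_error)
    return f, df_error, partial_eta_squared


# Toy data, not the study's data: change scores 2, 4, 6 vs. 1, 2, 3
feedback = [(0, 2), (0, 4), (0, 6)]
no_feedback = [(1, 2), (1, 3), (1, 4)]
f, df_error, eta = interaction_2x2_mixed(feedback, no_feedback)
# f is approximately 2.4 with df = (1, 4); eta is approximately 0.375
```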

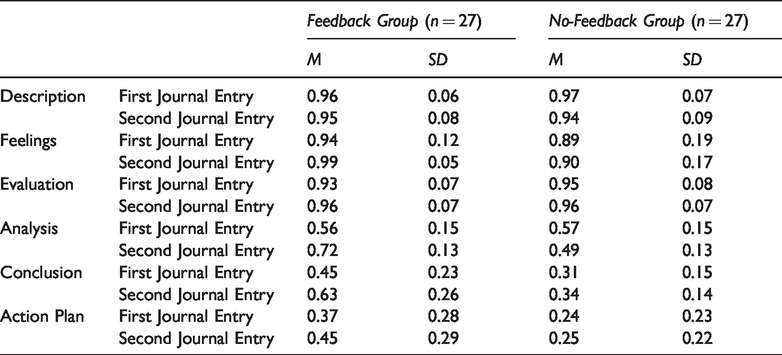

For exploratory purposes, we also analyzed whether the feedback effect was pronounced at all six stages of Gibbs’ reflection cycle (see Table 4 for descriptive statistics). For each stage of Gibbs’ reflection cycle, we conducted a mixed repeated measures ANOVA (within factor: time: 1st vs. 2nd journal entry; between factor: feedback vs. no-feedback group; Bonferroni corrected alpha-levels of .0083 [.05/6]). Focusing on the interaction of time and feedback, we noted that the feedback was most effective in the analysis stage

Means and Standard Deviations for the Reflection Quality First and Second Journal Entries.
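The corrected level of .0083 used in the stage-wise analyses follows from dividing the overall alpha of .05 by the six tests, one per stage. A minimal Python sketch of this Bonferroni step is shown below; the function names are hypothetical, and the p-values passed in would come from the six mixed ANOVAs (none of the study’s values are reproduced here).

```python
def bonferroni_alpha(alpha, n_tests):
    """Per-test level that keeps the familywise error rate at or below alpha."""
    return alpha / n_tests


def significant_after_bonferroni(p_values, alpha=0.05):
    """Return the sorted labels whose p-value is below the corrected level.

    p_values: dict mapping a label (e.g., a Gibbs stage) to the p-value of
    its time x feedback interaction test.
    """
    threshold = bonferroni_alpha(alpha, len(p_values))
    return sorted(label for label, p in p_values.items() if p < threshold)
```

With six stages, `bonferroni_alpha(0.05, 6)` is about 0.00833, which matches the .0083 reported in the text.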

Word count

For exploratory purposes, we also analyzed the word count of the journal entries. A mixed repeated measures ANOVA (within factor: time: 1st vs. 2nd journal entry; between factor: feedback vs. no-feedback group) revealed a significant main effect for time,

Learning Outcomes

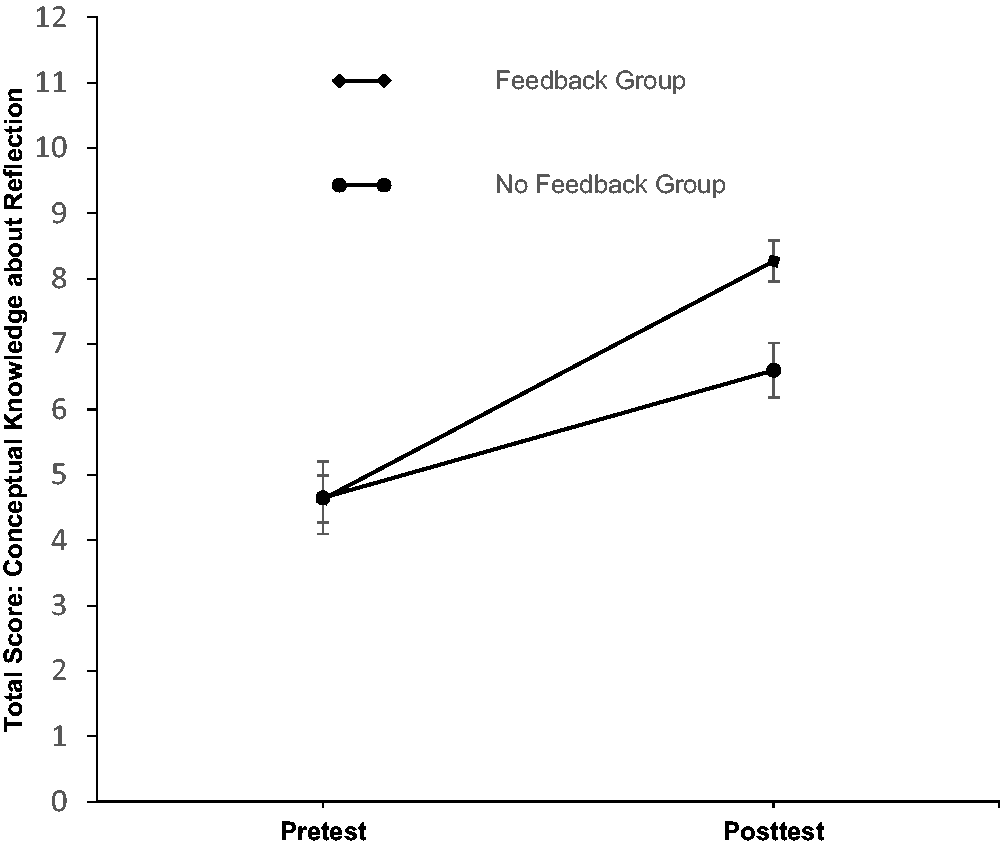

In our second hypothesis, we assumed that feedback would foster conceptual knowledge about reflection. Supporting this hypothesis, a mixed repeated measures ANOVA (within factor: pretest vs. posttest; between factor: feedback vs. no-feedback group) revealed a significant main effect for time,

Interaction between total score of conceptual knowledge about reflection according to conditions prior to feedback and measurement time. Error bars represent standard error of the mean.

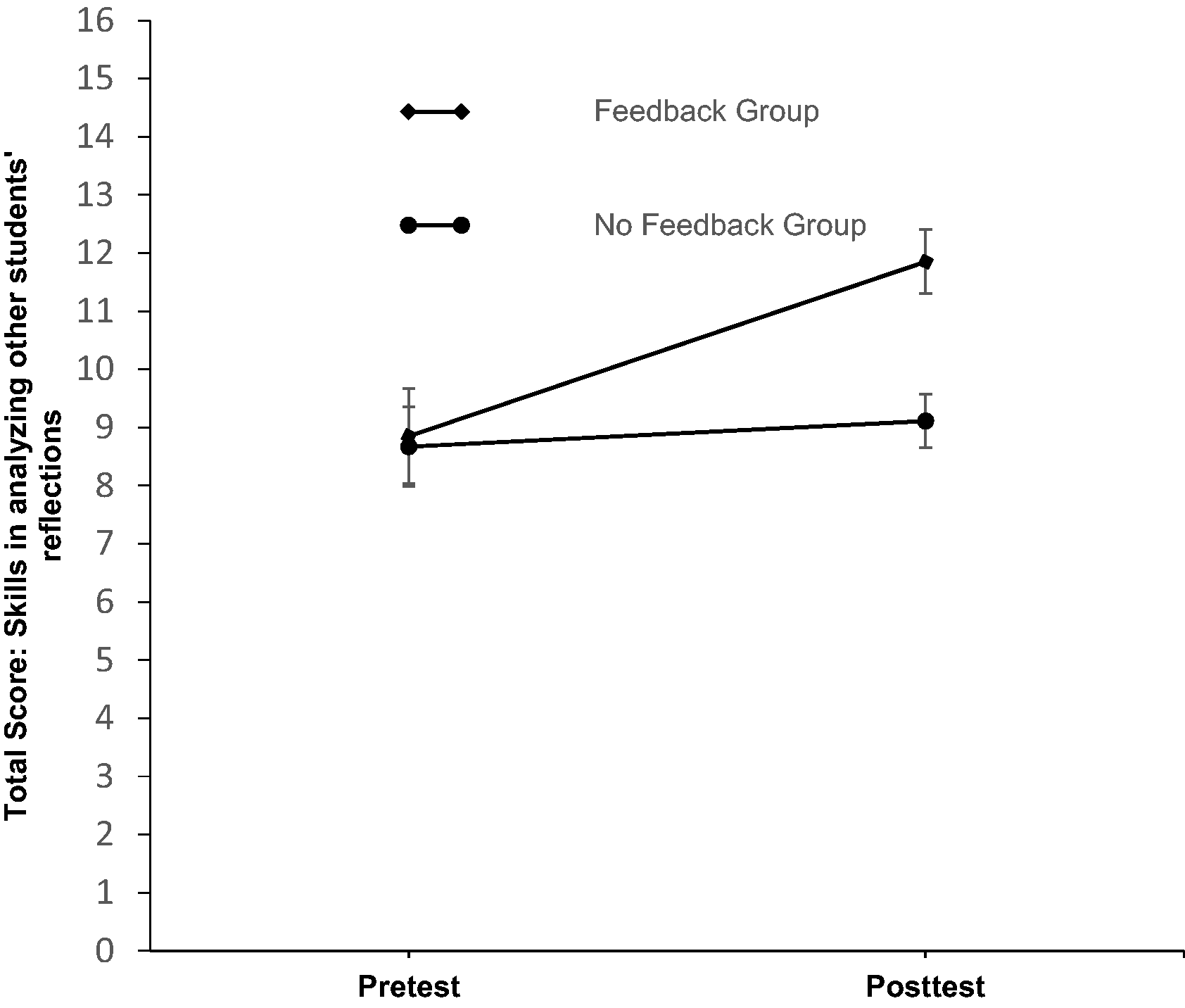

We also predicted that feedback would foster learners’ skills in analyzing the other students’ reflections (Hypothesis 3). A mixed repeated measures ANOVA (within factor: pretest vs. posttest; between factor: feedback vs. no-feedback group) revealed a significant main effect for time,

Interaction between total score of skills in analyzing other students' reflections according to conditions prior to feedback and measurement time. Error bars represent standard error of the mean.

Discussion

In summary, our field experiment showed that high-information feedback fostered student teachers’ reflection skills. In this sample, (a) high-information feedback fostered the reflection quality in a subsequent reflective journal entry, (b) high-information feedback enhanced conceptual knowledge about reflection, and (c) high-information feedback fostered skills in analyzing other students’ reflections.

Feedback Fosters Reflection Quality

The reflection quality of the second reflective journal entries increased substantially more in the feedback group than in the no-feedback group. One reason for the feedback group’s better reflection quality might be that simply receiving feedback motivated the learners (London, 2003) and helped them improve their performance (Wisniewski et al., 2020). Another reason might be the feedback guidelines of Hattie and Timperley (2007) on which we relied in this study. The feedback informed learners about the reflection goals of each stage, explained what had succeeded and what had not, and offered suggestions for improving future reflections. We assume that this feedback gave the learners a scaffold that made it easier to focus on the relevant steps of a reflection (Roelle et al., 2011). Our exploratory results also show that the feedback was most effective at the analysis stage of Gibbs’ (1988) reflective cycle. In contrast, student teachers did not benefit significantly from feedback at the description, feelings, evaluation, conclusion, and action-plan stages. A possible explanation for the similar reflection quality at these stages could be the prompts used in the reflective journals: these prompts seem to have already been sufficient to achieve a high level of reflectivity at these stages (in particular, description, feelings, and evaluation). This does not entirely apply to the conclusion and action-plan stages, which may be because learners, even with targeted feedback, perceive little benefit in translating their conclusions into concrete action plans for their future teaching.

Our results also show that the feedback group wrote significantly more words than the no-feedback group in their second journal entry. This may be because the feedback drew the learners’ attention to details missing from their first entry, so that they included more details in their second entry.

Feedback Fosters Learning Outcomes

Our results show that feedback increased conceptual knowledge about reflection more in the feedback group than in the no-feedback group. They also indicate that feedback marginally enhanced learners’ skills in analyzing other students’ reflections in the feedback group compared to the no-feedback group; however, this result should be interpreted cautiously, because although the ANOVA was significant, the Bayes factor was inconclusive (BF01 = 1.01). An explanation for these results may be the high-information feedback we designed according to Hattie (2017). This feedback included elements of conceptual knowledge about reflection and gave the learners the opportunity to engage in a deeper learning process. These findings are consistent with the results of Thomas and Thomas (2017), who showed that their feedback (i.e., including tailored message content) fostered their students’ learning outcomes (i.e., higher exam scores).

Limitations

One limitation of the present study is that we tested the feedback procedure on written reflective journal entries, submitted via an online-platform, that were designed to capture the student teachers’ genuine teaching situations. Strictly speaking, our results can therefore only be generalized to teaching situations at school in which learners reflect and receive feedback on an online-platform in teacher education.

Another limitation of this study design is that it does not allow us to distinguish the effects of general feedback from those of high-information feedback on reflection skills. In our study design, we compared only one high-information feedback condition with one no-feedback condition. Future research should analyze which feedback characteristics are needed to maximize its positive effects.

A further limitation is that we cannot determine whether the student teachers successfully applied their new reflection knowledge (e.g., action plans). Strictly speaking, our results only confirm that high-information feedback after a written reflection fosters student teachers’ reflection skills. More research is needed to determine how student teachers use the feedback on their reflections to develop action plans and implement them in future lessons. Translating reflections into teaching actions requires continuously assessing whether the reflections are accurate.

Practical Implications

Our findings show that high-information feedback provided by lecturers in the educational sciences fostered reflection skills in student teachers. Feedback is more effective for low-expertise learners than for high-expertise learners (see Roelle et al., 2011). We therefore recommend providing high-information feedback in particular when learners have difficulties applying Gibbs’ (1988) reflection steps, as this instructional measure should enhance their reflection skills. To support lecturers in giving feedback, we provide, in cooperation with lecturers in pedagogy and psychology, a learning-reflection module. This module contains practical materials such as effective reflection prompts and feedback modules on the online-platform “PortaBle” (Das Portal zur Bielefelder Lehrer_innenbildung [The portal for teacher education in Bielefeld]), which is available to everyone involved in teacher education. In this way, we strive to establish such feedback procedures for fostering reflection skills as a standard support measure in our teacher-education curriculum. This would enable student teachers who receive high-information feedback to retain their conceptual knowledge about reflection, develop stronger reflection skills, and benefit from them over the long term.

In the long run, we plan a scaffolding-and-fading procedure (see Vygotsky, 1978) that starts with intensive feedback at the beginning of the learning process and gradually reduces it. Elements of student-to-student feedback, which was the most effective feedback direction in the meta-analysis by Wisniewski et al. (2020), could also be incorporated into this feedback procedure.

Supplemental Material

sj-zip-1-plj-10.1177_1475725720966190 - Supplemental material for Feedback in Reflective Journals Fosters Reflection Skills of Student Teachers

Supplemental material, sj-zip-1-plj-10.1177_1475725720966190 for Feedback in Reflective Journals Fosters Reflection Skills of Student Teachers by Martin Pieper, Julian Roelle, Rudolf vom Hofe, Alexander Salle and Kirsten Berthold in Psychology Learning & Teaching

Research Data

sj-zip-2-plj-10.1177_1475725720966190 - Supplemental material for Feedback in Reflective Journals Fosters Reflection Skills of Student Teachers

Supplemental material, sj-zip-2-plj-10.1177_1475725720966190 for Feedback in Reflective Journals Fosters Reflection Skills of Student Teachers by Martin Pieper, Julian Roelle, Rudolf vom Hofe, Alexander Salle and Kirsten Berthold in Psychology Learning & Teaching

Footnotes

Acknowledgements

We would like to thank the following student research assistants: Pia Prömper for her assistance in conducting the experiment, preparing feedback, and coding the qualitative data; Paula Scheibe for her assistance in conducting the experiment and coding the data on the quality of the reflective journal entries; Maren Averbeck for her assistance in coding the data on skills in analyzing other students’ reflections; Johanna Freese for her assistance in coding the data on conceptual knowledge about reflection; and Paul Baal for his support in programming the online-based learning environment. We also thank our native English-speaking copy editor Carole Cürten for proofreading our manuscript. Finally, we would like to thank all students who took part in our experiment as well as all the lecturers who supported our field experiment at Bielefeld University and Bochum University.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: The research in the article was funded by the “Bundesministerium für Bildung und Forschung, BMBF [Federal Ministry of Education and Research]” under project number 01JA1908.

Supplemental material

Supplemental material for this article is available online.

Note

Author Biographies

References
