Abstract

Many students use ineffective learning strategies. They tend to start too late and learn superficially, without integrating different parts of the study materials. To help students in Psychological Assessment in Youth overcome these problems, we designed online study-aids that spread their learning over the semester (distributed practice) and provided them with self-test questions (practice testing). Each study-aid covered the previous week’s course readings and consisted of 10 to 15 questions presented in several stimulating closed formats (e.g., connecting one theory with another, or filling in norm scores on a bell curve). Participation was voluntary and promoted through an incentive system. The study-aids were evaluated in two cohorts of students (2018: N = 94; 2019: N = 84). Participation was good: on average, 79% of the students completed each study-aid (range 69–85%). Satisfaction was high: most students (89%) indicated that the study-aids supported their studies well. Exam performance improved significantly after the study-aids were introduced (comparison cohort 2017: N = 69), although more so for the midterm exam (r = 0.47) than for the final exam (r = 0.17). These findings suggest that online study-aids can stimulate effective learning by helping students distribute their learning and test themselves.

Introduction

Many students use ineffective learning strategies when they study course materials on their own (Dunlosky et al., 2013). Psychology students are no exception (Gurung, 2005; Gurung et al., 2010; Hartwig & Dunlosky, 2012; Karpicke et al., 2009). For example, they tend to cram shortly before the exam, which results in poorer long-term retention of the material (Dunlosky et al., 2013). A major challenge for teachers in higher education is therefore to stimulate students to use effective learning strategies and to abandon less effective ones. This study presents one possible tool to attain this goal: online study-aids.

What are effective learning strategies? In an influential review study, Dunlosky and colleagues (2013) assessed the utility of 10 common learning strategies by reviewing whether these strategies lead to positive learning outcomes that were robust and generalizable (i.e., across different ages, settings, and types of materials to be learned). They found that frequently used strategies such as summarization, highlighting, and rereading actually had low utility. Only two learning strategies had high utility: distributed practice and practice testing.

Distributed practice (also known as scheduling or spacing) refers to spreading learning episodes over time rather than studying all material at once. Distributed practice is thought to improve learning because students encounter the material several times, each time retrieving information learned before. Such retrieval enhances durable storage in memory (Dunlosky et al., 2013). Yet although psychology students may be aware that this strategy benefits learning, many of them still mass their learning, often right before the exam (Hartwig & Dunlosky, 2012).

Practice testing (also known as retrieval practice) refers to self-testing or taking practice tests on the reading material (Dunlosky et al., 2013). It enhances retention of the tested information (i.e., the testing effect). This learning strategy is thought to be effective for several reasons (Roediger III & Karpicke, 2006). First, students need to retrieve information from memory, which facilitates retrieval on future occasions. Second, practice testing provides students with metacognitive knowledge of what they know and which topics need further study. Third, practice testing may also facilitate retention of new information (i.e., the forward testing effect) because it prepares students for learning and gives them cues on how to learn (Yang et al., 2018). Practice testing is even more effective when it is accompanied by feedback that includes the correct answer. When students give incorrect responses on a practice test, feedback helps them identify misunderstandings and prevents them from persisting in these errors (Dunlosky et al., 2013; Schwieren et al., 2017). Unfortunately, only a minority of psychology students use practice testing as a learning strategy (Gurung, 2005; Karpicke et al., 2009).

How can teachers in higher education encourage students to use effective learning strategies? Distributed practice can be promoted through the organization of the course. For instance, one study showed that educational psychology students accessed online readings continuously during the semester when they had to hand in assignments throughout the course, whereas in courses with only one final exam, many students did not access the reading materials until the final quarter of the semester (Barenberg et al., 2018).

Practice testing can be promoted by providing practice tests as part of the course, rather than leaving it to the students to find ways to test themselves. For instance, in one study, students only received credit for a quiz if they passed it prior to the first class session of that week. On average, 64% of the students completed each quiz, suggesting that students are willing to use practice testing if they are stimulated by their teachers (Johnson & Kiviniemi, 2009). A recent meta-analysis identified 19 studies in which teachers applied practice testing in their psychology courses and found an overall positive effect on learning outcomes (Schwieren et al., 2017).

In sum, teachers may enhance their students’ learning outcomes by designing learning activities that stimulate distributed practice and practice testing. This article describes the development of online study-aids that aim to do just that. We aimed to help students spread their learning, by offering several study-aids across the semester, and test their knowledge, by providing practice questions. Practice testing had positive effects on learning outcomes in some, but not all, earlier studies, suggesting that its effectiveness may depend on how it is implemented in a course (Schwieren et al., 2017). Therefore, we took several measures to motivate students to use our study-aids. First, we introduced each question by explaining the relevance of this knowledge for students’ future jobs as youth psychologists. Providing students with a meaningful rationale shows them why a learning activity is useful, which enhances their learning efforts (Niemiec & Ryan, 2009). Second, we used an incentive system to promote participation. We used small rewards (i.e., increases in exam grades) that were explicitly tied to specific performance criteria, a reward procedure known to increase students’ motivation (Cameron, 2001). Third, we provided students with immediate feedback. In a recent study, practice testing increased motivation only in students who received feedback, perhaps because feedback enhances students’ self-efficacy by making their learning process transparent (Abel & Bäuml, 2020). Below, we describe the teaching context and the development and implementation of the online study-aids. We then evaluate the effectiveness of this teaching innovation by assessing student participation, satisfaction, and performance in two cohorts of students, comparing them with a cohort that took the course before this innovation.

Teaching Context and Approach

The study-aids were developed for the second-year bachelor course Psychological Assessment in Youth at Utrecht University, The Netherlands. This 10-week half-time course is part of the Bachelor of Science in Psychology program. It is compulsory for students who want to enroll in the Clinical Child and Adolescent Psychology Master program and has about 80 students each year. The intended learning outcomes of the course are that students can (a) translate their scientific knowledge of normal development and developmental psychopathology into psychological assessment of children and adolescents, and (b) select appropriate assessment instruments, use them correctly, and use assessment outcomes to guide their clinical decisions.

Before we introduced the study-aids in 2018, the course consisted of weekly two-hour lectures and weekly four-hour seminars in groups of about 20 students. Lecturers discussed common etiological factors in the development and maintenance of childhood psychopathology (i.e., intelligence, social-emotional factors, school environment and teacher–student interaction, family environment and parenting, and neuropsychological factors) and described how these factors may be assessed. In the seminars, students practiced using tests, questionnaires, and observations, went through the full diagnostic and decision-making process for one case study, and learned to write a case report. In between meetings, students studied the course readings on their own (i.e., about 550 pages on assessment, etiology, and specific instruments), without any further support from the teachers.

In 2018, we replaced the lectures with online study-aids. We had noticed that many students did not study the assigned readings before each seminar, but instead started studying all readings at once shortly before the exams. This had several disadvantages. First, many students could not profit optimally from the seminar exercises, which were designed to build on and extend the course readings (e.g., discussing how the scales of an instrument relate to theory). Second, precious seminar time was spent answering questions on topics that students could have learned about in the course readings. Third, many students failed the exam. The lectures did not prevent these issues. Lecture attendance tended to be low (i.e., fewer than 50% of the students), and several exam questions on issues that had been discussed extensively in the lectures were answered incorrectly by a majority of the students. Clearly, we needed to design a new learning activity that would stimulate students to absorb the required knowledge in time and to develop insight into the course readings, to better prepare them for their exams and their future work as psychologists.

Materials: Development and Implementation

Development of the Online Study-Aids

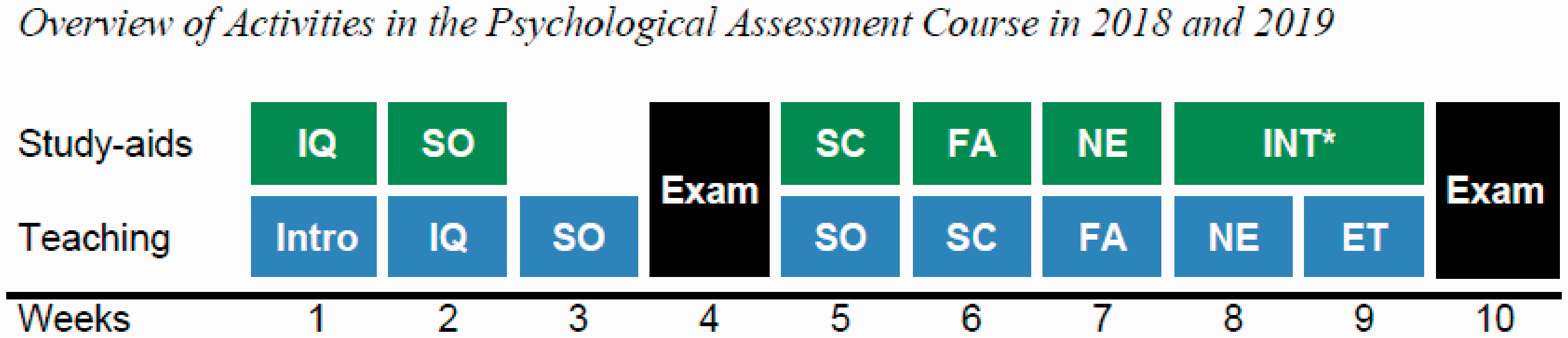

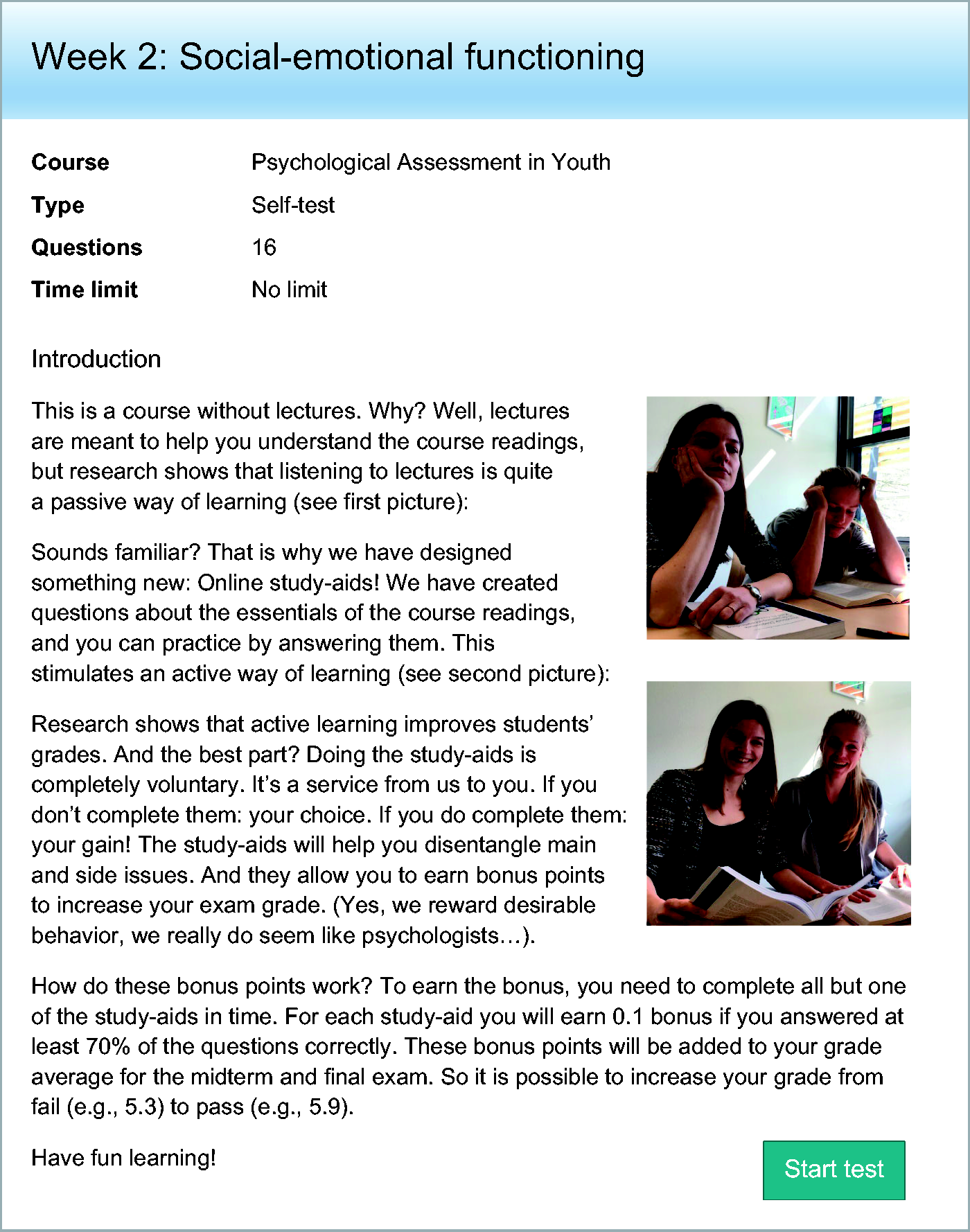

In 2018, we decided to drop the lectures and instead provide students with practice questions assessing their knowledge of the past week’s course readings, which we presented using the online assessment tool RemindoTest (Paragin, n.d.). We called these questions “study-aids” to communicate to students that they were meant to assist their learning. Each study-aid consisted of 10–15 questions. We developed five study-aids in 2018 and added a sixth in 2019. We distributed the study-aids across the course to stimulate students’ timely preparation for their seminars and exams (see Figure 1). Each study-aid began with an introduction text explaining our rationale for using the study-aids (see Figure 2).

Figure 1. Overview of Activities in the Psychological Assessment Course in 2018 and 2019.

Figure 2. Introduction Text Explaining the Rationale of the Study-Aids.

We developed the questions through careful discussion and revision, aiming to (a) provide structure by highlighting the most important concepts in the reading material, and (b) help students integrate different parts of the texts. For each topic previously discussed in the lectures (i.e., intelligence, social-emotional factors, etc.; see Teaching Context and Approach above), we developed questions reflecting the essential knowledge students needed. For example, one key learning goal was that students understand that each intelligence test assesses different facets of intelligence, so we developed a question asking students to indicate which test assesses which facets of intelligence.

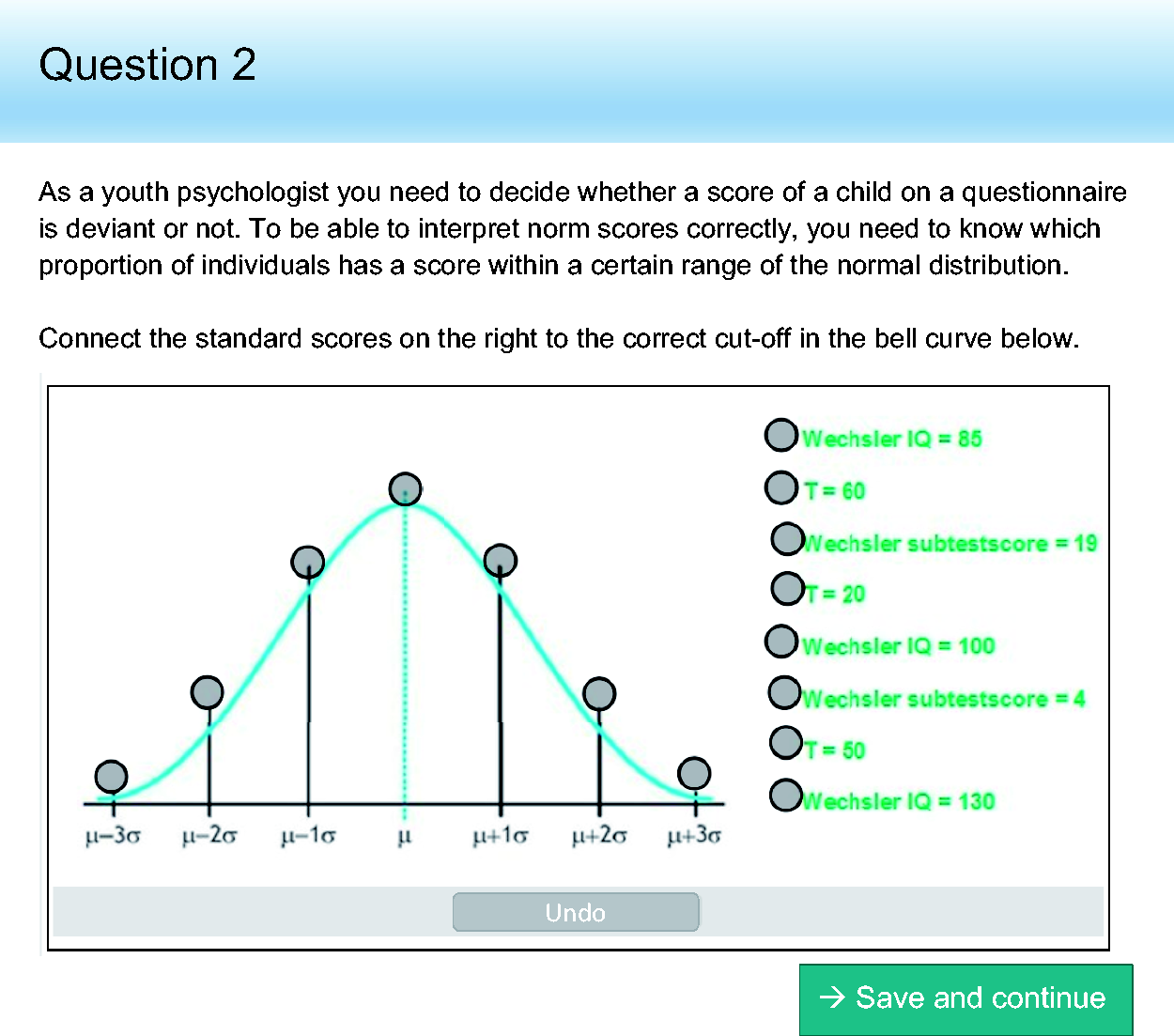

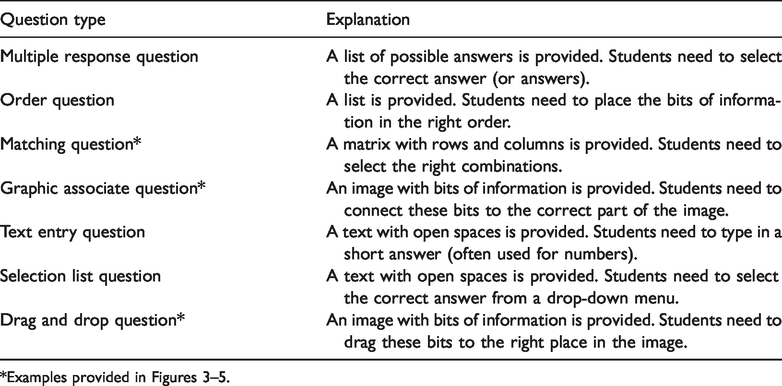

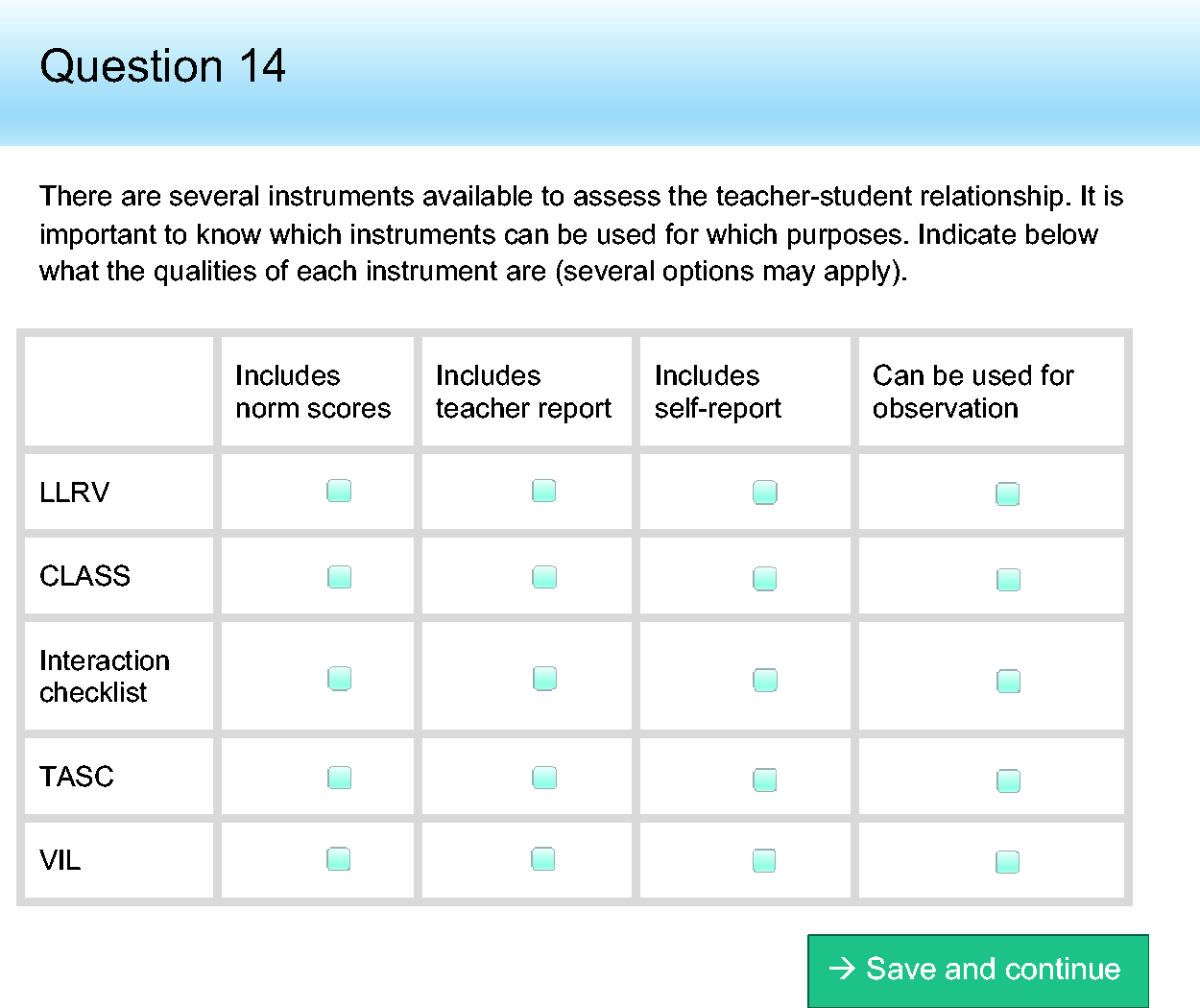

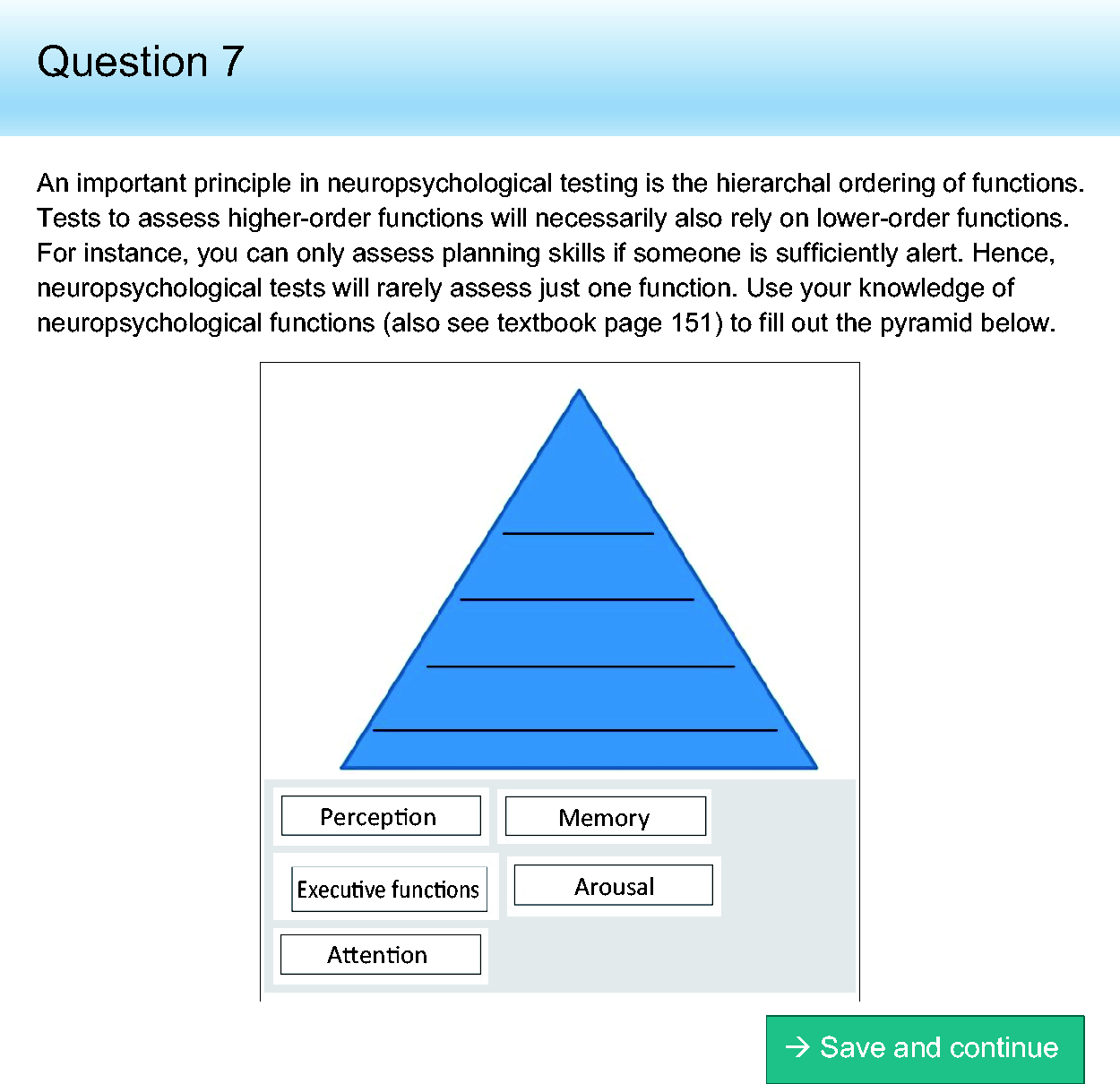

Each question was introduced with an explanation of its relevance. For instance, the bell curve question (see Figure 3) was introduced as follows: “As a youth psychologist you need to decide whether a score of a child on a questionnaire is deviant or not. To be able to interpret norm scores correctly, you need to know which proportion of individuals has a score within a certain range of the normal distribution.” All questions were in closed format, so that students could receive immediate feedback upon completing the study-aids. RemindoTest includes several stimulating closed-format question types, which may help students visualize connections and make learning more fun (see Table 1 for an overview and Figures 3–5 for examples).
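The bell curve question asks students to link norm scores to proportions of the population. Purely as an illustration, the Python sketch below computes such proportions from the normal cumulative distribution function. The T-score metric (mean 50, SD 10) and the helper name `proportion_between` are hypothetical choices for this example; they are not taken from the actual study-aid.

```python
from math import erf, sqrt


def proportion_between(low: float, high: float, mean: float = 0.0, sd: float = 1.0) -> float:
    """Proportion of a normally distributed population scoring between low and high.

    Hypothetical helper for illustration; the norm metric is an assumption.
    """
    def cdf(x: float) -> float:
        # Normal CDF expressed through the error function
        z = (x - mean) / sd
        return 0.5 * (1.0 + erf(z / sqrt(2.0)))

    return cdf(high) - cdf(low)


# Assumed example metric: T-scores with mean 50 and SD 10
within_1_sd = proportion_between(40, 60, mean=50, sd=10)          # about 0.683
above_2_sd = proportion_between(70, float("inf"), mean=50, sd=10)  # about 0.023
```

Under these assumptions, about 68% of children score within one SD of the mean (T = 40 to 60), and about 2.3% score more than two SDs above it (T > 70).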

Figure 3. Example of a Graphic Associate Question.

Table 1. Closed-Format Question Types Used in the Online Study-Aids.

Figure 4. Example of a Matching Question.

Figure 5. Example of a Drag and Drop Question.

Implementation in the Course

The study-aids were implemented to stimulate students’ use of two effective learning strategies: distributed practice and practice testing (Dunlosky et al., 2013). To stimulate distributed practice, each study-aid was available online only during the week before the seminar on that specific topic, which required students to spread their learning activities over the semester. To stimulate practice testing, students received immediate automated feedback after completing each study-aid, which helped them assess their current knowledge level and knowledge gaps. If students’ answers were incorrect, the automated feedback referred them to the sections and pages of the reading materials that required more attention, thus helping them prepare for their seminars and exams. Throughout the semester, students could access the questions, their responses, and the feedback for each study-aid they had completed.

Participation was voluntary and promoted using an incentive system (see Figure 2). Students received a bonus (i.e., a small increase in their exam grade) for each study-aid completed with at least 70% correct responses, but only if they completed at least four out of five study-aids (or five out of six in 2019). Students always received praise for completing a study-aid, even if they did not manage to get 70% correct. In that case, the study-aid result read: “Less than 70% of your responses were correct. This means that you will not receive a bonus this time. However, the most important outcome is that you have actively practiced with the course materials. Well done!”
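The bonus rule combines a per-study-aid score threshold with a participation requirement. A minimal Python sketch of that logic follows. It assumes that scores are recorded as proportions correct, with `None` for a study-aid that was not completed, and it generalizes the “four of five” and “five of six” requirements as “all but one.” The function name is hypothetical, and the sketch only counts qualifying study-aids because the size of each bonus is not specified beyond “a small increase.”

```python
from typing import Optional, Sequence


def bonus_count(scores: Sequence[Optional[float]], threshold: float = 0.70) -> int:
    """Count the study-aids that earn a bonus under the rule described above.

    scores: proportion correct per available study-aid (None = not completed).
    Hypothetical helper; the bonus amount per study-aid is not modeled.
    """
    completed = [s for s in scores if s is not None]
    # Eligibility: at most one study-aid may be skipped
    # (4 of 5 in 2018, 5 of 6 in 2019).
    if len(completed) < len(scores) - 1:
        return 0
    # Each completed study-aid with at least 70% correct earns a bonus.
    return sum(1 for s in completed if s >= threshold)


print(bonus_count([0.80, 0.65, None, 0.90, 0.75]))  # 4 of 5 completed: 3 bonuses
print(bonus_count([0.80, None, None, 0.90, 0.75]))  # only 3 of 5 completed: 0
```

Praise was given for every completed study-aid regardless of score, so praise is not a condition in this sketch.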

Evaluation

The study-aids were introduced in 2018 (N = 94 students) and used again in 2019 (N = 84 students). We evaluated their effectiveness in terms of student participation, satisfaction, and performance. To evaluate whether performance increased after the study-aids were implemented, we compared the exam results of the 2018 and 2019 cohorts with those of the 2017 cohort (N = 69). Course content and structure were the same for all cohorts, except that the 2018 and 2019 cohorts received online study-aids instead of lectures. Course dropout was low: only two students quit after the midterm exam in 2017 (2.8%), two in 2018 (2.1%), and one in 2019 (1.1%).

Student Participation

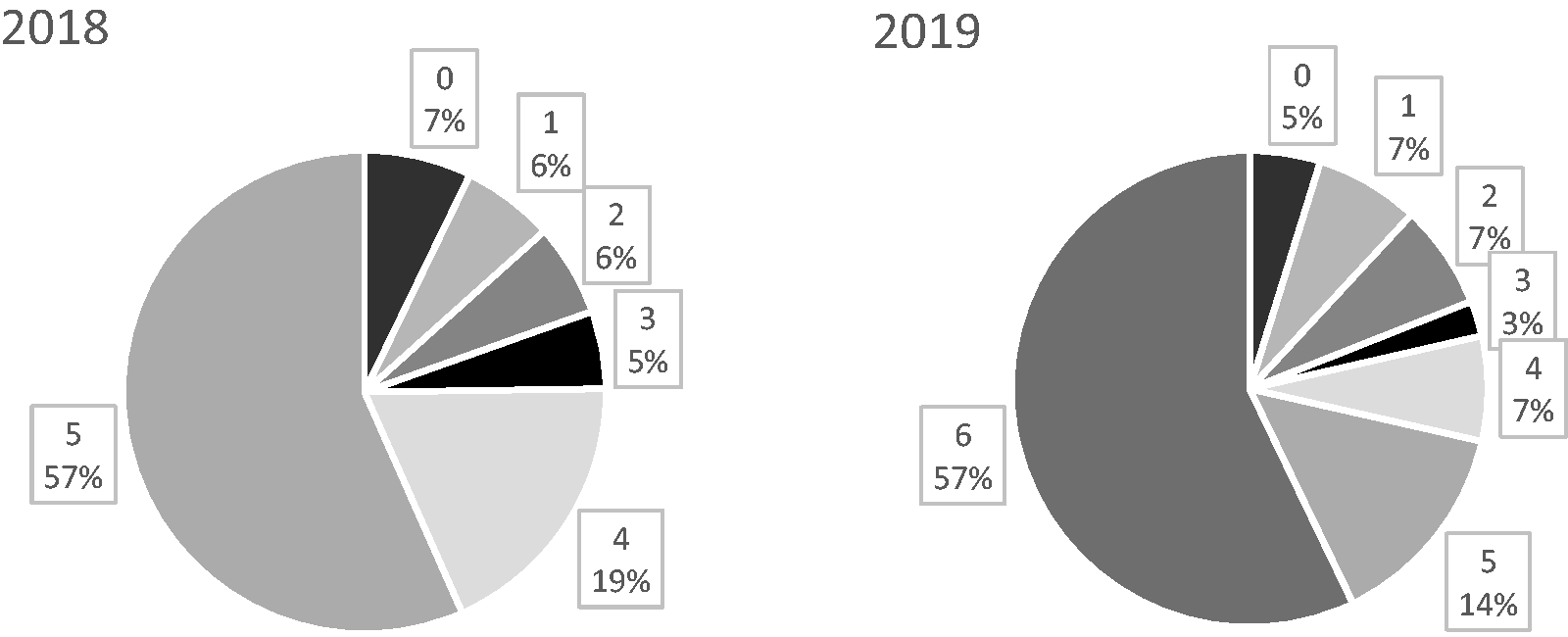

The first important question is how many students actually worked on the study-aids, given that participation was voluntary. Each study-aid was completed by a majority of the students (between 69% and 85%; mean participation rate 79%). Moreover, most students chose to complete all available study-aids (see Figure 6). The second-largest group consisted of students who completed all but one study-aid, the minimum number required to obtain a bonus.

Figure 6. Percentages of Students Who Completed 0 to 6 Study-Aids During the Course.

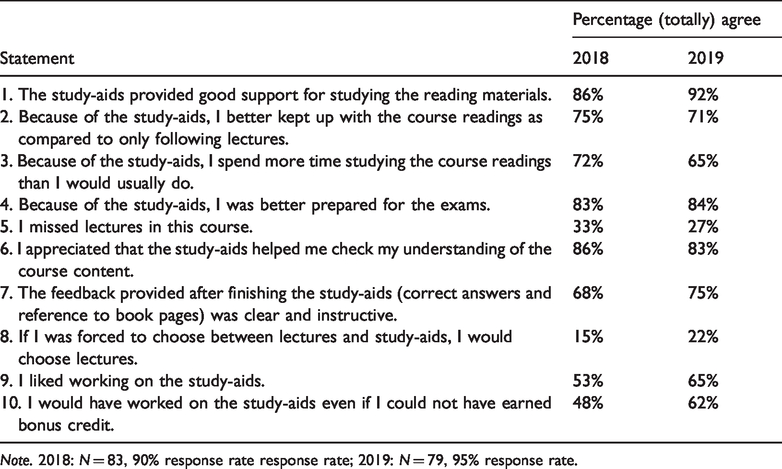

Student Satisfaction

Next, we investigated how satisfied students were with the study-aids. Student satisfaction was measured using several statements added to the standard student course evaluation (see Table 2). The response rate for this evaluation was 92% in 2018 and 96% in 2019. Students rated their agreement with each statement on a 5-point Likert scale (1 = totally disagree, 5 = totally agree). Table 2 presents the percentage of students agreeing with each statement (i.e., scoring 4 or 5). Satisfaction with the study-aids was high. Most students indicated that the study-aids provided good support while studying, and few indicated that they preferred lectures over study-aids.

Table 2. Percentage of Students Agreeing with Study-Aid Evaluation Statements in the Student Course Evaluation Questionnaire.

Note. 2018: N = 83, 90% response rate; 2019: N = 79, 95% response rate.

Student Performance

To evaluate whether performance increased after the study-aids were implemented, we compared exam grades of the 2018 and 2019 cohorts with those of the 2017 cohort, which still received the lecture-based version of the course. Each year, the course included two 40-question multiple-choice exams, administered near the middle of the semester (midterm exam) and at the end of the semester (final exam). Each exam contributed 25% to the final course grade. In the Dutch grading system, grades range from 1 to 10, with 5.5 or higher being a passing grade. The bonus points earned by completing the study-aids were excluded from these analyses. Students who were absent on the day of the midterm exam (zero, four, and three students in 2017, 2018, and 2019, respectively) took a substitute test at a later date and were not included in the analyses comparing performance across cohorts. No students were absent from the final exam.

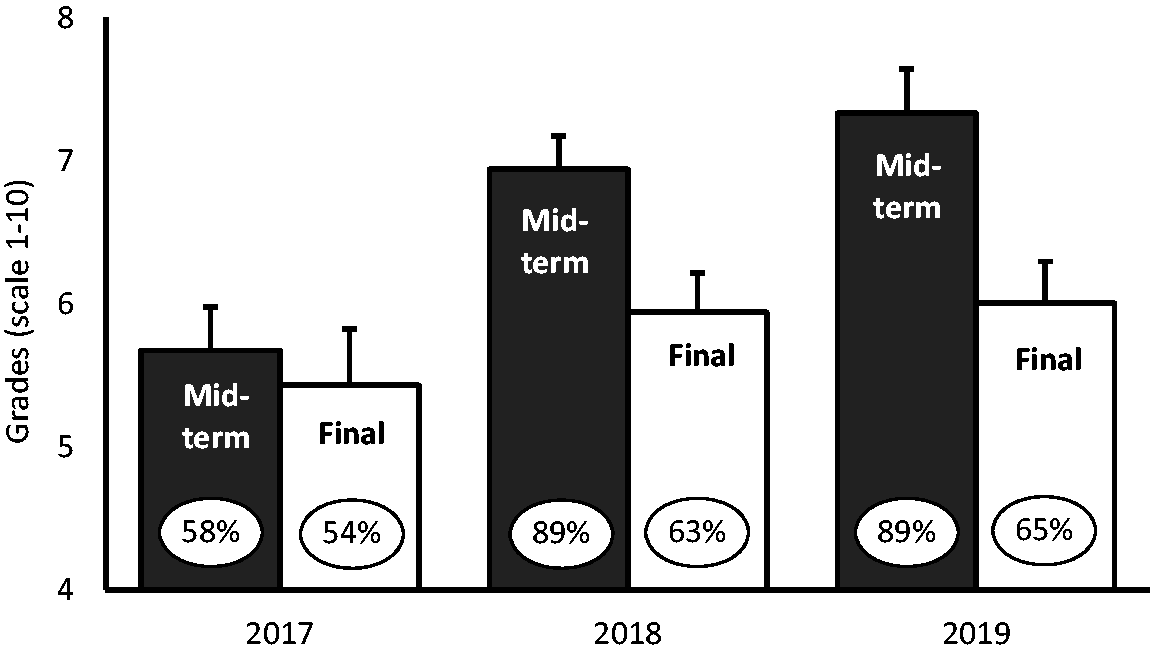

We compared exam grades between cohorts using two one-way between-subjects ANOVAs, one for the midterm and one for the final exam. There was a significant effect of cohort on both midterm and final exam performance, F(2, 237) = 34.66, p < 0.001, ω² = 0.22, and F(2, 239) = 3.54, p = 0.031, ω² = 0.02, respectively. Next, we probed these effects using planned contrasts. Performance on the midterm exam increased substantially after the study-aids were introduced: on average, students in 2018/2019 performed better than students in 2017, t(237) = 8.13, p < 0.001, r = 0.47 (see Figure 7). The increase on the final exam between 2017 and 2018/2019 was also significant, but smaller, t(239) = 2.65, p = 0.009, r = 0.17. Last, we tested whether exam performance increased between 2018 and 2019, possibly as a result of introducing a sixth study-aid covering course material tested in the final exam. Although we found a small performance increase between the 2018 and 2019 cohorts on the midterm exam, t(237) = 2.03, p = 0.044, r = 0.13, we found no significant difference for the final exam, t(239) = 0.291, p = 0.772, r = 0.02.
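The text does not state which formulas were used for the effect sizes. The sketch below assumes the conventional conversions r = √(t² / (t² + df)) for the planned contrasts and ω² = df_b(F − 1) / (df_b(F − 1) + N) for the one-way ANOVAs. It takes N = 240 for the midterm (69 + 90 + 81 students) and N = 242 for the final exam (67 + 92 + 83), which follows from the cohort sizes, absences, and dropouts above and matches the reported degrees of freedom. Under these assumptions, the reported values are recovered to two decimals. The function names are hypothetical.

```python
from math import sqrt


def r_from_t(t: float, df: int) -> float:
    """Effect size r for a planned contrast: r = sqrt(t^2 / (t^2 + df))."""
    return sqrt(t ** 2 / (t ** 2 + df))


def omega_squared(f: float, df_between: int, df_within: int) -> float:
    """Omega squared for a one-way between-subjects ANOVA, computed from F.

    Uses N = df_between + df_within + 1 as the total sample size.
    """
    n_total = df_between + df_within + 1
    effect = df_between * (f - 1)
    return effect / (effect + n_total)


# Statistics reported for the cohort comparison
print(round(omega_squared(34.66, 2, 237), 2))  # midterm ANOVA -> 0.22
print(round(omega_squared(3.54, 2, 239), 2))   # final ANOVA -> 0.02
print(round(r_from_t(8.13, 237), 2))           # midterm, 2017 vs 2018/2019 -> 0.47
print(round(r_from_t(2.65, 239), 2))           # final, 2017 vs 2018/2019 -> 0.17
print(round(r_from_t(2.03, 237), 2))           # midterm, 2018 vs 2019 -> 0.13
print(round(r_from_t(0.291, 239), 2))          # final, 2018 vs 2019 -> 0.02
```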

Figure 7. Grade Average and Percentage Passed (%) for the Midterm and Final Exam Before (2017) and After (2018–2019) Introducing the Online Study-Aids.

Conclusions

This study suggests that weekly online study-aids can stimulate effective learning. Most students completed the study-aids: the average participation rate was 79%. This is much higher than attendance at the face-to-face lectures that used to be part of the course (fewer than 50% of the students). Almost all students were very positive about the study-aids, and most preferred them over lectures. Most importantly, students’ exam performance increased after the study-aids were introduced, suggesting that students actually learned more during the course.

One finding was unexpected: students’ performance on the final exam improved less than their performance on the midterm exam. One explanation may be that, in 2018, some course readings tested in the final exam were not covered by the study-aids. We therefore developed a sixth study-aid in 2019 to cover those readings. However, this did not improve student performance on the final exam. We now favor an alternative explanation: students may have strategically put less effort into our course’s final exam to focus on the final exams of other courses (which they could afford after performing well on the midterm exam). This suggests that study-aids are most effective when students additionally invest in preparing for their exams.

Why were the online study-aids effective? We think that the study-aids stimulated students to use effective learning strategies (Dunlosky et al., 2013). Instead of cramming all course readings shortly before the exam, students spread their study activities across the semester (i.e., distributed practice). Moreover, students could practice with self-test questions and received computer-based feedback (i.e., practice testing), which may have enhanced their retrieval, helped them identify their knowledge gaps, showed them how to learn, and warmed them up for the exams (Roediger III & Karpicke, 2006; Yang et al., 2018). The study-aids also provided informative cues for distinguishing main issues from side issues, and the questions were developed to address the core content and learning goals of the course.

Another possible reason for the study-aids’ effectiveness is that we developed them to motivate students, enhancing the chances that they would actually use them. We provided the students with meaningful rationales explaining why youth psychologists should know the answer to each particular question, gave them the opportunity to earn rewards, and provided them with instant, automated feedback. These practices are thought to enhance students’ feelings of competence and autonomy, increasing their motivation (Niemiec & Ryan, 2009). The high participation rates suggest that the incentive system was successful. A last possible reason for the study-aids’ effectiveness is that the format of our questions stimulated students to think about the content, make connections, and actively engage with the material. Indeed, such active ways of learning enhance memory as compared with passive reception (Markant et al., 2016). Thus, our study-aids combined many features that may have contributed to their success.

We chose to implement the study-aids using an online platform, RemindoTest (Paragin, n.d.). We think that this contributed substantially to the success of the study-aids, for both students and teachers. By using RemindoTest rather than traditional multiple-choice quizzes or open-ended questions, we could implement practices that may enhance students’ learning and motivation (such as varied, engaging question formats, immediate online feedback, and instant incentives). Moreover, online study-aids may save teachers time. Admittedly, programming the questions in RemindoTest is quite time-consuming. Compared with traditional open-ended questions, however, providing feedback and assigning credit is much more efficient. If used as a replacement for face-to-face lectures, the study-aids may even reduce teachers’ overall workload, especially when they are reused in the years after their initial introduction.

Although our findings and experience with the study-aids in our course were positive, we want to stress that the small scale and design of this study do not allow firm conclusions about the effectiveness of online study-aids in psychology courses in general. We did not use a randomized design and therefore cannot rule out that our effects are partly driven by cohort differences. Further, we do not know how our study-aids affect students’ future learning behavior and motivation (although, anecdotally, some students remarked that the study-aids inspired them to start studying differently). We hope that this study will inspire teachers to reconsider the design of their courses (and to assess the effectiveness of their changes). Study-aids may be an effective and efficient way to stimulate active learning in our students, the psychologists of the future.


Author Note

Anouk van Dijk is now at the Research Institute for Child Development and Education, University of Amsterdam.

We would like to thank Oscar Buma and Jurgen van Oostenrijk for their technical assistance with RemindoTest. We have no known conflict of interest to disclose.

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.