Abstract

Technological advances have given us tools—Google, in particular—that can both augment and free up our cognitive resources. Research has demonstrated, however, that some cognitive costs may arise from our reliance on such external memories. We examined whether pretesting—asking participants to solve a problem before consulting Google for needed information—can enhance participants’ subsequent recall for the searched-for content as well as for relevant information previously studied. Two groups of participants, one with no programming knowledge and one with some programming knowledge, learned several fundamental programming concepts in the context of a problem-solving task. On a later multiple-choice test with transfer questions, participants who attempted the task before consulting Google for help out-performed participants who were allowed to search Google right away. The benefit of attempting to solve the problem before googling appeared larger with some degree of programming experience, consistent with the notion that some prior knowledge can help learners integrate new information in ways that benefit its learning as well as that of previously studied related information.

Introduction

The Internet has opened a virtual door to vast amounts of information. With a single search, we have immediate and nearly ubiquitous access to a wealth of facts and knowledge. Additionally, the Internet has become a convenient way to offload information that we think we may need in the future, rather than trying to store such information in our own memories (Clark & Chalmers, 1998; Nestojko et al., 2013; Risko & Gilbert, 2016). Indeed, recent research shows that people tend to rely on the Internet for accessing information, even when doing so is neither necessary nor convenient (Storm et al., 2017).

These uses of the Internet all seem like benefits—allowing us to access, share, and store an enormous amount of information, and with minimal effort. Might, however, they come with associated costs? In particular, do they lead us away from engaging in processing that might support our ability to recall needed information—from our own memories—at a later time? The ease of retrieving information via Google goes against research findings indicating that for information to be well learned and accessible for transfer in the future, it needs to be learned under conditions that present some difficulties or challenges to the learner (e.g., Bjork & Bjork, 2011). By relying on the Internet to store and to provide access to information that we think we may need later, we may be bypassing the very processes that are known to enhance learning and retention.

Can Google Be Made a More Effective Tool for Learning?

The goal of the present research was to explore whether there are ways to use the Internet to promote the subsequent retention of needed information. Specifically, we wondered whether asking people to think first, before consulting Google, about what might be a correct answer to a question—or solution to a problem—might be advantageous for learning. That is, before using Google to find an answer to a given question, could an attempt to search for relevant information in memory strengthen the representation of that pre-existing information? Moreover, could it enhance the learning of new information encountered on Google?

Existing research on pretesting shows that taking a test prior to being exposed to the to-be-learned information can enhance one’s learning of that information (e.g., Grimaldi & Karpicke, 2012; Kornell et al., 2009; Little & Bjork, 2016; Richland et al., 2009; for related evidence, see work on interim testing effects, Wissman et al., 2011; forward testing effects, Pastötter & Bäuml, 2014; Szpunar et al., 2013; Szpunar et al., 2008; and test-potentiated new learning, Little & Bjork, 2016; Yue et al., 2015), indicating that the answer to both of these questions could well be “yes.” Consequently, when learners turn immediately to the Internet for answers rather than first attempting to retrieve information on their own (Storm et al., 2017), they may well be bypassing a potentially productive process and thereby limiting their learning outcomes.

The Benefits of Pretesting

In a typical pretesting study, a pretest condition is compared to some form of baseline condition. In the pretest condition, participants try to answer questions based upon a set of to-be-learned materials before being given the opportunity to study that set of materials, whereas in the baseline condition, study of the to-be-learned material is not preceded by any kind of pretest. On a later final test given to all participants, those in the pretest condition tend to outperform those in the baseline condition, a result that is observed even when participants in the baseline condition are given additional time to study the to-be-learned material and participants in the pretest condition are not provided with corrective feedback (Carpenter & Toftness, 2017; Little & Bjork, 2016; Peeck, 1970; Pressley et al., 1990; Richland et al., 2009; Rickards et al., 1976).

One explanation offered for the benefits of contemplating a question prior to being given the information necessary to answer that question suggests that learners’ curiosity about the to-be-learned material is thereby increased (e.g., Berlyne, 1954), leading to enhanced attention to, elaboration of, and better organization of that material during subsequent study as compared with learners who do not try to answer questions about the to-be-learned material prior to its presentation (Hannafin & Hughes, 1986; Peeck, 1970; Pressley et al., 1990). Another explanation for the benefits of pretesting is the suggestion that pretesting may provide learners with a more effective metacognitive evaluation of what they know and do not know (e.g., Bjork et al., 2013), thereby making their encoding of the subsequently presented information more efficient.

Other factors may influence whether, and to what extent, learners benefit from pretesting. Huelser and Metcalfe (2012), for example, have shown that learners are more likely to benefit from pretesting when they generate information that is related to the subsequently presented, to-be-learned information. Thus, having some degree of relevant prior knowledge that could be activated during a pretest might be critical for observing benefits of pretesting.

Aims of the Present Study

The present study was designed to address several questions. First, we sought to examine whether attempting to tackle a challenging problem before looking up the solution on the Internet, akin to taking a pretest, could enhance one’s conceptual understanding and retention of the subsequently to-be-learned information. Perhaps such an attempt would make learners more cognitively alert and attentive to the various types of new content encountered, leading to more elaborative and deeper encoding of it. Additionally, we wanted to explore whether such pretest activity might also benefit one’s retention of previously studied relevant information. That is, could the attempt to tackle a challenging problem before searching for the solution on the Internet enhance not only one’s learning of the additional relevant information found there, but also one’s memory for the previously studied relevant information? Such an effect seems possible given that learners in the present study might draw upon the information that they have just learned, as well as pre-existing relevant knowledge, when attempting to generate solutions to a given problem. And, finally, would the occurrence of these potential benefits of pretesting be affected by the degree of a participant’s pre-existing relevant knowledge?

Basic Experimental Design

To address these aims, we examined the potential learning benefits of a pretest problem-solving task on knowledge formation of fundamental concepts related to computer programming. In a first study phase, all participants studied information related to computer programming, followed by a second phase in which they were given the task of solving a challenging computer problem related to those concepts. Critically, this problem was constructed to be impossible to solve with just the information presented so far, allowing us to give a type of pretest to some but not all of our participants (the pretest vs. no-pretest groups). Participants in the no-pretest group were given immediate access to the needed information via a Google search, while those in the pretest group had to spend some time trying to solve the problem before gaining such access. Lastly, all participants were given a delayed multiple-choice transfer test to assess their retention and understanding of the presented information as well as their ability to transfer it to new contexts.

To examine the role of prior experience, we divided all participants on a post hoc basis into two groups based on their responses to a questionnaire: one in which participants had some degree of prior experience with computer programming and one in which they had no prior experience with computer programming. We predicted that participants with some experience would be more likely to benefit from a pretesting experience than would those with no experience owing to their being more capable of generating—during the pretest—more helpful connections to the to-be-encountered information.

Method

Participants

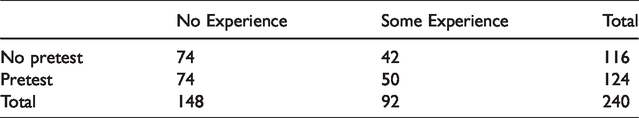

The participants were 240 undergraduate students from the University of California, Los Angeles subject pool, all of whom received course credit for participating. Of these, 194 identified as women (74 reported having programming experience and 120 reported having no experience), 45 identified as men (18 reported programming experience and 27 reported no programming experience), and 1 identified as non-binary. The mean age was 20.3 years for all participants (range 18–42 years), 20.20 years for participants with experience, and 20.45 years for participants with no experience. Participants were classified into two groups (some experience and no experience) based on a self-report questionnaire given at the end of the study; the numbers of each type ending up in the pretest and no-pretest conditions are shown in Table 1.

Table 1. Number of participants in each condition.

The 148 participants classified as having “No Experience” reported not having taken any kind of programming or statistics course that would have exposed them to a programming language or code. The 92 participants classified as having “Some Experience” reported having taken a class (or classes) in which a programming language or code was introduced but which was not explicitly focused on teaching programming skills (e.g., psychological statistics or biological quantitative reasoning). Such courses expose students to coding commands in R or other languages for analyzing data, which could facilitate the learning of programming principles in the present study but would not be sufficient to allow participants to solve the pretest problem using only their existing knowledge. Participants with more than a year of programming experience and extensive knowledge of various computer languages, such as Python or C++, or who had taken a programming course or a computer science course in any language, were excluded.

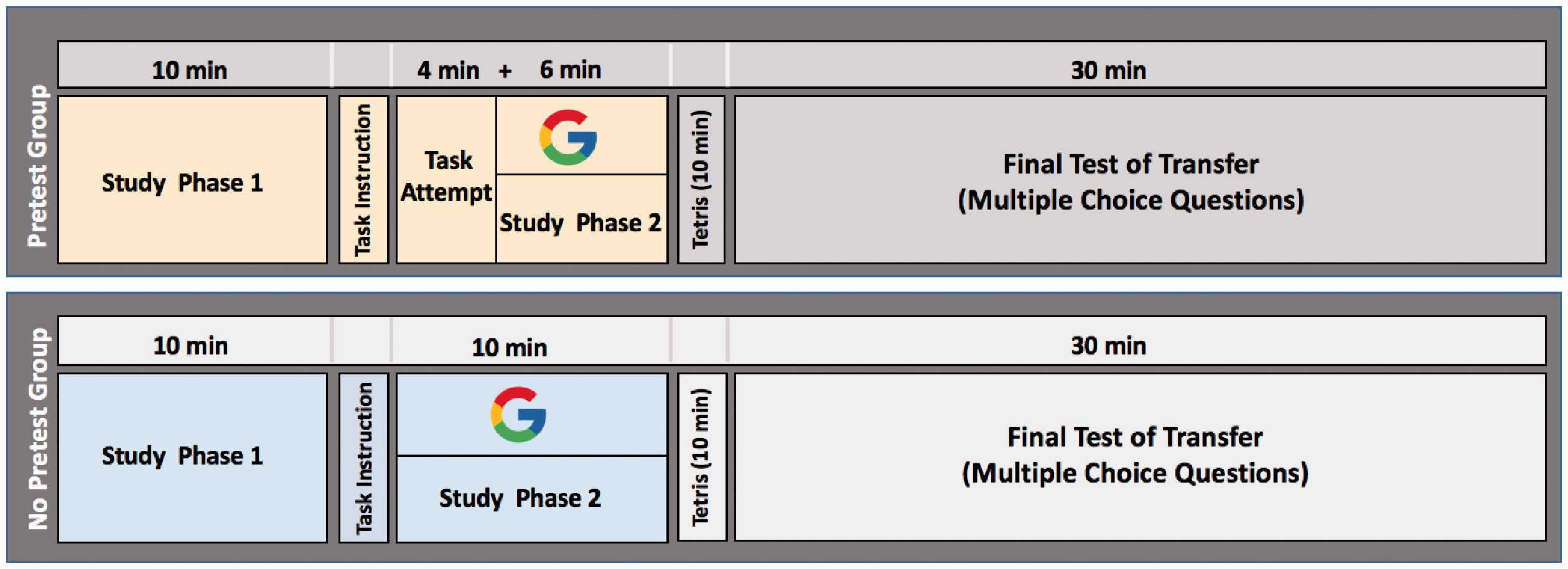

The present experiment involved two study phases, as illustrated in Figure 1. In Phase 1, all participants studied fundamental computer programming principles and concepts about which they were told they would later be tested. In Phase 2, participants were presented with a challenging computer programming task, one for which they had not yet been exposed to all of the information necessary to solve it. During this phase, half of the participants were randomly assigned to try to solve the computer programming task before being allowed to use a simulated Google search experience to find additional task-relevant information, including that necessary for solving the task (i.e., the pretest group). The other half did not have to try to solve the task first, but instead were allowed to search for the task-relevant information immediately (i.e., the no-pretest group). Finally, to assess whether the pretest attempt enhanced the learning of both previously presented and subsequently presented concepts, a final multiple-choice test containing transfer-type questions was administered to both groups for all concepts presented in the experiment; that is, both those encountered before and those encountered after presentation of the computer programming task.

Materials

All study and test materials were developed by the experimenters, including the information participants encountered when using the simulated Google search engine. Specifically, participants learned a modified form of the syntax of Python, a programming language known for its limited use of syntactic symbols (such as semicolons, parentheses, tildes, etc.) to accomplish complex tasks. Because our goal was to teach and test understanding of programming concepts, Python’s intuitive syntax allowed us to limit the working-memory burden on participants during this learning attempt. The programming content was challenging, yet, as determined by pilot data, appropriate for novices. The materials presented during Study Phase 1 and Study Phase 2 were matched for their conceptual difficulty and the number of to-be-learned programming principles.

Study Phase 1

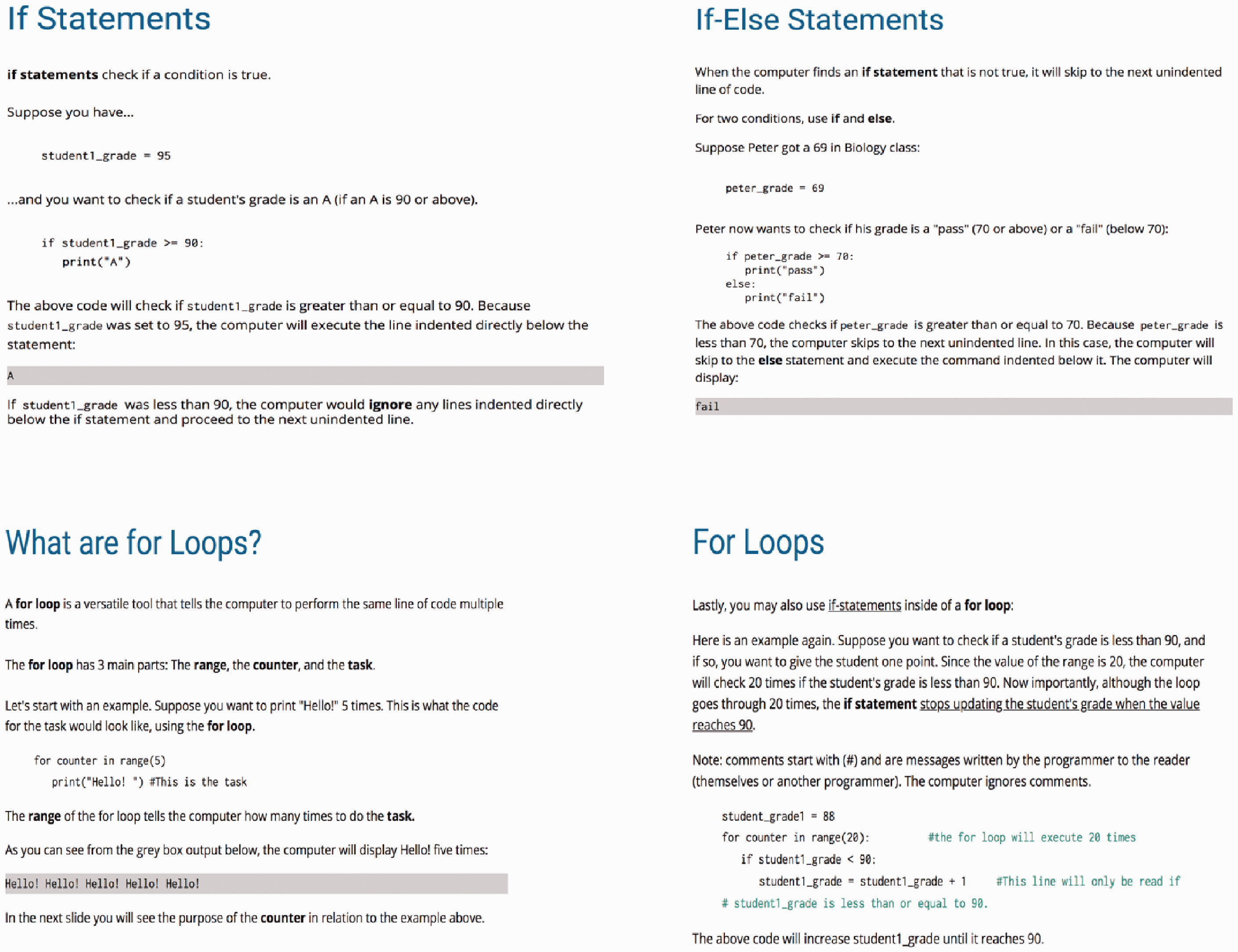

During Study Phase 1, participants received instructions regarding three fundamental programming concepts: (a) how to store and replace a variable; (b) when and how to use if-statements; and (c) the concept of a for loop, which were introduced in a context requiring the manipulation of only one variable. For example, participants studied how to assign a grade to one student (e.g., peter_grade = 69) and how to check if that student’s grade is a “pass” (70 or above) or a “fail” (below 70).
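As a rough illustration of these three concepts, the following minimal sketch uses standard Python rather than the modified syntax participants actually saw. Only `peter_grade = 69` and the pass cutoff of 70 come from the description above; the replacement grade, the result variables, and the bonus-point loop are hypothetical and are included only to show each concept with a single variable.

```python
# (a) Store a value in a variable.
peter_grade = 69

# (b) Use an if-statement to check whether the grade is a pass (70 or above)
#     or a fail (below 70).
if peter_grade >= 70:
    first_result = "pass"
else:
    first_result = "fail"

# (a, continued) Replace the stored value; the old value 69 is overwritten.
peter_grade = 72  # hypothetical corrected grade, not taken from the study

if peter_grade >= 70:
    second_result = "pass"
else:
    second_result = "fail"

# (c) Use a for loop to repeat an action while updating one variable.
bonus_points = 0
for _ in range(3):  # hypothetical: three bonus questions worth 1 point each
    bonus_points = bonus_points + 1

print(first_result, second_result, bonus_points)
```

Each step manipulates only one variable at a time, which matches the scope of the Phase 1 materials as described.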

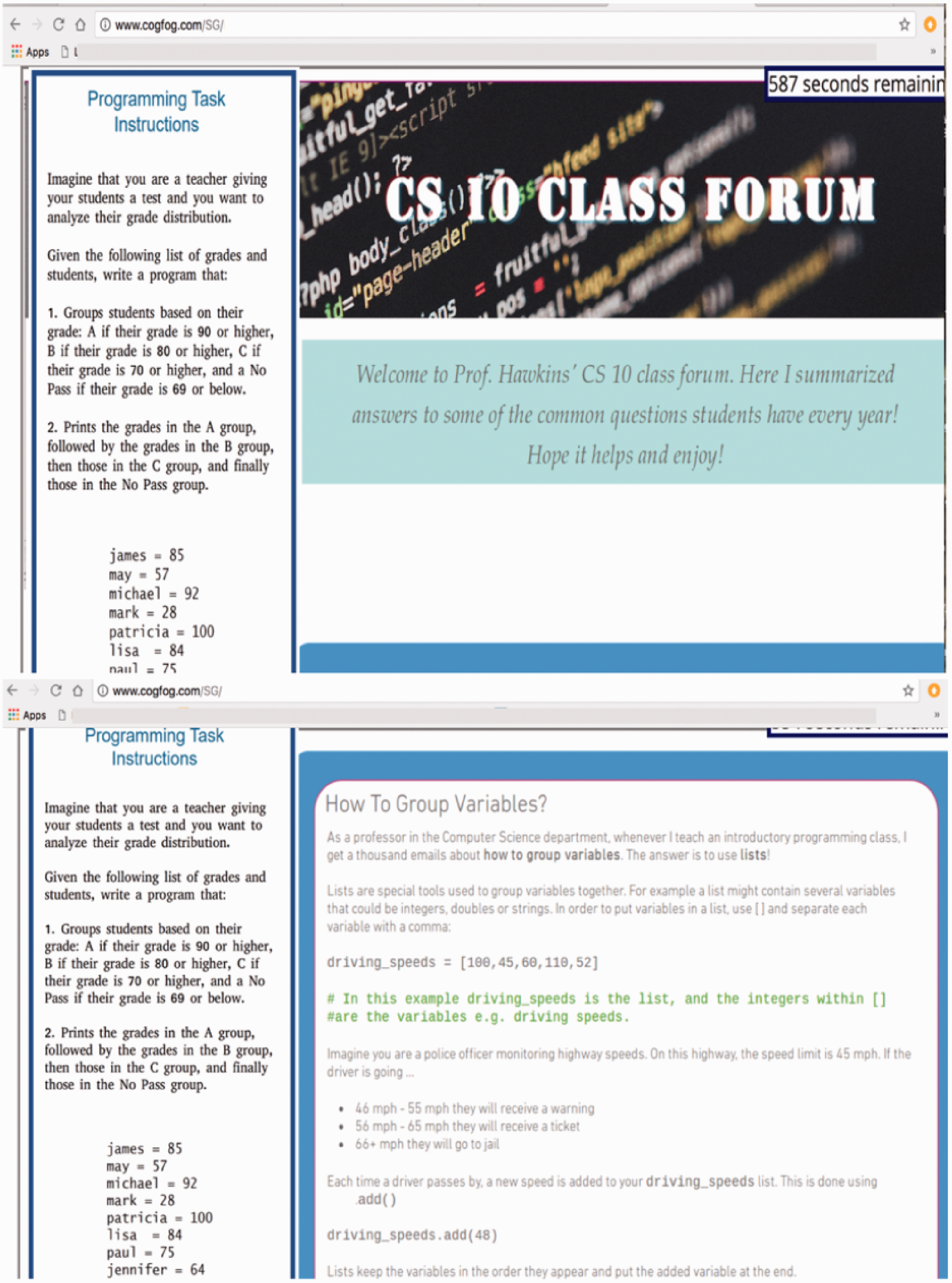

Information was mostly presented in the form of short text passages (i.e., mini-tutorials) accompanied by examples, as depicted in Figure 2.

Study Phase 2

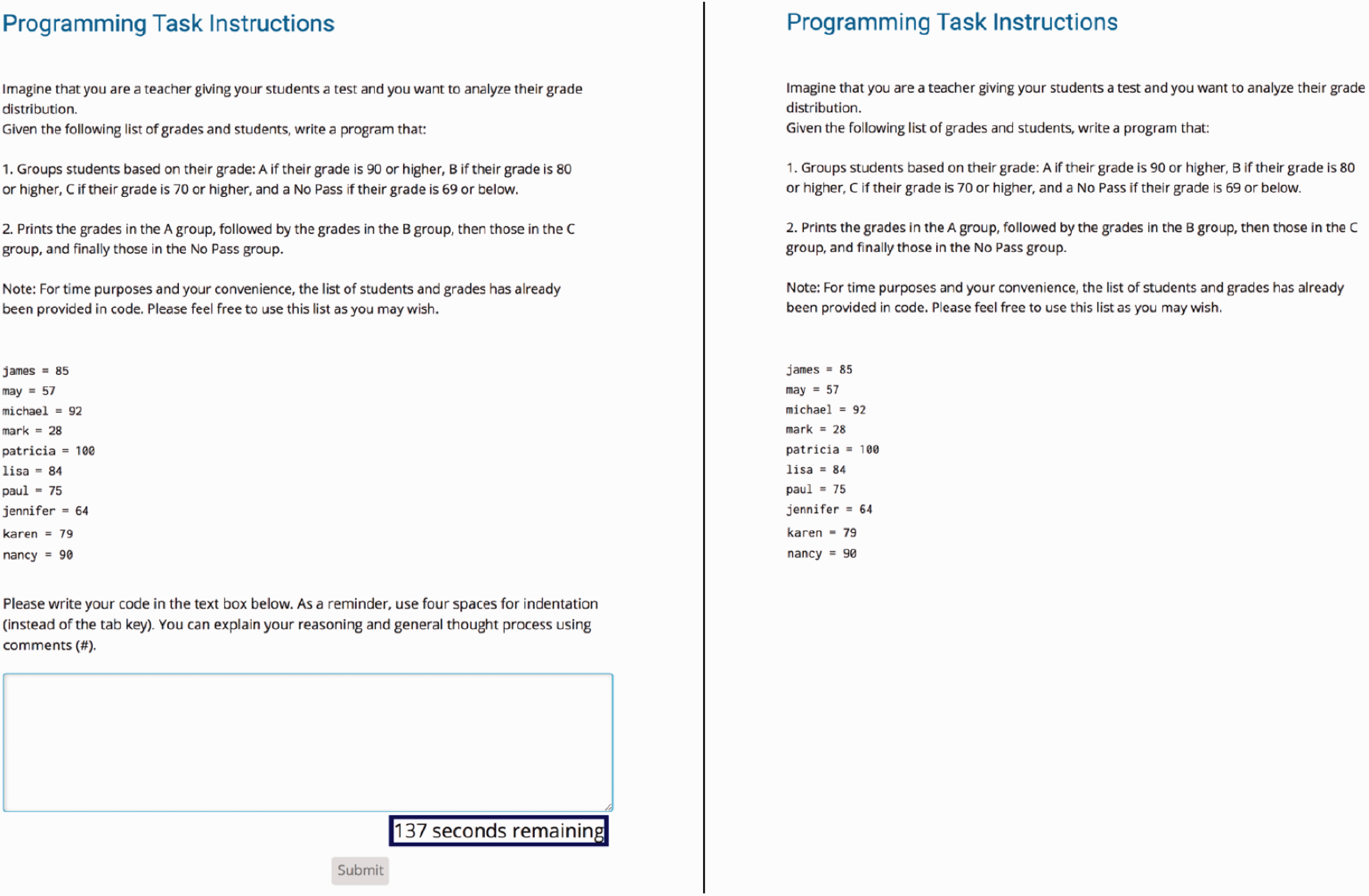

During Phase 2, participants were presented with a challenging programming task, similar to a homework problem in an actual programming course. In this task, for which the exact instructions appear in Figure 3, participants were asked to imagine themselves as a teacher who wanted to write programming code that would allow grouping of students based on their exam grades—a programming task requiring use of some concepts studied in Phase 1 (e.g., how to manipulate one variable). Importantly, however, this task also required some concepts only available via the Google search (e.g., how to manipulate multiple variables) to which participants assigned to the pretest group did not have immediate access. Instead, they were instructed to attempt to solve the task for a while before being given such access and, furthermore, no corrective information was provided to them during their problem-solving attempts. In contrast, participants in the no-pretest group did not attempt to solve the task before being able to access Google where the relevant information could be found.

Figure 1. A schematic representation of Study Phase 1 and Study Phase 2 for the pretest group (top panel) and the no-pretest group (bottom panel).

Figure 2. Examples of the computer programming content presented during Study Phase 1, in which all participants learned how to manipulate one variable using if statements and a for loop function.

The information necessary to solve the programming task could be found on the simulated Google page, which became available to participants once they were allowed to use Google. A simulated Google page—instead of an actual Google page—was created to rule out potential differences in the nature and quality of individual searches made by participants. Thus, every participant landed on the same web pages and was exposed to the same content (i.e., a continuation of the information presented in Study Phase 1, but now with an added focus on manipulating multiple variables, the missing information necessary for solving the programming task).

In order to ensure that all participants would believe they were searching the “real” Google, we presented everyone with “I’m Feeling Lucky!” instructions before they were able to type their search question into a made-up Google search bar. These instructions emphasized that after being directed to the Google search bar, participants should type in a search phrase relevant to the programming task and then click on the “I’m Feeling Lucky!” button.

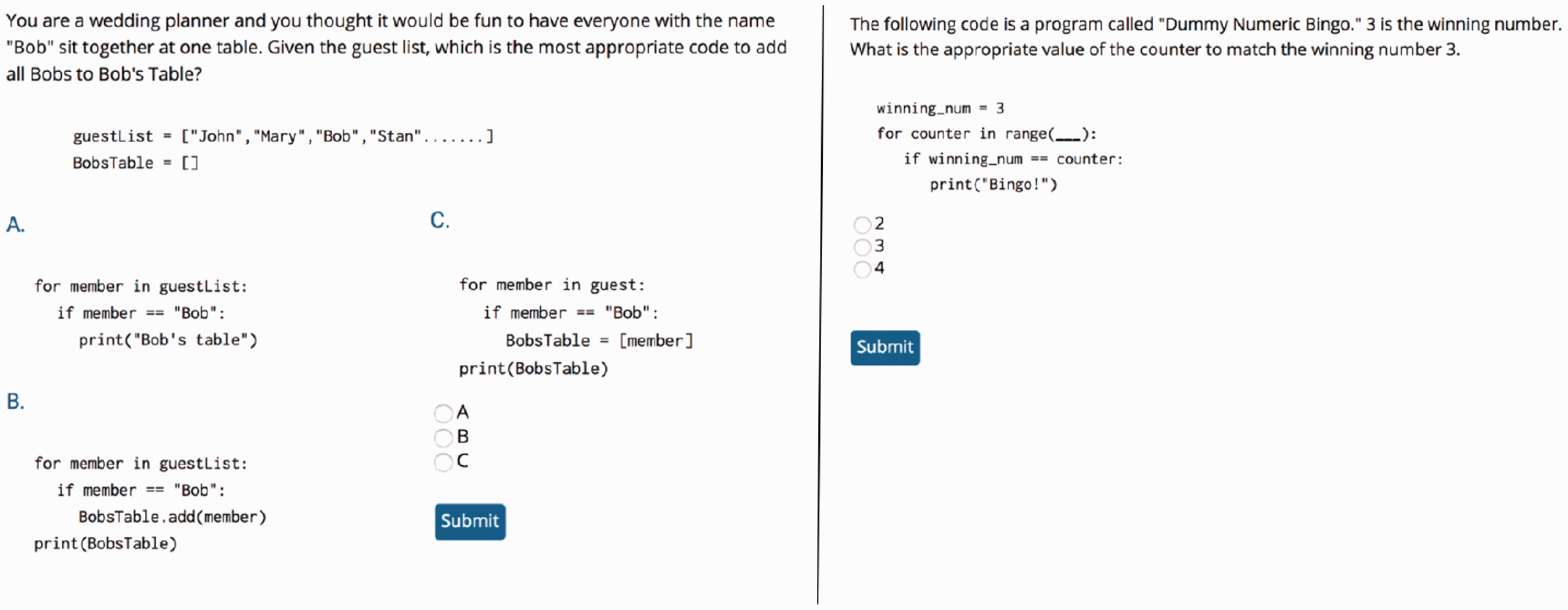

Figure 3. Computer programming task instructions. Solution of the programming task required some elements previously studied in Phase 1 (i.e., how to manipulate one variable using if statements and a for loop function) plus some elements that were presented only in the information available via the Google search (i.e., how to group and index multiple variables using a list function). Instructions for the pretest participants, as well as an empty box in which they were to record their problem-solving attempts, are shown on the left side of the figure. Instructions for the no-pretest participants are shown on the right side.

It was explained to all participants that after entering their search phrase and then clicking on the “I’m Feeling Lucky!” button, they would be sent directly to the website that would best address their query, rather than being shown a list of search results among which they would have to choose, as is the usual case with Google.

The simulated website that was then opened in response to all participants’ Google search request took the form of a Google class forum on how to group and index multiple variables using a list function, as exemplified in Figure 4. It is important to note that if participants clicked “Search” instead of “I’m Feeling Lucky!” the computer screen still advanced to the same simulated website.

Figure 4. The simulated Google page, which was the same for all participants, appeared in the form of a class forum on how to group and index multiple variables using a list function.

The Google class forum illustrated a problem related to the pretest task, showing participants how a computer program can determine, from a list of recorded driving speeds (driving_speeds = [100,45,60,110,52,48]), how many warnings, tickets, and arrests were warranted in a police officer’s day. The example relied on lists, a powerful programming tool for grouping variables (warning = []; ticket = []; jail = []). It is important to point out that the pretest task instructions continued to be available for both pretest and no-pretest participants for the duration of Study Phase 2, as indicated in Figure 4 on the left side of the computer screen.
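To make the forum example concrete, here is a minimal sketch in standard Python of how such a program might sort speeds into lists. The list names and the `driving_speeds` values come from the description above. The three thresholds are hypothetical, because the cutoffs used in the study materials are not reported, and `elif` is used for brevity even though it may not have been part of the taught syntax.

```python
driving_speeds = [100, 45, 60, 110, 52, 48]

# Empty lists that will group the recorded speeds by outcome.
warning = []
ticket = []
jail = []

# Hypothetical thresholds (assumptions for illustration only).
WARNING_OVER = 50
TICKET_OVER = 55
JAIL_OVER = 90

# Combine a for loop and if-statements (Phase 1 concepts) with lists
# (the new Phase 2 concept) to group multiple values.
for speed in driving_speeds:
    if speed > JAIL_OVER:
        jail.append(speed)
    elif speed > TICKET_OVER:
        ticket.append(speed)
    elif speed > WARNING_OVER:
        warning.append(speed)

# Indexing retrieves a single element from a list (positions start at 0).
first_speed = driving_speeds[0]

print(len(warning), len(ticket), len(jail), first_speed)
```

Under these assumed thresholds, the counts are one warning, one ticket, and two arrests; different cutoffs would of course give different groupings.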

Final Test

The final test materials consisted of 29 multiple-choice transfer questions on concepts from both study phases, and two of them are illustrated in Figure 5.

Figure 5. Two examples of the multiple-choice transfer questions on the final test, which covered concepts from both study phases. Each question had three possible answers: one correct and two incorrect alternatives.

Each question had three possible answers: one correct and two incorrect alternatives. The presentation order of the test questions was block-randomized and counterbalanced across participants to control for order effects. While some participants received questions pertaining to Study Phase 1 content before seeing questions related to Study Phase 2, other participants received questions pertaining to Study Phase 2 content before seeing questions related to Study Phase 1. The questions were based on ones often used in programming courses: namely, participants were presented with a small task and then asked to choose from a list of possibilities the best code to accomplish that task. Incorrect options were inspired by common programming logic mistakes and misunderstandings of programming principles.
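One possible reading of this ordering scheme can be sketched as follows: questions are shuffled within each study-phase block, and the order of the two blocks alternates across participants. The helper `build_test_order`, the question identifiers, and the alternation rule based on participant index are all hypothetical; the exact randomization procedure and the number of questions per phase are not reported here.

```python
import random


def build_test_order(participant_index, phase1_ids, phase2_ids, seed=None):
    """Return one participant's question order (hypothetical helper)."""
    rng = random.Random(seed)
    block1 = list(phase1_ids)
    block2 = list(phase2_ids)
    # Randomize question order within each block.
    rng.shuffle(block1)
    rng.shuffle(block2)
    # Counterbalance block order: even-indexed participants see Phase 1
    # questions first, odd-indexed participants see Phase 2 questions first.
    if participant_index % 2 == 0:
        return block1 + block2
    return block2 + block1


# Placeholder question identifiers, not the actual test items.
phase1_ids = ["P1-Q1", "P1-Q2", "P1-Q3"]
phase2_ids = ["P2-Q1", "P2-Q2", "P2-Q3"]

order_a = build_test_order(0, phase1_ids, phase2_ids, seed=1)
order_b = build_test_order(1, phase1_ids, phase2_ids, seed=1)
print(order_a)
print(order_b)
```

Alternating by participant index is only one way to counterbalance; random assignment to block orders with equal group sizes would serve the same purpose.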

Procedure

The present experiment consisted of two study phases (Study Phase 1 and Study Phase 2) and a final test; a schematic illustration of the two study phases is shown in Figure 1.

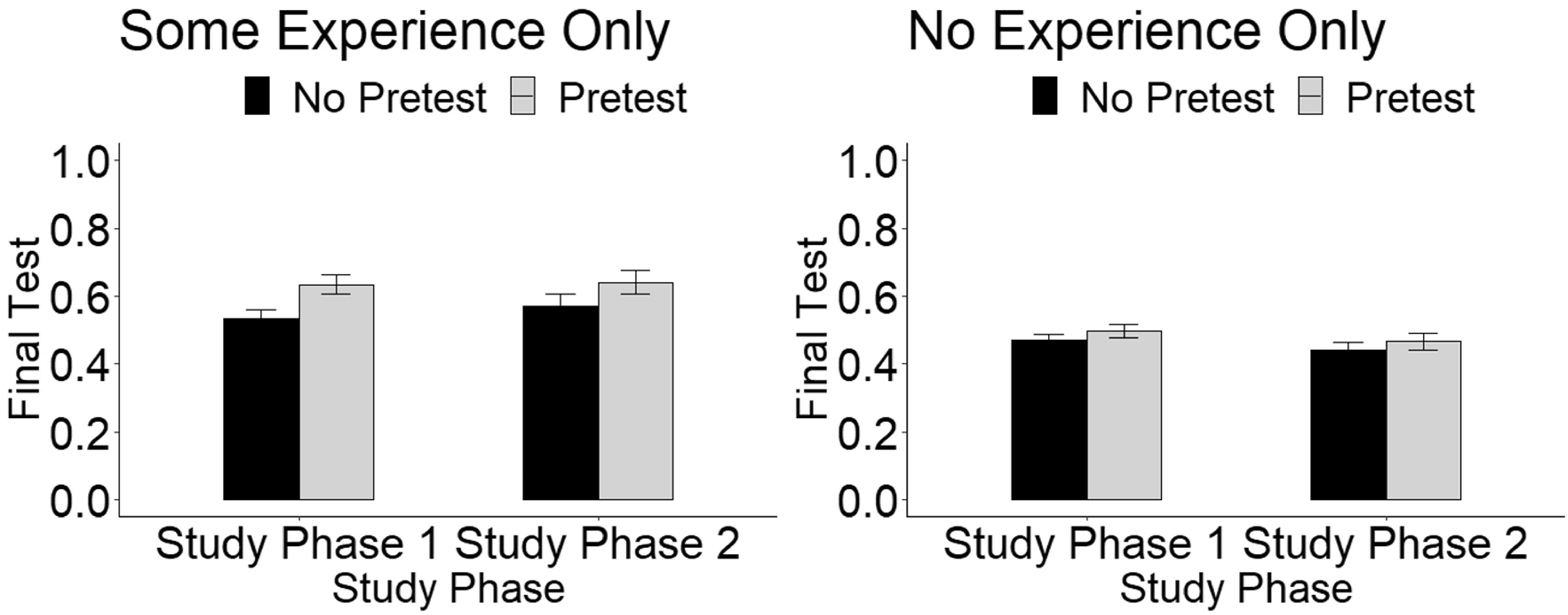

Figure 6. Final test performance on materials from Study Phase 1 and Study Phase 2 as a function of Practice Condition (Pretest and No Pretest) for participants with Some Experience and No Experience.

Study Phase 1 began with participants reading text passages on fundamental computer programming concepts, presented one at a time on a lab computer. Reading was self-paced, except that for some passages presenting more challenging concepts (e.g., for loop), participants were required to stay on the passage for at least 10 s before being allowed to move on to the next passage.

Study Phase 2, with the challenging programming task, followed immediately after Study Phase 1. Pretest participants were required to attempt to solve the task for at least 4 min before being allowed to consult “Google.” In contrast, no-pretest participants were given immediate access to “Google” to aid with the programming task. To ensure, however, that the no-pretest participants had enough time to read the instructions for the to-be-solved programming task, they were required to wait for 20 s on the task instruction page before the computer screen automatically advanced to the simulated Google search.

For both groups, after typing in their search terms and clicking on the “I’m Feeling Lucky!” option, participants were shown the same simulated web page. Critically, the time allotted to explore the web page differed between the two groups. Pretest participants only had 6 min to explore the web page containing the missing information necessary for performing the task after having to spend 4 min trying to solve the task (10 min total). In contrast, no-pretest participants had the entire 10 min to explore the web page containing the additional information necessary for solving the task.

Considering this difference in the available time to encode Study Phase 2 content, it would seem possible that the no-pretest participants might learn more of this content than would the pretest participants. If, however, attempting to solve a problem before being exposed to its solution—even when one’s problem-solving attempts are not successful—promotes one’s learning of subsequently presented relevant information, then perhaps the pretest participants might nevertheless outperform the no-pretest participants on the final test.

Following Study Phase 2 and a subsequent 10-min distractor task, all participants were given the final multiple-choice transfer test on which they had up to 1 min to answer each question. At the end of the experiment, participants filled out a questionnaire to assess how much they enjoyed the experiment, whether they experienced any technical difficulties, and their level of programming experience before participating in the study. Then they were debriefed and thanked for their participation.

Results

Pretest Performance

Responses to the pretest task were graded by research assistants who were blind to participant condition. The research assistants were instructed to follow an 8-point rubric that focused on overall concepts rather than syntax. A Welch’s two-sample t-test showed that participants with some experience (M = 2.56, SD = 1.67) produced answers that were significantly better than those of participants with no experience (M = 1.45, SD = 1.64), t(104.06) = 3.67, p < .001, d = .67, CI95% = [0.51, 1.72]. As expected, however, none of the participants generated the correct answer to the pretest problem.
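As a worked check on the reported effect size, Cohen’s d can be approximated from the means and standard deviations given above. The sketch below assumes a pooled standard deviation computed as the root mean of the two variances (i.e., equal weighting of the groups), because the group sizes for this comparison are not stated in the text; the authors’ exact formula may differ. The function name is hypothetical.

```python
import math


def cohens_d_from_summary(mean_a, sd_a, mean_b, sd_b):
    """Cohen's d with an equal-weight pooled SD (an assumption, see lead-in)."""
    pooled_sd = math.sqrt((sd_a ** 2 + sd_b ** 2) / 2)
    return (mean_a - mean_b) / pooled_sd


# Reported pretest scores: some experience vs. no experience.
d = cohens_d_from_summary(2.56, 1.67, 1.45, 1.64)
print(round(d, 2))  # 0.67
```

Under this assumption, the result agrees with the reported d = .67 to two decimal places.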

Final-Test Performance

Final-test performance on materials from Study Phase 1 and Study Phase 2 as a function of Practice Type (Pretest and No Pretest) for participants with Some Experience and No Experience is illustrated in Figure 6. To analyze this pattern of results, we first conducted a 2 (Practice Type: Pretest vs. No Pretest) × 2 (Experience: Some vs. No) × 2 (Study Phase: 1 vs. 2) mixed-design ANOVA, where practice type and experience were between-subjects factors, and study phase (1 vs. 2) was a within-subjects factor. This analysis revealed a significant main effect of practice type, F(1, 236) = 5.65, p = .018, CI95% = [.01, .07], d = 0.31, such that final-test performance by participants who completed a pretest (M = .56, SD = .18) was significantly better than that of participants who did not complete a pretest (M = .50, SD = .18). A significant main effect of experience was also revealed, F(1, 236) = 28.80, p < .001, CI95% = [.06, .12], d = 0.71, such that final-test performance by participants with some experience (M = .60, SD = .17) was significantly better than that of participants with no experience (M = .47, SD = .17). No significant main effect of study phase was observed: performance on Study Phase 1 (M = .52, SD = .18) did not significantly differ from performance on Study Phase 2 (M = .51, SD = .23), F(1, 236) = .09, p = .77, CI95% = [−.02, .01].

The three-way interaction (Study Phase by Practice Type by Experience) was not significant, F(1, 236) = .42, p = .52, CI95% = [−.01, .02], nor were the interactions between Study Phase and Practice Type, F(1, 236) = .48, p = .49, CI95% = [−.01, .02], or between Practice Type and Experience, F(1, 236) = 1.66, p = .20, CI95% = [−.01, .05]. The interaction between Study Phase and Experience was significant, F(1, 236) = 4.92, p = .03, CI95% = [.00, .03], partial-η2 = 0.02, such that final-test performance based on information presented in each Study Phase depended on the participant’s prior experience.

Although the three-way interaction was not significant, because one of our a priori predictions was that final-test performance would depend upon practice type—that is, whether participants engaged in a pretest or problem-solving activity before being allowed to access the information presented on the Google page—we probed the main effect by conducting more targeted comparisons to see whether a significant pretesting effect was observed for each group of participants. Another a priori hypothesis was that participants with some experience would perform better on both Study Phase 1 and Study Phase 2 materials than would the participants with no experience.

First, we focused our analysis on participants with some programming experience by conducting a 2 (Practice Type) × 2 (Study Phase) mixed-design ANOVA. A significant main effect of Practice Type was observed, such that participants in the Pretest condition (M = .64, SD = .23) significantly outperformed participants in the No Pretest condition (M = .55, SD = .20), F(1, 90) = 4.18, p = .04, d = .31, CI95% = [.00, .24]. Two planned comparisons indicated that this difference was statistically significant with regard to Study Phase 1 performance (Pretest: M = .64, SD = .18; No Pretest: M = .53, SD = .17), t(90) = 3.06, p < .01, d = .54, CI95% = [.04, .18], and marginally significant with regard to Study Phase 2 performance (Pretest: M = .64, SD = .25; No Pretest: M = .57, SD = .24), t(90) = 1.92, p = .057, d = .29, CI95% = [.00, .17]. The interaction between Study Phase and Practice Type, however, was not statistically significant, F(1, 90) = .72, p = .40, CI95% = [−.03, .07].

Next, we conducted the same two-way ANOVA focusing on participants with no programming experience. For this group, participants in the Pretest condition (M = .48, SD = .20) did not outperform participants in the No Pretest condition (M = .46, SD = .16), F(1, 146) = .95, p = .33, CI95% = [−.12, .04]. Two planned comparisons failed to find evidence of a significant difference with regard to Study Phase 1 performance (Pretest: M = .49, SD = .18; No Pretest: M = .47, SD = .18), t(146) = .90, p = .37, d = .11, CI95% = [−.03, .08], or Study Phase 2 performance (Pretest: M = .47, SD = .21; No Pretest: M = .44, SD = .18), t(146) = .71, p = .48, d = .15, CI95% = [−.04, .10]. The interaction between Study Phase and Practice Type was not statistically significant, F(1, 146) = .00, p = .97, CI95% = [−.04, .04].

Given that the interaction between Programming Experience and Practice Type was not statistically significant, we cannot draw strong conclusions about whether participants with experience benefited more from pretesting than participants without experience. The results of the follow-up analyses, however, are suggestive. At a minimum, they provide additional evidence that participants with some experience benefited from pretesting. Whether participants without experience benefited from pretesting, however, is unclear, and the possibility that they did not is something that future research should investigate more closely.

Discussion

Participants in the present study received instructions regarding how to solve a programming task for which some of the information necessary to do so could only be found via a simulated Google search of the Internet. Critically, participants in the pretest group had to work at solving this programming task having been instructed only on some—but not all—of the necessary programming concepts for doing so, before being allowed to access information regarding the remaining needed concepts via the simulated Internet search. Thus, these participants could be said to have engaged in a unique type of pretest. In contrast, participants in the no-pretest group did not have to attempt to solve the programming problem before they could search the Internet via Google for the remaining needed concepts. Accordingly, the no-pretest participants also had more time to study the information presented in the simulated Internet search. Despite this advantage of having more time for solving the programming task while in possession of all of the information necessary to do so, the no-pretest participants were nonetheless outperformed by the pretest participants on the final delayed multiple-choice transfer test.

The present work builds upon an array of studies that have observed benefits of taking a pretest before studying the to-be-learned information (e.g., Grimaldi & Karpicke, 2012; James & Storm, 2019; Kapur & Bielaczyc, 2012; Kornell, 2014; Kornell et al., 2009; Little & Bjork, 2016; Richland et al., 2009). It also provides an important addition to this body of work in that several prior studies have not reported large or significant benefits of pretesting in learning situations more akin to those found in educational or classroom contexts (e.g., testing deep conceptual understanding and non-pretested portions of the learning content as opposed to simply testing memory for identical pretested factual questions; see Carpenter et al., 2018; Geller et al., 2017; McDaniel et al., 2011; but see Carpenter & Toftness, 2017; Little & Bjork, 2016), or have found that such benefits were relatively limited in scope (e.g., Hausman & Rhodes, 2018; James & Storm, 2019). One could speculate that the benefits of pretesting observed in the present study might be, in part, attributable to or linked with the unique set-up of the present experiment in that participants (a) studied information related to computer programming; (b) attempted to generate a solution to a challenging task related to computer programming (a type of pretest for which the participants, while having learned about some of the concepts necessary for solving the task, had not yet received all the information needed to solve that task); (c) were then exposed to the additional information regarding computer programming necessary to solve the earlier task; and (d) were given a final multiple-choice transfer test to measure how well they had formed a conceptual understanding of the to-be-learned materials. This unique form of pretesting, as later discussed in more detail, may have triggered certain cognitive processes that not only potentiated participants’ new learning, but also enhanced their retrieval of the previously learned related information, thus leading to an overall deeper understanding of the to-be-learned material.

The present findings are also noteworthy because—to our knowledge—they arise from the first experiment designed to examine the potential benefits of pretesting in the context of learning new information via the Internet. Research has suggested that people tend to rely on the Internet to store and access information in a way that may reduce the extent to which they store that information internally (Marsh & Rajaram, 2019; Sparrow et al., 2011). Importantly, as suggested by the present results, pretesting might have the potential to enhance the way students learn new information encountered on the Internet.

Two other aspects of the present results deserve further emphasis. First, participants in the pretest group outperformed participants in the no-pretest group on the final transfer multiple-choice test not only in their answering of questions about information studied after the pretest, but also in their answering of questions about information studied before the pretest. Attempting to solve a task for which learners have not yet learned all of the necessary information (a unique form of pretesting) may have evoked elaborative retrieval practice of the previously learned information on how to create and manipulate a single variable while participants tried to solve a programming task that involves manipulating multiple variables. This retrieval of prior information in relation to the to-be-learned programming concepts may not only have improved their comprehension and integration of the new knowledge (i.e., interpolated testing effect or interim testing effect; see Szpunar et al., 2013; Szpunar et al., 2008; Wissman et al., 2011), but also strengthened their memory traces for the recalled information itself (i.e., the testing effect; see Agarwal et al., 2008; Butler, 2010; Hinze et al., 2013; McDaniel et al., 2007; Roediger & Karpicke, 2006). As a result of this binding of previously learned and newly learned related information, a deeper understanding of the to-be-learned concepts may have been formed overall, as reflected in the apparent increased ability of pretest participants to connect and adapt the learned concepts to answer novel conceptual questions on the final test.

Another possibility is that the prior problem-solving attempts of the pretest participants made them more effective and efficient processors of the new material once they were able to find it via the Google search. It seems reasonable to assume that the information they found on Google was processed more deeply as a consequence of initially trying to solve the problem as compared to simply googling the information, which would be consistent with prior research demonstrating the learning benefits of guided discovery (e.g., de Jong & van Joolingen, 1998) and problem-solving attempts prior to instruction (cf. Productive Failure: Kapur, 2010; Kapur & Bielaczyc, 2012; Invention Studies: Roll et al., 2009; Roll et al., 2012; Schwartz & Martin, 2004). Perhaps by trying to think of how to solve the problem, the present pretest participants were encouraged to think more deeply about the various elements of the task, resulting in enhanced subsequent study of the task-related information encountered via their Google search, which then supported better transfer. In contrast, the no-pretest participants, who were able to consult the Internet without first spending time contemplating the problem, may have been led away from thinking deeply about the various elements of the task, thereby rendering their subsequent learning less likely to support transfer. A direction for future research would be to explore the effectiveness of different pretesting set-ups or types of pretest activities (e.g., pretest tasks with versus without an initial study phase; attempting to solve a problem relative to other kinds of pretest activities) in a systematic attempt to discover the necessary and/or sufficient characteristics for producing benefits of pretesting.

Finally, it should be noted that the benefit of pretesting was somewhat tenuous for participants without any programming experience. Given that the interaction was not statistically significant, however, we hesitate to make too much of this finding. Nevertheless, it is consistent with the idea that learners need some degree of prior knowledge to engage with a pretest in a way that is likely to enhance learning. It is possible, for example, that having some background knowledge of programming principles helped participants in the present experiment to better remember the information studied prior to presentation of the to-be-solved programming problem. If so, these participants might have been more effective at integrating that information with the new task-related information subsequently found on Google. In comparison, participants with no prior experience in computer programming may have struggled more to form connections between the task requirements and the previously learned information, resulting in their poorer conceptual understanding of both the previously learned information and that encountered during their Google search (for research on the importance of relatedness of the generated pretest response to the to-be-learned information, see Huelser & Metcalfe, 2012; Kornell et al., 2009, experiment 2; Slamecka & Fevreiski, 1983; for research on the association between prior knowledge and new learning, see also work on the expertise reversal effect, e.g., Cooper et al., 2001, experiment 4; Kalyuga, 2007; Leppink et al., 2012; McNamara, 2001; see also Carpenter et al., 2016; Karpicke et al., 2014 for research on the relationship between individual differences in student achievement and retrieval-enhanced learning). An important avenue for future research will be to identify more fully how the effectiveness of pretesting may vary in relation to differing levels of pre-existing domain knowledge.

Concluding comment

With the constant pressure and heightened expectations for the adequate preparation of students for higher education and/or the workforce, encouraging learners to think before seeking out easily accessible answers via the Internet or other sources, such as the back of a textbook, would seem to hold much promise as a useful addition to teaching practices in any field. Although the extent to which the benefits of pretesting generalize to the learning of various types of skills and knowledge remains unknown and requires additional research, the present results are encouraging and perhaps offer a new way of making instruction more effective in the digital age.

Take away note

For every maxim there seems to be an equal and opposite maxim. In this case, perhaps, the counterpart to “Look before you leap” is “Think before you Google.”

Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.