Abstract

The Hagen Cumulative Science Project is a large-scale replication project based on students’ thesis work. In the project, we aim to (a) teach students to carry out the entire research process of a replication according to open science standards and (b) contribute to cumulative science by increasing the number of direct replications. We describe the procedural steps of the project, from choosing suitable replication studies to guiding students through the process of conducting a replication and processing the results in a meta-analysis. Based on the experience of more than 80 replications, we summarize how such a project can be implemented. We present practical solutions that have proven successful and discuss typical obstacles and how they can be overcome. We argue that replication projects are beneficial for all groups involved: Students benefit by being guided through a highly structured protocol and making actual contributions to science. Instructors benefit by using time resources effectively for cumulative science and fulfilling teaching obligations in a meaningful way. The scientific community benefits from the resulting greater number of replications and from the teaching of state-of-the-art methodology. We encourage the use of replication projects for thesis work in academic bachelor and master curricula.

Introduction

Replications are not just an important cornerstone of empirical research (Asendorpf et al., 2013; Simons, 2014), but also an effective tool for learning how to conduct methodologically sound empirical research. In a hands-on fashion, replicators learn about research methods, study designs, statistical analyses, the scientific process, and professional communication skills. Furthermore, they are inspired to reflect on more general values such as transparency and openness in science. In the spirit of recent proposals for new initiatives facilitating the training of students and also contributions to cumulative science (Brandt et al., 2014; Frank & Saxe, 2012; Grahe et al., 2012), we launched the Hagen Cumulative Science Project (HCSP) in October 2015—a large-scale teaching project aimed at replicating current experimental studies in bachelor and master students’ thesis work. By replication we mean that we repeated published studies with sufficient sample sizes and statistical power to determine whether “the [target] finding can be obtained with other random samples drawn from a multidimensional space that captures the most important facets of the research design” (Asendorpf et al., 2013, p. 109). More specifically, we conducted direct replications (Simons, 2014) that varied only in terms of the sample of participants (i.e., German-speaking students), time of data collection (i.e., between 2015 and 2019), place of data collection (i.e., web-based) and to a minimal extent study materials (e.g., materials translated into German) and procedures (e.g., smaller monetary incentives in some studies after consulting with the original authors) in comparison with the original studies.

We specifically focused on direct replications of studies published in the journal Judgment and Decision Making (JDM) for several reasons: (a) the journal includes topics and methods highly relevant to the expertise of the academic chair supervising the replication projects (chair of cognitive psychology: judgment, decision-making, and action), (b) JDM is an open-access journal providing data sets and supplemental material on the website for each article to easily reproduce the results with the original data (description see below), and (c) JDM topics are sufficiently diverse in content (e.g., social judgment, morality, interindividual differences, cooperation, heuristics, risky choice) and methods to allow more general claims than in extremely narrow journals. Based on the experience from more than 80 completed student replications in the HCSP, we share insights concerning the implementation of replication protocols for students with little prior experience in conducting experimental work. We also discuss potential challenges in the process and possible solutions.

In some universities, bachelor theses are the final element of an undergraduate degree. Other universities may include optional honors theses that are similar in format. In psychology, thesis projects typically target a research question utilizing different tools of investigation (e.g., writing a literature review, conducting a correlational or experimental study, or using secondary data or analyses). The time frame for conducting the respective research is commonly short (e.g., 12 weeks in the curriculum of the University of Hagen for full-time students) and is stated in most examination regulations to equate to approximately 300–360 working hours. Within this time frame, students who work empirically are typically asked to (a) begin with a literature review in order to develop their research question, (b) develop a research design, (c) prepare the necessary materials for the empirical study, (d) collect data from participants, (e) analyze the data, and (f) write up their thesis. The teaching goal of the thesis is to familiarize students with practical scientific work. Their skills for independently conducting research should be developed and tested. These skills should include the ability to evaluate research questions critically, develop ideas about the type of evidence necessary to answer the research question, and conduct the respective experiment. In addition to these core elements of scientific research, recent evidence concerning the lack of transparency and openness of research (Wicherts et al., 2006) as well as the prevalence of statistical reporting errors in the past (Nuijten et al., 2016) also calls for focusing more strongly on teaching the transparent and comprehensive reporting of research.

Students’ comprehension of research practices can be fostered through transfer, that is, the direct application of learned concepts in an authentic research context. A growing number of students today may learn about the importance of replicability of empirical work in introductory psychology classes. Experiencing what replicability means in the context of an individual experiment in their thesis then allows students to transfer this knowledge to a practical problem. This should help students learn about and reflect on the importance of replicability. Arguably, replications are an efficient tool for motivating students to think like future researchers and extend their methodological skills. Furthermore, replications allow students to tackle and solve many typical problems that they face when conducting thesis work.

Feeling Overwhelmed by Generating an Interesting and New Research Question

When developing their first research question, students often find it difficult to obtain a comprehensive overview of the relevant research and methods because of the large number of published papers in a given field. This potential information overload may leave students feeling overwhelmed. As a result, some students may develop research questions that have already been answered in work that their incomplete literature review overlooked. With the predetermined study design and statistical analyses of the original article as well as highly structured guidelines on how to proceed, replications strike a balance between working independently and receiving the orientation needed to conduct a meaningful empirical study. Students can focus on learning the skills to conduct a specific study and develop the methodological and statistical expertise needed (Frank & Saxe, 2012). Whether students are able to grasp psychological theory can still be assessed because students are asked to describe the motivation for the research.

Focusing Only on (Overly) Simple Methods and Statistics

Using replications motivates students to think about research designs not in terms of the statistical procedure with which they feel most comfortable, but in terms of the framework appropriate for the research question at hand. By re-analyzing the original data under supervision, students are introduced to statistical tests that may go beyond the methods they learned in class. Although this may be challenging for students at first, it ultimately fosters a deeper understanding of statistical tests and statistical modeling in general. The re-analysis also builds the confidence and expertise necessary for analyzing the replication data. Additionally, reproducing the original analyses as well as replicating the experiment feels like “detective work”, which can be exciting for students (Frank & Saxe, 2012; Janz, 2016).

Lack of Orientation and Structure

Reading scientific articles from the perspective of a scientist planning on replicating the presented work instead of a remote reader and consumer of the research helps students to gain insights into the decision processes of the original authors (Janz, 2016). Furthermore, it allows them to reflect on their own methodological choices more systematically. Experiencing the scientific procedure as an observer and evaluator of the original work as well as an active researcher who is in the process of running the replication teaches the value of reproducible routines as well as the importance of open and transparent documentation (King, 1995; Frank & Saxe, 2012). These insights help students develop a professional routine of making their work accessible to readers with little prior knowledge—a skill valued not only in academia but also in many jobs in industry. Furthermore, the replication process itself allows for a structured schedule with consecutive steps. A replication project thereby lends more orientation and structure, which enables students to manage the process of writing a thesis more effectively.

Low Sense of Purpose and Responsibility: Limited Scientific Thinking

Approximately 90% of student projects are never published (Perlman & McCann, 2005); they typically do not satisfy the high quality standards of the research field. This is problematic because students might anticipate from the start that their work will never be read, discussed, or built upon. Thus, they may feel that the sole purpose and goal of their work is to receive their degree. Such conditions keep students from feeling responsible for, and part of, the collaborative effort of conducting scientific work. They may also reduce the tendency for deeper, responsible, and more (self-)critical scientific thinking. In contrast, the experience of responsibility, of having a real impact, and of receiving the merits of contributing to the body of evidence can be assumed to change the experience of scientific work substantially. The replication initiative focuses on teaching students to appreciate research and the scientific method, develop their methodological and experimental skills, and think critically by doing meaningful work. If the replication attempt adheres to scientific standards, students’ theses might even result in their first scientific contribution in the form of a publication.

Misconceiving Scientific Value: It is Not (All) About Finding Statistically Significant Results

Given the strong focus on statistically significant results (i.e., “positive results”) in past research and the resulting replicability problems of empirical science (Francis, 2013), one major task of scientific education today is to safeguard the next generation of scientists against this bias. Conducting replications will teach students not to focus on positive results or the evidence of a single study. Rather, it educates students about the value of cumulative science as well as the evaluation of solid methods irrespective of study results (Cetkovic-Cvrlje et al., 2013; Wagge et al., 2018). Additionally, integrating replications into the standard curriculum raises awareness for the importance of replicability and its role in the scientific process (Höffler, 2013; Carsey, 2014).

Procedural Steps of the HCSP

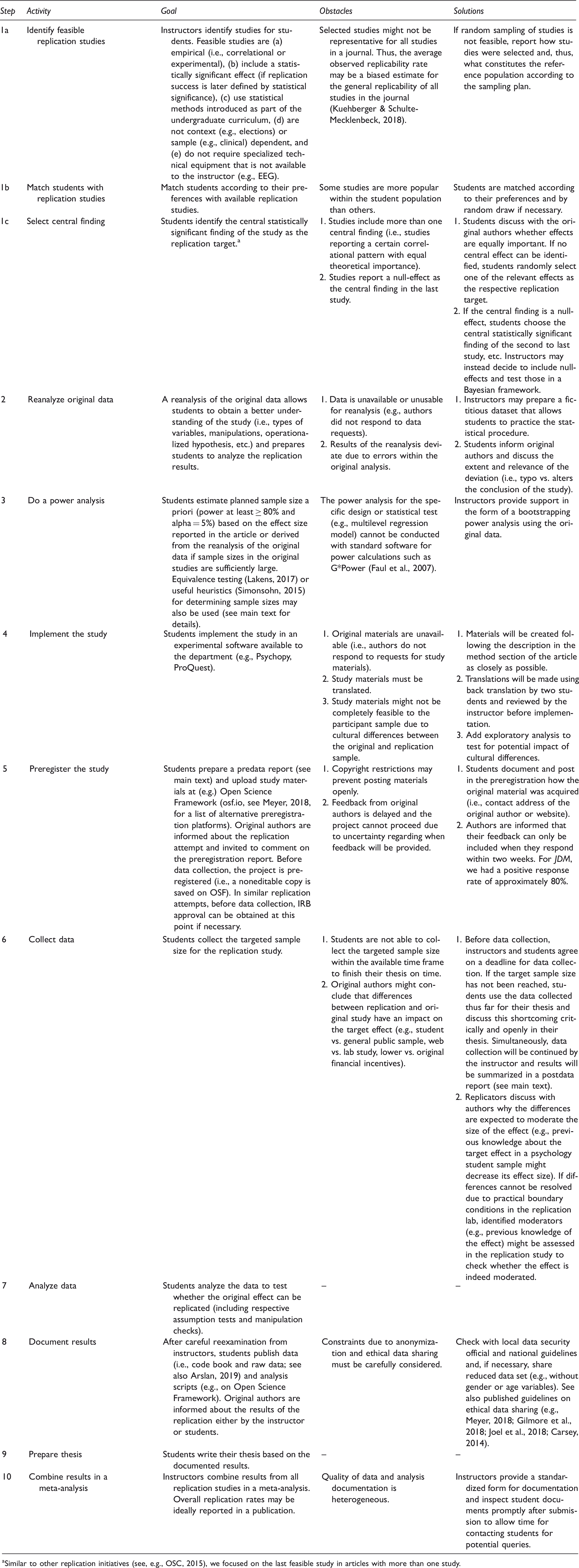

Table 1. Procedural steps of the Hagen Cumulative Science Project

Similar to other replication initiatives (see, e.g., OSC, 2015), we focused on the last feasible study in articles with more than one study.

During the process, students internalize principles of open science through practical application. After preparations by advisors (steps 1a and 1b, Table 1), students develop and apply their methodological skills with a focus on replicability by re-analyzing the original data (step 2), conducting a power analysis (step 3), collecting, adjusting (e.g., translating materials into a different language), or recreating the materials necessary for a replication (step 4), and professionally communicating with the original authors. Students extend their methodological skills concerning transparency and preregistration by documenting and publishing study materials and an analysis plan of their targeted replication on the Open Science Framework (OSF; step 5). Finally, after collecting data from the university’s subject pool (step 6), students learn how to document data and analysis scripts (step 7) as well as how to summarize and discuss their own results in light of the original evidence (step 8). In the theory section of their theses, students critically reflect on arguments made within the replicability debate (step 9). Within this section, they also evaluate the replicability of their target effect by elaborating on the original effect size, type of effect, sample of participants, and so forth. In addition to these educational goals, a secondary aim of the HCSP is to increase the number of direct replications in the field and to analyze replication rates and their moderators using meta-analysis (step 10). Furthermore, the firsthand experience enables us to develop tools and assistance for similar projects to lower the threshold for future replication efforts. In the following, we highlight some aspects listed in Table 1 and provide further explanations.

In step 3, students determine the sample size for the study. Specifically, they calculate the number of participants required to achieve a specific level of statistical power (e.g., 80%) for detecting an expected effect size. When doing so, they must take into account the study design and the properties of the statistical test of the replication study. In our experience, however, defining the targeted effect size in a power analysis is not a trivial matter because there are various ways to do so. Students may use the observed effect size from the original study as an estimate of the population effect size in cases where the original study relies on a sufficiently large sample. Alternatively, students may aim for a higher statistical power (e.g., 95% or above) to compensate for the loss of power due to potentially inflated effect sizes in the literature (Simonsohn, 2015). Students may also define effect sizes according to alternative methods such as equivalence testing (Lakens, 2017) or at least rely on useful heuristics (e.g., 2.5 times the original sample size; Simonsohn, 2015) when deciding how many participants to recruit. If the necessary sample size is not feasible, students may also consider increasing the alpha level (and preregistering this change from the conventional p < .05 level on the OSF) in a compromise power analysis (Faul et al., 2007; Gigerenzer, Krauss, & Vitouch, 2004).
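The sample size reasoning in step 3 can be illustrated with a minimal Python sketch. It is not the tool used in the project, and it rests on several assumptions: a two-group between-subjects design, a two-sided test, and a normal approximation in place of the exact t distribution (exact tools typically return a slightly larger n). The function names and the effect size d = 0.5 are hypothetical and chosen only for illustration.

```python
import math
from statistics import NormalDist

_STD_NORMAL = NormalDist()  # standard normal distribution (mean 0, sd 1)


def n_per_group(d, alpha=0.05, power=0.80):
    """Approximate n per group for a two-sided, two-sample comparison of means.

    Normal approximation: n = 2 * ((z_{1-alpha/2} + z_{power}) / d) ** 2.
    Exact t-based tools typically return a slightly larger n.
    """
    z_alpha = _STD_NORMAL.inv_cdf(1 - alpha / 2)
    z_power = _STD_NORMAL.inv_cdf(power)
    return math.ceil(2 * ((z_alpha + z_power) / d) ** 2)


def approx_power(d, n_group, alpha=0.05):
    """Approximate power for a fixed n per group (same normal approximation).

    The negligible probability of rejecting in the wrong direction is ignored.
    """
    z_alpha = _STD_NORMAL.inv_cdf(1 - alpha / 2)
    return _STD_NORMAL.cdf(d * math.sqrt(n_group / 2) - z_alpha)


def small_telescopes_n(n_original):
    """Heuristic mentioned in the text: 2.5 times the original sample size."""
    return math.ceil(2.5 * n_original)


if __name__ == "__main__":
    d_original = 0.5  # hypothetical original effect size (Cohen's d)
    print("n per group, 80% power:", n_per_group(d_original, power=0.80))
    print("n per group, 95% power:", n_per_group(d_original, power=0.95))
    print("2.5 x original n of 40:", small_telescopes_n(40))
    # Raising alpha at a fixed, feasible n increases power.
    for alpha in (0.05, 0.10):
        print(f"power at n = 40 per group, alpha = {alpha}:",
              round(approx_power(d_original, 40, alpha), 2))
```

The last loop only shows the trade-off that motivates a compromise power analysis: at a fixed, feasible sample size, a larger alpha level buys additional power. It does not implement the compromise procedure itself.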

In step 5, students prepare a preregistration of their replication study. Students use a standard form adapted from the Reproducibility Project (Open Science Collaboration, 2015; cf. Brandt et al., 2014) that can be downloaded from https://doi.org/10.17605/OSF.IO/TR6FB. In the predata report, students briefly describe the original research, indicate their central effect (i.e., we followed the protocol of the Reproducibility Project by targeting the central effect in the final study in an article), report a power analysis, and describe their materials, methods, statistical procedure, etc. (see template).

We also created a standardized website for each replication project at OSF, which can be forked from https://doi.org/10.17605/OSF.IO/ZDSKX. The template website consists of folders where students can upload their predata report, study materials (e.g., experimental software, questionnaires, etc.), data, and (re-)analyses of the original and replication data. In step 8, students document their results in a postdata report. The postdata report is identical to the predata report with an addendum including reports of the statistical analysis and a brief discussion.

Additionally, students upload their raw data, a data sheet explaining the meaning of the variables (see also Arslan, 2019), and the analysis script. We encourage students to use open-source software for statistical analyses (e.g., PSPP, JASP, or R) and simple formats (i.e., txt and csv files) for all uploaded files. We also suggest that files follow a common format in terms of content to facilitate the final meta-analysis (step 10). That is, all files ideally consist of a header including the contact address of the student, the reference of the replicated study, and a link to the OSF website of the project. Ideally, analysis scripts begin by loading the raw data, end with printing the inferential statistics of the target effect, and are extensively commented (i.e., each processing step is commented and the version of the software used for the analysis is documented below the header; see also Rouder et al., 2019 for best practices).
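The suggested script layout (header, software version, loading the raw data, commented processing steps, inferential statistics printed last) might look roughly like the following Python sketch. Everything specific in it is an assumption made for illustration: the header entries are placeholders, the data are made up and embedded as a string so that the example runs on its own, and the two-group pooled-variance t statistic merely stands in for whatever test the original study used.

```python
# Contact:   <student e-mail address>                    (placeholder)
# Reference: <full reference of the replicated study>    (placeholder)
# OSF page:  <link to the OSF website of the project>    (placeholder)
# Software:  Python, version printed at run time below

import csv
import io
import math
import platform
import statistics

# Made-up stand-in for the uploaded raw data file; a real script would
# open the csv file from the project folder instead.
RAW_CSV = """participant,condition,score
1,control,4
2,control,5
3,control,3
4,control,4
5,treatment,6
6,treatment,7
7,treatment,5
8,treatment,6
"""


def load_scores(handle):
    """Step 1: read the raw data and group the dependent variable by condition."""
    groups = {}
    for row in csv.DictReader(handle):
        groups.setdefault(row["condition"], []).append(float(row["score"]))
    return groups


def pooled_t(a, b):
    """Step 2: pooled-variance t statistic, its df, and Cohen's d (b minus a)."""
    n_a, n_b = len(a), len(b)
    df = n_a + n_b - 2
    pooled_var = ((n_a - 1) * statistics.variance(a)
                  + (n_b - 1) * statistics.variance(b)) / df
    diff = statistics.mean(b) - statistics.mean(a)
    t = diff / math.sqrt(pooled_var * (1 / n_a + 1 / n_b))
    d = diff / math.sqrt(pooled_var)
    return t, df, d


if __name__ == "__main__":
    print("Python version:", platform.python_version())
    groups = load_scores(io.StringIO(RAW_CSV))
    t, df, d = pooled_t(groups["control"], groups["treatment"])
    # Step 3: the script ends by printing the statistics of the target effect.
    print(f"t({df}) = {t:.2f}, Cohen's d = {d:.2f}")
```

Keeping the structure identical across projects means that the effect size needed for the meta-analysis can always be found in the final printed line.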

For many students, running a replication study is only their first or second experience (e.g., after a lab practical) with conducting research. Therefore, questions regarding data quality must be considered. To ensure that the replications result in a sound contribution to the research field, instructors must take several measures. For instance, they must ensure that students only replicate feasible studies (step 1a, Table 1). Instructors also need to provide feedback at critical steps of the replication. Instructors may review and comment on preregistration reports together with students to avoid errors in the design and analysis stages of the project before the original authors are informed about the replication and data are collected. Instructors may also provide feedback on the postdata report to avoid the incorrect application of statistical analyses and incorrect inferences from them. We agree with comparable projects reporting that student replications involve a higher degree of transparency and a more close-knit net of quality checks than the original work (Wagge et al., 2018) and thus may also result in similar or better data quality. We plan to publish all projects of the HCSP on the OSF to provide examples and prototypes for instructors and students. We are currently evaluating all projects carefully and rating the quality of each replication attempt in terms of how well the quality criteria for a replication could be fulfilled (deviations often had pragmatic reasons). We plan to publish those evaluations as a report displayed prominently in each project. This might also help other instructors who plan similar projects to assess examples of good replication attempts and to detect target studies for which further replications might be worthwhile.

Finally, it should be mentioned that replications are a valuable format not only for bachelor theses. Student-conducted replication studies can also be extended to be sufficiently complex and demanding for master theses. We adapted the format for students pursuing a master’s degree as follows. In addition to the replication of the target effect, master students independently derive from theory a moderator of the target effect that they then test in the replication study. To be able to generate this novel moderator hypothesis, students must consider the target effect and the theoretical background more intensively and at a broader and/or deeper theoretical level. This extension provides an additional learning opportunity that requires a good understanding of theory and the development of relevant hypotheses that allow for efficient theory testing. Adding this task to the student project might also be feasible for BA theses if more time (and course credits) is allotted to the thesis work in the curriculum. The format may also be adapted for a research methods class: Single steps of the schedule may be practiced (e.g., step 3: how to conduct a power analysis, step 5: how to write and register a predata report on the OSF, etc.) or the entire schedule may be realized in a two-semester course.

Conclusion

In the Hagen Cumulative Science Project (HCSP), students successfully conducted more than 80 direct replication studies to realize their bachelor and master theses.

Within the HCSP framework, students learned key competences of empirical research, as documented, for example, in their written theses. Specifically, through their intense reading and consideration of the published article and the available data as well as through their attempts to reproduce materials, methods, and analyses, students gained deep insights into real, purposeful scientific work and acquired special competencies in the following skills:

evaluating research questions critically by understanding an original study in detail to prepare its replication, reflecting on whether the methods applied in the original study allow the posed research question to be answered, and obtaining firsthand experience of what it takes to conduct and document an empirical study in such a way that other researchers can replicate it.

The format integrates aspects of open science within the individual steps of conducting the empirical project. Students develop concrete skills such as conducting a power analysis and preregistration but also gain a broader understanding of the nature of accumulating knowledge in empirical science.

Studies on the learning experience in traditional theses (e.g., literature reviews, empirical studies investigating new research questions) show that students value the autonomy of working independently and in depth on a self-selected topic, resulting in a strong sense of ownership (Todd et al., 2004). However, the challenge of finding a “researchable” research question (Todd et al., 2004), while still developing methodological and writing skills, can frustrate students and lead to studies of poor quality (Grahe et al., 2012). Structured replication projects can overcome those obstacles.

The HCSP is one of few replication projects that involve students in the attempt to validate empirical results. Similar projects have integrated replication studies at different stages in the curriculum, such as in introductory methods classes (Frank & Saxe, 2012) or advanced research methods classes (Standing et al., 2014; Wagge et al., 2019). The degrees of freedom in the selection of feasible replication projects allow instructors to adjust the individual workload and level of difficulty to students’ abilities. This is possible, for example, by adjusting the size of the group of students conducting a replication, by preselecting studies for replication vs. allowing students to identify suitable studies on their own, and by choosing more vs. less challenging target studies. Students can thus be introduced to replication studies early in the curriculum. Ideally, students learn about the general ideas of transparency and open science (e.g., in an introductory methods class) as well as specific tasks such as conducting their first re-analysis (e.g., in an introductory statistics class) and developing their first preregistration (e.g., in empirical-experimental internships) throughout the course of their studies.

Overall, the framework of the Hagen Cumulative Science Project can be adapted flexibly to different student needs and teaching goals. The format allows instructors to plan, organize, and supervise bachelor and master theses in an effective manner. With this structured approach, students are guided through the process of empirical research (in accordance with principles of open science) and are enabled to make a meaningful contribution to cumulative science.

Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.