Abstract

Introduction

The replication crisis in the behavioral and social sciences spawned a credibility revolution, calling for new open science research practices that ensure greater transparency, including preregistrations, open data and code, and open access.

Statement of the Problem

Replications of published research are an important element in this revolution as part of the self-correcting process of scientific knowledge production; however, the teaching value of replications is still underutilized.

Literature Review

Pedagogical knowledge points to the value of replication as critical to the scientific method of test and retest. Psychology has already begun mass efforts to reproduce previous experiments. Yet, we have very few examples of how analytical and reanalysis replications, after the data come in, contribute to the reproducibility crisis and can be integrated into undergraduate and graduate courses.

Teaching Implications

Replications with quantitative data can be a pedagogical tool for improving student research method skills and introducing them to best research practices via learning-by-doing.

Conclusion

This article aims to start filling this gap by offering guidance to instructors in designing and teaching replications for students at various levels and disciplines in the social and behavioral sciences, including a supplementary teaching companion.

Keywords

Academic debates recently identified the need for better scientific research practices. Replication is one such practice, and teaching it is an opportunity to promote a range of science improvement techniques (Hamermesh, 2007; Höffler, 2014; Janz, 2016; King, 1995). This article showcases how to teach replication using quantitative data as part of undergraduate and graduate curricula. We present key considerations and materials in preparation and implementation of a successful replication course using our collective experiences teaching and conducting replications, and recommendations from pedagogical literature (e.g., Pownall et al., 2023; Stojmenovska et al., 2019). We include a teaching companion (TC), which we reference throughout as “TC.XX,” where “XX” refers to slide number; and we include a sample syllabus (Bauer et al., 2023).

Most behavioral and social research involves analyses of experimental or observational data in numerical format. Attempting to computationally reproduce previous findings using the same numerical data, known as analytical replication, often fails (Breznau et al., 2023; Hardwicke et al., 2018; Pérignon et al., 2023). Teaching replication is an opportunity for student-researchers to learn about reproducibility problems in science, build skills to improve computational reproducibility, and learn transparent and ethical scientific practices (Brandt et al., 2014; Christensen et al., 2019; Frank & Saxe, 2012; Jekel et al., 2020, see TC. 4). For example, even though most researchers in the social and behavioral sciences support data and code sharing, these behaviors have been uncommon in practice (Thoegersen & Borlund, 2022).

Analytical replication is a stepping-stone to learning about re-analyses where student-researchers not only computationally reproduce but also introduce changes to previous statistical methods and models. Thereby they learn to expand or improve previous research. It is even possible to use replication to teach how to conduct experiments. In this article, we focus on the analytical part of any research that has already been conducted and produced numerical results, so that the data are in hand and the student-researcher has a replication goal in mind.

Learning Objectives for Replication Courses Using Quantitative Data

A quantitative replication should introduce students to current research practices, theoretical debates, statistical methods, and research integrity, in alignment with the American Psychological Association’s learning goals for undergraduate psychology majors (APA, 2023, particularly 2.2b and 2.2d). It should prepare them for their own theses and potential academic careers. Objectives might include (a) reading, interpreting, and summarizing previous research findings and methodological techniques, (b) scientific documentation and communication skills, (c) first or new insights into a subfield, (d) independent execution of statistical analyses, (e) identifying and diagnosing questionable research practices (QRPs), (f) learning software and computational social science (CSS) literacy, and (g) building reproducible workflows (Höffler, 2014; Stodden et al., 2014; Stojmenovska et al., 2019). The goals should be clarified in the syllabus and on the first day of class, including instructor reflections on realistic possibilities and limitations of conducting replications (see example syllabus in Bauer et al., 2023).

Prerequisites

Particularly for replication courses, clarifying prerequisites helps align student skills with the tasks, ensures comparable skill levels among classmates, and simplifies the teaching process. Replication can be a practical component of a university curriculum, linking to prerequisites regarding, for example, software or specific methods skills. Imagine an introductory course that teaches quantitative methods using a given software. A replication course could build on this, teaching students to then apply these methods with the same software and obtain real research outcomes while receiving methods credits and possibly making steps forward toward a thesis. Students’ learning outcomes benefit from clearly defined prerequisites that are explicitly communicated. For example, many students had not planned to prepare their thesis work in a methods course, but suggesting this can inspire them.

Depending on course objectives, a basic understanding of bivariate tests, analysis of variance, and multivariate regressions and some exposure to statistical software are essential. Ethical and open science practices favor free and open-source software, and there is a movement underway to shift to using R and RStudio across the social sciences; however, teachers should keep in mind that powerful data-science tools such as R can bring challenges in learning or computational troubleshooting. This is particularly acute if students have no previous exposure to this software. Teachers should reflect carefully on how much time software learning takes and try to balance this against the main goal of replication learning. Software programed with particular attention to user-friendliness and extensive teaching resources could be useful for beginner replication courses (see Bauer et al., 2023, TC. 16).

Selecting Studies

Particularly at the bachelor level, replication courses will be more successful when focusing on studies where data and code are available. To find such studies (see Bauer et al., 2023, TC. 26), consider journals with strong data-sharing policies or articles with open data and open materials badges (Höffler, 2017a; Jekel et al., 2020). Some platforms or journals provide lists of studies that have been or could be replicated, along with their data and code availability (e.g., ReplicationWiki [Höffler, 2017b], Harvard Dataverse’s “journal dataverses” and Journal of Open Psychology Data). Studies could also be selected as contributions to larger ongoing meta-science projects, in which specific studies are to be replicated (Bauer et al., 2023, TC. 28). In such projects, however, replicators must often meet conditions that may not fit with the goals, timeline, or format of the course.

Given prerequisites, software choice might be critical. If a specific software tool is required per curricula, or if the course’s primary aim is to teach that software, replicating studies that used this software facilitates teaching. Yet, this choice depends on whether students conduct computational reproductions seeking to run available code on available data or start “from scratch” by writing their own code and/or obtaining data from an original study’s designated source. For the latter, note that public data can undergo changes in naming conventions or values over time (Breznau, 2016). Further, having students “translate” a study’s methods description and code into a new workflow can produce valuable learning outcomes, but may lead to divergent results (Breznau et al., 2023).
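As a minimal sketch of how students might detect the naming changes in public data mentioned above, the following Python snippet compares the header of a CSV file against the variable names a study's code expects. The function name `check_variables` and the example variable names are hypothetical and chosen only for illustration; real datasets may need format-specific readers.

```python
import csv
import io


def check_variables(csv_file, expected):
    """Compare a CSV header against the variable names a study's code expects."""
    reader = csv.reader(csv_file)
    header = [name.strip() for name in next(reader, [])]
    expected_set = set(expected)
    # Expected names that are absent may have been renamed or dropped.
    missing = [name for name in expected if name not in header]
    # Names in the file that the code does not expect may be the renamed versions.
    unexpected = [name for name in header if name not in expected_set]
    return {"missing": missing, "unexpected": unexpected}


if __name__ == "__main__":
    # Hypothetical data: the original code expects "income",
    # but a later data release calls the variable "inc_2019".
    data = io.StringIO("id,age,inc_2019\n1,30,2000\n")
    print(check_variables(data, ["id", "age", "income"]))
```

A check like this does not resolve a discrepancy, but it gives students a documented starting point for asking whether a variable was renamed, recoded, or removed.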

If a course goal is coverage of a larger subject, then a diversity of studies could be presented. The drawback is the potential difficulty in supervising a variety of projects simultaneously, especially if some studies are outside the instructor’s expertise. Student learning and replication quality might suffer if too many studies are chosen at the same time (Jekel et al., 2020). Nonetheless, with replication itself as the learning goal, or when teaching a subfield topic using replications, selecting studies based on a single topic, method, or hypothesis can be a strategy that provides a focused learning experience, in particular if students engage in peer review or shared learning across each other’s studies (Carless, 2022).

In our experience, students enjoy independently selecting studies as they gain a sense of ownership and motivation regarding the research (Patall et al., 2010). However, this may require that the instructor is willing to supervise the replication of yet-unknown studies. Student selection can also generate asymmetry in the level of difficulty across replications, which leads to concerns about fairness and challenges in course grading. Studies that have been in the news might also be a motivation for students, for example, high-profile scandals of falsified data (Broockman et al., 2020; Simonsohn et al., 2023) or any number of retracted studies found in the Retraction Watch database.

Prescreened, available data is often best because seeking to acquire data directly from authors can take considerable time and may be a dead end. A recent study found that 50% of social scientists refused to share data and code after receiving an email request, and nearly a quarter did not respond (Tedersoo et al., 2021). Where code is not available, students with advanced analytical skills could attempt to reproduce data handling and analytical steps described in the associated manuscript. However, students need to be aware that without the original workflow, it can be challenging to reproduce models described in a published study’s method section even when running what appear to be the same models (Breznau et al., 2023).

Alternatively, instructors can offer a portfolio of prescreened studies from which students can pick their favorite. This requires more work prior to the course. For instance, if the course focuses on statistics training, the replication possibilities could all incorporate the requisite methods. In our experience, the instructor or another researcher or assistant should have successfully replicated all studies in such a portfolio in advance, or have a strong knowledge of the software, data, and methods used in each study. This is most important for undergraduate and early graduate students less equipped to deal with unforeseen complications arising with a replication, and an argument for the use of teaching assistants.

Types of Replications

Different replication types demand different skill levels and are described by dozens of terms that vary both within and between disciplines (see Bauer et al., 2023, TC. 11). We recommend alerting students to these different terminologies. The most basic replication verifies whether original results can be reobtained with the same data and models, usually using the same code (“analytical replication” or “checking verifiability,” Fidler & Wilcox, 2018; Freese & Peterson, 2017). Some replications include not only identical statistical tests, but also reproduce an experimental design. This requires more resources and time and is outside our focus here.

Instructors should be aware that even the most basic studies are rarely 100% replicable (Breznau et al., 2023). Many attempts to reproduce previous methods become new replications because the original data, code, or methods are questionable, poorly documented, or not fully available. This means confounding factors are introduced that may lead to different results and even new discoveries. Alternatively, a replication could deviate from an original study’s statistical methods intentionally to provide a better, more theoretically or causally driven test of the same hypothesis (what we label “reanalysis”; Nosek & Errington, 2017). Replication courses could combine approaches, starting with computational work to verify a previous result and then introducing new analytic choices such as data weighting or adding potentially confounding variables, which offers students an opportunity to learn about the causal inference process (see Bauer et al., 2023, TC. 62).

Assessment

With analytic replication as the primary learning objective, a final replication report with a reproducible workflow and whatever results the student was able to produce is an ideal output for grading. If topical or methodological goals exist, however, assignments or tests during the course are preferable. For advanced graduate students, a journal article-style paper about a direct replication project might be most beneficial. Students could be assigned to propose an extension and improvement of a study for which they conducted a computational reproducibility check. This opens the possibility to teach preregistration: after students computationally reproduce results, they think logically and theoretically about the hypothesis and propose an alternative model before analyzing the data, or before being given a second half of a randomly split sample from existing data (Blincoe & Buchert, 2020). Transparent documentation of materials and construction of a reusable data package including a codebook and well-commented code are also excellent grading possibilities. In more CSS-focused courses this could be an opportunity to teach virtual computing environments for “push button” reproducibility (Liu & Salganik, 2019). Peer review reports among the students can be graded and are an opportunity to learn how science “works” in addition to students obtaining qualitative feedback.
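The randomly split sample mentioned above can be prepared in a few lines. The following Python sketch shows one possible way to do it, assuming each case in the existing data has a unique identifier; the function name `split_sample` and the seed value are hypothetical. Fixing and documenting the seed makes the split itself reproducible.

```python
import random


def split_sample(case_ids, seed):
    """Randomly split case IDs into an exploration half and a holdout half."""
    ids = sorted(case_ids)     # fixed starting order, so the seed fully determines the split
    rng = random.Random(seed)  # local generator; does not touch global random state
    rng.shuffle(ids)
    midpoint = len(ids) // 2
    return ids[:midpoint], ids[midpoint:]


if __name__ == "__main__":
    # Hypothetical case IDs 1..10; the instructor keeps the holdout half
    # until the student has registered an alternative model.
    exploration, holdout = split_sample(range(1, 11), seed=2023)
    print("exploration:", sorted(exploration))
    print("holdout:", sorted(holdout))
```

In a course setting, the instructor could run the split and release only the exploration half until the preregistration is submitted.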

Depending on the course goals, instructors might consider having students work in teams and adapt grading schemes accordingly. Grading should not be influenced by replication success, except in cases of direct reproduction of known executable code. Instead, aspects capturing learning achievement such as methodological rigor, completeness of documentation, and knowledge of the literature are preferable (Bauer & Ganser, 2020).

Communication With Original Authors

Although researchers are not obliged to inform original authors about a replication attempt, doing so introduces students to essential forms of professional scientific communication (Janz & Freese, 2020) and is necessary in the still common case where data and code are not available online (Zenk-Möltgen et al., 2018). This again points to the advantages of replicating preselected studies with available materials. Further, already-established contact could yield authors’ advice during the reproduction attempt. Although contact with original authors could reduce misunderstanding or unnecessary struggles, we recommend caution about contacting authors prematurely or too frequently (see Bauer et al., 2023, TC. 30, 58).

Communications are preferably first approved by the instructor and sent with the instructor mentioned and in carbon copy. Instructors should prepare students for the possibility that, even after clear, concise, and courteous emails, many authors might be slow to respond or may never answer (Tedersoo et al., 2021).

Sharing experiences with author communication can be an enriching component of classroom discussions in replication courses. It allows students to gain insights into the complexities and variations in data availability, as well as the factors that may influence authors’ responsiveness to replication requests.

We offer some email templates to assist students in a professional, polite, and knowledge-focused communication approach (see Bauer et al., 2023, TC. 32). We recommend that students mention their goal of learning from replications to help defuse potential conflict, and that they neither shame (e.g., demanding materials on ethical grounds) nor hunt (e.g., claiming the original findings seem bogus).

Once a replication is completed, we encourage students to communicate replication results to the original authors under most circumstances. This correspondence should include the hypothesis tested, the specific result and an interpretation of replication success, and access to any replication report and materials. Throughout, we recommend encouraging students to keep instructors in the loop of the communication process. All parties involved should be reminded that replications that find different results should not automatically be cast in a negative light or problematized (Stanley & Spence, 2014).

What and Why

Explaining the importance of replication is crucial to students’ motivation and development as budding scientists. In the first course meetings, instructors should discuss the reliability crisis and how replications can (and cannot) resolve it (see Bauer et al., 2023, TC. 4). We suggest presenting replication as an ideal research practice: a process of substantiating knowledge to make it more reliable and useful. This is an ideal time for discussion of Mertonian norms of science, in particular, disinterestedness on the part of the student-researcher in the nature or direction of results (Merton, 1973).

Cooking analogies may motivate students. A recipe provides the essential steps to “replicate” a specific meal, yet the outcome likely varies across chefs. In research, these differences in outcomes can stem from poorly organized code, data unavailability, or lack of version control (Breznau et al., 2023; Eubank, 2016; Hardwicke et al., 2018; Stockemer et al., 2018). This is an exercise for students to learn that “published” does not equal “true” and that mistakes and inconsistencies are part of science. Presenting some examples of mistakes in published research can be helpful here (see Bauer et al., 2023, TC. 60). When evaluating inconsistencies in coding and analyses, students can focus on whether the direction, strength, and statistical significance of the focal effects are consistent with the findings of the original study.
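The three comparison criteria named above (direction, strength, and statistical significance) can be made explicit in a small helper. The following Python sketch is one possible operationalization, not an established standard: the `Estimate` class, the function `compare_estimates`, the significance level `alpha`, and the relative tolerance `rel_tol` are all assumptions introduced for illustration, and instructors would need to choose thresholds that suit their field.

```python
from dataclasses import dataclass


@dataclass
class Estimate:
    """A focal effect: point estimate and its p-value."""
    coef: float
    p_value: float


def compare_estimates(original, replication, alpha=0.05, rel_tol=0.10):
    """Compare two estimates on direction, strength, and significance.

    alpha and rel_tol are illustrative thresholds, not standards.
    """
    same_direction = (original.coef > 0) == (replication.coef > 0)
    # Relative difference in magnitude, guarded against a zero original.
    if original.coef == 0:
        similar_strength = replication.coef == 0
    else:
        rel_diff = abs(replication.coef - original.coef) / abs(original.coef)
        similar_strength = rel_diff <= rel_tol
    # Both estimates fall on the same side of the significance threshold.
    same_significance = (original.p_value < alpha) == (replication.p_value < alpha)
    return {
        "same_direction": same_direction,
        "similar_strength": similar_strength,
        "same_significance": same_significance,
    }


if __name__ == "__main__":
    # Hypothetical numbers for illustration only.
    original = Estimate(coef=0.42, p_value=0.003)
    replication = Estimate(coef=0.39, p_value=0.011)
    print(compare_estimates(original, replication))
```

Reporting the three checks separately, rather than a single pass or fail verdict, keeps the discussion on where and why results diverge.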

Teaching Reproducible Workflows

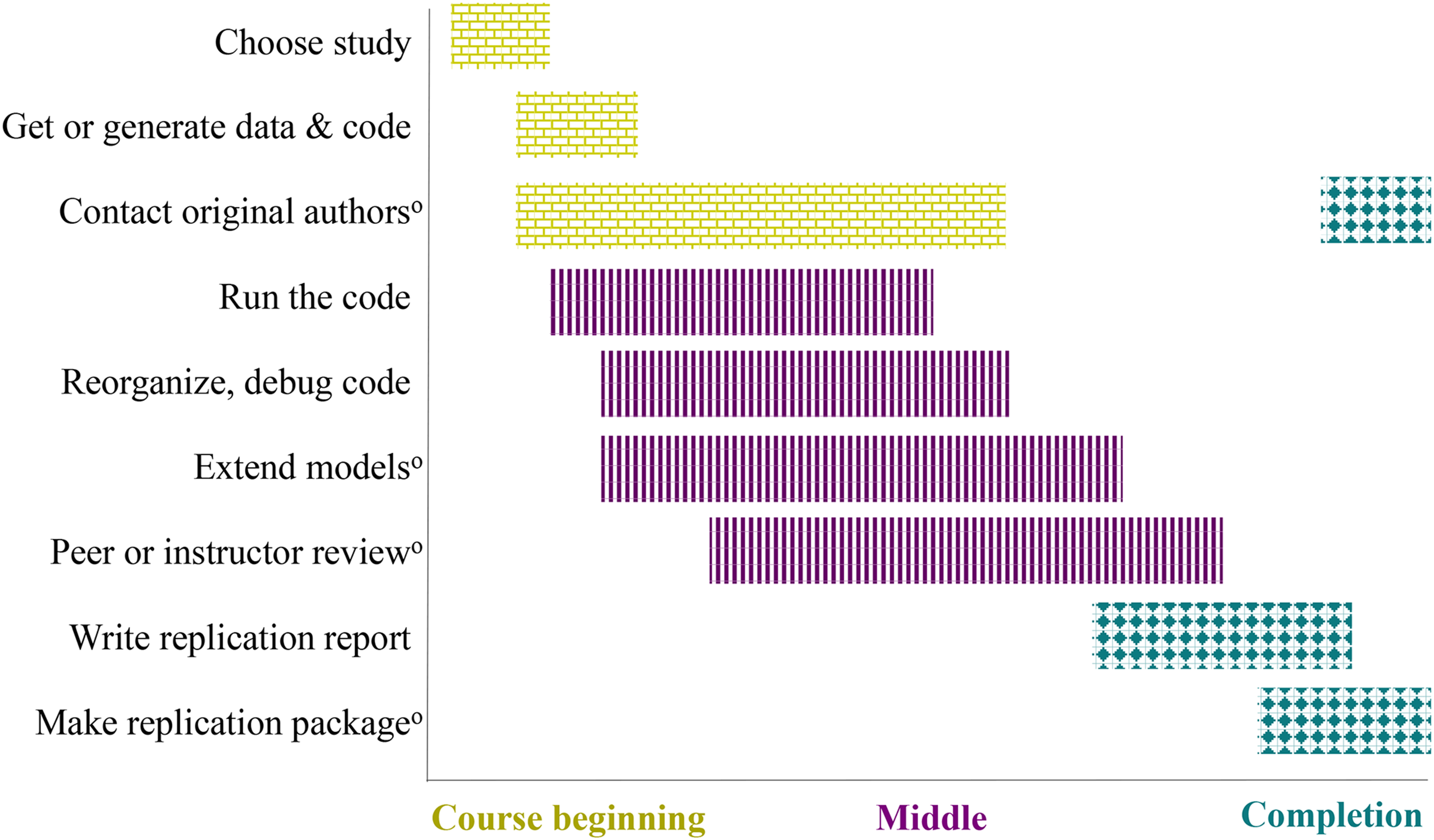

Students should be taught to carefully document every step in their research, starting with the selection of the study (see Figure 1). The bulk of the workflow documentation will take place in the middle phase of the course (striped bars, Figure 1).

Figure 1. A typical analytical replication course workflow. Note: "o" marks steps that are optional, but strongly encouraged.

Shared code often has additional sequences not used in a published article. Depending on the course goal, we recommend instructing students to isolate only the relevant code. We also suggest students reorganize “sloppy” code. Instructors can help by providing examples of well-documented syntax (i.e., literate coding, see Bauer et al., 2023, TC. 45). Getting the code to run may be more difficult than imagined. We observed that students invest considerable effort in changing file paths or directories, installing packages, moving forward or backward in software or package version history, and identifying and debugging errors.
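Two of the obstacles listed above, hard-coded file paths and undocumented software versions, can be reduced with a few habits. The following Python sketch illustrates them under the assumption that a course uses Python; the same ideas apply in R or other software. The function names `environment_report` and `project_path` and the folder names in the example are hypothetical.

```python
import platform
import sys
from importlib import metadata
from pathlib import Path


def environment_report(packages):
    """Return the Python version, operating system, and installed package versions."""
    report = {
        "python": sys.version.split()[0],
        "platform": platform.platform(),
    }
    for name in packages:
        try:
            report[name] = metadata.version(name)
        except metadata.PackageNotFoundError:
            report[name] = "not installed"
    return report


def project_path(*parts, root=None):
    """Build a path relative to a project root instead of hard-coding an absolute path."""
    base = Path(root) if root is not None else Path.cwd()
    return base.joinpath(*parts)


if __name__ == "__main__":
    # Save this report alongside the replication results.
    for key, value in environment_report(["numpy", "pandas"]).items():
        print(f"{key}: {value}")
    # Resolves against the current working directory on any machine.
    print(project_path("data", "raw", "survey.csv"))
```

Including such a report in the replication materials lets a later reader see which versions produced the results, which is often the first question when code no longer runs.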

Working intensively with code leads to a deep understanding of it, making students more likely to discover mistakes, unreported decisions or QRPs that might lead students to new findings or motivate an extension. Typical extensions include alternative measurement strategies, treatment of missing data, correcting mistakes, alternative treatments and controls, adding newer waves or types of data, checking influential cases, more advanced statistical models, and more sophisticated visualizations.

Documenting and Disseminating Results

We recommend that students write a replication report to document their findings. Instructors could provide a replication report template with a reproducible, well-documented workflow, defined essentially as one that can be reproduced most easily by others (see Bauer et al., 2023, TC. 35). In advanced or technical courses, instructors can have students build apps or use virtual computing environments for “push-button” replications (Liu & Salganik, 2019).

We encourage students to make their replications public, which demonstrates to them that their work can impact science. Although students might aim to produce a journal-style paper, this is not always possible in a short period of time. Therefore, we suggest that students seek out other possible scientific communication formats. Students could post comments on a ReplicationWiki discussion page (Höffler, 2017b) or directly to the original publication (as practiced in journals such as f1000 and Open Research Europe). Blog posts or podcasts, requiring less time investment, are also an option (Vainieri et al., 2023). Students could publish working papers (see Bauer et al., 2023, TC. 23) or consider submitting to relevant journals, potentially to the journal that published the original study, journals dedicated to open science (e.g., ReScienceX, Roesch & Rougier, 2020) and replication studies (Pesaran, 2003), or to methods-focused journals.

Students may worry about reputational backlash in case of an unsuccessful replication attempt. The risks and benefits of publicizing replication results should be discussed during the course. This also holds for how authorship of potential publications will be decided in case of collaborations. We encourage authorship declarations detailing who did what (e.g., using CRediT). Students should become aware that having an impact on science may mean continued work after the course. Instructors should consider that knowing they remain available for advice afterwards might be the key to motivating students to publish their replication results.

Regardless of publications, the code and workflow should be made transparently available to benefit other researchers (e.g., via OSF or GitHub).

Evaluation

In evaluations of replication courses implemented by the authors, student feedback suggests that learning goals such as understanding how empirical research is put into practice can be achieved. For instance, students noted that they felt “prepared to [conduct research] according to scientific standards,” “learned [about] practical implementations e.g., how to preregister a study, how to simulate power, how research funds influence sample size,” and thought that “similar courses, which are practically oriented and organized like a small project, should be offered more often. They are a great opportunity for students to see how working as a scientist may look like.” Personal communication with students in replication courses further suggests that critical discussions about calibrating beliefs about true effects were an important addition to the curriculum. While much university teaching is about imparting knowledge, raising students’ awareness about the difficulties involved in achieving scientific certainty from evidence-based research adds an important level of complexity that is relevant beyond working in academia. In sum, critically appraising empirical research is a much-needed skill, and implementing replication courses can support skill-building in this area.

Footnotes

Authors' Note

Johanna Gereke is also affiliated with Johannes Gutenberg University Mainz, Institute of Sociology, Mainz, Germany. Jan H. Höffler is also affiliated with EQ-Lab, Guayaquil, Ecuador.

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: We thank the Academy of Sociology (Akademie für Soziologie) for funding a replication workshop that developed into this paper.

Open Practices

Notes

Correction (April 2024):

The affiliation of a co-author, Jan H. Höffler, was updated.