Abstract

Open educational resources (OER) are increasingly attractive options for reducing educational costs, yet controlled studies of their efficacy are lacking. The current study addressed many criticisms of past research by accounting for course and instructor characteristics in comparing objective student learning outcomes across multiple sections of General Psychology taught by trained graduate student instructors at a large research-intensive university. We found no evidence that use of the OER text impeded students' critical thinking compared to use of a traditional textbook, even after accounting for instructor characteristics. To the contrary, we found evidence of a slight increase in content knowledge when using an OER text. Importantly, this effect was driven by improvements from both our lowest-performing students and our highest-performing students. Moreover, student learning outcomes were not influenced by instructor experience, suggesting even novice instructors fared well with OER materials. Finally, students from traditionally underserved populations reported the lower cost of the book had a significantly higher impact on their decision to enroll in and remain enrolled in the course.

Instructors face a dilemma in selecting course textbooks. On one hand, textbooks are important for students' success (Darwin, 2011; Skinner & Howes, 2013), and may even influence student learning more than the teaching methods themselves (Svinicki & McKeachie, 2014). On the other hand, students across disciplines report using required textbooks far less than expected (e.g., Clump, Bauer, & Bradley, 2004; Podolefsky & Finkelstein, 2006), with around 60% of students avoiding the purchase altogether (Kinzie, 2006), likely due to prohibitive costs (see Redden, 2011).

Textbook costs have increased at sharper rates than tuition, fees, or housing (U.S. Bureau of Labor Statistics, 2016), and together these rising costs increase demand for financial aid (Ma, Baum, Pender, & Welch, 2017; Popken, 2015). Given that earning a college degree is associated with greater income, health, relationship stability, and overall quality of life (Hout, 2012), increasing access to higher education by reducing costs, without sacrificing educational quality, is a social justice imperative. Indeed, many have challenged the notion that loan-based approaches are effective at ensuring upward mobility for low-income families and are instead calling for other forms of cost reduction (Goldrick-Rab, Kelchen, Harris, & Benson, 2016).

The most effective approach to reducing textbook costs is arguably the adoption of open educational resources (OERs), which are any educational materials found in the public domain or introduced with an open publishing license (Butcher, 2015). Freely accessible to the student, OERs can be copied, printed, modified, and distributed at little to no cost (Bissell, 2009), and can be accessed and updated indefinitely. Furthermore, the digital form of most OERs includes many of the same benefits as e-texts (McFall, 2005, but cf. Singer & Alexander, 2017). Accordingly, OERs have become celebrated as a viable alternative that can drastically reduce financial burdens on students.

Despite these advantages and plentiful options (MERLOT, 2015), OERs still show low adoption rates by faculty, likely due to two key factors: low awareness of availability (Allen & Seaman, 2014), and avoidance due to expectations of low quality (Pitt, 2015). Interestingly, preference ratings for OERs are as good as or better than those for traditional alternatives according to instructors' reviews (Allen & Seaman, 2014; Hilton, 2016; Jhangiani, Pitt, Hendricks, Key, & Lalonde, 2016), particularly within social sciences (Fischer, Ernst, & Mason, 2017). Students seem to prefer OERs as well (Jhangiani & Jhangiani, 2017), and use of OERs has been associated with fewer course withdrawals (Clinton, 2018).

In synthesizing the results of a meta-analysis, Hilton (2016) concluded that “a strong majority of students and teachers believe that OERs are as good or better than traditional textbooks” (p. 585). Of course, as good or better perceptions of quality are far less important than as good or better learning outcomes. Here, the evidence is less clear, particularly within psychology. Some evidence indicates few, if any, differences in student learning between OER and traditional textbooks (Clinton, 2018; Hilton, 2016). However, much of the research examining the effects of OERs on student learning outcomes has been criticized (Griggs & Jackson, 2017a, 2017b), particularly regarding a failure to control for a range of potential confounds.

The current study addresses these criticisms. First, to address the criticism that past research has failed to control for instructor characteristics (Griggs & Jackson, 2017b), we used instructor experience and students' ratings of instructor characteristics (e.g., authoritative, creative, respectful) to account for differences in teaching proficiency between instructors. Further, we used multiple course sections taught by similarly trained graduate student instructors, a previously unstudied population of instructors, allowing us to average effects across instructors to control for instructor variability. Second, to address the criticism that past research has failed to control for course characteristics (such as course difficulty; Griggs & Jackson, 2017b), we used identical outcome measures across all course sections and textbook formats as opposed to course grades. Third, to address the criticism that past research has not been representative of the general college student population and thus lacks external validity (Griggs & Jackson, 2017b), we collected data from a large Highest Research Activity (Carnegie Classification of Institutions of Higher Education, 2017) public university.

We report data collected as part of a long-standing curriculum assessment program before and after a switch to an OER text in General Psychology. Our standard assessment involves measures of content knowledge and critical thinking—key learning outcomes for both the course itself (Gurung et al., 2016; Jhangiani & Hardin, 2015) and the university's General Education curriculum—in all sections of the course twice each semester. This created an opportunity to assess the impact of the switch to an OER text on student learning in ways that address many of the criticisms of prior work. In addition, we examined whether the cost of the assigned text influenced enrollment decisions, particularly among historically underserved students.

Method

Participants

Participants included 3,678 students enrolled in General Psychology during the Fall 2016 (n = 1,896) and Fall 2017 (n = 1,782) semesters at a large public university in the southeastern United States. However, as noted below, most of our analyses relied on smaller subsets of students. All students enrolled in Fall 2016 used the Lilienfeld, Lynn, Namy, and Woolf (2013) traditional textbook and all students enrolled in Fall 2017 used the OpenStax (2017) OER textbook. In both years, the majority of non-honors sections were taught by graduate teaching assistants (GTAs) in a traditional face-to-face format that met for 150 minutes per week. In Fall 2016, this included two late afternoon or evening sections with 54 and 59 students, and nine other sections with 148–150 students each. In Fall 2017, there were six GTA-taught sections, with 146–166 students per section. There were also two to three smaller (25–30 students) honors sections each semester, taught by either experienced GTAs or faculty. Finally, in both semesters there was a larger (n ∼ 380) faculty-taught section that met twice a week for 50 minutes each, with a smaller (n ∼ 30), once-a-week graduate-student-led discussion section. In Fall 2017, there were also two larger (n = 521) faculty-taught sections that utilized a hybrid format with several online assignments replacing one of the three 50-minute class sessions, along with 14 GTAs who facilitated smaller within-class cohorts within the larger face-to-face class sessions. As described below, because of these differences in format, most of our analyses focus only on the GTA-taught sections.

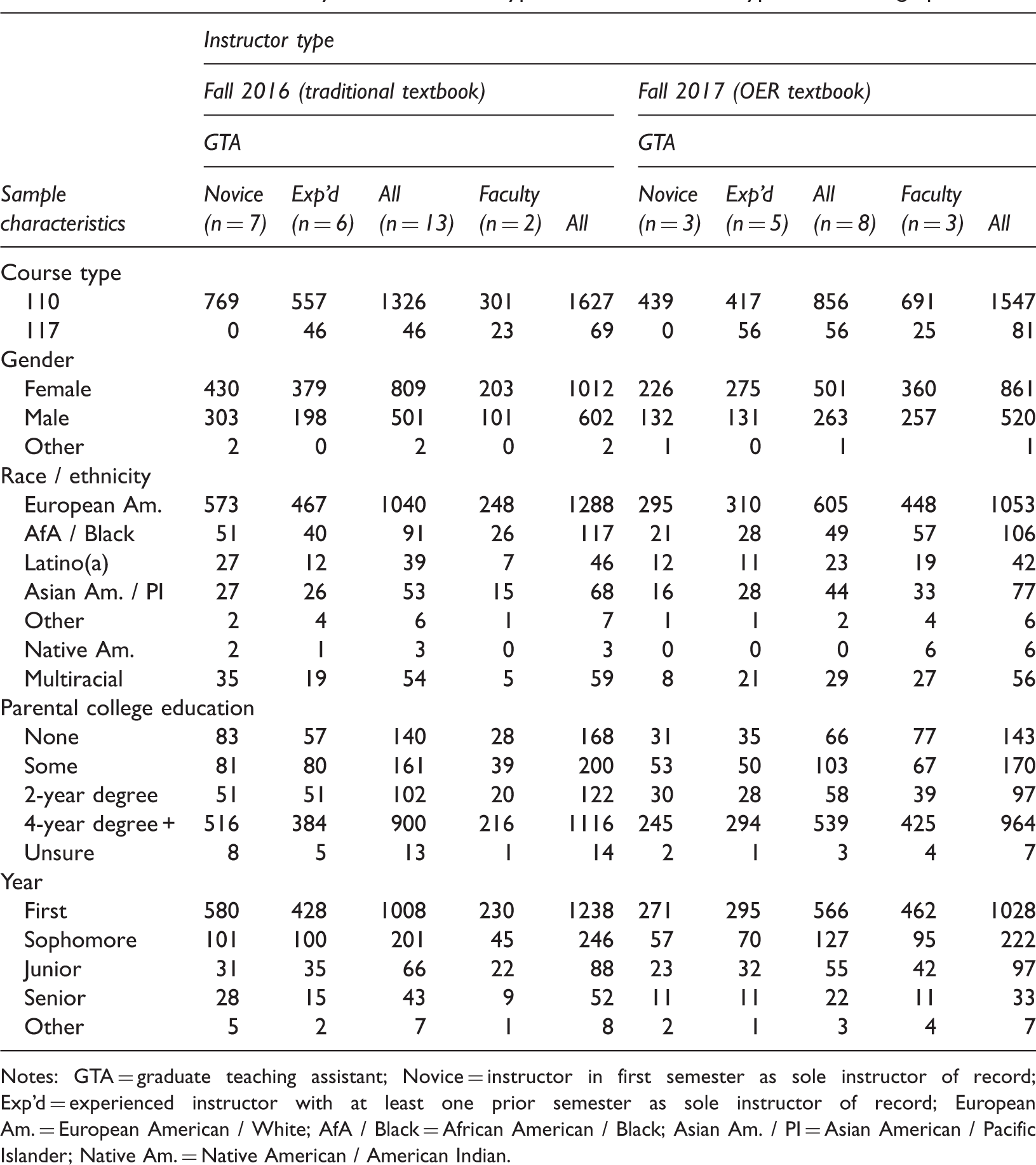

Table 1. Number of Students by Each Instructor Type, Semester, Course Type, and Demographics.

Notes: GTA = graduate teaching assistant; Novice = instructor in first semester as sole instructor of record; Exp'd = experienced instructor with at least one prior semester as sole instructor of record; European Am. = European American / White; AfA / Black = African American / Black; Asian Am. / PI = Asian American / Pacific Islander; Native Am. = Native American / American Indian.

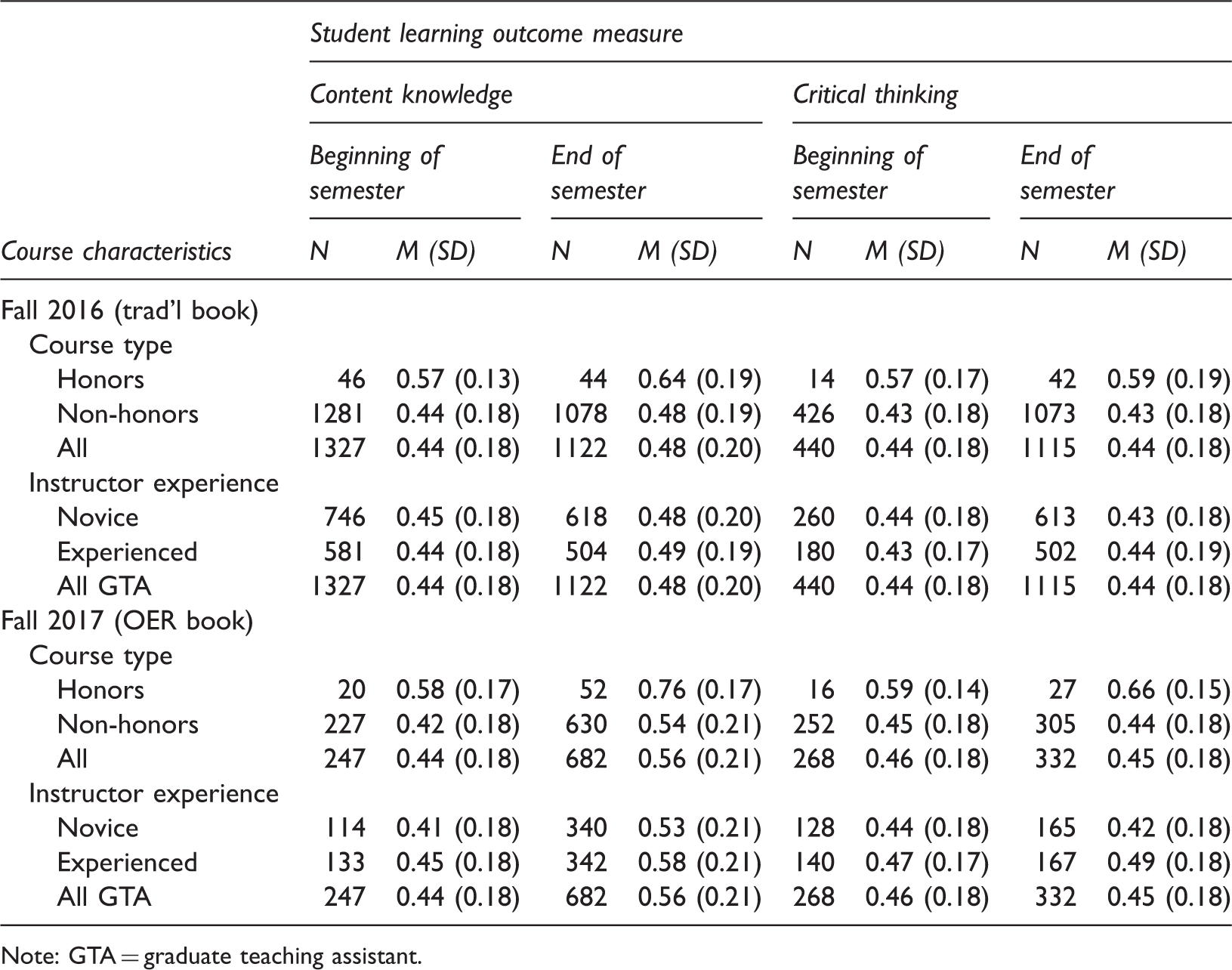

Table 2. Content Knowledge and Critical Thinking Scores as a Function of Time, Semester, Course Type, and Instructor Experience for GTA-taught Students.

Note: GTA = graduate teaching assistant.

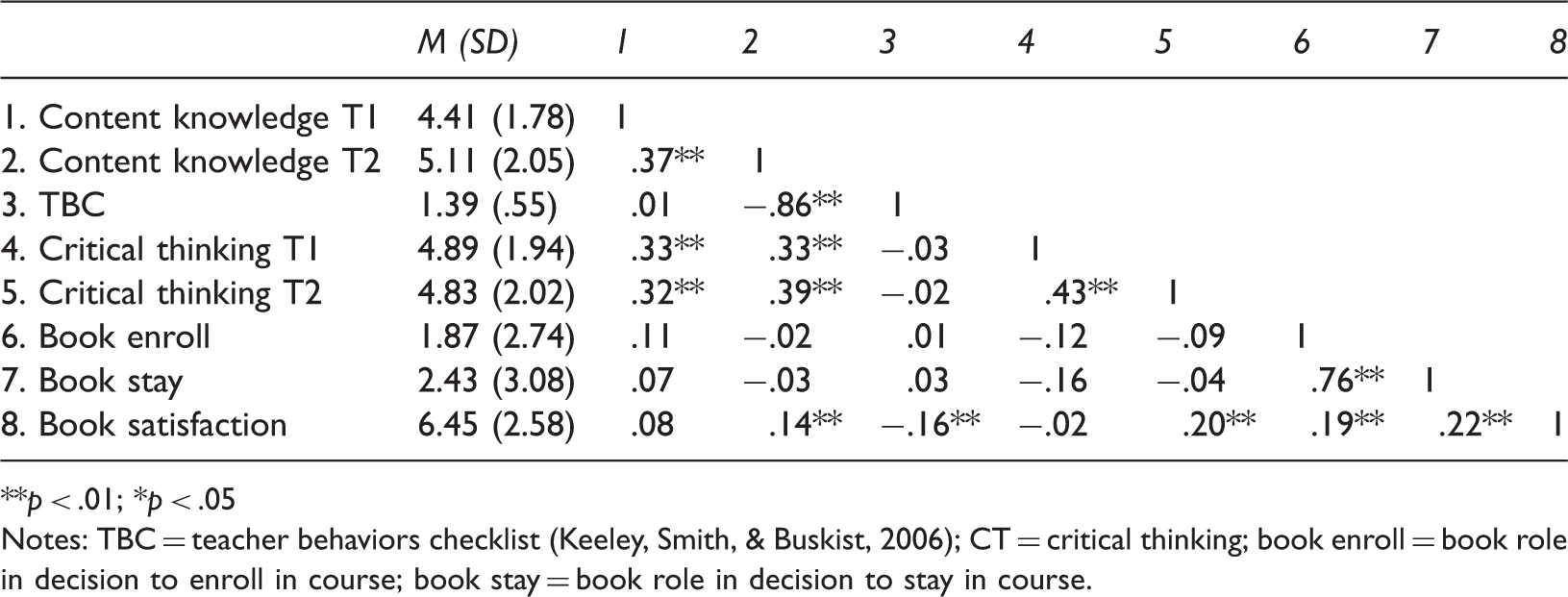

Table 3. Correlation Matrix.

\*\*p < .01; \*p < .05

Notes: TBC = teacher behaviors checklist (Keeley, Smith, & Buskist, 2006); CT = critical thinking; book enroll = book role in decision to enroll in course; book stay = book role in decision to stay in course.

In Fall 2016, the course used an “Inclusive Access” model in which all students were automatically charged for and given access to the required traditional textbook in an electronic book (eBook) format, unless they opted out; 13.1% of students did so. Of course, it is unknown if any of these students obtained access to the book in some other way. In Fall 2017, 9.8% of students who completed the end-of-semester assessment reported never accessing the OER text, despite having free access. The most common reason had to do with feeling the textbook was not needed to do well in the class. Given that we are less interested in the question of how “those who had access” performed than in “when OER is assigned, how do students perform?”, we include all students in our analyses, regardless of whether they reported using the textbook.

Procedure

To encourage completion of the program assessment measures, students received nominal course credit (≤1% of the overall grade) for each of the two assessments; data are used for research purposes following IRB-approved procedures. In each semester, Time 1 (T1) occurred just after the semester add / drop deadline, typically ∼2 weeks after the start of the semester, and Time 2 (T2) occurred at the start of the final week of classes. At each time point, students received a personalized link to the online program evaluation assessments and had one week to complete them in order to earn credit toward their course grade. All students first completed questions about their reasons for enrolling in the course (T1) and suggestions for improving the course (T2) before the content and critical thinking measures described below (in random order), followed by an opportunity to provide feedback on their instructor (T2 only). Measures were administered in slightly different ways to reduce burden on students. In Fall 2016, at both T1 and T2, all students received the content and critical thinking items. In Fall 2017, all students completed the critical thinking items at T1, with half randomly assigned to complete them again at T2; for the content items, half of students were randomly assigned to complete the items at T1 and all students completed them at T2.

Measures

Content knowledge

A 24-item multiple-choice test developed by the first author for program evaluation purposes has been used to assess learning outcomes over the previous 10 semesters. These items were developed without reference to any specific textbook and cover the breadth of content instructors were required to cover in the course, including research methods, neuroscience, sensation and perception, learning, memory, child development, social psychology, cognitive biases, disorders, and stress. The majority of the items required students to apply knowledge learned from the course. For example: “Pat and Chris broke up, and Pat has been feeling sad ever since. Which of the following would be important in determining whether Pat is experiencing normal sadness after a break-up versus something more serious, like clinical depression?” Another example: “Which of these is the better example of confirmation bias?” A copy of the items is available on request from the first author.

The same 24 items were used in both semesters and at both time points. As part of our efforts to reduce the time required for students to complete the assessment battery, at the end of the semester in Fall 2017, the online survey tool used to deliver the assessment battery randomly selected 10 of the 24 items for each student. Students completed all 24 items at the other time points. To facilitate comparisons across semesters, we randomly selected 10 items for each participant for Fall 2016 T1, Fall 2016 T2, and Fall 2017 T1 and used all 10 administered items for Fall 2017 T2. Content knowledge scores were calculated based on only these 10 items. Thus, for all time points, we summed the number of correctly answered questions and divided by 10 to yield a proportion-correct total score that may range from 0 to 1.
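The scoring rule described above can be illustrated with a minimal sketch. It assumes each student's responses are stored as a mapping from item index to chosen option; the answer key, the responses, and the function names are hypothetical placeholders and do not reflect the actual test items or the software used in the study.

```python
import random

N_ITEMS = 24   # length of the content knowledge test
N_SCORED = 10  # number of items that count toward each score

def sample_items(rng, n_items=N_ITEMS, n_scored=N_SCORED):
    """Randomly choose which items count toward one student's score."""
    return sorted(rng.sample(range(n_items), n_scored))

def content_score(responses, answer_key, item_ids):
    """Proportion of the selected items answered correctly (0 to 1)."""
    correct = sum(1 for i in item_ids if responses.get(i) == answer_key[i])
    return correct / len(item_ids)

# Hypothetical answer key and one hypothetical student's responses.
rng = random.Random(0)  # fixed seed so the illustration is repeatable
answer_key = {i: "ABCD"[i % 4] for i in range(N_ITEMS)}
responses = {i: ("ABCD"[i % 4] if i % 3 else "X") for i in range(N_ITEMS)}

selected = sample_items(rng)  # 10 of the 24 items for this student
score = content_score(responses, answer_key, selected)
print(selected, score)
```

For the Fall 2017 end-of-semester assessment, where each student saw only 10 items, `item_ids` would simply be the 10 items that were administered to that student.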

Critical thinking

Critical thinking was measured using 11 multiple-choice items that were originally developed by the first author while at another institution to assess learning outcomes in social sciences general education courses. Originally, 20 items were written to assess students' abilities to “identify and critique alternative explanations for claims about social issues and human behavior” (10 items) and to “demonstrate knowledge of the appropriate methods, technologies, and data that social and behavioral scientists use to investigate the human condition” (10 items). The items were initially developed based on Lawson's (1999) list of questions that reflect basic critical thinking skills in psychology, and then broadened to be relevant to all social and behavioral sciences disciplines. The items were intentionally designed to capture common misunderstandings to help inform curriculum delivery. An example item stem is: “You read that researchers studying the effects of divorce on children found that children raised in single-parent homes are more likely to get into trouble at school than children raised by two parents. The researchers conclude that children from single-parent families should be considered at-risk for problems in school. What, if anything, is wrong with this conclusion?”

Unpublished data collected by the first author in 2011 showed that students enrolled in senior-level social science capstone courses outside of psychology (n = 31) scored significantly higher than first-year students (n = 40) enrolled in General Psychology. Moreover, 12 of the 20 items distinguished scores on a commercially available and well-validated measure of critical thinking skills; for each of the 12 items, students who correctly answered the item scored significantly higher on the commercially available test than students who did not correctly answer the item. These unpublished data were used to select the 11 critical thinking items used in the program evaluation assessments and reported here. The number of correct answers is summed and divided by 11 to yield a proportion-correct score that may range from 0 to 1. A copy of the items is available on request from the first author.

Instructor characteristics

Students completed the teacher behaviors checklist (TBC; Keeley, Smith, & Buskist, 2006) for their General Psychology instructor as part of the end-of-semester assessment. Students rated their instructor on 28 characteristics (e.g., sensitive and persistent) with behavioral indicators (e.g., “Makes sure students understand material before moving to new material, holds extra study sessions, repeats information when necessary, and asks questions to check student understanding,” p. 85) using a 5-point scale from 1 (My instructor never exhibits / has exhibited these behaviors reflective of this quality) to 5 (My instructor always exhibits / has exhibited these behaviors reflective of this quality). Responses were averaged to yield a total score (α = .97 in the current sample) that was used as an indicator of instructor characteristics in our analyses.
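As an illustration of how a TBC total score and its internal consistency could be computed, the sketch below averages one student's item ratings and applies the standard Cronbach's alpha formula to a ratings matrix. The ratings are made-up values for three items (the real checklist has 28), and the function names are hypothetical; this is not the authors' analysis code.

```python
from statistics import mean, variance

def tbc_total(ratings):
    """Average one student's item ratings (1 to 5) into a total score."""
    return mean(ratings)

def cronbach_alpha(rows):
    """Cronbach's alpha for a list of per-student rating lists.

    alpha = k / (k - 1) * (1 - sum of item variances / variance of sum scores)
    Assumes every row has the same number of items and that sum scores vary.
    """
    k = len(rows[0])
    item_vars = [variance([row[j] for row in rows]) for j in range(k)]
    total_var = variance([sum(row) for row in rows])
    return (k / (k - 1)) * (1 - sum(item_vars) / total_var)

# Hypothetical ratings: four students rating one instructor on three items.
ratings = [
    [5, 4, 5],
    [4, 4, 4],
    [3, 2, 3],
    [5, 5, 4],
]
totals = [tbc_total(row) for row in ratings]
alpha = cronbach_alpha(ratings)
print(totals, round(alpha, 2))
```

Values of alpha near 1, such as the .97 reported here, indicate that the items move together closely enough that a single averaged score is a reasonable summary.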

OER book impact

In Fall 2017 only, participants were asked how they accessed the textbook (hard copy, online platform, downloaded PDF, or not at all). Students were also asked to rate their level of satisfaction with the book (0 = extremely dissatisfied, 10 = extremely satisfied). In spring and summer 2017, the psychology department distributed fliers to advisors across the university to advertise the General Psychology course, highlighting the fact that we were moving to an OER text for Fall 2017. The end-of-course assessment in Fall 2017 also asked students about the extent to which the cost of the textbook influenced their decision to enroll or remain enrolled in the course (0 = none at all, 5 = a moderate amount, 10 = a great deal).

Demographics

Students were asked to report their major, race / ethnicity, gender, and parent education level. A student was operationalized as a first-generation college student (FGCS) if neither primary caregiver obtained any formal education after high school. Racial minorities were operationalized as students who did not identify as White.

Results

Book Impact

Students (n = 1,180) in Fall 2017 reported a moderate level of satisfaction with the OER textbook, M = 6.83, SD = 2.53. The cost of the book had little impact on students' decision to enroll in the course, n = 864, M = 1.95, SD = 2.82, and a moderately low, but significantly higher, impact on their decision to stay enrolled in the course, n = 843, M = 2.56, SD = 3.21, t (797) = −6.34, p < .001, d = 0.22. However, first-generation college students (FGCS; n = 68) reported that the cost of the book had a significantly higher impact on their decision to enroll in the course (M = 2.71, SD = 3.44) compared to students who were not FGCS, n = 659, M = 1.86, SD = 2.74, t (81.13) = −2.01, p < .05, d = 0.27, 95% CI [0.01, 1.68]. The decision to remain enrolled was not significantly different for FGCS (M = 3.03, SD = 3.57) and non-FGCS, M = 2.46, SD = 3.16, t (710) = −1.40, p > .21. Additionally, students who did not identify as White reported that the cost of the book had a significantly higher impact on decisions to enroll in the course (n = 166, M = 2.45, SD = 3.02) and remain enrolled (n = 159, M = 2.97, SD = 3.41), compared to students who identified as White, n = 584, M = 1.75, SD = 2.71, t (245.58) = 2.69, p < .01, d = 0.24, 95% CI [0.19, 1.21]; and n = 573, M = 2.34, SD = 3.11, t (235.75) = 2.11, p < .05, d = 0.19, 95% CI [0.04, 1.22], respectively. It should be noted that students who dropped the course did not complete these items and the means for all groups are relatively low, so the results should be interpreted with caution.
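The fractional degrees of freedom reported for the group comparisons suggest unequal-variance (Welch) t tests. The sketch below shows how such a test and a standardized mean difference can be approximated from summary statistics alone, using the FGCS versus non-FGCS enrollment ratings as inputs. The effect size formula (root of the averaged variances) is an assumption, since the text does not state which variant was used. Because the inputs are rounded means and SDs, the output should be close to, but not identical to, the reported values.

```python
import math

def welch_t(m1, sd1, n1, m2, sd2, n2):
    """Welch's t statistic and Welch-Satterthwaite degrees of freedom."""
    v1, v2 = sd1 ** 2 / n1, sd2 ** 2 / n2
    t = (m1 - m2) / math.sqrt(v1 + v2)
    df = (v1 + v2) ** 2 / (v1 ** 2 / (n1 - 1) + v2 ** 2 / (n2 - 1))
    return t, df

def cohens_d_avg_var(m1, sd1, m2, sd2):
    """Mean difference divided by the root of the averaged variances.

    Assumed variant; other pooled-SD definitions give slightly different d.
    """
    return (m1 - m2) / math.sqrt((sd1 ** 2 + sd2 ** 2) / 2)

# Summary statistics reported above: FGCS vs. non-FGCS, decision to enroll.
t, df = welch_t(2.71, 3.44, 68, 1.86, 2.74, 659)
d = cohens_d_avg_var(2.71, 3.44, 1.86, 2.74)
print(f"t = {t:.2f}, df = {df:.1f}, d = {d:.2f}")
```

The sign of t depends only on which group is entered first, which is why the reported statistic is negative while this sketch yields a positive value.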

Learning Outcomes

Analyses of learning outcomes are limited to those students enrolled in a course section taught by a GTA to avoid confounding effects of book with effects of course format. Preliminary analyses indicated there were no univariate outliers in either content knowledge or critical thinking, but there were 15 univariate outliers in the TBC total scores (defined as scores more than 4 SD from the mean and confirmed through inspection of histograms). Although the overall pattern of results is the same whether the outliers are included or excluded, we report the more conservative results from analyses involving the TBC that excluded these 15 outliers. Chi-square analyses indicated no differences in the composition of the Fall 2016 and Fall 2017 samples by race: χ² (7) = 5.90, p > .55, gender: χ² (2) = 3.04, p > .22, or class year: χ² (4) = 6.33, p > .18.

Supporting the validity of our outcome measures, students enrolled in honors sections scored significantly higher than students enrolled in non-honors sections on both content knowledge, t (106.93) = −10.12, p < .001, d = 1.04, 95% CI [1.64, 2.44], and critical thinking, t (101.76) = −8.67, p < .01, d = 0.91, 95% CI [0.13, 0.20] at the end of the semester. Given that honors students scored higher on our outcome measures and were taught exclusively by experienced instructors, and in light of their small numbers, we exclude honors students from our analyses below to ensure any effects are not being inadvertently driven by honors students. TBC total scores were correlated with content scores, r = .09, p < .001, indicating students who rated their instructors as more frequently exhibiting the characteristics included on the TBC scored higher on the content knowledge measure. Surprisingly, novice instructors scored significantly higher on TBC total scores, M = 4.65, SD = .50, than experienced instructors, M = 4.59, SD = .52, t (1299.53) = −2.21, p < .05, d = 0.11, 95% CI [−0.11, −0.01]. This difference was driven by novice instructors scoring higher than experienced instructors on the Caring subscale, Ms = 4.62 and 4.53, respectively, t (1237.77) = −3.21, p = .001, d = 0.17, 95% CI [−0.16, −0.04].

Differences in average scores

To determine if student learning outcomes varied as a function of instructor experience and textbook type, end-of-semester critical thinking and content scores were analyzed using separate 2 (book type: traditional, OER) × 2 (instructor experience: novice, experienced) univariate ANCOVAs, with TBC scores as the covariate. No student had scores on both of these outcomes in Fall 2017, so we could not conduct an omnibus MANCOVA. Descriptive statistics are presented in Table 2; correlations are presented in Table 3.

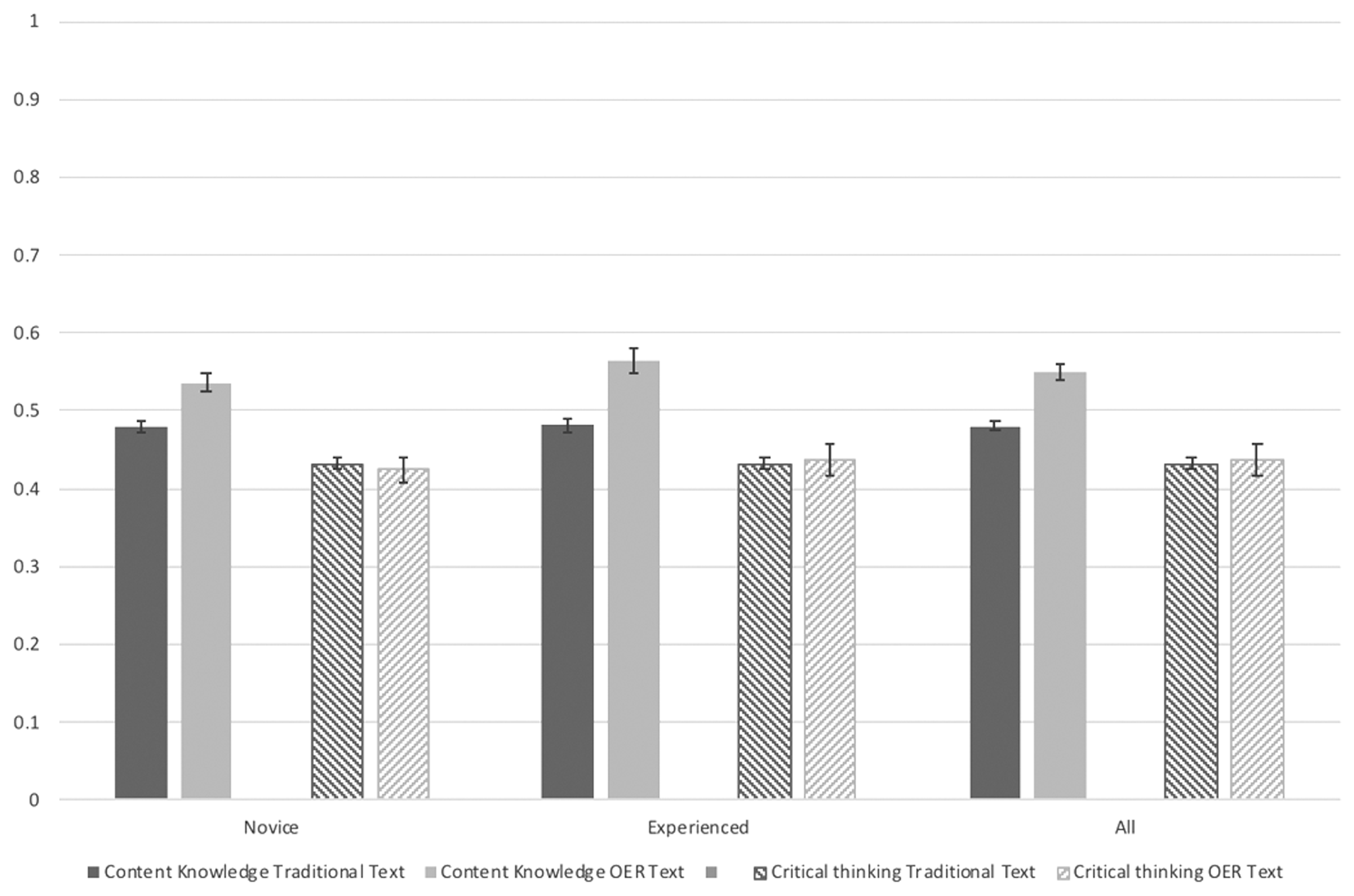

Estimated marginal means of end-of-semester content and critical thinking scores as a function of instructor experience level and textbook.

In addition to a very small but significant effect of TBC scores, F (1, 1494) = 5.87, p < .02, […]

Differences in distribution of scores

We examined the distribution of end-of-semester scores to determine if differences in means were apparent across the entire sample, or for just the lowest-performing students. We coded participants' end-of-semester scores into quartiles based on possible scores (i.e., scores of 25% or lower were coded in the bottom quartile, scores of 75% or higher were coded in the top quartile). There were no differences in quartile distribution of scores on the critical thinking measure, χ² (3) = 0.18, p > .98. However, there were significant differences in the distribution of content knowledge scores as a function of book type, χ² (3) = 37.75, p < .001. When the traditional text was used, 13.1% of students scored in the bottom quartile, 52.1% scored in the second-lowest quartile, 26.7% scored in the second-highest quartile, and only 8.1% scored in the top quartile. However, when the OER text was used, these percentages were 9.2%, 43.0%, 31.9%, and 15.9%, respectively. In other words, fewer students scored less than 50% and more students scored above 50% when the OER text was used, with nearly twice as many students scoring 75% or above. Thus, the mean difference in end-of-semester scores is driven both by decreases in low scores and increases in high scores.
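The coding of scores into quartiles of the possible score range can be sketched as follows. The cut points for the bottom and top bands follow the description above; where a score of exactly 50% falls is not stated, so placing it in the second-highest band is an assumption made here for illustration. The function names and the example scores are hypothetical.

```python
from collections import Counter

LABELS = ["bottom", "second-lowest", "second-highest", "top"]

def score_band(score):
    """Map a proportion-correct score (0 to 1) to a quartile of possible scores."""
    if score <= 0.25:
        return "bottom"
    if score < 0.50:  # assumption: exactly 50% goes in the second-highest band
        return "second-lowest"
    if score < 0.75:
        return "second-highest"
    return "top"

def band_percentages(scores):
    """Percentage of students falling in each band, in LABELS order."""
    counts = Counter(score_band(s) for s in scores)
    return {label: 100 * counts[label] / len(scores) for label in LABELS}

# Hypothetical scores: one student at each possible value on a 10-item test.
scores = [k / 10 for k in range(11)]
percentages = band_percentages(scores)
print(percentages)
```

Counts per band for each textbook condition, arranged as a 2 × 4 table, are the kind of input a chi-square test of independence with 3 degrees of freedom would take.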

Repeated measures analyses

To rule out the possibility that students may have differed on either content knowledge or critical thinking at the start of the semester, as well as to explore changes across the semester, we also conducted 2 (time: beginning of semester, end of semester) × 2 (book type: traditional, OER) × 2 (instructor experience: novice, experienced) repeated measures ANCOVAs, with time as a within-subjects factor, and TBC total scores as the covariate. Only students who provided matched data at both time points are included.

There were no significant main effects or interactions for the critical thinking items (all ps > .44).

Most importantly, there was a small but significant time by book type interaction, F (1, 1166) = 14.92, p < .001, […]

Discussion

We examined the effects of an OER versus traditional textbook among students enrolled in multiple sections of General Psychology. The present study controlled for instructor characteristics and relied on objective indicators of content knowledge acquisition and critical thinking that were developed without reference to any specific textbook and were identical across all course sections. We found no evidence that use of the OER text impeded learning on any outcome, and some evidence that use of the OER text was in fact associated with a small increase in content knowledge acquisition. We also found that under-represented students (i.e., first-generation college students and racial / ethnic minority students) were more likely than non-FGCS and European American students, respectively, to report that the use of the OER text had affected their decision to enroll in the course and, for minority students, to remain enrolled in the course.

Consistent with past research finding no evidence that OER impede learning (e.g., Hilton, 2016), there were no differences in critical thinking as a function of textbook. However, students learned slightly more (in terms of basic content knowledge) during the semester in which the OER text was used than during the semester in which the traditional text was used, regardless of their ratings of instructors' competence and supportiveness or of the instructors' experience level itself. Moreover, this effect was not simply due to decreasing the proportion of students who scored especially low on the content knowledge measure, but also to increasing the proportion of students who scored especially high. In other words, use of the OER text did not simply decrease the number of students who did not learn very much; it increased the number of students who did learn. The fact that these findings are based on the same criterion measure for all students—a measure that was designed to assess student learning regardless of instructor, textbook, grading policies, or teaching methods—is particularly compelling.

Moreover, our data were collected from students taught by graduate student instructors, all of whom were new to teaching from an OER text. To our knowledge, ours is the first study to explore the use of OER texts by graduate student instructors, as well as the first to include instructor experience as a factor. We did not find any evidence that novice instructors have difficulty effectively using OER texts. However, it is important to note that all of the graduate student instructors had participated in a robust instructor training program. All but one GTA (an experienced transfer student) in both semesters had completed a 1-credit didactic course on college teaching, taught by the first author, followed by a 2-credit “bootcamp” course focused specifically on preparing students to teach General Psychology. The 1-credit course is typically completed by all graduate students in their first semester, and focuses on course design (e.g., articulating learning objectives from a backward design perspective), ethics, diversity and inclusion, assessment of student learning, technology in teaching, course delivery (lecture, discussion, activities), and professional development. Students who were assigned as the instructor of record for a course completed the 2-credit “bootcamp” course in the mini-term, typically just days or weeks before co-teaching the course to a small summer section before solo teaching a large fall section. Students in the mini-term course delivered two practice presentations, including a complete 50-minute practice class, and discussed teaching ideas for all required content. In both 2016 and 2017, the first author shared her own lecture slides, exams, and other teaching materials. 
The primary difference between the May 2016 and May 2017 courses was that in May 2016, discussion focused on how the graduate student instructors would choose which content to focus on from the comprehensive traditional text, whereas in May 2017, discussion focused on how they would supplement content from the OER text. Otherwise, the courses were similar in format and content in both years. Our results indicate that under these circumstances, use of an OER text was associated with comparable or better outcomes for students.

Limitations and Future Directions

Our results, which address many of the criticisms of past work, add to the growing evidence that the use of OER textbooks in psychology increases student access to course materials and, as a result, increases student success—at least when used by trained and / or well-supported instructors. The extensive training the graduate student instructors in this study received is in some ways both a strength and a limitation of this work. It is a strength insofar as our sample is consistent with recent calls for robust training for instructors of General Psychology (Gurung et al., 2016; Jhangiani & Hardin, 2015); it is a limitation in that this training, although increasingly common, is still not representative of the experience of many new instructors. Future research should examine the extent to which OER textbooks are associated with comparable, if not improved, outcomes in other contexts where instructors receive less training and support.

Our results also relied on comparisons between a traditional and OER text that were both primarily accessed online. This again is both a strength and limitation of our work. It is a strength insofar as we largely controlled for format, rather than confounding medium (print versus digital) with textbook type. This is important, given mixed evidence about the effects of medium on learning outcomes (see Singer & Alexander, 2017). It is a limitation, however, to the extent that comparing a traditional print textbook to a digital OER textbook better reflects the choices students and instructors are typically faced with. Future research could provide an even stronger test by using a 2 (medium: print versus digital) × 2 (type: traditional versus OER) design.

Given the contentious presidential election that occurred in the United States during the Fall 2016 semester when the traditional book was used, it is possible that history effects could have affected our results. Such historical context / cohort effects are difficult to avoid when data are collected from two different cohorts of students one year apart, and there is at least anecdotal evidence that students' academic performance elsewhere was not affected by the election (Ballard & Wang, 2016). Still, future research that collects data from students using a traditional or OER text in the same semester would provide stronger evidence.

Although textbook cost had minimal effect on students' decisions to enroll in or remain enrolled in the course, it is still noteworthy that the use of the OER text was rated as significantly more important to underserved students. Many institutions are working to improve retention of underserved students, and our results suggest that adoption of OER textbooks may be one tool for addressing inequalities in retention rates. Future research should also explore the extent to which use of an OER text might affect course enrollment over time. One factor influencing our decision to adopt an OER text was that the General Psychology course had faced declining enrollments for several years. Although the lower enrollment in Fall 2017 compared to Fall 2016 was consistent with this multi-year trend, we are unable to rule out the possibility of differential attrition from the course because we were unable to obtain institutional data on drop rates in the course each semester. However, past research has found that OER are associated with fewer course withdrawals (Clinton, 2018), so it seems unlikely that our results can be explained by more low-performing students withdrawing from the course in Fall 2017 than in 2016. Still, future research should directly examine whether enrollment and retention patterns shift as textbook choices shift.

Future research should also continue to use objective measures of learning outcomes that are not tied to specific instructors or derived from specific textbooks. Jhangiani and Hardin (2015) identified applying content-based knowledge and using scientific reasoning and critical thinking—both of which are clearly tied to the learning goals and outcomes for psychology majors (American Psychological Association, 2013)—as the two essential skills that all General Psychology courses should foster in students. We included measures of each of these skills; however, it is important for future research to include other psychometrically sound measures that explicitly assess students' ability to apply content knowledge and think scientifically.

Finally, the effect of textbook on students' content knowledge was small. Students who used the OER text correctly answered, on average, less than one additional item out of 10 compared with students who used the traditional text, and still only answered 54.1% of the items correctly, on average (versus 47.6% for the traditional text). Given that our courses do not use required comprehensive final exams, these low scores are consistent with past research that has found low retention of course material (58%) among introductory psychology students (Landrum & Gurung, 2013). However, to the extent that these learning gains are representative of students' learning and performance more generally in the course, the small gains may still be quite meaningful, as they represent more than half a letter grade difference (i.e., a 6.5 percentage-point increase in final score).

Conclusions

As institutions grapple with how to increase the persistence and success of all students, but especially those underserved, questions of cost continue to be important. The accessibility of higher education is a social justice issue. Our results suggest that traditional textbooks and their associated costs do not necessarily ensure greater academic success in General Psychology courses relative to low-cost OER, at least in the hands of well-trained instructors. Institutions committed to increasing access and decreasing costs should consider devoting resources to instructor training and support in the adoption of OER textbooks, which allow a greater number of individuals access to course material without negatively affecting those who could otherwise afford a traditional textbook.

Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.