Abstract

Teaching of Psychology includes a great variety of topics, course formats, and assessment approaches. A central concept that incorporates the interface between teaching goals, instructional methods, and examination modalities is referred to as Constructive Alignment (CA). This model addresses possible designs of teaching to improve students’ learning outcomes as well as enhance their learning experiences, and claims to be applicable independent of disciplinary culture or content. Despite the importance of this approach from an instructional point of view, hardly any research so far has been concerned with capturing the three dimensions of CA. Consequently, the aim of our study was to create an instrument to assess the quality of CA within psychology classes. A questionnaire was designed and additionally analyzed with regard to students’ judgements about overall course evaluation. The questionnaire was employed in two lectures within the field of educational psychology for teacher training students. Results reveal that overall course evaluation can be predicted by the match between course objectives and instructional methods, whereas other course evaluation factors failed as predictors. With a high internal consistency, the instrument provides an alternative or a supplement to traditional course evaluation instruments.

Introduction

Research on academic teaching in higher education has become increasingly important. Universities and other academic institutions have developed an awareness of the fact that teaching is a central component of academia (Wang, Su, Cheung, Wong, & Kwong, 2013). Thus, the quality of academic teaching has become a competitive factor in higher education, and universities worldwide are implementing specific quality enhancement frameworks based on teaching, learning, and evaluation strategies to improve students’ learning outcomes (Hénard & Roseveare, 2012). In recent years, Biggs’ (1996) model of Constructive Alignment (CA) has become a quality feature in higher education. In the CA approach, the first step is to plan the desired learning outcomes; this is followed by the alignment of teaching and its assessment. CA is a well-known concept among teachers (especially secondary school teachers). Yet, little is known about university students’ perception of whether or not a course is designed according to the principles of CA. In addition, it remains unclear what implications a course design based on CA has for Student Evaluation of Teaching (SET).

The continuously increasing importance of SET during the past decades is an indicator of quality improvement in higher education (McKimm, 2009; Zabaleta, 2007).

Nevertheless, an instrument for the evaluation of the alignment among Intended Learning Outcomes (ILOs), teaching methods, and assessment tasks is still missing. Such an instrument could enrich common SET. In this research, we developed an additional evaluation tool that focuses on capturing students’ views on the construct of CA. Based on Biggs’ (1996) model of CA, we suggest a questionnaire that captures students’ ratings of the fit among ILOs, teaching methods, and assessment tasks. This synchronization is usually a core element of the Instructional Design process when planning, conducting, and evaluating a learning environment.

Course Planning and the Instructional Design Process

In general, course planning and teaching are not intuitive processes, but should rather be based on scientific rules and theories of psychology or related domains (Sweller, Ayres, & Kalyuga, 2011; Zumbach, 2010). As course planning is dependent on various factors, careful planning, analysis, design, and evaluation are indispensable (Zumbach & Astleitner, 2016). This design of learning environments, according to professional criteria, is generally defined as “instructional design.” Key elements of the instructional design process include, for example, needs assessment, analysis of learning, assessment of learner characteristics, choice of learning content, choice of didactical approach, design and use of instructional media, and design of learning assessment procedures (Schott, 1991; van Merriënboer & Kirschner, 2013).

In addition to the process of planning and designing a learning environment, a continuous evaluation of the whole procedure and its subsequent steps is crucial. Most commonly, different methods of formative and summative evaluation are applied to optimize single elements of the learning environments or the whole instructional product itself (Man Sze Lau, 2016; Schott, 1991; Zumbach & Astleitner, 2016). CA could serve as a helpful approach here to synchronize the different parts during the process of planning and implementing learning environments (Biggs, 1998; Man Sze Lau, 2016). CA starts with the learning outcomes that students are intended to achieve by the end of the course. ILOs should describe precisely what students will be able to do or know at the end of the semester or course. ILOs are usually based on a learning taxonomy (e.g., the one provided by Anderson & Krathwohl, 2001) and should be published and explained to students at the beginning of a course or unit (e.g., within the syllabus). Teaching and assessment methods are aligned according to the ILOs. Evaluating the synchronization among ILOs, teaching- and learning-related instructional approaches, and assessment tasks, together with formative and/or summative course evaluation, should improve course quality (Zumbach & Astleitner, 2016).

Constructive Alignment

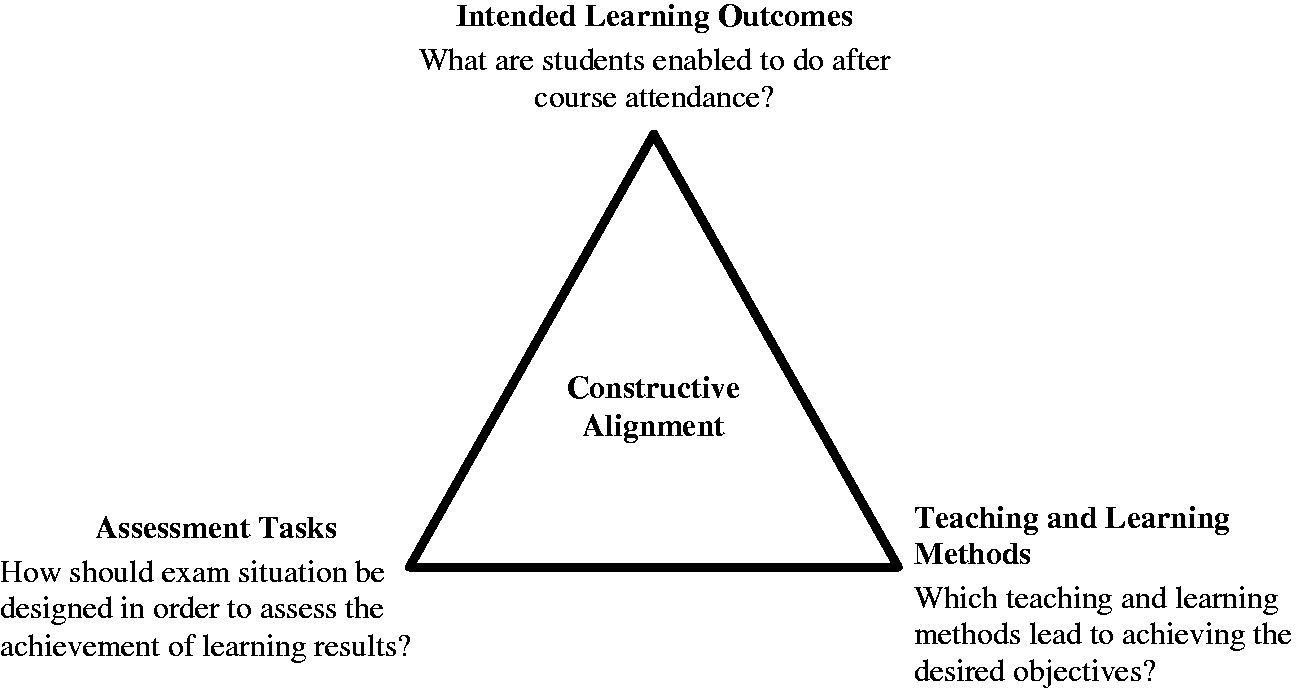

Constructive Alignment (CA) is an approach to enhance the quality of teaching and learning (Biggs & Tang, 2007; Wang et al., 2013). CA focuses on two aspects, … the “constructive” aspect refers to the idea that students construct meaning through relevant learning activities. That is, meaning is not something imparted or transmitted from teacher to learner, but is something learners have to create for themselves. (…). The “alignment” aspect refers to what the teacher does, which is to set up a learning environment that supports the learning activities appropriate to achieving the desired learning outcomes. The key is that the components in the teaching system, especially the teaching methods used and the assessment tasks[,] are aligned to the learning activities assumed in the intended outcomes. (Biggs, 2003, p. 1) Baumert and May (2013); Biggs (1996); translated version.

When designing a course based on the idea of CA, the first step is to define the learning objectives. Decisions about teaching and evaluating assessment methods in an aligned system of instruction have to follow (Biggs, 1996). This should ideally be a fully criterion-referenced system where the objectives define what to teach, how to teach, and how to assess performance. Thus, there have to be precise learning objectives with the teaching methods supporting the students effectively to accomplish these objectives. This also implies that the assessments given have to represent the objectives (Biggs, 2012). As indicated by the triangle, the three elements are arranged within an overall context. They are therefore mutually dependent; hence, the aim is to optimally synchronize all of the factors (see also Biggs, 2014).

CA in Psychology Learning and Teaching

Within the field of higher education, many questionnaires for SET have been developed (e.g., Diehl & Kohr, 1977; Rindermann, 2001; Spinath & Stehle, 2011; Staufenbiel, 2000). Nevertheless, the links among evidence-based improvement of learning and teaching, SETs, and instructional design are often not apparent (Braun, 2011).

Here, CA might be an appropriate concept to make this link clear. Although CA is a rather broad concept that can be used independently from single disciplines, the need to implement this construct within the learning and teaching of psychology is evident. As in other academic disciplines, content/learning objectives, teaching approaches, and assessment vary greatly, mostly depending on learner characteristics. Implicitly and explicitly, most course formats and their syllabi as well as curricula are based on learning taxonomies (e.g., Anderson & Krathwohl, 2001; Krathwohl, 2002). Depending on the level of the taxonomy, different course formats, and conditions of the (pedagogical) environment, degrees of freedom related to instructional design can be limited. If the learning objectives remain on a basic taxonomic level (e.g., knowledge, remembrance, understanding; Krathwohl, 2002) and the number of students is rather high, lecturing might be an appropriate instructional approach. The assessment here should remain on the same taxonomic level (e.g., multiple-choice or short-essay questions on the same taxonomic level).

If the focus of a course is located on a higher taxonomic level (e.g., analyzing, evaluating, creating), a large class and lecturing are rather inappropriate. In a course that focuses on applicable problem-solving skills, these skills have to be fostered through students’ active participation (e.g., by Problem-Based Learning or Skills Labs). Consequently, multiple-choice exams hardly reflect the desired learning outcomes, if at all. Yap, Bearman, Thomas, and Hay (2012) suggest, for example, the use of Objective Structured Clinical Examinations (OSCE) in the field of clinical psychology. In their pilot study, they report that students regarded OSCE as a valid, realistic, and fair assessment method. Nevertheless, there are also limitations concerning OSCE: The study by Bogo, Regehr, Katz, Logie, Tufford, and Litvack (2012) within the field of social work showed that students performing well within a practicum performed worse in a corresponding OSCE. This example shows the need for synchronization between assessment and instructional approach, and especially its evaluation, to guarantee the validity of the assessment approach (or the validity of the instructional method).

The need to align the elements of instruction applies to all fields of psychology. If we expect students to use and apply behavioural research methods, it is not sufficient to tell them about research: They have to perform research to understand the possibilities as well as the constraints of methodology. Subsequently, testing their knowledge within a standardized, written exam also seems to lack validity. Here, the grading of research papers is more appropriate.

CA and Academic Course Evaluation

The importance of systematic academic course evaluation as a core approach to support quality management in higher education has increased since the early 1990s (Bialowas, 2016; Braun & Gusy, 2006). Here, academic course evaluation is not regarded as a mere performance rating, but rather as an instructional intervention and, thus, as a quality control instrument (Ernst, 2008). Meanwhile, a broad repertoire of formative and summative evaluation methods has been developed, ranging from open interviews to standardized questionnaires (e.g., Zumbach, Spinath, Schahn, Friedrich, & Kogel, 2007).

From a scientific point of view, academic course evaluations are often used to develop models of “ideal” teaching in higher education, and to predict academic achievements. Schneider and Preckel (2017) investigated in their meta-analysis the correlations between 105 variables and achievement in higher education, and separated two dimensions: instructional characteristics and student characteristics. In their analysis, the instructional variables that correlated most highly with student achievement were the implementation of peer assessment, the provision of opportunities for meaningful learning, the clarity and understandability of instructional material, students’ self-assessment, stimulation by the teacher, and stimulation of social interactions. Student characteristics that correlated highly with academic performance were students’ self-efficacy, grade goal, frequency of class attendance, and intellectual prerequisites (among others). Some of these variables are also frequently implemented in standardized course evaluation questionnaires. The results of this research show that the kind of assessment applied in courses has a major impact on the level of information processing (e.g., multiple-choice exams resulting in a rather shallow level of information processing; Atkins, 1995; Flores et al., 2014). The way in which students perceive the quality of learning is also affected by how exams relate to the overall course design (Brown & Knight, 1994; Drew, 2001; Pereira, Flores, & Niklasson, 2016). Therefore, it is highly important not to look exclusively at single factors influencing students’ learning and academic achievements. Instead, when evaluating courses, it is important to use instruments that focus on the interaction between different course characteristics, rather than looking only at mono-causal relationships (for a list of all items see Appendix 1).

Although the model provided by Biggs (1998) has opened a wide field for curriculum design ideas, little is known about its effectiveness on qualitative aspects of curriculum and course design. Trigwell and Prosser (2013) point out that there is no empirical evidence for qualitative variation in the way academic staff consider all three dimensions for teaching and course design, and how they align those elements for student learning.

Therefore, the aim of our study was to develop an instrument that depicts students’ perspectives on the implementation of the three dimensions of CA in a course and, thus, to obtain additional information to improve course quality. Hence, the following three research questions were addressed:

1. Can the developed instrument reliably and validly represent the three dimensions of CA?
2. How are the three dimensions of CA related to each other and to course evaluation in general?
3. How are the three dimensions of CA related to SET?

We assumed that, with some limitations, it is possible to assess students’ perspective of the dimensions of CA reliably and validly. Furthermore, we expected the three dimensions to be strongly related to each other. We also expected CA to have an influence on students’ perception of a course’s quality.

Method

A literature search on instruments using the concept of CA for course evaluation did not provide any results. Therefore, we developed an instrument that represents all three dimensions of CA and makes it possible to assess students’ perspective of the alignment of ILOs, teaching methods, and assessment tasks. The instrument was applied as a questionnaire-based survey within the field of educational psychology in a pre-service teacher curriculum.

Material

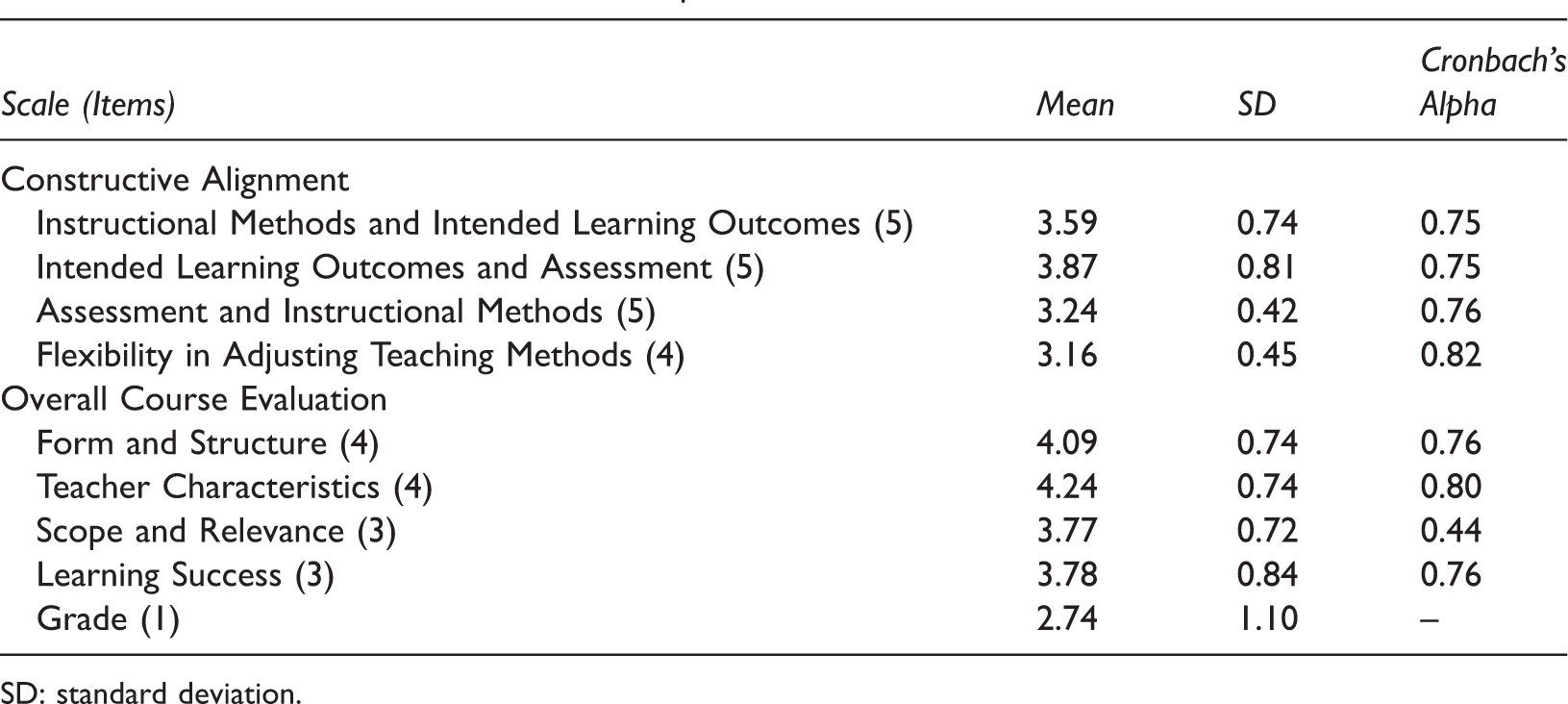

For each dimension of CA, we developed five items. We tested a preliminary version of our questionnaire on a small sample in a faculty of law. The internal consistency of the scales was acceptable, but some items still required reformulation. After adapting these items, the questionnaire had four subscales; the first three scales refer directly to assessing students’ perspective of the implementation of CA.

CA questionnaire subscales:

- Fit between instructional methods and ILOs (five items, Cronbach’s Alpha = 0.75; for example, “Teaching methods are adapted to content and learning objectives”).
- Fit between ILOs and assessment (five items, Cronbach’s Alpha = 0.75; for example, “The assessment reflects content and ILOs”).
- Fit between assessment and instructional methods (five items, Cronbach’s Alpha = 0.76; for example, “The assessment reflects the different teaching methods”).
- Flexibility in adjusting teaching methods (four items, Cronbach’s Alpha = 0.82; for example, “The teacher used instructional methods in a flexible manner”).

Course evaluation subscales:

- Form and structure (four items, Cronbach’s Alpha = 0.76; for example, “The course is clearly structured”).
- Teacher characteristics (four items, Cronbach’s Alpha = 0.80; for example, “The teacher is open to any form of constructive criticism”).
- Scope and relevance (three items, Cronbach’s Alpha = 0.44; for example, “The relevance of the presented teaching content was high (e.g., for the exam, for the future job, for the discipline)”).
- Learning success (three items, Cronbach’s Alpha = 0.76; for example, “I think that my performance in this course and my learning progress is very high”).
- A final item assessed the overall evaluation of the course by using the Austrian grading system, ranging from 1 (very good) to 5 (very poor).

A fourth scale was added to assess the flexibility of teachers to adapt the teaching methods accordingly to changes of ILOs during a unit. This scale was designed to assess the instructor’s flexibility during a course, assuming that within a course different learning objectives require different instructional approaches.

In addition, a short scale assessing the overall course evaluation was applied (15 items with four dimensions; all items, except item 15, were 5-point Likert scales, from 1 “does not apply at all” to 5 “fully applies”) (Zumbach et al., 2007).
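The internal consistencies reported for the subscales are Cronbach's Alpha values. As a minimal sketch of how such a coefficient is obtained from item-level ratings, the standard formula can be written as follows. The function name and the response data are hypothetical and serve only as an illustration; they are not the study's data or analysis script.

```python
from statistics import variance


def cronbach_alpha(item_scores):
    """Cronbach's alpha for a list of respondents, each a list of k item ratings.

    alpha = k / (k - 1) * (1 - sum(item variances) / variance(total scores))
    """
    k = len(item_scores[0])
    # Sample variance of each item across respondents
    item_vars = [variance([row[i] for row in item_scores]) for i in range(k)]
    # Sample variance of each respondent's summed scale score
    total_var = variance([sum(row) for row in item_scores])
    return k / (k - 1) * (1 - sum(item_vars) / total_var)


# Hypothetical ratings of six students on a five-item subscale
# (5-point Likert scale, 1 = "does not apply at all", 5 = "fully applies")
responses = [
    [4, 5, 4, 4, 5],
    [3, 3, 4, 3, 3],
    [5, 5, 5, 4, 5],
    [2, 3, 2, 3, 2],
    [4, 4, 3, 4, 4],
    [3, 4, 3, 3, 4],
]

print(round(cronbach_alpha(responses), 2))  # 0.94 for this made-up data
```

Values closer to 1 indicate that the items of a subscale vary together; a low value such as the 0.44 reported for Scope and Relevance indicates that the items of that subscale share little common variance.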

Data Source and Implementation of the Study

The study was conducted at the end of the summer term in 2017. One hundred and twenty-nine students at a German-speaking research university participated in this study. Ninety-six were female and 32 were male. The mean age was 23.02 (SD = 4.60) and the average number of semesters was 4.38 (SD = 2.16). All participants were enrolled in the pre-service teacher program. The ILOs were presented at the beginning of the course and were available to students within the syllabus during the term.

The questionnaire was given to the students immediately after the written exam at the end of two introductory lectures on educational psychology and developmental psychology for teacher-training students. The students were not graded during this time of the semester. Participation was voluntary.

Results

Research Question 1: Can the Developed Instrument Reliably and Validly Represent the Three Dimensions of CA?

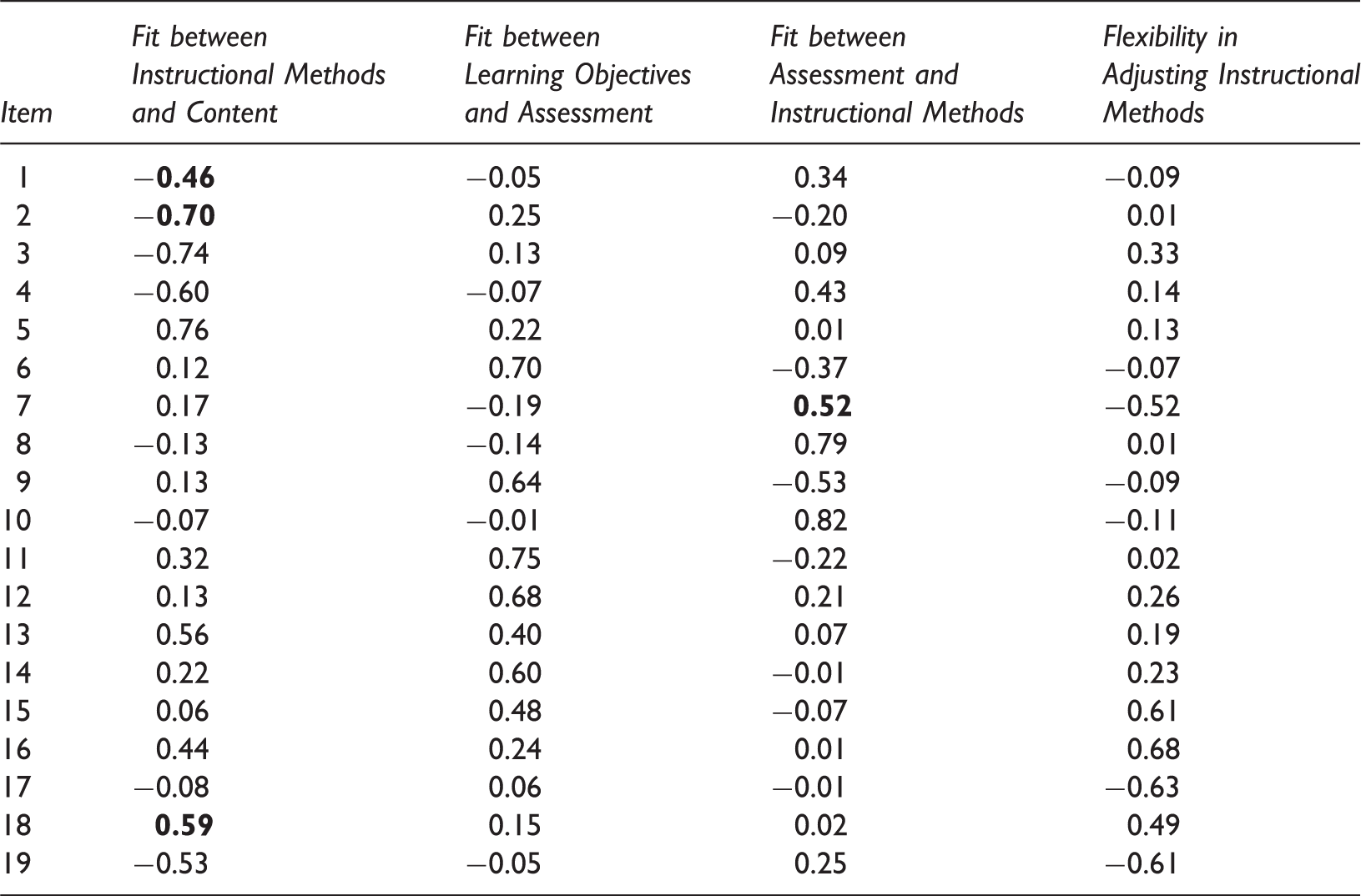

Factor Analysis and Factor Loading.

Mean Values, SD, and Cronbach’s Alpha Values of All Scales.

SD: standard deviation.

Research Question 2: How are the Three Dimensions of CA Related to Each Other and to Course Evaluation in General?

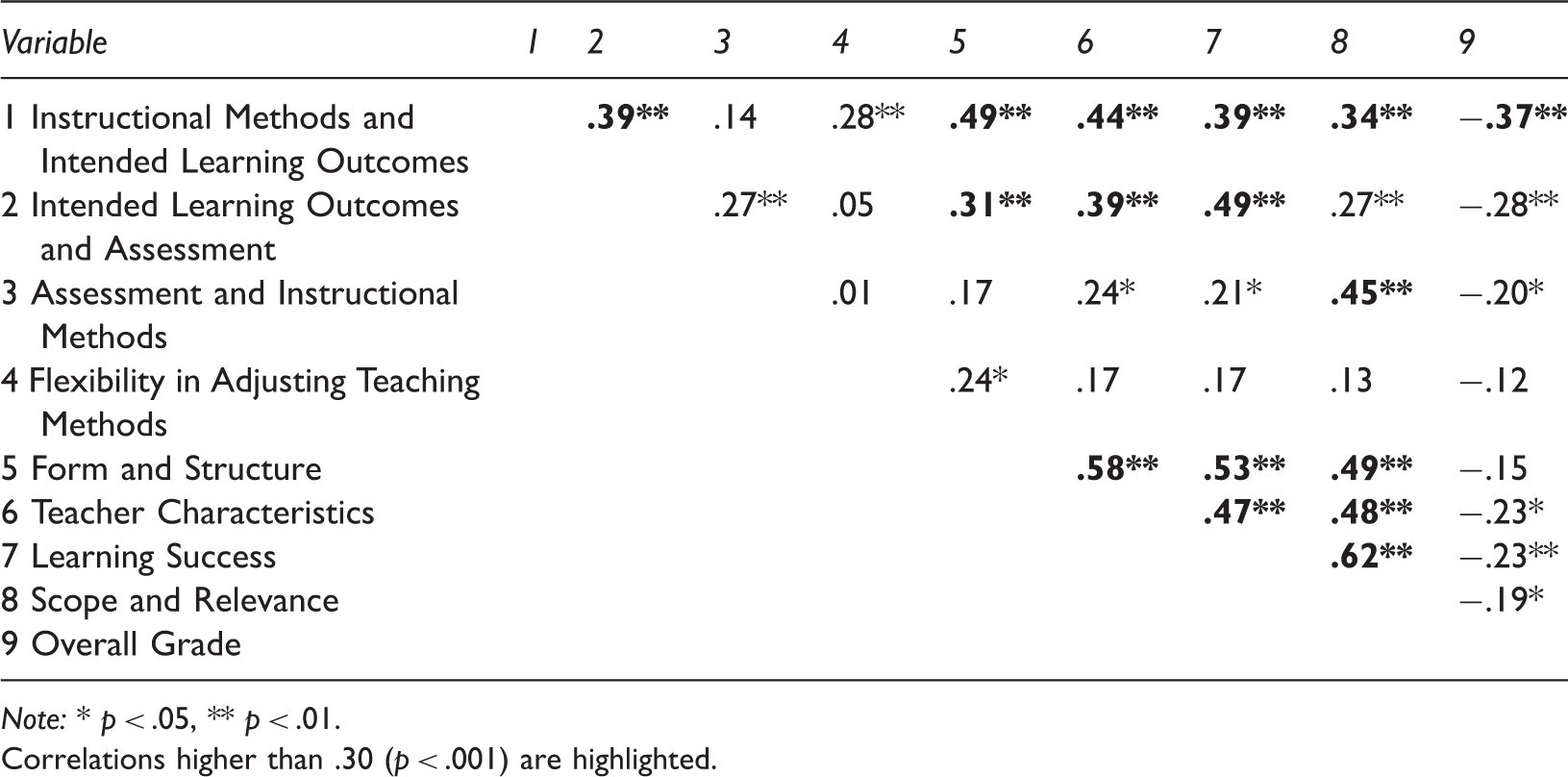

A correlation analysis was conducted to examine the relation between the dimensions of CA and the course evaluation. As the three dimensions of CA are aligned to each other from a theoretical point of view, we analyzed how they correlate with each other and how they correlate with the subscales of the course evaluation. Only correlations higher than 0.30 are reported here (all correlations are listed in Table 3):

- There is a correlation between CA subscales “Fit between Instructional Methods and ILO” and “Fit between ILO and Assessment” (r(116) = 0.39, p < 0.01).
- The subscale “Fit between Instructional Methods and ILO” is correlated with “Form and Structure” (r(115) = 0.49, p < 0.01), “Teacher Characteristics” (r(114) = 0.44, p < 0.01), and “Learning Success” (r(115) = 0.34, p < 0.01).
- The subscale “Fit between ILO and Assessment” is also correlated with the three subscales from the overall course evaluation “Form and Structure” (r(119) = 0.31, p < 0.01), “Teacher Characteristics” (r(117) = 0.39, p < 0.01), and “Learning Success” (r(119) = 0.49, p < 0.01).
- The subscale “Fit between Assessment and Instructional Methods” correlates with “Scope and Relevance” (r(114) = 0.45, p < 0.01).
- The scale “Form and Structure” correlates with the scales “Teacher Characteristics” (r(120) = 0.58, p < 0.01), “Learning Success” (r(123) = 0.53, p < 0.01), and “Scope and Relevance” (r(124) = 0.49, p < 0.01).
- Correlations higher than 0.30 were also found between “Teacher Characteristics” and “Learning Success” (r(120) = 0.47, p < 0.01), and “Scope and Relevance” (r(120) = 0.48, p < 0.01).
- The subscale “Learning Success” also correlates with “Scope and Relevance” (r(123) = 0.62, p < 0.01).
- The students’ overall grading of the course only has a correlation higher than 0.30 with the CA subscale “Fit between ILO and Assessment” (r(115) = 0.34, p < 0.01).

Bivariate Correlations.

Note: * p < .05, ** p < .01. Correlations higher than .30 (p < .001) are highlighted.
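In the r(df) notation used for these correlations, the number in parentheses is the degrees of freedom, that is, the number of complete pairs minus two. The varying degrees of freedom would be consistent with pairwise handling of missing responses, although this is an assumption and is not stated in the text. A minimal sketch with a hypothetical helper and made-up scale means:

```python
from math import sqrt


def pearson_r(x, y):
    """Pearson correlation with pairwise deletion of missing values (None).

    Returns the coefficient r and its degrees of freedom (n_pairs - 2).
    """
    # Keep only respondents who answered both scales
    pairs = [(a, b) for a, b in zip(x, y) if a is not None and b is not None]
    n = len(pairs)
    mean_x = sum(a for a, _ in pairs) / n
    mean_y = sum(b for _, b in pairs) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in pairs)
    ss_x = sum((a - mean_x) ** 2 for a, _ in pairs)
    ss_y = sum((b - mean_y) ** 2 for _, b in pairs)
    return cov / sqrt(ss_x * ss_y), n - 2


# Hypothetical scale means for two subscales; None marks a missing response
fit_methods_ilo = [3.8, 4.2, None, 2.6, 4.0, 3.4, 4.6]
form_structure = [4.0, 4.5, 3.5, 3.0, None, 3.5, 4.75]

r, df = pearson_r(fit_methods_ilo, form_structure)
print(f"r({df}) = {r:.2f}")
```

With pairwise deletion, each correlation is based on all respondents who completed both scales involved, so the degrees of freedom can differ from one coefficient to the next.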

Research Question 3: How are the Three Dimensions Related to SET?

To analyze to what extent students’ perspective of CA and factors of SET contribute to the overall grade they give the course at the end of the semester, a regression analysis was conducted. The independent variables were all of the subscales mentioned before. The dependent variable was the overall grade.

A stepwise regression model explained 15% of the variance (R² = 0.15, F(1, 108) = 18.35, p < 0.001) with a single predictor, Fit between Instructional Methods and ILO (standardized Beta = −0.383, t(108) = −4.284, p < 0.01). All other variables were excluded from the model due to non-significance. In a second analysis, we examined whether the average sum value of CA has an influence on students’ grading of the course. Independent variables were again all of the subscales. The dependent variable was the overall grade.

A stepwise regression model explained 15% of the variance (R² = 0.15, F(1, 108) = 19.44, p < 0.001) with only one predictor, “CA overall” (standardized Beta = −0.391, t(108) = −4.441, p < 0.01). All other variables were excluded from the model due to non-significance.
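When a regression model retains a single predictor, the explained variance equals the squared standardized Beta, and the F statistic equals the squared t statistic. The following sketch applies these identities to the rounded values reported for the first model (Fit between Instructional Methods and ILO). The function names are hypothetical, and the calculation is only an arithmetic illustration, not a re-analysis of the data.

```python
def r_squared_from_beta(beta: float) -> float:
    """With one predictor, the standardized beta equals Pearson's r, so R^2 = beta^2."""
    return beta ** 2


def f_from_t(t: float) -> float:
    """With one predictor, the model F statistic equals the squared t statistic."""
    return t ** 2


# Rounded values as reported for the first model
beta, t = -0.383, -4.284

print(round(r_squared_from_beta(beta), 2))  # 0.15
print(round(f_from_t(t), 2))                # 18.35
```

The negative sign of Beta follows from the grading scale, which ranges from 1 (very good) to 5 (very poor): a better perceived fit goes along with a numerically lower, that is, better, overall grade.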

Discussion and Limitations

The discussion on SET is controversial within higher education as well as in educational research. There are doubts concerning its usefulness and validity for both formative and summative purposes (Spooren, Brockx, & Mortelmans, 2013). One major reason for these doubts is that the quality of teaching and the perspective of SET ratings are influenced by different aspects (e.g., teacher characteristics, learner characteristics, design of learning environments, etc.). We assumed here that the perception of CA contributes to an overall academic course evaluation and, thus, may be an additional helpful instrument along with SET to understand student ratings.

The first focus of this study was the design and validation of a questionnaire to assess students’ perspectives on the dimensions of CA in academic teaching (Biggs, 1996). The results of an exploratory factor analysis revealed that the items of the three CA scales load on one factor each, with one exception: The separation between two factors (factor 2 and factor 3) is blurred. One explanation might be the theoretical overlap of these two scales. Both subscales assess the fit between assessment tasks and teaching methods as well as ILOs. It seems that here students had difficulties distinguishing between ILOs and teaching methods. With regard to the reliability of the subscales, the scores of internal consistency showed acceptable values for all of them.

The questionnaire was applied in an educational psychology introductory lecture. The overall learning objectives included getting an introductory overview of the principles, theories, models, application, and research of educational psychology. The instructional approach was a genuine teacher-directed lecture, and the exam consisted of multiple-choice questions and short-essay tasks that aimed to represent the ILOs of the lecture, and remain on a basic taxonomic level of knowing and understanding (Krathwohl, 2002). We focused on validating the CA subscales by predicting the students’ overall course evaluation. The results here reveal that none of the course evaluation instrument’s subscales were able to predict the overall evaluation score. This might be due to a poor internal consistency of the subscale Scope and Relevance, but does not explain why all the other scales, such as Teacher Characteristics or Form and Structure, did not contribute to the explanation of variance as they did in prior research (e.g., Zumbach et al., 2007). The only significant predictor with a fair explanation of variance was the CA-subscale Fit between ILO and Instructional Methods. It seems that the perception of the rather isolated assessment at the end of the term did not contribute to the overall experience of the learning environment. It is likely that the students did not experience the exam at the end of the term as part of the learning environment, but rather as an “appendix.” Another explanation might be that the final exam was seen by students as “given,” because this form of written assessment (multiple-choice and short open questions) is a common approach at the institution where the study was conducted. A lack of experience with alternative assessment approaches could have biased the students’ ratings. Nevertheless, the experience of a synchronization between ILOs and instructional methods seemed to be a good indicator for overall assessment of the course. 
Here, results reveal that the pre-service teacher students in this study seemed to prefer a course where the target taxonomy level is supported by corresponding teaching approaches. This might be, on the one hand, rather trivial: The use of complex, learner-centered approaches for basic taxonomy levels like knowing or understanding might be an over-complication of the learning environment, whereas the use of teacher-centered approaches for higher-order levels like problem-solving or creating might be an over-simplification. On the other hand, the number of pre-given learning objectives in curricula or syllabi very often leads to the latter situation in daily academic teaching practice. Thus, the match between ILO and instructional methods is experienced as a crucial aspect when judging course quality.

With regard to correlations, the results reveal that many of the different subscales assess similar constructs. It has to be noted that the correlations must be interpreted carefully, because the large number of correlations reported here is problematic owing to alpha error accumulation. There are medium to high correlations within all the subscales of each questionnaire, but only single correlations among subscales from both instruments. These single correlations indicate that certain aspects of the course evaluation are more strongly related to some subscales of CA than others. Both CA subscales dealing with ILOs are related to course evaluation dimensions that focus on form, structure, teacher presentation, and especially learning outcomes, whereas the perceived scope and relevance of the course was only correlated with the fit between assessment and instructional methods. This indicates that both concepts are related, but not the same. In addition, the results show that the lecture and its assessment were perceived as a rather common approach. Students are familiar with this way of teaching and testing, and very often lack understanding of how the content might contribute to their further and future professional development. Thus, the scope and relevance might also be restricted to this perspective: the scope and relevance of the content is given when the teacher “teaches to the test.”
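The concern about alpha error accumulation can be made concrete with a short calculation. The sketch below assumes nine scales (four CA-related subscales, four course evaluation subscales, and the overall grade) and statistically independent tests. Both are simplifying assumptions made here for illustration, and the function names are hypothetical.

```python
def n_pairwise_tests(n_scales: int) -> int:
    """Number of distinct bivariate correlations among n_scales variables."""
    return n_scales * (n_scales - 1) // 2


def familywise_error_rate(alpha: float, n_tests: int) -> float:
    """Probability of at least one false positive across independent tests."""
    return 1 - (1 - alpha) ** n_tests


def bonferroni_alpha(alpha: float, n_tests: int) -> float:
    """Per-test significance level that keeps the familywise rate at alpha."""
    return alpha / n_tests


n_scales = 9  # ASSUMPTION: 4 CA-related scales + 4 evaluation scales + overall grade
m = n_pairwise_tests(n_scales)

print(m)                               # 36 pairwise correlations
print(familywise_error_rate(0.05, m))  # chance of at least one false positive
print(bonferroni_alpha(0.05, m))       # adjusted per-test threshold
```

Under these assumptions, testing every pair at the 0.05 level makes at least one spurious significant correlation very likely, which is why a correction such as Bonferroni's, or a restriction to sizeable coefficients, is a common safeguard.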

The questionnaire developed here does not differentiate between academic disciplines or different course formats. This allows an examination of the relation of CA and course evaluation across fields and course formats (e.g., lectures, seminars, practical applications, trainings; continuously assessed vs. non-continuously assessed; different numbers of credits), instructional approaches, and different assessment types. Nevertheless, there are many different influences on SET as well as on students’ learning behavior that should not be neglected. In our research, due to a lack of time and resources, we were not able to examine correlations between students’ objective grades for the course and the evaluation. For this sample, ethical issues and university policy did not allow us to obtain person-related data regarding exam grades, but subsequent work is planned to find possible solutions for this.

The limitations of our study are that our results are based on a small sample, and that the questionnaire did not include an overall scale for measuring students’ perspective on CA; further research should include such a scale. Additionally, small changes to the design of the study must be made. Students may not remember the ILOs at the end of the semester, so for further research it will be necessary to tell, explain, or ask students again about the ILOs before handing out the questionnaire. This might help students to better reflect on the course and whether the course took the three dimensions of CA into account.

The study presented here is, to our knowledge, a first attempt at assessing the dimensions of CA within the field of educational psychology and might supplement existing SET instruments.

Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.