Abstract

Studies demonstrate that students’ study behavior is frequently dysfunctional, because they tend to cram shortly before examinations. This behavior is antithetical to spaced learning and can impair academic achievement. We investigated the extent to which the temporal distribution of learning activities (a) varies as a function of the organization of the course, (b) is subject to individual differences and (c) affects the metacognitive learning outcome. Participants of four lecture-like educational psychology courses (N = 259) were presented with learning materials stored on the university’s online learning platform. New materials were published weekly and access to these materials was automatically registered. The students either completed a test at the end of the semester (in two end-term-test courses) or completed written assignments throughout the semester (in two multiple-assignment courses). Students in the multiple-assignment courses accessed the materials more continuously than students in the end-term-test courses. Cluster analyses in the end-term-test courses revealed one group of students primarily accessing the materials late in the semester and another group accessing the materials continuously throughout the semester. Continuous access was associated with more accurate metacognitive monitoring. The results are discussed in the context of the relation between metacognitive monitoring and the regulation of study behavior.

Sometimes, students organize their learning activities in a suboptimal way. Several studies have demonstrated that many students prefer massed instead of spaced learning (e.g., Taraban, Maki, & Rynearson, 1999), focus on short-term instead of long-term learning outcomes (e.g., Wissman, Rawson, & Pyc, 2012) and tend to use ineffective instead of effective learning strategies (e.g., Dunlosky, Rawson, Marsh, Nathan, & Willingham, 2013). Several studies have revealed that the preparation for tests and exams is postponed over long periods, leading to steeply increasing study times shortly before a test (e.g., Taraban et al., 1999; Wissman et al., 2012). Students in psychology courses are no exception to these behaviors: the samples of several studies relevant to this topic included psychology students (e.g., Hartwig & Dunlosky, 2012; Taraban et al., 1999). In the present study, we investigated students’ study behavior in psychology courses. Two research questions referred to factors affecting the temporal distribution of learning activities, and one research question referred to how differences in the distribution of learning activities affect the metacognitive learning outcome.

Measuring study time reliably in field settings is difficult. Although technical conditions for experience sampling methods have improved (Iida, Shrout, Laurenceau, & Bolger, 2012), they are still costly. The reliability of subjective, retrospective time estimates has been questioned in various contexts (e.g., Hartley et al., 1977; Panter, Costa, Dalten, Jones, & Ogilvie, 2014). Therefore, a different approach was chosen in the present study. We used the students’ access to course materials stored on an online learning platform as an indicator of the temporal distribution of learning activities. Most universities provide online platforms on which course materials are published and the students’ access to these materials can be logged. Although access data to course materials do not necessarily indicate learning activities, they indicate when students think accessing course-related materials would be useful as a basis for their learning activities outside the lecture.

The first research question was whether students can be supported in allocating their study time more equally throughout the semester (i.e., spaced learning behavior). A straightforward hypothesis is that students’ study time allocation is geared towards the temporal pattern of the course requirements. Many university courses are largely teacher-centered and consist of a series of lectures followed by an examination at the end of the semester. In such courses, often no student activities are requested before the end of the semester—except attending the lectures. Although this type of university course has been critically discussed (e.g., Murray, 1978; Short & Martin, 2011), lecture-oriented courses are still widespread. They appear more economical than learner-centered courses, as the latter usually require smaller groups than teacher-centered lectures. Moreover, lectures provide opportunities to describe and analyze larger domains in a structured and coherent way and they can be effectively combined with other instructional methods (e.g., Baeten, Dochy, & Struyven, 2013). However, the temporal pattern of requirements (i.e., low student activation throughout the semester and examination at the end of the semester) may encourage students to postpone learning activities and to avoid the allocation of study time before the end of the semester when the “hot phase” of examination preparation is inevitable. If students’ study time allocation primarily follows the temporal pattern of the course requirements, it should be easy to change this behavior by introducing a different temporal pattern of course requirements. Courses requiring student activities throughout the semester should lead to a more continuous study time allocation, especially when the required activities are obligatory or are subject to the evaluation of the students’ performance. 
To answer the first research question, we compared lecture-like university courses with different temporal patterns of course requirements. We hypothesized that the temporal patterns of accessing the course materials (as an indicator of the distribution of learning activities) will vary as a function of the temporal patterns of course requirements.

The second research question addresses the extent to which the distribution of learning activities is subject to individual differences. Although we hypothesized that students’ study time allocation is in part a function of the temporal pattern of study requirements, students are expected to show dissimilar habits or strategies in the distribution of their learning activities. Previous studies also revealed substantial variance in study time allocation. Some studies, for example, have indicated that the large majority of learners tend to mass their learning activities shortly before a test (e.g., Taraban et al., 1999), whereas other studies have indicated more balanced tendencies of massed versus spaced learning activities among learners (e.g., Hartwig & Dunlosky, 2012). To answer the second research question, we analyzed how students distributed their learning activities in lecture-like courses that required no obligatory student activities other than passing an end-term test. We hypothesized that different patterns of the distribution of learning activities could be identified.

The third research question was whether different patterns of the distribution of learning activities are related to different levels of accuracy in metacognitive monitoring. This question refers to the observation that students’ metacognitive monitoring of their learning results is often inaccurate (e.g., Jacoby, Bjork, & Kelly, 1994; Vesonder & Voss, 1985). However, three reasons suggest that spaced learning can result in more accurate metacognitive monitoring. Two arguments refer to the direct modulation of the encoded information in long-term memory. First, spaced learning has the potential to create multiple and variable encoding situations, resulting in more elaborated memory representations (e.g., Glenberg, 1979; Melton, 1970). Second, spaced learning supports the consolidation of memory traces, resulting in more stable memory representations (e.g., Dudai, 2004; Wickelgren, 1972). Elaboration and consolidation of memory representations could contribute to a more solid basis for accurate metacognitive judgements. The third argument refers to the metacognitive level and focuses on the monitoring process during learning and the diagnostic information that can be derived from a learning session (e.g., Bahrick, 1979; Bahrick & Hall, 2005). Retrieval failures and uncertainty about learning content will become more evident in more widely spaced learning sessions than in massed learning sessions. Thus, cognitive and metacognitive mechanisms suggest that spaced learning activities may foster the accuracy of future metacognitive judgements. To answer the third research question, we compared students showing different temporal patterns in accessing course materials within the same type of course. We hypothesized that students who accessed the course materials more continuously would monitor their learning result more accurately than students primarily accessing the materials late in the semester.

Methods

Participants

We investigated four lecture-like educational psychology courses that were given in the summer term 2016 (14 weeks) and in the winter term 2016/2017 (15 weeks). The participants (N = 252) were teacher students in their master’s phase and the percentage of women ranged from 63% to 76% across the courses. Ten students (3.9%) failed one course in the summer term and participated in the corresponding course in the following winter term. Eleven students (4.3%) attended both types of courses (see the following section on course contents and course type).

Materials and Variables

Course content and course type

Course content included basic knowledge about psychology as a scientific discipline, and theories and research findings related to human memory, learning, development and social processes. In all courses, potential applications to instructions in schools were discussed. All courses followed a teacher-centered style and consisted of structured, weekly talks given by the instructor. Students had the opportunity to ask questions and discuss controversial issues.

Two types of courses are distinguished, mainly differing in their study requirements. Two courses required passing a test at the end of the semester (end-term-test courses) and two required completing written assignments throughout the semester (multiple-assignment courses). One of each course type was given in the summer term 2016 (14 weeks) and in the winter term 2016/2017 (15 weeks). The content was highly comparable between both course types, although the organization of the topics differed slightly. For example, the topic of spaced learning was discussed at the beginning of the semester in the multiple-assignment courses and in the middle of the semester in the end-term-test courses. The courses were also different in the method of presenting each topic. For example, theoretical issues were more condensed in the multiple-assignment courses and instead more empirical studies were discussed. The two end-term-test courses were instructed by the same teacher tandem in both semesters, and the two multiple-assignment courses were instructed by one teacher of this tandem in the summer term 2016 and a different teacher in the winter term 2016/2017.

Measuring of the temporal distribution of learning activities

In both course types (end-term-test and multiple-assignment courses), new course materials were published on the university’s online learning platform before each week’s lecture. These materials included the presentation slides used in the current week’s lecture, figures, self-learning tasks, references and explanatory texts. Students could visit the learning platform and inspect or download the materials as soon as they had been published. Each access was automatically registered by the software controlling the online platform. The number of accesses per student was summed over the semester. The percentage of accesses of each student in the quartiles of the semester was used as a dependent measure.
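The access measure described above can be illustrated with a minimal sketch. It is not the procedure used in the study: the function name `quartile_percentages`, the representation of accesses as day offsets from the start of the semester, and the use of four equal-width quartiles are assumptions, because the text does not specify how the quartile boundaries were drawn.

```python
def quartile_percentages(access_days, semester_days):
    """Return the percentage of one student's accesses in Q1..Q4.

    access_days: day offsets (0-based) of each logged access (hypothetical format).
    semester_days: semester length in days, e.g. 98 for a 14-week term.
    Assumption: the semester is split into four equal-width quartiles.
    """
    if semester_days <= 0:
        raise ValueError("semester_days must be positive")
    if not access_days:
        # A student without any access has no distribution to describe.
        return [0.0, 0.0, 0.0, 0.0]

    counts = [0, 0, 0, 0]
    for day in access_days:
        if not 0 <= day < semester_days:
            raise ValueError(f"access day {day} lies outside the semester")
        # Integer arithmetic maps a day onto quartile index 0..3.
        counts[day * 4 // semester_days] += 1

    total = len(access_days)
    return [100.0 * c / total for c in counts]


# Hypothetical student in a 14-week (98-day) term with eight logged accesses.
example = quartile_percentages([3, 10, 30, 60, 90, 91, 95, 97], 98)
print(example)  # [25.0, 12.5, 12.5, 50.0]
```

Expressing accesses as percentages per student, rather than as raw counts, keeps students with many and few accesses comparable in their temporal profile.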

End-term-test measures

In the two end-term-test courses, each test consisted of 40 confidence-weighted, true–false items (Barenberg & Dutke, 2013; Dutke & Barenberg, 2015). A true–false item is a one-sentence statement (e.g., “Learned helplessness involves causal attribution processes”), and the students were asked to decide whether this statement was true or false (e.g., Frisbie & Becker, 1991). This decision required the activation of knowledge acquired in the course. In addition, students judged the confidence that their decision was correct. Thus, each item was answered on a scale presenting four response options: (1) I am sure the statement is true; (2) I think the statement is true, but I am unsure; (3) I think the statement is false, but I am unsure; or (4) I am sure the statement is false.

From this scale, two simple and five complex measures could be derived. The simple measures were the percentages of correct true–false decisions, indicating the correctness of answering irrespective of the students’ confidence level, and the percentage of confident judgements, indicating the confidence in the answers irrespective of their correctness. Metacognitive monitoring was assessed by complex measures reflecting different facets of metacognitive monitoring accuracy often used in the field of metacognition (cf. Schraw, 2009; see Appendix 1 for formulas). The absolute accuracy (AC) of the confidence judgements reflects the overall match between confidence and correctness. AC was calculated by adding the proportion of correct and confident answers to the proportion of incorrect and unconfident answers. The bias of the confidence judgements (BS) was calculated by subtracting the relative number of correct answers from the relative number of confident answers. Positive values indicate general over-confidence; negative values indicate under-confidence. The BS score, however, cannot differentiate whether correct and incorrect answers are biased in the same way. Therefore, two conditional probabilities were calculated: the confident-correct probability (CCP), indicating confidence given the answer was correct, and the confident-incorrect probability (CIP), indicating confidence given the answer was incorrect. A high CCP score (indicating a high number of correct answers given with confidence) and a low CIP score (indicating a low number of incorrect answers given with confidence) would reflect that the learner can adequately discriminate between learning content already mastered and learning content requiring further learning. The extent of this discrimination is represented by the discrimination score (DIS), which is the difference between both probabilities (CCP minus CIP).
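The verbal definitions above can be turned into a short computational sketch. It follows the descriptions in this section only; the formulas in Appendix 1 are not reproduced here, and the function name `monitoring_measures`, the coding of responses as the integers 1 to 4 and the coding of the answer key as booleans are assumptions made for illustration.

```python
def monitoring_measures(responses, keys):
    """Compute AC, BS, CCP, CIP and DIS for one student.

    responses: codes 1..4 per item (1 = sure true, 2 = unsure true,
               3 = unsure false, 4 = sure false), as on the response scale.
    keys: True if the statement is actually true, False otherwise.
    Follows the verbal definitions in the text; coding scheme is assumed.
    """
    if not responses or len(responses) != len(keys):
        raise ValueError("responses and keys must be non-empty and equally long")

    n = len(responses)
    # Options 1 and 2 judge the statement to be true; 1 and 4 are confident.
    correct = [(r in (1, 2)) == bool(k) for r, k in zip(responses, keys)]
    confident = [r in (1, 4) for r in responses]

    n_correct = sum(correct)
    n_incorrect = n - n_correct
    n_confident = sum(confident)
    n_cc = sum(1 for c, f in zip(correct, confident) if c and f)  # correct, confident
    n_ci = n_confident - n_cc                                     # incorrect, confident
    n_iu = n_incorrect - n_ci                                     # incorrect, unconfident

    # Conditional probabilities are undefined without correct/incorrect answers.
    ccp = n_cc / n_correct if n_correct else None
    cip = n_ci / n_incorrect if n_incorrect else None
    dis = ccp - cip if ccp is not None and cip is not None else None

    return {
        "AC": (n_cc + n_iu) / n,            # match of confidence and correctness
        "BS": (n_confident - n_correct) / n,  # > 0 over-, < 0 under-confidence
        "CCP": ccp,
        "CIP": cip,
        "DIS": dis,
    }


# Hypothetical ten-item example (not data from the study).
answers = [1, 1, 4, 2, 3, 1, 2, 3, 4, 3]
truth = [True, True, False, True, False, False, False, True, False, True]
print(monitoring_measures(answers, truth))
```

In this hypothetical example, six answers are correct and five are confident, so BS is negative (slight under-confidence), while CCP exceeds CIP, which yields a positive DIS.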

Procedure

The end-term-test courses required passing a test in the last week of the semester. No other student activities were required except attending the course, reflecting its content and preparing for the test. The test comprised 40 confidence-weighted, true–false items (Barenberg & Dutke, 2013; Dutke & Barenberg, 2015), which provided the opportunity to derive measures of learning success (number of correct responses) as well as measures of metacognitive monitoring accuracy based on item-specific confidence judgements. The test was passed when students reached more than 50% in their test score (i.e., chance level), which was calculated by the correctness of answers and the confidence in the answers (for details, see Dutke & Barenberg, 2015).

The multiple-assignment courses required completing three written assignments throughout the semester but no end-term test needed to be passed. The students were asked to describe potential practical applications of the research results discussed in the lectures. Students passed the assignments when they submitted three texts, each of them correctly describing at least one research finding and one corresponding application for school.

Design and Data Analysis

Research Question 1

If the temporal pattern of learning activities mainly follows the temporal pattern of course requirements, students in the multiple-assignment courses should access the course materials more continuously than students in the end-term-test courses. To test this hypothesis, we analyzed the relative number of accesses to the learning platform throughout the semester in a mixed-factorial design with the quartiles of the semester (Q1, Q2, Q3 and Q4) as a within-participants factor and the type of course (end-term-test versus multiple-assignment) as a between-participants factor.

Research Question 2

Not all students are expected to show the same temporal distribution of learning activities, particularly when no obligatory activities are required other than passing an end-term test. To explore the extent to which the distribution of learning activities was also subject to individual differences, we analyzed the individual patterns of access to the learning platform in the two end-term-test courses (N = 167) by performing a hierarchical cluster analysis.
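As an illustration of how a hierarchical cluster analysis groups access profiles, the following sketch implements a simple agglomerative procedure. The distance measure (Euclidean), the linkage rule (average linkage), the function name `agglomerative_clusters` and the example profiles are all assumptions; the text does not state which settings were used in the study.

```python
from math import dist


def agglomerative_clusters(profiles, n_clusters):
    """Group quartile profiles by agglomerative (bottom-up) clustering.

    profiles: list of [Q1, Q2, Q3, Q4] access percentages, one per student.
    n_clusters: number of clusters at which merging stops.
    Assumptions: Euclidean distance and average linkage.
    Returns a list of clusters, each a list of student indices.
    """
    if not 1 <= n_clusters <= len(profiles):
        raise ValueError("n_clusters must be between 1 and the number of profiles")

    # Start with every student in a cluster of their own.
    clusters = [[i] for i in range(len(profiles))]

    def average_linkage(a, b):
        # Mean pairwise distance between the members of two clusters.
        total = sum(dist(profiles[i], profiles[j]) for i in a for j in b)
        return total / (len(a) * len(b))

    while len(clusters) > n_clusters:
        best = None
        for x in range(len(clusters)):
            for y in range(x + 1, len(clusters)):
                d = average_linkage(clusters[x], clusters[y])
                if best is None or d < best[0]:
                    best = (d, x, y)
        _, x, y = best
        # Merge the two closest clusters and drop the absorbed one.
        clusters[x] = clusters[x] + clusters[y]
        del clusters[y]
    return clusters


# Hypothetical profiles: one early-only, two late and two continuous students.
profiles = [
    [90, 5, 5, 0],
    [5, 5, 5, 85],
    [10, 20, 40, 30],
    [0, 5, 5, 90],
    [15, 25, 35, 25],
]
print(agglomerative_clusters(profiles, 3))  # [[0], [1, 3], [2, 4]]
```

The exhaustive pairwise search is adequate for a sample of this size; for larger data sets, a dedicated statistics library would be the more practical choice.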

Research Question 3

We assumed that spaced learning may trigger two cognitive mechanisms (elaboration and consolidation of representations in long-term memory) and one metacognitive mechanism (the provision of more valid diagnostic information during learning), all of which have the potential to enhance the accuracy of metacognitive monitoring. To explore this hypothesis, we compared the metacognitive performance of students with different patterns of access to the learning platform in the two end-term-test courses.

The alpha level for significance tests was set to .05 for all analyses.

Results

Research Question 1

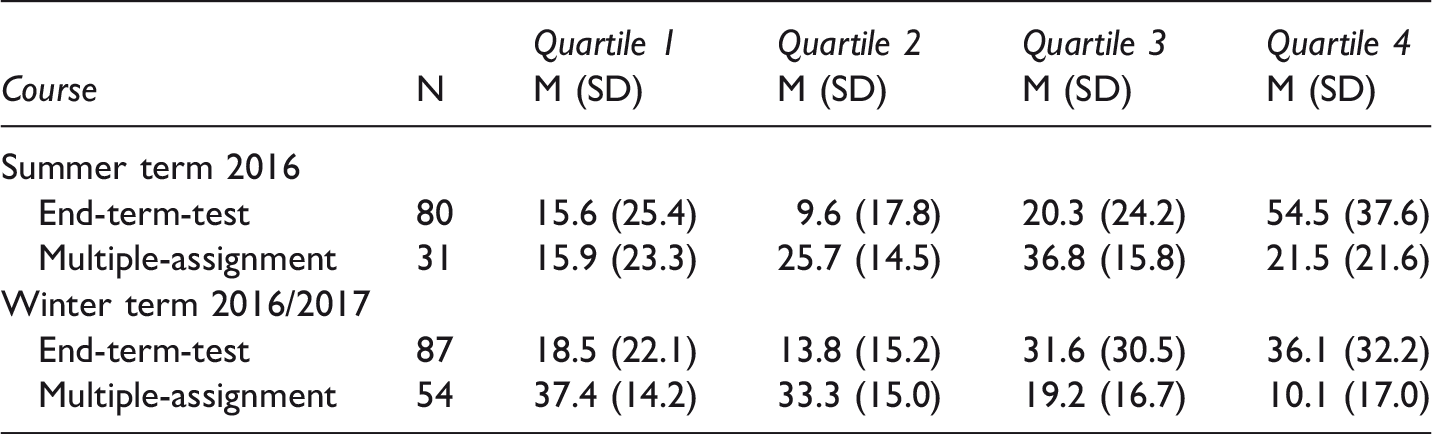

Mean percentages of course material accesses in end-term-test courses and multiple-assignment courses.

We repeated this analysis with the corresponding courses from the winter term 2016/2017. The mean percentages of accesses to the course materials are shown in Table 1. An ANOVA with the same design as with the summer term data was computed. The within-participants factor was not significant (F < 1.0), but the interaction between quartiles and type of course was significant, F(2, 417) = 25.53, p < .001,

Research Question 2

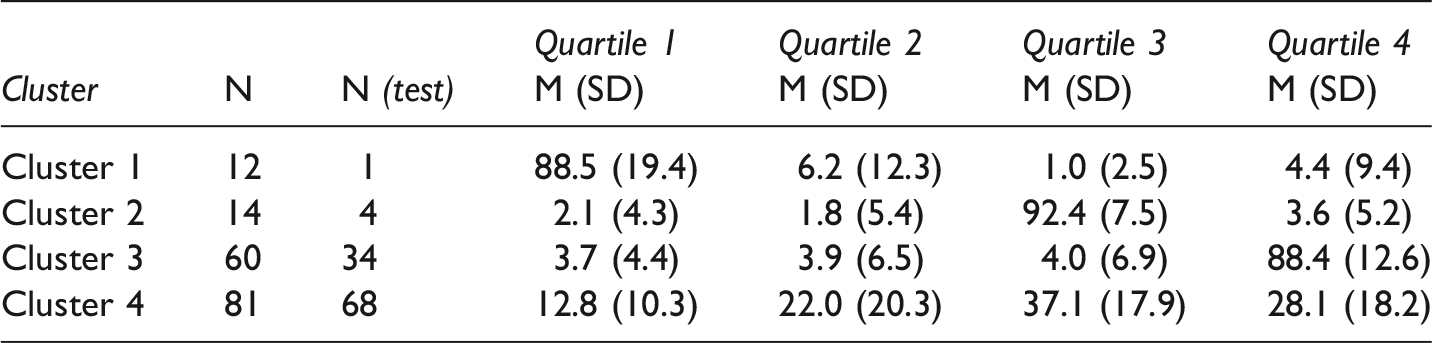

Mean percentages of course material accesses in four clusters of students in end-term-test courses.

Note. N (test) = number of students who participated in the end-term test.

The first two clusters are small and demonstrate inadequate strategies of accessing the course materials. Cluster 1 (N = 12 students) executed almost 90% of its accesses in Q1. Only one of these students decided to participate in the end-term test. The students in Cluster 2 (N = 14) made over 90% of their accesses in Q3. Most of these students also apparently dropped out, and only four of them participated in the end-term test. The third cluster (N = 60) accessed the course materials extremely late. About 88% of the accesses occurred in Q4 and 57% of the students in this cluster participated in the test. The fourth cluster (N = 81) showed a more continuous access strategy: Their mean access rates increased from 12.8% in Q1 to 22.0% in Q2 to 37.1% in Q3 and then slightly decreased to 28.1% in Q4. A high number of students (84%) in Cluster 4 participated in the end-term test. These results are consistent with our hypothesis that different individual access patterns for course materials can be identified.

Research Question 3

Given that Cluster 1 and 2 test data were available for only five students, we omitted these clusters from further analyses and included only students from Cluster 3 (late access) and Cluster 4 (continuous access). Both clusters included students from the summer and the winter terms (Cluster 3: 36 summer/24 winter; Cluster 4: 35 summer/46 winter). Thus, the clusters do not represent behavior specific to one of the two courses. The means of the end-term-test measures were compared between Cluster 3 (late access) and Cluster 4 (continuous access) by one-way ANOVAs.
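The cluster comparison rests on a standard one-way ANOVA, whose F ratio can be sketched as follows. The function name `one_way_anova` and the example scores are hypothetical and do not reproduce data from the study; the sketch returns only the F ratio and its degrees of freedom, because a p value would require the F distribution, which is not part of the Python standard library.

```python
from statistics import fmean


def one_way_anova(*groups):
    """Return (F, df_between, df_within) for a one-way ANOVA.

    groups: two or more sequences of scores, one per group.
    """
    if len(groups) < 2 or any(len(g) < 1 for g in groups):
        raise ValueError("need at least two non-empty groups")

    k = len(groups)
    n_total = sum(len(g) for g in groups)
    if n_total <= k:
        raise ValueError("need more observations than groups")

    grand_mean = fmean(x for g in groups for x in g)
    group_means = [fmean(g) for g in groups]

    # Variability of the group means around the grand mean.
    ss_between = sum(len(g) * (m - grand_mean) ** 2
                     for g, m in zip(groups, group_means))
    # Variability of the scores around their own group mean.
    ss_within = sum((x - m) ** 2
                    for g, m in zip(groups, group_means) for x in g)

    df_between = k - 1
    df_within = n_total - k
    f_ratio = (ss_between / df_between) / (ss_within / df_within)
    return f_ratio, df_between, df_within


# Hypothetical CCP scores for a late-access and a continuous-access group.
late = [0.60, 0.70, 0.65, 0.55]
continuous = [0.75, 0.80, 0.70, 0.85]
f_ratio, df_b, df_w = one_way_anova(late, continuous)
print(round(f_ratio, 2), df_b, df_w)  # 10.8 1 6
```

With only two groups, as in the comparison of Cluster 3 and Cluster 4, the F ratio equals the square of the corresponding independent-samples t statistic.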

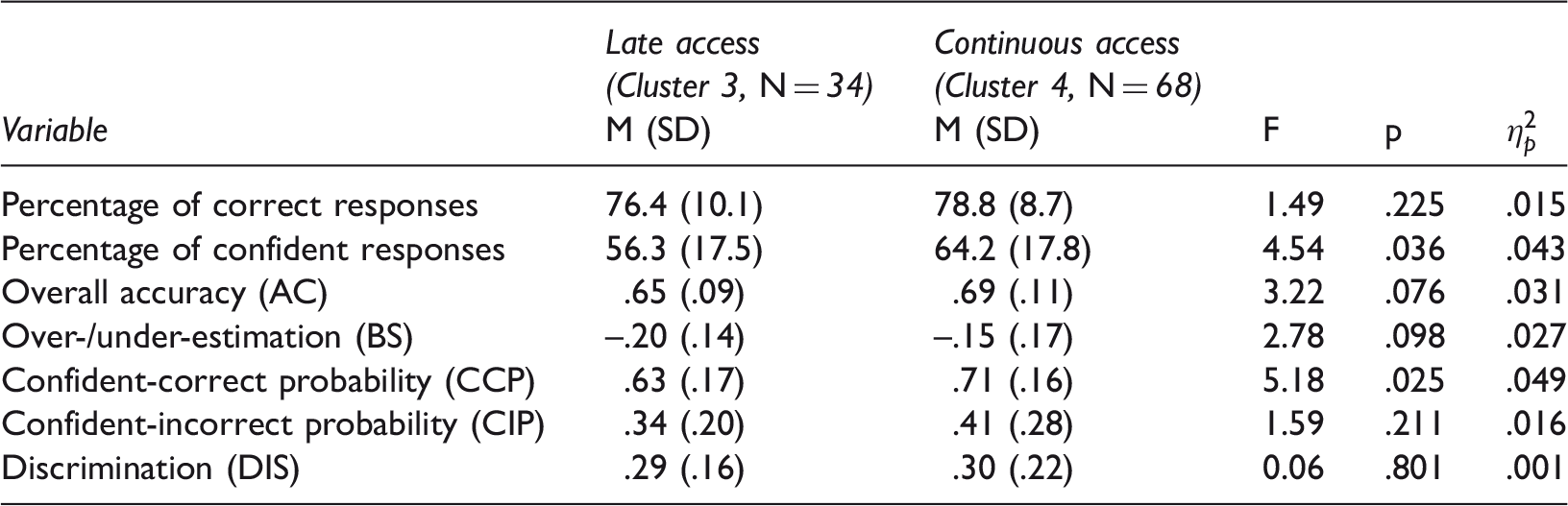

Performance in the end-term test in Cluster 3 (late access) and Cluster 4 (continuous access).

AC: overall accuracy of the confidence judgements; BS: bias of the confidence judgements (over- versus under-estimation); CCP: confident-correct probability; CIP: confident-incorrect probability; DIS: discrimination.

As increased overall confidence reveals no information about the accuracy of the confidence judgements, we also analyzed the measures indicating different facets of metacognitive monitoring accuracy (see Table 3 for means, standard deviations and ANOVA results). Students continuously accessing the course materials tended to show higher overall accuracy of their confidence judgements (AC) and lower underestimation of their test responses (BS), although neither mean difference was significant. Analyses of the more specific indicators revealed that the confident-correct probability (CCP) was significantly higher in the continuous access cluster than in the late access cluster. Although the difference was non-significant, the confident-incorrect probability (CIP) was numerically higher in the continuous access cluster than in the late access cluster. Consequently, the discrimination measure (DIS) did not differ between the clusters. The results of most of the metacognitive measures (confidence, AC, BS, CCP) are consistent with the hypothesis that students accessing course materials more continuously throughout the semester monitor their learning result more accurately.

Discussion

Previous studies revealed that students often use ineffective learning strategies (Dunlosky et al., 2013). For example, they tend to postpone their learning activities until the next examination is impending (e.g., Taraban et al., 1999; Wissman et al., 2012). In the present study, we investigated students’ temporal distribution of learning activities in four lecture-like psychology courses. More specifically, we examined the extent to which the temporal organization of course requirements (Research Question 1) and individual differences (Research Question 2) affected students’ temporal distribution of learning activities throughout the semester and the extent to which different patterns of the distribution of learning activities affected the metacognitive learning outcome (Research Question 3). Bearing in mind the shortcomings of retrospective self-report data on study time allocation, we chose a different approach in the present study and analyzed the students’ accesses to course materials on the university’s online platform as a proxy for their temporal distribution of learning activities.

To answer Research Question 1, we analyzed students’ accesses to the course materials in psychology courses with different temporal patterns of course requirements (end-term-test versus multiple-assignment courses). Based on evidence about spaced learning and successive relearning, accessing the learning materials as soon as they are available would be recommendable to build a growing basis for continuous, spaced and repeated learning activities (Cepeda, Pashler, Vul, Wixted, & Rohrer, 2006; Rawson, Dunlosky, & Sciartelli, 2013). Consequently, spaced learning and successive relearning should have positive effects irrespective of whether course requirements are scheduled earlier or later in the semester and are recommendable for both types of courses. However, the pattern of results found in the present study indicated that many students pursued a strategy of accessing material “not earlier than necessary.” Indeed, students in the end-term-test courses primarily accessed course materials in the third or fourth quartile of the semester, corroborating the findings of previous studies (Graesser, Halpern, & Hakel, 2008; Roediger & Pyc, 2012). The present results also support the interpretation that this behavior can be modified by course instructions, encouraging (or necessitating) earlier and more continuous learning activities such as in the multiple-assignment courses. However, a strict causal interpretation would be inadequate because of the quasi-experimental character of the present study. Moreover, the reader should be aware that comparing the mean access rates of complete courses does not explicitly consider potential different individual patterns of study behavior within courses of the same type. We observed higher standard deviations of the access rates in the end-term-test courses compared to the multiple-assignment courses. 
These differences might indicate that accessing the course materials in the end-term-test courses was less homogeneous and more influenced by individual differences, which our second research question addressed.

To answer Research Question 2, we analyzed the individual patterns of accesses to the course materials in the end-term-test courses. The hierarchical cluster analysis revealed four different groups of students. Two small groups (N = 12 and N = 14), however, were omitted, because most of the students in these clusters dropped out and thus did not participate in the end-term test. In the remaining two groups, most of the students participated in the end-term test. Thus, their accesses were more likely to indicate actual study behavior. One group accessed the course materials very late in the semester (N = 60) and one group more continuously throughout the semester (N = 81). This result contrasts with previous findings that most learners mass their learning activities shortly before an examination (e.g., Taraban et al., 1999) but is consistent with previous findings indicating a greater variance in students’ distribution of learning activities (e.g., Hartwig & Dunlosky, 2012). Thus, the present study underscores the influence of individual differences on students’ study behavior, although the types of individual differences eliciting these behavioral patterns cannot be specified by the present analysis. Moreover, previous studies often failed to find significant associations between study behavior and learning outcomes, particularly when the study behavior was assessed by self-reports (e.g., Hartwig & Dunlosky, 2012). The influence of different patterns of the distribution of learning activities on the learning outcome was addressed by our third research question.

To answer Research Question 3, we compared the test performance between the late access and continuous access group in the end-term-test courses, with a particular focus on the metacognitive learning outcome. To assess the metacognitive learning outcome, we employed confidence-weighted true–false items and assessed the correctness of and the confidence in students’ answers (e.g., Barenberg & Dutke, 2013; Dutke & Barenberg, 2015). As in the study by Hartwig and Dunlosky (2012), the analysis revealed no effect of different patterns of the distribution of learning activities on the cognitive learning outcome (the correctness of the answers). However, students who distributed their accesses continuously throughout the semester were more confident in their test answers than students primarily accessing materials late in the semester. More specifically, students in the continuous access group tended to show more accurate and less biased confidence judgements than students in the late access group. Furthermore, students in the continuous access group were significantly more confident in their correct answers than students in the late access group. When interpreting these results, the reader should bear in mind the quasi-experimental method of the present study. Nonetheless, the present results indicate that different patterns of the distribution of learning activities can affect the metacognitive learning outcome, thus demonstrating first evidence that spaced learning may have beneficial effects on the accuracy of metacognitive monitoring. These results can be explained by the modulating effects of spaced learning on the cognitive level, the elaboration and the consolidation of representations in long-term memory (e.g., Glenberg, 1979; Wickelgren, 1972), which might facilitate the accuracy of confidence judgements. 
Moreover, on the metacognitive level, spaced learning activities probably make retrieval failures and uncertainty in acquired knowledge more evident than massed learning activities (e.g., Bahrick & Hall, 2005) and thus might result in more accurate confidence judgements in test situations.

Limitations and Perspectives

One general issue is that providing course materials on the university’s online platform is designed to support self-regulated learning activities, and accessing the course materials enables the students to engage in learning activities. Nevertheless, the access to the course materials is still a rough proxy for actual learning activities. Clearly, learning can occur even without these materials, and accessing or downloading learning materials does not necessarily mean that these materials are used or effectively used. However, measuring access rates is an approach to overcome the shortcomings of (retrospective) self-report data, which often fail to correlate with objective learning behavior (e.g., Hartley et al., 1977). Future studies might employ multiple measurements combining access rates with self-report data (e.g., learning diaries) or other behavioral measures (e.g., tasks or tests available on the learning platform) to validate the access rate measure.

A specific limitation in the investigation of Research Question 1 is that the two course types (end-term-test and multiple-assignment courses) differed in two particular aspects apart from the temporal pattern of their course requirements: the format of the course requirement and the content of the courses. One course type required passing a test, whereas the other required completing assignments. With the present design, we could not determine the extent that the type of requirement contributed to the differences in accessing the materials. However, we expect this contribution to be small, because the tests and assignments basically required the same knowledge and skills, including identifying the theoretical basis, knowing empirical findings and constructing possible applications to instruction at school. Thus, the differences between the course requirements refer more to the format rather than to the cognitive processes implicated. The second concern pertains to the content of the course types. The courses covered highly similar content, but their content was not perfectly matched. For example, the weighting of theoretical explanations and empirical findings of the testing effect slightly differed. In the multiple-assignment courses, retrieval processes were less extensively presented than in the end-term-test courses; instead, more testing effect studies were discussed. Although we have no hypothesis why and how these differences could have contributed to the differences in accessing the materials, future studies aiming at replicating the present findings could include courses with identical content and the same course requirements so that the courses solely differ in the temporal patterns of these requirements. 
Apart from these two concerns, future studies should consider the possibility of randomizing the allocation of students to course types to overcome the shortcomings of a quasi-experimental design, although this approach may be a challenge in many authentic field situations.

Two specific limitations of the investigation of Research Question 2 need to be addressed. Firstly, although we could identify different individual patterns of the distribution of accesses in the end-term-test courses, we cannot specify the type of individual differences eliciting these findings. For example, the different temporal patterns of accessing learning material throughout the semester could be due not only to stable learning preferences across courses and learning situations, but also to learning strategies that are course- or situation-specific. In this context, we also cannot determine the extent to which these different individual patterns can be generalized. The extent that individual differences influence the distribution of learning activities might depend on several factors. The selection of the sample is an essential factor. Teacher candidates (who participated in the present study) often represent a more heterogeneous group of learners than psychology students, for example, because the former were socialized in different academic disciplines with different styles of studying. Thus, the large variance in the distribution of learning activities in the present study might be due to the sample selection. A situational factor is the number and temporal pattern of obligatory course requirements, which define the degree of self-regulated study time allocation. Thus, the variance in the distribution of learning activities may be less pronounced in courses implicating more external control of study time allocation (e.g., see multiple-assignment courses). Lastly, the type of measurement may also be relevant. Objective measurements (e.g., the accesses to course materials in the present study) and subjective measurements (e.g., self-reports about usual learning habits in other studies) might be differentially sensitive to actual learning behavior and are thus comparable to a limited extent only. 
Future studies could explicitly address the type of individual differences and examine the extent to which these differences interact with sample selection, situational factors and types of measurement.

The results for Research Question 3 should also be interpreted with caution because of the quasi-experimental design. They represent first empirical evidence that spaced learning might have beneficial effects on students’ metacognitive learning outcome, but the effect sizes were small to moderate. Future studies with experimental designs should seek to replicate our findings. In addition, the theoretical assumptions outlined in this study and the extent to which they explain the findings were not explicitly tested by the present design. Future studies could address these theoretical mechanisms in their designs and assess these processes more explicitly (e.g., by collecting data about the monitoring process during learning activities).

Despite the limitations of investigating study behavior in a field design, our study provided first empirical evidence that spaced or massed access to learning materials might vary as a function of the course organization and individual differences and that spaced access is related to more accurate metacognitive monitoring. In line with many other arguments, the latter point demonstrates that students can benefit from a course organization that requires and reinforces continuous learning activities. Not all students regulate their learning adequately—even when they are prospective teachers instructed in psychology.

Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.