Abstract

Having a growth mindset has been shown to predict better academic performance in a variety of educational settings. Efforts to instill a growth mindset through educational interventions have demonstrated positive effects on academic success. However, many of the interventions previously tested are relatively time intensive and costly for some instructors at large research-intensive institutions. In this study, we find that a quick and easy mindset intervention can produce some gains in academic performance. This intervention involved no class time, little prep-work, and was easily disseminated to a 300-student Introductory Psychology lecture. Participants (

Keywords

Introduction

Social psychological factors affect academic outcomes above and beyond academic preparedness (Rattan, Savani, Chugh, & Dweck, 2015; Walton, 2014). One of the most well-researched is academic growth mindset (Dweck, 2000). Students who approach tasks with a belief that hard work will result in growth in knowledge and skill (i.e., a growth mindset) tend to outperform peers who approach tasks with a belief that difficulty indicates a lack of ability (i.e., a fixed mindset), especially during specific times of academic challenge, such as transitions from high school to higher education (e.g., Blackwell, Trzesniewski, & Dweck, 2007; Dweck & Sorich, 1999; Henderson & Dweck, 1990; Hong, Chiu, Dweck, Lin, & Wan, 1999; Robins & Pals, 2002). However, more intervention research is needed to address which students, under what conditions, and for which academic tasks a growth mindset is likely to benefit students’ academic outcomes (Chew, 2017; DeWitt, 2017).

Growth Mindset Interventions

Recent experimental research has demonstrated that inducing growth mindsets can produce positive academic outcomes (e.g., Aronson, Fried, & Good, 2002; Blackwell et al., 2007). Blackwell et al. (2007) embedded a growth mindset message in an eight-session study strategy program for middle school students. Students who received the growth mindset message had higher post-intervention mathematics scores than students in the control group. Using a less intensive design, Aronson et al. (2002) found that college students who learned about the malleability of the brain (i.e., growth mindset) had an average Grade Point Average (GPA) 6% higher than participants who learned that an individual’s successes and failures come from a unique set of underlying strengths and weaknesses (i.e., fixed mindset). Even large-scale, standardized interventions have led to meaningful differences in academic performance. Yeager, Walton, Ritter, and Dweck (2013) (as cited in Yeager, Paunesku, Walton, & Dweck, 2013) found that a brief 30-minute growth mindset intervention in an online orientation session led to better academic outcomes for university freshmen, with 64% of the students in the growth mindset group earning at least 12 academic credits at the end of the first semester as compared to only 61% in the control group. These interventions are successful in bolstering academic performance because they are able to effectively alter students’ perceptions of academic difficulty. When students understand the malleability of intelligence, they understand that effort is a means to improve performance and not a sign of weakness (Dweck, 2000). On the other hand, when students believe that intelligence is relatively unchangeable, effort is an indication of a lack of ability (Dweck, 2000). When interventions are able to teach students about the malleability of intelligence, students learn that embracing effort can lead to better outcomes (Hong et al., 1999).

However, some researchers have found that there may be little association between growth mindset and achievement (Bahník & Vranka, 2017) and that growth mindset interventions may not lead to increased academic achievement (Burnette, Russell, Hoyt, Orvidas, & Widman, 2017; Donohoe, Topping, & Hannah, 2012). For example, Burnette et al. (2017) examined academic outcomes for girls in rural schools who were randomized into either a growth mindset or control condition. They found that while the growth mindset intervention led to increased growth mindset beliefs, there was no direct effect of intervention condition on students’ academic achievement or academic attitudes (e.g., learning efficacy). In sum, while some studies show limited or null effects of growth mindset on academic achievement, there is evidence to suggest that carefully constructed mindset interventions can produce meaningful differences across diverse academic outcomes.

Common intervention design

Most effective growth mindset interventions have two key features in common: active participation and timing (Walton, 2014; Yeager & Walton, 2011).

Active participation

Interventions typically contain a “saying-is-believing” aspect in which participants are first exposed to the growth mindset message and are then instructed to formalize the material in their own words (e.g., Aronson et al., 2002; Blackwell et al., 2007; Walton, 2014). This active approach ensures that participants read, understand, and deeply process the message (Walton, 2014).

Timing

Growth mindset interventions tend to target times when students are academically challenged and have the opportunity to improve future academic performance. For example, studies in real educational settings have targeted students at the beginning of a difficult educational transition, such as the transition between elementary and middle school (Blackwell et al., 2007) or the transition between high school and higher education (Aronson et al., 2002). In laboratory experiments, the intervention tends to occur after a participant receives negative feedback on a performance measure, thereby simulating a time of academic difficulty (e.g., Hong et al., 1999), but before subsequent performance measures. This simulated laboratory situation would be similar to negative feedback received on the first midterm within a course, as it is a time of academic difficulty (i.e., poor performance in a new course) and there is still time to improve performance (i.e., there are subsequent exams). This attention to appropriate timing may increase students’ application of the intervention message when difficulty is encountered in the future, resulting in meaningful differences in future performance that can “snowball” over time (Walton, 2014).

Continuing growth mindset intervention research

Research on growth mindset and growth mindset interventions continues to grow, adding to our understanding of the benefits of malleable mindsets and the conditions in which these mindsets are useful. However, there remain empirical questions on how best to apply growth mindset research and theory to educational settings. For example, recent research has been aimed at determining how best to scale growth mindset interventions so that they remain effective when disseminated to large samples or entire populations (Paunesku et al., 2015; Yeager et al., 2016). Alongside this, recent research has also highlighted the need to further explore how growth mindset theory and findings are being translated to educational settings (e.g., via teacher trainings and student support services). In some cases, translations to educational settings may not hold true to the underlying theory, leading to misunderstandings of what holding a growth mindset means and how it should be encouraged in our students. As we continue to examine growth mindset interventions for what works (e.g., characteristics of a good intervention and appropriate scaling), for whom (e.g., across different student populations), and when (e.g., students in educational transition), we must also consider possible contextual constraints that may limit the effectiveness of such interventions. Here, we discuss two possible contextual constraints: time and resource limitations, and false growth mindset messages.

Time and resource limitations

Mindset interventions are promising, but dissemination may be limited by the time and effort required for the “active” saying-is-believing component. Less intensive interventions might yield meaningful effects when delivered to high numbers of students, because the benefits can be spread over many more students. Typical large, general education university classrooms are promising sites for mindset interventions given the academic challenge and (for many) the pivotal timing in the transition from high school to college. However, instructors in these classes may be unlikely to adopt any intervention that adds to the instructor workload. The ratio of university students to teaching staff has increased over the past two decades (Prosser & Trigwell, 2014). As enrollment in general education university classrooms increases, these course instructors may face additional teaching obstacles – particularly with regard to classroom resources, teaching time, and addressing individual student needs – without additional support (e.g., more teaching assistants). Therefore, there is a need to understand how to contextualize growth mindset interventions for such settings to make them easily adaptable to a variety of general education university classrooms while still producing meaningful and significant effects.

Following this reasoning, Paunesku (2013) tested a low-intensity, high-reach intervention by adding brief messages at the top of a free online content provider website (Khan Academy). As participants learned about new topics, they saw a growth mindset message, no message, random science facts, or a standard encouragement (e.g., “Just do your best”). Paunesku (2013) then measured the number of subject areas participants became proficient in and found that those who received a growth mindset encouragement message became proficient in 3% more of the subject areas they attempted. These results suggest that repeated growth mindset messages may have led participants to internalize the message over time, without completing a “saying-is-believing” activity. It is also possible that the intervention targeted specific behaviors in the particular context of the online content provider website. In this context, participants have to continue to complete practice problems one at a time in order to gain mastery in a content area. Therefore, participants in Paunesku’s (2013) study who received the growth mindset message were encouraged to continue to do their practice problems in a way that participants in other message groups were not, leading to more subject area proficiencies. Over time, small nudges and the success that follows could build mindsets, potentially leading to durable, self-perpetuating mindsets that affect performance. Thus, while incorporating a “saying-is-believing” aspect is the typical mindset intervention design, a less intensive approach could still produce meaningful effects if well-timed and context appropriate. When multiplied over the number of beneficiaries, even lower power interventions can yield effects when broadly disseminated. The present study offered an opportunity to examine the potential for a low-cost, easy-to-implement intervention in an applied setting.

False growth mindset messages

The benefits of growth mindsets and downsides of fixed mindsets have become popular in education, to the point that it might seem that few educators would promote fixed mindsets any longer (Gross-Loh, 2016). However, in Carol Dweck’s (2016) update to her classic book “Let’s take a look at courses where you’ve performed well and

Present Research

In the current study, we build upon Paunesku’s (2013) initial success with a low-intensity mindset intervention to test a minimal, quick, and cost-effective mindset intervention that could be easily disseminated to a large introductory psychology course using a double-blind experimental design with random assignment. We intentionally chose to trade a stronger dose of the intervention for wider adoption, as this intervention design has the potential to be adapted to a range of university classrooms where time and resources are limited. At the same time, we sought to compare the effects of a growth mindset message to other forms of encouragement that students may encounter in university classrooms: a fixed mindset message (i.e., “just focus on your strengths”) and a more neutral control (e.g., “thank you for coming to class”).

Hypothesis

We predicted that, among students who retained the intervention message (i.e., passed a manipulation check), students who received a growth mindset letter would earn higher scores on post-intervention exams than students who received the control letter. In turn, we hypothesized that students who received a control letter would earn higher scores than those who received the fixed mindset letter.

Method

Participants

Introductory psychology students (

Materials

Prior psychology knowledge

A twenty-four item multiple-choice introductory psychology pre-test was given to participants on the first day of class. No students were aware of the pre-test before the day it was given. Participants were not given feedback on their answers to the questions and were not shown their pre-test scores. All exam packets were collected at the end of the exam.

Academic achievement in introductory psychology

Participant scores on four midterms and one final exam measured academic achievement in introductory psychology. All midterm exams consisted of 48 content questions and the final exam consisted of 71 content questions. Each exam had multiple versions, differing only in the order of the content questions, which were randomized using testbank software. All exams in the course were cumulative.

Pre-intervention midterm score (M1)

The first midterm exam score was recorded for each participant and was used as the only measure of pre-intervention academic achievement.

Post-intervention midterms (M2–M4)

There were three post-intervention midterm exams (M2–M4). All midterm exams were cumulative and varied in the number of chapters covered. By course policy, participants were allowed to drop their lowest midterm score. Therefore, the number of participants in each analysis varies from exam to exam, as not all students took every post-intervention midterm exam.

Final exam

The final exam covered all textbook chapters in the course and included 71 content questions and an extra credit manipulation check for the intervention. All analyses for the final exam score are based on the 71 content questions.

Intervention materials

Each intervention letter contained a small visual graphic in the top left-hand corner, a notable quote relating to the letter condition across the top of the page, and the actual intervention message for the student signed by the instructor of the course. The growth mindset letter read, in part,

Procedure

The study was reviewed and approved by an Institutional Review Board. On the first day of class, students completed the pre-test. Nine days after the pre-test, students took M1. Participants were randomly assigned to receive a growth mindset, fixed mindset, or control message after M1. We developed three versions of M1 (versions A, B, and C) and distributed them to students so that the first student in the row received version A, the next student received version B, the third student received version C, and so forth. The only difference between the three versions of M1 was the ordering of the content questions; there were no differences in the content itself. This re-ordering of the content questions was intended to minimize cheating on the exam and was not an integral part of the intervention procedure, except that it created three clearly defined groups. The same results could also have been achieved by keeping the order of all content questions the same across all exams, but leading students to believe the exams were different by assigning them different labels (versions A, B, and C). Students read the questions from their exam packets and recorded their answers on a separate test form to submit for scoring. At the end of the exam, students turned in their test forms to the pile that corresponded to their midterm version (A:

Participant grades for all subsequent exams in the course were recorded. As mentioned above, each subsequent exam also had multiple versions differing only in the order of the content questions. Unlike M1, the order of the content questions for M2–M4 and the final exam did not vary as a function of intervention group (i.e., all participants in the growth mindset condition received Version A for M1 but could have received any version for subsequent exams). In addition, an extra credit multiple-choice manipulation check at the end of the final exam asked participants to recall the message in their M1 intervention letter. Responses were in a multiple-choice format with three choices corresponding to each of the messages in the letters, one choice indicating that the participant did not read or remember the letter at all, and the final choice being that the student did not receive a letter or take M1. Participants were not required to answer the question correctly in order to receive extra credit. All participants who took the final exam responded to the question.

The intervention was designed to build upon key features of previous successful mindset interventions by: (a) allowing for easy dissemination in large classes; (b) being timed to occur right after the first occurrence of academic difficulty in the course; and (c) being deployed in a course with an exam every other week, allowing students ample opportunity for future academic performance.
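The seat-by-seat distribution of exam versions described in the Procedure can be sketched in a few lines of Python. This is an illustrative sketch only, not the authors' materials: the roster is a list of placeholder IDs, and the mapping of versions B and C to the fixed mindset and control letters is an assumption (the text confirms only that version A corresponded to the growth mindset condition).

```python
from collections import Counter
from itertools import cycle

# Hypothetical mapping of exam version to letter condition. The source confirms
# only that version A went to the growth mindset group; B and C are assumptions.
VERSION_TO_CONDITION = {
    "A": "growth mindset",
    "B": "fixed mindset",  # assumed
    "C": "control",        # assumed
}


def assign_versions(seated_students):
    """Hand out versions A, B, C in rotation, following seating order."""
    versions = cycle("ABC")
    return {student: next(versions) for student in seated_students}


def condition_counts(assignment):
    """Count how many students ended up in each letter condition."""
    return Counter(VERSION_TO_CONDITION[v] for v in assignment.values())


# Illustrative roster only: 300 placeholder IDs, not real participants.
roster = [f"student_{i:03d}" for i in range(300)]
assignment = assign_versions(roster)
counts = condition_counts(assignment)
```

Rotation by seat yields groups of near-equal size; it approximates random assignment only to the extent that seating position is unrelated to achievement.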

Results

Three sets of results are presented in the current project. First, we present results for the between group comparisons in the sample of participants who passed the manipulation check at the end of the term (

Between Group Comparisons

Participants who retained the intervention letter’s message are included in the following analyses:

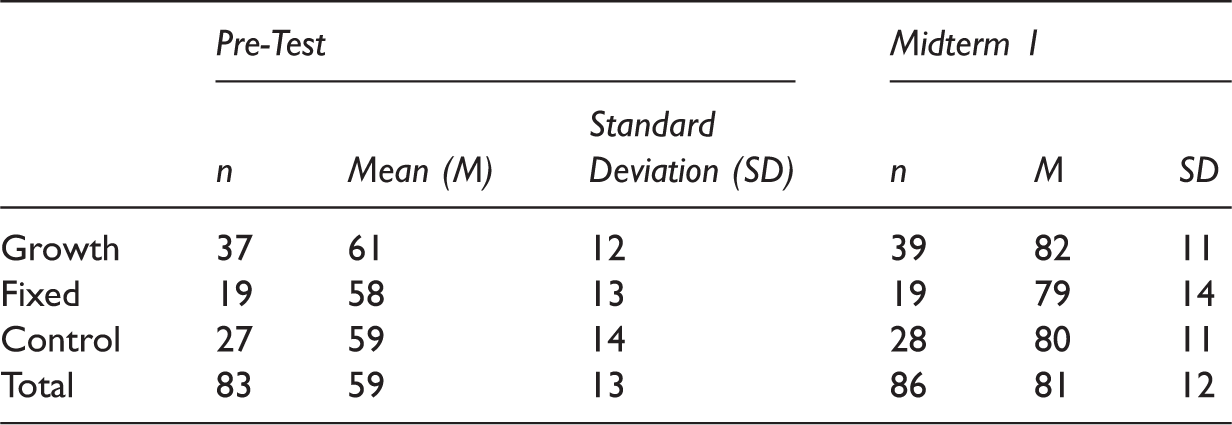

A comparison of participants who passed the manipulation check (

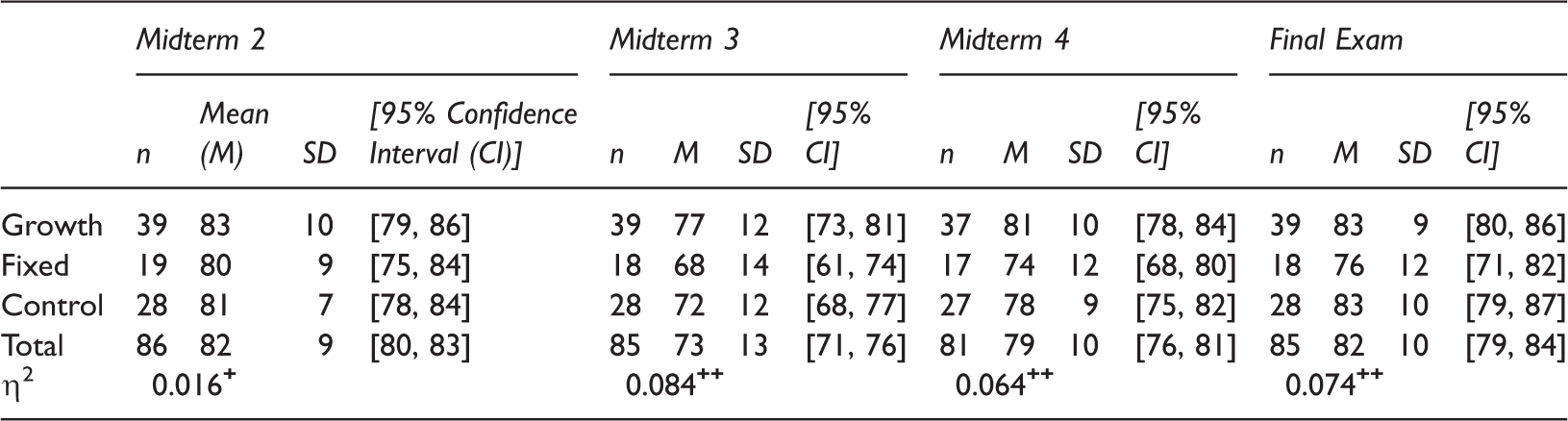

Before conducting the one-way ANOVAs, we examined the distributional properties for all exam scores across the three intervention conditions for the subsample. All were approximately normally distributed (|skew range| = [0.03, 1.1]; |kurtosis range| = [0.06, 2.2]). An analysis of the homogeneity of variances indicated that there was equality of variance for all dependent measures (

Pre-Intervention Exam Means by Intervention Group.

Post-Intervention Exam Means (Standard Deviation (SD)) by Intervention Group.

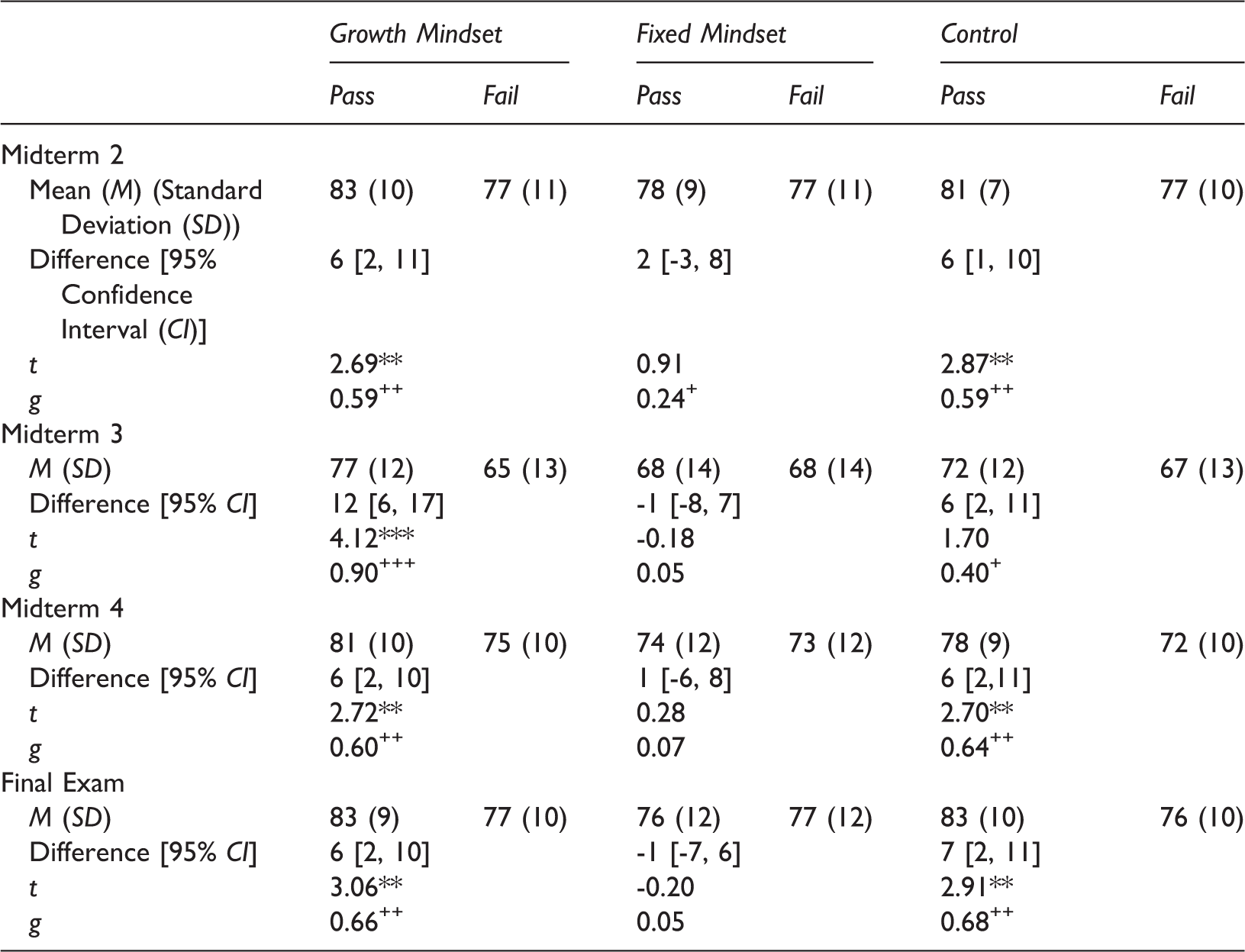
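The one-way ANOVAs used for the between-group comparisons above can be illustrated with a minimal standard-library sketch. It computes only the F statistic and its degrees of freedom; the scores are invented for illustration and are not the study's data, and the function name `one_way_anova` is our own.

```python
from statistics import mean


def one_way_anova(groups):
    """Return (F, df_between, df_within) for a list of score lists."""
    all_scores = [x for g in groups for x in g]
    grand_mean = mean(all_scores)
    # Between-group sum of squares: group size times the squared distance
    # of each group mean from the grand mean.
    ss_between = sum(len(g) * (mean(g) - grand_mean) ** 2 for g in groups)
    # Within-group sum of squares: squared deviations from each group's own mean.
    ss_within = sum((x - mean(g)) ** 2 for g in groups for x in g)
    df_between = len(groups) - 1
    df_within = len(all_scores) - len(groups)
    f_stat = (ss_between / df_between) / (ss_within / df_within)
    return f_stat, df_between, df_within


# Hypothetical exam scores (out of 48) for three letter conditions.
growth = [40, 42, 38, 41]
fixed = [35, 36, 34, 37]
control = [39, 40, 38, 41]
f_stat, df_b, df_w = one_way_anova([growth, fixed, control])
```

A p-value would additionally require the F distribution with `df_b` and `df_w` degrees of freedom, which a statistics package would normally supply.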

Within-Group Comparisons

We further examined the effects of the intervention letters, as there was some evidence of unexpected positive benefits in the control group. There were no differences between the growth mindset and control groups on M3 or the final exam, making it possible that students gained positive effects from the control letter’s message. If the message in the control group was non-neutral, it would limit the conclusions we could draw between the growth mindset and fixed mindset groups.

Three possible scenarios could have resulted in differences in post-intervention academic achievement between the growth and fixed mindset groups. Scenario A: The fixed mindset letter resulted in a decline in academic performance for participants who read and retained the fixed mindset message, and the growth mindset and control messages had no effects. Scenario B: Both the growth mindset and control messages had positive effects on the participants, and the fixed mindset message had no effect. Scenario C: A combination of scenarios A and B. That is, there were small iatrogenic effects for the participants in the fixed mindset condition and small benefits for participants in the growth mindset and control conditions, resulting in the larger observed differences between the groups. In each scenario, participants in both the growth mindset and control groups would demonstrate higher scores on post-intervention measures of academic success.

Within-Group Comparisons.

+small; ++moderate; +++large effect size.

Growth mindset group

There were no statistically significant differences between participants who passed (

We ran a hierarchical regression analysis on M2–M4 and the final exam. The first model used pre-tests and midterm one scores as predictors. This model explained a significant amount of the variance in M2 (

Fixed mindset group

There were no significant differences between participants who passed (

Control group

There were no statistically significant differences between participants who passed (

The same hierarchical regression analyses used in the growth mindset group were run for the control group for M2, M4, and the final exam. The first model used the pre-test and M1 as predictors and explained a significant amount of the variance in M2 (
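The logic of the hierarchical regressions reported in this section can be sketched as a comparison of R² between nested models. The sketch below is an assumption-laden illustration, not the authors' analysis: step 1 uses the pre-test and M1 as predictors, as stated in the text, while step 2 is assumed (from the Discussion) to add an indicator for passing the manipulation check. All data values and function names are hypothetical.

```python
def solve(a, b):
    """Solve the linear system a x = b by Gauss-Jordan elimination."""
    n = len(b)
    m = [row[:] + [b[i]] for i, row in enumerate(a)]
    for col in range(n):
        # Partial pivoting: move the largest remaining entry onto the diagonal.
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(n):
            if r != col:
                factor = m[r][col] / m[col][col]
                m[r] = [x - factor * y for x, y in zip(m[r], m[col])]
    return [m[i][n] / m[i][i] for i in range(n)]


def r_squared(predictors, y):
    """Fit ordinary least squares with an intercept and return R^2."""
    rows = [[1.0] + list(p) for p in predictors]
    k = len(rows[0])
    xtx = [[sum(r[i] * r[j] for r in rows) for j in range(k)] for i in range(k)]
    xty = [sum(r[i] * yi for r, yi in zip(rows, y)) for i in range(k)]
    beta = solve(xtx, xty)
    fitted = [sum(b * x for b, x in zip(beta, r)) for r in rows]
    y_bar = sum(y) / len(y)
    ss_res = sum((yi - fi) ** 2 for yi, fi in zip(y, fitted))
    ss_tot = sum((yi - y_bar) ** 2 for yi in y)
    return 1 - ss_res / ss_tot


def delta_r_squared(step1, step2_extra, y):
    """Return (R^2 step 1, R^2 step 2, change in R^2) for nested models."""
    r2_step1 = r_squared(step1, y)
    step2 = [list(a) + list(b) for a, b in zip(step1, step2_extra)]
    r2_step2 = r_squared(step2, y)
    return r2_step1, r2_step2, r2_step2 - r2_step1


# Hypothetical participants: (pre-test, M1) scores, manipulation-check pass
# indicator (assumed step 2 predictor), and M3 score as the outcome.
pretest_m1 = [(10, 30), (12, 35), (9, 28), (15, 40),
              (11, 33), (14, 38), (13, 36), (8, 27)]
passed = [[1], [0], [0], [1], [1], [0], [1], [0]]
m3 = [33, 31, 25, 41, 34, 35, 38, 24]
r2_1, r2_2, delta = delta_r_squared(pretest_m1, passed, m3)
```

A positive change in R² at step 2 indicates that the added predictor accounts for variance beyond the step 1 predictors; testing whether that change is statistically significant requires an F test on the increment, which is omitted here.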

Discussion

The goal of this study was to test a quick, easy mindset intervention that included key components of previously successful interventions and could be widely disseminated in large general education classrooms. The intervention was designed to motivate effort at a time of high academic challenge when there were enough opportunities upcoming for that effort to pay off. We hypothesized that students who received and remembered a growth mindset letter after the first midterm would master more content than students in the control or fixed mindset groups. After examining each post-intervention midterm and final exam, we found no statistically significant between-group differences on midterms two and four. However, participants in the growth mindset group significantly outperformed those in the fixed mindset group by 9% on midterm 3 and by 7% on the final exam. Throughout the term, we saw differences between the growth and fixed mindset groups of 3% at M2 (ns), 9% at M3 (

To our knowledge, this study is the first to test a mindset intervention in a large-lecture classroom without an active, “saying-is-believing” component (Aronson et al., 2002; Blackwell et al., 2007; Paunesku et al., 2015; Walton, 2014). Previous research has indicated that the active components are important (Walton, 2014). However, in the current study – and in similar designs (e.g., Paunesku, 2013) – this well-timed passive intervention still resulted in some positive benefits for academic achievement.

There are two plausible explanations for the observed effects. First, some participants may have remembered the message all term because they found a way to personally use the information in ways that are similar to previous active intervention methods. We have no direct data on this; however, as seen in the regression analyses, the growth mindset message was the only message whose retention accounted for additional variance in post-intervention achievement. If students were only able to recall their intervention message because of some form of personal active engagement, we should have also observed differences in post-intervention achievement in the fixed mindset group.

Second, it is possible that the growth mindset letter may have led students to actually use the answer key it came with to grade M1, thereby increasing their performance on future exams. Since all of the exams in the course were cumulative, students who used their previous exams to review material for upcoming exams would have had an advantage. If the growth mindset letter encouraged students to take the extra step of grading their own exams and using the material to study, it would have benefitted them more on future exams when compared to other students who did not. As in Paunesku’s (2013) study, exposure to a growth mindset message (as opposed to other statements such as scientific facts or typical forms of encouragement) led to small behavioral differences that translated into meaningful benefits over time. In the current project, reading the growth mindset message, but not the fixed or control message, may have increased the likelihood of students dedicating time to using the answer key (i.e., small behavioral differences) for M1 and all course exams, which led to increased performance on M1 and future exams (i.e., meaningful benefits over time). Thus, the letter could have created a recursive process leading to lasting change, or “snowball,” that is central to wise psychological interventions (Walton, 2014). This is further supported by the results of the regression analyses, where passing the manipulation check in the growth mindset condition led to better performance on M3, even after controlling for the pre-test and M1 performance. Additionally, we saw no difference between students who passed and failed the manipulation check in the fixed mindset or control conditions. Therefore, it is plausible that the growth mindset message encouraged students to expend the effort to grade their exams whereas the fixed mindset message did not have any effect.

There were also two other notable aspects of our findings. First, as we examined the effect of the intervention separately on each post-intervention exam, we were able to determine that the effect of the growth mindset letter was not the same across all exams. In this study’s between-group analyses, we found significant group differences on M3 and the final exam, but not M2 and M4. As noted in previous literature, holding a growth mindset is most valuable to students when facing academic difficulty, and findings suggest there are few differences between growth and fixed mindset students when tasks are not particularly challenging (e.g., Blackwell et al., 2007). As seen in Table 2, the average exam score for M3 was much lower than averages for the other exams, suggesting that the test was more difficult than the others. Alongside this, the final exam could also be considered a more difficult test (even if the overall exam mean was not much different), as the test covered all of the material in the course and occurred at a significantly stressful time for most students, when they are studying for multiple high-stakes tests. Therefore, we suggest that these differential effects demonstrate the value of a growth mindset during times of academic challenge and stress. Second, this study’s within-group analyses demonstrated that there were significant differences on M1 performance between participants who passed and failed the manipulation check in both the growth mindset and control groups (there was also a trend in the same direction for the fixed mindset group, though it was not significant). This finding suggests that higher achieving students may be more likely to read the intervention message when no explicit instruction to do so is provided. Therefore, adding instructions to read the letter or making it a required component of the course may benefit the lower achieving students the most.
Overall, these additional findings are aligned with previous research (i.e., highlighting the significant effects of a growth mindset during times of academic challenge; e.g., Blackwell et al., 2007) and offer insight into intervention strategies and techniques for future research.

Limitations and Future Directions

Several limitations of the current study should be noted, including aspects of the methodology and the generalizability of our findings. First, our intention was to test the lowest intensity intervention possible, calculating that even a low response rate and limited effectiveness would still yield meaningful differences across the large population of students who take large general education classes that, at large public institutions, are often both under-resourced and fail a relatively high number of students. Indeed, in this study, only 31% of the participant sample exposed to the intervention was able to recall their intervention message at the end of the term, indicating that this intervention is limited in reach. Still, 31% of General Psychology students at the institution where the study was conducted represents over 1,000 students per year, and even small shifts in the number of students who move from F to D– (which confers credit for these participants) or from D+ to C– (which is a common grade required by majors) could have significant implications for retention and graduation rates. As the current study was aimed at testing a minimal intervention design, we did not instruct participants to read the message and devoted no class time or attention to it. Alongside this, there were large differences in the number of students who failed and passed the manipulation check within each condition. As the letters were crafted to be very similar in appearance, length, depth of message, and reading difficulty, we have no data to address the underlying cause of this inequality. These low response rates and unequal group sizes may reduce our statistical power to detect true differences between the intervention conditions.
Future research may address these low response rates and unequal group sizes by instructing students to read the letter, making the letter available on multiple platforms (e.g., online and in paper form), or exposing participants to multiple doses of the same intervention message. Second, our results demonstrated that the control letter may have conferred a benefit, and future research should use an alternative message or have the control group receive no letter at all. Third, we cannot completely exclude the possibility of cross-contamination between intervention conditions, as it is possible that students shared their intervention message with peers, resulting in students being exposed to multiple conditions. However, this occurrence would be equally likely across all intervention conditions. Fourth, we also acknowledge the small possibility that the effects observed in the current study occurred by chance. As this was a single experiment, more research is needed to establish the true effect of using such a minimal intervention design, including experiments with different student populations (e.g., cultural and ethnic minorities) and in different locations. In addition, the study focused on academic achievement in Introductory Psychology. Future research could determine transference effects on academic performance in future terms, other courses, or on overall GPA. Other studies have found substantial long-term effects of intensive two-hour interventions (Blackwell et al., 2007). Evidence is mixed on whether academic mindsets are domain-general or domain-specific (Martin, Bostwick, Collie, & Tarbetsky, 2017; Stipek & Gralinski, 1996). If domain-general, universities could implement growth mindset interventions in key high enrollment courses such as Introductory Psychology and see benefits across other courses. However, if mindsets are domain-specific, interventions should target specific courses. Finally, although

Conclusion

A low-cost intervention resulted in a 3–9% difference between the growth and fixed mindset conditions on measures of post-intervention academic achievement in a large lecture Introductory Psychology course. The intervention is sustainable, can be easily tailored to fit specific course structures and content, and has some preliminary evidence of boosting student achievement in college. Future projects may examine appropriately timed minimal designs in other contexts with the goal of furthering our understanding of contexts and interventions that enhance academic outcomes.

Supplemental Material

Supplemental material for Quick, Easy Mindset Intervention Can Boost Academic Achievement in Large Introductory Psychology Classes by Keiko C P Bostwick and Kathryn A Becker-Blease in Psychology Learning & Teaching

Footnotes

Acknowledgements

Keiko C. P. Bostwick, School of Psychological Science, Oregon State University; Kathryn A. Becker-Blease, School of Psychological Science, Oregon State University. Keiko Bostwick is now at the School of Education, University of New South Wales, Australia. The authors thank Frank Bernieri for helpful research design and data analysis suggestions, and General Psychology Coordinator Ameer Almuaybid for assistance with gathering and de-identifying data. This manuscript is based on Keiko Bostwick’s master’s thesis.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.

Supplementary material

Supplementary material for this article is available online.
