Abstract

Some students taking infant development classes have limited direct experience of interacting with infants. This paper reports on a pilot of an innovative, research-informed workshop that provides hands-on experience through the use of infant simulators. The workshop adapted the Leiden Infant Simulator Sensitivity Assessment, which uses the RealCare Baby © infant simulator, to examine attachment theory’s sensitivity hypothesis. Students’ ratings indicated that an infant simulator activity was helpful in understanding the research and encouraged critical thinking about the research, the hypothesis, and attachment theory in general. Moreover, the infant simulator activity was rated as helpful as a multiple-choice question activity for promoting understanding of the research. The workshop encouraged higher level thinking about the research, hypothesis, and theory in general, and the infant simulator activity and a plan-a-study activity did not differ in the extent to which they encouraged critical thinking. The findings of this pilot suggest that infant simulators can be successfully embedded into undergraduate classes and can serve as an effective tool for teaching theoretical concepts surrounding infant–caregiver interaction.

Introduction

A challenge in teaching infant development, and in particular infant–caregiver interaction, is that some undergraduate students lack direct, hands-on experience with infants in a caregiving role. With limited personal experience, students might find it difficult to understand the cognitions and emotions that shape a caregiver’s behavior. Another challenge, especially with large classes, is that students have few opportunities to adopt the researcher’s perspective and to directly observe infant–caregiver interaction because of, for example, limited access to (and willingness of) nurseries or other childcare organizations, the time needed to meet the ethical and legal requirements for working with children, and students’ personal interest (or lack thereof) in interacting with infants. This paper evaluates an innovative workshop that aimed to overcome these challenges through the use of infant simulators. Previous research (Bath, Cunningham, & McIntosh, 2000; Jang & Lin, 2017) has examined the use of infant simulators and their effect on students’ own attitudes and beliefs towards parenting, whereas this pilot explores the use of this technology as a learning tool for understanding key developmental concepts.

The use of technology in developmental psychology classes is not new. Symons and Smith (2014) and Symons, Adam, and Smith (2016) have used the virtual reality technology (VRT), MyVirtualChild©. As part of their class, undergraduate students raised the virtual child from infancy to late adolescence, with tasks drawing on key developmental concepts (e.g., parenting styles). Symons and Smith (2014) reported that the majority of students found the activity helpful and that it encouraged their critical thinking. Moreover, the researchers found evidence of psychological engagement. Students reported forming a relationship with the virtual child and experienced positive (happiness and pride being reported most frequently) and negative emotions (e.g., anger and sadness). Students’ responses also showed engagement through self-reflection and with the course content. Thus, the use of VRT is one way to give students experience of being in a caregiving role and, as Symons and colleagues’ research demonstrates, can tap into the psychological experience of the caregiver and serve as a psychologically engaging learning activity.

Whilst VRT has many strengths, a limitation is the lack of hands-on interaction with a child that responds contingently and in real time to the caregiver’s behavior. For example, when observing a caregiver’s sensitivity, a key element is the extent to which the caregiver accurately interprets the infant’s signals and responds appropriately (Ainsworth, Bell, & Stayton, 1974). Infant simulators such as the RealityWorks RealCare Baby © are therefore well suited to being embedded into infant development classes. The RealCare Baby © is a highly responsive, programmable infant simulator. The simulator resembles an infant in length (21 in.), weight (7 lb), and physical appearance (e.g., see https://www.realityworks.com/products/realcare-baby). Like a real infant, the simulator vocalizes (e.g., cries and giggles), and its cries signal different needs (e.g., to be fed). The simulator can be programmed according to three schedules (easy, medium, and hard) and records temperature and mishandling (see Voorthuis et al., 2013, for a comprehensive description of the simulator’s capabilities). These infant simulators have been used in research with adolescents and in school-based interventions, for example on teenage pregnancy prevention (e.g., Herrman, Waterhouse, & Chiquoine, 2011; Somers & Fahlman, 2001), although some interventions have not been successful (e.g., Brinkman et al., 2016). The simulators have also been used with undergraduate students, who typically have limited direct parenting experience, to examine their attitudes towards parenting. Bath et al. (2000) reported that undergraduate medical students did not find the simulator realistic, and the researchers did not consider the technology useful in helping these students to understand parenting, knowledge that would support their practice with real-life parents. Jang and Lin (2017) reported that undergraduate students in a family studies course found that interacting with the simulators made them think about parenting (e.g., responsibilities and practicalities). Researchers have also used infant simulators to examine adults’ reactions to infant crying (e.g., Bruning & McMahon, 2009) and, more recently, attachment-related concepts in the form of caregiver sensitivity (e.g., Bakermans-Kranenburg, Alink, Biro, Voorthuis, & van Ijzendoorn, 2015; Voorthuis et al., 2013).

Building on the academic research that has employed this technology, a research-informed workshop was designed for undergraduate students taking a 10-week developmental module on attachment behavior. Unlike previous research (e.g., Bath et al., 2000; Jang & Lin, 2017), the purpose of the workshop and of the infant simulators was to develop students’ understanding of, and higher level thinking about, attachment concepts rather than to examine their parenting cognitions. The workshop was designed around the Leiden Infant Simulator Sensitivity Assessment (LISSA; Voorthuis et al., 2013), a standardized tool for assessing caregiver sensitivity using infant simulators. The LISSA has several parts; the workshop activity discussed below focused on the laboratory-based procedure. Voorthuis et al. (2013) found the LISSA to be a reliable and valid tool for assessing sensitivity in a sample of largely female undergraduate students, none of whom were parents but most of whom had some caregiving experience with a young child.

Attachment Theory and the Caregiver Sensitivity Hypothesis

Bowlby’s (1969) attachment theory is a core topic of many developmental modules and textbooks. Ainsworth, Blehar, Waters, and Wall’s (1978) Strange Situation Procedure is considered the “gold standard” tool for assessing the quality of the attachment relationship (Clarke-Stewart, Goossens, & Allhusen, 2001). Once it is established that infant–caregiver attachment varies in security, the next step is to understand why. According to Bowlby (1997, p. 204), Ainsworth “was convinced that the developing relationship between child and adult was not shaped equally by the two participants…[She] attributed disproportionate influence to… [the mother] rather than to the child.” Thus, the (caregiver) sensitivity hypothesis predicts that differences in attachment security are largely the consequence of the caregiver’s sensitive responsiveness (van Ijzendoorn & Sagi-Schwarz, 2008). Research (e.g., see van Ijzendoorn & Bakermans-Kranenburg, 2004) largely supports Ainsworth’s view. Sensitive responsiveness, whether measured or manipulated in intervention-based research, predicts attachment security, whereas less sensitive caregiving is linked to attachment insecurity (e.g., see De Wolff & van Ijzendoorn, 1997; van Ijzendoorn & Bakermans-Kranenburg, 2004; van Ijzendoorn, Bakermans-Kranenburg, & Juffer, 2005). Yet key questions remain about the relationship between sensitivity and attachment security (Fearon & Roisman, 2017), and innovative, standardized tools to assess sensitivity are needed (e.g., Bakermans-Kranenburg et al., 2015).

The Workshop

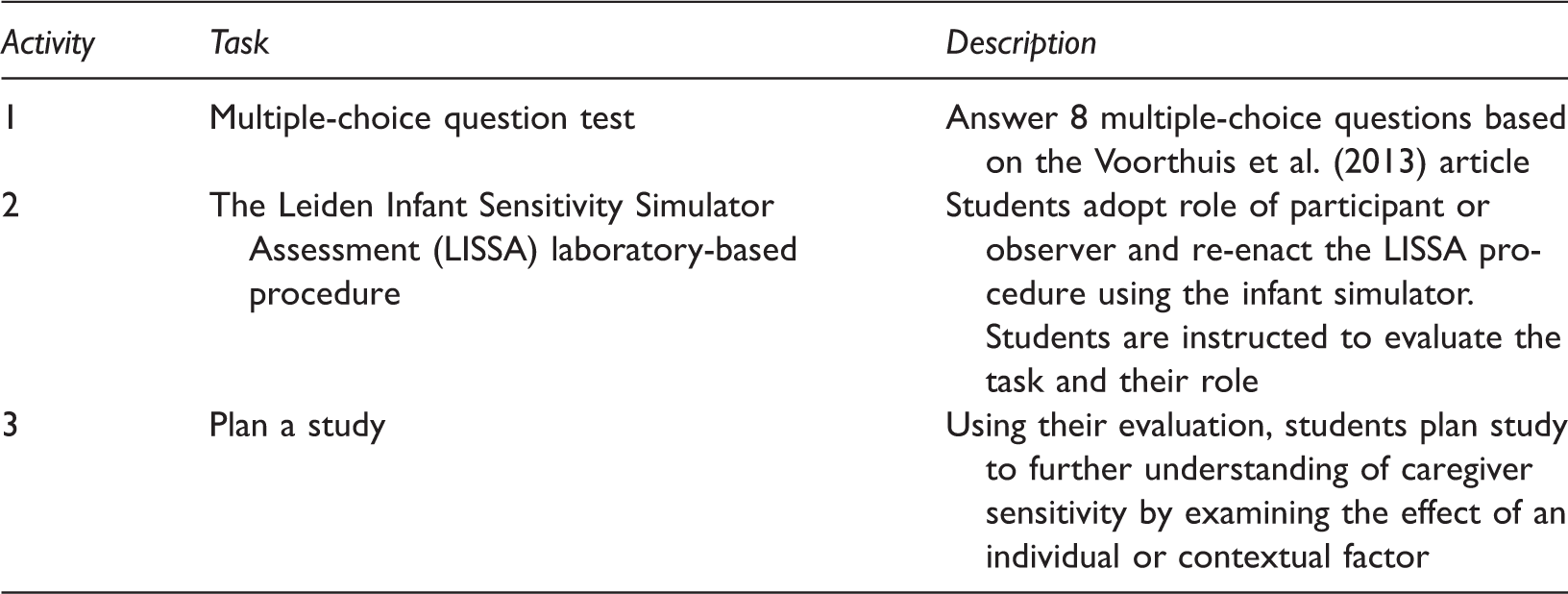

Overview of Workshop Activities.

Multiple Choice Questions (MCQs)

Activity 1 involved an eight-question multiple-choice test based on the research article (see Appendix B for examples). Questions were presented to the whole class, responses were collected via a clicker response system, and immediate feedback was provided.

LISSA

For Activity 2, the class was split into small groups of 6–8 students, each with a postgraduate teaching assistant (TA). Students were given time to become familiar with the infant simulator and its accessories (e.g., how to feed it and change its nappy). As a group, the students and TA then worked through the LISSA laboratory-based procedure step by step. In the original study, participants completed three 10-minute episodes with the infant simulator, which was set to a difficult schedule: no intervention (free play); completing a questionnaire (competing demands 1); and completing a computer task (e.g., the Tower of Hanoi) (competing demands 2). The participant’s task was to care for the infant (e.g., engage in the behaviors necessary to stop the infant from crying). For each episode, a team of trained observers rated the participant’s behavioral response using Ainsworth et al.’s (1974) Sensitivity Scale.

For the workshop, one member of each group volunteered to act as the participant, whilst the remaining group members acted as observers. Students in the participant role were asked to consider the experimental task, their feelings and interaction with the simulator, and their feelings about being observed (e.g., reactivity; the possibility of ‘faking’ sensitivity). Students in the observer role were asked to consider the challenges of observing human interactions (e.g., the importance of intensive training; understanding inter-coder reliability). Students were also asked to consider both the participant’s and the observer’s roles and, whilst doing so, to think about the procedure’s strengths and weaknesses.

The student ‘participant’ then completed the three episodes. To support the student observers (many of whom would have no experience of behavioral coding at this stage in their degree) a list of 11 caregiving behaviors was created from existing scales of maternal sensitivity (e.g., When baby is distressed, is able to quickly and accurately identify its source). The student observers rated the student participant on each behavior on a 7-point scale (1 = does not describe caregiver’s behavior at all to 7 = extremely accurate description of caregiver’s behavior). Then, consistent with the Voorthuis et al. (2013) procedure, the observers independently rated the participant’s global sensitivity using Ainsworth et al.’s (1974) Sensitivity Scale. The observers then discussed their individual and global ratings to reach consensus. Activity 2 finished with students summarizing the strengths and weaknesses of LISSA for assessing caregiver sensitivity, based on their individual roles.
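The observer role raises the question of inter-coder reliability before the consensus discussion. As a hedged illustration only, the sketch below computes simple percent agreement (exact, and within one scale point) between two observers on the 11 behavior items and lists the items whose ratings differ by more than one point as candidates for discussion. The ratings, the function names, and the one-point threshold are hypothetical; the workshop itself reached consensus through discussion, and percent agreement does not correct for chance (Cohen’s kappa or an intraclass correlation are common alternatives).

```python
# Hypothetical example: the ratings below are invented for illustration and
# are not data from the workshop.


def percent_agreement(a, b, tolerance=0):
    """Proportion of items on which two observers differ by <= `tolerance` points."""
    if len(a) != len(b) or not a:
        raise ValueError("observers must rate the same, non-empty set of items")
    hits = sum(1 for x, y in zip(a, b) if abs(x - y) <= tolerance)
    return hits / len(a)


def items_to_discuss(a, b, threshold=1):
    """1-based item numbers where the observers differ by more than `threshold`."""
    return [i + 1 for i, (x, y) in enumerate(zip(a, b)) if abs(x - y) > threshold]


# Two observers' ratings of one student 'participant' on the 11 caregiving
# behaviors (1 = does not describe the behavior at all, 7 = extremely accurate).
observer_a = [6, 5, 7, 4, 3, 6, 5, 2, 6, 4, 5]
observer_b = [6, 4, 7, 4, 5, 6, 6, 2, 5, 4, 3]

exact = percent_agreement(observer_a, observer_b)
adjacent = percent_agreement(observer_a, observer_b, tolerance=1)

print(f"Exact agreement: {exact:.0%}")      # Exact agreement: 55%
print(f"Within one point: {adjacent:.0%}")  # Within one point: 82%
print("Items to discuss:", items_to_discuss(observer_a, observer_b))  # [5, 11]
```

Agreement within one point is far higher than exact agreement here, which is one way to prompt students to discuss what counts as "agreeing" on a 7-point scale.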

Plan a Study

The focus of Activity 3 was to consider the usefulness of LISSA in furthering research on caregiver sensitivity. Using the knowledge and experience gained in Activities 1 and 2, students were asked to consider how the weaknesses of the procedure could be overcome and to design a study using LISSA to examine variations in caregiver sensitivity. To bridge Activities 2 and 3, students were asked to consider how participants’ own attachment representations might influence how they view the LISSA procedure and how they behave in the study; this question was drawn from the Discussion section of Voorthuis et al. (2013). Students were asked to identify a target population (e.g., new fathers) and an individual or contextual factor (e.g., caregivers’ attachment representations), to outline how the population or factor would be integrated into the LISSA procedure (e.g., caregivers complete the Adult Attachment Interview before completing LISSA), and to state what they would expect to find (e.g., fathers with secure attachment representations would have higher observed sensitivity scores than those with insecure attachment representations). The Voorthuis et al. (2013) article provided a number of examples (e.g., physiological correlates; intervention) that students could use; however, groups also generated their own ideas.

Evaluation of the Workshop

The workshop evaluation examined whether infant simulators embedded within an active learning task could be an effective tool for learning about attachment theory’s caregiver sensitivity hypothesis. Given the focus of the task, it was expected that the LISSA activity would promote greater understanding of the sensitivity hypothesis than of attachment theory in general. In contrast to simply listing the strengths and weaknesses of a research method, the LISSA activity encouraged students to engage actively with the procedure through role play. Active (vs. directive) learning strategies have been found to promote higher level thinking (Richmond & Hagan, 2011). Yet the LISSA activity also has features that could reduce its effectiveness in stimulating deep engagement. At a surface level, the infant simulator is a toy doll that studies have found to lack realism (Bath et al., 2000; Voorthuis et al., 2013). These features could lead students to disengage or to perceive the activity as lacking academic rigour. It was therefore also important to examine whether the LISSA activity promoted higher level thinking, by comparing it with the other group-based active learning task in the workshop, the plan a study activity.

Method

Participants and Procedure

Six groups of Year 2 undergraduate students (N = 288) at a British university took part in the workshop. Anonymous responses to the multiple-choice questions were retained from one group (N = 41 students). After the workshop, all attendees were invited to complete the survey (described below) anonymously; 37 students did so.

Workshop Evaluation Survey

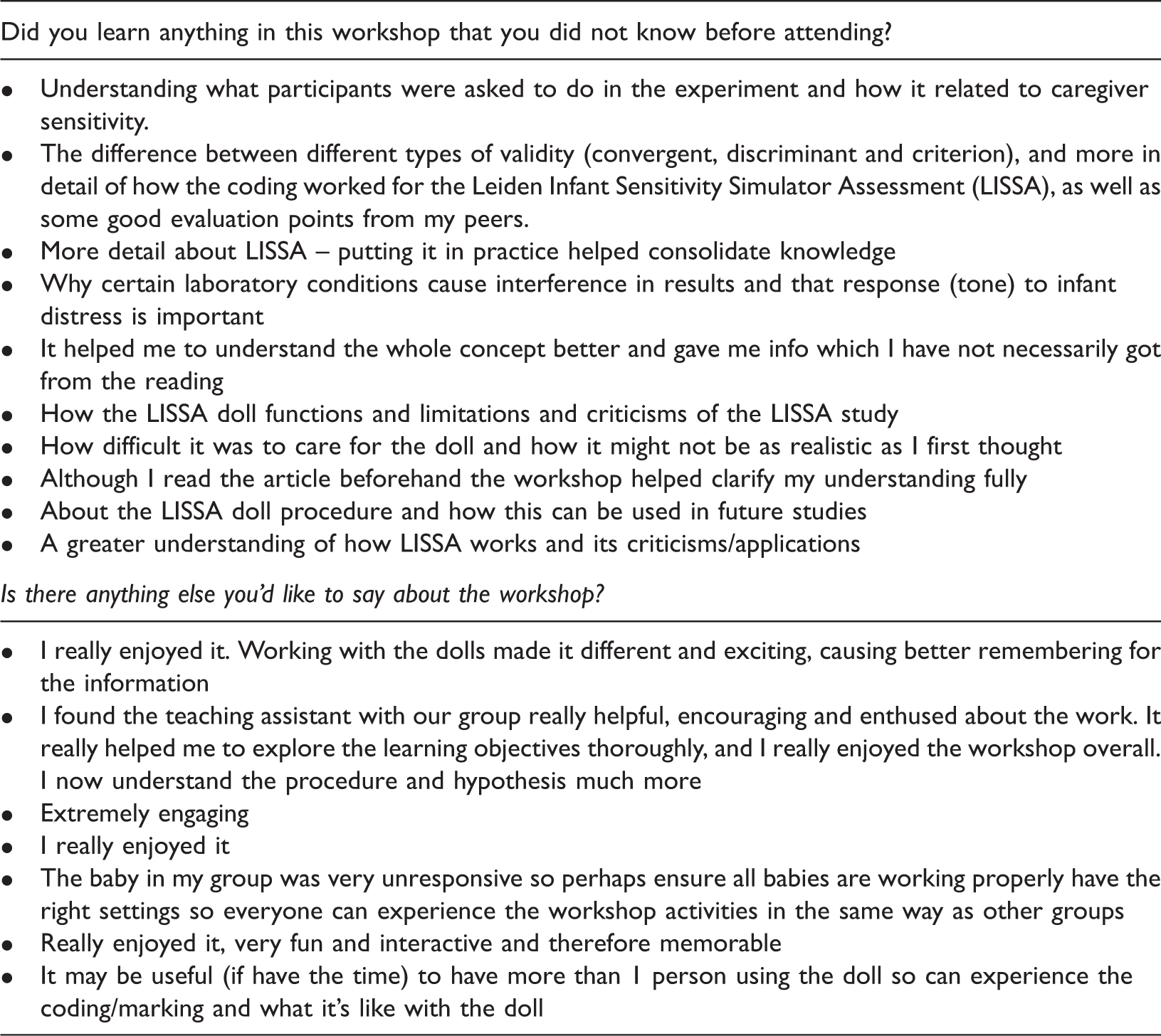

Two open-ended questions asked which activities were the most and least helpful in promoting understanding of the caregiver sensitivity hypothesis. On a scale from 0 (not at all helpful) to 100 (extremely helpful), four questions asked respondents to rate how helpful the MCQ test was for understanding the research article, and how helpful the LISSA activity was for understanding the research article, the caregiver sensitivity hypothesis, and attachment theory in general. In addition, based on Symons and Smith (2014), questions asked the extent to which the LISSA activity and the plan a study activity encouraged critical thinking about the research article, the caregiver sensitivity hypothesis, and attachment theory in general (0 = not at all to 100 = a great deal). Finally, respondents were asked whether they had learned anything in the workshop that they did not know before attending, and were invited to provide any other comments.

Results

Conceptual Understanding

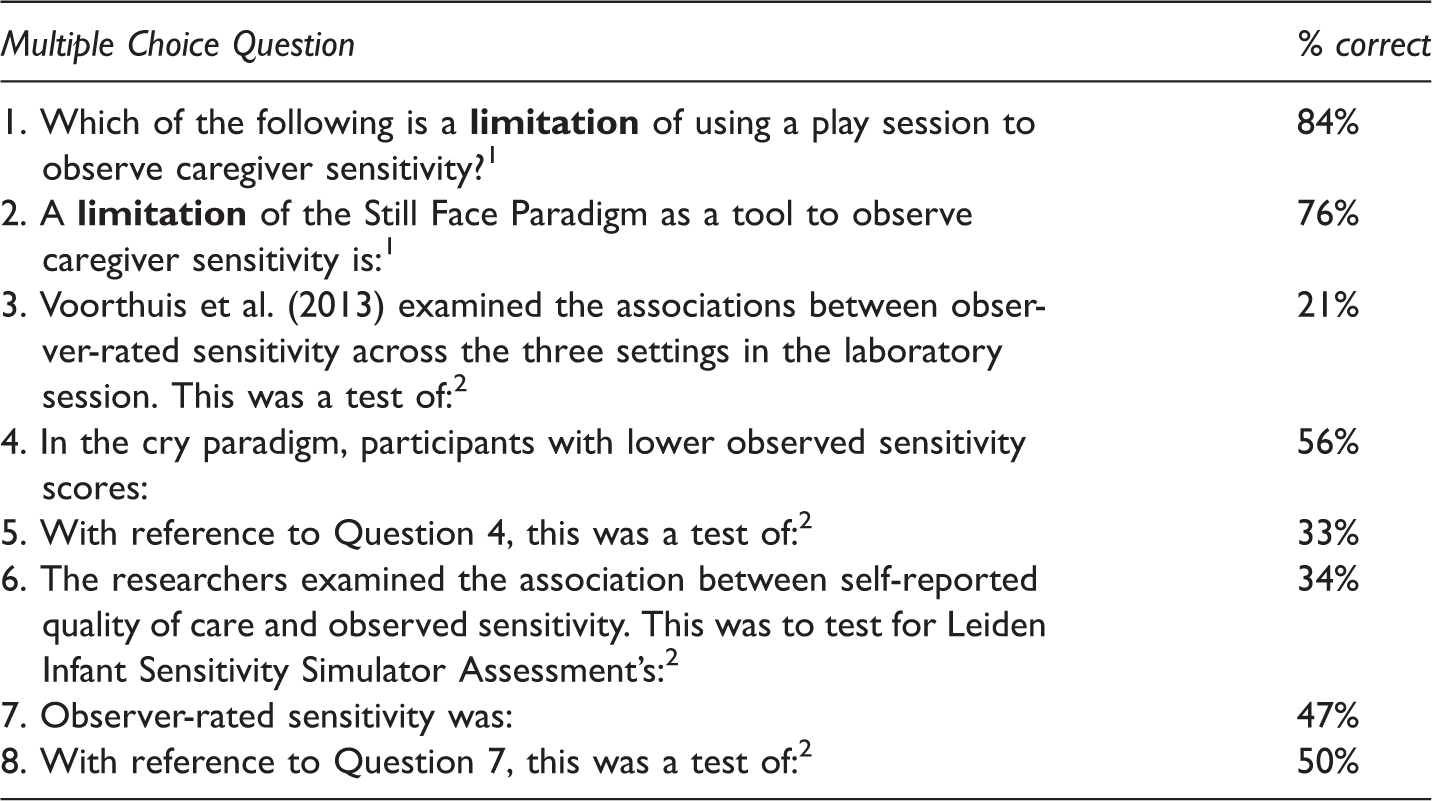

Percentage of Correct Responses to Multiple Choice Questions.

Note. ¹Question is included in Appendix B. ²Possible answers were different types of validity and reliability covered in the article. Questions 1 and 2 are based on the article’s introduction, and questions 4 and 7 on the article’s results section. Questions 3, 5, 6, and 8 are based on information from the article’s introduction and results sections.

Responses to questions 3 and 5 indicated difficulties in understanding the different types of reliability and validity. By the time students answered question 8, they had received some feedback from the tutor on some of these types of reliability and validity.

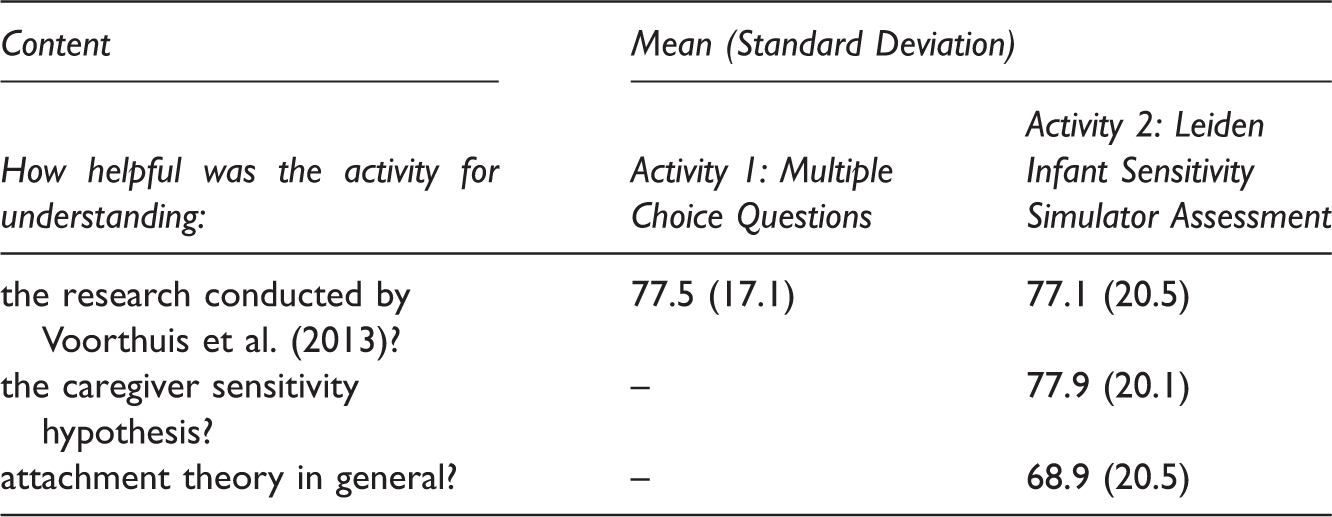

Mean Ratings for the Helpfulness of Activities 1 and 2.

Note. Responses could range from 0 (not at all helpful) to 100 (extremely helpful).

A paired-samples t-test revealed that students rated the LISSA activity as more helpful for understanding the sensitivity hypothesis than for understanding attachment theory in general, t(35) = 2.347, p = 0.025 (see Table 3).
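The comparison above is within-student: each respondent rated the LISSA activity on both items, so a paired-samples t-test works on each student’s difference between the two ratings. The sketch below shows that computation with Python’s standard library on four invented ratings; the data and the function name `paired_t` are hypothetical and are not the study’s. Because df = n − 1, the reported t(35) implies that 36 respondents rated both items.

```python
from math import sqrt
from statistics import mean, stdev


def paired_t(first, second):
    """Paired-samples t statistic and degrees of freedom.

    d_i = first_i - second_i for each respondent; t = mean(d) / (s_d / sqrt(n)),
    where s_d is the sample standard deviation of d, and df = n - 1.
    """
    if len(first) != len(second):
        raise ValueError("each respondent needs a rating on both items")
    diffs = [a - b for a, b in zip(first, second)]
    n = len(diffs)
    if n < 2:
        raise ValueError("need at least two complete pairs")
    standard_error = stdev(diffs) / sqrt(n)
    if standard_error == 0:
        raise ValueError("differences have zero variance; t is undefined")
    return mean(diffs) / standard_error, n - 1


# Hypothetical 0-100 ratings from four respondents (not the workshop data):
# helpfulness for the sensitivity hypothesis vs. attachment theory in general.
hypothesis_ratings = [70, 80, 60, 90]
theory_ratings = [60, 75, 62, 80]

t, df = paired_t(hypothesis_ratings, theory_ratings)
print(f"t({df}) = {t:.3f}")  # t(3) = 2.025
```

In practice, `scipy.stats.ttest_rel(first, second)` returns the same t statistic together with a two-sided p value; the standard library has no t distribution, so the sketch stops at t and df.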

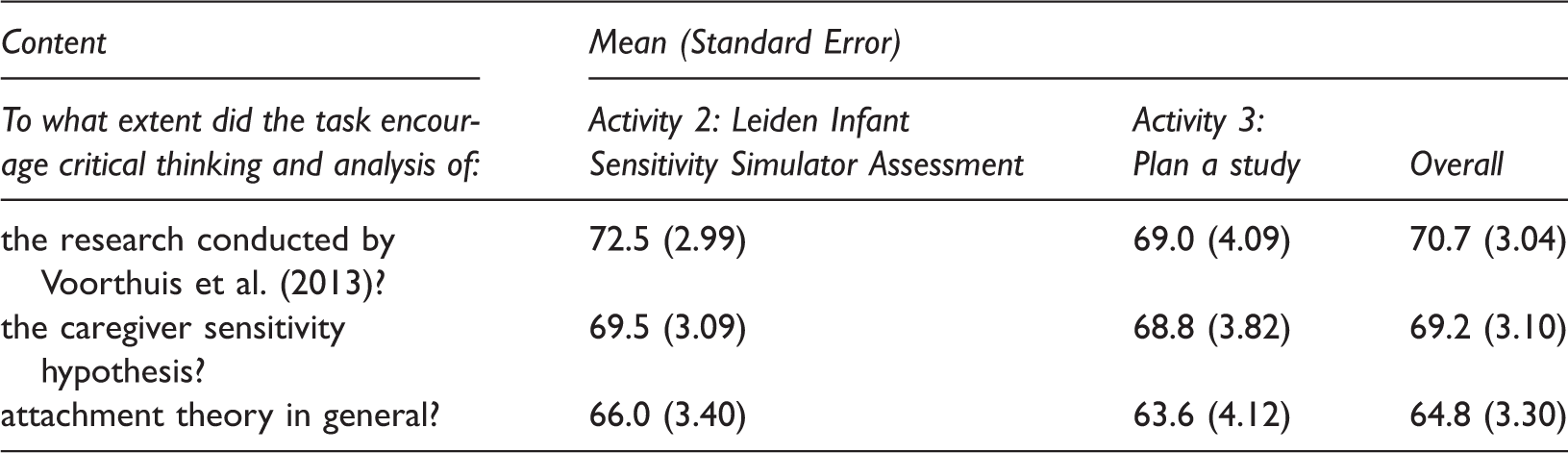

Encouraging Higher Level Thinking

Mean Ratings for the Extent to which Activities 2 and 3 Encouraged Critical Thinking.

Note. Responses could range from 0 (not at all) to 100 (a great deal).

Students’ Open-ended Responses

Based on a count of the activities listed in each response, students indicated that the most useful activity for understanding the caregiver sensitivity hypothesis was the LISSA activity, listed by 43% (N = 16) of the students, followed by the MCQ activity (19%; N = 7), reading the article (11%; N = 4), non-task-related discussion with the TA (8%; N = 3), and the plan a study activity (5%; N = 2); 14% (N = 5) listed two activities (the MCQ activity plus either the LISSA activity, discussion with the TA, or the plan a study activity). In terms of the least useful activity for understanding the caregiver sensitivity hypothesis, the majority of students indicated nothing/none (32%; N = 12) or did not provide a response (37%; N = 14). Of those that referred to one of the three activities, 14% (N = 6) reported that the MCQ activity was the least useful for understanding the caregiver sensitivity hypothesis.
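Each percentage above is a category count divided by the 37 survey respondents. A minimal sketch of that arithmetic, using the "most useful" counts reported in the text (the category labels are paraphrased), checks that the categories account for every respondent and rounds to whole percentages.

```python
# Counts of "most useful activity" responses, as reported in the Results.
RESPONDENTS = 37

most_useful = {
    "LISSA activity": 16,
    "MCQ activity": 7,
    "Reading the article": 4,
    "Non-task discussion with TA": 3,
    "Plan a study activity": 2,
    "Two activities listed": 5,
}

# Each respondent falls into exactly one category, so the counts should sum to N.
if sum(most_useful.values()) != RESPONDENTS:
    raise ValueError("category counts do not account for every respondent")

# Percentage of all respondents, rounded to the nearest whole number.
percentages = {
    activity: round(100 * count / RESPONDENTS)
    for activity, count in most_useful.items()
}

for activity, pct in percentages.items():
    print(f"{activity}: {pct}% (N = {most_useful[activity]})")
```

The same calculation applies to the "least useful" responses, with the denominator kept at all 37 respondents rather than only those who named an activity.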

Examples of Students’ Responses to the Open-ended Questions.

Discussion

These findings suggest that infant simulators can be successfully embedded into an active learning-based undergraduate workshop and, according to the students, can serve as an effective tool for developing their understanding of, and encouraging higher level thinking about, developmental concepts. By adopting a research-informed approach based on Voorthuis et al.’s (2013) laboratory-based LISSA procedure, this pilot study extends previous research that has used infant simulators in undergraduate classes (e.g., Bath et al., 2000; Jang & Lin, 2017) by indicating that the equipment can contribute to students’ learning as well as shape their attitudes and beliefs about parenting.

Students’ responses indicated that the overall aim of the workshop, to examine attachment theory’s sensitivity hypothesis, was met. Interacting with the infant simulators in the structured LISSA activity was rated as more helpful in promoting understanding of the caregiver sensitivity hypothesis than of attachment theory in general. Moreover, the LISSA activity was the activity most frequently nominated as the most useful for understanding the caregiver sensitivity hypothesis. As an example, the behavioral coding activity generated much discussion. The student observers asked pertinent questions, such as how coding schemes work (e.g., how to code when a behavior was not displayed) and how to reduce several minutes of observation to a single caregiving score. Such discussion suggested that the LISSA activity challenged students’ ideas about the concepts. Links were made with issues raised in the lecture (e.g., the importance of intensive training), further helping students to grasp what researchers are actually doing when they draw conclusions about the link between caregiver sensitivity and attachment style.

As a learning activity, students’ ratings did not differentiate between the LISSA activity and two typical active learning tasks. Students rated the LISSA activity as just as helpful for understanding the research article as the MCQ activity. The LISSA activity and the MCQ test focused on slightly different aspects of the research article (e.g., the procedure and the findings, respectively), which could account for why they were rated as similarly helpful. Alternatively, the similarity may reflect the fact that the MCQ test involved more tutor input than expected. The test was intended to be a short activity (approximately 15 minutes), but because of students’ difficulties with some of the questions (likely due, in part, to not reading the article before the workshop), the tutor spent significant time explaining some of the concepts covered in the research article. Students’ ratings of the extent to which the LISSA activity and the plan a study activity encouraged higher level evaluation and analysis were also very similar. This latter finding is particularly noteworthy given initial concerns that features of the infant simulator might be disruptive. Although the LISSA activity was ‘fun’ (see Table 4), it was highly structured, and the TAs were on hand to prompt students to evaluate the procedure. Further, students’ critique of the procedure was needed to guide the plan a study discussion. Thus, the success of the infant simulators as a learning tool might be partly due to the highly structured nature of the workshop. The fixed order in which the activities were completed is therefore an important caveat when assessing the usefulness of the LISSA activity: students’ experience of each activity likely affected their experience of the subsequent activity (e.g., students’ evaluation of LISSA shaped their ideas for future research directions). With this in mind, caution is needed when judging the extent to which the LISSA activity promoted higher level thinking relative to the other activities.

It should be highlighted that students’ ratings indicated that the workshop encouraged higher level thinking about the article, the hypothesis, and attachment theory in general. Despite the relatively narrow aim of the workshop, the activities led students to think more broadly about attachment theory, the key theory covered on the course. Indeed, infant–caregiver interaction is at the core of all of attachment theory’s hypotheses, given its integral role in shaping individual differences in attachment quality.

Limitations and Suggestions for Teaching Practice

In addition to the fixed order of activities, the statistical analyses were underpowered given the small sample sizes. There were also a number of issues with the use of the technology and the design of the workshop. Although some students found interacting with the infant simulators fun and engaging, some rated the LISSA activity as low in helpfulness for conceptual understanding, and some were observed not to engage fully with the activity. Whilst group dynamics and discussion were not systematically observed, the success of the LISSA and plan a study activities might rest on students engaging fully and on the TA being quick to pick up signs of non-engagement or distraction. Indeed, the LISSA and plan a study activities seemed to work best with groups of 6 or fewer students.

Consistent with other studies (e.g., Bath et al., 2000), some students found that the infant simulator lacked realism, commenting that it did not look like a real baby, that it could not close its eyes, and that it did not respond behaviorally (e.g., by moving its arms or legs, or clinging) to the caregiver’s actions. Whilst such perceptions might have led some students to withdraw from the activity, their comments could also be used as a starting point for discussing the technology’s validity and its further use in examining the sensitivity hypothesis and caregiving behavior in general.

Some students reported that the infant’s crying had affected them, and some in the participant role referred to their inability to stop the infant’s crying through their caregiving behavior. Indeed, exposure to the difficult cry schedule has been found to increase negative affect (Bruning & McMahon, 2009). Before the workshop, students were advised to contact the lecturer if infant crying was likely to be a problem for them; however, no students came forward. In retrospect, it might be difficult to anticipate how crying will affect mood, especially for individuals with limited experience of infants. The workshop could therefore be improved by explicitly referring to the possible effects on mood or by including a debriefing session.

This pilot workshop was based around a specific hypothesis and the Voorthuis et al. (2013) article; however, it could easily be adapted to other articles (e.g., Bakermans-Kranenburg et al., 2015; Bruning & McMahon, 2009) and developmental concepts (e.g., effects of exposure to crying; goodness of fit and infant temperament). The workshop could also be broken into three separate but related activities if time is constrained. For example, Activity 1 could be embedded within a lecture and Activity 3 could form part of a more general research methods class.

Taken together, these findings suggest that the effects of using infant simulators in undergraduate teaching can extend beyond students’ attitudes and beliefs about parenting to genuine engagement with psychological research and concepts, namely attachment theory’s sensitivity hypothesis. It remains to be tested whether this learning experience translates into enhanced performance on assessments related to the caregiver sensitivity hypothesis or attachment theory in general. Further, a comparison between the infant simulator and VRT or video-taped interactions is required to clarify whether the hands-on element of the workshop facilitates students’ learning.

Acknowledgements

I would like to thank Edward Ong Yung Chet, Benjamin Crossey, Sian Griffiths, Fyqa Gulzaib, Min (Susan) Li, and Harpreet Sihre for facilitating the workshops. I would also like to thank the anonymous peer reviewers for their thoughtful feedback on a previous version of this paper.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and publication of this article.