Abstract

This study investigated whether instructional-communication feedback sent to struggling students and succeeding students following course exams would significantly increase their exam scores and significantly decrease their exam-skipping behavior relative to students in a control group. An experimenter-blind study utilizing feedback and the personalization principle was conducted. Undergraduate students (N = 122) were randomly assigned to either the control group or the experimental group (the instructional-communications group). After each exam, an instructional intervention using instructional-communication feedback was sent. Struggling students who received instructional-communication feedback had higher exam scores and skipped fewer exams than struggling students in the control group for all exams. For exam 2, there was a statistically significant exam score difference (one half-letter grade: 4.93%) between struggling students in the experimental and control groups. For exam 2, the proportion of students who engaged in exam-skipping behavior was significantly smaller in the experimental group than in the control group, with a difference of 9.46 percentage points, a 43.07% decrease relative to the control group. For struggling students, instructional communications with tailored content significantly increased student test performance. For all students, instructional communications significantly decreased student exam-skipping behavior. The results of this study provide evidence of the efficacy of using personalization-based tailored instructional communications.

Keywords

Because course exams play such a key role in assessing the learning of college students, it is important to identify effective strategies that college instructors may use to improve the test performance of college students, especially struggling students, defined here as students whose test performance is lower than 70%. College instructors use exams to provide students with the opportunity to demonstrate that they have learned course content and can solve new problems related to course content. Outside of in-class instruction (including lectures, discussion, and in-class assignments), what if there were a way for college instructors to elicit deeper learning in students? Would the use of a strategy that elicits deeper learning in individuals help students, and struggling students in particular, perform better on course exams?

General Feedback Effects

Feedback has been noted for its power to significantly impact learning, and the effects of individual feedback on learning and performance are well known (DeShon, Kozlowski, Schmidt, Milner, & Wiechmann, 2004). Feedback is defined as information provided by a person (e.g., teacher, peer, self), object (e.g., book), or experience about an individual’s understanding or performance (Hattie & Timperley, 2007). Performance feedback is one of the best-known effective interventions for improving learning (DeShon et al., 2004). Previous meta-analyses on feedback in classrooms that included 196 studies produced an average feedback effect size of .79, with feedback emerging as one of the highest influences on student achievement (Hattie & Timperley, 2007). One of the primary purposes of feedback is to decrease the difference between a student’s current understanding and the student’s future performance (Hattie & Timperley, 2007).

A general model of self-regulation suggests that a key variable impacting individual effort is individual performance feedback (DeShon et al., 2004). The multi-level model of feedback effects suggests feedback on individual performance leads to individual goals as well as behavioral choices, with the behavioral choices impacting individual efforts that subsequently impact future individual performance (DeShon et al., 2004). It is theorized that, in individuals, feedback affects individual goals as well as behavioral choice, leading to individually focused efforts (DeShon et al., 2004). In a previous study (Muis, Ranellucci, & Franco, 2013) on performance feedback and its impact on student achievement in an undergraduate chemistry course, researchers found that students in the performance-approach feedback group had significantly higher achievement than students in other groups, with a six-point difference between the earned final grade of students in the performance-approach feedback group and control group.

For feedback to be effective, it must build on effective classroom instruction, because it is of little to no use when there has been no initial learning (Hattie & Timperley, 2007). Feedback should come second, building on effective instruction that is already in place. Feedback takes on an instructional purpose (Hattie & Timperley, 2007), and instructional feedback should include information about the process of learning that will fill the gap between what a student understands and what the student is meant to understand (Sadler, as cited in Hattie & Timperley, 2007). Because of its demonstrated power to impact learning, feedback as a theoretical construct was used as the theoretical basis for this study. Instructional communications, that is, feedback with an instructional purpose, were designed for use in this study.

Design Elements of Instructional-Communications Feedback

Personalization principle

The personalization principle, one of two principles in Mayer’s cognitive theory of multimedia learning (Mayer, 2008), describes how deeper learning can be elicited in individuals using conversational instructional messages. Deeper learning refers to students’ ability to use learned information to solve new problems (Moreno, Mayer, Spies, & Lester, 2001). According to the personalization principle, deeper learning happens when instructional messages are written in conversational style, rather than formal style (Moreno & Mayer, 2004). It is theorized that feelings of presence, which include social and/or physical presence, serve as mediator variables (Moreno & Mayer, 2004). Social presence is a “sense of being with and interacting with another social being” (Moreno & Mayer, 2004, p. 171). Physical presence is a sense of being present at and interacting in a particular place (Moreno & Mayer, 2004).

According to the personalization principle, when instructional messages have been designed to promote feelings of social presence or physical presence, or both, the personalization-instructional method is being used (Moreno & Mayer, 2004). The deeper learning that occurs as a result of applying the personalization-instructional method is known as the personalization effect. A meta-analysis of conversational style language revealed that researchers have created conversational style language to apply the personalization principle by implementing one or more of four approaches: a) using the first and second person, rather than the third person, b) using language that directly addresses the learner, c) including polite language, and d) using language that emphasizes the author’s views and personality (Ginns, Martin, & Marsh, 2013).

Multiple approaches to integrating individual feedback and multimedia with learning, including the personalization principle, have been discussed in the literature (Kartal, 2010; Kurt, 2011; Lim, O’Connor, & Remus, 2003; Lou, Abrami, & d’Apollonia, 2001; Mayer, 2008; Sklar & Zwick, 2009) and many of the essential concepts and findings, including personalization principle and learning, have been discussed in previous research (Kartal, 2010). Although multiple theoretical frameworks exist, Mayer’s cognitive theory of multimedia learning (Mayer, 2008) was selected as the guiding framework for the design elements of the instructional interventions, because of its focus on deeper learning and its standing as the “most comprehensive theory about learning with instructional multimedia” (Wouters, Paas, & Van Merrienboer, as cited in Kartal, 2010, p. 615).

PB-T communications, PB communications, and tailored message content

PB-T (personalization-based and tailored) instructional communications are designed to elicit deeper learning via use of the personalization-instructional method and tailoring principles (Gibbs, 2013). Similarly, PB (personalization-based) instructional communications are designed to elicit deeper learning by way of the personalization-instructional method, but do not use tailoring principles. PB-T instructional communications combine tailored message principles with the conversational style language prescribed for the personalization-instructional method. In earlier work, Thomas (publishing as Gibbs, 2013) conceived the concept and coined the term PB-T instructional communications.

The goal of using PB-T instructional communications is to elicit deeper learning in the communication recipient as a result of the personalization effect. Tailored messages are customized for a target individual in order to capture that individual’s attention, provide useful information that the individual is believed to need, and positively impact the individual’s cognitive-behavioral responses (Stellefson, Hanik, Chaney, & Chaney, 2008). In a typical tailored-communication design, researcher-defined relevant participant data (Gibbs, 2013), along with participants’ scores on some dependent variable of interest (e.g., attitude, behavior, learning), are captured in a baseline interview with participants at time 1. A tailored communication is sent to participants at time 2, and participants’ post-test scores on the dependent variable of interest are measured at time 3.

Positive messages and statements

Positive language focuses on what is possible, and positive messages emphasize positive actions, as well as positive consequences (Simoneaux & Stroud, 2014). Positive messages are messages that contain affirming, encouraging, and supportive message content. Sending positive instructor-written messages to students has been recommended as a best practice in distance learning courses (Sull, 2011, 2014), and we believed that it was possible that sending positive messages would be helpful to commuter students attending a non-residential community college. We believed that it would be easier for students to process and elaborate on positive messages, because researchers have concluded that positive messages are easier and require less cognitive effort to process and elaborate upon than negative messages (Lim, O’Connor, & Remus, 2005).

Research Questions and Hypotheses

In previous research (Isbell & Cote, 2009) that grouped college students into struggling-student and succeeding-student groups, researchers sent a friendly personalized email to struggling students following the first exam and prior to the second exam. Isbell and Cote found that sending a single personalized email message to students resulted in better performance on the second and fourth course exams. To personalize the email, they told students that their exam grade was below the course average, explained when and how students could access teaching assistants, reminded students about available online course materials and upcoming review sessions, and expressed concern for the student. The researchers noted that additional research using a more labor-intensive approach with recurring messages was needed. This research study was designed using such an approach: recurring messages in the form of instructional communications.

Research questions were developed in three areas: struggling-student exam performance, succeeding-student exam performance, and student exam-skipping behavior.

Struggling Students

Succeeding Students

Exam Skippers

Method

Participants

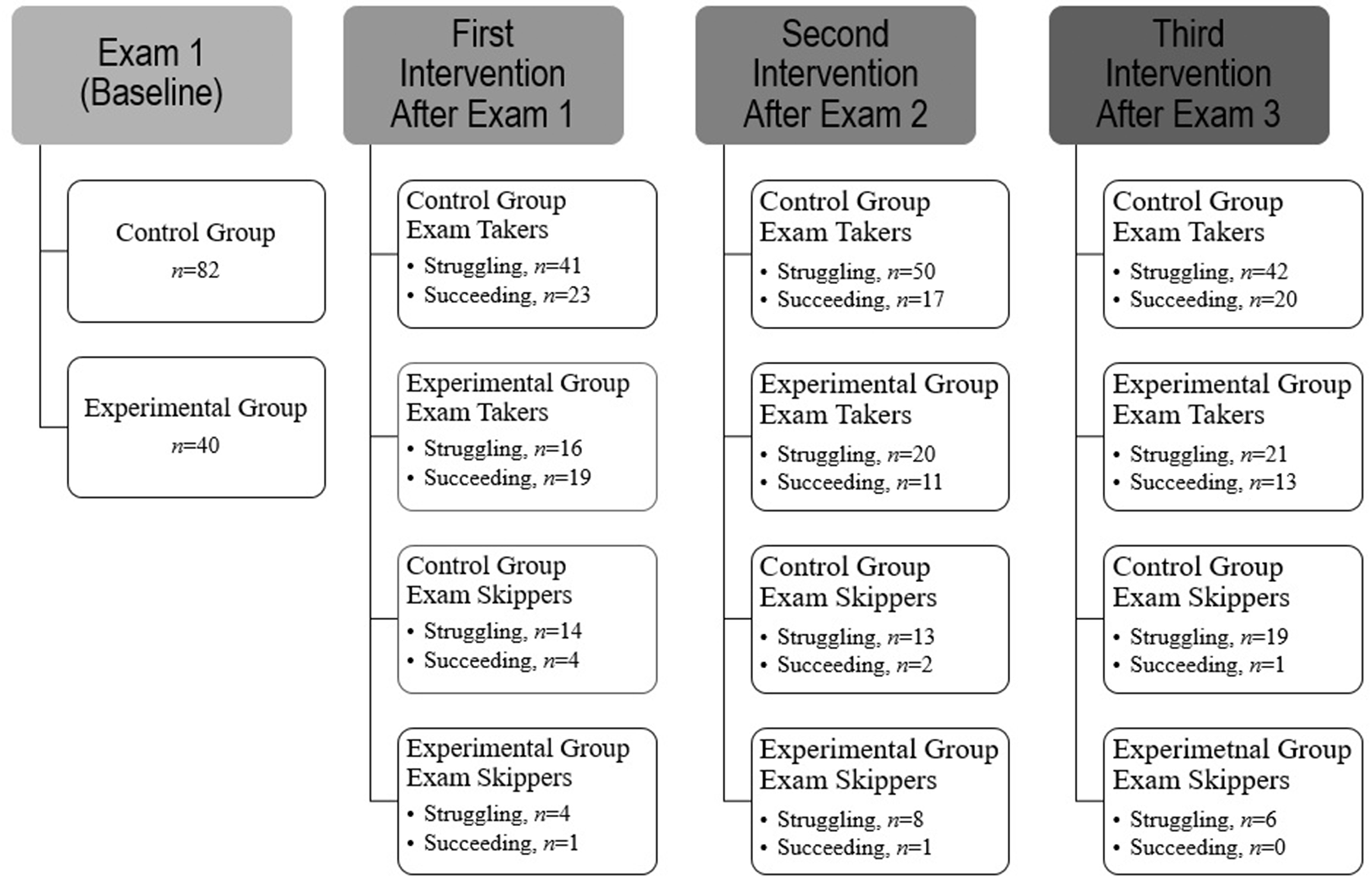

The sample comprised first-year and second-year college students (N = 122) in four Introduction to Psychology courses at a non-residential community college on the east coast of the United States. The minimum score (70%) of the average range (70%–79%) in the college’s grading system (A: superior, 90%–100%; B: good, 80%–89%; C: average, 70%–79%; D: pass without recommendation, 60%–69%; F: failure, 0%–59%) was used as the cutoff: students who scored below 70% were classified as struggling students, and students who scored 70% or higher were classified as succeeding students. The Introduction to Psychology courses were taught by the first author of this study.
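The classification rule described above can be sketched in a few lines of Python. This is only an illustration: the function and constant names are hypothetical, and treating each band's lower bound as inclusive for fractional scores is an assumption rather than something the grading policy states.

```python
# Grade bands as listed in the text: (lower bound in percent, letter).
# Treating the lower bound as inclusive is an assumption.
GRADE_BANDS = [(90, "A"), (80, "B"), (70, "C"), (60, "D"), (0, "F")]

# Minimum score of the "average" (C) range, used as the cutoff in this study.
STRUGGLING_CUTOFF = 70


def letter_grade(score: float) -> str:
    """Return the letter grade for a percentage score between 0 and 100."""
    if not 0 <= score <= 100:
        raise ValueError("score must be between 0 and 100")
    for lower_bound, letter in GRADE_BANDS:
        if score >= lower_bound:
            return letter
    return "F"  # unreachable for valid scores; kept as a safe default


def classify_student(score: float) -> str:
    """Label a student as struggling (< 70) or succeeding (>= 70)."""
    return "succeeding" if score >= STRUGGLING_CUTOFF else "struggling"
```

Under this sketch, a student who scores 69 on one exam and 70 on the next would move from the struggling group to the succeeding group, which is consistent with classification being based on the immediately prior exam.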

Initially, there were three groups: a postal-mail instructional-communications group, an email instructional-communications group, and a control group. Although one third of the participants were randomly assigned to the email group, it was determined after data collection for the study was completed that email message-blocking software had intercepted all instructional communications sent to this group. Participants planned for inclusion in the email group were therefore treated as part of the control group (n = 82).

Research Design

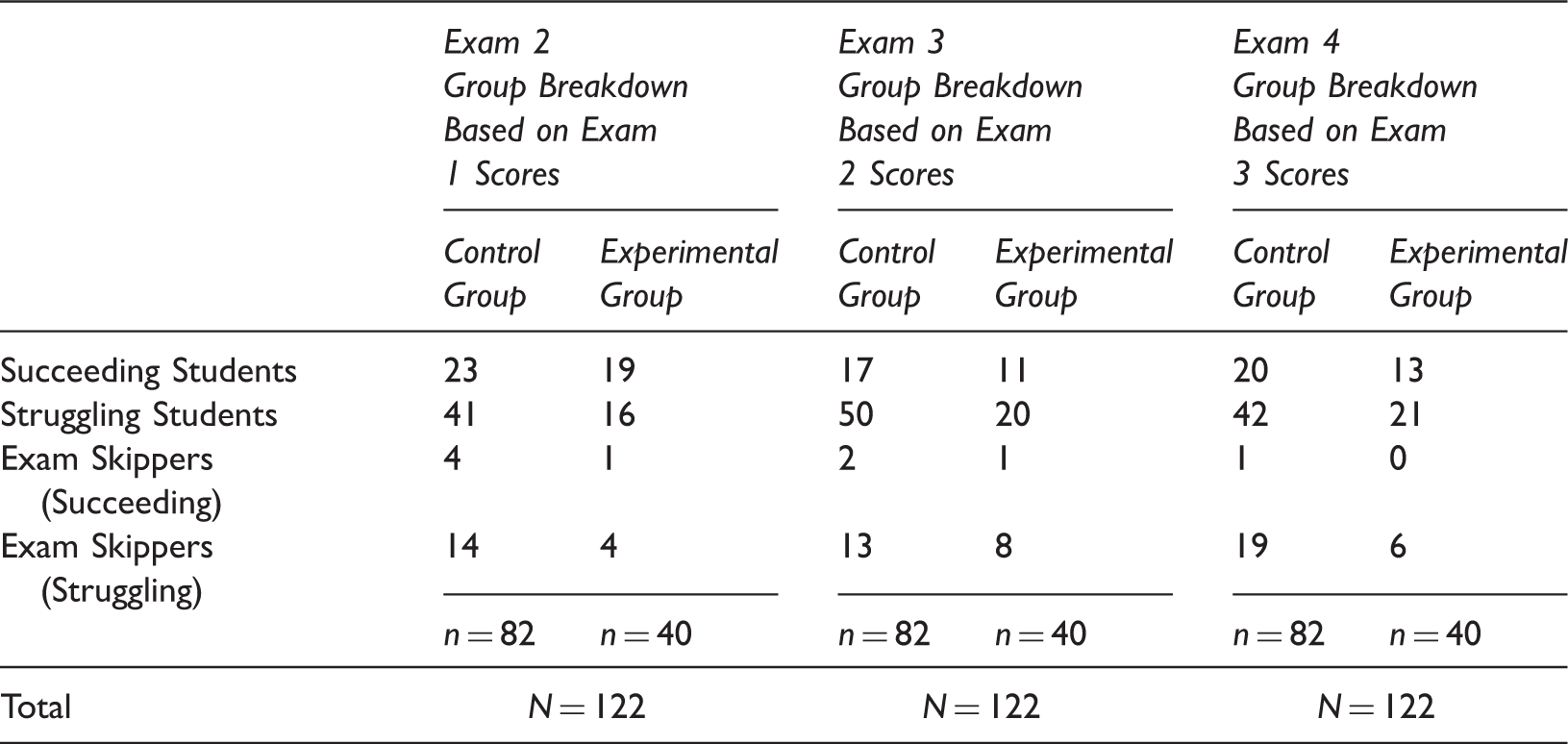

An experimenter-blind between-groups experimental procedure was used. As a result of random assignment of participants at the start of the study, each student’s membership in the experimental group (the instructional-communications group) or the control group was constant across all three instructional interventions (see Figure 1); the assignment is explained in detail in the Procedure section. Three instructional interventions were developed: one for exam 2 following exam 1, one for exam 3 following exam 2, and one for exam 4 following exam 3.

Participant Group Breakdown at Baseline and by Instructional Intervention.

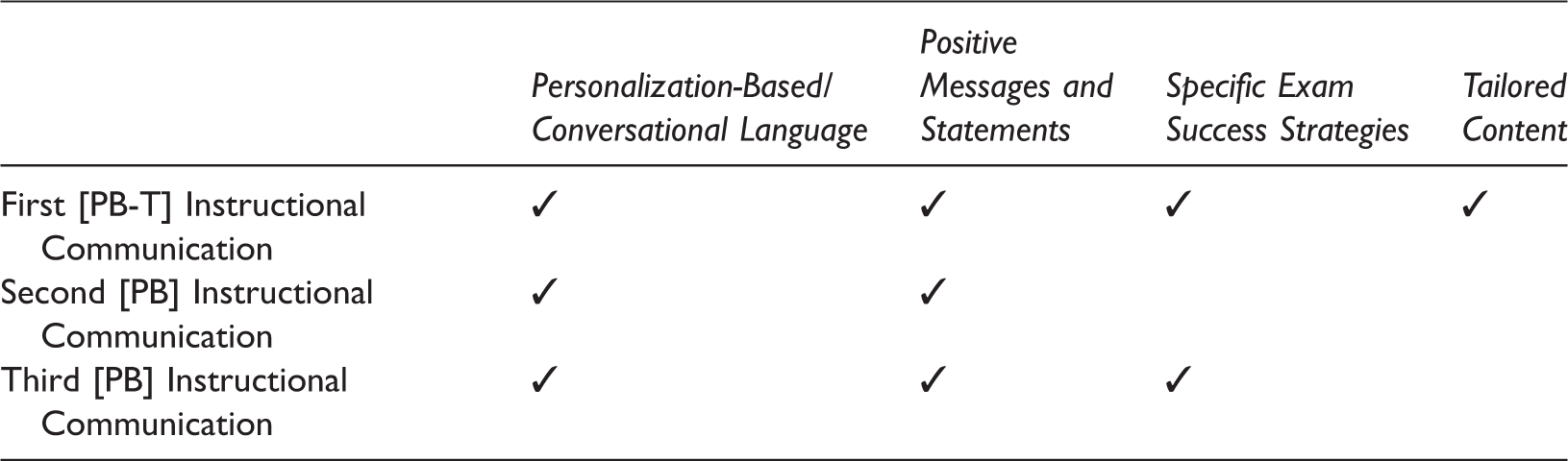

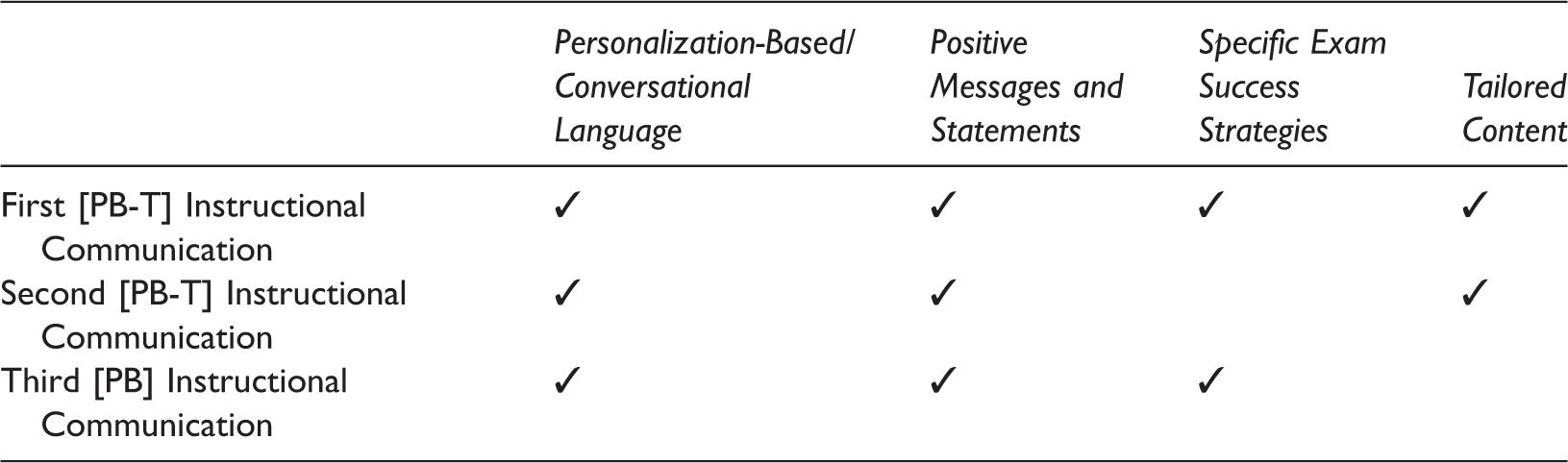

Attributes of Instructional Communications Sent to Struggling Students

Materials

Brief Instructor-written Communication

We anticipated that students in the experimental group might mention to the instructor that they had received a letter. To safeguard the experimenter-blind nature of the study, we therefore determined that a brief instructor-written letter would be sent to all students in the study. The brief instructor-written communication was prepared for use after exam 1, after exam 2, and after exam 3. This communication was neither personalized nor tailored. The four-sentence, instructor-written communication included (a) college letterhead, (b) a general greeting, (c) reference to an enclosed one-page college activity flyer, and (d) the signature of the instructor.

Instructional Communication

The first author developed three instructional communications, one for each instructional intervention: one for use prior to exam 2, another for use prior to exam 3, and a final communication for use prior to exam 4. All instructional communications were one page in length (see Appendix A). The narrative approach to each one-page communication included a) using first and second person, rather than third person, b) using sentences that directly addressed the student, and c) making the instructor’s views and personality visible. This narrative approach reflects attributes of instructional text language that Ginns, Martin, and Marsh (2013), in a meta-analysis of studies that used personalization-based conversational language, noted as having the potential to improve student learning.

Attributes of Instructional Communications Sent to Succeeding Students

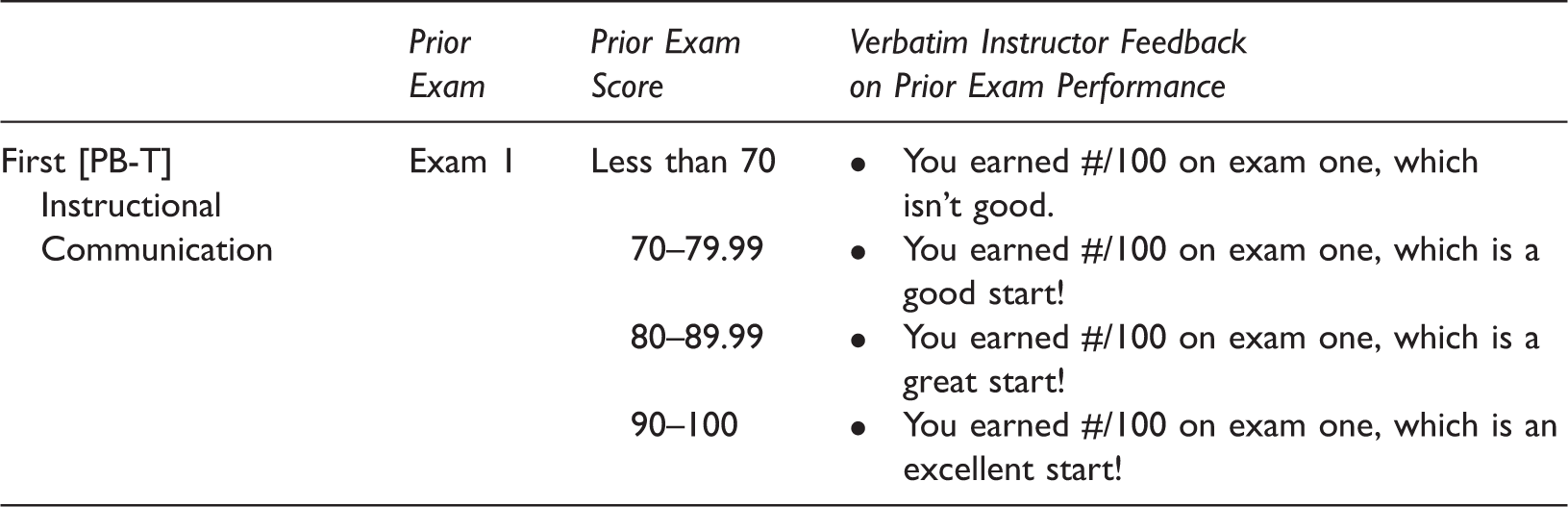

Tailored Content in First Instructional Communication

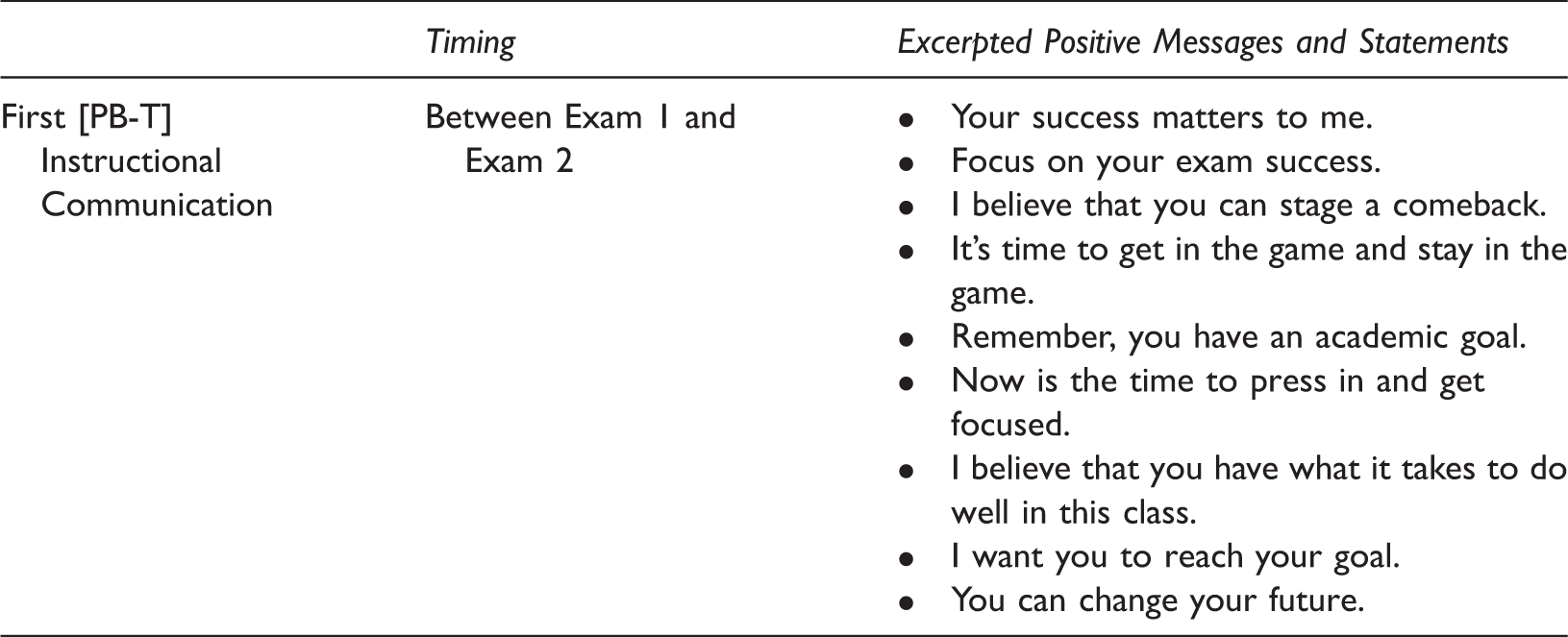

Positive Messages in First Instructional Communication Sent to Struggling Students

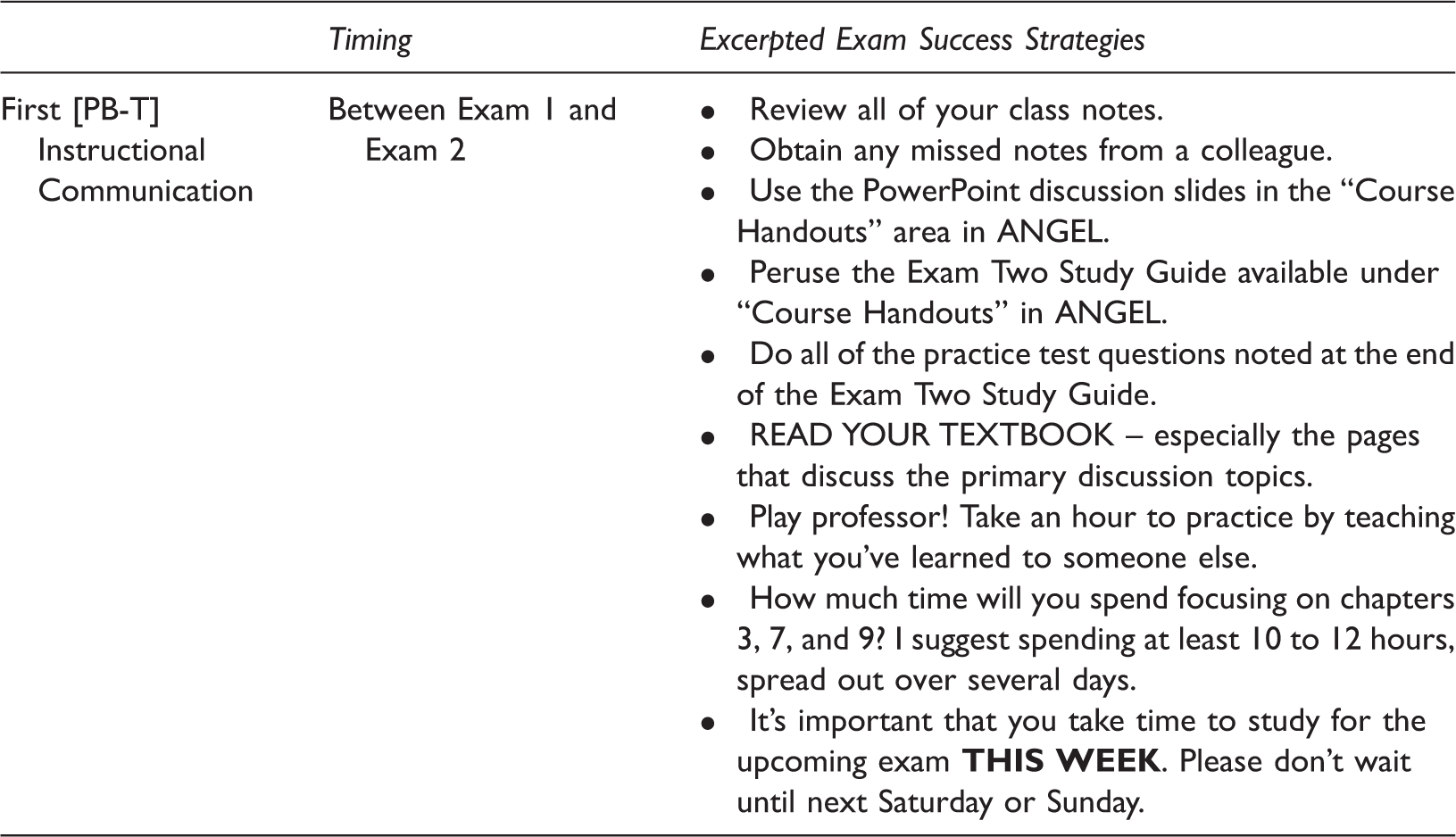

Specific Exam Success Strategies in First Instructional Communication

Specific exam success strategies were not used in the second and third instructional communications (see Tables 1 and 2) because of our decision to limit each communication to a single 8.5 in. × 11 in. (22 cm × 28 cm) page. The personalization-based conversational language, in combination with the positive messages and statements content, filled a full page in the second instructional communication. We elected to omit specific exam success strategies, since they had been discussed orally in class prior to exam 1 and prior to exam 2 and were included in the first instructional communication.

For the tailored content included in the first instructional communication sent to struggling students and succeeding students, as well as the second instructional communication sent to succeeding students, the instructor-defined criteria used to tailor the communication to each student were the student’s previous test score and instructor feedback on the immediately prior exam performance (see Table 3). Tailored content highlighting struggling students’ test scores and including evaluative language on those scores was also omitted from the second and third instructional communications (see Tables 1 and 2). The instructor was concerned about how students who remained in the struggling-student group across all exams would feel about receiving a letter with an exam score after every exam.

Exam Content and Format

Each of the four exams described in this study used the multiple-choice format and comprised 50 questions. Discrete, non-overlapping content was used for each exam. None of the exams was cumulative (e.g., midterm, final).

Procedure

An experimenter-blind procedure was used. At the beginning of the second week of the semester, the instructor privately compiled a list of all students (N = 122) and randomly assigned students to groups 1, 2, and 3. Next, the second author of this study, privately and independently of the first author, randomly assigned a condition to each of the numbers 1, 2, and 3 to determine which numbered group would be the control group, the email instructional-communications group, and the postal-mail instructional-communications group. As a result of this random assignment, group 1 was the control group, group 2 was the postal-mail instructional-communications group, and group 3 was the email instructional-communications group. Participants in group 2 formed the experimental group. As explained in the Participants section of this paper, group 1 and group 3 participants together became the control group.
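The two-stage assignment described above can be sketched as follows. This is a minimal illustration under stated assumptions, not the procedure actually used: the function names, the seeds, and the use of a shuffle-and-deal scheme that yields near-equal groups are all hypothetical, since the paper does not describe the randomization mechanism.

```python
import random


def assign_students(student_ids, rng):
    """Stage 1 (instructor): shuffle students and deal them into groups 1-3."""
    shuffled = list(student_ids)
    rng.shuffle(shuffled)
    groups = {1: [], 2: [], 3: []}
    for position, student in enumerate(shuffled):
        groups[position % 3 + 1].append(student)
    return groups


def assign_conditions(rng):
    """Stage 2 (second author): randomly map group numbers to conditions."""
    conditions = ["control", "email", "postal_mail"]
    rng.shuffle(conditions)
    return dict(zip((1, 2, 3), conditions))


# Separate generators stand in for two people working independently;
# the seeds are arbitrary and only make the sketch reproducible.
groups = assign_students(range(1, 123), random.Random(2024))
condition_of_group = assign_conditions(random.Random(7))
```

Because the mapping from group number to condition is held only by the second author, the person who prepares and teaches sees group numbers but not conditions, which is the point of the experimenter-blind design.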

Brief Instructor-written Communication

All students, whether assigned to the control group or the instructional-communications group, were mailed the brief instructor-written communication along with a flyer advertising a college activity (e.g., college-sponsored weekend bike race flyer) in a single envelope. The brief instructor-written communications were sent in weeks 5, 9, and 13 of a traditional 15-week semester, in each case 10 days after the prior exam and 18 days before the next exam.

PB and PB-T Instructional Communications

The PB and PB-T instructional communications were prepared by the instructor for each group, sealed in envelopes, and placed in three bundles, each secured by a large rubber band. A note placed on top of each bundle showed the number associated with the group. The instructor gave the numbered bundles to the second author of this study. Instructional communications were prepared for the control and experimental groups by the instructor, although only the instructional communications for the experimental group were needed and would be mailed.

The second author returned only the appropriate communications, based on each student’s membership in group 1, group 2, or group 3, to the instructor in a large sealed envelope, which the instructor took to the college post office for postal-mail processing. The superfluous communications, which had been prepared to protect the experimenter-blind nature of the study, were retained by the second author. The instructional communications were mailed in weeks 5, 9, and 13 of a traditional 15-week semester, in each case 10 days after the prior exam and 18 days before the next exam.

Results

Number of Struggling and Succeeding Students Post Baseline (Exam 1)

Struggling Students

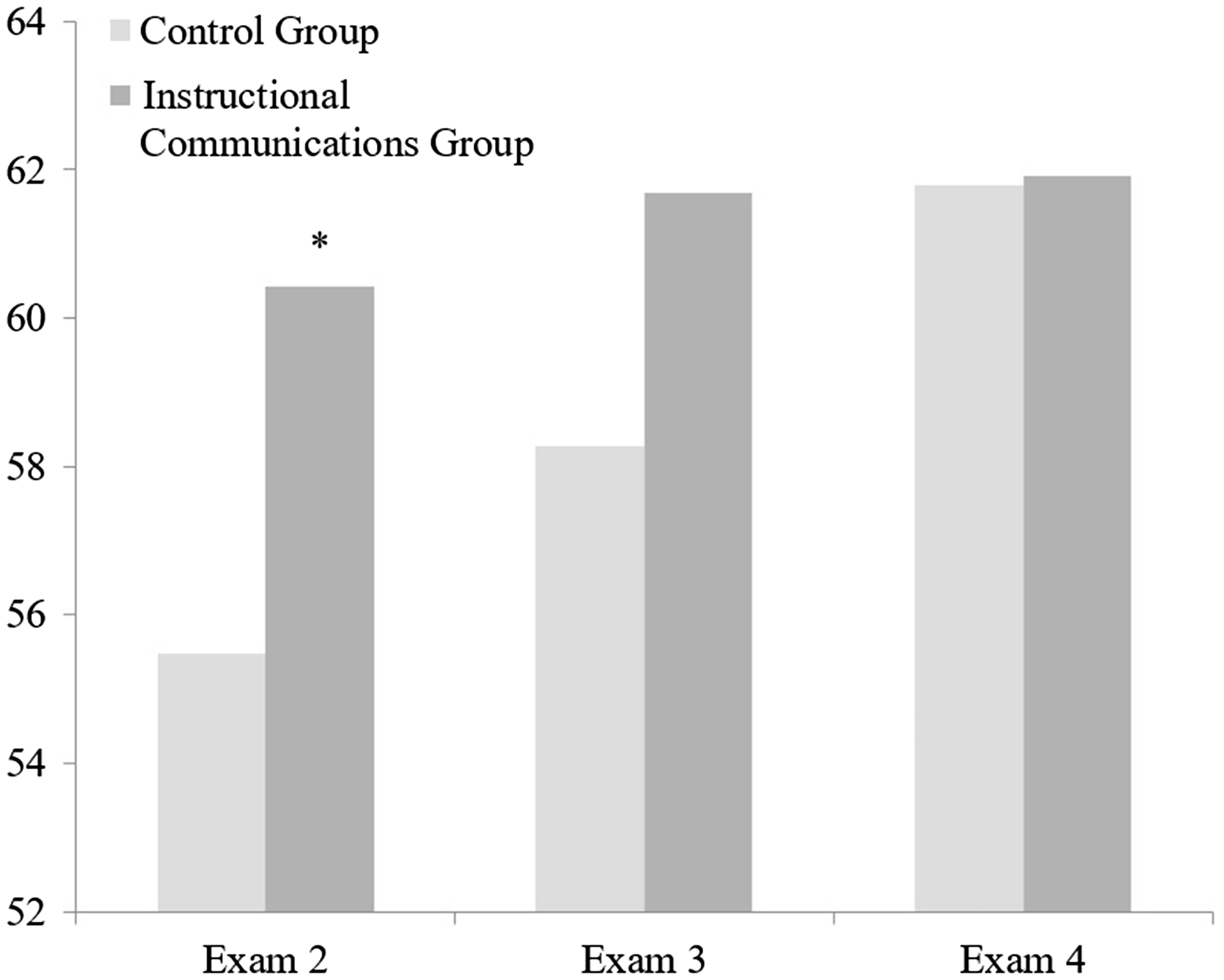

For each exam, excluding exam skippers, there were more struggling students (exam 1: n = 66, 58.41%; exam 2: n = 57, 57.58%; exam 3: n = 70, 71.43%; exam 4: n = 63, 65.63%) than succeeding students (exam 1: n = 47, 41.59%; exam 2: n = 42, 42.42%; exam 3: n = 28, 28.57%; exam 4: n = 33, 34.37%). The hypothesis that struggling students who received instructional communications following an exam would have higher exam scores than struggling students in the control group on the subsequent exam was partially supported (see Figure 2). Hypothesis 1A was confirmed. Students classified as struggling (exam score < 70) based on their exam 1 scores who received instructional communications (PB-T) after exam 1 and prior to exam 2 had significantly higher exam 2 scores (M = 60.42, SD = 8.05, n = 16) than their struggling-student counterparts in the control group (M = 55.49, SD = 9.05, n = 41), t(55) = −1.902, p = .031, d = .575. The mean difference in exam scores between the two groups was 4.93%, or half a letter grade.
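As a rough check on the exam 2 comparison for struggling students, the t statistic and effect size can be approximated from the reported summary statistics alone. The sketch below assumes a pooled-variance independent-samples t-test and assumes that Cohen’s d was computed with the root mean square of the two standard deviations; the paper does not state either choice, and the function names are illustrative.

```python
import math


def pooled_t(mean_a, sd_a, n_a, mean_b, sd_b, n_b):
    """Pooled-variance t statistic and degrees of freedom from summary stats."""
    df = n_a + n_b - 2
    pooled_var = ((n_a - 1) * sd_a**2 + (n_b - 1) * sd_b**2) / df
    std_error = math.sqrt(pooled_var * (1 / n_a + 1 / n_b))
    return (mean_a - mean_b) / std_error, df


def cohens_d_rms(mean_a, sd_a, mean_b, sd_b):
    """Cohen's d using the root mean square of the two SDs (an assumption)."""
    return abs(mean_a - mean_b) / math.sqrt((sd_a**2 + sd_b**2) / 2)


# Exam 2, struggling students: control group first, then PB-T group.
t_value, df = pooled_t(55.49, 9.05, 41, 60.42, 8.05, 16)
d_value = cohens_d_rms(55.49, 9.05, 60.42, 8.05)
print(f"t({df}) = {t_value:.2f}, d = {d_value:.2f}")
```

With the rounded means and standard deviations given above, this yields t(55) of about −1.90 and d of about 0.58, close to the reported values; small differences are expected because the inputs are rounded.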

Mean Post-Intervention Exam Scores of Struggling Students by Group.

The results did not support hypotheses 1B or 1C. The instructional-communications (PB) group’s mean exam 3 score (M = 61.68, SD = 11.24, n = 20) and mean exam 4 score (M = 61.91, SD = 15.09, n = 21) were higher than the control group’s mean exam 3 score (M = 58.27, SD = 14.19, n = 50) and mean exam 4 score (M = 61.79, SD = 12.57, n = 42). However, the difference between the instructional-communications (PB) group’s and the control group’s mean scores was not statistically significant for exam 3 (t(68) = −.957, p = .171, d = .266) or exam 4 (t(61) = −.032, p = .487, d = .008).

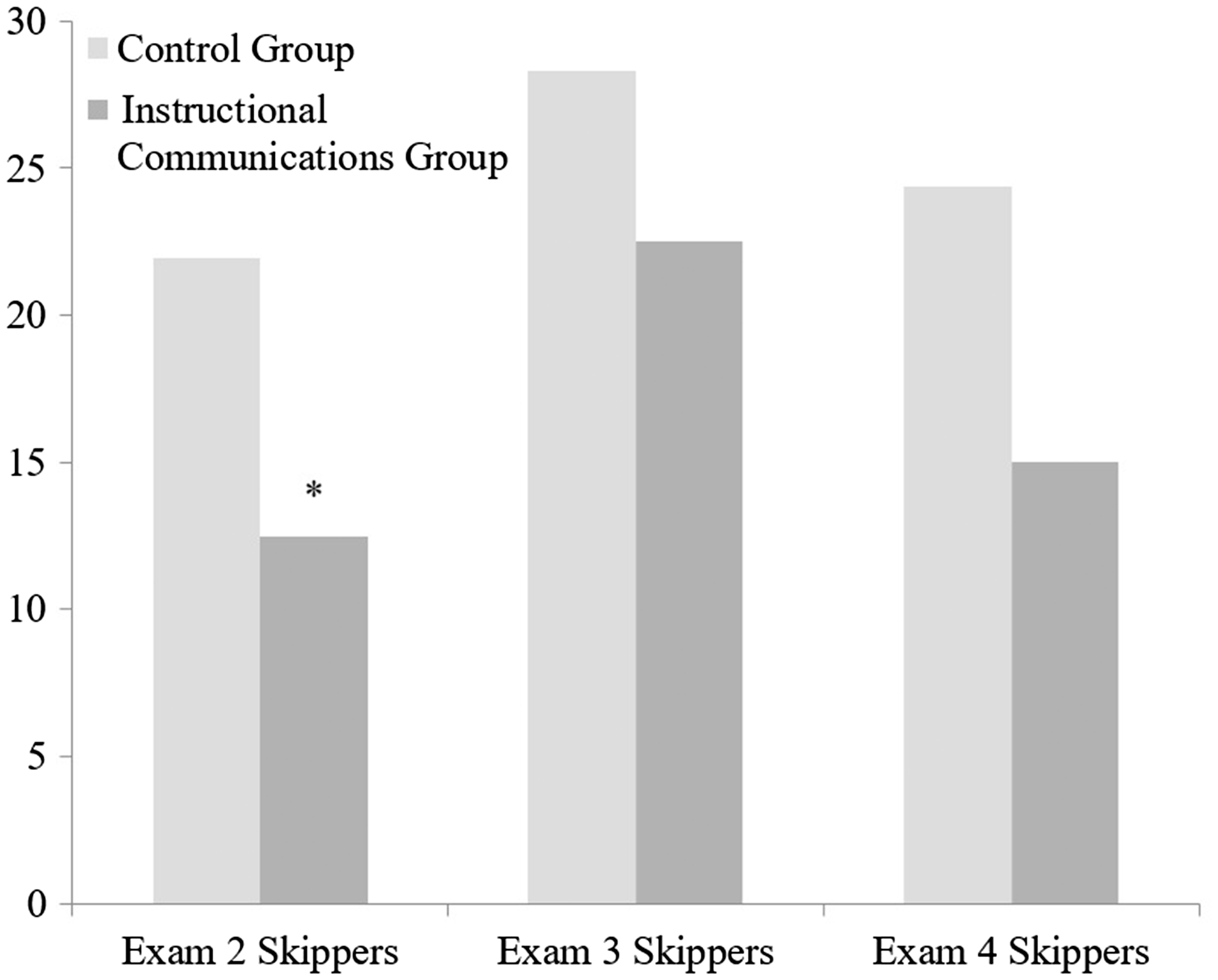

Exam Skippers

The hypothesis that students sent instructional communications would be significantly less likely to skip exams than students in the control group was partially supported (see Figure 3). Hypothesis 3A was confirmed. For exam 2, the results of a one-tailed z-test of independent proportions revealed a statistically significant (z = 1.71, p = .043) difference in the proportion of exam skippers between the two groups: 12.5% of students (n = 5/40) in the experimental group (struggling students [PB-T], n = 4, succeeding students [PB-T], n = 1) skipped exam 2, compared with 21.95% (n = 18/82) of students in the control group (struggling students [PB-T], n = 14, succeeding students [PB-T], n = 4).

Proportion of Exam Skippers by Group. *Significant at the p < .05 level.

The results did not support hypotheses 3B or 3C. For exam 3, the results of a one-tailed z-test of independent proportions indicated no statistically significant (z = −0.549, p = .291) difference in the proportion of exam skippers for students in the instructional-communications group (n = 9/40, 22.5%, struggling students [PB], n = 8, succeeding students [PB-T], n = 1) and students in the control group (n = 15/82, 18.29%, struggling students [PB], n = 13, succeeding students [PB-T], n = 2). Similarly, no statistically significant difference (z = 1.189, p = .117) in the proportion of exam skippers for students in the instructional-communications group (n = 6/40, 15%, struggling students [PB], n = 6, succeeding students [PB], n = 0) and control group (n = 20/82, 24.39%, struggling students [PB], n = 19, succeeding students [PB], n = 1) was found for exam 4.
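The exam 3 and exam 4 comparisons can be approximated from the reported counts with a pooled two-proportion z-test. The sketch below assumes the pooled standard error and reports the one-tailed area beyond |z|; both are assumptions about how the test was run, and the function names are illustrative.

```python
import math


def two_proportion_z(skipped_a, n_a, skipped_b, n_b):
    """Pooled z statistic for the difference between two independent proportions."""
    p_a, p_b = skipped_a / n_a, skipped_b / n_b
    pooled = (skipped_a + skipped_b) / (n_a + n_b)
    std_error = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    return (p_a - p_b) / std_error


def one_tailed_p(z):
    """Area of the standard normal distribution beyond |z| in one tail."""
    return 0.5 * math.erfc(abs(z) / math.sqrt(2))


# Control group first, then instructional-communications group.
z_exam3 = two_proportion_z(15, 82, 9, 40)
z_exam4 = two_proportion_z(20, 82, 6, 40)
p_exam3 = one_tailed_p(z_exam3)
p_exam4 = one_tailed_p(z_exam4)
```

With these counts the sketch gives z of about −0.55 for exam 3 and about 1.19 for exam 4, with one-tailed areas near .29 and .12, in line with the values reported for those two exams.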

Succeeding Students

The results did not support hypotheses 2A, 2B, or 2C. Succeeding students sent instructional communications did not have significantly higher exam scores than their succeeding-student counterparts in the control group. The results of independent-samples t-tests indicated that there was no significant difference in the test scores of succeeding (exam score ≥ 70) students who were in the instructional-communications group and their counterparts in the control group for exam 2 (t(40) = .721, p = .241, d = .217), exam 3 (t(26) = −.592, p = .279, d = .233), or exam 4 (t(31) = −.032, p = .487, d = .477). For students classified as succeeding students based on their exam 1 scores, the exam 2 results were M = 70.27, SD = 10.02, n = 19 for the instructional-communications (PB-T) group and M = 72.18, SD = 7.38, n = 23 for the control group. For students classified as succeeding students based on their exam 2 scores, the exam 3 results were M = 75.15, SD = 8.08, n = 11 for the instructional-communications (PB-T) group and M = 73.04, SD = 9.88, n = 17 for the control group. For students classified as succeeding students based on their exam 3 scores, the exam 4 results were M = 80.89, SD = 7.93, n = 13 for the instructional-communications (PB) group and M = 76.01, SD = 12.09, n = 20 for the control group.

Discussion

The results of this study indicated that struggling students (exam score < 70) did benefit from receiving an instructor-written communication, but only when the instructor-written communication was a PB-T instructional communication. When a PB-T instructional communication was mailed 10 days after the first course exam and 18 days prior to the second course exam, struggling students’ exam performance on the next exam increased significantly (t(55) = −1.902, p = .031, d = .575), by a half-letter grade (4.93%). Exam-skipping behavior for all students in the experimental group also decreased significantly (z = 1.71, p = .043) for the next exam, by 43.07% relative to the control group (21.95% vs. 12.5%).

Explanation of Results

Some of the results in this study could be explained by general feedback effects. It may be the case that tailored feedback is more effective than non-tailored feedback, as non-tailored feedback is too general and cannot be used by students to adapt their learning behavior. This may explain why an effect was found between exam 1 and exam 2 for struggling students, but not between subsequent exam administrations. Also, it may be that exam success strategies were effective, but mainly for students who did not have sufficient exam success strategies.

If exam success strategies were effective mainly for struggling students who did not have sufficient exam success strategies, this would explain why succeeding students did not benefit from instructional-communications feedback as struggling students did. Perhaps struggling students used the PB-T instructional-communications feedback that included tailored content to revise their learning activities. The more time that passed after the tailored feedback, the smaller the difference between the experimental and control groups became: a smaller difference emerged at exam 3 relative to exam 2, and no difference at exam 4 relative to exam 3. Succeeding students may have been succeeding because they already had a good understanding of exam success strategies, and therefore feedback did not help them.

In addition, a significant impact on struggling-student exam performance and struggling-student exam-skipping behavior was only found for the first instructional intervention that also used tailored content. This finding suggests that exam success strategies content may be additive in its impact on struggling students’ exam performance and exam-skipping behavior. It also suggests that tailored content may be necessary for a significant positive impact on struggling students’ exam performance and exam-skipping behavior.

A second reason that may explain the lack of efficacy of the second and third instructional communications is that, as suspected with exam success strategies content, personalization and positive self-statements may be additive in their influence. The results of this study suggest that personalization and positive self-statements, as well as exam success strategies content, are at best additive in their impact on struggling students’ exam performance and exam-skipping behavior, while tailored content is necessary for a significant positive impact on struggling students’ exam performance and exam-skipping behavior. In this study, positive messages and statements were used in all three instructional interventions, but their inclusion in each instructional intervention was not sufficient to produce a significant impact on exam performance or exam-skipping behavior for struggling students or succeeding students. Other researchers (Wang & Crooks, 2015) have concluded that their findings suggest that the personalization principle is additive in its influence on student learning.

Another plausible explanation for the lack of efficacy of the second and third instructional communications on test performance is habituation. Other researchers (Chang, Yung-Hsiu, Lin, Chang, & Chong, 2014) have found a similar result, in which the effect of positive messages on college students’ engagement decreases after time 1. Students may have had a decreased response to the stimulus intervention upon repeated presentations of variations of the stimulus in the second and third interventions. Considering sequential and longitudinal differences between test scores from exam 1 and exam 4 could provide more information about why the first instructional intervention worked, but the second and third instructional interventions did not.

Surprisingly, none of the three instructional interventions had a statistically significant impact on the exam scores or exam-skipping behavior of succeeding students in the experimental group relative to the control group. The results of this study suggest that instructional communications for succeeding students need to include tailored content that is different from the tailored content for struggling students in order to elicit an increase in test performance for succeeding students. In this study, the communication content focused on what students could do to improve their performance on the next exam. Perhaps an instructional communication focusing on capitalizing on the previous test performance success of succeeding students and/or improving one’s standing relative to other succeeding students would have a more positive impact than content that focuses on how to improve the student’s test performance relative to the previous test performance.

PB-T Communications vs. PB Communications

The results of this study provide evidence of the efficacy of using PB-T instructional communications to improve student exam scores and decrease exam-skipping behavior. PB (personalization-based) instructional communications without tailored content were not effective for struggling students when used for the second and third instructional interventions. PB-T (personalization-based and tailored) instructional communications merge the deeper learning goal of the personalization effect, grounded in the personalization-instructional method, with the benefits of tailoring. We found that PB-T instructional communications, a unique type of communication that has not been previously discussed in the peer-reviewed literature, had efficacy for increasing student test performance and decreasing exam-skipping behavior in college students.

Ethical Considerations

In an article on teaching research and teaching experiments, Czarnocha (2008) notes that the ethical problem facing an instructor who conducts teaching experiments is that “…she doesn’t want the control class, whose students are used as [an] object of comparative assessment, not to receive the benefit of the teaching experiment…” (p. 8). We undertook this action research because we strongly believe in the transformative potential of action research in higher education. In the modern action research movement, which began in the 1980s, educators have been encouraged to “conduct their own classroom research and form conclusions about best practices” (Manfra, 2009, p. 37). There are many ethical issues in action research and educational research, and a prominent ethical question considered by the authors of this study was “Is it ethical to implement an instructional intervention to raise students’ test scores and decrease students’ exam-skipping behavior for one group, but not another?”

We reasoned that if the first author of the study used previously studied and implemented best-known practices to teach students, as she had in previous semesters, it would be acceptable to withhold the intervention from the control group, although those students would not benefit from the potentially impactful instructional interventions. We also reasoned that, although we believed that the instructional interventions would work, we needed to determine whether they really worked, that is, whether there was a causal relationship between the independent and dependent variables. We concluded that students who did not receive the intervention would not be harmed, since current standards for teaching practice could be utilized with those students. There was also no guarantee that the instructional interventions would benefit students in the experimental group.

At the college at which this study was conducted, the culture and leadership embraced action research, and the college approved this action research study. In addition, we concluded that the potential benefits of the study outweighed the potential downside, namely that students who experienced standard teaching practice would likely perform as well as students in the prior academic year, but perhaps not as well as students who received the experimental instructional interventions. Ideally, a teaching experiment is situated so that all students who participate in the research are its first beneficiaries (Czarnocha, 2008). In this case, the course lasted only one semester, so the groups could not be swapped in a second semester: control group students could not be placed in the experimental group, and thereby become potential beneficiaries, while experimental group students were placed in the control group.

Limitations of the Study

This study has several limitations. Although an experimenter-blind experimental research design was utilized, the sample size (N = 122), and in particular the size of the experimental group (n = 40), was relatively small, given the number of groups for each level of the independent variable. Future research studies should utilize a larger sample size to ensure that sufficient power is achieved to detect statistically significant differences for each intervention. A second limitation, which was unavoidable in the design of this study, is that there was no mechanism to ensure that students did not exchange exam success strategies between groups. A third limitation is that the constellation of instructional communication attributes was not identical across the instructional interventions. Whether each instructional intervention would be effective if used three consecutive times, or in an order or combination other than the one utilized in this study, is unknown.
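To make the sample-size recommendation concrete, the following is a rough planning sketch rather than an analysis performed in this study. It assumes a two-group comparison with equal group sizes, a one-tailed test at α = .05, a target power of .80, and an effect size equal to the d = .575 reported for exam 2; the normal approximation it uses slightly underestimates the sample size a t-test would require, and the function name is hypothetical.

```python
import math
from statistics import NormalDist


def approx_n_per_group(effect_size_d, alpha=0.05, power=0.80):
    """Approximate n per group for a one-tailed, two-sample comparison.

    Uses the normal approximation n = 2 * ((z_alpha + z_power) / d) ** 2.
    All defaults are illustrative assumptions, not values from the study design.
    """
    z_alpha = NormalDist().inv_cdf(1 - alpha)  # critical value, one-tailed
    z_power = NormalDist().inv_cdf(power)      # quantile for the target power
    n = 2 * ((z_alpha + z_power) / effect_size_d) ** 2
    return math.ceil(n)


# Effect size reported for struggling students on exam 2 (d = .575).
n_needed = approx_n_per_group(0.575)
print(f"approximate students needed per group: {n_needed}")
```

Under these assumptions, the required size applies to each subgroup being compared (for example, struggling students in each condition), not to the sample as a whole, and smaller expected effects would require considerably larger groups.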

Recommendations for Future Research

Although it was outside the scope of this study, we wondered how struggling students and succeeding students develop between exams. For example, are there completely different persons in the respective groups from one exam to the next? Alternatively, do struggling students tend to remain in the struggling students group across exams? Do succeeding students remain in the succeeding students group across exams? It was observed that the exam scores of succeeding students in the experimental group (exam 2, M = 70.27; exam 3, M = 75.15; exam 4, M = 80.19) increased by approximately 5 points twice, between the second and third exam and between the third and fourth exam. In contrast, the exam scores of struggling students in the experimental group (exam 2, M = 60.42; exam 3, M = 61.68; exam 4, M = 61.79) varied by less than 1.5 points across the same exams. A longitudinal study is needed, because these questions could not be addressed in this article. A longitudinal description of consistency in achievement could shed more light on how feedback impacts exam success. Also outside the scope of this study, but of value, would be a qualitative analysis of students’ experience of the intervention. One research question for a qualitative or mixed-methods study is: How did students experience the instructional interventions?
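The between-exam changes in the group means reported above can be checked with a few lines of arithmetic. The sketch below uses only the means stated in this section; the variable and function names are illustrative.

```python
# Mean exam scores for the experimental group, as reported in this section.
succeeding_means = {"exam 2": 70.27, "exam 3": 75.15, "exam 4": 80.19}
struggling_means = {"exam 2": 60.42, "exam 3": 61.68, "exam 4": 61.79}


def successive_changes(means):
    """Return the change in mean score between each pair of consecutive exams."""
    values = list(means.values())
    return [round(later - earlier, 2) for earlier, later in zip(values, values[1:])]


succeeding_changes = successive_changes(succeeding_means)
struggling_changes = successive_changes(struggling_means)

print("succeeding students, change per exam:", succeeding_changes)
print("struggling students, change per exam:", struggling_changes)
```

The changes for succeeding students are each close to 5 points, whereas the changes for struggling students are 1.26 points and 0.11 points.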

For future research, we also suggest that researchers conduct additional studies on the use of PB-T instructional communications as an intervention for decreasing exam-skipping behavior. For these studies, we recommend that researchers implement measures to reduce the likelihood of habituation effects in designs with multiple instructional interventions. Future research might also include studies that measure the impact of PB-T instructional communications on exam performance and exam-skipping behavior using other communication modalities, including email and text messaging. Further, while the instructional interventions in this study were used with multiple-choice exams, future research with exams in other formats is needed. Whether PB-T instructional communications are more impactful for some groups than others (e.g., non-traditional vs. traditional students; online students vs. face-to-face students) is another research question that warrants exploration.

Implications and Conclusion

While this study is contextualized in one applied setting – a college classroom – the results have implications for other settings as well. Exams are necessary in order to allow subsequent placement in other settings. For example, performance feedback has important implications for work-based settings (Dearnley, Taylor, Laxton, Rinomhota, & Nkosana-Nyawata, 2013). In a work setting, employers may need to differentiate between job applicants who would succeed and those who might struggle in a particular job.

Some researchers (Brom, Bromova, Dechterenko, Buchtova, & Pergel, 2013) have questioned whether personalized messages developed based on the personalization principle are effective. The results of this study suggest that Personalization-Based Tailored (PB-T) communications, which are designed using the personalization principle and tailored content, can be effective for increasing exam performance. Struggling students’ exam performance increased significantly (t(55) = −1.902, p = .031, d = .575) when a PB-T instructional communication was mailed to struggling students 10 days after the first course exam and 18 days prior to the second course exam.

This was the first study to utilize a series of three personalization-based instructional communications as instructional interventions aimed at increasing college students’ exam scores and decreasing college students’ exam-skipping behavior. This study extends previous research (Isbell & Cote, 2009) that utilized a single instructor-written communication after exam 1 by examining the effect of an instructional communication after exam 1, after exam 2, and after exam 3. Similar to Isbell and Cote’s study, this study focused on the impact of instructional communications on the exam scores of struggling students (exam score < 70), but this study also determined the impact of instructional communications on the exam scores of succeeding students (exam score ≥ 70) and on student exam-skipping behavior. The 43.07% decrease in exam-skipping behavior relative to the control group (21.96% in the control group vs. 12.5% in the experimental group) is an important finding that has positive implications for college instructors and, in particular, instructors of college courses in which a large proportion of students tend to skip course exams.
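For readers who want to see how the absolute and relative decreases in exam skipping relate, the following sketch recomputes both from the two rounded percentages reported above. The function name is illustrative, and because the inputs are rounded, the relative figure it produces (about 43.08%) matches the reported 43.07% only to within rounding.

```python
def skipping_decrease(control_pct, experimental_pct):
    """Return the absolute (percentage-point) and relative (%) decrease."""
    absolute = control_pct - experimental_pct
    relative = absolute / control_pct * 100
    return absolute, relative


# Exam 2 skipping rates as reported: 21.96% (control), 12.5% (experimental).
absolute, relative = skipping_decrease(21.96, 12.5)
print(f"absolute difference: {absolute:.2f} percentage points")
print(f"relative decrease: {relative:.2f}% of the control group rate")
```

The absolute difference is expressed in percentage points, while the relative decrease expresses that difference as a share of the control group rate; the two figures answer different questions and should not be compared directly.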

Footnotes

Acknowledgements

We wish to acknowledge and thank Barbara Ann Yoder for her inspiring and thoughtful comments on earlier drafts of this article. Also, we wish to thank the peer reviewers and editor who provided helpful feedback on this article.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.