Abstract

Previous research has indicated that an intervention called “exam wrappers” can improve students’ metacognition when they use wrappers in more than one course per academic term. In this study, we tested whether exam wrappers would improve students’ metacognition and academic performance when used in only one course per academic term. A total of 86 students used either exam wrappers (an exercise with metacognitive instruction), sham wrappers (an exercise with no metacognitive instruction), or neither (control). We found no differences across conditions in scores on any of the three exams, final grades, or metacognitive ability (measured with the Metacognitive Awareness Inventory, MAI). All students showed an increase in MAI over the course of the semester, regardless of condition. We discuss the challenges of improving metacognitive skills and suggest ideas for additional metacognitive interventions.

Instructors know metacognition is critical to optimal learning and, correspondingly, try different ways to foster it (Kaplan, Silver, LaVaque-Manty, & Meizlish, 2013; Major, Harris, & Zakrajsek, 2016). Metacognition, defined as “knowledge and cognition about cognitive phenomena” (Flavell, 1979, p. 906), consists of assessing task goals and requirements, evaluating status, planning a strategy, applying the strategy, and reflecting on and adjusting the strategy as needed (Ambrose, Bridges, DiPietro, Lovett, & Norman, 2010; Dunlosky & Metcalfe, 2008). After a brief review of research on metacognition, we present data regarding a specific intervention designed to enhance student monitoring and adjustment.

Assessment, evaluation, and reflection on tasks

The task assessment phase of metacognition appears to be related to experience. For example, evidence from one laboratory study indicated there were qualitative differences in how writers conceptualized their task demands (Carey, Flower, Hayes, Schriver, & Haas, 1989). More experienced writers produced complex task representations that included goals, purpose, and reader audience. On the other hand, less effective writers produced a more superficial task representation focused on form.

The most successful students are those who evaluate and adapt their own goals according to the different demands across multiple classroom contexts (Nelson, 1990). Unfortunately, several sources suggest that students commonly lack the ability to adjust their current approach to a more successful one. For example, students are likely to use preferred procedures for problem-solving, even when new, recommended procedures are more efficient (Fu & Gray, 2004). This lack of adjustment in behavior is further supported by students’ self-reported use of ineffective, even detrimental, study behaviors such as reviewing class notes, summarizing, and highlighting material in the textbook (Bartoszewski & Gurung, 2015; Dunlosky, Rawson, Marsh, Nathan, & Willingham, 2013; Gurung, Weidert, & Jeske, 2010).

Acquiring and practicing metacognition

Students who struggle to naturally engage in metacognitive behaviors can improve learning when instructors require explicit use of these skills. For example, students who were prompted to self-explain their learning had greater knowledge gains than students who received no prompt (Chi, de Leeuw, Chiu, & LaVancher, 1994). In another study, students who were given explicit self-regulated learning training outperformed students in a control condition (Azevedo & Cromley, 2004). Students also performed better on comprehension tasks when engaged in comprehension monitoring activities (Palincsar & Brown, 1984). A major summary of meta-analyses related to student achievement found that teaching metacognitive strategies had a large effect size with regard to student achievement (d = .69; Hattie, 2009). Given this evidence, we were interested in testing an explicit approach to fostering metacognitive skills in the classroom.

Fostering metacognition: Exam wrappers

There are many possible activities to choose from for addressing students’ metacognition at a pedagogical level. One such intervention, an exam wrapper, was designed by Lovett (2013) and provides students with a structured reflection opportunity about exam performance. Exam wrappers prompt students to reflect on three important components to learning: exam preparation (study skills used), types of errors made on exams, and adjustments for future learning (modifications to study habits to better prepare for the next exam). Exam wrappers do not take up much class time (making them teacher-friendly), do not require much time on the part of the student (making them student-friendly), are easy to adapt across different courses, and address several components of metacognition (assessing strengths/weaknesses, performance evaluation, strategy identification, creation of behavioral adjustments).

Unfortunately, there are few published accounts about the use of exam wrappers in the classroom setting. Lovett (2013) implemented exam wrappers across introductory biology, calculus, chemistry, and physics, demonstrating improved metacognition over the academic term. This positive change increased as a function of the number of courses students enrolled in that used the exam wrappers (one, two, or three courses). Although there seemed to be no change for the students enrolled in only one course, there was no control group. Therefore, the results indicated greater gains when students saw the exam wrapper across multiple disciplines but did not address the question of whether using the wrappers in one course is also beneficial compared to not using wrappers at all.

Two other studies support the use of exam wrappers. Achacoso (2004) also found that students increased their metacognitive skills due to the use of exam wrappers. This increase in metacognition was demonstrated by an overall increase in mean exam scores and students’ ability to monitor and adjust study strategies. Thompson (2012) implemented exam wrappers in an intermediate Spanish course and compared the results to a control group. The exam wrapper group did not show more gains in self-monitoring strategies (as measured by the Metacognitive Self-Regulation (MSR) scale of the Motivated Strategies for Learning Questionnaire (MSLQ; Pintrich, Smith, Garcia, & McKeachie, 1991)) compared to the group without exam wrappers. However, post-hoc analysis showed that the students in the control group were more proficient in Spanish at the start of the study. A post-hoc analysis of metacognitive skills specifically for first-year students (less proficient in Spanish) showed a mean MSR increase for these students only if they had completed exam wrappers (Thompson, 2012).

Overall, findings suggest exam wrappers may improve metacognitive skills and/or course performance. However, one weakness of the existing studies is the lack of statistical analyses with regard to quantitative measures of both metacognition and academic performance. Our study is the first quasi-experimental investigation of the use of exam wrappers in community college students (a typically under-researched population). We asked two main questions: Does using exam wrappers (1) change performance? (2) increase metacognition? We hypothesized that the use of exam wrappers would increase exam scores and metacognition.

Method

Participants

Students (N = 96) enrolled across five sections of Introductory Psychology courses taught by the first author at a community college (approximately 24,000 students enrolled annually) in north-central Florida participated in this study. Ten students withdrew over the course of the semester. The remaining 86 (53 women, 33 men) ranged in age from 17 to 53 years (mean age = 21.5 years). Students came from diverse backgrounds. Students were 74.4% White, 11.6% Black, 8.2% Hispanic, and 2.3% Asian/Pacific Islander (3.5% declined to state). There were 38 first-generation college students. The average cumulative GPA was 2.98 (range: 0.5–4.0, SD = 0.68).

Materials

We measured metacognition using the Metacognitive Awareness Inventory (MAI; Schraw & Dennison, 1994). The MAI uses 52 items to measure two factors of metacognition: knowledge of cognition (KC; declarative, conditional, and procedural knowledge; 17 items, α = 0.54) and regulation of cognition (RC; planning, information management strategies, debugging strategies, comprehension monitoring, and evaluation; 35 items, α = 0.63). For each item, students indicated whether the statement was “true” (1 point) or “false” (0 points) about themselves. The total MAI score reflected the number of true statements.
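The scoring rule described above can be sketched in a few lines. This is only an illustration under stated assumptions: the function name `score_mai` is hypothetical, and the item positions assigned to each factor are placeholders (the first 17 items for KC, the remaining 35 for RC), not the actual item-to-factor key published with the MAI.

```python
from typing import Sequence

# Hypothetical item-to-factor split, for illustration only. The real MAI
# assigns specific items to each factor (see Schraw & Dennison, 1994).
KC_ITEMS = range(0, 17)    # 17 knowledge-of-cognition items (assumed positions)
RC_ITEMS = range(17, 52)   # 35 regulation-of-cognition items (assumed positions)


def score_mai(responses: Sequence[bool]) -> dict:
    """Score one student's 52 true/false responses (True = 1 point)."""
    if len(responses) != 52:
        raise ValueError("the MAI has 52 items")
    kc = sum(1 for i in KC_ITEMS if responses[i])
    rc = sum(1 for i in RC_ITEMS if responses[i])
    return {"KC": kc, "RC": rc, "total": kc + rc}


# Made-up response pattern (not study data): every other item marked "true".
example = [i % 2 == 0 for i in range(52)]
print(score_mai(example))
```

Because each item is worth 0 or 1 point, the KC score can range from 0 to 17, the RC score from 0 to 35, and the total from 0 to 52.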

We adapted the exam wrapper (Appendix 1) from a previously published version used for biology, chemistry, and physics (Lovett, 2013). Importantly, the exam wrapper asked students to reflect on (1) how they prepared for the exams, (2) what kinds of errors they made on the exams, and (3) how they should prepare for the next exam. The “sham” wrapper (Appendix 2) was designed as a placebo and simply asked students to report which questions they answered incorrectly and the course topic to which the questions corresponded.

Procedure

We notified students of the intent to use classroom surveys and their personal performance data via informed consent approved by the Institutional Review Board. To prevent the students from feeling coerced to participate, the instructor left the room while a student assistant administered the consent forms and delivered them to the instructor in a sealed envelope. The instructor examined the data only after submitting final grades for the semester. Students completed the MAI as part of classroom instruction two weeks after the start of the semester (after the drop/add period—Time 1) and again on the last day of classes (Time 2).

Students took three mid-semester exams (non-cumulative) during the 16-week term. At the beginning of class following each exam (during which the instructor returned students’ graded exams), students either (1) reviewed their exams and were allowed to ask questions about them (control condition), (2) completed an exam wrapper, or (3) completed a “sham” wrapper (placebo).

Students were non-randomly assigned to an experimental group based on the course section they were enrolled in. To help prevent course day/time characteristics from influencing the experimental conditions, the sections were divided such that two sections, one morning and one afternoon on Tuesday and Thursday, received the exam wrappers, two sections, one morning and one afternoon on Tuesday and Thursday, received the “sham” wrappers, and a mid-day section on Monday and Wednesday received no wrapper.

Results

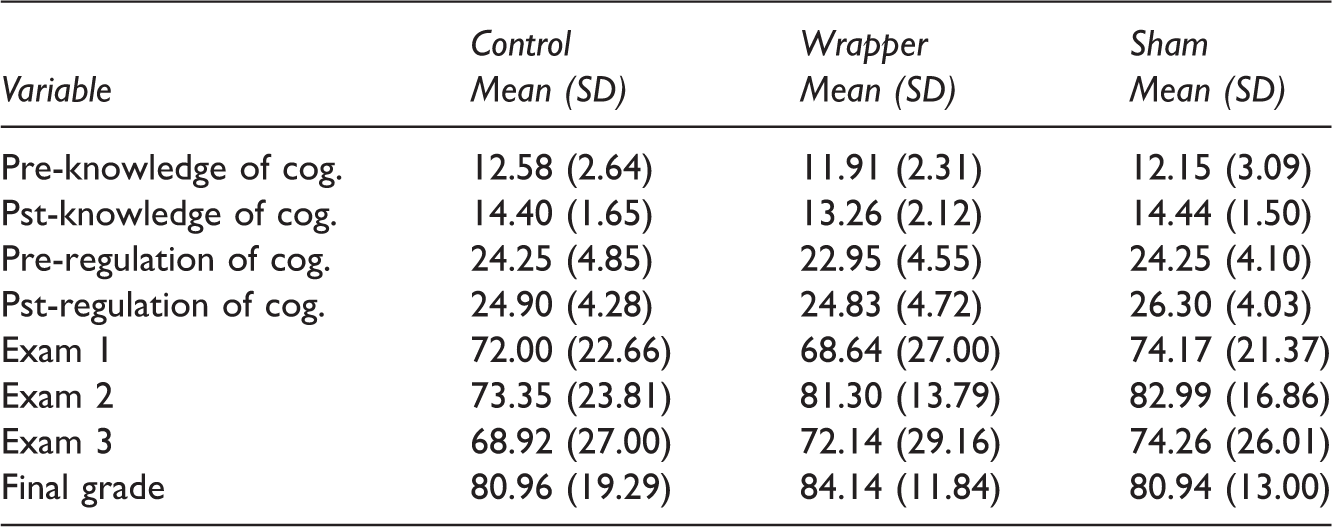

Means and standard deviations of major variables.

Does using exam wrappers aid performance?

To determine whether completing the exam wrappers affected academic performance, we analyzed students’ scores on each of three exams and their final grades in the course across three conditions. One-way ANOVAs showed no significant effect of the use of exam wrappers on Exam 1 (F(2,73) = .45, p = .64, η² = .01), Exam 2 (F(2,73) = 1.51, p = .23, η² = .04), Exam 3 (F(2,73) = .19, p = .83, η² = .01), or on final grades (F(2,73) = .47, p = .63, η² = .01).
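For readers who want to see how these statistics fit together, the following is a minimal sketch of a one-way ANOVA and its η² effect size using only the Python standard library. The scores are made up for illustration and are not the study data; the function names are hypothetical. The second helper uses the standard identity η² = (F × df_between) / (F × df_between + df_within), which holds for a one-way design.

```python
from statistics import mean


def one_way_anova(groups):
    """Return (F, df_between, df_within, eta_squared) for a list of score lists."""
    all_scores = [x for g in groups for x in g]
    grand_mean = mean(all_scores)
    # Variability of group means around the grand mean, weighted by group size.
    ss_between = sum(len(g) * (mean(g) - grand_mean) ** 2 for g in groups)
    # Variability of individual scores around their own group mean.
    ss_within = sum((x - mean(g)) ** 2 for g in groups for x in g)
    df_between = len(groups) - 1
    df_within = len(all_scores) - len(groups)
    f_stat = (ss_between / df_between) / (ss_within / df_within)
    eta_sq = ss_between / (ss_between + ss_within)
    return f_stat, df_between, df_within, eta_sq


def eta_squared_from_f(f_stat, df_between, df_within):
    """Recover eta squared from a reported F and its degrees of freedom."""
    return f_stat * df_between / (f_stat * df_between + df_within)


# Made-up exam scores for three hypothetical conditions (not study data).
wrapper = [78, 85, 69, 91, 74]
sham = [80, 72, 88, 77, 83]
control = [75, 82, 70, 86, 79]
f_stat, df1, df2, eta_sq = one_way_anova([wrapper, sham, control])
print(f"F({df1},{df2}) = {f_stat:.2f}, eta squared = {eta_sq:.3f}")

# Consistency check against a reported value: Exam 2, F(2,73) = 1.51.
print(round(eta_squared_from_f(1.51, 2, 73), 2))
```

The sketch omits the p value, which requires the F distribution and is more conveniently obtained from a statistics package.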

Given it is possible that students’ MAI scores could be associated with their exam scores, we conducted a set of analyses of covariance (ANCOVAs) testing for differences on exam and final grade scores across conditions controlling for MAI at Time 1. We found no significant effects.

It may be the case that students need to have completed all of the exam wrappers in order to see any benefit in terms of final grades. To consider this, we conducted an additional set of analyses. We included only students who had completed the MAI at Time 1 and Time 2, all three mid-semester exams, and all exam wrappers. This resulted in a reduction of the sample from 76 students to 25 (13 students in the exam wrapper condition, 12 students in the “sham” wrapper condition, and no students from the control condition). An independent samples t-test showed no significant difference in any of the three exam scores or the final grades between students in the exam wrapper condition versus the “sham” wrapper condition (t(23) = –.59, p = .55).

Does using exam wrappers change metacognitive ability?

Using exam wrappers could influence MAI scores even if they did not change exam scores. We first ran ANCOVAs to test whether MAI scores were higher in the experimental condition. There were no significant differences across conditions in Time 2 KC controlling for Time 1 KC (F(2,51) = 1.95, p = .15, η² = .07) or in Time 2 RC controlling for Time 1 RC (F(2,73) = .34, p = .72, η² = .01). We then calculated a difference score for each factor of the MAI to measure changes in metacognition over the course of the semester. One-way ANOVAs indicated that there was no significant effect of condition on either the change in the KC factor (F(2,49) = 1.29, p = .28) or the change in the RC factor (F(2,49) = .70, p = .50).

Again, given that increases in MAI may only be seen in students completing all exam wrappers we tested for change in MAI scores in the smaller subset described above. There was no significant difference in either the KC factor (t(23) = .79, p = .43) or the RC factor (t(23) = 1.29, p = .21) between the exam wrapper and “sham” wrapper conditions.

It may also be the case that students naturally see an improvement in metacognitive skills over the course of the semester (i.e., maturation). To test this, we used dependent-samples t-tests to compare the post-semester MAI scores (KC: M = 14.02, SD = 1.83; RC: M = 25.66, SD = 4.35) to the pre-semester MAI scores (KC: M = 12.58, SD = 2.48; RC: M = 24.10, SD = 3.60). We found a significant difference between the scores for the KC factor (t(49) = 4.68, p < .01) and also between the scores for the RC factor (t(49) = 2.96, p < .01). In both cases, the scores were higher at the end of the semester than at the beginning when combining scores from all experimental conditions.
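A dependent-samples t-test of this kind works on each student's change score: the mean change is divided by its standard error, with n − 1 degrees of freedom (so t(49) implies 50 paired observations). The sketch below illustrates the computation with made-up scores for six hypothetical students; it is not the study data, and `paired_t` is an illustrative name.

```python
from math import sqrt
from statistics import mean, stdev


def paired_t(pre, post):
    """Return (t, df) for a dependent-samples t-test on post minus pre."""
    if len(pre) != len(post):
        raise ValueError("pre and post must contain one score per student")
    diffs = [after - before for before, after in zip(pre, post)]
    n = len(diffs)
    # Mean change divided by the standard error of the change scores.
    t_stat = mean(diffs) / (stdev(diffs) / sqrt(n))
    return t_stat, n - 1


# Made-up KC scores for six hypothetical students (not study data).
pre_kc = [11, 13, 12, 14, 10, 12]
post_kc = [13, 14, 14, 15, 12, 12]
t_stat, df = paired_t(pre_kc, post_kc)
print(f"t({df}) = {t_stat:.2f}")
```

A positive t here means that scores were higher at the second measurement than at the first.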

To capitalize on the unique design of this study we explored the relationship between exam and course scores and the measures of metacognition. Whereas scores on the first two exams did not relate to either the KC or RC components of the MAI, Time 2 KC was significantly related to Exam 3 grades (r(60) = .27, p = .034). Both components of the MAI measured at Time 1, KC (r(60) = .30, p = .015) and RC (r(60) = .34, p = .006), related to final grades.
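The link between a reported correlation and its significance test can be illustrated with a short sketch. It computes Pearson's r from paired scores and converts r to a t statistic with df = n − 2, using t = r√df / √(1 − r²). The function names and the small data set are hypothetical and serve only as an example.

```python
from math import sqrt
from statistics import mean


def pearson_r(x, y):
    """Pearson correlation between two equal-length lists of scores."""
    mx, my = mean(x), mean(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / sqrt(sxx * syy)


def t_from_r(r, df):
    """Convert a correlation to its t statistic, where df = n - 2."""
    return r * sqrt(df) / sqrt(1 - r ** 2)


# Made-up KC scores and final grades for five hypothetical students.
kc_scores = [10, 12, 13, 15, 16]
final_grades = [70, 74, 79, 85, 83]
r = pearson_r(kc_scores, final_grades)
print(f"r({len(kc_scores) - 2}) = {r:.2f}")

# t statistic implied by a reported correlation: r(60) = .27.
print(round(t_from_r(0.27, 60), 2))
```

The p value then follows from the t distribution with the same degrees of freedom, which a statistics package can supply.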

Discussion

The use of exam wrappers did not cause an increase in exam scores, final course grades, or MAI scores. Although exam wrappers did not seem to increase metacognition for the groups that completed them, the general relationship between better metacognition and learning was supported by our correlational analyses. KC at both points of the semester and RC at the start of the semester showed statistically significant associations with Exam 3 scores or final grades.

Although a null result regarding the effect of exam wrappers was unexpected, it aligns with the finding by Lovett (2013) that metacognition ratings improved only for students using exam wrappers in more than one course during a semester. It may be the case that this type of metacognitive intervention needs to be adopted across departments where students are likely to take more than one course using it, or that the exam wrapper needs to be more engaging.

Another possible explanation for the lack of an effect is that students did not recognize the value or benefit of metacognitive skills and the associated activity, since it was being used in only one of their courses. In order to maintain use of metacognitive skills, students have to be explicitly taught about them (Brown & Palincsar, 1982). Future research should investigate methods to expand the exam wrapper intervention, including dedicating class time to discussing the utility of exam wrappers and/or returning the exam wrappers to students to discuss their strategies for improvement.

There were several limitations to this study. Firstly, although we counterbalanced conditions to control for the time of class meeting, we did not randomly assign students to different conditions. Note that there were no significant differences in key demographic data across conditions. Furthermore, the instructional design of the course did not require that all students take the final exam (i.e., the lowest exam score is dropped, so students may opt out), so this could not be used as a measure of performance. Lastly, there was some difficulty in obtaining consent and data from each relevant time point simply due to the natural ebb and flow of course attendance.

One suggestion for future research would be to examine the qualitative study behavior data collected on exam wrappers from the students in this study. It is possible that, although there were no changes in performance or MAI scores, the students still exhibited meaningful changes in their approaches to studying.

The finding that metacognitive awareness increased for all students from the beginning to the end of the semester parallels results from Thompson (2012), who found equal gains in metacognitive skills across the course for a control and intervention group. This increase could simply be a maturation effect: as students progressed in their education, their monitoring, evaluation, and adjustment of strategies also increased. Studies have shown that measures of metacognition differentiate between experienced and less experienced learners (Hacker, Bol, Horgan, & Rakow, 2000; Thompson, 2012; Young & Fry, 2008). However, since most studies focus on the effects of a specific intervention or strategy to promote metacognition, it is difficult to map the natural development (or lack thereof) of metacognition in college students.

Overall, it has been previously demonstrated that explicit instruction of effective learning techniques can enhance student learning (Askell-Williams, Lawson, & Skrzypiec, 2012). The critical questions now are (1) what are the most effective metacognitive interventions for students? and (2) how can teachers implement these interventions to help their students be successful?

One possibility for more effective metacognitive interventions is to make students “aware of their own lack of awareness and faulty beliefs” (Chew, 2007, p. 23). As an example of one such strategy, the first author used one or two class periods in the fourth week of the semester to implement a demonstration on student misconceptions of learning (Chew, 2010). The demonstration challenged students’ incorrect belief that motivation alone leads to learning and discussed the importance of metacognition and self-awareness as they relate to learning in an academic setting. The demonstration included simulation of an experiment testing deep versus shallow processing and intention to learn. Perhaps this helped students build a foundation for successful monitoring and adjustment of study behaviors. A single instructional session regarding effective study habits can protect against harmful behaviors such as massed practice (Arnott & Dust, 2012). Therefore, it is not unfounded to propose that the increase in MAI scores in our own data, regardless of condition, could have been a result of this teaching demonstration. This is certainly a point for future research.

Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.