Abstract

Objective(s)

The focus on competency attainment by professional psychology trainees obligates training programs to assess these competencies prior to completion of an internship. However, little is known about how trainees may perceive such testing. This study examines relationships between performance on an Oral Final Competency Examination of a clinical case and trainee perceptions of that examination.

Method

Oral Final Competency Examinations were conducted utilizing the California School of Professional Psychology model with 48 interns over five internship years. Trainees presented a case to examiners, were rated in six competency domains by two examiners, and completed a questionnaire regarding their perception of the exam process two weeks later.

Results

While all trainees passed the examination, those with lower scores perceived the examination and examiners less favorably. Prior experience with similar tasks and self-reported performance anxiety were surprisingly not related to exam performance.

Conclusions

This study found that trainees’ perceptions of an end-of-internship oral competency examination were strongly related to their examination performance. It is important that training program faculty reinforce the responsibility trainees have for their own performance, rather than re-evaluating the examinations themselves based on student feedback, which may be influenced by student performance.

In the last decade, the intensified focus on competency attainment, termed the “culture of competence,” has reflected a paradigm shift in professional psychology, education, and practice (Roberts, Borden, Christiansen, & Lopez, 2005). Building on the landmark 2002 multi-organizational Competencies Conference (Kaslow, 2004), Rodolfa et al. (2005) developed the three-dimensional Competency Cube model, which delineated domains of foundational and functional competencies to be considered at progressive stages of professional development. Subsequently, the American Psychological Association’s Board of Educational Affairs Assessment of Competency Benchmarks Work Group developed an initial competency benchmarks document (Fouad, et al., 2009), operationalized by an associated Competency Assessment Toolkit (Kaslow, et al., 2009) developed by another Board of Educational Affairs task force. The Benchmarks were revised again in 2012 to facilitate their practical use, including specification of behavioral anchors characterizing each stage of professional development. Nonetheless, recognizing the difficulty in developing anchors specific to training programs, yet another American Psychological Association (APA) workgroup (Hatcher, et al., 2013) created sets of forms suitable for rating trainees at each developmental stage, available on the APA Education Directorate website.

Of course, regular evaluation of trainees takes place in hundreds of professional programs in classroom, practicum, placement, internship, and postdoctoral residency settings. Data reflecting trainee competency attainment must be demonstrated among the proximal outcomes required by the APA Commission on Accreditation (APA, 2012). While examples of these systems are publicly available (e.g., on the Veterans Affairs Psychology Training Council Sharepoint site) and lore regarding their effectiveness abounds (e.g., the Veterans Health Administration Internship Directors listserv), there is very little empirical literature on the validity of evaluation systems and even less on how these evaluation systems are regarded by trainees.

Hadjistavropoulos, Kehler, Oeluso, Loutzenhiser, and Hadjistavropoulos (2010) surveyed Canadian Psychological Association accredited programs with respect to use of case presentations for trainee evaluation. While case presentations were a method of evaluation at some point in training in 70% of the programs responding, only 15% (3 programs) reported that a committee of raters utilized a formal system. In England, Tweed, Graber, and Wang (2010) devised a Clinical Skills Assessment Rating Form (CSA-RF) by means of which practitioners rated DVD clips of trainees interviewing simulated patients. However, the CSA-RF lacked sufficient inter-rater reliability (.04–.12) in the several domains sampled. No data on trainees’ perceptions of this method were offered. More recently, Masters, Beachem, and Clement (2015) have described an elaborate three-stage Comprehensive Clinical Competency Examination (CCCE) utilizing trainees working with “standardized patients” and an oral defense with a faculty committee. However, no empirical data have been presented with respect to outcomes or trainee perceptions of the procedure. The authors do remark that “many students have commented on the boost to their confidence and self-efficacy following passage of the CCCE” (p. 173).

Our internship program faculty found it remarkable that at no point in the education and training of doctoral psychologists was there a requirement to perform satisfactorily on an examination of actual practice. Several years ago, the State of Ohio modified its licensure requirements to permit applicants to accumulate all supervised practice requirements prior to completion of their doctoral degrees. This administrative change meant that new doctoral graduates no longer had to complete an additional postdoctoral year of supervision subsequent to the internship. Accordingly, our faculty determined that we had the responsibility to gauge intern competencies prior to their beginning independent practice. In addition to the evaluative purpose of an oral examination, we wanted to provide the interns with an examination experience that modeled those they would be likely undergoing in their anticipated career trajectories, such as future licensing (and relicensing!) examinations and specialty certification examinations by the American Board of Professional Psychology (ABPP).

We considered the conclusion of internship as the capstone of doctoral clinical experiential training and the ideal point at which to evaluate summative clinical competencies, analogous to the doctoral dissertation oral examination for gauging scientific, scholarly, and research competencies. Some preliminary research on the topic (Goldberg, DeLamatre, & Young, 2011) comparing oral case examinations using four different competency models, including the 2007 version of the APA Benchmarks, concluded that the California School of Professional Psychology (CSPP) Clinical Proficiency Progress Review Model (Petti, 2008) provided the broadest range of outcome scores, was the most useful in providing feedback to interns, and was most preferred by both interns and examiners. Utilizing the CSPP model, four successive cohorts of interns were then examined (Goldberg & Young, 2015), and no significant relationships were found between total exam scores and supervisors’ final ratings of overall competence. Other than for clinical decision-making – a competency not included in the APA Benchmarks – there was only weak correspondence between domain competency areas (e.g., assessment) on the exam and on rotations. It was concluded that rotation differences in patient population and in specific methods of assessment and intervention acquired accounted for this failure to demonstrate generality of competencies and suggested that, when skill sets were so differentiated, they would be more accurately characterized as proficiencies.

Petti (2008) reported on two dimensions of trainee satisfaction with the CSPP examination, which is conducted to assess student readiness for internship rather than as an exit measure of competence. Seven successive years of students (n = 1000+) rated examiners at 4.46 on constructiveness of feedback and 4.35 on the degree to which examiners facilitated their performance, using Likert scales of 1 (low) – 5 (high). The current study explores a wider range of trainee perceptions of this exam format in five successive internship cohorts and relates these perceptions to examination outcomes.

Method

Training Program

The Louis Stokes Cleveland Department of Veterans Affairs Medical Center’s one-year, full-time Doctoral Internship Program has been in existence since 1962 and accredited by the APA since 1979. It currently includes 11 interns who pursue one of five tracks: our general mental health track or prespecialization tracks in health psychology, geropsychology, neuropsychology, or rehabilitation psychology. Each intern completes three four-month, essentially full-time rotations in different medical center milieus, such as Inpatient Psychiatry, Veterans Addiction Recovery Center, Primary Medical Care, Pain Management Center, etc. Assignments depend on the track pursued and each intern’s training needs and interests. Interns spend approximately 40% of their time in patient contacts and the balance in individual supervision, treatment team conferences, didactic seminars, and administrative tasks. By the conclusion of our 2,080-hour training year, interns are expected to demonstrate the beginning independent professional level of competencies in the areas of assessment, intervention, clinical judgment, and professional role behaviors. Completion of a one-year, full-time internship (or equivalent) is a nearly universal requirement for licensure as a professional psychologist in all states and in Canadian provinces.

Participants

All subjects were full-time doctoral interns from APA-accredited doctoral programs who were in good standing in our program. Trainees had been informed before applying to our internship that passing a Final Oral Case Examination was a requirement to successfully complete our program and were periodically advised of this during the internship year. Forty-eight interns were examined over five successive internship years, 2009–2010 through 2013–2014.

Materials and Procedure

The primary pedagogical purposes of our oral examination were (1) evaluative, that is, to assess interns’ degree of attainment of fundamental competencies during the internship, and (2) experiential, that is, to provide the interns with experience simulating the conditions of oral examinations they will encounter in their future careers, such as those for licensing and specialty board certification. The examination was conducted at the mid-point of the final four-month rotation of our training year, at which time trainees had completed nearly all (five-sixths) of their training. (CSPP conducts their examination with third-year doctoral students as a measure of readiness to apply for internships.) The competency model and method of evaluation employed was the CSPP Clinical Proficiency Progress Review (“the CSPP model”), adapted for our setting (Petti, 2008). The CSPP model utilizes two examiners to evaluate six domains (assessment, formulation, intervention strategy, relationship, self-examination, and professional communication skills). Although each domain consists of several components, each domain receives a summary score, rated on a six-point scale, with a range from 1 = significantly below expectations through 6 = greatly exceeds expectations. We summed the six summary domain scores to obtain each examiner’s total examination score for the trainee. We then totaled the two examiners’ scores for each trainee. Thus, a trainee could attain a total examination score from a minimum of 12 to a maximum of 72, with descriptive ranges of (1) below 36 = insufficient/remedial/failing; (2) 36–59 = sufficient; and (3) 60+ = outstanding. Each year, examiners received intensive group training on how to conduct a fair and valid oral examination from two faculty with extensive experience examining candidates for specialist certification by the ABPP. 
By intent, examiners were assigned examinees whose clinical work they had not directly supervised in order to simulate the conditions of anticipated future examinations. Thus, prior contextual relationship factors affecting the examination process were minimized.
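The scoring arithmetic described above can be illustrated with a minimal Python sketch. It assumes only what the text states (six domains, two examiners, a 1 to 6 rating per domain, and the three descriptive ranges); the function and variable names are hypothetical and are not part of the CSPP model or of our rating forms.

```python
from typing import Sequence

# The six domains rated by each examiner, as listed in the text.
DOMAINS = (
    "assessment",
    "formulation",
    "intervention strategy",
    "relationship",
    "self-examination",
    "professional communication skills",
)


def examiner_total(domain_scores: Sequence[int]) -> int:
    """Sum one examiner's six summary domain scores (each rated 1-6)."""
    if len(domain_scores) != len(DOMAINS):
        raise ValueError("expected one summary score per domain")
    if any(not 1 <= score <= 6 for score in domain_scores):
        raise ValueError("each domain score must be between 1 and 6")
    return sum(domain_scores)


def total_exam_score(examiner_a: Sequence[int], examiner_b: Sequence[int]) -> int:
    """Add the two examiners' totals, giving a score from 12 to 72."""
    return examiner_total(examiner_a) + examiner_total(examiner_b)


def descriptive_range(total: int) -> str:
    """Map a total examination score onto the three descriptive ranges."""
    if not 12 <= total <= 72:
        raise ValueError("total examination score must be between 12 and 72")
    if total < 36:
        return "insufficient/remedial/failing"
    if total < 60:
        return "sufficient"
    return "outstanding"
```

Under this sketch, two examiners who each rate every domain at 3 yield a total of 36, the lowest score in the sufficient range.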

Examination Format

CSPP conducts its oral examination based on a previously submitted written case report. Since we had already evaluated trainees’ written work extensively, we modified that procedure to require instead an oral presentation of a current clinical case involving a patient with whom the trainee had (a) performed psychological testing including at least one broad spectrum empirically validated assessment tool (Minnesota Multiphasic Personality Inventory, 2nd Edition (MMPI-2), Personality Assessment Inventory (PAI), or Millon Clinical Multiaxial Inventory, 3rd Edition (MCMI-III)); (b) conducted a clinical assessment interview; and (c) undertaken individual intervention. Trainees were provided with a structured outline of content to be included in the oral case presentation on which they would be examined. Since this outline had been required of trainees in two previous clinical case presentations to a staff consultant and peer trainees, this was a familiar format. Trainees were informed that, since APA regards the internship as generalist training, the scope of the examination would be general and address professional and ethical issues as well as specific case material. To allow for maximum transparency, the trainees were provided with the CSPP model article (Petti, 2008) and the actual rating forms, with descriptive categories and rating criteria, to be utilized by the examiners. The examination was 105 minutes in duration, conducted as follows: (1) trainee presentation of the case without interruption – 30 minutes; (2) examination by two faculty examiners – 45 minutes; (3) examiners’ discussion and rating without trainee present – 15 minutes; (4) feedback to trainee by examiners – 15 minutes. The examiners assigned had not directly supervised the trainee’s clinical rotation work and had not served as consulting psychologist on the two prior case presentations to the trainee group. These conditions are consistent with those of state licensing and ABPP oral examinations.
They also further minimize the possibility that prior relationship factors might affect the examination process. In addition, since trainees could pursue several program tracks, one examiner with mental health expertise and one examiner with health psychology expertise were assigned to each trainee’s examination team, in order to ensure that the exam would encompass sufficient breadth of content.

Trainee Questionnaire

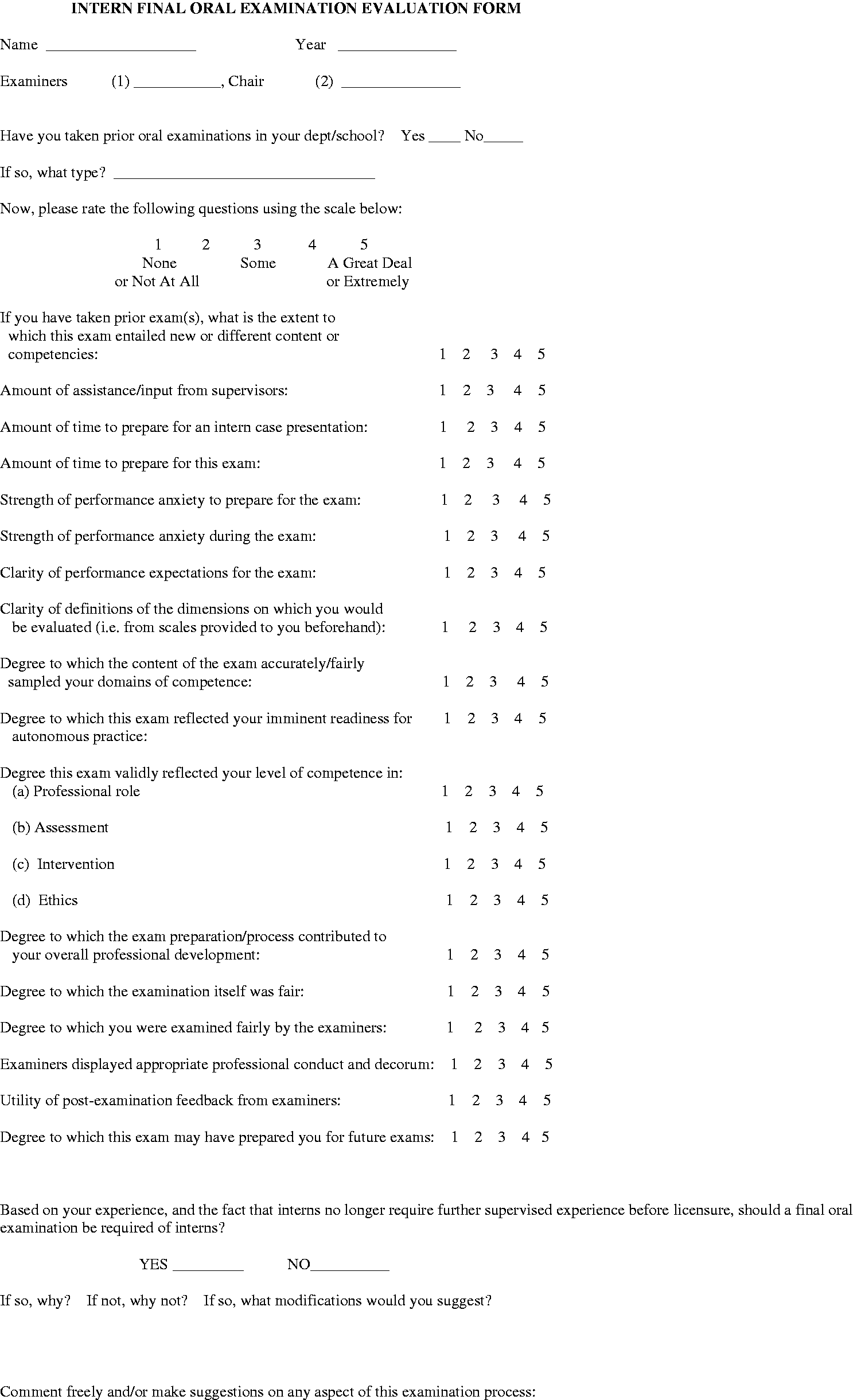

Two weeks subsequent to the examination, the trainees as a group were required to complete an Intern Final Oral Examination Evaluation Form (‘the questionnaire’) about the examination experience. Trainees rated their experience on 19 items utilizing a Likert scale ranging from 1 = “none or not at all”, through 3 = “some”, to 5 = “a great deal or extremely”. Item content included ratings of amount of preparation for the exam, strength of performance anxiety, clarity of performance expectations, degree to which the exam reflected the trainee’s self-rated competence in different domains, exam and examiner fairness, utility of examiner feedback, etc. We also asked whether a final examination should continue to be required.

Data Analysis

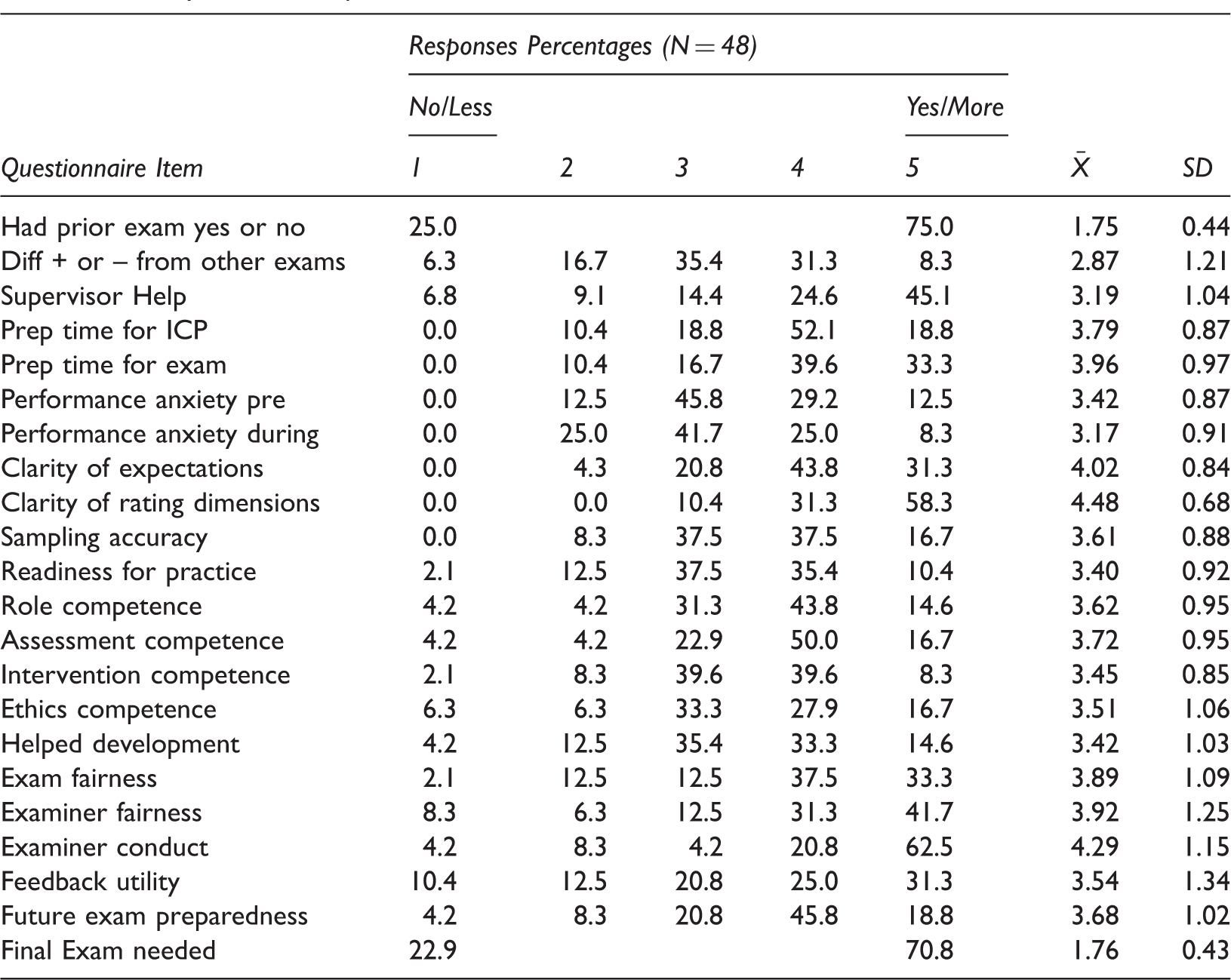

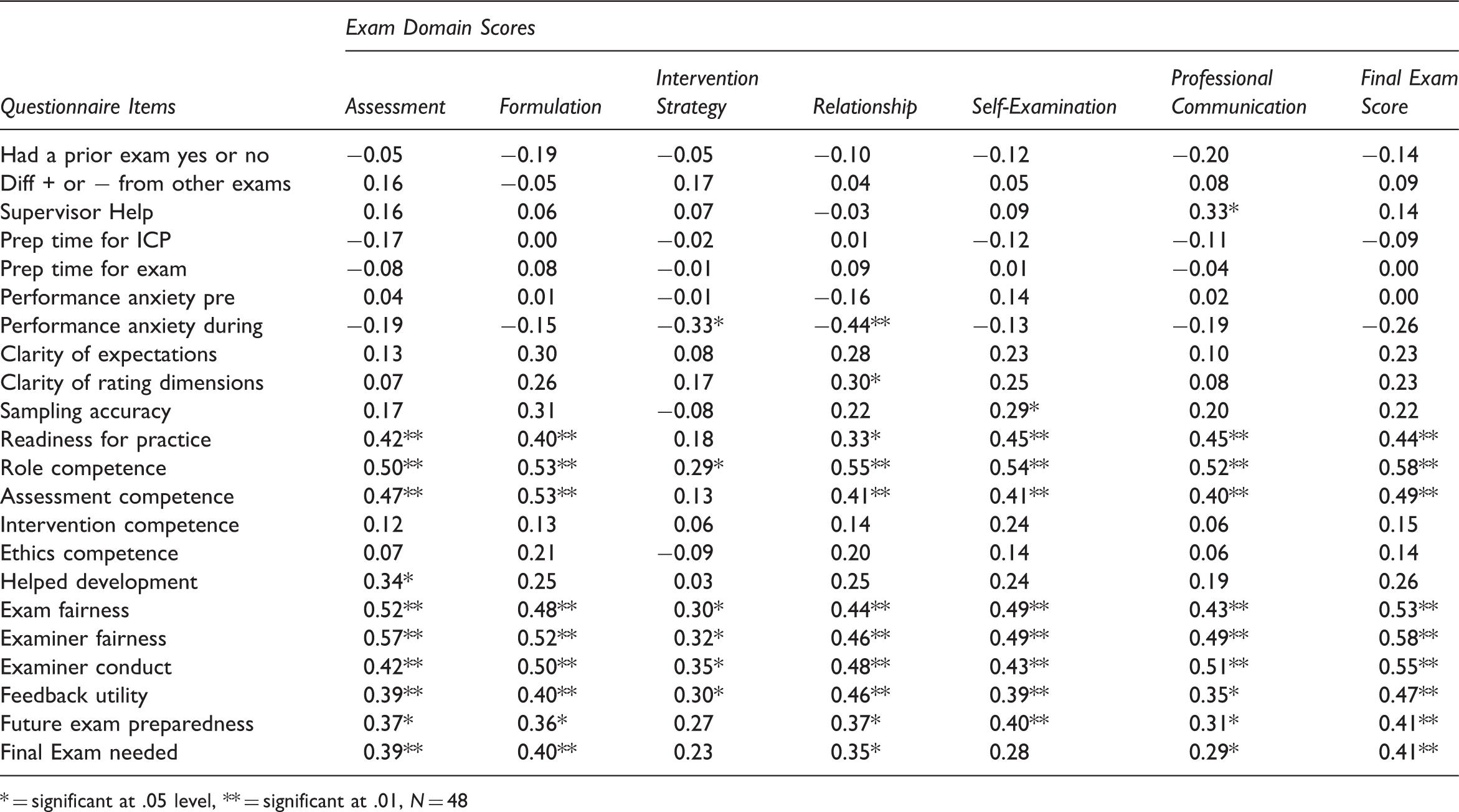

Descriptive statistics for questionnaire items are presented in Table 1 and a copy of the survey comprises Figure 1. Questionnaire items were analyzed to ensure appropriate distribution of variance for follow-up analyses. Zero-order correlations were computed for both the total exam score and the six exam domains with the 19 questionnaire items, presented as Table 2.
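For readers less familiar with the statistic, the following is a minimal Python sketch of a zero-order (Pearson) correlation and its t-test. It is an illustration under stated assumptions, not the analysis code used in this study: the function names are hypothetical, and the two-tailed critical t values for df = 46 (N = 48) are approximate figures taken from standard t-tables.

```python
import math
from typing import Sequence


def pearson_r(x: Sequence[float], y: Sequence[float]) -> float:
    """Zero-order (Pearson) correlation between two equal-length samples."""
    n = len(x)
    if n != len(y) or n < 3:
        raise ValueError("need two samples of equal length, n >= 3")
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sxx = sum((a - mean_x) ** 2 for a in x)
    syy = sum((b - mean_y) ** 2 for b in y)
    if sxx == 0 or syy == 0:
        raise ValueError("correlation is undefined when a sample has no variance")
    return sxy / math.sqrt(sxx * syy)


def t_statistic(r: float, n: int) -> float:
    """t statistic for testing r against zero, with n - 2 degrees of freedom."""
    return r * math.sqrt((n - 2) / (1 - r * r))


# Assumption: approximate two-tailed critical t values for df = 46 (N = 48).
T_CRIT_05 = 2.013
T_CRIT_01 = 2.687


def significance_marker_n48(r: float) -> str:
    """Return '**', '*', or '' for a correlation based on N = 48."""
    t = abs(t_statistic(r, 48))
    if t >= T_CRIT_01:
        return "**"
    if t >= T_CRIT_05:
        return "*"
    return ""
```

With N = 48, this sketch marks r = .29 as significant at the .05 level and r = .41 as significant at the .01 level, in line with the significance levels reported for those values in the Results.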

Figure 1. Evaluation Form.

Table 1. Survey Item Descriptive Statistics.

Table 2. Zero-Order Correlations between Questionnaire Items and Exam Performance. * = significant at .05 level, ** = significant at .01, N = 48.

Results

All 48 trainees passed the examination. The group obtained an average total examination score of 50.49, a standard deviation of 7.20, and a range from 36 to 70. All trainees also completed the Oral Final Examination Evaluation Form.

Table 1 shows the response distribution, mean, and standard deviation for each of the 19 questionnaire items. While most items show an essentially normal distribution, there are some notable exceptions. More specifically, items assessing how well the trainees understood the exam rating dimensions (clarity of rating dimensions), how fair they felt the examiners were (examiner fairness), how much they felt their supervisors helped them prepare their presentations (supervisor help), and how professionally they felt the examiners conducted themselves (examiner conduct) all showed ceiling effects. The distribution of the clarity question is understandable since the rating dimensions were explicitly defined for trainees prior to the exam. The examiner fairness and examiner conduct questions’ distributions were interesting in that trainees seemed to draw a distinction between examiner fairness and conduct. These somewhat skewed distributions may have the effect of artificially truncating the magnitude of some item correlations with exam performance. No items showed floor effects, and the majority showed a general positive lean. Inter-item correlations suggest a good-variable response set was largely at play (i.e., interns either gave universally positive ratings, or showed more variance in their responses).

Table 2 shows the relationships between questionnaire items and exam performance. Substantial statistically significant positive correlations were found between total exam score and self-rated readiness for practice (r = .44, p < .01), self-rated professional role competence (r = .58, p < .01), self-rated assessment competence (r = .49, p < .01), examiner and examination fairness (r = .58, p < .01), examiner conduct (r = .55, p < .01), utility of examiner feedback (r = .47, p < .01), and preparedness for future examinations (r = .41, p < .01). In addition, generally strong and statistically significant positive correlations were found between all six domain scores and five of the seven questionnaire variables above, with correlations ranging from r = .29 (p < .05) to r = .55 (p < .01). The intervention strategy domain score failed to correlate significantly with self-rated readiness for practice and preparedness for future examinations. There was no significant relationship between total exam score and the two performance anxiety variables or between total exam score and prior oral exam experience, preparation time, or amount of supervisor’s assistance. Finally, a point-biserial correlation was computed between total exam score and whether the exam should continue to be required in the program (Yes/No). Endorsement of continuing the exam was significantly positively related to total exam score (r = .41, p < .01). It is worth noting that the large number of significant correlations suggests that Type I error inflation is not a material concern in this case.
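The point-biserial correlation mentioned above is the Pearson correlation between a continuous variable and a dichotomous one. The following minimal Python sketch shows the standard formula, r_pb = ((M1 − M0) / s) × sqrt(n1 × n0 / n²), where s is the population standard deviation of all scores. The function name and arguments are hypothetical, and the sketch is not the code used in this study.

```python
import math
from typing import Sequence


def point_biserial(scores: Sequence[float], answered_yes: Sequence[bool]) -> float:
    """Point-biserial correlation between scores and a Yes/No response.

    A positive value means the 'Yes' group has the higher mean score.
    """
    n = len(scores)
    if n != len(answered_yes):
        raise ValueError("scores and responses must have the same length")
    yes_scores = [s for s, yes in zip(scores, answered_yes) if yes]
    no_scores = [s for s, yes in zip(scores, answered_yes) if not yes]
    if not yes_scores or not no_scores:
        raise ValueError("both response groups must be non-empty")

    mean_yes = sum(yes_scores) / len(yes_scores)
    mean_no = sum(no_scores) / len(no_scores)
    mean_all = sum(scores) / n
    # Population standard deviation (divide by n) of all scores.
    sd_all = math.sqrt(sum((s - mean_all) ** 2 for s in scores) / n)
    if sd_all == 0:
        raise ValueError("correlation is undefined when scores have no variance")

    proportion_term = math.sqrt(len(yes_scores) * len(no_scores) / n ** 2)
    return (mean_yes - mean_no) / sd_all * proportion_term
```

Coding "Yes" as 1 and "No" as 0 and computing an ordinary Pearson correlation gives the same value, which is why the coefficient can be read alongside the other correlations in Table 2.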

Discussion

In this study, where relationships were found between examination performance and trainee perceptions, better performance was associated with positive evaluation of the examination experience. Conversely, poorer performance was associated with lower ratings of the examination experience, particularly with regard to fairness of the exam, fairness of the examiners, and whether an exam should be continued. The results are consistent with common sense expectations and may reflect either, or both, of the following possibilities: (1) that trainees externalize the responsibility for poorer performance, attributing it to the examiners or to conditions of the examination or (2) that examiners inadvertently or subtly conveyed negative or hostile attitudes toward some trainees, resulting in poorer performance and lower ratings. In our opinion, having conducted examiner training minimizes the possibility of (2). Furthermore, it is interesting to note that self-rated performance anxiety did not relate to exam performance, further reducing the likelihood that possibility (2) is operative. That being said, the possibility that examiner bias existed cannot be fully discounted in such an examination paradigm. In our opinion, it seems more likely that trainees who performed less well on our examination self-protectively rationalized their performance by blaming the exam and examiners. Additionally, we believe that it is important that training program faculty reinforce with trainees the need to accept responsibility for their performance and its deficits rather than externalizing that responsibility onto their evaluators. However, it further behooves programs to take steps to minimize examiner bias when using oral examinations as tools for evaluation.

It is interesting to compare our cohort’s perceptions of their examination experience with that of CSPP students (Petti, 2008). On five-point Likert scales, CSPP students rated examiners as 4.46 on providing constructive/critical feedback and 4.35 on facilitation of examination performance (mean of two items = 4.41). Both of these items entail perceptions of whether examiners were “inappropriately critical.” In contrast, our trainees rated examiners as 4.29 on examiner conduct, 3.92 on examiner fairness, and 3.54 on utility of examiner feedback (mean of three items = 3.92). Thus, our interns rated examiners less favorably than did CSPP students. This may reflect differences in examination format (written case report vs. oral case presentation), time at which trainees’ perceptions were obtained (immediately vs. two weeks after examination), differences in professional developmental level (third-year doctoral vs. end of internship), and/or generational differences in the trainee cohorts (2000–2006 vs. 2009–2014). Unfortunately, Petti (2008) did not examine whether students’ perceptions of their examiners related to their examination performance. By design, our interns were examined by staff members with whom they had not had a prior supervisory relationship. It will be recalled that this was an intentional feature of the examination since one pedagogical aim was to simulate future professional oral examinations, such as state licensing and specialty board examinations, where the examinee has no prior relationship to the examiners. Any effect that the relationship between examiner and trainee may have had was thus likely to be entirely due to their interaction within the examination. Development of such a relationship, however peremptory and limited, is an intrinsic and valuable part of the examination experience and, as such, cannot be separated from the results of the examination.
Under a different design paradigm, a prior positive relationship might have evoked better performance and a more positive perception of the examiners. Another possible explanation for the discrepancies might be the timing and circumstances under which the exam was conducted. It is not unreasonable to conjecture that failing an examination at the end of internship might be seen as more aversive than failing a similar examination earlier in doctoral training. Thus, our trainees may have been more likely to view the exam and examiners negatively.

It is also worthwhile to consider and discuss trainee perceptions which did not significantly relate to exam performance in the current study. Specifically, these were time factors (amount of time to prepare for examination), supervisor’s help (amount of assistance/input from supervisors), performance anxiety (as noted above: strength of performance anxiety while preparing, strength of performance anxiety during exam), advance information (clarity of rating dimensions, clarity of performance expectations), and (for those with pre-internship oral examination experience) degree of congruence with past exams. While one might speculate that these historical, contextual, and situational factors significantly affect exam performance, we found this not to be the case.

Additionally, there are some limitations to the current study that need to be acknowledged. While Petti (2008) reported that 14% of students failed the CSPP examination, all of our trainees passed, albeit with a wide distribution of passing scores. It is quite possible that our examiners may be reluctant to fail trainees due to the potentially serious consequences of failing this examination and, secondarily, the additional administrative burden that this would incur. These consequences include the trainee failing the internship program and thus being unable to proceed to postdoctoral residencies and jobs already secured. Additional administrative responsibilities in the case of trainee failure include documentation of failure, reports of contact to the director of training, assisting in the development of a remediation plan for the trainee, attending a special Training Committee meeting, and participation in formulating conditions of a re-examination. Accordingly, absent a genuinely catastrophic failure, there may have been a tendency for examiners to score marginal performers at the bottom of the distribution higher than they should have scored. This type of positive examiner bias, based on sympathy with the position of the trainee, is unfortunately a facet of any oral-only examination and should be taken into consideration when interpreting our results.

Another potential limitation of the study design was that the trainees knew their scores and received feedback on the examinations two weeks prior to completing the trainee questionnaire. Thus, some of the interns’ ratings may have been unduly influenced by the examination results rather than the examination process. However, since none of the trainees failed the examination, we feel that it is unlikely that the delay had a substantial effect on their responses to the questionnaire. Future studies may consider administering the trainee questionnaire before providing feedback and scores to the trainee, which may provide a different view of how they perceive the process. It may also be of interest to investigate the effects, if any, of concordance of examiner expertise and theoretical orientation with that of the trainee.

Despite these limitations, it is notable that trainees who performed better on the examination tended to feel more prepared for future examinations, one explicit objective of our program. While trainees who did less well tended not to feel as well prepared for the future, in our opinion, the examination may have served as a “wake-up call” to them with regard to examinations such as licensure (oral), re-licensure, and specialty board certification that are likely to be required of them in their future careers.

Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.