Abstract

The International Computer and Information Literacy Study (ICILS 2013) provides, for the first time, information about students’ computer and information literacy (CIL) and its acquisition, based on a computer-based student test and background questionnaires. Twelve of the 21 education systems that participated in ICILS 2013 are European countries, which makes in-depth comparative secondary analyses at the European level particularly fruitful. Accordingly, while the four articles in this Special Issue deal with different topics and adopt different methodologies, they share a common element: all provide European comparisons in the ICILS context. This editorial outlines the aim of ICILS 2013 and its relevance for European education research, as well as its contextual framework and its approach to measuring students’ CIL. It also discusses the potential and challenges of such large-scale assessment studies.

The International Computer and Information Literacy Study (ICILS): relevance and aim

The potential of information and communication technologies (ICT) for teaching and learning has been an issue since computers were introduced into education in the 1960s, not only for education policy and practice but also for educational research (Voogt and Knezek, 2008). In this context, different research perspectives have been identified internationally over recent decades, in particular learning to use ICT as a tool (e.g. Voogt, 2008) and using ICT to improve students’ learning processes and learning outcomes (e.g. Erstad et al., 2015). As ICT becomes ever more relevant in our increasingly digitalized society, a third perspective is gaining in importance: how to use ICT competently and how to equip students with the new kinds of skills they need to participate successfully in the so-called digital age (e.g. Anderson, 2008; Eickelmann, 2011; Kozma, 2003; Voogt et al., 2013).

Accordingly, it is becoming increasingly important for schools in Europe and worldwide to focus on providing their students with what are commonly referred to as digital competencies or computer and information literacy (CIL, e.g. Fraillon et al., 2013). The European Commission defines digital competencies as one of eight key competencies for lifelong learning (European Commission, 2013). However, ensuring that students acquire these skills, and preparing them for life through computer-assisted teaching and learning in schools, is an ambitious task and presents teachers with a major challenge (Voogt and Knezek, 2008).

The International Computer and Information Literacy Study (ICILS 2013; 2010–2014) investigated, for the first time, the CIL of secondary school students in 21 education systems (Fraillon et al., 2014). Among these, there are 12 European countries, rendering the ICILS dataset particularly fruitful for secondary analyses at the European level. ICILS 2013 was coordinated by the International Association for the Evaluation of Educational Achievement (IEA), an independent, international cooperative of national research agencies (e.g. Fraillon et al., 2013). The aim of ICILS 2013 was to investigate the CIL of eighth-grade students and the contexts in which CIL is developed (Fraillon et al., 2013). The results of ICILS 2013 were published in November 2014 (European Commission, 2014; Fraillon et al., 2014).

ICILS 2013 thus joins a series of IEA studies with an explicit focus on ICT in the education context. After the Computers in Education Study (COMPED 1989–1992; Pelgrum and Plomp, 1991, 1993; Pelgrum et al., 1993), the Second Information Technology in Education Study (SITES) Module 1 (1998–1999; Pelgrum and Anderson, 2001) and Module 2 (1999–2002; Kozma, 2003), as well as SITES 2006 (2004–2008; Law et al., 2008), ICILS 2013 is the fifth IEA study in this field of research. It is, however, the first such study in which the students’ competencies were assessed using computer-based achievement tests.

Unlike other international large-scale assessments such as TIMSS (Trends in International Mathematics and Science Study), PIRLS (Progress in International Reading Literacy Study), and PISA (Programme for International Student Assessment), ICILS does not focus on competencies in subjects such as mathematics, reading or science but, rather, on cross-curricular competencies. In ICILS 2013, CIL is defined as ‘an individual’s ability to use computers to investigate, create and communicate in order to participate effectively at home, at school, in the workplace and in the community’ (Fraillon et al., 2013: 17).

The ICILS 2013 contextual framework

The ICILS 2013 contextual framework provides, for the first time, a model that is in line with the cross-curricular perspective of CIL. It builds on previous conceptual frameworks that sought to explain educational outcomes by adopting a multilevel approach (e.g. Bos et al., 2003; Ditton, 2000; Scheerens, 1990; Scheerens and Bosker, 1997), explicitly taking into account different levels (system level, school level, classroom level, individual level). The ICILS 2013 contextual framework also draws on empirical evidence in this area, for example from the IEA’s SITES 2006 (Law et al., 2008).

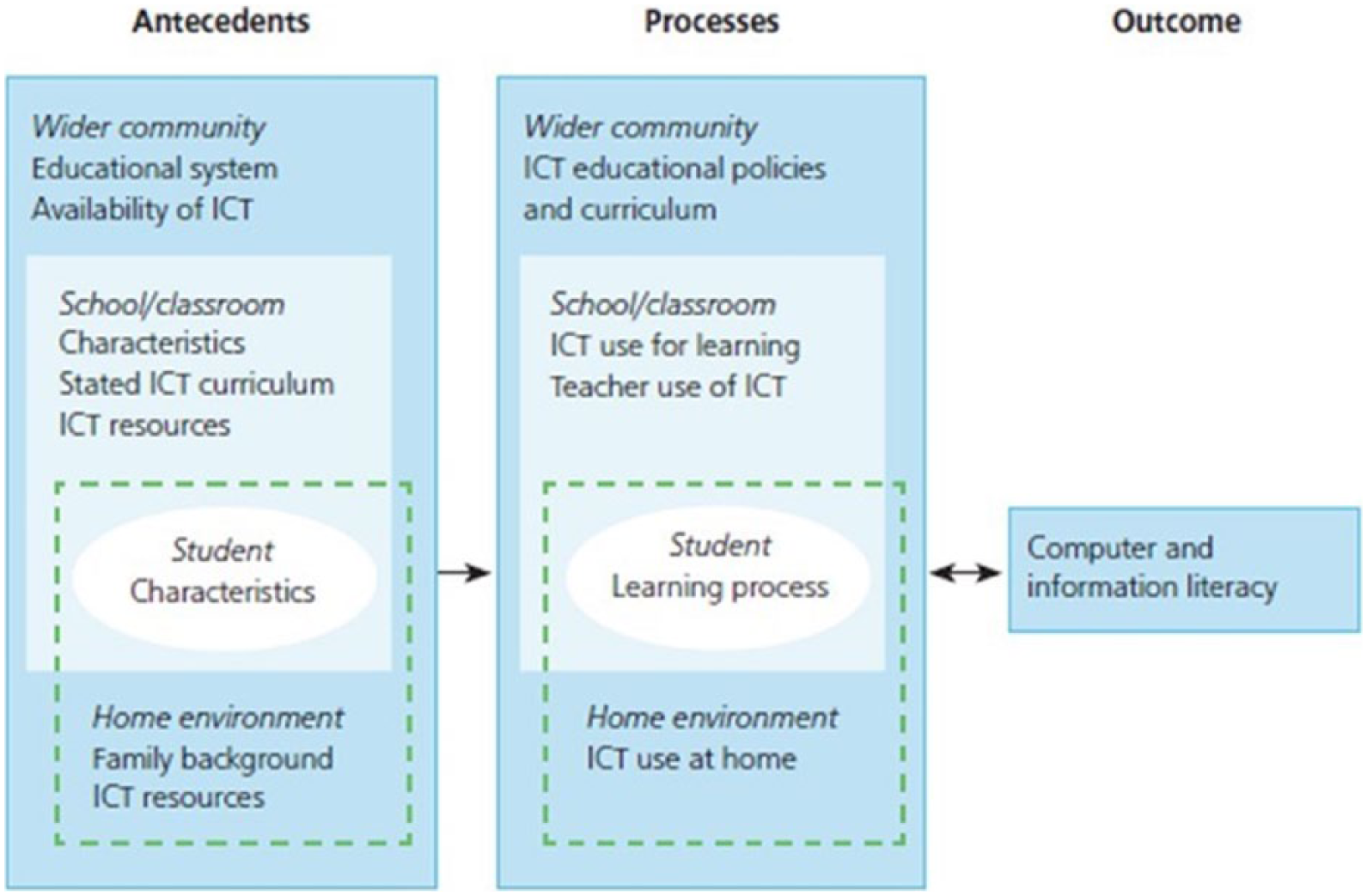

Figure 1 shows the contextual framework of ICILS 2013, which differentiates between antecedents and processes. Antecedents can be characterized as exogenous factors that determine the ways in which CIL is acquired (Fraillon et al., 2013); they are contextual factors that are not directly influenced by learning-process variables or outcomes. Processes are those factors that directly influence the acquisition of CIL and are themselves constrained by antecedent factors (Fraillon et al., 2013). The framework assumes that both antecedents and processes need to be taken into account in order to explain students’ CIL.

Figure 1. Contextual framework of ICILS 2013 (Fraillon et al., 2014: 37).

As already mentioned, the ICILS framework adopts a multilevel approach, differentiating between four levels: (1) the individual learner; (2) the home environment; (3) the school and classroom; and (4) the wider community (Fraillon et al., 2013). These four levels have the following characteristics (e.g. Fraillon et al., 2014):

Individual learner: this level includes the characteristics of the learner, the processes of learning, and the learner’s level of CIL.

Home environment: this level includes characteristics relating to the students’ backgrounds, especially in relation to the learning processes associated with family, home and other immediate out-of-school contexts.

School and classroom: this level includes all school-related factors. Given the cross-curricular nature of CIL, classroom level and school level are not considered separately.

Wider community: this level describes the wider context in which the acquisition of CIL takes place. It includes local community contexts (e.g. access to internet facilities) as well as characteristics of the education system and country.

Measuring CIL and the contexts of its acquisition

In ICILS 2013, the CIL construct is conceptualized with regard to two strands (or categories) that frame the skills addressed by the CIL instruments (Fraillon et al., 2013). Each of these two strands is made up of several aspects – that is, specific content categories. The first strand, ‘Collecting and managing information’, focuses on the receptive elements of the CIL construct and incorporates three aspects:

(1) Knowing about and understanding computer use;

(2) Accessing and evaluating information;

(3) Managing information.

The second strand, ‘Producing and exchanging information’, focuses in particular on the productive parts of the CIL construct. It consists of four different aspects:

(1) Transforming information;

(2) Creating information;

(3) Sharing information;

(4) Using information safely and securely (Fraillon et al., 2013).

This structure was used ‘as an organizational tool to ensure that the full breadth of the CIL construct was included in its description and would thereby make the nature of the construct clear’ (Fraillon et al., 2014: 71). However, empirical analyses of the CIL construct showed that this differentiation is of limited use, because the two strands are very highly correlated (e.g. Bos et al., 2014; Fraillon et al., 2014). Accordingly, all analyses in the context of ICILS 2013 treat CIL as a one-dimensional construct.

To measure their CIL, students completed a computer-based CIL test in a live software environment (e.g. Fraillon et al., 2013); that is, the software (e.g. word processing, presentation) responded to the students’ inputs during the test. The test consisted of four 30-minute modules, and each student was assigned two modules at random. Each module had a real-life theme with an overarching story (e.g. after-school exercise or school trip). Three different types of tasks were used (e.g. Fraillon et al., 2013):

(1) Information-based response tasks (paper-and-pencil-like questions, for example multiple-choice);

(2) Skill tasks (using interactive simulations of software or applications to complete an action: for example, copying, pasting, opening a browser, ‘save as’);

(3) Authoring tasks (which required modifying or creating information products by using different software or applications – for example, to create a homepage or a poster).
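The module allocation described above, in which each student took two of the four 30-minute modules, can be illustrated with a short sketch. It is a simplified illustration under stated assumptions, not the ICILS 2013 procedure: the module labels `A` to `D`, the function `assign_modules`, and the uniform, independent draw of an ordered pair of distinct modules are all hypothetical, and the actual rotation design may differ (see Fraillon et al., 2014).

```python
import itertools
import random

# Hypothetical labels for the four 30-minute test modules (not the real module names).
MODULES = ["A", "B", "C", "D"]

# Every ordered pair of two distinct modules; the order stands for the
# sequence in which a student would work on them (4 * 3 = 12 pairs).
MODULE_PAIRS = list(itertools.permutations(MODULES, 2))


def assign_modules(student_ids, seed=None):
    """Assign each student an ordered pair of two distinct modules at random.

    Assumption: pairs are drawn uniformly and independently per student,
    which simplifies whatever balanced rotation an actual study would use.
    """
    rng = random.Random(seed)  # seeded generator so an allocation can be reproduced
    return {sid: rng.choice(MODULE_PAIRS) for sid in student_ids}


if __name__ == "__main__":
    allocation = assign_modules(range(1, 6), seed=2013)
    for student, (first, second) in allocation.items():
        print(f"student {student}: module {first}, then module {second} (60 minutes)")
```

Drawing ordered pairs guarantees that no student receives the same module twice, and varying the order across students spreads possible position effects over the modules. A rotated design in practice would typically balance how often each pair occurs rather than rely on independent draws.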

Based on the students’ test data, it was possible for the first time to compute empirically based competence levels of CIL. Further details of the CIL test can be found in Fraillon et al. (2014) and Jung and Carstens (2015).

Furthermore, the contexts in which CIL is acquired were assessed by means of different questionnaires (for more details, see Fraillon et al., 2014):

Teacher questionnaire, which included, for example, questions about teachers’ attitudes toward the use of ICT in teaching and learning or their participation in professional development;

Principal questionnaire, which included, for example, questions about the characteristics of the respective school and its approach to ICT in teaching and learning;

ICT coordinator questionnaire, which included, for example, questions about the respective school’s ICT resources or ICT support;

National context questionnaire, which included, for example, questions on the respective country’s plans and policies for using ICT in education.

ICILS 2013 was administered to about 60,000 students in their eighth year of schooling in over 3,300 schools in 21 participating education systems around the world (Schulz et al., 2014). The following 12 European countries participated in ICILS 2013: Croatia, the Czech Republic, Denmark, Germany, Lithuania, the Netherlands, Norway, Poland, the Slovak Republic, Slovenia, Switzerland, and Turkey. These countries are the focus of the European comparisons in this Special Issue. For further information on ICILS 2013 sampling, see Jung and Carstens (2015).

The results of ICILS 2013 revealed that students’ CIL varies significantly across Europe (European Commission, 2014; Fraillon et al., 2014). Several of the participating European countries (the Czech Republic, Denmark, Poland, Norway, and the Netherlands) belong to the group of so-called top-performing countries, that is, countries with a high average level of students’ CIL. Indeed, the Czech Republic demonstrated both the highest level of students’ CIL and the lowest spread in student scores, that is, the least inequality in student achievement. The study thus shows that in 2013 European countries were at different stages in integrating technology into schools and supporting students’ acquisition of CIL. This makes the European context particularly interesting for further in-depth analyses.

Potentials and challenges in the ICILS context in Europe

The results of the ICILS 2013 study provided representative information on the level of students’ CIL in Europe, together with contextual variables on teaching and learning with ICT. Important groundwork has thus been laid for the scientific community as well as for policy makers. Researchers can use the publicly available ICILS 2013 database to carry out research at different levels (student, teacher, school, school system).

ICILS 2013 also provides a baseline for the future measurement of CIL and its acquisition across countries (Fraillon et al., 2014). In the context of policy-making, the European Commission concluded, after the publication of the ICILS 2013 results, that ‘ICILS is a valuable source of evidence and information for the policy dialogue between the European Commission and Member States […]. The findings from the survey will feed into exchanges with stakeholders and Member States through ET2020 Working Groups on Transversal skills, Digital and Online Learning and Schools’ (European Commission, 2014: 18).

The national results have been taken up in different ways across the participating European countries. In Germany, for instance, several measures have been initiated in response to the ICILS 2013 results, such as the development and publication of a common strategy on education in a digitalized world, which aims, among other things, to integrate teaching and learning with ICT into all subjects (KMK, 2016). The ICILS 2013 results also lay the groundwork for the second cycle of ICILS, whose main study will take place in 2018. From a European perspective, several new countries (e.g. France, Italy, and Portugal) will participate in this second cycle, providing new perspectives for future European comparisons of CIL and of the conditions for teaching and learning with ICT.

From the researcher’s point of view, the ICILS research design has its limitations, despite its innovative potential. Because ICILS was developed primarily as a monitoring study of education systems, its data are cross-sectional and therefore do not permit causal inferences. The study and its results are thus best seen as a starting point for further, in-depth research into teaching and learning with ICT. For example, case studies with a qualitative or mixed-methods approach in individual countries could explore specific characteristics at the student or school level in more detail. Such studies could enrich and deepen the empirical evidence on the current state of education systems with regard to the use of ICT in teaching and learning and students’ ICT competencies. They could also help to initiate school development processes aimed at developing country-specific strategies for promoting CIL acquisition among students in Europe.

Several challenges remain for the future measurement of students’ ICT skills, which Ainley et al. (2016) summarize in three main aspects:

(1) Comparisons over time. Rapid technological changes (e.g. smartphones, tablets) affect how ICT is used in and out of school, and assessments of ICT skills need to respond to these changes. The challenge for such assessments is to incorporate new aspects without losing comparability over time (Ainley et al., 2016).

(2) Cross-country comparisons. Successful computer-based testing requires a satisfactory ICT infrastructure (computers or equivalent devices) with access to the test software (Ainley et al., 2016). The challenge for the future will be to develop modes of test delivery that are feasible in all countries and thereby ensure similar test conditions.

(3) Cross-group comparisons. As with every other comparative assessment, assessments of ICT skills should not systematically advantage or disadvantage particular groups of students. In the context of ICT, there is empirical evidence that some sub-groups of students have less access to ICT than others. It must be acknowledged that these differing levels of familiarity with ICT affect how students engage with the assessment. The future challenge is to ensure fair testing conditions for all sub-groups of students, so that their results remain comparable.

We want to close this introduction with some thoughts on evidence-based policy, because ICILS can be considered an example of how data and policy interact. Data gathered in ICILS are used for data-driven policy-making, which leads to a new cycle of data collection and probably (more) policy in the future. Given the experience with other large-scale assessment studies such as PISA or TIMSS, it cannot be assumed that there is a simple ‘empirical study → uniform interpretation of results → derivation and implementation of policy measures → improvement’ cause-and-effect relationship (Schulz-Heidorf and Gerick, 2017). There are two reasons for this. First, the results of empirical studies always need interpretation, which can lead to different recommendations for action. Second, (educational) policy follows underlying rationalities that differ from those of (educational) research. Policy is necessarily normative and led by political interests (Schulz-Heidorf and Gerick, 2017), whereas educational research should follow its own rationality: providing data to answer current and future questions about teaching and learning with ICT, and developing appropriate, up-to-date research measures so that policy makers can rely on this knowledge in their decision making.

The articles in this Special Issue

This Special Issue contains four articles that use ICILS 2013 as their starting point. All of them report in-depth secondary analyses of the ICILS 2013 data from a European perspective. They were presented at a joint symposium of the ‘Assessment, Evaluation, Testing and Measurement’ (Network 9) and ‘ICT in Education and Training’ (Network 16) networks at the European Conference on Educational Research (ECER) in Budapest in 2015, and they incorporate insights from the discussions at that symposium. The aim of this Special Issue is to keep the scientific community’s discussion of this important topic in teaching and learning going.

The four articles fall into two groups. The articles by Ihme, Senkbeil, Goldhammer and Gerick, and by Punter, Meelissen and Glas focus on the student level and the CIL construct, and argue mainly from a methodological standpoint. The articles by Drossel, Eickelmann and Schulz-Zander, and by Eickelmann and Vennemann focus on the school level and address in-depth questions about teaching and learning with ICT in the context of ICILS 2013 by analysing school and teacher characteristics.

In their article ‘Assessment of computer and information literacy in ICILS 2013: Do different item types measure the same construct?’, Ihme et al. adopt a methodological perspective on the three different item formats used in ICILS to measure students’ CIL. In doing so, they investigate whether these three item formats measure the same construct in all European countries participating in ICILS 2013.

In the article, ‘Gender differences in computer and information literacy: An exploration of the performances of girls and boys in ICILS 2013’, Punter et al. use the students’ CIL achievement data. They test the hypothesis that gender differences in performance for computer literacy items would slightly favour boys, whereas gender differences in performance for information literacy items would slightly favour girls. They also analyse and compare variations in such differences across European countries.

Drossel et al., in their article ‘Determinants of teachers’ collaborative use of ICT for teaching and learning – a European perspective’, focus on the school level and analyse teacher collaboration in the ICT context as a feature of school quality. Furthermore, they examine, in a European comparison, whether there are conditions that are positively related to teachers’ collaborative behaviour.

The article ‘Teachers’ attitudes and beliefs towards ICT in teaching and learning in European countries’ by Eickelmann and Vennemann likewise adopts a school-level perspective and focuses on teachers’ beliefs and attitudes towards the potential of ICT in teaching and learning as a central condition for its successful implementation. The authors describe a typology of teachers with different attitudes towards the potential of ICT and show how this typology is distributed across three European countries.

Funding

This research received no specific grant from any funding agency in the public, commercial, or not-for-profit sectors.

Declaration of conflicting interests

The authors declare that there are no conflicts of interest.