Abstract

Combinations of different item formats are common in large scale assessments, and dimensionality analyses often indicate that such tests are multi-dimensional with respect to task format. In ICILS 2013, three different item types (information-based response tasks, simulation tasks, and authoring tasks) were used to measure computer and information literacy in order to balance its technological and information-related aspects. The item types differ in the cognitive processes and the type of knowledge they measure and in the strands and aspects of the ICILS 2013 framework they address. In this article, we explored which factor models that assume item type factors or type of knowledge factors fit the data. For the factors of the best fitting models, regression analyses on SES, frequency of computer use, self-efficacy, and gender were computed to work out the different meanings and the convergent and discriminant validity of the factors. The results show that three-dimensional models with correlated factors for item type or type of knowledge fit best. The regression analyses reveal substantive implications of between-item and within-item models. The effects are discussed and an outlook is given.

Introduction

In the International Computer and Information Literacy Study (ICILS), three item formats were used for measuring computer and information literacy (CIL): non-interactive/constructed response tasks, simulation tasks, and authoring tasks. In this article, we investigate whether these item formats measure the same construct and whether they correlate differently with background variables such as socio-economic status (SES). The analyses focus on data from the 12 European countries participating in ICILS 2013.

Theoretical background

Computer and information literacy

In ICILS 2013 and in line with other recent conceptualizations (e.g., Calvani et al., 2012; ETS, 2002), CIL is not exclusively confined to technological literacy (declarative and procedural knowledge of hardware and software applications, understanding of technological concepts). Instead, information literacy (i.e., the ability to use digital media to locate and critically evaluate information to use it effectively for one’s own purposes) also plays an important role (Fraillon et al., 2013; see also Calvani et al., 2012). According to this conceptualization, “Computer and information literacy refers to an individual’s ability to use computers to investigate, create, and communicate in order to participate effectively at home, at school, in the workplace, and in society” (Fraillon et al., 2013: 17). Thus, CIL can be understood as a unidimensional construct comprising the above-mentioned facets of technological and information literacy, and comprising a specific set of knowledge and skills (Fraillon et al., 2013).

Dimensionality of composite assessments

The combination of different item formats (= composite assessments) is found quite often in large scale assessments (e.g., PISA, TIMSS) of reading comprehension, mathematical literacy, and other subjects (Kim et al., 2010; Lissitz et al., 2012; Rauch and Hartig, 2010; Rodriguez, 2003). Nonetheless, secondary analyses of large scale assessment data and experimental research indicate that composite assessments are multi-dimensional with respect to task format (e.g., Kuechler and Simkin, 2010; Lissitz et al., 2012; Rauch and Hartig, 2010). Two prominent explanations for this multi-dimensionality are that, compared to other item types, multiple choice items invite more guessing and measure important constructive cognitive processes less effectively (e.g., Kuechler and Simkin, 2010; Lissitz et al., 2012). Most of these studies compared multiple choice and constructed response items (e.g., Kuechler and Simkin, 2010; Lissitz et al., 2012; Rauch and Hartig, 2010). In these studies, multiple choice items are used mainly to assess more basic types of cognitive processing, and constructed response items are used to assess more complex types of cognitive processing or higher order thinking (e.g., Rauch and Hartig, 2010; Stiggins and Chappuis, 2011). Multiple choice items are assumed to be appropriate for measuring declarative (factual or conceptual) knowledge (e.g., Kuechler and Simkin, 2010; Mayer, 2002) but to be unable to tap higher order thinking. They also allow for a higher probability of guessing correctly, which lowers the reliability of the test for lower ability students (Cronbach, 1988). Yet, multiple choice items are widely used in cognitive assessment, and item-writing guidelines can help measure even complex cognitive characteristics (Haladyna et al., 2002).

There is a lack of research comparing not only traditional task formats (like multiple choice and constructed response) but also innovative item formats such as those used to assess digital reading (OECD, 2013), complex problem-solving (e.g., Greiff et al., 2013; OECD, 2013), or ICT (information and communication technologies) literacy (e.g., Fraillon et al., 2013; Goldhammer et al., 2013). Innovative item formats are crucial to assess new and (more) behavior-based skills, for example new skills needed by workers who perform non-routine problem-solving tasks (Kozma, 2009).

Thus, the use of innovative item formats extends the construct representation (e.g., Goldhammer et al., 2013; Sireci and Zenisky, 2006). Since ICT literacy can be understood as a construct comprising facets of technological (declarative knowledge) as well as information-based skills (procedural skills) (e.g., ETS, 2002; Senkbeil et al., 2013; Zylka et al., 2015) which are operationalized using different item types, multi-dimensional measurement models appear to be more appropriate to describe the data than a unidimensional measurement model. Using these models, additional diagnostic information such as competency profiles which show individual strengths and weaknesses can be provided, differential correlations of the facets with background variables can be examined, and a better understanding of the construct CIL is enabled.

Item types used in ICILS 2013

The ICILS 2013 test is designed to provide students with an authentic computer-based assessment experience balanced with the necessary contextual and functional restrictions to ensure that the tests are delivered in a uniform and fair way. In order to maximize the authenticity of the assessment experience, the instrument uses a combination of purpose-built applications and existing live software. Students need to be able to both navigate the mechanics of the test and complete the questions and tasks presented to them (Fraillon et al., 2013).

The CIL test in ICILS 2013 contains three different item types (for a detailed description, see Fraillon et al., 2013): (1) information-based response tasks are conventional tasks which include non-interactive representations of problems or information. The response format is comparable to conventional paper-pencil tasks, and it is either multiple choice, drag and drop, or constructed response (i.e., entering a word or a short text); (2) skill tasks (referred to below as simulation tasks) are performance-based tasks which require the use of a software application to solve them. They may call for one-step interaction (e.g., open an internet browser) or multi-step interaction (e.g., save a file with a certain file name). These tasks can be linear (e.g., copy a graphic and paste it into a text) or non-linear (e.g., use of filters in a database); (3) authoring tasks (or large tasks) call for creating or modifying information products (e.g., a slide show) using authentic software applications. It can be necessary to use multiple resources (e.g., email, websites, spreadsheets) at the same time and to integrate this information.

Item types, cognitive processes, and types of knowledge

The framework for CIL in ICILS 2013 is constituted by two structural dimensions, strands and aspects. The CIL construct comprises two strands. Both strands refer to the overarching conceptual category for framing the skills and knowledge addressed by the CIL instruments (see Fraillon et al., 2013 for a detailed description of the framework). The first strand (collecting and managing information) embraces the receptive and organizational elements of information processing and management. This strand contains three aspects (knowing about and understanding computer use, accessing and evaluating information, managing information). The second strand focuses on using computers as productive tools for thinking, creating, and communicating. This strand has four aspects (transforming information, creating information, sharing information, using information safely and securely).

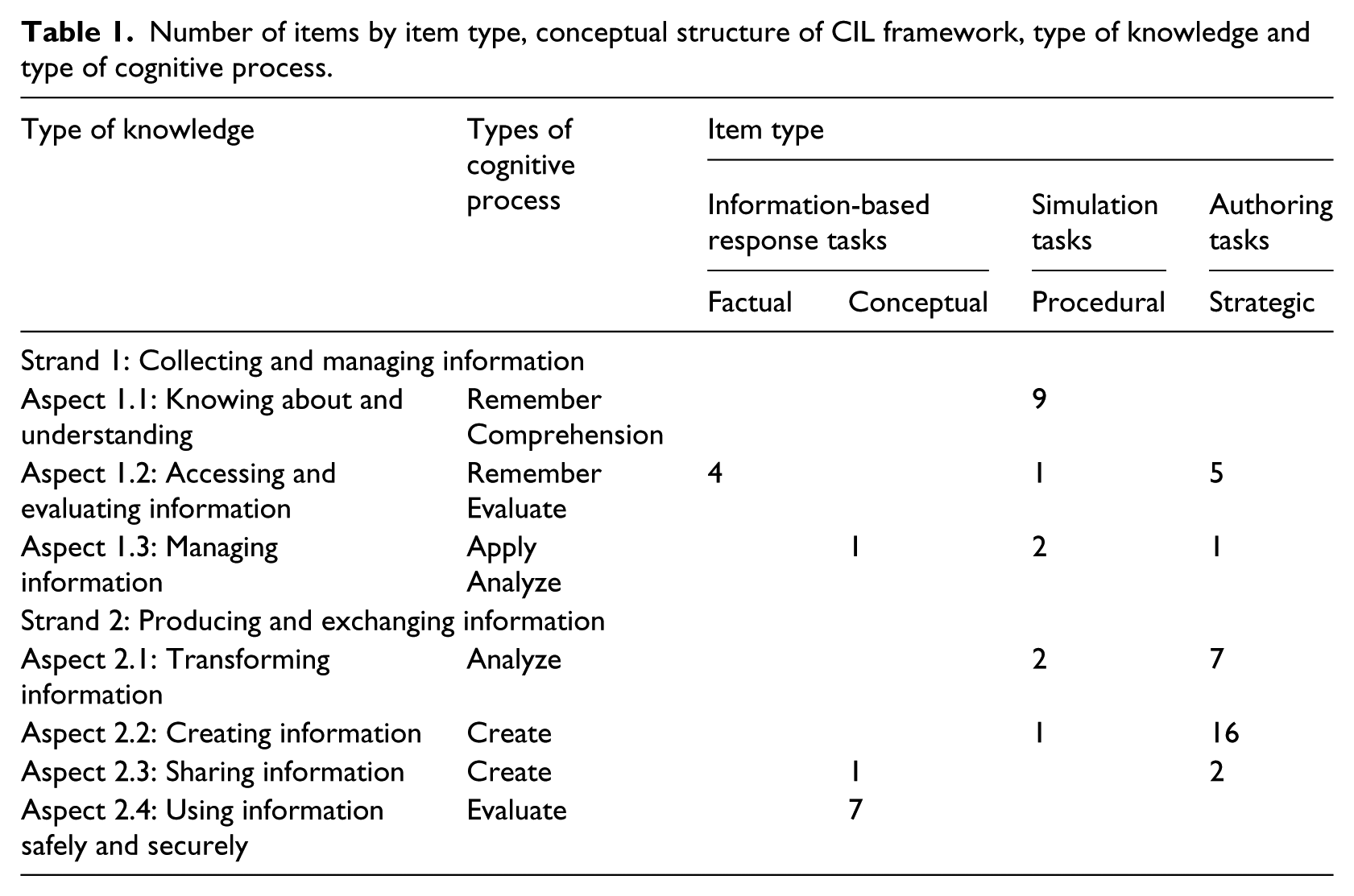

The assignment of the item types to the strands and aspects shows that each aspect is measured by items of only one or two different item types (see Table 1). Thus, item content (aspect) and item type are confounded. This might potentially lead to a dimensional structure by item type which can be partly explained by item content. It should be noted that the two strands represent two primary uses of computers and do not implicate a structure with more than one subscale of CIL (Fraillon et al., 2013). The latent correlation between student achievement on the two strands was nearly 1 (r = .96; Fraillon et al., 2014).

Number of items by item type, conceptual structure of CIL framework, type of knowledge and type of cognitive process.

As can be seen from the ICILS 2013 Assessment Framework, the choice of the item type in ICILS 2013 was mainly determined by the cognitive demands and the required types of knowledge in the particular item (Fraillon et al., 2013). This can be illustrated through the assignment of the aspects and strands of the ICILS 2013 framework to cognitive processes and types of knowledge following the revision of Bloom’s taxonomy of learning objectives (Anderson et al., 2001; see also Krathwohl, 2002; Mayer, 2002). The taxonomy distinguishes between six types of cognitive processes and four types of knowledge:

Types of cognitive processes: remember, comprehension (understanding), apply, analyze, evaluate, create (production)

Types of knowledge: factual knowledge, conceptual knowledge, procedural knowledge, metacognitive (strategic) knowledge

According to Mayer (2002), factual knowledge consists of “the basic elements students must know to be acquainted with a discipline or solve problems in it” (Anderson et al., 2001: 29). It includes knowledge of terminology and specific facts. Conceptual knowledge includes knowledge of categories, principles, and models (e.g., students may form a mental model of how a word processor works). Procedural knowledge includes knowing procedures, techniques, and methods as well as the criteria for using them (e.g., logging onto the internet). Metacognitive (strategic) knowledge includes knowing strategies for how to accomplish tasks, knowing about the demands of various tasks, and knowing one’s capabilities for accomplishing various tasks (e.g., general heuristics for how to operate computer programs).

Remember refers to retrieving knowledge from long-term memory; comprehension (understanding) refers to constructing meaning from instructional messages (e.g., interpreting, classifying); apply means executing a procedure in a given situation; analyze includes, for example, differentiating (distinguishing important from unimportant parts); evaluate refers to making judgments based on criteria and standards; and create refers to putting elements together to form a coherent whole (e.g., planning and producing).

Applying the taxonomy to the ICILS 2013 framework results in the following allocation (see Table 1). This allocation is in accordance with approaches to measuring ICT literacy in Germany (e.g., Goldhammer et al., 2014; Senkbeil and Ihme, 2014; Zylka et al., 2015):

Theoretical computer knowledge (knowledge about routines for handling computer-related problems and tasks; terminological knowledge refers to the process of gaining and applying computer-related knowledge) and evaluation of information that requires terminological, factual, and conceptual knowledge are measured with non-interactive tasks (information-based response tasks).

Properly defined demands (e.g., sorting a database, changing settings in a dialogue box) and basic skills (e.g., opening or saving a document, clicking on a hyperlink) which involve procedural knowledge (ability in performing computer-based actions) are measured using simulation tasks.

Transforming or creating information products (e.g., slide shows, posters) using different software applications which require not only procedural knowledge but also strategic knowledge (organized knowledge structures and systematic methods to solve complex problems) are measured by authoring tasks comprising more than one simulated software application.

To sum up, item types and item content are confounded in the ICILS 2013 test. When the items are assigned to three factors according to their item format, the factors can be named theoretical CIL (non-interactive tasks), procedural CIL (simulation tasks), and strategic CIL (authoring tasks).

Relevant properties of innovative item formats

From a more theoretical perspective, the different item types which are used in ICILS 2013 have different properties which may affect the cognitive requirements for solving computer-related tasks. In their taxonomy for innovative task formats, Parshall et al. (2010) distinguish three central properties of the assessment structure of items: (1) complexity comprises the number and variety of elements that need to be considered when responding to an item. Multiple choice items are examples of items with a low level of complexity. Graphics, tables, and the like with retrievable information as well as functional elements increase the complexity of items; (2) fidelity is the degree to which the actual objects, situations, tasks, and environments of interest are reproduced realistically and accurately. A low level of fidelity can be found in multiple choice items, a medium level in simulation-based tasks, and a high level in tasks situated in a simulation comprising more than one software application; and (3) interactivity describes how much an item reacts to the input of the examinees. Single click items like multiple choice items have minimal interactivity. Items with high interactivity have multiple reciprocal actions between examinee and test item.

In the case of the ICILS 2013 test, the information-based response tasks are mainly multiple choice tasks and thus have low complexity, fidelity, and interactivity. Simulation-based tasks comprise simulations of software applications which lead to higher complexity (more elements need to be considered), higher fidelity (a more accurate reproduction of the reality by using simulations instead of screenshots), and comparably high or higher interactivity (one or multiple steps) compared to information-based response tasks. Compared to the other two item types, authoring tasks have the highest complexity (more than one software application has to be used), fidelity (most realistic representation of the workflow using office software), and interactivity (many interactions in large tasks).

Research questions

On the basis of a complete (i.e., content valid) theoretical framework, the assessment of CIL in ICILS 2013 comprises knowledge-based as well as behavioral, performance-oriented aspects of CIL. These aspects were measured economically using different item types. The question remains whether these different item types are suited to measure the same construct. If not, what exactly are the differences between these measures? To address these research gaps, we formulated the following two overarching research questions.

Research question 1

What is the best factor model to describe the CIL item data? What is the empirical size of correlations? Is a unidimensional scaling of the CIL test reasonable despite multi-dimensionality?

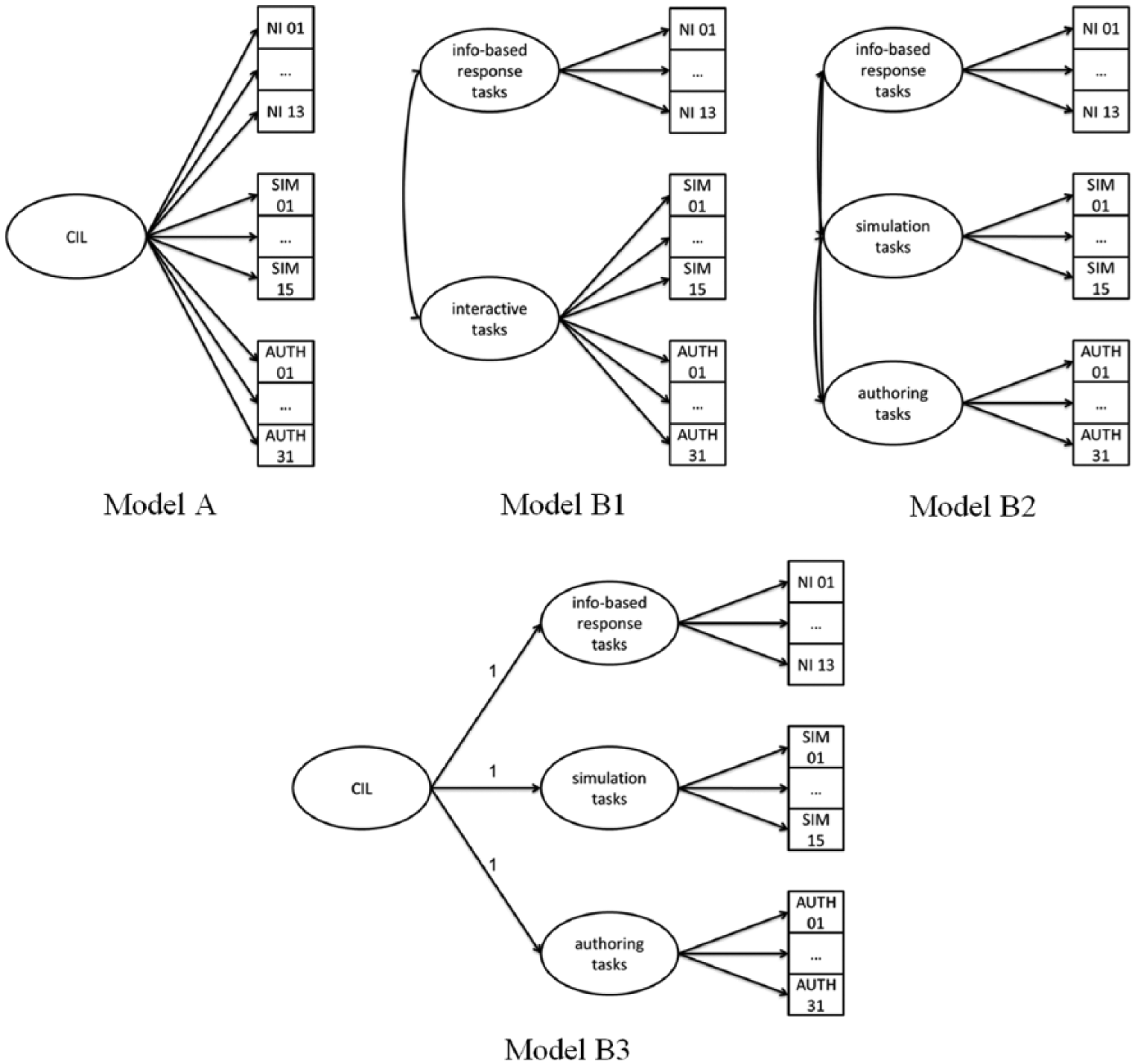

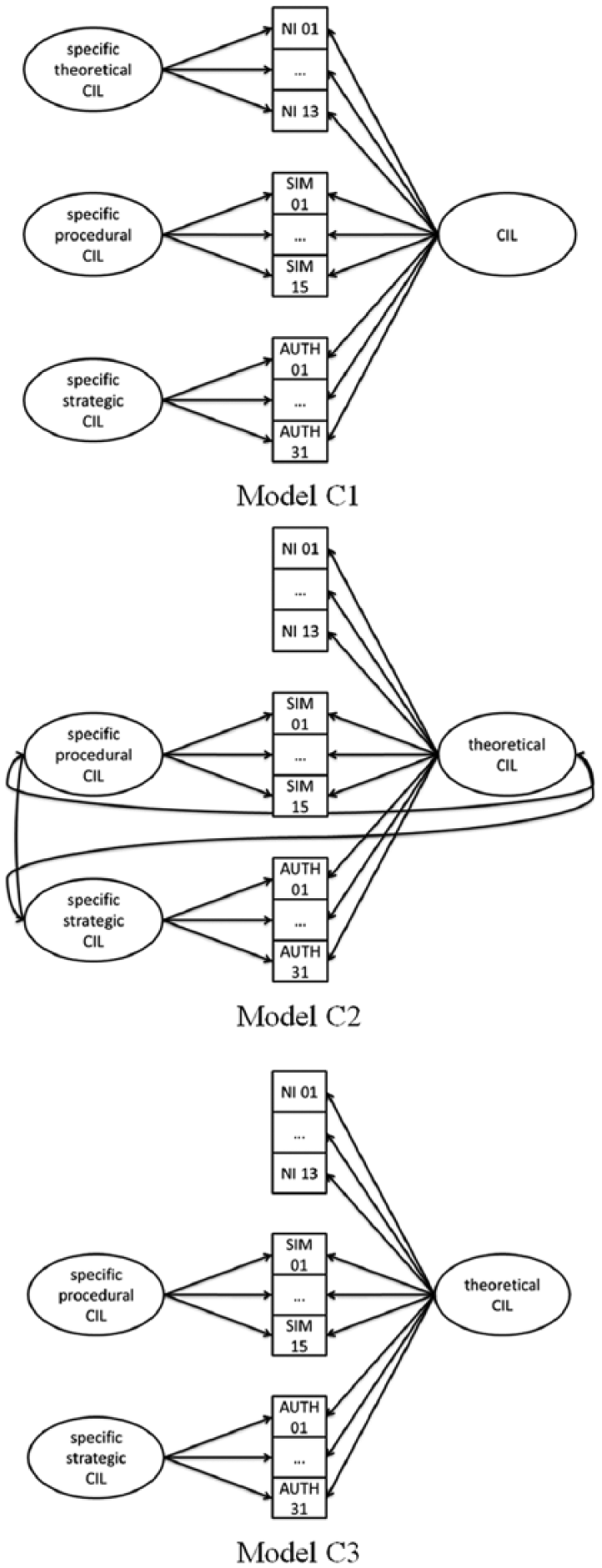

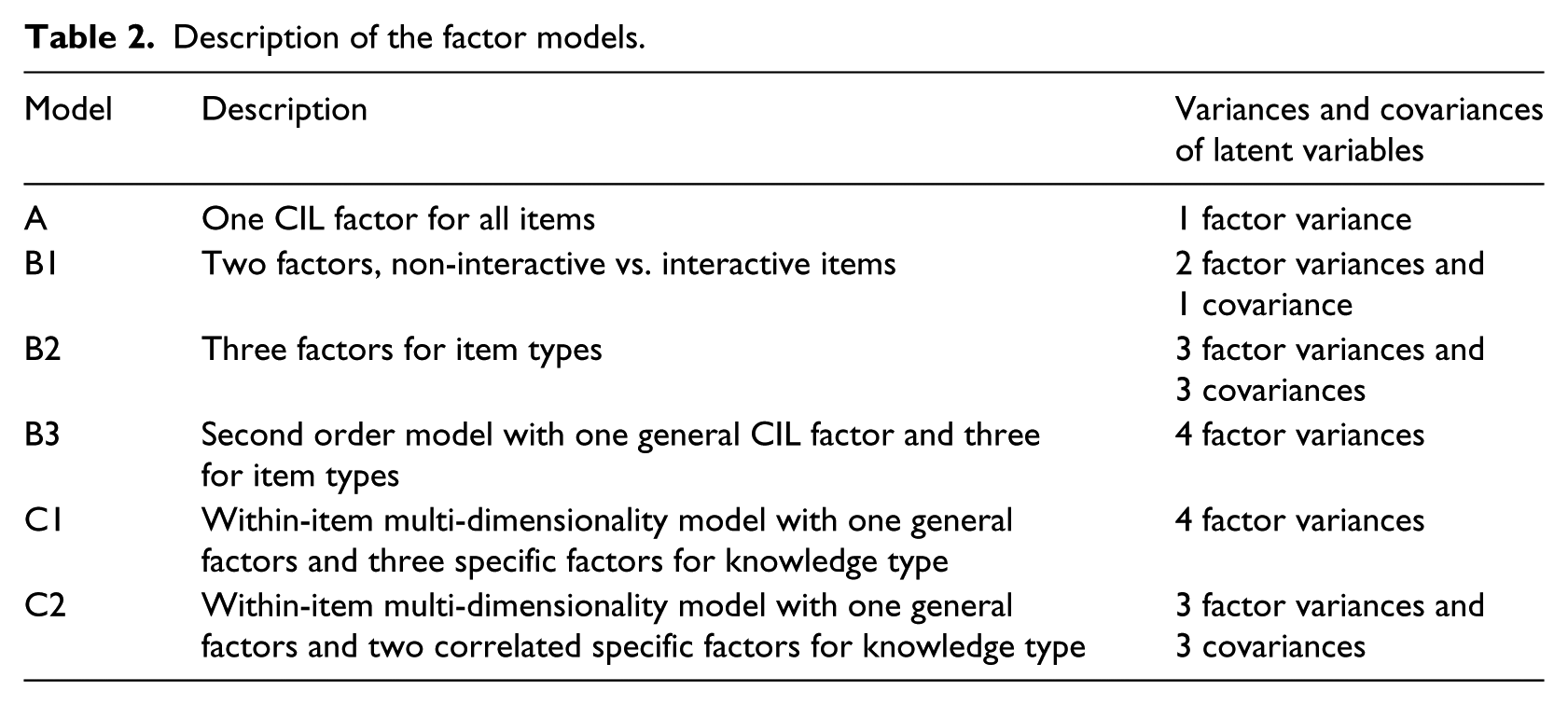

We address these questions by comparing seven different probabilistic one parameter logistic (1PL) factor models with between-item or within-item multi-dimensionality (see Figures 1 and 2 and Table 2). In model A, only one CIL factor for all items is tested. Three models (B1 to B3) with between-item multi-dimensionality and item type factors are formulated. In model B1, there are two factors, one for non-interactive tasks and one for innovative tasks (i.e., simulation-based tasks and authoring tasks). Model B2 contains three factors, one for each of the three item types. Model B3 is a second order factor model with three item type factors at the first level and a general CIL factor at the second level. Three models (C1 to C3) with within-item multi-dimensionality are formulated, in which the factors represent basic or general CIL or specific procedural CIL parts. Model C1 is a nested factor model with a general CIL factor and three specific factors comprising the specific variance parts of theoretical, procedural, and strategic CIL that are not explained by the general CIL factor. All four factors in this model are uncorrelated. Models C2 and C3 are similar to model C1, but they contain only two specific factors for procedural and strategic CIL. In this case, the general factor stands for theoretical CIL. In model C2, the general factor and the specific factors may correlate, but we expect the empirical correlations to be small. In model C3, these factors are uncorrelated. Please note that all models are 1PL models and thus models B2 and C2 are mathematically equivalent, as are models B3 and C1. Therefore, the model fit within each of these pairs will be the same.
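As a sketch of why models B2 and C2 coincide, the models can be written in a generic multidimensional 1PL form. The notation is an illustrative assumption and not the parameterization reported for the analyses: $\theta_{id}$ is the ability of student $i$ on dimension $d$, $b_j$ is the difficulty of item $j$, and $q_{jd} \in \{0, 1\}$ indicates whether item $j$ loads on dimension $d$.

$$
P(X_{ij} = 1 \mid \boldsymbol{\theta}_i) = \frac{\exp\left(\sum_{d} q_{jd}\,\theta_{id} - b_j\right)}{1 + \exp\left(\sum_{d} q_{jd}\,\theta_{id} - b_j\right)}
$$

With between-item multi-dimensionality (model B2), each item loads on exactly one of the item type factors $\theta_T$ (non-interactive tasks), $\theta_P$ (simulation tasks), or $\theta_S$ (authoring tasks). With within-item multi-dimensionality (model C2), every item loads on the general factor $\gamma$, and simulation and authoring items load additionally on a specific factor $\delta_P$ or $\delta_S$. Because all loadings are fixed to 1, the substitution

$$
\gamma = \theta_T, \qquad \delta_P = \theta_P - \theta_T, \qquad \delta_S = \theta_S - \theta_T
$$

reproduces the same response probability for every item. Since both models estimate the factor variances and covariances freely, this linear transformation changes the interpretation of the factors but not the likelihood.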

Unidimensional model and models with between-item multi-dimensionality.

Models with within-item multi-dimensionality.

Description of the factor models.

Research question 2

If there is multi-dimensionality, what exactly are the differences between these CIL measures in terms of regression on SES, frequency of computer use, self-efficacy, and gender?

Variables that should be accounted for when measuring CIL in adolescents include the frequency of computer use, SES, self-efficacy, and gender (cf. Van Deursen et al., 2011). People who spend more time using the computer for purposes similar to the demands of the test tasks acquire more knowledge about using a computer and improve their skills (Van Deursen et al., 2011). Especially in Germany, there is little regular computer use in schools with little variance across schools (Eickelmann et al., 2014), so in this study we combined use at school and use at home as one measure. Previous research has shown that many students easily acquire technical ICT skills, such as learning to navigate and use search engines, but that they have deficiencies in information and strategic skills because they lack experience in dealing with information-related problems such as goal-oriented information search at home as well as in school (e.g., Calvani et al., 2012; Kuiper et al., 2005; Li and Ranieri, 2010; Van Deursen and Van Diepen, 2013). Thus, we assume a higher correlation of frequency of ICT use with theoretical and procedural CIL than with strategic CIL.

The SES of the family has a strong influence on CIL (Fraillon et al., 2014; Wendt et al., 2014). Families with higher SES are, in general, more highly educated and have more home possessions. They possess better computer technology (DiMaggio, 2004) and are more able to apply the content provided on the internet to their functional needs (Katz and Rice, 2002). At the same time, adolescents need instructional support in handling information from the internet (Walraven et al., 2009), and parents with a higher level of education are more likely to give the necessary support (Vekiri and Chronaki, 2008). Accordingly, large differences in competence can be expected between adolescents (e.g., Van Deursen and Van Diepen, 2013) due to accumulation processes. The effect of SES applies not only to CIL but to all cognitive measures, especially to general cognitive abilities. Compared to open response tasks (or to procedural and strategic CIL, respectively), information-based response tasks (theoretical CIL) can more likely be solved through reasoning and thus measure more construct-irrelevant variance (Huff and Sireci, 2001). This disadvantage might lead to a higher correlation with SES mediated through general cognitive abilities.

Self-efficacy plays a crucial role in self-regulatory learning. It is the subjective conviction of being able to show a certain behavior in a certain situation (Bandura, 1997). These convictions influence the individual’s outcome expectation and will also predict the actual ability to perform the behavior. Compeau and Higgins (1995) showed the impact of computer-related self-efficacy on outcome expectations as well as on performance. The predictive value of self-efficacy is especially high when the self-efficacy items are congruent with the test items (Pajares, 2002). This congruence is not particularly connected to the item format (the types of knowledge), so no differential correlations with the item types are expected.

Gender-specific differences in ICT-related aspects are getting smaller, but still exist (Christoph et al., 2015). For example, boys have access to more items of hardware than girls and are more intensive users of ICT at home than girls for leisure uses. Girls are more likely to use home computers for educational purposes than boys (e.g., Helsper, 2010; Selwyn, 2008; Valentine et al., 2005). In accordance with these usage differences, previous research shows that boys possess more theoretical computer knowledge and basic computer skills than girls (e.g., Goldhammer et al., 2013; Ilomäki and Rantanen, 2007). As regards information-related skills (e.g., researching information on the web), no gender-related differences can be found (e.g., Hargittai and Shafer, 2006; Van Deursen et al., 2011). Accordingly, it was assumed that boys have more theoretical CIL, and therefore perform better in non-interactive tasks, and that no gender differences can be found for procedural and strategic CIL (simulated and authoring tasks).

The item types differ in their complexity, interactivity, and fidelity, and thus should also differ in their construct validity. We therefore computed regression analyses of the item type factor scores from the best fitting models on the frequency of use of computers, SES, computer-related self-efficacy, and gender. Depending on the model used to derive the ability scores (within- or between-item model), we expected to observe different effects of the individual difference variables on the ability dimensions (Rauch and Hartig, 2010). In the models B1, B2, and B3, differential regression effects of these variables would indicate differences in construct validity between the item types. In the models C1, C2, and C3, differential regression effects would indicate differential meanings of the CIL facets (theoretical CIL, procedural CIL, strategic CIL). Differential regression effects would additionally justify the assumption of multi-dimensionality, for they indicate the specific content-related differences between the factors.

Methods

Sample

Twenty-one educational systems participated in ICILS 2013. For research question 1, we selected the 12 European participants: Croatia, Czech Republic, Denmark, Germany, Lithuania, Norway, Poland, Slovak Republic, Slovenia, Switzerland, the Netherlands, and Turkey. This led to an overall sample size of N = 32,643 students. The sample consisted of 16,673 male (51.1%) and 15,969 female (48.9%) students (gender information was missing for one student), with a mean age of 14.4 years (SD = 0.60).

For research question 2, we selected Germany and other European countries with similar distributions of SES (home possessions and HISCED) and with comparably high proportions of migrants, because only then could similar relations between the considered variables be expected and thus analyses across countries be justified. The criteria led to the selection of Germany, Denmark, Norway, the Netherlands, and Switzerland. The overall sample size was N = 11,850. The sample consisted of 6,060 male (51.1%) and 5,789 female (48.9%) students (gender information was missing for one student), with a mean age of 14.6 years (SD = 0.62).

Instruments

CIL test

The ICILS 2013 test instrument consisted of 4 test modules with a total of 70 tasks. Each participant received 2 of the 4 test modules with 31 to 39 tasks. The test modules were administered in a balanced multi-matrix design (Fraillon et al., 2014).
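The idea of a balanced module rotation can be illustrated with a minimal sketch. The text above states only that each participant received two of four modules in a balanced design; the module labels, the use of all ordered module pairs as booklets, and the round-robin assignment are assumptions made for illustration and do not describe the actual ICILS 2013 procedure.

```python
from itertools import permutations

# Hypothetical module labels; the real module names are not given here.
MODULES = ["A", "B", "C", "D"]


def build_booklets(modules):
    """Return every ordered pair of distinct modules as one booklet."""
    return list(permutations(modules, 2))


def assign_booklets(student_ids, booklets):
    """Rotate through the booklets so each one is used about equally often."""
    return {sid: booklets[i % len(booklets)] for i, sid in enumerate(student_ids)}


booklets = build_booklets(MODULES)
assignment = assign_booklets(range(24), booklets)
```

With four modules this yields 12 booklets, and every module appears equally often in the first and in the second position, which is one simple way to balance module and position effects.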

Socio-economic status

The SES was captured by HISCED and the home literacy index. The HISCED is the highest parental education of a participant, classified according to the International Standard Classification of Education (Fraillon et al., 2015) on a scale from 0 to 4. The mean value was 2.66 (SD = 1.15). For the home literacy index, the participants stated how many books they have at home on a 5-point Likert scale from None or very few (0–10 books) (0) to Enough to fill three or more bookcases (more than 200 books) (4). The mean score was 2.29 (SD = 1.26).

Frequency of use of ICT

For the measure for frequency of use, two items for computer use at home and computer use at school were combined on one scale. Both items have a 5-point Likert scale from Never (1) to Every day (5). Use at home had a mean of 4.50 (SD = 0.78) and use at school had a mean of 3.19 (SD = 1.08).

Self-efficacy

Students’ self-confidence in solving basic computer-related tasks was measured by six items about how well participants could do specific tasks. The response categories were “I know how to do this,” “I could work out how to do this,” and “I do not think I could do this.” The reliability of this scale was .75 in our sample. We used the derived scale score in a single indicator model in which the residual variance was computed as the measure of unreliability multiplied by the variance.
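The residual variance in such a single indicator model follows directly from the reliability. The sketch below illustrates the arithmetic; the function name and the observed variance of 1.0 are hypothetical, and only the reliability of .75 is taken from the text.

```python
def single_indicator_residual_variance(reliability, observed_variance):
    """Residual variance = unreliability (1 - reliability) times observed variance."""
    if not 0.0 <= reliability <= 1.0:
        raise ValueError("reliability must lie between 0 and 1")
    if observed_variance < 0.0:
        raise ValueError("variance must be non-negative")
    return (1.0 - reliability) * observed_variance


# Reliability of .75 as reported above; the variance of 1.0 is a placeholder.
residual = single_indicator_residual_variance(0.75, 1.0)
```

Fixing the residual variance to this value means that the latent self-efficacy variable carries only the reliable part of the scale score variance.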

Statistical analysis

For further details with regard to methods or instruments, see also Jung and Carstens (2015). All factor models and structural equation models were computed with Mplus 7.31 (Muthén and Muthén, 1998–2015). There were only a few missing values for all variables (less than 4% for every variable). Missing values were handled using the Mplus default FIML.

Results

Research question 1

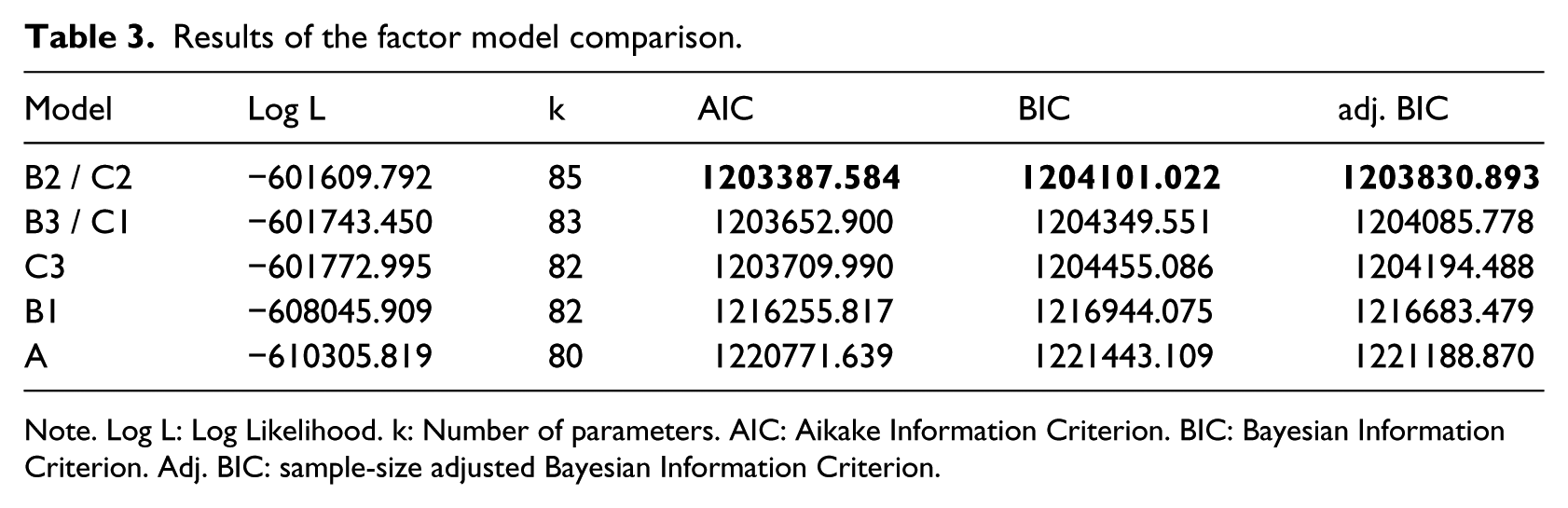

We compared seven factor models using the AIC, BIC, and sample-size adjusted BIC to select the models best fitting the data. This led to the selection of the two models with three correlated factors (model B2 or model C2, see Table 3), which are mathematically equivalent. In model B2, the correlation between authoring tasks and simulation-based tasks is r = .73 (p < .001), the correlation between authoring tasks and non-interactive tasks is r = .73 (p < .001), and the correlation between non-interactive tasks and simulation-based tasks is r = .88 (p < .001).

Results of the factor model comparison.

Note. Log L: Log Likelihood. k: Number of parameters. AIC: Akaike Information Criterion. BIC: Bayesian Information Criterion. Adj. BIC: sample-size adjusted Bayesian Information Criterion.
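The three information criteria can be computed from the log likelihood, the number of parameters, and the sample size. The following sketch uses the standard definitions; the model names and the numeric values are hypothetical placeholders and are not the values from Table 3.

```python
import math


def information_criteria(log_likelihood, n_params, n_obs):
    """Return AIC, BIC, and sample-size adjusted BIC (smaller is better)."""
    deviance = -2.0 * log_likelihood
    return {
        "AIC": deviance + 2.0 * n_params,
        "BIC": deviance + n_params * math.log(n_obs),
        # The adjusted BIC replaces n by (n + 2) / 24 in the penalty term.
        "adj_BIC": deviance + n_params * math.log((n_obs + 2.0) / 24.0),
    }


def best_model(fits, criterion):
    """Return the name of the model with the smallest value of the criterion."""
    return min(fits, key=lambda name: fits[name][criterion])


# Hypothetical (log likelihood, number of parameters) pairs for illustration.
candidates = {"one_factor": (-1000.0, 10), "three_factors": (-980.0, 15)}
N_OBS = 500
fits = {
    name: information_criteria(log_l, k, N_OBS)
    for name, (log_l, k) in candidates.items()
}
```

Because the three criteria penalize additional parameters differently, reporting all of them guards against a choice that depends on a single penalty.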

In model C2, the theoretical CIL factor correlates positively with the specific strategic factor (r = .06, p < .001) and negatively with the specific procedural factor (r = −.13, p < .001). The two specific factors are positively correlated (r = .27, p < .001).

The empirical results support multi-dimensionality regarding item types or types of knowledge. Nonetheless, the high factor correlations in model B2 suggest that the report of a unidimensional CIL scale is justified.

Research question 2

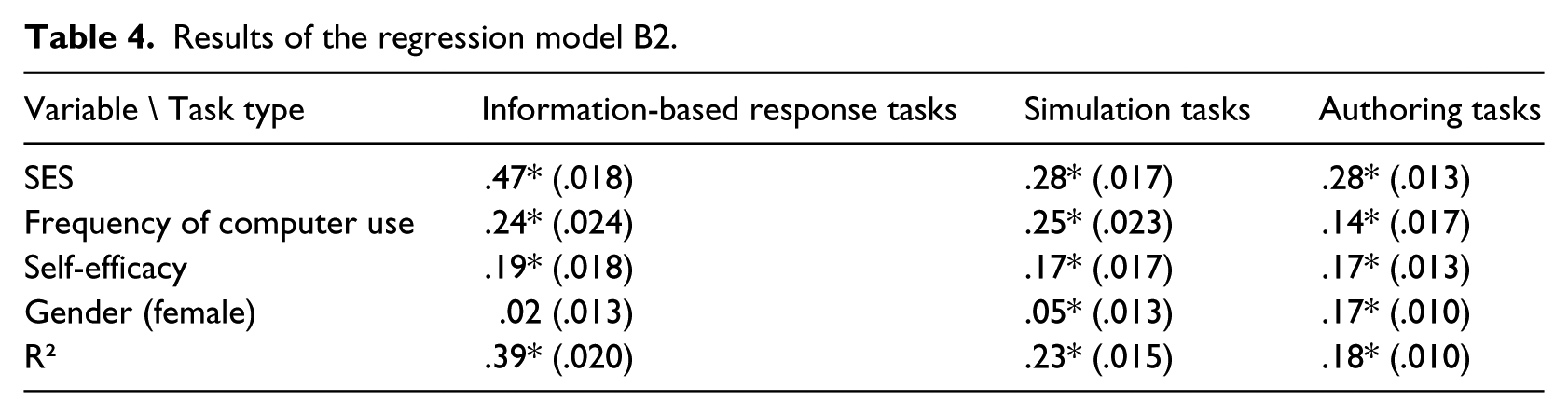

We computed regression analyses of the CIL factors in the best fitting models on SES, frequency of computer use, self-efficacy, and gender. The results of the regression analysis of the factors in model B2 (see Table 4) show that for all item types, the highest regression coefficient can be found for SES. It is higher for information-based response tasks (.47) than for the other two item types (.28). The regression coefficient of frequency of computer use is higher for information-based response tasks (.24) and simulation tasks (.25) than for authoring tasks (.14). The regression coefficient of self-efficacy is about the same for all three item types (.17 to .19). Gender has no significant regression coefficient on information-based response tasks and only a small one on simulation tasks (.05 in favor of the girls), but it has an effect on authoring tasks (.17 in favor of the girls).

Results of the regression model B2.

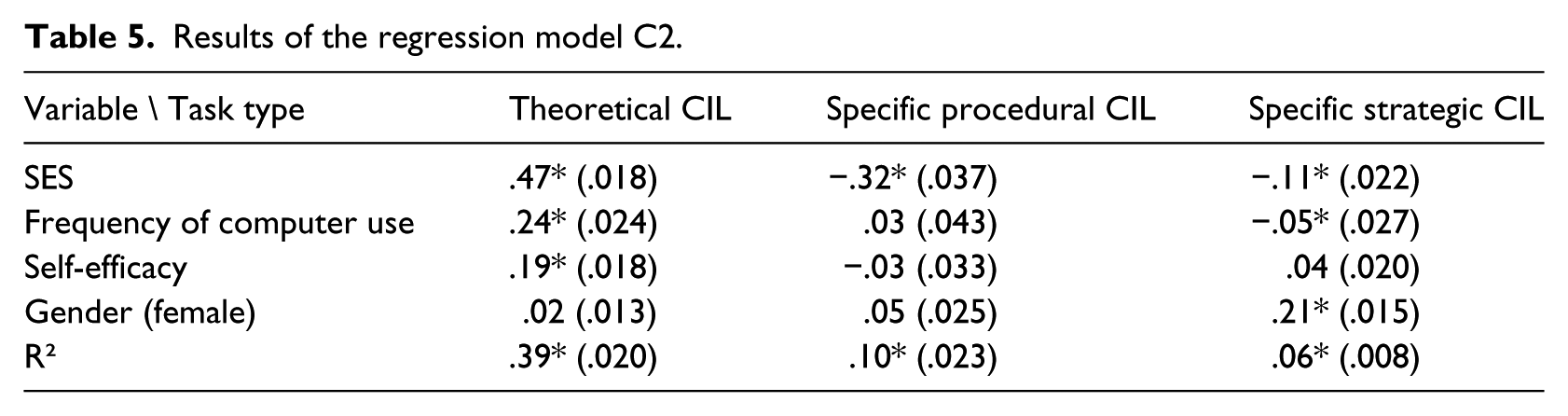

The results of the regression analysis of the factors in model C2 (see Table 5) show that for theoretical CIL, SES (.47), frequency of computer use (.24), and self-efficacy (.19) have significant regression coefficients, but gender does not. On the specific procedural CIL factor, only SES has a significant (in this case a significantly negative) regression coefficient. That means that for students with the same theoretical CIL, a higher SES leads to lower specific procedural CIL. On the specific strategic CIL, SES also has a negative effect, and female gender has a positive effect.

Results of the regression model C2.

In model B2, the results support the hypotheses regarding SES, frequency of use, and self-efficacy. Regarding gender, as expected, we found only small differences. However, contrary to expectations, girls performed better than boys in procedural and strategic CIL. This is in line with recent findings, as will be explained in the discussion. The results for model C2 provide additional important diagnostic information for the specific procedural and strategic CIL factors, which will be illustrated in the discussion.

Discussion

The aim of this article was to find the best factor model for the CIL items of ICILS 2013, which were information-based response tasks, simulation-based tasks, and authoring tasks, and to explore whether the factors in the best fitting models correlated differentially with other variables. We highlighted that items of different item types also differed with regard to content. The information-based response tasks measure factual and conceptual CIL, simulation-based tasks measure procedural CIL, and authoring tasks measure strategic CIL. Items of different types also vary in the types of cognitive processes and in the aspects of the ICILS 2013 framework they measure. Thus, using these three item types ensures a broad construct representation in the ICILS 2013 test. However, it also means that item type and item content are confounded, and so item type factor models and item content factor models will fit alike.

To find the best factor models, we compared the fit of seven factor models describing the factorial structure of the items with different item types. Based on several fit indices, the between-item dimensionality model B2 and the within-item dimensionality model C2 were selected. Both models included three correlated factors, and their fit was mathematically equivalent (Hartig and Höhler, 2008). Model B2 contains the assumption of three correlated item type factors, one for each item type. These three factors correlate substantially, which implies that items with different item types measure similar but not identical competencies. The correlations of the three factors (.73 to .83) are of the same magnitude as Senkbeil and Ihme (2014) found between a paper-pencil multiple choice ICT literacy test and a performance-based test. The latent correlations of the three dimensions indicate that there is a big overlap of common abilities. They are high enough to permit the interpretation of CIL as a unidimensional, facetted construct. Nonetheless, these non-perfect correlations indicate the unique meaning of each item type for the measurement of CIL, and the superior fit of this model over the unidimensional model indicates a substantial amount of item type variance.

The results of the regression analysis for the between-item multi-dimensionality factors of this model indicate differential regression effects on the item type factors. The information-based tasks are particularly strongly related to SES. As expected, adolescents with higher parental education and more home literacy possessions perform particularly well in the information-based tasks. According to Vekiri (2010), it can be assumed that students from more privileged SES backgrounds benefit from easy ICT access at home and from parental support when using (exploring) ICT. Thus, students from privileged families have more opportunities to use and to experience success with computers, so that they acquire more ICT knowledge and basic skills than students from low SES backgrounds (Meelissen and Drent, 2008). With regard to strategic CIL, it can be assumed that students benefit less from parental support because many adults, as well as children and young people, have problems in solving information-related tasks (e.g., Gibbs et al., 2011; Van Deursen et al., 2011; Walraven et al., 2009). Thus, the correlation between SES and strategic CIL was significantly lower. Regarding the frequency of ICT use, the results are in line with our hypothesis. In the regression on authoring tasks, the frequency of computer use plays a smaller role. Apparently, it is not the quantity of computer use that leads to a better performance in authoring tasks, but presumably the quality of computer use. Scholars assume that adolescents who spend much time on the computer with gaming or other leisure activities have less time to spend on the relevant applications (e.g., ICT use for educational purposes or goal-oriented information searching) and thus might gain less strategic CIL (e.g., Kuiper et al., 2005; Selwyn, 2009; Senkbeil and Ihme, 2014; Tu et al., 2008).

The gender differences in authoring tasks are also larger than in the other two item types. With the increasing digitalization of everyday life, gender differences in ICT literacy have declined steadily in recent years and can no longer be found in numerous studies (e.g., Calvani et al., 2012; Li and Ranieri, 2010; Van Deursen and Van Diepen, 2013). Furthermore, girls are more likely than boys to use ICT for educational purposes (e.g., Selwyn, 2008; Valentine et al., 2005) and thus perform better in authoring tasks, which represent typical school tasks (e.g., preparing a presentation for lessons, preparing a poster in a school-related context).

In contrast to the between-item multi-dimensionality model, the within-item model (model C2) explicitly decomposes the skills required for the CIL test. The within-item model allows skill scores to be estimated separately for the procedural and strategic dimensions. Model C2 contains a general factor for theoretical CIL and two factors representing the specific parts of procedural CIL and strategic CIL, respectively. In this model, the highest factor correlation is found between the two specific factors, while theoretical CIL barely correlates with specific strategic CIL and correlates negatively with specific procedural CIL. Small positive or even negative correlations can be expected in this model class because the specific factors are formulated as differences from the general factor (in this case theoretical CIL).
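
The sign pattern described above can be illustrated numerically. The following is a minimal sketch, not the estimation procedure used in this article. It assumes that all three between-item factors have unit variance and that each specific factor is a simple difference between an item type factor and the theoretical factor. The correlations are hypothetical values chosen within the reported range of .73 to .83, and their assignment to factor pairs is an assumption. The names R, T, transform_cov, and cov_to_corr are illustrative.

```python
import numpy as np

# Hypothetical correlations of the three between-item factors (unit variances assumed).
# Order: theoretical (information-based), procedural (simulation), strategic (authoring).
# The values lie within the reported range (.73 to .83) but are NOT the study's estimates.
R = np.array([
    [1.00, 0.73, 0.78],
    [0.73, 1.00, 0.83],
    [0.78, 0.83, 1.00],
])

# Assumed reparameterization: the general factor equals the theoretical factor,
# each specific factor is "item type factor minus theoretical factor".
T = np.array([
    [1.0, 0.0, 0.0],   # general = theoretical
    [-1.0, 1.0, 0.0],  # specific procedural = procedural - theoretical
    [-1.0, 0.0, 1.0],  # specific strategic = strategic - theoretical
])


def transform_cov(cov, t):
    """Covariance matrix of the linearly transformed factors t @ theta."""
    return t @ cov @ t.T


def cov_to_corr(cov):
    """Rescale a covariance matrix to a correlation matrix."""
    sd = np.sqrt(np.diag(cov))
    return cov / np.outer(sd, sd)


within_cov = transform_cov(R, T)
within_corr = cov_to_corr(within_cov)

print(np.round(within_corr, 2))
```

Under these assumptions, both specific factors correlate negatively with the general factor and positively with each other. With unequal factor variances, the correlations between the general factor and the specific factors can move toward zero, so the sketch shows the mechanism rather than reproducing the estimates of model C2.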

In the regression on the within-item multi-dimensional model, the regression coefficients on theoretical CIL look similar to those for information-based response tasks in model B2. The specific factors in within-item multi-dimensionality models expose strengths and deficits of certain subgroups more strongly than do factors in between-item multi-dimensionality models. SES had a negative effect on the specific factors for procedural and strategic CIL, which implies that the effect of SES is higher on theoretical CIL than on procedural CIL and strategic CIL. A potential explanation could be that students from low SES backgrounds lack theoretical CIL, while they acquire procedural CIL through intensive ICT use in their leisure time. Regarding frequency of use, the results support our hypothesis that theoretical CIL can be acquired through frequent and mainly self-controlled ICT use, while this does not apply to procedural and strategic CIL (cf. Van Deursen and Van Diepen, 2013). This is consistent with the assumption of many researchers that strategic and procedural CIL should be taught more intensively in the classroom (e.g., Calvani et al., 2012; Van Deursen and Van Diepen, 2013). Regarding gender, female students show higher strategic CIL, all other things being equal. Apparently, boys lack a problem-oriented or goal-oriented handling of ICT (e.g., Selwyn, 2008).

Overall, our findings show that the three item types used in ICILS 2013 not only measure CIL with three different methods but also capture three content-related facets of CIL, which are highly correlated but correlate differentially with other variables. These results are in line with findings of other studies that investigated the multi-dimensionality of ICT literacy assessments (e.g., Van Deursen et al., 2011; Zylka et al., 2015). Hence, using these item types serves to cover the full breadth of the construct, from factual and conceptual CIL through procedural CIL to strategic CIL. In our opinion, this strategy is strongly recommended for forthcoming studies such as ICILS 2018.

Limitations

At present, the interpretation of the regression effects remains speculative, especially in the within-item multi-dimensionality model. Accordingly, intervention studies are necessary to substantiate these interpretations empirically.

Some of the reported results can also be explained in more technical terms. For example, the advantage of students with higher SES in the information-based response tasks may be due to the item format (multiple choice items), because these students presumably have a higher general cognitive level (Huff and Sireci, 2001). In general, because item type and content (type of knowledge) are confounded, it remains inconclusive whether the factorial structure reflects one or the other.

Outlook

One explanatory variable missing from these data is cognitive ability. In future studies and analyses, we plan to include measures of general cognitive ability to learn more about the conceptual distinctiveness of CIL and its specific properties.

Footnotes

Declaration of Conflicting Interest

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.