Abstract

This article examines the use of geolocative technologies, methods and data in the production of digital heritage objects. Digital mediations of heritage have become pervasive, yet despite their increased circulation, they largely remain critically under-theorised. The production of digital heritage objects is defined by the use of GPS instruments and the iterative processing of geospatial data. Despite this, however, they are rarely used in ways that could be described as cartographic. As such, this article explores the consequences of having so much of what is increasingly circulated and consumed as heritage now being produced through the same tools and methods associated with maps, GIS or navigation. This article uses empirical data produced through an ethnography of The Cherish Project. Cherish is a multi-national research initiative centred around the objective of delivering a comprehensive baseline of 3D data for heritage sites currently threatened by climate change on both coasts of the Irish Sea. Building upon crucial literatures from the critical GIS and digital sectors of geographical scholarship, this article makes key contributions to how the theoretical orientations of the discipline might evolve towards new understandings of data and digital cultural objects. In both reorienting geographical literatures around theorists like Bruno Latour, Manuel DeLanda and Yuk Hui – and contextualising such readings in the empirical work this paper volunteers – something unique and useful materialises. Namely, geospatial technologies, methods and geospatial data are revealed as agents of territorialisation and coding, the likes of which reconcile the situated, performative and thoroughly contingent nature of cartographic science with the derivation of discrete digital heritage objects.

Introduction

The use of digital heritage objects has become pervasive. In museums, classrooms, galleries and lecture halls they now serve a diverse range of applications. Yet despite their increased circulation, digital heritage objects remain critically under-theorised. They have been absorbed across the heritage sciences with great enthusiasm, but without much pointed discussion of how – by virtue of their digitality – they change or affect what is discussed and researched as heritage. Not only are digital heritage objects commonly circulated as being ‘static, timeless, uncomplicated, or easily knowable’, 1 but as scientific images with distinct application in a variety of research contexts, they are further assumed to ‘show exactly the things themselves as they appear’. 2

This article examines the use of geolocative technologies, methods and data in the production of digital heritage objects. Specifically, it explores how geolocative and cartographic methods and data are integral to that production; from the earliest stages of site survey and data acquisition to their eventual formalisation as cohesive three-dimensional forms and models. Because digital heritage objects have found robust application in contexts ranging from geomorphological analysis to public-facing historical showcases, they function as dynamic tools for both research and interpretive learning. 3 Particularly in how they are being purposed towards the conservation of threatened, eroding or damaged heritage assets, digital heritage objects are unique in that they can function as both objects of memorialisation and advocacy, and as technical objects of scientific investigation. 4 Although much of their production is defined by the use of GPS instruments and the iterative processing of geospatial coordinate-based information, they are rarely used in cartographic ways. What exactly are the consequences, then, of having so much of what is increasingly circulated, researched, observed and consumed as heritage produced through the same tools normally associated with maps, GIS or navigation?

Unpacking the epistemological and ontological consequences of the increased geolocative digitality of the heritage sciences is a complex matter. For one, any discussion about what digital heritage objects are is also necessarily bound up in attendant considerations of the ontological multiplicity of the data from which they are comprised. 5 Following Rob Kitchin, this article contends that heritage data ‘do not exist independently of the ideas, instruments, practices, context, and knowledges used to generate, process, and analyse them’. 6 Therefore, their ontological status is best described in terms of the situation and contingency inherent to how they are produced, interfaced and used. Much of what will also be detailed in the sections to follow is how the ontological status of digital heritage data is also densely bound up in the geospatial technologies and methods used to produce them. To trace the production of digital heritage objects – this paper will show – is to both describe how heritage data becomes iteratively spatialised and how archaeological knowledges produced through multi-sited and contingent processes are eventually rendered as standardised units with little tangible relationship to their own provenance or means of production.

Therefore, this article calls upon geographical discourses in both critical GIS studies and cartographic theory. As mapping technologies have become more advanced, more democratised and pervasively embedded in smartphone technology and social media, geographers have developed conceptual tools to explore both how such technologies challenge traditional understandings of cartographic science and the increased influence geo-spatial technology ostensibly has on modern life. 7 The issue is that there is comparatively little literature published to date on the relationship between geo-spatial technology and the digital turn of both archaeology and the heritage sciences. Furthermore, while such literature can illuminate how the production of digital heritage objects works, it is less effective at detailing how such processes bear on the ontological status of the digital objects produced. This article contends that while much of critical GIS applies to the challenges targeted here, further theoretical resources and orientations are necessary to address them fully. This paper concludes that theoretical tools such as assemblage theory (specifically its orientation around notions of ‘territorialisation’), circulating reference and more become necessary for geographers to better consider the digital turns of archaeology and the heritage sciences. Given that the heritage sciences are both increasingly centred around the production of digital objects that are also cultural objects – and that such practices are so reliant on geospatial methods and technologies – digital heritage objects prove a fertile space to explore how geospatial technology is changing the production of cultural knowledge.

To develop this argument, this article uses empirical data produced through an ethnography of The Cherish Project. Cherish is a multi-national, EU-funded, research initiative taken up between four archaeological and geological research entities located in Ireland and Wales. 8 Centred around the objective of delivering a comprehensive baseline of 3D data for heritage sites currently threatened by climate change on both coasts of the Irish Sea, Cherish is unique in that it is almost entirely oriented around the development of digital visualisation methodologies like UAV 9 survey, laser scanning, geo-physical survey 10 and LiDAR. Across all Cherish’s methods is the pervasive use of GPS instruments, geo-spatial coordinate data, and the circulation of spatial reference networks. Examining the production of digital heritage objects via UAV survey, this article specifically addresses the geo-spatial and geo-locative basis of such practices. The empirical sections detail the mobility of heritage data across sites and interfaces, and the extent to which the production of digital heritage objects is inherently multi-sited. In so doing, it details how the relationship between multi-sitedness, process and the resulting digital objects is predicated on data being formalised by geospatial technologies and methods. We see datasets produced in the field undergo significant translations as they travel between hardware, software, locations and site-specific operations. Crucially, it details how these translations are facilitated via the spatialising/territorialising capacities of the technology and methods required to produce them.

This article complements discourse in cultural geography by attending to literatures of assemblage theory, circulating reference and further discourse in the ontologies of digital objects. It not only develops the means necessary to trace how geospatial technologies crucially function to territorialise the circulation and multi-sited production of digital heritage data and objects but asks critical questions about the elision of science and culture embedded within these practices. It describes how geo-spatial technologies enable the iterative and mutable ontologies of data objects to function as stable cultural objects, but in so doing also critically details the consequences of pursuing such stability; namely, the resulting disconnect between a stable digital cultural object and the distributed, multi-sited, multi-vocal network required to produce it. As such, this article makes new contributions to existing geographical literatures; first in the theoretical orientations it introduces to existing work on the relationship between geospatial technologies and digital culture, and second by establishing just how significantly cartographic sciences have influenced both the digital turns of archaeology and the heritage sectors as well as the ontologies of the digital objects central to such disciplines.

Conceptualising 3D models as geospatial data

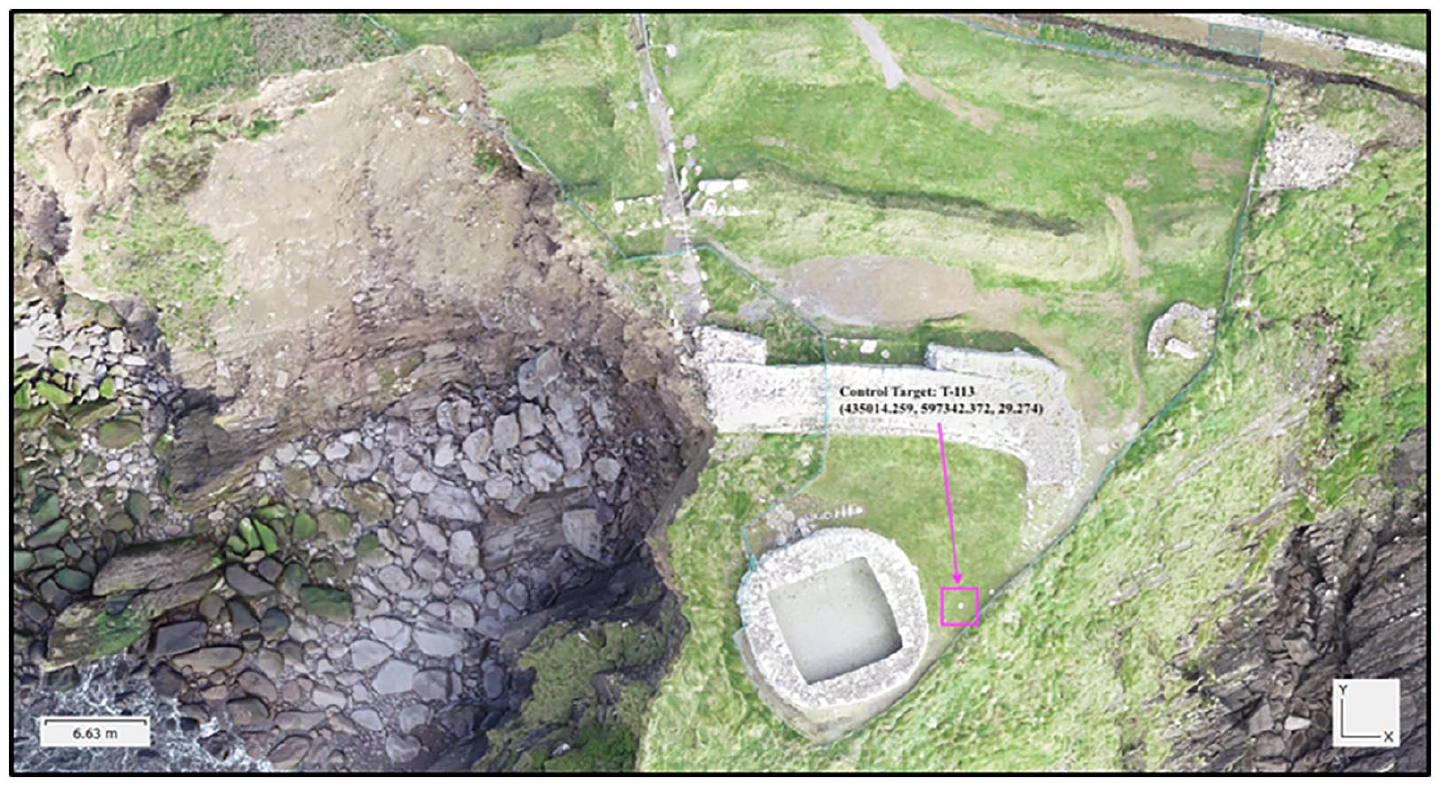

As heritage data coheres into digital objects, it travels between field sites and office spaces, moving across a number of instruments, interfaces and software. Take, for example, the image of the 3D model in Figure 1. This model is one of several 3D models the Cherish Project has made of Dunbeg Fort, an iron-age promontory fort located in County Kerry on the south-west coast of Ireland. To produce models like this, Cherish practitioners require a large number of instruments and software including drones, tablets, digital cameras, GPS antennas, control targets, powerful desktop computers, a storage server, Adobe Bridge, Microsoft Excel, Esri ArcMap and Agisoft Photoscan Pro. 11 The data comprising such models typically begin as hundreds of individual digital images and a series of GPS coordinates registered across the site being surveyed. The empirics of this article detail not only how these initial datasets travel between sites, but how they are iteratively processed into point clouds, wireframe meshes and eventually into cohesive models.

A screen grab of a finished model of Dunbeg Fort. Data produced by Robert Shaw, model rendered by Aaron Deevy, Discovery Programme, Ireland, photo by Sterling Mackinnon.

Crucially, the empirical passages of this article also detail how cartographic products, GPS instruments, coordinate-based data, and geospatial softwares stabilise and regulate the circulation, distribution, and varied visualisation of heritage data in such a way that it can be reliably used to conduct scientific research on archaeological sites. Boring down into the consequences (both epistemic and ontological) of centring the production of heritage research on such tools and methods also requires the theoretical orientations which this section gathers. The section begins by exploring the applicability of contemporary geographical discourse in critical GIS studies. It then takes up Bruno Latour’s discussion of circulating reference to address how the multi-sited, distributed and mobile stages of the workflows described here relate to one another and resolve in visual and accurate research tools and outputs.

This discussion then leads to an overview of assemblage theory (particularly that of Manuel DeLanda) to situate how the distribution and arrangement of geo-spatial technology and data across such workflows co-function with the other assembled components (including the expertise of modelling practitioners) to result in the emergence of digital heritage objects and the knowledges they carry. Finally, this section performs an overview of contemporary discourse concerning the ontologies of digital objects. Specifically, it considers how a rigorous understanding of digital objects (digital heritage objects in particular) is deeply rooted in attendant considerations of the assemblages, platforms and practices through which they are used.

Taken collectively, the theoretical orientations gathered here enable us to better grasp how a process largely defined by the mobility of its component parts can reliably resolve in models and knowledges applicable in an array of research contexts. Applying such theory also enables us to see how the ontologies of digital heritage objects can alternately be defined both in terms of their application in research 12 as well as in terms of the contingency of the processes that produce them. When read with the empirical sections that follow, we will further see how these definitions can occasionally be seen as being at odds with one another.

‘Mapping’ and the production of geo-spatial data

The processual cohesion, visualisation and mobility of digital heritage objects is predicated on the complex use of both geo-locative data and geospatial technologies. As a result, such practices translate well to discussions of critical GIS, which the geographical discipline has taken up since its ‘cultural turn’ in the late 1980s. From J.B. Harley’s seminal ‘Deconstructing the Map’ (1989) to Clancy Wilmott’s recent publications on ‘mobile mapping’, geographers have long explored the social, political and phenomenological consequences of the increasingly pervasive influence of GIS and geo-referenced data across a wide spectrum of contexts and applications. 13

As the influence of geo-spatial technology has evolved and become enmeshed in the digital mediations of social life, geographical scholarship has also evolved. Contemporary discourse around such topics increasingly explores how the engagement with – and production of – geo-locative data has become performative or situated. To get at how digital heritage objects should or could be understood, then, we need to explore both how and why they are made. While they are not maps per se, part of what this paper establishes is the contention that any digital heritage object derived from geographical survey (as the great majority are) can also be understood as a cartographic product: a product which, following John Pickles, should not only be defined by its capacity to operate in ‘particular social situations’ or ‘fulfil a particular function’, but as ‘an inscription that does (or sometimes does not do) work in the world’. 14 Finally, many of the sites of production inherent to these objects, Pickles contends, are virtual spaces of ‘data manipulation and representation whose nominal tie to the earth (through GPS and other measuring devices) is infinitely manipulatable and malleable’. 15

For Clancy Wilmott, much of this malleability pertains to the fact that geo-spatial data and geo-media have collapsed into many other forms of media and proliferated across an array of applications. 16 This is more than evident in the overview of photogrammetric modelling processes this article provides; nearly every instrument, software or interface assembled in such projects is made relational and cohesive by their shared reliance on GPS data and geo-referencing. Wilmott’s notion of ‘mobile mapping’ aptly describes how modern production of geo-referenced data is densely bound to the performative and embodied production of space. 17 As in the practices of urban navigation Wilmott specifically uses to explore this concept, in the Cherish Project nearly ‘all forms of data gathered with mobile devices are potentially, habitually, and often subtly, geo-tagged with location and time data, implicitly spatio-temporalizing media according to the cartographic logic of the map’. 18 What Wilmott further addresses, however, is how critical discourses on ‘new’ practices of mapping require attending to how such practices are performative, and that the ‘doing’ of mapping is further predicated on the dense entanglement of ‘mobile GPS technologies, satellites and communications infrastructures, the situated spatial and temporal environment, and users’ embodied actions. . .’. 19 Following Wilmott, then, while digital heritage objects are not, in and of themselves, maps, they are produced through distinct methods of what Wilmott terms ‘mobile mapping’. As a result, to theorise the digital heritage objects with which this paper is concerned means understanding how such objects are relational, because they are composed ‘of maps and spaces and people in situated moments’, ‘based not in objects, but in interrelations – both fixed and fluid – across space and time’. 20 Gillian Rose, likewise, has published extensively about the dense relationality of digital cultural objects (namely, the images produced by architects and developers in Doha, Qatar). Rose is specifically attentive to questions about the multi-sitedness and distribution of complex visualisation workflows. Making sense of visualisations produced in this way, Rose contends, requires not only carefully attending to the nodal situation of interfaces and the points of unique relational friction that gather around them, but also situating such factors within wider co-constitutional networks. 21

‘Mobile mapping’ is further unique in how it discusses geometry. Here the geometric foundations of the cartographic sciences are presented not as agents of representational fixity, but as the means through which mapping efforts become mobile, scalable and valent. It is a contention which helps to elaborate how we understand the distribution of locative data across the multi-sited processes described in the sections to follow and how digital heritage objects might also be understood in terms of their unique mobility and mutability. Digital heritage objects like the 3D models Cherish hosts on their Sketchfab page – or the DEMs and ortho-mosaics they use to conduct morphological analysis of site erosion – are cohesive structures whose geometries are formed through the coordination of geo-locative measurement. Furthermore, it is the rigour and cohesiveness of their geometric structuring that enables them to be visualised across interfaces and screens in several contexts and applications. Digital heritage objects, like digital maps, ‘operate according to these [geometric] logics – through the flat cartographical interface of the screen combined with the operational codes and commands that enable its mutability’. 22

Geo-spatial data, distributed workflows and the formalisation of digital objects

Rose and Wilmott provide a helpful framework for understanding the multi-sitedness and distribution of the Cherish workflow for 3D models detailed in the empirical section. Namely, they show how the networks inherent to this workflow are not only developed via situated relational networks centred around site-specific engagements with key interfaces and platforms, but how the complexity of this workflow is also stabilised via the pervasive distribution of geo-spatial referencing. What such literatures lack, however, is the means to describe how such conditions bear on the ontological status of the digital objects ultimately produced by these workflows. As such, further tools are required to understand how the mobility and distribution of such data also pertain to the project’s output of reliable and stable research models. It is here that the aforementioned use of circulating reference, assemblage theory and current scholarship on digital objects becomes necessary, particularly in the project of parsing out how the ontological status of digital heritage objects can simultaneously be defined in terms of the structural fixity of their basis in geospatial data and in terms of the site-specific contingency inherent to their production and interfacing.

It is difficult not to think about Bruno Latour’s germinal essay Circulating Reference (1999) whilst tracing the production of a digital heritage object from survey to application. 23 Central to Latour’s argument is the contention that there is no representational distance separating a phenomenon observed by scientists and the knowledges produced through such observational practices or methods. For Latour, scientists translate ‘the world into words’ via intricate networks of reference. Crucially, however, Latour further maintains that systems of references are not ‘simply the act of pointing or a way of keeping, on the outside, some material guarantee for the truth of a statement’, but a way of ‘keeping something constant through a series of transformations’. 24 In UAV survey, for instance, practitioners enact a series of field methods to produce visual datasets (digital photographs) and coordinate-based datasets (normally exported as .csv files containing eastings, northings and elevation). As these datasets travel from the field to the office, they are iteratively processed using visualisation software, eventually comprising cohesive three-dimensional models. Latour contends that ‘scientists master the world, but only if their world comes to them in the form of two-dimensional, superposable, combinable inscriptions’. 25 As such, much as the soil scientists in Latour’s essay manage to reassemble the soil of the Amazon in their laboratories when they return from the field with their samples, the work I observed following the Cherish Project often functioned to translate anthropogenic earthworks and crumbling monuments into new digital objects, rendering such sites more accessible to scientists as a result.

However, circulating reference is a concept largely shaped by notions of transactional causality. As Latour follows his respondents, the translation of observations to data and figures – and, ultimately, to published work – is traced as a logical sequence of a to b to c, etc. To use circulating reference to consider the production of digital heritage objects, however, requires supplemental analytical tools and theories. For one, the production of digital heritage objects is complicated by the fact that heritage data are defined by their mutability and propensity for recombination. The workflows used to produce such objects, furthermore, are both non-linear and modular. One of the Cherish Project’s key deliverables is a project-wide protocol for best practice in UAV survey, yet every individual survey and modelling project/site is also defined by the unique contingencies of who is undertaking the work and how they interpret the prescribed approaches. As modelling projects develop, the transference and processing of modelling data is shaped and dictated by such contingencies. Even with all this in mind, Cherish reliably produces work to uniform standards of accuracy and resolution. Here, assemblage theory – specifically the work of Manuel DeLanda – is particularly useful.

Built around key concepts of emergence, territorialisation and coding, the appeal of DeLanda’s theory of assemblage is its capacity to address heterogenous socio-technical wholes in a wide number of contexts. DeLanda places great emphasis on the exterior relationships and frictions that exist between the individual components that make up an assemblage, further contending that these relationships are the means by which a given assemblage comes to both possess and produce the properties, capacities and tendencies of emergence. 26 A stable assemblage is one from which the same properties, tendencies or things emerge. 27 Put differently, in targeting the frictions generated from the collective exteriority of a given assemblage’s component parts – and in typifying these generative interchanges as having empirically definable characteristics – one is capable of grasping or understanding an assemblage by virtue of what is produced through its respective co-functioning. If, then, we define Cherish’s workflow for UAV survey as an assemblage – and the output of 3D models as emergent from an assemblage’s capacity to co-function (or not) – DeLanda proves particularly helpful in parsing how the contingency, distribution and mobility of such workflows (detailed in the empirical sections below) come to be stabilised and regulated.

In DeLanda’s work, territorialisation concerns ‘the degree to which an assemblage’s component parts are drawn from a homogenous state, or the degree to which an assemblage homogenises its components’. 28 ‘Habit’ and ‘technology’ are presented here as two key factors capable of maintaining or disrupting an assemblage’s capacity to alternately hang together, fall apart or change. The maintenance of routines or protocols, such as ‘the repetition of traditional rituals or systematic performance of regulated activities’, are here also posited as territorialising factors capable of both stabilising ‘the identity of organisations’ and giving them ‘a way to reproduce themselves’. 29 Coding, by comparison, refers ‘to the role played by the special expressive components in an assemblage in the fixing of the identity of the whole’. 30 The most obvious examples of such components are language and genetics. In the context of this article’s consideration of digital heritage initiatives, however, DeLandian notions of coding are used to address the synchronisation and mobility of data types that enable the processual generation of digital heritage objects. The most obvious example of this is the pervasive registration of all survey data (visual or otherwise) in set geographical coordinate systems and map projections.

The next sections of this paper demonstrate how geo-locative data and geo-spatial technologies code and territorialise the assemblages this paper associates with the production of digital heritage objects. Furthermore, they illustrate how such tools are critical to the cohesion of heritage data as digital objects. As coordinate-based datasets are processed against the visual datasets produced through UAV survey, coordinate information is inscribed as metadata into the resulting visual outputs (such as images, point-clouds, wireframe meshes and 3D models). Following Kitchin’s contention that metadata describe the ‘lineage and provenance’ of how data is derived – as well as media theorist Yuk Hui’s claim that digital objects are ‘formalised by metadata and metadata schemes’ – the cohesion of digital objects is therefore predicated on the assembling of information inscribed in the various datasets used to compose them. 31 All digital-visual objects are uniquely unstable and ontologically multiple, defined by their mutability and multi-mediality. 32 Every time digital visual information is accessed on a browser it is reconfigured to the specifications and particularities of the devices used to interface it. 33 As such, what we understand as a digital object is determined not only by the formalisation of the metadata schemes native to the data that compose it, but also by the assemblages of hardware and software used to visualise it. Following Johan Redström and Heather Wiltse, digital objects not only ‘combine a range of technologies, computation, communication, sensors, interfaces, etc. to become what they are’, digital objects ‘can be recombined, reused, and thanks to digital technology even be made active in many places at the same time’. 34

Within Cherish, the hardware and software used to process, visualise and model heritage data are modular and mutable. Not only does software undergo frequent updates, but the instruments and hardware used towards such ends are often upgraded as new equipment becomes available. Furthermore, as heritage data moves from the parent databases where it is stored to such platforms and interfaces (and back again), the data itself is dramatically altered. Considered in DeLanda’s terms, many of the components composing these assemblages are likely to change. The key, however, is that despite their mutability, that which emerges from their respective co-functioning (i.e. digital heritage objects) not only remains consistent, but is uniformly formatted to specific standards for resolution and accuracy. The remainder of this article addresses how the pervasive use of geo-locative information and geo-spatial technology/software functions to territorialise and code these assemblages, regulating the digital objects which they produce (as well as the workflows that produce them). Or, after Latour, how it functions as the means of ‘keeping something constant through a series of transformations’. 35 In so doing it not only details how these processes work, but further focuses on the central frictions between the contingency or modularity of these multi-sited workflows and the processes of territorialisation and coding that enable them to produce ‘stable’ digital objects fit for science.

‘Acquiring’ data in the field, assembling the field through data

Between 2018 and 2019 I made monthly trips to the offices and field sites of the Discovery Programme, Ireland and the Royal Commission on the Ancient and Historical Monuments of Wales to study their efforts to survey sites identified by Cherish as threatened or eroding. I travelled with teams of surveyors and archaeologists in Wales and Ireland to observe on-site digitisation/survey protocol and the subsequent processing of data into what would become formalised 3D models. Across Cherish, practitioners use the term ‘acquisition’ to describe the capture and derivation of the initial datasets. It is a choice of words which indicates something crucial about the way they conceptualise fieldwork and surveys; namely, that the data they require is always already present at field sites, simply waiting to be ‘acquired’. In my observations, however, I found that the enactment of data acquisition is predicated on a field site being assembled, and that the resulting data is produced through these acts of assembling. To produce data through UAV survey, Cherish practitioners assemble a number of spatial concepts to define their chosen ‘sites of acquisition’. These acts of spatialisation result in the production of what this article refers to as ‘spatial registers’. Each of these acts of spatialisation can also be further understood as acts of territorialisation. These spatial registers not only determine how practitioners engage with landscapes they survey but serve as the primary means through which the data produced becomes standardised and mobile, thus enabling it to be distributed across other sites of production and reassembled as models later. In reading the empirical details to follow against the theoretical tools outlined above, the paper illustrates not only how digital heritage objects are produced, but outlines the specific bearing such processes and their enactment have on the ontic status of the digital heritage objects Cherish renders.

The first of these registers are the ‘unproblematic’ or ‘self-defined’ spatialities Cherish practitioners associate with the landscapes their surveys target. Often the boundaries of sites of survey are determined by the ‘natural’ shape and orientation of the headlands and promontories. The second of these registers are the ‘abstract’ or ‘virtual’ spatialities practitioners bring to the field. Here practitioners register field sites of survey in reference to global positioning satellite networks to ascribe geographic coordinate systems and regional map projections to the data produced. Practitioners then assemble local spatial networks by registering fixed coordinate readings for key markers distributed across a site of acquisition, thus enabling them to geo-reference key features identifiable in photographs throughout subsequent stages of processing. Finally, there are the spatial registers produced by the acts of measurement inherent to the numerous methods of field photography necessary for UAV survey. Tracing the assembling of these spatial registers at field sites reveals both how the datasets iteratively produced in the field come to relate to one another and how the terms of their spatial relationality define their capacity to be reassembled and visualised later. Furthermore, in using the conceptual tools provided by both circulating reference and assemblage theory to examine these processes (and in reading them together specifically) we see how the resulting digital objects are ontologically shaped by the iterative stages of their production and the distinct interrelationship of these discrete stages.

When a team of Cherish practitioners arrives on site, the process begins by calibrating (via satellites) the GPS antennas used throughout the survey. This is achieved by either locating Virtual Reference Stations or by establishing Real Time Kinematic networks in the field. Virtual Reference Stations function as ‘fixed’ locations configured by collating local satellite clusters adjacent to the survey sites. Upon returning to the office, the coordinates produced in the field are then post-processed against the fixed location data provided by the VRS, thus refining geo-locative measurements to thresholds of accuracy within centimetres. Real Time Kinematic Networks, by contrast, are used when targeted sites are out of range of VRS services. Using a mobile base-station to collate readings from local satellites at the time of the survey, the constructed RTK functions as a fixed coordinate from which real-time locative adjustments can be made to the coordinate readings produced by the roving antennas practitioners carry (Figure 2). In either case, the preliminary steps of data acquisition are likewise defined – via different means – through a process of first establishing an ‘abstract’, or ‘virtual’, spatial register at the site of survey. The significance of this lies in how the prescribed steps that follow are defined by documenting different performances of movement and position in reference to this spatial register.
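
The logic of correcting a roving antenna against a fixed base can be sketched in a few lines of Python. This is a deliberately simplified illustration and not the procedure the Cherish instruments implement: real RTK and VRS services work on carrier-phase observations rather than on finished coordinates. The function name, the coordinate values and the assumption that base and rover share an identical error are all hypothetical.

```python
from typing import Tuple

Coordinate = Tuple[float, float, float]  # (easting, northing, elevation) in metres


def apply_base_correction(base_known: Coordinate,
                          base_observed: Coordinate,
                          rover_observed: Coordinate) -> Coordinate:
    """Shift a rover reading by the error observed at the base station.

    Assumption (hypothetical simplification): whatever error displaces the
    base station's reading displaces the nearby rover's reading equally.
    """
    # Difference between where the base is known to be and where it reads.
    correction = tuple(k - o for k, o in zip(base_known, base_observed))
    # Apply the same offset to the rover's reading.
    return tuple(r + c for r, c in zip(rover_observed, correction))


# Illustrative values only; these are not Cherish survey data.
base_known = (1000.00, 2000.00, 50.00)
base_observed = (1001.20, 1999.10, 50.75)
rover_observed = (1051.20, 2029.10, 48.75)

corrected = apply_base_correction(base_known, base_observed, rover_observed)
print(tuple(round(v, 2) for v in corrected))
```

In this toy case the base reads 1.20 m too far east, 0.90 m too far south and 0.75 m too high, so the rover's reading is shifted by the same amounts in the opposite direction. The point of the sketch is only that every subsequent position is expressed relative to the register established first.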

Figure 2. The RTK unit used by the RCAHMW pictured here at Castell Bach, an iron-age promontory fort in Ceredigion, Wales. Photo by Sterling Mackinnon.

The next stage of UAV survey concerns the distribution and registration of what are known as ‘control targets’. Control targets are designed to be visible first to the drone, and secondly within the images that the drone produces (Figure 3). The primary function of the control target is to fix a coordinate reading to an object that will be visually identifiable to the practitioner at later stages of processing. Practitioners do this by first staking the targets to the ground and using a staff-mounted GPS antenna to register readings for the eastings, northings and elevations of isolated points distributed across a site of survey (Figure 4). Each target and its corresponding coordinate set is then given a code and stored with the remaining codes/targets on an SD card. And while the drones used to photograph sites also automatically inscribe coordinate data into the images they produce, these coordinates are not accurate enough to ensure that the resulting datasets and models can be comparatively analysed with data produced from other surveys. As the primary means through which resulting models become situated in the geographical coordinate systems of computational space, control targets serve two primary field functions. Most of the targets compose what will determine the boundaries of the resulting models, while a select few of the remaining targets are deployed within these boundaries to check and confirm the accuracy of the coordinates later populated throughout the model. Taken collectively, the distribution and arrangement of control targets across a site of survey comprise the third of the aforementioned spatial registers used in the acquisition of photogrammetric data. Namely, the ‘local’ and networked spatial registers which, in turn, ensure the subsequent registers of ‘measurement and description’ inherent to site photography are accurate and commensurate with the other datasets from past or future surveys.
The production of the local spatial register is critical for stitching together the overall multi-sitedness of this work (in the sense of that which happens onsite and that which happens later, elsewhere, at a computer). The effect of this spatial register on the workflow as a whole could be illuminated via the conceptual tool of circulating reference alone. However, what is far more significant is the territorialising impact (via DeLanda) this register has on the organisation and reproducibility of such workflows when viewed in the aggregate.
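
The checking role played by the select few interior targets can be illustrated with a short Python sketch. It assumes, hypothetically, that accuracy is summarised as a root-mean-square error between the coordinates surveyed in the field and the coordinates the finished model assigns to the same targets. The source does not state which statistic Cherish uses, and the target names and values below are invented for illustration.

```python
import math
from typing import Dict, Tuple

Coordinate = Tuple[float, float, float]  # (easting, northing, elevation) in metres


def check_point_rmse(surveyed: Dict[str, Coordinate],
                     modelled: Dict[str, Coordinate]) -> float:
    """Root-mean-square 3D distance between surveyed and modelled check points."""
    squared_distances = [
        sum((s - m) ** 2 for s, m in zip(surveyed[name], modelled[name]))
        for name in surveyed
    ]
    return math.sqrt(sum(squared_distances) / len(squared_distances))


# Hypothetical check targets, held back from fitting the model.
surveyed_checks = {"T5": (100.0, 200.0, 10.0), "T9": (150.0, 260.0, 12.0)}
# Hypothetical coordinates the finished model reports for the same targets.
modelled_checks = {"T5": (100.03, 200.0, 10.04), "T9": (150.0, 259.95, 12.0)}

rmse = check_point_rmse(surveyed_checks, modelled_checks)
print(f"check-point RMSE: {rmse:.3f} m")
```

Because the check targets play no part in fitting the model, a small error here suggests that the local register has carried over from field to office, which is what allows one survey to be compared with another.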

Figure 3. Control target T109, one of the 10 control targets Robert registered at Dunbeg Fort in Co. Kerry, Ireland. Photo by Sterling Mackinnon.

Figure 4. Coordinate and target T-108, Dunbeg Fort. Photo by Sterling Mackinnon.

From here practitioners use their drones to produce orthogonal and oblique overviews of the field. The orthogonal datasets function to not only exhaustively photograph the ‘planar’ surfaces of the site, but to ensure that all control targets placed across the field are visually documented (Figure 5). The oblique datasets, by contrast, function to ensure that the sloping contours of targeted sites (such as cliff faces) are also documented (Figure 6). At a survey’s conclusion practitioners leave the field with the coordinate data documenting the location of the control targets and both visual datasets. The capacity for these datasets to be visualised together and eventually cohere as three-dimensional digital objects with prescribed uses depends on the distribution and translation of the spatial registers produced in the field and registered through the use of geo-locative technologies and data.

Figure 5. An orthogonal image from Dunbeg Fort. Adjacent to the stone feature is control target T-113 with its respective northings and eastings. Photo by Robert Shaw, Discovery Programme.

Figure 6. An oblique image from Dunbeg Fort. Photo by Robert Shaw, Discovery Programme.

Reassembling the field in the office

Where the above section details how geo-spatial data is distributed in the field, this section addresses how this data comes to function off-site. When a UAV survey project moves from the field to the office, the data produced on-site is transferred from the drones and GPS antennas to the databases where they will remain stored. During my time observing The Discovery Programme and the RCAHMW, both institutions primarily used Agisoft’s ‘Photoscan’ software to produce photogrammetric models from their UAV surveys. 36 As soon as Cherish practitioners begin enacting their protocol for photogrammetry, the data they have ‘acquired’ in the field is subjected to a series of translations. Some of these processes are almost entirely black-boxed to the practitioners; they simply submit data inputs into the software and the program does the rest. Other operations are highly dependent on the decision-making and technical skill of the practitioners. From the moment a photogrammetry project moves from the field to the office, it becomes one of processual refinement. The oblique and orthogonal images are processed against one another to eventually produce point clouds, meshes and cohesive polygonal surfaces. The coordinate data for the control targets registered on-site is iteratively distributed across the models as they develop from clouds to meshes, and surfaces. Traced carefully, these stages of digital object visualisation reveal themselves as a process of intricate ‘circulating reference’. Yet, after DeLanda, it is the coding and territorialising influences of the geo-locative instruments, methods and data used throughout which enable practitioners to abstract a physical heritage site into a digital heritage object with ranging applications.

Before practitioners import any of the field data, they begin by calibrating the parent coordinate system of Photoscan’s digital workspace. The software defaults to WGS 84 (World Geodetic System) as a basic means to geo-reference the inputted data, but can be further configured to the map projections that better fit with the data acquired. For the Discovery Programme, and all data gathered in Ireland, this means, for instance, configuring the workspace to the Irish Transverse Mercator projection. Any adjustment to the parent coordinate system and map projections indicates how the ‘abstract’ or geo-referenced spaces of the interface must correspond to the virtual/abstract spatial registers produced in the field (via Virtual Reference Stations or Real Time Kinematic networks).
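
What such a change of projection involves can be sketched in Python. The example below is an illustrative implementation of the standard transverse Mercator series using the published defining parameters of Irish Transverse Mercator (EPSG:2157). It is not the code Photoscan runs, and it makes a simplifying assumption that WGS 84 and the ETRS89/GRS80 datum underlying ITM coincide, which ignores a difference that is small but not zero. The function and constant names are hypothetical; production work would normally rely on a maintained projection library.

```python
import math

# Defining parameters of Irish Transverse Mercator (EPSG:2157), GRS80 ellipsoid.
A_AXIS = 6378137.0               # semi-major axis, metres
FLATTENING = 1 / 298.257222101
LAT0 = math.radians(53.5)        # latitude of true origin
LON0 = math.radians(-8.0)        # central meridian
K0 = 0.99982                     # scale factor on the central meridian
FALSE_E, FALSE_N = 600000.0, 750000.0

E2 = FLATTENING * (2 - FLATTENING)   # first eccentricity squared
EP2 = E2 / (1 - E2)                  # second eccentricity squared


def meridian_arc(lat: float) -> float:
    """Distance along the meridian from the equator to `lat` (radians)."""
    return A_AXIS * (
        (1 - E2 / 4 - 3 * E2**2 / 64 - 5 * E2**3 / 256) * lat
        - (3 * E2 / 8 + 3 * E2**2 / 32 + 45 * E2**3 / 1024) * math.sin(2 * lat)
        + (15 * E2**2 / 256 + 45 * E2**3 / 1024) * math.sin(4 * lat)
        - (35 * E2**3 / 3072) * math.sin(6 * lat)
    )


def wgs84_to_itm(lat_deg: float, lon_deg: float):
    """Project geodetic degrees to ITM (easting, northing) in metres.

    Assumption: the input datum is treated as identical to ETRS89/GRS80.
    """
    lat, lon = math.radians(lat_deg), math.radians(lon_deg)
    n = A_AXIS / math.sqrt(1 - E2 * math.sin(lat) ** 2)  # prime vertical radius
    t = math.tan(lat) ** 2
    c = EP2 * math.cos(lat) ** 2
    a = (lon - LON0) * math.cos(lat)
    x = K0 * n * (a + (1 - t + c) * a**3 / 6
                  + (5 - 18 * t + t**2 + 72 * c - 58 * EP2) * a**5 / 120)
    y = K0 * (meridian_arc(lat) - meridian_arc(LAT0) + n * math.tan(lat) * (
        a**2 / 2 + (5 - t + 9 * c + 4 * c**2) * a**4 / 24
        + (61 - 58 * t + t**2 + 600 * c - 330 * EP2) * a**6 / 720))
    return FALSE_E + x, FALSE_N + y


# The projection's true origin should land on its false origin.
print(wgs84_to_itm(53.5, -8.0))
```

The sketch makes one feature of the workflow concrete: the same point on the ground receives entirely different numbers depending on the register into which it is cast, so workspace and field data must share a projection before they can be fitted together.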

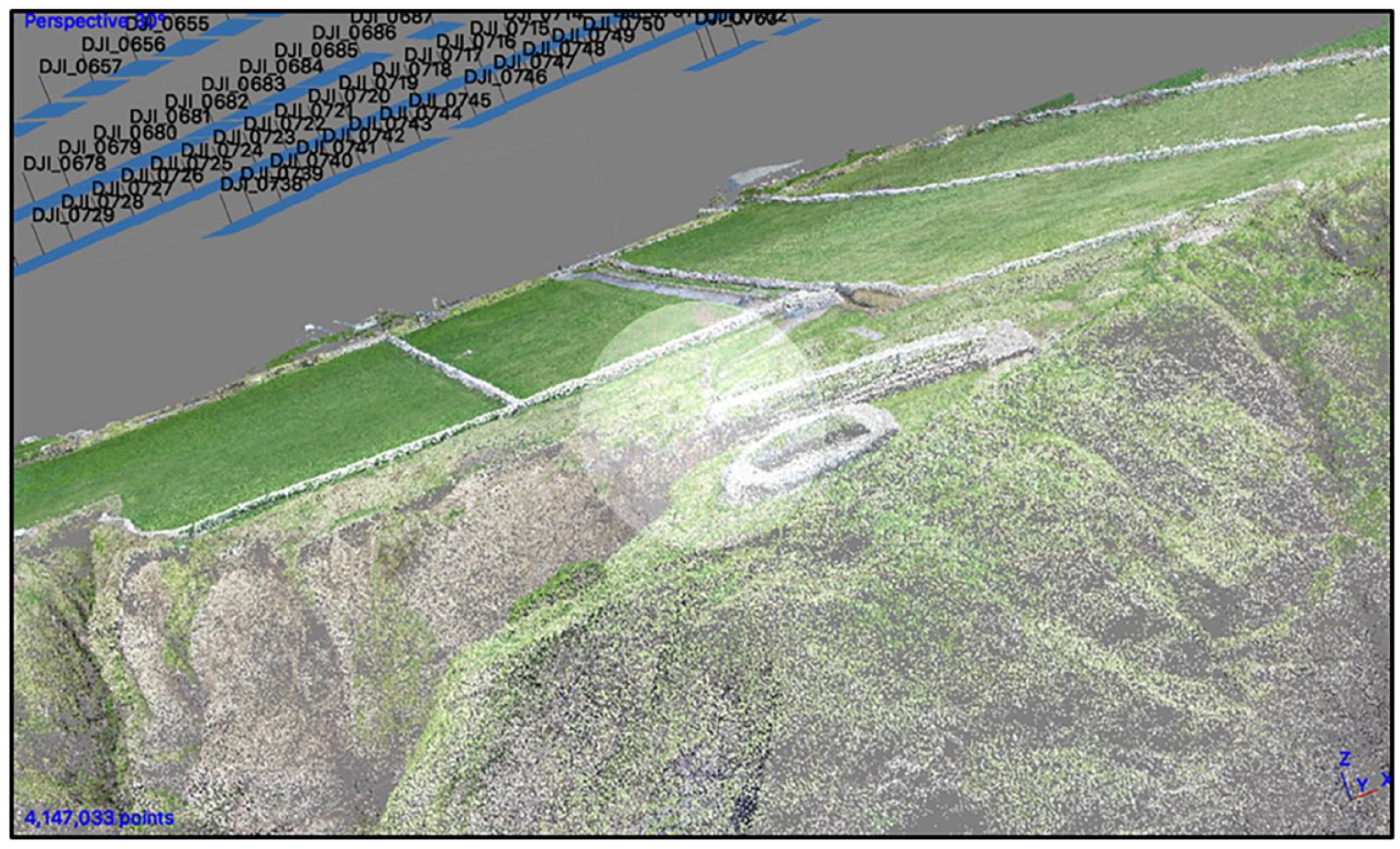

The next stage is ‘photo alignment’. Here both the visual datasets are submitted to the software for a process where the GPS coordinates inscribed in the images by the drone are used to orient each of the individual images in the now geo-referenced workspace of the software. What is at play here is a unique fitting-together of an abstract spatial register (the calibrated coordinate system for the workspace) and the descriptive spatial register inscribed in the image as a result of the drone’s performance. Furthermore, during the ‘photo-alignment’ stage, each of the images is processed against one another to derive first what are known as ‘key-points’ 37 (significant/identifiable features normally selected based on texture or contrast). The software then processes the key-points to derive ‘tie-points’, 38 which are produced via the pixel matching of image-to-image comparison, with shared features located across two or more photos being extracted as geo-referenced points. Taken in the aggregate, these points come to comprise a ‘sparse cloud’. Sparse clouds are massive when compared to the datasets from which they are derived. The input data for a UAV survey project of Dunbeg Fort, for example, began with 256 images and the coordinate data for eight control points. Once processed, however, the resulting point cloud was comprised of 4,147,093 tie-points (Figure 7). While the coordinates attached to each of the tie points in a given sparse cloud are capable of cohering as a shape that looks like the site surveyed, they will not meet the standards of accuracy set by Cherish until the control target data is distributed across the cloud.

Figure 7. A screen grab of a sparse cloud after alignment. This cloud is composed of 4,147,093 points. Model by Daniel Hunt, photo by Sterling Mackinnon.

To do so, practitioners reproduce the local reference networks they assembled in the field with the control targets. Cherish practitioners begin by gathering a series of reference materials produced earlier in the field. Both visual and non-visual, these materials are principally composed of the coordinate data acquired by their roving GPS antennae, as well as photographs taken of these physical locations with key identifiers visible in the background. Here each target is coded with a name (T1–Tn), as well as values for easting, northing and elevation. The work of deploying control within the sparse cloud requires finding the targets within the photographs that have already been uploaded. At this stage Photoscan can visualise both the sparse point cloud and the respective ‘positions’ of the photos from which the cloud was derived. Matching the correct coordinates with the correct images of the targets, however, is often complicated by the fact that the code names emblazoned on the targets are commonly not identifiable within the images returned from the drone. This requires consulting the supplementary images the practitioners have produced in the field of the targets as they were being registered. Collectively, then, the process requires pinging between three distinct visual sets of data: the sparse cloud, the images from which the sparse cloud was derived and the field photographs.

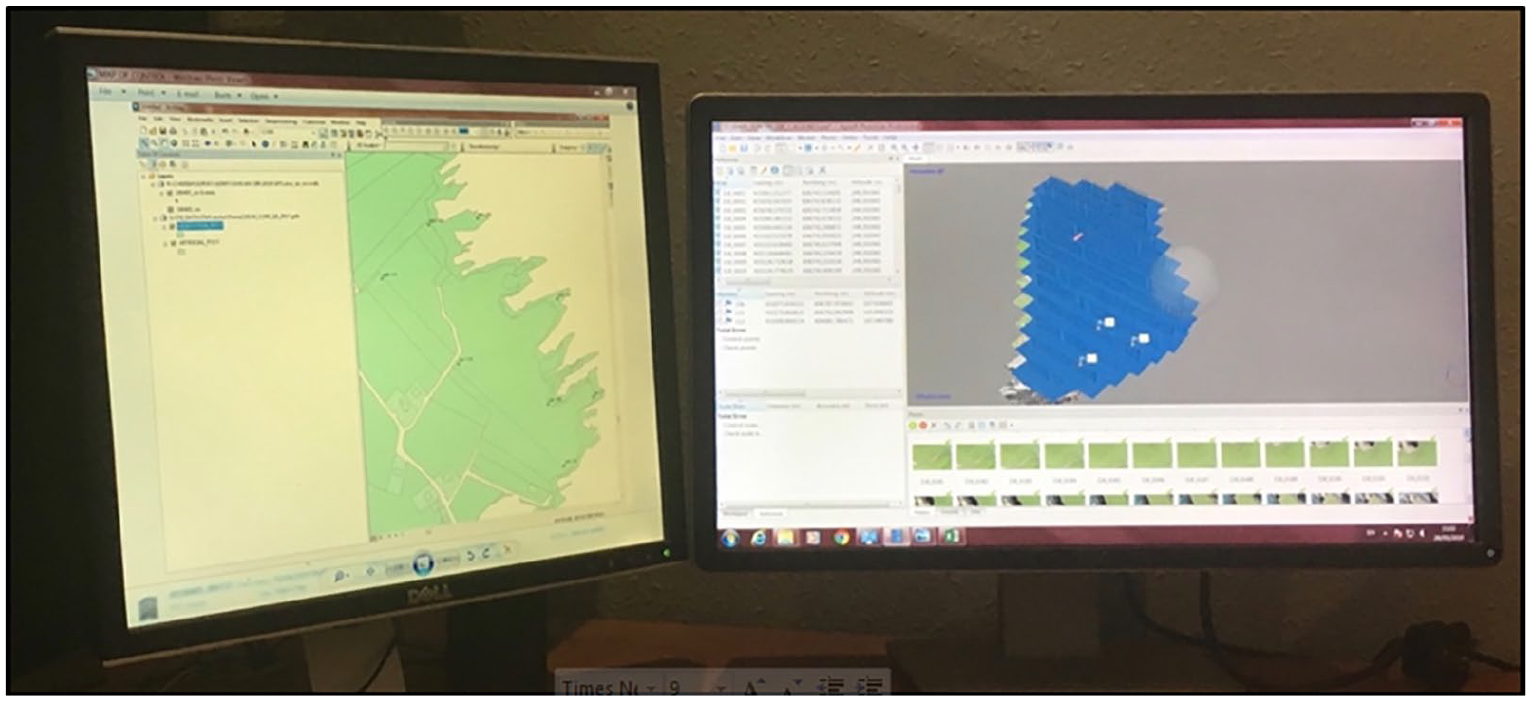

In some cases Cherish practitioners will expedite the process of locating control targets within the drone images by sending the coordinate data to ArcMap, to produce a simple map indicating where in the landscape the original targets had been deployed. Crucial, however, is the degree to which Cherish practitioners frame the use of such methods as a means to reproduce and re-track the original acquisition of control targets in order to re-apply them in the digital context. Navigating this collection of visual tools, Cherish practitioners re-trace the steps of their initial acquisition, locating the targets in the appropriate photos and attaching the corresponding coordinates to the appropriate pixels in the digital images (Figure 8). From here, the software distributes the placed markers across all images with pixel matches, ultimately adjusting the geo-spatial alignment for the entire cloud to their desired standards of accuracy.
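
How a handful of placed markers can re-align an entire cloud can be sketched with a generic technique. The Python below fits a two-dimensional least-squares similarity transform (scale, rotation and translation) from marker positions in the model's arbitrary frame to their surveyed coordinates, then applies it to any other point. This is an assumption made for illustration only: the source does not describe the adjustment Photoscan performs internally, which operates in three dimensions and is considerably more involved. All names and values are hypothetical.

```python
from typing import List, Tuple

Point = Tuple[float, float]


def fit_similarity(model_pts: List[Point], survey_pts: List[Point]):
    """Least-squares 2D similarity transform mapping model_pts onto survey_pts.

    Returns (a, b, tx, ty) such that
        easting  = a * x - b * y + tx
        northing = b * x + a * y + ty
    """
    count = len(model_pts)
    mx = sum(p[0] for p in model_pts) / count
    my = sum(p[1] for p in model_pts) / count
    sx = sum(p[0] for p in survey_pts) / count
    sy = sum(p[1] for p in survey_pts) / count
    num_a = num_b = denom = 0.0
    for (x, y), (e, n) in zip(model_pts, survey_pts):
        # Work with coordinates centred on each set's centroid.
        dx, dy, de, dn = x - mx, y - my, e - sx, n - sy
        num_a += dx * de + dy * dn
        num_b += dx * dn - dy * de
        denom += dx * dx + dy * dy
    a, b = num_a / denom, num_b / denom
    return a, b, sx - (a * mx - b * my), sy - (b * mx + a * my)


def apply_similarity(params, point: Point) -> Point:
    """Carry any model-frame point into the surveyed coordinate frame."""
    a, b, tx, ty = params
    x, y = point
    return a * x - b * y + tx, b * x + a * y + ty


# Hypothetical markers: positions in the model frame and as surveyed.
markers_model = [(0.0, 0.0), (1.0, 0.0), (0.0, 1.0)]
markers_survey = [(500.0, 800.0), (500.0, 802.0), (498.0, 800.0)]

params = fit_similarity(markers_model, markers_survey)
# A tie-point that was never surveyed inherits coordinates from the markers.
print(apply_similarity(params, (1.0, 1.0)))
```

The design point worth noting is that the fit is estimated from a few markers but applied to every point, which is one way of picturing how the local register assembled in the field comes to govern millions of tie-points that no antenna ever touched.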

Figure 8. Cherish practitioners use maps like the one on the left as reference tools while locating the corresponding control targets within the images and the sparse cloud generated on the right-hand monitor. Photo by Sterling Mackinnon.

In order for sparse clouds to truly become ‘formalised’ as tools for geo-morphological analysis or public outreach, however, they must go through several more steps. First, sparse clouds are both ‘cleaned’ and ‘optimised’. Here the sparse clouds are submitted to a series of operations designed to identify the distribution of error and remove faulty points ahead of further processing. Both procedures are remarkably black-boxed to the user. These cleaned and optimised sparse clouds are then resubmitted to the software to be visualised as ‘dense clouds’. Dense clouds are rendered in much the same way as the initial sparse clouds; key points and tie points are derived from the same primary visual outputs, but this time in tandem with the cleaned and optimised sparse cloud as well. To the untrained observer, dense clouds appear remarkably similar to their sparse counterparts. But inspect the number of tie-points and their relative complexity becomes apparent. On average the total number of geo-rectified tie-points composing a dense cloud increases by a factor of 50. 39 While not explicitly final outputs, Cherish uses dense clouds as the primary templates from which a great number of models and outputs can be derived. Their value, then, is defined by their propensity to be re-structured and re-visualised towards different ends.

What is particularly noteworthy here is how the gradual cohesion of a photogrammetric digital heritage object is predicated on the processual distribution and proliferation of geo-locative data across all visual data inputs and outputs. The two processes are mutually generative. Following John Law, the derivation of digital heritage objects ‘also implies the production of a particular kind of space’. 40 By its complexity, the inter-relationality of the datasets from which it is derived, and the thorough distribution of accurate geo-locative data within it, a dense point cloud is both a digital object and geographic coordinate system in its own right. To follow the process from the ‘acquisition’ of survey data, to the refinement and rendering of that data as objects, is – in Yuk Hui’s terms – to observe the ‘datafication of objects’ transition into the ‘objectification of data’. 41 Moreover, in tracing the formalising agency of geospatial data and software across these processes, and in reflecting them back onto the theory assembled in this article, something new materialises. Namely, that geospatial technologies and data not only territorialise the digital objects produced via these methods, but the relationships practitioners maintain with hardware and software at key stages.

Conclusion

Building upon crucial literatures from the critical GIS and digital sectors of geographical scholarship, this article makes key contributions to how the theoretical orientations of the discipline might evolve towards new understandings of data and digital cultural objects. In all likelihood, the digital turns of archaeology and the heritage sciences will become more pronounced as new technologies are folded into their many respective practices. As a result, it is fair to expect that the proliferation of digital heritage objects will increase. Already, the various outputs of practices like the Cherish project are defined by their dazzling photo-realism, by the uncanny likeness they often bear to the physical sites upon which they are based (an affect often heightened when the ‘source material’ for such models has been compromised, eroded or destroyed). It is imperative, however, that both the academy and the public resist the temptation to approach these digital cultural objects as strictly expository, as easily knowable, as neutral or objective facsimiles for ‘real’ artefacts or sites elsewhere. We must approach them as digital objects composed of data. Data which, as this article has detailed, are mobile, mutable and further defined by the assemblage of components, tools, processes and platforms gathered to render them as objects.

This article has traced the production of digital heritage objects from ‘acquisition’ and through their subsequent processing across software and methods. It has described how the production of digital heritage objects is not only thoroughly multi-sited, but a practice further defined by site-specific contingencies. Most importantly, this article has followed the specific use of geospatial technologies across these practices and, in so doing, has traced how such practices are rendered capable of producing standardised and research-ready outputs. It has demonstrated just how central the use of geospatial tools, data and methods is to the production of these digital cultural objects.

Part of this project is also about acknowledging that methods used to produce digital heritage objects are defined by factors of contingency. While practices like those described reliably resolve into accurate high-resolution outputs, the workflows that produce them are highly modular and capable of being imagined very differently. Furthermore, this article also demonstrates that the objects themselves are not very transparent, and that to consider the ontological status of digital heritage objects often requires the type of research that produced the empirics used in this article.

Despite their weighty basis in the cartographic sciences and processes, which can also be accurately described as methods of ‘mapping’, these objects are not maps. They are not used for navigation and with few exceptions are rarely used as elements or features in mapping interfaces. 42 And so we return to consider the broader consequences of using geospatial technology, data and methods, to articulate heritage. For one, this article has demonstrated how such factors, after Bruno Latour, function as key points of reference for heritage data as it travels between sites and across interfaces. For another, following Clancy Wilmott, the inherently geometric basis of coordinate-based data further serves to ensure the valency and scalability of geospatial information. When taken together with the assemblage thinking of Manuel DeLanda, the distribution of geospatial data across such workflows is further revealed to both territorialise and/or code the multi-sited assemblages they produce. In both reorienting such work around theorists like Latour, DeLanda and Hui and contextualising such readings in the empirical work this article volunteers, something unique and useful materialises. Namely, geospatial technologies, methods and data are revealed as agents of territorialisation and coding, the likes of which elide the situated, performative and contingent nature of cartographic sciences with the derivation of discrete digital heritage objects.

The increased reliance of both the archaeological and heritage sectors on geospatial technologies raises fascinating questions about the types of knowledges these objects carry. How does the legacy of geospatial technology complicate questions about access and expertise? How might heritage modelling practices be subverted (as digital mapping practices have in other sectors and contexts)? How do the precision and formality so often associated with such technologies complicate the messy and contested debates around heritage? There is still so much to be done, so much to ask and consider, and geographers, I believe, should play a large part in taking it all on.

Footnotes

Funding

The author received no financial support for the research, authorship, and/or publication of this article.

Ethics statement

This work was produced from research undertaken during the author’s DPhil, which was approved by the University of Oxford’s Central University Research Ethics Committee (CUREC).