Abstract

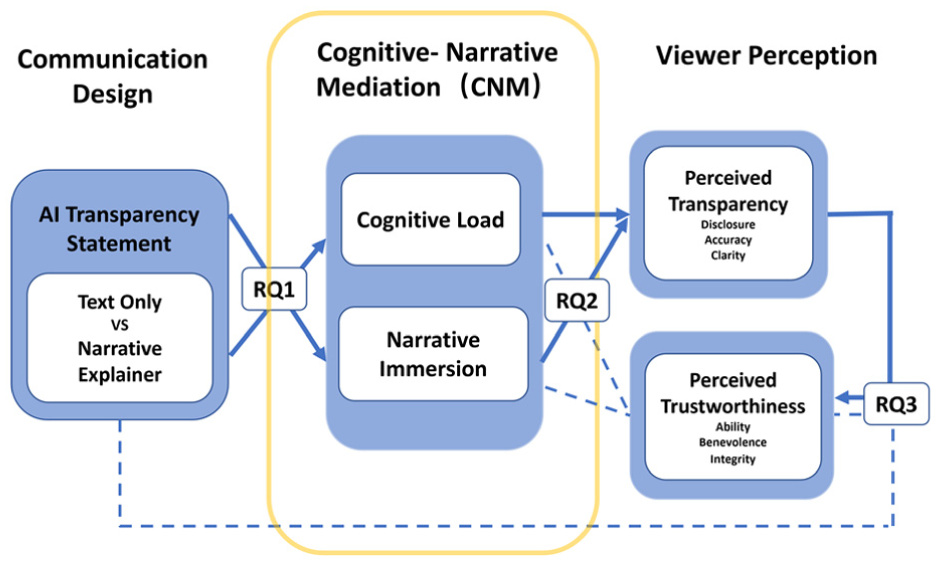

As artificial intelligence (AI) becomes increasingly embedded in public-sector services, governments face a communication challenge that extends beyond disclosure: how to support citizens’ interpretation and evaluation of these systems. Although initiatives such as the Australian government’s AI Transparency Statements (ATS) policy aim to promote accountability, it remains unclear whether conventional text-heavy disclosures effectively support public understanding and trust. This study advances a Cognitive–Narrative theory of AI transparency communication by introducing the Cognitive–Narrative Mediation (CNM) framework, which explains how visual communication formats shape perceived trustworthiness through users’ experiential processing. Drawing on cognitive load theory and narrative engagement research, perceived transparency is conceptualised as a psychologically constructed judgement associated with two experiential pathways: cognitive ease and narrative immersion. In a controlled experiment (

Keywords

Introduction

Governments worldwide are struggling with how to govern artificial intelligence (AI) in ways that earn public trust. In Australia, for example, a recent survey found that only about one-third (36%) of Australian respondents were willing to trust AI, even though most Australians strongly endorse fairness, transparency, accountability, privacy, and security as prerequisites for trusting AI. 1 In response, governments have introduced mandatory disclosure mechanisms. AI Transparency Statements (ATS) are public-facing disclosures that describe how government agencies deploy and govern AI systems, including their purpose, application context and oversight mechanisms. Under the Australian Government’s policy framework, these disclosures are intended to support public trust. 2

However, transparency has long been recognised as a double-edged sword. Simply increasing the volume or technical detail of disclosed information may backfire. When transparency is presented in overly dense, complex, or poorly framed formats, citizens may feel confused or overwhelmed. This tension, often described as the transparency-trust paradox, suggests that transparency outcomes depend not only on what is disclosed, but also on how disclosure is communicated and experienced by citizens.3–7 Despite the Digital Transformation Agency’s (DTA, 2024) mandate that AI transparency statements serve to build public trust by providing clear disclosures, it remains unclear whether these conventional, text-heavy formats are effectively meeting this objective. Prior work shows that transparency outcomes depend on framing, clarity, cognitive effort and communicative design, not solely on the completeness of disclosed content.8,9 Though Tomlinson and Schnackenberg 10 established the sequential link from transparency to trustworthiness, the communicative precursors that trigger this process in AI governance remain unexplored.

Existing research on trust in automated and algorithmic systems has primarily examined what is explained about AI, such as explanation facilities, 11 transparency and interface factors, 12 and formal models of human-AI trust. 13 Much less attention has been paid to trust in the medium of disclosure itself: the communicative interface through which AI is explained to the public. From a human-computer interaction perspective, this implies that user trust is shaped not only by a system’s performance but also by the interpretability and usability of the explanation layer.14–16 A substantial body of work in multimedia learning, narrative communication and visualisation demonstrates that visual and multimodal formats shape comprehension, engagement, and cognitive effort.17,18 Communication-design research further suggests two experiential pathways—cognitive effort and narrative engagement—through which format may influence trust-related perceptions, aligning with dual-process models.19,20 Consistent with these insights, narrative videos naturally integrate the design features associated with both pathways by combining spoken narration, visuals, and pacing, and are associated with lower extraneous cognitive load and higher engagement.16,18,21 In addition, by embedding information in narrative structures like scenarios, characters, or everyday examples, they may also foster engagement and experiential involvement.22,23

To formalise these perspectives, we introduce the Cognitive-Narrative Mediation (CNM) framework, which models trustworthiness judgements in AI transparency communication as a dual-pathway process grounded in cognitive processing and narrative transportation theories.22,24 The CNM framework posits that perceived transparency is a psychologically constructed state rather than a static message attribute. We argue that effective media design first shapes two experiential pathways, cognitive ease and narrative immersion, that together function as the psychological antecedents associated with transparency perception. In other words, CNM explains not whether transparency leads to trustworthiness, but how transparency comes to be perceived in the first place. Importantly, the CNM framework is not concerned with maximising trust or promoting persuasive manipulation in AI governance communication. Instead, it focuses on how transparency disclosures are experienced and interpreted by citizens. CNM distinguishes communicative legibility from persuasion, and conceptualises trustworthiness as an evaluative outcome that should be appropriately calibrated, not strategically amplified. From this perspective, the framework seeks to reduce unnecessary cognitive friction and interpretive opacity, thereby supporting conditions for informed and appropriate trust rather than unquestioning acceptance.

Empirically, this study contributes an initial experimental test of the Cognitive-Narrative Mediation (CNM) framework by operationalising its proposed mediating mechanisms through an AI transparency statement issued by the Australian Bureau of Statistics (ABS). We compare a text-only disclosure with an equivalent disclosure supplemented by a narrative-style video explainer conveying the same core information. From a visualisation perspective, this comparison represents a bundled shift in communication format rather than an isolated manipulation of narrative elements. Narrative framing is therefore treated as one component within a broader format bundle that also includes multimodal presentation, guided attention and fixed temporal pacing. Accordingly, our claims are restricted to format-level effects consistent with the CNM framework. This design allows us to examine whether communication format differences are associated with changes in perceived cognitive ease, narrative immersion and perceived transparency, and whether these psychological responses are linked to evaluations of agency trustworthiness. While behavioural trust is an important downstream outcome,10,25 the present study focuses on perceived trustworthiness as the immediate evaluative outcome.

Related work

This section reviews how transparency has become a core principle in AI governance and how disclosure requirements have been formalised across jurisdictions, often without specifying how information should be communicated. We then draw on organisational trust research to distinguish perceived trustworthiness as an ability-benevolence-integrity (ABI) judgement and transparency as a disclosure-accuracy-clarity (DAC) construct. Building on these foundations, we review communication design and dual-process theories to argue that trust in AI transparency is associated with two routes: cognitive effort and narrative engagement.

Transparency in AI governance

Transparency has become one of the most widely endorsed principles in AI ethics and governance. Jobin et al. report that 73 of 84 global AI guidelines reference transparency or explainability, 26 and Fjeld et al. likewise find near-universal emphasis on these themes across major AI principles. 27

A review of nearly 200 governance documents by Corr

However, translating transparency into practice is more complex than defining it as a principle. Legal restrictions and privacy considerations constrain what can be disclosed, while the presentation of information critically shapes whether citizens can understand it.29,30 Excessive technical detail may overwhelm lay audiences, resulting in avoidance or disengagement.31–33 Conversely, insufficient context or ambiguous language can be perceived as evasive or untrustworthy.5,34 In response, governments have formalised transparency obligations through instruments such as algorithmic impact assessments, algorithmic transparency recording standards, and AI use inventories.35–37 Australia’s Digital Transformation Agency (DTA), for example, requires agencies to publish AI transparency statements explaining the purpose, context and oversight of AI systems. 2 Despite these mandates, most implementations rely heavily on text-based documentation, raising questions about whether citizens meaningfully benefit from such disclosures. 38

Empirical evidence on the public impact of AI disclosures remains sparse. As recent studies underscore, there is a persistent gap between the proliferation of disclosure mechanisms and their practical efficacy: while transparency initiatives continue to expand, systematic evaluations of how they actually shape citizens’ understanding or foster institutional trust remain limited.39,40 A meta-analysis of 49 studies shows that transparency is associated with higher trust on average, but the effect is variable and can turn negative under information overload. 7 This gap motivates the present study: rather than examining what is disclosed, we focus on how the format of disclosure shapes perceived transparency and judgements of trustworthiness.

Perceived trustworthiness and transparency

Understanding how citizens judge an agency requires a clear distinction between trustworthiness and trust. Foundational work conceptualises trustworthiness as a belief-based assessment comprising three dimensions: ability (competence), benevolence (good intentions), and integrity (adherence to principles), while trust refers to the willingness of a party to be vulnerable to the actions of another, based on the expectation that the other will perform a particular action. 25 Although conceptually distinct, trustworthiness judgements constitute the belief-based evaluations that precede and inform decisions to trust. Accordingly, this study focuses analytically on trustworthiness while drawing on established theories of trust formation where relevant. Within this framework, trustworthiness perceptions (ABI) function as the antecedents of trust, such that weakening any dimension may undermine the foundation for trust.10,41 Parallel to trustworthiness assessments, transparency can be conceptualised through the Disclosure-Accuracy-Clarity (DAC) model. 41 These properties map onto ABI-based judgements: disclosure signals integrity, accuracy signals competence and clarity supports comprehension while also conveying epistemic respect for citizens. When effectively designed, transparency disclosures foster “appropriate trust,” avoiding both blind faith and excessive scepticism. 12

Traditionally, prior research has approached transparency as a property of disclosure practices, operationalised through objective indicators such as the availability and extent of information provided. 29 However, an emerging body of work reconceptualises transparency as a subjective psychological judgement formed by how audiences perceive, interpret, and understand disclosed information.5,6 From this viewpoint, transparency should not be understood merely as the amount of information disclosed, but as an emergent outcome shaped by how that information is cognitively processed and experienced through the interface. 42 Consequently, the willingness to trust is not determined solely by whether an AI system is transparent in principle, but is more closely related to whether citizens perceive it as transparent, which is reflected in their trustworthiness (ABI) evaluations.

Communication design foundations for AI transparency

Across communication design, visualisation, and HCI, the formation of trust-related judgements is increasingly conceptualised as involving two complementary routes: an effortful, analytical route and a more intuitive, experiential route. This distinction is consistent with classic dual-process accounts, including the elaboration likelihood model (ELM) 19 and dual-process perspectives, 20 which differentiate central/System 2 processing (deliberate, systematic evaluation) from peripheral/System 1 processing (fast, cue-based and experience-driven). Recent work on trust in visualisation aligns with this view, showing that visual design cues influence trust judgements through both analytical assessments and more heuristic, impression-based processes. 43 Within the Cognitive-Narrative Mediation (CNM) framework, these two routes are conceptualised as concurrent experiential pathways. The analytical pathway captures how communication format shapes the cognitive demand of interpreting an AI transparency statement, operationalised as perceived cognitive load and processing difficulty. The experiential pathway reflects how narrative, affective, and social cues embedded in communication formats shape trust-related perceptions, operationalised through narrative immersion. Together, these pathways represent two conceptually distinct yet complementary experiential mediators through which communication format is associated with perceived trustworthiness.

Cognitive load in AI transparency communication

Although transparency aims to build trust, poorly structured or overly technical disclosures often have the opposite effect. Researchers refer to this as the transparency-trust paradox.29,44 Schilke and Reimann describe a similar transparency dilemma, where technical over-disclosure erodes legitimacy. 45 This dilemma is rooted in organisational behaviour, where disclosure formats often prioritise formal compliance with institutional pressures rather than optimising for citizen comprehension and experiential ease.29,44 Empirical evidence also suggests that clarity plays a critical role in shaping the effectiveness of transparency: confusing or overwhelming disclosures undermine comprehension and, consequently, the intended benefits of transparency.30,31

Cognitive Load Theory provides a cognitive explanation by distinguishing intrinsic complexity from avoidable extraneous load introduced by poor design.24,46 In the context of AI transparency statements, dense and bureaucratic disclosures are associated with extraneous load, making explanations more difficult to process. Consistent with this pattern, research in transparency contexts finds that simpler explanations often outperform more detailed ones. 42 From a communication-design perspective, clarity, structure and processing fluency play a central role in shaping cognitive evaluations. Messages that require less effortful processing tend to be evaluated more positively, including higher perceived competence and reliability. 47 In algorithmic-governance contexts, citizens tend to prefer understandable explanations over technically complete ones. 14 Drawing on this body of work, multimedia learning principles such as segmentation, visual-verbal integration and dual-modality narration are frequently linked to lower perceived extraneous cognitive load and improved comprehension in explanatory communication.21,48 In AI decision-making contexts, prior research indicates that cognitive load plays an important role in AI-related judgements. 49 In this study, perceived cognitive load is operationalised as a self-reported construct rather than an independently manipulated experimental factor.

Narrative immersion in AI transparency communication

Beyond analytical comprehension, communication formats can also shape trust through experiential and affective mechanisms. Prior research shows that people tend to process narrative information in a more intuitive and experiential manner than abstract exposition, supporting meaning-making, perceived understanding and causal coherence.22,50,51 Specifically, narrative structures, such as characters, motives and causal arcs, organise complex information into coherent wholes that support engagement, interpretation, and meaning-making.52,53 Building on these mechanisms, research on government and service chatbots indicates that conversational, narrative-style interfaces are associated with citizens’ trust-related evaluations and acceptance of AI-mediated public services.54–56

Narrative transportation theory conceptualises immersion as a state of focused attention and emotional involvement in which audiences become absorbed in the narrative world.22,23,57 Immersion has been shown to reduce counter-arguing, increase identification, and amplify affective responses, which together shape evaluative judgements.22,57 Consistent with these mechanisms, applied research in health and public communication further indicates that narrative framing is linked to higher perceived credibility and interpersonal warmth, largely through emotional engagement and social identification. 17 From the perspective of interface design, complementary evidence from human-computer interaction and automation research indicates that trust-related evaluations are shaped not only by informational content, but also by experiential cues embedded in system design. Studies further show that human-like expressiveness and anthropomorphic features have been linked to higher perceived authenticity, social presence and trust by shaping users’ experiential evaluations,58–60 and potentially nudging attitude change. 61

Notably, engagement and factual learning do not always move together, as suggested by recent work on video-based communication and interpretive experience. 62 Research on video-based communication shows that highly engaging explanatory formats often enhance enjoyment, perceived understanding and credibility judgements more than they improve objective factual comprehension accuracy.63–65 This dissociation highlights the need to distinguish experiential and analytical pathways. In the CNM framework, narrative immersion does not directly generate trust; instead, it mediates how communication formats shape the perception and evaluation of transparency explanations.

Conceptual model and research questions

Based on these insights, we designed an empirical study (Figure 1) using an authentic Australian AI transparency statement as the stimulus to provide an initial empirical test of the CNM framework. The predictor is communication format (text-only version and text-plus video version). As illustrated in Figure 1, the research questions (RQ1, RQ2, and RQ3) are positioned at the intersections of the pathways to highlight the critical nodes where psychological transformation occurs. Figure 1 presents the CNM framework as a process-oriented model that links communication format to judgements of trustworthiness through experiential mediation. Specifically, the framework specifies two parallel experiential pathways through which communication format is associated with perceived trustworthiness:

1. Cognitive pathway: format → perceived cognitive load (cognitive ease) → perceived transparency → perceived trustworthiness;

2. Narrative pathway: format → narrative immersion → perceived transparency → perceived trustworthiness.

Empirical test of the CNM framework via a dual-pathway mediation model.

In this conceptual model, the solid lines represent the hypothesised sequential associations specified by the CNM framework, while the dashed lines indicate the direct effects, which we anticipate will be secondary to the transformative power of the mediators. By employing serial mediation analysis, we test the sequential flow from communication format to experiential processing, and subsequently to perceived transparency and trustworthiness.

Research questions

Based on these insights, we formulate three research questions. RQ1 (Format Effect): Is supplementing a text-based ATS with an add-on video explainer format associated with higher perceived trustworthiness than a text-only format? RQ2 (Dual-Pathway Mechanisms): To what extent are perceived cognitive load and narrative immersion statistically associated with perceived trustworthiness as parallel experiential pathways linked to communication format? RQ3 (Translation Mechanism): How does perceived transparency bridge the gap between citizens’ processing experiences and their trustworthiness judgement? The following section describes the study design and analytical approach used to examine these relationships empirically.

Method

Participants

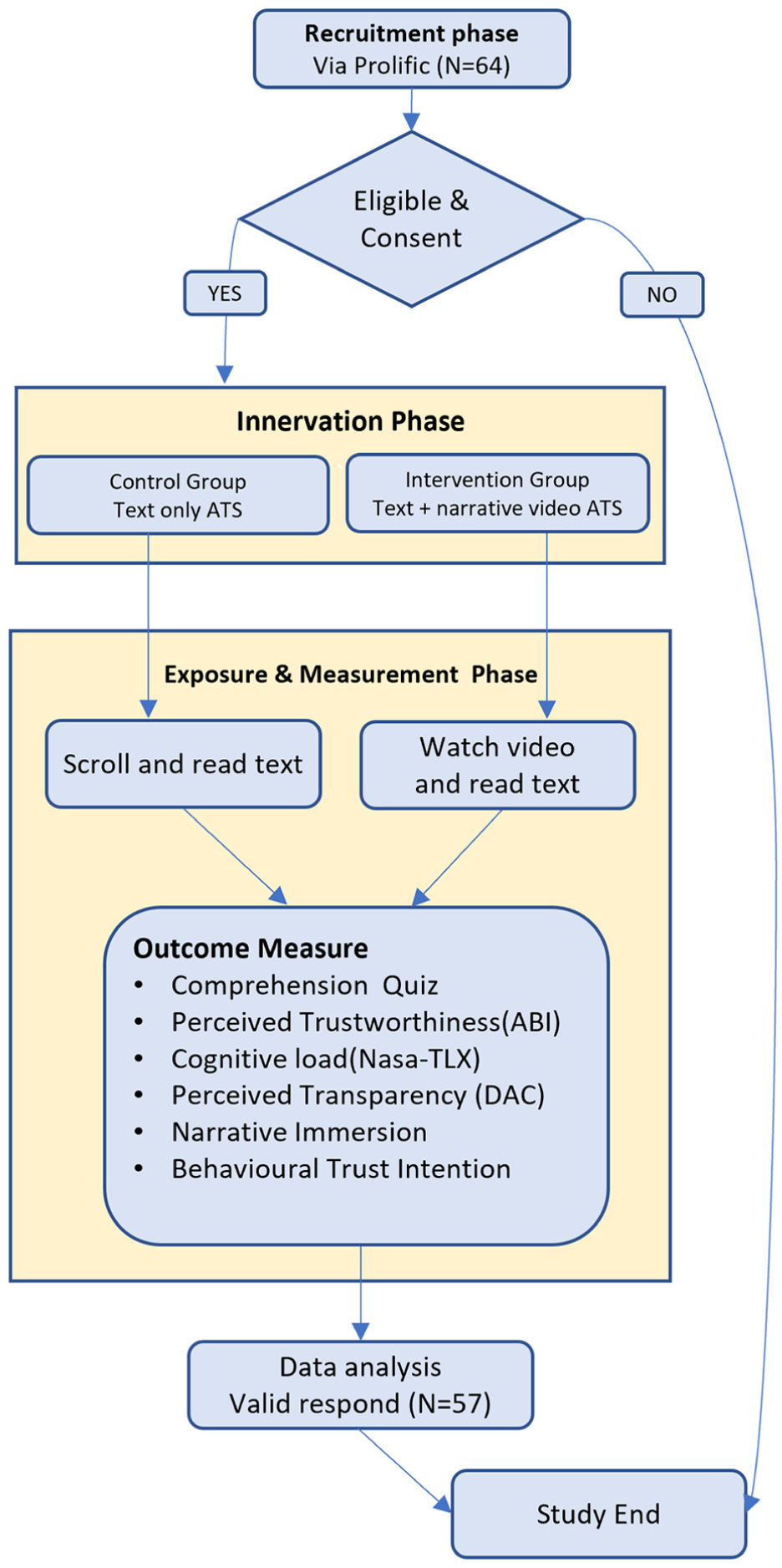

Participants were recruited via Prolific and restricted to English-speaking adult residents (aged 18 years or older) in Australia, in order to match the policy context examined in the study. To ensure response quality, participation was limited to individuals with a Prolific approval rate of at least 95%. A total of 64 participants completed the study. Seven cases were excluded (three from the text condition and four from the narrative condition). Specifically, responses with abnormally short or long completion times were removed, as were responses with extremely low factual recall performance (fewer than two items correct on the factual recall quiz). This resulted in a final sample of

Design and stimuli

We conducted a randomised controlled experiment with two between-subjects conditions. The independent variable was transparency statement format:

Text-Only Condition (baseline format): Participants in this condition read a conventional AI transparency statement adapted from the Australian Bureau of Statistics, produced under the Digital Transparency Guidelines. The document was approximately 400 words in length and presented all standard components required by the guidelines, including the system’s purpose, application context, internal oversight and ethical safeguards. Headings and bullet points were retained to facilitate readability, consistent with plain-language best practices. The major modification made was the removal of an unrelated hyperlink present in the original online version. Participants could scroll through and read the content at their own pace. The full text used in both conditions is provided in Supplemental Appendix A.

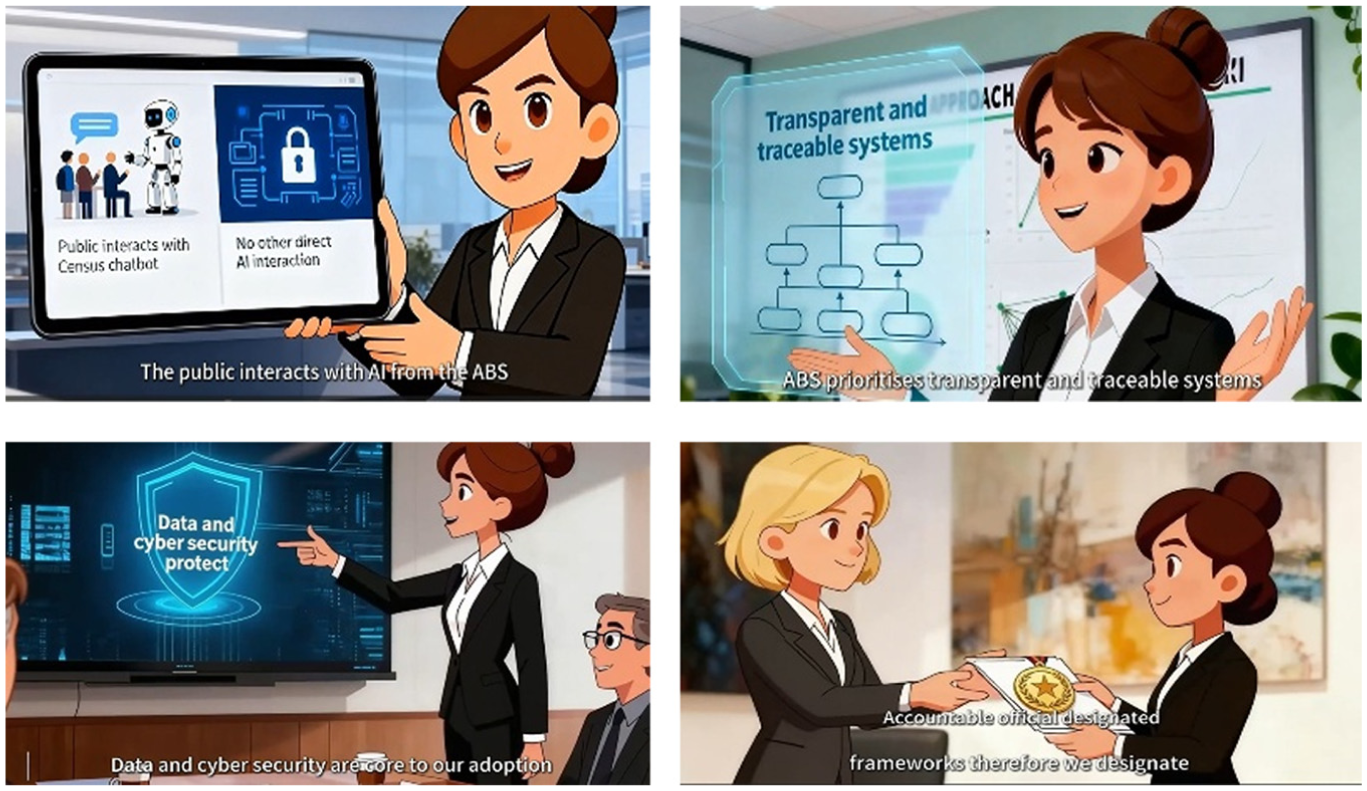

Video Condition (narrative-style video explainer format): Participants viewed an add-on narrative-style video (1 min 40 s) accompanying the same text-based transparency statement. The video conveyed identical factual content, restructured into a short character-led narration featuring a fictional staff member (“Sarah”) interacting with the agency’s AI application in a typical government workplace context (Figure 2). The stimulus was constructed using common narrative-visualisation design principles rather than arbitrary animation, incorporating features such as structured storytelling, semantic visual encoding and sequential information flow. Specifically, the video employed scene-based structuring to segment disclosure content and symbolic visual encodings (e.g. interface metaphors, icons, and split-screen contrasts) to represent abstract policy concepts. Fixed temporal pacing standardised exposure duration, while character-led framing and motion cues guided viewer attention. Narration was synchronised with visual elements to maintain verbal-visual alignment.

Screenshots of video explainer.

Content equivalence was maintained across conditions: all information presented in the text-based statement also appeared in the video, either verbally or visually. The video was produced using a standard animation pipeline implemented in CapCut, with narration delivered by a neutral human voice with an Australian accent. Detailed scene construction and visual encoding specifications are provided in Supplemental Appendix B.

Procedure

Figure 3 illustrates the experimental procedure. Participants were randomly assigned to one of the two format conditions. In the text condition, the instruction was: “Please read the AI transparency statement carefully. You may scroll at your own pace and re-read before clicking Next.” In the video condition, the instruction was: “Please watch the following video explainer of the AI transparency statements. Make sure your sound is on.” The page embedded the video at the top with the full text version below it; participants were required to spend at least 1 min 40 s (the video’s duration) on the page before proceeding. They could rewatch the video before scrolling through the text while on that page, but after clicking “Next” they could not return to the stimulus page.

Flowchart of experimental procedure.

Immediately after exposure, participants completed an online questionnaire. To minimise order effects, the factual recall quiz was presented first, followed by perceived transparency, trustworthiness, cognitive load, narrative immersion, behavioural trust intention, and demographics. An attention-check item was embedded mid-survey. Participants who failed the attention check or answered fewer than two factual recall items correctly were excluded. Optional open-ended comments were collected at the end.

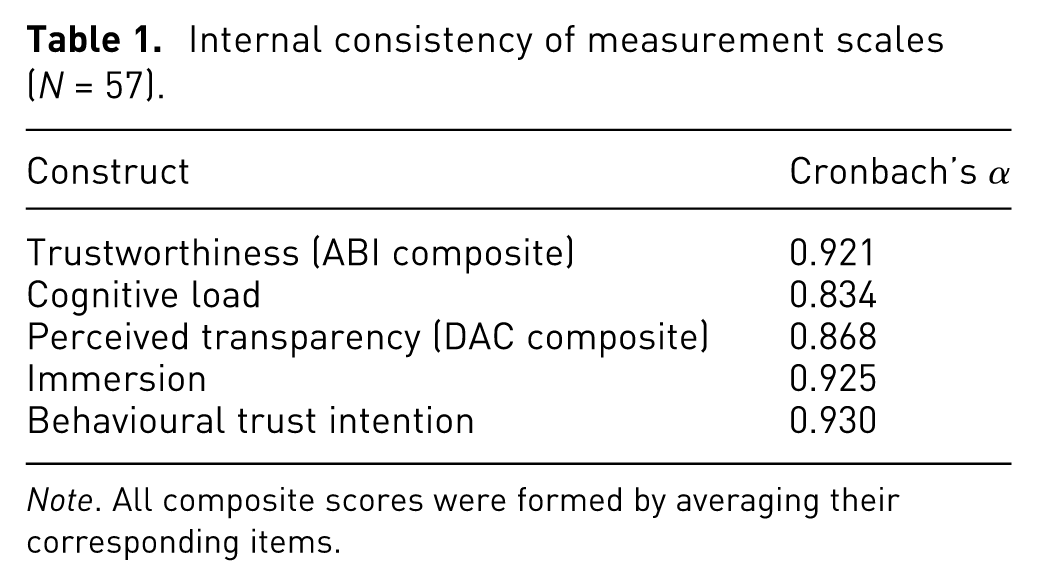

Measures

All items (except the objective factual recall quiz) used 7-point Likert scales. Internal consistency was acceptable to excellent across constructs (Cronbach’s

Internal consistency of measurement scales (

Construct separation and overlap control

Several CNM constructs are theoretically adjacent because they capture different layers of the same disclosure experience. To reduce avoidable overlap, perceived cognitive load was operationalised as a processing-experience measure (mental demand and pace/strain), whereas perceived transparency (DAC) captured a post-exposure evaluative judgement of openness, accuracy and clarity. Narrative immersion focused on attentional absorption and experiential engagement with the explanation rather than understanding or agreement with its content. Trustworthiness (ABI) measured beliefs about the agency’s competence, integrity and benevolence, while behavioural trust intention captured downstream willingness to rely. Discriminant validity was assessed using Pearson inter-construct correlations among the composite measures. Correlation magnitudes are reported in the results section.
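The overlap check described above can be sketched in a few lines. This is an illustration only, assuming each composite is the mean of its items and that item responses are held in participant-by-item arrays; the helper names `composite` and `correlation_matrix` are hypothetical and are not taken from the study's SPSS workflow.

```python
import numpy as np


def composite(items):
    """Average a construct's items (rows = participants, columns = items)."""
    return np.asarray(items, dtype=float).mean(axis=1)


def correlation_matrix(composites):
    """Pearson correlations among named composite scores.

    `composites` maps a construct name to a 1-D array of participant scores.
    Returns the names (in order) and the matching correlation matrix.
    """
    names = list(composites)
    data = np.vstack([composites[name] for name in names])
    return names, np.corrcoef(data)  # each row is treated as one variable
```

Off-diagonal entries approaching 1 would flag two composites as potentially redundant; the threshold applied is a judgement call and is not specified here.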

Perceived Trustworthiness (ABI): We used six items to measure the three dimensions of the Ability-Benevolence-Integrity (ABI) model.10,25,66 Two items assessed Ability (e.g. “The statement shows that the agency is capable of applying AI effectively.”), two assessed Integrity (“The statement portrays the agency as open and honest about how it uses AI.”), and two assessed Benevolence (“The statement shows that AI is applied in ways that benefit society.”). Higher scores reflect greater perceived trustworthiness of the agency’s AI use.

Perceived Cognitive Load: Five items assessed the perceived mental demand and ease of following the explanation, adapted from NASA-TLX dimensions and prior explanatory interface studies. 67 Example items: “The pace of the statement was comfortable to follow without strain,” and “It was easy to understand this AI statement.” Scores were coded such that higher values indicate lower perceived cognitive load.

Perceived Transparency (DAC): Perceived transparency was measured using six items reflecting the Disclosure-Accuracy-Clarity (DAC) framework, with two items per dimension. For example, disclosure: “The statement provides information on how AI is applied across relevant functions,” accuracy: “The statement presents the AI information accurately,” and clarity: “The statement makes it easy to follow the content.” Higher scores indicate the agency is perceived as more transparent.

Narrative Immersion: To capture how absorbed participants were in the explanation, we used four items adapted from the Narrative Engagement Scale 57 and transportation measures. 22 Sample items: “The [text/video] kept my attention from start to finish,” and “I felt absorbed in the explanation.” We adjusted wording for each condition (e.g. “the text kept my attention …” vs “the video kept my attention …”).

Objective Factual Recall: To assess participants’ immediate retention of key information from the AI transparency statement, we included a brief factual recall quiz consisting of three multiple-choice questions. The items targeted explicitly stated elements of the ATS: (1) the AI application mentioned in the statement, (2) which AI system interacts directly with the public and (3) how accountability is ensured in the agency’s use of AI. Each question had four response options with one correct answer. Responses were scored dichotomously (1 = correct, 0 = incorrect) and summed to form a total factual recall score (range: 0–3). This measure is intended to verify attention and immediate factual recall rather than deeper conceptual understanding or interpretive comprehension. The full questionnaire, including the factual recall items and response options, is provided in Supplemental Appendix C.
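The scoring rule above, together with the exclusion criterion of fewer than two correct items, can be expressed as a short sketch. The answer key below is a placeholder: the actual correct options are given in Supplemental Appendix C, and the function names are hypothetical.

```python
from typing import Sequence

# Placeholder answer key (assumption): index 0-3 of the correct option for
# each of the three quiz items. The real key is in Supplemental Appendix C.
ANSWER_KEY = (2, 0, 1)

# Participants with fewer than this many correct items are excluded.
MIN_CORRECT = 2


def recall_score(responses: Sequence[int], key: Sequence[int] = ANSWER_KEY) -> int:
    """Score each item 1 (correct) or 0 (incorrect) and sum them (range 0-3)."""
    if len(responses) != len(key):
        raise ValueError("one response per quiz item is required")
    return sum(int(given == correct) for given, correct in zip(responses, key))


def passes_recall_check(
    responses: Sequence[int],
    key: Sequence[int] = ANSWER_KEY,
    min_correct: int = MIN_CORRECT,
) -> bool:
    """Return True when the participant meets the minimum recall threshold."""
    return recall_score(responses, key) >= min_correct
```

Under the placeholder key, a participant answering `(2, 0, 3)` gets two items right and is retained, while `(2, 3, 3)` gets one right and is excluded.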

Behavioural Trust Intention: Five items assessed participants’ willingness to rely on the agency and its AI system, such as “After this explanation, I feel confident that the agency will use its AI system responsibly,” and “I would be open to accepting decisions that involve the use of this AI system.” These reflect “willingness to rely.”66,68 Although hypothetical, this measure captures participants’ intended trust-related responses.

Finally, participants were invited to provide optional open-ended feedback regarding what they liked or disliked about the format they viewed.

Data analysis

We analysed the data using IBM SPSS Statistics 29, including Andrew Hayes’ PROCESS macro for mediation analyses. 69

The study employed a between-subjects design comparing a text-only format and a text-plus video explainer format. All hypothesis tests used a two-tailed significance threshold of

Results

Between-group comparison

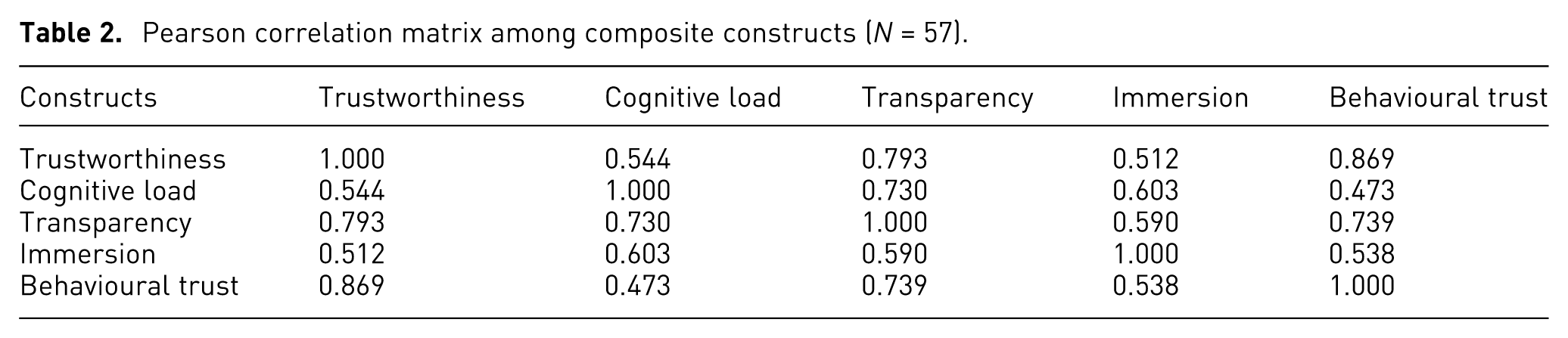

Before hypothesis testing, we examined inter-construct correlations to assess potential measurement redundancy among the composite measures (Table 2). Most correlations remained below commonly cited thresholds associated with problematic redundancy. Moderate correlations are theoretically plausible because the CNM framework treats cognitive ease, narrative immersion and perceived transparency as distinct yet related psychological processes that arise from the same disclosure experience, rather than as independent dispositional traits.

Pearson correlation matrix among composite constructs (

The strongest association was observed between perceived trustworthiness and behavioural trust intention (

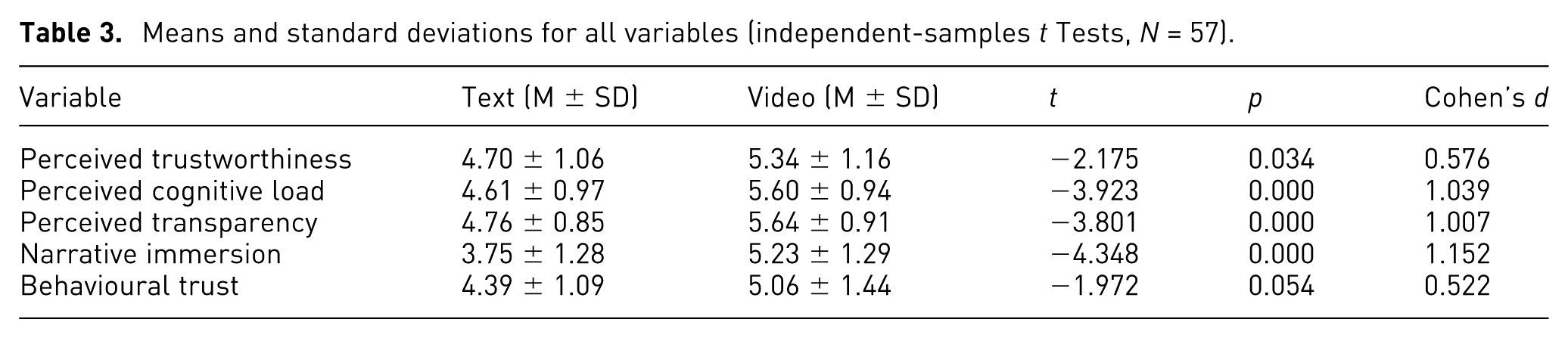

Table 3 and Figure 4 present the means, standard deviations and independent-samples

Means and standard deviations for all variables (independent-samples

Between-group comparison: text-only versus video explainer condition.

Participants who viewed the video explainer reported higher perceived trustworthiness of the agency (M_video = 5.34, SD = 1.16) than those who read the text-only statement (M_text = 4.70, SD = 1.06),

In contrast, behavioural trust intention did not differ significantly between conditions (M_video = 5.06, SD = 1.44; M_text = 4.39, SD = 1.09),

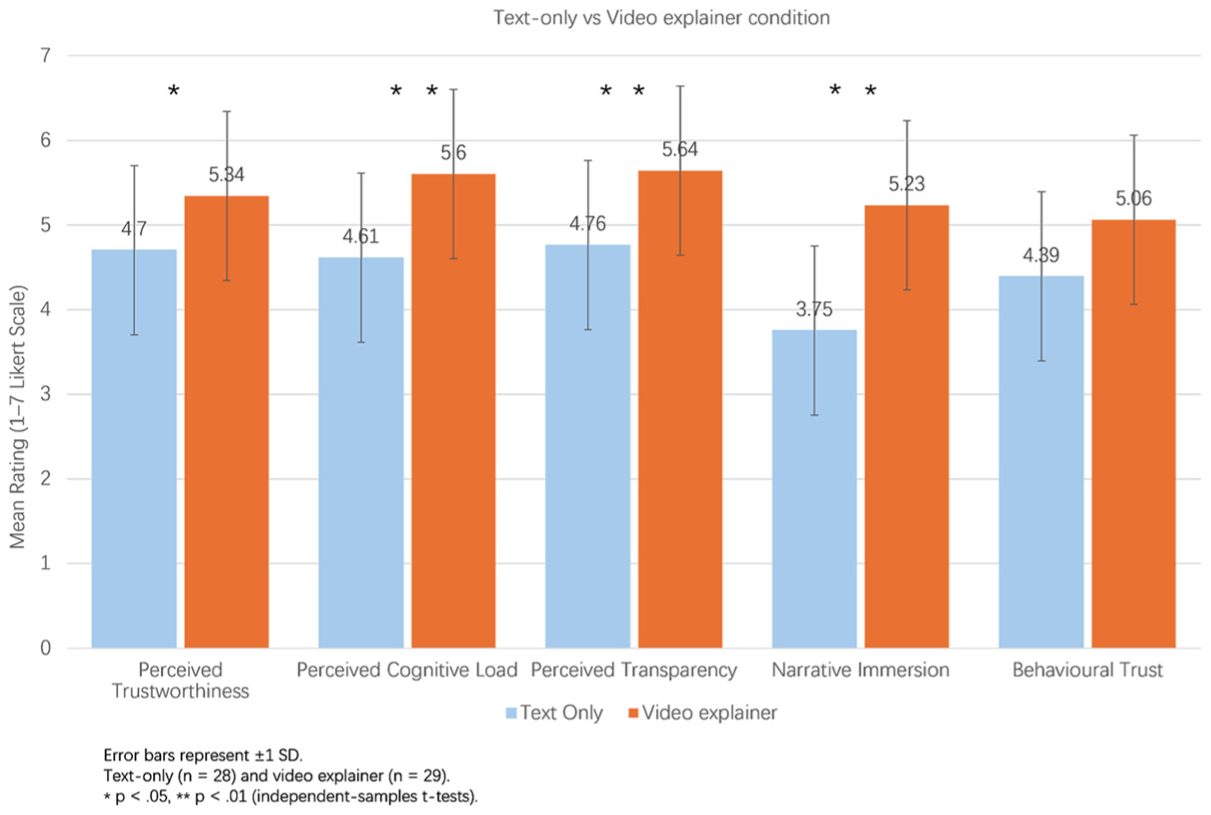

Dual-pathway mediation analyses (RQ2)

To examine the dual-pathway mechanism proposed by the CNM framework (RQ2), we conducted two separate mediation analyses using Hayes’ PROCESS Model 4. 69 Narrative immersion and perceived cognitive load (higher scores indicate lower load) were specified as mediators to examine whether each experiential pathway statistically mediated the relationship between communication format and perceived trustworthiness. Results are reported in Table 4.
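For readers without access to SPSS, the logic of a single-mediator model can be sketched with ordinary least squares and a resampling loop. The sketch below is not the PROCESS macro and makes several assumptions: format is coded 0 (text-only) and 1 (video), no covariates are included, and the confidence interval is a simple percentile interval. The function names are hypothetical.

```python
import numpy as np


def ols(y, *predictors):
    """Least-squares coefficients in the order [intercept, b1, b2, ...]."""
    X = np.column_stack([np.ones(len(y)), *predictors])
    coef, *_ = np.linalg.lstsq(X, y, rcond=None)
    return coef


def indirect_effect(x, m, y):
    """Product a*b for the path x -> m -> y.

    a: effect of x on the mediator m.
    b: effect of m on y while controlling for x.
    """
    a = ols(m, x)[1]
    b = ols(y, x, m)[2]
    return a * b


def bootstrap_ci(x, m, y, n_boot=5000, level=0.95, seed=0):
    """Percentile bootstrap interval for the indirect effect (assumption:
    cases are resampled with replacement, as in a standard nonparametric
    bootstrap)."""
    rng = np.random.default_rng(seed)
    n = len(x)
    estimates = np.empty(n_boot)
    for i in range(n_boot):
        idx = rng.integers(0, n, size=n)  # resample participant rows
        estimates[i] = indirect_effect(x[idx], m[idx], y[idx])
    tail = (1 - level) / 2 * 100
    lo, hi = np.percentile(estimates, [tail, 100 - tail])
    return lo, hi
```

Here `x`, `m` and `y` are NumPy arrays of equal length holding format, the mediator composite and the trustworthiness composite. An interval that excludes zero is conventionally read as evidence of a statistically significant indirect effect. In OLS the total effect decomposes exactly into the direct effect plus `a*b`, which offers a quick sanity check on any implementation.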

Mediation model full outputs (PROCESS Model 4,

Model 1: Narrative immersion as mediator

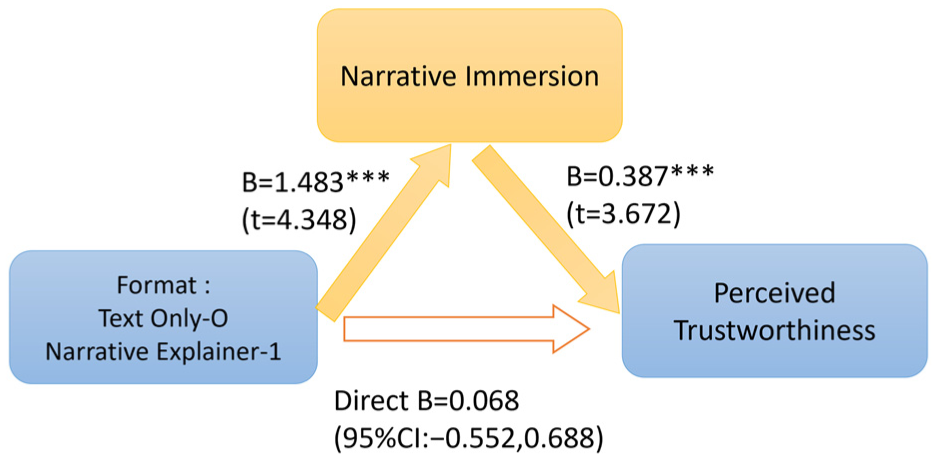

In the immersion mediation model, the total effect of format on trustworthiness was significant (

Mediation model for the narrative pathway. Solid arrows indicate statistically significant paths; visually de-emphasised arrows indicate non-significant paths.

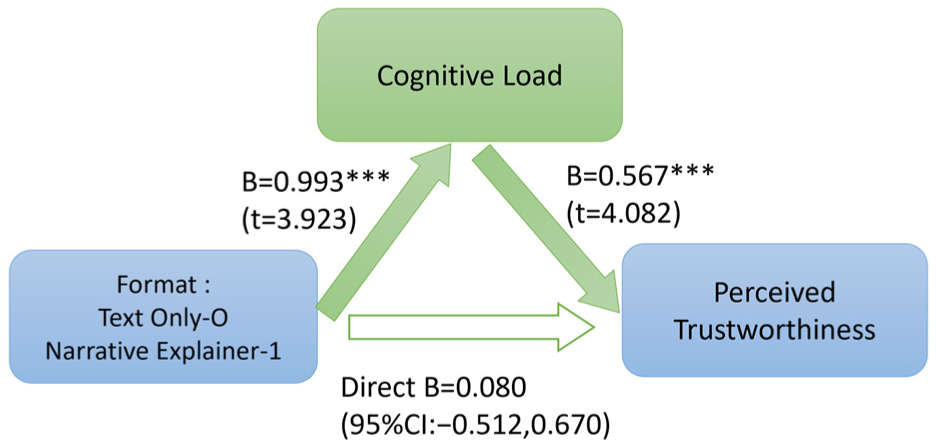

Model 2: Cognitive ease as mediator

In the cognitive-load mediation model (Figure 6), where cognitive load served as the sole mediator, a similar pattern emerged. Format also significantly increased ease of processing (

Mediation model for the cognitive pathway. Solid arrows indicate statistically significant paths; visually de-emphasised arrows indicate non-significant paths.

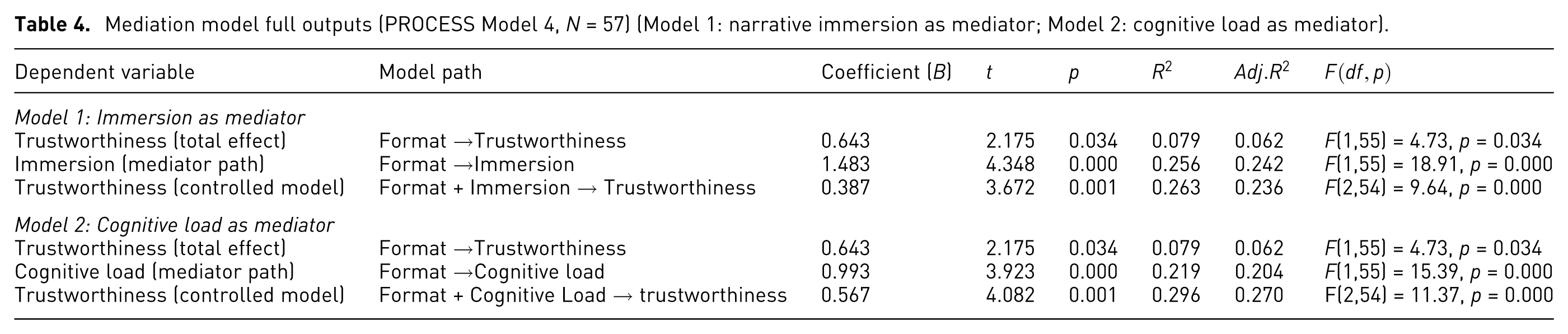

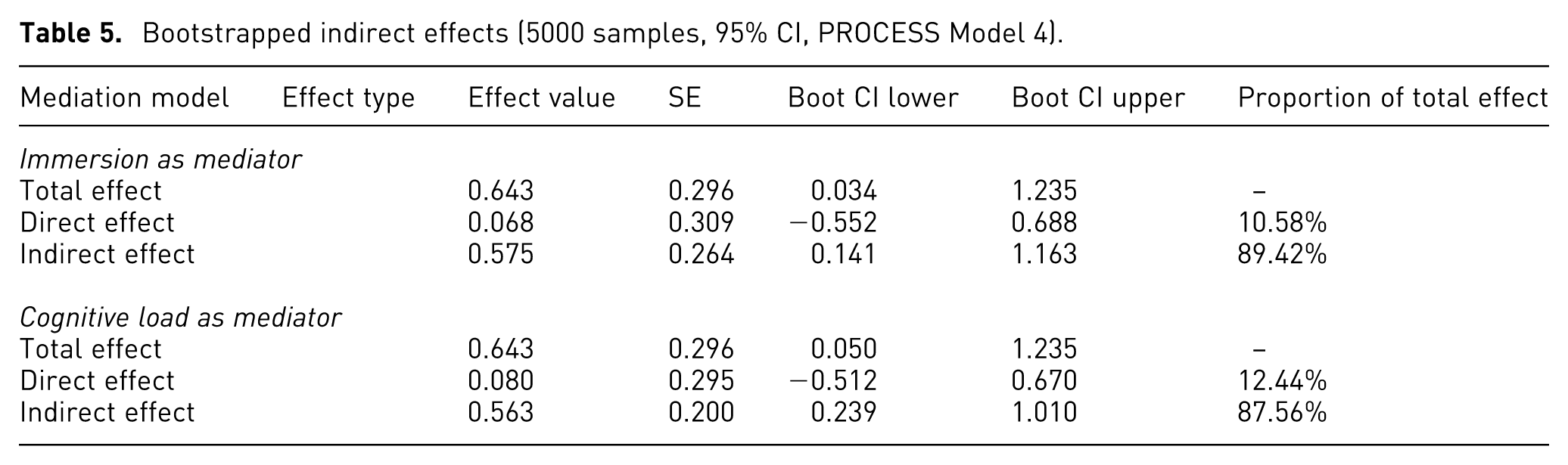

Bootstrapped indirect effects

Bootstrapped analyses (5000 samples) confirmed the significance of both mediation effects (Table 5). For immersion, the indirect effect was

Bootstrapped indirect effects (5000 samples, 95% CI, PROCESS Model 4).

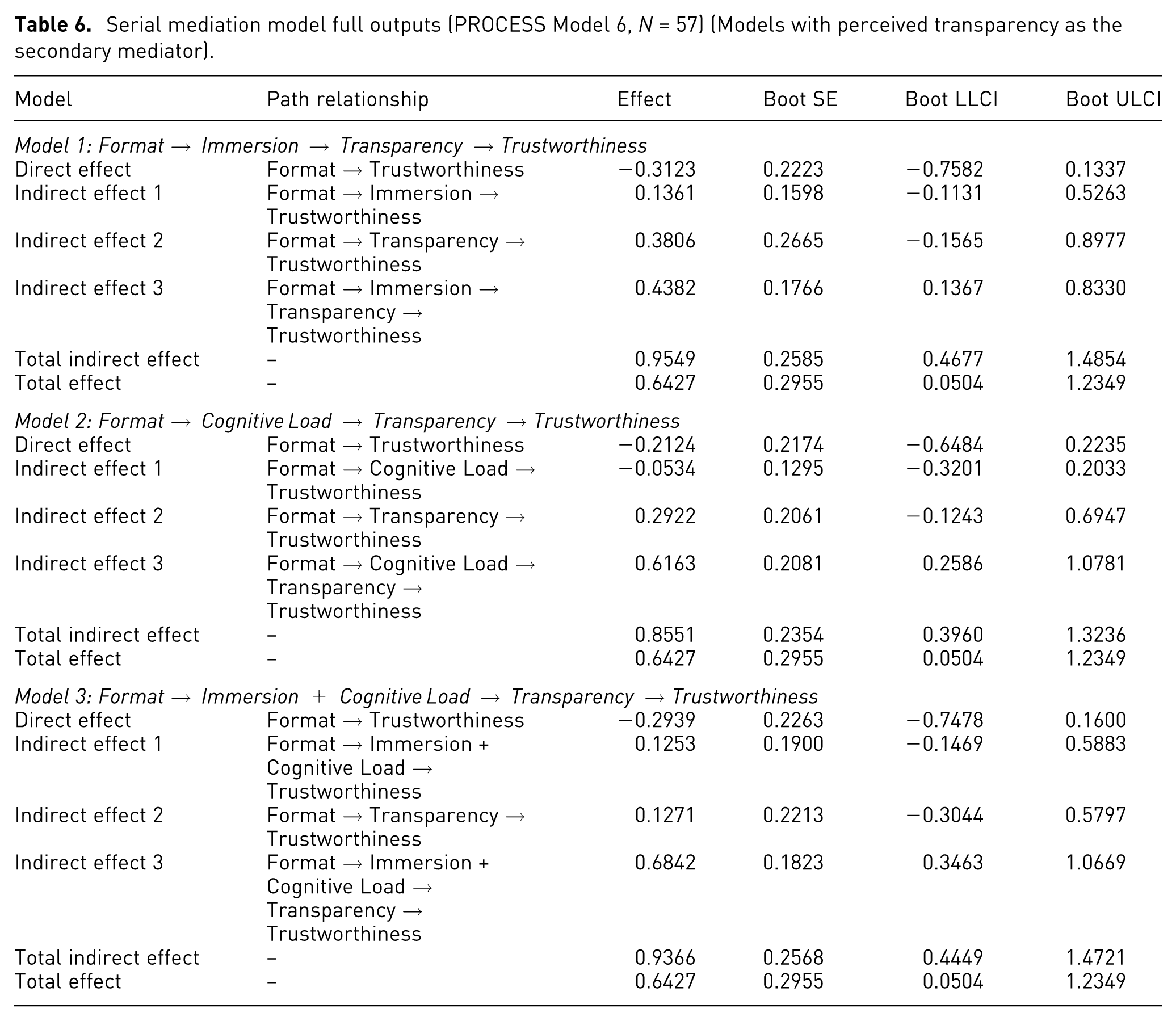
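A percentile bootstrap of an indirect effect can be sketched as follows. The sketch assumes case resampling with replacement and a percentile interval. The function names, the seed and the synthetic data are hypothetical, and the code is not a reproduction of the PROCESS output.

```python
import numpy as np

def indirect_effect(x, m, y):
    """Product-of-coefficients estimate a*b for X -> M -> Y."""
    ones = np.ones(len(x))
    a = np.linalg.lstsq(np.column_stack([ones, x]), m, rcond=None)[0][1]
    b = np.linalg.lstsq(np.column_stack([ones, x, m]), y, rcond=None)[0][2]
    return a * b

def bootstrap_ci(x, m, y, n_boot=5000, level=0.95, seed=0):
    """Percentile bootstrap confidence interval for the indirect effect."""
    rng = np.random.default_rng(seed)
    n = len(x)
    estimates = np.empty(n_boot)
    for k in range(n_boot):
        idx = rng.integers(0, n, size=n)  # resample participants with replacement
        estimates[k] = indirect_effect(x[idx], m[idx], y[idx])
    tail = (1 - level) / 2 * 100
    low, high = np.percentile(estimates, [tail, 100 - tail])
    return float(low), float(high)

# Synthetic demonstration data (not the study's data).
rng = np.random.default_rng(2)
x = np.repeat([0.0, 1.0], 60)
m = 1.5 * x + rng.normal(size=120)
y = 0.8 * m + rng.normal(size=120)
ci_low, ci_high = bootstrap_ci(x, m, y)
```

An indirect effect is conventionally treated as statistically significant when the resulting interval excludes zero. The bootstrap is preferred here because the sampling distribution of a product of coefficients is generally not normal.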

Serial mediation via perceived transparency (RQ3)

We next tested the extended mechanism proposed by the CNM framework (RQ3). Using PROCESS Model 6, we estimated a set of serial mediation models in which format influenced trustworthiness via a two-stage pathway: first through either narrative immersion or cognitive ease, and subsequently through perceived transparency (results in Table 6).
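The two-stage pathway can be sketched as a sequence of least-squares regressions. This is an illustrative reimplementation of a two-mediator serial model, not the PROCESS macro. The helper names, path labels and synthetic data are assumptions introduced for the example.

```python
import numpy as np

def ols(y, *predictors):
    """Least-squares coefficients for y ~ 1 + predictors (intercept first)."""
    X = np.column_stack([np.ones(len(y)), *predictors])
    return np.linalg.lstsq(X, y, rcond=None)[0]

def serial_mediation(x, m1, m2, y):
    """Path estimates for X -> M1 -> M2 -> Y with all direct paths included."""
    a1 = ols(m1, x)[1]                      # format -> experiential mediator
    _, a2, d21 = ols(m2, x, m1)             # format -> M2 and M1 -> M2
    _, c_prime, b1, b2 = ols(y, x, m1, m2)  # direct effect and mediator -> outcome paths
    c = ols(y, x)[1]                        # total effect
    serial = a1 * d21 * b2                  # X -> M1 -> M2 -> Y
    total_indirect = a1 * b1 + a2 * b2 + serial
    return {"serial": serial, "total_indirect": total_indirect,
            "c_prime": c_prime, "c": c}

# Synthetic demonstration data (not the study's data).
rng = np.random.default_rng(3)
x = np.repeat([0.0, 1.0], 60)                        # communication format
m1 = 0.8 * x + rng.normal(size=120)                  # e.g. narrative immersion
m2 = 0.5 * m1 + 0.2 * x + rng.normal(size=120)       # e.g. perceived transparency
y = 0.6 * m2 + 0.1 * m1 + rng.normal(size=120)       # e.g. perceived trustworthiness
paths = serial_mediation(x, m1, m2, y)
```

The serial indirect effect is the product of the three paths along the chain, and it is one of three specific indirect effects that together with the direct effect sum to the total effect.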

Serial mediation model full outputs (PROCESS Model 6,

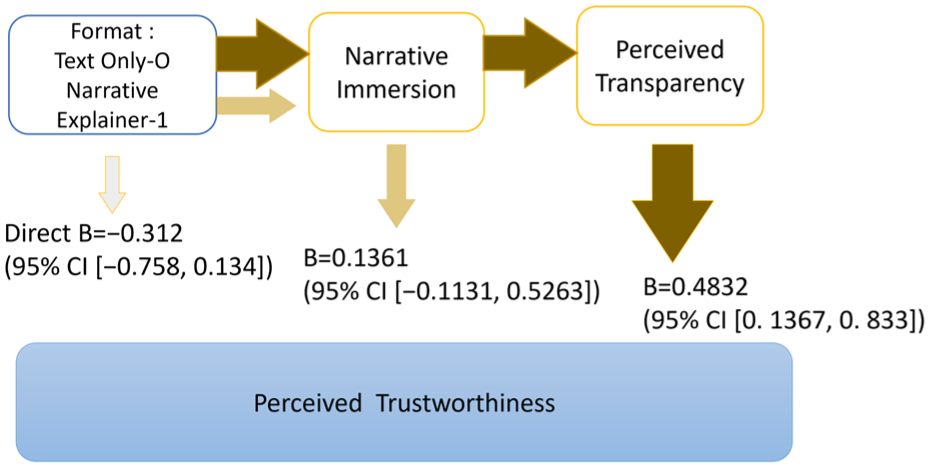

Model 1: Format → Narrative immersion → Transparency → Trustworthiness

In the immersion-based serial model, the indirect path from format to trustworthiness via narrative immersion and then transparency was significant (

Serial mediation model for the narrative pathway. Arrow thickness and colour intensity correspond to the relative strength of the estimated associations.

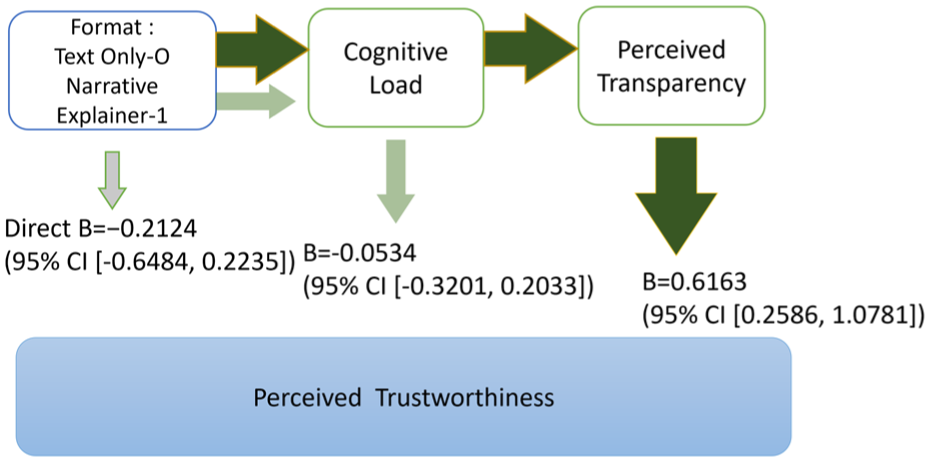

Model 2: Format → Cognitive ease → Transparency → Trustworthiness

Similarly, the serial path through cognitive ease and then transparency was significant (

Serial mediation model for the cognitive pathway. Arrow thickness and colour intensity correspond to the relative strength of the estimated associations.

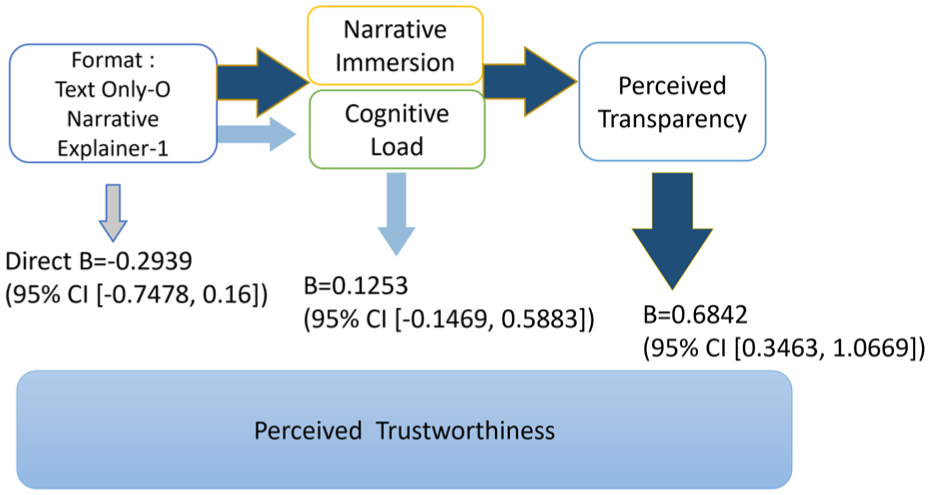

Model 3: Format → Narrative immersion and cognitive ease → Transparency → Trustworthiness

To examine how multiple experiential factors may jointly contribute to transparency judgements, narrative immersion and cognitive ease were included together in a serial mediation model. The sequential indirect effect from format through experiential processing and perceived transparency to trustworthiness was significant (

Serial mediation model including narrative immersion and cognitive ease. Arrow thickness and colour intensity correspond to the relative strength of the estimated associations.

In each model, the direct effect of format on trustworthiness was non-significant once experiential variables and perceived transparency were included, indicating that the association between communication format and trustworthiness judgements was largely accounted for by these experiential pathways.

Discussion

This study examined how communication format shapes citizens’ perceptions of transparency and perceived trustworthiness in government AI disclosures through the Cognitive-Narrative Mediation (CNM) framework. In the present experimental context, the findings indicate that supplementing a text-based AI transparency statement with a video explainer was associated with higher perceived trustworthiness by altering how disclosures were experienced—specifically by reducing perceived cognitive load and increasing narrative immersion, which in turn informed perceptions of transparency. Below, we discuss the key findings in relation to prior work, followed by implications for transparency communication design.

Format effects and perceptual experience (RQ1)

The first set of results shows that format matters for how transparency is experienced. Participants who viewed the narrative video explainer perceived the agency as more transparent and more trustworthy, found the explanation easier to understand and reported higher engagement than those who read the text-only statement. Because the two conditions contained equivalent substantive content, these differences reflect changes in how information was processed rather than what was disclosed. This supports the view that transparency is not merely an informational state but a subjective, experiential construct.

Qualitative feedback from the text-only group underlines the inherent limitations of a purely textual ATS. Some participants described the document as “not engaging … plain and boring. Visuals are also needed,” while another noted that “while the text was clear and succinct, I still needed to read it a few times through to understand and comprehend it.” These comments suggest that even relatively well-written government texts can still feel effortful for citizens. As an “add-on” tool, the video explainer partially compensates for these deficits at the level of experiential processing, without altering the legal authenticity of the ATS, since the video was strictly grounded in the official text.

From a cognitive perspective, the video’s temporal pacing, audio-visual cues, and human-centred storyline likely reduced extraneous cognitive load by segmenting information and guiding attention to key points. This aligns with multimedia learning research showing that narration-visual integration and temporal contiguity can ease processing demands and support comprehension.18,21,24 Evidence from instructional video research further indicates that the on-screen presence of a human presenter enhances viewers’ visual attention and social-cognitive engagement during multimedia learning. 70 Although this evidence comes from educational settings, the underlying attentional and social cues are not unique to learning environments. Similar mechanisms have been documented in narrative communication research, where character-driven stories increase immersion and emotional involvement.22,57 Consistent with these mechanisms, participants’ comments on the video explainer, such as “this format was much easier to understand and comprehend,” suggest that the narrative-style video format supported more fluent and accessible processing. However, these gains should be interpreted as consequences of the overall explainer-format bundle, rather than as evidence that any single component, including narrative framing, is independently responsible.

The absence of a statistically significant effect on behavioural trust intention is theoretically informative rather than problematic. While the video explainer restructured the experiential basis on which transparency and trustworthiness judgements were formed, it did not produce an immediate shift in participants’ willingness to rely on the agency. This distinction underscores that perceived trustworthiness reflects an evaluative judgement, whereas behavioural trust involves a downstream commitment that unfolds over longer temporal horizons. In the context of AI governance, transparency design choices may therefore shape how disclosures are interpreted and evaluated before they translate into concrete behavioural reliance.

Overall, the RQ1 findings indicate that supplementing an ATS with a video explainer reshapes how transparency and trustworthiness are perceived, while keeping the underlying content unchanged.

Dual-pathway mediation: Evidence for the CNM mechanism (RQ2)

The mediation analyses provide evidence statistically consistent with the dual-pathway mechanism proposed by the CNM framework. When tested in separate mediation models, both perceived cognitive ease and narrative immersion served as mediators linking communication format to perceived trustworthiness. Once these mediators were entered into the models, the direct effect of format on perceived trustworthiness became non-significant, with the majority of the total effect transmitted through the mediators (approximately 89.42% via the narrative pathway and 87.56% via the cognitive pathway; Table 5). Here, the narrative immersion and cognitive ease pathways refer to the measured experiential constructs rather than independently manipulated stimulus attributes.

This pattern indicates that the association between communication format and trustworthiness judgements is statistically consistent with an indirect effect involving experiential processing. When a disclosure feels easier to follow and more engaging, participants may infer greater competence, benevolence and integrity, consistent with the ABI model of trustworthiness.25,41 Importantly, the presence of two mediation pathways is consistent with established dual-process accounts of information processing, including the elaboration likelihood model 19 and broader dual-process perspectives, 20 which distinguish more deliberative, systematic processing from faster, experience-driven modes of evaluation. Within this theoretical framing, cognitive ease and narrative immersion correspond to theoretically distinct experiential dimensions: one concerns whether information can be processed with minimal effort, while the other concerns whether information is meaningfully organised and experientially coherent.

Communication format thus appears to exert its influence primarily through experiential conditions rather than acting as an independent trust signal. Once these conditions are accounted for, the residual direct effect of format is no longer statistically detectable in this sample, indicating that trustworthiness evaluations are more closely associated with experience than with format cues per se. Building on these dual-process traditions, CNM advances prior work by specifying how differentiated experiential routes are structurally mediated in AI transparency communication, rather than treating trust as a direct outcome of format or persuasive cues.

Extended mediation: Transparency as a cognitive-narrative bridge (RQ3)

The serial mediation analyses further clarify how perceived transparency functions as a conceptual bridge between users’ experiential responses and their trustworthiness judgements. The results show that both immersion and ease of processing significantly predicted perceived transparency, which in turn predicted perceived trustworthiness. That is, when explanations were experienced as easier to process or more engaging, participants were more likely to perceive the agency’s disclosure as clearer, more open, and more accurate, consistent with the DAC model of transparency.10,41

This finding reframes transparency as a psychologically constructed state rather than a fixed property of the message.5,6 It also helps explain the transparency-trust paradox documented in prior work: transparency is associated with more positive trust outcomes only when the audience can meaningfully process and interpret the disclosure.7,42,45 If information is merely dumped on citizens in dense or technical form, it may formally satisfy disclosure requirements while failing to be recognised as genuinely transparent or trustworthy.29,71

By addressing RQ3, this study advances understanding of the bridging role of perceived transparency within the DAC-ABI framework. 10 While prior research has documented a close link between transparency perceptions and trustworthiness judgements, our findings indicate that such perceptions are shaped by how audiences process and experience disclosed information, rather than emerging automatically from disclosure itself. From this perspective, perceived transparency functions as a proximal evaluative step through which media-induced experiences inform judgements of institutional trustworthiness. Communication design, particularly narrative-style video explainers, can play a meaningful role in shaping how citizens interpret an agency’s competence and integrity, rather than merely serving as a neutral channel for information delivery.

Implications for the design of AI transparency communication

These findings carry several practical implications for the design of government AI transparency communication and public-facing explanations.

First, agencies increasingly explore representational formats beyond static, text-heavy disclosures. Formats such as short videos, infographics or interactive summaries alter how ATS information is encountered and interpreted. Consistent with research on multimodal presentation, combining narration with visual guidance may influence information uptake without altering substantive content. Crucially, alternative formats should remain tightly anchored to official disclosures to preserve informational accuracy and institutional credibility.

Second, the patterns associated with the narrative framing embedded in the video stimulus indicate the potential value of relatable human perspectives in transparency communication. Government communication often adopts an impersonal and bureaucratic tone, which can make institutional practices difficult for citizens to follow. Presenting AI use through ordinary perspectives can make disclosures more socially interpretable and may support perceptions associated with benevolence and integrity. At the same time, narrative approaches should remain genuine and non-manipulative. Overly dramatic or overtly persuasive storytelling risks being perceived as strategic messaging or propaganda, which may undermine trust rather than enhance it.52,53,72

Third, the mediation patterns suggest that perceived cognitive ease functioned as an experiential pathway shaping evaluative responses. Participants’ judgements were associated with how easily disclosures could be processed and interpreted. While not a universal prescription, this finding indicates that reducing avoidable complexity may support transparency communication. Practices such as plain language, explicit structure and concise information hierarchy may contribute to improved interpretability across communication forms.

Finally, the CNM perspective suggests that AI transparency tools across different communication environments, such as videos, dashboards, or other explanatory interfaces, are more likely to foster perceived transparency and trustworthiness when they jointly reduce extraneous cognitive load and support experiential engagement.

From a visualisation perspective, the video stimulus serves as an illustrative instance of how representational design choices, including temporal organisation, symbolic encoding, and attention-guidance cues, may be associated with viewers’ processing experiences. The CNM framework therefore provides a conceptual lens for interpreting how visualisation design characteristics embedded within formats may relate to users’ experiential and evaluative responses in transparency-oriented communication. The present findings should be interpreted within the constraints of the experimental configuration rather than as prescriptive design rules. They are intended to inform researchers and designers about plausible experiential mechanisms associated with communication-format variations, not to define an exhaustive visualisation design space.

Limitations and future research

First, as with many studies relying on self-report measures of subjective experience, some degree of construct overlap is unavoidable. In particular, perceived cognitive load, narrative immersion, and perceived transparency capture adjacent aspects of viewers’ experience during a single exposure to the disclosure. Although discriminant validity checks indicate that these constructs are not statistically redundant, their conceptual proximity may amplify estimated mediation effects. Accordingly, the mediation results are interpreted cautiously, as supportive of the CNM framework at a structural level rather than as definitive evidence or precise estimates of distinct psychological effects. Importantly, this study was designed for theory testing and mechanism identification rather than population-level effect estimation.

Second, the sample size (

Third, the study was conducted in a controlled experimental environment using a hypothetical scenario. This design enabled precise manipulation of communication format and strengthened internal validity, but it may not fully capture how citizens encounter and interpret AI transparency statements in everyday contexts. Responses to ATS presented on official government websites, especially when they inform consequential decisions, may differ from those observed in the laboratory. Field experiments, A/B testing in live digital environments, and longitudinal designs would provide valuable insight into whether the observed effects persist, attenuate, or evolve over time.

Fourth, although the experimental stimulus was grounded in an authentic AI transparency statement, certain elements of wording and structure were adapted to support narrative clarity. In practice, agency disclosures are often shaped by legal, political, and organisational constraints that were not fully represented in the experimental materials. Future research conducted in collaboration with government agencies could employ live or minimally modified ATSs to examine how narrative explainers function under realistic institutional conditions.

Fifth, although the mediation analyses are statistically consistent with the CNM framework, they do not establish causal mediation. Cognitive ease and narrative immersion were not independently manipulated, and their ordering reflects a theoretically grounded assumption rather than an experimentally verified causal sequence. Moreover, these experiential constructs may be interrelated—for example, cognitively easier content may also feel more engaging, or immersive narratives may be experienced as less effortful. Future research should adopt orthogonal or factorial designs that independently manipulate cognitive load and narrative immersion, such as through variations in information density, narrative richness or pacing, to more rigorously test causal pathways and mediator independence.

Sixth, at the experimental-design level, the comparison reflects a bundled communication-format manipulation rather than the isolation of any single design factor. The conditions therefore differed along multiple format-related dimensions, including modality, attentional guidance, exposure control and pacing. Consequently, the observed effects cannot be cleanly attributed to any single design component. The video explainer imposed a fixed, guided pace, whereas the text condition allowed self-paced reading, meaning some observed differences may partly reflect temporal structuring. Prior studies of linear data videos similarly note that structural interventions do not uniformly reduce perceived cognitive burden under fixed pacing conditions. 73 Future research could further isolate temporal influences by directly varying pacing across formats.

Seventh, behavioural trust was measured using self-reported intentions, which capture immediate evaluations rather than observed behaviour or trust calibration in real-world government decision-making contexts. While the video condition produced clear effects on perceived transparency and trustworthiness, its effect on behavioural trust intention did not reach statistical significance. Future work should incorporate behavioural indicators, such as service uptake or interaction logs, to better capture downstream trust-related outcomes. In addition, the questionnaire included single-item background measures of general trust in government agencies and trust in AI systems, providing a coarse indication of baseline trust orientations. These items do not capture source-specific credibility or institutional trust and were therefore not treated as control variables in the analyses. Future research should explicitly consider baseline trust orientations and source-specific credibility in government AI transparency studies.

Finally, the present study focused on a single video format. The CNM framework itself is not tied to any specific medium and predicts that communication formats which jointly support cognitive fluency and narrative engagement may influence trust-related perceptions. Future studies could extend this work to other formats, such as interactive explainers, conversational agents, serious games or immersive VR/AR experiences, and examine potential moderators including prior trust in government, AI literacy, and political orientation.

Conclusion

This study examined how communication format shapes perceived transparency and trustworthiness within the Cognitive-Narrative Mediation (CNM) framework. By comparing a text-only AI Transparency Statement (ATS) with a text-plus video explainer while maintaining informational equivalence, the analysis focused on how disclosures are experienced rather than what is disclosed. Across conditions, perceived transparency (DAC) was statistically associated with cognitive ease and narrative immersion, consistent with the dual-pathway structure proposed by the CNM framework. The video explainer condition yielded higher perceived transparency, accompanied by higher cognitive ease and narrative immersion. These experiential differences were also reflected in perceived trustworthiness judgements.

At a theoretical level, the findings highlight the potential role of perceptual and experiential factors within the DAC-ABI framework. Transparency evaluation, in this view, is closely tied to users’ processing experiences and representational design rather than disclosure content alone. For public-sector communication, the results suggest that complementing formal ATS documents with accessible explanatory formats may facilitate how AI use is interpreted and evaluated, provided that informational accuracy and completeness are preserved. From a visualisation perspective, the study illustrates how representational characteristics may relate to citizens’ experiential responses in transparency-oriented contexts. More broadly, the CNM framework underscores that disclosure alone does not guarantee perceived transparency or trustworthiness, emphasising the importance of communication and representation design in AI governance.

Supplemental Material

Supplemental material, sj-docx-1-ivi-10.1177_14738716261435103 for From perceived transparency to trustworthiness: Testing the Cognitive–Narrative Mediation (CNM) framework in government AI policy communication by Yongqing Chen, Jie Liang, Nina Errey, Didar Zowghi, Muneera Bano, Kaye Chan and Jane Hogan in Information Visualization

Footnotes

Acknowledgements

We thank the anonymous reviewers for their constructive comments and suggestions. We are also grateful to all user study participants for their time and valuable contributions.

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Supplemental material

Supplemental material for this article is available online.

References
