Abstract

Research in machine learning has become very popular in recent years, with many types of models proposed to comprehend and predict patterns and trends in data originating from different domains. As these models get more and more complex, it also becomes harder for users to assess and trust their results, since their internal operations are mostly hidden in black boxes. The interpretation of machine learning models is currently a hot topic in the information visualization community, with results showing that insights from machine learning models can lead to better predictions and improve the trustworthiness of the results. Due to this, multiple (and extensive) survey articles have been published recently trying to summarize the high number of original research papers published on the topic. But there is not always a clear definition of what these surveys cover, what is the overlap between them, which types of machine learning models they deal with, or what exactly is the scenario that the readers will find in each of them. In this article, we present a meta-analysis (i.e. a “survey of surveys”) of manually collected survey papers that refer to the visual interpretation of machine learning models, including the papers discussed in the selected surveys. The aim of our article is to serve both as a detailed summary and as a guide through this survey ecosystem by acquiring, cataloging, and presenting fundamental knowledge of the state of the art and research opportunities in the area. Our results confirm the increasing trend of interpreting machine learning with visualizations in the past years, and that visualization can assist in, for example, online training processes of deep learning models and enhancing trust into machine learning. However, the question of exactly how this assistance should take place is still considered as an open challenge of the visualization community.

Keywords

Introduction

Machine learning (ML) techniques and tools have become ubiquitous in the process of analyzing data for diverse purposes. The underlying ML models are used, for instance, to predict future events based on the data at hand.1,2 However, ML models are not always trusted by analysts, even if they offer high-quality results in comparison with other analytical methods. 3 In many cases, they are considered as black boxes, that is, the internal functionality of the underlying algorithms is not entirely understandable for analysts,4–6 and even ML experts struggle to tune and parameterize those models. 7 The sheer complexity of the algorithms that were invented is a critical factor that makes the data analysis process challenging.8–10

Nowadays, it is widely accepted that information visualization (InfoVis) can aid in this process and offer guidance toward more (and better) interpretable ML models. 11 Explorable and transparent ML models enable users to understand, trust, and manage these models. InfoVis plays a crucial role in analyzing such models and, as a result, provides guidance to users, interaction techniques to control them, and information about their inner workings that are often hidden away. 12

Within the past 5 years, the number of publications that focus on the interpretation of ML models using InfoVis techniques has significantly increased, which makes it difficult for novice researchers or analysts to get in the field quickly. In order to acquire the necessary knowledge, they have to spend many hours searching and reading related papers and articles. An obvious solution is to look for more comprehensive survey articles that provide an overview of a specific aspect of ML models and how to interpret them; but even here, they will find a fairly high number of survey papers. In this case, in particular, they might also often not be able to find the needed information due to either too-specific focus or a mixture of various topics in such survey papers.

This challenge caught our attention during the search for relevant work to base our research on improving the interpretability and explainability of ML models with the use of InfoVis. Throughout that stage, we gathered and analyzed several surveys that summarize the related work in the topic with the goal of getting an overview of the field. But due to the number of surveys, the different perspectives shown in each, and the lack of common ground between them, we found that task to be much less straightforward than we expected.

In order to contribute to this challenge, we present this meta-survey—or “survey of surveys” (SoS)—which summarizes and describes survey publications that focus mainly on the exploration and interpretability of ML models, that is, opening the black box of the various types of ML algorithms and visualizing them. At this point, it is important to clarify our use of the terms interpretable and explainable ML, following the definitions of Gilpin et al. 13 It is common for interpretability and explainability to be used interchangeably, but Gilpin et al. argue that there are solid reasons to differentiate between them: the main purpose of interpretability is to describe the internals of an ML model in an understandable way for humans. In addition to that, explainable ML should explain the models that are already understandable, in order to obtain the trust of users or to generate new insights about the reasons for their decisions, subsequently answering the question of why a specific decision has been made by the model. Here, any explanation is also assessed according to its completeness, that is, the ability to describe the model operations precisely, also in other, more general situations. Both properties, interpretability and completeness, often contradict each other, because the most accurate explanation may not be very interpretable. Consequently, “explainable models are interpretable by default, but the reverse is not always true.” 13 Thus, we carefully select and use these terms throughout our paper according to these guidelines; for more details, we refer the reader to the paper by Gilpin et al. 13

Our meta-analysis proposed in this article has two main target groups:

Early-stage researchers ( ) who struggle to collect information from surveys and papers that refer to the interpretability and explainability of ML algorithms. We intend to support early-stage researchers by providing information that may guide them to the correct surveys according to their goals, help them not to lose time when reading extensive surveys that are not useful for their current research focus, or simply better organize and plan their reading schedule.

) who struggle to collect information from surveys and papers that refer to the interpretability and explainability of ML algorithms. We intend to support early-stage researchers by providing information that may guide them to the correct surveys according to their goals, help them not to lose time when reading extensive surveys that are not useful for their current research focus, or simply better organize and plan their reading schedule.

Senior researchers ( ) in InfoVis and/or visual analytics (VA), who are interested in the interpretability and explainability of ML models, may want to learn more details on how the existing surveys were conducted or how they differentiate from each other. They may also benefit from research gaps derived from those surveys and areas not yet covered.

) in InfoVis and/or visual analytics (VA), who are interested in the interpretability and explainability of ML models, may want to learn more details on how the existing surveys were conducted or how they differentiate from each other. They may also benefit from research gaps derived from those surveys and areas not yet covered.

Although we provide a detailed and descriptive summary of the current ecosystem of surveys in our focus area, there are also inherent limitations of this work that must be considered. The available number of surveys is relatively small (especially when compared to McNabb and Laramee’s SoS paper), 14 and they were published in only 5 years. This is not due to methodological issues, but an inherent characteristic of the research area itself, which is still quite young. The surge of research papers in the past decade has been reflected in a similar wave of recent surveys in the past few years, which motivated our work. Thus, while this is an early work and our scope is arguably restricted, that characteristic has been taken into account in our methodology. Our goal was not to derive a deep theoretical framework from the available data; we would not be able to do that with such a small sample in a relatively restricted time period. Instead, we focus on describing the current surveys at a high level, where they differ, and how they treat the current research to help with guiding researchers into more meaningful and efficient future work. As the research area matures and more papers and surveys are available, more interesting and meaningful patterns and trends will surely arise in the future from larger samples of primary research. Concretely, our contribution comprises the following points:

The aggregation and description of research opportunities (i.e. open challenges) described in survey papers concerning interpretable and explainable ML to assist the mentioned main target groups.

The identification of research subtopics and trends under the umbrella of interpretable ML.

The analysis of various temporal and topical aspects related to the survey papers themselves and the publications they discuss.

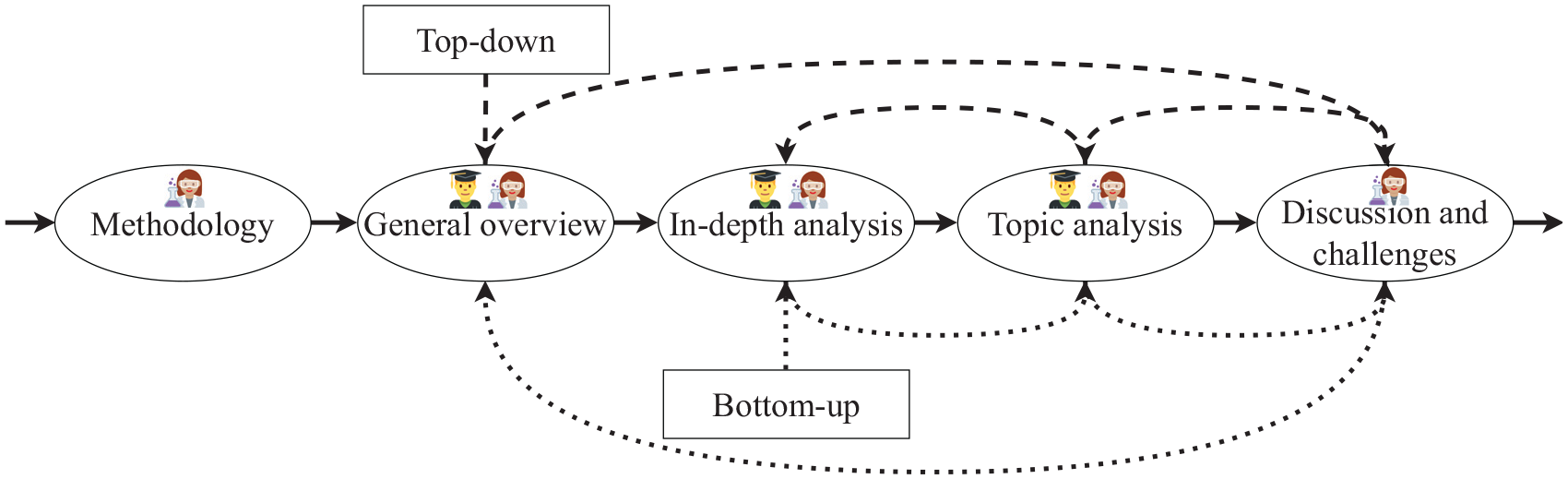

To support a smooth and progressive immersion of our readers into interpretable ML with the help of visualization, we propose two alternative flows: (a) bottom-up, starting from the summaries of each survey paper, or (b) top-down, starting from our collection of high-level information on the whole field of visualizing ML models. Figure 1 displays both flows and how early-stage researchers and senior researchers might follow specific paths in order to read this paper.

Flowchart to guide readers on possible paths to read and interpret our SoS. The usual path is depicted with solid arrows connecting each section linearly. The dotted arrows show the two alternative paths that correspond to the bottom-up and top-down flows. Following the standard path, early-stage researchers are probably most interested in getting an overview first, then moving on to the details and specific models, and finally finding relationships between survey papers, topics, and individual papers. However, senior researchers might be curious to learn how to write SoS or validate our results by reading the “Methodology” section. Finally, from the “Discussion and challenges” section, they might extract some knowledge from the lessons learned and explore the research opportunities we identified. However, in contrast to the suggested early-stage researchers’ path, the order which senior researchers might follow is more varied, depending on individual preferences and interests.

The structure of our SoS is given as follows: in the next section, we describe the differences between our work and other SoS papers focusing on InfoVis and VA. The subsequent section contains the methodology that we followed in order to gather the survey papers. After this, a first general overview of our research subject is described from aggregated data that we collected. The subsequent section is about a more detailed analysis of the single categories in which we classified the collected survey papers. Next, we present the results of a topic modeling approach applied to all individual papers discussed in the individual surveys. In the penultimate section, we discuss observations and our interpretations from the results presented in the previous sections, and we present open challenges/research opportunities in the field. Finally, the last section concludes this article.

Related work

Due to the potentially large number of survey articles that aim to overview research on a specific topic, it is common in various scientific areas and communities to have SoS papers or meta-surveys that, in turn, classify those survey articles. Some examples are the SoS by Saini et al. 15 on vehicular ad hoc networks or the work by Giraldo et al. 16 on security/privacy approaches for cyber-physical systems. Visualization journals and conferences have introduced specific tracks that try to tackle the potentially large number of survey papers. For instance, ACM Computing Surveys publish comprehensive, readable tutorials and survey papers that give guided tours through the literature and explain topics to those who seek to learn the basics of areas outside of their specialties. Also, conferences such as EuroVis have a special track for state-of-the-art (STAR) reports, which is vital as the community grows.

To the best of our knowledge, there are only three SoS papers for the InfoVis and VA domains, written by Alharbi and Laramee, 17 Alharbi et al., 18 and McNabb and Laramee. 14 Alharbi and Laramee 17 discussed 13 survey papers on text visualization and categorized them into five different groups. In Alharbi et al., 18 the authors gathered 11 survey papers regarding visualizations of computational biology and described solved issues and open challenges. The authors of the latter 14 gathered more than 80 survey papers and extensively analyzed half of those. Although there is a small overlap with this work in terms of surveys included (3 out of 18), the goals of the two SoS papers are different: while McNabb and Laramee 14 concentrate on different topics of InfoVis and provide potential future directions for the community as a whole, we focus specifically on the exploration and interpretability of ML models using InfoVis.

Methodology

Our work covers the subject of interpretable ML models and exploration of ML models using visualization in the many stages of the process of analyzing data with ML, for example, (a) labeling and pre-processing the data, (b) letting the user handle the data with queries, (c) making the algorithm transparent during its execution, (d) interacting with the ML algorithm to steer it, and (e) comparing different algorithms and evaluating the results.

Following the guidelines from McNabb and Laramee, 14 and after an initial pilot phase where we manually searched for survey papers from the last 10 years, we converged on using combinations of relevant keywords such as “black box,” “ML,” “interactive,” “models,” “deep learning,” “neural networks,” and other derivatives with similar meanings. The majority of the included surveys were found by also including words such as “survey,” “overview,” “taxonomy,” or “state of the art,” but some of them were harder to find, so we had to include more keywords such as “literature review,” “review,” “categorization,” or “classification.” We primarily focused on important venues and proceedings that regularly publish InfoVis papers, including IEEE Transactions on Visualization and Computer Graphics (TVCG), Computer Graphics Forum (CGF), IEEE Computer Graphics and Applications (CG&A), Information Visualization Journal (IVJ), Computers & Graphics (C&G), Visual Informatics (VisInf), IEEE Visual Analytics in Science and Technology (VAST), IEEE InfoVis, Eurographics Visualization (EuroVis), IEEE Pacific Visualization (PacificVis), ACM Conference on Human Factors in Computing Systems (CHI), and ACM Intelligent User Interfaces (IUI). For both support and validation, we also looked through the SoS discussed in the previous section 14 and searched for relevant survey papers among their references.

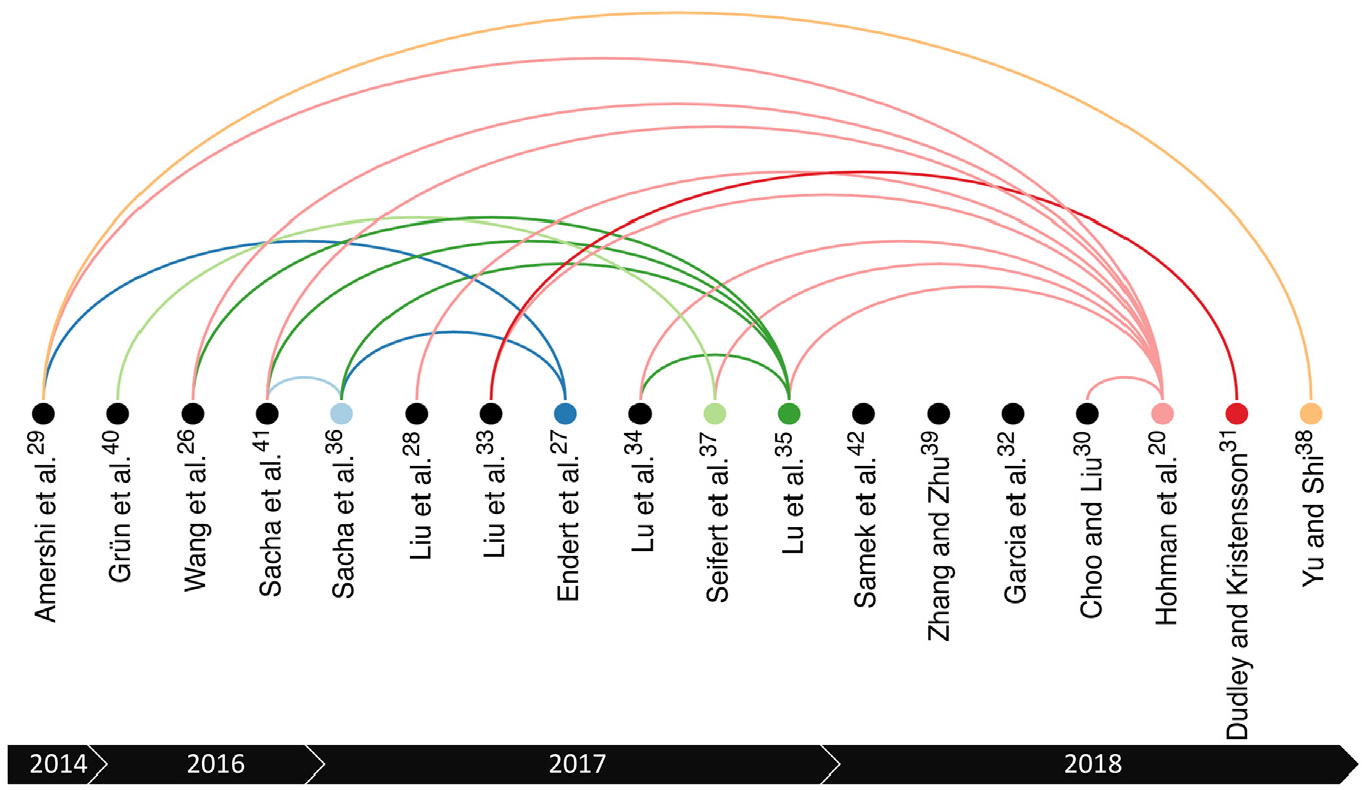

This selection process was repeatedly executed (and its results updated) for 8 months, during which we monitored and scanned the InfoVis publications in order to identify as many surveys as possible. For the sake of completeness, the Related Surveys sections and references from the identified surveys were also used as sources of new surveys (a process known as snowballing). 19 In more detail, this selection phase was performed in three steps: (a) we started by reading the titles of every reference to detect potential closely related survey papers; (b) then, we checked the abstract of each survey selected in Step 1 and decided if at least a part of it refers to interpretable and/or explainable ML models; (c) finally, we checked the Related Surveys section of each survey paper selected in Step 2 and tried to find even more candidate surveys. Some of the Related Surveys sections 20 were very informative and helped us to considerably increase the number of results that we gathered (see Figure 2).

Citations between our selected survey papers, from 2014 to 2018. The color of the nodes and links illustrate the sources of citations, that is, papers that cite at least one previous survey. Note that this color-encoding is different from the one used in Table 3.

Throughout this search procedure, we identified some related (and potentially interesting) survey papers, which were not included in our analysis for a variety of reasons. On one hand, Minar and Naher, 21 for example, is accessible but not published in a peer-reviewed venue. Abdul et al., 22 on the other hand, was not considered relevant since the authors provide a very general overview of the full landscape of existing papers on explanations and interpretable systems to highlight research opportunities and open challenges for the human–computer interaction (HCI) community. Two more papers that we excluded are Adadi et al. 23 and Guidotti et al., 24 which focus on describing mostly non-visualization methods used in order to open the black boxes and explain models. Finally, Sacha et al. 25 gathered a series of papers that focus on VA for assisting ML in order to evaluate their ontology. Although the gathered papers are related to our work, the authors do not analyze these papers in detail, and they did not perform a reproducible search as in a typical literature review, survey, or taxonomy paper.

Some of the survey papers—such as Wang et al., 26 Endert et al., 27 and Liu et al. 28 —initially seemed too general, but after a more detailed investigation we agreed that they actually contain information and papers related to the interpretation of ML models with the use of visualization. Consequently, we included them in our SoS, but we did not use them in their entirety, only the related and important parts.

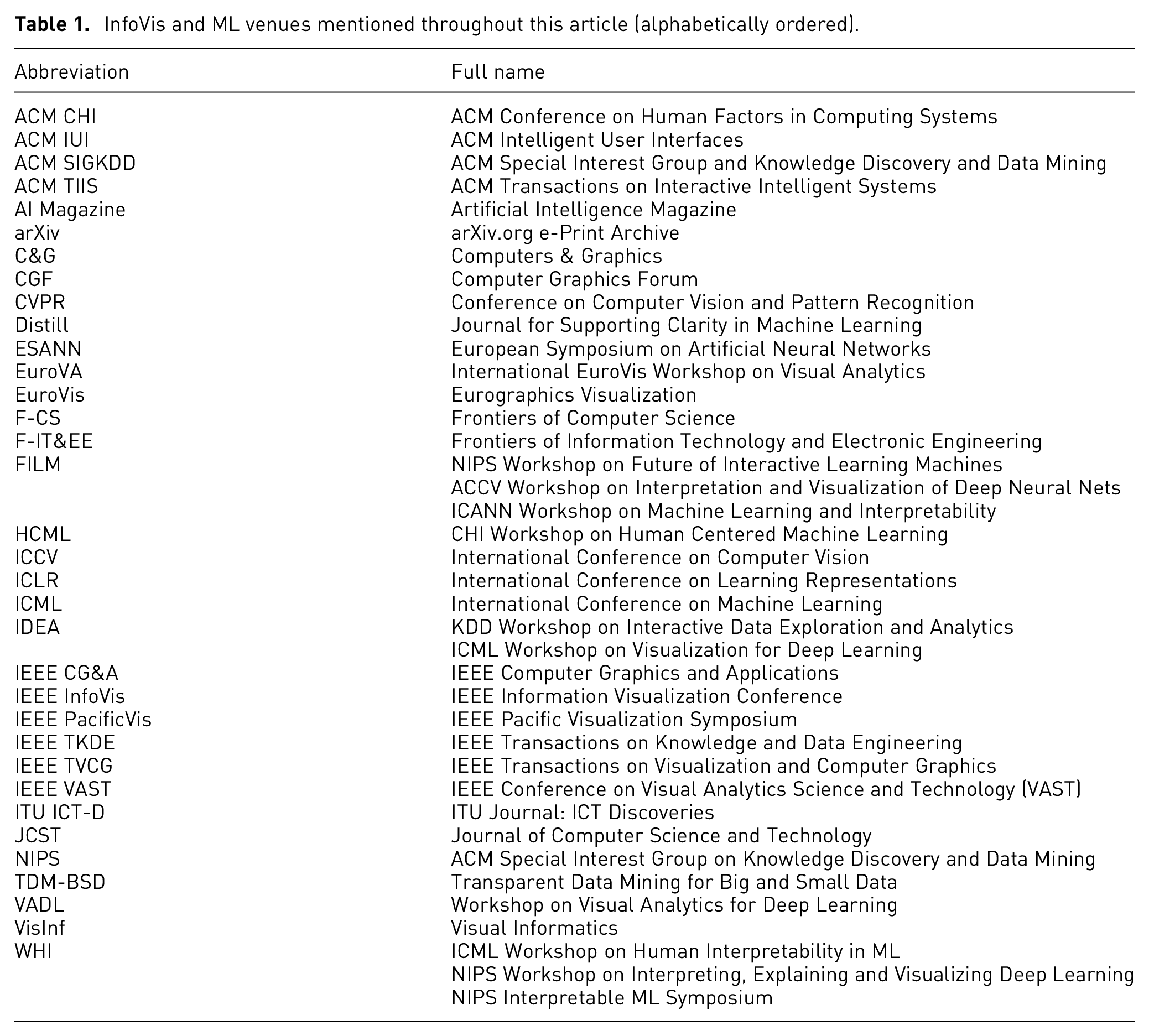

In Table 1, we list (in alphabetical order) the abbreviations and full names of the venues mentioned throughout our article, for reference. Some of the venues also aggregate other smaller subvenues, such as the entry IDEA, which includes two different workshops: KDD Workshop on Interactive Data Exploration and Analytics and ICML Workshop on Visualization for Deep Learning.

InfoVis and ML venues mentioned throughout this article (alphabetically ordered).

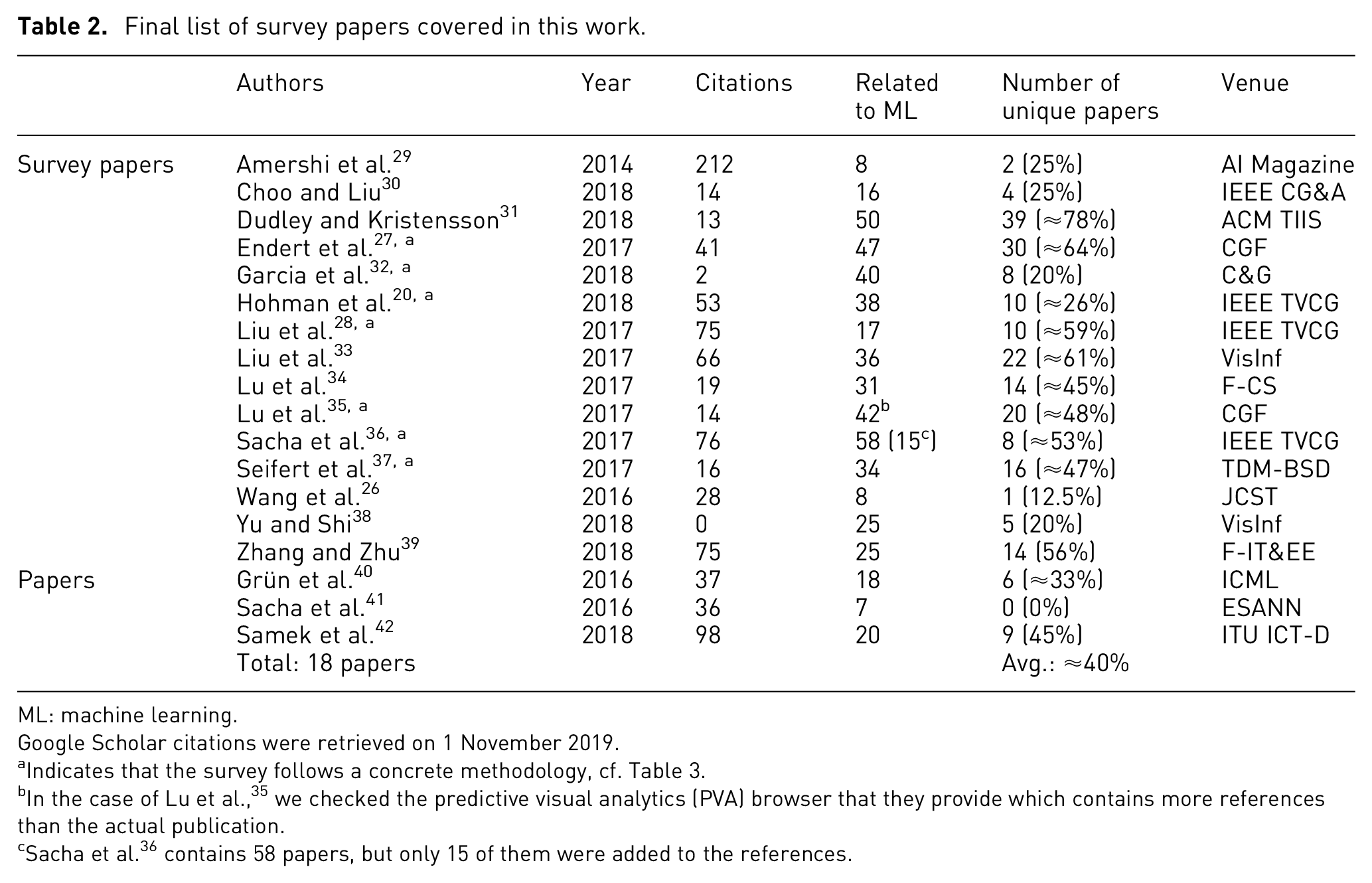

In Table 2, we present the final list of all the survey papers included in this meta-analysis, along with some associated details, such as the venues from which we retrieved them. We can observe that not all the venues were part of the initial search. We started with the primary, most important venues (as described previously), but survey papers from the secondary venues were also added due to the use of the aforementioned snowballing process. As a result, we almost exclusively got survey papers from 2016 to 2018.

Final list of survey papers covered in this work.

ML: machine learning.

Google Scholar citations were retrieved on 1 November 2019.

Indicates that the survey follows a concrete methodology, cf. Table 3.

In the case of Lu et al., 35 we checked the predictive visual analytics (PVA) browser that they provide which contains more references than the actual publication.

Sacha et al. 36 contains 58 papers, but only 15 of them were added to the references.

Although we believe that we managed to identify all important survey papers related to the subject, a threat to the validity of this methodology could be to miss surveys which contain only a small paragraph on our subject of interest. Such surveys may focus on another research topic and were probably not included.

General overview

We start our meta-analysis of the survey literature in interpretable ML models using visualization by presenting an overview of the final results of our search, according to the methodology described in the previous section. Table 2 lists all 18 papers we covered, divided into two categories: (a) survey papers, including papers with the usual survey format and published in well-known venues and (b) papers, which are research papers (not surveys) that contain briefer but also interesting literature reviews.

Although most of the results are in the period of 2016–2018, one paper from 2014 was found through our search process. Table 2 also shows information related to the relevance of included surveys, such as the number of citations (as extracted from Google Scholar at the time of writing) and the number of unique research papers found in each, that is, papers that do not appear in any other survey. On average, a published survey in the area includes approximately 40% of new, unique papers, which points to the advantages of regularly keeping up with new surveys instead of focusing only on a few. This information can be relevant both to experts, who might wish to ignore a survey if it already contains too many covered papers, and to newcomers, to whom it might be important to obtain different points of view for the same papers in order to broaden their perspective.

Figure 2 shows the citations between our selected papers. In general, they cite at most two of the previous surveys, except Hohman et al. 20 and Lu et al. 35 This suggests the importance of a meta-survey, such as ours with a comprehensive outlook on the area: in contrast to what one might assume, recent individual surveys do not necessarily mention or cover the previous work in a comprehensive way.

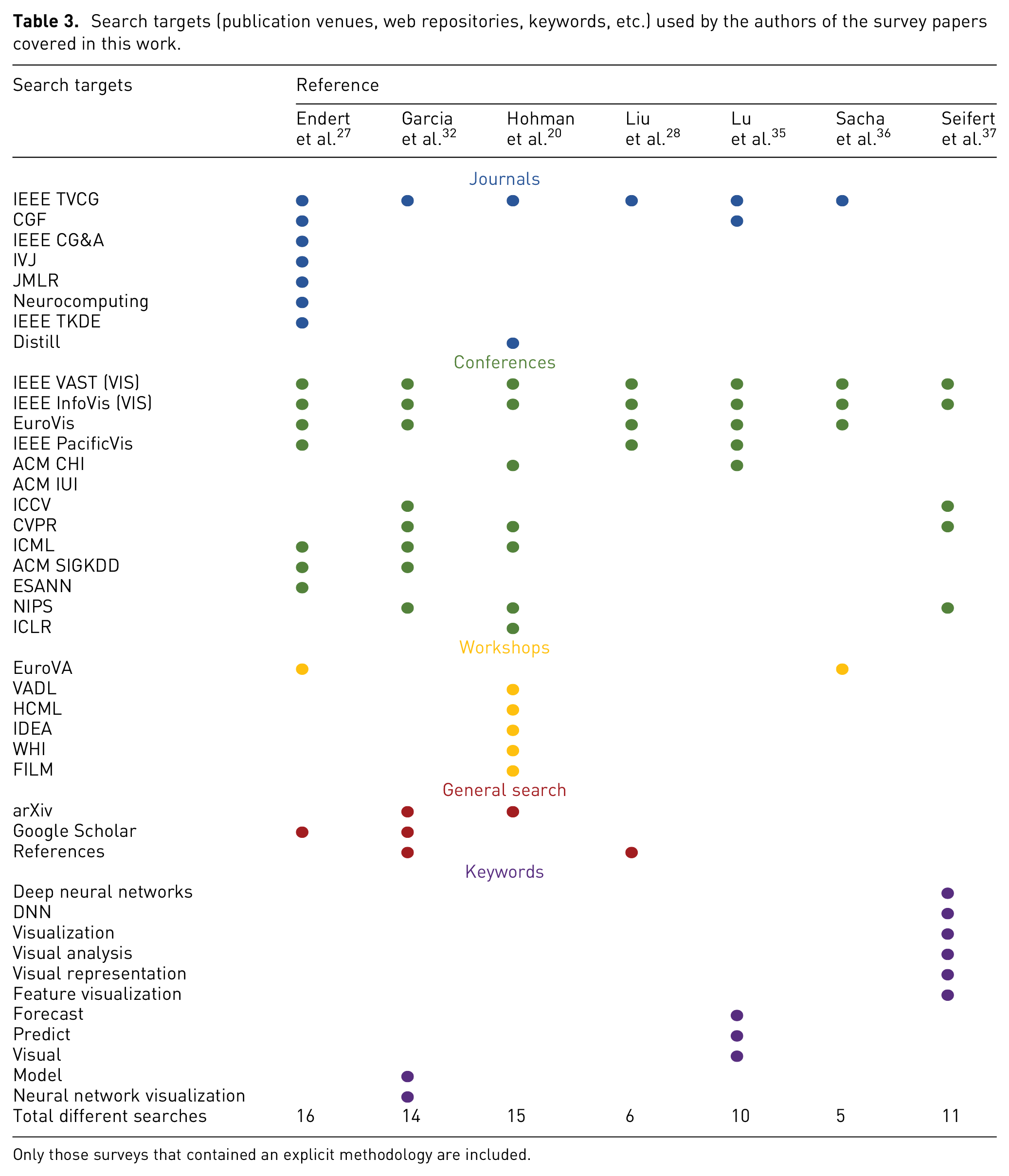

In Table 3, we summarize the methodology information of those seven surveys where the authors described their methodology explicitly, including the venues that they searched during the process of collection. As we can observe, only 7 out of the 18 survey papers follow a concrete methodology. This seems to be a weak point of the survey literature in the area, which needs improvement. On one hand, the most popular venues (i.e. with high impact factors) were usually covered by the authors, although with a variable degree of consistency (as can be seen from the relative sparsity of the matrix). On the other hand, some other venues which are known to contain papers on VA for interpreting ML models (e.g. ACM IUI) were not covered by any of the included survey papers. Another potential weak point is that most of the survey papers searched for only IEEE TVCG from the journals category. In addition, 3 out of the 7 survey papers made a general search on the web. Garcia et al. 32 and Liu et al. 28 found papers by checking the references of other publications, which makes it hard to assess the systematization of their process objectively. In addition, 3 out of these 7 survey papers searched for specific keywords, such as deep neural networks (DNNs), which also inspired our own methodology.

Search targets (publication venues, web repositories, keywords, etc.) used by the authors of the survey papers covered in this work.

Only those surveys that contained an explicit methodology are included.

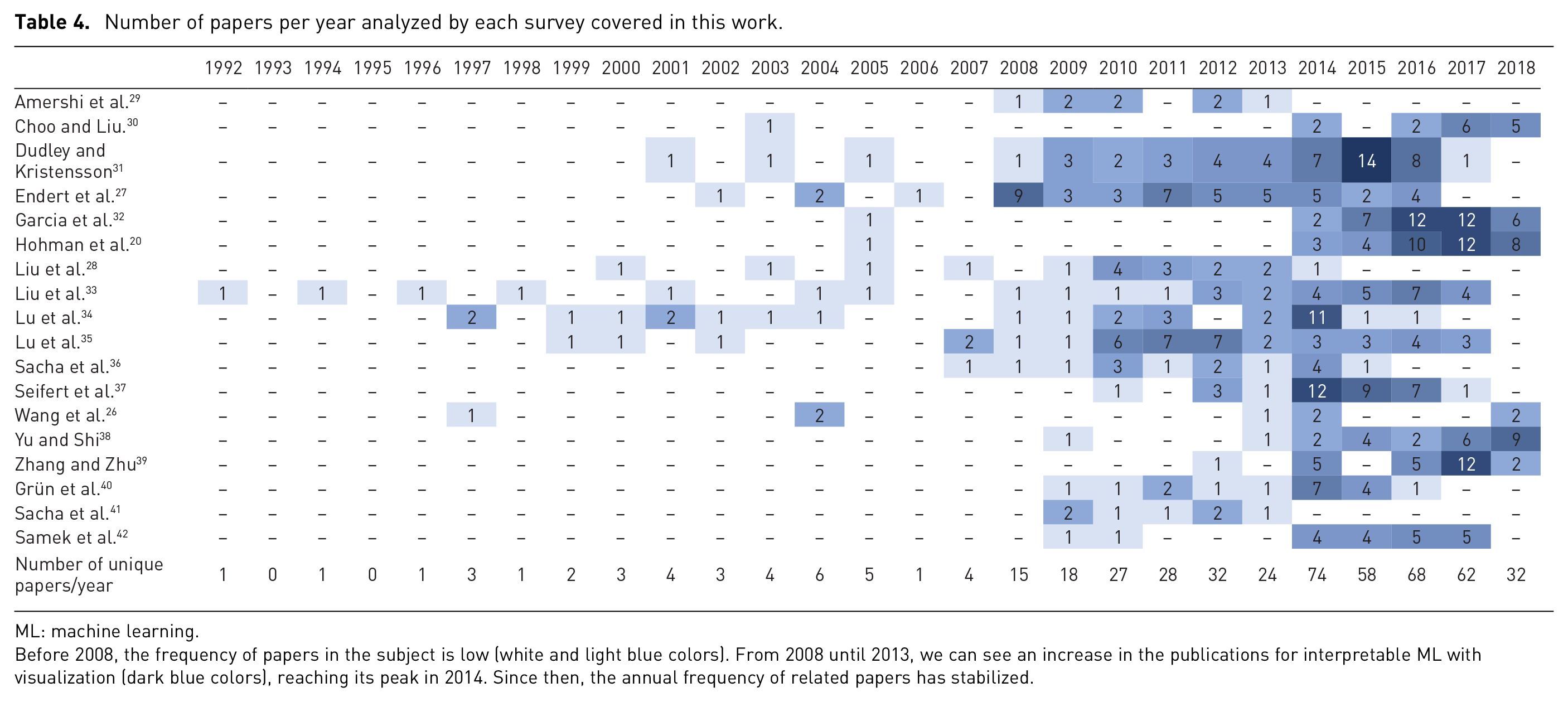

In Table 4, we present the yearly distribution of papers analyzed by the included surveys. Although the surveys seem to have covered long periods of time, it is hard to capture a clear picture since most of them do not give explicit information about the considered time frame. From our analysis, the survey authors include only a few (2 on average) papers from 1992 to 2007, while the last 10 years are much more heavily covered with the number of papers published each year still growing. For example, we can observe that in the period of 2008–2013, there were 144 papers, while 2014–2017 alone surpassed that with 262 papers on the subject.

Number of papers per year analyzed by each survey covered in this work.

ML: machine learning.

Before 2008, the frequency of papers in the subject is low (white and light blue colors). From 2008 until 2013, we can see an increase in the publications for interpretable ML with visualization (dark blue colors), reaching its peak in 2014. Since then, the annual frequency of related papers has stabilized.

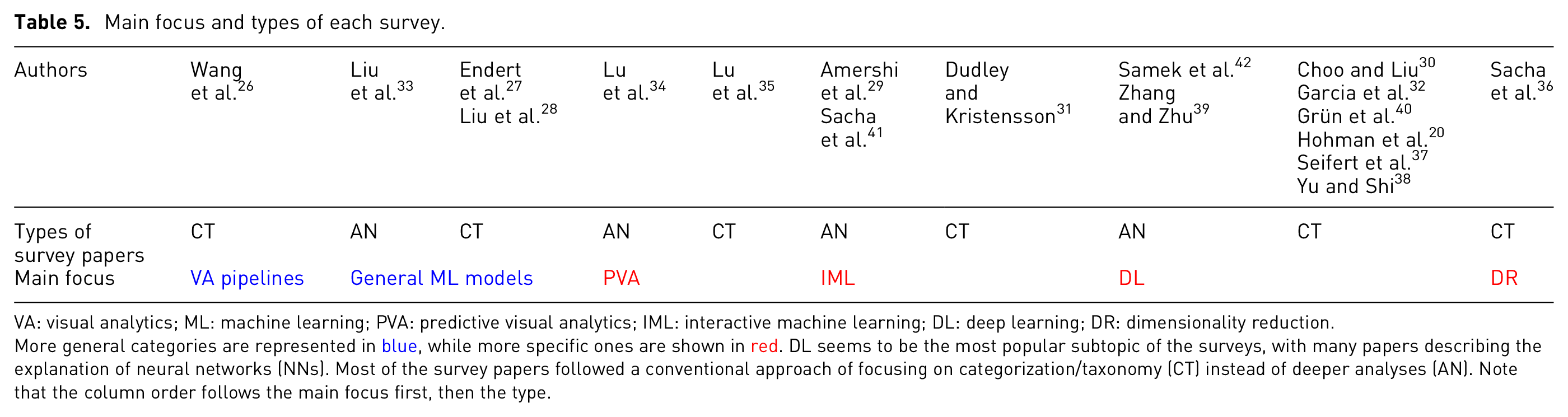

Regarding the main focus of each of the covered surveys, shown in Table 5, we can observe that 8 out of the 18 survey papers (≈44%) are mainly related to the broad family of deep learning (DL) techniques, including terms such as convolutional neural networks (CNNs) or recurring neural networks (RNNs). Other topics that are found include VA pipelines, general ML models, predictive visual analytics (PVA), interactive machine learning (IML), and dimensionality reduction (DR). Moreover, we consider 12 out of the 18 survey papers as being classification/taxonomy-centered (CT) surveys, in which the authors heavily focus on the work of constructing strict categories and separating papers accordingly. However, we consider 6 out of the 18 survey papers as being analysis-oriented (AN), which means that they include more details about the studied papers instead of focusing on the categorization task.

Main focus and types of each survey.

VA: visual analytics; ML: machine learning; PVA: predictive visual analytics; IML: interactive machine learning; DL: deep learning; DR: dimensionality reduction.

More general categories are represented in blue, while more specific ones are shown in red. DL seems to be the most popular subtopic of the surveys, with many papers describing the explanation of neural networks (NNs). Most of the survey papers followed a conventional approach of focusing on categorization/taxonomy (CT) instead of deeper analyses (AN). Note that the column order follows the main focus first, then the type.

In-depth analysis

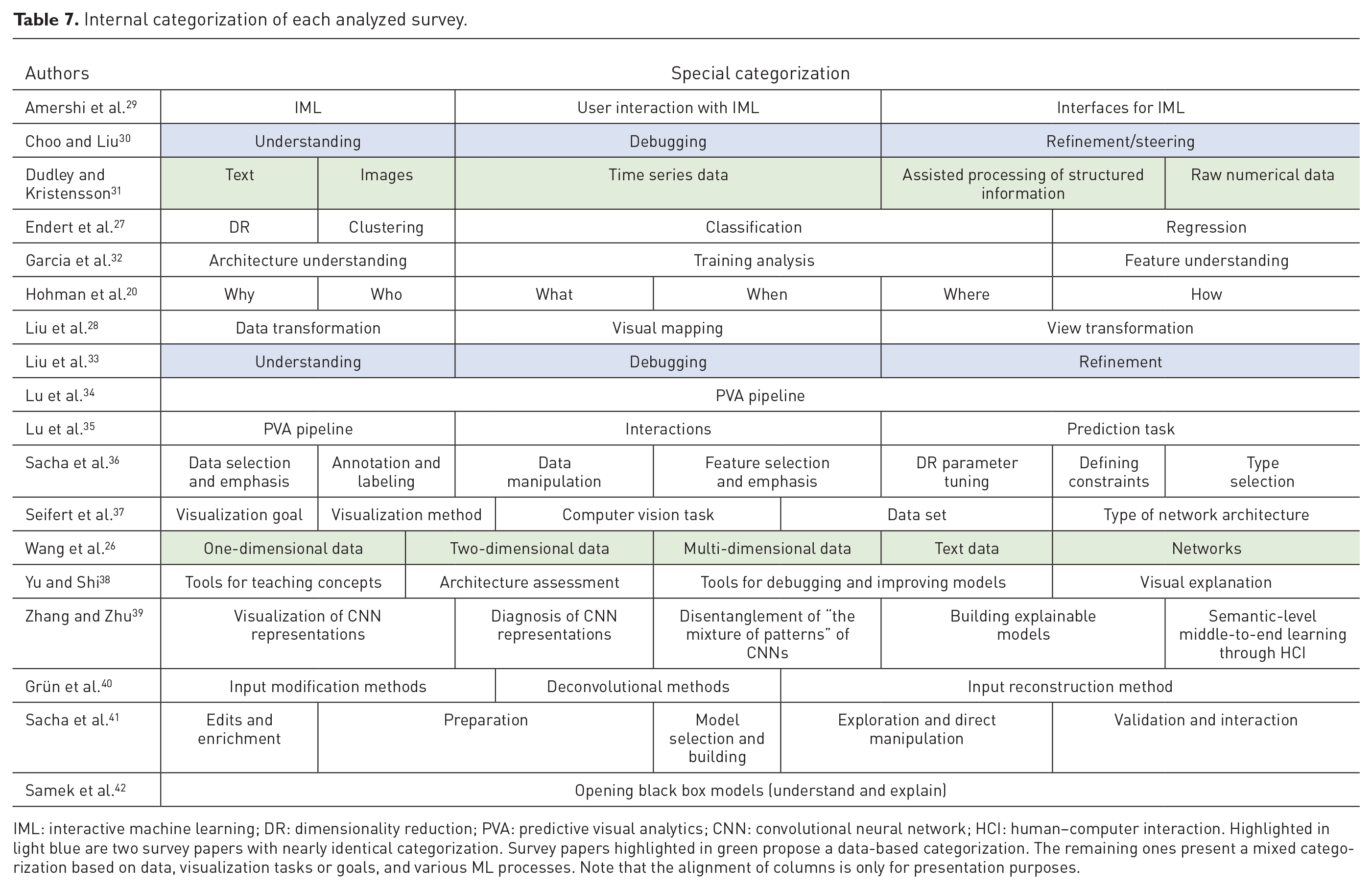

After selecting and reading the survey papers, we classified them into separate categories regarding content-related patterns, and whether they deal with more general versus more specific concepts, as shown in Table 5: VA pipelines and general ML models (more general); PVA, IML, DL, and DR (more specific).

In this section, we present an in-depth analysis of the content of the selected survey papers, organized according to our proposed categorization. Following our proposal to support a wide range of different readers from the field, we indicate inline which parts of each survey should be more interesting for each type of reader: early-stage researchers ( ) or senior researchers (

) or senior researchers ( ). Overall, the symbol

). Overall, the symbol  indicates more general concepts and overviews, while the symbol

indicates more general concepts and overviews, while the symbol  points to more detailed analyses and descriptions. Note that we also highlight the main differences in the used categorizations throughout the 18 surveys later in the “Discussion and challenges” section and in Table 7 as well.

points to more detailed analyses and descriptions. Note that we also highlight the main differences in the used categorizations throughout the 18 surveys later in the “Discussion and challenges” section and in Table 7 as well.

Visual analytics pipelines

Only one survey

26

has been classified as dealing with VA pipelines in general.  The authors of this survey present the usual VA pipeline and compare it with other visualization pipelines, taken from papers that propose them in context of one-dimensional data, two-dimensional data, multi-dimensional data, text data, and networks.

The authors of this survey present the usual VA pipeline and compare it with other visualization pipelines, taken from papers that propose them in context of one-dimensional data, two-dimensional data, multi-dimensional data, text data, and networks.  One of the discussed works is the PVA pipeline by Lu et al.,

34

see below.

One of the discussed works is the PVA pipeline by Lu et al.,

34

see below.  For some pipelines, Wang et al.

26

discuss individual stages in detail, such as visual mapping, view generation and coordination, and interaction. All those steps lead to models which allow the analysts to decide between visual methods and automatic analysis methods.

For some pipelines, Wang et al.

26

discuss individual stages in detail, such as visual mapping, view generation and coordination, and interaction. All those steps lead to models which allow the analysts to decide between visual methods and automatic analysis methods.  Moreover, the authors propose a general visualization pipeline that fits the problem of interpreting ML models with three main stages: (a) feature selection and generation, (b) model building and selection, and (c) model validation.

Moreover, the authors propose a general visualization pipeline that fits the problem of interpreting ML models with three main stages: (a) feature selection and generation, (b) model building and selection, and (c) model validation.  Concretely, feature selection has two fundamental requirements: to not decrease the classification accuracy and to sustain the distributions of classes as they were before the filtering/selection process. For feature generation, algorithms have been developed to make these processes fully automatic, but they often make mistakes that an expert with the appropriate views and knowledge might be able to avoid. In model building and selection, it is vital to have a visual interface that connects ML models with users, hence enabling examinations of hypotheses and obtaining insights. In many cases, model validation is entangled with the previous stage of the pipeline. The final piece of the puzzle is the capability to iterate over this pipeline to achieve better model selection and validation, which leads to even better feature selection and thus forming an infinite loop that can tackle challenging problems. Overall, this survey paper serves as a starting point for early-stage researchers to get familiarized with the pipeline of how the interpretation of ML models works and where visualization is suitable.

Concretely, feature selection has two fundamental requirements: to not decrease the classification accuracy and to sustain the distributions of classes as they were before the filtering/selection process. For feature generation, algorithms have been developed to make these processes fully automatic, but they often make mistakes that an expert with the appropriate views and knowledge might be able to avoid. In model building and selection, it is vital to have a visual interface that connects ML models with users, hence enabling examinations of hypotheses and obtaining insights. In many cases, model validation is entangled with the previous stage of the pipeline. The final piece of the puzzle is the capability to iterate over this pipeline to achieve better model selection and validation, which leads to even better feature selection and thus forming an infinite loop that can tackle challenging problems. Overall, this survey paper serves as a starting point for early-stage researchers to get familiarized with the pipeline of how the interpretation of ML models works and where visualization is suitable.

General machine learning models

The work of Liu et al.

33

(a predecessor of Choo and Liu

30

) categorizes papers related to general ML models in three classes: (a) understanding, (b) debugging, and (c) refinement.

The work of Liu et al.

33

(a predecessor of Choo and Liu

30

) categorizes papers related to general ML models in three classes: (a) understanding, (b) debugging, and (c) refinement.  The scope of this survey is not specifically about IML; instead, it presents a much broader discussion and analysis of recent work in visualization of ML models. Their overview shows several paradigms from DL, classification, clustering, and general ML models, which they fit in their three-class categorization with a strong focus on the explanation of the model rather than the other stages of the pipeline.

The scope of this survey is not specifically about IML; instead, it presents a much broader discussion and analysis of recent work in visualization of ML models. Their overview shows several paradigms from DL, classification, clustering, and general ML models, which they fit in their three-class categorization with a strong focus on the explanation of the model rather than the other stages of the pipeline.  According to Liu et al.,

33

researchers have developed point-based and network-based visualization approaches in order to understand how neural networks (NNs) behave when pre-processing, processing, and analyzing data, in addition to VA tools that diagnose model performance for binary classifiers, multi-class classifiers, and topic models. The available VA systems allow interaction for enhancing the performance of both supervised and unsupervised models.

According to Liu et al.,

33

researchers have developed point-based and network-based visualization approaches in order to understand how neural networks (NNs) behave when pre-processing, processing, and analyzing data, in addition to VA tools that diagnose model performance for binary classifiers, multi-class classifiers, and topic models. The available VA systems allow interaction for enhancing the performance of both supervised and unsupervised models.

Endert et al.

27

wrote a survey on a topic that is related but not exactly the same as ours: integrating ML into VA techniques (as opposed to using VA for interpreting ML models, which is our case). Although their survey contains quite a bit of unrelated information, they included many papers that match our criteria, which made us decide to include them in our work.  Their categorization starts with DR, clustering, classification, and regression, and then crosses those categories with two separate requirements: (a) modify parameters and computation domain and (b) define analytical expectations.

Their categorization starts with DR, clustering, classification, and regression, and then crosses those categories with two separate requirements: (a) modify parameters and computation domain and (b) define analytical expectations.  Endert et al.

27

give detailed explanations and analysis of each category, including a table that guides the reader to more information about those tools and techniques.

Endert et al.

27

give detailed explanations and analysis of each category, including a table that guides the reader to more information about those tools and techniques.

The final survey in this category, Liu et al.,

28

describes approaches for the visualization of high-dimensional data using the stages of the typical visualization pipeline to divide their papers into three categories: (a) data transformation, (b) visual mapping, and (c) view transformation. While initially this might not be explicitly relevant to our subject, in some of their categories, we found interesting papers for this work, including articles dealing with DR, subspace clustering, and regression analysis.

The final survey in this category, Liu et al.,

28

describes approaches for the visualization of high-dimensional data using the stages of the typical visualization pipeline to divide their papers into three categories: (a) data transformation, (b) visual mapping, and (c) view transformation. While initially this might not be explicitly relevant to our subject, in some of their categories, we found interesting papers for this work, including articles dealing with DR, subspace clustering, and regression analysis.

Summary of the three surveys

The latter two survey papers concentrate on presenting various tools and providing future challenges without any meta-analyses. They follow similar categorizations of algorithm types, but the difference between them is the focus. For Endert et al., 27 the main subjects are tools for adjusting the parameters of an algorithm, or even replacing the algorithm used, and supporting users to interact with results of the computational process, versus methods that enable users to monitor the output of an algorithm and improve it. Liu et al. 28 used the InfoVis reference model and, specifically in the data transformation part of the pipeline, included papers addressing the problem of exploration of ML models. The first survey by Liu et al. 33 also describes the model analysis, which is a further step of the pipeline after data processing/transformation, and feature selection before evaluation/validation. Thus, researchers can benefit by browsing through these surveys that cover different aspects of a pipeline of ML, depending on the area they are more interested in.

Predictive visual analytics

According to the literature review in Lu et al.,

34

the pipeline of PVA consists of four main blocks: (a) data pre-processing, (b) feature selection and generation, (c) model training, and (d) model selection and validation; complemented by two extra blocks that interact with the pipeline: (e) visualization and (f) adjustment loop.

the pipeline of PVA consists of four main blocks: (a) data pre-processing, (b) feature selection and generation, (c) model training, and (d) model selection and validation; complemented by two extra blocks that interact with the pipeline: (e) visualization and (f) adjustment loop.  The authors provide extensive details for each block and describe example papers related to data cleaning, data transformation, and data integration and fusion, which all are part of data pre-processing.

The authors provide extensive details for each block and describe example papers related to data cleaning, data transformation, and data integration and fusion, which all are part of data pre-processing.  Additionally, a comparative analysis has been performed with white box and black box methods, revealing the differences between them.

Additionally, a comparative analysis has been performed with white box and black box methods, revealing the differences between them.  Various applications for different types of data are presented, such as tabular data, time-series data, spatio-temporal data, textual data, and image data.

Various applications for different types of data are presented, such as tabular data, time-series data, spatio-temporal data, textual data, and image data.  Before reaching to the conclusion and open challenges, they define a list of comparisons with methods before PVA and after the use of PVA in a quantitative manner.

Before reaching to the conclusion and open challenges, they define a list of comparisons with methods before PVA and after the use of PVA in a quantitative manner.

Another survey paper about PVA, written by Lu et al.,

35

was published a few months after the first one. They follow a similar approach by classifying papers considering the PVA pipeline that we described in the previous paragraph.  In addition, there are two new categories: interaction and prediction. For example, regression, classification, clustering, and others are the main subcategories of the prediction task, and select, explore, filter, and others, are subcategories of interaction.

In addition, there are two new categories: interaction and prediction. For example, regression, classification, clustering, and others are the main subcategories of the prediction task, and select, explore, filter, and others, are subcategories of interaction.  Two tables show the results of co-occurrence and correlation analyses of classes of interactions, model types employed, and stages of the PVA pipeline according to their classification.

Two tables show the results of co-occurrence and correlation analyses of classes of interactions, model types employed, and stages of the PVA pipeline according to their classification.  In addition to the survey paper, they also offer an online web-based browser that contains papers on PVA, where the users can search through the papers with specific filters, such as selecting specific classes, years, or keyword search.

In addition to the survey paper, they also offer an online web-based browser that contains papers on PVA, where the users can search through the papers with specific filters, such as selecting specific classes, years, or keyword search.

Summary of the two surveys

From Table 2, we can conclude that approximately half of the papers are unique in the two survey papers described above, which suggests that both have their value. Researchers interested in learning more about specific applications of PVA with different types of data should focus on the first survey paper 34 since an extensive analysis of the pipeline is made, including various papers in each step. However, the second survey paper 35 contains only a short analysis of the PVA pipeline but focuses much more on interaction techniques used in the tools. Its co-occurrence and correlation matrices serve as a rich meta-analysis of the covered papers, which should benefit both new and experienced researchers. The paper selection in that survey suggests that three groups are beneficiary from this survey: end-users (i.e. non-expert users), domain experts (experts in a particular field), and modeling experts (experts in models but not in a specific application).

Interactive machine learning

The first survey that falls into this category is from Amershi et al.

29

Instead of focusing on the classification of papers into different categories, they emphasize the description of different applications of IML, for example, image segmentation and gesture-based music. Furthermore, a significant part of their discussion is geared toward user interaction from a human perspective.

Instead of focusing on the classification of papers into different categories, they emphasize the description of different applications of IML, for example, image segmentation and gesture-based music. Furthermore, a significant part of their discussion is geared toward user interaction from a human perspective.  They highlight, for example, the strong tendency of humans to give more positive rewards/feedback than negative rewards/feedback to learners during reinforcement learning (i.e. where an agent senses and acts in a task environment and receives numeric reward values after each action).

They highlight, for example, the strong tendency of humans to give more positive rewards/feedback than negative rewards/feedback to learners during reinforcement learning (i.e. where an agent senses and acts in a task environment and receives numeric reward values after each action).  They further demonstrate that feeding a visualization system with positive feedback through interaction, even if it might have taken the wrong decisions, is currently a prominent issue that requires a solution. Consequently, systems that guide their users in a correct way are desirable to solve this problem.

They further demonstrate that feeding a visualization system with positive feedback through interaction, even if it might have taken the wrong decisions, is currently a prominent issue that requires a solution. Consequently, systems that guide their users in a correct way are desirable to solve this problem.  According to the authors, people react positively and appreciate transparency in ML systems. As labeling is still a demanding process, they found that people want to superimpose the data labels with additional comments, and this is when transparency becomes valuable.

According to the authors, people react positively and appreciate transparency in ML systems. As labeling is still a demanding process, they found that people want to superimpose the data labels with additional comments, and this is when transparency becomes valuable.

In the last section of their survey, Amershi et al.

29

present papers that include implemented tools supporting interaction with ML models.  The authors asserted the levels of assistance that users get through interaction in three stages of the execution of ML algorithms: input, intermediate steps, and output. For instance, user interaction can be useful at the input stage for tuning hyperparameters and comparison purposes between the various ML techniques. They consider as intermediate steps both the human interaction with the system and receiving guidance from the system during the execution of an ML algorithm. Concrete actions, for example, might be queries to alternate the flow and/or the outcome of the procedure or quality assessment during the iteration of the algorithms. At the final stage, the users should be capable of evaluating the output and deciding which faulty aspects should be excluded from the next analysis.

The authors asserted the levels of assistance that users get through interaction in three stages of the execution of ML algorithms: input, intermediate steps, and output. For instance, user interaction can be useful at the input stage for tuning hyperparameters and comparison purposes between the various ML techniques. They consider as intermediate steps both the human interaction with the system and receiving guidance from the system during the execution of an ML algorithm. Concrete actions, for example, might be queries to alternate the flow and/or the outcome of the procedure or quality assessment during the iteration of the algorithms. At the final stage, the users should be capable of evaluating the output and deciding which faulty aspects should be excluded from the next analysis.  For each presented stage, they cite papers and tools that support these operations. All these observations brought them to the conclusion that it is essential to combine and take advantage of different ML models.

For each presented stage, they cite papers and tools that support these operations. All these observations brought them to the conclusion that it is essential to combine and take advantage of different ML models.

2. Dudley and Kristensson

31

present a survey of existing approaches and applications in IML with a categorization based on the type of data (application).  The authors begin by discussing implementations and techniques that allow users to interact with ML models in the visualization of text, images, speech, audio, video, and motion. The remaining part of their survey contains more general considerations about how to deal with assisted processing of structured information and raw numerical data. The former is about IML systems that utilize structured data to train models that improve user abilities. These examples are related to the application domain or the experts’ special knowledge of the training process. The latter refers to trials that omit the data type and process numerical data. Those approaches are unrelated to the data types and could be imagined as similar to spreadsheet applications that brought the key statistical functionalities to non-expert users.

The authors begin by discussing implementations and techniques that allow users to interact with ML models in the visualization of text, images, speech, audio, video, and motion. The remaining part of their survey contains more general considerations about how to deal with assisted processing of structured information and raw numerical data. The former is about IML systems that utilize structured data to train models that improve user abilities. These examples are related to the application domain or the experts’ special knowledge of the training process. The latter refers to trials that omit the data type and process numerical data. Those approaches are unrelated to the data types and could be imagined as similar to spreadsheet applications that brought the key statistical functionalities to non-expert users.  These techniques are suitable for experts but also for non-experts depending on the approaches that the authors deploy. After the analysis of the literature, they describe the design that an IML interface should have and present a workflow of the IML process according to their notes.

These techniques are suitable for experts but also for non-experts depending on the approaches that the authors deploy. After the analysis of the literature, they describe the design that an IML interface should have and present a workflow of the IML process according to their notes.

3. Finally, Sacha et al. 41 propose a human-centered ML framework that is general enough to fit different existing tools. The conceptual framework enhances several interaction options by dividing the process into five steps: (a) edits and enrichment, (b) preparation, (c) model selection and building, (d) exploration and manipulation, and (e) validation and interaction. In edits and enrichment, users should be able to interfere with the data instances and labels (e.g. by adding or editing labels in the training process) and check preliminary “what-if” hypothesis scenarios in order to better understand the data itself. The preparation step is important to allow users to affect groups of observations by applying transformations, for example, scaling and weightings, selecting specific features, or filtering. In model selection and building, users should be able to interact with ML models by choosing different algorithms and adjusting their parameters. The aim of the exploration and direct manipulation step is to support the interpretation and validation of the previous ML models by visualizing the data and model spaces, the quality, and the structure of ML algorithms. The final validation and interaction step concerns the potential analysts working with a visualization tool which integrates all the functionalities into an individual system in order to check the results and derive final insights.

Summary of the three surveys

Even though the three described survey papers focus on different aspects of IML (e.g. importance of users, approaches for specific applications, and general framework), they converge into a common pipeline, that is, a similar framework/workflow that is useful for visualization researchers. First of all, the data should be easily labeled, cleaned, filtered, and reviewed—with assistance from visualization—before being used as input to ML algorithms. After that, especially according to Sacha et al., 41 comes a transformation stage, characterized by giving weights and manipulating the data features or instances, for example. Then, the three works suggest model selection and comparison until the best suitable for each case (data set/application) is found; and afterward, to allow the user to steer the model toward the best possible solution. Exploration and manipulation for the assessment of the quality while the process is ongoing, or after the results are shown to the user, is also a crucial part of the common pipeline. Dudley and Kristensson 31 extend this part of the pipeline and state that monitoring and keeping track of the time and cost of the process should be visualized after the end of this process. Then, finally, comes validation and interaction with the use of visualization in order to fit the model into a specific application and to tackle a specific problem. The workflows or frameworks that are presented by the papers in this category show how visualization can aid users in various ways when attempting to solve a complicated task. Early-stage researchers should focus on those areas, and these survey papers for IML are a good starting point.

Deep learning

DL is currently a very popular area of ML, not only in artificial intelligence (AI) but also in the InfoVis community. This is reflected in the statistics of our SoS: over one-third of the surveys that we collected are about DL, which are the eight surveys that we discuss in the following:

To connect DL with VA, Choo and Liu

30

consider three major directions: (a) model understanding, (b) debugging, and (c) refinement/steering. Model understanding intends to reveal the reasoning behind model predictions and the internal operations of DL models. When a DL model underperforms or is not able to converge, then the model debugging process can be utilized in order to distinguish and resolve such problems. Model refinement/steering refers to techniques that interactively include the users’ expertise in the development and refinement process of a DL model. That could be achieved, among others, through user interactions, supporting semi-supervised learning, or active learning.

To connect DL with VA, Choo and Liu

30

consider three major directions: (a) model understanding, (b) debugging, and (c) refinement/steering. Model understanding intends to reveal the reasoning behind model predictions and the internal operations of DL models. When a DL model underperforms or is not able to converge, then the model debugging process can be utilized in order to distinguish and resolve such problems. Model refinement/steering refers to techniques that interactively include the users’ expertise in the development and refinement process of a DL model. That could be achieved, among others, through user interactions, supporting semi-supervised learning, or active learning.

The survey authors list libraries and tools that were used in model understanding and debugging which could be helpful for both young and senior researchers.

The survey authors list libraries and tools that were used in model understanding and debugging which could be helpful for both young and senior researchers.  Computational methods for the interpretation of DL from ML and AI communities are also introduced. Before analyzing research opportunities, the authors discuss VA approaches and cite articles that give clues on how to troubleshoot and improve models (i.e. model understanding and refinement). VA tools for RNNs and CNNs are broadly discussed as well.

Computational methods for the interpretation of DL from ML and AI communities are also introduced. Before analyzing research opportunities, the authors discuss VA approaches and cite articles that give clues on how to troubleshoot and improve models (i.e. model understanding and refinement). VA tools for RNNs and CNNs are broadly discussed as well.

Garcia et al.

32

explain the classical ideas behind ML and DL from a mathematical perspective, including deep feedforward networks (DFNs), CNNs, and RNNs. Additionally, they present a VA workflow which shows the tasks of the multiple phases of DL networks, placing emphasis on the value of VA for DL networks.

Garcia et al.

32

explain the classical ideas behind ML and DL from a mathematical perspective, including deep feedforward networks (DFNs), CNNs, and RNNs. Additionally, they present a VA workflow which shows the tasks of the multiple phases of DL networks, placing emphasis on the value of VA for DL networks.  The authors’ contributions include the separation of their collection of publications into three categories, depending on their particular visualization goal: (a) network architecture understanding, (b) visualization to support training analysis, and (c) feature understanding. They conclude by testing techniques that different tools use with common DL architectures (i.e. DFNs, CNNs, and RNNs).

The authors’ contributions include the separation of their collection of publications into three categories, depending on their particular visualization goal: (a) network architecture understanding, (b) visualization to support training analysis, and (c) feature understanding. They conclude by testing techniques that different tools use with common DL architectures (i.e. DFNs, CNNs, and RNNs).

Hohman et al.

20

investigate VA tools that explore DL models by classifying papers into six categories, each based on a research question: “Why would people want to use visualization in DL?”; “Who is able to take advantage from visualization of DL?”; “What data, features, and relationships could be visualized in DL?”; “How is it possible to visualize the data, features, and relationships?”; “When in the DL process is visualization useful?”; and “Where DL visualization could be necessary?” Accordingly, each of the analyzed papers is assigned to one or more of these questions, depending on whether it contributes to that aspect or not. Interpretability and explainability as well as debugging an improving model are two subcategories that most of the papers belong to. According to the authors, a common approach for visualization designers/researchers is to perform instance-based analysis and exploration, that is, specific data instances are tested to understand how they develop throughout a model pipeline. Additionally, most of the discussed tools at this survey paper are used to observe the results of an in-depth learning process instead of the middle steps of the training process. The DL models that these visualization papers focus on are mostly related to CNNs, RNNs, and GANs.

Hohman et al.

20

investigate VA tools that explore DL models by classifying papers into six categories, each based on a research question: “Why would people want to use visualization in DL?”; “Who is able to take advantage from visualization of DL?”; “What data, features, and relationships could be visualized in DL?”; “How is it possible to visualize the data, features, and relationships?”; “When in the DL process is visualization useful?”; and “Where DL visualization could be necessary?” Accordingly, each of the analyzed papers is assigned to one or more of these questions, depending on whether it contributes to that aspect or not. Interpretability and explainability as well as debugging an improving model are two subcategories that most of the papers belong to. According to the authors, a common approach for visualization designers/researchers is to perform instance-based analysis and exploration, that is, specific data instances are tested to understand how they develop throughout a model pipeline. Additionally, most of the discussed tools at this survey paper are used to observe the results of an in-depth learning process instead of the middle steps of the training process. The DL models that these visualization papers focus on are mostly related to CNNs, RNNs, and GANs.

The two research questions that Seifert et al.

37

tried to answer are “What are the insights that can be gained from DNN models by using visualizations?” and “Which visualizations are appropriate for each kind of insights?” To reach their conclusions, they surveyed visualization papers and separated them into five main categories: (a) the visualization goals, which are mostly related to an assessment of the architecture, (b) the visualization methods, which are single-image pixel-based displays, (c) the computer vision tasks, which are mostly classification and representation learning, (d) the network architecture types, which are in most cases CNNs, and (e) the data sets that are used, with ImageNet

43

being the most popular.

The two research questions that Seifert et al.

37

tried to answer are “What are the insights that can be gained from DNN models by using visualizations?” and “Which visualizations are appropriate for each kind of insights?” To reach their conclusions, they surveyed visualization papers and separated them into five main categories: (a) the visualization goals, which are mostly related to an assessment of the architecture, (b) the visualization methods, which are single-image pixel-based displays, (c) the computer vision tasks, which are mostly classification and representation learning, (d) the network architecture types, which are in most cases CNNs, and (e) the data sets that are used, with ImageNet

43

being the most popular.  Moreover, they offer a table showing the relationships between visualization goals and applied methods, a table with an overview of data sets, which is a unique feature across the surveys that we reviewed, and tables with the aggregated results.

Moreover, they offer a table showing the relationships between visualization goals and applied methods, a table with an overview of data sets, which is a unique feature across the surveys that we reviewed, and tables with the aggregated results.

Zhang and Zhu

39

separate their survey paper into five distinct sections. The first one is about visualization of CNN representations in intermediate network layers. The second deals with the diagnosis of CNN representations, and the third discusses issues of disentanglement of “the mixture of patterns” encoded in every filter of CNNs. The fourth is about building explainable models, and finally, the last one concerns semantic-level middle-to-end learning through human–computer interaction. They do not follow a clear methodology of categorization. Instead, they analyze the field according to the above-mentioned viewpoints.

Zhang and Zhu

39

separate their survey paper into five distinct sections. The first one is about visualization of CNN representations in intermediate network layers. The second deals with the diagnosis of CNN representations, and the third discusses issues of disentanglement of “the mixture of patterns” encoded in every filter of CNNs. The fourth is about building explainable models, and finally, the last one concerns semantic-level middle-to-end learning through human–computer interaction. They do not follow a clear methodology of categorization. Instead, they analyze the field according to the above-mentioned viewpoints.

Grün et al.

40

describe a new taxonomy for feature visualization methods that—according to the authors—fit most of the relevant papers.

Grün et al.

40

describe a new taxonomy for feature visualization methods that—according to the authors—fit most of the relevant papers.  This taxonomy supports the task of providing an overview of feature visualization papers together with the open-source library FeatureVis

40

for MatConvNet

44

which they created. FeatureVis is used for the visual analysis of DL models and the direct development of users’ network architectures.

This taxonomy supports the task of providing an overview of feature visualization papers together with the open-source library FeatureVis

40

for MatConvNet

44

which they created. FeatureVis is used for the visual analysis of DL models and the direct development of users’ network architectures.  Grün et al. place papers in three distinct categories as follows: (a) input modification methods, (b) deconvolutional methods, and (c) input reconstruction methods. They define their characteristics and summarize the related literature of each category.

Grün et al. place papers in three distinct categories as follows: (a) input modification methods, (b) deconvolutional methods, and (c) input reconstruction methods. They define their characteristics and summarize the related literature of each category.

Samek et al.

42

summarize the field of interpreting DL models by focusing on the techniques/tools that try to open the black box of the models. They do not have a precise categorization, and not all original papers are analyzed in detail. The main goal of this survey is to foster awareness of the usefulness of having interpretable and explainable ML models using methods such as sensitivity analysis (SA)

45

and layer-wise relevance propagation (LRP).

46

Samek et al.

42

summarize the field of interpreting DL models by focusing on the techniques/tools that try to open the black box of the models. They do not have a precise categorization, and not all original papers are analyzed in detail. The main goal of this survey is to foster awareness of the usefulness of having interpretable and explainable ML models using methods such as sensitivity analysis (SA)

45

and layer-wise relevance propagation (LRP).

46

Yu and Shi

38

surveyed visualization tools that assist the user in reaching four high-level goals: (a) teaching concepts, (b) assessment of the architecture, (c) debugging and improving models, and (d) visual exploration of DNNs, CNNs, RNNs, as well as deep generative models. They target four distinct groups of people: (a) beginners, (b) practitioners, (c) developers, and (d) experts, respectively. These groups are all, according to the authors, related to the aforementioned four visualization goals.

Yu and Shi

38

surveyed visualization tools that assist the user in reaching four high-level goals: (a) teaching concepts, (b) assessment of the architecture, (c) debugging and improving models, and (d) visual exploration of DNNs, CNNs, RNNs, as well as deep generative models. They target four distinct groups of people: (a) beginners, (b) practitioners, (c) developers, and (d) experts, respectively. These groups are all, according to the authors, related to the aforementioned four visualization goals.

Summary of the eight surveys

Due to the relatively large number of surveys in this category, we present here a brief comparison instead of a short summary. First, we look at the differences with respect to their focus on specific DL models, and second, with respect to the chosen visualization methodologies.

Garcia et al.,

32

Hohman et al.,

20

Seifert et al.,

37

and Yu and Shi

38

discuss papers for visualizing DNNs, CNNs, and RNNs. A specific type of DNNs, called DFNs, is examined only by Garcia et al.

32

Garcia et al.,

32

Hohman et al.,

20

Seifert et al.,

37

and Yu and Shi

38

discuss papers for visualizing DNNs, CNNs, and RNNs. A specific type of DNNs, called DFNs, is examined only by Garcia et al.

32

Long short-term memory (LSTM) and generative adversarial networks (GANs) are also considered in the taxonomy of Hohman et al.

20

Multicolumn deep neural networks (MCDNNs) were reported by Seifert et al.,

37

along with deep convolutional neural networks (DCNNs), which are variations of NNs and CNNs. Deep generative models (DGMs), such as GANs, and variational autoencoders (VAEs), were included in Yu and Shi.

38

In most cases, the described visualization tools provide visual explanations for experts, and debugging and improving models for developers as target groups.

Long short-term memory (LSTM) and generative adversarial networks (GANs) are also considered in the taxonomy of Hohman et al.

20

Multicolumn deep neural networks (MCDNNs) were reported by Seifert et al.,

37

along with deep convolutional neural networks (DCNNs), which are variations of NNs and CNNs. Deep generative models (DGMs), such as GANs, and variational autoencoders (VAEs), were included in Yu and Shi.

38

In most cases, the described visualization tools provide visual explanations for experts, and debugging and improving models for developers as target groups.

Furthermore, Hohman et al. 20 and Seifert et al. 37 provide discussions on concrete visualization methods. They discovered that instance-based exploration and pixel-based approaches are the most common. Aggregation of information20,32,38 is also a usual way to describe the inner parts of the algorithms, along with feature and instance explorations. In these four mentioned survey papers,20,32,37,38 there are examples of tools developed for model users, developers, practitioners, and non-experts, but the survey authors conclude that experts are the main target group for most of them. Also, DNNs and CNNs are the most prominent NNs which are usually visualized. Choo and Liu 30 and Samek et al. 42 motivate why we need visualization to support DL, while Zhang and Zhu 39 and Grün et al. 40 work with CNNs following a similar approach with visualizing, diagnosing/debugging, building explainable models, and allowing the user to steer/interact with the model. The difference here is that Grün et al. 40 focus on feature visualization techniques, while Zhang and Zhu 39 focus on the values of model interpretability. Choo and Liu’s 30 paper does not have any specific model when describing those cases. Samek et al. 42 include examples of different application domains in which those models can be used.

Dimensionality reduction

Sacha et al.

36

focus in their survey on DR techniques and tools.

They propose very detailed and comprehensive categorizations, initially separating the original papers into seven guiding scenarios for DR interaction: (a) data selection and emphasis, (b) annotation and labeling, (c) data manipulation, (d) feature selection and emphasis, (e) DR parameter tuning, (f) defining constraints, and (g) DR type selection. Some of the papers they collected belong to more than one scenario at the same time, in some cases even reaching the four different scenarios for a single paper. The authors also assess interaction and usability covered by those papers by categorizing them into five more categories: (a) direct manipulation of visual elements, (b) controls such as sliders or buttons, (c) command line interface, (d) other, and (e) not applicable for the unmatched papers. Finally, they also distinguish between two more categories related to the tasks in which the methods are used: clustering and classification.

They propose very detailed and comprehensive categorizations, initially separating the original papers into seven guiding scenarios for DR interaction: (a) data selection and emphasis, (b) annotation and labeling, (c) data manipulation, (d) feature selection and emphasis, (e) DR parameter tuning, (f) defining constraints, and (g) DR type selection. Some of the papers they collected belong to more than one scenario at the same time, in some cases even reaching the four different scenarios for a single paper. The authors also assess interaction and usability covered by those papers by categorizing them into five more categories: (a) direct manipulation of visual elements, (b) controls such as sliders or buttons, (c) command line interface, (d) other, and (e) not applicable for the unmatched papers. Finally, they also distinguish between two more categories related to the tasks in which the methods are used: clustering and classification.  Their approach is closer—in comparison to others—to the mental map and the logic that people usually follow in order to classify and find what techniques they should use in visualization. Therefore, this survey works nicely as a guide for better understanding the design space and supporting the implementation of new DR-based tools. The authors also show the relationships between the identified DR techniques and the above-listed interaction scenarios, including a table with temporal statistics of interaction and DR techniques.

Their approach is closer—in comparison to others—to the mental map and the logic that people usually follow in order to classify and find what techniques they should use in visualization. Therefore, this survey works nicely as a guide for better understanding the design space and supporting the implementation of new DR-based tools. The authors also show the relationships between the identified DR techniques and the above-listed interaction scenarios, including a table with temporal statistics of interaction and DR techniques.

This survey paper provides a robust meta-analysis of the included papers, including previously identified patterns such as calculating correlation between analyzed papers. It should be a valuable source for experienced researchers to retrieve new information about the subfield of explaining DR with visualization.

This survey paper provides a robust meta-analysis of the included papers, including previously identified patterns such as calculating correlation between analyzed papers. It should be a valuable source for experienced researchers to retrieve new information about the subfield of explaining DR with visualization.

Topic analysis

In order to detect interesting relationships and emerging topics among the 18 survey papers, we applied topic modeling to the individual papers discussed by them, following the overall visual text analysis approach proposed by Kucher et al. 47

Methodology

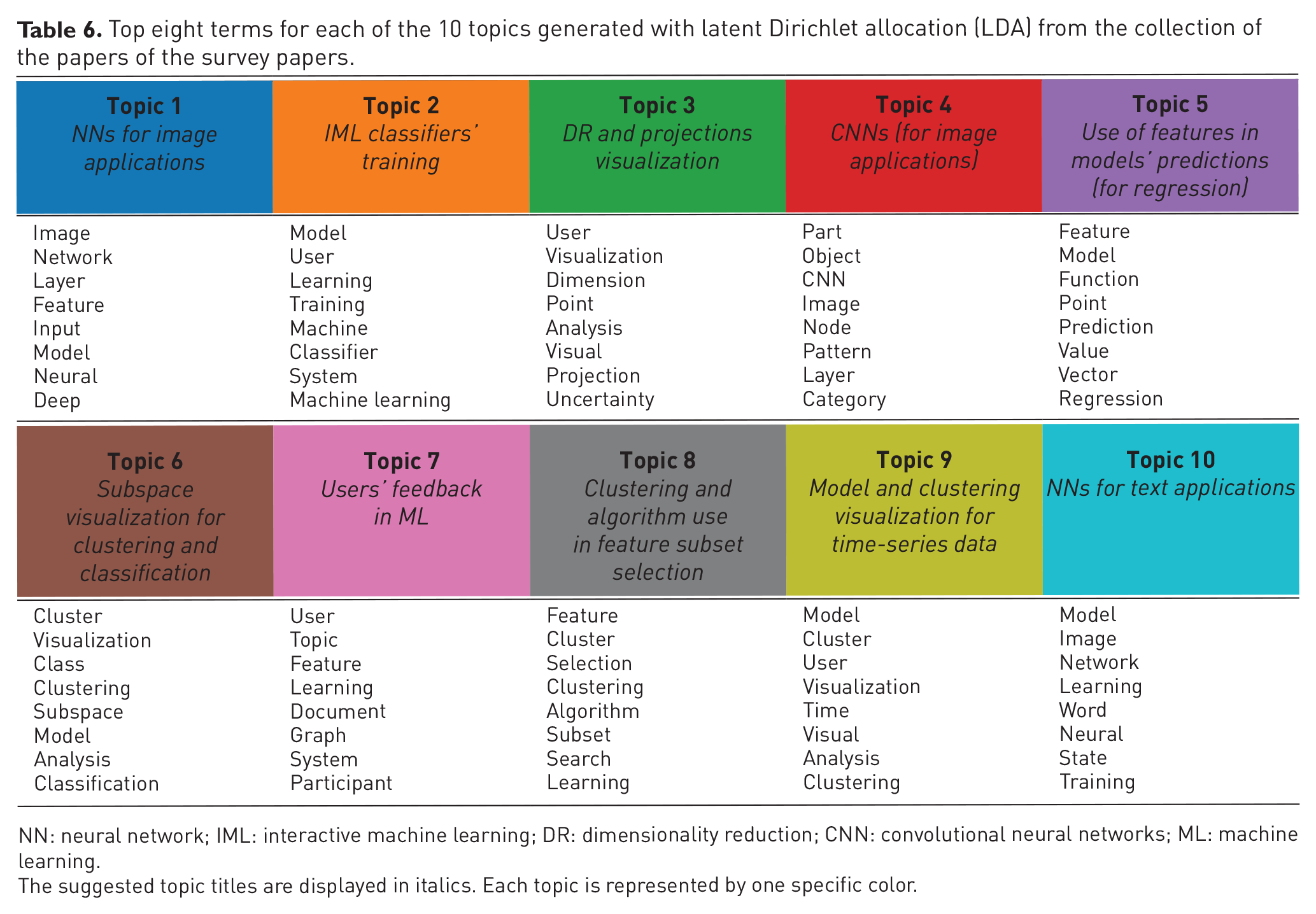

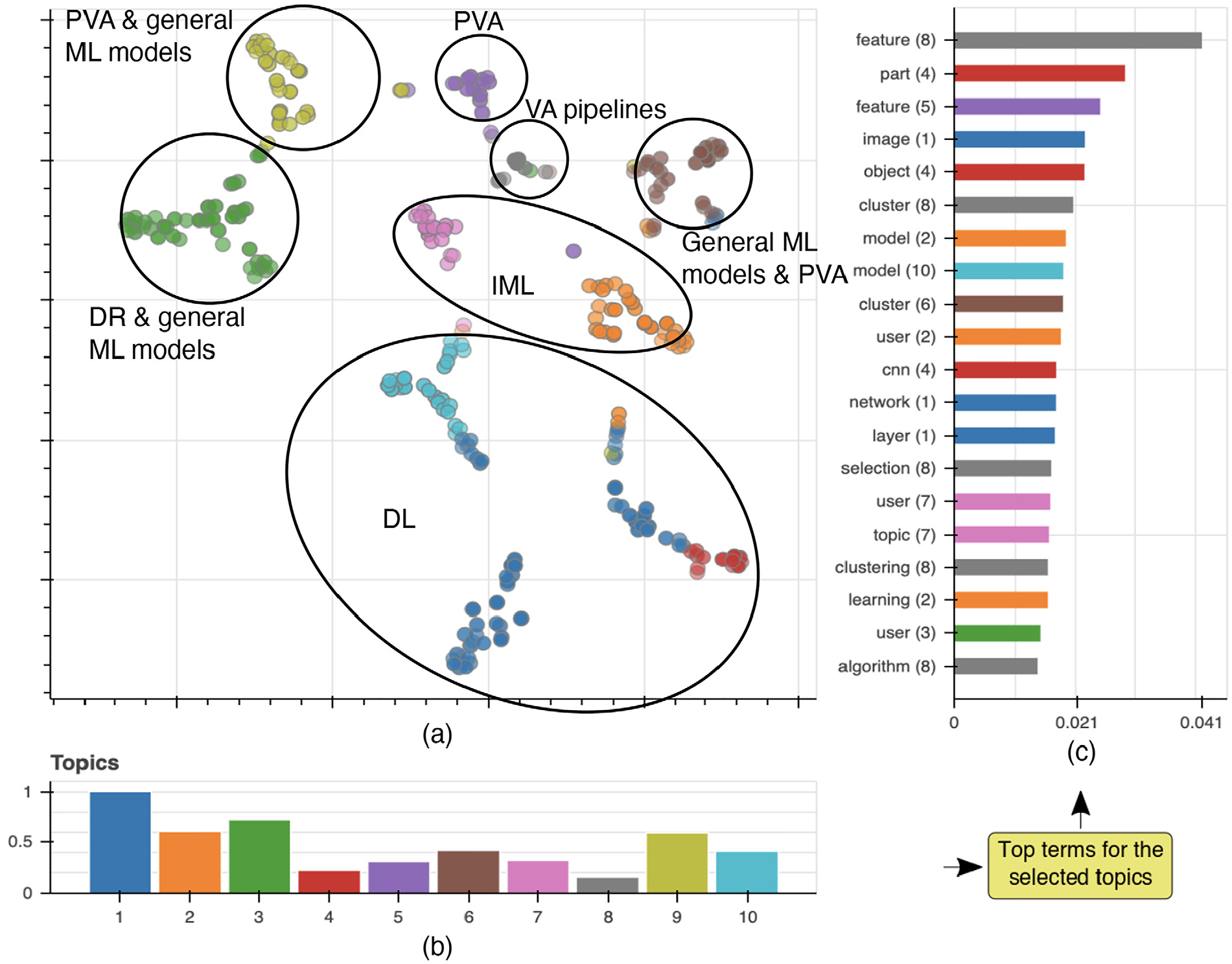

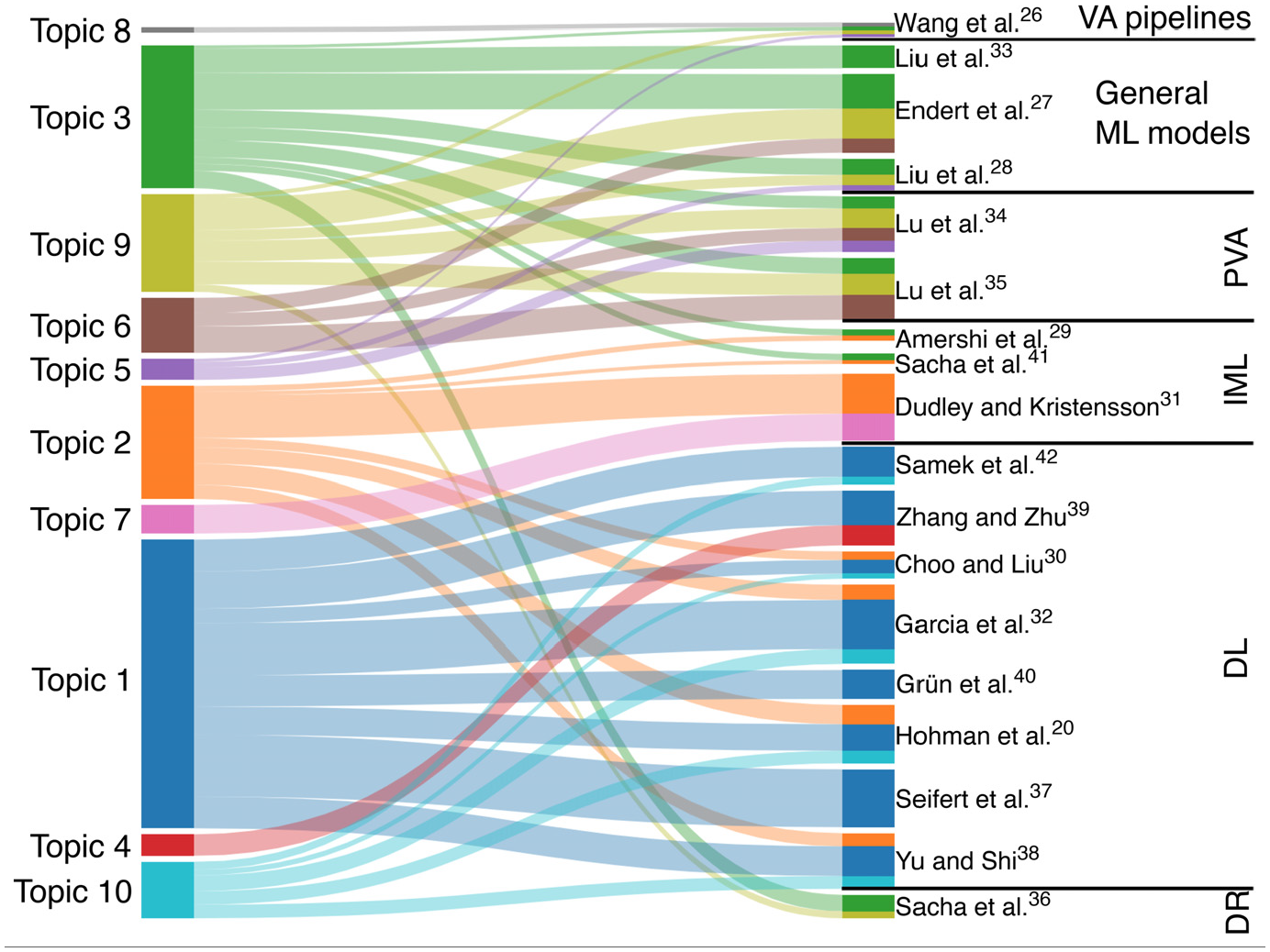

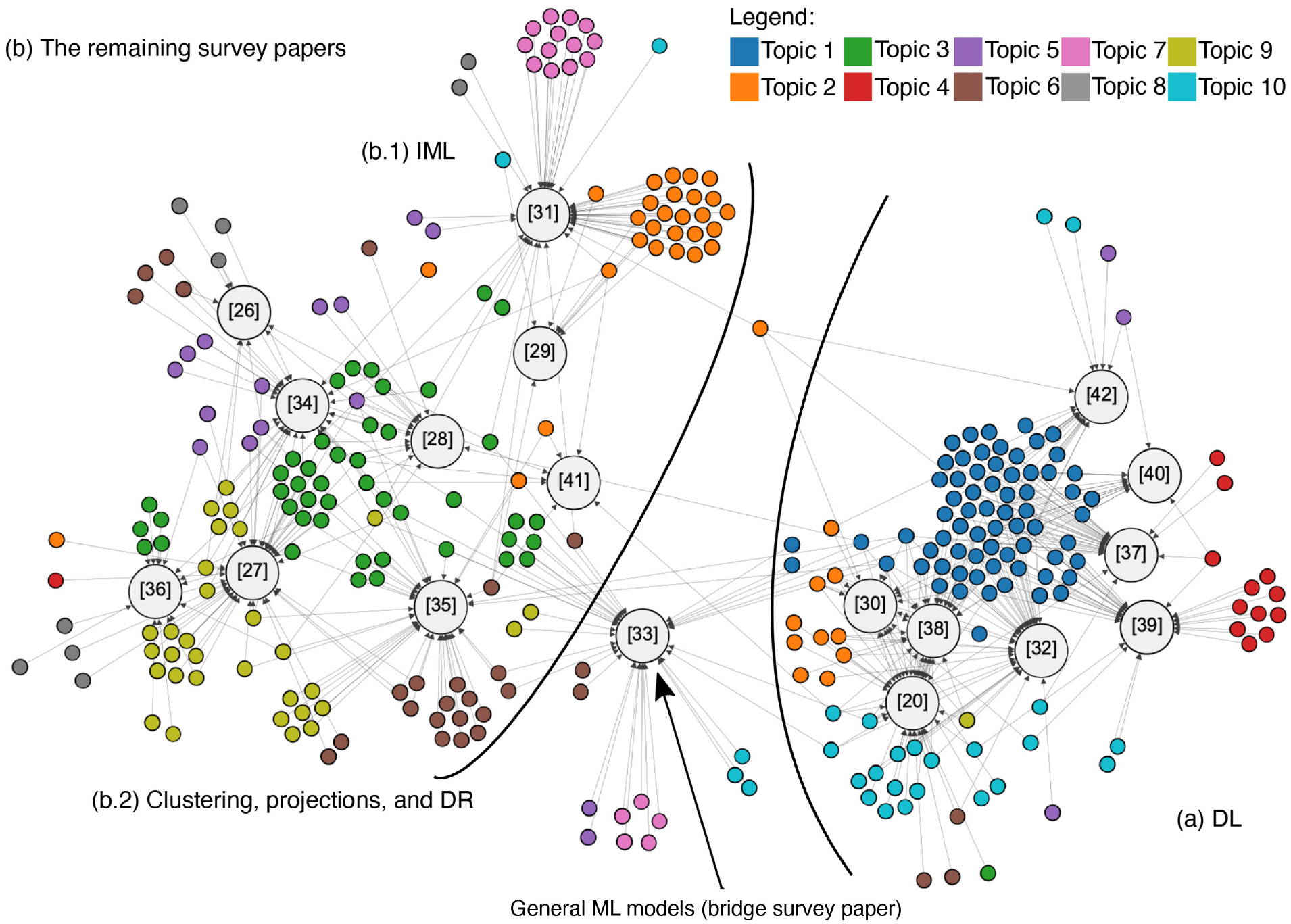

First, we selected only the unique papers of each survey paper, collected all their PDF files, and converted them to text. Next, we processed their full texts with the latent Dirichlet allocation (LDA) algorithm,48,49 a standard approach for topic modeling. In order to validate the LDA results—it might produce different results at different executions—we ran the same process multiple times and found equivalent results without significant changes. In the end, our LDA results led to ten topics; the top 8 terms for each topic are presented in Table 6. Our implementation uses Python with natural language toolkit (NLTK) 50 for the pre-processing of stop words and Gensim 51 for the topic modeling stages. The names of the topics were manually proposed after discussions between the authors considering the top terms and the contents of the survey papers in each topic. The results are visualized with the help of the interactive visualization tool described by Kucher et al., 47 see Figure 3.

Top eight terms for each of the 10 topics generated with latent Dirichlet allocation (LDA) from the collection of the papers of the survey papers.

NN: neural network; IML: interactive machine learning; DR: dimensionality reduction; CNN: convolutional neural networks;ML: machine learning.

The suggested topic titles are displayed in italics. Each topic is represented by one specific color.

Exploration of emergent topics extracted from all individual papers discussed in the surveys. (a) Papers’ embedding created with t-SNE and based on the extracted topics (black outlines were manually drawn and represent our categorization). (b) Bar chart of topics with their relevance (scaled from 0 to 1). (c) Bar chart of top terms with the highest relevance for all the topics (topics are identified by both numbers in parentheses and colors; note that a single term can be present in multiple topics).

Topics

In the following description list, we briefly summarize the 10 topics we identified:

Topic 1. NNs are often used in image applications, and visualizations are frequently employed to get a better understanding of pre-trained networks. However, visual representations of NNs that display the middle layers and try to open the black box of NNs are less explored according to the related survey papers (cf. Table 5). Consequently, such visualizations might be good candidates to focus on in future research.

Topic 1. NNs are often used in image applications, and visualizations are frequently employed to get a better understanding of pre-trained networks. However, visual representations of NNs that display the middle layers and try to open the black box of NNs are less explored according to the related survey papers (cf. Table 5). Consequently, such visualizations might be good candidates to focus on in future research.

Topic 2. During a training process, it is desirable to include humans in the loop that inspect and correct the classifier’s errors and decisions.

31

However, more research is necessary for defining the appropriate level of the end-users and classifiers distribution of work, setting the right occasions of interference from the user side to the system, and finding the frequency of these interactions of the human and model.

Topic 2. During a training process, it is desirable to include humans in the loop that inspect and correct the classifier’s errors and decisions.

31

However, more research is necessary for defining the appropriate level of the end-users and classifiers distribution of work, setting the right occasions of interference from the user side to the system, and finding the frequency of these interactions of the human and model.

Topic 3. Data Manipulation and DR Type Selection were only found seven and four times in individual papers of Sacha et al.,

36

respectively. Sacha et al. propose that an appropriate selection of DR algorithms, while users explore, modify, and manage the data, can be a worthwhile direction for future research.

Topic 3. Data Manipulation and DR Type Selection were only found seven and four times in individual papers of Sacha et al.,

36

respectively. Sacha et al. propose that an appropriate selection of DR algorithms, while users explore, modify, and manage the data, can be a worthwhile direction for future research.

Topic 4. CNNs are in several cases used in image applications. Model understanding and diagnosis visualization tools and feature visualization methods aid in explaining them.

39

Topic 4. CNNs are in several cases used in image applications. Model understanding and diagnosis visualization tools and feature visualization methods aid in explaining them.

39

Topic 5. Use of features in models’ prediction is not a trivial task, especially due to the computational resources that are needed while using predictive analytics algorithms and allowing the user to steer the process. As a result, one research question is “how the feature selection process can be supported during a real-time algorithm execution?” along with the problem generated by the lack of formal validation studies in the area of PVA.34,35

Topic 5. Use of features in models’ prediction is not a trivial task, especially due to the computational resources that are needed while using predictive analytics algorithms and allowing the user to steer the process. As a result, one research question is “how the feature selection process can be supported during a real-time algorithm execution?” along with the problem generated by the lack of formal validation studies in the area of PVA.34,35

Topic 6. Dealing with subspace visualization for clustering and classification types of ML is an interesting topic which is already in the focus of the visualization community. Subspace clustering methods not only support the search for various robust projections but also deal with the difficulties of the ever-increasing complexity of the data by breaking them down into lower-dimensional subsets.

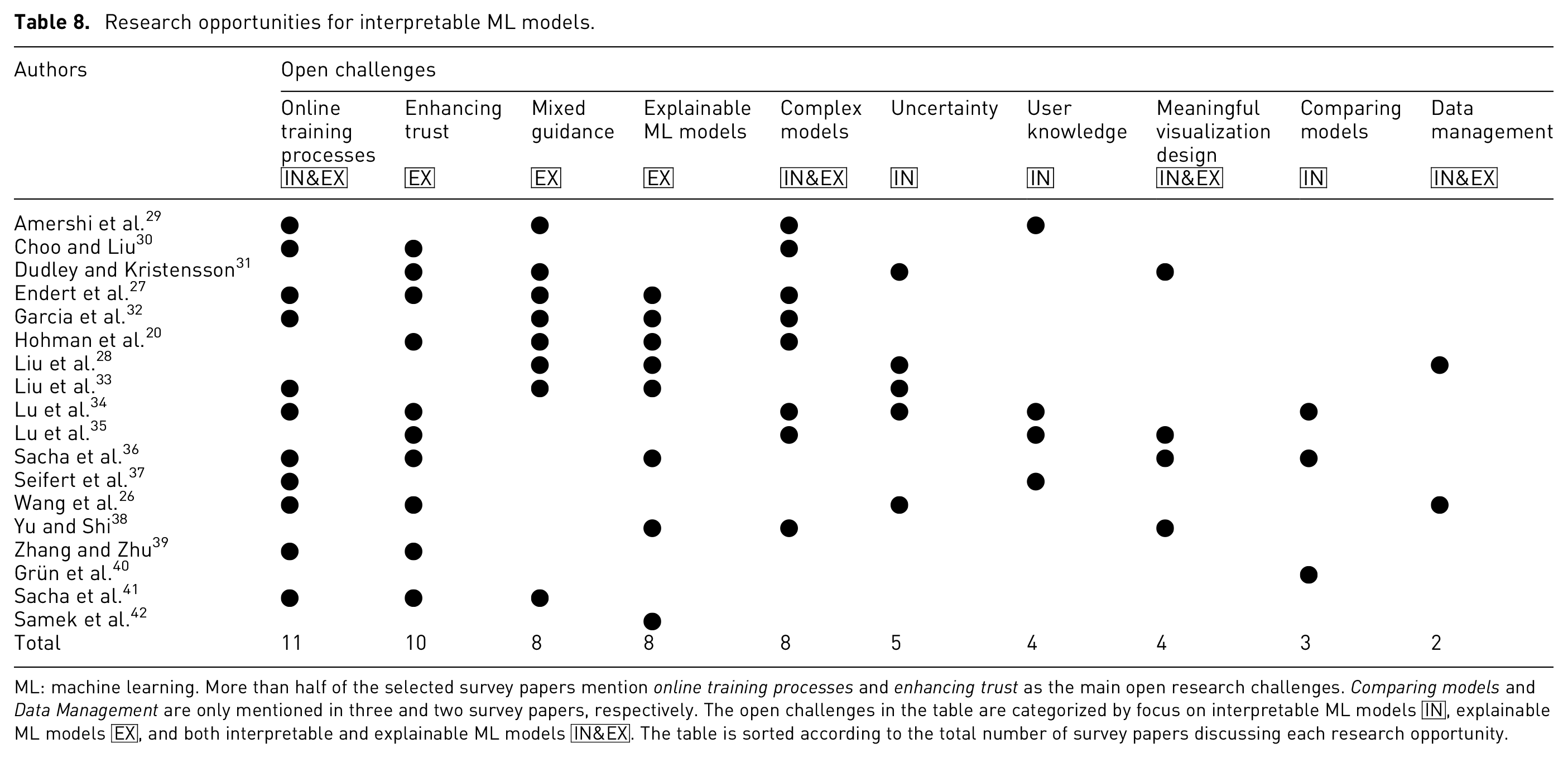

27