Abstract

Technological advancements and market pressures are driving the development of pedagogical course design approaches. Drawing on organizational design research into structuring organizations and work processes to improve effectiveness and efficiency, we focus on two structural constructs: standardization (of coordination, including active learning components) and centralization (of decision-making for course implementation). This paper examines the impact of changes to these constructs during the conversion of a course from a traditional (face-to-face) to a blended/flipped modality. Findings show that structuring a course based on standardization and centralization can affect student outcomes in the course. Specifically, the results reveal no statistical difference in short-term student performance between the traditional lecture approach and the blended/flipped approach; however, variability in performance across sections was lower. In addition, a lagged learning effect, derived from an exit exam in students’ last semester, revealed a statistical difference, with students from the blended/flipped approach achieving higher long-term learning scores. We offer this as an argument for the effectiveness of the standardized active learning components embedded within the new course structure.

Introduction

The ever-changing landscape of higher education has created a sense of urgency among universities, especially since the arrival of COVID-19. This disruption period encouraged the evaluation of learning pedagogies that reach and educate today’s students while addressing market pressures.

Two popular learning pedagogies, blended and flipped, have been increasingly adopted (Fisher et al., 2021). Research suggests that blended learning, defined as seamlessly fusing face-to-face with technology-mediated learning (Fisher et al., 2021), has untapped potential (Yuping et al., 2015). Flipped learning requires students to engage with content, often from a technology resource, before class. Findings showed that flipped and blended learning positively influence students’ perceptions of engagement, performance, and satisfaction (Fisher et al., 2021; Heijstra & Siguroardottir, 2018).

Technology-mediated learning encouraged the movement away from a small class (sage on the stage) structure while implementing different learning pedagogies and increasing the possible course structures. The ease of scaling these implementations often offsets the cost and skills required in the short term for technological implementations. With the increased use of these approaches, it is necessary to investigate these technology-mediated class structures using various learning pedagogies to understand how they can help universities address market pressures while continuing to provide quality education.

The pedagogical use of technology enabling these different forms of classroom structure may be relatively new; however, we are not without theoretical models for addressing these changes. We look to organizational theory for potential models of organizing work. Frederick Taylor (Kanigel, 1997) initially provided the idea of standardization of processes in his scientific management method to improve efficiency. Additionally, Weber (1949) argued that centralized decision-making in a bureaucratic organization provided clarity. These structural components are still standard in today’s organizational design research. Observing that course design resembles organizational design, in that employees or participants (students) seek management and leadership from someone above them with authority (faculty), we adopt a lens that sheds light on the new classroom structure being employed. Wagner and Van Dyne (1999) introduced the elements of organizational design into classroom structure by incorporating standardization and centralization to explain the dimensions of small and large classroom structures. Building on this research, our study seeks to address the following questions:

Where do we place a blended class on the standardization/centralization continuum?

What can we learn from organizational structure to help move a course to a more efficient blended/flipped format?

And finally:

What are the impacts on our product, student education (short and long-term and variability over students), and their perceptions of the course?

Theoretical background

Organizational theory

In organizational theory research, standardization of coordination relates to the extent of coordinated, interdependent tasks by a standard process or procedure. Some researchers identified Frederick Taylor as the first to propose such a concept (Locke, 1982). Taylor’s scientific method pushed for standardized tools to create one best way to perform a function but now extends well beyond using tools for manual labor tasks. The incorporation of standardization in Weber’s work on bureaucracies focused on increased organizational efficiency with procedures and processes. More recent researchers applied principles of standardization to organizational routines (Feldman & Pentland, 2003; Gilbert, 2005) to leverage “best practices.”

In highly standardized environments, anticipating coordination issues led organizations to adopt a more standardized approach, which ensured uniformity and certainty in addressing the issue. Aroles and McLean (2016) noted that the standardization of routines brings stability and a sense of order in dynamic and uncertain environments. There is a psychological benefit to using standardization when there is high uncertainty. Still, others (Winter & Szulanski, 2001) argue that standardization allows firms to transfer their own “best practices” across their organizations and can be a source of competitive advantage (Jensen & Szulanski, 2007). Even though some researchers argued that standardized processes or routines can lead to flexibility (Feldman & Pentland, 2003), others point to routines as sources of rigidity and inertia (Gilbert, 2005). In organizational environments, lower levels of standardized coordination result in a high level of mutual adjustment by the organization members affected by the interrelated activities, who share information and adjust based on the situation. Hence, they displayed stability through the routines and flexibility by sharing information and adapting.

Additionally, Weber’s (1949) work on the bureaucratic nature of the organization is viewed as foundational in the organizational design field. For Weber, centralization pertained to the concentration of authority for decision-making at higher organizational levels to plan changes and act (Child, 1972). Organizations with high levels of centralization embodied decision-making power among a few or even one individual at the top of the organization. A benefit of centralized control was the enhanced speed of decision-making because fewer organizational members participate in the process, but a tradeoff was the reduced amount of information brought to the process (Miller, 1987). Centralization also reduced individual organization members’ autonomy since decision-making is centralized at a higher level.

Conversely, highly decentralized organizations distributed decision-making power to lower-level subordinates in the hierarchy (Wong et al., 2011). A benefit of decentralized control involved tapping into broader perspectives during the decision-making process but doing this increased the time required to reach a consensus. The benefits of decentralized decision-making may be important in more customized service offerings, which embody high levels of environmental uncertainty (Johnston et al., 2019). However, decentralized decisions were shown to impact other parts of an organization and led to suboptimal performance levels (Johnston et al., 2019).

In hospital scheduling research, as the workload increased with more patients, the need for more perspectives was reduced and the benefits of centralization, such as cost savings, increased (Diwas et al., 2013). Centralization also led to the early adoption of technologies that enhanced efficiency and enabled economies of scale and knowledge synergy (Baum & Wally, 2003). Removing decision-making redundancy allowed for greater efficiency in time and created synergy among organizational departments. In settings where little knowledge is gained by increasing the number of people involved in the decision-making, centralized decision-making may be more appropriate. In more certain environments and with more consistent practices, the reliance on centralized decision-making yielded its greatest benefits on efficiency and clarity.

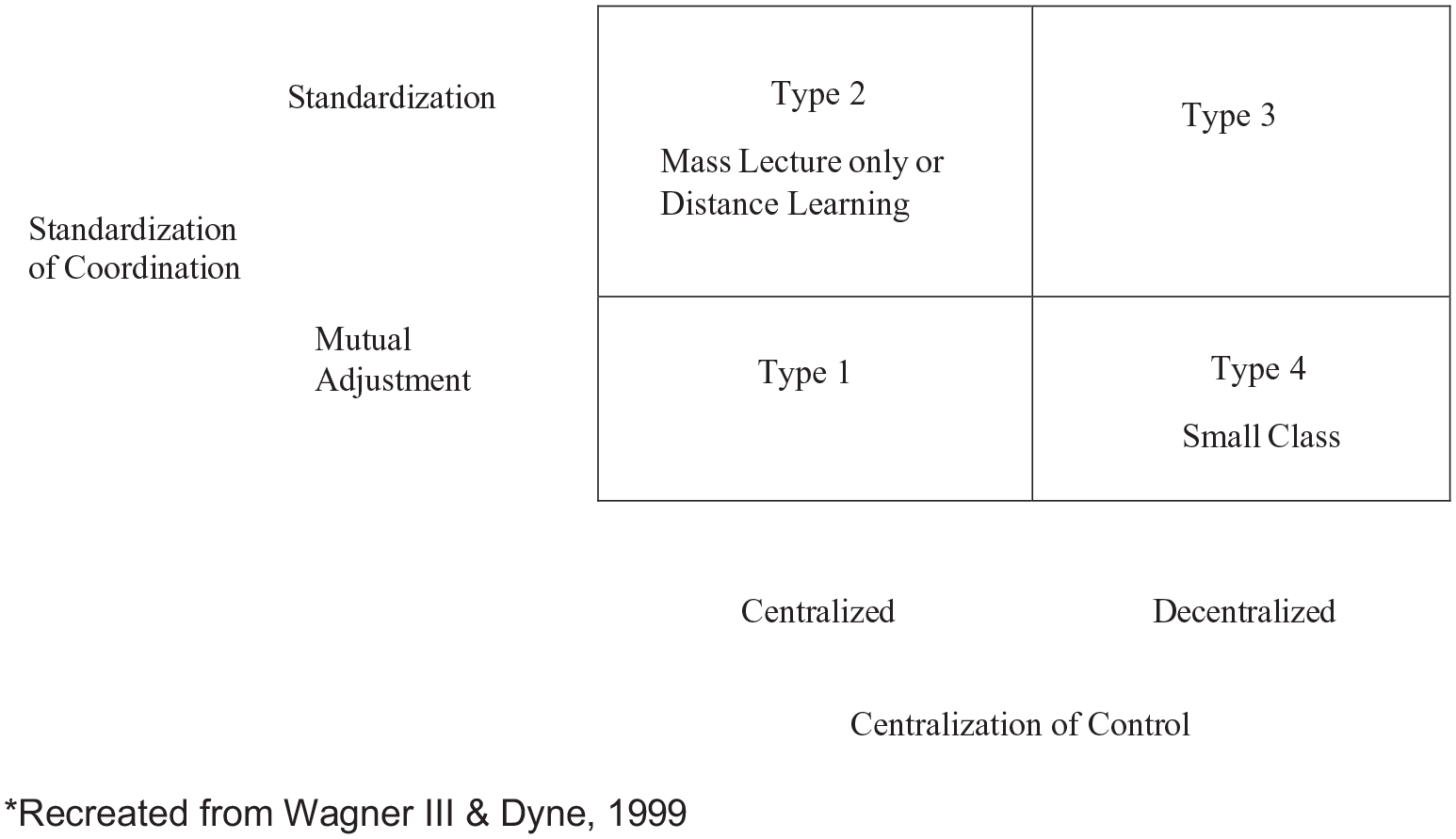

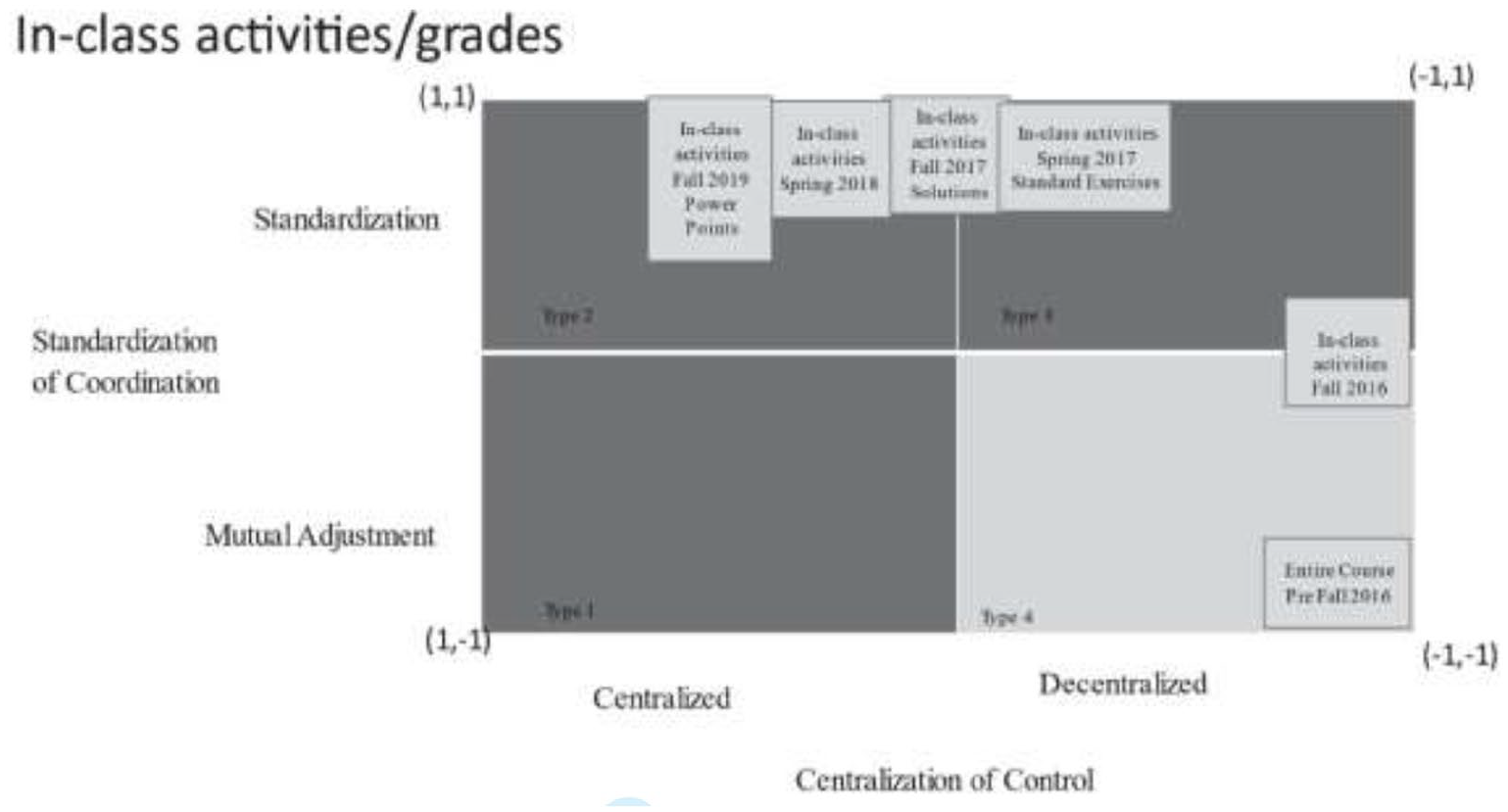

The types of course design structural components that faculty create embody similar characteristics to organizational structural dimensions (Wagner & Van Dyne, 1999); see Figure 1. Just as organizational theory suggests that centralization of control increases efficiency and speed in addressing pressing issues, centralization in course design enables these same benefits. When new course development involves a faculty committee in which decision-making is shared, it can become a time-consuming task. During such committee tasks, individual faculty members usually concede on different class structure aspects they prefer in an attempt to arrive at an agreed structure or approach. These tradeoffs may impact the effectiveness of individual faculty members based on their teaching styles. Also, if an issue arises in the delivery of a selected course design, for instance rampant cheating on a common exam, the faculty committee may need to meet again to decide how to respond. Centralization in course design can ease these issues.

Figure 1. Taxonomy of classroom structures.

In a similar manner, standardization of coordination in organizations ensures uniformity and certainty when dealing with routine issues (Gilbert, 2005). We see a parallel in course design considerations. Standardization of coordination in course design provides more uniformity on routine issues, including how much lecture time will occur in each section versus how much time is offered for active learning through exercises/cases as recommended by McLean and Attardi (2018) for flipped classrooms. A classroom structure that details the exact content included and how to present it enabled uniformity and certainty but compromised flexibility.

Therefore, we continue in the same vein of research as Wagner and Van Dyne (1999) by leveraging their insights as the foundation for this research while expanding their configuration to include a third classroom structural configuration referred to as a blended/flipped classroom. We used these three classroom structures to illustrate differences as follows:

Traditional “small” classroom with low levels of standardization and low levels of centralization across sections. (Traditional education model with each professor teaching their sections with complete autonomy—Type 4 in Figure 1.)

Large classroom with high levels of standardization and high levels of centralization, when a course includes all students taking a particular course from one faculty member. (Most closely resembling a massive open online course, or MOOC, with one professor; Type 2 in Figure 1.)

Blended/flipped classroom: Large Lecture (asynchronous) with “lab” section configuration (synchronous) incorporates moderate levels of standardization with moderate levels of centralization (somewhat ambiguous in Figure 1 at the intersection of all four types and depends on how the course structure and implementation decisions are made).

Within course design, the amount of standardization for a single faculty-led course is 100%. However, as more sections of the same class are taught by different faculty members, the coordination among instructors must increase if there is a desire for uniformity of learning across sections. Such considerations of standardization may include, but are not limited to, the number and type of exams and quizzes, how to incorporate participation, the types of projects and assignments, and the nature of the final exam. In addition to these different course components, there is the issue of the weight of each course component across sections to create a uniform approach to teaching a particular class. Investigations of standardization in course components via a common project across multiple sections with multiple faculty members revealed benefits for students across all sections of a common course (Foltz et al., 2004). We posit that similar benefits will arise from a standardized approach within a blended classroom structure by providing students with the same opportunities for success across all sections. The standardization of the same project, analysis techniques, and exams on those techniques should yield similar student benefits. We, therefore, offer the following hypothesis about the level of standardization on student course performance:

H1: As the content delivery mode moves from

For course design, centralization of control provides the benefits of concentrated and timely decision-making. However, centralization also limits the amount of information considered and reduces the autonomy of individual faculty who abide by the centralized decisions. Classroom structures with high levels of centralized control represent the traditional format for course delivery: one class taught and developed by one faculty member. In contrast, decentralized classroom structures invite all interested members (other faculty members teaching the course, department chairs, teaching assistants, and even students) to participate in the design and delivery decisions of the classroom structure. When there are multiple sections of a course, decision-making may become more decentralized depending on the course and the faculty teaching it. Also, many universities are employing higher numbers of part-time or adjunct instructors to teach common courses, and in these situations, universities may employ a lead professor and have course committees that share the decision-making for that required course. For large-enrollment courses with common learning objectives, meeting the same course objectives in all sections becomes more difficult with decentralized decision-making. Therefore, we offer the following hypothesis to test the effect of centralized decision-making in large class structures:

H2: As the level of

We also seek to add insight about the interaction of these two classroom structural elements. Increased centralization concentrates decision-making and aids in the speed of decision-making, while standardization increases uniformity across sections. While the interaction of these components will lead to positive outcomes in instruction and student outcomes, using completely different textbooks, class assignments, lecture formats, etc. can lead to idiosyncratic differences, and some of these may be unintended. Allowing individual faculty members to make decisions about learning objectives and the approaches to achieve them leads to idiosyncratic differences as well. Therefore, we offer the following hypothesis:

H3: As both

A final component that we seek to address is the perception of learning from the student during these structural changes. Just as we sought to maintain the previous level of learning, we specifically test their perceptions to address this concern. Therefore, we offer our final hypothesis as follows:

H4: As a course undergoes a significant transformation, specifically shifting both the levels of

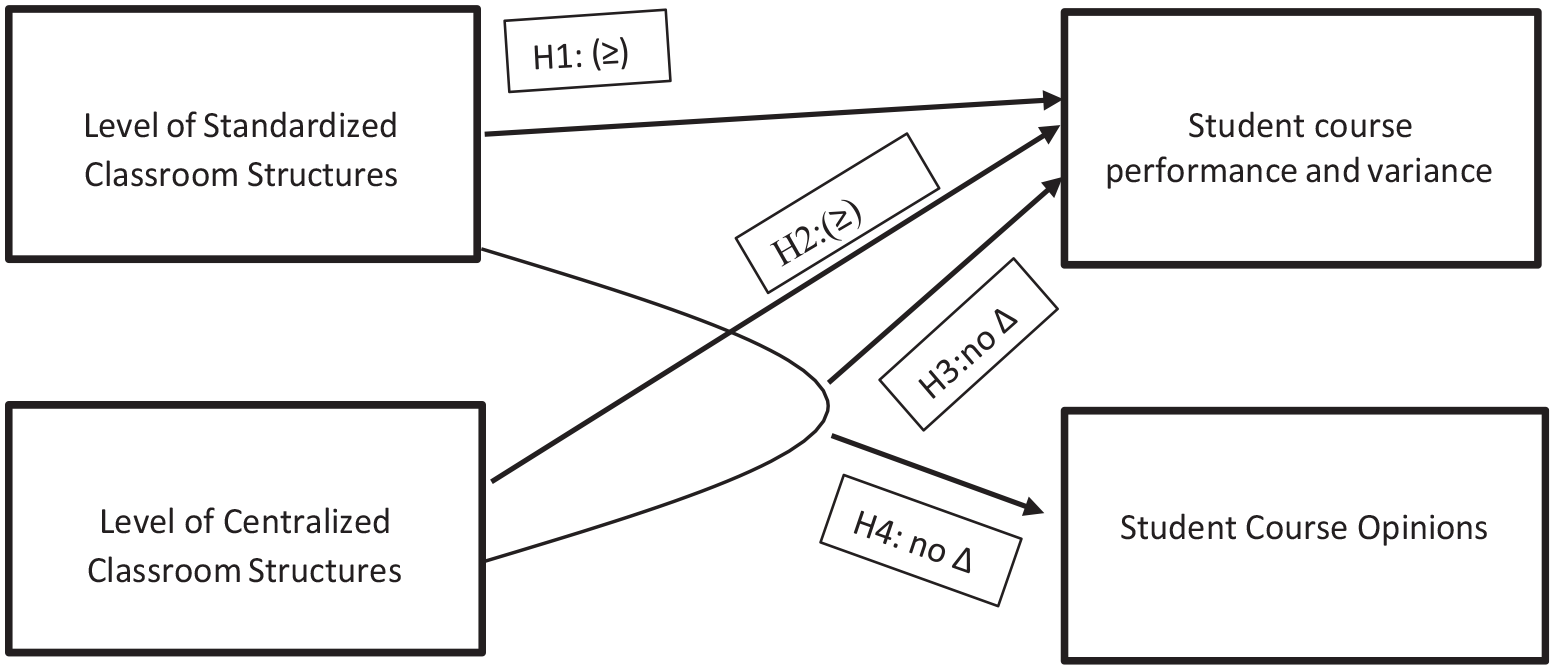

The structural course components, outcomes, and anticipated relationships between them are represented in Figure 2.

Figure 2. Conceptual model of large classroom structures and class outcomes.

Methodology

Study design

In the summer of 2016, a large urban university converted all sections of a required, junior-level Business Analysis class to a blended/flipped format. The traditional class this course replaced was taught by individual faculty members with complete autonomy—low levels of standardization and centralization across the sections. Also, there were no graduate teaching assistants involved in these sections. We refer to the data from periods with this traditional structure as pre-Structural Change (pre-SC).

The blended/flipped design replaced all of the sections of the traditional class format of twice-weekly sessions with a blended approach combining weekly asynchronous video components and a once-a-week face-to-face meeting led by graduate teaching assistants (GTAs). One faculty member served as the lead professor for all sections of this course and developed all the videos, materials, and training for the graduate teaching assistants. We refer to the data from these periods as post-Structural Change (post-SC). Data were collected from the Spring of 2013 to the Fall of 2020, with enrollment per semester ranging from 505 to 820 students. Data in the pre-SC periods were collected only from the sections of the lead professor of the post-SC to avoid treatment biases due to different professors entering into the analysis. Therefore, the pre-SC sample is only from the lead professor’s sections, and post-SC data is from all sections offered.

The previous version of the course represented a decentralized structure with mutual adjustment (Type 4) in Wagner and Van Dyne’s (1999) taxonomy. The lead professor assured that each section’s professor/instructor had the skills and teaching resources (a textbook was recommended but not required) to lead their section. Each professor/instructor decided the specific course structure, the weighting of different course components, and the teaching approach.

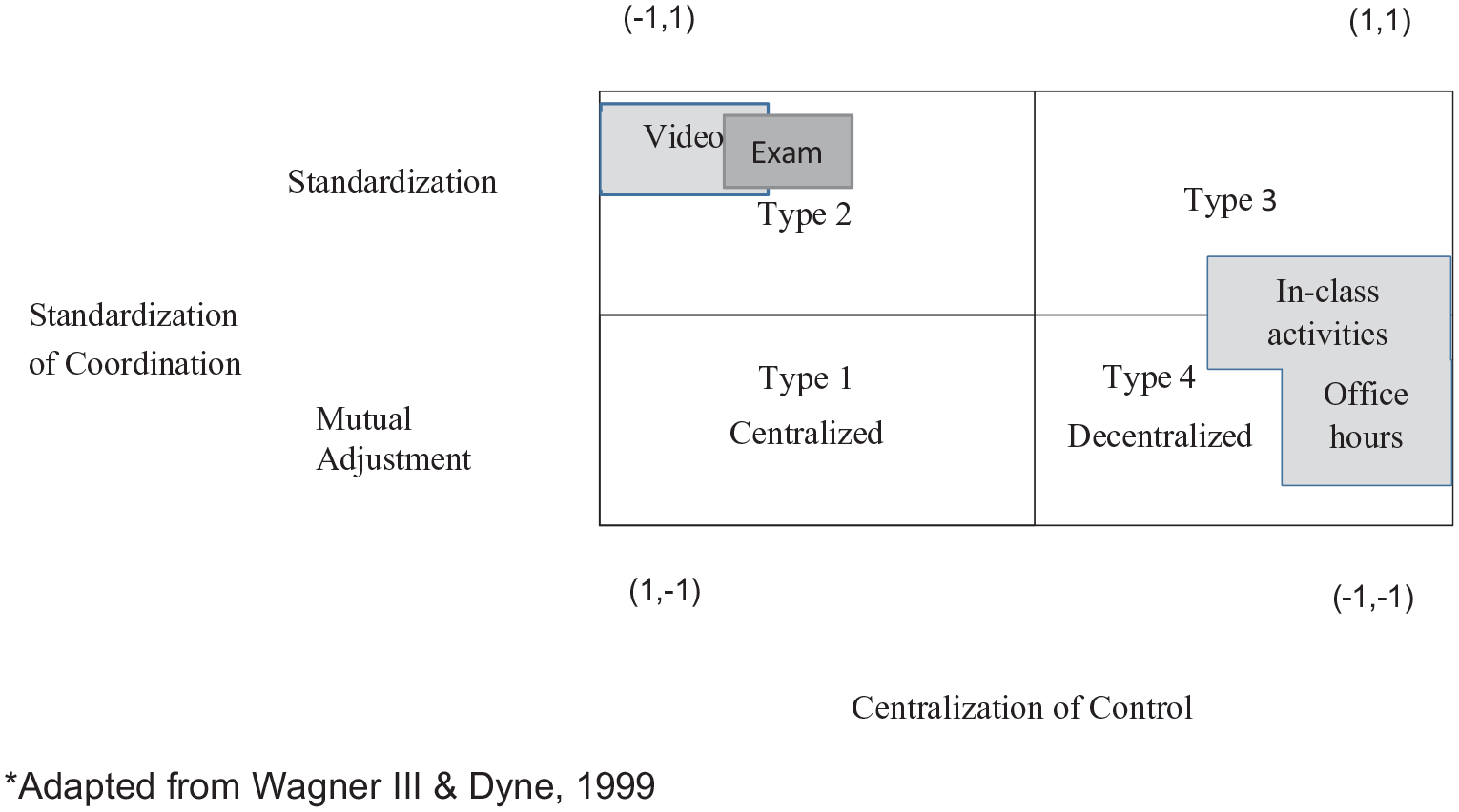

Converting to this flipped/blended format did not easily fit into Wagner and Van Dyne’s (1999) taxonomy since course components existed at different points on the standardization and centralization continuum. Therefore, we separated the course components as follows:

Videos (asynchronous): Required viewing of material and completing online quizzes prior to attending class.

In-class sessions (synchronous): Active learning through explanation/discussion of problems from content and in-class exercises and quizzes

Exams: Either in-class or testing center

Office hours: Either with the lead professor or GTA.

All four components were then placed on Wagner and Van Dyne’s (1999) taxonomy (see Figure 3). Coordinates have been added to the chart to indicate that components can fall within a continuum of 1 to −1 for standardization and centralization. The following section describes the course components under the constructs of standardization and centralization.

Figure 3. Extension of Wagner and Van Dyne (1999) Large Classroom Taxonomy for course components.

Standardization of coordination

The goal of standardization, whether in business or the classroom, was the use of standards to guide the work product, creating uniform outcomes in contrast to a mutual adjustment environment where individuals have more influence on the specifics of the final product and how it is achieved. Professors typically have significant control over their course and, therefore, exercise substantial mutual adjustment. However, standardization provides certain benefits, such as when course learning outcomes are required for future classes. Standardizing across all course sections provides a level playing field for prerequisites required in future courses.

Based on the continuum previously described, a standardization score of 1 indicated that all course components were the same across all sections. A standardization score of −1 indicated that there was no coordination between sections, and therefore all instructors chose how to achieve the output required (mutual adjustment).

Standardization of coordination: Videos, pre-class quizzes, and exams

Using videos for all sections provided a high level of standardization of coordination of course content. The lead professor created all the content videos. Videos were separated into topic-oriented chunks, usually less than 15 min in duration. Standardized video content, available 24 hr per day, 7 days a week, gave all students the same opportunities for success, as was seen when a standardized process for a database project was used in an MIS course (Foltz et al., 2004). Knowing that students do not always prepare for class by viewing online material in advance of the in-class sessions (Ryan et al., 2015), the lead professor prepared standardized pre-class quizzes. The lead professor also standardized course exams based on the video content.

Standardization of coordination: In-class sessions and quizzes

Unlike the video content, the initial plan for the in-class sessions was to function like the face-to-face sessions it replaced. The GTAs no longer needed to lecture but continued in-class items such as question-and-answer sessions and active learning sessions focused on Excel. The lead professor held weekly team meetings with graduate teaching assistants to maintain control and consistency of the materials covered in all sections (standardization). The lead faculty also provided in-class quiz questions and Excel exercises (standardization of active learning components). Each GTA was allowed to tailor their section to individual student needs (mutual adjustment). The limited standardized instructions for the in-class sessions in the fall of 2016 included:

Ask if students have any questions.

Have students check their grades on LMS (iCollege) to make sure they received credit for assignments.

Hand out the in-class quiz.

Collect the quiz and then go over the correct answers.

Work on an Excel activity.

Standardization of coordination: Office hours

GTAs’ office hours were listed in the syllabus as by appointment (mutual adjustment). The lead professor had set office hours every week.

Centralization of control

The design of the decision-making systems varied by the extent to which power was dispersed among members. The two extremes are centralization, with one person making most decisions, and decentralization, with decisions delegated among faculty and instructors.

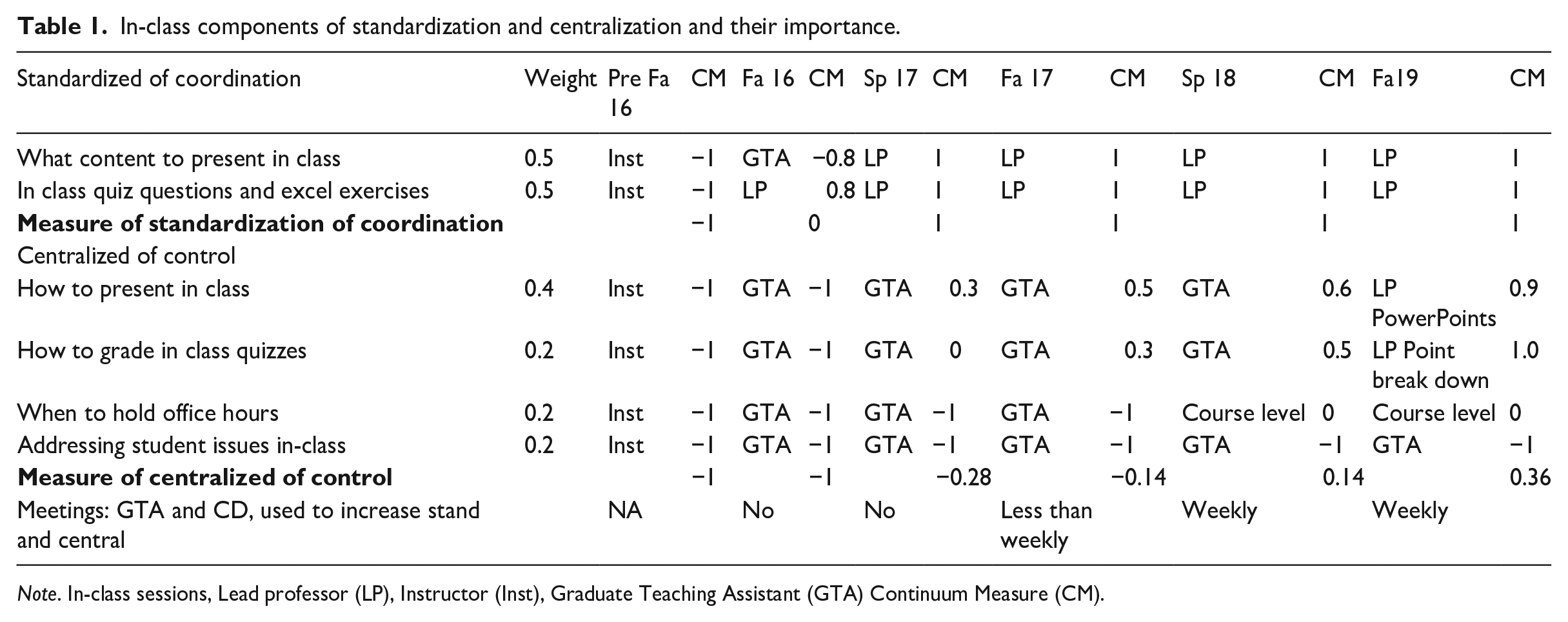

A centralization of control score of 1 indicated that one person made all decisions for a component; therefore, students experienced the same classroom environment, and issues were resolved by the decision of one person. A centralization score of −1 indicated that there was no coordination of decision-making among sections. All decisions were made at the individual GTA level in response to situations as they arose. Centralization of control included areas where daily decisions were made, including addressing student issues, grading, and class presentation decisions. Each element was assigned a value between −1 and 1. Those values are based on the amount of specific direction that graduate teaching assistant (GTA) instructors were given. Weights were then assigned to the elements based on their impact on course outcomes. Table 1 provides a breakout of the key elements.

Table 1. In-class components of standardization and centralization and their importance.

Note. In-class sessions, Lead professor (LP), Instructor (Inst), Graduate Teaching Assistant (GTA) Continuum Measure (CM).
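To illustrate how element values and weights might be combined into a single position on the continuum, the following minimal sketch assumes a weighted average. The text does not state the aggregation rule, and the element names, values, and weights below are hypothetical; they are not the entries of Table 1.

```python
def weighted_continuum_score(elements):
    """Combine element values in [-1, 1] into one score using weights.

    `elements` maps an element name to a (value, weight) pair.
    The weighted-average rule is an assumption made for illustration.
    """
    total_weight = 0.0
    weighted_sum = 0.0
    for name, (value, weight) in elements.items():
        if not -1.0 <= value <= 1.0:
            raise ValueError(f"{name}: value {value} is outside [-1, 1]")
        if weight < 0:
            raise ValueError(f"{name}: weight must be non-negative")
        weighted_sum += value * weight
        total_weight += weight
    if total_weight == 0:
        raise ValueError("at least one element needs a positive weight")
    return weighted_sum / total_weight


# Hypothetical in-class elements: (continuum value, weight).
example_elements = {
    "content presented": (1.0, 0.4),   # fully directed by the lead professor
    "grading": (-0.5, 0.3),            # mostly left to the GTA
    "student issues": (-1.0, 0.3),     # decided entirely by the GTA
}

score = weighted_continuum_score(example_elements)
print(f"centralization score: {score:.2f}")
```

Because the result is a weighted average of values in [−1, 1], it stays on the same 1 to −1 continuum used to place each component.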

Centralization of control: Videos and exams

The lead professor was the centralized decision-maker for developing all content, the weekly schedule, exams, and quizzes for the course and addressed any student or GTA issues. The only variation over the semesters considered was exam locations. The exams took place in the testing center when available; otherwise, exams were given in the classroom, impacting control over decision-making. When in the testing center, there was a higher degree of centralized control based on the standards set by the director of the testing center. In the classroom sessions, the lead professor provided some overall guidance but the GTAs made decentralized decisions on addressing issues that occurred.

Centralization of control: In-class sessions and office hours

Based on the decision to continue the in-class sessions similarly to the previous face-to-face format, the authority for all decisions impacting the classroom time was left to the section GTA. This included how to present the content, grading assignments, holding office hours, and addressing student in-class issues.

With the new structure, it was essential to monitor graduate student teachers to prevent lecturing from creeping back into the in-class sessions. As stated by Rodriquez (2015) about flipping library instruction, “The biggest hurdle for librarians may be to avoid lecturing during in-class sessions” (p. 18). If graduate student teachers fell into the role of lecturing during the in-class meetings, it nullified the purpose of the blended/flipped design. Office hours provided decentralized support for students with their GTA or the lead professor.

Evolution of in-class sessions

At the beginning of the study, the intent was that components would remain as positioned in Figure 3. However, after the first semester, it was obvious that changes needed to be made to the in-class sessions. Therefore, the active learning in-class components evolved over the research period (see Figure 4). Table 1 provides a breakout of the key elements of the in-class sessions over the research period to illustrate the changes that increased the amount of standardization and centralization. Further information is provided in the results section.

Figure 4. Evolution of in-class components.

Measures

We used several data sets over multiple semesters, pre- and post-SC to analyze the impact of the format change. These data sets included measures of students’ learning during and after the course, course historical grade distributions, and end-of-semester student opinion surveys. The following describes these data sources.

Measure: Student assessment of instruction quality, survey data

End-of-the-semester student evaluations provided a longitudinal view of students’ opinions of the course. The survey contained 35 questions. A listing of the questions is available by request from the authors. The period used was Fall 2016 to Spring 2019. In Fall 2019, the standardized student survey questions used by the university changed. Therefore, we did not use data after the survey change, to ensure that identical survey items were compared.

Measure: Student assessment of learning, exam data

To address our primary research question about the impact of format change on student learning, we used exam data to compare standardization and centralization effects. This longitudinal research design required pre- and post-structural change (SC) exam data. We collected student exam grades from 2 years prior to implementing this format change. To reduce the impact of ancillary factors, we used pre-conversion data only from sections led by the lead professor. For post-conversion, we collected data from all sections. We used exam scores for the initial test of the impact of the structural change. All pre- and post-structural change exams were similar in design, with approximately 25 questions for exams and 50 questions for the final. Though specific questions varied by exam version, the topics covered and types of questions on all exams were similar (i.e., similar questions with different numbers). We used data for all sections. Data for summer sessions were not available. This covers seven semesters (100 sections) before and seven semesters (116 sections) after conversion. The data used covered semesters from Spring 2017 through Spring 2020. We performed hypothesis tests, by semester, to determine whether these scores were significantly different for the two structures.

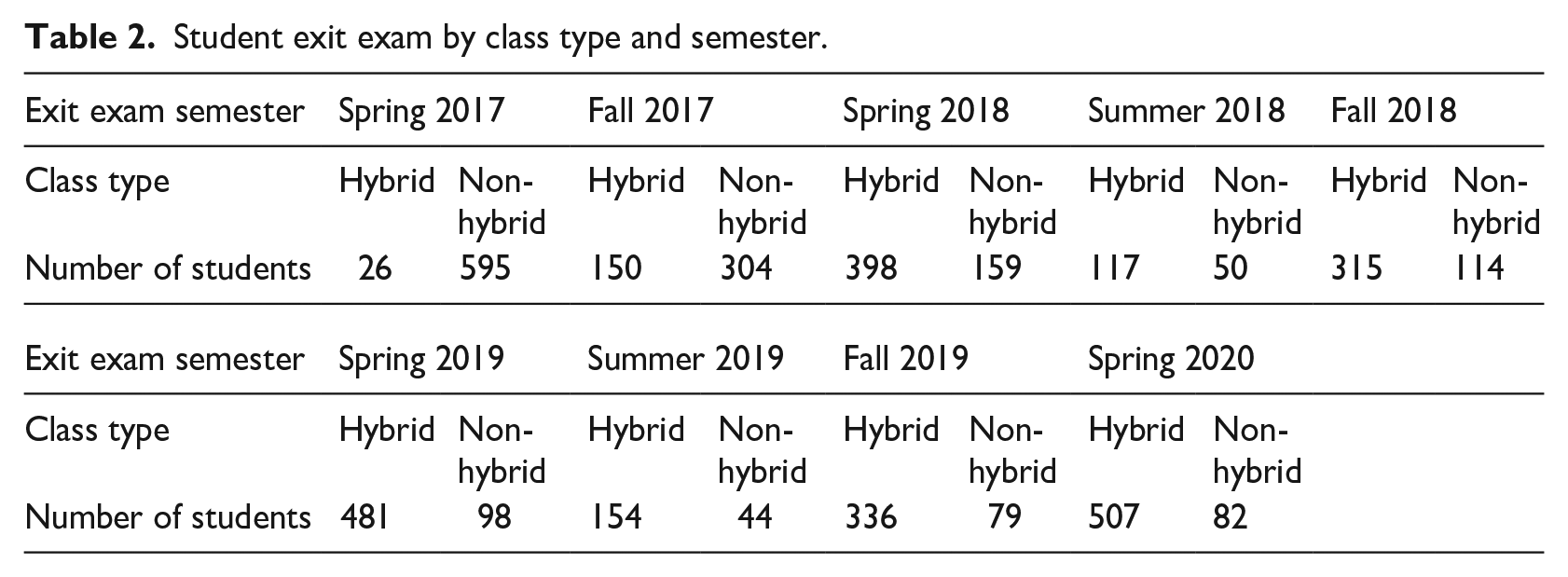

Measure: Student assessment of learning, graduation exit exam data

To address our research question about the efficacy of a blended/flipped format compared to a traditional format for the same class, we collected data from student performance on an exit exam taken just before graduation with samples from both formats to gauge the long-term learning effects. For our assessment of learning across all undergraduate business majors at our College of Business, we used a previously developed exit exam with 13 questions pertaining to business analysis. All questions were multiple-choice in design. These questions mirrored the stated course objectives but used specific problems and analyses to ascertain the retention of this knowledge base (A listing of the questions is available by request from the authors). These questions remained consistent over the entire period of our data collection. Although more robust measures of long-term learning could benefit our understanding, this particular exit exam provided the comparability of our students’ performance in both time periods pre-SC and post-SC. Switching to a new measure for the blended approach would introduce instrument bias across the two samples and potentially lead to spurious differences. See Table 2 for sample size by semester.

Table 2. Student exit exam by class type and semester.

Results

Impact of standardization on student exam performance

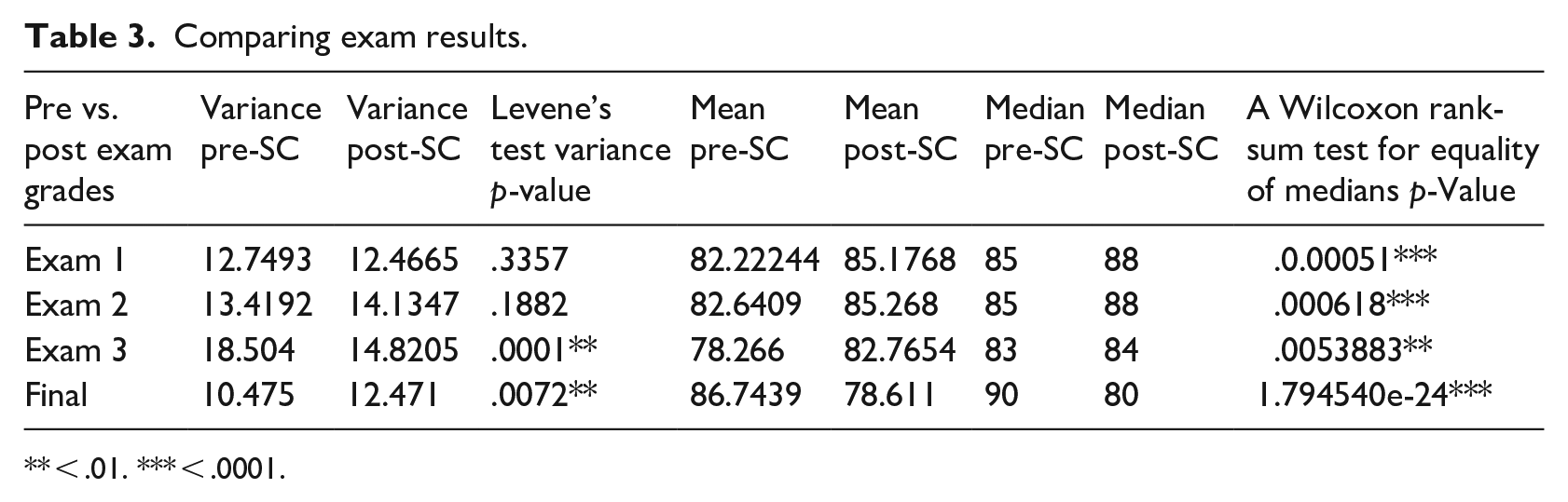

Since the major structure change (SC) between these two time periods coincided with the use of videos by the lead professor for all sections, we considered this an initial measure of the impact of standardization. Performance on the three semester exams and the final exam accounted for a significant portion of a student’s grade. Therefore, we used student exam grades as an outcome measure. We gathered data from pre-Fall 2016 semester face-to-face sections taught by the lead professor as our pre-structure change (pre-SC) baseline. This included approximately 300 students in 7 sections. These data were compared to exam results from 15 sections with approximately 650 students from the first semester of the post-structure change (Fall 2016). For this test, we analyzed at the pre- and post-change level, not at the individual section level.

To test for the impact of the format change on student learning, we performed hypothesis tests with the null hypothesis that the median exam scores pre-SC were equal to or greater than the scores post-SC. Boxplots of the data failed to support the normal distribution of the data. Therefore, we used nonparametric tests, a Levene’s test for equality of variance and a Wilcoxon Rank-Sum test for equality of medians. For the results of these tests, see Table 3.

Table 3. Comparing exam results.

Note. ** p < .01. *** p < .0001.
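The one-sided comparison of pre-SC and post-SC scores can be sketched as follows. This is a minimal standard-library illustration of a Wilcoxon rank-sum test using the normal approximation with a tie correction; it is not the authors' analysis code, the function names are hypothetical, and the scores are made up.

```python
from collections import Counter
from math import sqrt
from statistics import NormalDist


def average_ranks(values):
    """Return 1-based ranks, giving tied values the mean of their ranks."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        mean_rank = (i + j) / 2 + 1  # mean of positions i..j, shifted to 1-based
        for k in range(i, j + 1):
            ranks[order[k]] = mean_rank
        i = j + 1
    return ranks


def rank_sum_test_less(pre, post):
    """One-sided rank-sum test (normal approximation).

    Null: pre-SC scores are equal to or greater than post-SC scores.
    Alternative: pre-SC scores tend to be lower than post-SC scores.
    Returns (z, p_value).
    """
    n1, n2 = len(pre), len(post)
    n = n1 + n2
    combined = list(pre) + list(post)
    ranks = average_ranks(combined)
    w = sum(ranks[:n1])                      # rank sum of the pre-SC sample
    mean_w = n1 * (n + 1) / 2
    tie_term = sum(t**3 - t for t in Counter(combined).values())
    var_w = n1 * n2 / 12 * ((n + 1) - tie_term / (n * (n - 1)))
    z = (w - mean_w) / sqrt(var_w)
    return z, NormalDist().cdf(z)


# Made-up exam scores for illustration only.
pre_scores = [55, 60, 62, 65, 70, 71]
post_scores = [68, 72, 75, 78, 80, 85]

z, p_value = rank_sum_test_less(pre_scores, post_scores)
print(f"z = {z:.3f}, one-sided p = {p_value:.4f}")
```

A small p-value here would lead to rejecting the null that pre-SC scores are equal to or greater than post-SC scores. For small samples an exact test is preferable to the normal approximation used in this sketch.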

At a significance level of less than .01, the results for the three semester exams reject the null hypothesis tested for H1, and the findings support that the post-SC medians were greater than the pre-SC medians. The medians for all three semester exams increased after we imposed the new structure. The final exam was the exception to this pattern, with the post-SC median being lower. We believe this was due to the lack of standardization of the final exam review materials provided to the GTAs. We performed exam reviews during the in-class sessions. The GTAs, still in the mutual adjustment category for these sessions, individually determined what content to cover in the review session.

Impact of centralization on exam variance across sections

Changing the format and faculty involved in delivering this course required a different level of centralization than the traditional face-to-face course. The decision-making for all exams was centralized and made by the lead professor for all sections.

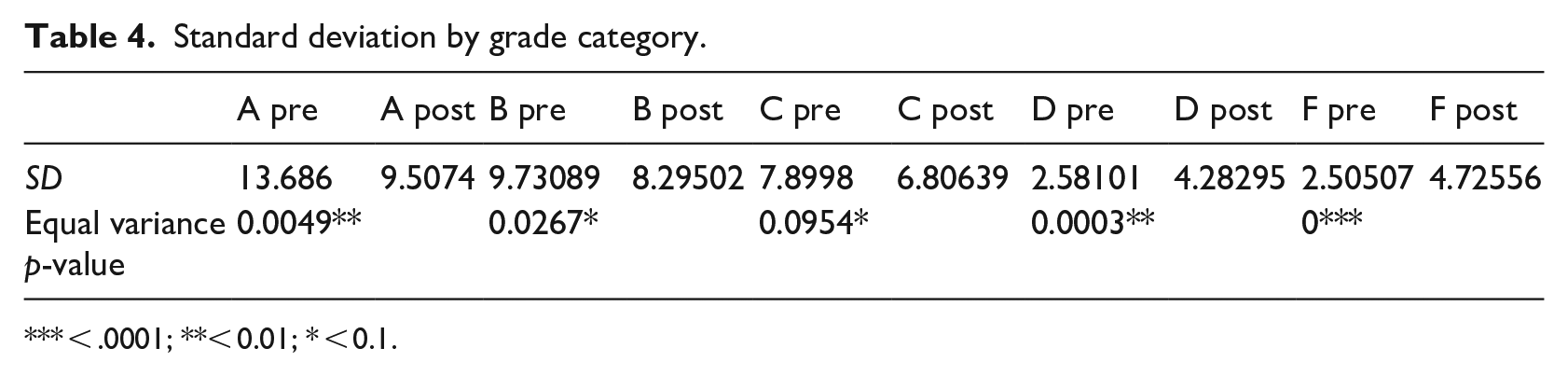

To address H2, we considered student grades recorded over 7 years, Spring 2013 to Fall 2019. This covers seven semesters (100 sections) before the conversion and seven semesters (116 sections) after. Based on these boxplots, we treated the data as not normally distributed. Therefore, we used Levene’s test to determine equality of variance.
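For readers unfamiliar with it, the statistic behind Levene's test can be sketched as below. The sketch uses the median-centered (Brown-Forsythe) variant, which is an assumption because the text does not say which centering was used, and the numbers are invented. Only the statistic W is computed; a p-value would compare W with an F distribution with (k − 1, N − k) degrees of freedom, which a statistics package such as SciPy (`scipy.stats.levene`) provides.

```python
from statistics import median


def levene_statistic(*groups):
    """Median-centered Levene (Brown-Forsythe) statistic W for k groups.

    W = (N - k) / (k - 1) * between / within, where both sums are taken
    over absolute deviations of each value from its group median.
    """
    k = len(groups)
    n_total = sum(len(g) for g in groups)

    # Absolute deviations from each group's own median.
    deviations = []
    for g in groups:
        center = median(g)
        deviations.append([abs(x - center) for x in g])

    group_means = [sum(d) / len(d) for d in deviations]
    grand_mean = sum(sum(d) for d in deviations) / n_total

    between = sum(len(d) * (m - grand_mean) ** 2
                  for d, m in zip(deviations, group_means))
    within = sum((v - m) ** 2
                 for d, m in zip(deviations, group_means)
                 for v in d)
    return (n_total - k) / (k - 1) * between / within


# Invented section-level values for illustration only.
pre_sc = [0, 5, 10, 15, 20]      # widely spread across sections
post_sc = [1, 2, 3, 4, 5]        # tightly clustered across sections

w_stat = levene_statistic(pre_sc, post_sc)
print(f"Levene W = {w_stat:.4f}")
```

Two groups with the same spread but different locations give W = 0, which is why the test speaks to variability across sections rather than to differences in average performance.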

Just as average salary may not be a good indicator of a company’s pay equity, we used the percentage of grades in given categories (A, B, C, D, and F) to evaluate the variance between sections in a given semester. There was an indication of a reduction in variance over time.

To compare students’ grades, we separated the data categorically into pre- and post-structural change. Table 4 provides the standard deviation by this categorization. To confirm whether the standard deviation had decreased over time, we performed a hypothesis test of whether the pre-SC standard deviation was greater than or equal to the post-SC standard deviation for each grade category.
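
The descriptive quantity behind this comparison can be sketched as follows: compute the percentage of each letter grade within every section, then take the standard deviation of those percentages across sections. The Python below is a minimal sketch under stated assumptions; the function names and the section rosters are hypothetical, and it computes only the descriptive spread, not the hypothesis test itself.

```python
from collections import Counter
from statistics import stdev

GRADES = ("A", "B", "C", "D", "F")


def grade_shares(section_grades):
    """Percentage of each letter grade within one section."""
    counts = Counter(section_grades)
    total = len(section_grades)
    return {grade: 100 * counts[grade] / total for grade in GRADES}


def spread_by_grade(sections):
    """Sample standard deviation, across sections, of each grade's share."""
    shares = [grade_shares(section) for section in sections]
    return {grade: stdev([row[grade] for row in shares]) for grade in GRADES}


# Hypothetical rosters (one string of letter grades per section).
pre_sections = [list("AAAABBBCDF"), list("AABBBBCCCD"), list("ABBCCCCDFF")]
post_sections = [list("AAABBBBCCD"), list("AAABBBCCCD"), list("AABBBBCCCD")]

for label, sections in (("pre-SC", pre_sections), ("post-SC", post_sections)):
    spread = spread_by_grade(sections)
    print(label, {grade: round(value, 2) for grade, value in spread.items()})
```

Working with grade shares rather than mean grades keeps the comparison focused on how differently sections distribute their grades, which is the notion of variability across sections that H2 addresses.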

Standard deviation by grade category.

*** < .0001; ** < 0.01; * < 0.1.

Categories A and C showed a significant decrease in the standard deviation in the post-SC condition. Category B showed that the standard deviation was equal for both conditions. Categories D and F showed that the standard deviation increased in the post-SC condition.

This supported the conclusion that the variance across sections decreased in the post-SC environment. Although the decrease did not occur in all grade categories, categories A, B, and C account for 87.53% of grades over the 7 years. Because the D and F categories represent smaller percentages of grades, a small variation can have a large effect on their results.

Impact of standardization and centralization on course mastery

To assess the retention of student learning, the following hypothesis addressed the impact on long-term learning of the change from the traditional to the blended/flipped format, given the increased standardization and centralization.

To evaluate the effect of the format change on student mastery of the course content and to test H3, we considered results from student exit exams given shortly before graduation. This exam provided an indication of student retention of course content between the time of enrollment in the class of this study and the time the average student took the exit exam, a lag of about 1.5 years. Since we separated scores by pre- or post-change, we are not able to determine the semester in which a student took the course. This limits our ability to separate the standardization and centralization impact. The course-specific content, contained in 13 questions, broadly represents the course topics covered.

First, we looked at overall student performance. (The authors can provide these results upon request.) We performed tests for equal variances for each semester. Spring 2019 was the only semester that rejected equal variances. Based on these results, the large sample sizes, and similar distributions for both conditions, we performed t-tests for each semester to determine whether the mean scores were significantly different for the two formats.

Using a significance threshold of p < .15, students from post-SC sections achieved higher scores than students from pre-SC sections in five of the nine semesters. For the remaining semesters, the tests could not reject equal means. Therefore, students in the post-SC sections performed at least as well as students from the previous format in all semesters.

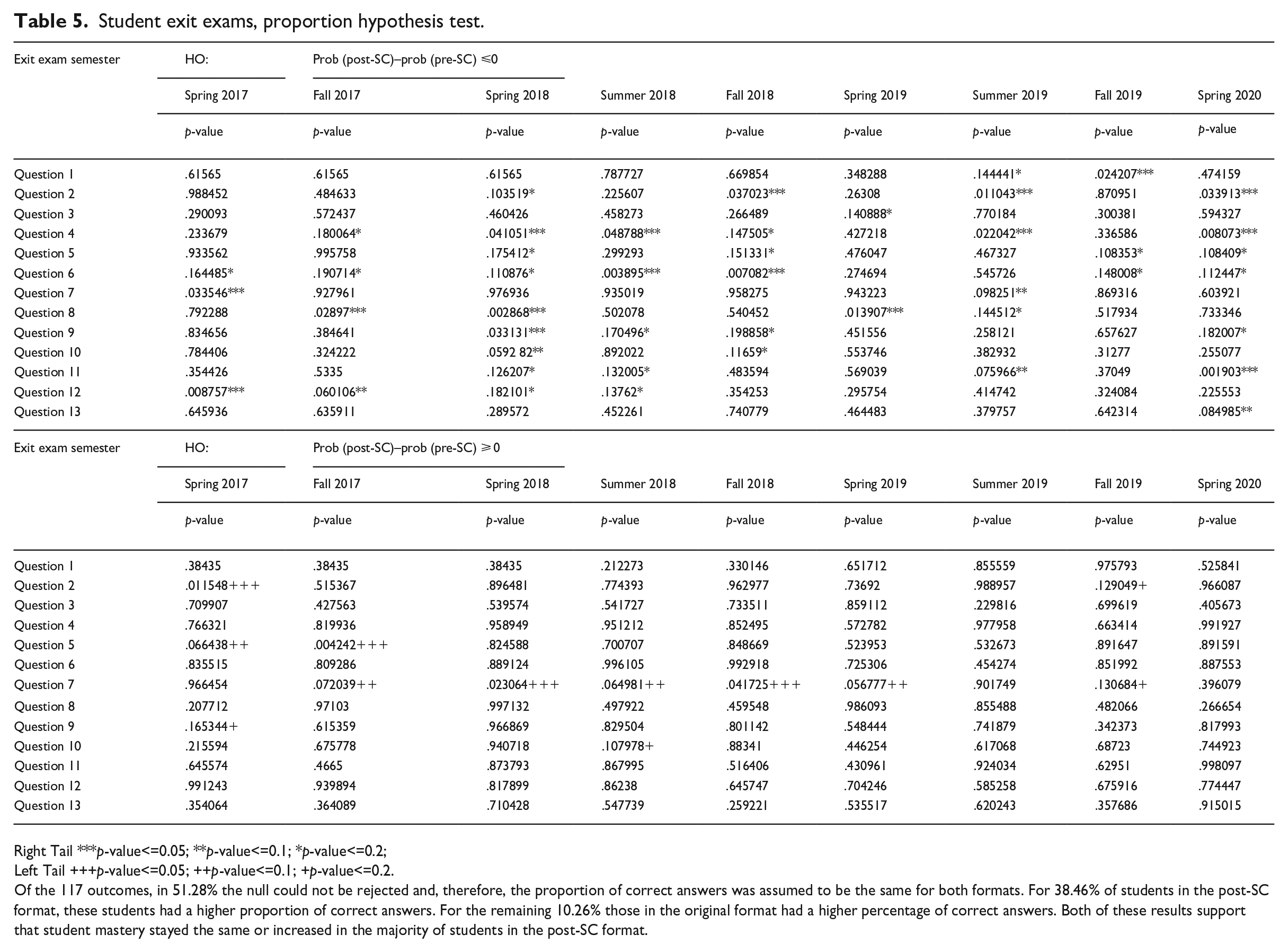

Next, we considered the performance on the thirteen individual exam questions. We performed proportion hypothesis tests, for each semester, comparing the percent correct for students in the post-SC format to those in the pre-SC format. We used this proportion test to determine the frequency of no significant difference between the two categories, the frequency of students from the post-SC course who performed significantly better than the pre-SC format students, and the frequency of students from the pre-SC course who performed significantly better than the post-SC format students (see Table 5).

Student exit exams, proportion hypothesis test.

Right Tail ***p-value<=0.05; **p-value<=0.1; *p-value<=0.2;

Left Tail +++p-value<=0.05; ++p-value<=0.1; +p-value<=0.2.

Of the 117 outcomes (13 questions in each of nine semesters), the null could not be rejected in 51.28%; for these, we assumed the proportion of correct answers was the same for both formats. In 38.46% of outcomes, students in the post-SC format had a higher proportion of correct answers. In the remaining 10.26%, students in the original format had a higher proportion of correct answers. Taken together, these results indicate that student mastery stayed the same or increased in the post-SC format for the majority of outcomes.
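
The per-question comparison and the tally above can be sketched in Python. This is an illustration under stated assumptions rather than the procedure used in this study: the text does not specify the exact form of the proportion test, so the sketch uses a pooled two-proportion z-test; the function names, the example counts, and the `alpha` argument are hypothetical; and the outcome counts (60, 45, 12) are inferred from the reported percentages.

```python
from math import sqrt
from statistics import NormalDist


def two_proportion_z(correct_pre, n_pre, correct_post, n_post):
    """Pooled two-proportion z-test; returns (z, right-tail p, left-tail p).

    Right tail: post-SC proportion is higher. Left tail: pre-SC is higher.
    """
    p_pre = correct_pre / n_pre
    p_post = correct_post / n_post
    pooled = (correct_pre + correct_post) / (n_pre + n_post)
    se = sqrt(pooled * (1 - pooled) * (1 / n_pre + 1 / n_post))
    z = (p_post - p_pre) / se
    right = 1 - NormalDist().cdf(z)
    left = NormalDist().cdf(z)
    return z, right, left


def classify(right, left, alpha):
    """Label one question-semester outcome at a chosen threshold."""
    if right <= alpha:
        return "post-SC higher"
    if left <= alpha:
        return "pre-SC higher"
    return "no difference"


# Hypothetical counts for one question in one semester.
z, right, left = two_proportion_z(correct_pre=50, n_pre=100,
                                  correct_post=65, n_post=100)
print(classify(right, left, alpha=0.05))

# Tally check: 13 questions x 9 semesters, with counts inferred
# from the reported percentages (an inference, not reported figures).
QUESTIONS, SEMESTERS = 13, 9
OUTCOMES = QUESTIONS * SEMESTERS
implied_counts = {"no difference": 60, "post-SC higher": 45, "pre-SC higher": 12}
shares = {label: round(100 * count / OUTCOMES, 2)
          for label, count in implied_counts.items()}
print(OUTCOMES, shares)
```

The tally makes explicit that the percentages describe question-by-semester outcomes rather than individual students.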

Impact of centralization and standardization on student feedback

By creating standard operating procedures across all sections from the lead professor’s videos, training of GTAs, and weekly meetings to maintain the procedures in each section, standardization of this course structure increased dramatically. Also, there was a high level of centralized control with centralized decision-making on course components, exam questions, grading, etc. We made these changes to increase student learning while maintaining or improving course evaluations.

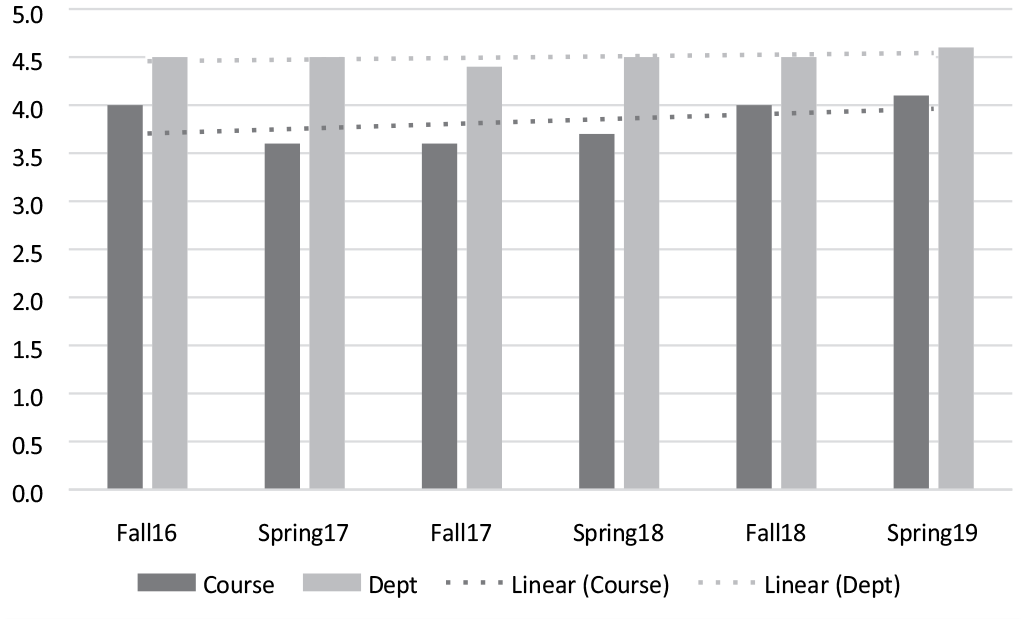

We used student evaluations of instruction to test H4. In Fall 2016, the average rating for all questions and all GTAs was approximately 11% below the same measure for all courses in the same department. Considering this was a major course delivery change and the first time teaching this content for all GTAs, we expected this drop in student evaluations of instruction, given the format’s novelty relative to the traditional course approach.

However, the drop continued in Spring 2017, putting the course approximately 20% below other courses in the department and indicating a longer resistance period for this major change. We addressed this issue by increasing the amount of centralized control and standardization. We made this decision to address the variation in skill levels among the GTAs and thereby create a more consistent environment across sections. Signs of recovery appeared in Fall 2017 and Spring 2018, followed by significant improvement in Fall 2018 and Spring 2019. Figure 5 provides an overview of the aggregated mean scores for all questions in all sections of the new format course compared to the combined scores for all questions in all department courses.

Mean score of questions from student end-of-the-semester review.

Discussion

This research aimed to test the constructs of standardization and centralization from organizational theory to guide changes to a course converted from a face-to-face format to a blended/flipped format following Wagner and Van Dyne’s (1999) initial model. The large sample size across the two formats provided a statistically robust view of the impact of the format change compared to considering only a section or two.

The major finding was that there did not appear to be a negative effect of this course structure conversion. The regular exam score means were not statistically different across formats. We propose that this lack of difference occurred because the videos used in the blended/flipped format were very similar to the same professor’s approach in the traditional format class. We attributed the final exam difference in means and variance between the two formats to the review sessions for the final exam. In the previous lecture format, the lead professor had taught the course multiple times and focused the review on questions from previous exams with which students struggled. The graduate student teachers lacked this experience to draw upon and provided a review based on their own proclivities.

The existence of websites like Ratemyprofessor.com provided evidence that students shop for professors (class sections). Although we cannot generalize why students shop sections, professor shopping may indicate that students recognize a lack of consistency across course sections. Standardizing a course, with sections taught similarly by a team of similarly trained graduate student teachers and with a common online component (standardized and centralized), provided a test of the structural components’ impact on student results. We believe the decrease in variability with the blended/flipped format helped lead to the increased scores for student mastery of course content.

Considering the effectiveness of the blended/flipped format compared to the traditional format from a lagged exit exam revealed some interesting results. Students retained the knowledge of the course in a statistically similar amount as in the traditional format in the initial periods of this implementation. We interpreted this finding as the blended/flipped format being as effective as the traditional format for knowledge retention. However, a significant difference between exit exam scores occurred in the third semester of implementation of the blended/flipped format. These results revealed a lag effect for converting to a blended/flipped format from a traditional format and pointed to a positive learning effect for our institution. The blended format with active learning sessions contributed to higher levels of knowledge retention from a lagged instrument and led to our call for further studies that address the lagged effect of blended learning on long-term learning effects. We attributed this mean score positive difference to our improvements in the blended/flipped format through standardizing components through centralized decision-making.

In addition to our findings, we learned many lessons about converting a large-scale course and share these insights for others to consider when attempting such a conversion. We found that some students resisted this format change; they preferred the standard lecture format. We therefore realized the need to respond quickly to any student email complaints. The timeliness of our response was crucial.

Another lesson learned pertained to the scope of this offering. When the lead professor taught a traditional section and made an error on an exam problem, she/he easily addressed it in class. However, in a class with high levels of standardization of coordination across multiple sections, we noticed that a little problem became magnified. Any errors, including a bad quiz question, quickly rippled through the sections causing agitation in students and much effort to resolve.

A new faculty role also resulted from this course design structure: managing a team. We found that problems and issues inevitably ended up at the lead professor’s doorstep. With that, there was a high need for centralized control and standardized coordination to maintain fairness across sections for students when common issues arose. Therefore, the lead professor became the deciding voice on all student matters for all sections. Centralized control over these student issues provided the clarity and consistency necessary to minimize their escalation.

Although we provided varied insights from our research, it is not without its limitations. In our attempt to replicate prior studies on the efficacy of blended/flipped formats, we fall prey to the same criticisms for using subjective measures via an opinion survey. We sought to minimize these criticisms by incorporating objective student outcome measures. However, using grades as outcome measures admits other explanations that we did not control for (see Hussey & Smith, 2002, for a discussion about issues with learning outcomes). For instance, prior GPA in major coursework could help control for one of these additional explanatory factors. Another limitation of our research is the reliance on multiple-choice exam questions from our institution’s exit exam. Although this was a convenient approach to gauge the long-term learning effects of our blended approach with active learning sessions, more problem-solving, project-based assignments could perhaps provide greater insights into the depth of retained knowledge.

Conclusion

When the decision to change the format of our course to a blended/flipped course occurred, the initial reaction from many students and faculty was not overwhelmingly positive. Many assumed that the decision was purely a financial one and would harm student learning. Regardless of the different motives for changes of this type, our results showed that this conversion to a blended/flipped format did not lead to a negative impact on student learning. In fact, some of our findings suggest a positive effect on student learning. However, the roles of the faculty and students needed to change for the successful implementation of this format. The change was not easy for everyone involved (students) or informed (other faculty) to accept. Some students and fellow faculty members seemed to want to “prove” that this different format was inherently good or bad.

Our results provided us with data to answer our internal constituents. However, we had an exit exam with objective student performance data on specific components of our course from years prior to the blended/flipped conversion to address the long-term learning effect. Not all university settings will have such historical data to perform the longitudinal data analysis of our study. Therefore, there is a need for more research on objective student performance measures. Are other blended/flipped courses experiencing long-term learning or retention of material beyond the enrollment semester at the same level? Are there idiosyncratic issues related to learning management systems or student culture which enhance or detract from long-term learning benefits? Embarking on such a study requires a longitudinal research design with a control group (traditional format in our case) to strengthen the generalizability of such studies. We, therefore, call for more longitudinal studies to test the efficacy of the blended/flipped format.

We recommend that the choice of this format, or any other specific format, for a class should focus on the specific class and the tradeoffs among the student learning objectives sought. We present this as a call for more research on blended/flipped class conversions that analyzes the standardization and centralization dimensions of class structures as first posited by Wagner and Van Dyne (1999). Our course design encompassed many decisions about course structural design components, but our components do not constitute an exhaustive list. What other components of standardization and centralization of class structures can impact student learning? Research into other standardized or centralized components will provide a better understanding of the components that positively or negatively impact a learning environment.

In essence, our experience led us to identify a gap in the research literature on the large classroom structures posited by Wagner and Van Dyne (1999) when considering a blended/flipped course. Our efforts helped illuminate the need to consider structural elements in pedagogical designs just as in organizational designs. However, previous research presented organizational structures as relatively static and lasting components (Gilbert, 2005), with recent research calling this assumption into question (Feldman & Pentland, 2003). In our experience, we approached these dimensions as somewhat static but quickly realized that the blended/flipped approach enabled through technology led to a more dynamic structure than anticipated. We offer our experience of the dynamism in both standardization and centralization during our initial iterations as a guide for others considering such a large-scale conversion. We expect the level of dynamism to decrease with additional iterations. Nonetheless, we offered an extension of the current structure of large classrooms not only to identify the need for dynamism in course structures and within the taxonomy but also to demonstrate to others one of the main benefits of leveraging technology for a blended/flipped delivery approach: real-time adjustments.

The participants of this study did not give written consent for their data to be shared publicly, so due to the sensitive nature of the research, supporting data is not available.

Footnotes

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.