Abstract

Online instruction has helped colleges and universities to adjust to budget constraints, limited resources, and student preferences. One way for instructors to adapt to these new expectations is to gain efficiency in larger classes by using team-based assignments and peer grading. Although online peer grading has been used for some time, concerns with this approach include interpersonal pressures, competency, and fairness. These challenges may be overcome with cross-course peer grading. The purpose of the study was to assess the perceived effectiveness and perceived justice of having senior student teams in a capstone course anonymously grade written assignments submitted by novice student teams in an introductory course in the same discipline. The study took place using two sections of an online introductory course (n = 159) and two sections of an online capstone course (n = 75) at the same university using a case analysis assignment. No significant differences were found in instructor and peer-assigned grades. The results of this study show that senior students benefited by increasing their assessment confidence. Students who had their submissions graded experienced distributive and procedural justice. Therefore, instructors can more confidently utilize cross-course peer grading knowing there are educational benefits for both those doing the grading and those whose work is graded.

Recent budget constraints have required more classes to be taught with higher enrollments, demanding more efficiency, and making certain online elements a more integrative and permanent part of the education landscape. Efficiency can be gained in larger classes by having students complete written assignments in teams. Peer assessment is another efficiency measure, saving time for the instructor (Adachi et al., 2018). Peer grading in groups adds yet another layer of efficiency by reducing the number of people needed to conduct assessments. In-class peer grading can benefit students by allowing them to discuss feedback in person; however, utilizing technology for peer assessment also enhances student outcomes (Zheng et al., 2020).

However, both students and faculty have expressed reservations about competency and fairness in peer grading (Carvalho, 2013). For example, some students fear there will be retaliation for giving a low score (Vanderhoven et al., 2015). These concerns can be overcome by utilizing experienced students as graders with an anonymous peer grading process (Li et al., 2020). Student graders benefit from participating in peer grading by transferring the assessment skills to a professional setting after they graduate (Klucevsek, 2016). Moreover, employers expect college graduates to possess skills in critiquing the work of others to improve team performance (Trede & Jackson, 2021).

Prior research has established the efficacy of peer group grading among students in the same course (Vander Schee & Birrittella, 2021). However, having students in an advanced course assess the work of novice students in a different course merits further investigation. Cross-course peer grading (CCPG) occurs when student teams in a senior capstone course anonymously grade the written submissions of novice student teams in an introductory course. This study, therefore, addresses the following research question: What is the perceived effectiveness and perceived justice of CCPG?

The remainder of this manuscript is organized as follows. First, we provide a review of the relevant literature and hypotheses developed from peer grading, content expertise, anonymity, and perceived justice. Second, we describe the cross-course peer grading process used in this study. Third, we discuss the methods including sample, data collection, and measures. Next, we present the data analysis and results. Lastly, we discuss the findings and limitations and directions for future research.

Literature review and hypotheses development

Peer grading

Peer grading as a learning and social interaction has a foundation in social development theory (Vygotsky, 1978). Prior research has used the terms peer review, peer feedback, peer grading, and peer assessment interchangeably (Wilson et al., 2015). Panadero and Alqassab (2019) define peer assessment as “an arrangement in which individuals consider the amount, level, value, worth, quality, or success of the products or outcomes of learning of peers of similar status” (p. 1254). Peer grading falls under the umbrella of peer assessment with a focus on considering the performance of others, based on an established rubric (Nicol et al., 2014), who may or may not have a similar level of academic experience (Adachi et al., 2018).

Peer grading helps the instructor by creating efficiencies and saving time (Haddadi et al., 2018), promotes student ownership in the course (Ritchie, 2016), and helps better prepare students for work product critique in a post-graduation business environment (Trede & Jackson, 2021). One of the benefits of peer grading is having students experience critiquing the work of other students before having to make an evaluative presentation in a professional setting where the stakes are much higher (Jayathilake & Huxham, 2021; Onyia, 2014). Students will be placed in virtual teams early in their careers. Thus, opportunities to engage in virtual experiences, including assessment, will enhance their preparedness for the workplace (Loucks & Ozogul, 2020). The results of the meta-analysis conducted by Zheng et al. (2020) showed that students who participated in technology-based peer grading outperformed those who did not experience peer assessment on their graded activities. Furthermore, the difference in performance was greater for written assignments than for presentations.

Peer grading in groups increases the visibility of each student’s grading actions, thus encouraging students to be more accountable for their contribution and further develop interpersonal communication skills (Mayfield & Tombaugh, 2019). Peer group grading is more efficient because individual assessments are more cumbersome for instructors to orchestrate and require students to grade more assignments (Carless, 2020). However, a concern is that only one team member does most of the work while the credit is equally shared with non-contributing team members. Lack of effort may be misconstrued as social loafing when genuine difficulty in completing the grading task may be the source of apparent apathy (Freeman & Greenacre, 2011). These situations can be mitigated by keeping the grader team size small, regardless of the size of the teams submitting assignments, and requiring self-reported involvement (Broadbent, 2018).

Prior research on CCPG is limited. Kwan and Leung (1996) found disagreement in grades assigned by tutors and peer groups. Third-year students evaluated the research proposals of second-year students in the study by Sloman and Thompson (2010). Student feedback demonstrated that both groups were comfortable with the in-person cross-year assessment and found it beneficial. Ernst et al. (2015) used cross-institutional individual peer review citing the benefits of increased objectivity and critical evaluation skills by the graders. However, online CCPG using teams of senior students in a capstone course and novice students in an introductory course has yet to be examined.

Content expertise

Reciprocal peer reviews (i.e., students grading each other’s work) offer the benefit of learning from giving feedback to others (Cho & Cho, 2011). However, this advantage is most appropriate for developmental assignments. When it comes to grades that count in the course, students are leery of expertise possessed by their in-course classmates (Klein, 2018; Mulder et al., 2014). Variation in academic experience and achievement can be compensated by utilizing mature students, or fourth-year students who major in the content area, as graders to provide high-quality feedback (Gielen & De Wever, 2015). CCPG incorporates both elements in that seniors in a capstone course have higher proficiency and academic experience in the discipline than their novice student counterparts.

Students develop assessment judgments through practice and instruction in peer grading (Panadero, 2016). Furthermore, skills learned from peer grading can be readily transferred to a professional setting (Klucevsek, 2016). Team assessment can further develop critical review with the perspectives contributed by other knowledgeable colleagues. Part of improving one’s assessment skills is developing confidence and believing that one is capable and competent to do so (Vanderhoven et al., 2015). Therefore, it is posited that:

H1: Grades assigned by senior student teams will not differ from instructor-assigned grades.

H2: Senior students will experience assessment confidence from the CCPG.

Anonymity

Social identity theory (Tajfel, 1978) proposes that group members develop self-conception in group membership (Hogg, 2020). The idea is that group members sense an affiliation with each other that may create a potentially competitive situation. However, anonymous peer group grading encourages candid scoring for the graders by reducing negative social effects such as fear of disapproval, compromising friendships (Panadero et al., 2013), and fear of retaliation for giving a low grade (Li et al., 2020). Moreover, students report being more objective and critical in an anonymous grading situation while instructors find anonymous peer grading has higher validity (De Grez et al., 2012). The assurance of anonymity can be heightened when the peer graders are in a different section of a course, or in a different course altogether. Thus, anonymity adds to the overall perception of impartial assessment as a student satisfier (Broadbent, 2018).

A criticism of anonymous peer grading is that it does not reflect actual professional practice where one has to offer self-identifying critique (Panadero, 2016). Although anonymity in peer grading limits students by not having interactive feedback (Rotsaert et al., 2017), it does foster a safe and more objective setting. A study by Wagar and Carroll (2012) found students prefer anonymous evaluations and find them the most fair. Furthermore, a study by Wetsch (2009) found students were motivated to do their best when they knew their work would be evaluated by peers, even when the assessment was conducted anonymously. Thus, having anonymity in peer group grading may encourage greater effort and reduce concerns regarding peer group grading that may hinder student performance. The combination of such can foster perceptions of effectiveness in the process as a component of the course. Therefore, it is posited that:

H3: Novice students will perceive the CCPG as an effective course component.

H4: Senior students will perceive the CCPG as an effective course component.

Perceived justice

According to social exchange theory (Homans, 1961), there are three dimensions of justice including distributive (Adams, 1965), procedural (Thibaut & Walker, 1975), and interactional (Bies & Moag, 1986). Distributive justice refers to the fairness of outcome distributions or whether the expectation of a gain is proportional to the investment (Orsingher et al., 2010). Procedural justice has been described as the fairness of the process used to determine outcome distributions (Colquitt et al., 2001). Interactional justice reflects the degree to which people are treated with dignity and respect (Greenberg, 1993).

Perceived justice is compromised when students feel taken advantage of or do not sense they have anything to gain. Novice students may feel they put in more work in their written assignments than the instructor when grading is delegated to other students. However, the benefits of peer grading include learning from the peer grading process and increasing future academic performance on similar assignments (Li et al., 2020). Prior research shows that students are also concerned about being fairly assessed by their peers (Sridharan et al., 2019). However, a study by Luo et al. (2014) found peer grading by student groups provided increased assurance of perceived procedural justice because more than one student was involved in the evaluation. Interactional justice is less of a consideration when peer graders primarily utilize an established rubric and provide limited written feedback, as is the case in this study. Therefore, it is posited that:

H5: Novice students will experience distributive justice using CCPG.

H6: Novice students will experience procedural justice using CCPG.

Cross-course peer grading

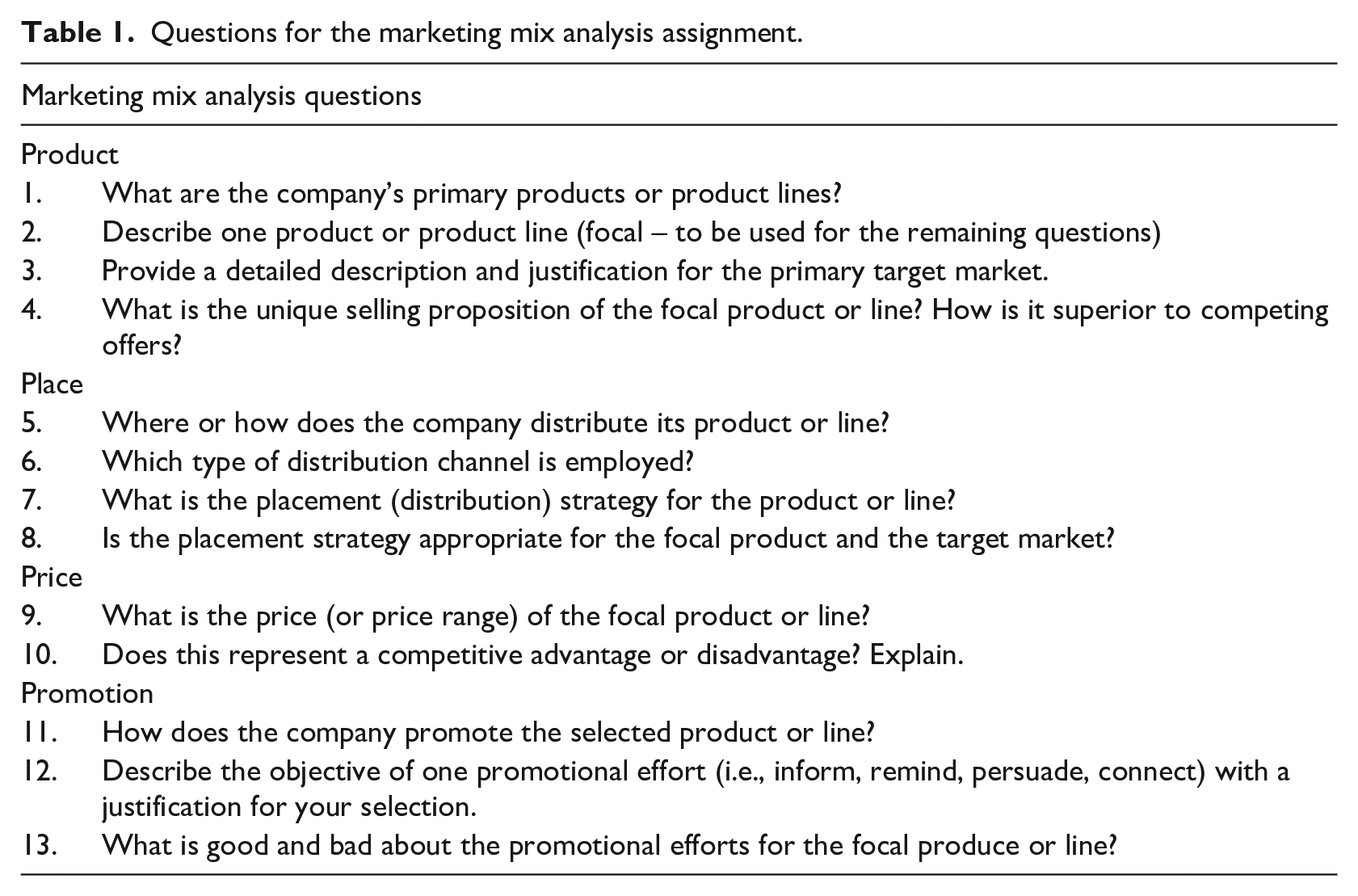

Novice students were assigned a case study that included a marketing mix analysis to be completed in teams of six to eight students. The four-page assignment required students to describe and analyze four key components of an organization’s marketing mix (i.e., product, place, price, promotion) by answering the questions posed at the end of the assignment (see Table 1). This portion of the case study was targeted for CCPG because the senior students were well versed in this foundational disciplinary content. Senior students received instruction on how to assess a submission, which included discussing examples of prior submissions at each earned letter grade level. Furthermore, using peer grading for short written case analyses works well for improving student performance (Passyn & Billups, 2019). Submissions were made through the course learning management platform (i.e., Canvas). The assignment was part of a larger case analysis worth 12% of the total grade in the course.

Table 1. Questions for the marketing mix analysis assignment.

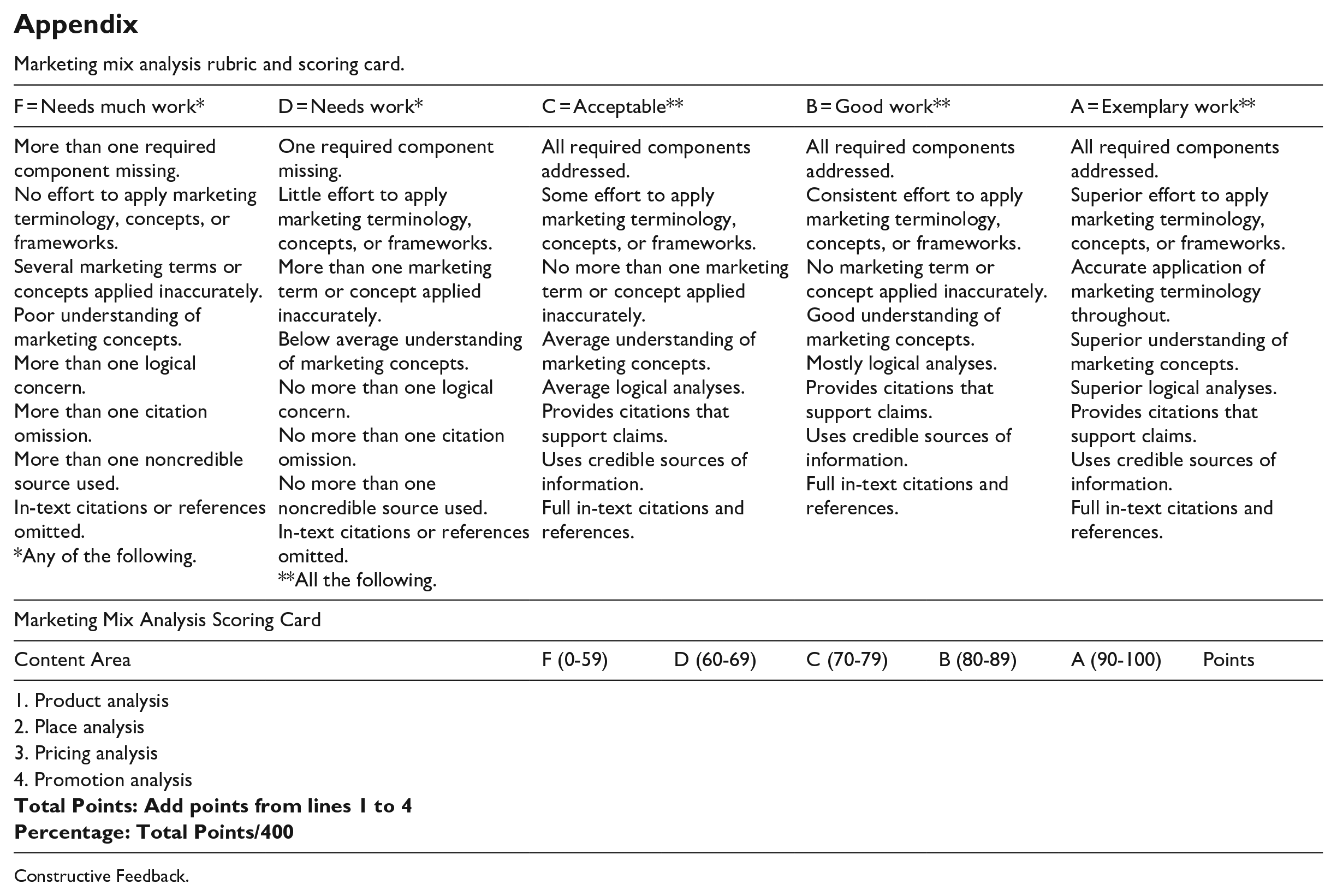

Senior marketing capstone students self-selected into teams of two as recommended by Vander Schee and Birrittella (2021) who note that using smaller teams minimizes social loafing while maintaining accountability. As recommended by Aurand and Wakefield (2006), they were then provided with an overview of the marketing mix analysis assigned to the novice student teams, including written instructions, verbal overview, grading rubric, and scoring card that would be used to assess their assigned submission (see the Appendix). In this study, teams of senior students were given a structured rubric to follow because student graders are more likely to give a balanced review considering both positive and negative aspects with this approach (Gielen & De Wever, 2015). Senior teams were given 1 week to complete the assessment and provide written feedback for a de-identified novice student team submission.

Once the novice student teams submitted their team assignments in the learning management system (LMS), the introductory course instructor de-identified the digital files and provided them to the capstone course instructors. The capstone instructors then distributed the digital files to the senior teams for grading. Senior students were afforded approximately 1 week to complete the CCPG. The CCPG activity took place in the second-to-last week of the term. Senior students were also asked to provide constructive written feedback on the submission that would be useful to the novice students. Each senior team submitted one peer grading rubric in Word format. These files were de-identified and uploaded via the LMS as part of the feedback provided to the novice student teams.
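The de-identification and distribution steps described above can be illustrated with a minimal Python sketch. It is not the procedure the instructors actually used: the team names, the `S01`-style codes, the fixed seed, and the rule of cycling through submissions when there are more grader teams than submissions are all assumptions made for illustration.

```python
import random

def deidentify(team_names, seed=0):
    """Map each submitting team to an anonymous code such as 'S01'.

    The seed and the code format are illustrative assumptions.
    """
    rng = random.Random(seed)
    shuffled = list(team_names)
    rng.shuffle(shuffled)  # break any link between code order and roster order
    return {name: f"S{i:02d}" for i, name in enumerate(shuffled, start=1)}

def distribute(codes, grader_teams):
    """Give each grader team one anonymous submission, cycling if needed."""
    codes = sorted(codes)
    return {team: codes[i % len(codes)] for i, team in enumerate(grader_teams)}

# Hypothetical rosters, not the study's teams
novice_teams = ["Team A", "Team B", "Team C"]
senior_teams = ["Pair 1", "Pair 2", "Pair 3", "Pair 4"]

key = deidentify(novice_teams)  # the key stays with the introductory instructor
assignments = distribute(key.values(), senior_teams)
```

Only `assignments`, which contains anonymous codes, would be shared with the graders; the `key` dictionary is what allows feedback to be routed back to the right novice team afterward.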

Method

Sample

CCPG was administered in two sections of an introductory course at a public regional university. One section of the introductory class was offered in an asynchronous online format, which did not feature scheduled class meetings. This format relied heavily on recorded lectures, discussion boards, email, and chat rooms for interactions. The other section was offered in a synchronous online format and featured scheduled class meetings that were hosted on Zoom (videoconferencing). Both sections were taught by the same instructor. Student graders were enrolled in one of two sections of an online capstone course in the same discipline. The capstone course sections were taught by different instructors; however, both sections employed the synchronous online format and were hosted on Zoom.

Measures

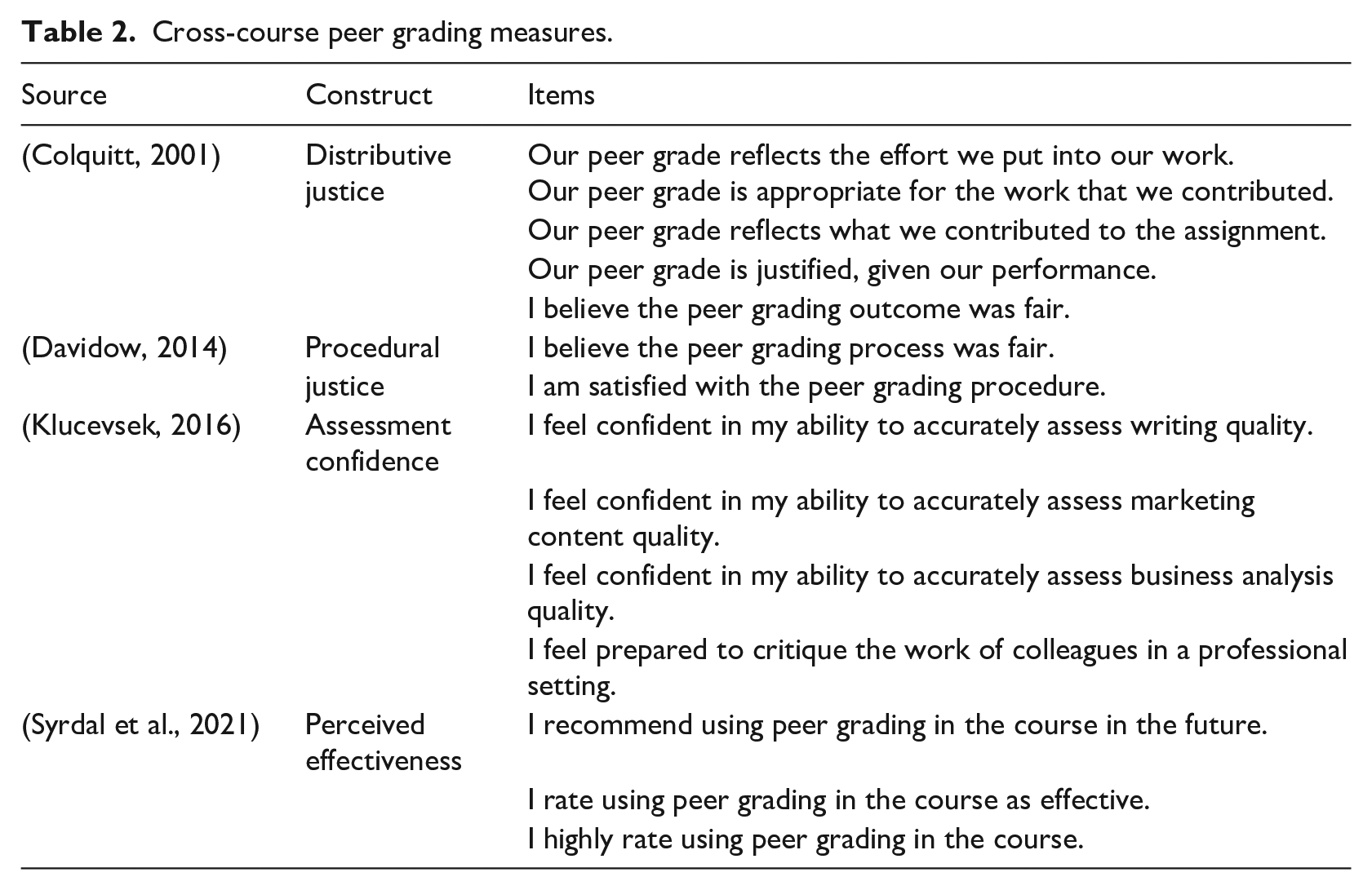

Novice students were given a survey near the end of the semester. The three-item scale from Syrdal et al. (2021) was utilized to capture perceived effectiveness of CCPG as a course component. A five-item scale adapted from Colquitt (2001) measured distributive justice. Two items from the scale developed by Davidow (2014) were applicable to CCPG and thus were utilized to measure procedural justice. Senior students were surveyed at the beginning and at the end of the semester. The pre-CCPG survey completed by the senior students inquired about assessment confidence using a four-item scale adapted from Klucevsek (2016). The same scale was replicated in the post-CCPG survey along with the three-item scale from Syrdal et al. (2021) to measure perceived effectiveness of the CCPG as a course component. Responses to all measures were collected using a scale from 1 (strongly disagree) to 7 (strongly agree). See Table 2 for scale items. The survey also collected demographic data.

Table 2. Cross-course peer grading measures.

Exploratory factor analysis was utilized to assess the convergent validity of items for the dimensions on the survey administered to novice students. The analysis used varimax rotation and retained factors with eigenvalues greater than 1.0. Three dimensions emerged, explaining 90.5% of the variance. Cronbach’s alpha for every dimension exceeded the .70 benchmark recommended by Nunnally (1978). The values for the distributive justice scale (α = .96), procedural justice scale (α = .92), and perceived effectiveness scale (α = .95) indicate high internal consistency reliability.

Exploratory factor analysis was also utilized to assess the convergent validity of items for the dimensions on the post-CCPG survey administered to senior students. The analysis used varimax rotation and retained factors with eigenvalues greater than 1.0. Two dimensions emerged (i.e., perceived effectiveness and assessment confidence), explaining 76.8% of the variance. Cronbach’s alpha for both dimensions exceeded the .70 benchmark recommended by Nunnally (1978). The values for the perceived effectiveness and assessment confidence scales were .96 and .79, respectively, indicating acceptable to high internal consistency reliability.
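For readers less familiar with the reliability statistic reported above, the following sketch computes Cronbach's alpha from the standard formula, alpha = k/(k - 1) × (1 - sum of item variances / variance of total scores). The responses are hypothetical 7-point ratings invented for illustration; they are not the study's data, and the function name is an assumption.

```python
from statistics import variance

def cronbach_alpha(responses):
    """Cronbach's alpha for a list of respondents, each a list of k item scores."""
    k = len(responses[0])
    items = list(zip(*responses))                        # one tuple of scores per item
    item_var = sum(variance(col) for col in items)       # sum of sample item variances
    total_var = variance([sum(r) for r in responses])    # variance of summed scale scores
    return k / (k - 1) * (1 - item_var / total_var)

# Hypothetical responses to a three-item scale (1 = strongly disagree, 7 = strongly agree)
sample = [
    [6, 6, 7],
    [5, 5, 5],
    [3, 4, 3],
    [7, 6, 7],
    [4, 4, 5],
]
alpha = cronbach_alpha(sample)
```

Values above the .70 benchmark would be read as adequate internal consistency; with only two items, as in the procedural justice scale, alpha tends to be a less stable estimate.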

Finally, the grades that senior teams awarded to the novice student teams on the marketing mix analysis assignment were recorded, along with the grades awarded by the instructor and two teaching assistants. A third instructor who did not teach either of the focal classes collected the data via Qualtrics. Further, at the start of the survey, students were informed that they would be directed to a separate survey to input their name to receive credit for survey completion. This was done to delink the survey results from the reports that were provided to the instructors to award credit. Survey items were randomized within categories and responses to the survey instrument were anonymous, as recommended by Larson (2019).

Results

The surveys were completed by 139 out of 159 novice students (87% response rate) and 62 out of 75 senior students (83% response rate). A paired samples t-test was conducted to assess differences in the marketing mix analysis assignment grades awarded by the instructor and the senior student teams. Results indicate the difference between senior team grades (M = 79.28, SD = 18.856) and instructor grades (M = 77.66, SD = 14.087) was not significant, t(29) = 0.680, p = .502. Thus, H1 was supported.

A paired samples t-test was conducted to assess differences in assessment confidence for senior students. Results indicate that the increase in assessment confidence from pre-CCPG (M = 5.89, SD = 0.630) to post-CCPG (M = 6.21, SD = 0.574) was significant, t(61) = 3.949, p < .001. The effect size (d = .63) exceeded Cohen’s (1988) convention for a medium effect size (d > .50). Thus, H2 was supported.
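The paired comparisons above follow the usual paired samples logic: compute the difference within each pair, then test whether the mean difference departs from zero. The sketch below shows that calculation in plain Python. The grade lists are hypothetical because the study's raw pairs are not reported, and the function name is an assumption.

```python
from math import sqrt
from statistics import mean, stdev

def paired_t(x, y):
    """Paired samples t statistic and degrees of freedom for matched lists x and y."""
    diffs = [a - b for a, b in zip(x, y)]
    n = len(diffs)
    t = mean(diffs) / (stdev(diffs) / sqrt(n))  # mean difference over its standard error
    return t, n - 1

# Hypothetical grades for five submissions, not the study's data
peer_grades = [82, 75, 90, 68, 88]
instructor_grades = [80, 78, 85, 70, 84]
t, df = paired_t(peer_grades, instructor_grades)
```

Because the degrees of freedom equal the number of pairs minus one, the reported t(29) and t(61) imply 30 graded submissions and 62 senior respondents with both pre- and post-CCPG scores.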

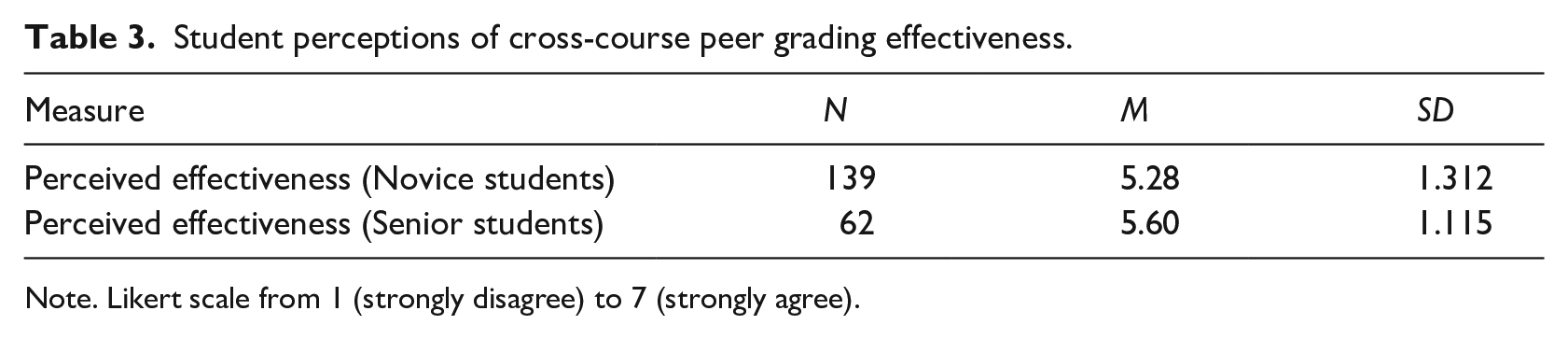

The post-CCPG survey given to both the novice and the senior students asked about perceived effectiveness. A one-sample t-test for the perceived effectiveness scale for novice students was significant (M = 5.28, SD = 1.312, test value = 5, p = .007). The test value of 5 can be used as a base measure for level of agreement in pedagogical research (Vander Schee et al., 2022). Computed from the reported mean and standard deviation as d = (M - 5)/SD, the effect size (d = 0.21) corresponds to a small effect under Cohen’s (1988) conventions (d = .20). Thus, H3 was supported. A one-sample t-test for the perceived effectiveness scale for senior students was significant (M = 5.60, SD = 1.115, test value = 5, p < .001). The effect size computed in the same way (d = 0.54) exceeded Cohen’s (1988) convention for a medium effect size (d > .50), providing further evidence that senior students perceived the CCPG to be effective as a course component. Thus, H4 was supported. See Table 3.

Table 3. Student perceptions of cross-course peer grading effectiveness.

Note. Likert scale from 1 (strongly disagree) to 7 (strongly agree).

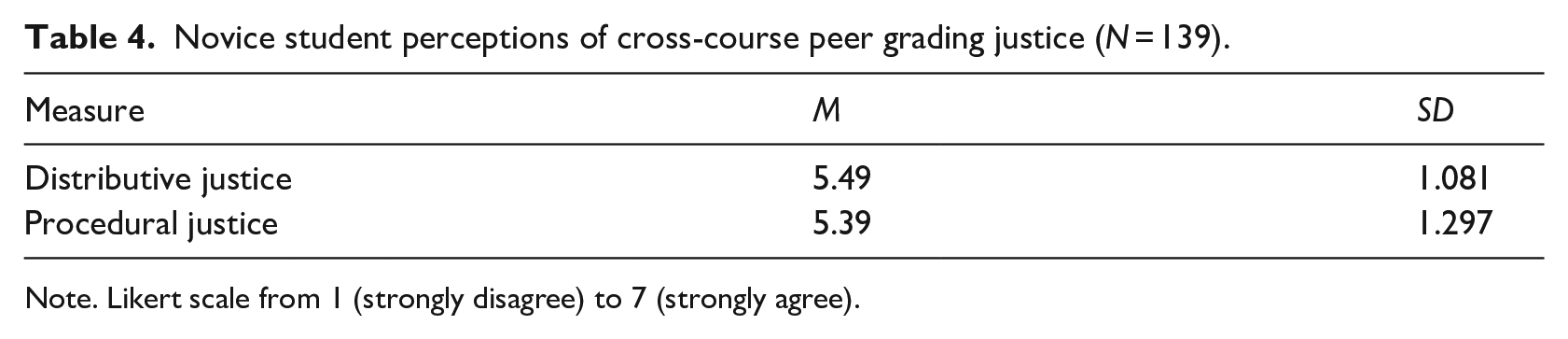

The post-CCPG survey administered to novice students also included measures for distributive justice and procedural justice. Results of one-sample t-tests show that students experienced distributive justice (M = 5.49, SD = 1.081, test value = 5, p < .001) and procedural justice (M = 5.39, SD = 1.297, test value = 5, p < .001) using CCPG. The effect sizes computed from the reported means and standard deviations as d = (M - 5)/SD (d = 0.45 and d = 0.30, respectively) fall between Cohen’s (1988) conventions for small (d = .20) and medium (d = .50) effects. See Table 4. Thus, H5 and H6 were supported.

Table 4. Novice student perceptions of cross-course peer grading justice (N = 139).

Note. Likert scale from 1 (strongly disagree) to 7 (strongly agree).
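The one-sample tests reported above can be reproduced from summary statistics alone, since t = (M - test value) / (SD / √n). The sketch below does this for the novice perceived effectiveness scale and approximates a one-tailed p value by numerically integrating the t density. Two points are assumptions: the text does not state whether the reported p values are one- or two-tailed, and the integration settings (`width`, `steps`) are chosen for illustration and suit the moderately large degrees of freedom seen here.

```python
from math import sqrt, lgamma, exp, log, pi

def one_sample_t(mean, sd, n, test_value):
    """t statistic and degrees of freedom from summary statistics."""
    return (mean - test_value) / (sd / sqrt(n)), n - 1

def t_pdf(x, df):
    """Density of Student's t distribution with df degrees of freedom."""
    log_c = lgamma((df + 1) / 2) - lgamma(df / 2) - 0.5 * log(df * pi)
    return exp(log_c - (df + 1) / 2 * log(1 + x * x / df))

def upper_tail_p(t, df, width=60.0, steps=20000):
    """One-tailed p: area under the t density above t, by Simpson's rule.

    The integral is truncated at t + width, which is an approximation.
    """
    h = width / steps  # steps must be even for Simpson's rule
    total = t_pdf(t, df) + t_pdf(t + width, df)
    for i in range(1, steps):
        total += (4 if i % 2 else 2) * t_pdf(t + i * h, df)
    return total * h / 3

# Reported summary statistics for novice perceived effectiveness
t_nov, df_nov = one_sample_t(mean=5.28, sd=1.312, n=139, test_value=5)
p_one_tailed = upper_tail_p(t_nov, df_nov)
p_two_tailed = 2 * p_one_tailed
```

Comparing `p_one_tailed` and `p_two_tailed` with the reported p = .007 indicates which convention is consistent with the published value.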

Additional t-tests were conducted to check for differences between the online asynchronous and virtual synchronous introductory course sections regarding perceived justice, perceived effectiveness, and grades assigned by the instructor and senior students. No significant differences were found for any of the measures.

Discussion and conclusions

Online learning is likely to increase as it frees institutions from limitations of physical classroom space and therefore creates a path for a resource-efficient increase in course enrollments. Instructors will have to adapt to the larger class sizes afforded by online instruction by being more efficient in course delivery. One way to be more productive is to gain efficiency in assessment of student learning. Although multiple choice testing may automate assessment, utilizing team-based projects can also reduce the time needed for grading. Instructor evaluation time in this study was reduced by approximately 70% using CCPG with written assignments.

The results of this study show no significant difference between grades assigned by the instructor and grades assigned by senior students. This finding builds on the work by Vander Schee and Birrittella (2021), who used online peer grading of group written assignments among student groups in the same course and found agreement between student and instructor evaluations. Although that research was also conducted in a completely online environment, this study used CCPG, where senior student teams graded the written submissions of novice student teams in a different course. Also, online delivery format was not a factor, as no differences were found between the online asynchronous and virtual synchronous introductory course sections.

The results of this study show that senior students increased their confidence regarding assessment skills from the CCPG experience. Written feedback provided by senior teams was constructive and aligned very closely with the rubric. Comments generally focused on thoroughness, appropriate application of concepts, and writing quality. This finding is consistent with prior studies where graders build confidence and advance their assessment skills from peer grading (Cho & Cho, 2011). While some senior students might feel they are “doing the instructor’s job,” the ability to judge the quality of the work of others is an essential skill needed in the workplace. Moreover, in this study senior students rated CCPG as an effective course component and recommended it for future use.

The objectivity of CCPG overcomes the challenge of self-assessment where high-achieving students tend to under-rate themselves (Topping, 1998) and low-achieving students tend to over-rate themselves (De Grez et al., 2012). Peer grading within a course introduces concerns regarding competency and social repercussions (Li et al., 2020). CCPG addresses accountability for student graders because they work with other senior students to complete the peer assessment.

Survey results from novice students indicate they perceive CCPG with senior teams as graders is effective and provides both distributive and procedural justice. This finding is encouraging for students who might otherwise see the process as being unfair. It is likely that novice students felt reassured by the accountability and objectivity in having their submissions evaluated anonymously by teams of senior students, as opposed to individual grading by other novice students in their own course. The combination of content expertise, anonymity, and team-based assessment may enhance perceived fairness and effectiveness of CCPG. Although CCPG requires coordination of student teams and possibly collaboration among instructors, the results of this study demonstrated that the benefits for instructors and students alike are worth the investment.

Limitations and future research

The results of this study demonstrate that CCPG improves instructor efficiency, helps graders increase assessment confidence, and provides perceived justice for students being graded. However, some things should be kept in mind when making application to other settings. The study was conducted at one institution with two instructors working together in two different courses. This requires coordination among the faculty and collaboration among the students. The senior graders were enrolled in a capstone course and therefore could expect to engage in activities such as CCPG as part of their training. Students in other courses may not as readily appreciate the benefits of being graders in CCPG.

Students who do not perceive the peer grading process as high stakes may give cursory attention to the task (Klein, 2018). In retrospect, it was also noted that using “understanding” in the grading rubric could introduce subjectivity, as this construct is difficult to assess without further clarification. The survey was not tested in advance with a target population separate from the study participants to assess validity, and one scale had only two items; both remain limitations of the study. Students in the capstone course were verbally encouraged to constructively participate in CCPG; however, the possibility of social loafing and social desirability bias were additional limitations.

Future research could explore whether the weight of the assignment affects attentiveness and quality of peer grading. Students with less experience do not necessarily have the requisite knowledge and therefore may be less engaged in the grading process (Haddadi et al., 2018). Future research could help ascertain the level of expertise needed for effective CCPG. This study utilized seniors; however, using third-year students might produce similar results. Comparing the results of senior teams and individuals as graders also merits further investigation.

Peer grading in rounds, or scaffolding, allows for revision and resubmission (Könings et al., 2019). Research in this area could explore how team peer feedback mirrors academic research and professional business analysis where teams work collaboratively to improve the final work product. This approach was addressed by Roman et al. (2020) using peer formative feedback, although not using a CCPG format. Seniors are less than 1 year away from full-time employment or graduate school enrollment where they will be expected to engage in peer review or critique team-created materials. Studies that examine the influence of CCPG beyond the collegiate experience could ascertain the long-term benefits. Finally, future research could explore using technology beyond the learning management system, such as mobile apps to provide peer assessments (Blau et al., 2019).

Appendix

Marketing mix analysis rubric and scoring card.

| F = Needs much work* | D = Needs work* | C = Acceptable** | B = Good work** | A = Exemplary work** |
|---|---|---|---|---|
| More than one required component missing. No effort to apply marketing terminology, concepts, or frameworks. Several marketing terms or concepts applied inaccurately. Poor understanding of marketing concepts. More than one logical concern. More than one citation omission. More than one noncredible source used. In-text citations or references omitted. | One required component missing. Little effort to apply marketing terminology, concepts, or frameworks. More than one marketing term or concept applied inaccurately. Below average understanding of marketing concepts. No more than one logical concern. No more than one citation omission. No more than one noncredible source used. In-text citations or references omitted. | All required components addressed. Some effort to apply marketing terminology, concepts, or frameworks. No more than one marketing term or concept applied inaccurately. Average understanding of marketing concepts. Average logical analyses. Provides citations that support claims. Uses credible sources of information. Full in-text citations and references. | All required components addressed. Consistent effort to apply marketing terminology, concepts, or frameworks. No marketing term or concept applied inaccurately. Good understanding of marketing concepts. Mostly logical analyses. Provides citations that support claims. Uses credible sources of information. Full in-text citations and references. | All required components addressed. Superior effort to apply marketing terminology, concepts, or frameworks. Accurate application of marketing terminology throughout. Superior understanding of marketing concepts. Superior logical analyses. Provides citations that support claims. Uses credible sources of information. Full in-text citations and references. |

*Any of the following. **All the following.

Marketing Mix Analysis Scoring Card

| Content Area | F (0-59) | D (60-69) | C (70-79) | B (80-89) | A (90-100) | Points |
|---|---|---|---|---|---|---|
| 1. Product analysis | | | | | | |
| 2. Place analysis | | | | | | |
| 3. Pricing analysis | | | | | | |
| 4. Promotion analysis | | | | | | |

Constructive Feedback.
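As an illustration of how the scoring card's point ranges map to letter grades, the sketch below looks up the band for a score in each content area. The band boundaries come from the scoring card; the example scores, the handling of fractional points, and the use of a simple average for an overall score are assumptions, since the appendix does not state how area scores were combined.

```python
# Lower bound of each band, taken from the scoring card column headers
BANDS = [(90, "A"), (80, "B"), (70, "C"), (60, "D"), (0, "F")]

def letter_band(points):
    """Return the letter band for a score between 0 and 100.

    Fractional scores fall into the band whose lower bound they meet
    (an assumption; the card lists whole-number ranges only).
    """
    if not 0 <= points <= 100:
        raise ValueError("points must be between 0 and 100")
    for floor, letter in BANDS:
        if points >= floor:
            return letter

# Hypothetical scores for one submission, not study data
card = {"Product": 85, "Place": 78, "Pricing": 92, "Promotion": 66}
bands = {area: letter_band(p) for area, p in card.items()}
overall = sum(card.values()) / len(card)  # simple average: an assumption
overall_band = letter_band(overall)
```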

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.