Abstract

Rubrics are often used as tools for criteria-based assessment. Although students indicate that they appreciate written comments that refer to the rubric and are provided in addition to it, little is known about how this type of feedback differs from in-text comments with respect to the focus, level, and function of the feedback. The focus refers to three major questions in evaluating students’ understanding of information: Where am I going? How am I going? and Where to next? That is, feedup, feedback, and feedforward. The level refers to the level at which feedback is directed: the level of task performance, the level of the process of understanding how to do a task, the regulatory or metacognitive process level, and/or the self or personal level. Finally, the function refers to the type of content of the feedback; for example, feedback can be a question, suggestion, or correction. More information on this issue could better inform decisions on how to provide written feedback to students on written coursework and assignments. The study described in this article gathered data from almost 1000 feedback instances. The results revealed that about two-thirds of the feedback instances were provided in-text and about one-third were comments that referred to the rubric and were provided in addition to it. The results show that comments in both modalities are dominated by feedback at the task level, but that comments that refer to the rubric and are provided in addition to it contain somewhat more feedforward and process-related comments. The largest differences were found in the function of feedback. Whereas in-text comments ask for clarifications, provide corrections, and ask questions, written comments that refer to the rubric and are provided in addition to it include mainly affirmations, argumentations, and suggestions. Implications for practitioners are discussed.

Feedback in the learning process

In today’s education, there is an increasing interest in assessment for learning. This interest stems mainly from the acknowledgment that grading alone does not sufficiently promote student learning. Feedback is seen as a critical component of the learning process and has gained considerable attention in both research and practice, resulting in abundant literature relating to feedback in higher education and how best to provide it in order to promote learning (Boud and Molloy, 2013a; Gibbs and Simpson, 2004; Hattie and Timperley, 2007; Lizzio and Wilson, 2008; Orsmond and Merry, 2011; Rust et al., 2003).

Thus far, the research has resulted in many different feedback definitions and classification systems, from which guidelines have been derived. One well-known definition and corresponding classification of feedback is the one provided by Hattie and Timperley (2007), who define feedback as “information provided by an agent (teacher, peer, book, parent, self, experience) regarding aspects of one’s performance or understanding” (p. 81). They distinguish three different foci of feedback: feedup illuminates the goals for a student, feedback provides insight into where the student stands, and feedforward gives information on how to proceed. A positive aspect of this particular classification is the focus on the feedforward function of feedback, which is believed to strengthen its effect (see also Brown and Glover, 2005). Feedforward, namely, informs students about how to improve future work. Hattie and Timperley (2007) also distinguish four different content levels of feedback (i.e. task, process, regulation, and person). Feedback related to the task gives information about the task itself; for an essay, for example, it might be “What you state over here is not clear” or “this is not true.” Feedback on the process level refers to the strategy the student used (or should have used) to fulfill the task. An example of such feedback is “interlink the insights from literature, instead of summing them up” (Arts et al., 2016). Feedback that stimulates reflection on the task is classified as feedback on the level of self-regulation. An example of such feedback is “Is the method you used in your study the right one to answer your research question?” Finally, feedback such as “You are a bright student” is classified as feedback on the level of the person (Hattie and Timperley, 2007) and provides an opinion of the feedback agent on the (characteristics of the) learner.

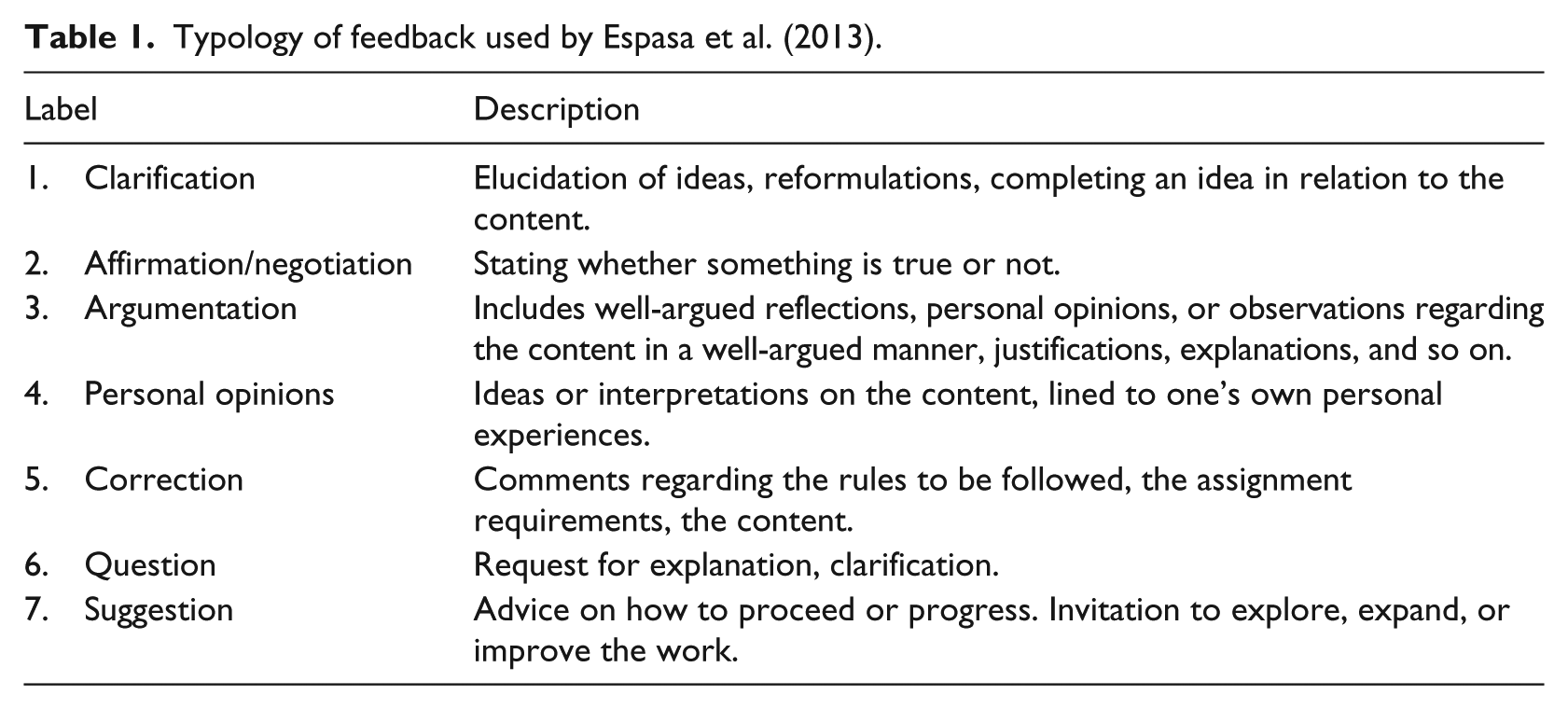

Whereas Hattie and Timperley (2007) focus on the aim of the feedback, Glover and Brown (2006) focus more on the function or content of (written) feedback and distinguish between (1) indications, (2) corrections, and (3) indications or corrections with an explanation. An indication might be, “Something is missing here,” whereas a correction might be a spelling error that is corrected by the feedback agent. In the third category, an argumentation of why something is indicated or corrected is added. A more recent and elaborative classification based on the function of feedback is provided by Espasa et al. (2013). In this typology, seven different categories—based on the semantic function (i.e. the type of content of the feedback)—are distinguished. The typology with a short description is displayed in Table 1.

Typology of feedback used by Espasa et al. (2013).

Although there are some guidelines regarding the focus, level, and function of feedback, such as that feedback should not be too detailed or that it should also provide information on how to proceed, a greatly understudied area is the modality in which feedback is provided. Yet the modality of feedback can strongly influence the content and aim of the feedback. The relatively few studies investigating this issue have mainly focused on written versus oral feedback (e.g. Chalmers et al., 2018), written versus audio feedback (e.g. King et al., 2008; Morris and Chikwa, 2016), or providing exemplars versus individual feedback (e.g. Handley and Williams, 2011). In a study by Morris and Chikwa (2016), for example, students received either audio feedback or written feedback. Unexpectedly, students seemed to highly favor written feedback over audio feedback because it was much more detailed. This is confirmed by a study by Walker (2009), who found that students find written feedback very useful. Written feedback, however, can also be provided in different ways, and not all modalities might be equally effective. In a study by Huxham (2007), it was found, for example, that students favor personal over model feedback, but that model feedback led to higher grades. It was suggested that a hybrid form of feedback might be best. In a similar vein, Nordrum et al. (2013) investigated student perceptions and content of in-text versus rubric-referenced feedback. They found interesting results regarding the content of feedback for the two different modalities, leading to the conclusion that rubric-referenced feedback and in-text feedback might trigger different feedback behaviors in lecturers. However, the question is what kind of feedback behavior that is and whether it suits the intended (theoretical) aim of the modality.

In-text feedback

The most widely used feedback modality is in-text feedback. This can take the form of handwritten comments inserted in hard/printed versions of a text, but today lecturers most often use text-processing programs such as Microsoft Word to provide in-text feedback on online/digital text. This program offers two different options for in-text feedback, namely, comments (annotations) and track changes (Elola and Oskoz, 2016). The track changes function enables the lecturer to directly change certain parts of the student’s text, and the student can easily see the changes because they are given a different color. Because the original text is also presented, the student can reflect upon the changes and decide whether or not to accept them. Besides track changes, text-processing programs also provide the possibility to add comments next to the text. These comments can be used to place a correction next to the text or to “correspond” with the student by adding questions, suggestions, or information instead of directly making changes for the student (AbuSeileek and Abu-Al-Shar, 2014), which offers the possibility of providing more elaboration.

Rubric-referenced assessment

A different way to look at assessment and feedback is using predefined criteria to base the assessment or feedback on (Sadler, 2005). This way of assessment has become increasingly important in higher education as a result of a more student-centered approach to learning in which formative assessment is seen as an important part of instructional design and higher quality standards of assessment. An instrument that is often used in criteria-based assessments (see Panadero and Jonsson, 2013 for a recent overview), both for formative and summative purposes, is a rubric. A rubric is a matrix in which assessment criteria are defined and described at different levels of performance (Reddy and Andrade, 2010). Compared with other means of feedback and assessment, the major advantage of rubrics is that clear relations between learning outcomes, learning activities, and assessment can be seen (Hyland et al., 2006). In addition, by using rubrics, criteria for good performance are more transparent and it is suggested that they can help raters to focus more on content and development than on mechanics, which is a crucial prerequisite for both assessment for learning and deep approaches to learning (see, for example, Nicol and Macfarlane-Dick, 2006; Reddy and Andrade, 2010; Rezaei and Lovorn, 2010).

However, there is also criticism of the use of rubrics as a method for criteria-based assessment. Shute (2009), for example, stresses that criteria-based assessments in general are still subjective in nature, as lecturers and students interpret the criteria differently (see also Li and Lindsey, 2015). There are also some who warn that rubrics reduce feedback on the particular assignment and that the feedback becomes too generic and vague for comprehension (e.g. Wilson, 2007 cited in Li and Lindsey, 2015). Ali et al. (2017) and Li and Lindsey (2015) show that such vagueness is a reflection of lecturers’ perceptions of criteria and the subsequent learning impasse where neither lecturers nor students fully understand the link between assignments and assessment criteria. Many lecturers, therefore, add some written comments for the student (i.e. rubric-referenced feedback) in which they explain and clarify their (formative or summative) judgment or criteria.

Differences between feedback channels

Although in-text feedback and rubric-referenced feedback are often used in educational practice, there is very little research on which kind of feedback these modalities yield and how they are distinct. However, the way a lecturer uses these modalities and communicates the feedback to a student is very important, considering feedback effectiveness and efficiency (Dawson et al., 2018; Li and Lindsey, 2015). One of the few studies on this issue is the study by Nordrum et al. (2013), who conducted a study of feedback procedures in order to explore if and how in-text commentary and rubric-referenced assessment could enhance learning. More specifically, they wanted to investigate how students comprehend, incorporate, and act upon the two feedback modalities as well as whether both modalities were necessary and beneficial in the course. They also wanted to evaluate the current feedback practice and create a better synergy effect between both feedback channels. For that purpose, Nordrum et al. (2013) provided students with in-text comments (e.g. editing symbols and margin notes of both lower and higher order concerns, and a brief paragraph of general comments) and rubric-referenced feedback indicating the level for each criterion as well as subgrades for the major categories of language, structure, and content, and finally an overall grade.

During the course, they gathered students’ perceptions of the two feedback channels by reflective notes, questionnaires, and interviews. They found that students attribute different functions to in-text and rubric-referenced feedback. More specifically, the in-text commentary was seen by students as useful for lower order concerns such as text corrections, pointing out errors or commenting on the quality of the arguments, whereas rubric-referenced feedback was seen as useful for higher order concerns such as pointing out common errors or providing a new perspective on the text. Moreover, whereas 78% of the student (

In a study conducted by Li and Lindsey (2015), it was examined how lecturers and students perceive the intended purpose of rubrics. The results show rather different perspectives between lecturers and students. Students were more likely to see rubrics as an instructional tool as compared to lecturers. However, students also indicated that “writing with a rubric seems to be more about meeting the standards rather than writing and growing” (p. 76). Moreover, in the same study, it was also found that the criteria of the rubrics were very differently interpreted by both students and lecturers. Adding feedback to rubrics might thus be important to provide more information on how the criteria are interpreted by the lecturer, and stimulate assessment for learning, which is an important motivation for many lecturers to actually use rubrics.

Despite the results of studies on in-text comments and on feedback that refers to the rubric, it remains unclear how lecturers actually use the different feedback channels, that is, whether the feedback provided in the two feedback channels actually differs. It is, however, clear that students assign different functions to the feedback that is provided in rubrics and in-text. It also becomes clear that, from a more theoretical perspective, the feedback might be very different in the two feedback modalities. Written comments that refer to the rubric are expected to foster assessment for learning by providing feedback that is less focused on mechanics and more focused on feedforward on the level of self-regulation and the process of writing. In-text comments are more suited to providing detailed feedback on the task itself. Ideally, the two feedback modalities would thus be used for different purposes, resulting in different kinds of feedback. However, we do not know if this is the case in practice. There is therefore a need to further explore the kind of feedback lecturers provide in each of the two feedback channels, that is, to investigate how lecturers use in-text commentary and comments that refer to the rubric, and whether both channels are necessary and/or complementary.

Methods and materials

Context and participants

The study was conducted in winter 2017/2018 in a Master’s program in the educational department of a university of applied sciences in the Netherlands. The program offered people working in the field as a teacher, policy officer, or instructional developer the opportunity to learn more about testing and assessment. The Master’s program was offered as a part-time study (24 hours of education per week), combining face-to-face meetings and online education. As the program is a part-time program, it attracts many students who already have quite some working experience. The mean age was therefore also quite high (

The study was based on the feedback provided by three lecturers. They are experts on (formative or summative) assessment and had several years of experience in teaching.

Assignments

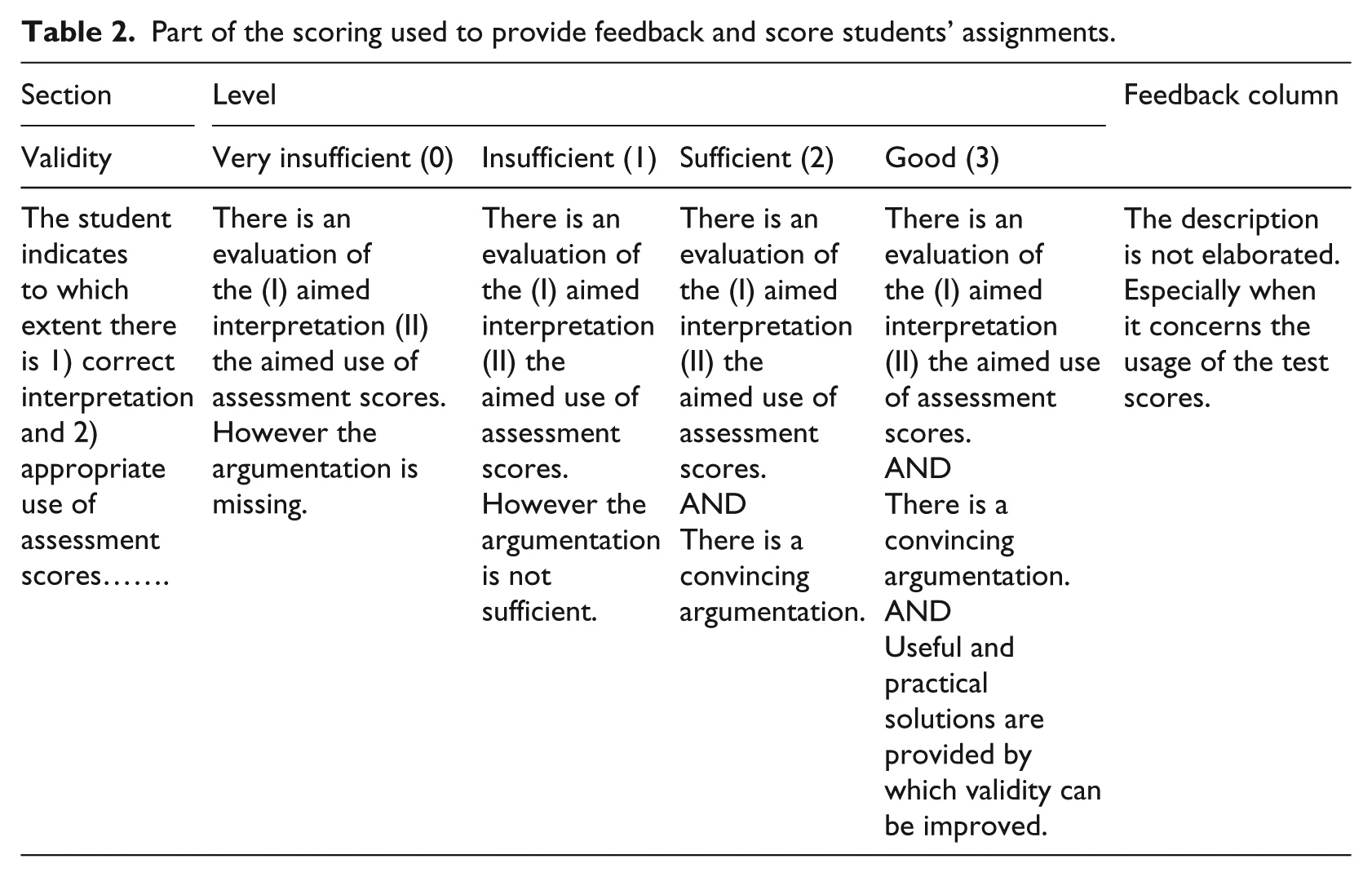

Feedback on 18 written assignments was collected (six assignments per lecturer). The assignments were randomly selected from a larger pool of assignments from two similar courses. In the first course, students worked on an assignment in which they had to evaluate the validity and reliability of a test at their workplace (primary, secondary, or tertiary education) and advise how to redesign the test. In the second course, students wrote a research article in which they analyzed a specific assessment topic on two different educational levels (for instance, a comparison of formative assessment in primary and higher education). The assignments used in this study are very common in bachelor and master’s courses in the Netherlands and worldwide. The assignment consisted of a problem definition, theoretical framework, design, analysis/results, conclusion, and discussion. The interim versions of the assignments were about 15–17 pages long (including title page, references, etc.). The final versions were evaluated by one of the three lecturers involved in the courses using the following criteria: quality of the problem definition, theoretical framework, design, analysis, conclusion, discussion, content, and writing skills. Part of the rubric used to score/mark the assignments is provided in Table 2.

Part of the scoring used to provide feedback and score students’ assignments.

Feedback

Feedback by the lecturers was provided during the course on an interim version of the assignment. The feedback thus had a formative purpose. Lecturers provided feedback in two ways. Feedback was provided in the text using track changes and annotations (Word©) and lecturers filled in the rubric and added feedback at the end of every row (see Table 2 for an example).

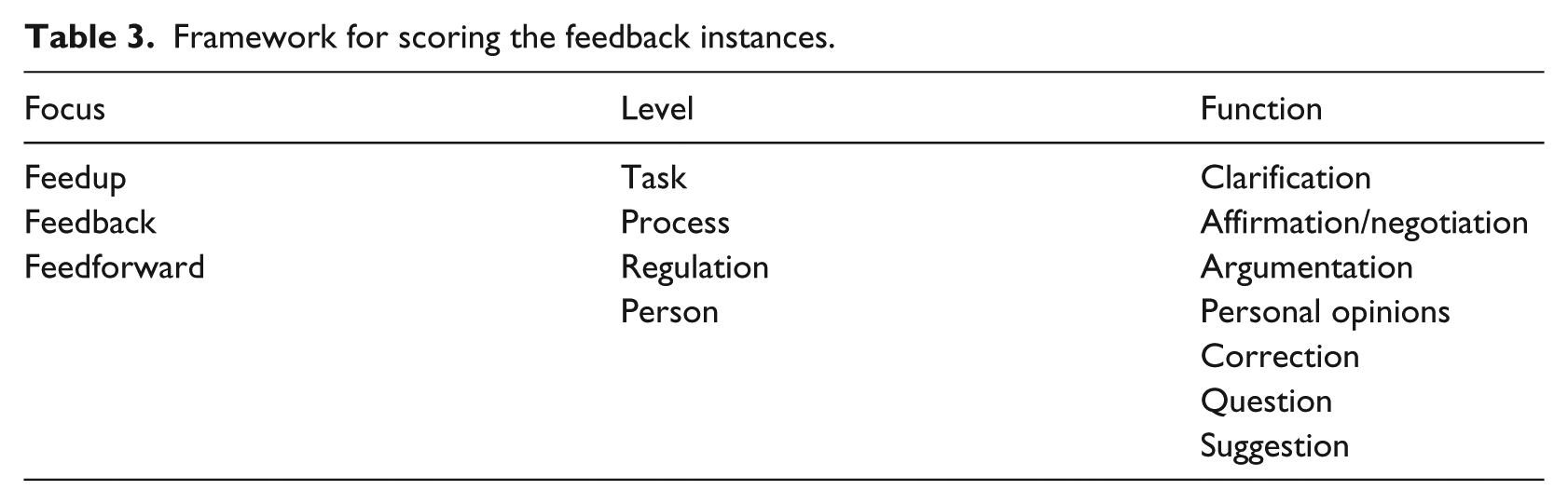

Scoring model

To analyze the feedback, a scoring model was designed combining the theoretical model of Hattie and Timperley (2007) and the typology of Espasa et al. (2013). These frameworks were used for several reasons. First, the framework of Hattie and Timperley is one of the few which explicitly includes feedforward (Boud and Molloy, 2013b; Hounsell et al., 2008; Sadler, 1989) as a category which is relevant considering the function of rubrics suggested in the literature (cf. Ali et al., 2017). Second, the framework is well known in higher education. Third, the framework has been used in earlier studies focusing both on peer- and self-assessment as well as in lecturer provided feedback (Arts et al., 2016; Gan, 2011; Harris et al., 2015) and its theoretical underpinning fits very well the theoretical underpinning of rubrics. The framework of Espasa et al. (2013) was added as it provides more detailed insight into the content of the feedback that is provided to students. The combined framework is presented in Table 3. In the remainder of this article, we use italics to refer to feedback as information on how the student is doing and use feedback without italics if we refer to feedup, feedback, and feedforward.

Framework for scoring the feedback instances.

Procedure

Ethical approval was granted by the university before data collection. Students and lecturers were informed about the study during the teaching sessions and staff meetings and lecturers agreed to participate in the study by signing an informed consent form. The students received feedback on the interim version and then handed in the final version of their assignment some weeks later. The written feedback on the interim version was collected after the course and analyzed by the researchers.

Data analyses

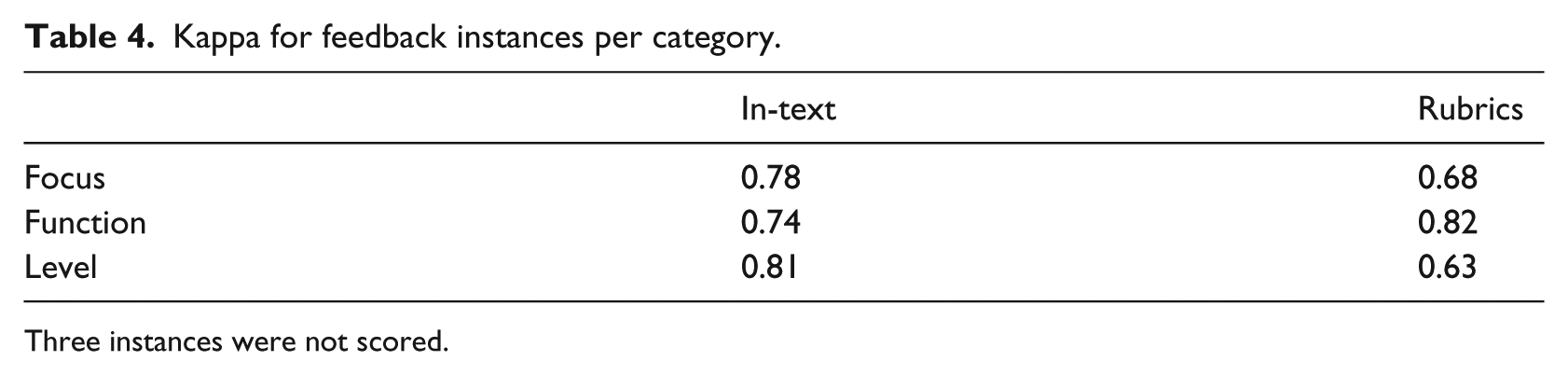

The scoring model was tested and discussed among the raters to come to a shared notion (i.e. conceptual development). Based on this conceptual scoring model, three raters scored two example assignments; initial agreement was calculated and the outcomes were discussed face-to-face (coder training). Then, in the final stage (final reliability assessment), a subset of the assignments was scored by two raters using a crossed design (Hallgren, 2012). Following initial scoring, differences were discussed face-to-face and the scoring model was further specified. The raters then looked again at their scoring and adapted their ratings according to the modified definitions. Final interrater reliability was sufficient for all three categories (see Table 4). A fourth rater was consulted to take a final decision on the instances on which the initial raters did not reach agreement.

Kappa for feedback instances per category.

Three instances were not scored.
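To illustrate the agreement statistic reported in Table 4, the following is a minimal sketch of a kappa computation. It assumes Cohen's kappa for two raters who each assign one label per instance; the article does not state which kappa variant was used, and the function name `cohen_kappa` and the example labels are hypothetical, not data from the study.

```python
from collections import Counter


def cohen_kappa(rater_a, rater_b):
    """Cohen's kappa for two raters who labelled the same items once each."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("both raters must label the same non-empty set of items")
    n = len(rater_a)
    # Observed agreement: share of items on which both raters chose the same label.
    observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    # Chance agreement: product of the raters' marginal proportions, summed over labels.
    counts_a = Counter(rater_a)
    counts_b = Counter(rater_b)
    expected = sum(counts_a[label] * counts_b[label] for label in counts_a) / (n * n)
    if expected == 1.0:
        # Both raters used one identical label throughout; kappa is undefined,
        # and this sketch treats that case as perfect agreement.
        return 1.0
    return (observed - expected) / (1.0 - expected)


# Hypothetical level codes for eight feedback instances (not data from the study).
rater_1 = ["task", "task", "process", "task", "regulation", "process", "task", "task"]
rater_2 = ["task", "task", "process", "process", "regulation", "process", "task", "task"]
kappa = cohen_kappa(rater_1, rater_2)
print(round(kappa, 2))
```

Because an utterance in this study could receive more than one label, a real analysis would need to decide how to handle multi-label instances, for example by computing agreement per label; that choice is left open here.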

For all 18 assignments and rubrics, the feedback utterances were scored in Microsoft Word and these scores were transferred to Microsoft Excel 2010©. A feedback utterance could be a spelling mistake that was corrected by the lecturer (i.e. using the track-change function in Word), a reformulation, added text, or a written comment (i.e. using the comment function in Word or the last column of the rubric) that constituted a meaningful unit. A feedback utterance could receive more than one label (e.g. feedback and feedforward or task and process). Within Microsoft Excel 2010, frequencies were calculated for the three categories: focus (i.e. feedup, feedback, feedforward; Hattie and Timperley, 2007), level (i.e. task, process, regulation, person; Hattie and Timperley, 2007), and function (question, suggestion, etc.; Espasa et al., 2013).
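The frequency calculation described above can be sketched in a few lines. This is an illustration only, written in Python rather than the Excel workflow the study used; the function name `label_percentages`, the record layout, and the example instances are all assumptions. It shows why percentages within a category can sum to more than 100 when an utterance carries several labels, and how unscored instances drop out of the denominator.

```python
from collections import Counter


def label_percentages(instances, category):
    """Percentage of scored instances that carry each label in one category.

    An instance may carry several labels, so the percentages can exceed 100 in total.
    Instances with no label in the category count as "not scored" and are excluded.
    """
    scored = [inst for inst in instances if inst.get(category)]
    if not scored:
        return {}
    # set() guards against the same label being recorded twice on one instance.
    counts = Counter(label for inst in scored for label in set(inst[category]))
    return {label: round(100 * count / len(scored), 2) for label, count in counts.items()}


# Hypothetical coded instances (not data from the study).
instances = [
    {"channel": "in-text", "focus": ["feedback"], "level": ["task"]},
    {"channel": "rubric", "focus": ["feedback", "feedforward"], "level": ["task", "process"]},
    {"channel": "rubric", "focus": ["feedback"], "level": ["process"]},
    {"channel": "rubric", "focus": [], "level": []},  # not scored
]

rubric = [inst for inst in instances if inst["channel"] == "rubric"]
rubric_focus = label_percentages(rubric, "focus")
rubric_level = label_percentages(rubric, "level")
print(rubric_focus, rubric_level)
```

Using the number of scored instances as the denominator, rather than the number of labels, is one possible design choice; it matches percentages that add up to more than 100 within a channel.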

Results

The results show that in-text feedback is much more detailed (804 comments) compared to rubric-referenced feedback (128 comments).

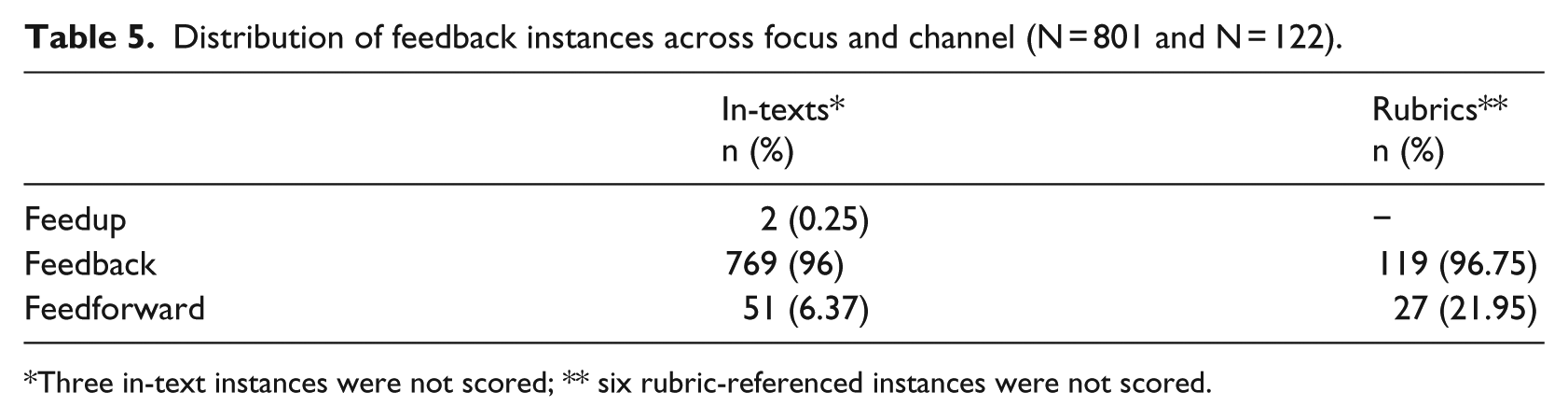

Focus of feedback

Table 5 shows that most instances (96% and 96.75%) informed students about how they were doing and thus had a focus on feedback. Examples of feedback instances are “I do not understand this,” “An Excel form cannot be used as attachment,” and “The structure of this section is different than I would expect [..].” The results show that feedup and feedforward were hardly used by the lecturers in the in-text comments (about 0% for both feedup and feedforward). Rubrics contained no feedup comments but quite a few feedforward instances (21.95%; e.g. “You could give the reader more information about the aim of this document” and “The design choice needs to be further explained”).

Distribution of feedback instances across focus and channel (N = 801 and N = 122).

* Three in-text instances were not scored; ** six rubric-referenced instances were not scored.

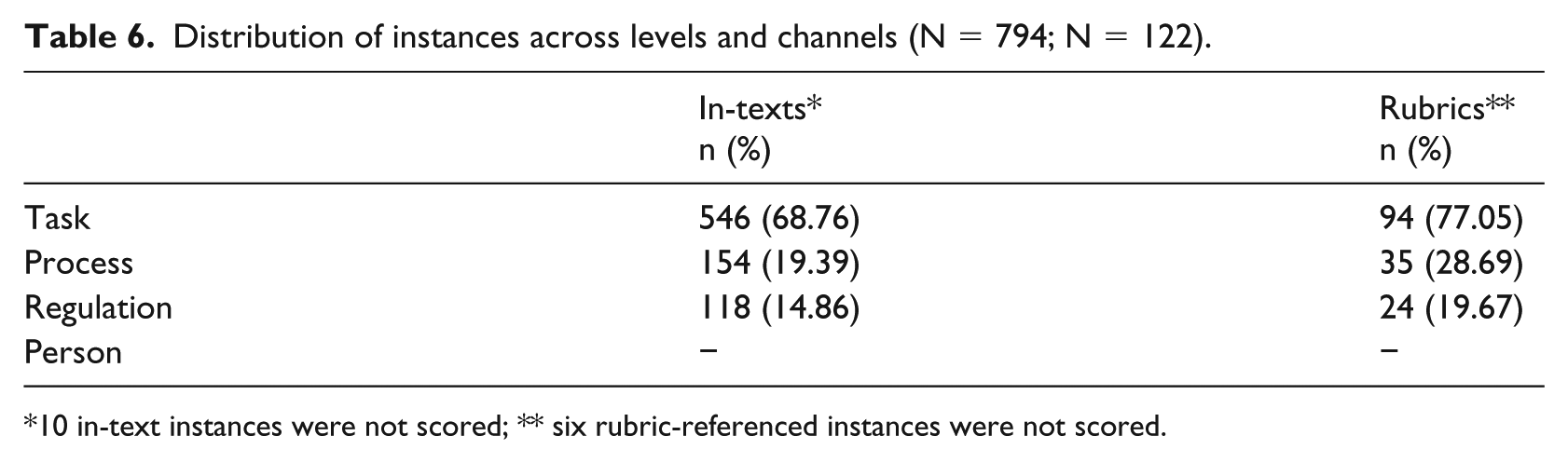

Levels of feedback

The results of the level (task, process, regulation, or person) analysis are shown in Table 6.

Distribution of instances across levels and channels (N = 794; N = 122).

* 10 in-text instances were not scored; ** six rubric-referenced instances were not scored.

By far, most instances were at the task level, both the in-text instances (68.76%) and the rubric-referenced instances (77.05%; e.g. “Very clearly written and a nice analysis of overlap and differences. Usage of tables is very functional” and “Your research question is formulated in a very complex way”). In the rubrics, there was more feedback at the process level (28.69%; e.g. “Use ‘first,’ ‘second,’ etc. to structure your paragraph” and “Describe how you made this comparison”) and at the regulation level (19.67%; e.g. “What is your answer to the main research question?” and “Do you have suggestions for further research?”) compared with in-text commentary (19.39% and 14.86%; e.g. task feedback: “This part is well written but does not belong in the introduction (..) You could include it in your research plan” (…); e.g. process feedback: “Here again you have made a choice. Why did you do a literature search and not a survey?”).

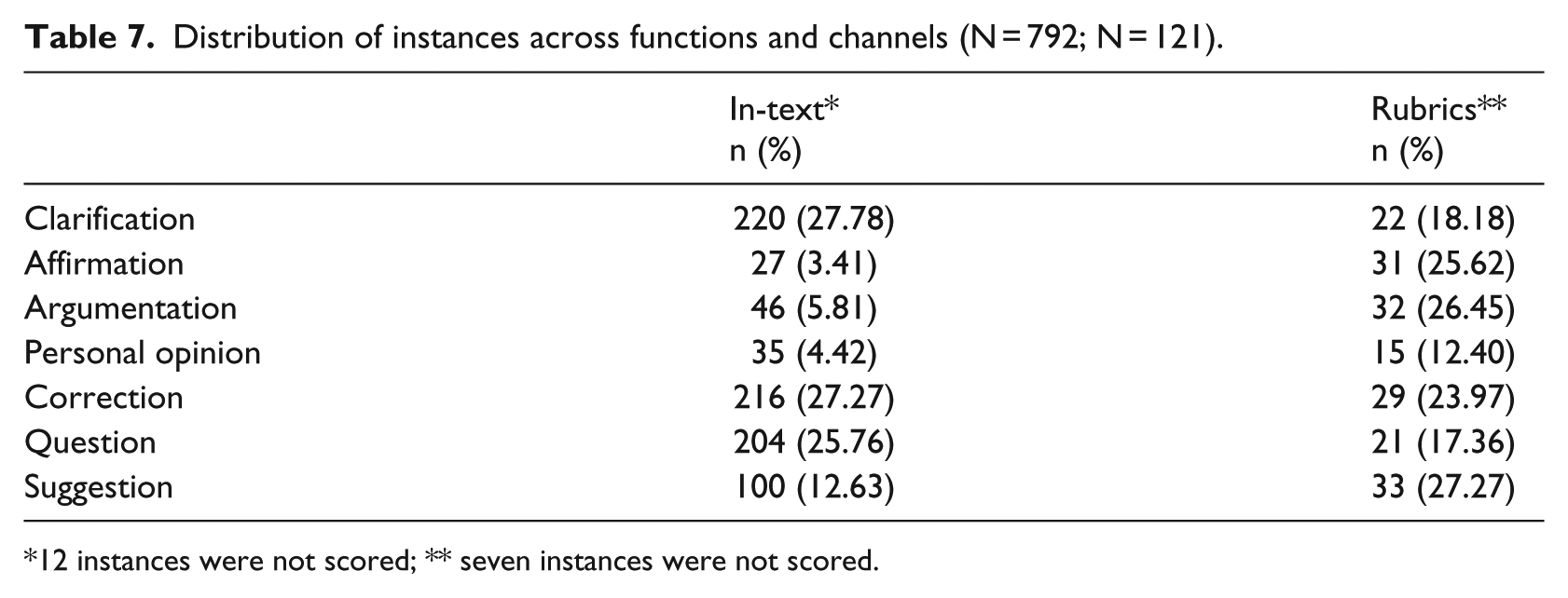

The function of the feedback

Table 7 displays the function of feedback. The predominant functions of feedback in the texts were clarifications (27.78%; e.g. “Quality of assessment is important in all areas of education. To guarantee a good assessment quality you follow the PDCA cycles (..)”) followed by instances in the form of corrections (27.27%; e.g. “1,” “One sentence is not a paragraph.”(..) [..].(..) vocational education and university education). In rubrics, on the other hand, the predominant functions of feedback were suggestions (27.27%; e.g. “Your conclusions could be somewhat more nuanced” or “You could give your reader more information about the aim in the introduction”), followed by argumentations (26.45%; e.g. “There has been used additional literature but this is limited. Most literature was provided in the course” and “(…) you describe exactly the main issue. Good framing of the two aspects”) and affirmations (25.62%; e.g. “Nice introduction”). In-text comments, on the other hand, rarely included affirmations (3.41%; e.g. “This is what I mean with descriptive. Nice sentence!”) or argumentations (5.81%; e.g. “This is too much repetition of previous information. Reconsider if you need to state this again or if you want to repeat only your main question OR phrase your sub-questions as main question”).

Distribution of instances across functions and channels (N = 792; N = 121).

* 12 instances were not scored; ** seven instances were not scored.

Discussion and conclusion

This study aimed to gain a better understanding of how lecturers use comments that refer to the rubric, compared with in-text comments, and whether the way these feedback modalities are used can be improved. We therefore studied the focus, level, and function of the feedback in the two modalities.

The results show that there are far more in-text comments than comments provided in addition to the rubric, over five times more, in fact. This confirms previous criticism of criteria-based assessment (e.g. Shute, 2009) in the sense that rubrics lead to less detailed feedback. Furthermore, the results are in line with research (Arts et al., 2016; Harris et al., 2015) showing that lecturers mainly focus on feedback at the task level, and that this is the case in both modalities. However, the results also show that comments provided in addition to the use of the rubric contain more feedforward comments and process-oriented comments. This fits very well with the intended purpose of rubrics in general (i.e. focusing on development). The results are also in line with the findings of Nordrum et al. (2013), in the sense that lecturers use comments provided in addition to the use of the rubric for different functions compared with in-text comments. In-text feedback is, for example, mainly used for clarifications, corrections, and questions, whereas comments provided in addition to the use of the rubric are mainly used for affirmations, argumentations, and suggestions. However, despite the differences in feedback between the two modalities, the overlap in the focus and level of in-text feedback and comments provided in addition to the use of the rubric was very high, which leads to the question of which modality to use and why.

Although the results provide interesting insight into how the two feedback modalities are used in educational practice, it is important to address some limitations of this study. First, although we had good arguments to use the framework of Hattie and Timperley (2007), this framework may not be the most suitable one for exploring differences between feedback modalities. We needed several rounds of calibration to achieve an adequate level of interrater agreement (see also Arts et al., 2016), and in terms of content, different frameworks may be more appropriate. A more relevant distinction might, for example, be between lower order comments (text corrections, etc.) and higher order comments (structure, argumentation), as research has shown that in-text commentary is relevant for lower order concerns such as text corrections, pointing out errors or commenting on the quality of the arguments, whereas rubric-referenced feedback aims for higher order concerns such as pointing out common errors or providing a new perspective on the text (Nordrum et al., 2013). Future research, therefore, might look into different frameworks to code the feedback in the two modalities. Second, the study was conducted with a very small and selective sample. It can be expected that in different contexts, that is, in different disciplines and assignments, for younger students, and in different countries/cultures, lecturers use in-text comments and rubric-referenced feedback differently. The generalizability of the results is therefore limited, and more research is needed using different types of assignments, and students and lecturers from different disciplines and countries/cultural contexts.

Despite these limitations, a practical implication of our results is that lecturers should (re)consider the aim and modality of their feedback very carefully, and adapt the focus, level, and function of the feedback toward that intended aim and chosen modality. Choosing purposefully for a certain modality of feedback may help to attune the focus, level, and function toward its intended aim. A useful distinction may be to use rubric-referenced feedback for “explaining” (affirmations, argumentations) why a student reached (at that point in time) a certain level of the rubrics score and what he or she needs to do to reach the next level (feedforward at the process and regulation level using suggestions), whereas in-text feedback may mainly be used for feedback at the task level aimed at improving argumentation, structure, language, and so on, on a text-based level by using corrections, suggestions, and clarifications.

In conclusion, the study has shown that in-text comments and comments which are provided in addition to the use of a rubric contain different feedback. However, the results also showed that there is considerable overlap in the kind of feedback that is provided through the two feedback modalities, with a strong focus on feedback at the task level. Comments which are provided in addition to the use of a rubric seem to trigger more feedforward and process-oriented comments from lecturers and are particularly used for affirmative, argumentative, and suggestive comments. In-text feedback is mainly provided at the task level and contains mostly clarifications, corrections, and questions. An important implication of our research is that, independent of the written feedback modality, there is a strong focus on providing feedback at the task level in written feedback comments, but there are also some important differences in the kind of feedback provided through the two different modalities. As providing both kinds of feedback is a very effortful and time-consuming process, lecturers should carefully consider the main aim of the feedback as well as the resources available to them (e.g. the number of markers available to do the marking, whether the assessment is formative or summative in nature, the amount/nature of any moderation that has to be undertaken, and the timeframe by which feedback has to be returned to students) to make well-informed decisions about what kind of feedback to provide and which modality best fits this purpose.

Footnotes

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.